Over the last couple of months there has been much blog-viating about what the models used in the IPCC 4th Assessment Report (AR4) do and do not predict about natural variability in the presence of a long-term greenhouse gas related trend. Unfortunately, much of the discussion has been based on graphics, energy-balance models and descriptions of what the forced component is, rather than on the full ensemble from the coupled models. That has led to some rather excitable but ill-informed buzz about very short time scale tendencies. We have already discussed how short-term analysis of the data can be misleading, and we have previously commented on how the uncertainty in the ensemble mean has been confused with the envelope of possible trajectories. The actual model outputs have been available for a long time, and it is somewhat surprising that no-one has looked specifically at them given the attention the subject has garnered. So in this post we will examine directly what the individual model simulations actually show.

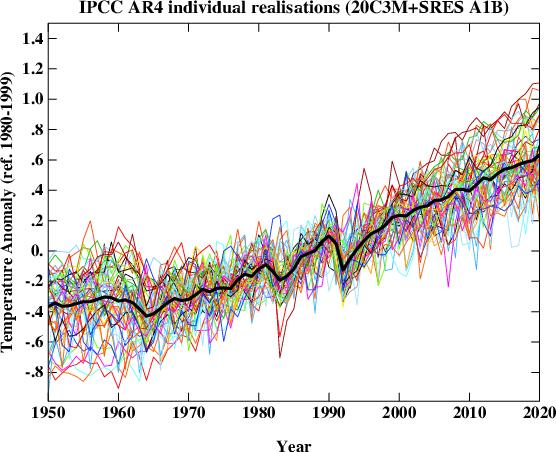

First, what does the spread of simulations look like? The figure above plots the global mean temperature anomaly for 55 individual realizations of the 20th Century and their continuation into the 21st Century following the SRES A1B scenario. For our purposes this scenario is close enough to the actual forcings over recent years to be a valid approximation for the simulations up to the present and the probable future. The equal-weighted ensemble mean is plotted on top. This isn’t quite what the IPCC plots (they average over each single-model ensemble before averaging across models), but in this case the difference is minor.
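The difference between the two ways of averaging can be sketched in a few lines of Python. This is a minimal illustration with hypothetical model names and values, not data from the archive; `runs` is assumed to hold one (model, value) pair per realisation for a single year, so in practice you would apply it year by year.

```python
from collections import defaultdict


def equal_weight_mean(runs):
    """Every realisation counts once, so models with many runs weigh more."""
    return sum(value for _, value in runs) / len(runs)


def model_weighted_mean(runs):
    """Average each model's realisations first, then average across models."""
    by_model = defaultdict(list)
    for model, value in runs:
        by_model[model].append(value)
    model_means = [sum(values) / len(values) for values in by_model.values()]
    return sum(model_means) / len(model_means)


# Hypothetical anomalies for one year: model "A" has three runs, model "B" one.
runs = [("A", 1.0), ("A", 1.0), ("A", 1.0), ("B", 3.0)]
print(equal_weight_mean(runs))    # 1.5
print(model_weighted_mean(runs))  # 2.0
```

The two coincide whenever every model contributes the same number of realisations.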

It should be clear from the plot above that the long-term trend (the global warming signal) is robust, but it is equally obvious that the short-term behaviour of any individual realisation is not. This is the impact of the uncorrelated stochastic variability (weather!) in the models, which is associated with their interannual and interdecadal modes; these can be related to tropical Pacific variability or fluctuations in the ocean circulation, for instance. Different models have different magnitudes of this variability, spanning what can be inferred from the observations, and in a more sophisticated analysis you would want to adjust for that. For this post, however, it suffices to use them ‘as is’.

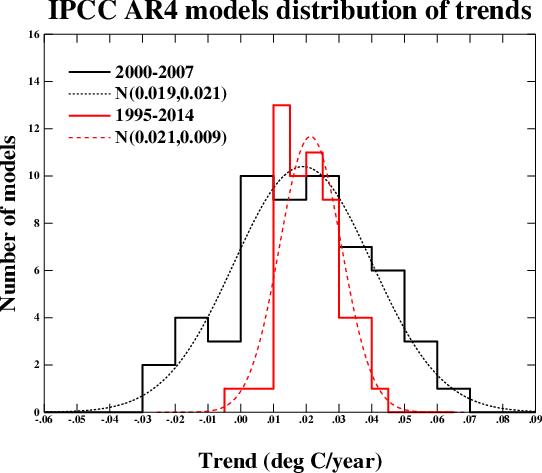

We can characterise the variability very easily by looking at the range of regressions (linear least squares) over various time segments and plotting the distribution. This figure shows the results for the period 2000 to 2007 and for 1995 to 2014 (inclusive) along with a Gaussian fit to the distributions. These two periods were chosen since they correspond with some previous analyses. The mean trend (and mode) in both cases is around 0.2ºC/decade (as has been widely discussed) and there is no significant difference between the trends over the two periods. There is of course a big difference in the standard deviation – which depends strongly on the length of the segment.
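The regression step can be sketched as follows. This is a minimal illustration, not the code used for the figure: it assumes each realisation is stored as a Python dict mapping year to annual global-mean anomaly (a hypothetical layout), fits an ordinary least-squares line using only the standard library, and treats the "Gaussian fit" as a simple moment fit via `statistics.NormalDist.from_samples`, which is one plausible choice rather than the method behind the figure.

```python
from statistics import NormalDist


def ols_trend_per_decade(years, temps):
    """Ordinary least-squares slope of temps against years, in degrees per decade."""
    n = len(years)
    mean_year = sum(years) / n
    mean_temp = sum(temps) / n
    sxy = sum((yr - mean_year) * (t - mean_temp) for yr, t in zip(years, temps))
    sxx = sum((yr - mean_year) ** 2 for yr in years)
    return 10.0 * sxy / sxx


def segment_trends(runs, first_year, last_year):
    """Trend over first_year..last_year (inclusive) for every realisation.

    Each run is assumed to be a dict mapping year -> annual global-mean anomaly.
    """
    years = list(range(first_year, last_year + 1))
    return [ols_trend_per_decade(years, [run[yr] for yr in years]) for run in runs]


def gaussian_fit(trends):
    """Gaussian fitted by moments: sample mean and sample standard deviation."""
    return NormalDist.from_samples(trends)


# Hypothetical straight-line runs warming at 0.1, 0.2 and 0.3 degrees/decade.
runs = [{yr: rate * (yr - 2000) / 10.0 for yr in range(1990, 2031)} for rate in (0.1, 0.2, 0.3)]
short = segment_trends(runs, 2000, 2007)
fit = gaussian_fit(short)
print(round(fit.mean, 3), round(fit.stdev, 3))  # 0.2 0.1
```

With real model output the same calls would be made for 2000-2007 and 1995-2014; the spread (`fit.stdev`) is what shrinks as the segment gets longer.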

Over the short 8-year period, the regressions range from -0.23ºC/dec to 0.61ºC/dec. Note that this is over a period with no volcanoes, so the variation is predominantly internal (some models include solar cycle variability, which will make a small difference). The model with the largest trend has a range of -0.21 to 0.61ºC/dec across its 4 different realisations, confirming the role of internal variability. Nine of the 55 simulations have negative trends over the period.

Over the longer period, the distribution becomes tighter, and the range is reduced to -0.04 to 0.42ºC/dec. Note that even for a 20-year period, there is one realisation that has a negative trend. For that model, the 5 different realisations give a range of trends of -0.04 to 0.19ºC/dec.

Therefore:

- Claims that GCMs project monotonic rises in temperature with increasing greenhouse gases are not valid. Natural variability does not disappear because there is a long term trend. The ensemble mean is monotonically increasing in the absence of large volcanoes, but this is the forced component of climate change, not a single realisation or anything that could happen in the real world.

- Claims that a negative observed trend over the last 8 years would be inconsistent with the models cannot be supported. Similar claims that the IPCC projection of about 0.2ºC/dec over the next few decades would be falsified with such an observation are equally bogus.

- Over a twenty-year period, you would be on stronger ground in arguing that a negative trend would be outside the 95% confidence limits of the expected trend (the single negative run out of 55 in the above ensemble suggests that this would happen only ~2% of the time).
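The "~2%" figure is simply a count: one negative run out of 55. A short sketch of that arithmetic, alongside the alternative of reading the probability off a fitted Gaussian, is below. The Gaussian mean and standard deviation used in the demo are hypothetical values, not the fitted parameters from the figure.

```python
from statistics import NormalDist


def fraction_negative(trends):
    """Empirical fraction of realisations whose trend is below zero."""
    return sum(1 for t in trends if t < 0) / len(trends)


def gaussian_prob_negative(mean, stdev):
    """P(trend < 0) under a Gaussian with the given mean and standard deviation."""
    return NormalDist(mean, stdev).cdf(0.0)


# Counts quoted in the post: 9 of 55 runs negative over 2000-2007, 1 of 55 over 1995-2014.
print(round(9 / 55, 3), round(1 / 55, 3))  # 0.164 0.018
# Hypothetical Gaussian with mean 0.2 and standard deviation 0.1 (degrees/decade).
print(round(gaussian_prob_negative(0.2, 0.1), 4))  # 0.0228
```

With only 55 runs, the empirical count is coarse in the tails (each run is worth about 1.8%), which is why a fitted distribution is sometimes preferred for small probabilities.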

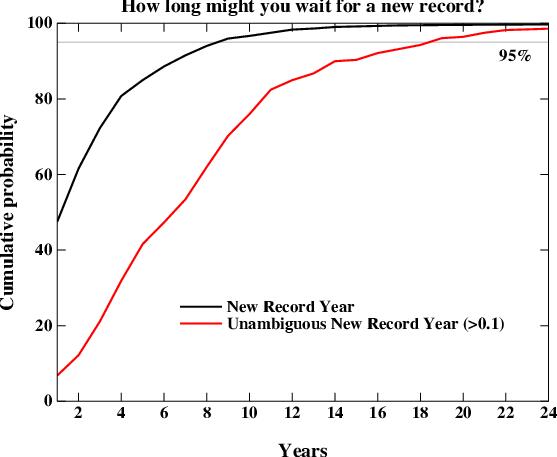

A related question that comes up is how often we should expect a global mean temperature record to be broken. This too is a function of the natural variability (the smaller it is, the sooner you expect a new record). We can examine the individual model runs to look at the distribution. There is one wrinkle here, though, which relates to the uncertainty in the observations. For instance, while the GISTEMP series has 2005 being slightly warmer than 1998, that is not the case in the HadCRU data. So what we are really interested in is the waiting time to the next unambiguous record, i.e. a record that is at least 0.1ºC warmer than the previous one (so that it would be clear in all observational datasets). That is obviously going to take longer.

This figure shows the cumulative distribution of waiting times for new records in the models starting from 1990 and going to 2030. The curves should be read as the percentage of new records that you would see if you waited X years. The two curves are for a new record of any size (black) and for an unambiguous record (> 0.1ºC above the previous, red). The main result is that 95% of the time, a new record will be seen within 8 years, but that for an unambiguous record, you need to wait for 18 years to have a similar confidence. As I mentioned above, this result is dependent on the magnitude of natural variability which varies over the different models. Thus the real world expectation would not be exactly what is seen here, but this is probably reasonably indicative.
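One way to extract these waiting times from a single annual series is sketched below. The exact bookkeeping used for the figure is not described in the post, so this is an assumption: a new record counts when a year beats the current record by more than a threshold (0 for "any record", 0.1ºC for "unambiguous"), the wait is measured from the year the current record was set, and smaller, ambiguous records still update the current record. The series in the demo is hypothetical.

```python
def record_waiting_times(temps, threshold=0.0):
    """Waiting times (in years) between successive records in one annual series.

    A new record is counted when a year beats the current record by more than
    `threshold`; the wait is measured from the year the current record was set.
    Smaller, ambiguous records still update the current record value.
    """
    waits = []
    record_value, record_index = temps[0], 0
    for i in range(1, len(temps)):
        if temps[i] > record_value + threshold:
            waits.append(i - record_index)
        if temps[i] > record_value:
            record_value, record_index = temps[i], i
    return waits


def waiting_time_quantile(waits, q=0.95):
    """Smallest wait X such that at least a fraction q of the waits are <= X."""
    ordered = sorted(waits)
    for i, w in enumerate(ordered):
        if (i + 1) / len(ordered) >= q:
            return w
    return ordered[-1]


# Hypothetical annual anomalies; pool waits from many runs before taking quantiles.
series = [0.0, 0.05, 0.2, 0.1, 0.15, 0.35]
print(record_waiting_times(series))                 # [1, 1, 3]
print(record_waiting_times(series, threshold=0.1))  # [1, 3]
```

Pooling the waits from all 55 runs (restricted to 1990-2030) and evaluating `waiting_time_quantile(..., 0.95)` for each threshold corresponds to reading the 95% level off the two cumulative curves.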

We can also look at how the Keenlyside et al. results compare to the natural variability in the standard (un-initialised) simulations. In their experiments, the decadal means of the periods 2001-2010 and 2006-2015 are both cooler than that of 1995-2004 (using the closest approximation to their results with only annual data). In the IPCC runs, this happens in only one simulation, and then only for the first decadal mean, not the second. This implies that there may be more going on than just tapping into the internal variability in their model. We can specifically look at the same model in the un-initialised runs. There, the differences between the first decadal means span the range 0.09 to 0.19ºC, significantly above zero. For the second period, the range is 0.16 to 0.32ºC. One could speculate that there is actually a cooling that is implicit in their initialisation process itself. It would be instructive to try some similar ‘perfect model’ experiments (where you try to replicate another model run rather than the real world) to investigate this further, though.
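The decadal comparison reduces to differences of ten-year means of annual data. The sketch below assumes the same hypothetical dict-of-years layout as the trend example, with a negative shift meaning that the later decade is cooler than 1995-2004. The demo run is a synthetic straight line, not model output.

```python
def decadal_mean(series, first_year):
    """Mean of the ten annual values first_year..first_year + 9 (inclusive)."""
    values = [series[yr] for yr in range(first_year, first_year + 10)]
    return sum(values) / len(values)


def decadal_shifts(series, base_start=1995, later_starts=(2001, 2006)):
    """Later decadal means minus the base decadal mean; negative means cooler."""
    base = decadal_mean(series, base_start)
    return [decadal_mean(series, start) - base for start in later_starts]


# Hypothetical run warming steadily at 0.2 degrees/decade (0.02 per year).
run = {yr: 0.02 * (yr - 1990) for yr in range(1990, 2021)}
print([round(d, 2) for d in decadal_shifts(run)])  # [0.12, 0.22]
```

Counting how many realisations give a negative value for each shift is then the check described above.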

Finally, I would just like to emphasize that for many of these examples, claims have circulated about the spectrum of the IPCC model responses without anyone actually looking at what those responses are. Given that the archive of these models exists and is publicly available, there is no longer any excuse for this. Therefore, if you want to make a claim about the IPCC model results, download them first!

Much thanks to Sonya Miller for producing these means from the IPCC archive.

RE #227: “I still want an answer from someone: what kind of GCM did Arrhenius use back in 1896?”

Maybe a bit OT, but it was mentioned somewhere that he did his sums for some 17000 cells. Of course, in those days there were numerous people with the title of “research assistant”.

Unfortunately I have lost the site with a facsimile of his original paper. Radiative properties of carbon dioxide as well as the other major gas components were obviously known at that time. He was also able to include water vapor feedback in his calculations and also presented a rough estimate of cloud impact (cooling of about 1 degC, though he conceded this was not well founded on observations). His final sensitivity figure was 4 degC.

He also had some 600 temperature time series to test his theoretical results against.

In his introduction he credited a number of earlier works – by “giants on whose shoulders” he stood. Perhaps an interesting concept today.

Re #251

He didn’t use a GCM. Here is a link to the paper. I doubt very seriously that he used 17,000 cells.

Radiative properties of CO2 were poorly known. I don’t have time to search through this scan but I’m pretty sure that he got a sensitivity figure of 6C not 4C. Gilbert Plass got 3.something C 50 years later.

http://www.globalwarmingart.com/images/1/18/Arrhenius.pdf

Re #227, 251: (Arrhenius’ original paper)

The site you want is:

http://wiki.nsdl.org/index.php/PALE:ClassicArticles/GlobalWarming

A wonderful resource.

Gerald Browning, So, how about suggestions as to how the modeling should have been done? Specifically, how would you simulate a volcanic eruption in a GCM if you did not put it in “ad hoc”. Do you know of any modeling efforts where such a departure from fidelity to the physics produced spurious improvement in the simulation? My experience has been that it would introduce inaccuracies rather than hide them.

And while eliminating “excess verbiage” is always good writing practice, I would think you of all people would be happy to see caveats.

Jerry, the reality of anthropogenic causation of the current warming does not depend on the models for support. We know CO2 is a greenhouse gas up to 280 ppmv, and the physics doesn’t tell us to expect anything different at higher concentrations. So, the question is how best to model that given finite computing resources. If you have concrete suggestions for how to do that, then they will be welcome. If you do not, modelers will go ahead as best computational limitations allow–and they’ll still succeed.

re: 250. An actual scientist would pose questions through the peer review process (journals) and through scientific conferences, not by pretending to be holier-than-thou about a topic outside their area of expertise (climate science) and disingenuously having someone else ask their questions for them.

[edit]

1. What is the ideal concentration of atmospheric CO2 for the Earth?

2. What is the ideal level of Greenhouse Effect for life on Earth?

[Response: There is none. Some. Life on Earth has persisted for billions of years throughout hugely varying climate regimes that have gone from Snowball Earth to the hothouse of the Cretaceous. It will persist whatever we do. The relevance to humans of these two questions is zero. – gavin]

Re #227 Arrhenius’s paper is available from Global Warming Art. The paper is here.

Cheers, Alastair.

Thank you Gavin.

Then why, if there are no generally accepted ideal levels, are we concerned about current or projected levels?

Alan

[Response: Hmmm…. possibly because ‘life on Earth’ hasn’t constructed an extremely complex society that is heavily dependent on the stability of the climate? I wonder who has….. – gavin]

Re #250

According to Browning:

“You might want to read Tom Vonk’s discussion of the Pinatubo climate run on Climate Audit. He makes the very valid scientific point that the ocean does not change that much during the time frame of the climate model.”

Tom doesn’t discuss that at all, he imagines what the model might be and talks about that!

“I do not know if you will be able to get the complete and accurate information about what the models did and I doubt it ………..So if you get the information , I am ready to bet that it doesn’t contain much more than what I sketched above , envelopped in fancy vocabulary like spectral absorptivity and such”

He also assumes that “the time is too short to perturbate the oceans’ systems”; however, El Niños last between 0.5 and 1.5 years, so it would seem that the ‘time frame’ is comparable with the time scale of perturbations of the ocean.

Ray Ladbury (#254),

First, I note that there does not seem to be any understanding on this site of the difference between well-posed and ill-posed time-dependent continuum systems (see Anthony Kendall’s comment #223 and my response in #230).

Secondly, for someone to state that it is not important for the numerical solution to be close to the continuum solution is beyond comprehension. The entire subject of numerical analysis for time-dependent equations is to ensure that the numerical approximation accurately and stably approximates a given well-posed system, so that it will converge to the continuum solution as the mesh size is reduced. Both of these are well-established mathematical concepts. Thus I am not sure there is any point in continuing any discussion when well-developed mathematical concepts are being completely ignored.

[Response: There is little point in continuing because you refuse to listen to anything anyone says. If climate models were solving an ill-posed system they would have no results at all – no seasons, no storm tracks, no ITCZ, no NAO, no ENSO, no tropopause, no polar vortex, no jet stream, no anything. Since they have all of these things, they are ipso facto solving a well-posed system. The solutions are however chaotic and so even in a perfect model, no discrete system would be able to stay arbitrarily close to the continuum solution for more than a few days. This is therefore an impossible standard to set. If you mean that the attractor of the solution should be arbitrarily close to the attractor of the continuum solution that would be more sensible, but since we don’t know the attractor of the real system, it’s rather impractical to judge. Convergence is a trickier issue since the equations being solved change as you go to a smaller mesh size (i.e. processes ranging from mesoscale circulations in the atmosphere to eddy effects in the ocean go from being parameterised to being resolved). However there is still no evidence that the structure of climate model solutions, or their climate sensitivity, is a function of resolution. – gavin]
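The distinction drawn in the response above, between staying close to one particular solution and reproducing the statistics of its attractor, can be illustrated with a toy chaotic system. The sketch below uses the classic Lorenz (1963) equations, not a GCM; the parameter values, step size, run length and spin-up are illustrative assumptions. Two runs that differ by 1e-8 in the initial state end up far apart pointwise, while their long-run time means remain close.

```python
def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz (1963) equations with the classic parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """One fourth-order Runge-Kutta step."""
    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = lorenz_rhs(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = lorenz_rhs(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def integrate(initial, dt=0.01, n_steps=20000):
    """Return the full trajectory as a list of (x, y, z) tuples."""
    trajectory = [initial]
    state = initial
    for _ in range(n_steps):
        state = rk4_step(state, dt)
        trajectory.append(state)
    return trajectory


run_a = integrate((1.0, 1.0, 1.0))
run_b = integrate((1.0 + 1e-8, 1.0, 1.0))  # perturbed in the eighth decimal place

# Pointwise separation: grows from 1e-8 to the size of the attractor.
separation = [
    sum((p - q) ** 2 for p, q in zip(a, b)) ** 0.5 for a, b in zip(run_a, run_b)
]
print(max(separation))

# Statistics after discarding a spin-up of 2000 steps: the time-mean of z stays close.
spin_up = 2000
mean_z_a = sum(s[2] for s in run_a[spin_up:]) / len(run_a[spin_up:])
mean_z_b = sum(s[2] for s in run_b[spin_up:]) / len(run_b[spin_up:])
print(round(mean_z_a, 1), round(mean_z_b, 1))
```

The exact numbers printed depend on the step size and floating-point details; the qualitative contrast between diverging trajectories and similar statistics is the point.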

# Ray Ladbury Says:

20 May 2008 at 4:36 PM

>Gerald Browning, So, how about suggestions as to how the modeling should have been done? Specifically, how would you simulate a volcanic eruption in a GCM if you did not put it in “ad hoc”. Do you know of any modeling efforts where such a departure from fidelity to the physics produced spurious improvement in the simulation? My experience has been that it would introduce inaccuracies rather than hide them.

You might start with a well posed system.

>And while eliminating “excess verbiage” is always good writing practice, I would think you of all people would be happy to see caveats.

It was the caveats that turned me off. If the science is rigorous, it can be shown with rigorous mathematics in a quantitative manner. I am not impressed by hand waving.

>Jerry, the reality of anthropogenic causation of the current warming does not depend on the models for support. We know CO2 is a greenhouse gas up to 280 ppmv, and the physics doesn’t tell us to expect anything different at higher concentrations. So, the question is how best to model that given finite computing resources. If you have concrete suggestions for how to do that, then they will be welcome. If you do not, modelers will go ahead as best computational limitations allow–and they’ll still succeed.

Heinz and I have introduced a well-posed system that will converge to the continuum solution of the nonhydrostatic system. We have shown that the system works for all scales of motion. And different stable and accurate numerical approximations produce the same answers as the numerical solutions converge. But my guess is that it will take a new generation before things change.

Jerry

Phil Felyon (#259),

So provide the specific changes that were made to the model (mathematical equations and parameterizations) so we can determine if it is possible to determine the result from perturbation theory.

Jerry

#258. As many have repeatedly said – it’s not what the climate is so much as how fast you change it. Life, including humans, can adapt if change happens slowly, but rapid change can overwhelm us. We will probably survive as a species, but many individuals will not if change is too fast. We must also look at the past and realise that civilisation “as we know it” is at risk as well.

Alan Millar — it’s the rate of change that matters biologically.

For some reason, my previous post was flagged as spam. Can the site administrator please re-post? Thanks.

[Response: Posts rejected as spam are not stored anywhere. You need to resubmit without the offending words (drug names, gambling references, etc.)]

[edit – no personal comments]

I cited Collins’ 2002 article (Skeptic ref. 28) merely to show from a different perspective that GCM climate projections are unreliable. Likewise Merryfield, 2006 (ref. 29). Citing them had nothing to do with validating my error analysis.

Collins and Allen, 2002, mentioned by Schneider, tests the “potential predictability” of climate trends following boundary value changes. E.g., whether a GCM can detect a GHG temperature trend against the climate noise produced by the same GCM. This test includes the assumption that the GCM accurately models the natural variability of the real climate. But that’s exactly what is not known, which is why the test is about “potential predictability” and not about real predictability. Supposing Collins and Allen, 2002, tells us something about the physical accuracy of climate modeling is an exercise in circular reasoning.

[Response: I’m fascinated that two papers that use the same model, by the same author, in two different ‘potential predictability’ configurations are treated so differently. The first says that initial condition predictability is small, the second says the boundary value predictability is high. The first is accepted and incorrectly extrapolated to imply the converse of the second, while the second is dismissed as circular reasoning. Cognitive dissonance anyone? – gavin]

The error relevant to uncertainty propagation in the Skeptic article is theory-bias. Schneider’s claim of confusion over type 1 and type 2 errors is both wrong and irrelevant. Of the major commentators in this thread, only Jerry Browning has posted deeply on theory-bias, and his references to the physics of that topic have been dismissed.

“Response: If convection is the only thing going on then tropospheric tropical trends would be larger than at the surface. Therefore a departure from that either implies the convection is not the only thing going on, or the data aren’t good enough to say. I at no time indicated that the models ‘have to be right’, I merely implied that an apparent model-data discrepancy doesn’t automatically imply that the models are wrong. That may be too subtle though. If the data were so good that they precluded any accommodation with the model results, then of course the models would need to be looked at (they have been looked at in any case). However, that isn’t the case. That doesn’t imply that modelled convection processes are perfect (far from it), but it has proven very hard to get the models to deviate much from the moist adiabat in the long term. Simply assuming that the data must be perfect is not however sensible. Jumping to conclusions about my meaning is not either. – gavin”

Would it be fair to say from this that getting better data should take a higher priority than refining models, for the time being?

[Response: There is no conflict here. Many groups are working on the data. – gavin]

Re #240,

Chris,

I have not got a lot of time to spare right now, but I will provide a fuller reply when I can.

I noticed and will note that you are not responding to my original point.

Do you maintain that the statement:

“In terms of climate forcing, greenhouse gases added to the atmosphere through mans activities since the late 19th Century have already produced three-quarters of the radiative forcing that we expect from a doubling of CO2.”

[Response: This is roughly correct: all GHGs (CO2+CH4+N2O+CFCs) = ~2.6 W/m2, about 2/3 of the 2xCO2 forcing (~4 W/m2). – gavin]

and hence its summary that you quoted

“2. Although we are far from the benchmark of doubled CO2, climate forcing is already about 3/4 of what we expect from such a doubling.”

[Response: This is false. First, there is more to radiative forcing than just greenhouse gases – aerosol increases are roughly -1 W/m2 (with lots of uncertainty) reducing the net forcing to a best estimate of 1.6 W/m2 (so about 40% of what you would see at a doubling). The follow on point then goes on to estimate climate sensitivity, but this ignores the lag imposed by the ocean which implies that we are not in equilibrium with the current forcing. Therefore the temperature rise now cannot simply be assumed to scale linearly with the current forcing to get the sensitivity. Lindzen knows this very well, and so why do you think he doesn’t mention these things? – gavin]

are “wildly incorrect”.

If you don’t: it would be nice for you to say so. If you do please explain how.

Best Wishes

Alexander Harvey
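The forcing fractions in the two responses above can be checked with simple arithmetic. The sketch below only restates the round numbers quoted there (about 2.6 W/m2 for well-mixed GHGs, roughly -1 W/m2 for aerosols, about 4 W/m2 for doubled CO2); the constant names are illustrative.

```python
# Round-number estimates quoted in the responses above (W/m2).
GHG_FORCING = 2.6        # CO2 + CH4 + N2O + CFCs
AEROSOL_FORCING = -1.0   # "roughly", with lots of uncertainty
DOUBLING_FORCING = 4.0   # approximate forcing for doubled CO2


def fraction_of_doubling(forcing, doubling=DOUBLING_FORCING):
    """Forcing expressed as a fraction of the 2xCO2 forcing."""
    return forcing / doubling


ghg_only = fraction_of_doubling(GHG_FORCING)
net = fraction_of_doubling(GHG_FORCING + AEROSOL_FORCING)
print(f"GHGs only: {ghg_only:.0%}, net of aerosols: {net:.0%}")
# GHGs only: 65%, net of aerosols: 40%
```

Neither fraction converts directly into a climate sensitivity: as the response points out, the ocean lag means the system is not yet in equilibrium with the current forcing.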

194 asked “Do you think Carl Wunsch would agree with your assessment, and correct me if I misunderstood your drift, that these guys have a lousy hypothesis?”

If I’ve understood your question, you’re asking if Carl Wunsch thinks that AGW is a lousy hypothesis. Here is a direct quote from his web page: http://ocean.mit.edu/~cwunsch/papersonline/responseto_channel4.htm

I believe that climate change is real, a major threat, and almost surely has a major human-induced component. But I have tried to stay out of the climate wars because all nuance tends to be lost, and the distinction between what we know firmly, as scientists, and what we suspect is happening, is so difficult to maintain in the presence of rhetorical excess. In the long run, our credibility as scientists rests on being very careful of, and protective of, our authority and expertise.

RE Jerry, Gavin and GCM’s

OK. I get the point. Gavin: “The GCM is reliable, it can predict. It predicts in conformity with what basic science (re CO2 and the greenhouse effect) tells us we should expect. The uncertainties regarding other feedbacks, climate responses etc notwithstanding.”

Jerry: “A host of uncertainties result in the modeller choosing values and setting boundaries etc in such a way that the result is not a prediction but a function of the modeller’s whim.”

Jerry I do think however that you need to write this all up in a meaningful way and submit for peer review. Surely if you have a substantial and justifiable criticism of a GCM that should be ventilated in the journals? With this I do not mean a criticism which simply reiterates that there are some uncertainties, but a criticism that impacts the overall confidence climate modellers seem to have in their product.

I mean a flight simulator is not identical to a plane but it does teach a novice how a plane responds in real flight – by and large. Is there any reason a GCM is not similar?

For the time being I will have to tentatively accept that the GCM provides at least an approximation of future global mean temperature.

So global mean temperature increases over time, now why should that bother me? I mean, surely the GCM can not reliably tell what for instance precipitation in my neighbourhood will be 25 years from now?

Gavin,

“[Response: There is no conflict here. Many groups are working on the data. – gavin]”

There’s conflict in the sense that funding is finite (in research or anything else). To put the question another way, where would you have the next $1M of funding go: into modelling or data collection?

[Response: It’s a false dichotomy and not up to me in any case. Scientific projects get funded on a competitive basis – if you have a good proposal, that’s tractable, interesting and novel it will (sometimes) get funded. I write proposals to get things that I want to work on funded, and other scientists do similarly. Strategic priorities are set much further up the chain. The issue with the long term data gathering is that this is funded by and large by weather services whose priorities don’t necessarily include the use of the data for climate purposes. This is a much bigger issue than $1 million could possibly solve. Should we be putting more money into a ‘National Climate Service’? Definitely. Will that money need to come out of the climate modelling budget? No. Climate modelling is just not that big a player (e.g. GISS climate modelling is less than 0.5% of NASA’s Earth Science budget). – gavin]

Re #266,

Gavin,

I am aware of just how tricksy his and others’ use of numbers and language is.

If you go back to my #133, I think I showed how, by ingenious use of language, he can say things that are undeniably misleading yet not entirely without justification. For instance, I showed how he could claim the three-quarters simply by not stating the period precisely (end of the 19th century is a bit vague).

That is how it is done.

I think I also showed that it relied on the use of figures that suit him. I do not think Lindzen is stupid and I expect he (as you note) knows exactly how much he is playing fast and loose. That said I think if taken to task he could show how his presentation is correct “in his own terms”.

From the GISS figures viewed in a certain way you can get 75% (1880-2003) for the WellMixedGHG and a bit over 50% for total forcings as I stated originally.

To sum up, I do not think blanket responses like “wildly incorrect” are as strong as explaining how his argument could be justified and what it relies on.

I showed that he went on to pick low values for the temperature increase (.6C +/- .15C) and to insist that models with 4C/doubling are typical.

I do not support such methods but I thought it interesting to show how such presentations can be created.

Best Wishes

Alexander Harvey

Re #261 Geraldo

“Phil Felyon (#259),

So provide the specific changes that were made to the model (mathematical equations and parameterizations) so we can determine if it is possible to determine the result from perturbation theory.”

This has nothing to do with what I posted! Do you agree that Vonk’s “very valid point” in fact wasn’t?

Re 249, Chris said: “Now if the tropical troposphere was not actually warming as much as models say, then that could mean still higher climate sensitivity (or the models actually underestimating surface warming), since the lapse rate feedback represents the most negative feedback (aside from the OLR). That’s because the more sharp the temperature drop with height, the stronger your greenhouse effect is”.

That’s wrong. IF the troposphere didn’t warm as much as the models say AND the lack of warming in the troposphere came because of an extra warming at the surface, THEN the climate sensitivity would be higher (I would rather say that the consequences come faster, but in the short term both are the same).

But IF the troposphere doesn’t warm as much as the models say AND the surface warms in the same way as predicted by the models, which is what is happening, THEN the models are showing a system that, overall, accumulates more heat than the observations, or, using Gavin’s terms, they show a system that has more difficulty losing heat to space than the observations reveal.

The models show the correct warming for the surface BECAUSE, although they are (incorrectly) considering a GHE far too strong, they rely on a delayed response of the surface temperatures to the GHE (you will recall statements saying that the warming in the middle term, like 50 years from now, won’t be stopped even if we stopped emitting CO2 right now). This delayed response of the surface temperatures in the models allows them to have the right prediction for today, and still a catastrophic prediction for tomorrow. Well, I just don’t buy it.

[Response: Unfortunately radiative transfer is not dependent on your shopping habits. – gavin]

That’s it, Gavin? Won’t you agree that, if we were to give “any” credit to the observations of tropospheric temperatures (you have already expressed your doubts about the measurements, but this is a what-if), then the models predict a system which accumulates more heat than observed? I mean, if they get everything else right but show a hotter troposphere, then there is more energy in their system than in the real system, isn’t there? If the tropical tropospheric temperatures were as measured, the models would not be letting as much energy escape to space as escapes in real life.

[Response: The difference would be tiny and swamped by the difference due to cloud changes or ocean heat accumulation. – gavin]

re 272 and delays:

This is trivial. That there are delays is trivial. The change in forcing due to CO2 is instantaneous, but the system requires time to respond to the change.

Start with a system of ODEs:

dx/dt = f(x,p), where p is a parameter. Find a static solution x = xo such that f(xo,p) = 0.

Now make a small INSTANTANEOUS perturbation in p: p -> p + epsilon

and expand everything in sight: x = xo + epsilon x1 + …

Then, at leading order in epsilon,

dx1/dt = df(xo,p)/dx x1 + df(xo,p)/dp

and wait for x1 to reach its steady state x1 = -[df(xo,p)/dx]^-1 df(xo,p)/dp.

It takes time for x1 to reach its static solution. Thus it takes time for x to reach its new static solution. That time is governed by the dynamics around the unperturbed system (df/dx). The deviation x1 is driven by the perturbation df/dp.

If the timescales encoded in df/dx are decades, then it will take (approximately) decades for the system to respond and for x1 to take on its new value.

Although this example is trivial it is inconceivable that something similar doesn’t happen in the climate.

My challenge to you is this:

Find a dynamical system, any dynamical system, that doesn’t require time in order to settle down to its new behavior after a change in its parameters.

I know nothing about atmospheric dynamics but a relaxation time on the order of decades would be my starting guess.
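The relaxation argument above can be made concrete for the scalar case. This is a minimal sketch under stated assumptions: a single variable, a hypothetical df/dx = a = -1/30 per year (a 30-year relaxation time) and df/dp = b = 1/30, integrated with forward Euler. For the continuous equation, x1 reaches 95% of its new steady state -b/a after ln(20)·τ ≈ 3τ, where τ = -1/a; the numerical result should land close to that.

```python
import math


def time_to_fraction(a, b, fraction=0.95, dt=0.01, t_max=1000.0):
    """Integrate dx1/dt = a*x1 + b from x1 = 0 with forward Euler.

    a plays the role of df/dx at the old equilibrium (a < 0 for stability) and
    b the role of df/dp. Returns the time at which x1 first reaches `fraction`
    of its steady state x1* = -b/a, together with x1*.
    """
    if a >= 0:
        raise ValueError("df/dx must be negative for a stable equilibrium")
    x1_star = -b / a
    x1, t = 0.0, 0.0
    while t < t_max:
        if abs(x1) >= fraction * abs(x1_star):
            return t, x1_star
        x1 += dt * (a * x1 + b)
        t += dt
    raise RuntimeError("steady state not reached within t_max")


# Hypothetical relaxation time of 30 years: a = -1/30 per year, b = 1/30.
t95, x1_star = time_to_fraction(a=-1.0 / 30.0, b=1.0 / 30.0)
print(round(t95, 1), round(30.0 * math.log(20.0), 1), x1_star)
```

The response time scales with -1/a (the dynamics of the unperturbed system), while the size of the eventual shift depends on b as well.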

Re #274 John E. Pearson:

That’s the theoretical side. Then there’s observations. Sea areas warm slower than land areas. The Southern hemisphere, mostly sea, warms slower than the Northern hemisphere. Look at any surface temperature data set. Obviously something is taking time to warm up; and it’s wet, salty and deep.

Phil (#217),

Direct from Tom Vonk’s comment:

> As those are short signals , the problem can be solved by a perturbation method where more or less everything can be considered constant and it wouldn’t imply anything about the skill to predict absolute values or variations over periods of hundreds of years when oceans , sun and ice cover kick in .

So I repeat: provide the exact changes to the equations and parameterizations so a simple perturbation analysis can be done. [edit]

Jerry

[Response: Maybe it is the medium, but do you have any idea how rude you sound? You might also want to ask yourself how Phil is supposed to know any more than you about a study that was done in 1991? Please be sensible. As usual though, you miss the point entirely. Of course you could fit some simple model to a single output of the GCM – look up Crowley (2000) or any number of 1-D energy balance models – but the point here is that a GCM which you claim cannot possibly work, clearly did. Thus you still have not provided any reason why your critique of models in general has any validity whatsoever. – gavin]

RE: 275 I agree. The thing is that Nylo in 272 wrote “(you will recall statements saying that the warming in the middle term, like 50 years from now, won’t be stopped even if we stopped emitting CO2 right now). This delayed response of the surface temperatures in the models allows them to have the right prediction for today, and still a catastrophic prediction for tomorrow. Well, I just don’t buy it.”

I was simply trying to point out that one should expect a lag time for ANY dynamical system to respond to a perturbation. This includes any and all models of the climate, as well as the climate itself.

Gerald (#260)

Why on earth do you keep on bothering people here regarding the well-posedness of the models? As far as I can tell you are a mathematician, and I’m an applied mathematician myself, so you should know perfectly well that much of our everyday technology is based on numerical simulations of systems that we do not know to be well posed.

The main example is the Navier-Stokes equations. It is not even known whether these equations have long-term smooth solutions with finite energy. Despite this, we use them to numerically design cars, aeroplanes, boats… The list goes on.

Ideally one would like to know that one is solving a well-posed problem, but if you are working with applications, the equations are often too complicated for this to be provable. However, the observed success of these various models means that they are close enough to being well posed to give us the stable behaviour we need in our numerical simulations.

If you trust numerical solutions of Navier-Stokes enough to step into a modern aeroplane, you should stop trying to bully people about technicalities and instead make a constructive contribution.

Re 272. Nylo, I am puzzled by your last paragraph. Do you expect an instantaneous surface temperature response to eliminate any radiative energy imbalance? It seems to me that the models are producing exactly the result I would expect, given the thermal lag of the oceans.

Jonas, thank you for your comment in 278. It gives me a bit of insight on what is going on here with Gerald Browning.

As an undergraduate student in aeronautical engineering, I was absolutely entranced by the development of the Navier-Stokes equations. It never occurred to us to ask whether they were “well posed.” We simply wanted to know whether they yielded useful results, which they certainly did, even when greatly simplified for specific applications. The same is true of climate models.

This “ill-posedness” business is nothing more than an irrelevant diversion as far as I am concerned. With a few more Gerald Brownings around, we never would have gotten to the moon.

Jonas (#278),

Well, as usual, this site has yet to produce someone who understands the definition of well-posedness. The compressible Navier-Stokes equations are well posed. The question of the long-term existence of their solutions in 3D is a different matter. You have managed to confuse the two concepts.

As I have said a number of times, unphysically large dissipation can hide a number of sins, including the ill-posedness of the hydrostatic system. If someone can run a model that is nowhere close to the continuum solution, does that mean the continuum system that the model “approximates” is well posed, or that the model is producing reasonable results? I have shown on Climate Audit that by choosing the forcing appropriately, one can obtain any solution one wants from a model. That does not mean the dynamics or physics in the model are accurate.
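The mechanics behind the claim about forcing can be shown on a scalar toy problem. If a model is du/dt = F(u) + g(t) and U(t) is any smooth target, then choosing g(t) = U'(t) - F(U(t)) makes U an exact solution whatever F is. This is the same construction used deliberately in the method of manufactured solutions for verifying code. The sketch assumes two made-up right-hand sides (`dynamics_a`, `dynamics_b`) and a made-up target. It illustrates only that a match obtained through a tuned forcing says nothing about F; it says nothing about how forcings are actually specified in GCMs.

```python
import math

def integrate(rhs, u0, t_end, dt):
    """Classical fourth-order Runge-Kutta for a scalar ODE du/dt = rhs(t, u)."""
    t, u = 0.0, u0
    for _ in range(round(t_end / dt)):
        k1 = rhs(t, u)
        k2 = rhs(t + dt / 2, u + dt / 2 * k1)
        k3 = rhs(t + dt / 2, u + dt / 2 * k2)
        k4 = rhs(t + dt, u + dt * k3)
        u += dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
        t += dt
    return u

def target(t):          # hypothetical trajectory we want the "model" to reproduce
    return math.sin(t) + 0.1 * t

def target_rate(t):     # its exact time derivative
    return math.cos(t) + 0.1

def dynamics_a(u):      # hypothetical dynamics A: linear damping
    return -u

def dynamics_b(u):      # hypothetical dynamics B: a completely different nonlinear law
    return u - u ** 3

def manufactured_forcing(dynamics):
    """g(t) = U'(t) - F(U(t)) makes U an exact solution of du/dt = F(u) + g(t)."""
    return lambda t: target_rate(t) - dynamics(target(t))

for name, dyn in (("A", dynamics_a), ("B", dynamics_b)):
    g = manufactured_forcing(dyn)
    u_end = integrate(lambda t, u: dyn(u) + g(t), target(0.0), 5.0, 0.01)
    print(name, round(u_end, 6), round(target(5.0), 6))
```

Both "models" land on the same target to within integration error, even though their dynamics have nothing in common.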

I have cited two manuscripts by Dave Williamson that are easy to read and understand and that show some of the problems with incorrect cascades and parameterizations. It is interesting that they are being ignored.

Jerry

[Response: Actually you haven’t cited them at all. Can we get a journal, year, doi or linked pdf? Similarly, you have not given a citation for any of your apparently seminal works where your thesis is proved in meticulous detail. – gavin]

Ron Taylor (#280),

One of the reasons that we got to the moon is that numerical analysts developed highly accurate integration methods to compute accurate trajectories.

At a conference I was informed by a colleague that the simulations of the rocket nozzles were completely in error. Essentially, the nozzles had to be over-engineered to hold the propulsion exhaust.

Jerry

Re 281:

Oh, you’re published at Climate Audit — why didn’t you say so? I’ll take that over JGR any day.

Specific references.

Phillips, T., …, D. L. Williamson (2004): Evaluating parameterizations in general circulation models. BAMS, 85(12), pp. 1903-1915.

Jablonowski, C., and D. L. Williamson (2006): A baroclinic instability test case for atmospheric model dynamical cores. Q.J.R.Met.Soc., 132, pp. 2943-2975.

Lu, C., W. Hall, and S. Koch: High-resolution numerical simulation of gravity wave-induced turbulence in association with an upper-level jet system. American Meteorological Society 2006 Annual Meeting, 12th Conference on Aviation Range and Aerospace Meteorology.

Browning, G. and H.-O. Kreiss: Numerical problems connected with weather prediction. Progress and Supercomputing in Computational Fluid Dynamics, Birkhauser.

Jerry

[Response: Thanks. I put in the links to PDFs for the first three and to the book for the last. – gavin]

[Further response: What point are you making with these papers? I can’t see anything in the first two that is relevant. Both sets of authors are talking about test beds for improving GCMs, and if they thought the models were useless, I doubt they’d be bothering. Jablonowski is organising a workshop in the summer on this where most of the model dynamical cores will be tested, but the idea is not to show they are all ill-posed! I don’t see the connection to the Lu et al. paper at all. -gavin]

Gerald #281

No, Gerald, I have not misunderstood the definition of a well-posed problem. In order to be well posed, a problem should

a) Have a solution at all

b) Given the initial/boundary data that solution should be unique.

c) The solution should depend continuously on the given data.

Your comments have focused on point c), but the other two are also part of the definition of well-posedness.
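Condition c) is the one that fails in Hadamard's classic example of an ill-posed problem: the heat equation run backward in time. This minimal sketch assumes a periodic domain and a single Fourier mode eps·sin(kx) as the data. Both choices are made here for illustration and are not taken from the discussion. The function name `mode_amplitude` is hypothetical.

```python
import math

def mode_amplitude(eps, k, t, backward=False):
    """Amplitude at time t of initial data eps*sin(k x) for u_t = +/- u_xx.

    Forward heat equation:  eps * exp(-k^2 t)  (well posed, perturbations decay)
    Backward heat equation: eps * exp(+k^2 t)  (Hadamard's ill-posed example)
    """
    sign = 1.0 if backward else -1.0
    return eps * math.exp(sign * k * k * t)

t = 0.1
for k in (1, 5, 10, 20):
    eps = 1.0 / k ** 2        # the perturbation of the data shrinks as k grows ...
    print(k, eps,
          mode_amplitude(eps, k, t),                  # ... and the forward solution shrinks too
          mode_amplitude(eps, k, t, backward=True))   # ... but the backward solution explodes
```

As k grows, the data perturbation 1/k² goes to zero while the backward solution at t = 0.1 grows without bound. The solution therefore does not depend continuously on the data. The forward problem damps the same perturbation.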

In fact, if you are going to object to simulations, whether or not the problem is well posed isn’t even the best thing to complain about. Even if the problem is well posed, it is far more important to know whether it is well-conditioned. Just knowing that the solution depends continuously on the data is of little value.
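The difference between well-posed and well-conditioned shows up even in a 2×2 linear system. This sketch assumes the hypothetical matrix A = [[1, 1], [1, 1 + δ]] with small δ. The solution is a linear, and hence continuous, function of the right-hand side b. Even so, a change η in b can move the solution by about η/δ.

```python
def solve_2x2(a, b):
    """Solve a 2x2 linear system a x = b by Cramer's rule."""
    (a11, a12), (a21, a22) = a
    det = a11 * a22 - a12 * a21
    x1 = (b[0] * a22 - a12 * b[1]) / det
    x2 = (a11 * b[1] - a21 * b[0]) / det
    return x1, x2

delta = 1e-8                               # hypothetical near-singularity
A = ((1.0, 1.0), (1.0, 1.0 + delta))
b = (2.0, 2.0)
b_perturbed = (2.0, 2.0 + 1e-6)            # a 1e-6 change in the data ...

x = solve_2x2(A, b)                        # exact answer is (2, 0)
x_pert = solve_2x2(A, b_perturbed)
change = max(abs(p - q) for p, q in zip(x, x_pert))
print(x, x_pert, change)                   # ... moves the solution by roughly 1e-6 / delta
```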

Even for problems that are not well posed we can often do a lot. If you talk to the people doing radiology, you will find that they have to compute an inverse Radon transform, and that problem is known to be ill-posed. However, there are ways around that too.
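The comment does not say which techniques are used in tomography. One generic way around an ill-conditioned or ill-posed linear problem is Tikhonov regularization, which minimizes |Ax - b|² + α|x|². This sketch uses the same kind of hypothetical 2×2 system. The matrix, the noise and α = 1e-4 are all illustrative assumptions.

```python
def solve_2x2(a, b):
    """Solve a 2x2 linear system a x = b by Cramer's rule."""
    (a11, a12), (a21, a22) = a
    det = a11 * a22 - a12 * a21
    return ((b[0] * a22 - a12 * b[1]) / det,
            (a11 * b[1] - a21 * b[0]) / det)

def tikhonov_2x2(a, b, alpha):
    """Minimise |A x - b|^2 + alpha |x|^2 via the normal equations (A^T A + alpha I) x = A^T b."""
    (a11, a12), (a21, a22) = a
    ata = ((a11 * a11 + a21 * a21 + alpha, a11 * a12 + a21 * a22),
           (a12 * a11 + a22 * a21, a12 * a12 + a22 * a22 + alpha))
    atb = (a11 * b[0] + a21 * b[1], a12 * b[0] + a22 * b[1])
    return solve_2x2(ata, atb)

delta = 1e-8
A = ((1.0, 1.0), (1.0, 1.0 + delta))
b = (2.0, 2.0)
b_noisy = (2.0, 2.0 + 1e-6)          # small hypothetical "measurement noise"

def solve(rhs, alpha):
    # alpha = 0 is solved directly: forming A^T A would square the ill-conditioning.
    return solve_2x2(A, rhs) if alpha == 0.0 else tikhonov_2x2(A, rhs, alpha)

shifts = {}
for alpha in (0.0, 1e-4):
    x, x_noisy = solve(b, alpha), solve(b_noisy, alpha)
    shifts[alpha] = max(abs(p - q) for p, q in zip(x, x_noisy))
    print(f"alpha={alpha:g}  solution={x_noisy}  shift from noise={shifts[alpha]:.2e}")
```

The regularized answer is close to (1, 1), the minimum-norm solution, rather than the (2, 0) solution of the exact system. Stability against noise is bought with a controlled bias, which is the usual trade-off.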

For something like a climate model you probably don’t even need to look for classical solutions, since one is interested in long-term averages of different quantities. Knowing that, e.g., a weak solution or a viscosity solution exists, together with having an ergodic attractor in the phase space of the model, would give many correct averages, even if the system is ill-conditioned and chaotic within the attractor.
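The point about averages on an attractor can be illustrated with the Lorenz-63 system. It is used here purely as a stand-in: it is not mentioned in the discussion and is not a climate model. This sketch assumes the classic parameters σ = 10, ρ = 28, β = 8/3. Two runs whose initial states differ by 1e-8 soon disagree completely on the instantaneous state, yet their long-time means of z agree closely.

```python
def lorenz_rhs(x, y, z, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz-63 system (classic parameter values)."""
    return sigma * (y - x), x * (rho - z) - y, x * y - beta * z

def rk4_step(x, y, z, dt):
    """One classical fourth-order Runge-Kutta step."""
    k1 = lorenz_rhs(x, y, z)
    k2 = lorenz_rhs(x + dt / 2 * k1[0], y + dt / 2 * k1[1], z + dt / 2 * k1[2])
    k3 = lorenz_rhs(x + dt / 2 * k2[0], y + dt / 2 * k2[1], z + dt / 2 * k2[2])
    k4 = lorenz_rhs(x + dt * k3[0], y + dt * k3[1], z + dt * k3[2])
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip((x, y, z), k1, k2, k3, k4))

def run(initial, dt=0.01, t_end=500.0, spin_up=20.0):
    """Integrate from `initial`; return the x trajectory and the time mean of z after spin-up."""
    x, y, z = initial
    xs, z_sum, count = [], 0.0, 0
    n_spin = round(spin_up / dt)
    for step in range(round(t_end / dt)):
        x, y, z = rk4_step(x, y, z, dt)
        xs.append(x)
        if step >= n_spin:
            z_sum += z
            count += 1
    return xs, z_sum / count

xs_a, mean_z_a = run((1.0, 1.0, 1.0))
xs_b, mean_z_b = run((1.0 + 1e-8, 1.0, 1.0))   # tiny change in the initial state

max_gap = max(abs(a - b) for a, b in zip(xs_a, xs_b))
print(f"largest difference in x between the runs: {max_gap:.1f}")
print(f"time-mean z: {mean_z_a:.2f} vs {mean_z_b:.2f}")
```

The run length and spin-up are arbitrary choices. Shorter runs give noisier means.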

I had a look at what you wrote over at Climate Audit too, and calling the people who are writing at RC “pathetic” for not knowing the definition of a well-posed problem in mathematics isn’t exactly in the spirit of the good scientific discussion you claim to want.

While I’m at it, I can also let you know that the performance of a modern supercomputer is not given as the single-processor flops times the number of processors. The TOP500 ranking is based on running a standardised benchmark (LINPACK) on the whole machine. Take a look at http://www.top500.org if you want to get up to date on the subject.
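The TOP500 list reports two figures. The first is a theoretical peak (Rpeak), which is essentially the per-processor peak times the number of processors. The second is a measured rate (Rmax) from the LINPACK benchmark. This back-of-the-envelope sketch uses an entirely hypothetical machine to show how the two relate. None of the numbers describe a real system.

```python
def peak_flops(processors, clock_hz, flops_per_cycle):
    """Theoretical peak (Rpeak): every processor doing its maximum work on every cycle."""
    return processors * clock_hz * flops_per_cycle

# Hypothetical machine; these numbers are illustrative only.
rpeak = peak_flops(processors=10_000, clock_hz=2.5e9, flops_per_cycle=4)
rmax = 7.0e13   # hypothetical sustained rate measured by a benchmark run

efficiency = rmax / rpeak
print(f"Rpeak = {rpeak:.2e} flop/s, Rmax = {rmax:.2e} flop/s, efficiency = {efficiency:.0%}")
```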

Perhaps some might consider the following as just a difference in semantics, but I believe the use of the word “predictions” for GCMs or any other digital models that attempt to describe future possible outcomes is overstating their purpose. What these models do, to the best of my understanding, is to present what could occur given different assumptions, including human choices of future energy use and economic, environmental and geopolitical decisions, such as those described by the SRES scenarios in the IPCC reports.

Models aren’t crystal balls as much as they are roadmaps with many different routes available. If you start out in Chicago and take one route, you might end up in Seattle, another and you’d end up in Miami. In the same vein, much depends on the scenario or combination of scenarios taken.

A more accurate word than prediction would be a projection of possible outcomes that depend on the choices that we make.

Jerry, OK, so you don’t buy the models. It doesn’t alter the fact that CO2 is a greenhouse gas or that CO2 has increased 38%. It doesn’t change the fact that the planet is warming. In fact, all it changes is how much insight into the ultimate effects of warming we would have independent of paleoclimate. And paleoclimate provides no comfort, since it provides little constraint on the high side of CO2 forcing. In fact, if the models are wrong, the need for action is all the more urgent, because we are flying blind.

Models are essential for directing our efforts toward mitigation and adaptation, so we are going to have models. The question is how to make them as good as they can be, given the constraints we operate under. So if you want to “use your powers for good,” great. However, saying “You’re all wrong,” isn’t particularly productive for anyone, including you. I’m having a hard time seeing what you get out of it.

Gerald, what point are you trying to make? Of course the numerical integration methods used for the trajectories were accurate. And I frankly doubt your comment about the engines, which I assume refers to the Saturn V. But, even if true, what on earth does that have to do with anything being discussed here?

Re #288

Actually, combustion instabilities in rocket motors were a major problem; rockets exploding on the pad were a common occurrence in the 1950s. Several colleagues of mine on both sides of the Atlantic worked on the problem, and it was solved by experiments, not computations.

I repeat: so the GCMs indicate a persistent increase in global mean temperature – why is that such a train smash?

Why should it have much policy impact?

[Response: Please read the IPCC WG2 report. -gavin]

Lawrence Brown (#286),

And if the projections (or whatever you want to call them) are completely incorrect and you base some kind of climate modification on them, then what?

One of the first tests of any model should be obtaining a similar solution with decreasing mesh size. Williamson has shown this is not the case and it is appalling that these standard tests in numerical analysis have not been performed on models that are being used to determine our future.
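A grid-refinement test of the kind described can be sketched on a problem with a known exact solution. This sketch assumes the 1-D Poisson problem -u'' = π² sin(πx) with u(0) = u(1) = 0 and a second-order centred-difference scheme. It is a textbook illustration, not the procedure used for GCM dynamical cores. The error should fall by about a factor of four each time the mesh is halved, which corresponds to an observed order near 2.

```python
import math

def solve_poisson(n):
    """Solve -u'' = pi^2 sin(pi x), u(0) = u(1) = 0, with centred differences on n intervals.

    Returns the max-norm error against the exact solution u = sin(pi x).
    """
    h = 1.0 / n
    m = n - 1                                   # number of interior unknowns
    xs = [(i + 1) * h for i in range(m)]
    rhs = [h * h * math.pi ** 2 * math.sin(math.pi * x) for x in xs]
    # Thomas algorithm for the tridiagonal stencil (-1, 2, -1).
    c_prime = [0.0] * m
    d_prime = [0.0] * m
    c_prime[0] = -0.5
    d_prime[0] = rhs[0] / 2.0
    for i in range(1, m):
        denom = 2.0 + c_prime[i - 1]            # b_i - a_i * c'_{i-1} with a_i = -1, b_i = 2
        c_prime[i] = -1.0 / denom
        d_prime[i] = (rhs[i] + d_prime[i - 1]) / denom
    u = [0.0] * m
    u[-1] = d_prime[-1]
    for i in range(m - 2, -1, -1):              # back substitution
        u[i] = d_prime[i] - c_prime[i] * u[i + 1]
    return max(abs(ui - math.sin(math.pi * x)) for ui, x in zip(u, xs))

errors = {n: solve_poisson(n) for n in (16, 32, 64, 128)}
for coarse, fine in ((16, 32), (32, 64), (64, 128)):
    order = math.log2(errors[coarse] / errors[fine])
    print(f"n={fine:4d}  max error={errors[fine]:.3e}  observed order={order:.2f}")
```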

Jerry

[Response: What rubbish. A) these tests are regularly done for most of the model dynamical cores, and B) the solutions are similar as the mesh size decreases, but obviously small scale features are better resolved. – gavin]

Jonas (#285),

You seem to conveniently have forgotten the interval of time mentioned in Peter Lax’s original theory. You might look in Richtmyer and Morton’s classic book on the initial value problem on pages 39-41.

Jerry

Well, this site is selectively editing comments. It published Tapio Schneider’s comment because it supported the political goals of the site, but would not show either my response or Pat Frank’s response. We both had to post our comments on Climate Audit so they could be seen. Such selective editing is clearly for political reasons, i.e., the site cannot allow rigorous scientific criticism.

I doubt this comment will be shown.

Jerry

[Response: Rubbish again. Criticism is fine – ad homs and baseless accusations are not. If you just stuck to the point, you’d be fine. Frank’s response has not been submitted – even after I told him what to fix to get past the spam filters. It’s really easy – don’t insult people and don’t get tedious. – gavin]

Gavin (#291),

And you claim I am rude. Only in response to your insults. Williamson’s tests show very clearly the problem with inordinately large dissipation, i.e., the vorticity cascade is wrong and the parameterizations are necessarily inaccurate, exactly as I have stated. Anyone who takes the time to read the manuscripts can see the problems and can judge for themselves.

Jerry

[Response: Presumably they will get to this line in the conclusions of J+W(2006): “In summary, it has been shown that all four dynamical cores with 26 levels converge to within the uncertainty … “. Ummm…. wasn’t your claim that the models don’t converge? To be clearer, it is your interpretation that is rubbish, not the papers themselves which seem to be very professional and useful.- gavin]

Gavin (#294),

And what is the uncertainty caused by, and how quickly do the models diverge?

By exponential growth in the solution (although the original perturbation was tiny compared to the size of the jet), and in less than 10 days. Also note how the models use several forms of numerical gimmicks (hyperviscosity and a sponge layer near the upper boundary) for the “dynamical core” tests, i.e., for what was supposed to be a test of the numerical approximations of the dynamics only. And finally, the models have not approached the critical mesh size in the runs.

Jerry

Gavin

Thank you for your earlier comments about the dangers of methane feedbacks. You didn’t mention the other “missing” feedbacks: failing sinks, burning forests, etc. Are they OK?

I have an email from Professor Balzter, which includes the following:

also

I still fret about the possible impact of positive feedbacks. A positive feedback, if I understand the term, only has an impact after global warming is under way. Surely this makes it very difficult to incorporate such feedbacks into GCMs calibrated on past events.
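In the standard linear bookkeeping for feedbacks, a no-feedback warming dT0 is amplified to dT = dT0 / (1 - f), where f is the net feedback factor. This sketch uses hypothetical numbers: dT0 = 1.2 K and the listed values of f are illustrative, not estimates. By iterating the loop, it shows that a feedback acts on warming that has already occurred. It also shows how quickly the amplification grows as f approaches 1.

```python
def equilibrium_warming(dt0, f):
    """Linear feedback: total response dT = dT0 / (1 - f), valid only for f < 1."""
    if f >= 1.0:
        raise ValueError("f >= 1: the linear feedback series does not converge")
    return dt0 / (1.0 - f)

def iterate_feedback(dt0, f, rounds=200):
    """Each round, the feedback adds f times the current warming on top of the no-feedback response."""
    dt = dt0
    for _ in range(rounds):
        dt = dt0 + f * dt
    return dt

DT0 = 1.2   # hypothetical no-feedback warming, in K
for f in (0.0, 0.3, 0.5, 0.65, 0.8):
    print(f, round(equilibrium_warming(DT0, f), 2), round(iterate_feedback(DT0, f), 2))
```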

Since you are a betting man, what odds would you give on serious effects from positive feedbacks catching us by surprise?

P.S. Can you help me make the previous question more precise?

Gerald Browning (#291): Well, on one thing, we are agreed. I would not trust model output sufficiently to recommend a mitigation measure other than reduction of greenhouse gas emissions. When you find you are in a mine field, the only way you can be sure to get out is the way you came in. Indeed, we could guess this even based on the known physics of the greenhouse effect. The value of models is that they give us some insight into how hard we need to step on the brakes, and how best to direct our mitigation efforts.

So, Jerry, given that without the models we do not have a way of establishing a limit on risk or of directing our efforts to best effect, is it your contention that dynamical modeling of climate is impossible given current computing resources and understanding? Or do you have concrete suggestions for improving the models? I suspect that, because without the models mitigation is an intractable problem, we are stuck with making the models work. It is up to you whether you want to contribute to that effort, but it will go on whether you do or do not.

Phil (#289),

Thank you for the supporting facts.

Jerry

The article by Jablonowski and Williamson refers to a Tech Note at NCAR that I suggest be read, as it contains more details about the tuning of the unphysical dissipation, which is of considerable interest.

Jerry

Gerald #292.

Lax is a great mathematician, but his original papers are not among my readings. R&M, on the other hand, I have read, some time ago. Unless my memory is wrong (I’m at home and don’t have the book available here), the initial part of the book covers linear differential equations and the Lax-Richtmyer equivalence theorem for finite-difference schemes.

So, if by “the interval of time” you refer to the fact that this theorem only assumes that the solution exists from time 0 up to some time T, and that for, e.g., Navier-Stokes it is known that such a T, depending on the initial data, exists, then I understand what you are trying to say. However, the problem with this is that unless you can give a proper estimate of T, you cannot know that the time step you choose in the difference scheme is not greater than T. If it is, the analysis for small times will not apply to the computation. So long-term existence of solutions is indeed important even when using the equivalence theorem.

Furthermore, the equations used here are not linear, and for nonlinear equations neither of the two implications in the theorem holds in general.
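For a linear, constant-coefficient problem, the equivalence theorem says that a consistent scheme converges if and only if it is stable. This sketch assumes the explicit forward-time, centred-space (FTCS) scheme for u_t = u_xx on a periodic grid. The scheme is consistent for every ratio r = Δt/Δx², but von Neumann analysis shows it is stable only for r ≤ 1/2. With r = 0.6, rounding errors in the shortest waves grow until they swamp the solution. The function name `ftcs_heat` and the grid size are illustrative choices. Nothing here carries over automatically to the nonlinear case.

```python
import math

def ftcs_heat(r, n_points=64, n_steps=500):
    """Explicit FTCS scheme for u_t = u_xx on a periodic grid, with r = dt / dx^2.

    Starts from a smooth sine wave and returns max |u| after n_steps.
    """
    dx = 2.0 * math.pi / n_points
    u = [math.sin(i * dx) for i in range(n_points)]
    for _ in range(n_steps):
        # u[i - 1] wraps around at i = 0 and the modulo wraps at the right end (periodic grid).
        u = [u[i] + r * (u[i - 1] - 2.0 * u[i] + u[(i + 1) % n_points])
             for i in range(n_points)]
    return max(abs(v) for v in u)

for r in (0.4, 0.5, 0.6):
    print(r, ftcs_heat(r))
```

For r = 0.4 and 0.5, the sine wave decays smoothly as the heat equation says it should. For r = 0.6, the result is dominated by amplified rounding noise.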

There is no doubt that there are interesting practical and purely mathematical questions here, but if you are worried about the methods used, try to make a constructive contribution to the field rather than treating people here like fools and calling them names at other web sites. I’m not working with climate or weather simulation, but if I have doubts about something I read outside my own field, I will politely ask the practitioners for an explanation or a good reference rather than trying to bully them.