Yesterday was the day that NASA, NOAA, the Hadley Centre and Berkeley Earth delivered their final assessments for temperatures in Dec 2020, and thus their annual summaries. The headline results have received a fair bit of attention in the media (NYT, WaPo, BBC, The Guardian etc.) and the conclusion that 2020 was pretty much tied with 2016 for the warmest year in the instrumental record is robust.

There is some more background here:

A bit more background on the temperature anomalies in 2020, which were statistically tied with 2016 for the warmest year in the instrumental record. pic.twitter.com/y3L6vgVnc3

— Gavin Schmidt (@ClimateOfGavin) January 14, 2021

[Note we will work on the model-observation comparison page to add the 2020 data point to the graphs [DONE], and update the datasets to their latest versions, but nothing dramatic will change – the latest observations remain pretty much in line with what models predicted. ]

But there are a few issues that readers here might appreciate that go beyond what usually gets reported.

How does ENSO affect annual temperatures?

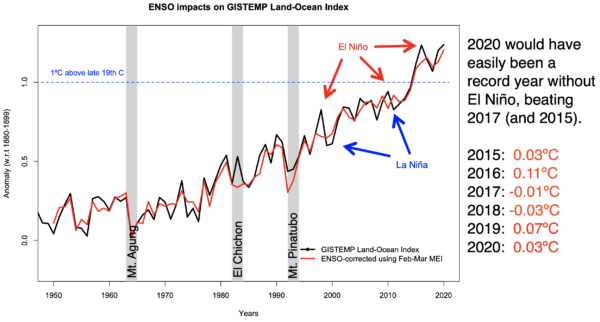

If you do a regression of the year-to-year variations in global temperature, you’ll find that the highest correlations are with the spring ENSO index (the February-March average to be specific, but almost any index from the winter/spring works equally well). Using that regression, you can estimate that the 2016 El Niño added 0.11ºC to the global temperatures in that year, and that we would have expected a much smaller 0.03ºC for 2020 (given the slight ENSO positive conditions early in the year). However, in the map of 2020 anomalies, the tropical Pacific looks to be (on average) slightly negative in phase, driven by the emerging La Niña event this fall/winter.

That suggests that we could usefully build a more complex connection between the global mean and ENSO – either with more predictors (say Feb/Mar but also Oct/Nov?) or by using a lagged model on the monthly anomalies. I’d be interested in any results people get and if it changes the ENSO-corrected annual timeseries substantially.
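For anyone who wants to try this, here is a minimal sketch of the "more predictors" option. It is not the regression used for the numbers above: the data are synthetic, the two ENSO indices are drawn independently (real spring and autumn indices are correlated), and the names `spring`, `autumn`, `ols` and `fit_two_predictors` are hypothetical. The detrending step is also an assumption about how to isolate the year-to-year variations.

```python
import random

def ols(x, y):
    """Fit y = a + b*x by ordinary least squares; return (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b

def fit_two_predictors(x1, x2, y):
    """Fit y = c + b1*x1 + b2*x2 by solving the 2x2 normal equations."""
    n = len(y)
    m1, m2, my = sum(x1) / n, sum(x2) / n, sum(y) / n
    d1 = [v - m1 for v in x1]
    d2 = [v - m2 for v in x2]
    dy = [v - my for v in y]
    s11 = sum(a * a for a in d1)
    s22 = sum(b * b for b in d2)
    s12 = sum(a * b for a, b in zip(d1, d2))
    s1y = sum(a * c for a, c in zip(d1, dy))
    s2y = sum(b * c for b, c in zip(d2, dy))
    det = s11 * s22 - s12 * s12
    b1 = (s1y * s22 - s2y * s12) / det
    b2 = (s2y * s11 - s1y * s12) / det
    return my - b1 * m1 - b2 * m2, b1, b2

# Synthetic stand-in data, NOT real observations.
random.seed(1)
years = list(range(1980, 2021))
spring = [random.gauss(0.0, 0.8) for _ in years]  # hypothetical Feb/Mar index
autumn = [random.gauss(0.0, 0.8) for _ in years]  # hypothetical Oct/Nov index
temps = [0.018 * (yr - 1980) + 0.07 * s + 0.03 * a + random.gauss(0.0, 0.03)
         for yr, s, a in zip(years, spring, autumn)]

# Step 1: remove the linear trend, leaving the year-to-year variations.
a0, b0 = ols(years, temps)
resid = [t - (a0 + b0 * yr) for yr, t in zip(years, temps)]

# Step 2: regress those variations on both ENSO predictors at once.
_, b_spring, b_autumn = fit_two_predictors(spring, autumn, resid)

# Step 3: subtract the fitted ENSO contribution to get a corrected series.
corrected = [t - b_spring * s - b_autumn * a
             for t, s, a in zip(temps, spring, autumn)]
```

With real data you would replace the synthetic lists with an annual temperature series and the two seasonal index averages, then compare `corrected` against the single-predictor version.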

What are the uncertainties in these estimates?

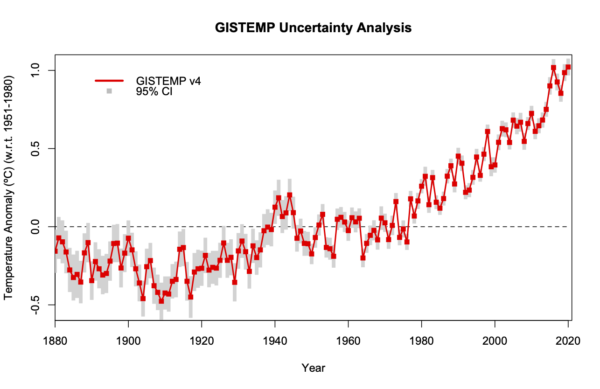

There have been some real advances over the years in how we think about the uncertainty in these estimates. The work with the HadCRUT ensemble, the Berkeley Earth statistical model and the work in Lenssen et al (2019) for GISTEMP, have all gone way beyond the old style of estimates from a decade ago. But there are some aspects of the uncertainty that remain hard to analyse – for instance, it isn’t quite right to assume the margin for one year is independent of the margin for the next year – since they will have been similarly affected by the station network at these times or the homogeneity adjustments which will be similar for both. So while the probabilities for record years given here are reasonable, they may be due for a (minor) revision in the near future.
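To see why the independence assumption matters, here is a small sketch under simplifying assumptions that are mine, not those of any of the groups above: both years have the same Gaussian standard error `sigma`, and their errors have correlation `rho`. The standard deviation of the difference between the two years is then `sigma * sqrt(2 * (1 - rho))`, so shared errors shrink the uncertainty on the difference. The input values are illustrative placeholders, not the published numbers.

```python
import math

def prob_warmer(diff, sigma, rho):
    """Probability that year A was truly warmer than year B.

    diff  : measured anomaly of A minus anomaly of B (degC)
    sigma : assumed 1-sigma error on each annual anomaly (degC)
    rho   : assumed correlation between the two errors (must be < 1)
    """
    sd_diff = sigma * math.sqrt(2.0 * (1.0 - rho))
    z = diff / sd_diff
    # Standard normal CDF written with the error function.
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

# Illustrative inputs only: a 0.01 degC gap and a 0.025 degC standard error.
for rho in (0.0, 0.5, 0.9):
    print(rho, round(prob_warmer(0.01, 0.025, rho), 3))
```

For a positive measured difference, the probability rises as `rho` increases, which is the direction in which the record-year probabilities would be revised if the errors are positively correlated.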

The perennial issue of the Arctic coverage is now almost done with. HadCRUT5 now extrapolates into the Arctic as well, and the upcoming revisions to the NOAA methodology (Vose et al, in press) do the same as well as ingesting Arctic buoy data. This will effectively eliminate the cool bias that resulted from only partially weighting the Arctic changes and reduce the difference between the products to almost negligible values (except where the HadSST and ERSST products differ).

Structural uncertainty in satellite records

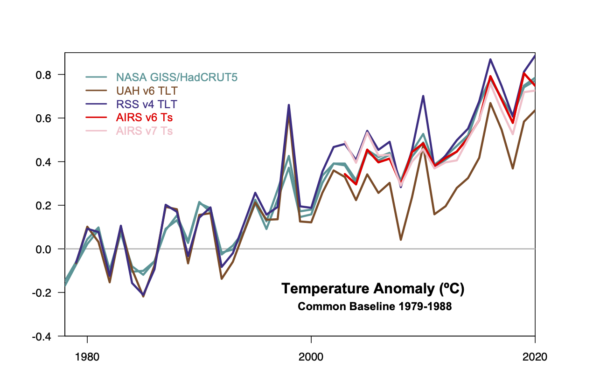

The main focus of these annual announcements is on the in situ land station/ocean buoy/ship data compilations, but as many will know there are a number of satellite products of related variables that offer an independent view of recent trends. Specifically, there are the MSU TLT products (from RSS and UAH) and the AIRS instrument data (flying on NASA’s Aqua satellite since 2003). These products are the combination of raw data (brightness temperatures in the microwave band and IR band respectively) together with complex retrieval algorithms which correct for the presence of clouds or surface emissivity or atmospheric distortions of various sorts. As such, the retrievals are often updated as improved methods are found, or calibration targets refined, or corrections found.

RSS retrievals are on version 4, UAH on version 6, and the AIRS retrievals have just moved to version 7. At each new version, the whole record is reprocessed and while the new results are often highly correlated with the older versions, trends can sometimes be quite different. This ‘structural’ uncertainty in the long term is often neglected when comparing these observations with other products or model output. Nonetheless, it is a significant issue. To illustrate this, note the difference between UAH and RSS below – highly correlated year-to-year, but radically different trends (and therefore interpretations) over the length of the record. For reference, the GISTEMP and HadCRUT5 products can barely be distinguished.

Similarly, the two versions of the AIRS product (v6 (red) and v7 (pink)) are well-correlated from year to year, but diverge notably in the early years of that record (2003-2006). The point being that structural issues in satellite products can have much larger impacts than structural issues in the surface station products (even if you consider the polar problem). Only drawing conclusions that are robust to these issues seems sensible.

And one pet peeve.

It seems I need to say this every year, but attempts to give a high-precision absolute temperature value for a single year are scientifically invalid. Our knowledge of the absolute global mean temperature has an uncertainty of about 0.5ºC, while the uncertainty in the annual mean anomaly is more like 0.05ºC. You don’t get an accurate number by adding an inaccurate one to an accurate one. What happens when this occurs is that updates in the absolute global mean (because of a new reanalysis, better observational data, etc.) can dwarf the year-to-year anomaly and you end up with an ‘absolute’ number from 24 years ago weirdly being larger than an absolute number today:

One example is sufficient to demonstrate the problem. In 1997, the NOAA state of the climate summary stated that the global average temperature was 62.45ºF (16.92ºC). The page now has a caveat added about the issue of the baseline, but a casual comparison to the statement in 2016 stating that the record-breaking year had a mean temperature of 58.69ºF (14.83ºC) could be mightily confusing. In reality, 2016 was warmer than 1997 by about 0.5ºC!

Just don’t do it.
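The arithmetic behind this can be sketched in a few lines. The Fahrenheit values are the ones quoted above; combining the two uncertainties in quadrature assumes they are independent, which is my simplification.

```python
import math

def f_to_c(temp_f):
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0

# 'Absolute' values quoted in the 1997 and 2016 summaries.
abs_1997 = f_to_c(62.45)
abs_2016 = f_to_c(58.69)

# Naively differencing them makes 2016 look about 2 degC colder than 1997,
# because the assumed baseline changed between the two reports.
apparent_change = abs_2016 - abs_1997

# Uncertainty of an absolute value = baseline and anomaly errors combined
# (assumed independent, so added in quadrature).
SIGMA_BASELINE = 0.5   # degC, absolute global mean
SIGMA_ANOMALY = 0.05   # degC, annual mean anomaly
sigma_absolute = math.hypot(SIGMA_BASELINE, SIGMA_ANOMALY)

print(round(abs_1997, 2), round(abs_2016, 2), round(apparent_change, 2))
print(round(sigma_absolute, 3))
```

The combined uncertainty stays at essentially 0.5ºC: the precise anomaly contributes almost nothing, so the 'absolute' number is only as good as the baseline.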

References

- N.J.L. Lenssen, G.A. Schmidt, J.E. Hansen, M.J. Menne, A. Persin, R. Ruedy, and D. Zyss, "Improvements in the GISTEMP Uncertainty Model", Journal of Geophysical Research: Atmospheres, vol. 124, pp. 6307-6326, 2019. http://dx.doi.org/10.1029/2018JD029522

- R.S. Vose, B. Huang, X. Yin, D. Arndt, D.R. Easterling, J.H. Lawrimore, M.J. Menne, A. Sanchez‐Lugo, and H.M. Zhang, "Implementing Full Spatial Coverage in NOAA’s Global Temperature Analysis", Geophysical Research Letters, vol. 48, 2021. http://dx.doi.org/10.1029/2020GL090873

Is the plan for this blog to do more interesting climate science? It’s just not that interesting tracking temperatures like a horse race unless there’s more understanding on what’s driving the natural variations. People can always go to my blog, where I have a new post on nonlinear impact in power spectra analysis

https://geoenergymath.com/2021/01/16/nonlinear-generation-of-power-spectrum-enso/

If 2020 was tied with 2016 as the warmest, then we can say there was a pause in warming since 2016, with a slight cooling in 2017-2019. Am I right? Graphs don’t lie.

:)

Similarly, if a man can claim to be a woman, and vice-versa, and people actually agree to his face that it is so, then it is perfectly acceptable that those same folks can agree that 58.69 deg F is warmer than 62.45 deg F. You cain’t make this stuff up! This is where we are today. The lunatics are running the asylum!

:)

The 2020 global average may have been tied with the warmest, but here in the Pacific Northwest we had a moderate summer. Not many super hot days. I was pleased about that!

#2, KIA–

No, because a sensible person wouldn’t use “pause in warming” or “slight cooling” to describe random variability as if it were meaningful. It’s misleading, because what we really care about is the characteristics of the trend–which will not be detectable anyway over spans of a couple of years.

https://hubpages.com/politics/When-Did-Global-Warming-Stop

Is a post really published if nobody comments on a trifling grammatical error rather than anything of substance? “How does ENSO effect annual temperatures?” should be “affect.” You’re welcome.

Over the years, it has been astounding to watch the weather effects of a relatively small area of the Pacific Ocean heating by less than a handful of degrees C and then flowing toward South America. Watching El Niño and La Niña translate into significant worldwide weather events is doubly so.

Now compare that to the Arctic Ocean, which has a far larger area and is in the process of transitioning from a below-zero (-C), high-albedo area to an above-zero (+C), low-albedo area, as well as releasing significant amounts of latent heat in the process: a difference of tens of degrees C, far more than what has triggered events in the Western Pacific. With roughly half the Arctic Ice Cap lost during the summer months, human impacts have already knocked the Polar Vortex off its commanding position at the North Pole. It has intensified severe weather events throughout the Northern Hemisphere, displaced large numbers of climate refugees from hither and yon, caused unknown numbers of species extinctions, adversely affected food distribution, and increased malnutrition and starvation deaths, to name just a few of the most obvious impacts. All in all, I feel that the attention offered to the Arctic disruption is far less than it warrants.

Leif, the equatorial Pacific is important because that is the central concentration of heat received from the sun, so it has the most energy to be released as well. By contrast, the poles hold far less heat. The stratospheric polar vortex is connected to equatorial QBO and ENSO cycles according to many sources.

https://acp.copernicus.org/articles/18/8227/2018/acp-18-8227-2018.pdf

#2 Mr KIA: Another thing you might note is that 2016 was coming off an El Niño event that had warmed the atmosphere. This dissipated as the year went on but must have had an effect on that year. There was no El Niño event, though one had been forecast. Let us see what happens when we have another El Niño.

@KIA…”If 2020 was tied with 2016 as the warmest, then we can say there was a pause in warming since 2016…”

Nope. To say so would be scientific nonsense (something you are quite familiar with, as we have all observed).

“… with a slight cooling in 2017-2019. Am I right? …”

Nope. To say so would be the same nonsense.

“…Graphs don’t lie.”

Graphs don’t lie, true. However, people like you lie with posts like this.

KIA 2: If 2020 was tied with 2016 as the warmest, then we can say there was a pause in warming since 2016, with a slight cooling in 2017-2019.

BPL: No, we cannot. You need 30 years to tell a climate trend. 4 years is noise, not signal. Read and learn:

https://bartonlevenson.com/30Years.html
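A rough way to see the point is to compare the standard error of a fitted trend for different record lengths. This sketch assumes the year-to-year noise is white with a standard deviation of 0.1ºC (both are assumptions; real temperature noise is autocorrelated, which makes short records even less informative). The function name `slope_se` is hypothetical.

```python
import math

def slope_se(n_years, noise_sd):
    """Standard error of an OLS trend fitted to n_years of annual data.

    Uses sum((t - mean(t))**2) = n*(n**2 - 1)/12 for t = 1..n and assumes
    independent (white) noise with standard deviation noise_sd.
    """
    sxx = n_years * (n_years ** 2 - 1) / 12.0
    return noise_sd / math.sqrt(sxx)

NOISE_SD = 0.1   # degC, assumed interannual noise
TREND = 0.02     # degC/yr, roughly the recent warming rate

for n in (4, 10, 30):
    se = slope_se(n, NOISE_SD)
    print(n, round(se, 4), round(TREND / se, 1))
```

Under these assumptions the 4-year trend uncertainty is larger than the trend itself, while over 30 years the trend is many standard errors away from zero.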

BPL – telling KIA to “read and learn” would seem to be an exercise in futility based on posting history.

Paul Pukite (@whut) @1,

It’s an interesting projection of ENSO you generate. Will we see a couple of years of La Niña followed by a rising El Niño through 2023/24? And while the “horse race” of annual global temperature is in the short term decided by the ENSO, the rise over time is perhaps the point of interest and, hidden by those ENSO wobbles, how quickly that is going.

And with or without ENSO, with 2020 temperatures so high, it does add fuel to analysis demonstrating an acceleration in the AGW temperature rise. If it is assumed there is a linear acceleration within the GISTEMP global data, it looks as though it would be something like +0.0005ºC/yr/yr with the 2020 rate of increase sitting at +0.028ºC/yr (ie +0.005ºC above the +0.023ºC/yr rise found from OLS over 2001-2020). That would suggest an additional +0.5ºC global temperature rise would appear by 2035 and +1.0ºC by 2048.

Of course, the linearity of any acceleration in AGW is no more than an assumption, although the system is a very big one so unless there are big sustained changes things will go on with rises and rates altering very little.
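The projection in this comment can be checked with constant-acceleration kinematics: the extra warming after `t` years is `rate*t + 0.5*accel*t**2`. The sketch below solves that for `t` using the rate and acceleration quoted in the comment; the function name is hypothetical and the constant acceleration is, as the comment says, only an assumption.

```python
import math

def years_to_reach(delta, rate, accel):
    """Years until warming of `delta` degC, given the current `rate`
    (degC/yr) and a constant `accel` (degC/yr/yr).

    Solves delta = rate*t + 0.5*accel*t**2 for the positive root.
    """
    if accel == 0:
        return delta / rate
    return (-rate + math.sqrt(rate ** 2 + 2.0 * accel * delta)) / accel

RATE_2020 = 0.028   # degC/yr, from the comment
ACCEL = 0.0005      # degC/yr/yr, from the comment

for delta in (0.5, 1.0):
    t = years_to_reach(delta, RATE_2020, ACCEL)
    print(delta, round(2020 + t, 1))
```

The results land in the mid-2030s and late 2040s, roughly consistent with the 2035 and 2048 dates given above.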

Gavin, I noticed that you didn’t update the Satellite to CMIP5 comparisons last year. Is that because of the structural uncertainty issues you discussed in this post? Are there to be no more updates of those?

One thing I found very useful on the Satellites section was the trend histograms. Could those be done for the surface data? Thanks.

[Response: Just haven’t got round to it yet. Soon. – gavin]

@MARodger Re: possible acceleration in temperature

Greg Jericho published a graph in the Guardian, showing GISTemp together with three “forecasts” apparently made by him of the linear trends based on the past 10, 15, and 20 years of data.

https://www.theguardian.com/business/commentisfree/2021/jan/17/the-biggest-coalition-conspiracy-theory-is-climate-change-denial

Surely 10 years of data is too little to make forecasts out to 2050 (let alone 2100)? He should at least have included the confidence intervals for 2050 which I find come out to 2ºC–3.2ºC. (Warming of 0.46º/decade, standard error 0.09º.)

The default in the context of simple data fitting would be to use 30 years of data, which gives a 2050 forecast of 1.7ºC–2ºC. (Warming of 0.23º/decade, standard error 0.02º.)

Nevertheless I would be interested to hear the experts weigh in on the question of possible acceleration in temperature.

Paul Pukite #1,

From a quick look, I don’t think I am convinced that your analysis tells us anything real, but I am completely in agreement that the obsession with GMST is not all that interesting and may be harmful.

There are so many interesting topics that might be discussed, but it seems most people are stuck in what Susan Anderson nicely described as a “call and response” ritual.

Actual science posts are quickly abandoned to get back to UV and FR, where they can demonstrate that they are ‘smarter’ than people like KIA and JDS and the others (and of course for the juicy high school level on-line-relationship talk).

GMST alone tells us very little, unless we want to play the Denialists game and rehash the settled science over and over.

Robert McLachlan @13,

I would agree that the Greg Jericho analysis you link-to which uses an OLS of the last 20, 15 and 10-years to suggest how long before we would hit +2ºC above pre-industrial is over-gilding the lily. The three regressions do remain statistically significant in the mathematical sense with the 10-yr OLS yielding a linear trend of +0.045ºC/yr+/-0.009ºC(2sd).

But thinks, the three trends for 20, 15 & 10-year periods yield central-value trends of +0.023ºC 2001-20, +0.031ºC 2006-20 & +0.045ºC 2011-20. If there were any physical sense to those results, it would suggest an acceleration in AGW of 0.0044ºC/yr/yr occurred during the years between 2010 and 2015.

By comparison, I was carrying out 20-year OLS at monthly intervals for the data 1979-2020 (NH & SH as well as global). I would have used longer periods but I wanted to get the cooling from Pinatubo well out of the reckoning for the most recent periods. And the finding shows a reasonably constant increase in the rate of AGW of 0.0005ºC/yr/yr.
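Both calculations in this comment can be sketched briefly. The first line of arithmetic reproduces the implied acceleration from the 20-year and 10-year trends, taking each trend to apply at the midpoint of its period. The rolling-trend functions are a simplified annual version of the approach described (the comment used monthly steps), run here on a synthetic, noise-free quadratic series rather than real data; all function names are hypothetical.

```python
def ols_slope(x, y):
    """Slope of the ordinary least squares line through (x, y)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy / sxx

def rolling_trends(years, temps, window=20):
    """OLS trend in each `window`-year span, tagged with the span midpoint."""
    mids, slopes = [], []
    for i in range(len(years) - window + 1):
        xs = years[i:i + window]
        ys = temps[i:i + window]
        mids.append(sum(xs) / window)
        slopes.append(ols_slope(xs, ys))
    return mids, slopes

def acceleration(years, temps, window=20):
    """Rate of change of the rolling trend (degC/yr/yr)."""
    mids, slopes = rolling_trends(years, temps, window)
    return ols_slope(mids, slopes)

# Implied acceleration from the 2001-20 and 2011-20 trends quoted above,
# using period midpoints 2010.5 and 2015.5.
implied = (0.045 - 0.023) / (2015.5 - 2010.5)

# Synthetic series with a built-in acceleration of 0.0005 degC/yr/yr.
years = list(range(1979, 2021))
temps = [0.015 * (y - 1979) + 0.5 * 0.0005 * (y - 1979) ** 2 for y in years]
print(round(implied, 4), round(acceleration(years, temps), 6))
```

On a noise-free quadratic the rolling-trend method recovers the built-in acceleration; with real data the noise in each 20-year trend would need to be considered before reading much into the result.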

I was really only seeking the evidence of acceleration. The effect of such acceleration on future AGW might be arguable if we keep pumping out the see-oh-too but hopefully we won’t be doing that.

And in lieu of any experts weighing in….

At a simplistic level, there perhaps should be signs of an acceleration with a constant rate of forcing. (And the likes of NOAA’s AGGI does show a pretty linear rate of GHG forcing.)

The first decade of operation of an imposed forcing is usually shown to be several times greater than that shown through the following decades, and thus conversely the following decades have a response to the forcing several times smaller. Since the 1970s when the rate of positive forcing trebled, those trebled first-decade responses have dominated. But as the number of decades since 1970 accumulates, the trebled follow-on decade responses, while individually much smaller, will together become increasingly significant. Thus AGW under a constant rate of forcing should show an acceleration.

MA Rodger #15,

Who cares? I mean that literally as well as rhetorically.

Putting aside that we don’t know, as I think you acknowledge, what is the physical causality of any minor changes in the rate of this one metric, how can it possibly affect what happens?

We’ve seen a positive (apparent) change in public perception about climate recently, but it isn’t because of diligent reporting on GMST. It seems obvious to me that what has been getting people’s attention is:

Crazy Wildfires Everywhere!

Crazy Hurricanes Everywhere!

Crazy Derechos Causing Major Crop Loss!

Crazy Flooding Everywhere!

Crazy Temperatures In Siberia!

And so on. Of course, the usual suspects will dispute attributions, but the average citizen can relate to the physics relationships of those local climate phenomena much more easily. That’s why I am disappointed that posts on those topics are infrequent and mostly ignored.

“upcoming revisions to the NOAA methodology (Vose et al, in press) do the same as well as ingesting Arctic buoy data”

That sounds very interesting. Is a link available? Are they using data similar to IABP-POLES?

zebra @16,

Your response to my consideration of the measure of the planet’s rise in average temperature and where it is going is to ask “Who cares?”

You set out how the whole planet is about to melt, catch fire and sink (which is perhaps a touch pythonesque) and it is these apocalyptical futures that should be waved at the citizenry and the body-politic to get proper AGW mitigation started, not an understanding of rate of rising global temperature. Because we only have the one planet and cannot build a second one!

So thank you for that. If your comment was on-topic on this thread I may have provided some response in turn, but it isn’t.

zebra:

Thanks z. It seems obvious to me too that recent extreme weather disasters are driving a change in public perception, at least in the US. IMO, the best way to rebut lukewarmist claims that AGW “won’t be bad” is to show that it already is! As an average physics-aware citizen, I’m interested in attribution of costs already paid due to the anthropogenic trend of GMST, and how to bring them to the public’s attention. I’d appreciate hearing from more experts in hazard insurance, mass communications, social psychology, etc.

First, it would be good to narrow the range of physics-based estimates. See for example Oldenburg et al. 2017, Attribution of extreme rainfall from Hurricane Harvey, August 2017. From the abstract:

Compare that finding with Wang et al. 2018, Quantitative attribution of climate effects on Hurricane Harvey’s extreme rainfall in Texas:

As for how much AGW is already costing the US economy, AFAICT that’s even more of a work-in-progress. See for example Frame et al. 2020, The economic costs of Hurricane Harvey attributable to climate change:

Frame et al. claim their physics-aware, “bottom-up” approach is superior to “top-down” ones. I for one am confident, from basic physics, that the cost of AGW in dollars, homes, livelihoods and lives to date is greater than zero. I’m frankly not meta-literate enough in this area to judge specific numbers. I’m inclined to credit guest posts on RC, however.

Gavin – RE “I’d be interested in any results people get and if it changes the ENSO-corrected annual timeseries substantially.”

Using average of ONI3.4 with 4 month lag and 0.1 multiplier for influence on global mean surface temperature, I get a +0.14 C ENSO bump for 2016 and a +0.02 C ENSO bump for 2020. Not much different from the +0.11 C and +0.03 C you got using just Feb/Mar ONI3.4 for 2016 and 2020. I used ONI3.4 values from https://www.cpc.ncep.noaa.gov/products/analysis_monitoring/ensostuff/detrend.nino34.ascii.txt
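One possible reading of this recipe, sketched with placeholder inputs: for each calendar year, average the twelve monthly index values that sit four months earlier and multiply by 0.1. The exact procedure used in the comment is not spelled out, so the windowing here is an assumption, the function names are hypothetical, and the index values are invented rather than taken from the linked file.

```python
def lagged_months(year, lag):
    """(year, month) keys of the index values that map onto calendar `year`
    when temperature is assumed to respond `lag` months later."""
    keys = []
    for month in range(1, 13):
        idx = year * 12 + (month - 1) - lag
        keys.append((idx // 12, idx % 12 + 1))
    return keys

def enso_bump(oni, year, lag=4, multiplier=0.1):
    """Estimated ENSO contribution (degC) to the annual mean for `year`.

    oni : dict mapping (year, month) to the monthly index value
    """
    vals = [oni[key] for key in lagged_months(year, lag)]
    return multiplier * sum(vals) / len(vals)

# Placeholder index values (NOT real data): a constant 1.0 for every month
# that feeds into 2016 under a 4-month lag (Sep 2015 through Aug 2016).
demo_oni = {key: 1.0 for key in lagged_months(2016, 4)}
print(lagged_months(2016, 4)[0], lagged_months(2016, 4)[-1])
print(enso_bump(demo_oni, 2016))
```

To reproduce the numbers in the comment you would fill `oni` from the monthly file linked above and compare the result with the Feb/Mar-only estimate.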

While a smaller effect, I think it is also worth noting that 2016 had a ca. +0.02 C bump from the peak of the solar cycle while 2020 had the opposite, a ca. -0.02 C debit (both as per Hansen http://www.columbia.edu/~jeh1/mailings/2020/20201214_GlobalWarmingAcceleration.pdf, with Hansen also saying a change of 1 watt/m2 gives ca. 0.75C global avg. surface temperature).

The difference in aerosol influences as measured by https://www.esrl.noaa.gov/gmd/webdata/grad/mloapt/mauna_loa_transmission.dat between 2016 and 2020 are too small to bother with.

Put all those together and the 2020 temperature is more alarming than even a tie for 1st place suggests.

Chart at https://forum.arctic-sea-ice.net/index.php/topic,2274.msg298989.html#msg298989

[Response: Thanks! The solar component isn’t that convincing (and there is a danger of aliasing from the volcanoes in trying to estimate it), but I’ll look into what you’ve done too. – gavin]

I forgot to mention that I used a 2 year lag for solar cycle influence and did include aerosol difference with 7 month lag. The aerosol difference was very small.

By extracting the ENSO/solar/aerosol influences, 2016 goes from outstanding warmest year to right on the trendline. But 2020 is ca. 0.15 C above the trendline and is warmer than the second-place adjusted year (2019) by 0.11 C.

This has me wondering if this is because of other unaccounted-for short-term forcings affecting 2020, or if it indicates a fundamental acceleration of surface warming. Perhaps there was a reduction in aerosol cooling from COVID-19 economic impact not tracked in the aerosol data I used.

It seems like we may not understand climate change until just after we’ve committed ourselves to irreversible changes (if we haven’t done that already). If 2020 reflects the base temperature absent ENSO/solar/aerosol, then GISSTemp vs. 1850-1900 could reach +1.5C by 2028 or even earlier with a strong El Nino.

Phil Scadden @10

Mr. Know Nothing has had plenty of opportunity to understand the robustness of the science behind AGW, but refuses to acknowledge any attempt to learn. How morally corrupt and bankrupt is this? He represents the worst of humankind—caring more about one’s self interests and priorities above the planet’s livability and the future of ensuing generations. This is so glaringly obvious because if the likes of Mr. Know Nothing had any compunction at all, they would make an attempt or at least acknowledge an attempt to understand the science before actively making posts whose appearance is that of casting doubt and obstructing action. This is so morally irresponsible and shameful. Sure Mr. Know Nothing posts in a friendly folksy way to come across as a nice guy who is just a little doubtful, but this is likely just a facade to mask his lack of compassion and evil selfish intent. Let’s call a spade a spade.

re: 22. “Mr. Know Nothing posts in a friendly folksy way to come across as a nice guy ”

No, anyone who essentially deflected the fact that the Pittsburgh synagogue killer was one of his brethren politically is not a “nice guy”. He is a coward through and through.

CC Holley @22

“This is so morally irresponsible and shameful. Sure Mr. Know Nothing posts in a friendly folksy way to come across as a nice guy who is just a little doubtful, but this is likely just a facade to mask his lack of compassion and evil selfish intent. Let’s call a spade a spade.”

He does post in that style. This is the standard lobby group approach based on standard public relations tactics. It’s a formula and is often used to hide real motivations. And while you are probably right about his motivations, it’s clear he is also one of these libertarian leaning people with an almost paranoid fear of taxes and environmental rules.

No 24 the word “almost” in your last sentence is incorrect, he is paranoid about those and other things!

nigelj @24

It isn’t always wise to paint with a broad brush, but I believe there are many who use the libertarian philosophy of personal freedom and free markets to justify their own selfishness.

Take mask wearing for instance. Those declaring “no one has the right to tell me to wear a mask because I have the sole right to determine if I need to protect myself” are missing the point that 50% of those infected with corona virus are asymptomatic and the real point of masks is to prevent those unwittingly infected from spreading the virus to others. Nope, they don’t want to understand that fact because their selfish rights are more important than the common good of their fellow human beings.

Same thing goes for the so called paranoia of taxes and environmental rules, they’d rather not pay taxes or support environmental rules that promote the common good because they themselves are more important than their fellow humans. And…they are not going to make any attempt to understand those issues and the real facts because that would be to question their own justification of their selfishness.

It is difficult to argue against the efficiencies of the free market for a strong economy or the value of personal rights for the individual pursuit of happiness, but to blindly support those philosophies to an extreme without making an attempt to understand the deficiencies that harm the common good is morally corrupt.

Mr. Know Nothing is paranoid of taxes and environmental rules because he is evilly selfish to the point of willfully making posts for the sole purpose of spreading doubt and minimizing public support for action on the climate emergency while apparently justifying to himself his own selfishness.

Nice overview! There is also an aspect that is rarely mentioned in discussions on global mean temperature which involves variance and how it varies if there are changes in reported temperatures from different locations with different variance. This can be explored by projecting the data onto EOFs, something that @Mike suggested many years ago, and that I finally got around to: Benestad, et al. (2019) “Geographical distribution of thermometers gives the appearance of lower historical global warming”, GRL, DOI:10.1029/2019GL083474. The effect of an inhomogeneity in variance may affect trends, and we found that the global mean temperature estimate based on available data (mainly around Europe and North America) in the early 20th century may lead to a warm bias because there were few observations from the Arctic and Siberia where the temperature has large variance.

I notice that Gavin A. Schmidt’s graphs do not reflect what was the hottest decade in US history, the 1930’s

[Response: Conceivably because the graphs are for the global temperature? Nah… – gavin]

The Great Heat Wave of 1936; Hottest Summer in U.S. on Record

1936; A Year of Extremes

The climatological summer (June-August) of 1936 was the warmest nationwide on record (since 1895) with an average temperature of 74.6° (2nd warmest summer was that of 2006 with an average of 74.4°) and July of 1936 was the single warmest month ever measured with an average of 77.4° (beating out July 2006 by .1°). Ironically, February of 1936 was the coldest such on record with an average nationwide temperature of 26.0° (single coldest month on record was January 1977 with a 23.6° average). In February of 1936 temperatures fell as low as -60° in North Dakota, an all-time state record and Turtle Lake, North Dakota averaged -19.4° for the entire month, the coldest average monthly temperature ever recorded in the United States outside of Alaska. One town in North Dakota, Langdon, went for 41 consecutive days below zero (from January 11 to February 20), the longest stretch of below zero (including maximum temperatures) ever endured at any site in the lower 48.

With this in mind, it is truly astonishing what occurred the following summer. The temperature in North Dakota that had reached -60° on February 15 at Parshall rose to 121° at Steele by July 6, 1936. The two towns are just 110 miles from one another!

https://www.wunderground.com/blog/weatherhistorian/the-great-heat-wave-of-1936-hottest-summer-in-us-on-record.html

#28 12 Feb 2021 at 7:07 AM Gavin A. Schmidt said; “[Response: Conceivably because the graphs are for the global temperature? Nah… – gavin]”

Does Gavin A. Schmidt believe that this IPCC graph from 1991 was global?

Figure 2 Variations in regional surface temperatures for the last 18,000 years, estimated from a variety of sources. Shown are changes in°C, from the value for 1900. Compiled by R. S. Bradley and J. A. Eddy based on J. T. Houghton et al., Climate Change: The IPCC Assessment, Cambridge University Press, Cambridge, 1990 and published in EarthQuest, vol 5, no 1, 1991.

http://www.gcrio.org/CONSEQUENCES/winter96/article1-fig2.html

[Response: Not quite sure why you are so hard of reading – it’s right there in the caption “regional”, so no. I don’t think it’s an accurate global picture. As a schematic of what people thought in the early 90s it has some historical value – as does the Figure in IPCC 1990 that it is derived from (and it was derived in part from Lamb’s Central England reconstruction). – gavin]

JDS 28: I notice that Gavin A. Schmidt’s graphs do not reflect what was the hottest decade in US history, the 1930’s

BPL: Because they’re global maps. The lower 48 are only 1.5% of the Earth’s surface.

And it’s no longer true even for the United States. You’re quoting a denier talking point from 30 years ago. Here’s what the chart of US temperatures looks like now:

https://data.giss.nasa.gov/gistemp/graphs_v4/

These graphs from this EPA site are interesting, unless you want to believe that the planet is burning up.

Climate Change Indicators: High and Low Temperatures

This indicator describes trends in unusually hot and cold temperatures across the United States.

• Figure 1. U.S. Annual Heat Wave Index, 1895–2015

This figure shows the annual values of the U.S. Heat Wave Index from 1895 to 2015. These data cover the contiguous 48 states. Interpretation: An index value of 0.2 (for example) could mean that 20 percent of the country experienced one heat wave, 10 percent of the country experienced two heat waves, or some other combination of frequency and area resulted in this value.

https://www.epa.gov/climate-indicators/climate-change-indicators-high-and-low-temperatures

I guess it never occurred to J. Doug Swallow to actually read the responses to his diatribes. Had he done so, he would have discovered that his arguments based on cherrypicked and inappropriate statistics are utter, complete bullshit.

For those who do wonder where the flaw lies, it is this: Local extreme-value statistics are a piss-poor statistic to use as an indicator of a global trend in averages. This is precisely why denialist imbeciles use them–whether they are sufficiently intelligent to understand their inadequacy or not.

#32 17 Feb 2021 at 10:04 AM Ray Ladbury says: “Had he done so, he would have discovered that his arguments based on cherrypicked and inappropriate statistics are utter, complete bullshit. For those who do wonder where the flaw lies, it is this: Local extreme-value statistics are a piss-poor statistic to use as an indicator of a global trend in averages”.

I really appreciate Ray Padbury’s attempt to get me on the right path and prevaricate like a normal climate alarmist about basically everything to do with the climate. Where are any of Ray Ladbury’s facts if he is so terribly concerned about what I present?

These are some facts about January 2021.

https://yaleclimateconnections.org/2021/02/noaa-january-2021-was-ninth-warmest-on-record-in-the-u-s-seventh-warmest-globally/

J Doug Swallow would do well to read all the posts on the Yale Climate Connections blog he references. But of course, finding “January is 9th” fits his bias.

https://yaleclimateconnections.org/section/eye-on-the-storm/

For example: NOAA names 2020 second-hottest year on record; NASA says it tied for hottest ever: The different rankings result from crunching the data in slightly different ways. But the results leave no doubt that the Earth is continuing to warm. – https://yaleclimateconnections.org/2021/01/noaa-names-2020-second-hottest-year-on-record-nasa-says-it-tied-for-hottest-ever/

But that’s a whole year. Confusing, ain’t it!?

Trouble is, almost all scientists have a “bad” habit of telling the truth, and some cold does enter in; winter isn’t gone yet.

J. Doug Swallow, The problem with your facts is that:

1) They are cherrypicked.

2) They are not based on statistics that are likely to reveal a warming trend. That is, they are quite insensitive to the trend.

Looking at record highs and record lows is a problem in extreme-value statistics. Climate is about averages, and climate change is about changes in those averages. Yes, climate change will affect record highs and record lows–it is already doing so. If you look at the # of record highs globally there is a clear trend to more record temperatures for each succeeding decade since warming began in earnest in the ’80s. There is also a decrease in the pace of record cold temperatures. But these are lagging indicators.

Just for fun, look at record highs and record lows for the DJIA or the NASDAQ. That is clearly rising over time, but the records are a lot noisier.

Pick statistics that are appropriate for the phenomenon under investigation.

Ray Ladbury can go about averaging these ‘cherry picked’ cold record temperatures that have been broken into his attempt to make 2021 the hottest year on record.

“At least 2 400 cold temperature records broken or tied in the U.S. from February 12 to 16, 2021

February 18, 2021 at 22:13 UTC (2 days ago)

At least 2 400 preliminary daily cold temperature records, including cold maximums and minimums, were broken or tied at longer-term sites (75+ years of data) in the United States from February 12 to 16, 2021. The cold snap peaked from February 14 to 16. Another winter storm will affect a large area from Friday, February 19 — from the Lower Mississippi Valley into the Mid-Atlantic and Northeast.

Over just the past week, much of the Lower 48 has been punished with record-breaking cold and unusually heavy snow and ice, NWS Weather Prediction Center said.

From the Pacific Northwest across the Rockies and into the Southern Plains and Midwest, the snowfall has been measured in feet. Ice and snow continue to plague Texas and the Northeast”.

https://watchers.news/2021/02/18/2400-cold-temperature-records-broken-or-tied-us-february-2021/

JDS apparently still doesn’t know the difference between weather and climate.