Once more unto the breach, dear friends, once more!

Some old-timers will remember a series of ‘bombshell’ papers back in 2004 which were going to “knock the stuffing out” of the consensus position on climate change science (see here for example). Needless to say, nothing of the sort happened. The issue in two of those papers was whether satellite and radiosonde data were globally consistent with model simulations over the same time. Those papers claimed that they weren’t, but they did so based on a great deal of over-confidence in observational data accuracy (see here or here for how that turned out) and an insufficient appreciation of the statistics of trends over short time periods.

Well, the same authors (Douglass, Pearson and Singer, now joined by Christy) are back with a new (but necessarily more constrained) claim, but with the same over-confidence in observational accuracy and a similar lack of appreciation of short term statistics.

Previously, the claim was that satellites (in particular the MSU 2LT record produced by UAH) showed a global cooling that was not apparent in the surface temperatures or model runs. That disappeared with a longer record and some important corrections to the processing. Now the claim has been greatly restricted in scope and concerns only the tropics, and the rate of warming in the troposphere (rather than the fact of warming itself, which is now undisputed).

The basis of the issue is that models produce an enhanced warming in the tropical troposphere when there is warming at the surface. This is true enough. Whether the warming is from greenhouse gases, El Niños, or solar forcing, trends aloft are enhanced. For instance, the GISS model equilibrium runs with 2xCO2 or a 2% increase in solar forcing both show a maximum between 20N and 20S at around 300mb (10 km):

The first thing to note about the two pictures is how similar they are. They both have the same enhancement in the tropics and similar amplification in the Arctic. They differ most clearly in the stratosphere (the part above 100mb) where CO2 causes cooling while solar causes warming. It’s important to note, however, that these are long-term equilibrium results and therefore don’t tell you anything about the signal-to-noise ratio for any particular time period or with any particular forcings.

If the pictures are very similar despite the different forcings that implies that the pattern really has nothing to do with greenhouse gas changes, but is a more fundamental response to warming (however caused). Indeed, there is a clear physical reason why this is the case – the increase in water vapour as surface air temperature rises causes a change in the moist-adiabatic lapse rate (the decrease of temperature with height) such that the surface to mid-tropospheric gradient decreases with increasing temperature (i.e. it warms faster aloft). This is something seen in many observations and over many timescales, and is not something unique to climate models.
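As a rough illustration of the lapse-rate argument, here is a minimal sketch that evaluates the textbook saturated (moist) adiabatic lapse rate at a few near-surface temperatures. The constants, the Bolton (1980) approximation for saturation vapour pressure and the function names are assumptions made for illustration and have nothing to do with the GISS model code. The only point is that the rate of cooling with height gets smaller as the air gets warmer and moister.

```python
import math

G = 9.81        # gravitational acceleration, m/s^2
CP_D = 1004.0   # specific heat of dry air at constant pressure, J/(kg K)
R_D = 287.0     # gas constant for dry air, J/(kg K)
L_V = 2.5e6     # latent heat of vaporisation, J/kg (treated as constant here)
EPS = 0.622     # ratio of the gas constants of dry air and water vapour


def saturation_vapour_pressure_hpa(temp_k):
    """Bolton (1980) approximation over liquid water, in hPa."""
    temp_c = temp_k - 273.15
    return 6.112 * math.exp(17.67 * temp_c / (temp_c + 243.5))


def moist_adiabatic_lapse_rate(temp_k, pressure_hpa):
    """Saturated adiabatic lapse rate in K/km (standard textbook formula)."""
    e_s = saturation_vapour_pressure_hpa(temp_k)
    r_s = EPS * e_s / (pressure_hpa - e_s)  # saturation mixing ratio, kg/kg
    numerator = 1.0 + L_V * r_s / (R_D * temp_k)
    denominator = CP_D + (L_V ** 2) * r_s * EPS / (R_D * temp_k ** 2)
    return 1000.0 * G * numerator / denominator


# Illustrative temperatures only; 1000 hPa is assumed for the pressure.
for temp_k in (290.0, 295.0, 300.0):
    gamma = moist_adiabatic_lapse_rate(temp_k, 1000.0)
    print(f"T = {temp_k:.0f} K: moist adiabatic lapse rate = {gamma:.2f} K/km")
```

Under these assumptions the lapse rate falls as the starting temperature rises, which is the sense in which a profile tied to the moist adiabat warms faster aloft than at the surface.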

If this is what should be expected over a long time period, what should be expected on the short time-scale available for comparison to the satellite or radiosonde records? This period, 1979 to present, has seen a fair bit of warming, but also a number of big El Niño events and volcanic eruptions which clearly add noise to any potential signal. In comparing the real world with models, these sources of additional variability must be taken into account. It’s straightforward for the volcanic signal, since many simulations of the 20th century done in support of the IPCC report included volcanic forcing. However, the occurrence of El Niño events in any model simulation is uncorrelated with their occurrence in the real world and so special care is needed to estimate their impact.

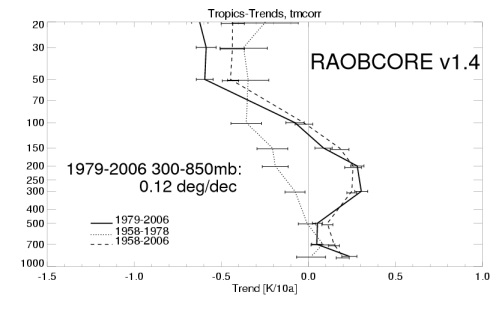

Additionally, it’s important to make a good estimate of the uncertainty in the observations. This is not simply the uncertainty in estimating the linear trend, but the more systematic uncertainty due to processing problems, drifts and other biases. One estimate of that error for the MSU 2 product (a weighted average of tropospheric+lower stratospheric trends) is that two different groups (UAH and RSS) come up with a range of tropical trends of 0.048 to 0.133 °C/decade – a much larger difference than the simple uncertainty in the trend. In the radiosonde records, there is additional uncertainty due to adjustments to correct for various biases. This is an ongoing project (see RAOBCORE for instance).

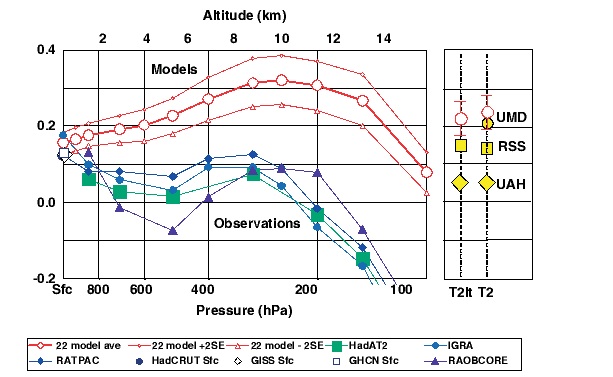

So what do Douglass et al come up with?

Superficially it seems clear that there is a separation between the models and the observations, but let’s look more closely….

First, note that the observations aren’t shown with any uncertainty at all, not even the uncertainty in defining a linear trend (roughly 0.1°C/dec). Secondly, the offsets between UAH, RSS and UMD should define the minimum systematic uncertainty in the satellite observations, which therefore would overlap with the model ‘uncertainty’. The sharp eyed among you will notice that the satellite estimates – which are basically weighted means of the vertical temperature profiles – are also apparently inconsistent with the selected radiosonde estimates (you can’t get a weighted mean trend larger than any of the individual level trends!). [Correction: the UAH trends are consistent (see comments).]

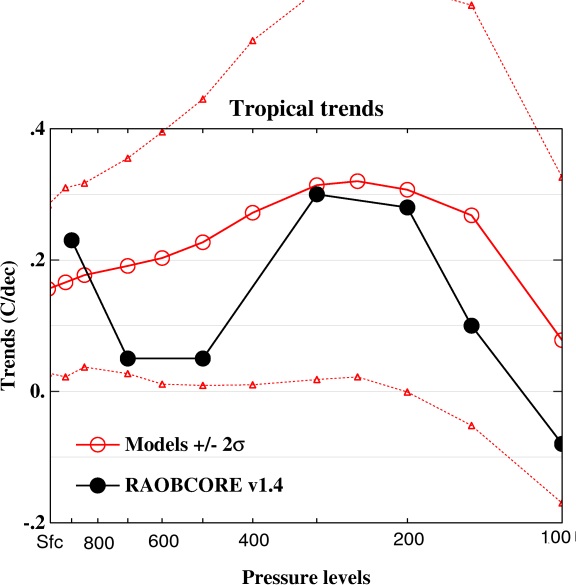

It turns out that the radiosonde data used in this paper (version 1.2 of the RAOBCORE data) does not have the full set of adjustments. Subsequent to that dataset being put together (Haimberger, 2007), two newer versions have been developed (v1.3 and v1.4) which do a better, but still not perfect, job, and additionally have much larger amplification with height. For instance, look at version 1.4:

The authors of Douglass et al were given this last version along with the one they used, yet they only decided to show the first (the one with the smallest tropical trend) without any additional comment even though they knew their results would be less clear.

But more egregious by far is the calculation of the model uncertainty itself. Their description of that calculation is as follows:

For the models, we calculate the mean, standard deviation (sigma), and estimate of the uncertainty of the mean (sigma_SE) of the predictions of the trends at various altitude levels. We assume that sigma_SE and standard deviation are related by sigma_SE = sigma/sqrt(N – 1), where N = 22 is the number of independent models. ….. Thus, in a repeat of the 22-model computational runs one would expect that a new mean that would lie between these limits with 95% probability.

The interpretation of this is a little unclear (what exactly does the sigma refer to?), but the most likely interpretation, and the one borne out by looking at their Table IIa, is that sigma is calculated as the standard deviation of the model trends. In that case, the formula given defines the uncertainty on the estimate of the mean – i.e. how well we know what the average trend really is. But it only takes a moment to realise why that is irrelevant. Imagine there were thousands of simulations drawn from the same distribution: our estimate of the mean trend would get sharper and sharper as N increased. However, the chances that any one realisation would be within those error bars would become smaller and smaller. Instead, the key standard deviation is simply sigma itself. That defines the likelihood that one realisation (i.e. the real world) is conceivably drawn from the distribution defined by the models.
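The thought experiment can be tried numerically. The following is a minimal sketch, assuming the "model trends" are independent draws from a single Gaussian with a made-up mean and spread (`TRUE_MEAN`, `TRUE_SIGMA` and the `coverage` helper are hypothetical, not taken from the paper). It counts how often one further draw, standing in for the real world, lands inside the mean plus or minus 2 sigma_SE compared with the mean plus or minus 2 sigma.

```python
import math
import random

random.seed(0)  # fixed seed so the sketch is repeatable

TRUE_MEAN = 0.2    # hypothetical ensemble-mean trend, degC/decade (assumed)
TRUE_SIGMA = 0.1   # hypothetical spread between realisations (assumed)


def coverage(n_runs, n_trials=1000):
    """Fraction of new single realisations falling inside
    (mean +/- 2*sigma_SE) and (mean +/- 2*sigma) of an n_runs ensemble."""
    inside_se = 0
    inside_sigma = 0
    for _ in range(n_trials):
        runs = [random.gauss(TRUE_MEAN, TRUE_SIGMA) for _ in range(n_runs)]
        mean = sum(runs) / n_runs
        sigma = math.sqrt(sum((x - mean) ** 2 for x in runs) / (n_runs - 1))
        sigma_se = sigma / math.sqrt(n_runs - 1)  # formula quoted from the paper
        # One more draw from the same distribution stands in for the real world.
        new_run = random.gauss(TRUE_MEAN, TRUE_SIGMA)
        inside_se += abs(new_run - mean) <= 2 * sigma_se
        inside_sigma += abs(new_run - mean) <= 2 * sigma
    return inside_se / n_trials, inside_sigma / n_trials


for n in (22, 100, 1000):
    se_frac, sigma_frac = coverage(n)
    print(f"N={n:4d}: within 2*sigma_SE {se_frac:.2f}, within 2*sigma {sigma_frac:.2f}")
```

With these assumptions the fraction inside the sigma_SE band should shrink steadily as N grows, while the fraction inside the 2 sigma band stays close to 95%, even though every "real world" here is by construction drawn from the same distribution as the models.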

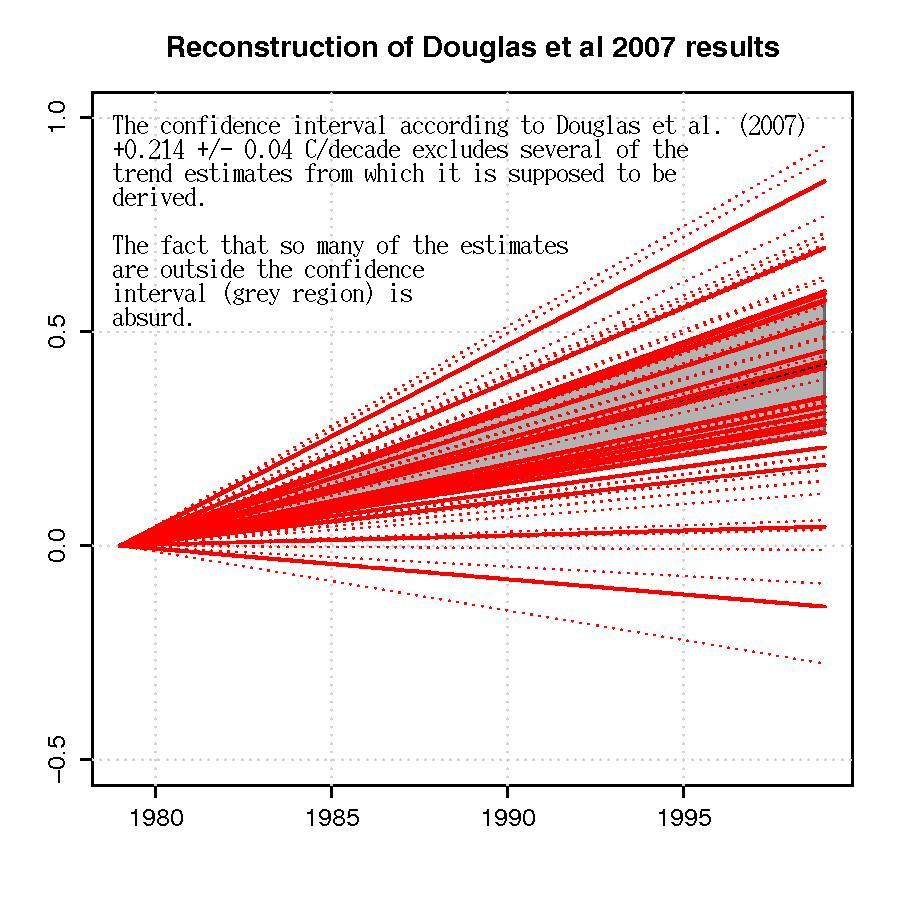

To make this even clearer, a 49-run subset (from 18 models) of the 67 model runs in Douglass et al was used by Santer et al (2005). This subset only used the runs that included volcanic forcing and stratospheric ozone depletion – the most appropriate selection for this kind of comparison. The trends in T2LT can be used as an example. I calculated the 1979-1999 trends (as done by Douglass et al) for each of the individual simulations. The values range from -0.07 to 0.426 °C/dec, with a mean trend of 0.185 °C/dec and a standard deviation of 0.113 °C/dec. That spread comes predominantly not from uncertain physics, but from the unforced noise in each realisation.

From their formula the Douglass et al 2 sigma uncertainty would be 2*0.113/sqrt(17) = 0.06 °C/dec. Yet the 10 to 90 percentile for the trends among the models is 0.036–0.35 °C/dec – a much larger range (+/- 0.19 °C/dec) – and one, needless to say, that encompasses all the observational estimates. This figure illustrates the point clearly:
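The arithmetic is easy to reproduce. This short sketch only plugs the numbers quoted above (N = 18 models, standard deviation 0.113 °C/dec, mean 0.185 °C/dec) into the two candidate error bars; the variable names are illustrative.

```python
import math

n_models = 18        # models in the Santer et al (2005) subset, as quoted above
sigma = 0.113        # standard deviation of the T2LT trends, degC/decade
mean_trend = 0.185   # mean T2LT trend, degC/decade

# Uncertainty of the mean, using the formula quoted from Douglass et al.
two_sigma_se = 2 * sigma / math.sqrt(n_models - 1)

# Spread of the individual realisations, the quantity argued above to be relevant.
two_sigma = 2 * sigma

print(f"2*sigma_SE = {two_sigma_se:.3f} degC/dec")
print(f"2*sigma    = {two_sigma:.3f} degC/dec")
print(f"mean +/- 2*sigma_SE: {mean_trend - two_sigma_se:.3f} to {mean_trend + two_sigma_se:.3f}")
print(f"mean +/- 2*sigma:    {mean_trend - two_sigma:.3f} to {mean_trend + two_sigma:.3f}")
```

The first number comes out at roughly 0.055 °C/dec, in line with the 0.06 quoted above, and the band based on sigma itself is wider by a factor of sqrt(17), about four.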

What happens to Douglass’ figure if you incorporate the updated radiosonde estimates and a reasonable range of uncertainty for the models? This should be done properly (and could be) but assuming the slight difference in period for the RAOBCORE v1.4 data or the selection of model runs because of volcanic forcings aren’t important, then using the standard deviations in their Table IIa you’d end up with something like this:

Not quite so impressive.

To be sure, this isn’t a demonstration that the tropical trends in the model simulations or the data are perfectly matched – there remain multiple issues with moist convection parameterisations, the Madden-Julian oscillation, ENSO, the ‘double ITCZ’ problem, biases, drifts etc. Nor does it show that RAOBCORE v1.4 is necessarily better than v1.2. But it is a demonstration that there is no clear model-data discrepancy in tropical tropospheric trends once you take the systematic uncertainties in data and models seriously. Funnily enough, this is exactly the conclusion reached by a much better paper by P. Thorne and colleagues. Douglass et al’s claim to the contrary is simply unsupportable.

DC FACTOID SHOWER ALERT

Singer & Co will be unleashing it all on the media tomorrow-

http://adamant.typepad.com/seitz/2007/12/factoid-shower.html

[Response: Be sure to have your umbrella handy… (but actually this press conference has been cancelled) – gavin]

[Response: Update: despite getting an email notifying of the cancellation of this press conference, it turns out it went ahead anyway… – gavin]

Better take the kevlar one.

In your last figure, shouldn’t you exclude all models with a surface trend outside the observed confidence interval at the surface?

[Response: The model ensemble is supposed to be a collection of possible realisations given the appropriate forcing. The actual surface or mid-troposphere trends will be different in each case. The issue here is whether the trends seen at any individual level in the obs fall within the sample of model outputs. Now you could have done this differently by focussing on the ratio of trends – and I think you could work that out from their paper, but ratios have tricky noise characteristics if the denominator gets small (as here). This is what was done in Santer et al, 2005 and the CCSP report though. Their conclusion was the same as ours (or Thorne et al), you cannot reliably detect a difference between the models and the obs. – gavin]
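The remark about ratios can be illustrated with a toy calculation. This is a minimal sketch with made-up numbers: `AMPLIFICATION`, `TREND_NOISE` and the helper `ratio_iqr` are hypothetical, and the noise on the two trends is assumed Gaussian and independent, which real surface and tropospheric trends are not. It compares the interquartile range of the ratio for a large and a small surface trend.

```python
import random
import statistics

random.seed(1)  # fixed seed so the sketch is repeatable

AMPLIFICATION = 1.4   # hypothetical true ratio of trend aloft to surface trend
TREND_NOISE = 0.05    # hypothetical noise on each trend, degC/decade


def ratio_iqr(surface_trend, n_samples=5000):
    """Interquartile range of (trend aloft / surface trend) under noise."""
    ratios = []
    for _ in range(n_samples):
        surface = random.gauss(surface_trend, TREND_NOISE)
        aloft = random.gauss(AMPLIFICATION * surface_trend, TREND_NOISE)
        ratios.append(aloft / surface)
    q1, _median, q3 = statistics.quantiles(ratios, n=4)
    return q3 - q1


# Illustrative surface trends: one well above the noise, one comparable to it.
for surface_trend in (0.5, 0.12):
    print(f"surface trend {surface_trend:.2f}: IQR of ratio = {ratio_iqr(surface_trend):.2f}")
```

The interquartile range is used instead of the standard deviation because the ratio has heavy tails once the denominator can get close to zero, which is exactly the difficulty with a small surface trend.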

I see your point, but I think I do not agree completely with you. Perhaps the failure to detect a difference is just a sign that the statistical test is not powerful enough, not that the signal is not there.

Imagine for instance that we take thousands of simulations, each one conducted with realistic and unrealistic forcings and with realistic and unrealistic models. If you do not discriminate you end up with temperature profiles all over the place. I think one should define a priori criteria for the realism of the simulations.

In other words, if we take just random temperature profiles, we probably cannot detect a signal either, but the exercise will not be very informative.

Another unrelated question, and please, correct me if I am wrong. Higher temperatures aloft imply a stronger negative feedback (?), so what Douglass et al are arguing is that models are wrong because their feedback is too negative??

[Response: You could certainly calculate the conditional distribution of the tropospheric trends given a reasonable estimate of the surface trend. I’ll have a look. Your interpretation of the D et al claim might be correct – I’ll think about that as well… – gavin]

A few questions,

Am I the only one that finds it odd that the observations have to be within the uncertainty of the models? Shouldn’t it be the other way around?

[Response: It depends on the question. Douglass et al claim that the observed trends are inconsistent with the models. The range in the models occurs mainly because of unforced ‘noise’ in the simulations and given that noise (which can be characterised by the model spread), there is no difference. If we were looking at a different metric, perhaps for a longer period, for which noise was not an issue, it would be the other way around. – gavin]

Why 2-sigma instead of only 1-sigma for the uncertainty of the models? Are there realizations of the models that show as weak a trend in the lower troposphere as models-2sigma in the last plot?

[Response: Douglass used 2 sigma, we followed. These data are from the paper – the three lowest trends at 850 mb are 0.058, 0.073 and 0.099 degC/dec.]

Why should things like El Niño add noise to the trend? Radiation and convection, which drive the temperature structure in the tropics, act on a very short time scale, so vertical temperatures in the tropics should relax to moist-adiabatic on that same short time scale (this would be a problem if we were trying to look at hourly or daily trends).

[Response: Because ENSO is a huge signal compared to the trends and so the structure of the last 25 years is quite sensitive to where any model El Niños occur. You therefore need to average over many realisations.]

“The authors of Douglass et al were given this last version along with the one they used, yet they only decided to show the first (the one with the smallest tropical trend) without any additional comment even though they knew their results would be less clear.”

How do you know this?

[Response: From the RAOBCORE group among others.]

Did you edit or referee the paper? If you knew this much, perhaps you could explain to us why the paper was published anyway.

[Response: I cannot explain how this was published. Had I reviewed it, I would have made these same comments.]

Why is there no surface point in the last plot?

[Response: It just shows the RAOBCORE v1.4 data which doesn’t have surface values. The surface trends and errors in the first plot are reasonably uncontroversial – I could add them if you like. – gavin]

Viento, if you restrict the simulations you want to those that have surface trends within the obs uncertainty as defined by Douglass et al (+0.12 +/- 0.04), then you only retain 9 of the models. For the trends at 300mb, the 2 sigma range for those models is then: 0.23+/-0.13 degC/dec (compared to 0.31+/-0.25 in the full set). So you do get a restriction on the uncertainty, and in each case the RAOBCORE v1.4 is clearly within the range. At 500mb, you get 0.17+/-0.076 (from 0.23+/-0.22), which puts the obs just outside the range – but with overlapping error bars. So I don’t think the situation changes – you still cannot find a significant difference between the obs and the models.
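For anyone who wants to repeat this kind of conditional calculation, here is a minimal sketch of the filtering step. The trend pairs in `model_trends` are made-up placeholders, not the values from the Douglass et al tables, so the printed numbers will not match the ones above; only the surface constraint (0.12 +/- 0.04) is taken from the text.

```python
import statistics

# Hypothetical (surface, 300 mb) trend pairs in degC/decade, one per model.
# These are made-up placeholders, NOT the values from Douglass et al's tables.
model_trends = [
    (0.05, 0.10), (0.09, 0.18), (0.11, 0.22), (0.13, 0.25), (0.15, 0.31),
    (0.18, 0.35), (0.22, 0.45), (0.27, 0.52), (0.10, 0.27), (0.14, 0.20),
]

OBS_SURFACE = 0.12      # observed surface trend quoted above
OBS_HALF_WIDTH = 0.04   # its quoted uncertainty


def two_sigma_range(values):
    """Return (mean, 2 * sample standard deviation) of a list of trends."""
    return statistics.fmean(values), 2 * statistics.stdev(values)


all_aloft = [aloft for _, aloft in model_trends]
# Keep only models whose surface trend lies inside the observed range.
kept_aloft = [aloft for surface, aloft in model_trends
              if abs(surface - OBS_SURFACE) <= OBS_HALF_WIDTH]

for label, values in (("all models", all_aloft), ("constrained", kept_aloft)):
    mean, half_width = two_sigma_range(values)
    print(f"{label:12s} n={len(values):2d}: {mean:.2f} +/- {half_width:.2f} degC/dec")
```

The test against observations is then whether the observed trend at that level falls inside the constrained range, bearing in mind that the observation carries its own error bar.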

Excellent post!

What really needs to be discussed is the poor editorial policies of journals that publish people like Michaels and Singer. These journals still fail to meet disclosure standards that are common in the medical field.

As Don Kennedy pointed out last year, when these journals fail to require authors to disclose their funding, “people are entitled to doubt the objectivity of the science.”

Thanks for all the replies, now I understand what the issue with El Niño could be. The ratio between the trends should follow moist adiabatic independent of where El Niños occur though. I wouldn’t like to put data where there is none, I was just sincerely asking.

FYI, this paper is already being widely cited on blogs by global warming deniers as proof that all of the climate models are wrong, wrong, wrong and that the whole concept of anthropogenic GHG-caused warming has been refuted.

Meanwhile, in Bali according to The Washington Post:

So the current US government is rejecting both emission reductions by rich industrialized nations and adaptation assistance to poor developing nations that will experience the worst consequences of the rich nations’ emissions, and is blocking international consensus. It is hard to see this as anything other than criminal.

Anyone want to give me a layman’s version?

I tried to find any press conference info, but SEPP’s site search for press conferences finds pages attacking the idea that chlorofluorocarbons degrade the ozone layer. It’s like warped time.

DOES THIS BLOG accept comments which disagree with your postings?

[Response: Try reading them to see. We do have a moderation policy, but stick to the rules, and you’ll be fine. – gavin]

Joe says: “Anyone want to give me a layman’s version?”

Hmmmm, a short answer might look like this:

A new study (that is already full of fatal omissions and inaccuracies) has just come out in a legitimate peer-reviewed journal (International Journal of Climatology).

Remember, a study needs at least two things to really be important scientifically:

1. To come out in a legitimate peer-reviewed journal (this is true with this study).

2. This same study has to stand up under world-wide peer-review scrutiny for accuracy (this study has already failed this criterion).

A rundown of the study might be this:

Independent computer models (about 23 or so world-wide, I believe) generally show a warming of the surface, and even more in the troposphere in the tropics, due to increased water vapor. Warm the air up and it has more available water vapor (a greenhouse gas), so a “new greenhouse gas” comes into play where the air is warmed (ouch, what a simplification).

…the higher up you go the less water vapor you normally get because it is too cold to have available water vapor (the rate of condensation strongly exceeds the rate of evaporation)…unless you warm it and “suddenly water vapor just appears” where it was mostly absent before. However, it did already exist lower down because it was already warm and already contained water vapor because it was warm.

The study states that instruments do *not* show more warming the higher you go in the tropics…even though the models do.

Hence, independent world-wide computer models are wrong when they predict global warming in the next 100 years…

and secondly, because computer models base their future (and present) warming predictions on increasing greenhouse gases (and they “don’t get the warming correct now”), that greenhouse gases actually are not causing the warming we have been seeing for the last 100 years.

This means then, that mainstream science only predicts global warming based on computer simulations…so global warming is not a problem.

This then means that the warming (most of it) is part of a natural cycle (cosmic rays and solar wind) and is not man-made…

so we can burn all the oil, coal and gas that we want without guilt (and we certainly don’t have to regulate them)…and the IPCC (Intergovernmental Panel on Climate Change) is irrevocably wrong and can be ignored with impunity.

This also would mean that President Bush is “correct” to do nothing right now about global warming even though every other major world country is taking action (including the last holdout on Kyoto, Australia, I believe).

Anyway, here are some fatal problems with the study as I understand them that invalidate this study:

1. Even if the study were right…(which it is not) mainstream scientists use *three* methods to predict a global warming trend…not just climate computer models (which stand up extremely well for general projections by the way) under world-wide scrutiny…and have for all intents and purposes already correctly predicted the future-(Hansen 1988 in front of Congress and Pinatubo).

Now the three scientific methods for predicting the general future warming trend are:

1. Paleoclimate reconstructions which show that there is a direct correlation between carbon dioxide increasing and the warming that follows.

2. Current energy imbalance between the energy coming in at the top of the atmosphere (about 243 watts per square meter, W/m2) and the fewer W/m2 now leaving, due mostly to the driving force of CO2…ergo the Earth has to heat up.

3. Thirdly, climate computer simulations that have been tested against actual records before they actually happened….and were correct.

Now, on to actual problems with the paper:

Any real scientist, ahem, includes error bars in their projections because of possible variables. The study does not include them. If it did, or they were honest enough to, they would fit the real-life records (enough to overlap the two records) and be a non issue.

Secondly, this study is dishonest and does not show all the evidence available (v1.3 and v1.4)…boing…this paper has just failed peer-review. Science is an *open* process and you just don’t cherry pick or real scientists will correctly invalidate your results.

Third, with this omitted data, the computer models agree with the actual data (enough for it to be a non-issue).

Fourthly, the study does not honestly work out the error bars for the models themselves by giving them reasonable uncertainty for accounted-for unknowns such as El Nino (Enso) and other tropical events.

Now, however, there are honest unknowns with the models and how they (slightly) mismatch historical records…but they are accounted for in the big scheme of things…more work needs to be done…but it does not invalidate what the models are saying for general warming trends…umbrella anyone?

In other words, this study is a strawman and the authors know it.

Thanks for the great post, Gavin. What is John Christy’s story? I thought he was a serious scientist whose earlier work on the discrepancy between satellite data and climate models was respected at the time though, ultimately, shown to be wrong. His appearance in Martin Durkin’s film “The Great Global Warming Swindle” earlier this year and now this paper suggest he is following Richard Lindzen’s slide [edit]

I was curious about the tropical upper tropospheric trend, so I plotted this for my own satisfaction (something I urge everyone to do):

http://climatewatcher.blogspot.com/

While the stratospheric cooling and Arctic warming are evident, the modeled TUT maximum is not occurring.

Thank you, Gavin, for the original post and to you, Richard Ordway, for the layman’s version. I now think I understand, in broad terms, the main points of the discussion.

I wish we could leave quibbling over some minor discrepancies in scientific data alone for a while and concentrate our efforts and education of the public on the matter at hand. The latest NASA report says the Arctic will be free of summer sea ice within 5 years. I said within five years because the computer model of the Naval Postgraduate School in California did not factor in the two record years 2005 and 2006. That means in actuality the Arctic summer should be free of ice well within the 5 years, and completely ice free year round in around 2040 or earlier. This year Greenland also recorded its highest ever melt at 10%. An increasing number of top scientists now believe we have passed the tipping point, the point of no return. So stop quibbling over technicalities and let’s all concentrate and apply the blow torch to our respective leaders.

With Steven Kimball, “Amen, amen.” Thank you gentle folk all.

ref #12. I asked in another thread why CO2 is blamed for global warming if, e.g., Greenland was as warm 1000 yrs ago as it is today. Not only was the post not answered, it was not posted. Surely not because the question was too tricky?

[Response: No. It’s because it makes no sense logically. It’s equivalent to this: “Why are arsonists being blamed for recent California wild fires when there were wild fires before?” – gavin]

It seems to me that you are misquoting the paper.

You say “Now the claim has been greatly restricted in scope and concerns only .. the rate of warming”, but the abstract of the paper says “above 8 km, modelled and observed trends have opposite signs”.

[Response: Well that’s wrong too, even from the data in the paper. Since everyone agrees that the stratosphere is getting colder and the surface warmer, there must be a height at which the sign of the trend switches. With different estimates of the trends that height will vary – in some models it happens between 200mb and 100mb, just as in the Douglass et al obs (RAOBCORE has the switch between 200 and 100mb (v1.2) or 150mb to 100mb (v1.4)). In fact, none of the obs data sets nor the models have sign changes near 8km (~350 mb). – gavin]

I echo Steven Kimball’s thanks to both Gavin and Richard.

“Indeed, there is a clear physical reason why this is the case – the increase in water vapour as surface air temperature rises causes a change in the moist-adiabatic lapse rate (the decrease of temperature with height) such that the surface to mid-tropospheric gradient decreases with increasing temperature (i.e. it warms faster aloft). This is something seen in many observations and over many timescales, and is not something unique to climate models.”

I used to think lapse rate, WV and cloud feedbacks are bound together in the Tropics. For example, nearly all recent model intercomparisons show that AOGCMs poorly reproduce precipitation in 30°S-30°N, and they still diverge on cloud cover evolution at different levels of the vertical column. For my part, I don’t clearly understand how we can speculate on long term trends of tropospheric T without a good understanding of these convection-condensation-precipitation processes. Would it mean latent heat budget, deep/shallow convection and low-medium-high cloud cover are indifferent to the “hot spot” at 200-300 hPa? And do you think we presently have a satisfying quality of simulation for these domains?

Another question: why would solar forcing have the same signature as GHG forcing in the Tropics? Shouldn’t the first be much more dependent on cloud cover in ascending or subsiding areas (another way to put this: is the GISS simulation of the spatial distribution of solar forcing/feedback cloud-dependent)?

[Response: A simple and short explanation is that a warming would affect the rate of evaporation, air humidity and the Hadley cell. -rasmus]

I second all those thanking Richard Ordway. Much appreciated!!

Btw, it would be useful if there was a byline for the posts. I never know who’s writing what.

If there is so much uncertainty in the observed data and the model outputs that one cannot conclude that they are significantly different, then it also follows that one cannot conclude that the models are accurately representing the real world.

A further consideration is changes in stratospheric ozone in the tropics. This affects the heat balance of the uppermost troposphere. See P. M. Forster et al., Effects of ozone cooling in the tropical lower stratosphere and upper troposphere, Geophys. Res. Lett., Vol. 34, No. 23, L23813, 10.1029/2007GL031994, which appeared on AGU’s website today.

Please correct the spelling of the name Douglass. (This helps when doing web searches.)

[Response: … and it’s just better. My bad, apologies all round – gavin]

#18. Thanks v much for the response. (oh no) It’s not illogical. The key issue is what causes temperatures to rise. Your view is man-made CO2 emissions. But temperatures rose without man-made CO2 emissions 1000 years ago. My original post asked what, therefore, caused temperature to rise 1000 years ago.

Your arsonist analogy works against you. Not all wildfires are caused by arsonists so some are caused by other means. Do you agree, therefore that not all warming events are caused by man-made CO2 emissions in which case what other causes are there? What was it 1000 years ago, why couldn’t it be the same thing now and why this time round are you sure it is CO2 emissions?

[Response: Hardly. I’m not sure where you get your information from but I’ve never suggested that warming events in the past were all related to CO2. Previous events have been tied to many causes – solar variation, Milankovitch, plate tectonics and, yes, greenhouse gases. The issue is what is happening now – solar is not changing, Milankovitch and plate tectonics are too slow, ocean circulation appears relatively stable etc. etc. and all the fingerprints that we can detect point to greenhouse gases (strat cooling etc.). Each case is separate – and the ‘arsonist’ this time is pretty clear. – gavin]

Thanks for your extensive critique. I heard Singer pitch this stuff last month, and he wasn’t very convincing even to a gaggle of undergrads.

The poor old Pope has somehow been inspired, during the Bali conference, to announce that global warming is all “environmentalist dogma” that puts “trees and animals” above people, and is not science. The irony inherent in his “judgment” is truly stupendous.

link

[Response: No he didn’t. Check out this for the real story, and the actual text of the Pope’s statement. – gavin]

Could you folks speculate on the nature of the peer review process for the International Journal of Climatology? Is it a top-tier journal, or a lesser one? In my field, we all know that some peer-reviewed journals can basically be ignored because the quality of the peer-review process is poor, and the journals are so short of material that they often fast-track papers to fill their issues. Of course, outside the field, this is unseen, so people have no ability to tell the better journals from the poorer ones.

The critique here seems pretty compelling, and obvious once stated. But is it widely known in the field? Is there a reason why the peer-review process would have missed it?

If you assume that comparing with this set of data is the only way we have to test models. An assumption that would be dead wrong …

From the essay:

Given the complexity of the climate system, it seems rather likely that there will always be some areas of poor fit between the models and the evidence. However, because climate models are not an instance of curve-fitting, but are instead built upon a solid (albeit cumulative) foundation of physics, the incorporation of physics to describe processes in one area tends to lead to a progressive tightening of the fit in others.

As such, the areas where the fit is still relatively poor will tend to become smaller in magnitude, narrower in scope, and require a more specialized knowledge simply in order to have a vague idea of what they are. This would seem to be a case in point.

As such, if one’s job depended merely upon pointing out that such areas exist, given the existence of researchers who will in essence be doing this work for you, I believe one would have a great deal of job security for quite some time to come. However, if it also depended upon making such things seem make-or-break, it would seem the bell has begun to toll.

Why does the plot included above that shows the results of the solar forcing look so different from the plot of solar forcing included in AR4, p675 fig 9.1.a?

[Response: Scale of the forcing. Figure 9.1a is from the solar forcing estimated over this century from solar (a few tenths of W/m2). The figure above is for a 2% increase in solar, which is comparable to the impact of 2xCO2 and so the global mean change in SAT in the two figures above is comparable (around 3 to 4 deg C). – gavin]

Re: #18

Another way to put it is that your initial question seems to be premised on the idea that *only* CO2 can cause warming. This is not the case, and in the past we know it’s naturally happened. However, in the last few centuries, we have good evidence that the natural mechanisms are not contributing as much to warming as the man-made inputs. This is in fact what the IPCC sections say.

A similar objection is raised by stating that various other planets are warming, so why do we blame ourselves, since (as Sen. Thompson naively put it) there are no greenhouse gas emitting industrialists on Pluto? The obvious point is that these all have the sun in common. However, the output of the sun has not varied nearly enough to cause our warming, and each of the other planets has wildly different atmospheres and…uh…planetologies. So again, there’s no one cause we can point to that is common across all these scenarios. Each is different, so Earth’s warming is unrelated to other planets’ warming.

Someone will correct if I got anything wrong, I’m sure. :-)

No. 28 wrote:

“If you assume that comparing with this set of data is the only way we have to test models. An assumption that would be dead wrong …”

Okay, what are the other ways to test the models?

[Response: I think the key was ‘this set of data’ – there’s lots of other data that does not have either as much noise or as much uncertainty. – gavin]

1) I took a look at Figure 9.1 in the latest IPCC report, which shows the zonal mean atmospheric temperature change from 1890 to 1999 (simulated by the PCM model). That figure shows a significant difference in the magnitude of the trend at 10 km over the tropics between solar forcing and greenhouse gas forcing. This is at odds with your first two graphics. Are you saying that the IPCC graphic is wrong?

2) Given that your last graphic is correct (RAOBCORE v1.4), it still shows not much difference between the surface and 10 km. I’d compare it to figure 9.1 a and c, but I am likely comparing apples and oranges. But it seems to me that it is closer to figure 9.1 a.

3) Bottom line, I think this comparison is highly flawed. Who is to say that the models have all the science in them needed to put the warming in the right place? Given that H2O is the dominant greenhouse gas in the tropics, doubling CO2 levels will not change temperatures much there. It is in places where water vapor is scarce that the effects of CO2 would have the most impact.

It is amazing to see how this paper is already being “spun” by the global warming denialist blogosphere. While RealClimate has called into question the soundness of the paper’s quite narrow conclusions of discrepancy between model predictions and measurements of the relative rate of warming of different levels of the atmosphere over the tropics, this paper is being touted by the deniers as showing that the models are wrong to predict any warming at all, and that predictions of future warming and climate change can be entirely discounted. It is really a case study in the propagandistic distortion of science (which apparently was not particularly good science to begin with).

Re #32: 1) see Gavin’s response to #29 (probably crossed your post)

3) H2O may be the dominant greenhouse gas, but you missed that it is a feedback, not a forcing. Water vapour partial pressure is an exponential function of temperature: it just amplifies the CO2 effect — more or less independent of where you are (It requires careful spectral analysis to say so — part of all model codes).
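The near-exponential dependence of saturation vapour pressure on temperature mentioned above can be illustrated with a minimal sketch. It assumes one commonly used Magnus-type approximation; the coefficients and the function names are not from this thread and are chosen only for illustration, and a real model code would use its own formulation.

```python
import math


def saturation_vapour_pressure_hpa(temp_c: float) -> float:
    """Approximate saturation vapour pressure over liquid water, in hPa.

    Assumption: Magnus-type fit with coefficients 6.1094, 17.625, 243.04
    (temperature in degrees Celsius). Illustrative only.
    """
    return 6.1094 * math.exp(17.625 * temp_c / (temp_c + 243.04))


def fractional_increase_per_degree(temp_c: float) -> float:
    """Fractional rise in saturation vapour pressure for 1 degree C of warming."""
    warmer = saturation_vapour_pressure_hpa(temp_c + 1.0)
    cooler = saturation_vapour_pressure_hpa(temp_c)
    return warmer / cooler - 1.0


if __name__ == "__main__":
    for t in (0.0, 15.0, 30.0):
        es = saturation_vapour_pressure_hpa(t)
        rise = 100.0 * fractional_increase_per_degree(t)
        print(f"{t:5.1f} C: e_s = {es:6.2f} hPa, +{rise:.1f}% per degree")
```

Under this approximation the saturation vapour pressure rises by several percent per degree across typical surface temperatures, which is why water vapour acts as an amplifier of whatever warming is imposed.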

This is a post at an Australian blog, Jennifer Marohasy, where a co-author has posted what is reported as a quote from John Christy:

“My Environmental sciences colleague contacted JC.

Response to RC:

The (treatment of) errors on all datasets were discussed in the text.

ALSO

To quote from realclimate.org “The sharp eyed among you will notice that the satellite estimates (even UAH) – which are basically weighted means of the vertical temperature profiles – are also apparently inconsistent with the selected radiosonde estimates (you can’t get a weighted mean trend larger than any of the individual level trends!).” This was written by someone of significant inexperience. The weighting functions include the surface, and the sondes align almost exactly with UAH data … the weights are proportional, depending most on 850-400 but use all from the surface to the stratosphere. The quote is simply false.

[Response: JC is of course welcome to point it out to us ourselves. I will amend the post accordingly. If he would be so kind as to mention whether the RSS and UMD changes are also in line with the sondes, I will be more specific as well. We have no wish to add to the sum total of misleading statements. – gavin]
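The arithmetic behind the disputed sentence can be sketched as follows. This is an illustration only: it assumes non-negative weights, and the level trends and weights below are hypothetical numbers, not actual satellite weighting functions or radiosonde data. Under that assumption a weighted mean cannot exceed the largest trend among the levels it includes, which is why it matters whether the surface is counted as one of those levels.

```python
def weighted_mean_trend(level_trends, weights):
    """Weighted mean of per-level trends; weights are normalised to sum to 1.

    Assumption: all weights are non-negative, so the result is bounded by
    the smallest and largest individual level trends.
    """
    if len(level_trends) != len(weights):
        raise ValueError("need one weight per level")
    if any(w < 0 for w in weights):
        raise ValueError("this sketch assumes non-negative weights")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must not all be zero")
    return sum(t * w for t, w in zip(level_trends, weights)) / total


# Hypothetical trends (deg C/decade), ordered from the surface up to the
# lower stratosphere, with hypothetical weights for each level.
trends = [0.12, 0.10, 0.08, 0.06, -0.20]
weights = [0.10, 0.35, 0.35, 0.15, 0.05]

mean_trend = weighted_mean_trend(trends, weights)
print(f"weighted mean trend: {mean_trend:.3f} deg C/decade")
print(f"range of level trends: {min(trends):.2f} to {max(trends):.2f}")
```

If the largest trend in the profile is at the surface, a layer-mean that includes the surface can exceed every trend aloft while still respecting this bound.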

ALSO

The LT trend for RAOBCORE v1.4 is still considerably less than the models. However, as I stated before, v1.4 relies very strongly on the first guess of the ECMWF ERA-40 reanalyses that experienced a sudden warm shift from 300 to 100 hPa with a change in the processing stream (I think stream #4 ?). Consistent with this was a sudden increase in precipitation (I think about 10%), a sudden increase in upper level divergence (consistent with the increase in precipitation) and a sudden rise in temperature. We have a paper in review on this, but we were not the first to report it. I think Uppala was the first. v1.2 had less dependence on the ERA-40 forecast model, and so was truer to the observations. There is more on this, but what I said should suffice.

[Response: The point was made above that we are not trying to demonstrate that v1.4 or v1.2 is better, merely that there are quantitative and systematic uncertainties in the observational data set that were not reported in this paper. If JC wanted to make the point that v1.2 was better, then why was the existence of 1.4 or 1.3 not even mentioned? At minimum, this is regrettable. – gavin]

Remember, in the paper, the fundamental question we needed to answer before the comparison was “what would models show for the upper air trends, if they did get the surface trend correct?” We found that through the averaging of 67 runs (RealClimate seems to miss this point … we wanted to compare apples with apples). Then the comparison could be made with the real world.

John C.

[Response: That is not the test they made. Though I did do it, in response to Viento (comment #6) – gavin]

John is quoted as saying that one of the items in your post is false. I am not sure if you have communicated with Christy about the paper; however, as a layman I cannot tell what is the truth here. Is there any way you can explain what you said in your post so that I would be able to understand?

Gavin:

Thank you for responding to me in number 31. Can you give us a link to other data sets on tropical tropospheric temperatures that have less noise and uncertainty which corroborate the models?

Thanks again for taking the time to respond.

[Response: I wish I could. The Thorne et al paper suggests that it will become clearer in time – another 5 to 10 years should do it. In the meantime, there are lots of signals (global SAT, Arctic sea ice, strat cooling, Hadley cell expansion (perhaps)….) with much better signal-to-noise ratios… – gavin]

#34 – So if the signatures of solar and greenhouse gas forcing are the same over the last 120 years, how can we be sure that the current warming is mostly greenhouse gas induced? The point of Figure 9.1a was to show that there was a different signature between the various forcings and observations should point to the culprit.

Of course, the IPCC report admits that solar influence on our climate is poorly understood, so who is to say that the model-derived zonally averaged temperature trends in Figure 9.1a are accurate? Also, who is to say that the models have water vapor/cloud feedbacks correct too? The IPCC rates the understanding of these processes as low, so it could be possible that the models are putting the warming in the wrong place for that reason. Or the modelers could be lucky with their parameterization and got it right before the science came in.

[Response: Perhaps you misunderstand. Fig 9.1 gives the patterns associated with the forcings for 20th Century – solar is much smaller than CO2 and the other GHGs. The figure above is what happens if the amount of forcing is similar (you get a similar pattern). There’s no inconsistency there. The big difference between solar and CO2 forcing is in the stratosphere – where CO2 causes cooling – just as is seen in the real world. – gavin]

Was this paper peer-reviewed?

RE:DOES THIS BLOG accept comments which disagree with your postings? A simple yes or no answer would have been fine. Your oblique response does not in fact answer my question.

Your response, “Try reading them to see. We do have a moderation policy, but stick to the rules, and you’ll be fine. – gavin”, is in fact not a clear yes or no. “Try reading them to see” only presents to me those comments which have been approved by you for posting. Among other things, this ignores the Base Rate. That is, of 1000 comments that you receive which disagree with you, how many do you accept? Then, compare that with 1000 comments that you receive which agree with you. How many of those do you accept?

This issue is critically important for all websites and blogs which discuss global warming, given the highly charged atmosphere, and the proneness to biased views.

Blogs without disinterested and balanced discussions and comments do not qualify as true discourse.

Gavin – thank you for your reply – this clears it up for me. I am cross posting it back to the original where I hope it clears it up for a few people there as well.

RE #26, I read the Pope’s statement, and felt it could have been stronger, but I think the Pope might be surrounded by some anti-environmentalists.

Here’s my transcription of part of “Rome Reports” segment from 10/10/07, “Is Pope Benedict the First Eco-Pope?” aired on EWTN, and prominently featured Monckton as their climate expert (it can be viewed just past the middle of the video at http://www.romereports.com/index.php?lnk=750&id=461 ):

In other words, “some Catholic scholars” are calling the Pope a liar, since he has on several occasions been talking about the dangers of global warming and our need to mitigate it.

Furthermore Pope Benedict XVI is NOT the first eco-pope. John Paul II was, especially when he said in “Peace with All Creation” (1990), “Today the ecological crisis has assumed such proportions as to be the responsibility of everyone…The…’greenhouse effect’ has now reached crisis proportions…”

Back to topic, the devil seems to be in the details not in the general overview of climate change science (and working his tail off close to the Vatican).

Hi there. There is a curious conversation over at Desmogblog. John Holliday has posted a large comment there responding to this thread here, and stating that he doubts the moderators at RealClimate would allow his question to be posted.

So I thought I would ask the moderators here if they had blocked Holliday’s post from this thread, or if he did not actually try to comment here.

It looks to me like his purpose is more to smear RC than to argue about the Douglass paper.

http://www.desmogblog.com/singers-deniers-misrepresenting-new-climatology-journal-article#comment-140321

Alan K, try this:

http://en.wikipedia.org/wiki/Non_sequitur_%28logic%29

1) Previous “Natural Events” occurred

2) Warming event is occurring now

3) therefore, today’s trend is natural (even though we put in a new variable not seen in 4.6 billion years)

anyone?

Also, VJ (#37), you are correct- scientific sounding, content-free.

Thanks, Gavin. The thing I don’t get about Douglass et al. (and your article, for that matter) is the way of treating different models as if this was a democracy. My understanding was that GCMs were different approaches to simulating parts of the climate system. I thought a bunch of scientists and programmers put their efforts into creating a celled representation of the atmosphere, oceans etc. and tried to come up with formulas and parameters which, to their best knowledge, mirror what really happens. I guess each of these more or less independent efforts has its pros and cons and some perform nicely in areas where others fail and vice versa. If we mix these together in a single mean and give it a wide (2 sigma) range, naturally everything this side of a new ice age will somehow fall into the modelled range. But even with your 2 sigma graph, most of the other, non-RAOBCORE v1.4 observations would fall out of the range (although a proper deviation for the observations would probably overlap). Wouldn’t the best approach be focusing on individual models and trying to find out why they come up with tropospheric trends that don’t seem to match the observations? Or are you saying that the observations themselves or Douglass et al.’s trending is deeply flawed?

[Response: Good point. There are two classes of uncertainty in models – one is the systematic bias in any particular metric due to a misrepresentation of the physics etc, the other is uncertainty related to weather (the noise). When you average them together (as in this case), you reduce the uncertainty related to noise considerably. You even reduce the systematic biases somewhat because it turns out that this is also somewhat randomly distributed (i.e. the mean climatology of all the models is a better fit to the real climatology than any one of them). However, in this particular example, we have a great deal of weather noise over this short interval, therefore the spread of the runs due to weather is key. How we distinguish weather-related noise from physics-related noise requires longer time periods, but could perhaps be done. With respect to RAOBCORE, I don’t have a position on which analysis is best (same with the UAH or RSS differences). However, similarly reasonable procedures have come up with very different trends implying that the systematic uncertainty in the obs is at least as large as the weather-related uncertainty. That means it’s hard to come to definitive conclusions (or should be at least). – gavin]
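The difference between the spread of individual runs and the uncertainty of their average can be made concrete with a small simulation. This is a sketch under stated assumptions: the forced trend, the size of the weather noise, and the function names are all hypothetical, and the noise is taken to be independent and Gaussian, which real model runs need not be. The run count of 67 is borrowed from the figure quoted earlier in the thread.

```python
import math
import random
import statistics


def simulate_run_trends(n_runs, forced_trend, weather_sd, seed=0):
    """Synthetic per-run trends: a common forced trend plus random weather noise."""
    rng = random.Random(seed)
    return [forced_trend + rng.gauss(0.0, weather_sd) for _ in range(n_runs)]


def spread_and_standard_error(trends):
    """Return (spread of individual runs, standard error of the ensemble mean)."""
    spread = statistics.stdev(trends)
    return spread, spread / math.sqrt(len(trends))


# Hypothetical numbers: 67 runs, 0.2 deg C/decade forced trend, 0.1 noise.
trends = simulate_run_trends(n_runs=67, forced_trend=0.2, weather_sd=0.1)
spread, sem = spread_and_standard_error(trends)

print(f"ensemble mean trend:        {statistics.mean(trends):.3f}")
print(f"spread of individual runs:  {spread:.3f}")
print(f"standard error of the mean: {sem:.3f}")
```

Averaging shrinks the uncertainty of the mean by roughly the square root of the number of runs, but the real world is a single realisation with its own weather noise, so the spread of the runs is the more relevant yardstick when comparing against observations over a short interval.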

RE #13, thanks, Richard. That really helped.

However, I never really take their nipping around the edges in an effort to disprove AGW too seriously for several reasons:

(1) In this case even if they were correct and the models failed to predict or match reality (which, according to this post, has not been adequately established, because we’re still in overlapping data and model confidence intervals), it could just as well mean that AGW stands and the modelers have failed to include some less well understood or unquantifiable earth system variable into the models, or there are other unknowns within our weather/climate/earth systems, or some noise or chaos or catastrophe (whose equation has not been found yet) thing.

This is sort of how “regular” science works (assuming no hidden agenda, only truth-seeking); there will always be outlier skeptics considering alternative explanations, and when they find some prima facie basis for one, will investigate. Just as most mutant genes never express as phenotypes, and if they do, most never succeed in becoming dominant in a gene pool, so there are a variety of ideas or “ideotypes” in an idea pool, most of which never pan out into actual cultural (widely shared) knowledge and behavior based on that knowledge.

In this case, the vast preponderance of evidence and theory (such as long established basic physics) is on the side of AGW, so there would have to be a serious paradigm shift based on some new physics, a cooling trend (with increasing GHG levels and decreasing aerosol effect), and that they had failed to detect the extreme increase in solar irradiance to dislodge AGW theory.

(2) Prudence requires us to mitigate global warming, even if we are not sure it is being caused by human emissions (and we are sure, and this new skeptical study does not reduce that high level of certainty). This is too serious a threat to take any risks, even if AGW were brought into strong doubt by new findings or theories.

*************

RE #40, VJ, RC fails to post my entries now and then (sometimes I think unfairly), but I find if I reword it and make it more polite or more on-topic, they do post it. So it may take several tries, esp if it has invective and ad hominem attacks, or is well outside of science (like too much on religion or economics), or too off-topic.

As for disputing their science on a scientific basis — I think RC scientists love to engage at that level. I’m just not savvy enough to engage them on that level.

I don’t understand your final chart. Why did you leave out the other 3 temperature series (HadAT2, IGRA and RATPAC)? Does your analysis not leave RAOBCORE v1.4 as an observational outlier, especially at 200-300 hPa by around 0.3 degrees?

[Response: See above. The point was simply to demonstrate the systematic uncertainty associated with the obs. Better people than me are looking into what the reality was. – gavin]