This month’s open thread. Try to stick to climate topics.

Climate Science

Unforced variations: Jun 2025

Predicted Arctic sea ice trends over time

Over multiple generations of CMIP models, Arctic sea ice trend predictions have gone from much too stable to about right. Why?

The most recent climate status

The Arctic Council’s Arctic Monitoring and Assessment Programme (AMAP) recently released the Summary for Policymakers of its Arctic Climate Change Update 2024.

Unforced variations: May 2025

This month’s open thread. Note that the Nenana Ice challenge break up date graph has been updated, and the Yukon river ice break up is imminent (or may have already happened! [Update – it already had]). Please stay focused on climate issues.

Unforced Variations: Apr 2025

WMO: Update on 2023/4 Anomalies

The WMO released its (now) annual state of the climate report this week. As well as the (now) standard set of graphs related to increasing greenhouse gas concentrations, rising temperatures, reducing glacier mass, etc., Zeke Hausfather and I wrote up a short synthesis on the contributions to recent temperature anomalies.

Andean glaciers have shrunk more than ever before in the entire Holocene

Glaciers are important indicators of climate change. A recent study published in the leading journal Science shows that glaciers in the tropical Andes have now retreated further than at any other time in the entire Holocene – which covers the whole history of human civilisation since the invention of agriculture. These findings are likely to resonate beyond the scientific community, as they strongly support the lawsuit filed by a Peruvian farmer against the energy company RWE, which has returned to court this week.

Paleoclimatologists can determine how long bedrock beneath a glacier has been covered by ice using measurements of specific isotopes. When rock surfaces are exposed, isotopes such as carbon-14 and beryllium-10 form due to bombardment by cosmic radiation. If, however, the rock is covered by an ice sheet, it is shielded from this radiation, and these unstable isotopes gradually disappear through radioactive decay (with half-lives of 5,700 and 1.4 million years, respectively). This method, known as cosmogenic radionuclide dating, has been well-established for decades. I first encountered it myself 23 years ago during an excursion with glacier experts to New Zealand’s Southern Alps.
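To make the decay arithmetic concrete, here is a minimal sketch using only the half-lives quoted above. It assumes pure radioactive decay while the rock is shielded and ignores everything else that real cosmogenic dating has to account for (production during exposure, erosion, partial shielding). The function names `remaining_fraction` and `shielding_time` are illustrative and not taken from the study.

```python
import math

# Half-lives as quoted in the text, in years.
HALF_LIFE_C14 = 5_700
HALF_LIFE_BE10 = 1_400_000


def remaining_fraction(years_shielded: float, half_life: float) -> float:
    """Fraction of an initial isotope inventory left after shielding.

    Assumes pure radioactive decay with no new production under the ice.
    """
    return 0.5 ** (years_shielded / half_life)


def shielding_time(fraction_left: float, half_life: float) -> float:
    """Years of shielding needed to decay down to `fraction_left`."""
    return -half_life * math.log2(fraction_left)


if __name__ == "__main__":
    holocene = 11_700  # years, the length of the Holocene used in the text
    for name, half_life in [("carbon-14", HALF_LIFE_C14),
                            ("beryllium-10", HALF_LIFE_BE10)]:
        left = remaining_fraction(holocene, half_life)
        print(f"{name}: {left:.1%} of the inventory left after {holocene} years")
```

Under these assumptions, carbon-14 drops to roughly a quarter of its starting inventory over the length of the Holocene, while beryllium-10 barely decays at all on that timescale. The two isotopes therefore respond very differently to the same burial history, which is one reason they are useful in combination.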

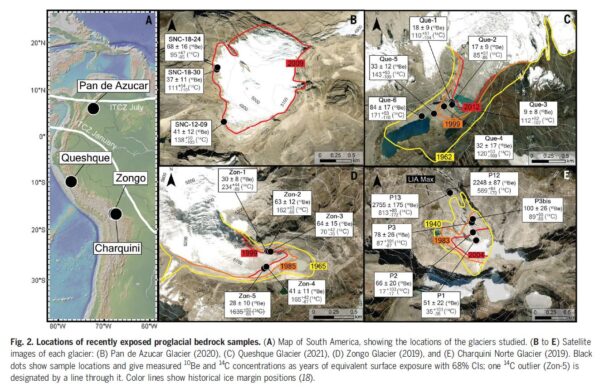

The new study applied this method to examine several glaciers in the tropical Andes (see Fig. 1).

In rock samples collected at the edges of the glaciers, researchers found isotope concentrations close to zero. From this, they conclude that these rocks must have remained covered by ice throughout the entire Holocene, shielding them from cosmic radiation. This indicates that these glaciers are very likely smaller today than at any point in at least the last 11,700 years.

This finding aligns with several previous studies showing that temperatures in the tropical Andes have never been warmer during the Holocene than they are today. For instance, reconstructions of the glacier margin of the Quelccaya Ice Cap demonstrate that it has not been smaller than today at any time in at least the last 7,000 years. Temperature reconstructions based on proxy data further support this conclusion.

Global Warming Means Global Glacier Retreat

The Andes are not an exception: according to current research, global average temperatures today are very likely higher than at any other point during the entire Holocene. Given that an ice age lasted for more than 100,000 years before the Holocene, today’s temperatures are probably the highest experienced in about 120,000 years. This unprecedented warming, which began in the 19th century and has so far reached around 1.3–1.4°C, is almost entirely driven by human activity – primarily the burning of fossil fuels. According to the Intergovernmental Panel on Climate Change (IPCC), natural factors have contributed very little to recent warming, probably even having a slightly cooling effect, due to declining solar activity since the mid-20th century (a fact reflected in the title of former RWE manager Fritz Vahrenholt’s book, Die kalte Sonne – The Cold Sun).

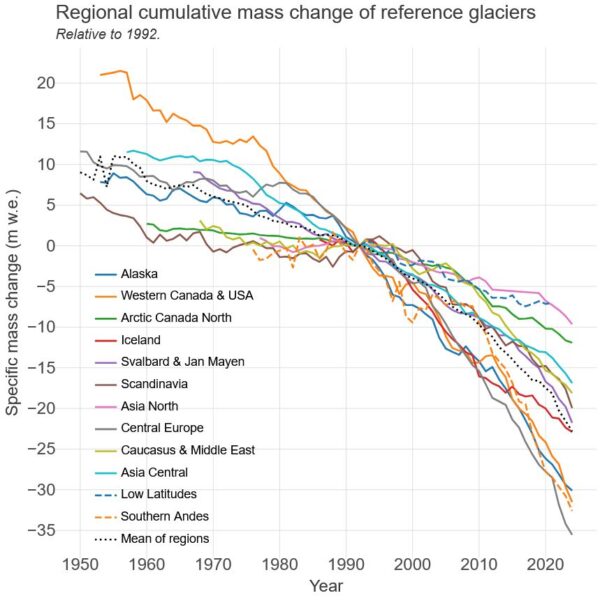

As a result, glaciers worldwide continue to lose mass (see Figure 2). In Germany, only four glaciers remain, following the disappearance of the Southern Schneeferner glacier in September 2022. Soon, there will be no glaciers left in Germany at all.

Implications for the RWE Case

The RWE case addresses, among other things, whether global warming caused by CO₂ emissions is responsible for the severe glacier melt, the substantial retreat of the glacier by approximately 1.5 km over the past 140 years and the thawing of permafrost above the city of Huaraz in Peru. A 2021 attribution study published in the respected journal Nature Geoscience has already conclusively demonstrated this connection; however, RWE appears to continue challenging these findings.

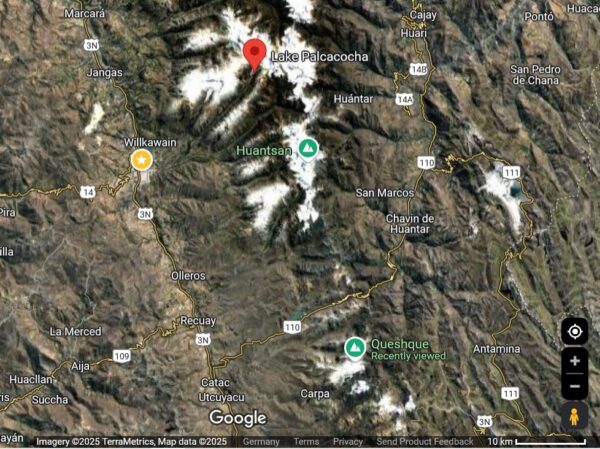

In this context, the new data from Gorin et al. are particularly relevant. The Queshque Glacier, now smaller than at any other time in at least the last 11,700 years, is located only 40 km from Huaraz, in the same mountain range as Lake Palcacocha (see Fig. 3).

It is highly likely that local climate changes across this area differ only minimally, if at all. Although average climate conditions can vary over short distances due to local topography, climate warming typically has a correlation radius of more than 1,000 km. Therefore, there is no meaningful difference in climate change effects between Queshque Glacier and Lake Palcacocha.

This region is already experiencing the most significant climate warming in the history of human civilisation. It will undoubtedly continue until the global economy achieves climate neutrality, that is, essentially net-zero CO₂ emissions.

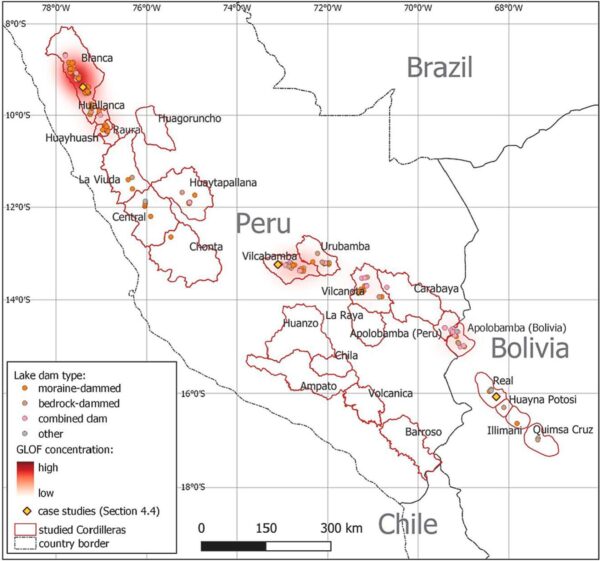

In the RWE trial, the central issue will be whether, and to what extent, the city of Huaraz and the plaintiff would be affected by a glacier flood. A systematic analysis of past glacial lake outburst floods (GLOFs) in the region has examined 160 such events based on satellite imagery. The findings clearly identify the Andes around Huaraz as a hotspot for this risk (see Fig. 4).

Huaraz is located at 9.5° south latitude within the high-risk zone marked in red. Source: Emmer et al. 2022.

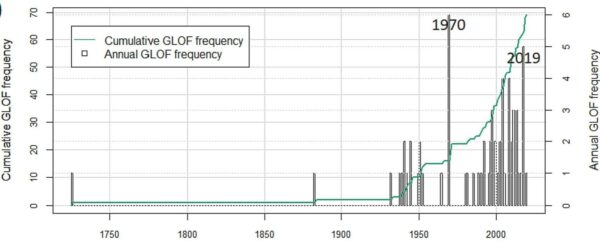

Additionally, this study shows that the frequency of such floods has increased significantly since 1980 (see Fig. 5). Before 1980, there was only one year with more than two recorded GLOFs: 1970, due to a severe earthquake. Now, however, years with 3, 4 or even 5 glacial lake outbursts occur repeatedly.

One thing is clear: given the existing research, it would be absurd to assume that the risk of a Lake Palcacocha outburst could be calculated based solely on historical data, without explicitly accounting for global warming caused by fossil fuels. Anyone who suggests that climate change is not happening in Huaraz – that there is no human fingerprint, and therefore no connection to RWE’s share of CO₂ emissions – may have their reasons for doing so. But the evidence clearly shows otherwise.

Climate change in Africa

While there have been some recent setbacks within science and climate research and disturbing news about NOAA, there are also continuing efforts to respond to climate change. During my travels to Mozambique and Ghana, I could sense a real appreciation for knowledge, and an eagerness to learn how to calculate risks connected to climate change.

We need NOAA now more than ever

Guest commentary by Robert Hart, Kerry Emanuel, & Lance Bosart

The National Weather Service (NWS) and its parent agency, the National Oceanic and Atmospheric Administration (NOAA), deliver remarkable value to taxpayers. This efficiency can be demonstrated by their enormous return on investment. For example, the NWS costs only several dollars per citizen to operate each year, yet yields an estimated 10-100 times larger financial return that includes: improved citizen preparedness, improved transportation efficiency and safety, increased private sector profits, improved disaster prevention and mitigation, and impressive scientific research innovation, to which other related federal agencies, the private sector, and the academic research community also contribute significantly.

Recent NWS initiatives have even more directly connected weather and ocean observations and forecasts to emergency preparation and public impact. To quote a 2019 study referenced below, “Partnership with the NWS has revolutionized this Emergency Management community from one that reacts to events to one that proactively prepares and stays ahead of the extreme events.” The societal benefits of reasonably predicting the future cannot be overstated, and such prediction and resulting benefits were unimaginable only 75 years ago.

Critical taxpayer-funded investments over the past decades have led to greatly improved weather forecast models, observations from the ocean, ground, aircraft, and space, and theoretical understanding through scientific research. These all have had an enormous impact on lives and property. The forecasts and associated critical watches and warnings we see every day on television, the internet, or phone apps could not be possible without NOAA and the NWS. It is estimated that the tax revenue generated from the private sector using NOAA data and services easily pays for the entire cost of the NWS.

Those who remember weather forecasts from the 1970s through 1980s can appreciate these dramatic evolutionary improvements given how inferior those forecasts were compared to today. Going further back, landfalling hurricanes in the first half of that century often came with no warning. If you read newspaper front pages from the mornings of September 7, 1900, or September 21, 1938, you will find there is no mention of the historic and catastrophic events about to unfold only hours later. This would be unthinkable today given the scientific investments we have paid for.

These massive improvements extend beyond hurricane (and also snowstorm) forecasting and preparedness. Tornado warning lead time has also improved markedly during the same time period. Casualty rates from tornadoes have not increased despite a very rapid increase in population. At minimum, hundreds of thousands of people are alive today who would not be without our investments in NOAA and NWS.

The advent of skillful weather forecasting, along with the increased preparedness it allows, remains a landmark achievement of not only this country but of the human race. There are few other fields in the sciences where skillful prediction not only has had immense impact on our society, but is even possible. We should be extraordinarily proud of this achievement.

The current expulsion of primarily younger NOAA employees without cause and with disturbingly short notice is cruel to them personally and professionally. The youngest employees are the future of any organization, government or otherwise, and bring with them unique energy, skills, and ideas. Every government organization should strive to become more efficient, and must be subjected to careful oversight, since taxpayer funding is precious and entrusted to the government by the people. However, the instrument of wise oversight is the scalpel, not the chainsaw. The recent seemingly arbitrary and capricious reductions, notably made without Congressional oversight, are seriously jeopardizing the future of the country and more generally the property and lives of hundreds of millions of tax-paying families who have invested in these truly remarkable achievements over many decades.

References:

“Evolving the National Weather Service to Build a Weather-Ready Nation: Connecting Observations, Forecasts, and Warnings to Decision-Makers through Impact-Based Decision Support Services”, Bulletin of the American Meteorological Society, October 2019.

“Using the National Weather Service’s impact-based decision support services to prepare for extreme winter storms”, Journal of Emergency Management, November/December 2019.

“Impact-Based Decision Support Services (IDSS) and Socioeconomic Impacts of Winter Storms”, Bulletin of the American Meteorological Society, May 2020.

“Communicating Forecast Uncertainty (CoFU) 2: Replication and Extension of a Survey of the US Public’s Sources, Perceptions, Uses, and Values for Weather Information”, American Meteorological Society Policy Program Study, September 2024.

“The Social Value of Hurricane Forecasts”, SSRN Journal, December 2024.