Two and a half years ago, a paper was published in Nature purporting to be a real prediction of how global temperatures would develop, based on a method for initialising the ocean state using temperature observations (Keenlyside et al, 2008) (K08). In the subsequent period, this paper has been highly cited, very often in a misleading way by contrarians (for instance, Lindzen misrepresents it on a regular basis). But what of the paper’s actual claims, how are they holding up?

At the time K08 was published, we wrote two posts on the topic pointing out that a) the methodology was not very mature (and in our opinion, not likely to work), and b) that the temperature predictions being made (for the 10 year overlapping periods Nov 2000-Oct 2010, Nov 2005-Oct 2015 etc.), were very unlikely to come true. These critiques were framed as a bet to see whether the authors were serious about their predictions, similar in conception to other bets that have been offered on climate related matters. This offer was studiously ignored by the scientists involved, who may have thought the whole exercise was beneath them. Oh well.

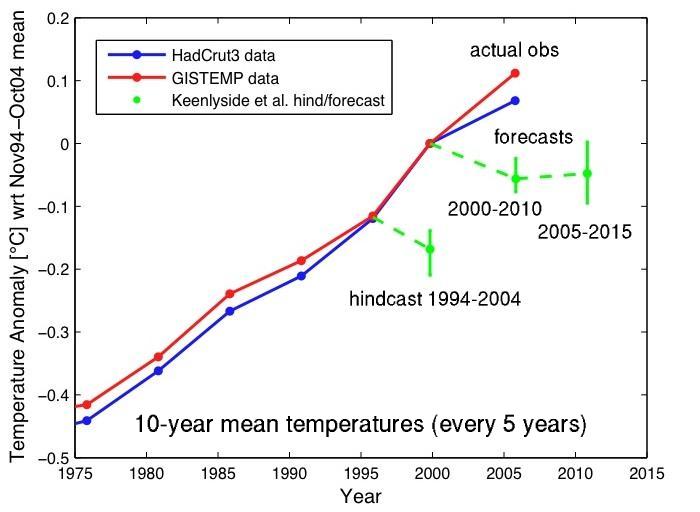

However, with the publication of the October 2010 temperatures from HadCRUT, the first prediction period has now ended, and so the predictions can be assessed. Looking first at the global mean temperatures…

we can see clearly that while K08 projected 0.06ºC cooling, the temperature record from HadCRUT (which was the basis of the bet) shows 0.07ºC warming (using GISTEMP, it is 0.11ºC). As in K08 this refers to T(Nov 2000:Oct 2010) as compared to T(Nov 1994:Oct 2004). For reference, the IPCC AR4 ensemble gives 0.129±0.075ºC (1σ) (and a range of -0.07 to 0.30ºC related to internal variability in the simulations) (using full annual means).
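For readers who want to reproduce the metric, here is a minimal sketch of the calculation described above: the mean over Nov 2000 to Oct 2010 minus the mean over Nov 1994 to Oct 2004. The function names and the synthetic series are illustrative assumptions; no real HadCRUT or GISTEMP values are included, so the printed number only checks the arithmetic.

```python
from statistics import mean


def window_mean(monthly, start, end):
    """Mean of monthly anomalies whose (year, month) key lies in [start, end]."""
    values = [v for key, v in monthly.items() if start <= key <= end]
    return mean(values)


def decadal_change(monthly):
    """T(Nov 2000:Oct 2010) minus T(Nov 1994:Oct 2004), the comparison used above."""
    later = window_mean(monthly, (2000, 11), (2010, 10))
    earlier = window_mean(monthly, (1994, 11), (2004, 10))
    return later - earlier


# Synthetic placeholder series (NOT observations): a steady 0.02 degC/yr trend.
synthetic = {
    (year, month): 0.02 * (year + (month - 1) / 12 - 1994)
    for year in range(1994, 2011)
    for month in range(1, 13)
}

# The two windows start six years apart, so a 0.02 degC/yr trend gives 0.12 degC.
print(round(decadal_change(synthetic), 3))
```

To apply this to a real record, replace `synthetic` with a dictionary of monthly anomalies keyed by `(year, month)`.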

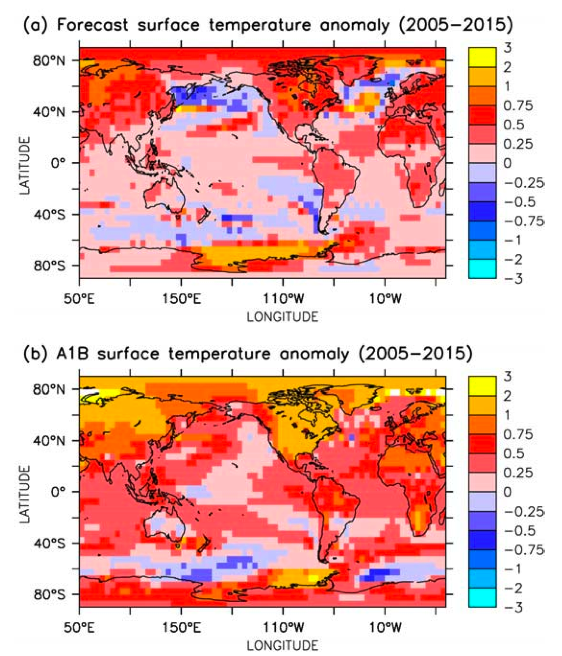

More interestingly, we can look at the regional pattern. The K08 supplemental data showed their predicted anomaly along with anomalies from a free-running version of their model. These are for the 2005-2015 period (which is half over), rather than the 2000-2010 period, but the patterns might be expected to be similar:

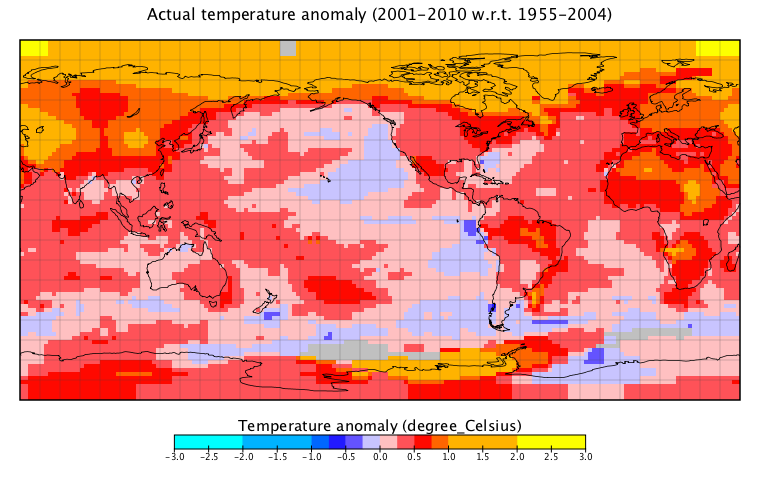

The anomalies are with respect to the average of all the decadal periods they looked at, which is roughly (though not exactly equal to) a 1955-2004 baseline. The actual temperature changes for 2000-2010, using GISTEMP for convenience, look like this:

It is striking to what extent they resemble the spatial pattern seen in the free-running version rather than the initialised forecast, though there are some correlations with the forecast too (for instance, west of the Antarctic peninsula, related to the ozone-hole and GHG related increase in the Southern Annular Mode).

It is worth emphasising that the RC bet offer was not frivolously made, but reflected some very clear indications in the paper that the predictions would not come true (as explained in our second post). Specifically, their ‘free’ model run, without data assimilation, performed better in hindcasts when compared to observed data, i.e. the new assimilation technique degraded the model performance. Both previous hindcasts showing cooling of the model were wrong. Since global warming took off in the 1970s, the observed data have never shown a cooling in their chosen metric (ten-year means spaced 5 years apart). Other climate models run for standard global warming scenarios only rarely show this level of cooling. On the other hand, there is a simple explanation for such a temporary cooling in a model: an artifact known as ‘coupling shock’ (e.g. Rahmstorf 1995), which arises when the ocean is switched over from a forced to a coupled mode of operation, something that has no counterpart in the real world.

The basic issue is that nudging surface temperatures in the North Atlantic closer to observed data would probably nudge the Atlantic overturning circulation in the wrong direction since changing the temperature without changing the salinity will give the opposite buoyancy forcing to what would be needed. The model indeed shows negative skill in the critical regions of the North Atlantic which are most affected by the overturning circulation. All this can be seen from the paper. Last but not least, by the time the paper was published three quarters of the 2000-2010 forecast period were over with no sign of the predicted cooling – barring an unprecedented massive temperature drop, the prediction was always very unlikely.
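One way to see the buoyancy argument is with a linearised equation of state for seawater. The sketch below is only an illustration under stated assumptions: the coefficients are typical textbook magnitudes chosen by us, not values from K08 or from the ECHAM5 ocean component, and the perturbation sizes are arbitrary.

```python
RHO0 = 1027.0   # reference density in kg/m^3 (assumed)
ALPHA = 2.0e-4  # thermal expansion coefficient in 1/K (assumed typical value)
BETA = 8.0e-4   # haline contraction coefficient in 1/psu (assumed typical value)


def density_change(d_temp, d_salt):
    """Linearised change in surface density for small T and S perturbations."""
    return RHO0 * (-ALPHA * d_temp + BETA * d_salt)


# Nudging temperature alone: the warmer surface water becomes lighter,
# which works against sinking in the North Atlantic.
temp_only = density_change(d_temp=0.5, d_salt=0.0)

# Hypothetical case in which the same warming arrives together with saltier
# water: the salinity term can outweigh the warming and make the water denser.
with_salt = density_change(d_temp=0.5, d_salt=0.2)

print(temp_only, with_salt)
```

With these assumed numbers the temperature-only case gives a negative density change and the combined case a positive one, which is the sign difference the paragraph above refers to.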

Was this then an “improved climate prediction“? The answer is clearly no.

So what can we conclude? First off, the basic idea of short term predictions using initialised ocean data is a priori a good one. Many groups around the world are exploring to what extent this is possible, and what techniques will be the most successful. However, before claiming that a new methodology is an improvement on other efforts and that it predicts a very counter-intuitive result, a lot of effort is required to demonstrate that, even theoretically or in ideal circumstances, it will work. This can involve ‘perfect model’ experiments (where you test to see whether you can predict the evolution of a model simulation given only what we know about the real world), or hindcasts (as used by K08), and only where there is demonstrated skill is there any point in making a prediction for the real world. It is nonetheless important to try new methods, and even when they fail, lessons can be learned about how to improve things going forward.

It is perhaps inevitable that novel prediction methods that appear to ‘go against the mainstream’ are going to be higher profile than they warrant in retrospect – such is the way of the world. But scientists need to appreciate that these high profile statements will be taken and spread far more widely than they possibly anticipate. Thus it behoves them to be scrupulous in explaining the context, giving the caveats and making clear the experimental nature of any new result. This is undoubtedly hard, especially where there are people ready to twist anything to fit an anti-AGW agenda, but we should at least try.

Note, we asked Noel Keenlyside if he wanted to comment on our assessment of their prediction, and he declined to do so. We would still be happy to post any of his or his co-authors’ comments in response though.

Update Dec 2: The Stuttgarter Zeitung newspaper (in German) followed up on this and got the following comments from the authors:

Keenlyside:

“The forecast for global mean temperature which we published highlights the ability of natural variability to cause climate fluctuations on decadal scale, even on a global scale. I am still completely convinced that this is correct.”

Latif:

“I do not want to comment on this.”

Then an indirect quote: the fact that warming for 2000-2010 was greater than predicted in their study does in itself not speak against their study, and then

“You have to look at this long-term. I would not weigh a few years earlier or later too much.” But if the forecast turns out to be wrong by 2015, “I will be the last one to deny it”.

I don’t understand how anyone can neglect these 4 basic facts:

1) Greenhouse gasses absorb infrared radiation in the atmosphere and re-emit much of it back toward the surface, thus warming the planet (less heat escapes; Fourier, 1824).

2) CO2 is a greenhouse gas and thus has the capacity to warm the planet (Tyndall, 1858).

3) By burning fossil fuels, human activities are increasing the greenhouse gas concentration of the Earth’s atmosphere (Arrhenius, 1896).

4) Increased greenhouse gas concentrations lead to more heat being trapped, warming the planet further (Arrhenius, 1896).

Anyone that is neglecting these basic facts without some substantial evidence that contradicts them should not be paid much heed.

I have some back-to-basics questions about the 2000-2010 GISTEMP chart shown here. First, what is the reason for a 50 year baseline for the beginning of the time period and only 10 years at the end? I know it is important to average out short term fluctuations, but why the difference? It is not obvious what the time period is for the amount of warming shown, I assume it is 25 years (1980 – 2005). When presenting this information to the public it might help to clarify this.

The color scale also seems unusual. The color blue “feels” coldest and red the warmest, but those colors are placed in the middle of the scale, making the chart a little harder to interpret at an intuitive level. Is there a reason for this choice, and are there any standards?

[Response: No, there is no standard. But we are just following the color scheme in the original paper for ease of comparison. – gavin]

Ah, the good old cooling predictions from 2008. Interesting how “skeptics” keep pounding the few articles back in the 70’s that talked about cooling, when they launched the same idea themselves, just two years ago. Critical difference is that the idea actually made some sense back in the 70’s.

Expect the skeptics to relaunch the cooling meme again in 2011 when we might see a slight drop in temps due to the La Nina.

Again this is great. K08 can make bold claims about how the Earth works, just like the solar spectral stumper or Keppler’s plants and CH4 paper from 2006. The data accumulate and science progresses. This is EXACTLY how the whole thing is supposed to work. Kudos to all.

Todd #1,

well said. But your comment might be read in context as suggesting Keenlyside et al. dispute or neglect those facts. I’m sure they don’t.

I suppose it is still too early to assess how the predictions of Smith et al. of the Hadley Centre are doing ( http://www.sciencemag.org/content/317/5839/796.full )? This seemed to me like perhaps a more realistic effort at decadal climate prediction.

Re #6:

And, Smith et al. did demonstrate improved skill in predicting global mean temperature. They also did a preliminary assessment of their final prediction in the Supplementary Information, which could be updated to present….

I am not a scientist, but I work in heat transfer, with a concentration in cooling. First of all, your articles are of great intellectual value for me and my professional interest as stated above. I keep them exclusively in a key folder for continuous reference and analysis.

At this stage, and for the time being, I have only one request. Is there a temperature prediction for the Caribbean that you can share, as of today?

Thank you very much for this attention.

Mario J.

The last map again shows strikingly how much more the Arctic has warmed than other parts of the globe.

Sorry if this has been covered to death elsewhere, but what is the current thinking about the break down for relative strengths of causes for this anomaly?

How much is attributable to albedo feedback versus higher concentrations of methane (and the feedback involved in methane emissions from tundra and seabed…), for example?

Very interesting post, thanks. The AR4 simulations seem to match the observed patterns quite well!

I gather that K08 were conducting ten-yr runs. But can you help to clarify when the AR4 ensemble runs were initialized?

Also, perhaps I’m misunderstanding the methodology you’ve described, but I find the idea of delivering short term predictions from AR4 models a little strange, based on previous discussions made here about the Cox and Stephenson’s “sweet spot” of climate model simulations of ~20-50 years. Is there some way to get around process/parameter uncertainty over shorter time periods?

Thanks for either answering or directing me to a link with an answer.

[Response: The AR4 runs aren’t initialised in this sense at all. They start with conditions from the 19th Century and run forward with only input from the forcings. Thus they are not ‘short term’ predictions in any sense. The fact that they nonetheless provide a better estimate of the decadal anomaly is quite telling. – gavin]

Re Todd at #1 and CM at #5: Am I right in understanding that the key point is that it’s quite possible for global surface temperatures to decrease even as the globe warms if more than the excess inflow of heat goes into the deep oceans?

[Response: Theoretically you could have a change in ocean circulation that could cause a drop in global mean temperature even while the total heat content of the climate system increased. Though I have not seen this in any free-running simulation. – gavin]

Is it possible the Great Recession changed the situation in the atmosphere enough to negate the cooling they predicted?

Ed (#11), how on earth did you get to there from #1? I don’t think that was the key point AT ALL.

#9 (Wili): Absolutely correct about the Arctic warming.

The rapid warming of the Arctic introduces an interesting dilemma: large regions of the Arctic are not covered by either surface stations or satellites, so the temperatures that increase the fastest are not being measured in most of the datasets. This has, of course, been known for a long time. However, with the new trend of strong negative AO during the winter season, this will likely have an impact on the global average temperature. Cold Arctic air flows down to lower latitudes, where it affects the temperature readings. However, the increased temperatures that result from the warm air that flows into the northern regions of the Arctic are not being measured. In other words, the current trend of negative AO should introduce a cold bias in the global average temperature.

Just want to clarify the basic approaches. I can only access the abstract of the Keenlyside et al paper. This is what I think was done:

1) Keenlyside et al start a climate model with the actual Sea Surface Temperatures (dynamic, multi-year spin up with nudging?) and then run the model (which one?) into the future (10-20 years out).

2) IPCC AR4 A1B runs start in 1800s and run to 2100 under the A1B forcings without any data assimilation.

I agree that it’s impressive that (2) seems to have much more skill than (1), although I have to ask: what was the model used by Keenlyside et al and which model produced the A1B results shown (or is it the average of all the AR4 models)?

[Response: K08 use the ECHAM5 model, which also participated in the AR4 ensemble (in free running mode). The A1B simulation is just the results from (I think) a 3 member ensemble of the ECHAM5 model run as you suggest. I have amended the text above to reflect that. – gavin]

Re 9 wili – I know of a paper suggesting, as I recall, that enhanced ‘backradiation’ (downward radiation reaching the surface emitted by the air/clouds) contributed more to Arctic amplification specifically in the cold part of the year. (Just to be clear, backradiation should generally increase with any warming (aside from greenhouse feedbacks), and more so with a warming due to an increase in the greenhouse effect (including feedbacks like water vapor and, if positive, clouds, though regional changes in water vapor and clouds can go against the global trend).) Otherwise it was always my understanding that the albedo feedback was key. While sea ice decreases so far have been more a summer phenomenon (when it would be warmer to begin with), the heat capacity of the sea prevents much temperature response; but there is a greater build-up of heat from the albedo feedback, and this is released in the cold part of the year when ice forms later than it would have, does not form where it would have, or is thinner than it would have been. The seasonal effect of reduced winter snow cover at those latitudes which still receive sunlight in the winter would not be so delayed. Also, the lapse rate feedback, while generally negative due to the temperature dependence of the moist adiabatic lapse rate, can be positive in some places and times (when there is an increase in lower-level radiative heating and the stability of the air mass prevents direct convective vertical spreading of the temperature response), such as in the Arctic.

The effect of CH4 shouldn’t be concentrated much near surface CH4 sources; while CH4 oxidizes over a decade or two (? which one is closer), the mixing of the atmosphere is much faster, so wherever CH4 is coming from, it should tend to cause the same pattern in climate changes.

“I don’t understand how anyone can neglect these 4 basic facts:” – 1

Perhaps this will clarify matters for you…

Ousted congressman Bob Inglis (R-SC) has a theory about why he lost this year’s midterm primary: conservative voters did not much care for Inglis’s belief in global warming. This is because, according to the congressman, his environmentalism fell on “Satan’s side” of the climate-change debate.

“…by the time the paper was published three quarters of the 2000-2010 forecast period were over with no sign of the predicted cooling – barring an unprecedented massive temperature drop, the prediction was always very unlikely.”

I remember this a few years ago when this was published. This is what doesn’t make much sense to me. So what’s the motivation of publishing a hypothesis that doesn’t show past skill and is very likely to be wrong? Do you think that 2007-2008’s fairly substantial la Nina, and the associated temporary temperature drop, might have given the authors some reason to believe they might be right?

[Response: You would need to ask them. – gavin]

Hi Gavin

I’m interested by the tropical tropospheric hotspot.

In the NASA-GISS site there are very interesting climate simulations of the response to different forcings.

The outputs of these simulations give us, among others, T2m, T2, T3, T4.

I think that T3 = TTS and T4 = TLS, but I have a problem with T2.

[Response: MSU-2 is TMT (since that was the channel on the original instrument). MSU-3 is not used much, and TLT is MSU-2R – though I don’t know that this is online. – gavin]

Is this TLT, TMT, a mix?

And is there a combination of these data to compute (roughly) the more important hotspot (roughly in 400hPa/150hPa)?

The best should be to get the numerical values of the tropical profile.

thanks for your response

I am really impressed by the spatial correlation between the model run and the actual temperature anomalies. The spatial structure of the temperature anomalies is captured almost perfectly. It looks almost too good to be true. I think it would be really nice for all those sceptics that claim that models are just “garbage in, garbage out” to look at these two graphs. I do have a question about this: Is this level of agreement between model runs and temperature measurements normal, or was the agreement found here extraordinary? Would it be possible to have a post here once on the general agreements of GCMs and (spatial) temperature data, hindcasts etcetera? Or are there previous posts on this topic that I missed?

Thanks for the good work!

[Response: I was pretty surprised too – and so I would say that this merits more attention. – gavin]

“So what’s the motivation of publishing a hypothesis that doesn’t show past skill and is very likely to be wrong?”

My recollection from reading the paper was that it was mostly a proof-of-concept paper, along with improved skill for predicting certain temperature attributes that were specifically tied to oceanic state (mainly around the Atlantic & Pacific basins, right?), so that even though the global skill was less than the raw model, there were suggestions that certain regions had improved skill. As such a paper, it would have been an interesting first attempt at a new method that would encourage follow-on work in the area.

Unfortunately, it was way overblown and the global predictions (without skill) were promoted over the other parts of the paper…

-M

Maya #13: “Ed (#11), how on earth did you get to there from #1?”

My logic was:

#1 says the planet is heating up.

K08 says the surface of the planet will cool down (or, at least, not warm).

Two possibilities spring to mind:

1) The extra heat is going to latent heat (e.g., melting ice) on the surface so the temperatures don’t rise even though there’s more heat and/or

2) The extra heat goes elsewhere.

As the abstract to K08 talks about ocean currents, and that was the impression I got from previous discussions of that paper, I thought (2) was the main concern and specifically that the heat was going into the deep ocean. Note that the K08 abstract talks about the problems of insufficient deep ocean instrumentation.

Gavin’s response to my previous comment indicates that I’ve got the wrong end of the stick on all this – what I’m thinking is theoretically possible but not actually what’s being considered. I’d welcome clarification or a pointer to some discussion I’ve missed.

[Response: Maybe it was me who wasn’t clear. It is possible that the K08 simulations do exhibit this phenomenon, but I doubt very much that this is possible/probable in the real world. – gavin]

thanks Gavin

hence, T2=TMT OK

for 1880-2000 I find, in tropical regions:

Tsurf = 0.576°C

T2=TMT=0.789°C

T3=0.816°C

the TMT-Tsurf ratio = 1.370 for your model (0.789/0.576)

[Response: Be careful – many things happened in the 20th Century, so this statement is only true (in the ensemble mean?) for those particular experiments. Another set (perhaps without ozone depletion or aerosols) might give a different ratio. There is also the issue of variability in the value in any specific short time period from a single simulation. – gavin]

For the observed anomalies, I have, from NASA-GISS (-24/24) Tsurf = 0.11°C/decade,

and from RSS (-20/20) TMT = 0.11°C/decade

The ratio is 1

So, if I can, 3 questions (I am off topic, sorry):

1-can we apply to the tropical regions the correction made by UW on the global anomaly?

this correction, which accounts for the stratospheric cooling, is roughly 1.45

In this case the model and the observations are in phase

[Response: Not really. Tropical areas have a different structure. – gavin]

2-do you think we can compare the “real” ratios of 1979-2009 with model ratios of 1880-2000?

[Response: No. At least not without allowances for issues raised above. – gavin]

3-What do you think of McKitrick et al 2010 who finds 0.24°C/decade for models (likely not the NASA model) and for 1979/2009?

[Response: Actually, it is exactly from our model. But it is a weird metric because it is only based on selected CRUTem grid points as opposed to a proper integral. It exaggerates the global trend. – gavin]

Ed #11, 22,

These notes might be helpful: K08 noted that the MOC plays an important role in driving decadal sea surface temperatures, but did not go into details on why. They did not discuss what mechanisms were involved or where the heat would go.

Also, just in case it’s not clear, what K08 forecast was a downward fluctuation in MOC and North Atlantic temperatures temporarily offsetting anthropogenic forcing for perhaps a decade and a half. (Not just a statistical ‘blip’, more like a ‘bleeep’.) On the upstroke, however, by the 2020s their forecast catches up with the warming in an anthropogenic-forcing-only scenario.

The contours coloured in red look scarier every time they are presented.

What do climate experts on here suggest the human race does about it?

Otherwise we will be on RC having these scientific debates in 20 and 50 years time…….

Gavin

I don’t know if it was a mean model response.

I used this link: http://data.giss.nasa.gov/efficacy/#table1

With a forcing of 5/4CO2 (centennial response) the ratio was roughly the same as in the 1880-2000 all-forcings case.

Ok, Ed, thank you. I dug up a few papers on the subject that seemed to fit the question. They look reputable, but I can’t vouch for them being the best examples in existence, only what I could find on the net that didn’t require a subscription. :)

http://www.atmos.ucla.edu/csrl/publications/Hall/boe_grl_2009published.pdf

That one seems to support the temporary offset CM refers to, although they aren’t clear on how long one might expect the offset to last.

http://dspace.mit.edu/bitstream/handle/1721.1/3557/MITJPSPGC_Rpt87.pdf?sequence=1

That one made my head hurt to read, and I’m not sure if it really came to any conclusions, but it’s part of the puzzle.

http://www.pmel.noaa.gov/people/gjohnson/Recent_AABW_Warming_v3.pdf

This one is recent, and considerably more readable. I thought it was interesting how uneven the deep ocean warming appears to be, almost the opposite of what we see in the graphs like the one above. Above, you see the amplification in the high northern latitudes; in the figures of this paper you see the heat collecting in the southern latitudes. Interesting.

On coupling shock:

The aspect of climate modeling that I’ve always had the most trouble believing is regional climate predictions. My understanding is that high resolution regional climate models (RCMs) are embedded in lower resolution GCMs so that GCMs force the RCMs via the boundaries. That strikes me as likely to produce artifacts similar in spirit to coupling shock. I’d love to see an RC article that kind of walked through this at a level appropriate for armchair climate scientists.

If C02 is the largest single contributing factor to the Greenhouse Effect (because supposedly water vapor is only involved as a feedback to primary chemistry involving C02 itself), and C02 lags temperature increases (as has been stated on this very blog), how has the Earth ever returned to colder glacial conditions following periods of warming?

[Response: This is way OT, but it’s because as the orbit changes the insolation forcing periodically makes it easier to form ice sheets. You have a pacemaker (Milankovitch) and a response. When the pacemaker flips, so will the response. – gavin]

Non-paywalled copy of Keenlyside et al. here:

http://www.usclivar.org/Pubs/2May08Keenlyside.pdf

Alex Katarsis: You might also consider that the molecule is “CO2”, not “C02”. Subtle difference (“oh” as in “oxygen”, versus “zero” as in a nonsensical chemical formula), but often a key indicator of the understanding of the writer. (and yes, it does creep in as a typo occasionally, but you made the mistake 3 times in one sentence!).

-M

[Response: Thanks, but let’s try and keep the grammar/typo police at bay for the most part. – gavin]

Re: ice melt in the arctic

Seems to me the most recent data were seeing 2/3 as bottom melt, i.e., sea water temps. This will give you an idea. http://www.joss.ucar.edu/events/2009/aon/reports/richter-menge_jackie.pdf

Note the greatest effect is found in the east and west, and more balanced in the center. This definitely supports the other research we’ve seen on warmer than expected water in the fjords of Greenland, etc.

Cheers

A bit obvious (and perhaps requiring too much computer time?), but a suggestion for avoiding coupling shock: take a large number of model runs; for each assign a location in some n-space for indices of the more important modes of (internal) variability (but maybe also include indices for timing relative to and magnitude of eruptions, solar cycles, etc.). The ‘real climate’ (pun not intended but I’ll use it anyway) at any one time will be on a trajectory in this n-space, at a location with some nearest neighbors in the model runs. Some linear (?) combination of those model runs could be used for short term predictions, out to a time horizon limited by the butterfly effect (or to go a bit farther, if the nearest neighbors diverge but remain in a few families, then the prediction can be: ‘likely A or B or C but not everything else’ – and as all the trajectories diverge, they’ll still tend to follow the strange attractor (which itself will be changing via external forcing changes, of course)).

[Response: People have tried that with actual weather forecasts, but the problem is that there are too many degrees of freedom and so you never end up with a model that is ‘close enough’ to the reality to make it useful. It would be good to see this rigourously tested though. – gavin]

… out to a time horizon limited by the butterfly effect … plus some additional limitation due to a lower than (?)infinite(?) density of model runs.
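The analog idea suggested in the comments above can be sketched in a few lines. This is a toy under stated assumptions, not anything used by K08 or by an operational forecast: the state vectors, the library of runs, the choice of Euclidean distance and the inverse-distance weighting are all hypothetical stand-ins for the "linear (?) combination" the commenter left open.

```python
import math


def analog_forecast(current_state, library, k=3):
    """Blend the futures of the k library runs closest to current_state.

    library: list of (state_vector, future_value) pairs taken from model runs.
    Weights are inverse-distance, one simple choice among many.
    """
    ranked = sorted(library, key=lambda item: math.dist(current_state, item[0]))
    nearest = ranked[:k]
    # A small offset avoids division by zero when a run matches exactly.
    weights = [1.0 / (math.dist(current_state, s) + 1e-9) for s, _ in nearest]
    total = sum(weights)
    return sum(w * future for w, (_, future) in zip(weights, nearest)) / total


# Hypothetical library: each state is a pair of variability indices, and each
# future value is that run's later temperature anomaly.
library = [
    ((0.0, 0.0), 0.10),
    ((1.0, 0.0), 0.20),
    ((0.0, 1.0), 0.30),
    ((5.0, 5.0), 0.90),
]
forecast = analog_forecast((0.1, 0.1), library, k=3)
print(forecast)
```

The distant run at (5.0, 5.0) is excluded by `k=3`, so the forecast stays within the range of the three nearby runs. As the response above notes, the practical difficulty is that with many degrees of freedom no library member is ever close enough.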

Fred Pearce [@ the Guardian and New Scientist]

Do you intend to report this article prominently?

Alex Katarsis (29): I think I understand what you’re asking (and I don’t think Gavin does :) ). What you must remember is that (positive) feedbacks amplify forcings in both directions (relative to current temperatures).

Warmer temperatures cause more water vapor, which causes more warmth, etc. This is quite correct and often mentioned in climate discussions. What isn’t mentioned, because it’s assumed that people just understand it, is that it works the other way too: If it gets colder for some reason, it will cause less water vapor, which will cause it to get even colder, etc.

You can substitute “CO2 from the oceans”, “lower albedo”, or any of the other positive feedbacks for water vapor. Technically, they only make the world warmer. But relative to current temperatures, they can make the world either warmer (as in the beginning of an interglacial) or colder (as in the beginning of an ice age).

Climate scientists may think this is obvious. But I think many people are led to deny global warming because from the part of the argument they understand, they think temperatures have to rise forever. Obviously that can’t be true!

#36–

To elaborate a bit more for Alex (if he’s still following this), here’s the sequence, schematically:

1) Orbital change increases solar forcing slightly;

2) Resultant warming raises atmospheric CO2 concentration;

3) Further warming results, but *eventually achieves a new equilibrium* at a new, higher quasi-stable mean temp;

4) Orbital change decreases solar forcing slightly;

5) Resultant cooling lowers CO2;

6) Further cooling results, until a new cooler equilibrium is reached.

Of course, in reality there are multiple feedbacks and the “noise” of variability, making this much less clean than the neat schematic. But I think I’ve got the big picture right; and I’m even more confident that, if I haven’t, correction/elaboration will follow!
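The schematic above, and the point that a positive feedback amplifies a change in either direction without running away, can be illustrated with a toy calculation. The numbers are arbitrary assumptions chosen only to show the behaviour: each round of feedback adds a fixed fraction of the previous round's temperature change, and as long as that fraction is below 1 the total settles at a new equilibrium.

```python
def equilibrium_response(initial_change, feedback_fraction, steps=200):
    """Sum successive rounds of a feedback loop.

    Each round adds feedback_fraction times the previous round's change.
    The sum converges only if feedback_fraction is below 1.
    """
    total = 0.0
    increment = initial_change
    for _ in range(steps):
        total += increment
        increment *= feedback_fraction
    return total


# Illustrative values only: a 0.5 degree initial change and a feedback of 0.4.
warming = equilibrium_response(+0.5, feedback_fraction=0.4)
cooling = equilibrium_response(-0.5, feedback_fraction=0.4)
print(warming, cooling)
```

Both results approach the geometric-series limit of the initial change divided by (1 minus the feedback fraction), with opposite signs: the same feedback deepens a cooling exactly as much as it enhances a warming.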

Your temperature plot figure is highly misleading. You connect the 2000-2010 forecast temperature centered on 2005 to the actual observed temperature in 2000, and show a .05C decline, with actual observations showing a 0.1C +/- increase. Hah! forecast not only wrong but in wrong direction. But this is not what Keenlyside et al did. They connected the forecast value to their hindcast value and actually forecast a 0.1C increase between 2000 and 2005, not bad. What was awful was their hindcast in 2008 which missed actual temps by a mile.

[Response: Pretty sure that this is not the case. Their simulation for 2000-2010 period was initialised with 2000 temperatures from the real world, not from a previous hindcast. The earlier forecast also incorrectly predicted a cooling. The lines on our figure are actual trajectories taken by their model. This is not what was shown in the original paper. – gavin]

Reading these comments I’m now totally confused. Positive forcing, cooling, warming, wetter, drought. Charts from way back showed us the scary tipping points where temperature increases would consume us. This has clearly not happened. The official line was and still seems to be that 2 degrees warming is what we must avoid by reducing CO2 or end up with catastrophic consequences.

Am I now to understand that this was wrong and that positive forcing caused by CO2 will create a new ice age? Observations seem to be leading us in that direction.

[Response: No, not really. – gavin]

Re 37 Kevin McKinney – actually, orbitally-forced global annual average changes in TOA solar insolation are very small (in the case of Earth) and depend only on variations in eccentricity (setting aside the idea that there is a plane of dust and the plane of the orbit has a significant effect that way – heard the idea awhile ago, not sure there’s much to support it ?). But eccentricity modulates the effect of precession (the alignment of perihelion and aphelion with solstices or equinoxes) (actually, obliquity does modulate this too, but while obliquity variations have significant effect by themselves, they are relatively small in proportion to the difference with zero obliquity, whereas Earth’s eccentricity variations include getting near zero, where aphelion and perihelion would have no effect as they wouldn’t exist).

Smaller obliquity transfers annual average insolation from higher latitudes (would make polar regions darker) to lower latitudes and reduces the seasonal ranges (would make winters less dark for less long). (Both hemispheres at the same time)

Alignment of perihelion near winter solstice would reduce the annual average insolation (because that hemisphere ‘tilts away’ from the sun during the time of year when global TOA insolation is largest) while reducing the seasonal range (tendency for cooler summers and warmer winters, but also a longer spring-summer and shorter fall-winter, because the Earth’s angular speed around the Sun is faster when Earth is closer to the Sun). (The hemispheres are ~180 degrees out of phase.)

The effect of annual average would tend to affect both land and water; seasonal ranges would have a bigger impact on land. So long as winters stay cold (and moist – could be hampered if winters are too cold, ?depending on ocean currents etc.?) enough for enough snow to fall and accumulate, having cooler summers would allow snow cover to linger longer, with an albedo feedback that has a global cooling effect; when last year’s snow never completely melts you can start building an ice sheet.

If the Earth were completely symmetrical across the equator, the effect of precession would be a ~20,000 (I’m rounding) year cycle, but with two cycles of the global average in that time (with perihelion going from solstice to equinox to solstice completing one such global average cycle). Asymmetries across the equator allow a ~20,000 year cycle in the global average.

Without any land, the effects of seasonal cycles are reduced and it is also harder to build up a thick ice sheet (the basal lubrication of sea ice being large). If land masses at high latitudes are too large, the seasonal cycles may be too large, with summers still warm enough to melt snow – also, possibly winters would be too cold to have snow cover thick enough to last, and also much area could be too distant from sources of humidity to get enough snow. The atmospheric circulation and ocean currents are also important, then (and oceanic circulation is also important to biogeochemical feedbacks). Continental drift and biological evolution, and the longer-term climatic state (i.e. solar brightness and the level of CO2, etc., in equilibrium with geologic outgassing and chemical weathering, the latter affected by climate but also plate tectonics and biology) modulate the Earth’s response to orbital forcing.

For the ice age – interglacial variations of the last few million years, a transition occurred within the last million years where a 100,000 year timescale seemed to become dominant, whereas previously the variations followed the obliquity (~40,000 years) and precession cycles. One possible explanation is that earlier glaciations produced thinner ice sheets because the looser soil/sediment (?) that helped lubricate the base had not been scoured away yet; thinner ice sheets have lower elevations and thus tend to have warmer surfaces. Thicker ice sheets can be more resistant to melting by having colder surfaces (but also depress the crust more, so that when melting occurs, it may leave ocean instead of land, isostatic adjustment being a slow process – from memory, a timescale of ~15,000 years?); possibly only when the eccentricity is large can the ice age be ended during the necessary phase of the precession cycle. Note that there is some hysteresis there; the thresholds for glaciation and deglaciation are not generally at the same point.

PS Precession cycles continue to affect low-latitude monsoons even when the Earth is not vacillating between ice ages and interglacials.

(See Ruddiman (from memory: Earth’s Climate – Past and Future), also Hartmann (Global Physical Climatology))

PS orbital cycles depend on Earth’s rotation speed (via the equatorial bulge, which allows tidal forces from the Sun and Moon, etc., to exert a torque on the Earth) and the Moon’s orbit; the Earth has been slowing down (torques on the tidal bulges from the Moon and Sun) and the Moon has been moving out from the Earth (same mechanism; conservation of angular momentum; the Earth’s orbit around the Sun has too much angular momentum and energy to change much in relative terms), so the orbital cycles have varied over time. (Also, they aren’t just three simple exactly repeating cycles because the variables involved affect the cycles.) The eccentricity cycle presumably depends more exclusively on torques on the orbit by the other planets (?). The precession cycle is actually a combined effect of precession of the Earth’s axis (the most obvious forcing of that is the torque on the equatorial bulge from the Moon and Sun) and the shift of the orbit’s semimajor axis, the latter being affected by other planets but also with some contribution from relativistic corrections to Newtonian physics, the relative importance of which I don’t know. I saw a graph of the precession cycle once and it appeared to occasionally skip a beat – perhaps when eccentricity got near zero – this makes some intuitive sense at least… (The cause of the obliquity cycle is less obvious than precession of the axis; perhaps some contribution comes from the Earth-Moon orbit and the Earth+Moon – Sun orbit not being in the same plane – although the Moon’s orbit will ‘average’ near the plane of the Earth-Sun orbit over a relatively short time, but there’s lunar orbit eccentricity, etc. … residuals might build up…? – of course there are people who understand this much better.)

…‘vacillating’ between ice ages and interglacials – maybe the wrong word choice for that…?

Earlier this week an article was published that seems to be very relevant for the present discussion on cooling/lack of warming. The authors of the article realized that there will be no direct one-to-one relation between cosmic radiation and cloudiness, since there are several other requirements for cloud formation. They turned the question around: when there is a sudden change in cloudiness, is there also a change in the amount of cosmic radiation measured? The answer is clearly yes. http://www.atmos-chem-phys.net/10/10941/2010/acp-10-10941-2010.pdf

[Moderator please ignore if already received, got confused with the ReCaptcha]

I just do not know how the K08 paper got published, let alone in Nature. The reviewers seem to have judged the paper not by scientific evidence, but by their love of “global cooling”.

http://www.atmos-chem-phys.net/10/10941/2010/acp-10-10941-2010.pdf

[Response: What does this have to do with the topic at hand? – gavin]

Ever since someone posted the link to this anomaly animation here (thanks) I have been watching it often, especially these days, because I’m in Oslo, and it has been so cold here for a few weeks, while it appears to have been warm pretty much everywhere else in the northern hemisphere except for the north pole. Watching the current animation, it occurs to me that I don’t know how these concentrations of heat and cold come about. And then when I watch the current pressure animation for the same period, it appears that the red areas of high temps build up from waves of high pressure followed by low pressure passing through. Is the repeating compression/expansion of the air forcing energy to be picked up from some areas and left behind in others? And what fraction of the daily average temperature is due to new energy from the sun?

temperature: http://www.esrl.noaa.gov/psd/map/images/rnl/sfctmpmer_07a.rnl.anim.html

pressure: http://www.esrl.noaa.gov/psd/map/images/rnl/slp_07a.rnl.anim.html

Re:44,

I agree that this link is somewhat OT, but ‘cloudiness’ is indeed a last-gasp topic of AGW deniers. So, I would have appreciated even a little technical comment from you, Gavin, on it (btw, not trying to ‘trick’ you, but I have previously respected your technical views on climate topics above almost everybody else’s).

Second point: In a post in another recent thread, I stated that some of the data on the NASA ‘Eyes on the Earth’ website was quite out of date (especially Greenland ice melt). Do you have any influence to correct it?

Regards

[Response: Send the page link you are discussing and I’ll investigate. – gavin]

Watch out, Gavin. The usual deniers have now teamed up and brought out a new book, ‘Slaying the Sky Dragon: Death of the Greenhouse Gas Theory.’ Might be something there you want to pick apart.

[edit]

[Response: This is pretty far out. Might be amusing to have Lindzen and Spencer debunk it. – gavin]

[Further Response: I had a closer look: definitely more Buffy than Beowulf. – gavin]

http://www.atmos-chem-phys.net/10/10941/2010/acp-10-10941-2010.pdf

“[Response: What does this have to do with the topic at hand? – gavin]”

It’s the cat’s meow on WUWT today…so clearly important to John of comment #44. You can tell by all the insight and discussion he added while providing the link.

36/37:

Thank you for the explanations and the sequence. The part I’m now failing to comprehend is 37 item 3: “Further warming results, but *eventually achieves a new equilibrium* at a new, higher quasi-stable mean temp”. This is primarily because Item 2 doesn’t explain “when” or “by how much” – since SEE OH TOO lags temperature. Seriously now, since the orbital changes can be calculated precisely and the CO2 concentrations can also be derived via experimentation (that is apparently very accurate also), can’t we create a very accurate model of the natural cooling process? It just seems odd to attribute the cooling, in effect, to the “eccentricities” of the sun in a time of warming and lagging GHG concentration. Isn’t there some other catalyst?

This is an aside, but Shu Wu at UW-Madison (with whom I played a secondary role in piecing together a Journal of Climate article that was submitted over the summer) has shown, somewhat counter-intuitively, that a persistence forecast based on the last year in a set of, say, 10 years provides a better baseline for comparison than the running mean of those 10 years, within the framework of a red noise process (which also extends to damped persistence forecasting). This matters because persistence is often used as the mark to beat in decadal-scale prediction studies.
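For anyone who wants to play with the idea, here is a minimal Python sketch of that kind of comparison. It is not the method from the submitted paper. It assumes a unit-variance AR(1) ("red noise") series, takes next year's value as the forecast target, and scores the two baselines by mean squared error. The function names, the lag-1 autocorrelation `phi`, and the series length are all illustrative choices.

```python
import random

def ar1_series(n, phi, seed=0):
    """Simulate a unit-variance AR(1) (red noise) series of length n."""
    rng = random.Random(seed)
    innov_sd = (1.0 - phi ** 2) ** 0.5  # keeps the stationary variance at 1
    x = [rng.gauss(0.0, 1.0)]
    for _ in range(n - 1):
        x.append(phi * x[-1] + rng.gauss(0.0, innov_sd))
    return x

def baseline_mse(x, window=10):
    """MSE of two baselines for predicting the value after each window.

    Returns (persistence_mse, running_mean_mse), where persistence uses the
    last value in the window and the running mean uses the window average.
    """
    err_persist = 0.0
    err_mean = 0.0
    count = 0
    for t in range(window, len(x)):
        past = x[t - window:t]
        target = x[t]  # assumed target: the next year's value
        err_persist += (past[-1] - target) ** 2
        err_mean += (sum(past) / window - target) ** 2
        count += 1
    return err_persist / count, err_mean / count

series = ar1_series(20000, phi=0.9)
mse_persist, mse_mean = baseline_mse(series)
print(f"persistence MSE:  {mse_persist:.3f}")
print(f"running-mean MSE: {mse_mean:.3f}")
```

In this toy setup the ranking depends on how red the noise is: with strong autocorrelation the last-year persistence baseline has the lower error, while for nearly white noise (`phi` close to 0) the 10-year mean does better, so the choice of target and of `phi` should be stated whenever such a benchmark is used.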