Gavin Schmidt and Stefan Rahmstorf

John Tierney and Roger Pielke Jr. have recently discussed attempts to validate (or falsify) IPCC projections of global temperature change over the period 2000-2007. Others have attempted to show that last year’s numbers imply that ‘Global Warming has stopped’ or that it is ‘taking a break’ (Uli Kulke, Die Welt). However, as most of our readers will realise, these comparisons are flawed since they basically compare long-term climate change to short-term weather variability.

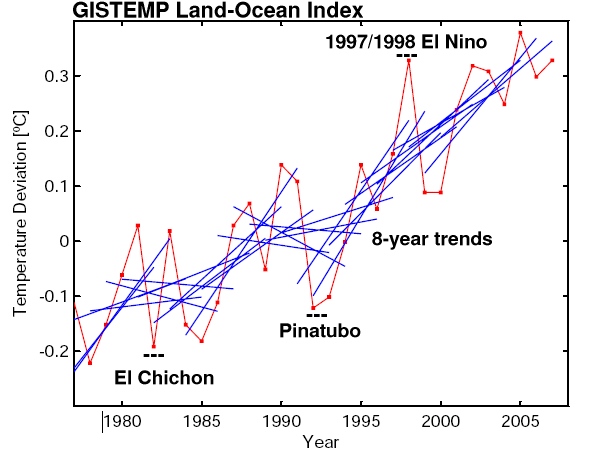

This becomes immediately clear when looking at the following graph:

The red line is the annual global-mean GISTEMP temperature record (though any other data set would do just as well), while the blue lines are 8-year trend lines – one for each 8-year period of data in the graph. What it shows is exactly what anyone should expect: the trends over such short periods are variable; sometimes small, sometimes large, sometimes negative – depending on which year you start with. The mean of all the 8-year trends is close to the long term trend (0.19ºC/decade), but the standard deviation is almost as large (0.17ºC/decade), implying that a trend would have to be either >0.5ºC/decade or much more negative (< -0.2ºC/decade) for it to obviously fall outside the distribution. Thus comparing short trends has very little power to distinguish between alternate expectations.
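For readers who want to try this themselves, here is a minimal sketch of the 8-year trend calculation. It does not use the real GISTEMP record: the series is synthetic, with an assumed forced trend of 0.19ºC/decade and an assumed white-noise level of 0.1ºC, and the function name `rolling_trends` is ours, chosen for illustration.

```python
import numpy as np

def rolling_trends(years, temps, window=8):
    """Least-squares slope (in C/decade) for every consecutive `window`-year span."""
    slopes = []
    for start in range(len(years) - window + 1):
        x = years[start:start + window].astype(float)
        y = temps[start:start + window]
        # Centre x before fitting to keep the fit well conditioned.
        slope_per_year = np.polyfit(x - x.mean(), y, 1)[0]
        slopes.append(10.0 * slope_per_year)
    return np.array(slopes)

# Synthetic stand-in for an annual global-mean record (NOT real data):
# assumed trend of 0.19 C/decade plus assumed white noise of 0.1 C.
rng = np.random.default_rng(0)
years = np.arange(1975, 2008)
temps = 0.019 * (years - years[0]) + rng.normal(0.0, 0.1, size=years.size)

trends = rolling_trends(years, temps)
print(f"{trends.size} eight-year trends")
print(f"mean = {trends.mean():.2f} C/decade, std = {trends.std(ddof=1):.2f} C/decade")
```

Swapping in a real annual series for `temps` should reproduce the kind of scatter seen in the blue lines; the exact numbers will depend on the data set and period chosen.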

So, it should be clear that short term comparisons are misguided, but the reasons why, and what should be done instead, are worth exploring.

The first point to make (and indeed the first point we always make) is that the climate system has enormous amounts of variability on day-to-day, month-to-month, year-to-year and decade-to-decade periods. Much of this variability (once you account for the diurnal cycle and the seasons) is apparently chaotic and unrelated to any external factor – it is the weather. Some aspects of weather are predictable – the location of mid-latitude storms a few days in advance, the progression of an El Niño event a few months in advance etc, but predictability quickly evaporates due to the extreme sensitivity of the weather to the unavoidable uncertainty in the initial conditions. So for most intents and purposes, the weather component can be thought of as random.

If you are interested in the forced component of the climate – and many people are – then you need to assess the size of an expected forced signal relative to the unforced weather ‘noise’. Without this, the significance of any observed change is impossible to determine. The signal to noise ratio is actually very sensitive to exactly what climate record (or ‘metric’) you are looking at, and so whether a signal can be clearly seen will vary enormously across different aspects of the climate.

An obvious example is looking at the temperature anomaly in a single temperature station. The standard deviation in New York City for a monthly mean anomaly is around 2.5ºC, for the annual mean it is around 0.6ºC, while for the global mean anomaly it is around 0.2ºC. So the longer the averaging time-period and the wider the spatial average, the smaller the weather noise and the greater chance to detect any particular signal.
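The effect of averaging can be illustrated with a toy calculation. The sketch below assumes that monthly anomalies are independent draws with the 2.5ºC standard deviation quoted above; real months are correlated with each other, so the observed annual figure will not match this idealised case exactly.

```python
import numpy as np

rng = np.random.default_rng(1)
monthly_sd = 2.5      # from the text: monthly-mean anomaly at a single station
n_years = 20000       # hypothetical number of simulated years

# Independent monthly anomalies (an idealisation), averaged into annual means.
monthly = rng.normal(0.0, monthly_sd, size=(n_years, 12))
annual = monthly.mean(axis=1)

print(f"sd of monthly anomalies: {monthly.std():.2f}")
print(f"sd of annual means:      {annual.std():.2f}")
print(f"sigma / sqrt(12):        {monthly_sd / np.sqrt(12):.2f}")
```

Under the independence assumption the noise shrinks as one over the square root of the number of values averaged, which is the general reason longer and wider averages make a signal easier to detect.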

In the real world, there are other sources of uncertainty which add to the ‘noise’ part of this discussion. First of all there is the uncertainty that any particular climate metric is actually representing what it claims to be. This can be due to sparse sampling or it can relate to the procedure by which the raw data is put together. It can either be random or systematic and there are a couple of good examples of this in the various surface or near-surface temperature records.

Sampling biases are easy to see in the difference between the GISTEMP surface temperature data product (which extrapolates over the Arctic region) and the HADCRUT3v product which assumes that Arctic temperature anomalies don’t extend past the land. These are both defendable choices, but when calculating global mean anomalies in a situation where the Arctic is warming up rapidly, there is an obvious offset between the two records (and indeed GISTEMP has been trending higher). However, the long term trends are very similar.

A more systematic bias is seen in the differences between the RSS and UAH versions of the MSU-LT (lower troposphere) satellite temperature record. Both groups are nominally trying to estimate the same thing from the same data, but because of assumptions and methods used in tying together the different satellites involved, there can be large differences in trends. Given that we only have two examples of this metric, the true systematic uncertainty is clearly larger than simply the difference between them.

What we are really after is how to evaluate our understanding of what’s driving climate change as encapsulated in models of the climate system. Those models though can be as simple as an extrapolated trend, or as complex as a state-of-the-art GCM. Whatever the source of an estimate of what ‘should’ be happening, there are three issues that need to be addressed:

- Firstly, are the drivers changing as we expected? It’s all very well to predict that a pedestrian will likely be knocked over if they step into the path of a truck, but the prediction can only be validated if they actually step off the curb! In the climate case, we need to know how well we estimated forcings (greenhouse gases, volcanic effects, aerosols, solar etc.) in the projections.

- Secondly, what is the uncertainty in that prediction given a particular forcing? For instance, how often is our poor pedestrian saved because the truck manages to swerve out of the way? For temperature changes this is equivalent to the uncertainty in the long-term projected trends. This uncertainty depends on climate sensitivity, the length of time and the size of the unforced variability.

- Thirdly, we need to compare like with like and be careful about what questions are really being asked. This has become easier with the archive of model simulations for the 20th Century (but more about this in a future post).

It’s worthwhile expanding on the third point since it is often the one that trips people up. In model projections, it is now standard practice to do a number of different simulations that have different initial conditions in order to span the range of possible weather states. Any individual simulation will have the same forced climate change, but will have a different realisation of the unforced noise. By averaging over the runs, the noise (which is uncorrelated from one run to another) averages out, and what is left is an estimate of the forced signal and its uncertainty. This is somewhat analogous to the averaging of all the short trends in the figure above, and as there, you can often get a very good estimate of the forced change (or long term mean).
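The ensemble-averaging idea can be sketched with synthetic runs. Everything here is an assumption made for illustration: the forced signal (0.2ºC/decade), the noise level (0.1ºC), the ensemble size (50) and the use of uncorrelated white noise in place of real model weather.

```python
import numpy as np

rng = np.random.default_rng(2)
years = np.arange(30)
forced = 0.02 * years     # assumed forced signal: 0.2 C/decade
n_runs = 50               # hypothetical ensemble size
noise_sd = 0.1            # assumed unforced 'weather' noise

# Every run shares the forced signal but gets its own noise realisation.
runs = forced + rng.normal(0.0, noise_sd, size=(n_runs, years.size))
ensemble_mean = runs.mean(axis=0)

single_run_error = np.abs(runs[0] - forced).mean()
ensemble_error = np.abs(ensemble_mean - forced).mean()
run_spread = runs.std(axis=0, ddof=1).mean()

print(f"mean error of one run vs forced signal:  {single_run_error:.3f}")
print(f"mean error of ensemble mean vs forced:   {ensemble_error:.3f}")
print(f"typical spread between individual runs:  {run_spread:.3f}")
```

The ensemble mean tracks the forced signal far more closely than any single run does, while the spread between individual runs stays at roughly the noise level no matter how many runs are averaged.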

Problems can occur though if the estimate of the forced change is compared directly to the real trend in order to see if they are consistent. You need to remember that the real world consists of both a (potentially) forced trend but also a random weather component. This was an issue with the recent Douglass et al paper, where they claimed the observations were outside the mean model tropospheric trend and its uncertainty. They confused the uncertainty in how well we can estimate the forced signal (the mean of all the models) with the distribution of trends+noise.

This might seem confusing, but a dice-throwing analogy might be useful. If you have a bunch of normal dice (‘models’) then the mean point value is 3.5 with a standard deviation of ~1.7. Thus, the mean over 100 throws will have a distribution of 3.5 +/- 0.17 which means you’ll get a pretty good estimate. To assess whether another dice is loaded it is not enough to just compare one throw of that dice. For instance, if you threw a 5, that is significantly outside the expected value derived from the 100 previous throws, but it is clearly within the expected distribution.
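The numbers in the dice analogy can be checked directly. This is a small worked calculation rather than anything taken from the papers under discussion; the variable names are ours.

```python
import math

faces = [1, 2, 3, 4, 5, 6]
mean = sum(faces) / len(faces)                                      # expected value of one throw
sd = math.sqrt(sum((f - mean) ** 2 for f in faces) / len(faces))    # spread of a single throw
sem_100 = sd / math.sqrt(100)                                       # uncertainty of the mean of 100 throws

throw = 5
# Wrong yardstick: compare one throw with the uncertainty of the estimated mean.
z_vs_mean_estimate = (throw - mean) / sem_100
# Right yardstick: compare one throw with the spread of single throws.
z_vs_single_throw = (throw - mean) / sd

print(f"mean = {mean}, sd = {sd:.2f}, sd of 100-throw mean = {sem_100:.2f}")
print(f"a 5 is {z_vs_mean_estimate:.1f} 'sigma' from the mean using the wrong yardstick")
print(f"a 5 is {z_vs_single_throw:.1f} sigma from the mean using the right one")
```

Measured against the uncertainty of the mean, a throw of 5 looks wildly anomalous; measured against the spread of single throws, it is less than one standard deviation away.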

Bringing it back to climate models, there can be strong agreement that 0.2ºC/dec is the expected value for the current forced trend, but comparing the actual trend simply to that number plus or minus the uncertainty in its value is incorrect. This is what is implicitly being done in the figure on Tierney’s post.

If that isn’t the right way to do it, what is a better way? Well, if you start to take longer trends, then the uncertainty in the trend estimate approaches the uncertainty in the expected trend, at which point it becomes meaningful to compare them since the ‘weather’ component has been averaged out. In the global surface temperature record, that happens for trends longer than about 15 years, but for smaller areas with higher noise levels (like Antarctica), the time period can be many decades.
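How quickly the ‘weather’ contribution to a trend shrinks with record length can be estimated with the textbook formula for the uncertainty of a least-squares slope. The sketch assumes annual data with uncorrelated (white) noise of 0.1ºC; real temperature noise is autocorrelated, so actual trend uncertainties will be somewhat larger than this. The function name is hypothetical.

```python
import math

def trend_sd_per_decade(n_years, noise_sd=0.1):
    """Std dev (C/decade) of an OLS trend fitted to n_years of annual data
    with white noise of standard deviation noise_sd (an assumed value)."""
    # Sum of (t - mean(t))^2 for t = 0, 1, ..., n_years - 1.
    sum_sq = n_years * (n_years ** 2 - 1) / 12.0
    return 10.0 * noise_sd / math.sqrt(sum_sq)

for n in (8, 15, 30):
    print(f"{n:2d}-year trend: +/- {trend_sd_per_decade(n):.3f} C/decade")
```

Under these assumptions the uncertainty is roughly 0.15ºC/decade for 8-year trends and about 0.06ºC/decade for 15-year trends, which is why the longer trends start to say something useful about a forced trend near 0.2ºC/decade.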

Are people going back to the earliest projections and assessing how good they are? Yes. We’ve done so here for Hansen’s 1988 projections, Stefan and colleagues did it for CO2, temperature and sea level projections from IPCC TAR (Rahmstorf et al, 2007), and IPCC themselves did so in Fig 1.1 of AR4 Chapter 1. Each of these analyses shows that the longer term temperature trends are indeed what is expected. Sea level rise, on the other hand, appears to be under-estimated by the models for reasons that are as yet unclear.

Finally, this subject appears to have been raised from the expectation that some short term weather event over the next few years will definitively prove that either anthropogenic global warming is a problem or it isn’t. As the above discussion should have made clear this is not the right question to ask. Instead, the question should be, are there analyses that will be made over the next few years that will improve the evaluation of climate models? There the answer is likely to be yes. There will be better estimates of long term trends in precipitation, cloudiness, winds, storm intensity, ice thickness, glacial retreat, ocean warming etc. We have expectations of what those trends should be, but in many cases the ‘noise’ is still too large for those metrics to be a useful constraint. As time goes on, the noise in ever-longer trends diminishes, and what gets revealed then will determine how well we understand what’s happening.

Update: We are pleased to see such large interest in our post. Several readers asked for additional graphs. Here they are:

– UK Met Office data (instead of GISS data) with 8-year trend lines

– GISS data with 7-year trend lines (instead of 8-year).

– GISS data with 15-year trend lines

These graphs illustrate that the 8-year trends in the UK Met Office data are of course just as noisy as in the GISS data; that 7-year trend lines are of course even noisier than 8-year trend lines; and that things start to stabilise (trends getting statistically robust) when 15-year averaging is used. This illustrates the key point we were trying to make: looking at only 8 years of data is looking primarily at the “noise” of interannual variability rather than at the forced long-term trend. This makes as much sense as analysing the temperature observations from 10-17 April to check whether it really gets warmer during spring.

And here is an update of the comparison of global temperature data with the IPCC TAR projections (Rahmstorf et al., Science 2007) with the 2007 values added in (for caption see that paper). With both data sets the observed long-term trends are still running in the upper half of the range that IPCC projected.

It’s a little off topic, but in the Sunday comics there was a funny strip about interpreting statistics in research. It reminded me of the current discussion. The strip even uses the +/- symbol. It’s funny.

http://www.comics.com/comics/getfuzzy/archive/getfuzzy-20080106.html

I would say that every modern weather event bears the fingerprints of global warming. The problem is that it is hard to find “fingerprints” on a thunderstorm or hurricane. However, it is easy to find the fingerprints of global warming on the weather prediction models. Those models use sea surface temperatures and atmospheric temperatures that reflect the full impact of global warming. The success of these weather models proves that global warming affects our daily weather.

Climate we can plan for, engineer for, and survive. The problem is always the weather (e.g., a rain event, a snow event, a drought, ice melt, a heat wave, a cyclone, a typhoon). Global warming brings us increasingly intense weather. That means the weather problems become more intense.

Last week there were tornadoes across the US in January. In our recent climate that would have been a very rare event. Global warming supplied heat to make it a less rare event. Sudden Arctic Sea ice melt was a rare event. Global warming has supplied the extra heat necessary to make it less rare. At some point you have to say, “Global warming has given us a new climate. We are having weather that for all practical purposes did not occur in the old climate.” We may not be sure what else global warming may bring us, but we can be very sure that most of us are not going to like it, because our infrastructure is not designed to withstand it.

Odd weather also confuses the plants that provide us with food. Over the last few decades, the climate around my house has warmed from temperate to subtropical. My fruit trees are no longer adapted to our climate. I live near major commercial fruit growing areas, so this is a real economic cost in the near future. Oh, but you say that, there are colder regions that can still grow fruit. Yes, but they currently grow varieties that are adapted to high chill conditions. As their climate warms, their trees will stop producing, and they will have to replant with trees that require less chill. This will happen around the world. Expect the price of fruit and nuts to go way up – soon.

We cannot wait for certainty. We need to act. We are dealing with very nonlinear processes, some of which can proceed much faster than any current published peer reviewed modeling predicts. One case in point is Arctic sea ice. The melt ponds and moulins on Greenland suggest that we will soon have another example. There are reports of melt water on WAIS. Mother Nature can outrun our fastest models. We need to “cut her off at the pass.” Any effort to do that is cheap compared to the costs if she (global warming) gets away from us.

Re #95: Sorry for the last statement, you are the expert, so let me rephrase in the form of some questions. 1) Why are yearly or multi-year changes in ocean heat content unimportant or uninteresting? 2) Assuming the finding of very little, if any, upper ocean warming over the last three-four years holds up (I am persuaded that following the corrections to the Argo data, this is likely), does it challenge *anything* that modelers think they know about the sum of *current* radiative forcings and feedbacks? 3) If the Earth’s heat balance can be neutral or even negative for multiple years, even with the increasing dominance from the radiative forcing of CO2, doesn’t it make you even a little curious that the models’ handling of feedbacks, or deep ocean mixing, or other processes may be incomplete?

[Response: If… if…. if…. all those things were demonstrably so, of course one would be interested in why. But they aren’t. Right now there is substantial systematic uncertainty in OHC numbers for a single year (much greater in terms of signal to noise than in the surface temperature record). There is additionally substantial natural variability in the system – i.e. OHC changes due to La Nina/El Nino events are likely to be very significant. And although the balance of evidence does suggest significant warming in the long-term – there are certainly issues related to spatial coverage in earlier periods. Given that uncertainty, it will be a long time before this dataset becomes primary in issues of attribution. That’s unfortunate, but there’s not much to be done about it. – gavin]

Bob North (76) — (1) That all GW is AGW follows from the theory of orbital forcing. The climate should be (on average) slowly cooling now and for the next 20,000 years, if humans had not added about 500 billion tonnes of carbon (so far) to the active carbon cycle.

(2) Predictions of drought are easily understood as predictions that water vapor patterns will change. Indeed, Australia is already experiencing this, as is the southern portion of the Amazon Basin and in the Sahel. Hadley Centre has regional predictions for 2050. While your concern for accuracy is reasonable, you still may find that report of interest.

#90

Gavin,

“and slide 19 shows no big changes in either ISCCP or CERES”

Solved it, it’s two different things. Loeb’s is albedo, my link is net TOA.

http://isccp.giss.nasa.gov/projects/browse_fc.html

I doubt the ISCCP net TOA is wrong. There’s a close to perfect correlation between that and volcanic activity.

http://www.volcano.si.edu/world/largeeruptions.cfm

After every VEI4 or larger eruption the net TOA drops. There were no large eruptions between 1994 and 2000, therefore high radiation input. After 2000 there have been 6.

An OHC level-off should be expected.

[Response: There have been no climatically significant eruptions since 2000. What you need for that is not just a highly explosive one, but one that deposits significant sulphate in the stratosphere – this has not occurred (you don’t see the telltale signs of lower strat warming in MSU4 for instance, and there is no increase in aerosol optical depth either). I suggest emailing someone at ISCCP and asking about the post 2000 offset. I would not be at all surprised if it did not coincide with the inclusion of data from CERES. – gavin]

In 97 Gavin writes “The fact that the model match to obs doesn’t depend on whether you use GISTEMP, NOAA or HADCRU”. Do you have a reference where I can read up all the details? Preferably one that has been peer reviewed.

[Response: Just plot one on top of the other from 1975 to 2007. It’s not rocket science. – gavin]

[Response: For the Hadley and GISS data the graph and corresponding peer-reviewed paper is linked in the “update” at the bottom of the post. -stefan]

Re: #105, Gavin, I am quite surprised by your reluctance to embrace the new Argo network data, even given some of the recent issues. As far as I can understand, these are being worked on, and it seems that appropriate corrections are now being made. New drop rate calculations for the XBTs are being implemented, and the cold bias on the infected Argo floats has been removed. Some recent work on integration techniques has also been performed, and seems to show that the difference between techniques is quite small and produces very small error bars. Are you aware of other potential problems? If so, please advise.

So, are you going on the record saying the Argo float coverage or collection techniques, or instrumentation are not up to the task of providing reliable yearly or multi-year ocean heat content numbers? If so, I am sure the entities who have invested lots of money in this program will be mighty disappointed, being that just a couple of years ago, the climate science and ocean science communities heralded the Argo network as the greatest thing since sliced bread.

Also, I am skeptical of your suggestion that ENSO makes a significant difference in the annual global heat content integral. Is the amount of heat transferred to the atmosphere during an El Nino of a similar magnitude to the total change in heat content of the entire upper ocean on an annual basis? The heat content changes manifested by SST anomalies in the equatorial Pacific are regional changes, and the Joules are already in the ocean, and should be accounted for in the global volume integral. If what you say is correct, then one would expect there to be a clear ENSO signal manifested in the OHCA graph over the past (ie a drop in the global heat integral following an El Nino event). I see none. Maybe I misunderstand something fundamental here, and if so, please clarify.

[Response: You appear to be jumping to conclusions. How did you get the idea I didn’t think the ARGO program was useful? None of the resolutions of issues that you mention have made it into the literature yet and I’m happy to refrain from commenting on papers that don’t yet exist. When they do, ask me again what they might mean. As to whether a big El Nino should impact the OHC numbers, surely the answer must be yes. Whether the magnitude is sufficient to take it into negative territory is unclear to me (but I haven’t looked into it), but I’d be very surprised if there was no effect – at minimum you’d expect a slowing of the rate of growth. During a La Nina, you expect the opposite – an increase in the rate of growth. Once ARGO has been in place for a few more years and the teething problems dealt with, it will indeed be a big boost. But don’t prejudge what will be found in the future (unless you have good reasons). – gavin]

gavin> OHC changes due to La Nina/El Nino events are likely to be very significant.

I understand there may be measurement problems, but aside from that, how can OHC change significantly other than by radiation balance?

[Response: OHC changes if the net heat flux at the ocean surface changes. That can be driven by sensible/latent/SW and LW changes – and all of those change with ENSO. Looking roughly at some AMIP runs, the GISS model has around 0.3 to 0.4 W/m2 DJF heat loss from the oceans for the 1997/1998 El Nino which is significantly smaller than the heat loss anomaly to space (implying a significant heating of the atmosphere). One could look into that more closely of course…. – gavin]

FWIW I predict 2008 will be a scorcher based on the dramatically different start to the year with temperatures in places up to 15C higher than the January average. For some reason the oceans must have ‘sucked it in’ last year and are now ready to ‘let it out’. If not the next year or two then down the track.

> FWIW I predict

You can decide WIW and see if anyone will take your bet:

http://www.jamstec.go.jp/frcgc/research/d5/jdannan/betting.html

with more at http://julesandjames.blogspot.com/

I’ve left a question for Mr. Tierney in his blog comments; perhaps we should do likewise for Christopher Booker of the Telegraph? (re Bob Ward at #5)

For some entertaining reading, search for “Christopher Booker” in the tobacco papers…

“The lead from four-star petrol falls harmlessly on the roadside… this is simply another pointless victory for a self-righteous lobby that is not even aware that its dogma rests on science that has long since been discredited.”

Ok Gavin, in post 68 you suggested that I ask Dr. Pielke to revise the confidence interval shown to reflect how the annual average anomaly has varied in the past. I suggested at least twice the natural system standard deviation of 0.1 deg. I posted this on his blog.

I also put the following posts up on Tierney’s blog at the NY Times in response to a poster who interpreted the confidence level shown by Pielke:

Post #34 Frank’s comments include this:

“1. If the dotted lines are 95% confidence levels, the probability that the IPCC forcast is too high is quite large over the past four years. Two of the series are below the 95% confidence level in 3 of the last 4 years.”

If I understand the statistical analysis on this site, the dashed lines are not confidence levels based on historical annual temperature anomaly data.

http://tamino.wordpress.com/2007/12/16/wiggles/

The data indicates the standard deviation on the yearly data since 1975 is 0.1 deg C. Any data within +/- 0.2 deg C (two standard deviations) would be in the 95% confidence interval. Instead Pielke has shown dashed lines only one fourth that interval on his chart.

Incidentally this standard deviation is calculated from the temperature data alone, not from the IPCC models.

I suspect the confidence levels shown on the Pielke chart must be based on some kind of longer term average, and seem to be overlaid on the annual data.

Do we have an apple to apple comparison here?

We could draw the expected 95% confidence interval by placing the dashed lines on his chart, 0.2 deg above and below the forecast. From natural weather variation alone, about 95% of the data collected should fall in that band. And the real data shown does.

Of course to do this correctly, error bands should be set by the standard deviations calculated for each measurement system: NASA, UKMET, UAH, and RSS. Each measurement system has its own individual measurement error that should be added to the natural system variation.

But at the very least, the error bands must be +/- 0.2 degrees… anything less would be clearly [edit] misleading.

#105

Gavin,

“There have been no climatically significant eruptions since 2000”

How can you be so sure sulphate to the troposphere is insignificant?

VEI4 eruptions in 73,74,75 and 76 and a significant drop in temperature in 76 for instance.

And we are talking a level off since 2003, not a significant drop (yet).

[Response: The only way sulfate to the troposphere is climatically relevant is if large amounts are output persistently for months at least. Otherwise it just washes out. Laki (1783) is a good example perhaps (Oman et al). But, as far as I am aware, none of the volcanoes in recent years have been that persistent or voluminous. Overall levels of aerosol have been dropping (ever so slightly) over that period in any case (Mishchenko et al 2007). – gavin]

It is midnight in Sweden, 100 km NW inland from Stockholm, the night between Jan 12 and 13. Outside it is +6°C, there is no snow, no ice on Lake Mälaren, our third biggest lake in Sweden, no ice in the Bothnian Gulf. Last year average temp in Sweden was 1.5-2°C above average and in January so far average temperatures are 5-6°C above normal. You may call this “weather” but it is really starting to look like climate.

Given the impact of volcanoes, I think that Krakatoa and Tambora should be referenced when temperatures from the 19th Century are compared to today.

Krakatoa was 3 times bigger than Pinatubo and Tambora was 10 times bigger, yet they are never referenced when temperature records are compared.

[Response: They are referenced in model simulations (at least Krakatoa which is after the 1850/1880 start date for most simulations)- see http://pubs.giss.nasa.gov/abstracts/2007/Hansen_etal_3.html – gavin]

Gavin– In answer to #2m you discussed the multiple response times of various components of the planet. Could you point me to a reference that describes the response times to Pinatubo, of the deep oceans, or of any other components? Thanks in advance.

[Response: Where do you want to start? Google 14C distributions in the ocean, the seasonal cycle, glacier retreat etc. The literature on responses to Pinatubo is also pretty extensive: Hansen et al 1992, Wigley et al 2005 (in response to Douglass), …. If you want to be more specific, I could probably be more helpful. – gavin]

[Response: If you don’t have access to the Wigley et al ’05 paper, which indeed addresses this most directly, you can find some discussion in the IPCC AR4 report, which is publicly available. See page 61 of chapter 9 of the Working Group I report (warning, it’s 5 MB in size and may take a minute or two to download, depending on the speed of your internet connection). – mike]

I’m sad. I provided references for Hank, and he has yet to follow up with me, even though he is still active in the thread. And nobody has a comment on the graph I labored most of the night to create?

I’m feeling neglected :-)

Predictions:

1. We are due for a spike year. We haven’t really spiked since 2002, and it is about time for another one, based on what I see on the historical graph.

2. On any scale: 5 year, 10 year or 30 year, it is unmistakable that the warming trend continues. The rate may be slowing or accelerating; we should know the answer to that in 5 years. However, “cooling” would be the wrong word no matter how you look at the last 9 years, when all are well above the 1951 – 1980 mean and 2 of them exceeded 1998. As I have been saying, the “spike” has become the “norm.” Is that not news?

Walt, did you see Gavin’s inline response at #86? It seemed to me to answer your question as well as you could hope for, though it was in response to someone else asking something similar.

I’m just another reader here, not a climatologist, I can hardly answer questions like the one you asked –“Is Hadley right?”

I doubt anyone could. If you mean do their published measurements reflect the instruments they used — likely so.

You could ask what their error bars are for short term measurement (those will be relatively large) compared to their error bars for long term trends (those will be relatively smaller), perhaps.

Re: #118,

Hank,

I am specifically asking (anybody):

1. Can Hadley’s SST measurements be considered reliable to the same extent land measurements can?

2. Is it correct to allow the extrapolated SST signal to dominate the reconstructed climate signal? I suppose that rolls back to question 1, but also the method by which overall SSTs are determined. I assume there are very few measuring points on the open ocean; what method is used to extrapolate the overall temp, and has it been in any way verified with, for example, spot sampling from passing ships?

My intuition is telling me that the warming is clear, detectable and accelerating, at least here in the northeast U.S., and from what I read, the same is true in many other places. We have visual evidence of rapid change in the Arctic region, and I suspect we will be seeing much the same soon in certain parts of the Antarctic continent, even more so than we have already seen. There may be some confusing year-to-year feedbacks, but the overall trend is clear to me.

If we have SST measurements that seem to offset the warming, there is bound to be less concern about the overall increase in global temp. If it turns out that the methods used to determine overall SSTs were severely in error, we would be looking back and asking “Why did we let ourselves believe overall global temps were stable, when all of the visual evidence told us that the warming was continuing?”

Idea – someone should run a “Debunking for Dollars” site where anyone with a denialist talking point could submit it with a PayPal donation of appropriate size & get said point addressed, instead of sitting around at Dot Earth (and elsewhere) complaining that RealClimate won’t address the point [for the 152nd time].

If there are conflict-of-interest issues, the money could go to a charity. And multiple people could do the debunking, to spread the load.

(I’ve just now registered the url as a precaution, and would be happy to help defray the costs of setting up the site…)

Walt, when you write this:

> If we have SST measurements that seem to offset the
> warming, there is bound to be less concern about the
> overall increase in global temp.

you’re now talking PR, political spin … not science.

It doesn’t matter what your political beliefs say would be good PR or good spin or good press, if you want to talk about the science. What matters is getting good information.

The models have all indicated areas that will be warmer, and areas that will be cooler, over time. Hadley in particular has that 10-year look into the model’s future that I already pointed to. But look at any of the pictures of what may occur and you’ll see areas warmer and other areas cooler.

Getting it right is what matters. Not getting it one way for PR.

Mike– Thanks for the citation. (I have a fast connection.) I’ve glanced at that, and it appears to be a summary/literature review type document. So, while it doesn’t contain what I’m looking for, I suspect I can order some of the references to find the basis for some numbers. It should help.

Gavin,

Thanks. I’d probably be more specific if I had a clue what types of things existed. :)

I’m interested in papers that specifically describe how a particular set of authors estimates time scales, rather than simply citing other authors and mentioning what someone else found. I have, for example, Schwartz 2007, “Heat Capacity, Time Constant, and Sensitivity of Earth’s Climate System”, which suggested the climate system can be modeled as a simple lumped parameter and then found a time constant based on the autocorrelation of surface temperatures. Schwartz cites a number of papers in section 4. I’m in the process of trying to get them and familiarize myself with the sorts of approaches used, but Schwartz doesn’t, for example, cite Hansen 1992. (He does cite a Hansen 1996, Geophys Rev Letters. He also cites Wigley 2005. I assume Hansen wrote more than 1 paper in 1996?)

Anyway, as I know you criticized Schwartz’s paper, it occurred to me you might cite papers Schwartz did not. I guess as long as you are writing about this topic, and you answered a question mentioning these time constants, I’m asking in the hope of finding which papers describing time constants you think are most worth reading, rather than simply googling.

It sounds like the Wigley paper that Mike, you and Steven Schwartz all cite must be a good start. If it’s not, I’ll probably be able to trace back through the references.

The sun is quiet, and has been on a downturn for a few years. Why are cooler ocean temps any surprise? It *is* possible that the sun will soon enter a relatively extended quiet period. What ramifications that would have on our climate remains to be seen.

In reply to comment 120: why are all of us with open minds labeled debunkers? Are open minds now a bad thing? Thanks, but I will keep my open mind and skepticism readily handy for both sides. Everyone these days seems to have an agenda. If the oceans are cooling, that’s great. If not, that is great too. This whole thing makes me miss the old days when everyone thought nuclear war was inevitable. :)

Re:#107 Gavin, you say “none of the resolutions of issues that you mention have made it into the literature yet and I’m happy to refrain from commenting on papers that don’t yet exist”

Ah, but they will be in the literature soon. A steak dinner at your favorite steakhouse if the OHC gain for 2004, 2005, 2006, and 2007 turns out to be more than “statistically insignificant” in any refereed paper on the issue. If less, then you buy at mine. If the result is overturned later, I will refund your dinner in kind. Are you having any of it? Maybe afterward we can get down to business talking about some interesting climate science.

P.S. The beef is better down here in Texas.

How well do the GCMs used in the various generations of IPCC studies capture the effects of the two volcanoes highlighted in the main article? I gather that volcanic aerosols are specified in model runs up to the present. One would expect that they should show the two cooling pulses. Has anyone plotted model outputs for the few years around these eruptions?

Dusty, is there some correlation between sunspot cycles and El Nino/La Nina/ENSO published somewhere? Cite please? Hadley’s prediction for the next decade doesn’t involve the sun missing a cycle. And the sun’s on the upswing, first sunspot of the new cycle happened.

As per #78, an even better way to get over all this arguing about 7 years, 8 years, etc goes like this:

1) Download the GISTEMP data (year, anomaly), I used 1977-2006.

2) Compute N-year SLOPEs centered on each year:

| N | years |
|---|---|
| 3 | 1978-2005 |
| 7 | 1980-2003 |
| 11 | 1982-2001 |
| 15 | 1980-1999 |
| 19 | 1978-1997 |

3) Do a scatter plot of the 5 series, which shows how the slopes vary over time for a different number of years.

4) One finds:

| Statistic | 3 | 7 | 11 | 15 | 19 | Comment |
|---|---|---|---|---|---|---|
| MEAN | .018 | .017 | .017 | .017 | .016 | Not much difference |
| STDEVP | .074 | .023 | .008 | .007 | .003 | Standard deviation decreases strongly |
| MAX | .135 | .069 | .036 | .030 | .020 | |
| MIN | -.130 | -.022 | .006 | .006 | .011 | |
| range | .265 | .091 | .030 | .024 | .010 | Range shrinks strongly, unsurprisingly |

5) People sometimes argue with any specific series-length as cherry-picking, but by showing multiple lengths on one chart, that clearly isn’t happening.

The chart is another way to show what anybody *should* know, but some people seem to not understand, or don’t want to:

a) If you pick a short span in a noisy series, you can prove anything.

b) As series get longer, the variability shrinks, and that is obvious on the chart.

6) And in this particular case, once you get to 11 years, there are *no* negative-slope series [min = .006], i.e., even Pinatubo doesn’t do it.
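The procedure in steps 1) to 4) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the anomalies below are synthetic (a 0.017 per year trend plus random noise), not the GISTEMP record, and the function names `ols_slope`, `centered_slopes` and `summarize` are made up for this sketch. To reproduce the numbers in the comment, the synthetic series would have to be replaced with the downloaded (year, anomaly) pairs.

```python
import random
import statistics


def ols_slope(xs, ys):
    """Least-squares slope of ys against xs."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def centered_slopes(years, anomalies, n):
    """Slope of every n-year window (n odd), keyed by the window's centre year."""
    half = n // 2
    slopes = {}
    for i in range(half, len(years) - half):
        lo, hi = i - half, i + half + 1
        slopes[years[i]] = ols_slope(years[lo:hi], anomalies[lo:hi])
    return slopes


def summarize(slopes):
    """Mean, population standard deviation, max, min and range of the slopes."""
    vals = list(slopes.values())
    return {
        "mean": statistics.fmean(vals),
        "stdevp": statistics.pstdev(vals),
        "max": max(vals),
        "min": min(vals),
        "range": max(vals) - min(vals),
    }


# Synthetic stand-in for the real record (assumption): trend plus Gaussian noise.
rng = random.Random(0)
years = list(range(1977, 2007))
anomalies = [0.017 * (y - 1977) + rng.gauss(0.0, 0.1) for y in years]

summary = {
    n: summarize(centered_slopes(years, anomalies, n)) for n in (3, 7, 11, 15, 19)
}
for n, stats in summary.items():
    print(n, {name: round(value, 3) for name, value in stats.items()})
```

Plotting the five `centered_slopes` series against their centre years gives the scatter plot described in step 3).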

Re: #121

Hank,

I fear that you have once again missed my point; I do not intend to be so obscure.

I am singularly focused on getting it right. I have asked a battery of questions on that very subject and have gotten zero response.

The point, if there was one, to my question was, is it possible that we are getting it wrong with regard to SST cooling? Hadley’s own graph shows that the ocean tends to warm when land warms. Why in the last two years has that not been the case? What would the explanation be for the oceans, overall, to be cooling for what to my eyes seem to be at least the last two years?

http://www.metoffice.gov.uk/research/hadleycentre/CR_data/Monthly/NMAT_SST_LSAT_plot.gif

Re # 60 etc on Ocean Heat Content (OHC):

please see the new study

Johnson, Gregory C., Sabine Mecking, Bernadette M. Sloyan, and Susan E. Wijffels, 2007. Recent Bottom Water Warming in the Pacific Ocean. Journal of Climate Vol. 20, No 21, pp. 5365-5375, November 2007, online http://www.pmel.noaa.gov/people/gjohnson/gcj_3m.pdf

Abstract

Decadal changes of abyssal temperature in the Pacific Ocean are analyzed using high-quality, full-depth hydrographic sections, each occupied at least twice between 1984 and 2006. The deep warming found over this time period agrees with previous analyses. The analysis presented here suggests it may have occurred after 1991, at least in the North Pacific. Mean temperature changes for the three zonal and three meridional hydrographic sections analyzed here exhibit abyssal warming often significantly different from zero at 95% confidence limits for this time period. Warming rates are generally larger to the south, and smaller to the north. This pattern is consistent with changes being attenuated with distance from the source of bottom water for the Pacific Ocean, which enters the main deep basins of this ocean southeast of New Zealand. Rough estimates of the change in ocean heat content suggest that the abyssal warming may amount to a significant fraction of upper World Ocean heat gain over the past few decades.

Hmmm, was it a low sunspot number, or was it bad observing conditions due to volcanic activity? Interesting idea:

http://ntrs.nasa.gov/search.jsp?R=522471&id=4&qs=Ntt%3Dsunspot%26Ntk%3Dall%26Ntx%3Dmode%2520matchall%26N%3D4294826660%2B53%26Ns%3DHarvestDate%257c1

Volcanism, Cold Temperature, and Paucity of Sunspot Observing Days (1818-1858): A Connection

Author(s): Wilson, Robert M.

Abstract: During the interval of 1818-1858, several curious decreases in the number of sunspot observing days per year are noted in the observing record of Samuel Heinrich Schwabe, the discoverer of the sunspot cycle, and in the reconstructed record of Rudolf Wolf, the founder of the now familiar relative sunspot number. These decreases appear to be nonrandom in nature and often extended for 13 yr (or more)…. The drop in equivalent annual mean temperature associated with each decrease, as determined from the moving averages, measured about 0.1-0.7 C. The decreases in number of observing days are found to be closely related to the occurrences of large, cataclysmic volcanic eruptions in the tropics or northern hemisphere….

Interesting to think that the counts of sunspot numbers could actually have been counts of dirty air days, and the variations in temperature due not to sunspots but volcanic activity far around the Earth.

I don’t know where this idea went, if anywhere. Just happened on it.

RE this post, it’s a good thing there are some scientists around, like you folks, to do the science.

I’m thinking sci-fi on this — a world in which journalists do the science. Title? Maybe, THE NEW DARK AGES.

I saw a program on Nat Geo, RING OF FIRE (around the Pacific Ocean). It said volcanism is higher now than thousands of years ago, and that it could get a lot worse.

If it does, and it gets much worse (like 251 mya), then I’m guessing the initial impact might be an aerosol cooling effect, but once that clears from the atmosphere it would leave GHGs behind for a very long time to cause increased GW.

But before the denialists start gloating about this, we should consider that we might need those very fossil fuels we are wastefully burning now to help us keep warm during the cold snap.

So either way, whether volcanism is due to increase drastically or not, we’ve got to cease and desist from our fossil fuel burning right now.

From the referenced Jan 10, 2008 article: “Dr. Pielke suggests that more scientists do reality checks”…

However, Pielke fails to mention that U.S. scientists have run into a road block in attempting to do reality checks on climate change with National Weather Service climate and streamflow data. Pielke ought to know that to be true in his having received funding from NOAA NWS in years past.

I think what the original article was tapping into is the general public’s observation that there has been no meaningful global temperature rise in the last decade. This is at odds with the alarmism that is continually being preached. I agree with the point that the IPCC predictions are not meant for the short term, but they create a credibility problem for themselves when the alarmist predictions don’t materialise in the short term. Looking at the global HADCRUT3 data set and overlaying the IPCC (2001 report) range of predictions clearly shows the global data points (or rolling averages if you prefer) for the last 6 or 7 years plotting underneath the entire range given in the 2001 report. This is a fact and making excuses for it is pointless. It would be much better to just state: “the predictions are meant for the long term (e.g. 2100) and are still valid but there has clearly been an overestimate of warming in the short term”. Being honest about it will allow some credibility to remain. Otherwise by this time next year (should 2008 be another flat or cooler year) these small ripples of doubt will have become an unstoppable wave of disbelief and apathy.

[Response: Pointing out that short term values are not ‘meaningful’ is being ‘honest’. And your suggestion to the contrary is not appreciated. – gavin]

Walt, don’t confuse sea surface temperature with the temperature of the oceans overall. The ocean can be absorbing heat and still show bigger areas of surface cold water happening. You might want to look up the research behind this recent science news story, for example, to understand how apparently paradoxical reports can make sense:

http://www.sciencedaily.com/releases/2007/12/071220133426.htm

Walt Bennett> Hadley’s own graph shows that the ocean tends to warm when land warms. Why in the last two years has that not been the case?

Their graph seems to show 0.5C more land warming than ocean warming since 1975. I do not think all of that can be attributed to thermal lag. Some speculation:

Recent land pollution (more soot, black carbon relative to sulfates) causes a net addition to warming.

Ocean measurements are more accurate (no UHI or micro-climate effect warm biases).

Walt, there’s a somewhat better article, here:

http://www.alphagalileo.org/index.cfm?fuseaction=readrelease&releaseid=526086

Alphagalileo is a good science news service, and does us the courtesy of citing the publication, as any science news source should. Sciencedaily didn’t.

http://www.agu.org/pubs/crossref/2007/2006GL028812.shtml

Why, I wonder, has no-one quoted the results of F-testing a linear regression of temperature changes against time?

The F-Test will compare the residual variation about the regression line to the overall mean. In other words, it will compare the variation “explained” by the regression with the residual variation about the line. The calculated significance takes into account the number of observations, so we can see if any calculated trend differs significantly from zero.

To be convincing, we need both a trend greater than zero, and a probability less than, say, one chance in 20 that the trend arises by chance.
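For readers who want to see the arithmetic behind that description, here is a minimal sketch of the F statistic for a straight-line fit. The function name `regression_f_test` and the example numbers are made up for illustration; they are not the UAH data. Note also that this textbook test assumes independent residuals, whereas monthly temperature anomalies are autocorrelated, so applied naively it will tend to overstate significance.

```python
def regression_f_test(xs, ys):
    """Return (slope, F) for a least-squares straight-line fit of ys against xs.

    F = (explained sum of squares / 1) / (residual sum of squares / (n - 2)),
    to be compared with an F distribution on (1, n - 2) degrees of freedom.
    A perfect fit (zero residual variation) would divide by zero here.
    """
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx

    # Variation about the overall mean, and about the fitted line.
    ss_total = sum((y - my) ** 2 for y in ys)
    ss_resid = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_explained = ss_total - ss_resid

    f_stat = ss_explained / (ss_resid / (n - 2))
    return slope, f_stat


# Made-up illustrative values (assumption), not real temperature data.
example_x = [0, 1, 2, 3]
example_y = [0.0, 1.0, 1.0, 2.0]
slope, f_stat = regression_f_test(example_x, example_y)
print(f"slope = {slope:.3f}, F = {f_stat:.2f} on (1, {len(example_x) - 2}) d.f.")
```

The probability that goes with F comes from the F distribution with (1, n - 2) degrees of freedom; with SciPy installed, `scipy.stats.f.sf(f_stat, 1, n - 2)` gives it, and a value below 0.05 corresponds to the "one chance in 20" criterion.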

For example, using the UAH monthly data, from December 1978 to November 2007, the regression line increase is 1.43 degrees centigrade per century, and relative to the monthly variations there is no doubt that the increase is significant.

But, a big but, the starting point is 1978 (happily about the same as Hansen A, B, and C for model comparisons), after a fall in temperature from the previous peak in the forties.

From the same 1978 start point, how far forward must we go before we find a significant increase? The answer is that from 1978 to 1995 there was no significant increase in global temperatures, and the (not significant) trend was only 0.38 degrees centigrade per century. It was the sharp increase over the next few years that substantiated Hansen’s original forecast.

To see if “global warming has stopped”, we can work backwards from today, and see how far back we must go to detect a significant increase. The answer, not surprisingly, is before the peak in 1998. From July 1997 to 2007 there has been no significant increase in global temperature.

Take those two results together and you can see that it would be difficult to make a convincing case for global warming without the 0.5 degree increase in temperatures from 1996 to 2001.

[Response: What 0.5 deg rise between 1996 and 2001? – gavin]

Re (120), last week we had the first sunspot of opposing polarity, which means the next 11-year cycle has officially begun. By definition we are at a minimum of the cycle.

(132) I’d take anything heard on Nat. Geo or the Science Channel with a substantial grain of salt. So many statements are obviously based on misunderstood things from scientists that their credibility has to be considered low.

This is one of those times when I feel like the kid who just doesn’t get it, and nobody (except you, Hank) considers it worth the time to straighten the kid out.

Gavin? Stefan? Anybody? Am I insane or has GISS shown continued warming where Hadley shows cooling, and is this not directly related to SST measurements? How can those measurements be so radically different, simply based on differences in approaches which are “defensible”?

We aren’t talking about forecasts; these are the actual conditions we are talking about.

You can’t both be right, and it sounds weak to me to hear it chalked up to “different approaches”.

You gonna keep ignoring the (47 year old) kid? Or at least tell me why I’m wasting time pursuing this inquiry?

[Response: If you want to investigate further, compare the spatial patterns. I haven’t done it yet for 2007, but I’ll guess that the biggest anomalies will be over the Arctic Ocean. The sea ice retreat in the summer certainly lends support to the GISS analysis, but the Arctic Buoy Program would be the best check. – gavin]

#105

Gavin,

ISCCP confirms your assumption, the post 2000 offset is ‘not real’. Whether this means that ‘probably exaggerated’ on their page should be changed to ‘totally wrong’ or something else I don’t know, but then why put the curves on the web at all.

Are there any reliable measurements of the net TOA somewhere else? And I don’t mean albedo.

[Response: Thanks. Actually, the CERES data is the best bet. – gavin]

Fred,

> using the UAH monthly data

Point to the data set so people know what you’re talking about please.

That could mean a lot of different altitudes and instruments.

> we can work backwards from today, and see how far back we must go to detect a significant increase

Cite please for this idea of how to apply statistics? I don’t believe it’s correct, though it was thirty years ago I had my statistics classes; at that point in time, the rule was you had to decide on your test _before_ looking at the data, not look at the data until you found a set that when tested gave you the desired answer.

Whoah, Fred, are you basically saying that if you take the 1998 peak out of this graph, what’s left is no warming? Recipe for fudge.

http://www.atmoz.org/img/giss_vs_uah.png

Was it you who mentioned at Tamino’s site the idea of using software that digitizes from images to derive data points? Don’t do that with charts based on data that you can download from original sources.

Gavin, et al — What observations would falsify your understanding of global climate change?

I’m sorry, Gavin, my last comment in 138 was careless.

The 1978/1995 and the 1997/2007 regression lines are not significantly different from horizontal. Relative to the zero line the two averages are -0.035 and +0.247, so the best estimate of the step change between the two periods is 0.28 degrees C. Without that change, it would be impossible to make a convincing case for AGW.

In mitigation, I had left the statistics and I was looking at the UAH chart.

It is striking that the temperatures climb the 1998 peak immediately after my first period, and promptly fall all the way back to the 1996 level by the end of 1999. Over the next 2 years the temperature rises again to current levels, and the increase (1996 to 2001) is about 0.5 degrees. Without that step change etc.

It seems as if there are some problems reconciling different data sets and measurement methods. My statistical knowledge is not enough to be able to slam SD and p values in the head of other debaters. However, can these problems be taken as proof of the “end of AGW” if other parameters point to continued warming? Moreover, are there regional data that show clear trends? I would assume that the Swedish temperature data, dating back to 1720, would be of value. Graphs and data sets for 1720-2005 are found at http://www.smhi.se/cmp/jsp/polopoly.jsp?d=8881&a=25030&l=sv

In my view, these data seem to point to a clear trend for the last decade. And this is not including 2006 and 2007, where 2007 had mean temperatures 1.5-2 °C over the mean. Furthermore, ice and snow data for Sweden point in the same direction. Thus, if AGW had stopped, why has the temperature for the last month and a half been 5-6 °C over the mean?

#134

Gavin – I’m not trying to imply anyone is being dishonest. All I’m stating is that, from the general public’s point of view, it is becoming increasingly difficult to believe the alarmism when the projections (IPCC 2001 report) are now clearly shown to have been overestimated in the short term. It doesn’t matter what the reason is, e.g. short term weather variability, as you yourself say.

[Response: Your statement is wrong on two counts: a) the data is in line with projections, and b) the projections are not alarmist. They may be alarming but there is nothing projected for the near future that relies on anything other than very dull conservative assumptions. -gavin ]

[Response: To replay the simple analogy I have used above: imagine a spell of cold weather arrives in April, and for a week or even two temperatures are dropping or at least not rising. Nothing unusual of course, just normal weather. Would you then say: “From the general publics point of view, it becomes increasingly difficult to believe that it is spring-time when the expectation of warming temperatures is clearly shown to have been wrong in the short term”? Of course not, because the public can tell the difference between short-term weather and the seasonal cycle. The difference between interannual variability and long-term greenhouse warming is exactly the same. Just less familiar to the general public. -stefan]

Somewhat related to this subject, I am looking for input into my presentation covering the claims of skeptics (factual and logical critiques preferable to “your narration sucks.”) One section is dedicated to the temperature record. It includes such topics as:

1) UHI corrections

2) The significance (or not) of the GISTEMP 2000-2007 flaw for the US lower 48 states.

3) A comparison between instrument and satellite measurements.

4) The claim that “Global Warming stopped in 1998.”

5) The difference between NASA’s analysis and the others.

6) And the observation that “if the world really is warming, why is it cold outside.”

This section can be viewed here:

http://cce.890m.com/part06/

Quicktime 7+ is required. It is about 17 minutes long and has been greatly expanded since the last time I solicited input.

Re #134

“I think what the original article was tapping into is the general public’s observation that there has been no meaningful global temperature rise in the last decade.”

I would suggest that the Northern hemisphere trends refute that argument.

http://tamino.files.wordpress.com/2008/01/latitude.jpg

Hank #68

I was requesting, not demanding, as I would not have been upset or complained if I did not receive a response.

Fortunately I did, from cce #79. Thank you! That is exactly what I was looking for. My interpretation? The layman Joe Q Public says: Looks like we are warmer than average according to all sources, but only GISS shows a continued warming trend for this century. (The other sources are flat for the 21st century.)

Please, I know 7-8 years does not tell us anything yet. But the next 3-5 years will be interesting to watch.

Thank you all, I will now return to my reading only part until I have another question.

Play nice, the public is watching ;-)

Jon P