Many readers will remember our critique of a paper by Douglass et al on tropical tropospheric temperature trends late last year, and the discussion of the ongoing revisions to the observational datasets. Some will recall that the Douglass et al paper was trumpeted around the blogosphere as the definitive proof that models had it all wrong.

At the time, our criticism was itself criticised because our counterpoints had not been submitted to a peer-reviewed journal. However, this was a little unfair (and possibly a little disingenuous) because a group of us had in fact submitted a much better argued paper making the same principal points. Of course, the peer-review process takes much longer than writing a blog post and so it has taken until today to appear on the journal website.

The new 17-author paper (accessible pdf), led by Ben Santer, does a much better job of comparing the various trends in atmospheric datasets with the models and is very careful to take account of systematic uncertainties in all aspects of that comparison (unlike Douglass et al). The bottom line is that while there is remaining uncertainty in the tropical trends over the last 30 years, there is no clear discrepancy between what the models expect and the observations. There is a fact sheet available which explains the result in relatively simple terms.

Additionally, the paper explores the statistical properties of the test used by Douglass et al and finds some very odd results. Namely, their test, which should nominally reject a valid match only 1 time out of 20 (i.e. at a 5% significance level), actually rejects valid comparisons 16 times out of 20! And curiously, the more data you have, the worse the test performs (figure 5 in the paper). The other aspect discussed in the paper is the importance of dealing with systematic errors in the data sets. These are essentially the same points that were made in our original blog post, but are now much more comprehensively shown. The data sources are now completely up-to-date and a much wider range of sources is addressed – not only the different satellite products, but also the different analyses of the radiosonde data.
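To get a feel for why a test of this kind can misfire, here is a toy Monte Carlo sketch in Python. It is not the procedure or the numbers from either paper: it assumes (for illustration only) that the model trends and the observed trend are all drawn from the same normal distribution, and it compares a band built from the standard error of the ensemble mean with one built from the ensemble spread. The function name `rejection_rates` and all its parameters are hypothetical.

```python
import numpy as np


def rejection_rates(n_models, n_trials=20000, seed=0):
    """Fraction of trials in which a statistically 'perfect' observation is rejected.

    Every trial draws n_models model trends and one observed trend from
    the SAME normal distribution, so any rejection is a false alarm.
    """
    rng = np.random.default_rng(seed)
    models = rng.normal(0.0, 1.0, size=(n_trials, n_models))
    obs = rng.normal(0.0, 1.0, size=n_trials)

    ens_mean = models.mean(axis=1)
    ens_sd = models.std(axis=1, ddof=1)      # spread of the ensemble
    ens_se = ens_sd / np.sqrt(n_models)      # uncertainty of the mean only

    diff = np.abs(obs - ens_mean)
    too_narrow = np.mean(diff > 2.0 * ens_se)    # band shrinks as n_models grows
    spread_based = np.mean(diff > 2.0 * ens_sd)  # band reflects the model spread
    return too_narrow, spread_based


if __name__ == "__main__":
    for n in (5, 19, 50):
        narrow, spread = rejection_rates(n)
        print(f"{n:3d} models: narrow-band test rejects {narrow:.0%}, "
              f"spread-based test rejects {spread:.0%}")
```

Because the "observation" here is consistent with the "models" by construction, every rejection is a false alarm. In this toy setup the narrow band rejects more often as the number of models grows, since the standard error of the mean shrinks while the scatter of a single realisation does not.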

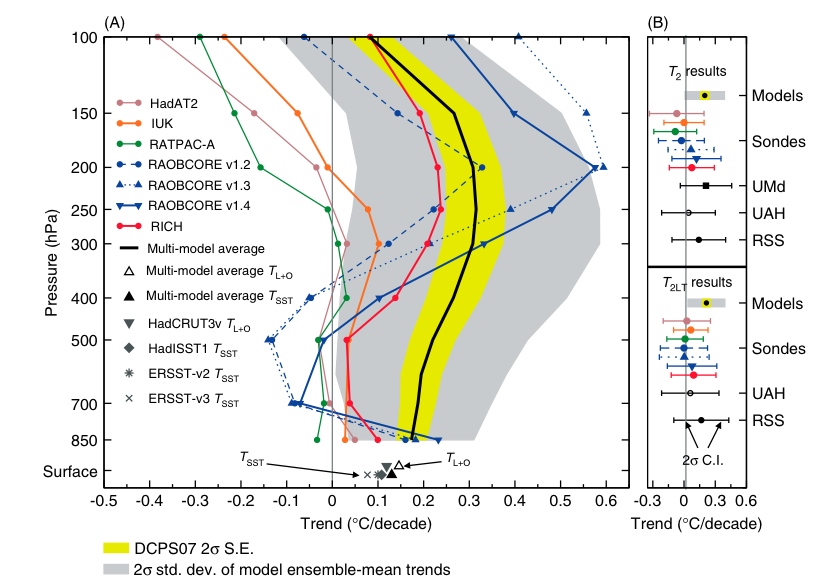

The bottom line is best encapsulated by the summary figure 6 from the paper:

The grey band is the real 2-sigma spread of the models (while the yellow band is the spread allowed for in the flawed Douglass et al test). The other lines are the different estimates from the data. The uncertainties in both preclude any claim of some obvious discrepancy – a result you can only get by cherry-picking what data to use and erroneously downplaying the expected spread in the simulations.

Taking a slightly larger view, I think this example shows quite effectively how blogs can play a constructive role in moving science forward (something that we discussed a while ago). Given the egregiousness of the error in this particular paper (which was obvious to many people at the time), having the initial blog posting up very quickly alerted the community to the problems even if it wasn’t a comprehensive analysis. The time between the original paper coming out and this new analysis was almost 10 months. The resulting paper is of course much better than any blog post could have been and in fact moves significantly beyond a simple rebuttal. This clearly demonstrates that there is no conflict between the peer-review process and the blogosphere. A proper paper definitely takes more time and generally gives a better result than a blog post, but the latter can get the essential points out very quickly and can save other people from wasting their time.

I just want to say that I (as a PhD student) agree with what you are saying; blogs can be a good contribution to the scientific debate, not least by keeping the public alert to the different discussions going on behind the “scenes” of peer-reviewed journals.

This 17-author paper basically says that the models would only be “incorrect” if observations were roughly outside the 0.0 to 0.5 degree-per-decade window.

This means that no warming whatsoever over the next century would not invalidate the models.

Interesting.

[Response: But not true. These are hindcasts for a specific period (1979-1999) and the variance is in large part due to the shortness of that period and the degree of ‘noise’ – both internal and forced (i.e. volcanoes) – that confuses the estimate of the long term trends. Our previous post dealt with what the models expect for periods in the future – and the longer the time frame the less influence the short term variations have. – gavin]
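The dependence on record length can be sketched with the textbook formula for the standard error of a least-squares trend. This assumes evenly spaced annual values and independent (white) noise, which real temperature series do not strictly satisfy (autocorrelation and volcanic forcing make the true spread larger), so treat the numbers as illustrative only. The function name and the 0.2 degree noise level are assumptions, not values from the paper.

```python
import math


def trend_standard_error(n_years, noise_sd):
    """Standard error of an OLS trend through n_years annual values.

    Assumes independent (white) noise with standard deviation noise_sd.
    The result is in noise units per year.
    """
    if n_years < 3:
        raise ValueError("need at least 3 points to estimate a trend and its error")
    # Sum of squared deviations of 0, 1, ..., n-1 from their mean.
    sum_sq = n_years * (n_years**2 - 1) / 12.0
    return noise_sd / math.sqrt(sum_sq)


if __name__ == "__main__":
    # 0.2 is a hypothetical year-to-year noise level, chosen for illustration.
    for n in (10, 21, 50, 100):
        per_decade = 10.0 * trend_standard_error(n, noise_sd=0.2)
        print(f"{n:3d} years: 1-sigma trend uncertainty {per_decade:.3f} per decade")
```

Under these assumptions the uncertainty falls roughly as the record length to the power -1.5, which is why a window of about 20 years allows a wide range of trends while a century does not.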

Well done. It is also worth noting that the earlier work by Douglass et al. (2004) on this subject had issues in observational data sets pointed out by Fu and Johanson (2005), Mears and Wentz (2005), and Sherwood et al. (2005).

Many thanks for this site yet again!

Please don’t forget that many papers are not readily available to the public (most have to buy them). This means that the best insights the average person can get are what the blog postings and following discussion reveal about the papers and their weaknesses/strengths.

Also, many out there don’t have the technical expertise or time to read the papers even if they could get hold of a copy.

Therefore, the general public relies on sites like this one to help keep up to date with the state of climate science. This is such an important issue that blog sites like this one MUST be maintained.

[Response: Thanks. We realise that access to papers is limited for most of the public and we link to free versions where possible. In this case, I recommend reading the fact sheet (linked above) to get a good sense for what was done. – gavin]

Good post gavin. I think I’m reading the trend chart wrong, but is the mean of the models higher than the mean of all 10 data sets? What do the error bars at the surface look like? Did all the models include volcanic forcing? Err maybe I should read the paper first

How did Douglass et al get published in a respectable journal? Did the editors let it through despite being aware of the errors?

What do Messrs Santer et al mean by surface temperature? The surface I live on comprises two lawns, some areas shaded, some not, flower beds, a new black tarmac drive, a new white stone patio, and a fairly fast flowing river. How would they propose to measure its temperature? Like all the other surface temperatures (apart from the 75% of the surface covered by the oceans) they would probably suggest placing the thermometer a few meters up in the atmosphere.

Satellites are by far the best way to measure atmospheric temperatures across the planet.

I do urge all contributors who are so certain of the AGW theory to at least look at the UAH data (Google Global Warming at a Glance). [edit]

From 1978 to 1996 neither the lower nor the mid-troposphere data show any warming. The warming which lends credence to the AGW theory occurred over three years from 1999 to 2002. Thereafter, temperatures remained “high” (plus 0.2 degrees above the long term average) and fluctuating for 4 years to 2006. (You would find it difficult to detect this change in the average temperature of your house).

Thereafter the temperatures fell back to 1978 levels.

The mid-troposphere chart experienced the same relatively sharp increase from 1999 to 2002, but the increase was much lower, and more rapidly reversed – hence the difference between the long term trends.

The models follow the CO2 concentration, which is why the Hansen “B” line and the “surface” temperatures are diverging.

Santer’s fact sheet does not display troposphere temperatures after 2005.

If the satellite data is anything like accurate then AGW warming theory is either plain wrong or nothing to worry about. If current (1978) temperatures persist for the next 5 to 10 years, challenges to the AGW consensus will increase rapidly, both in number and volume.

[Response: Wrong again. But feel free to continue ignoring all previous corrections of your faulty thinking. – gavin]

I am not an expert by any definition, but just a layman keenly interested (concerned) about the future of the planet and my children, all our children.

I am also very concerned that the current debate on global warming gets so specialized that the forest may well be missed out for the trees.

There seems to be a lurking expectation in some of the climate scientists and others that a new ice-age may be dawning that will yet save mankind from the apocalypse of melting polar ice and significant rise of sea levels in our lifetime. In other words, there is no warming effect of greenhouse gases and humans can carry on with Business As Usual, including massive burn of fossil fuels.

There is also little or no awareness among the lay public that greenhouse gases are cumulative and the accumulation is exponential in scale today and has been in the last 150 years since the industrial engines appeared.

Somewhere I read that the current production of greenhouse gases is twice as much as what the green plants (on land and in the sea) can neutralize. In other words, the “tipping point” is already far exceeded and there cannot be any new equilibrium of production/neutralization of greenhouse gases.

So any real solution would only come through total and serious cutback in human lifestyles and corresponding energy use.

Please start educating the lay public about global warming in a simple (and stark) way that they can understand. Please also provide a forum for getting some expert advice on what the alternate lifestyles of the future world must look like, in order that we may have a future.

Steve (6): Peer review will never be a guarantee that published papers are free of (even obvious) errors; reviewers, editors and authors are all only human and there will always be a chance that none of them will spot the error in time. However, here we have seen good science in action in that the error has been spotted and corrected (well done Santer et al!). It also highlights the advantage of pre-prints being made available by the publisher, as it means that the correction is made more rapidly than would otherwise be the case (well done IJC).

It is important, however, that corrections to high-profile or controversial papers are made both in the blogs and in the peer-reviewed literature, as otherwise the correction is all too easily dismissed by saying “RealClimate, well they would say that wouldn’t they?” ;o)

I agree with “the other Gavin”. Peer-review isn’t the end-all filter for good science. It is simply the first one. And reviewers are often very pressed for time, so sometimes errors fall through the cracks :)

The paper is available at LLNL page

https://publicaffairs.llnl.gov/news/news_releases/2008/NR-08-10-05-article.pdf

Its final conclusion is as follows:

“We may never completely reconcile the divergent observational estimates of temperature changes in the tropical troposphere. We lack the unimpeachable observational records necessary for this task. The large structural uncertainties in observations hamper our ability to determine how well models simulate the tropospheric temperature changes that actually occurred over the satellite era. A truly definitive answer to this question may be difficult to obtain. Nevertheless, if structural uncertainties in observations and models are fully accounted for, a partial resolution of the long-standing ‘differential warming’ problem has now been achieved. The lessons learned from studying this problem can and should be applied towards the improvement of existing climate monitoring systems, so that future model evaluation studies are less sensitive to observational ambiguity.”

“Please don’t forget that many papers are not readily available to the public (most have to buy them).”

On the contrary, most university doors are WIDE OPEN to the public, joe six pack merely needs to get off the couch and wander down to his or her local world class university research libraries and look them up. There are costs involved, page charges run anywhere from a few pennies up to a dime a page for the venda copy machines or the laser printer. Many of these papers may be available on a preprint server as well, and if you dig deep enough, they are available as fair use copies on the author’s web pages.


“On the contrary, most university doors are WIDE OPEN to the public, joe six pack merely needs to get off the couch and wander down to his or her local world class university research libraries and look them up.”

For most of us that is more than enough, unfortunately, to preclude the use of the term “readily available.” I live in an area boasting 10+ universities in the metro area, and I’d still have to budget about 2 hours of round trip driving, and a minimum of an hour–and probably more–to get in, find the material, copy what I need, and get out again. Not so practical. I’m really doing more than I should–“should” in relation to the practicalities of daily life–when I take 40 minutes online to browse a few climate papers that I *can* access.


> [Response: Wrong again. But feel free to continue

> ignoring all previous corrections of your faulty

> thinking. – gavin]

Is there a search string that will find posts by an individual userid that include inline responses?

[Response: Yes. Add 'class="response"' to the string. This picks out the html that we use to colour the inline responses green. – gavin]

Nice work! You all should consider featuring your “Fact Sheet” a bit more prominently, perhaps even before the fold, because it seems to be a definitive resource providing refutations to nearly every single claim advanced by skeptics in the wake of the Douglass debacle.

I’ll third the assertion of Figen and “other Gavin”; blogging can be a way of disseminating information to a large group of people rather quickly, but it is most effective in cases like this where it is paired with the traditional, rigorous academic exercise of peer-review.

Re #s 9 and 10: In addition to the errors in the paper seeming like the sort of thing a peer-reviewer ought to have caught, the presence of Fred Singer as a co-author ought to have singled the paper out for special attention. So, as I said, it begins to seem plausible that the editors were aware of the problems and published it anyway. IMHO there’s a reason to have done so, i.e. that it’s useful for such things to get corrected in a reasonably prominent place. But is that what happened?

For the past year, the satellite troposphere anomalies (20S to 20N) have been negative (as much as -0.5C) – La Nina obviously.

But wouldn’t that change the analysis – first, the linear trend is now only 0.06C per decade and secondly, the Nina 3.4 region and ENSO are bigger influences than previously thought.

So, am I reading that graph wrong, or does the mean of the models exceed the mean of all 10 observation sets, and does the mean of the surface hindcast for both Land and Ocean and SST exceed the 4 observation means as well?

Also, how about a quick list of the models used?

The thing is, even if Fred Singer’s name is probably a flag for a lot of people, as an editor you have to treat everything fairly. If the reviewers did not find fault then one would have to either send the paper to more reviewers (which would take an inordinate amount of time) or reject a paper based on its authors list. While that may have been appropriate in this case in hindsight, it opens the door to a slippery slope. Then new or untenured scientists will have a harder time getting published than established ones “in the old boys club.”

To avoid all that, I think peer review should be double blind so the work is judged on its own merits and not its authorship or familiarity with specific authors or their affiliated institutions.

In #8 Vinod Gupta wrote … “Please start educating the lay public about global warming in a simple(and stark)way that they can understand”. …

However, although RealClimate has done well in their efforts to do that, the required resources and responsibility to succeed on the large scale exist only in governments (who have so far failed, miserably).

Just had a quick glance at the fact sheet.

Have the authors considered the possible effects of El Chichon and Pinatubo in diminishing the relative tropospheric temperature peaks of the ~1983 and ~1992 El Ninos, thereby steepening the tropospheric trend?

[Response: The volcanic effects were taken into account in the models, but the precise timing of El Nino events is going to be different in each model – that in fact is a big factor in the variability of the 20 year trends. – gavin]

As a non-scientist, but (I would like to believe) a fairly scientifically literate layman, it seems to me that the evidence for AGW and the physics based explanation for it are irrefutable. The denialists in my opinion are similar to those who believe in the efficacy of acupuncture, homeopathic remedies, and chiropractic medicine. They will seize on any tiny scrap of data supporting their claims while ignoring the vast amount of objective scientifically based evidence to the contrary.

Figen Mekik: “then one would have to either send the paper to more reviewers (which would take an inordinate amount of time) or reject a paper based on its authors list. While that may have been appropriate in this case in hindsight, it opens the door to a slippery slope.”

You must be joking! Are you seriously suggesting that the Douglass et al paper should have been rejected on the basis of the authors? What are you? The thought police?

Who is to say that the Santer et al paper is correct and free of mistake? Papers are accepted because they are based on sound research and the methodologies are also sound. That is not to mean that they are error free.

Santer et al have produced a paper that argues a different hypothesis to Douglass et al. It does not mean by any means that Santer et al is more correct or more free of potential challenge.

Because Gavin SAYS that it does a better job of comparing trends does not make it so.

[Response: … though it does make it a little more likely ;) – gavin]

Thomas Lee Elifritz writes:

Some of the most crucial journals are no longer available in paper; if you don’t have a multi-hundred-dollar-a-year subscription, you’re SOL. The premier planetology journal, Icarus, is an example. Paywalls matter. They are effectively restricting scientific data to professional scientists, which is a bad thing.

As Einstein said, Mr White (22), 10,000 observations may support a theory, but it only takes one to refute it. Science would not progress without the one observation out of step.

For example, at my University, many years before the discovery of radioactivity, a professor of Physics noticed that sealed photographic plates in a drawer had been darkened. They were adjacent to a cupboard containing Radium.

He solved the problem by moving the plates to another room.

The tiny scrap of data to which you refer are the satellite temperature records from 1978, which was the year of the global temperature trough following the previous peak in the 1940’s.

Because it contradicts AGW theory, that data has been very thoroughly analysed and criticised. Adjustments have been made for all kinds of systematic errors, and at least one arithmetical error has been acknowledged and corrected.

The results (surely worth a glance) reflect all known temperature perturbations, including the 1988 peak. They are published and plotted every month, (Google Global Warming at a Glance), and overlaid onto the GISS surface data on which Anthony Watts (Watts up with That) labours so strenuously.

You might also be interested in Tamino’s very interesting post (Open Mind) on the long running Central England Temperature record. His polynomial form fitting suggests a new “hockey stick” shape with an up-tick of 0.5 degrees this decade. Temperatures at the start of the decade were about 10.5 degrees centigrade – high but not remarkably so. His data suggested a temperature up-tick this decade from 10.5 to 11.0 degrees centigrade, which would be absolutely unprecedented.

With 15 months to go there is no sign of the up-tick. This year looks like coming out at 10.2 degrees, the 25th warmest year in the record.

Richard says “Santer et al have produced a paper that argues a different hypothesis to Douglass et al. It does not mean by any means that Santer et al is more correct or more free of potential challenge.”

No, that is not correct. Both papers seek to determine whether the observational data are consistent with the models; however, Douglass et al use a statistical test that actually answers a different question, namely “is there a statistically significant difference between the mean trend of the ensemble and the observed trend”. The two questions are not the same, and indeed AFAICS one would not expect the observed trend to be exactly equal to the ensemble mean even if the models were perfect, so it is hardly a reasonable test of the validity of the approach!

Richard,

Please read my earlier post carefully. I said it would not be right to reject papers because of their authorship. That is what my comment was all about.

You say “What are you? The thought police? ” Again, please read everything one posts before jumping to conclusions about what they are saying. I encourage double blind peer review so no one will act as the thought police.

“most university doors are WIDE OPEN to the public, joe six pack merely needs to get off the couch and wander down to his or her local world class university research libraries and look them up.”

That’s a pretty high bar. If you’re an ordinary person and simply want to check something you’ve read in a newspaper article, you’re unlikely to want (or be able) to invest several hours in doing so.

Re: #25 (Fred Staples)

Gavin is right, you staunchly continue ignoring all previous corrections of your faulty thinking.

You’re assigning far too much certainty to the UAH satellite reduction. Yet it’s in disagreement with similar satellite reductions from RSS (which is the subject of *this* post), and from the U. of Washington, and from the U. of Maryland. If the satellite data are as surefire as you believe, why do 4 separate groups come to different conclusions based on the SAME raw data?

You completely ignore the fact that satellites don’t *measure* lower-troposphere temperature. They measure temperature in very large segments of the atmosphere, and the “T2LT” channel for the lower troposphere is *inferred* from the data of those other channels by attempting to correct for the stratospheric influence and other factors.

As for the CET record, in the context of *this* post you’re just trying to change the subject. But for your information, the *data* (which are not mine, but are freely available from the web) suggest a rate of increase of 0.5 deg.C per decade. Anyone interested in what the rate of increase presently is should download the data and fit a regression line from, say, 1975 to the present.

As for “this year coming out at 10.2,” are you completely ignorant of the variation present in year-to-year results? The standard deviation of the residuals from a linear regression to annual averages 1975-2007 is 0.472, so we expect a range of variation of roughly +/- 0.94 deg.C from the long-term trend. I guess you’re just a member of the “it was cold yesterday in London, so global warming must be false” brigade. You’re seriously in need of an education about the statistical nature of noise; this is one of many posts I’ve done on that topic.
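The arithmetic behind that range can be sketched as follows: fit a straight line, take the standard deviation of the residuals, and ask whether a given year falls within two standard deviations of the trend line. The helper names are hypothetical, and the demo series is synthetic (a made-up baseline plus random noise), not the actual CET data, which you would load in its place.

```python
import numpy as np


def trend_and_scatter(years, temps):
    """Least-squares line through (years, temps) plus the residual scatter."""
    years = np.asarray(years, dtype=float)
    temps = np.asarray(temps, dtype=float)
    slope, intercept = np.polyfit(years, temps, 1)
    residuals = temps - (slope * years + intercept)
    # ddof=2 because two parameters (slope and intercept) were fitted.
    resid_sd = residuals.std(ddof=2)
    return slope, intercept, resid_sd


def within_two_sigma(year, temp, slope, intercept, resid_sd):
    """True if temp lies within 2 residual standard deviations of the trend line."""
    return abs(temp - (slope * year + intercept)) <= 2.0 * resid_sd


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    years = np.arange(1975, 2008)
    # SYNTHETIC stand-in, not real CET values: an arbitrary baseline, an
    # assumed trend of 0.05 deg C per year, and noise with sd 0.47.
    temps = 9.5 + 0.05 * (years - 1975) + rng.normal(0.0, 0.47, years.size)

    slope, intercept, resid_sd = trend_and_scatter(years, temps)
    print(f"trend: {10 * slope:.2f} deg C per decade")
    print(f"residual sd: {resid_sd:.3f}, so roughly +/- {2 * resid_sd:.2f} around the trend")
    print("10.2 in 2008 within range:",
          within_two_sigma(2008, 10.2, slope, intercept, resid_sd))
```

With a residual standard deviation of 0.472, the two-sigma band is 2 × 0.472 ≈ 0.94 deg C, so a single year well below the trend line is unremarkable on its own.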

Finally: any flattering reference to Anthony Watts and his blog casts doubt on both your objectivity and your competence. Those interested in the “quality” of the work of Watts and his collaborators should read this and this and this and this and this and this.

Outeast — your local library can borrow any journal they don’t carry. Ask about “Interlibrary Lo-an.”

(Hyphen for the spamhallucinator software.)

The Reference Librarian will have additional suggestions for searching.

It’s easier to find than complain about.

Hopefully this is not too much off topic, but I was reading this article by Lomborg and am wondering where he goes wrong:

http://www.guardian.co.uk/commentisfree/2008/oct/14/climatechange-scienceofclimatechange

He doesn’t give any sources for his statements about lower temps and sea level rise, so I wonder if they’re correct, and if not, what the correct numbers are. Apart from exact numbers, isn’t his main error that he’s looking too much at short term variations instead of looking at the longer term trends and projections?

“You’re seriously in need of an education about the statistical nature of noise;” (Tamino)

Ah that wonderfully scientific term ‘Noise’

Here is the full data set from UAH since satellite data became available.

1978 – 1994

http://woodfortrees.org/plot/uah/from:1978/to:1994/trend/plot/uah/from:1978/to:1994

1995 – 2000

http://woodfortrees.org/plot/uah/from:1995/to:2000/trend/plot/uah/from:1995/to:2000

2001 – Date

http://woodfortrees.org/plot/uah/from:2001/to:2009/trend/plot/uah/from:2001/to:2009

So Tamino, you often state that ‘noise’ can mask the true signal. So what is the true signal here? The cooling periods 1978 – 1994 and 2001 to date being masked by a warming trend from 1995 -2000? Or a warming trend from 1995 – 2000 being masked by cooling trends from 1978 – 1994 and 2001 – 2009?

I cannot see why people are trying to kick up the most almighty panic on these trends especially when this is combined with no visible acceleration in sea level rise to anywhere near the levels predicted by the models.

Also these trends this century are against a background of higher than estimated man made CO2 emissions and also that the forcing factor of this effect must have already been at its highest level during the 21st century and can only decline as total atmospheric CO2 increases. After all we know the climate does not have a linear response to rising CO2 levels or the Earth would have already suffered a runaway greenhouse effect in its past.

So how do you justify some of the apocalyptic predictions people seem happy to bandy about?

Alan

Also just to emphasise this noise versus signal idea.

Here is the data from 1940, when the CO2 signal started to become strongly apparent, to 1977. I use GISTEMP data because of course satellite data is only available from 1978.

http://woodfortrees.org/plot/gistemp/from:1940/to:1977/trend/plot/gistemp/from:1940/to:1977

Again what is the real long term signal and what is the noise?

Alan

Noise is the random variability that the system you’re not interested in is putting on the signal.

E.g. if you’re trying to listen to someone talking, the engine is making noise.

However, if you want to find out where the bite is, that “noise” IS your signal.

So anything that is of a shorter period than climate is noise.

And what period is “climate”? Well if you ONLY had temperatures to go on, and “midsummer” was the warmest day (let’s ignore thermal inertia), how many years would you have to sum to find out “the day of midsummer” to within a week? About 50?

Check it out, go back through the temperature records and see.

Alan Millar says “So what is the true signal here? The cooling periods 1978 – 1994 and 2001 to date being masked by a warming trend from 1995 -2000? Or a warming trend from 1995 – 2000 being masked by cooling trends from 1978 – 1994 and 2001 – 2009?”

One way to decide would be to compare the plausibility of the supposed mechanism behind each hypothesis. One advantage of the theory that there has been a warming trend occasionally obscured in the short term by natural variability is that we have a mechanism that explains why there should be a warming trend (CO2) and mechanisms for explaining the variability (IIRC ENSO is responsible for quite a lot of it). Can you provide a more plausible mechanism explaining a cooling trend occasionally masked by natural variation?

A long term trend (e.g. 1978-2008) is much less affected by ENSO-related natural variability, and that shows a clear warming trend

http://woodfortrees.org/graph/uah/trend/plot/uah

The fact that the observed long term trend shows warming strongly suggests that there isn’t an underlying long term cooling trend and the overall warming is unlikely to be due to natural variability.

BTW, isn’t the 1940-1977 cooling attributable to aerosols?

Re #32, Millar,

“Let us spend one day as deliberately as Nature, and not be thrown off the track by every nutshell and mosquito’s wing that falls on the rails”.

Henry David Thoreau (1854)

http://data.giss.nasa.gov/gistemp/

Re: #32 and #33 (Alan Millar)

You’ve illustrated, far better than I could, just how naively some people approach trend analysis.

You say “The cooling periods 1978 – 1994 and 2001 to date…” Did you actually bother to do any analysis? What’s next? Will you tout the “cooling period from 8:15 am to 8:23 am”?

To answer your question: it can’t be called “true signal” if it fails statistical significance tests.

Here’s a question for you: if 1978-1994 and 2001-present are “cooling periods,” then what are the rates of cooling, AND what are the uncertainties (confidence intervals) associated with those rates? Be careful: I’m not the only professional statistician who comes around here, and you can bet we’ll all be checking your work.
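For anyone who wants to try the exercise, here is a minimal sketch of a trend estimate with an approximate uncertainty interval. It uses ordinary least squares and widens the interval with a lag-1 autocorrelation (effective sample size) adjustment, which is one common choice rather than the only defensible one. The function names are hypothetical and the demo series is synthetic, so no real rates are implied.

```python
import numpy as np


def synthetic_series(n_months, trend_per_year, phi=0.5, innov_sd=0.1, seed=0):
    """Made-up monthly anomalies: linear trend plus AR(1) noise (NOT real data)."""
    rng = np.random.default_rng(seed)
    noise = np.zeros(n_months)
    for i in range(1, n_months):
        noise[i] = phi * noise[i - 1] + rng.normal(0.0, innov_sd)
    t = np.arange(n_months) / 12.0  # time in years
    return t, trend_per_year * t + noise


def trend_with_interval(t, y):
    """OLS slope and an approximate 2-sigma interval (low, high)."""
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    n = t.size
    slope, intercept = np.polyfit(t, y, 1)
    resid = y - (slope * t + intercept)

    # Lag-1 autocorrelation of the residuals, clamped to [0, 0.95].
    r1 = np.corrcoef(resid[:-1], resid[1:])[0, 1]
    if not np.isfinite(r1):
        r1 = 0.0
    r1 = min(max(r1, 0.0), 0.95)

    # Fewer effectively independent points means a wider interval.
    n_eff = n * (1.0 - r1) / (1.0 + r1)
    if n_eff <= 2.0:
        raise ValueError("series too short or too autocorrelated for this sketch")

    resid_var = np.sum(resid**2) / (n_eff - 2.0)
    se = np.sqrt(resid_var / np.sum((t - t.mean()) ** 2))
    return slope, slope - 2.0 * se, slope + 2.0 * se


if __name__ == "__main__":
    t, y = synthetic_series(360, trend_per_year=0.02)
    for label, sl in (("full 30 years", slice(None)), ("last 5 years", slice(-60, None))):
        slope, low, high = trend_with_interval(t[sl], y[sl])
        print(f"{label}: {slope:+.3f} per year, 2-sigma range [{low:+.3f}, {high:+.3f}]")
```

If the interval for a chosen window straddles zero, the data in that window cannot distinguish warming from cooling, which is the point of asking for the uncertainties alongside the rates.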

Just thinking on trends.

Last year a friend of mine, who is a skeptic, proposed that the loss of arctic ice was just part of a 20-year cycle. So, I asked him when was the last time that the NW passage was fully navigable? I think it was more than 20 years ago.

Permafrost is melting; the timing of animal migrations is changing; and a host of other things that have great inherent stability are changing. I was wondering how much more energy there must be in the arctic to melt so many more cubic miles of ice. It can be estimated, but suffice to say it is a lot. Why does anyone cling to data sets that are open to interpretation to refute the basic trends that are so readily observable?

“BTW, isn’t the 1940-1977 cooling attributable to aerosols?” (Gavin, no not that one, a different one)

Well there is conjecture that that is so (unproven but). If that is a fact, what trend is it actually masking?

There was a long term warming trend up to 1940 that did not seem connected to any man made CO2 emissions.

http://woodfortrees.org/plot/hadcrut3gl/from:1880/to:1940/trend/plot/hadcrut3gl/from:1880/to:1940

If the aerosols were subsequently masking this apparently natural trend of recovery from the LIA, then continuing that underlying trend to the present time would account for most of the subsequent observed warming and leave only a small amount due to man’s CO2 emissions, indicating a much lower sensitivity to CO2 than the models hypothesise. This seems a perfectly reasonable supposition.

It seems a hell of a coincidence to me that this long term natural trend should cease at precisely the moment aerosols become a factor and man made CO2 starts to drive the long term climate. But that is what we are required to believe if we are to see the models hit their targets.

I don’t believe that the Earth’s climate is cooling; most indicators say otherwise. What I find hard to believe is the hypothesised rate of change forecast by the models and that the Earth’s climate is mainly driven by three or more factors, i.e. CO2, CO2, and CO2!

Alan

Alan Millar, did you never take a course in data analysis? The “signal” is the portion of the measurement you are interested in, while the “noise” is that which interferes with your observing the signal. In this case, the theory predicts a “warming” signal, and the data confirm this to high confidence.

You are welcome to try and see how it does with a “cooling” signal, although I expect you will have trouble finding supporting data without cherry-picking it. Here’s a clue. “Climate” is very noisy on short timescales. Measurement errors across satellite systems increase both noise and systematic error. (Note: A couple of colleagues of mine have direct experience with this in reconstructing galactic cosmic ray measurements and plasma fluxes far from Earth–no one satellite has produced enough data for a decent model, and yet there are little problems going from one dataset to another.) Best advice, though: Learn the physics and you’ll understand your signals and noise much better. CO2’s effects last for decades to centuries–it’s a trend that’s pretty easy to spot.

Re: #31 (Lennart)

See this for a closer look at Lomborg’s “statistics.”

Ray Ladbury says that the theory predicts a warming signal and the data confirms this to high confidence.

It seems a bit odd that no warming trend at all this decade is confirming to high confidence a warming trend. Imagine how confident he would be if temperatures were actually going up rather than down on GISS since Jan 2001.

Gavin, Ray, Tamino.

I have already answered but my post has disappeared! I will try again to see if my post can beat the censorship on here and whether true discussion is allowed.

“BTW isn’t the 1940-1977 cooling is attributable to aerosols?”

It is conjectured that this is so. It is not proven, but assuming that it is a fact, what trend is it actually masking?

This is the long-term trend up to 1940, when it is hypothesised that aerosols started to become a significant masking factor.

http://woodfortrees.org/plot/hadcrut3gl/from:1880/to:1940/trend/plot/hadcrut3gl/from:1880/to:1940

Now if you extrapolate this long-standing, underlying but masked natural trend, visible from the Earth’s recovery from the LIA, to the present date, it explains most of the observed warming. It leaves only a fairly small amount of warming attributable to CO2 emissions and therefore indicates a low sensitivity to increased atmospheric CO2.

This seems an entirely reasonable conjecture. If this is not so, then one has to assume that this long-standing natural trend came to a complete halt at precisely the same moment that aerosols became a factor and that man-made CO2 started to drive the Earth’s long-term climate.

Unless one can produce an accurate model of the Earth’s climate, and I admit I cannot, how do you prove this with any degree of confidence? (I take comfort in the fact that no one else can either.) Even if I understood completely, and could hypothesise the effect of, the huge number of factors, correlations and feedback mechanisms that drive the climate, I would not have accurate measurements over any significant timescale to prove this. Technological man has not been around long enough to measure these things. I would guess that we would have to be present and measuring over a couple of glacial and inter-glacial periods to have great confidence in any models.

Of course we are talking hundreds of thousands of years for this to happen, a small period indeed when we are talking about the Earth’s climate, but daunting if you are looking for a rush to judgement.

So do I think the Earth is on a cooling trend? No, I think most indicators suggest a moderate medium-term warming trend that started well before any significant man-made CO2 emissions and continues to this date.

Alan

Not all people have easy access to a university library, or even a library of any description. Even when I lived in a major city, it was not easy to get to the university library, but now my nearest physics library is an 8-hour drive away. The closest public library is a little over 3 hours away, in a different jurisdiction, so I am unable to borrow books or use interlibrary loan.

@ Tamino

What are you talking about?? Confidence intervals are estimate intervals of a true endpoint, which could be human population parameters, distance to the moon or temperature trends. You don’t apply confidence intervals on the true endpoint but on proxies or measures to estimate the true endpoint. A -0.1 temp trend from 2001 to 2008 is a -0.1 temp trend from 2001 to 2008, period. There are NO confidence intervals. It is the true final endpoint, if you define it that way. You may argue that a short-term trend from 2001-2008 is not a proper TRUE endpoint. Fine. You may then apply statistics (but which ones??? good luck) to determine the confidence intervals to estimate the TRUE endpoint. But tell us: what is the TRUE endpoint? Temperature trend from 1980 to 2030? From 2000 to 2099? Up to you to cherry-pick, but PLEASE DO NOT apply confidence intervals on the true endpoint.

Re: #37 (Tamino)

What would you say is the minimal period of time that one should use to ensure that it contains a “signal” (and not just noise)? What is the formal statistical method that one would use to ensure that the time frame is long enough?

Thanks.
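
For readers wondering what such a check might look like in practice, here is a minimal sketch, not anyone's published method. It fits an ordinary least-squares trend and widens the uncertainty using a lag-1 autocorrelation adjustment to the effective sample size. The series is synthetic, and the function names (`trend_with_ci`, `synthetic_series`) and the parameter values (0.02 per year trend, AR(1) coefficient of 0.5, noise level 0.1) are assumptions chosen for illustration only, not real temperature data.

```python
import numpy as np

def trend_with_ci(y):
    """OLS trend per time step and an approximate 95% half-width.

    The standard error is inflated using an effective sample size
    based on the lag-1 autocorrelation of the residuals.
    """
    y = np.asarray(y, dtype=float)
    n = len(y)
    t = np.arange(n, dtype=float)
    slope, intercept = np.polyfit(t, y, 1)
    resid = y - (slope * t + intercept)
    # Lag-1 autocorrelation of the residuals, clipped at zero.
    r1 = float(np.corrcoef(resid[:-1], resid[1:])[0, 1])
    r1 = max(r1, 0.0)
    n_eff = n * (1.0 - r1) / (1.0 + r1)
    dof = max(n_eff - 2.0, 1.0)
    s2 = np.sum(resid ** 2) / dof
    se = np.sqrt(s2 / np.sum((t - t.mean()) ** 2))
    # Normal approximation; a t-distribution would be wider for short series.
    return float(slope), float(1.96 * se)

def synthetic_series(n_years, trend=0.02, noise_sd=0.1, phi=0.5, seed=0):
    """Linear trend plus AR(1) noise. Purely illustrative, not real data."""
    rng = np.random.default_rng(seed)
    eps = rng.normal(0.0, noise_sd, n_years)
    noise = np.zeros(n_years)
    noise[0] = eps[0]
    for i in range(1, n_years):
        noise[i] = phi * noise[i - 1] + eps[i]
    return trend * np.arange(n_years) + noise

if __name__ == "__main__":
    for n_years in (8, 15, 30):
        slope, half_width = trend_with_ci(synthetic_series(n_years))
        print(f"{n_years:2d} years: trend = {slope:+.3f} +/- {half_width:.3f} per year")
```

Under these assumptions, one would call a period long enough when the half-width is clearly smaller than the trend being tested for. The answer depends on how noisy and how autocorrelated the particular series is, so there is no single number of years that applies everywhere.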

To Figen Mekik,

My apologies for not understanding your meaning. It was just the part about “While that may have been appropriate in this case in hindsight” that worried me. I thought that the statement implied that you approved of elimination on the basis of the authorship.

I apologise for getting it wrong in this case.

Regards

Richard, no worries at all.

The different Raobcore versions giving different results is quite confusing for me. From what I gather, Raobcore 1.4 should be considered the best, most accurate version; if so, why show the older ones?

I suggest a flaw in the analysis, which is the apparent segmentation of the upper air profile. In the Arctic, the real action is happening between 1000 and 650 mb. Segmenting the upper air into the 850, 700 and 500 mb levels reduces resolution and misses some really important air volumes at higher pressures, where anything can happen between 1000 and 850 mb, 850 and 750 mb, etc. The Raobcore profile looks a little unrealistic, and it is likely that the models are more correct for the lower troposphere than Raobcore.

However, it’s very curious: the oblate sun refraction method agrees with Raobcore calculated trends at the same location, which show a warmer lower troposphere in virtually every year, but not with the actual radiosonde measurements, which vary a lot. That is strange. This leads me to conclude that Raobcore resolution needs to be increased. But this depends on whether my interpretation of Raobcore is right, namely that it is the most accurate, corrected data available.

As a lurker, it seems to me that Alan Millar makes a good point. Most models seem to have a very large margin of error, so that almost any recent temperature result can be said to fall within the M of E and validate the model. It is therefore difficult to ‘disprove’ the theory. But the basing of the AGW theory upon a short warming trend 1995-2000 seems to be a stretch if you can (I think reasonably) argue that the facts can also support a contrary view. It comes down to “We have a theory of what causes the warming and you don’t, so we’re right and you’re wrong.” Why are “deniers” castigated for saying “We don’t know what causes the warming” when the AGW theory cannot explain what initiates the warming that CO2 exacerbates?