Remember the forecast of a temporary global cooling which made headlines around the world in 2008? We didn’t think it was reliable and offered a bet. The forecast period is now over: we were right, the forecast was not skillful.

Back around 2007/8, two high-profile papers claimed to produce, for the first time, skilful predictions of decadal climate change, based on new techniques of ocean state initialization in climate models. Both papers made forecasts of the future evolution of global mean and regional temperatures. The first paper, Smith et al. (2007), predicted “that internal variability will partially offset the anthropogenic global warming signal for the next few years. However, climate will continue to warm, with at least half of the years after 2009 predicted to exceed the warmest year currently on record.” The second, Keenlyside et al. (2008), forecast in contrast that “global surface temperature may not increase over the next decade, as natural climate variations in the North Atlantic and tropical Pacific temporarily offset the projected anthropogenic warming.”

This month marks the end of the forecast period for Keenlyside et al. and so their forecasts can now be cleanly compared to what actually happened. This is particularly interesting to RealClimate, since we offered a bet to the authors on whether the results would be accurate based on our assessment of their methodology. They ignored our offer, but now that the time period of the bet has passed, it’s worth checking how it would have gone.

Keenlyside and colleagues specifically forecast temperatures for the overlapping decadal periods of Nov 2000-Oct 2010 and Nov 2005-Oct 2015. At the end of the first period, we checked in on the forecast and noted that the predicted global cooling had not occurred. We can now update this for the second period as well.

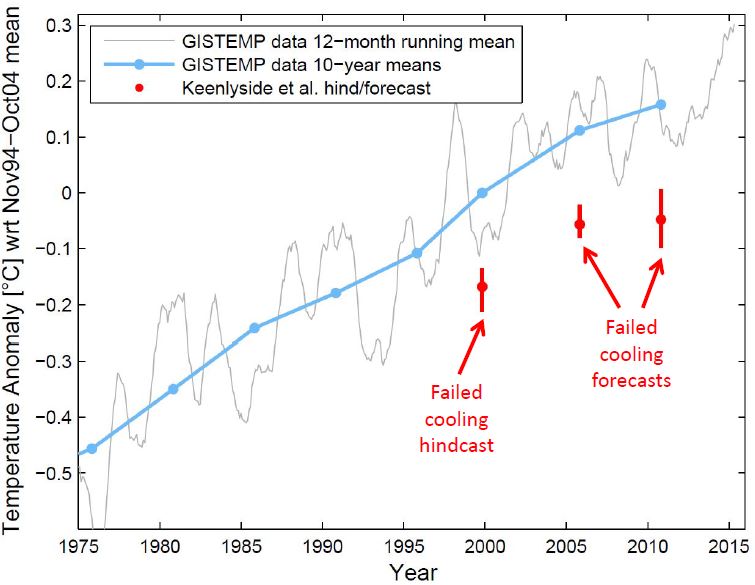

Blue dots and curve show global temperature data (NASA GISTEMP), red dots with confidence intervals the model forecasts and hindcast, for the same 10-year intervals chosen by Keenlyside et al in their paper. (The observed 12-month running average is also shown.) Temperature anomalies are shown relative to the period November 1994 – October 2004 as in the Keenlyside paper. The forecasts for a cooling (relative to the previous 10-year interval) were clearly wrong: decadal average temperatures have kept increasing, despite the ever-present shorter-term variability shown in the grey curve.

It is clear that prediction of global cooling or even stasis was way off the mark, with global warming continuing and observations running more than 0.15ºC warmer than the Keenlyside et al forecast.
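For readers who want to reproduce this kind of check, the comparison reduces to averaging monthly anomalies over each 10-year window and subtracting the mean of the baseline period. The following is a minimal Python sketch of that arithmetic, not the code used for the figure above. The function names are hypothetical, and the `synthetic` series is a made-up linear trend used only to exercise the functions; it is not GISTEMP data.

```python
def months_between(start, end):
    """Yield (year, month) tuples from start to end, inclusive."""
    year, month = start
    while (year, month) <= end:
        yield (year, month)
        month += 1
        if month > 12:
            year, month = year + 1, 1


def period_mean(series, start, end):
    """Average the monthly values in `series` over [start, end]."""
    values = [series[ym] for ym in months_between(start, end)]
    return sum(values) / len(values)


# Reference period named in the post: November 1994 to October 2004.
BASELINE = ((1994, 11), (2004, 10))


def decadal_anomaly(series, period, baseline=BASELINE):
    """Mean over `period` minus mean over `baseline`."""
    return period_mean(series, *period) - period_mean(series, *baseline)


# Made-up data (assumption): a steady 0.0015 degC/month rise from Nov 1994.
synthetic = {
    ym: 0.0015 * i
    for i, ym in enumerate(months_between((1994, 11), (2015, 10)))
}

first_period = ((2000, 11), (2010, 10))  # first forecast decade
print(round(decadal_anomaly(synthetic, first_period), 3))
```

With real data, `series` would map each (year, month) pair to the observed monthly anomaly. A forecast of cooling relative to the baseline decade corresponds to a negative value from `decadal_anomaly`.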

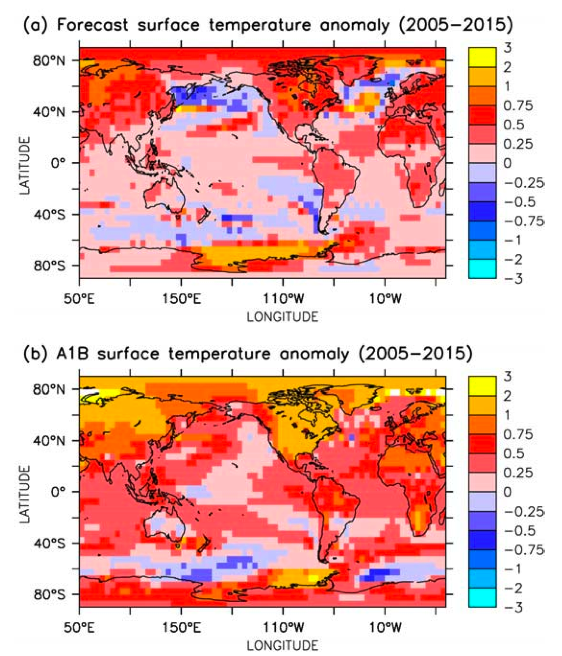

If we examine the spatial pattern, we can see, again, that the initialized prediction did much worse than the free-running, radiatively forced model ensemble (which was surprisingly accurate). This initialization was to be the major innovation of the paper, leading to specific forecasts that were supposed to be closer to observations. The opposite turned out to be the case:

Above: Predicted spatial patterns in Keenlyside et al (2008), in the initialized runs (top) and in the free running A1B scenario. Below: The spatial patterns for the prediction period derived from the GISTEMP analysis.

We stand by our original assessment that the ‘coupling shock’ in the Keenlyside methodology can produce cooling artefacts (as shown in Rahmstorf 1995) and that this was knowable at the time of publication. We also gave further reasons that should have raised red flags with the reviewers of the paper, such as the negative skill scores for the model in the critical region of the northern Atlantic and the poor performance of the hindcasts (two hindcasts for earlier periods were both unskilful, including that for the decade immediately preceding the forecasts, shown in the graph above).

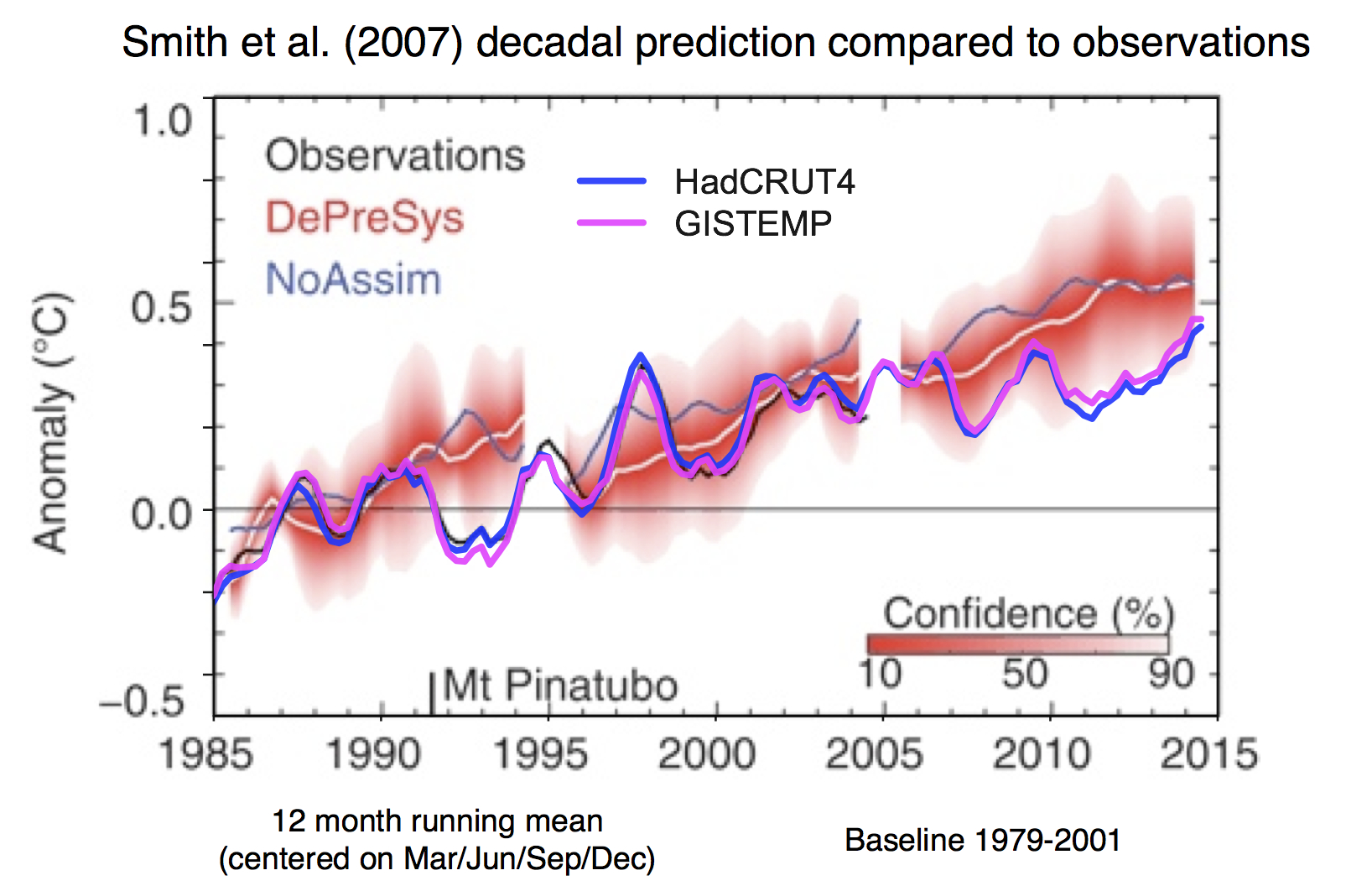

We can also assess the forecasts of the Smith et al (2007) paper (through to the end of 2014):

Figure 4 from Smith et al (2007), overlain with GISTEMP and HadCRUT4 estimates of the global mean temperature.

While the trajectory in the initialized simulations was slightly closer to the observations than their “No Assimilation” simulations, the real world trajectory was significantly outside their error bars for most of the period. Their figure also demonstrates that the impact of initialisation is only detectable for about 5 or 6 years. Some of the offset may well be related to the forcings that were applied post-2005 which may have been too strong (see Schmidt et al. (2014) for some assessment of that).

Summary

Had our bet been accepted, it is clear we would have won unambiguously.

It’s important to be clear about what these unsuccessful attempts at prediction mean. The authors of the papers involved are to be commended for trying something new and making real and falsifiable predictions. However, much more care should have been taken to self-critically examine their potential skill and emphasise their experimental nature, and the levels of certainty expressed in press releases and popular media should have been dialled way down. We offered the bet at the time as we were concerned that the failed forecasts would in the end cast a shadow on the credibility of climate science as a whole, so we felt a need to emphasise that other climate scientists disagreed with these forecasts. The techniques for decadal prediction have advanced somewhat since these earliest attempts (as described in Meehl et al., 2014), but no skilful initialized predictions have yet been verified. Claims then that this kind of decadal prediction is useful for policy-makers or scientists thus remain very premature.

In October 2010, the German newspaper Stuttgarter Zeitung asked the Keenlyside authors for their comment on the failure of the first period of the prediction. Mojib Latif responded that the continued warming did not speak against their study; one should look at this long term and not attach too much significance to a few years. And he said that if the forecast turns out to be wrong by 2015, “I will be the last one to deny it”. We showed these results to him yesterday and he responded that this was an experimental product, and that the main idea was to shake up the scientific community. In that at least, it was successful!

References

- D.M. Smith, S. Cusack, A.W. Colman, C.K. Folland, G.R. Harris, and J.M. Murphy, "Improved Surface Temperature Prediction for the Coming Decade from a Global Climate Model", Science, vol. 317, pp. 796-799, 2007. http://dx.doi.org/10.1126/science.1139540

- N.S. Keenlyside, M. Latif, J. Jungclaus, L. Kornblueh, and E. Roeckner, "Advancing decadal-scale climate prediction in the North Atlantic sector", Nature, vol. 453, pp. 84-88, 2008. http://dx.doi.org/10.1038/nature06921

- S. Rahmstorf, "Climate drift in an ocean model coupled to a simple, perfectly matched atmosphere", Climate Dynamics, vol. 11, pp. 447-458, 1995. http://dx.doi.org/10.1007/BF00207194

- G.A. Schmidt, D.T. Shindell, and K. Tsigaridis, "Reconciling warming trends", Nature Geoscience, vol. 7, pp. 158-160, 2014. http://dx.doi.org/10.1038/ngeo2105

- G.A. Meehl, L. Goddard, G. Boer, R. Burgman, G. Branstator, C. Cassou, S. Corti, G. Danabasoglu, F. Doblas-Reyes, E. Hawkins, A. Karspeck, M. Kimoto, A. Kumar, D. Matei, J. Mignot, R. Msadek, A. Navarra, H. Pohlmann, M. Rienecker, T. Rosati, E. Schneider, D. Smith, R. Sutton, H. Teng, G.J. van Oldenborgh, G. Vecchi, and S. Yeager, "Decadal Climate Prediction: An Update from the Trenches", Bulletin of the American Meteorological Society, vol. 95, pp. 243-267, 2014. http://dx.doi.org/10.1175/BAMS-D-12-00241.1

Instead of placing bets, wouldn’t the scientific thing be to try to understand what caused the rate of warming to decline and to capitalize then on that knowledge in the interest of mitigating the problem?

[Response: There have been plenty of studies on that (I think around 50) including by Grant Foster and me. Main reason is the recent prevalence of La Niña conditions. That is now over, with the ongoing major El Niño event. -stefan]

But wait, there’s more: https://tamino.wordpress.com/2015/11/17/october-a-scorcher/

But you knew that.

Are you trolling, JB per #1?

http://www.eopugetsound.org/articles/state-knowledge-climate-change-puget-sound

A UW climate-change study for Puget Sound forecasts a temperature rise of 2.9 to 5.4 degrees Fahrenheit by midcentury, resulting in wide-ranging shifts as more storms bring rain rather than snow.

The drought of 2015 created a significant change in ground level on the hillside where I live. This is a very spongy hill with artesian wells that have been capped by road and residential construction. Poor planning, the springs keep finding weak spots in the pavement to emerge. I think this year’s drought reduced water volume in the spongy hill and ground level sank an unusual amount. This hillside is moving slowly, but with the unusual drought conditions, it moved an unusual amount this year. Drought in 2016 could create even more unusual subsidence. I am talking to City public works about this process and what it means for built infrastructure, like water, sewer, natural gas lines.

Meanwhile, the UW report is talking abt 5 plus degrees of warming possible by midcentury. Buckle up your seatbelts, it’s going to get bumpy ahead.

I am pleased I guess that RC thought the decline model was incorrect and was public about it. But this model, and others like it, suggest to voters/citizens that the global warming situation is a hoax (or good for us, or a ploy to create the one-world government, etc – keep yr aluminum foil hats ready – chem trails, haarp, etc)

I think there is no doubt that if an established scientific team proposed a study that suggests a decline is in the works or that there are negative feedbacks, etc – (anything that questions a global need to change our ways) there will be funding for that study. Private foundations with a vested interest in increasing apathy or confusion in these matters are well-funded.

So, given all that, …. who funded the scientists and this study that turned out to have missed rather badly?

re: 1.

There were quite a lot of La Nina years which tend to temper global average temperatures. Yet the warming continued just at a lower rate.

“We showed these results to him yesterday and he responded that this was an experimental product, and that the main idea was to shake up the scientific community. In that at least, it was successful!”

You should have had side bets among yourselves that the authors, once proven wrong, would deny they ever believed what they had written.

I am more surprised by the tenacious persistence of global warming denialism and the growth of ideological and business-based persuasion. This is explained by the rise of the profession of agnotology: “culturally induced ignorance or doubt, particularly the publication of inaccurate or misleading scientific data” (Wikipedia).

Aren’t you meant to be using HADCRUT3?

[Response: Why? In any case it makes no difference, in decadal means all data sets are very close together, and very far from the Keenlyside forecasts.]

Dang, Mojib Latif represents what I’d call “mainstream” here in Germany, so I thought, he is quite serious and honest in his statements in the mainstream media (as far as that goes for mainstream media), at least not a “luke-warmer”. Now I have to realize that he actually did expect a cooling period “to shake up the scientific community”?! And the deniers from EIKE and the newspapers like “Stuttgarter Zeitung” etc. catched that ball, hahaha^^ Nice photo of snow there in the “Stuttgarter Zeitung”-article from 2010. We will not see much snow here in Germany this winter I am afraid, just like during the last two winters, it is much too warm and dry:

http://www.bernd-hussing.de/TT17.jpg

https://files.ufz.de/~drought/smi_aktuell.png

Climate science and the media is a real nasty business. Mainstream media always spreads inconsistent messages and doubt. The mainstream media does not like climate change. I don’t like the mainstream media.

“… and he responded that this was an experimental product, and that the main idea was to shake up the scientific community…”

Well then, for more than 30 years now my clock says loud and clearly:

Tick tick tick tick tick…. good luck for United Nations Climate Change Conference in Paris! We will all be shook up soon enough…

I document nearly 200 successful climatological forecasts now at my site at http://www.abeqas.com.

A majority of the forecasts were 90% accurate. No initializing and all forecasts are documented, not just the best ones.

What would you like to wager?

You may have won the bet, but we’ve lost the game.

Stefan, thank you for your response at 1. The consensus is that La Niña conditions brought about the slowdown. These conditions stacked warm water in the western Pacific, driving the thermocline down to a depth of about 300 meters. Once the trade winds subsided in 2013, however, this stacked warm water sloshed back to the east and to the surface, with the result that 2014 and 2015 have successively been the warmest years ever recorded.

Very little of the stacked heat was mixed and diluted in the ocean abyss below the thermocline and as a result when it came back to the surface it was able to drive temperatures off the coast of North America 3 degrees higher than normal.

The oceans are the repository of 93 percent of the heat of global warming and since warm water rises, most of the heat sits near the surface while the temperature at depth approaches the freezing point of water. This differential makes the oceans, particularly tropical waters, the largest battery on the planet.

When heat flows from a warm source to a cold sink through a heat engine, just as when electrons flow from the negative to the positive terminal of a battery, energy is produced.

It is estimated the oceans have the capacity to produce 14 terawatts of primary energy through ocean thermal energy conversion or OTEC, or about the same amount of energy as is currently derived annually from fossil fuels.

NOAA estimates the ocean battery is being charged at a rate of about 330 terawatts each year and since there is no draw down of this charge we are experiencing the escalating consequences of global warming.

Due to the low thermodynamic efficiency of a heat engine operating within the temperature range offered by the oceans, OTEC requires the movement of about 20 times more heat to the deep than the 14 terawatts of power the oceans are capable of producing.

In other words, virtually all of the heat the ocean battery is currently accumulating is either converted to work or moved to deep water with this process.
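The arithmetic behind that claim can be laid out explicitly. The sketch below uses only the figures quoted in this comment (14 terawatts of power, roughly 20 times as much heat moved to depth, 330 terawatts of accumulation) and takes them at face value. It checks the bookkeeping rather than the physics, and the variable names are illustrative.

```python
# Figures as quoted in the comment (not independently verified).
otec_power_tw = 14.0          # power the oceans could produce via OTEC
heat_to_power_ratio = 20.0    # heat moved to depth per unit of power
accumulation_tw = 330.0       # quoted rate of ocean heat accumulation

# Heat relocated to deep water alongside the power produced.
heat_moved_tw = heat_to_power_ratio * otec_power_tw

# Total surface heat accounted for: converted to work plus moved to depth.
total_tw = otec_power_tw + heat_moved_tw

# Share of the quoted accumulation this would cover.
fraction = total_tw / accumulation_tw

# Conversion efficiency implied by the 20:1 ratio.
implied_efficiency = otec_power_tw / total_tw

print(f"heat moved: {heat_moved_tw:.0f} TW, total: {total_tw:.0f} TW")
print(f"share of accumulation: {fraction:.0%}")
print(f"implied efficiency: {implied_efficiency:.1%}")
```

On these numbers about 294 of the 330 terawatts would be accounted for, so "most" is a closer description than "all", and the implied conversion efficiency is a little under 5%.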

In support of this premise, in the late 1970s a team from the Applied Physics Laboratory of Johns Hopkins University estimated that the surface water temperature of the oceans, and therefore the lower atmosphere, would be reduced by 1 degree Celsius each decade through the production of 5 terawatts of OTEC power.

The relocation of this heat to the deep would be benign due to the large thermal capacity of water. It is estimated that in spite of all the heat they are absorbing, at depths from 500 to 2000 meters the oceans are warming by about .002 degrees Celsius every year, and in the top 500 meters they are gaining .005 degrees.

The benefit of this ocean heat absorption is noted by Levitus, who pointed out that if all of the heat the oceans absorbed to a depth of 2000 meters from 1955–2008, which raised their temperature by an average of .09 degrees Celsius, was instantly transferred to the lower 10 kilometers of the atmosphere that layer would be warmed 36 degrees Celsius.

My point is we have not tried to apply the knowledge gained in the 50 studies you refer to, so what is the point of them?

If we assume (or are confident) that the reduced warming is for the main part due to La Nina conditions – and is probably supported by a decreasing solar cycle amplitude as well as some increase in volcanic aerosols – it is not astonishing that climate models even with initialized runs do not accurately forecast such a decadal evolution. All the mentioned influences are not included in the models: climate model runs normally are based on constant solar and volcanic forcing on the one hand, and are not able to forecast the ENSO evolution over more than about half a year.

Unfortunately, this might lead us to the insight, that for decadal predictions the missing knowledge of the evolution of the solar cycle, volcanic forcing and ENSO will remain an important problem and make decadal projections of global temperature nearly useless – unless climate models will be able to forecast ENSO for a couple of years.

Nemesis 9 — the past participle of “catch” in English is irregular: “caught” instead of “catched.”

Jim Baird @12:

I see two troubles with OTEC. First is that it has not proven to scale into the terawatt range. The demonstration plants are all in the 100 MW range; their efficiency is in the 3% range; and they tend to get shut down after a year or two. The history of OTEC reminds me of fusion power, which has been 20 years away for my entire life.

For me the more serious problem with OTEC is what will it do to the oceans if it is deployed widely. Dumping tens of terawatts into the deep ocean will change currents, likely generate new upwellings of deep water, and cause changes that are unlikely to be foreseen without modeling what happens with large hotspots in the deep ocean.

Jim Baird pushes OTEC because of personal ties to the technology, according to his own posts on the subject here and elsewhere.

@Tim, thank you so much for articulating the concern about rapidly transporting surface BTUs into the deep. Anthropocene Age to be sure.

both papers made an attempt to better the estimations of climate variability but if you are interested in the real climate you should follow my research. Since 2005 I have documented and described the variability while the last two years I have reached way beyond to detailed descriptions of the mechanisms involved.

TM 15. Dumping tens of terawatts into the deep ocean will change currents, likely generate new upwellings of deep water

Hansen et al. recently pointed to increased sea ice melt acting as an insulator to warmer deep waters shutting down ocean circulation, leading in turn to further ice melting and rapid sea level rise – http://phys.org/news/2015-09-eyes-oceansjames-hansen-sea.html#jCp

So moving tropical heat to the deep is more likely to preserve thermohaline circulation than halt it. As well as sapping the energy of tropical storms, OTEC also would decrease sea level rise 3 ways. First it short-circuits the movement of heat towards the poles and the effect Hansen suggests. The coefficient of thermal expansion of sea water is also greatly reduced at 1000 meters as compared to at the tropical surface and finally the production of hydrogen as an energy/water carrier to get offshore power to market is a transference of ocean volume to the land.

As to upwelling, Munk in his paper Abyssal Recipes calculates that the return rate of heat moved to the abyss is about 4 meters per year, which would hardly be a problem and a certain amount of upwelling would be beneficial to phytoplankton considering increasing thermal stratification is cutting them off from the nutrient rich waters they need to survive.

Better I am sure that the atmosphere is deprived of half of its source of oxygen?

Mark E, your concern about anyone making a profit from addressing the problem, let alone years of their own effort, is laughable. Better I am sure that the fossil fuel industry continue to make money hand over fist?

#18–Jim, I don’t claim any great understanding of these things, but OTEC would be deployed largely in the tropics (because that’s where the needed temperature differential is), while Hansen et al seems to be talking about effects that are happening in–actually, driven by effects in–the polar regions. So your comment that:

…doesn’t make a lot of sense to me.

Just saying’–I’m remaining agnostic on the virtues or vices of OTEC.

Kevin 18, “The net heating imbalance between the equator and poles drives an atmospheric and oceanic circulation that climate scientists describe as a “heat engine.” (In our everyday experience, we associate the word engine with automobiles, but to a scientist, an engine is any device or system that converts energy into motion.) The climate is an engine that uses heat energy to keep the atmosphere and ocean moving. Evaporation, convection, rainfall, winds, and ocean currents are all part of the Earth’s heat engine.” NASA http://earthobservatory.nasa.gov/Features/EnergyBalance/page3.php

Essentially this is the second law of thermodynamics in action – heat flowing from the warm equator where it is accumulating to the cold poles where it is radiated to space.

What Hansen says is the fresh water melt acts as a blanket that prevents the radiation to space and thus the heat accumulates, again mostly at the equator which warms all the more compounding the problem.

What I claim is the abyss is an even greater cold sink than the poles and that heat flowing there – a heat pipe is required because warm water is buoyant – through other kinds of heat engines can in part be converted to power and would no longer be available to move to the poles where it may be producing the effect Hansen claims.

Some, like Tim at 14, suggest this could also shut down the thermohaline circulation because it is tropical heat that drives the process. As NOAA points out, however, the oceans are accumulating about 330 terawatts of excess energy every year. We would be lucky to come close to converting and sequestering that much heat in the depths after decades of building out the OTEC fleet, and since the thermohaline has been operating for eons it would continue to work if we took out only the heat accumulating due to climate change. As it turns out this would produce about exactly the amount of energy we currently get from fossil fuels.

I also put this option up against nuclear or fission which increases the heat load on the oceans and all that goes with that.

I’m not a climate scientist, but don’t temperature rises follow rising CO2 levels pretty loosely? I thought there was a century level connection, not an annual one, so a decade or two here or there doesn’t mean it’s stopped.

OTOH, with all the efforts to slow CO2 levels with more renewables, and all the countries that say they have reduced CO2 emissions since Kyoto, with EU cap and trade, with the RGGI cap and trade in Northeast US, China’s incredible GWs of renewables added, even the slowdown in economic activity from the 2008 recession, etc, I’m actually more surprised at the robotically even trajectory of CO2 rise every year.

That’s the one I’d have thought (hoped) was leveling off just a bit globally by now.

But no impact yet???

To the anonymous responder to my #8:-

Because that’s what you said!

Here is the bet, and the whole thrust of your post

The bet we propose is very simple and concerns the specific global prediction in their Nature article. If the average temperature 2000-2010 (their first forecast) really turns out to be lower or equal to the average temperature 1994-2004 (*), we will pay them € 2500. If it turns out to be warmer, they pay us € 2500. This bet will be decided by the end of 2010. We offer the same for their second forecast: If 2005-2015 (*) turns out to be colder or equal compared to 1994-2004 (*), we will pay them € 2500 – if it turns out to be warmer, they pay us the same. The basis for the temperature comparison will be the HadCRUT3 global mean surface temperature data set used by the authors in their paper.

[Response: You are right: had they accepted the bet, that would have been the decisive data set, and we would have won the bet by that as well. For a comparison with reality, though, I’d take the currently best data, and that definitely is not HadCRUT3 any more (and neither HadCRUT4, given their treatment of the large data gap in the Arctic, which we have extensively discussed on this blog). -stefan]

@Barton Paul Levenson, #14

“ Nemesis 9 — the past participle of “catch” in English is irregular: “caught” instead of “catched.” ”

Hey, thx a lot, nice catch^^ I’m still learning, so I stumble over those irregular verbs occasionally :-)

Herzliche Grüsse aus Deutschland!

and adding to my #22, how can it make no difference?

http://www.woodfortrees.org/plot/hadcrut3gl/from:1994/plot/gistemp/from:1994/plot/hadcrut3gl/from:1994/trend/plot/gistemp/from:1994/trend

[Response: Because they predicted a cooling and all observational data sets show further warming – just somewhat different amounts. -stefan]

Jim, #20–

I’m OK with the heat engine terminology, and am well aware how insolation drives large scale circulation patterns, thank you.

However, I think you are wrong about this:

The fresh water is in circumpolar regions, and so far at least, we don’t see ocean circulation shutting down. Moreover, if we did, and if OTEC were indeed deployed at large scale in the tropics, any large scale effect on SSTs would presumably be in the direction of decreased thermal gradients. That’s the wrong sign to be helping circulation…

Kevin, 24

“Freshwater injected onto the North Atlantic or in both hemispheres shuts down the AMOC” from “Ice melt, sea level rise and superstorms: evidence from paleoclimate data, climate modeling, and modern observations that 2C global warming is highly dangerous” – http://www.atmos-chem-phys-discuss.net/15/20059/2015/acpd-15-20059-2015.pdf page 20081

“Tropical and Southern Hemisphere warming is the well-known effect of reduced heat transport to northern latitudes in response to the AMOC shutdown (Rahmstorf, 1996; Barreiro et al., 2008).” page 20086 same paper.

I could be wrong.

Perhaps Stefan could clarify?

#22 & 23–“…how can it make no difference?”

Well, this:

“…lower or equal to”

…makes it kinda Boolean.

By HadCRUT3, Keenlyside et al. lose; by GISTEMP they ‘lose worse’–but there’s no penalty for losing worse.
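The either/or structure of the proposed bet can be written down in a few lines. This is only an illustrative sketch: the function name and the sample decadal means are hypothetical, and the only things taken from the bet as quoted above are the stake and the rule that the forecasters win if the forecast decade is colder than or equal to the baseline decade.

```python
STAKE_EUR = 2500  # amount named in the quoted bet


def bet_winner(forecast_decade_mean, baseline_decade_mean):
    """Apply the quoted rule: colder or equal means the forecasters win."""
    if forecast_decade_mean <= baseline_decade_mean:
        return "forecasters"
    return "challengers"


# Hypothetical decadal means in degC: the size of the margin is irrelevant.
small_margin = bet_winner(0.05, 0.0)
large_margin = bet_winner(0.25, 0.0)
print(small_margin, large_margin, STAKE_EUR)
```

Both calls return the same winner and the same stake changes hands, which is the sense in which the choice between data sets that all show warming does not affect the outcome.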

Susan, #21

If you are interested, AR5, IPCC Working Group 3, Technical Summary will give you the breakdown in emissions.

But, in short, ‘rich’ countries have reduced their emissions a little bit, ‘very poor’ countries have not seen much of a change, but ‘previously poor and now rapidly growing, economically’ countries have increased emissions massively, due to huge increases in demand for energy.

This IS NOT to say that the ‘rich’ countries shouldn’t be taking steps to reduce emissions. Rather that these steps have been weak AND that we need serious effort to stop the growth in emissions in, in particular, China and India, and then decarbonise these (and other) countries.

This is an enormous technical, and social, problem. Technical in that there are technical ways to reduce emissions massively (better vehicles, renewables, nuclear, insulation, heat-pumps etc.) but challenges remain, and (short-term) costs are higher than not giving a damn, and social in that it’s not just a technology fix. People will have to change attitudes to things like saving energy, eating meat, and what things we tax or don’t tax.

IMHO 450ppm CO2 will be significantly overshot (there’s no indication global emissions will peak any time soon) but there’s still masses to play for. The difference between BAU and actually doing what is feasible, given political will, is huge.

I’d take 2.7 degrees now, if you offered it.

Science trumps Nature. ;>

#26–Jim, those quotes seem to me to support what I’ve been saying–ie., the cause of the potential circulation changes is in the high latitudes. You propose to address that with a remedy applied in the tropics. It’s not clear (to me at least) that the causality runs both ways.

Am I missing something?

Just to play pessimist:

Is the whole exercise futile (not just premature)? The internal variability is just too important, unless the forcing is very large on this high-frequency timescale (volcanoes). Especially if you’re interested in the spatial structure, e.g., DJF at higher NH latitudes. There is just low signal-to-noise, even if you know the phase of some “mode” that exhibits a multi-decadal timescale at the forecast time. Even for longer timeslices (e.g., Medieval vs. LIA spatial structure) you get big differences between multiple realizations of natural variability in experiments with smaller (solar, land-use) forcing.

I get the point of the initialization, but is it like increasing the satellite/radiosonde data used in assimilation by a factor of 10 and then expecting meteorologists to make consistent 2-3 week forecasts? Would increasing the ocean observing system even matter?

Stefan,

The post is about who would have won the bet. Surely the correct dataset should have been used to prove the point, not one that is 0.2K hotter. This is just sloppy.

[Response: Don’t be silly. The predictions were for the actual global mean temperature anomaly. Updates to the observational data sets (by adding more stations, dealing with non-climatic complications) bring the obs closer to the actual global mean and so become more relevant to the prediction, not less. Had the prediction been for a specific masked product, or a forward model of a particular observation, your point would have had more validity, but they weren’t so it doesn’t. It’s a moot point, but using just HadCRUT3 or HadCRUT4 makes no difference to the outcome. – gavin]

Kevin 30, heat flows from hot to cold. The cycle starts in the tropics from where the heat either flows towards the poles or can be induced to flow to the deep. IMHO the latter is the most productive and least damaging option?

(Keep getting “no service” message – possibly duplicate posts?)

> Griesch … Hansen … blanket … heat flows from hot to cold

I think you’re confused.

The thermohaline circulation is driven by changes in salinity.

Adding fresh water near the poles lets them get colder, not warmer.

That’s because the fresh water floats, instead of sinking.

Sea water density depends on temperature and salinity, hence the name thermo-haline.

“the Gulf Stream transports approximately 3.2 peta Watts of heat to the North Atlantic”

It is heat originating near the equator that is ultimately creating the polar fresh water and the Griesh … Hansen blanket.

Short circuit that tropical heat to the abyss and it is no longer available to move pole-ward; at least not the 330 terawatts that is being added by climate change, which is converted and sequestered by 14 terawatts of heat-pipe OTEC.

i.e. it would be a return to the status quo.

The freshening, or decreasing saltiness, at both poles could ultimately block the oceans’ overturning circulation, but OTEC would mitigate the problem.

OK Gavin (response to my #32), humour me and extend HadCrut3 onto their Figure 4 from 1998 and see how that fits their prediction

A recent study by Patrick Taylor, a scientist at NASA’s Langley Research Center in Hampton, Va., suggests, “the Earth’s poles are warming faster than the rest of the planet because of energy in the atmosphere that is carried to the poles through large weather systems.”

Shut off the thermohaline, the tropics get hotter, the weather systems get larger, the poles warm that much faster.

Still no word regarding my own challenge to Dr. Schmidt on climate forecasting wager. I have also sent a survey to Dr. Schmidt and others, regarding the state of climate forecast accuracy transparency for my next biannual publication on the topic. My most recent survey publication effort may have played a positive role in a representation by a major government climate forecasting program (via a response to my survey) that they will begin to disclose the accuracy performance metric history for their multiple decades of forecasting the climate in the United States. More at my own site at: http://www.abeqas.com/the-most-transparently-accurate-climate-forecasts-in-the-world/

Hi Michael W., I’ve taken a gander at your web site. It’s not entirely obvious what these 200 forecasts you mention are forecasting. It looks like it might have something to do with flows in different rivers or streams in the American Southwest and West (and maybe other regions?). But then, it seems strange to compare those to GCM results…

Also, on one page (“Protected: MW&A Forecast Approach Succeeds From New Mexico to Oregon”) you talk about an 8-month forecasting exercise for 10-year trailing averages. Maybe I’m misinterpreting what you’re saying, but over an 8-month period, over 90% of the 10-year trailing average has already been laid down before the period starts. I certainly hope you’d have amazing accuracy in that case! Or am I missing something?
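The arithmetic behind that point can be sketched quickly. This assumes monthly data, a 120-month (10-year) trailing window and an 8-month lead; the function names and the example flow values are hypothetical, chosen only for illustration.

```python
def known_fraction(window_months: int, lead_months: int) -> float:
    """Fraction of a trailing-average window already observed at forecast time."""
    if not 0 <= lead_months <= window_months:
        raise ValueError("lead_months must be between 0 and window_months")
    return (window_months - lead_months) / window_months


def trailing_mean_bounds(known_mean: float, window_months: int,
                         lead_months: int, low: float, high: float):
    """Range of the final trailing mean if every unobserved month lies in [low, high]."""
    w = known_fraction(window_months, lead_months)
    return (w * known_mean + (1.0 - w) * low,
            w * known_mean + (1.0 - w) * high)


if __name__ == "__main__":
    frac = known_fraction(120, 8)
    print(f"already observed: {frac:.1%}")  # 112 of 120 months
    # Hypothetical example: observed mean of 100 units, with the remaining
    # months allowed to range anywhere from 0 to 200 units.
    print(trailing_mean_bounds(100.0, 120, 8, 0.0, 200.0))
```

Even if the eight unobserved months swing between zero and double the observed mean, the final 10-year trailing average in this example moves by less than 7% either way, so a "persistence" forecast would already look accurate.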

Do you think you would have won had you used the RSS data set? Or for that matter the recently revised UAH ver. 6.0? Looks to me like you just might have but it would have been a lot closer.

[Response: The only predictions published were for the surface temperatures, not for atmospheric layers or satellite products. -gavin]

You’ve failed to understand one of the basics here.

Know which one?

I’d bet not.

Martin, yes, those are stream gages. I forecast not only the trend but also the magnitude of the stream flow values. A ten-year trailing average does not guarantee high accuracy, as a number of my forecasts demonstrate. Rather, the strong correlations between the stream flows and ocean drivers help achieve this accuracy record. In some locations, such as northern New Mexico, the correlations between stream flow records and the PDO over a 100+ year period are well over 80%.

These are the ocean drivers that cannot be accurately simulated by any of the CMIP5 models. The CMIP5 models (which also employ averaging) must also be reinitialized every few years with the actual ocean driver observations. Otherwise the simulated temperatures will rise like helium balloons to absurd values. That feature of those models has never been candidly disclosed.

In any case, I’m now forecasting the PDO on a 6 year trailing average basis, by employing an observed correlation to another parameter, which itself is strongly correlated to contemporary measurements of Total Solar Irradiance.

Finally, we are implementing 5-year average forecasts soon, as well as targeting larger lead times where we can. The success so far using 10-year averages provides an anchor and a starting point for a very brave new world in climate forecasting. If only other climate scientists would start from such an anchor, perhaps the alarming and unfounded claims of climate change based on emissions would never have materialized.

http://www.science.gov/topicpages/r/reliability+ensemble+averaging.html

Hank, I don’t play “find me a rock”. Go find yourself a rock.

The CMIP exercises and related ensemble products are propped up by RESETTING the model results with actual observations every now and then. This serves to disguise the poor model skill. This shell game has never been candidly disclosed, although the following reference comes close [1]. No scientist involved in the CMIP exercises has come forward to dispute my claim, not even Gavin Schmidt. It’s now been well over a month since I first brought this to the attention of the readers of the post.

[Response: Sorry but I’m unable to refute every piece of nonsense in the comments. However, in this case it is clear: what you describe is totally incorrect. The historical runs are all free-running coupled models in which only the forcing fields change over time – they do not ingest any meteorological information. – gavin]

[1] Fricker, T.E., C.A.T. Ferro, and D.B. Stephenson, 2013: Three recommendations for evaluating climate predictions. Meteorological Applications, 20, 246–255, doi:10.1002/met.1409

P.S. When I first posted my original comment, the site went down. Could be a spurious correlation (and see reference [1] for more on spurious correlations). The next time I posted, the site flickered out again and was briefly replaced by some spam site.

Keep digging. The forcing fields are what your models are supposed to have skill in simulating. Here’s a thought experiment: run a simulation from 1960 to now without adjusting any ‘forcing fields’.

[Response: You have it completely backwards. How, for instance, is a climate model supposed to predict ahead of time what the solar dynamo is going to do? Or which volcano is going to erupt? Or what the economic choices made in China are going to be? These are inputs into climate models, and their job is to predict the climate response to the forcings, not what the forcings are in the first place. – gavin]
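The input/response distinction in that reply can be illustrated with a toy zero-dimensional energy balance model. This is a deliberately simple sketch, not how a coupled climate model works; the function name `run_ebm`, the heat capacity and the feedback parameter are all assumptions chosen for illustration.

```python
def run_ebm(forcing, heat_capacity=8.0, feedback=1.2, dt=1.0):
    """Integrate C dT/dt = F(t) - lambda * T with forward Euler steps.

    forcing:       prescribed radiative forcing per step, W/m^2 (the INPUT)
    heat_capacity: effective heat capacity C, W yr m^-2 K^-1 (assumed value)
    feedback:      feedback parameter lambda, W m^-2 K^-1 (assumed value)
    Returns the temperature anomaly in K after each step (the RESPONSE).
    """
    temps = []
    temp = 0.0
    for f in forcing:
        temp += dt * (f - feedback * temp) / heat_capacity
        temps.append(temp)
    return temps


if __name__ == "__main__":
    # The forcing history is supplied from outside; the model does not
    # predict it. Here: 50 years of zero forcing, then a constant 3.7 W/m^2.
    forcing = [0.0] * 50 + [3.7] * 450
    temps = run_ebm(forcing)
    print(f"final anomaly: {temps[-1]:.3f} K")
    print(f"equilibrium F/lambda: {3.7 / 1.2:.3f} K")
```

The model never sees any observed temperatures. It is handed a forcing series and computes a response, which under constant forcing relaxes toward F divided by the feedback parameter.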