The discussion of climate change in public (on blogs, in op-eds etc.) is often completely at odds with the discussion in the scientific community (in papers, at conferences, workshops etc.). In public discussions there is often an emphasis on seemingly simple questions (e.g. the percentage of the current greenhouse effect associated with water vapour) that, at first sight, appear to have profound importance to the question of human effects on climate change. In the scientific community however, these ‘simple’ questions often turn out to be anything but, and have subtleties that rarely get publicly addressed.

One such question is the percentage of 20th Century warming that can be attributed to CO2 increases. This appears straightforward, but it might be rather surprising to readers that this has neither an obvious definition, nor a precise answer. I will therefore try to explain why.

First of all, ‘attribution’ in the technical sense requires a certain statistical power (i.e. you should be able to rule out alternate explanations with some level of confidence). This is a stricter measure than the word in common parlance would imply (another example of where popular usage and scientific usage of a term might cause confusion). Secondly, attribution (in the technical sense) of an observed climate change is inherently a modelling exercise. Some physical model (of whatever complexity) must be used to link cause and effect – simple statistical correlations between a forcing and a (noisy) response are not sufficient to distinguish between two potential forcings with similar trends. Given that modelling is a rather uncertain business, those uncertainties must be reflected in any eventual attribution. It certainly is an important question whether we can attribute current climate change to anthropogenic forcing – but this is generally done on a probabilistic basis (i.e. anthropogenic climate change has been detected with some high probability and is likely to explain a substantial part of the trends – but with some uncertainty on the exact percentage depending on the methodology used – the IPCC (2001) chapter is good on this subject).

For the case of the global mean temperature however, we have enough modelling experience to have confidence that, to first order, global mean surface temperatures at decadal and longer timescales are a reasonably linear function of the global mean radiative forcings. This result is built into simple energy balance models, but is confirmed (more or less) by more complex ocean-atmosphere coupled models and our understanding of long term paleo-climate change. With this model implicitly in mind, we can switch the original simple question regarding the attribution of the 20th C temperature response to the attribution of the 20th C forcing. That is, what is the percentage attribution of CO2 to the 20th century forcings?
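A minimal sketch of why this switch works, assuming the linear relation holds exactly and every forcing is equally effective per W/m2 (the symbols $\lambda$, $F_i$ and $\Delta T_i$ are shorthand introduced here for illustration, not notation from any of the cited papers):

$$
\Delta T \approx \lambda \sum_i F_i
\quad\Longrightarrow\quad
\frac{\Delta T_i}{\Delta T} = \frac{\lambda F_i}{\lambda \sum_j F_j} = \frac{F_i}{\sum_j F_j}
$$

The sensitivity factor $\lambda$ cancels, so under these assumptions the fractional attribution of the temperature change is the same as the fractional attribution of the forcing.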

This is a subtly different problem of course. For one, it avoids the ambiguity related to the lags of the temperature response to the forcings (a couple of decades), it assumes that all forcings are created equal and that they add in a linear manner, and removes the impact of internal variability (since that occurs mainly in the response, not the forcings). These subtleties can be addressed (and are in the formal attribution literature), but we’ll skip over that for now.

Next, how can we define the attribution when we have multiple different forcings – some with warming effects, some with cooling effects that together might cancel out? Imagine 3 forcings, A, B and C, with forcings of +1, +1 and -1 W/m2 respectively. Given the net forcing of +1, you could simplistically assign 100% of the effect to A or B. That is pretty arbitrary, and so a better procedure would be to stack up all the warming terms on one side (A+B) and assign the attribution based on the contribution to the warming terms i.e. A/(A+B). That gives an attribution to A and B of 50% each, which seems more reasonable. But even this is ambiguous in some circumstances. Imagine that B is actually a net effect of two separate sources (I’ll give an example of this later on), and so B can alternately be written as two forcings, B1 and B2, one of which is 1.5 and the other of which is -0.5. Now by our same definition as before, A is responsible for only 40% of the warming despite nothing having changed about our understanding of A nor in the totality of the net forcing (which is still +1).
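The arithmetic of this thought experiment can be sketched in a few lines of Python. The function name `warming_share` and the dictionary layout are purely illustrative choices; the numbers are the hypothetical A, B, C, B1 and B2 values from the paragraph above.

```python
def warming_share(forcings, name):
    """Share of `name` among the positive (warming) forcings only."""
    warming_total = sum(v for v in forcings.values() if v > 0)
    return forcings[name] / warming_total


# Hypothetical forcings from the example above, in W/m2.
lumped = {"A": 1.0, "B": 1.0, "C": -1.0}
# The same physical situation, with B written as two separate sources.
split = {"A": 1.0, "B1": 1.5, "B2": -0.5, "C": -1.0}

net_lumped = sum(lumped.values())
net_split = sum(split.values())
share_lumped = warming_share(lumped, "A")
share_split = warming_share(split, "A")

print(f"net forcing: {net_lumped:+.1f} vs {net_split:+.1f} W/m2")
print(f"A's share of warming terms: {share_lumped:.0%} vs {share_split:.0%}")
```

The net forcing is +1 W/m2 in both cases, yet A's share drops from 50% to 40% purely because B was relabelled.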

A real world example of this relates to methane and ozone. If you calculate the forcings (see Shindell et al, 2005) of these two gases using their current concentrations, you get about 0.5 W/m2 and 0.4 W/m2 respectively. However, methane and ozone amounts are related through atmospheric chemistry and can be thought of alternatively as being the consequences of emissions of methane and other reactive gases (in particular, NOx and CO). NOx has a net negative effect since it reduces CH4, and thus the direct impact of methane emissions can be thought of as greater (around 0.8 W/m2, with 0.2 from CO, and -0.1 from NOx). Nothing has actually changed – it is simply an accounting exercise, but the attribution to methane has increased.
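As a rough check on the accounting, using only the approximate values quoted above (the variable names are illustrative, and the real figures in Shindell et al carry uncertainties that are ignored here):

```python
# Approximate forcings quoted above, in W/m2.
abundance_based = {"CH4": 0.5, "O3": 0.4}               # by current concentrations
emissions_based = {"CH4": 0.8, "CO": 0.2, "NOx": -0.1}  # by emitted species

total_abundance = sum(abundance_based.values())
total_emissions = sum(emissions_based.values())

print(f"abundance-based total: {total_abundance:.1f} W/m2")
print(f"emissions-based total: {total_emissions:.1f} W/m2")
print(f"methane term: {abundance_based['CH4']} -> {emissions_based['CH4']} W/m2")
```

With these rounded inputs both bookkeeping schemes sum to the same combined forcing; only the split between the labelled terms differs.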

Is there any way to calculate an attribution of the warming factors robustly so that the attributions don’t depend on arbitrary redefinitions? Unfortunately no. So we are stuck with an attribution based on the total forcings that can exceed 100%, or an attribution based on warming factors that is not robust to definitional changes. This is the prime reason why this simple-minded calculation is not discussed in the literature very often. In contrast, there is a rich literature of more sophisticated attribution studies that look at patterns of response to various forcings.

Possibly more useful is a categorisation based on a separation of anthropogenic and natural (solar, volcanic) forcings. This is less susceptible to rearrangements, and so should be less arbitrary and has been preferred for more formal detection and attribution studies (for instance, Stott et al, 2000).

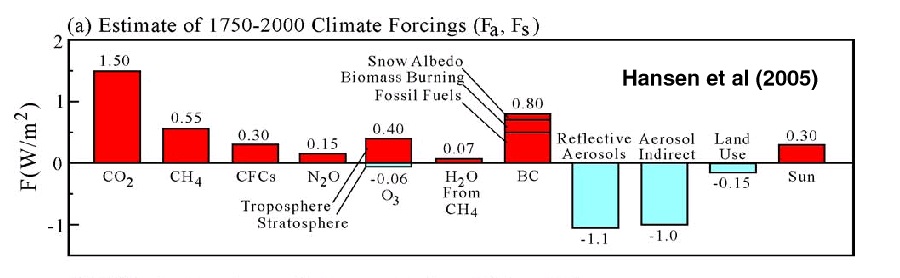

What does this all mean in practice? Estimated time series of forcings can be found on the GISS website. As estimated by Hansen et al, 2005 (see figure), the total forcing from 1750 to 2000 is about 1.7 W/m2 (it is slightly smaller for 1850 to 2000, but that difference is a minor issue). The biggest warming factors are CO2 (1.5 W/m2), CH4 (0.6 W/m2, including indirect effects), CFCs (0.3), N2O (0.15), O3 (0.3), black carbon (0.8), and solar (0.3), and the important cooling factors are sulphate and nitrate aerosols (~-2.1, including direct and indirect effects), and land use (-0.15). Each of these terms has uncertainty associated with it (a lot for aerosol effects, less for the GHGs). So CO2’s role compared to the net forcing is about 85% of the effect, but 37% compared to all warming effects. All well-mixed greenhouse gases are 64% of warming effects, and all anthropogenic forcings (everything except solar; volcanic effects have very small trends) are ~80% of the forcings (and are strongly positive). Even if solar trends were doubled, it would still only be less than half of the effect of CO2, and barely a fifth of the total greenhouse gas forcing. If we take account of the uncertainties, the CO2 attribution compared to all warming effects could vary from 30 to 40% perhaps. The headline number therefore depends very much on what you assume.
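The different headline numbers can be reproduced approximately from the rounded forcings listed above. This is only a sketch: the dictionary keys, the variable names and the choice of which gases count as "well-mixed" (CO2, CH4, CFCs, N2O) are assumptions made for illustration, and the uncertainties on each term are ignored.

```python
# Rounded 1750-2000 forcings quoted above, in W/m2.
forcings = {
    "CO2": 1.5,
    "CH4": 0.6,
    "CFCs": 0.3,
    "N2O": 0.15,
    "O3": 0.3,
    "black carbon": 0.8,
    "solar": 0.3,
    "sulphate and nitrate aerosols": -2.1,
    "land use": -0.15,
}
# Assumption for this sketch: these four count as well-mixed greenhouse gases.
well_mixed = ["CO2", "CH4", "CFCs", "N2O"]

net = sum(forcings.values())
warming = sum(v for v in forcings.values() if v > 0)

co2_vs_net = forcings["CO2"] / net
co2_vs_warming = forcings["CO2"] / warming
wmghg_vs_warming = sum(forcings[g] for g in well_mixed) / warming
anthro_vs_net = (net - forcings["solar"]) / net

print(f"net forcing: {net:.2f} W/m2, warming terms: {warming:.2f} W/m2")
print(f"CO2 / net: {co2_vs_net:.0%}")
print(f"CO2 / warming terms: {co2_vs_warming:.0%}")
print(f"well-mixed GHGs / warming terms: {wmghg_vs_warming:.0%}")
print(f"anthropogenic / net: {anthro_vs_net:.0%}")
```

With these rounded inputs the shares come out at roughly 88%, 38%, 65% and 82%, within a few points of the figures quoted in the text, which were presumably computed from unrounded values. The same CO2 forcing gives either of the first two numbers depending only on the denominator.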

Recently, Roger Pielke Sr. came up with a (rather improbably precise) value of 26.5% for the CO2 contribution. This was predicated on the enhanced methane forcing mentioned above (though he didn’t remove the ozone effect, which was inconsistent), an unjustified downgrading of the CO2 forcing (from 1.4 to 1.1 W/m2), the addition of an estimated albedo change from remote sensing (but there is no way to assess whether that was either already included (due to aerosol effects), or is a feedback rather than a forcing). A more appropriate re-calculation would give numbers more like those discussed above (i.e. around 30 to 40%).

But this is a game anyone can play. If you’re clever (and dishonest) you can take advantage of the fact that many people are unaware that there are cooling factors at all. By showing that B explains all of the net forcing, you can imply that the effect of A is zero since there is nothing apparently left to explain. Crichton has used this in his presentations to imply that because land use and solar have warming impacts (though he’s simply wrong on the land use case), CO2 just can’t have any significant effect (slide 18). Sneaky, eh?

But does the specific percentage attribution really imply much for the future? (i.e. does it matter that CO2 forced 40% or 80% of 20th Century change?). The focus of the debate on CO2 is not wholly predicated on its attribution to past forcing (since concern about CO2 emissions was raised long before human-caused climate change had been clearly detected, let alone attributed), but on its potential for causing large future growth in forcings. CO2 trends are forecast to dominate trends in other components (due in part to the long timescales needed to draw the excess CO2 down into the deep ocean). Indeed, for the last decade, by far the major growth in forcings has come from CO2, and that is unlikely to change in decades to come. The understanding of the physics of greenhouse gases and the accumulation of evidence for GHG-driven climate change is now overwhelming – and much of that information has not yet made it into formal attribution studies – thus scientists on the whole are more sure of the attribution than is reflected in those papers. This is not to say that formal attribution per se is not relevant – it is, especially for dealing with the issue of natural variability, and assessing our ability to correctly explain recent changes as part of an evaluation of future projections. It’s just that precisely knowing the percentage is less important than knowing that the observed climate change was highly unlikely to be natural.

In summary, I hope I’ve shown that there is too much ambiguity in any exact percentage attribution for it to be particularly relevant, though I don’t suppose that will stop it being discussed. Maybe this provides a bit of context though…

But the world is warming overall and surely that is a statistical fact that cannot be ignored?

Very very helpful, as always, Gavin.

And also conveys why the evidence for human-forced warming does not fit easily into the black-and-white framework that the media, and society, more generally, try to apply to it.

I think it would help to include discussion on methane and CO2 feedbacks (from thawing permafrost) and on reduced CO2 absorption (by more acidic warmer oceans) – in relation to the bit of context you presented above.

it does seem that the forcings and the temperature rise are occurring at the same time, it seems to me that you are suggesting that since evidence is ambiguous (in your view) we should continue the experiment of increasing greenhouse gases to see if the global temperature will continue to rise or at least give us more concrete evidence,

i would like to suggest a new experiment, reduce the amount of suspected greenhouse gases and see if the temperature of the globe starts to fall, or at least stabilize, embarking on such an experiment might have some very interesting social and technological side effects

Sure there may be several different gases or concoction of gases that are causing the effect of global warming. But isn’t the evidence clear that we are currently experiencing an unprecedented level of temperature increase and that has risen in line with CO2, which is generated by record population levels and demands for energy. Given lack of other evidence that is causing this effect and given common sense, I would presume that it isn’t hard to surmise that if we continue to live as we do and for the world population to continue growing as it is now, we WILL run out of resources, assuming our own wastes don’t kill us first. If we want to avoid doing that, we better address these issues now, or as many of them as we believe we can.

I recently read somewhere (I thought ClimateArk, but can’t find it) that according to some study atmospheric methane is now found to be on the increase & had been suppressed for some decades by some factors, but now is not only increasing as expected, but even more. That’s what my faulty memory still has.

And my understanding is that even if methane is short-lived in the atmosphere it degrades into CO2 (is that right?), which is long lived in the atmosphere — is that included in your “indirect effects” re methane?

Then also, if methane (a much more potent GHG) has a short life of up to 10 yrs, the fact that the methane releases & increases are really rapid (geological speaking) might be something important to consider…which David Archer mentioned a couple of years ago on this site. We may not just be repeating some past GW extinction level epoch that took hundreds or thousands of years to build up & play out — it seems we’re doing it with a vengeance as fast as we can. This may be new territory for planet earth, with the (geologically speaking) rapidity being a new factor.

I’m getting the sense that as science reports slowly & meticulously grind out the overall thrust continues to be “It’s worse than we earlier thought.”

Gavin- You dismissed the analysis that I did too cavalierly in your text

“Recently, Roger Pielke Sr. came up with a (rather improbably precise) value of 26.5% for the CO2 contribution. This was predicated on the enhanced methane forcing mentioned above (though he didn’t remove the ozone effect, which was inconsistent), an unjustified downgrading of the CO2 forcing (from 1.4 to 1.1 W/m2), the addition of an estimated albedo change from remote sensing (but there is no way to assess whether that was either already included (due to aerosol effects), or is a feedback rather than a forcing). A more appropriate re-calculation would give numbers more like those discussed above (i.e. around 30 to 40%)”

The number I presented was just the result of the assessment of the relative magnitudes of the radiative forcing that is in the literature; it is these values of radiative forcing that you should comment on (such as tropospheric black carbon; black carbon deposition). I am certainly comfortable with rounding to the nearest value of ten, which is 30%, which is within the range that you present in your weblog! Thus your posting is actually supportive of the short analysis that I completed, but the sense of your post is otherwise.

I invite interested Real Climate readers to read the several postings on Climate Science on this issue; e.g.

http://climatesci.atmos.colostate.edu/2006/04/27/what-fraction-of-global-warming-is-due-to-the-radiative-forcing-of-increased-atmospheric-concentrations-of-co2/

http://climatesci.atmos.colostate.edu/2006/05/05/co2h2o/

http://climatesci.atmos.colostate.edu/2006/05/10/more-on-the-relative-importance-of-the-radiative-forcing-of-co2/

Your weblog also is incomplete in focusing on just CO2 as a long lived climate forcing. Land use/land cover change, and several of the aerosol effects (e.g. nitrogen deposition) are also long term forcings of the climate system; see

http://climatesci.atmos.colostate.edu/2006/06/27/lags-in-the-climate-system/

[Response: Hi Roger, Thanks for the comment. The point of the post was to point out how arbitrary some of these percentages are (including yours), not to support one or the other. But related to your specific calculation I maintain that you misread the Shindell et al paper (on which I was a co-author) – it does not give an increase in methane forcing alone. Instead, it restates the combined forcing of methane and ozone to be a forcing by methane + CO and NOx, and since the (negative) NOx term is small (0.1 W/m2), it makes much less of a difference than you conclude. Additionally, your downgrading of the forcing by CO2 was simply wrong. That number (1.4 W/m2 in 2001, 1.5 now) is independently derived and shouldn’t be changed to compensate for an increase in methane. I don’t think we disagree on the importance of including all 20th Century forcings, and as the physics of new effects become better quantified they should be included as well. – gavin]

It is very difficult to find real skeptics. That is people who have serious questions regarding the science involved and who are really interested in the issue. 95%+ are simply deniers who keep the focus firmly on basic science that is totally not under debate. When you do try to point out the science, they merely focus on all the “uncertainty” in the conclusions.

However, they are helpful in that by taking up some extreme points of view (The Royal Society is Stalinist) they push more “moderate” skeptics closer to the science behind the theory of climate change.

re #3 and #6: Rate of increase of atmospheric methane has leveled off. This is not what one would expect from increased melting of permafrost.

Can anyone point me to an explanation for the apparent leveling of the increase in global temperature from 1940 to about 1970?

Thanks

Well, you’ve found a skeptic, although not a denier (that’s a metric).

I have a problem with the grammar of your statement “global mean surface temperatures”.

Literally, this means that the average temperature at every point on the earth is rising, which is untrue. You should say something like “The mean temperature of the whole earth”, but I have a problem with that, too.

Temperature is a poor measure of the energy in a body; most materials, like water and air, have a non-linear relationship between temperature and energy, so I see no basis for an application of the arithmetic mean with further qualification about the distribution of measurements.

This may seem a pedantic point, but my information is that oxygen disobeys Boyle’s law by about 1 part per thousand over a pressure range of 1 to 2 atm, and the temperature change you are calculating is about 0.6 degrees above 15 degrees Celsius, or 288 degrees absolute, which is about 2 parts per thousand, so I would welcome your comments on the effects of the non-linearities of the atmosphere on your models.

#3, #6, #9

Mystery of Methane Levels in 90’s Seems Solved – New York Times

A new study suggests that the reason why the atmospheric concentration of methane slowed in the 1990’s was related to the collapse of the Soviet Union.

http://www.nytimes.com/2006/09/28/science/28methane.html?ref=science –

> 1940s-1970s

Google search:

http://www.google.com/search?q=global+temperature+1940s+1970s+particulates&start=0

Example:

http://www.stanfordreview.org/Archive/Volume_XXXVI/Issue_8/Opinions/opinions1.shtml

—excerpt—

“While global temperatures show an upward trend since 1860, dimming and cooling started to outweigh the effects of global warming in the late 1940’s. Then starting in 1970 with the Clean Air Act in the United States and similar policies in Europe, atmospheric sulfate aerosols declined significantly. The EPA reports that in the U.S. alone from 1970 to 2005, total emissions of the six principal air pollutants, including PM’s, dropped by 53 percent. In 1975, the masked effects of trapped greenhouse gases finally started to emerge and have dominated ever since.”

—end excerpt—-

Hi Gavin- Thank you for your reply to #7.

With respect to your comment on the fractional contribution of methane, I used the information from http://www.pollutiononline.com/content/news/article.asp?DocID=%7B92402192-8574-45C2-8319-32A75F1E8ECE%207D&Bucket=Current+Headlines&VNETCOOKIE=NO that stated,

“According to new calculations, the impacts of methane on climate warming may be double the standard amount attributed to the gas. The new interpretations reveal methane emissions may account for a third of the climate warming from well-mixed greenhouse gases between the 1750s and today. The IPCC report, which calculates methane’s affects once it exists in the atmosphere, states that methane increases in our atmosphere account for only about one sixth of the total effect of well-mixed greenhouse gases on warming.”

Were your conclusions misrepresented?

On the magnitude of the radiative forcing of CO2, if the fraction of the radiative forcing attributed to the well-mixed greenhouse gases of methane is increased, and the total radiative forcing of the well-mixed greenhouse gases is unchanged, the fractional contribution attributed to CO2 must decrease. Are you thus suggesting that the IPCC value for the total of the well-mixed greenhouse gases needs to be increased to a value above 2.4 Watts per meter squared (the IPCC figure is presented, for example, at http://darwin.nap.edu/books/0309095069/html/3.html) ?

Finally, there is also the issue of time over which these radiative forcings have been increasing. CO2 has been a significant radiative forcing since the industrial period began, while the large increase in the input of black carbon into the troposphere, for example, has been more recent. If this is so, the “global average temperature” has responded (i.e. equilibrated) to a fraction of the CO2 that was input in the earlier years, such that IPCC Figure overstates the current contribution of CO2 to the global average radiative forcing (since that Figure presents a difference between preindustrial times and 2000, not the current radiative forcing). In the preparation of our 2005 NRC Report, the estimate in our discussions was that a value of 80% is a reasonable estimate of the added CO2 since preindustrial times which has not equilibrated.

I do agree with the theme of your post on the arbitrary aspect of attributing specific numbers to each of the radiative forcings.

However, it is an important issue to estimate the fractional contribution to positive radiative forcing due to CO2. If it dominates the other radiative forcings (and other “non-radiative” climate forcings), then policy actions that focus on CO2 make good sense. However, if it is only one of several important radiative forcings, such as I summarize on Climate Science (in the hyperlinks for my weblogs given in #7), and, if we also need to be concerned about the spatial scales of the radiative forcing as is presented in

Matsui, T., and R.A. Pielke Sr., 2006: Measurement-based estimation of the spatial gradient of aerosol radiative forcing. Geophys. Res. Letts., 33, L11813, doi:10.1029/2006GL025974.

http://blue.atmos.colostate.edu/publications/pdf/R-312.pdf,

as well as the “non-radiative” forcings as reported in the 2005 NRC Report “Radiative Forcing of Climate Change: Expanding the Concept and Addressing Uncertainties”,

then the emphasis on CO2 alone is an inadequate recommendation for us as scientists to give to policymakers.

[Response: When in doubt, read the original paper (Shindell et al, 2005) – figure 1 is extremely clear. On the second point, if you change the forcing attributed to one gas, why should the total remain the same? There are no constraints on the total – it’s merely the sum of the individual contributions. Why the line-by-line calculation of forcing by CO2 should be affected by our atmospheric chemistry calculation is a little puzzling… IPCC used an abundance based calculation for the current forcings and that’s fine. Our point was that for emissions reductions in the future, it is helpful to know the forcing associated with each emitted component, so that targets can take account of atmospheric chemistry changes too. However, CO2 remains the largest single component and is the one with the largest projected growth, and while there is a lot that can be done to reduce the other forcings, the climate change problem in future is in many ways a CO2 problem. You and I clearly disagree on that, and that’s fine, we should however be able to agree on 20th Century forcings. -gavin]

Re #10

Hi Mark,

> Can anyone point me to an explanation for the apparent leveling of the increase in global temperature from 1940 to about 1970?

You might try here:

http://illconsidered.blogspot.com/2006/03/what-about-mid-century-cooling.html

Sorry to veer off-topic, I have a layman’s question that I thought would be best answered here: according to http://www.nzherald.co.nz/section/story.cfm?c_id=2&ObjectID=10404255 a study by the Hadley Centre warns that global warming will lead to excessive drought. That sounds very counter-intuitive to me: higher temperatures should mean higher rates of evaporation and, eventually, higher rates of precipitation. Is it the case that evaporation will increase primarily over land while precipitation will rise mostly over oceans? If not, what am I missing?

Re: 11

I thought that the average surface temperature of the planet would be a pretty simple concept. Usually this is measured by shaded thermometers in the air, so you are always measuring the same thing, i.e. the air temperature. Regarding Boyle’s law, the 0.2% difference from the ideal would apply to the actual difference in temperature, i.e. 0.6K, not the absolute temperature. So the given temperatures are indeed consistent.

It is true that heat could be going elsewhere (e.g. melting ice, deep ocean heating), but most of these would actually suppress a warming signature. It is hence plausible that the equilibrium response of the planet to the forcings already present is higher than the observed temperature increase.

This is a very important site, because it helps one to understand what the word “fearmongering” really means. It means more money for more fearmongering studies and proxies.

Re #8-

Technically, all climate scientists are skeptics, and should be skeptical about claims until they are proven. If people accept theories about global warming without any background and do not form their own opinion based on a fairly comprehensive study, then they are as bad as simple deniers.

Theories and opinions formed by the scientific process cannot be substituted with blind faith in any scientific issue.

[Response: This previous post is relevant to this discussion: https://www.realclimate.org/index.php/archives/2005/12/how-to-be-a-real-sceptic/ -gavin]

Thanks for a very clear article Gavin. The black carbon contribution in the Hansen paper is very interesting. Another area where improving air quality (by removing dangerous particles from exhausts) will hopefully have a useful impact in reducing climate change.

[Response: Agreed. There is a lot of scope, particularly in Asia for a reduction in black carbon emissions to have a strong impact on air quality and radiative forcings. – gavin]

I am not sure I understand the point made in (13)

But, maybe, I am just having the Barbie Doll moment.

My understanding of global dimming seems to be a little different than yours.

It comes from ARM and James Hansen’s references to global dimming developing from clouds being enhanced and/or formed by pollution, sulfates, and/or certain aerosols and so forth.

Because the pollution is there, the clouds form.

And there is still a lot of uncertainty with what is known, and unknown about the matter. A lot.

ARM’s publication:

“Global Dimming: A Hot Climate Topic Global dimming”

http://google.arm.gov/search?q=cache:DlnfzQyEZmQJ:education.arm.gov/outreach/publications/sgp/jul04.pdf+global+dimming&access=p&output=xml_no_dtd&site=default_collection&ie=UTF-8&client=default_frontend&proxystylesheet=default_frontend&oe=ISO-8859-1

And haven’t you heard of the Asian brown cloud?

“The Asian Brown Cloud (ABC)”

Margaret Hsu and Laura Yee

http://www.sfuhs.org/features/globalization/asian_cloud/

“A recent comprehensive United Nations Environmental Program (UNEP) report, released on August 12, 2002, indicates that a 2-mile-thick toxic umbrella dubbed the ‘Asian Brown Cloud,’ (ABC) stretches over Afghanistan, Pakistan, Bangladesh, Bhutan, India, Maldives, Nepal, and Sri Lanka, which are among the most densely populated places in the world. “…..”The haze, 80 percent man-made, is composed of a grimy cocktail of toxic ash, black carbon, sulfate, nitrates, acids, and aerosols – tiny solid or liquid particles suspended in the atmosphere. The haze also extends far beyond the study zone of the Indian subcontinent. Scientists say that similar clouds exist over East Asia (especially China), South America and Africa. Asian air pollution is unprecedented and will intensify as population increases and countries like China and India rapidly industrialize.”…..”The blanket of pollution is reducing the amount of solar energy hitting the Earth’s surface by as much as 15 percent. This has a direct effect on agriculture, by infringing on the important process of photosynthesis in plants. In fact, research carried out in India indicated that the haze could be reducing the winter rice harvest by as much as 10 percent. Furthermore, heat is trapped in the lower atmosphere, cooling the Earth’s surface while heating the atmosphere. This combination of surface cooling and lower atmosphere heating appears to alter the winter monsoon, leading to sharp decrease in rainfall over northwestern parts of Asia and an increase in rainfall along the eastern coast of Asia. “…..”The UNEP report seems to suggest that Greenhouse gases warm the Earth’s surface while the aerosol concentrated haze cools it. If this were true, it would worsen the abnormal temperature differences between places, and further disrupt the global climate. The UN report dealt specifically with the cloud; its relationship with global warming still requires further investigation.”…..

And to myself, it seems, the gist of all the real debates, would boil down to how to dissipate, or draw down, the trapped GHG gases.

Dimming and cooling, in the end, is more a matter of backscatter and or brownian motion, and the refraction matters and properties of particles, aerosols, and so forth.

Meanwhile, here on earth, we still have the same remaining problem of our trapped thermal atmospheric content that cannot escape from Earth’s self-contained system, which is maintained by the greenhouse gases that surround the earth. That greenhouse gas content is said to be increasing, and because it is increasing, the thermal kinetic capacity (the global warming potential of said gases) will rise with it.

For example ….. gases produce kj of energy when thermally(radiated) vibrated and they collide with each other.

Just like when two atoms of hydrogen gas and one atom of oxygen gas violently collide, they produce water. It’s a chemical combustion reaction, an exothermic reaction, producing energy of 572 kJ.

And can and does man make water? Uh, no. Do they try to seed clouds, yes. But does man make water. No.

The said gases naturally collide midair, luckily for us Flintstone head humans.

(But I have read they would like to make recycled pee and poo water to sell for people to drink, but that hardly counts, that’s beyond cheating.)

So, really, how to draw down the greenhouse gases while divesting ourselves of their energy content, and/or finding a way to tap into it as an energy source ….

Or on the ground technology to contain the fossil fuel emissions of the GHG by products before they EVER reach the atmosphere.

That’s OUR main problem in my opinion (besides the natural resource depletion, but if they could contain on the ground, they could get to a point of recycling the carbon content, at some point in time, and do their thermal cracking with the geo storage just like they do today with the petro supplies. It’s doable. It’s exactly how they plan to go after shale rock, tar sands, and any oil locked up in a rock. The issue is making them do it, environmentally safe, NEPA.)

It would seem to me, that would be our problem to address, because we need to solve it, and it’s something that could be addressed.

It matters, somewhat little, if some clouds, some of the time, in some parts of the world, are mirroring away solar EMF in some small ways if you think about in a GLOBAL context.

However, a similar idea has been radically put out, besides the Nobel Prize winners…. by

Stratospheric Injections Could Help Cool Earth, Computer Model Shows

September 14, 2006

http://www.ucar.edu/news/releases/2006/injections.shtml

…”Wigley calculates the impact of injecting sulfate particles, or aerosols, every one to four years into the stratosphere in amounts equal to those lofted by the volcanic eruption of Mt. Pinatubo in 1991. If found to be environmentally and technologically viable, such injections could provide a “grace period” of up to 20 years before major cutbacks in greenhouse gas emissions would be required, he concludes. “…

But….Where would you put that information at, into an actionable level ladder scale, in the things we as people could do to effect a tangible REAL change?

It’s a bandaid, radical, and a way to do nothing more than buy time and we have no REAL data on this.

We have absolutely no way of knowing what might happen if MAN does this. It’s much different if nature does this, and then there isn’t any control over it, versus man. But with such a rapid change in the atmosphere in such a short time span, there is bound to be some repercussions versus a natural volcanic cycle.

At least with Mr. Pielke’s call for the addition of land use changes,(a Denver newspaper article I believe I read) I can appreciate the information he threw out there and I agree with him on that line of thinking.

Land use changes are a significant contribution to albedo changes, as is deforestation, and we have the addition of soil degradation and/or erosion, and so on (the loss of carbon stores).

Just for a comparison here to dimming, we have a study, alternate to dimming, that goes back a 1000 years, in relation to sunspots and faculae affecting the brightness of the sun. I’d say it offers in brightness, a very different view from dimming.

Foukal, P. et al (2006)

“Variations in solar luminosity and their effect on the Earth’s climate”

Nature, Volume 443, Issue 7108, pp. 161-166 (2006).

http://adsabs.harvard.edu/abs/2006Natur.443..161F

…” In this Review, we show that detailed analysis of these small output variations has greatly advanced our understanding of solar luminosity change, and this new understanding indicates that brightening of the Sun is unlikely to have had a significant influence on global warming since the seventeenth century.”..

Trying to find other issues, “masking”, or crutches still isn’t ever going to reduce the numbers on the GHG content we already have, or their future global warming potentials, which continue to compound daily…

When one thinks about how much we are adding daily, on the fossil fuel and cement emissions side, to the volume of atmospheric gas content, in any possible combination of thermal chemical equations, it’s concerning.

Water vapor alone increases your heat index umpteenth thermal times. That’s why no one likes humidity.

I won’t go into geek speak.

So, our GHG side is always compounding daily, regardless of what is happening on the solar side.

Whether it’s sunspots, faculae, brightness, or dimming.

The WMO assessments, if you see the PDF below do have some uncertainties….depending upon where one lives, but the trend, global dimming, according to ARM, has reversed.

But, regardless, according to the WMO, the ozone layer plays a great role, as does air pollution and aerosols, for any attenuation.

5/11/05 – ARM Research Helps Identify A Brighter Earth

…”Based on a decade of surface solar energy measurements, the finding is a reversal of the “dimming” trend previously reported for the 1960s through 1990.”…

http://www.arm.gov/about/newsarchive_aprjun05.stm

“Executive Summary Scientific Assessment of Ozone Depletion: 2006” WMO/UNEP 18 August 2006

http://www.wmo.int/web/arep/reports/ozone_2006/exec_sum_18aug.pdf

Excerpted here

http://www.connotea.org/uri/d4b52caf73b87a8d9974ffba8b82b034

..”Measurements from some stations in unpolluted locations indicate that UV irradiance (radiation levels) has been decreasing since the late 1990s, in accordance with observed ozone increases. However, at some Northern Hemisphere stations UV irradiance is still increasing, as a consequence of long-term changes in other factors that also affect UV radiation. Outside polar regions, ozone depletion has been relatively small, hence, in many places, increases in UV due to this depletion are difficult to separate from the increases caused by other factors, such as changes in cloud and aerosol. In some unpolluted locations, especially in the Southern Hemisphere, UV irradiance has been decreasing in recent years, as expected from the observed increases in ozone at those sites. Model calculations incorporating only ozone projections show that cloud-free UV irradiance will continue to decrease, although other UV-influencing factors are likely to change at the same time.”…”The previous (2002) Assessment noted that climate change would influence the future of the ozone layer.”…”Climate change will also influence surface UV radiation through changes induced mainly to clouds and the ability of the Earth’s surface to reflect light. Aerosols and air pollutants are also expected to change in the future. These factors may result in either increases or decreases of surface UV irradiance, through absorption or scattering. As ozone depletion becomes smaller, these factors are likely to dominate future UV radiation levels.”….” Air pollutants may counterbalance the UV radiation increases resulting from ozone depletion. Observations confirm that UV-absorbing air pollutants in the lower troposphere, such as ozone, nitrogen dioxide (NO2) and sulfur dioxide (SO2), attenuate surface UV by up to ~20%. This effect is observed at locations near the emission sources. Air pollution exerts stronger attenuation in UV compared with attenuation in total solar irradiance.”…

Gavin, You ask “But does the specific percentage attribution really imply much for the future? (i.e. does it matter that CO2 forced 40% or 80% of 20th Century change?).” You seem to be making the rhetorical point that the percentage doesn’t matter because it depends on how you define it. However, the point of skeptics is, that no matter how you define it, if the modelers mistakenly attribute 80% to CO2 when it is only responsible for 40%, by whatever definition, then the modelers have their climate sensitivities to this particular forcing wrong, and their projections of future warming are likely to be even more wrong.

Naive statements such as that by Ng above “But isn’t the evidence clear that we are currently experiencing an unprecedented level of temperature increase and that has risen in line with CO2,” neglect the research showing that solar activity is also at one of its highest levels in the last 8000 years, and that paleo temperature proxies show stronger correlations with solar activity, than modelers can currently explain and that skeptics are unwilling to dismiss, especially just on the basis of the current state of modeling technology.

Hansen, in his 2005 paper, made a point of his model being able to explain all the modern warming with GHGs. It would have been more interesting to see how much of the warming he could have explained with solar, if he had really tried. Runs that doubled the solar forcing, to account for the uncertainty in that forcing, would have been a start. Then, since the focus of the paper was on the importance of the heat storage in the ocean, a possible next step would be to model what the effects might be if the solar and GHG couplings to the oceans were quite different, which they apparently are. This would not give us a more informative answer about what the relative attribution of the 20th century warming is, but would perhaps give us a range on what it could be, given our current lack of knowledge and understanding. We probably will never be able to use the 20th century data to parameterize our models, because we don’t have the coverage and accuracy we need for much of the century. Hopefully, more refined work with recent and future data, and incorporation of research into the coupling mechanisms themselves, will allow us to validate the model climate sensitivities to the various forcings, and confidently reproduce multidecadal internal climate modes. Perhaps then, since we are curious, we may be able to properly attribute the 20th century and recent warming.

[Response: ??? Try reading Hansen et al 2005 (and the follow-up submitted paper) – all available at the GISS website. We used I think 14 different forcings individually and together to assess how important each one was. Each one is specified as independently as possible (with uncertainties of course) and run through the model. GHGs alone give more warming than observed, solar is a minor component of that, and reflective aerosols (both direct and indirect effects) and black carbon are shown to be important. If you throw it all in together, it does a good job for the 20th Century. My point in the piece is that attribution is a modelling exercise – there can be no attribution without a model to link cause and effect. Your claim that we could have attributed everything to solar ‘if we tried’ is just ridiculous. We use the best forcing time series that we can get from the rest of the community, and we can’t arbitrarily scale them to get your preferred response – that would indeed be pointless. – gavin]

Gavin,

This is slightly off-topic, but I wonder if RC might consider a post on the connection between air quality and climate change (e.g. the GHG impact of tropospheric ozone).

[Response: See Loretta Mickley’s guest piece: https://www.realclimate.org/index.php/archives/2005/04/pollution-climate-connections/ -gavin]

Gavin, thanks again for this clear, insightful and challenging (to some) entry. Your site does a great service to the global community; not only directly, but also indirectly in that user responses to common misunderstandings such as overattribution to Solar forcing and challenging the measurability of the climate system get dispatched quickly and evenly. If they can’t be so dispatched, that’s where the science comes from.

With respect to policy decisions, perhaps with these data we can make a stab at “best-path” efforts. Clearing carbon black may in the short term be cheaper and meet less opposition than hitting CO2 on the head. Of course, we’ll have to hammer away at CO2 eventually, but if there’s $250 billion a year worth of established industry on one side of the equation, and nothing but silence on the other, shouldn’t we be hitting carbon black with a vengeance? My concern is that the puny 1 °C warming of the Eemian was enough to raise sea levels 5-8 meters (see Fig. 1 of http://columbia.edu/~jeh1/hansen_re-crichton.pdf and the Wikipedia article on Eemian for this). We’re almost at that now! While we have to hit CO2 soon enough, and sooner is better, it occurs to me that the best path is to hit the cheapest targets first. Does anyone know whether you can scrub soot from, say, coal emissions while leaving aerosols alone? If so, we should probably be doing that already, since political opposition will be disorganized and far smaller – and we don’t want to have to build 8 meter seawalls around the entire inhabited coast of the planet.

Thanks Gavin for this insightful post showing the nuances, both pro and con, of how the peer-reviewed climate community tries to honestly go about its business. These are insights that the public needs to read.

Gavin, I don’t clearly understand this: “But does the specific percentage attribution really imply much for the future? (i.e. does it matter that CO2 forced 40% or 80% of 20th Century change?).” If you want to estimate climate sensitivity to doubling CO2, don’t you need to estimate as precisely as possible the direct and indirect effects of each forcing on temperature trends? If (delta)t2xCO2 is 1.5, 3 or 5 °C, it’s not exactly the same perspective for mitigation / adaptation.

[Response: Umm.. I agree. But the sensitivity of a model to only CO2 forcing is a very different issue than looking at the relative amount of that forcing compared to all the others. You are correct though, in thinking that the sensitivity is a more important question for future projections. – gavin]

Now that Svensmark et al. showed in their SKY experiment that indeed GCR (galactic cosmic rays) are a possible factor in contributing to low cloud cover (which has a net cooling effect), and that the past 100 years of the sun’s high activity with corresponding lower cloud cover might explain 1.2 W/m2 of the forcings (compared to IPCC’s 1.4 W/m2 attributed to anthropogenic greenhouse gases), is it not dishonest to ignore this (possibly major, and CO2-independent) contribution?

[Response: There is an awfully long way to go from a simple lab experiment demonstrating a proof of concept to showing that this is an important mechanism of aerosol formation (of which there are many), and showing that it affects cloud cover significantly, and that it gives a non-negligible radiative forcing over the 20th Century. Over the last fifty years there has been no trend in cosmic rays anyway, and so for the temperature rises in recent decades it is highly unlikely to play a role. As has been stated often, the long term component associated with solar changes is pretty uncertain, but as the numbers above show, you would need to have the long term trend in solar-related forcings increase by a factor of five to even match CO2, let alone the total from all GHGs. Potential mechanisms for solar impacts on climate are a fascinating subject and may well help explain observed changes in the paleo-climate record, however, hoping that they will somehow magically reduce the effect of GHG increases today is foolish. -gavin]

Re #22 (comment):

Gavin, one of the main problems with current models is the attribution to aerosol forcings. There is a huge offset between aerosols and CO2 sensitivity, as can be seen in Climate sensitivity and aerosol forcings.

If aerosols have less influence (these are quite uncertain) than currently implemented in climate models, this reduces climate sensitivity for CO2, but not necessarily for solar and volcanic.

With a simple EBM (energy balance model, used in a course at the University of Oxford) one can see that a halving of the sensitivity for CO2, compensated by a huge reduction of aerosol influence, fits the temperature trend of the previous century as well as the original…

See here

[Response: No it doesn’t. The sensitivity of any model to CO2 is completely independent of its sensitivity to aerosol forcings. The problem of being able to tune an EBM to get anything you want is exactly why we use physically based models like GCMs, and use spatial and temporal patterns to do formal attribution. -gavin]
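For readers following this exchange, here is a minimal sketch of the compensation being argued about. It assumes a zero-dimensional equilibrium balance in which warming is simply sensitivity times net forcing. The function name and every number below are illustrative assumptions, not values taken from the Oxford EBM, from any GCM, or from the papers under discussion.

```python
def equilibrium_warming(sensitivity, ghg_forcing, aerosol_forcing):
    """Equilibrium warming in deg C for a zero-dimensional balance.

    sensitivity is in deg C per (W/m^2); forcings are in W/m^2.
    This ignores ocean heat uptake and all spatial structure.
    """
    return sensitivity * (ghg_forcing + aerosol_forcing)


# Hypothetical past forcings, chosen only to show the trade-off.
GHG_FORCING = 2.5           # W/m^2, assumed
DOUBLED_CO2_FORCING = 3.7   # W/m^2, commonly quoted value for 2xCO2

# Case A: higher sensitivity with strong aerosol cooling.
high_case = equilibrium_warming(0.8, GHG_FORCING, -1.5)
# Case B: half the sensitivity with weak aerosol cooling.
low_case = equilibrium_warming(0.4, GHG_FORCING, -0.5)

print("past warming, case A:", high_case)
print("past warming, case B:", low_case)

# The same two sensitivities applied to a doubling of CO2 alone.
print("2xCO2, case A:", equilibrium_warming(0.8, DOUBLED_CO2_FORCING, 0.0))
print("2xCO2, case B:", equilibrium_warming(0.4, DOUBLED_CO2_FORCING, 0.0))
```

In this toy setup both parameter pairs reproduce the same past warming, yet they differ by a factor of two for doubled CO2. A fit to the global mean alone cannot separate them, which is the tuning problem the response refers to.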

Gavin, care to comment about the state of knowledge regarding radiative forcings? As in, the degree to which actual high quality measurements exist of the fluxes? Not only by satellites but also “looking” from other perspectives, across all pertinent interfaces, in all the pertinent dimensions? Also, how about the general heat flows at the various scales, accounting for non radiative transfers as well.

[Response:Radiative forcings are estimated from radiative transfer models, they are not observed quantities. Radiative fluxes are observed to an increasing level of accuracy but long consistent time series are few and far between. It isn’t (yet) possible to measure the radiative imbalance at the top of the atmosphere, which is why it is easier to look at heat storage. Kiehl and Trenberth’s figure is still a good approximation to what we think we know. – gavin]

RE: #17 – and conveniently, all the thermometers are located on some sort of equidistant grid, across the whole earth, with no error inducing factors of any kind. Then there is reality.

re 17

The following is just an example, and my original question was that I wonder how climate models adjust to non-linearities in the atmosphere.(Could one of the scientists at realclimate please respond)

Thank you for your posting, but if I understand you then I think that you miss the point, which must be my fault for not making myself clear.

Temperature is not, I’m afraid, a simple concept, which is what I am trying to say. It’s a logarithm ratio of entropy versus energy. Humans think they know what temperature is, because it is quoted at them all of the time, but materials do not show a linear relationship between energy and temperature. This is well known.

Ground temperatures vary from minus 50 degrees to plus 50 degrees and pressure varies by plus or minus 10%, or thereabouts. Within this range, gases such as oxygen do not obey the gas laws and the non-linearity is roughly of the same order as shown in AGW. The atmosphere as a whole has a much wider range of temperature and pressure and is non-linear over these ranges.

“It is true that heat could be going elsewhere (i.e. melting ice, deep ocean heating), but most of these would actually suppress a warming signature. It is hence plausible that the equilibrium response of the planet to the forcings already present is higher than the observed temperature increase.”

Maybe, but if you take a kilo of water at 100 degrees and a kilo of ice at 0 degrees and mix the two, the resultant temperature is not the arithmetic mean (50 degrees). It’s a lot lower; try it yourself.

The reason for this is that the relationship between energy and temperature for water is highly non-linear around 0 degrees. The energy that you have to remove from water to create ice is roughly the same as that which you have to put in to take it from 0 degrees to 100 degrees.
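The mixing example can be worked through as a simple energy balance. This sketch assumes textbook values for the specific heat of liquid water and the latent heat of fusion, no heat exchange with the surroundings, and ice that starts at exactly 0 degrees C. The function name is hypothetical.

```python
SPECIFIC_HEAT_WATER = 4.186   # kJ per kg per deg C, textbook value
LATENT_HEAT_FUSION = 334.0    # kJ per kg to melt ice at 0 deg C, textbook value


def mix_temperature(water_kg, water_temp_c, ice_kg):
    """Final temperature (deg C) after mixing liquid water with ice at 0 deg C.

    Assumes a perfectly insulated container.
    """
    # Heat released if the liquid water cools all the way to 0 deg C.
    heat_available = water_kg * SPECIFIC_HEAT_WATER * water_temp_c
    # Heat needed to melt all of the ice without warming it.
    heat_to_melt = ice_kg * LATENT_HEAT_FUSION
    if heat_available <= heat_to_melt:
        # Not enough heat to melt everything: the mixture sits at 0 deg C.
        return 0.0
    # Whatever is left warms the combined mass of liquid water.
    leftover = heat_available - heat_to_melt
    return leftover / ((water_kg + ice_kg) * SPECIFIC_HEAT_WATER)


# One kilo of water at 100 degrees mixed with one kilo of ice at 0 degrees.
print(round(mix_temperature(1.0, 100.0, 1.0), 1))  # about 10.1, not 50
```

Under these assumptions roughly 80% of the heat given up by the hot water goes into melting the ice, which is why the result sits near 10 degrees rather than at the arithmetic mean.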

Solar radiation is an example of a forcing that is a small sinusoid around a larger mean. If the amplitude of the sinusoid increases [if the hot gets hotter and the cold gets colder], the mean of the solar forcing remains the same since the mean of the sinusoid is always zero, independent of the amplitude.

But the mean of the atmospheric temperature (which is not linearly related to the input solar radiation) changes because the distribution of the output is a non-linear, distorted sinusoid around a mean. The mean of a distorted sinusoid is dependent upon the amplitude of the sinusoid and the type of the distortion.

So if there is more variation in energy from solar radiation today than there was 100 years ago, the mean temperature would change, but the actual energy of the earth would be the same.

The rise in mean would be an artifact of the relationship between temperature and energy.
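The claim about distorted sinusoids can be checked numerically. This sketch assumes, purely for illustration, a Stefan-Boltzmann style response in which temperature scales as the fourth root of the incoming flux; the 240 W/m^2 mean, the amplitudes, and the function names are all hypothetical choices and not a model of the real atmosphere.

```python
import math

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W per m^2 per K^4


def mean_over_cycle(func, mean_input, amplitude, samples=10000):
    """Average func(mean_input + amplitude * sin(phase)) over one full cycle."""
    total = 0.0
    for i in range(samples):
        phase = 2.0 * math.pi * i / samples
        total += func(mean_input + amplitude * math.sin(phase))
    return total / samples


def identity(flux):
    """Linear response: returns the flux unchanged."""
    return flux


def blackbody_temperature(flux):
    """Non-linear response: temperature (K) radiating the given flux."""
    return (flux / SIGMA) ** 0.25


for amplitude in (0.0, 50.0, 100.0):
    mean_flux = mean_over_cycle(identity, 240.0, amplitude)
    mean_temp = mean_over_cycle(blackbody_temperature, 240.0, amplitude)
    print(f"amplitude {amplitude:5.1f}: mean flux {mean_flux:.3f}, mean T {mean_temp:.3f}")
```

The mean flux stays at 240 for every amplitude, while the mean temperature shifts as the amplitude grows. With this particular concave response the mean temperature falls rather than rises; the direction of the shift depends on the curvature of the distortion.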

[Response: It wouldn’t be an artifact, it would just be a fact. Regardless, models are designed to conserve energy and temperature is a diagnostic field which can be averaged any way you want. We use anomalies of global or hemispheric mean of surface air temperature (usually at 2m or so) to compare to observations because that is what we think we can reliably estimate from the real world. -gavin]

Gavin,

Thanks for the link to the previous post, but my question/interest was the opposite of what the post talks about. I’m interested in a discussion about the forcing associated with tropospheric ozone and how this is modeled, not what impact AGW may have on future ozone concentrations. Sorry if that wasn’t clear. My understanding is that the net forcing of tropospheric O3 also takes into account its effect on methane decomposition in the atmosphere, but I’ve never seen a nice summary that explains the science.

Another reason I bring this up is that people often think that addressing air quality and climate change is an either/or proposition when in fact it isn’t.

Re: #22,

Gavin you state: “Each one is specified as independently as possible (with uncertainties of course) and run through the model.”

I have read the papers, I don’t recall that you ran the uncertainties in solar forcing through the model, and there was a factor of two in those uncertainties. You only ran one effective forcing through the model, which is the estimate of the forcing reduced by a factor of 0.92.

You also state “If you throw it all in together, it does a good job for the 20th Century.” The point is that other models with quite different sensitivities also do a “good job” for the 20th century. The problem is poorly constrained.

You state, “we can’t arbitrarily scale them to get your preferred response – that would indeed be pointless”. I have read published research that did exactly this and claimed that the fit to the recent warming was poor. That was not pointless. It is a problem for the skeptics in fact, although this problem may be partially resolved by the correlated albedo biases in the AR4 models.

I agree that attribution is a modeling exercise for a small temperature increase such as we have experienced in the 20th century. But the paleo data does suggest a larger sensitivity for solar forcing than for GHG forcing. Exploring the implications of differential climate sensitivities for the forcings is a legitimate exercise, especially since the current climate models have a wide range of sensitivities, and all have simplified parameterizations of the couplings. The difference in solar and GHG coupling to the ocean does not appear to be properly modeled.

Re #28 comment:

Gavin, if the influence of aerosols is overestimated, you can’t fit the past century’s temperature with a 3 °C / 2xCO2 sensitivity, no matter what model you use, as the 1945-1975 and beyond temperature would be way too high. Thus, as you need to fit the past century, one must lower the sensitivity for CO2. And solar sensitivity is underestimated anyway. I don’t know of any physical reason that the sensitivity for solar should be only 0.92 of that for CO2 for the same forcing. To the contrary, as there is an inverse correlation between low cloud cover and solar irradiation, and solar/volcanic have influences in the stratosphere that are non-existent for CO2 or human-made aerosols. A sensitivity study for solar in the HadCM3 model showed that it probably underestimates solar by a factor of 2 (see Stott et al.).

Further, does a full GCM do a better job than a simple EBM? Just curious… And why not use multivariate analyses to see what the different factors might be, as if climate were just a black box with known inputs and output(s), but unknown mechanisms (of which there are more than enough) in between…

Re #33 Matin, you write “I have read the papers, …”

Which papers? Gavin isn’t the only person reading this blog. He may know which papers you are talking about, but the rest of us don’t. The key to a good post is to know your audience. It is not just Gavin!

You say he’s wrong on the land use yet Crichton sources Nature. And from the first paragraph of that article:

“Moreover, our estimate of 0.27 C mean surface warming per century due to land-use changes is at least twice as high as previous estimates based on urbanization alone7,8.”

What am I missing?

[Response: The study quoted uses the difference between the weather models and the mostly independent surface temperature record to estimate a residual trend. With no physical reasoning at all they attribute the difference to land use changes, despite the fact that no bottom up study of land use changes produces even a warming, let alone one that matches their numbers. My main problem with that study is that the weather models don’t use any forcings at all – no changes in ozone, CO2, volcanos, aerosols, solar etc. – and so while some of the effects of the forcings might be captured (since the weather models assimilate satellite data etc.), there is no reason to think that they get all of the signal – particularly for near surface effects (tropospheric ozone for instance). Residual calculations can sometimes be useful, but you have to be sure that you have taken everything else into account. These studies have not. -gavin]

Thanks for the clear explanation. As mentioned above, it appears that CO2 is presently only 40% of the problem, and CO2 emission is very expensive to reduce in the short term.

Potentially we can eliminate nearly 60% of the short term forcing at reasonable cost by radically reducing real pollution (such as O3, NOx, and BC) that also causes immediate health problems. Selling such a plan where everyone sees benefits will be a lot easier than selling a very expensive approach with almost no near term benefits.

CO2 reduction may be much cheaper using technology developed in the next 20 years.

Is the following ever accounted for in models — and should it be? Currently the biosphere is largely controlled to produce what humans want. In the paleo record, the biosphere responded to atmospheric, solar and GHG changes in the way that provided selective advantage. If there is significant feedback possible, this would result in the planet responding differently now than it always did before.

In other words, today we try to squeeze the maximum economic value out of every acre. We do that in the face of changing climate so agricultural systems respond by changing. In the distant past, evolution determined how plant communities responded. That could affect carbon and other uptakes, water cycles, albedo, etc.

Re: #34

Apologies Alastair. I just didn’t want to be repetitive, Gavin and I have been discussing across several threads and forums. Here are the two abstracts with links to the full text:

http://pubs.giss.nasa.gov/abstracts/2005/Hansen_etal_2.html

http://pubs.giss.nasa.gov/abstracts/2005/HansenNazarenkoR.html

The first is cited in the second and provides basis for the “effective forcings” used in the second.

Thanks, Gavin, for your very clear discussion about attribution.

I would like to point out that, recently, also non-dynamical modeling (namely neural network modeling) has shown that it is not possible to reconstruct the temperature course of the last 150 years if anthropogenic forcings are not taken into account. Furthermore, a clear contribution of ENSO has been revealed as far as the correct reconstruction of inter-annual variability of global T is concerned.

I would like to point this out because this paper of mine was published in a journal that is not completely dedicated to climate, and it may therefore be unknown to many of you.

Please refer to Pasini et al. (2006), Neural network modelling for the analysis of forcings/temperatures relationships at different scales in the climate system, Ecological Modelling 191, 58-67:

http://dx.doi.org/10.1016/j.ecolmodel.2005.08.012

Thanks again

Antonello

Gavin

Thank you very much indeed for taking the time to reply, but I’m afraid that your reply does not address the issue.

I don’t understand your statement that I could apply any average as it would be mathematically unsound.

A mean temperature is not a useful fact, since it does not give any information as to the rise in energy of the earth as a whole. Any meaningful information is lost because you have not qualified the non-linearities or the distribution of the energy, and information may well be lost because of the distortion, anyway.

It is entirely possible for the mean temperature to rise when the total energy of the earth does not, and your old AM radio would not work if this were not the case as it relies on this effect in non-linear regions. Hence my statement that a mean temperature rise would be an artifact, at least in the example given which was only for explanatory purposes.

Anyway, that wasn’t the original question, which was how do your models cope with non-linearities in the atmosphere. If you are not taking into account deviations from ideal gas laws, then I am concerned that e.g. rising air will, in your model, end up at the wrong energy level and your temperatures will then be in error by roughly the same order as the temperature as attributed to AGW.

RE: 24. and 35.

“Does anyone know whether you can scrub soot from, say, coal emissions while leaving aerosols alone? ”

An aerosol may, or may not form clouds. That’s a wide football field hail mary let it land where it may generalization.

One needs to realize ….

to begin with, particulate matter is so minuscule, ppm..

Anyhow…

The only technologies for scrubbing, or removing that I am aware of, are electrostatic precipitators (ESPs) or fabric filters.

Particulate Control R&D

http://www.fe.doe.gov/programs/powersystems/pollutioncontrols/overview_particulatecontrols.html

So, it’s a matter of improving that technology…

and in my opinion, it’s about improving the technology, because particulates are harmful to human health, toxic, and inhalable, so increasing particulate emissions adds to the burden on the health care system and on Medicare and disability benefits, an incremental cost to society.

The logic behind this entire train of thought and thinking process escapes me.

Stronger Standards for Particles Proposed

12/21/2005 EPA

http://yosemite.epa.gov/opa/admpress.nsf/68b5f2d54f3eefd28525701500517fbf/1e5d3c6f081ac7ea852570de0050ae2b!OpenDocument

…”Numerous studies have associated fine particulate matter with a variety of respiratory and cardiovascular problems, ranging from aggravated asthma, to irregular heartbeats, heart attacks, and early death in people with heart or lung disease. EPA has had national air quality standards for fine particles since 1997 and for coarse particles 10 micrometers and smaller (PM10) since 1987. Particle pollution can also contribute to visibility impairment.”…

Coal plants have been using sorbents, and the technology, such as amine scrubbers, is improving all the time. Essentially, the scrubbing of coal flue gas emissions incorporates various sorbents, limestone, and amines to scrub out soot, sulfates and all those lovely things…

See …

Key Issues & Mandates

Secure & Reliable Energy Supplies – Coal Becomes a “Future Fuel”

http://www.netl.doe.gov/KeyIssues/future_fuel.html

…”Scrubbers can reduce sulfur emissions by 90 percent or more. They are essentially large towers in which aqueous mixtures of lime or limestone “sorbents” are sprayed through the flue gases exiting a coal boiler. The lime/limestone absorbs the sulfur from the flue gas. “….” In the late 1970s and 1980s, power plant engineers tested a new type of coal burner that fired coal in stages and carefully restricted the amount of oxygen in the stages where combustion temperatures were the highest. This concept of “staged combustion” led to “low-NOx burners.” Low-NOx burners have been installed on nearly 75 percent of large U.S. coal-fired power plants. They have typically been effective in reducing nitrogen oxides by 40 to 60 percent. “…..”In 1990, new amendments to the Clean Air Act mandated that nationwide caps be placed on the release of sulfur dioxide and nitrogen oxides from coal-burning power plants. In some areas of the United States – particularly the eastern portion of the Nation – many states must implement plans to reduce nitrogen oxides to even greater levels than those mandated by the nationwide cap. To reduce NOx pollutants to these levels, scientists have developed devices that work similar to a catalytic converter used to reduce automobile emissions. Called “selective catalytic reduction” systems, they are installed downstream of the coal boiler. Exhaust gases, prior to going up the smokestack, pass through the system where anhydrous ammonia reacts with the NOx and converts it to harmless nitrogen and water.” …

“The Coal Plant of the Future”

Key Issues & Mandates

Clean Power Generation

http://www.netl.doe.gov/KeyIssues/clean_power.html

A new breed of coal plant that relies on coal gasification represents an important trend in coal-fired units – distinctly different from the conventional coal combustion power station. Rather than burning coal, such plants first convert coal into a combustible gas. The conversion process – achieved by reacting coal with steam and oxygen under high pressures – produces a gas that can be cleaned of more than 99 percent of its sulfur and nitrogen impurities using processes common to the modern chemical industry. Trace elements of mercury and other potential pollutants can also be removed from the coal gas; in fact, the coal gas can be cleaned to purity levels approaching, or in some cases, surpassing those of natural gas. “…..”A key to successful carbon sequestration will be to find affordable ways to separate carbon dioxide from the exhaust gases of coal plants. Techniques are being developed that can be applied to conventional combustion plants, but it is likely that capture methods will be even more effective when applied to integrated gasification combined-cycle plants. Integrated gasification combined-cycle plants release carbon dioxide in a much more concentrated stream than conventional plants, making its capture more effective and affordable. “……”

The following two articles

“New Sorbents For Carbon Dioxide”

“Metal Sorbent Removes Mercury from Industrial Gas Streams”

can be found in the

National Energy Technology Laboratory

The June 2006 NETL Newsletter

http://www.netl.doe.gov/newsroom/netlog/july2006/Jul06netlog.html

And pricewise, it has become competitive [ http://midcont.spe.org/images/midcont/articles/28//CO2EOR_15.ppt ] to use CO2, and to sequester it, in relation to oil fields. It actually increases oil field production… and miscible oil field flooding has a long history.

Improved Displacement and Sweep Efficiency in Gas Flooding

http://www.cpge.utexas.edu/re/gas_flooding.html

…”Oil recovery from miscible gas flooding is the fastest growing improved oil recovery technique in the US. The contribution of miscible and immiscible gas flooding to US production is currently about 330,000 barrels of oil per day.”…

[Beeson, D.M. and G.D. Ortloff, 1959, Laboratory Investigation of the Water-Driven Carbon Dioxide Process for Oil Recovery, trans., AIME 216, p. 388–391.]

Johnson, J. W. et al (2002) “Geologic CO2 Sequestration: Predicting and Confirming Performance in Oil Reservoirs and Saline Aquifers” American Geophysical Union, Spring Meeting 2002, abstract #GC31A-04 http://adsabs.harvard.edu/abs/2002AGUSMGC31A..04J

….”Oil reservoirs offer a unique “win-win” approach because CO2 flooding is an effective technique of enhanced oil recovery (EOR), while saline aquifers offer immense storage capacity and widespread distribution. Although CO2-flood EOR has been widely used in the Permian Basin and elsewhere since the 1980s, the oil industry has just recently become concerned with the significant fraction of injected CO2 that eludes recycling and is therefore sequestered. This “lost” CO2 now has potential economic value in the growing emissions credit market; hence, the industry’s emerging interest in recasting CO2 floods as co-optimized EOR/sequestration projects.”…..

“BP and Edison Plan California Power Plant with CO2 Sequestration”

http://www.energyonline.com/Industry/News.aspx?NewsID=7011&BP_and_Edison_Plan_California_Power_Plant_with_CO2_Sequestration

“LCG, February 15, 2006–Edison Mission Group (EMG), a subsidiary of Edison International, and BP recently announced plans to build a hydrogen-fueled power plant in southern California that would generate electricity from petroleum coke with minimal carbon dioxide (CO2) emissions. The proposed, 500-MW project would utilize new financial incentives included in the Federal Energy Policy Act of 2005 for advanced gasification technologies.”…….”As part of the process, the CO2 gas would be captured and transported via pipeline to oil fields, where the CO2 would be injected underground into the oil reservoirs to improve oil production and sequester the CO2 from the earth’s atmosphere. The CO2 is produced along with the recovered oil, then recycled and reinjected. The companies estimate that about 90 percent of the CO2 would be sequestered. In November 2005, the Department of Energy (DOE) announced that a DOE-funded project had successfully sequestered CO2 into the Weyburn Oilfield in Saskatchewan, Canada, while doubling the field’s oil recovery rate.”….The estimated cost of the plant is $1 billion. The companies plan to finish detailed engineering and commercial studies this year and to complete project investment decisions in 2008, with operations commencing in 2011.”

The Weyburn project referred to there is the four-year geological CO2 sequestration project, the world’s largest. It is also monitored by the IEA-GHG, which released a study on it.

“Successful Sequestration Project Could Mean More Oil and Less Carbon Dioxide Emissions”

DOE Fossil Energy Techlines News (2005 Techlines) 05058-Weyburn Sequestration Project (Nov 15, 2005)

http://fossil.energy.gov/news/techlines/2005/tl_weyburn_mou.html

“Weyburn Carbon Dioxide Storage Project Largest in the World”

http://www.sk25.ca/Default.aspx?DN=94,93,16,1,documents

..”The $40-million project was conducted by the PTRC and endorsed by the International Energy Agency Greenhouse Gas R&D Program. The Weyburn project has become a model of international co-operation between the public and private sectors, as well as research organizations from Canada, Europe, Japan and the United States.”…..”The Weyburn project was the world’s first large-scale study on the geological storage of CO2 in a partially depleted oil field,” explained Mike Monea, executive director of the PTRC. “While there are numerous large commercial CO2-enhanced oil-recovery operations around the world, none of these has undertaken the depth and extent of research that we have.”…”The Weyburn project is good news for addressing climate change because it proves that you can safely store 5,000 tonnes of CO2 per day in the ground rather than venting this greenhouse gas into the atmosphere,” said Dr. Wilson.”…
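To put the quoted 5,000 tonnes per day in perspective, here is a rough back-of-the-envelope sketch. It assumes continuous injection at the quoted rate for a full 365-day year, which is my simplification and not something the press release states; the function name is just illustrative. Under that assumption the figure works out to roughly 1.8 million tonnes of CO2 per year.

```python
# Back-of-the-envelope scale check for the quoted Weyburn storage rate.
# Assumption (not from the source): injection runs every day of the year
# at the quoted rate, with no downtime.

TONNES_PER_DAY = 5_000   # rate quoted in the press release above
DAYS_PER_YEAR = 365      # assumed continuous operation


def annual_storage_tonnes(tonnes_per_day: float, days: int = DAYS_PER_YEAR) -> float:
    """Return the tonnes of CO2 stored in one year at a constant daily rate."""
    return tonnes_per_day * days


if __name__ == "__main__":
    total = annual_storage_tonnes(TONNES_PER_DAY)
    print(f"{total:,.0f} tonnes/yr ({total / 1e6:.3f} Mt/yr)")
```

The real annual total would depend on actual operating days and injection rates, so treat this only as an order-of-magnitude figure.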

IEA Greenhouse Gas Programme

Capture and Storage of CO2

http://www.ieagreen.org.uk/ccs.html

..”Many of these geological traps have already held hydrocarbons or liquids for many millions of years. “..

“storage capacities quoted are based on injection costs of up to 20 US $ per tonne of CO2 stored.”..

Report on “IEA GHG Weyburn CO2 Monitoring & Storage Project”

http://www.ieagreen.org.uk/glossies/weyburn.pdf

Also see the “NETL Carbon Sequestration Page”

http://www.netl.doe.gov/technologies/carbon_seq/index.html

And there are many ongoing DOE efforts on the numerous technologies:

September 12, 2006

Critical Carbon Sequestration Assessment Begins:

Midwest Partnership Looks at Appalachian Basin for Safe Storage Sites

http://www.fossil.energy.gov/news/techlines/2006/06052-

..”Researchers have estimated that the formations may have the capacity to store CO2 for more than 200 years.”…

“DOE Releases 2006 Carbon Sequestration Technology Roadmap, Project Portfolio”

Department of Energy Techlines News (2006 Techlines)

06049-Sequestration Roadmap (Aug 22, 2006)

http://www.fossil.energy.gov/news/techlines/2006/06049-Sequestration_Roadmap_2006.html

http://www.netl.doe.gov/KeyIssues/future_fuel.html

…”Among the past year’s program highlights contained in the roadmap are the following:” The Regional Carbon Sequestration Partnerships have progressed to a validation phase in which they will conduct 25 field tests involving the injection of CO2 into underground formations where it will be stored and monitored. Pilot-scale tests and modeling of amine-based CO2 capture have shown that operating an amine stripper at vacuum can reduce energy use 5–10 percent per unit of CO2 captured. Novel metal organic frameworks have shown significant potential as CO2 sorbents.”…

[*MORE INFO 8.76MB pdf file “Carbon Sequestration Technology Roadmap and Program Plan”

http://www.fossil.energy.gov/programs/sequestration/publications/programplans/2006/2006_sequestration_roadmap.pdf]

As to aerosols, there just isn’t any control over whether a given aerosol will help clouds form or suppress them.

For example, with dry dust one gets little or no rain, due to the LACK of cloud formation:

…”The second type of aerosol that may have a significant effect on climate is desert dust. “…”Because the dust is composed of minerals, the particles absorb sunlight as well as scatter it. Through absorption of sunlight, the dust particles warm the layer of the atmosphere where they reside. This warmer air is believed to inhibit the formation of storm clouds. Through the suppression of storm clouds and their consequent rain, the dust veil is believed to further desert expansion.”… http://oea.larc.nasa.gov/PAIS/Aerosols.html

Likewise, with small particle formation in clouds, you have different refraction and the radiation budget to deal with [“Impacts from Aerosol and Ice Particle Multiplication on Deep Convection Simulated by a Cloud-Resolving Model with a Double-Moment Bulk Microphysics Scheme and Fully Interactive Radiation” Phillips, V T et al (2005)]

This material explains it well in layman’s terms:

“NASA Explains Puzzling Impact Of Polluted Skies On Climate” July 14, 2006

http://www.sciencedaily.com/releases/2006/07/060714082130.htm

“In a breakthrough study published today in the online edition of Science, scientists explain why aerosols – tiny particles suspended in air pollution and smoke – sometimes stop clouds from forming and in other cases increase cloud cover.”…..””When the overall mixture of aerosol particles in pollution absorbs more sunlight, it is more effective at preventing clouds from forming. When pollutant aerosols are lighter in color and absorb less energy, they have the opposite effect and actually help clouds to form,” said Lorraine Remer of NASA’s Goddard Space Flight Center, Greenbelt, Md.”…..”With these observations alone, the scientists could not be absolutely sure that the aerosols themselves were causing the clouds to change. Other local weather factors such as shifting winds and the amount of moisture in the air could have been responsible, meaning the pollution was just along for the ride.”…””Separating the real effects of the aerosols from the coincidental effect of the meteorology was a hard problem to solve,” said Koren. In addition, the impact of aerosols is difficult to observe, compared to greenhouse gases like carbon dioxide, because aerosols only stay airborne for about one week, while greenhouse gases can linger for decades..”…”Using this new understanding of how aerosol pollution influences cloud cover, Kaufman and co-author Ilan Koren of the Weizmann Institute in Rehovot, Israel, estimate the impact world-wide could be as much as a 5 percent net increase in cloud cover. In polluted areas, these cloud changes can change the availability of fresh water and regional temperatures.”….

Re #18 and “This is very important site, cause it helps one to understand what the word “fearmongering” really means. It means – more money to another fearmongering studies and proxies.”

If the climatologists wanted more money, they’d be saying the problem needs more study. What they’re saying is that they know what the problem is. So the attribution of global warming warnings to climatologist greed is silly. It’s a much more effective ad hominem the other way; Lindzen and Baliunas and Soon and other AGW skeptics are receiving non-negligible subsidies from oil companies.

-BPL

Re Comment #37 (CO_2 reductions vs. black carbon and other pollutants):

Hansen made this point several years ago in a paper which received attention in the media. This was seen as backtracking by a major ‘prophet’ of global warming. But note that his latest warnings suggest that we may not have all that long to start doing something about curbing the growth of CO_2 emissions. It seems to me that resistance to slowing and eventually reducing the growth of CO_2 emissions is mainly political and fueled by certain economic interests who don’t want to change their current practices. As Hansen makes clear, we have to proceed on all fronts and ignoring CO_2 emissions for 20 years is not a viable policy if we are serious about the problem. Consider for example how different things would be today if Congress had never allowed the truck exemption on fuel economy standards to be extended to vans and SUVs. The argument today seems to be that we can’t raise fuel economy standards because that would place an unfair restriction on US auto manufacturers. But these manufacturers seem to be doing a good job of losing market share all by themselves. I suspect they would be much better off today if they had been forced to compete on fuel economy since the 70s. The economic arguments for waiting are all based on rather short term considerations and ignore the potentially very large cost of waiting to begin taking appropriate measures. Choosing short term gain over long term advantage is childish, and it is time we grew up.

The crucial point has been a failure of leadership. Can you imagine Senator Inhofe getting away with the same sort of nonsense or Crichton being invited to the White House to discuss climate science with a President Gore or a President McCain? And remember that even Bush originally ran on a platform calling for reductions in CO_2 emissions, which he promptly recanted upon being elected.

Re: #16 The Hadley Centre drought study.

Essentially your understanding is correct. The total amount of precipitation over the whole globe would be expected to increase, but the pattern of this rainfall might not stay the same. In particular, it is often the case that rainfall in one place inhibits rainfall in another place, because of the effect it has on the large-scale circulation [at least in the tropics].

Consequently, if there is more rainfall [due to greater evaporation] where there is already a lot of rainfall, then the effect that rainfall has on the circulation will be increased.

The Hadley study is suggesting that rainfall will become more variable, so there will be more droughts and also presumably more floods. These effects of climate change are much harder to predict than global mean temperature, but will have a much greater impact and be much harder to adapt to.