The discussion of climate change in public (on blogs, in op-eds etc.) is often completely at odds with the discussion in the scientific community (in papers, at conferences, workshops etc.). In public discussions there is often an emphasis on seemingly simple questions (e.g. the percentage of the current greenhouse effect associated with water vapour) that, at first sight, appear to have profound importance to the question of human effects on climate change. In the scientific community however, these ‘simple’ questions often turn out not to be simple at all, and have subtleties that rarely get publicly addressed.

One such question is the percentage of 20th Century warming that can be attributed to CO2 increases. This appears straightforward, but it might be rather surprising to readers that this has neither an obvious definition, nor a precise answer. I will therefore try to explain why.

(courtesy Alexander Ač).

First of all, ‘attribution’ in the technical sense requires a certain statistical power (i.e. you should be able to rule out alternate explanations with some level of confidence). This is a stricter measure than the word in common parlance would imply (another example of where popular usage and scientific usage of a term might cause confusion). Secondly, attribution (in the technical sense) of an observed climate change is inherently a modelling exercise. Some physical model (of whatever complexity) must be used to link cause and effect – simple statistical correlations between a forcing and a (noisy) response are not sufficient to distinguish between two potential forcings with similar trends. Given that modelling is a rather uncertain business, those uncertainties must be reflected in any eventual attribution. It certainly is an important question whether we can attribute current climate change to anthropogenic forcing – but this is generally done on a probabilistic basis (i.e. anthropogenic climate change has been detected with some high probability and is likely to explain a substantial part of the trends – but with some uncertainty on the exact percentage depending on the methodology used – the IPCC (2001) chapter is good on this subject).

For the case of the global mean temperature however, we have enough modelling experience to have confidence that, to first order, global mean surface temperatures at decadal and longer timescales are a reasonably linear function of the global mean radiative forcings. This result is built in to simple energy balance models, but is confirmed (more or less) by more complex ocean-atmosphere coupled models and our understanding of long term paleo-climate change. With this model implicitly in mind, we can switch the original simple question regarding the attribution of the 20th C temperature response to the attribution of the 20th C forcing. That is, what is the percentage attribution of CO2 to the 20th century forcings?

This is a subtly different problem of course. For one, it avoids the ambiguity related to the lags of the temperature response to the forcings (a couple of decades), it assumes that all forcings are created equal and that they add in a linear manner, and removes the impact of internal variability (since that occurs mainly in the response, not the forcings). These subtleties can be addressed (and are in the formal attribution literature), but we’ll skip over that for now.

Next, how can we define the attribution when we have multiple different forcings – some with warming effects, some with cooling effects that together might cancel out? Imagine 3 forcings, A, B and C, with forcings of +1, +1 and -1 W/m2 respectively. Given the net forcing of +1, you could simplistically assign 100% of the effect to A or B. That is pretty arbitrary, and so a better procedure would be to stack up all the warming terms on one side (A+B) and assign the attribution based on the contribution to the warming terms i.e. A/(A+B). That gives an attribution to A and B of 50% each, which seems more reasonable. But even this is ambiguous in some circumstances. Imagine that B is actually a net effect of two separate sources (I’ll give an example of this later on), and so B can alternately be written as two forcings, B1 and B2, one of which is 1.5 and the other -0.5. Now by our same definition as before, A is responsible for only 40% of the warming, despite nothing having changed in our understanding of A or in the totality of the net forcing (which is still +1).
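The arithmetic of this thought experiment can be checked with a short sketch. The function names `net_share` and `warming_share` and the dictionary layout are purely illustrative and not taken from any attribution study; the numbers are the hypothetical forcings A, B and C (and the B1/B2 split) described above.

```python
def net_share(forcings, name):
    """Share of `name` relative to the net (signed) sum of all forcings."""
    return forcings[name] / sum(forcings.values())


def warming_share(forcings, name):
    """Share of `name` among the positive (warming) forcings only."""
    warming = sum(f for f in forcings.values() if f > 0)
    return forcings[name] / warming


# Hypothetical forcings in W/m2, as in the text.
lumped = {"A": 1.0, "B": 1.0, "C": -1.0}
# Same net forcing, but B is written as two separate sources.
split = {"A": 1.0, "B1": 1.5, "B2": -0.5, "C": -1.0}

print(f"A vs net forcing:        {net_share(lumped, 'A'):.0%}")
print(f"A vs warming (B lumped): {warming_share(lumped, 'A'):.0%}")
print(f"A vs warming (B split):  {warming_share(split, 'A'):.0%}")
```

The net forcing is +1 W/m2 in both bookkeepings, yet A's share of the warming terms moves from 50% to 40% purely because B was relabelled.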

A real world example of this relates to methane and ozone. If you calculate the forcings (see Shindell et al, 2005) of these two gases using their current concentrations, you get about 0.5 W/m2 and 0.4 W/m2 respectively. However, methane and ozone amounts are related through atmospheric chemistry and can be thought of alternatively as being the consequences of emissions of methane and other reactive gases (in particular, NOx and CO). NOx has a net negative effect since it reduces CH4, and thus the direct impact of methane emissions can be thought of as greater (around 0.8 W/m2, with 0.2 from CO, and -0.1 from NOx). Nothing has actually changed – it is simply an accounting exercise, but the attribution to methane has increased.
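As a rough check on that accounting, the sketch below sums the two bookkeepings using only the rounded values quoted above. The variable names and the `share` helper are illustrative assumptions, not anything taken from Shindell et al.

```python
import math

# Forcings in W/m2, rounded values as quoted in the text.
by_concentration = {"CH4": 0.5, "O3": 0.4}            # based on current abundances
by_emission = {"CH4": 0.8, "CO": 0.2, "NOx": -0.1}    # based on what was emitted


def share(forcings, name):
    """Fraction of the group's total forcing assigned to `name`."""
    return forcings[name] / sum(forcings.values())


total_conc = sum(by_concentration.values())
total_emis = sum(by_emission.values())

# The combined forcing is the same under either accounting.
print(math.isclose(total_conc, total_emis))
print(f"CH4 share, concentration view: {share(by_concentration, 'CH4'):.0%}")
print(f"CH4 share, emission view:      {share(by_emission, 'CH4'):.0%}")
```

Both views add up to about 0.9 W/m2, but the fraction assigned to methane rises from roughly 56% to roughly 89% once ozone is traced back to the emissions that produce it.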

Is there any way to calculate an attribution of the warming factors robustly so that the attributions don’t depend on arbitrary redefinitions? Unfortunately no. So we are stuck with an attribution based on the total forcings that can exceed 100%, or an attribution based on warming factors that is not robust to definitional changes. This is the prime reason why this simple-minded calculation is not discussed in the literature very often. In contrast, there is a rich literature of more sophisticated attribution studies that look at patterns of response to various forcings.

Possibly more useful is a categorisation based on a separation of anthropogenic and natural (solar, volcanic) forcings. This is less susceptible to rearrangements, and so should be less arbitrary and has been preferred for more formal detection and attribution studies (for instance, Stott et al, 2000).

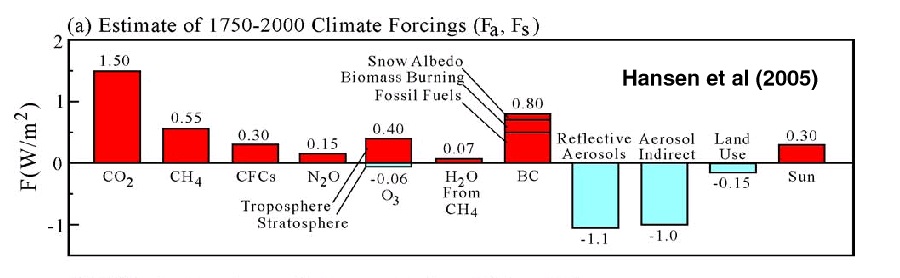

What does this all mean in practice? Estimated time series of forcings can be found on the GISS website. As estimated by Hansen et al, 2005 (see figure), the total forcing from 1750 to 2000 is about 1.7 W/m2 (it is slightly smaller for 1850 to 2000, but that difference is a minor issue). The biggest warming factors are CO2 (1.5 W/m2), CH4 (0.6 W/m2, including indirect effects), CFCs (0.3), N2O (0.15), O3 (0.3), black carbon (0.8), and solar (0.3), and the important cooling factors are sulphate and nitrate aerosols (~-2.1, including direct and indirect effects), and land use (-0.15). Each of these terms has uncertainty associated with it (a lot for aerosol effects, less for the GHGs). So CO2’s role compared to the net forcing is about 85% of the effect, but 37% compared to all warming effects. All well-mixed greenhouse gases are 64% of warming effects, and all anthropogenic forcings (everything except solar, volcanic effects have very small trends) are ~80% of the forcings (and are strongly positive). Even if solar trends were doubled, it would still only be less than half of the effect of CO2, and barely a fifth of the total greenhouse gas forcing. If we take account of the uncertainties, the CO2 attribution compared to all warming effects could vary from 30 to 40% perhaps. The headline number therefore depends very much on what you assume.
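For readers who want to reproduce these shares, here is a minimal sketch using only the rounded forcing values listed above. The dictionary names and groupings are illustrative assumptions; because the inputs are rounded, the results land near, but not exactly on, the percentages quoted in the text.

```python
# Forcings for 1750-2000 in W/m2, rounded values as listed in the text.
warming = {
    "CO2": 1.5, "CH4": 0.6, "CFCs": 0.3, "N2O": 0.15,
    "O3": 0.3, "black carbon": 0.8, "solar": 0.3,
}
cooling = {"aerosols": -2.1, "land use": -0.15}

total_warming = sum(warming.values())
net = total_warming + sum(cooling.values())

# Well-mixed greenhouse gases (ozone is not well mixed, so it is left out).
well_mixed = sum(warming[g] for g in ("CO2", "CH4", "CFCs", "N2O"))
# Everything except solar is treated as anthropogenic here.
anthropogenic = net - warming["solar"]

print(f"net forcing:              {net:.2f} W/m2")
print(f"CO2 vs net forcing:       {warming['CO2'] / net:.0%}")
print(f"CO2 vs warming terms:     {warming['CO2'] / total_warming:.0%}")
print(f"well-mixed GHG vs warming: {well_mixed / total_warming:.0%}")
print(f"anthropogenic vs net:     {anthropogenic / net:.0%}")
```

With these rounded inputs the sketch gives roughly 88%, 38%, 65% and 82%, which is consistent with the approximate figures above and shows how far apart the "versus net" and "versus warming terms" definitions sit.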

Recently, Roger Pielke Sr. came up with a (rather improbably precise) value of 26.5% for the CO2 contribution. This was predicated on the enhanced methane forcing mentioned above (though he didn’t remove the ozone effect, which was inconsistent), an unjustified downgrading of the CO2 forcing (from 1.4 to 1.1 W/m2), and the addition of an estimated albedo change from remote sensing (but there is no way to assess whether that was already included (due to aerosol effects) or is a feedback rather than a forcing). A more appropriate re-calculation would give numbers more like those discussed above (i.e. around 30 to 40%).

But this is a game anyone can play. If you’re clever (and dishonest) you can take advantage of the fact that many people are unaware that there are cooling factors at all. By showing that B explains all of the net forcing, you can imply that the effect of A is zero since there is nothing apparently left to explain. Crichton has used this in his presentations to imply that because land use and solar have warming impacts (though he’s simply wrong on the land use case), CO2 just can’t have any significant effect (slide 18). Sneaky, eh?

But does the specific percentage attribution really imply much for the future? (i.e. does it matter that CO2 forced 40% or 80% of 20th Century change?). The focus of the debate on CO2 is not wholly predicated on its attribution to past forcing (since concern about CO2 emissions was raised long before human-caused climate change had been clearly detected, let alone attributed), but on its potential for causing large future growth in forcings. CO2 trends are forecast to dominate trends in other components (due in part to the long timescales needed to draw the excess CO2 down into the deep ocean). Indeed, for the last decade, by far the major growth in forcings has come from CO2, and that is unlikely to change in decades to come. The understanding of the physics of greenhouse gases and the accumulation of evidence for GHG-driven climate change is now overwhelming – and much of that information has not yet made it into formal attribution studies – thus scientists on the whole are more sure of the attribution than is reflected in those papers. This is not to say that formal attribution per se is not relevant – it is, especially for dealing with the issue of natural variability, and assessing our ability to correctly explain recent changes as part of an evaluation of future projections. It’s just that precisely knowing the percentage is less important than knowing that the observed climate change was highly unlikely to be natural.

In summary, I hope I’ve shown that there is too much ambiguity in any exact percentage attribution for it to be particularly relevant, though I don’t suppose that will stop it being discussed. Maybe this provides a bit of context though…

Can you recommend any good textbooks in meteorology and climate science appropriate for a mathematics undergraduate who also knows the basics of physics? (Classical mechanics, physics of heat transfer and so on.)

[Response: Ruddiman’s text is good, and so is David Archer’s. – gavin]

re 10.

The apparent leveling off in global temperatures from the 1940s to 1970s might be explained as follows. A reduction in global cloud cover and thus an increase in solar radiation reaching the surface occurred during the dust bowl years of the early-mid 1930s. The warm mid 1930s to 1940s period had frequent El Niño conditions, perhaps initiated by the reduced cloud cover during the early 1930s. Given that the 1930s-1940s was unusually warm for the 19th – mid 20th century period, the apparent leveling off in global temperatures in the 1950s and 1960s may merely have been a return to meteorologic conditions similar to that which existed earlier in the 20th century.

A recent study (excerpt below) on cosmic rays and clouds seems to support my comments (above).

Excerpt:

… ‘A team at the Danish National Space Center has discovered how cosmic rays from exploding stars can help to make clouds in the atmosphere. … , during the 20th Century, the Sun’s magnetic field which shields Earth from cosmic rays more than doubled, thereby reducing the average influx of cosmic rays.’ …

Cosmic radiation entering Earth’s atmosphere. Credit: Danish National Space Center.

by Staff Writers

Copenhagen, Denmark (SPX) Oct 06, 2006

http://www.spacedaily.com/reports/Exploding_Stars_Influence_Climate_Of_Earth_999.html

RE: #50 – What is the average annual “normal” rainfall at Coalinga or Lemoore? (I already know the answer, this is just to make a rhetorical point) Look up their classification, my preferred source is Landis. What are those two places’ coordinates? They are closer than 200 miles. However, an even more telling type location would be somewhere near the intersection of Interstate 5 and State Route 138. The areas at the edges of the deserts have been experiencing a trend toward above normal rainfall for years. And alluding to Dano’s snipe, the forest densification in coastal central and northern Cal is clearly attributable to moisture abundance. The past 10 years have been, on the whole, very wet – that has been true for all ENSO phases. As for Steve B’s doubting of the possibility of desertification in places like East Central Arkansas, again, look at the recent stats. Then, you could do as I did and take a look. Things have been very, very dry there and for hundreds of miles around, for years. Finally there has been rain there over the past month. But the overall trend over the past few years has been dry. The Dust Bowl was not far from that region. Etc.

Gavin, another point, though it’s not directly addressed to the core of the present discussion (so you’re free to ignore it :-). You write: “Indeed, for the last decade, by far the major growth in forcings has come from CO2, and that is unlikely to change in decades to come.” More generally, 1976-2005 is considered as the three decades of “anthropogenic-rather-than-any-other-cause warming”. But are we really sure of that? As just one example from the recent literature, Pinker et al. (2005) and Wild et al. (2005) observed that nebulosity trends as measured by ISCCP (or terrestrial albedo measured by two other means) between 1985-2003 could have imposed a positive forcing of 3-6 W/m2, nearly twice the level of GHG since 1750. So far, I have not read any reply to these papers (and graphs of ISCCP for 2003-2005 show much the same trend for low-level / high-level clouds). So my (prudential) question is: are three decades enough for definitive conclusions about GHG’s primary role in current and future warming?

[Response: The big problem with inferring radiative forcings from observations of clouds is that you don’t have a complete picture of all of the factors that determine the effect of cloud changes on the radiation (i.e. SW and LW effects depend on the vertical profile, optical thickness, cloud particle size etc.). Plus these are extremely noisy records. Plus you wouldn’t know whether these are forcings or feedbacks. I don’t know anyone who would suggest that we have a good idea about what the trends in albedo are – all the records are partial, noisy and prone to confounding factors. In some of them, the huge albedo change due to the Pinatubo aerosols doesn’t even appear, in others the trends are opposed. Our understanding particularly of aerosol changes is indeed woefully inadequate, but uncertainty is not the same as knowing that they are changing dramatically (and they’d have to be reducing at a rapid rate to match the changes in CO2). The Pinker and Wild papers are talking about surface fluxes anyway (as I recall), not TOA changes, which are the important ones for radiative forcing. So I would put the statement that the growths in forcings over the last 30 years are predominantly due to GHGs in the highly likely (but not incontrovertible) category. – gavin]

re 52

The problem for the sun-cloud-climate connexion in the 20th century is that the stabilizing / cooling trend began in the 40’s, whereas the maximum sunspot number of the century occurred in 1959. This 15-20 year lag is not very coherent (or we need a very, very strong albedo effect from aerosols, since between 1940 and 1960 GHGs continued to accumulate in the atmosphere and there was no particularly large volcanic forcing).

The last statement in 54. should read – there is little or no doubt that the last three decades of warming were driven by the accumulation of anthropogenic greenhouse gases in the atmosphere. Station temperature plots at all northern locations in the Upper Midwest, Alaska and in high latitude areas of the Rocky Mountains show dramatic increases in overnight minimum temperatures during the winter months.

See:

http://pg.photos.yahoo.com/ph/patneuman2000/my_photos

re 33

I thought this was a terrific question and was disappointed to not see an answer. Because I sometimes get the sense that GCMers do not care that the problem is poorly constrained. Granted, they’re powerless to do anything about it. But doesn’t that argue for cautious interpretation by those that rely on (but do not develop) these models? [Am I being overly presumptuous here?]

[Response: The relatively under-constrained nature of the global mean temperature record is precisely why most of the formal attribution studies use spatial patterns and as many different fields as they can. There is obviously much more data available than remains in the mean temperature record. The whole point of using GCMs is to be able to compare more directly with all sorts of different data. -gavin]

re 57

At the risk of trying your patience, I think you may have missed the point (and please correct me if I’m wrong on that) – as #33 was referring to the parameterizing of the GCMs as being a poorly constrained problem. Meaning you have hundreds of parameters (how many I don’t actually know) but very few inputs and outputs with which to constrain the universe of possible parameterizations. The literature on feedbacks, forcings and fluxes constrains you somewhat, but not much in the grand scheme of things. I don’t see that this has much to do with the “under-constrained nature of the global mean temperature record”, but it is always possible I’m missing something. Last post. Thanks.

[Response: Sorry, I did miss your point. In any model there are dozens of parameterisations – for the bulk surface fluxes, for the entrainment of convective plumes, for mixing etc. The parameters for these processes are generally estimated from direct observations of these small scale processes (though there is some flexibility due to uncertainties in the measurements, sampling issues, confounding effects etc.). The tuning for these processes is generally done at the level of that process. When the model is put together and includes all of this different physics, we evaluate the emergent properties of the model (large scale circulation, variability etc.) and see how well we do. When there are systematic problems we try and see why and learn something (hopefully). The number of parameters that are tuned to improve large scale effects is very small (in the GISS Model, maybe 3 or 4) and those are chosen to adjust for global radiative balance etc. Once the parameters are fixed, we do experiments to evaluate the variability, forced (impact of volcanoes, 20th C forcings, LGM etc.) and internal (NAO, ENSO, MJO etc.). Again, we learn from the comparisons. The amount of data available to evaluate the models is vast – and more than we can handle, though directly assessing individual parameterisations from large scale evaluations is difficult (since it’s a complex system). Thus, most parameterisations are tested against small scale data (field experiments etc.). The problem is not so much under-constrained, as under-specified. There is generally more going on than is included within the (usually simple) parameterisation, and so parameterisation development is not really about changing some constant multiplier, but changing the underlying conception to better match the real physics. – gavin]

#51 (Johan Richter):

These are more meteorologically oriented than the textbooks Gavin recommended:

“Atmospheric Science: an Introductory Survey,” by Wallace and Hobbs (1977) is a classic. Also, “Meteorology for Scientists and Engineers,” by Roland Stull (2000?) is fantastic, and gets better each time I read through it.

Also, if you can find a copy of “Synoptic Climatology of the Westerlies,” by Jay Harman, you will be in for a treat.

Best of luck.

Anyone wanting to learn more, or wanting to try their hand at running climate models, can join the UCAR community (and their netcdf group to help you understand any computer coding glitches)…

Their climate models are free and open to anyone willing or wanting to do so.

I have been running one for the last couple of years or so. I am running UNIX in the background on my HP Windows with an Oracle SQL program database along with my Sun Java and somehow it still works fine for me; in other words, my computer did not blow up with my climate runs and all the other weird stuff I have going on with my PC.

“The Community Climate System Model (CCSM) is a fully-coupled, global climate model that provides state-of-the-art computer simulations of the Earth’s past, present, and future climate states. ”

http://www.cgd.ucar.edu/csm/

Experiments and Output Data

http://www.ccsm.ucar.edu/experiments/

“CCSM3 Experiments and Data”

http://www.ccsm.ucar.edu/experiments/ccsm3.0/

“To document and validate CCSM3.0, various multi-century control runs were carried out. All output data from these control runs is available to the public. The Earth System Grid (ESG) http://www.earthsystemgrid.org/ is the primary method for distributing this output data (more information below http://www.ccsm.ucar.edu/experiments/ccsm3.0/#data ). Also available on this web page is a sampling of diagnostic plots http://www.ccsm.ucar.edu/experiments/ccsm3.0/#plots from these control runs.”

“Another series of CCSM3 experiments is underway which will provide data for an upcoming IPCC Assessment Report. Data from these experiments will be made available after CCSM3 results are submitted to the IPCC. http://www.ipcc.ch/ ”

However, the EarthSystem Grid will be assimilated into the Teragrid http://www.teragrid.org/

but one should be able to find all of these climate models until it’s totally borged together.

CCSM (Community Climate System Model)

CCSM POP (modified version of Parallel Ocean Program)

CSIM (CCSM Sea Ice Model)

CLM (CCSM Community Land Model)

PCM (Parallel Climate Model)

POP (Parallel Ocean Program)

Scientific Data Processing and Visualization Software

UCAR’s latest journal papers

“Special Issue on Climate Modeling”

Fall 2005, Volume 19, No. 3

http://hpc.sagepub.com/content/vol19/issue3/

UCAR’s 11th Annual CCSM Workshop

http://www.cgd.ucar.edu/csm/news/ws.2006/index.html

notes

….”In addition, there have been over 200 papers written comparing simulations from all the climate models submitted to the Fourth Assessment Report of the IPCC. Many of these are listed at the IPCC Model Output Page.

http://www-pcmdi.llnl.gov/ipcc/diagnostic_subprojects.php” …

Incidentally, there are 553 subprojects (many dealing solely with the topic of GCMs) listed at the Lawrence Livermore National Laboratory IPCC project page, and a large number have already presented their subsequent publications (links provided on the LLNL IPCC website).

“Last Updated: 2006-09-15 Current Total: 553 subprojects”

http://www-pcmdi.llnl.gov/ipcc/diagnostic_subprojects.php

Re #51 and “[Response: Ruddiman’s text is good, and so is David Archer’s. – gavin]”

I went to Archer’s site and began to read his fourth chapter, which is available on-line, and I immediately found an error — he says partial pressure is directly proportionate to volume fraction, which is just not true. You have to multiply by the molecular weight of the gas and divide by the mean molecular weight of the atmosphere. pCO2 is 57 Pascals, not 38.

-BPL

[Response: But since the molecular weights are constant in that calculation, the statement about proportionality is correct. -gavin]

Re: #16 (Summer is coming to New Zealand, isn’t it?)

My explanation of what I think the basics are is a little too long to write here, so I have placed it at

http://web.sfc.keio.ac.jp/~masudako/memo_en/precip_evap_warming.html .

Re #60,

The problem with these experiments (as good as the climateprediction.net experiment which uses the HadCM3 model) is that one doesn’t have a possibility to change the sensitivities oneself. All experiments are pre-described.

I am looking for a full GCM, running in background on my own computer, where it is possible to make runs with a reduced sensitivity for GHGs (less feedback) and aerosols (less forcing and feedbacks) and an increased sensitivity for solar. The runs I made with the simple Oxford EBM model (in #28) indicate that it is possible to find a similar (or better) fit of the 20th century temperature trend as with the original 3 K/2xCO2…

As there is a high probability that the (cooling) influence of aerosols is overestimated and the influence of solar variations is underestimated, some alternative runs might be of interest…

Re #27 (comment):

Gavin, you say:

I don’t think that solar alone can explain the full warming of the past century, but any increase in attribution to solar anyway will reduce the impact of GHGs, as the sum of all forcings with their (individual and common) feedbacks must fit the past century temperature trend.

Another interesting GCR-cloud finding, based on observations, is described by Harrison and Stephenson (Univ. of Reading): “Empirical evidence for a nonlinear effect of galactic cosmic rays on clouds”. But needs more investigation for longer-term effects.

>61, Barton and Gavin

A plea. Try to provide an explicit reference so readers who aren’t experts can figure out what you’re talking about.

Re Archer’s book, I looked at Ch. 4; I’m guessing you two are talking about this from the first page:

“…The mixing ratio of a gas is numerically equal to the pressure exerted by the gas, denoted for CO2 as pCO2. In 2005 as I write this the pCO2 of the atmosphere is reaching 380 μatm.”

But there’s no “partial pressure” or “Pascal” in the text of the chapter, if Adobe’s search tool isn’t lying. I searched for “38” — and found “380” — so — a plea to you all to try to remember your readers.

Google: define:Pascal yields this: * a unit of pressure equal to one newton per square meter …

Re 12:

Hank, you mention a study which suggests a possible cause why methane levels levelled off in the 1990s. For those of us who don’t have access to the subscription-only reports, would you mind summarising briefly what they suggest? Many thanks!

I’ve been looking at the various CO2 mixing ratio measurements at the CDIAC, and particularly the seasonal variation. I think there is useful information in the seasonal variations that I have not seen commented on before. I wrote this up as a “white blog post” and would appreciate comments.

I have the impression that the approach taken has been to use isotopic ratios to decompose the seasonal contributions.

Dr. Masuda, while humidity (and relative humidity) are controlled by thermodynamics, the equilibrium being quickly established, in my understanding condensation is controlled by kinetic factors, among other things the availability of condensation nuclei. Since the air is swept clear by rain (which is why things appear sharper in the distance after a rainstorm), it is not necessarily true that precipitation will increase.

Re:#11. The very small non-ideal gas contribution from oxygen is not the “major” deviation from ideality of the atmosphere, but rather the small contribution from variable water vapor concentrations is. Even that is only ~0.1%.

In both cases, if one wishes to relate temperature to the energy of the atmosphere, the small deviations from ideality are very, very small when compared to the linear term. For example, assume that temperature rises by 3 C, and over that range the specific heat, Cp, changes by .1%. The “error” in treating the case as linear would be 0.001Cp/(3Cp), or ~0.03%.

Thus, for practical purposes by Tim Hughes’ own argument, there is a linear relation between temperature and energy content of the atmosphere over the range of temperatures and pressures one encounters in the atmosphere.

>66, 12

Almuth, here’s another science news article on the Nature story

http://www.sciencedaily.com/releases/2006/09/060927201651.htm

excerpt:

———

…. it was a decline in emissions of methane from human activities in the 1990s that resulted in the recent slower growth of methane in the global atmosphere.

Since 1999, however, sources of methane from human activities have again increased, but their effect on the atmosphere has been counteracted by a reduction in wetland emissions of methane over the same period.

According to one of the authors of the Nature paper, Dr Paul Steele from CSIRO Marine and Atmospheric Research, prolonged drying of wetlands — caused by draining and climate change — has resulted in a reduction in the amount of methane released by wetlands, masking the rise in emissions from human activities.

“Had it not been for this reduction in methane emissions from wetlands, atmospheric levels of methane would most likely have continued rising,” he says.

“This suggests that, if the drying trend is reversed and emissions from wetlands return to normal, atmospheric methane levels may increase again, worsening the problem of climate change.”

The researchers used computer simulations of how the gas is transported in the atmosphere to trace back to the source of methane emissions, based on the past 20 years of atmospheric measurements.

———

Nature’s science news site of course is pay-to-view; this is their teaser:

Methane emissions on the rise

Quirin Schiermeier

SUMMARY: Industrial greenhouse-gas increase has been masked by natural declines.

CONTEXT: Current projections of methane emissions are likely to be too optimistic, an international team of atmospheric scientists reports today in Nature. Methane, which is less abundant in the atmosphere than carbon dioxide but 20 times more…

News@Nature (25 Sep 2006) News

You might look for references to Paul Steele from CSIRO Marine and Atmospheric

What I find most interesting about CO2 is the pattern it shows of increasing steadily after the end of the last several glacial maximums then dropping quickly as glaciation resumes. These cycles were present long before humans had any ability to affect climate.

I recognize that we humans are now driving a lot of the CO2 increase in the last hundred years but has this natural cycle ever been fully explained?

Maybe somebody could point me to a post.

Re: 71.

I see a different pattern in viewing the figure on CO2 at realclimate’s 650,000 years of greenhouse gas concentrations (24 Nov 2005) at:

https://www.realclimate.org/index.php/archives/2005/11/650000-years-of-greenhouse-gas-concentrations/

Figure:

https://www.realclimate.org/epica_co2_f4.jpeg

Jim, some of these charts are plotted with older info on the left, others with older on the right.

You have to look at the label on each chart to see which way the time arrow runs in the sequence.

Would you look again at whatever you were looking at when you wrote 71, and check that?

Compare it to the figure Pat links to in 72, noting the time direction.

Re #71:

The main driver for the pre-industrial temperature-CO2 level correlation seems to be the ocean uptake. Colder water can absorb more CO2, while warmer water releases CO2. Add to this the changes in biosphere (ice ages – less land left for trees/shrubs/pasture, ocean algae production) and physico/chemosphere (ocean currents, deep water formation/uptake, rock weathering, dissolution) and one sees a remarkably stable correlation over the ice ages (~10 ppmv/K), which holds even in recent high-accumulation ice cores up to the LIA.

The 10 ppmv/K is the reaction of CO2 to temperature changes. That doesn’t tell us anything about the influence of CO2 on temperature. According to GCMs, the 10 ppmv/K in the pre-industrial ages includes a feedback of CO2 levels on temperature, but in nearly all cases, there is a huge overlap between temperature changes and CO2 changes. That means that it is not possible to separate what influences what. With one exception: the end of the last interglacial (the Eemian). Temperature and methane levels decreased, but CO2 levels remained high until the temperature nearly reached its minimum (see here). The subsequent decrease of CO2 levels (~40 ppmv) has little influence on the temperature. That points to a low influence of CO2 on temperature in that range. Of course, the much higher increase in recent times will have an influence on temperature, but probably less than what is implemented in current GCMs…

re #69

Lots of people seem to make this point, so thanks for putting it; the error is assuming that the temperature change is only 3 degrees.

Air warming over land creates thermals, which commonly rise to the tropopause, going from surface temperature to minus 75 degrees, and from humidities of 100% to 0%, and pressures of 1 atm to 0.2 atm. If the deviations from standard gas laws are ignored, then the ratio of potential and kinetic energy in the thermal gases will be incorrect by (roughly) the amount attributed to AGW.

But that is just another example.

For some reason, you have ignored that gas conditions across the earth are highly variable and that these gases move around the earth due to winds.

While the points are well taken, it remains possible, and I think advisable, to make a much clearer and stronger statement for public consumption.

If the greenhouse gas forcing is 2.5 W/m^2, and the total forcing is 1.7 W/m^2, then the greenhouse forcing is responsible for 147% of the total forcing. In other words, even leaving aside the lags in the system, we are only seeing about 2/3 of the warming that would be due to greenhouse gases alone.

Because greenhouse gases are essentially cumulative and aerosols are essentially instantaneous, and because these two terms dominate, simple extrapolation tends to make the casual observer underestimate the sensitivity of the system to future greenhouse forcing.

(Additionally, the various lags in the system also make matters worse than they might appear at first consideration. This is somewhat off the present point, except in that it also tends to make generalizations either too opaque or too sanguine.)

This is why I suggest using the number 147% in this context: it is the ratio of all greenhouse forcings to the net of all forcings. To say the number falls between 40% and 80% depending on interpretation seems to me to contribute to systematic understatement of the problem. At least let us say that the number falls between 40% and 150%.
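The arithmetic behind the 147% figure is easy to check. The following is a minimal sketch, assuming only the round forcing values quoted in this comment (2.5 W/m^2 for greenhouse gases, 1.7 W/m^2 for the net of all forcings); the variable names are illustrative and not taken from any dataset.

```python
# Forcing values as quoted in the comment (W/m^2); round figures, not a dataset.
greenhouse_forcing = 2.5
net_forcing = 1.7

# Ratio of greenhouse forcing to the net of all forcings.
ghg_share_of_net = greenhouse_forcing / net_forcing

# Fraction of the greenhouse-only forcing left after the offsetting terms.
realized_fraction = net_forcing / greenhouse_forcing

# Implied sum of all other forcings (negative means net cooling).
offsetting_forcing = net_forcing - greenhouse_forcing

print(f"greenhouse / net: {ghg_share_of_net:.0%}")
print(f"net / greenhouse: {realized_fraction:.0%}")
print(f"other forcings:   {offsetting_forcing:+.1f} W/m^2")
```

With these inputs the ratio comes out at about 147%, the net is about 68% of the greenhouse term (the "about 2/3" above), and the other forcings sum to roughly -0.8 W/m^2.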

Eli at #68 – I think evaporation is more important to consider than condensation for this process. Clearly there can be no rain unless it has first evaporated. I think it is also true that the amount of evaporation in a year is >> than the humidity content of the atmosphere and, consequently, there is not much scope for there to be an imbalance between evaporation and precipitation on a global scale.

Therefore, what goes up, must come down.

Evaporation would be assumed to increase because of the higher temperatures, acting on the 2/3rds of the world covered by ocean. There are other factors, such as cloudiness and surface winds, that might impact this, but it is less obvious that they will have a strong trend.

Consequently I find it hard to accept that lack of cloud condensation nuclei could act as a limiter on condensation and subsequently precipitation.

>79, 65

“thermals, which commonly rise to the tropopause”

What’s your source for this statement? Where did you read it? Why do you consider that source reliable? Can you give us a link to it, so we can look up the references on which it is based?

I think you’re talking about “thunderstorms” not “thermals” — thunderstorms do rise that high at times, the flat top “anvil cloud” is what you see where a thunderstorm cloud has risen far enough to reach the tropopause.

Most thermals don’t have enough moisture carried high enough to even make a little puffy white cumulus cloud. A thunderstorm is a heat engine drawing up enough warm moist air, which continues to expand and release energy and punches on up for a while as it expands and cools, and moisture condenses releasing more heat energy to boost its climb.

Sometimes, yes, til it gets an anvil top.

Forest fires usually don’t punch up that high — and a forest fire is a much bigger heat source than a single thermal. Typically you get one of these:

http://www.atmos.washington.edu/2003Q3/101/notes/Pyrocumulus.jpg

From “Atmosphere 101” — looks like a good class there: excerpt:

——

The surface heating of the fire destabilizes the column, air (a “parcel”) from the surface rises, it remains warmer than the surrounding air and keeps rising, then it reaches its condensation level where it has cooled to its dew point temperature and is thus saturated, the cumulus cloud begins forming, but the air since now saturated is cooling at the moist adiabatic lapse rate rather than the dry adiabatic lapse rate, but it continues rising because it remains warmer than its surrounding, thus the cloud grows vertically. ”

— end excerpt—

Talk to anyone who’s flown hang gliders or regular gliders about this. Most thermals are quite well mixed and broken up within a few thousand feet above the ground.

https://ntc.cap.af.mil/ops/DOT/school/images/cullift.gif
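The parcel reasoning in the quoted excerpt can be sketched numerically. This is a rough illustration only: the dry adiabatic lapse rate of 9.8 K/km is standard, but the moist rate of 6 K/km is an assumed typical value (the real rate varies with temperature and pressure), the condensation level uses the common rule of thumb of about 125 m per kelvin of dew point depression, and the function names are hypothetical.

```python
DRY_LAPSE_K_PER_KM = 9.8    # dry adiabatic lapse rate
MOIST_LAPSE_K_PER_KM = 6.0  # assumed typical moist rate; varies in reality
LCL_KM_PER_K = 0.125        # rule of thumb: ~125 m per K of dew point depression


def parcel_temperature(surface_temp_c, surface_dewpoint_c, height_km):
    """Temperature (deg C) of a surface parcel lifted to height_km."""
    # Approximate height at which the parcel becomes saturated.
    lcl_km = LCL_KM_PER_K * (surface_temp_c - surface_dewpoint_c)
    if height_km <= lcl_km:
        # Below the condensation level the parcel cools at the dry rate.
        return surface_temp_c - DRY_LAPSE_K_PER_KM * height_km
    # Above it, latent heat release slows the cooling to the moist rate.
    temp_at_lcl = surface_temp_c - DRY_LAPSE_K_PER_KM * lcl_km
    return temp_at_lcl - MOIST_LAPSE_K_PER_KM * (height_km - lcl_km)


def keeps_rising(parcel_temp_c, environment_temp_c):
    """A parcel stays buoyant while it is warmer than its surroundings."""
    return parcel_temp_c > environment_temp_c


# Hypothetical case: 30 C surface air with a 14 C dew point, compared
# with an assumed environment temperature of 2 C at 3 km.
parcel_at_3km = parcel_temperature(30.0, 14.0, 3.0)
print(parcel_at_3km, keeps_rising(parcel_at_3km, 2.0))
```

The slower cooling above the condensation level is what lets a moist column keep climbing where a dry thermal would already have lost its buoyancy.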

Re #75

If models do neglect gas law deviations and other nonlinearities (you haven’t presented any actual evidence that they do), that would lead to a steady state bias that would be fairly easy to diagnose. Even if the magnitudes were similar, the nonlinearity bias wouldn’t look at all like the time-varying AGW signal, so it’s misleading to compare the two as if AGW might be misattributed nonlinearities.

re: 72, 73, 74 in response to my post 71

I’m referring to a chart such as this:

http://en.wikipedia.org/wiki/Image:Ice_Age_Temperature.png

that seems to show temperature, CO2, and glaciation almost in lock step with higher temperatures, higher CO2 matching with reduced glaciation. What’s more, the increase in CO2 and decrease in glaciation seems to occur very quickly in geological timeframes.

Post 74 seems to be saying the increased CO2 is caused by warmer oceans and less ice. This would seem to be saying the increased CO2 is a response to warming rather than a cause of it.

If CO2 is the cause of the warming (which was what my initial post had assumed), then my question was what was driving the CO2 increase.

Jim, your question is one of the Highlights of this site — see the right side of the main page for the link, or

https://www.realclimate.org/index.php?p=13

Re: 80.

Excerpts:

Ancient Climate Studies Suggest Earth On Fast Track To Global Warming

Human activities are releasing greenhouse gases more than 30 times faster than the rate of emissions that triggered a period of extreme global warming in the Earth’s past, according to an expert on ancient climates.

“The emissions that caused this past episode of global warming probably lasted 10,000 years. By burning fossil fuels, we are likely to emit the same amount over the next three centuries,” said James Zachos, professor of Earth sciences at the University of California, Santa Cruz.

…

…

During the PETM, unknown factors released vast quantities of methane that had been lying frozen in sediment deposits on the ocean floor. After release, most of the methane reacted with dissolved oxygen to form carbon dioxide, which made the seawater more acidic.

…

…

Santa Cruz CA (SPX) Feb 16, 2006, text posted at:

http://groups.yahoo.com/group/ClimateArchive/message/2816

Re: #71 Jim’s Question:

“What is causing the drop in CO2, as the earth cools, from the interglacial warm period to the glacial coldest period?”

See this review article in Nature, “Glacial/interglacial variations in atmospheric carbon dioxide” by Sigman and Boyle (2000), for an explanation of why there is a 100 ppm drop in atmospheric carbon dioxide (280 ppm to 180 ppm) as the glacial cycle progressed.

http://scholar.google.com/url?sa=U&q=http://www.atmos.ucla.edu/~gruber/teaching/papers_to_read/sigman_nat_00.pdf

The 100 ppm drop in CO2 is not primarily due to colder oceans. The following is an explanation of why colder oceans alone cannot account for a 100 ppm drop in CO2. (See the Nature paper for details.)

As there is a vast amount of fresh water locked in the new ice sheets during the glacial period, the ocean becomes saltier (3%). Saltier water can hold less carbon dioxide (6.5 ppm less for a 3% increase in salt content). Colder water can hold more carbon dioxide; however, the deep ocean is already at an average of 4C and will freeze (salty or not) at around -1.8C. The estimated maximum drop in deep ocean temperature is 2.5C. The surface subtropical oceans were estimated to have cooled by about 5C. (Note that vast areas of the high latitude oceans were covered by ice during the coldest period and could hence no longer absorb carbon dioxide.)

The reduction in carbon dioxide due to colder oceans is estimated to be at most 30 ppm. Because vast areas of land that are currently forested were covered by the glacial-period ice sheets, the temperate forest was no longer taking up carbon dioxide, which adds carbon dioxide to the atmosphere. In addition, during the glacial period large sections of tropical rain forest changed to savanna (about a third of the tropical forest changes to savanna; the planet is drier when it is colder). As savanna is less productive than tropical forest, that change also adds carbon dioxide to the atmosphere. The Nature article estimates that the temperate forest change and the increase in savanna add 15 ppm of carbon dioxide to the atmosphere. The net for this calculation is therefore -30 ppm + 6.5 ppm + 15 ppm = -8.5 ppm.

As there is 100 ppm to explain, the above are not the solution. The article explains the remainder through increased biological production in the ocean.

During the glacial period there are periodic (200 yr, 500 yr, and 1500 yr cycle) rapid cooling events (RCCE “Rickeys”) during which there is an increase in dust (800 times above current in the Northern Hemisphere, from Greenland ice sheet cores, and about 15 times in the Southern Hemisphere, from Antarctic ice sheet cores). The iron and phosphate in the dust cause an increase in biological production in regions of the earth’s ocean which are currently almost lifeless due to a lack of nutrients. The increase in biological production removes the CO2.
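The ppm bookkeeping in this comment can be tallied as follows. This is a minimal sketch that uses only the figures quoted above, as the commenter reports them; the dictionary labels and variable names are illustrative.

```python
# Estimated contributions to the glacial change in atmospheric CO2 (ppm),
# as quoted in the comment. Negative values lower atmospheric CO2.
contributions_ppm = {
    "colder ocean (more uptake)": -30.0,
    "saltier ocean (less uptake)": 6.5,
    "forest loss and savanna expansion": 15.0,
}

# Observed glacial drop: 280 ppm -> 180 ppm.
observed_change_ppm = 180.0 - 280.0

net_ppm = sum(contributions_ppm.values())
unexplained_ppm = observed_change_ppm - net_ppm

print(f"net of listed terms: {net_ppm} ppm")
print(f"left to explain:     {unexplained_ppm} ppm")
```

On these numbers the listed terms account for only 8.5 ppm of the 100 ppm drop, leaving 91.5 ppm for the ocean biology mechanism described above.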

re #78

Sorry, I was using “thermals” badly to mean any thermal column. I was trying to keep things too simple.

re #79

I don’t have any evidence which is why I asked the question “do the models take into account gas law deviations and other non-linearities.”

[Response: Gas law deviations are too small to matter -W]

Thanks for the responses to my question in #71.

To quote from one of the links regarding the warming at the end of maximum glaciation:

“Some (currently unknown) process causes Antarctica and the surrounding ocean to warm. This process also causes CO2 to start rising, about 800 years later.”

It is the “currently unknown” part of that I find intriguing. It seems that understanding what triggers the CO2 increases and decreases, which may not be the same thing, is critical to understanding our past and future climate.

Re: 85.

Some links about climate change episodes in Earth’s past:

http://www.geolsoc.org.uk/template.cfm?name=fbasalts

http://www.geology.sdsu.edu/how_volcanoes_work/

http://www.scotese.com/

Also see:

http://groups.yahoo.com/group/ClimateArchive/links

RE: 86,

Pat, I will be forever grateful that you provided the link to Chris Scotese’s web page. His graphics and animations are what make earth science so fascinating. And, thanks to Chris as well.

Every science teacher should have his web page bookmarked.

John, Thank you! The information on the Scotese web page was very helpful to me as I was learning about climate change, and continue to learn of course.

Maybe someone from the Alaska Climate Research could help me out with this question. When was the last time 9 inches of rain was measured within a two-day period or less in Alaska during the month of October?

“The National Weather Service reported that 9 inches of rain had fallen in Seward between noon Sunday and 5 p.m. Monday. Tom Dang of the National Weather Service said the low pressure system that caused the storm moved in on the jet stream from the Aleutian Islands, pulling in tropical moisture that had welled there. … “Within a half hour there were chunks of ice — I can only assume from Exit Glacier — flowing down Exit Glacier Road,” …

…

(May need to register with The Anchorage Daily News to get to the story)

October 10, 2006

http://www.adn.com/news/alaska/kenai/story/8288801p-8185319c.html

If Stu Ostro, Senior Meteorologist with The Weather Channel, can discuss what he sees as the impact of climate change upon day-to-day weather patterns – then how come Tom Dang, NOAA’s National Weather Service, is not allowed (or refuses) to do that … in the public interest?

Excerpt 1 of 2:

The Weather Channel Blog

…

“I have written on the impacts of climate change upon day-to-day weather patterns in these pages during the past year … and will have more comment on this one when I have the time (was going to allude to this aspect in my entry last night but couldn’t have quickly done it justice). For now, suffice it to say that I think the occurrence of this event in Alaska was not an “accident.” More broadly in regard to global warming’s impact in Alaska, TWC did a feature on this a couple of years ago. The videos and a text piece can still be found online here” :

http://www.weather.com/aboutus/television/forecastearth/alaska.html

Comment posted by Stu Ostro, October 11, 2006

FROM HAWAII TO ALASKA

October 10, 2006

The Weather Channel Blog

http://www.weather.com/blog/weather/?from=wxcenter_news

Excerpt 2 of 2:

Anchorage Daily News:

…

“Tom Dang of the National Weather Service said the low-pressure system that caused the storm moved in on the jet stream from the Aleutian Islands, pulling in tropical moisture that had welled there. … “Within a half hour there were chunks of ice — I can only assume from Exit Glacier — flowing down Exit Glacier Road,” …

(May need to register with Anchorage Daily News to get to story)

October 10, 2006

http://www.adn.com/news/alaska/kenai/story/8288801p-8185319c.html

For those who may think the flooding in Alaska earlier this week is not out of the ordinary please check out the link (below) to the story and photo.

Caption reads:

“Floods pound highway: Waterfalls on Tuesday wash over the Richardson Highway running through Keystone Canyon, north of Valdez. Floodwaters severely damaged a stretch of the highway, closing the road and blocking Valdez from the rest of Alaska.”

http://www.juneauempire.com/stories/101206/sta_20061012015.shtml

re 87. 88.

The same storm that dumped nine inches of rain this week in Alaska has now dumped more than nine inches of lake-effect snow in western New York.

The hydrologic effect of climate change on lowering the Great Lakes water levels is a huge concern for many people.

The Oct 13, 2006 Weekly Great Lakes Water Level Update from the Corps of Engineers Detroit District Office shows that:

“Lake Superior’s water level is currently 11 inches lower than it was a year ago, while Lake Michigan-Huron is 1 inch below last year.”

Recorded lake level data (1918-PRESENT)

Difference from recorded average Oct. levels:

SUPERIOR (-17 inches), MICH-HURON(-19 inches).

Diff. from recorded lowest Oct. mean levels:

SUPERIOR (0 inches), MICH-HURON (+11 inches).

http://www.lre.usace.army.mil/

—

Lakes Michigan and Huron are considered a single lake, hydrologically.

The hydrologic effects of climate change on Great Lakes water levels include greater evaporation and longer growing seasons. Longer growing seasons result in more transpiration and less inflow to the lakes.

A combination of high winds and warm lake surface water temperatures has caused the heavy snow falls along the downwind lake-effect areas of the Great Lakes.

Lake Superior is currently near a record low level for this time of year (records from 1918 to current).

Pat,

Got off-the-scale vertical sun disc expansions to the southwest of Resolute a few days ago; this is from the Pacific Ocean powering a huge cyclone. North Pacific low pressures seem to be heading northwards because it ain’t so cold in the Arctic. Like your Great Lakes piece. Do you think no ice during last winter contributed to the decline in Great Lakes water levels?

Re # 91

Erie levels predicted to drop

Story from the Monday, July 24, Edition of the Chronicle-Telegram (Elyria, Ohio)

The Associated Press

CLEVELAND – The newest update to a Lake Erie management plan predicts global warming will lead to a steep drop in water levels over the next 64 years, a change that could cause the lake’s surface area to shrink by up to 15 percent.

The drop could undo years of shoreline abuse by allowing water to resume the natural coastal circulation that has become blocked by structures, experts said.

Updated annually, the plan is required by the Great Lakes Water Quality Agreement between the United States and Canada. It is developed by the U.S. Environmental Protection Agency, Environment Canada and state and local governments with help from the shipping industry, sports-fishing operators, farm interests, academics and environmental organizations.

The newest update addresses for the first time, when, where and how the shoreline will be reshaped. It says the water temperature of Lake Erie has increased by one degree since 1988 and predicts the lake’s level could fall about 34 inches. It also says the other Great Lakes will lose water.

If the projections are accurate, Lake Erie would be reduced by one-sixth by late this century, exposing nearly 2,200 square miles of land and creating marshes, prairies, beaches and forests, researchers said.

Researchers said new islands are appearing in the western basin, where Lake Erie is at its lowest and some reefs are about 2 feet below surface.

“There is now stronger evidence than ever of human-induced climate change,” states the report, dated this spring. “Our climate is expected to continue to become warmer. This will result in significant reductions in lake level, exposing new shorelines and creating tremendous opportunities for large-scale restoration of highly valued habitats.”

A predicted drop in water levels also has been addressed by the International Joint Commission, an American-Canadian panel that controls water discharges out of Lake Superior and the St. Lawrence River. The commission told scientists at a workshop in February that research showed water levels should begin decreasing before 2050.

“We can try to be positive about climate change, really positive,” said Jeff Tyson, a senior fisheries biologist at the Ohio Department of Natural Resources, who helped write a portion of the management plan. “If it continues to be hot, once you lose that meter of water over the top, we get an entirely natural, new shoreline along a lot of the lakefront. If we manage it right, things could look a lot like they did when the first white settlers arrived.”

The report was written in an effort to spark thought about what the shoreline could become, said Jan Ciborowski, a professor at the University of Windsor who specializes in aquatic ecology and also helped write the plan.

“There is a lot of opinion among scientists who study the Great Lakes that we need to get the public to start thinking: ‘What are things going to look like?’ ” Ciborowski said.

The plan monitors issues ranging from pollution to invasive species, said Dan O’Riordan, an EPA manager at the Great Lakes National Program Office in Chicago. He said the agency recognizes the views of experts who predict the lake will shrink.

“They’ve done the math; I would trust the math,” he said.

http://www.chroniclet.com/Daily%20Pages/072406local1.html

re. 93

A plot showing Lake Superior monthly elevations from 2004 to 2006 and the 1925-1926 record low monthly elevations (for comparison) is at the link below. I requested at cleveland.indymedia that people let me know if they want to see a similar plot for Lake Erie.

In my opinion, the current near record (1918-2005) low October average level on Lake Superior and the low levels on Michigan-Huron are due to climate change. The severe drought in the Upper Midwest this year was a large contributing factor to the low levels on Lake Superior.

The level of Michigan-Huron (a single lake hydrologically) has been low for several years already but it will take a tumble over the next few months unless multiple periods of heavy rain develop during that time.

http://cleveland.indymedia.org/news/2006/10/22834.php

Gavin, My question relates to methane forcing and policy. How much of the atmospheric methane is released by incomplete combustion of fossil fuels? If this is a significant source of atmospheric methane as well as the major driver of CO2, then policies that reduce fossil fuel combustion would reduce releases of both gases.

So how do we know how much increased CO2 has increased convection? With more heat at the bottom of the column convection will increase, correct? Won’t that make more cumulus clouds, causing more rain and higher albedo?

Further, you give a forcing of -0.15 W/m^2 to land use changes. Is this number simply a basic surface albedo difference forcing which does not include feedbacks such as increased cloud cover over vegetated land or increased biochemical production as opposed to sensible heat production by biomass? Is a precision of two significant figures appropriate?

Thank you for your constructive dialogue.

Re #97 and “Further, you give a forcing of -0.15 W/m^2 to land use changes. Is this number simply a basic surface albedo difference forcing which does not include feedbacks such as increased cloud cover over vegetated land or increased biochemical production as opposed to sensible heat production by biomass? Is a precision of two significant figures appropriate?”

Land use changes include deforestation, which releases stored CO2 into the atmosphere. Especially when the deforestation is accomplished by burning the forest, which it frequently is these days.

-BPL

This explanation of global warming, although maybe well intentioned, is unclear, foggy and dangerous. It pretends to inform and educate but instead obfuscates the real effects of CO2, methane, etc. and supplies a convenient red herring to the companies and individuals that continue to chip away at the land, sea and air of planet earth, making it uninhabitable for all living things.

Re #98,

Quite true, and a question is how much of the CO2 taken up by the forests and subsequently burned turns to ash instead of returning to CO2.

Re #99,

Can you be more specific than “This explanation”?

Thanks

Steve