Actually, Roy has been pretty busy dishing out the confusion recently. Future posts will take a look at his mass market book on climate change, entitled Climate Confusion, published last month, and his article in National Review. We’ll also dig into some of his peer reviewed work, notably the recent paper by Spencer and Braswell on climate sensitivity, and his paper on tropical clouds which is widely misquoted as supporting Lindzen’s IRIS conjecture regarding stabilizing cloud feedback. But on to today’s cooking lesson.

They call it "Internal Radiative Forcing." We call it "weather."

In Spencer and Braswell (2008), and to an even greater extent in his blog article, Spencer tries to introduce the rather peculiar notion of "internal radiative forcing" as distinct from cloud or water vapor feedback. He goes so far as to say that the IPCC is biased against "internal radiative forcing," in favor of treating cloud effects as feedback. Just what does he mean by this notion? And what, if any, difference does it make to the way IPCC models are formulated? The answer to the latter question is easy: none, since the concept of feedbacks is just something used to try to make sense of what a model does, and does not actually enter into the formulation of the model itself.

Clouds respond on a time scale of hours to weather conditions like the appearance of fronts, to oceanic conditions, and to external radiative forcing (such as the rising and setting of the Sun). Does Spencer really think that a subsystem with such a quick intrinsic time scale can just up and decide to lock into some new configuration and stay there for decades, forcing the ocean to be dragged along into some compatible state? Or does he perhaps mean that slow components, like the ocean, modulate the clouds, and the resulting cloud radiative forcing amplifies or damps the resulting interannual or decadal variability? The latter sounds a lot like a cloud feedback to me, acting on natural variability whose root cause is in the ponderous motions of the ocean.

Think of it like a pot of water boiling on a stove. What ultimately controls the rate of boiling, the setting of the stove knob or the turbulent fluctuations of the bubbles rising through the water? Roy’s idea about clouds is like saying that you should expect big, long-lasting variations in the boiling rate because sometimes all the steam bubbles will decide to form on the left half of the pot leaving the right half bubble-free — and that things will remain that way despite all the turbulence for hours on end.

The only sense that can be made of Spencer’s notion is that there is some natural variability in the climate system, which in turn causes a natural variability to some extent in the radiation budget of the planet, which in turn may modify the natural variability. Is this news? Is this shocking? Is this something that should lead us to doubt model predictions of global warming? No — it is just part and parcel of the same old question of whether the pattern of the 20th and 21st century can be ascribed to natural variability without the effect of anthropogenic greenhouse gases. The IPCC, among others, nailed that, and nobody has demonstrated that natural variability can do the trick. Roy thinks he has, but as we shall soon see, it’s all a matter of how you run your ingredients through the food processor.

The impressive graph that isn’t

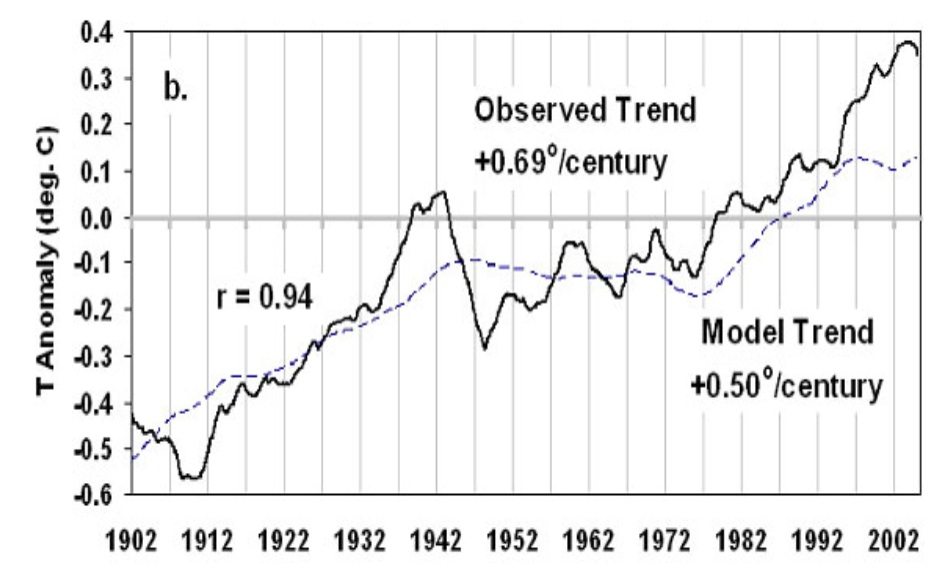

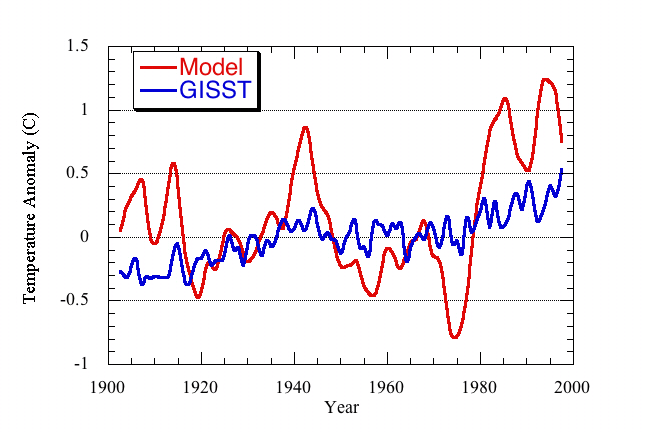

So here’s what Roy did. He took two indices of interannual variability: the Southern Oscillation Index (SOI), which is a proxy for El Nino, and the Pacific Decadal Oscillation Index (PDOI). He formed an ad-hoc weighted sum of these indices, and then multiplied by an ad-hoc scaling factor to turn the resulting time series into a time series of radiative forcing in Watts per square meter. Then he used that time series to drive a simple linear globally averaged mixed layer ocean model incorporating a linearized term representing heat loss to space. And voilà, look what comes out of the oven!
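For readers who want to follow the recipe at home, here is a minimal sketch of that kind of model. It is not the code behind any graph in this post. The function names, the equal index weights, the feedback value of 3 W/m2 per degree, the round values for the density and heat capacity of water, and the random stand-in index series are all assumptions made for illustration.

```python
import numpy as np

SECONDS_PER_YEAR = 3.156e7
RHO_WATER = 1000.0   # kg/m^3 (round value, assumed)
CP_WATER = 4186.0    # J/(kg K) (round value, assumed)


def index_to_forcing(soi, pdoi, w_soi, w_pdoi, scale_a):
    """Weighted sum of two indices, scaled to a radiative forcing in W/m^2."""
    combined = (w_soi * np.asarray(soi, dtype=float)
                + w_pdoi * np.asarray(pdoi, dtype=float))
    return scale_a * combined


def mixed_layer_model(forcing, depth_m, feedback, t0_anomaly=0.0):
    """Integrate C dT/dt = F(t) - feedback * T in one-year steps.

    C is the heat capacity of a water column depth_m deep, and feedback is
    the linearized heat loss to space in W/m^2 per degree. A backward-Euler
    step is used so the scheme stays stable for shallow mixed layers.
    """
    heat_capacity = RHO_WATER * CP_WATER * depth_m   # J/(m^2 K)
    dt = SECONDS_PER_YEAR
    temps = np.empty(len(forcing) + 1)
    temps[0] = t0_anomaly
    for i, f in enumerate(forcing):
        temps[i + 1] = ((heat_capacity * temps[i] + dt * f)
                        / (heat_capacity + dt * feedback))
    return temps


# Synthetic stand-ins for the annual SOI and PDOI series (NOT real data).
rng = np.random.default_rng(0)
soi = rng.standard_normal(100)
pdoi = rng.standard_normal(100)

forcing = index_to_forcing(soi, pdoi, w_soi=0.5, w_pdoi=0.5, scale_a=0.27)
temps = mixed_layer_model(forcing, depth_m=50.0, feedback=3.0)
print(temps[:5])
```

The adjustable knobs are all visible in the call signatures: two weights, one scaling factor, one mixed layer depth, one feedback coefficient, and one initial condition.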

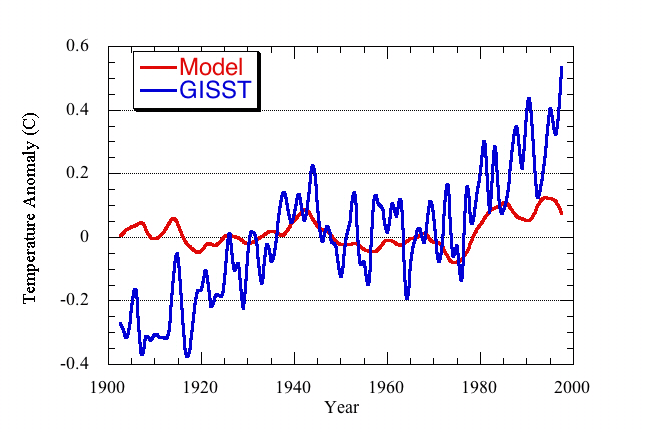

Roy is really taken with this graph. So much so that he uses it as a banner near the top of his climate confusion web site under the heading "Could Global Warming Be Mostly Natural?" But is it as good as it looks? To find out, I programmed up his model myself, but chose the set of adjustable parameters based on compatibility with observations constraining reasonable magnitudes for these parameters. Here’s what I came up with:

So why does Roy’s graph look so much better than mine? As Julia Child said, "It’s so beautifully arranged on the plate – you know someone’s fingers have been all over it."

A Cooking lesson

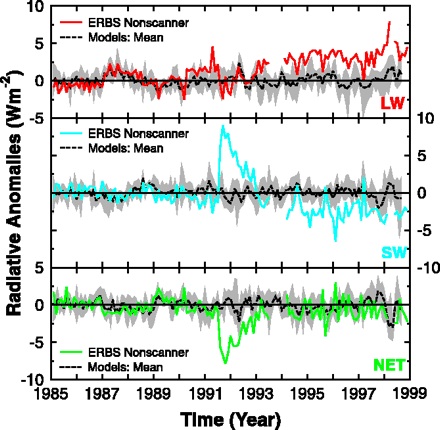

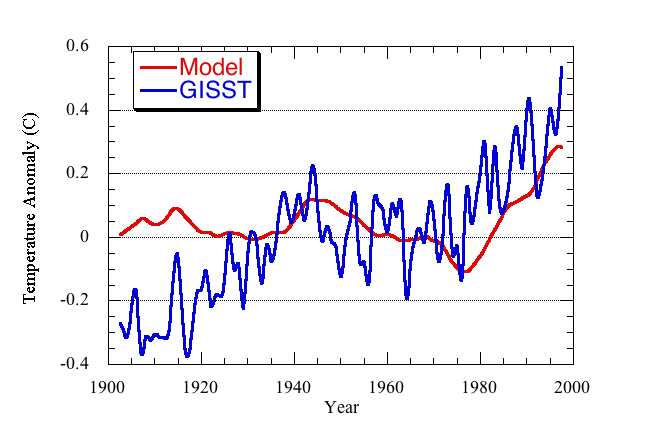

Lesson One: Jack up the radiative forcing beyond all reason. Reliable data on decadal variability of the Earth’s radiation budget are hard to come by, but to provide some reality check I based my setting of the scaling factor between radiative forcing and the SOI/PDOI index on the tropical data of Wielicki et al. 2002 (as corrected in response to Trenberth’s criticism). The data are shown below. On interannual time scales, it’s mostly the net top-of-atmosphere flux that counts, so the curve to look at is the green NET curve in the bottom-most panel.

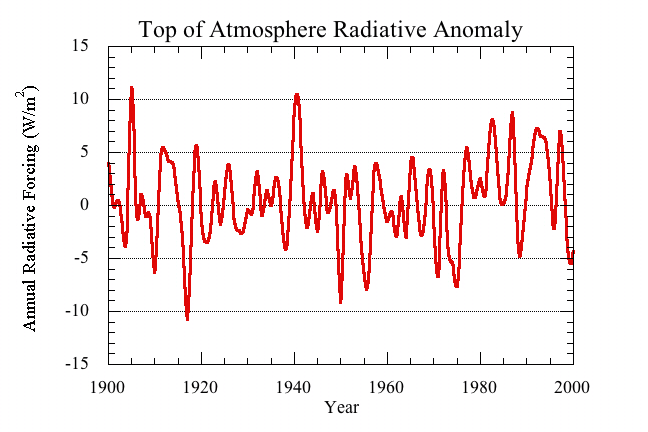

Except for the response to the Pinatubo eruption (the pronounced dip during 1991), the fluctuations are on the order of 1 W/m2 or less once you smooth on an annual time scale. Based on this estimate and on the typical magnitude of Spencer’s combined SOI/PDOI index, I chose a scaling factor (Roy’s a) of 0.27 W/m2. In his article, Roy uses a value ten times as big, but then he partly covers up how large the annual radiative forcing is by showing only the five-year averages. With Roy’s value of the scaling coefficient, the annual radiative forcing looks like this

which is clearly grossly exaggerated compared to the data. Moreover, in my own estimate of the scaling factor I tried to match the overall magnitude of the fluctuations, whereas restricting the estimate to that part of the observed fluctuation which correlates with the SOI/PDOI index could reduce the factor further. Finally, even insofar as some part of climate change could be ascribed to long term cloud changes associated with the PDOI and SOI, one cannot exclude the possibility that those changes are driven by the warming — in other words a feedback. Still, let’s go ahead and ignore all that, and put in Roy’s value of the scaling coefficient, and see what we get.
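The way five-year averaging disguises the size of the annual forcing can be sketched in a few lines. This is only an illustration: the index series is random noise standing in for the combined SOI/PDOI index (an assumption, not real data), and `block_means` is a hypothetical helper. A real index has year-to-year persistence, so the reduction from averaging would be smaller than for the uncorrelated noise used here.

```python
import numpy as np


def block_means(series, block):
    """Average a series over consecutive, non-overlapping blocks."""
    series = np.asarray(series, dtype=float)
    usable = (len(series) // block) * block   # drop any incomplete final block
    return series[:usable].reshape(-1, block).mean(axis=1)


# Synthetic annual index with unit variance (NOT the real SOI/PDOI index).
rng = np.random.default_rng(0)
index = rng.standard_normal(100)

for scale_a in (0.27, 2.7):
    annual = scale_a * index                  # annual forcing, W/m^2
    five_year = block_means(annual, 5)        # what a five-year plot shows
    print(scale_a, annual.std(), five_year.std())
```

Multiplying the scaling factor by ten multiplies the annual swings by ten, while plotting only five-year means shows a visibly tamer curve than the annual series it was built from.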

So here’s our cooked graph as of Lesson 1 of the recipe:

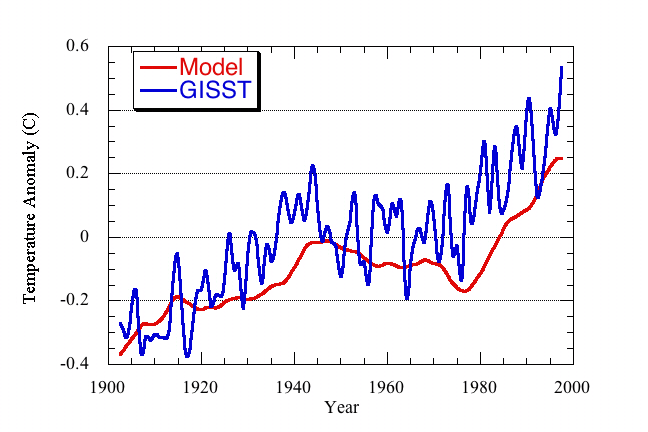

Lesson Two: Use a completely unrealistic mixed layer depth. OK, so we’ve goosed up the amplitude of the temperature signal to where it looks more impressive, but the wild interannual swings in temperature look completely unlike the real thing. What to do about that? This brings us to the issue of mixed layer depth. The mixed layer depth determines the response time of the model, since a deeper mixed layer has more mass and takes longer to heat up, all other things being equal. The actual ocean mixed layer has a depth on the order of 50 meters. That’s why we got such large amplitude and high frequency fluctuations in the previous graph. What value does Roy use for the mixed layer depth? One kilometer. To be sure, on the centennial scale, some heat does get buried several hundred meters deep in the ocean, at least in some limited parts of the ocean. However, to assume that all radiative imbalances are instantaneously mixed away to a depth of 1000 meters is oceanographically ludicrous. Let’s do it anyway. After all, as Julia Child said, "In cooking you’ve got to have a ‘What the Hell’ attitude." Here’s the result now:
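The damping effect of a deep mixed layer can be checked without running the model at all. For a sinusoidal forcing of amplitude F0 and angular frequency w, the one-layer equation C dT/dt = F - lambda*T has a steady temperature swing of amplitude F0 / sqrt(lambda^2 + (C*w)^2). The sketch below evaluates that expression. The feedback value of 3 W/m2 per degree, the four-year forcing period, and the round water properties are assumptions chosen for illustration, not values taken from Roy's article.

```python
import math

SECONDS_PER_YEAR = 3.156e7
RHO_WATER = 1000.0   # kg/m^3 (round value, assumed)
CP_WATER = 4186.0    # J/(kg K) (round value, assumed)


def response_amplitude(forcing_amp, period_years, depth_m, feedback):
    """Temperature amplitude for a sinusoidal forcing of the one-layer model."""
    heat_capacity = RHO_WATER * CP_WATER * depth_m            # J/(m^2 K)
    omega = 2.0 * math.pi / (period_years * SECONDS_PER_YEAR)  # rad/s
    return forcing_amp / math.hypot(feedback, heat_capacity * omega)


# Same 1 W/m^2 forcing with an assumed 4-year period, two mixed layer depths.
for depth in (50.0, 1000.0):
    print(depth, response_amplitude(1.0, 4.0, depth, feedback=3.0))
```

Because the heat capacity term dominates at interannual periods, the response to a given forcing falls off nearly in proportion to the depth, which is why the one-kilometer layer irons out the wild year-to-year swings.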

Lesson Three: Pick an initial condition way out of equilibrium. It looks better, especially in the latter part of the century. But it doesn’t get the trend in the early century right. Gotta keep cooking! The essential ingredient this time is the choice of initial condition for the model. If we initialize the anomaly at -0.4C, which amounts to an assumption that the system is wildly out of equilibrium in 1900, then this is what we get:

Now, it’s finally looking ready to serve up to the unsuspecting diners. Note that it’s the adoption of an unrealistically large mixed layer depth that allows Roy to monkey with the early-century trend by adjusting the initial condition. With a more realistic mixed layer depth, changing the initial condition on temperature anomaly only leads to a rapid adjustment period affecting the first few years.
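To see why the initial condition only matters with a deep mixed layer, note that with no forcing the one-layer model relaxes as T(t) = T0 * exp(-t / tau), where tau = C / lambda is the heat capacity of the layer divided by the feedback coefficient. Here is a small sketch of that decay. The feedback value of 3 W/m2 per degree and the round water properties are assumptions for illustration, not values taken from Roy's article.

```python
import math

SECONDS_PER_YEAR = 3.156e7
RHO_WATER = 1000.0   # kg/m^3 (round value, assumed)
CP_WATER = 4186.0    # J/(kg K) (round value, assumed)


def response_time_years(depth_m, feedback):
    """Relaxation time tau = C / lambda of the one-layer model, in years."""
    heat_capacity = RHO_WATER * CP_WATER * depth_m   # J/(m^2 K)
    return heat_capacity / feedback / SECONDS_PER_YEAR


def remaining_anomaly(t0_anomaly, years, depth_m, feedback):
    """Unforced decay of an initial temperature anomaly after `years`."""
    return t0_anomaly * math.exp(-years / response_time_years(depth_m, feedback))


# How much of a -0.4 C starting anomaly survives after ten years?
for depth in (50.0, 1000.0):
    print(depth,
          response_time_years(depth, feedback=3.0),
          remaining_anomaly(-0.4, 10.0, depth, feedback=3.0))
```

With these assumed numbers the shallow layer forgets its starting point within a few years, while the one-kilometer layer still carries most of the initial anomaly a decade later, and that lingering memory is what lets the initial condition shape the early-century trend.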

My graph is not absolutely identical to Roy’s, because there are minor differences in the initialization, the temperature offset used to define anomalies, and the temperature data set I’m using as a basis for comparison. My point, though, is that this is not an exacting recipe: it’s hash (or Hamburger Helper), not soufflé. Following Roy’s recipe, you can get a reasonable-looking fit to data with very little fine-tuning because Roy has given himself a lot of elbow room to play around in: you have the choice of any two variability indices among dozens available, you make an arbitrary linear combination of them to suit your purposes, you choose whatever mixed layer depth you want, and you finish it all off by allowing yourself the luxury of diddling the initial condition. With all those degrees of freedom, I daresay you could fit the temperature record using hog-belly futures and New Zealand sheep population. Anybody want to try?
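For anyone tempted to take up the challenge, here is a stripped-down sketch of how much mileage the free choices buy. It leaves out the mixed layer depth and the initial condition entirely and only exercises two of the freedoms: picking any two series from a pool, and combining them with arbitrary weights. Everything in it is synthetic and assumed: the "temperature record" is a made-up trend plus noise, the candidate "indices" are random walks with no physical meaning, and `best_pair_fit` is a hypothetical helper.

```python
import itertools

import numpy as np


def best_pair_fit(target, candidates):
    """Search all pairs of candidate series for the best least-squares fit.

    Each pair is combined with free weights plus a constant offset.
    Returns (r_squared, (i, j)) for the winning pair.
    """
    target = np.asarray(target, dtype=float)
    total = np.sum((target - target.mean()) ** 2)
    best = (-np.inf, None)
    for i, j in itertools.combinations(range(len(candidates)), 2):
        design = np.column_stack(
            [candidates[i], candidates[j], np.ones_like(target)])
        coef, *_ = np.linalg.lstsq(design, target, rcond=None)
        resid = target - design @ coef
        r_squared = 1.0 - np.sum(resid ** 2) / total
        if r_squared > best[0]:
            best = (r_squared, (i, j))
    return best


rng = np.random.default_rng(1)
years = np.arange(100)
# Made-up "temperature record": a trend plus noise (NOT real data).
target = 0.007 * years + 0.1 * rng.standard_normal(100)
# Thirty unrelated random walks standing in for "variability indices".
candidates = np.cumsum(rng.standard_normal((30, 100)), axis=1)

r_squared, pair = best_pair_fit(target, candidates)
print("best pair:", pair, "R^2:", r_squared)
```

The search can only improve on any single hand-picked pair, and none of the candidate series knows anything about the record it ends up "explaining".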

Postlude: Fool me once …

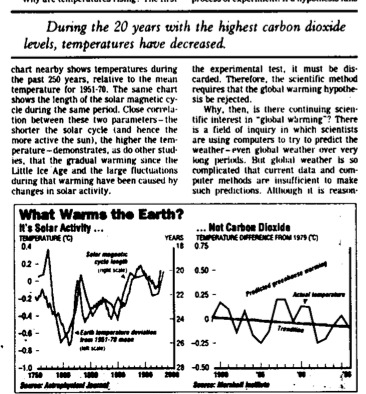

Why am I not surprised about all this shameless cookery? Perhaps it’s because I remember this 1997 gem from the front page of the Wall Street Journal, entitled "Science has Spoken: Global Warming is a Myth":

That’s not Roy’s prose, but it is Roy’s data over there in the graph on the right, which purports to show that the climate has been cooling, not warming. We now know, of course, that the satellite data set confirms that the climate is warming, and indeed at very nearly the same rate as indicated by the surface temperature records. Now, there’s nothing wrong with making mistakes when pursuing an innovative observational method, but Spencer and Christy sat by for most of a decade allowing, indeed encouraging, the use of their data set as an icon for global warming skeptics. They committed serial errors in the data analysis, but insisted they were right and models and thermometers were wrong. They did little or nothing to root out possible sources of errors, and left it to others to clean up the mess, as has now been done.

So after that history, we’re supposed to savor all Roy’s new cookery?

That’s an awful lot to swallow.

Of course there is the famous:

All Theories Proven with One Graph

http://www.jir.com/graph_contest/index.html#OneGraph

Raypierre:

What do you suppose motivates someone, a scientist, nonetheless, to cook his data this way and make such a concerted effort to mislead the public?

[Response: That’s a question for psychologists. I wish I knew the answer. I doubt that he, or Dick Lindzen for that matter, are in it for the money, so that takes out the easiest of motives. I think it’s probably a matter of ideological blinders. The perceived implications of global warming being a real problem are so dissonant with some other value system that it imposes some kind of filter on the interpretation of objective reality. Anything I say would be just guessing, though. Fortunately, this problem doesn’t seem to plague too many scientists. –raypierre]

Raypierre, Thanks for this. Very difficult for the layman to spot such chicanery.

I wondered about the graphs in the merde du jour “Science Has Spoken: Global Warming Is a Myth”, by Robinson & Robinson 1997, both from the “Oregon Institute of Science & Medicine”. The source given was the ExxonMobil funded Marshall Institute. So now we know!

According to exxonsecrets.org, Spencer is on the payroll of numerous other ExxonMobil funded think-tanks.

Google Roy Spencer site:exxonsecrets.org – lots of hits!

For more from the “Oregon Institute of Science & Medicine”

https://www.realclimate.org/index.php/archives/2007/10/oregon-institute-of-science-and-malarkey/

//”Does Spencer really think that a subsystem with such a quick intrinsic time scale can just up and decide to lock into some new configuration and stay there for decades, forcing the ocean to be dragged along into some compatible state?”//

I certainly read the “internal radiative forcing” idea as something like this (actually I didn’t see any other way to interpret it). For the most part, I’ve not seen much evidence to suggest that internal variations alone can bring the climate to a new state on decadal timescales, even if the internal fluctuations do not completely average out over decades (e.g., the PDO being in a positive phase more than a negative phase during the timescale of consideration). What I was reminded of though is something along the lines of the hypothesis of a very weak signal/stochastic-resonance idea, where “noise” and a very weak “signal” could combine (similar to some of the D-O hypotheses out there). Perhaps Spencer could try to relate secular cloud changes as internal variations superimposed on a rising trend (he at least seems to admit that the 2x CO2 non-feedback value is 1.2 C).

Though, there is yearly variability all the time, and the ocean and atmosphere are constantly exchanging heat back and forth on time scales characteristic of the mixed layer, which can be more than a year, and that should change radiative fluxes on monthly to yearly timescales. But you’re precisely right… that is weather. Why should the late 20th century be any different from any other time, and where are these decadal weather forcings in the paleo record?

On the possibility of a changing cloud cover “forcing” global warming in recent times (assuming we can just ignore the CO2 physics and current literature on feedbacks, since I don’t see a contradiction between an internal radiative forcing and positive feedbacks), one would have to explain a few things, like why the diurnal temperature gradient would decrease with a planet being warmed by decreased albedo…why the stratosphere should cool…why winters should warm faster than summers…essentially the same questions that come with the cosmic ray hypothesis.

The key questions are whether internal fluctuations will not actually average out to zero and cause a positive rise in temperatures on long time scales, and is this forcing sufficient to overcome that of well-mixed greenhouse gases. I say the answer to both is no.

The people who tell us that computer models are no good because, despite being based on physics, there are gaps, want us to believe that this sort of pure data massaging is somehow superior. I was watching a YouTube of Bob Carter’s recently and he was banging on the claim that computer models are useless because you have to work around gaps in knowledge. His superior solution? Extrapolate from past data which doesn’t include any anthropogenic effects.

The fundamental flaw in almost all the denial arguments I’ve seen is that they start from the premise that global warming isn’t happening therefore the best model is the past.

Sadly there seems to be no limit to how pathetic the argument can be, and some people still consider it plausible.

I like the “inactivists” tag.

Spencer is doing what I, as a naive teenager, did with Bode’s Law — curve-fitting by adjusting an arbitrary number of parameters. I got some wonderful fits when I had six or twelve arbitrary constants to play with (for the nine-planet system we had in the ’70s). Both Spencer’s work and mine depend on the statistical fallacy called “the enumeration of favorable circumstances.”

Thanks for the thorough analysis. This article has already come up in a few discussions with some who remain skeptics. I now can argue against Spencer’s results. Thanks to Julia too.

raypierre

Are you sure with your only 50m mixed layer?

I think that if you apply this little depth, with RF evolution in the last century, you find a very weak climate sensitivity.

To explain the actual temperature anomaly, with a very (too?) simple “model” and with a climate sensitivity of 0.75°C.m2/W, I need, at least, a global 200-300m mixed layer.

For the south hemisphere only it is far deeper.

[Response: No, I don’t think a 50m mixed layer by itself is an adequate description of relaxation. I wanted to stick with the same model Spencer used, and that meant sticking with a one-layer ocean model. The point is that if one is going to go that route, a one km mixed layer gives you far too much thermal inertia. –raypierre]

raypierre

I add to my previous post that, for me, the mixed layer is an “equivalent” mixed layer which includes the thermal exchange between the real mixed layer (which is delimited by the thermocline) and the deeper layers.

I think you agree that the 0-3000 m layer is heating (if we refer to Levitus for example)

Roy Spencer is an interesting character to me. His peer-reviewed published track record seems reputable. But his non-peer reviewed statements seem to be full of [edit] distortions. See his 2007 testimony to congress for example.

http://oversight.house.gov/documents/20070320152338-19776.pdf

How does someone like this match up his professional and personal beliefs?

Hmm. Methinks I understand the sudden appearance of Gerry Browning, Ferenc M. Miskolczi, et al. They are trying to trash the use of dynamical models using real physics so that the ad hoc approach described here seems to have equal credibility. What a brilliant approach to solving the problem of climate change: All they have to do is change the laws of physics!

Nicely put, guys.

Re. graphs that ‘show’ things, I particularly like the supposed correlation between global warming and piracy on the high seas:

http://www.scq.ubc.ca/piracy-as-a-preventor-of-tropical-cyclones/

Worth a look if you want to be more creative with your data …

The EIB Network is not going to be happy with you guys.

raypierre,

Thank you for taking the time to wade through this and attempting to replicate Spencer’s results. That kind of work won’t get you any grants, but nevertheless it’s crucially important for the health of the science!

And thank you for writing it all up in a clear, enlightening, and entertaining fashion.

I’ve had some blog back-and-forth with Roy Spencer on this “internal forcing” business, though I wasn’t as clear on the problem with it as you are here. Anyway, my impression after reading some of his responses was that he’s making it deliberately vague as if the source of these internal forcings were somehow un-knowable; surely that’s not the stance of a normal scientist, is it? Wouldn’t you want to get to the bottom of some mysterious internal behavior of this sort? But instead the argument seems to be these are random, uncaused features of Earth’s climate…

On graphs that “show” things, I’ve seen a graph that showed a very close match through the decades between the Keeling curve of CO2 levels in the atmosphere, and the number of submissions to scientific journals (even the seasonal variations match up…). With just two free parameters (scale and offset) you can prove a lot of remarkable things! Who knew that scientific publication was actually the cause of global warming?

Pierrehumbert unwittingly makes the point, I believe, that disagreement about what goes into the models (e.g. arguably unrealistic radiative forcing in Spencer’s) is precisely why there is no consensus on the subject of AGW, media repetition of that insistence notwithstanding.

All the books are cooked, because that’s what models are, [necessarily] cooked books with ever-improving inputs. As required by the scientific method, Pierrehumbert properly assumes the Spencer model is wrong until it can be verified. Why RC assumes the other, scarier models are right, following a faith-based assumption that the preferred models are correct until proven otherwise, is problematic. In twenty years time, when, for example, we might know enough about the variability of the Earth’s radiation budget, I think we can agree that we’ll chuckle to some extent at the relatively primitive and incomplete models we are using today.

The politicization of the issue, on both sides, makes it well-nigh impossible to get at the science for readers such as me, as desire outweighs reason so much of the time. I am left with little alternative than to distrust any and all models until more facts are in about this chaotic climate of ours.

[Response: No, the post makes the point that you can’t just make up stuff to put in models to make them come out the way you want. There are uncertainties in parts of the general circulation models used to forecast future climate, but thousands of scientists have made meticulous efforts to make sure that the processes are based on observations of basic physics, laboratory measurements, and sound theoretical calculations. The work that has gone into the representation of ocean circulations and heat burial is a case in point. This isn’t perfect, to be sure, but it is constrained by things like ocean chemical tracer analyses. It’s the sort of thing that allows us to go beyond arbitrary one-layer mixed layer models. –raypierre]

Raypierre– Ditto what #14 Anthony Kendall says, this is a substantial piece of work that doesn’t add to your “publication” record, but highly useful and illuminating. You are owed thanks by every rational person concerned by the misinformation that circulates. A recent Pew Center poll shows that a DEcreasing fraction of conservative Republicans think AGW is a problem! Thanks I suppose to guys like Roy Spencer.

But, um, please do give full labels for the graphs in lesson one, what’s LW, SW and NET ?

[Response: Sorry, I pulled that last graph out of the Science article without including the caption. LW is the fluctuation in the longwave (infrared) component of the top-of-atmosphere energy budget. SW is the shortwave (mostly cloud reflection) component. The sum is NET, which is the net fluctuation in the top-of-atmosphere component. The trends in LW and SW are larger than the trend in NET, but it should be noted that getting decadal trends out of satellite data of this sort is difficult. I’m sure this paper isn’t the last word on the subject, but it at least gives us some idea of the actual magnitude of interannual fluctuations of the energy budget. –raypierre]

RayPierre: Let me add my thanks for your work in taking this apart. I have read a number of his earlier papers and I would go as far as to call his initial work “ground breaking”. Sadly he appears to have strayed more into politics than science in recent years.

Not too long ago he published an opinion piece that the current rise in CO2 is not due to anthropogenic sources but ocean temperatures are the drivers. He threw in a lot of phrases saying “This is probably the most provocative hypothesis I have ever (and will ever) advance:“, he knew most did not accept it, etc. etc.

Of course now when people quote it, they seem to lose the idea that it is a “provocative hypothesis” and accept it as carved in stone.

John

He’s also a creationist, of the intelligent design subspecies. It seems quite safe to assume that his conservative christian faith drives his personal opinion about science, when science conflicts with that faith. “Lying about science for Jesus” seems to be common among conservative fundamentalists, unfortunately. Spencer’s hashing of the data regarding climate change is no different than the misrepresentations of biology and evolution that comes from the creationist/intelligent design crowd. His buying into the latter is maybe easier to understand because he’s not an evolutionary biologist so perhaps can be forgiven for being ignorant of what the science really says. But climate science? It’s his field. There’s no excuse.

Raypierre’s piece not only puts a wooden stake through the heart of Spencer’s argument, but he’s funny, too! If he’s this humorous in English, what’s he like in French?

Very useful post.

It would be interesting to do a compare/contrast between what goes into Roy’s blog vs. what he gets through peer review. There was an earlier version of the paper with Braswell floating around when it had been (if I remember correctly) accepted conditional upon certain changes. Even in that state I believe his formulation was something like “provides weak/marginal support to the IRIS conjecture”. Very mild stuff, in other words.

All else said, however, his rock band is mildly humorous:

http://bigcitylib.blogspot.com/2007/12/hard-rockin-creationist-solos-for.html#links

I am clearly not spending enough time in the kitchen in preparing graphs for publication. Excellent work picking through the manufacturing process of a graph, not easy to do. As to motivation, look no further than the attention that is received.

Raypierre:

I still have not received an answer for my question about your “Venus Unveiled” article. In early March of this year you attended a conference at the little ski resort of La Thuile in the Val D’Aosta, the Italian Alps. Your article included a picture of a resort with little snow on the ground. Was that picture taken by you that week or was it some summer picture included in your article for subliminal purposes?

[Response: I wasn’t even thinking of subliminal purposes when I put up that picture; I put it up to give some sense of the natural beauty of the setting. But, in fact I’m glad you asked. That picture was taken during the meeting by Darren Williams, and yes, it was hot and there was hardly any snow on the ground. I managed to stick on a lot of klister and find enough melting snow in the valleys to cross-country ski on, but yes it was hot and the spring skiing was pretty rotten. –raypierre]

What an awesome post!

And thank you for that terrific final paragraph on how they botched the satellite data for so long, just coincidentally making a bunch of mistakes that pushed their mis-analysis all in the same, wrong direction.

Anyway, you inspired me:

http://climateprogress.org/2008/05/22/should-you-believe-anything-john-christy-or-roy-spencer-say/

Re #16. Walter Manny unwittingly makes the point that he doesn’t understand the difference between dynamical and statistical modeling, while simultaneously showing he doesn’t understand how models are validated. Dynamical models include the physics as best it can be determined, so their agreement with observations is their validation. Statistical models look at past performance and assume the future will perform similarly, the fallacy of which can be seen in all those AAA rated mortgage-backed securities that are now being recycled for toilet paper.

I’ve learned a lot from realclimate, I thank you all for your efforts here.

Now the inevitable caveat. I’ve been around a while, and in that time I’ve followed all manner of scientific and other debate, many of which have eventually been resolved. Here’s a statistical observation from those debates: the side employing a relatively higher personal focus in their attacks on the other side’s ideas is eventually proven wrong a higher percentage of the time.

I didn’t see anything personal in Spencer’s article, nor do I find it on Pielke’s site in general. But I do here, too often. You’re scientists, wage a war of facts and ideas, your readers will figure out for themselves who’s credible and why.

If I misunderstand the purpose of this site, if it’s just for the faithful to gather and reinforce themselves and have their jollies, please hang a big sign to that effect on your homepage. If you’re trying to educate and convince all we need are the facts, ma’am, just the facts.

Let me add my thanks for your efforts! This is just what is needed at this time since quotations from Spencer are being widely circulated on discussion sites. I will be looking forward to your review of his peer reviewed papers, particularly “Cloud and radiation budget changes associated with tropical intraseasonal oscillations,” published in GEOPHYSICAL RESEARCH LETTERS in August, 2007.

Ken Milne:

“the side employing a relatively higher personal focus in their attacks on the other side’s ideas”

The irony.

How about attacking the facts presented by Ray Pierre instead of attacking the person, as per your own advice? Thank you very much.

Ken, I would agree with you if Ray had been dealing with honest errors by someone seriously trying to get at the truth. But an effort that is, in effect, a parody of science deserves to be parodied. I do not think Ray got personal about this at all. He simply pointed out the obvious dishonesty (or incompetence, take your choice) behind the methodology employed.

Raypierre,

Thanks for an excellent post that was easy to follow, insightful, and very convincing. It’s very sad to see a scientist like Spencer seemingly blinded by his own biases or simply unwilling to accept his earlier errors (thus he needs to keep shifting the debate and somehow try to save face). All scientists make errors. The good ones accept it and move on to do other good work.

I realize this is off topic for this particular thread, but it does relate to a bet, and therefore to previous ones. It would appear that the Governor of Alaska is going to take the EPA to court, and challenge the ruling that polar bears are threatened. Since most of the justification for the EPA ruling is based on the predictions of AGW, it will be interesting to see how the court rules, if it indeed comes to trial and a verdict is reached. Anyone want to make any bets?

Re Jim’s bet in 31, I’ll bet that if we have an ice-pack free arctic this summer, Alaska’s suit will fail.

Given that the polar bear listing was a response to a suit the feds were in the process of losing badly, I’d say the Alaskan governor is just going to waste the taxpayers money.

Since PDO’s gotten a lot of press coverage recently, I’d love to see a full post on it and its implication on changes on attribution of climate change.

Since this post is about Spencer, a recent op-ed of his is notable:

http://article.nationalreview.com/?q=NTUzNWUzYTA4ZTkwMTVhZmM3M2NkZDc5NDhmOTRkMzA=

Here’s what really bothers me about op-ed pieces like this. Spencer is making ridiculous arguments, yet he clearly is smart enough to know how fallacious they are and it doesn’t take a top expert to point them out. Examples:

– “Apparently, our addition of nine molecules of carbon dioxide to each 100,000 molecules of air over the last 150 years can now be blamed for anything and everything we choose.”

This is an attempt to fool the layperson into thinking the change to our atmosphere is insignificant.

– “The warming that allowed the Vikings to farm in Greenland 1,000 years ago was surely natural. But we are now told that warming in Greenland today is surely manmade. Glaciers retreating in western Canada have revealed evidence of previous forests, showing that warming and cooling cycles do indeed occur, even without SUVs. Yet the SUV is now the scapegoat for retreating glaciers.”

Last year my car didn’t start because of a bad battery. This morning it didn’t start. Even though the battery was tested to be fine and all signs point to a faulty starter, Spencer’s logic would have us believe it’s the battery again.

– “But McCain has made it clear that the science really does not matter anyway because, even if humans are not to blame for global warming, stopping carbon-dioxide emissions is the right thing to do. And if we had another choice for most of our energy needs, I might be willing to accept such a claim as harmless enough.”

McCain thinks science doesn’t matter? Quite the contrary in fact. And we do have other choices for our energy needs.

– “But carbon dioxide is necessary for life on Earth, and I have a difficult time calling something so fundamentally important a “pollutant.” ”

So is the sun, but we don’t really want any more of it.

– “So, here we are with bad science ready to support bad policy decisions that will lead to bad economic times ahead, and no presidential candidate who is willing to ask the hard questions. ”

“Bad economic times”. It’s ironic that those claiming mitigation efforts will cause economic ruin label the scientific community as “alarmist”.

Here’s what I don’t get: There seems to be no shortage of loud political commentary from contrarians, as seen on various op-ed pages, yet when a scientist from the consensus community makes any suggestion of reducing emissions, an obvious implication from what the science says, it’s a big deal and they are labelled as activists or ideologues.

RE: #19

Let’s keep the establishment of religion out of this discussion. […edit…]

[Response: Agreed with that. I deleted the rest of your comment because of the inflammatory accusations. But generally, I agree that one’s religious perspectives don’t have much bearing on attitude to climate change, since I have met plenty of devout people from all religions who are concerned about care of creation. I do think, however, that skepticism about evolution is relevant information, from the standpoint of attitude towards scientific argument and ability to deny or distort evidence. But please, let’s not go any further into religion. –raypierre]

Thanx for the deconstruction.

I have a question regarding the mixed ocean layer. I would be grateful for a reference showing the time it takes for the ocean to mix to different depths. The article indicates a mixing time of 100 yr for 1000 m; for 50 m I gather the mixing time is on the order of 1 yr? What would an appropriate mixing time be for, say, 300 m and 3000 m?

I do understand that these mixing times vary depending upon locale, season, tide, topo, … but are there order-of-magnitude estimates somewhere?

[Response: Actually, there are two separate issues floating around in your question. The first is: what is the response time for a given mixed layer depth. That one is easy: all other things being equal, it’s proportional to the mixed layer depth, and using Spencer’s sensitivity coefficient you get something on the order of 50 years for a 1000m mixed layer. The question of how long it actually takes an ocean to redistribute heat to a depth of, say, 1000 meters is more complicated, especially since the processes that mix heat down to those depths are not at all globally uniform. I don’t know how to give a simple few-line answer to that question, except to say that over a time scale of a hundred years, some heat does mix down to a depth of around 300m, which is why we have “committed warming” in the pipeline. In a sense, you have to wait for those deeper waters to finish warming before they stop removing energy from the upper ocean. –raypierre]
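As a rough illustration of the first point in the response above, the response time of a single isothermal slab can be sketched as τ = ρ c_p H / λ, which scales linearly with the mixed layer depth H. The density and specific heat are round numbers for seawater. The value of `LAMBDA` is an assumed placeholder, not Spencer’s actual coefficient; it was picked only so that the 1000 m case lands near the 50-year figure quoted above.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600
RHO = 1025.0   # seawater density, kg/m^3 (round number)
CP = 3990.0    # seawater specific heat, J/(kg K) (round number)
LAMBDA = 2.6   # ASSUMED sensitivity coefficient, W/(m^2 K); illustrative only


def response_time_years(depth_m, lam=LAMBDA):
    """Slab response time tau = rho * c_p * H / lambda, converted to years."""
    heat_capacity = RHO * CP * depth_m  # J/(m^2 K) per unit surface area
    return heat_capacity / lam / SECONDS_PER_YEAR


for depth in (50, 300, 1000, 3000):
    print(f"{depth:5d} m -> {response_time_years(depth):6.1f} yr")
```

This only gives the slab response time. As the response says, it does not answer the harder question of how long the real ocean takes to carry heat down to a given depth.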

I’m new here, but I have to ask: is there a way (there should be) to measure the total amount of heat energy in our biosphere? Seems to me that if that number were made public, and talked about, it would dispel a lot of confusing talk from the skeptic side about ‘how cold it was in Nebraska last winter, and how that proves that man made global warming is a hoax’.

[Response: I don’t think you are asking the right question, and certainly an answer to that question would not do anything much to address the tendency of certain parties to extrapolate from one or two winters to a long term trend. Could you clarify your reasoning here? Then maybe I can be more enlightening. –raypierre]

RayPierre:

This was my point. If my comment was understood to criticize Christianity or Christians in general, I apologize. The vast majority of Christians don’t reject science.

Thanks Raypierre,

That was great (love the photo). I was thinking of other analogous illustrations for this phenomenon.

Since we are talking about cooking: The fact that much of prepared food is now sold to us with manufactured ingredients as opposed to real food.

They create all these interesting flavor enhancements to tantalize our palates and trigger dopamine responses. But it’s not real food. Nowadays, I check labels to see if what I’m buying has any new and interesting created ingredients.

My wife thinks that the ads with the doctors smoking, saying “Look, I smoke,” apply here as well. Propaganda and disinformation are an art, it seems.

When all is said and done, and the evidence is assimilated after it becomes tragically obvious, I wonder how any of these folks, (scientists cooking with manufactured ingredients) will feel about their contributions?

Best,

John

PS I did a point by point on John Coleman, mainly because I know a lot of people are claiming that because he started the weather channel he must know what he is talking about.

http://www.uscentrist.org/about/issues/environment/john_coleman

I think it relates here also since we are talking about cooking and the weather v. climate debate.

PPS There are some charts in his presentation (the ones about the solar/temp connections) that I could not find references to. If anyone knows their source so I can properly address them, I would appreciate it if you dropped me a note via the contact form on http://www.uscentrist.org.

Re: #36: Raypierre says:

“except to say that over a time scale of “a hundred years”, some heat does mix down to a depth of around 300m, which is why we have “committed warming” in the pipeline”

Ray, your above statement is absurd. Why do you think Willis is looking at annual changes in heat content down to 700 m? Please look at the work by Sidney Levitus and Josh Willis and others. The heat content changes below 300 meters, integrated over the entire ocean, are much, much, much more rapid and significant than you indicate as shown by time series cross sections of the ocean heat content. There is even evidence of annual-decadal heat content changes down to 1500 meters. Please ask Gavin or some of your other team members (or e-mail Josh Willis) about this issue. Your apparent misunderstanding of how dynamic vertical mixing processes manifest themselves in the real ocean compromises your entire analysis. You have a conceptual picture of the ocean as a slowly changing laminar fluid, almost like a stratified geologic formation. Shallow waves and conductive transfer of heat are not the only processes that ventilate the ocean. Turbulence accomplishes great and mighty things. Also, please post my last comment.

[Response: It is not possible to represent deep-ocean heat burial in any faithful way in a single-layer mixed-layer model. I am not claiming that that is the case. The ground rule here is to stick with the model Spencer actually used, and show how he got his result, how much latitude he gave himself for curve-fitting, and how indefensible his parameter choices are within the model limitations he himself chose. Within that framework, assuming global instantaneous mixing of heat down to 1 km is absurd. It’s what enables him to wipe out the unrealistically large interannual short-term fluctuations you would get by assuming a fluctuation in the radiative forcing as large as he assumed. You are making a good simulation of appearing to know what you are talking about, but as usual, you are just contributing to the noise level. –raypierre]

Well, from what I see, the “debate” centers around how warm or cold the atmosphere is. But there’s also the oceans and the land, and changes in those temps should be reflected in talk about overall changes in temps. And I don’t think they are, which is one reason why it’s so easy to keep the debate going. I started on this line of thought after a skeptic on another message board that I frequent stated that because the air is cooler after it rains, that proves that MMGW is a hoax. I don’t get his reasoning, he never could explain it, he obviously didn’t understand the basics of thermodynamics and heat transfer, but a lot of people bought that argument. And I think that’s a result of the undue focus on air temps. I hope that clarifies my point.

Dhogoza, I don’t think you can point the finger at religion–conservative or otherwise–but I have noticed a tendency of many advocates of Intelligent Design to adopt a strong version of the Anthropic Principle in arguments against climate change. In effect they argue that a world designed for human habitation must include negative feedbacks that maintain the state of the planet against our malign influences. This really isn’t a “Christian” idea. Indeed, you get similar arguments from the loons on the left, as well. We have no way of knowing what motivates Spencer. However, such an idée fixe has poisoned the mind of more than one scientist.

It must be an inverse relationship*: NZ sheep numbers are in decline. Dairy cows are where the action is at the moment.

* But of course! We’re south of the equator so it must be inverse. Even the man in the moon is upside down!

[Response: You’re forgetting all the degrees of freedom Roy allows himself by his rules of the game. For example, the arbitrary linear combination of indices allows you to change the sign of indices if it suits your purpose. So, if the sheep population is going down, but you need something going up to do your curve fit, fine: just multiply by a negative number! For that matter, since Roy allows himself to pick any index he wants to do the forcing, if you don’t like sheep try using the growing cow population. In order to fit the 20th century temperature, you only need a combination of indices that is flat (when smoothed out over some tens of years) and then goes up a bit at the end. The end part gives you the recent rise, the flat part gives you the mid-century, and then you get the early-century rise by diddling with the initial condition (assuming a large mixed layer depth). The fact that a fit can be done is completely devoid of content. –raypierre]
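The sign-flipping point can be sketched in a few lines. The numbers below are invented for illustration (they are not sheep counts or temperatures from any dataset): a steadily declining index is fitted to a steadily rising target by ordinary least squares, and the fit simply hands back a negative weight.

```python
def least_squares_line(x, y):
    """Return (a, b) minimizing the sum of (y - (a*x + b))**2."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


index = [10.0, 9.0, 8.0, 7.0, 6.0]   # hypothetical declining index
target = [0.0, 0.1, 0.2, 0.3, 0.4]   # hypothetical rising target

a, b = least_squares_line(index, target)
fitted = [a * xi + b for xi in index]
print("weight:", a, "offset:", b)
print("largest misfit:", max(abs(f - t) for f, t in zip(fitted, target)))
```

The fit is essentially perfect even though the index moves the wrong way, which is why a good fit obtained with a free choice of sign and scale says very little on its own.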

There’s a lot regarding the history of the intelligent design movement available on the web, including the entire transcript of the trial in Dover during which the conservative Bush-appointed federal district judge became convinced that it is nothing more than Old Testament-based creationism with a new label.

This really isn’t open to dispute.

And, in this country, Old Testament creationism is almost entirely a Christian point of view (which is NOT to say most Christians hold that point of view; they don’t).

The phrase “intelligent design” arose as a reaction to a defeat in federal court of an attempt to teach creationism as part of the biology curriculum in the south. The publisher of an upcoming creationist biology text hurriedly replaced all occurrences of “creator” with “intelligent designer”, etc in hopes of being able to get it used in public school biology classes.

This is documented history.

Christy and Spencer are both Southern Baptists, and Christy at least has made clear (in writing meant for public consumption) that his faith and his experience having done missionary work in Africa influence his *political* beliefs regarding the climate change debate (but not his scientific work).

The intelligent design movement is a fundamentalist Christian variant of creationism. When an avowed fundamentalist Christian like Spencer claims that ID proves evolution false, “coincidence” is not the first thought that comes to mind.

Ray, I think Lee Grable’s point is important: The fact that we use the term “global temperature” to mean the average temperature on a two-dimensional surface rather than the three-dimensional ocean plus land plus atmosphere system of the earth has the potential to allow confusion. It isn’t necessarily obvious to the layman that a one- or two-year decline in measured mean surface temperature does not imply that the three-dimensional earth has cooled. Also, it doesn’t help that climatologists often use sloppy language when talking about this (which happens, of course, in any discipline where shorthand expressions are substituted for lengthy, but precise, formulations). Unfortunately, the answer to Grable’s question is “No, we can’t measure the heat content of the entire earth.”

[Response: But if you could measure the heat content of the entire fluid envelope of the Earth, how would that help you address Grable’s issue? Grable said something about a “biosphere,” which is what triggered my request for clarification. I would claim that the surface temperature — which is a comparatively easy thing to measure — is a relevant test of climate physics because a lot of the ocean response is indeed determined by the relatively shallow mixed layer. Naturally, one can do better with measurements of subsurface ocean temperatures and glacier volume (which affects latent heat content of the Earth), but the surface temperature does pretty well for a start. –raypierre]

Raypierre states:

” Is this something that should lead us to doubt model predictions of global warming? No — it is just part and parcel of the same old question of whether the pattern of the 20th and 21st century can be ascribed to natural variability without the effect of anthropogenic greenhouse gases. The IPCC, among others, nailed that,…”

Among the others who have definitely nailed that are:

http://www.cgd.ucar.edu/ccr/publications/meehl_additivity.pdf

Look especially at their figure 2(d). In order to reproduce observed climate, both anthropogenic and natural effects have to be taken into account. Natural effects alone fall well short of observations.

Raypierre also says: “this is not an exacting recipe: it’s hash — or Hamburger Helper — not soufflé…”

It might also be twenty four blackbirds baked in a pie. They seem to be trying to feed us crow.

It is a fundamental principle of science, perhaps first enunciated by Leibniz in Section 6 of his Discourse on Metaphysics, that a theory must be simpler than the data it explains. Thou shalt not over-fit.

I was trying to count parameters.

We’ve got two lesson 1 parameters (two weights but which sum to one, plus the ad hoc scaling factor making a total of two free parameters), then we’ve got one lesson 2 parameter (the mixed layer depth) and one lesson 3 parameter, the initial temperature anomaly, making a grand total of 4 free parameters plus he got to choose the data sets that he drove this model with in the first place. Did I miss any?

“What value does Roy use for the mixed layer depth? One kilometer. To be sure, on the centennial scale, some heat does get buried several hundred meters deep in the ocean, at least in some limited parts of the ocean. However, to assume that all radiative imbalances are instantaneously mixed away to a depth of 1000 meters is oceanographically ludicrous.”

Ray, let’s talk some science. I have not personally insulted you, so I would ask for the same courtesy. Your above statement deals with choosing a correct mixed layer value to input into this simple model. Presumably, your choice is driven by observational oceanography, no? (since that is obviously what you are referring to above) Can you document from the literature why you are closer to correct in choosing 50 meters, than Spencer is in choosing 1000 meters? That is a big difference, and I want to find out who is closer to being correct. It is quite interesting that on annual time-scales, quite rapid changes in ocean heat storage can be observed down past 700 meters, as pointed out by the Willis paper reference. Willis has even stated that a smaller, but still possibly significant fraction of the annual to decadal heat content variability takes place below 700 meters. You make a big deal about “instantaneous”, but even mixing in the upper 50 meters is not “instantaneous”, so it really boils down to how fast heat gets mixed to deep layers. Disqualifying his model on the “instantaneous” argument is a strawman. You have made a direct unsupported statement that Spencer’s model is flatly wrong in his choice of a mixing depth. I believe it’s you who may be wrong, and I challenge you to back up your hypothesis.

I also ask that Gavin drop in on this issue, and report to us what the coupled models depict on mixing depths. It seems to me that they must show deeper mixing than 50 m, since there is not enough mass in the upper 50 meters of ocean to account for the annual heat storage changes that are implied by observations for the full integrated 700 meter volume of ocean.

Also, in my comment you chose not to post, I pointed out that the Wielicki graph clearly shows net TOA annual radiative fluxes of more than 1 W/m2. I also asked you flatly if the Wielicki graph in green (“net TOA anomaly”) is reporting the same quantity as the red graph labeled “top of atmosphere radiative anomaly”?

There have been some serious charges leveled against Spencer here, so let’s put your analysis under the microscope and see how it stands up to closer examination.

[Response: Don’t worry about insulting me. In this business you can’t be too thin-skinned. I’ve been insulted by better than you and lived to tell the tale. Ask Ram about the “spherical mouse” sometime.

As for Wielicki, I’m not sure which red graph you are referring to, but if you mean the one at the top of the three-panel graph from the corrected version of the Science article, that is labeled “LW,” which, as I said in my response to Spencer Weart, is the TOA longwave radiative forcing. The one below that (“SW”) is the shortwave (solar) radiative forcing. “NET” is the sum, and is the green curve at the bottom, which is much smaller than the individual components, because of the substantial cancellation between longwave and shortwave components. Take note of the time scale. A lot of the remaining variability you see averages out over the annual cycle, and as I said, if you just take the part that actually correlates with PDO and SOI, you’d get an even smaller radiative forcing coefficient. You are welcome to try something similar with global radiative forcing fluctuation, but if you do it will be rather tricky to isolate the cloud effect, since you have the snow and ice albedo effect to deal with then, which are largely temperature-related feedbacks. Now, if you meant the other red curve, in the graph below, that is not from data at all. That is what you would get from Roy’s combination of the PDO and SO indices, using his scaling factor, if you don’t hide the amplitude by taking a five-year running mean. As you noted, that graph has very large fluctuations, which was my point. It’s way out of line with Wielicki’s NET curve.

Now, on to the interesting issue of heat transport to the deep ocean. Your problem is that you are trying to shoehorn the whole ocean into a mixed-layer picture. What makes the mixed layer the mixed layer is that wind-driven and buoyancy-driven turbulence mixes heat almost instantaneously, making it act as an isothermal slab. That allows you to compute the thermal inertia without having to deal with the transport explicitly. Large scale ocean currents do not work that way. There is another characteristic time scale, which is the time for transport of fluid from the surface waters into the deep ocean. While there can be individual convective plume events that go deep and quickly, these have to be weighted by the volume of ocean water involved. The reason I say that a global 1 km mixed layer is oceanographically absurd is that all sorts of well-observed facets of the ocean go haywire if you assume a mixed layer to that depth: seasonal cycle, C14, CFCs, and for that matter the rate of removal of CO2 from the atmosphere. Moreover, ENSO would go away if you mixed the tropical ocean that deeply. Now, for some purposes, if you were looking at the very long term response to a very slowly varying radiative forcing, you might get away with treating the ocean with a deeper equivalent mixed layer. But that is not what is at issue here: Roy is forcing the model with an artificially pumped-up radiative forcing with a lot of short-term variability, but is then artificially suppressing the short-term response by mixing it away to 1 km instantaneously.

Now, as to “accusations,” all I’ve done is show you how Roy got his graph, and show you the number of adjustable knobs he allowed himself to twiddle in order to get it. If you want to defend his parameter choices over mine, fine and good luck to you. –raypierre]
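The suppression of short-term response by a deep slab, as described in the response above, can be sketched with the simplest one-layer model, C dT/dt = F0 cos(ωt) − λT, whose steady-state temperature amplitude is F0 / sqrt(λ² + (Cω)²). The forcing amplitude `F0` and damping coefficient `LAMBDA` are assumed illustrative values, not numbers taken from Spencer’s model or from the post.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600
RHO, CP = 1025.0, 3990.0  # round-number seawater density and specific heat
LAMBDA = 2.6              # ASSUMED damping coefficient, W/(m^2 K)
F0 = 1.0                  # ASSUMED forcing amplitude, W/m^2


def response_amplitude(depth_m, period_years):
    """Steady-state amplitude (K) of C dT/dt = F0*cos(w*t) - LAMBDA*T."""
    heat_capacity = RHO * CP * depth_m                      # J/(m^2 K)
    omega = 2 * math.pi / (period_years * SECONDS_PER_YEAR)  # rad/s
    return F0 / math.sqrt(LAMBDA ** 2 + (heat_capacity * omega) ** 2)


for period in (1, 3, 30):
    shallow = response_amplitude(50, period)
    deep = response_amplitude(1000, period)
    print(f"period {period:2d} yr: 50 m -> {shallow:.4f} K, "
          f"1000 m -> {deep:.4f} K, ratio {deep / shallow:.3f}")
```

In this sketch the 1000 m slab damps the fast fluctuations far more strongly than the 50 m slab, while both approach F0/λ for very slow forcing. That is the sense in which a deep slab hides an oversized short-term forcing.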

John E. Pearson–a quotation.

“Give me 4 parameters, and I will fit an elephant; five, and I will make him wiggle his trunk!”–John von Neumann
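The over-fitting worry can be made concrete with a small sketch: a cubic polynomial has four free coefficients, so it passes exactly through any four data points with distinct x values, whatever they are. The points below are arbitrary made-up numbers, and Lagrange interpolation is just one convenient way to evaluate that cubic.

```python
def lagrange_eval(xs, ys, x):
    """Evaluate at x the unique polynomial of degree len(xs)-1 through (xs, ys)."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        term = yi
        for j, xj in enumerate(xs):
            if j != i:
                term *= (x - xj) / (xi - xj)
        total += term
    return total


xs = [0.0, 1.0, 2.0, 3.0]    # four arbitrary sample locations
ys = [0.3, -1.2, 4.0, 0.5]   # four arbitrary "observations"

residuals = [lagrange_eval(xs, ys, x) - y for x, y in zip(xs, ys)]
print(residuals)
```

A zero-residual fit here carries no information about whether the cubic means anything, which is the point of counting parameters before being impressed by a fit.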

RayPierre, You have confused me. You state that “the satellite data set confirms that the climate is warming” and send us to the RSS/MSU website http://www.remss.com/msu/msu_data_description.html#msu_amsu_trend_map_tlt

However, the average temperature change of the four channels is MINUS 0.002 K/decade over the past 29 years. The greatest increase is the TLT channel at 0.175 K/decade (1.75 K/century). “Warming” should indicate an ongoing process. But none of the channels show any warming since 1998. For a process that occurred in the past but is not currently happening, the past tense “warmed” is more appropriate.

[Response: You are being deliberately obtuse. The satellites track the GISS surface temperature record and reproduce the trend. If you want to know why your “global warming stopped in 1998” meme is nonsense, just go check our earlier posts on the subject. –raypierre]

[Response: It’s worth adding that averaging the four channels makes no sense whatsoever. The stratosphere is involved in TMT, TTS and TLS and that is cooling because of ozone depletion and increasing CO2. – gavin]