Over the last couple of months there has been much blog-viating about what the models used in the IPCC 4th Assessment Report (AR4) do and do not predict about natural variability in the presence of a long-term greenhouse gas related trend. Unfortunately, much of the discussion has been based on graphics, energy-balance models and descriptions of what the forced component is, rather than the full ensemble from the coupled models. That has led to some rather excitable but ill-informed buzz about very short time scale tendencies. We have already discussed how short term analysis of the data can be misleading, and we have previously commented on the uncertainty in the ensemble mean being confused with the envelope of possible trajectories (here). The actual model outputs have been available for a long time, and it is somewhat surprising that no-one has looked specifically at them given the attention the subject has garnered. So in this post we will examine directly what the individual model simulations actually show.

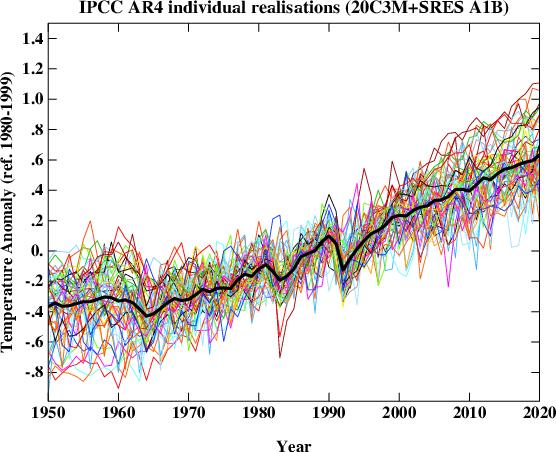

First, what does the spread of simulations look like? The following figure plots the global mean temperature anomaly for 55 individual realizations of the 20th Century and their continuation for the 21st Century following the SRES A1B scenario. For our purposes this scenario is close enough to the actual forcings over recent years for it to be a valid approximation to the simulations up to the present and probable future. The equal weighted ensemble mean is plotted on top. This isn’t quite what IPCC plots (since they average over single model ensembles before averaging across models) but in this case the difference is minor.
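The difference between the two averaging conventions can be sketched in a few lines of Python. The model names, run counts and anomaly values below are made up purely for illustration; they are not taken from the AR4 archive.

```python
from statistics import mean

# Hypothetical anomalies (deg C) for a single year. Keys are made-up model
# names; values are the individual realizations available for that model.
runs_by_model = {
    "model_a": [0.40, 0.50, 0.60, 0.50, 0.50],  # five realizations
    "model_b": [0.80],                           # a single realization
    "model_c": [0.30, 0.50],                     # two realizations
}

def equal_weighted_mean(runs_by_model):
    """Average over every realization, so models with more runs count more."""
    all_runs = [x for runs in runs_by_model.values() for x in runs]
    return mean(all_runs)

def model_weighted_mean(runs_by_model):
    """Average each model's runs first, then average across models."""
    return mean([mean(runs) for runs in runs_by_model.values()])

run_mean = equal_weighted_mean(runs_by_model)    # convention used in this post
model_mean = model_weighted_mean(runs_by_model)  # per-model averaging first
```

The two numbers differ only when models contribute unequal numbers of runs and also disagree with each other, which is why the choice matters little for a large ensemble.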

It should be clear from the above plot that the long term trend (the global warming signal) is robust, but it is equally obvious that the short term behaviour of any individual realisation is not. This is the impact of the uncorrelated stochastic variability (weather!) in the models that is associated with interannual and interdecadal modes in the models – these can be associated with tropical Pacific variability or fluctuations in the ocean circulation for instance. Different models have different magnitudes of this variability that span what can be inferred from the observations, and in a more sophisticated analysis you would want to adjust for that. For this post however, it suffices to just use them ‘as is’.

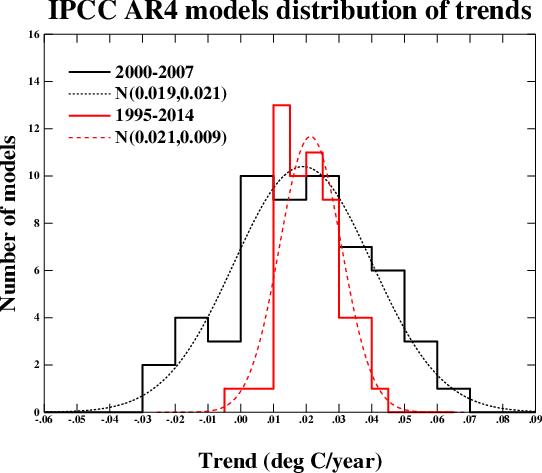

We can characterise the variability very easily by looking at the range of regressions (linear least squares) over various time segments and plotting the distribution. This figure shows the results for the period 2000 to 2007 and for 1995 to 2014 (inclusive) along with a Gaussian fit to the distributions. These two periods were chosen since they correspond with some previous analyses. The mean trend (and mode) in both cases is around 0.2ºC/decade (as has been widely discussed) and there is no significant difference between the trends over the two periods. There is of course a big difference in the standard deviation – which depends strongly on the length of the segment.
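A minimal sketch of this kind of calculation, assuming synthetic data: each "realization" here is a 0.2ºC/decade linear trend plus white noise with a made-up standard deviation of 0.1ºC, standing in for the archived model output. The function names and the noise level are illustrative assumptions, not the procedure used for the figure.

```python
import random
from statistics import mean, stdev

def ols_trend(years, temps):
    """Least-squares slope of temps against years, in deg C per year."""
    y_bar, t_bar = mean(years), mean(temps)
    cov = sum((y - y_bar) * (t - t_bar) for y, t in zip(years, temps))
    var = sum((y - y_bar) ** 2 for y in years)
    return cov / var

def synthetic_run(years, rng, trend=0.02, noise_sd=0.1):
    """Toy realization: linear forced trend plus white noise (an assumption;
    variability in real model runs is autocorrelated)."""
    return [trend * (y - years[0]) + rng.gauss(0.0, noise_sd) for y in years]

def trend_distribution(start, end, n_runs=55, seed=0):
    """Trends (deg C/decade) over start..end inclusive for n_runs toy runs."""
    rng = random.Random(seed)
    years = list(range(start, end + 1))
    return [10.0 * ols_trend(years, synthetic_run(years, rng))
            for _ in range(n_runs)]

short = trend_distribution(2000, 2007)   # 8-year segments
long_ = trend_distribution(1995, 2014)   # 20-year segments
print(f"8 yr:  mean {mean(short):.2f}, sd {stdev(short):.2f} C/dec")
print(f"20 yr: mean {mean(long_):.2f}, sd {stdev(long_):.2f} C/dec")
```

Even in this toy setup the mean trend is similar for both segment lengths while the spread shrinks sharply for the longer one, which is the qualitative behaviour described above.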

Over the short 8 year period, the regressions range from -0.23ºC/dec to 0.61ºC/dec. Note that this is over a period with no volcanoes, and so the variation is predominantly internal (some models have solar cycle variability included which will make a small difference). The model with the largest trend has a range of -0.21 to 0.61ºC/dec in 4 different realisations, confirming the role of internal variability. 9 simulations out of 55 have negative trends over the period.

Over the longer period, the distribution becomes tighter, and the range is reduced to -0.04 to 0.42ºC/dec. Note that even for a 20 year period, there is one realisation that has a negative trend. For that model, the 5 different realisations give a range of trends of -0.04 to 0.19ºC/dec.

Therefore:

- Claims that GCMs project monotonic rises in temperature with increasing greenhouse gases are not valid. Natural variability does not disappear because there is a long term trend. The ensemble mean is monotonically increasing in the absence of large volcanoes, but this is the forced component of climate change, not a single realisation or anything that could happen in the real world.

- Claims that a negative observed trend over the last 8 years would be inconsistent with the models cannot be supported. Similar claims that the IPCC projection of about 0.2ºC/dec over the next few decades would be falsified with such an observation are equally bogus.

- Over a twenty year period, you would be on stronger ground in arguing that a negative trend would be outside the 95% confidence limits of the expected trend (the one model run in the above ensemble suggests that would only happen ~2% of the time).

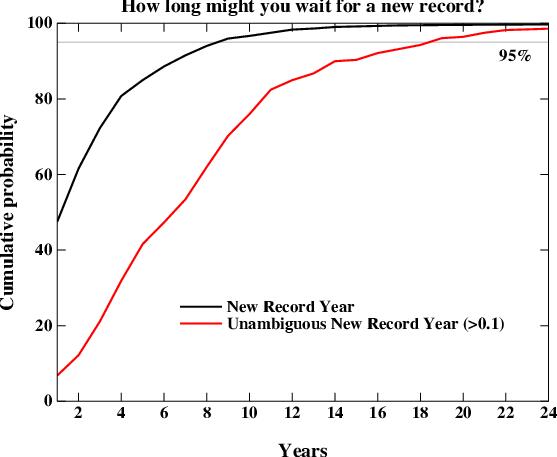

A related question that comes up is how often we should expect a global mean temperature record to be broken. This too is a function of the natural variability (the smaller it is, the sooner you expect a new record). We can examine the individual model runs to look at the distribution. There is one wrinkle here though which relates to the uncertainty in the observations. For instance, while the GISTEMP series has 2005 being slightly warmer than 1998, that is not the case in the HadCRU data. So what we are really interested in is the waiting time to the next unambiguous record i.e. a record that is at least 0.1ºC warmer than the previous one (so that it would be clear in all observational datasets). That is obviously going to take a longer time.

This figure shows the cumulative distribution of waiting times for new records in the models starting from 1990 and going to 2030. The curves should be read as the percentage of new records that you would see if you waited X years. The two curves are for a new record of any size (black) and for an unambiguous record (> 0.1ºC above the previous, red). The main result is that 95% of the time, a new record will be seen within 8 years, but that for an unambiguous record, you need to wait for 18 years to have a similar confidence. As I mentioned above, this result is dependent on the magnitude of natural variability which varies over the different models. Thus the real world expectation would not be exactly what is seen here, but this is probably reasonably indicative.
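The waiting-time bookkeeping can be sketched as follows. This is an illustrative reading of the method, not the code behind the figure: the series is invented, the first value is assumed to set the initial record, and the function names are hypothetical.

```python
def record_waiting_times(temps, margin=0.0):
    """Years elapsed between successive records in an annual series.

    A new record is a value exceeding the current record by more than
    `margin`. The first value sets the initial record (an assumption).
    """
    waits = []
    record, last_idx = temps[0], 0
    for idx in range(1, len(temps)):
        if temps[idx] > record + margin:
            waits.append(idx - last_idx)
            record, last_idx = temps[idx], idx
    return waits

def cumulative_fraction(waits, max_wait):
    """Fraction of waits that are <= x years, for x = 1..max_wait."""
    n = len(waits)
    return [sum(w <= x for w in waits) / n for x in range(1, max_wait + 1)]

# Made-up annual anomalies (deg C), not model output.
series = [0.30, 0.25, 0.35, 0.33, 0.50, 0.52, 0.48, 0.45, 0.61, 0.58]
any_record = record_waiting_times(series)                # any new record
clear_record = record_waiting_times(series, margin=0.1)  # > 0.1 C above previous
```

Pooling the waits from many runs and passing them to `cumulative_fraction` gives curves of the kind shown in the figure; requiring a margin can only lengthen the waits.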

We can also look at how the Keenlyside et al results compare to the natural variability in the standard (uninitialised) simulations. In their experiments, the decadal means of the periods 2001-2010 and 2006-2015 are cooler than 1995-2004 (using the closest approximation to their results with only annual data). In the IPCC runs, this only happens in one simulation, and then only for the first decadal mean, not the second. This implies that there may be more going on than just tapping into the internal variability in their model. We can specifically look at the same model in the uninitialised runs. There, the differences for the first decadal mean span the range 0.09 to 0.19ºC – significantly above zero. For the second period, the range is 0.16 to 0.32ºC. One could speculate that there is actually a cooling that is implicit to their initialisation process itself. It would be instructive to try some similar ‘perfect model’ experiments (where you try and replicate another model run rather than the real world) to investigate this further though.

Finally, I would just like to emphasize that for many of these examples, claims have circulated about the spectrum of the IPCC model responses without anyone actually looking at what those responses are. Given that the archive of these models exists and is publicly available, there is no longer any excuse for this. Therefore, if you want to make a claim about the IPCC model results, download them first!

Many thanks to Sonya Miller for producing these means from the IPCC archive.

Martin Vermeer (341) — I just read Gavin’s replies, not what Gerald Browning writes. :-)

That is what this site is all about. Character assassination.

My credentials speak for themselves you [edit]

Jerry

[Response: ok, that sort of name calling (edited out for the benefit of our readers) earns you a permanent ban. you are no longer welcome here at RealClimate. please take it elsewhere. -moderator]

#345 — Ray, what causal conclusions are warranted in science in the absence of a falsifiable theory?

For me, the answer to that is ‘none.’ How about for you? Can we just decide what is causally true, by reference to inner certainty? Can we use inductive inferences to conclude about physical causes? If so, then how do we choose among conflicting inferences?

You offer to take back your “insidious motives” charge if I will agree to violate the basic construct of science, namely to put aside the standard of objective judgment regarding causes and agree to a conclusion that is not warranted by a falsifiable theory. I can’t ethically do that.

Climate models include virtually everything we can presently know about climate. If our conclusions regarding climate are “not at all contingent on the results of climate models,” then on what should they be contingent? On personal subjective judgment, perhaps?

Look at Figure 2 in the Skeptic article. The line from the simple model amounts to an upper limit estimate of enhanced CO2 surface temperature warming, because all the CO2 forcing ends up in the atmosphere in that model. The fact that it reproduces all 10 GCM trend lines across 80 years, minimally, means that in all of that 80 years in the GCMs none of the CO2 forcing is being diverted into warming the oceans, melting the ice caps, or reducing sea ice in the Arctic, etc. How reasonable is that?

R. W. Spencer, et al., (2007) “Cloud and radiation budget changes associated with tropical intraseasonal oscillations” GRL 34, L15707 1-5, report interactions between clouds, precipitation and longwave radiative cooling that tend to dump excess heat off into space. This effect is not included in models. It seems to me far premature to say that climate theory is “settled.”

That being true, then how is it possible, within the demands of science and its standard of objective judgment, to make a conclusion that, “the evidence is quite strong.“? In science, evidence takes its meaning from theory, and theory only allows conclusions when it’s falsifiable.

The point of my article is simple. If the uncertainty is much larger than the effect, then the effect is undetectable. This seems to be a very basic principle of experimental science. Do you disagree?

[Response: Commenting on this caricature of an outline of a silhouette of an argument is probably an exercise in futility, but here goes. First off, if you think that the entirety of climate science outside of GCMs is non-falsifiable you are an idiot. Thus your first point is simply diversionary rhetoric, as is your citation of Spencer’s paper. In your opinion, what does the MJO have to do with climate change, or your linear fit to GCM results? Is it included? As for your pop-philosophy of science, I’d suggest that your linear model which suggests that GCMs should have a +/- 100 deg C spread in global mean temperatures after 100 years of simulation has in fact been falsified already since GCMs don’t actually do that. Therefore, your ‘theory’ should be discarded. Though I’m sure you’ll find a reason not to. – gavin]

Re #339: Gavin, please have a look at the Wijffels PPT presentation (slide 18) from the March 2008 NOAA XBT Fall Rate Workshop http://www.aoml.noaa.gov/phod/goos/meetings/2008/XBT/index.php.

Even after the fall rate corrections are applied, it still looks to me like most of the models are significantly overestimating the volcanic forcing of Agung, Chichon, and Pinatubo relative to the ocean heat observations. The other point I take from this workshop is that the XBT bias correction is still not agreed upon, and this whole issue looks like a mess. The Wijffels correction increases the rate of long-term warming, the Levitus correction keeps it the same, and the Gouretski correction shows a substantial decrease in the trend.

[Response: There’s some stuff in press that goes into more detail. A little more patience is unfortunately required. – gavin]

Pat Frank (353) — The philosophy, or better, the methods, of science have advanced far beyond the falsifiability notions so ably (and importantly) espoused by Karl Popper. Philosophers of science have treated some of the methods of science rather formally. One could do worse than to begin with

http://plato.stanford.edu/entries/logic-inductive/

but I certainly also recommend “Probability Theory: The Logic of Science” by the late E.T. Jaynes.

re #353

Pat Frank, there’s a fundamental logical error in your statement:

“Climate models include virtually everything we can presently know about climate. If our conclusions regarding climate are ‘not at all contingent on the results of climate models,’ then on what should they be contingent? On personal subjective judgment, perhaps?”

Of course our conclusions regarding climate are not contingent on the results of climate models. To suppose that they are is to get things back to front. A more appropriate statement is that our climate models are contingent on our understanding of the climate system.

I’ve tried to consider this argument by analogy with protein folding modelling which I’m more familiar with, a simulation effort that shares many of the features of climate simulations (it attempts to parameterize a large number of contributions (forces) to the folded state of proteins according to a vast body of independent research on the nature and contributions of these forces, and to encode these within a sophisticated model…it makes use of the most powerful computers available…and so on).

We could take our version of a protein folding algorithm and attempt to “fold” human haemoglobin according to the known amino acid sequence of the protein. We might or might not be successful (probably not, in fact). Are our conclusions regarding protein folding, or the conformation of haemoglobin, contingent on the results of our folding model? Of course not.

What is contingent on the results of a model? The essential contingent element is described by the tautology that what is contingent on the results of our model is our confidence in our ability to simulate certain elements of the target system according to parameterization of a number of components whose contributions/interactions have been determined by a large body of independent research….

And when we address this in the real world, we find that climate models do a pretty good job (at least in terms of global average temperatures, atmospheric water vapour, response to volcanic eruptions, warming induced changes in rainfall patterns…), probably because the large body of independent research that impacts on the parameterization of our model, and on which our understanding of the climate system is contingent is reasonably well founded.

Pat Frank, Thanks for the offer, but I’ll stick with physics. There are a lot of wrong ways to calculate errors, but I’ve never found them particularly enlightening. Moreover, to say that one cannot detect a signal against a noisy background depends on the characteristics of both the signal and the noise.

As to falsifiability–that is easy. All you have to do is construct a physical, dynamical model that does a better job without relying on enhanced greenhouse warming due to human activity. Let us know how that goes. Nobody else seems to be having much luck with it–hence my argument that that much of the science is settled.

And as David Benson has pointed out, philosophy has progressed a long way beyond Popper.

Gavin, will you consider letting Jerry back in if he’s medicated?

#353 — Gavin wrote: “[Response: Commenting on this caricature of an outline of a silhouette of an argument is probably an exercise in futility, but here goes. First off, if you think that the entirety of climate science outside of GCMs is non-falsifiable you are an idiot. Thus your first point is simply diversionary rhetoric, as is your citation of Spencer’s paper. In your opinion, what does the MJO have to do climate change, or your linear fit to GCM results? Is it included? As for your pop-philosophy of science, I’d suggest that your linear model which suggests that GCMs should have a +/- 100 deg C spread in global mean temperatures after 100 years of simulation has in fact been falsified already since GCMs don’t actually do that. Therefore, your ‘theory’ should be discarded. Though I’m sure you’ll find a reason not to. – gavin]”

I’ll ignore the lurid preface. Gavin, please notice that I never suggested that the entirety of climate science outside of GCMs is non-falsifiable.

If you really want to know the relevance of the results of Spencer ea to climate change, why not ask him to post an essay on it here? On the other hand, Spencer ea write that, “The increase in longwave cooling is traced to decreasing coverage by ice clouds, potentially supporting Lindzen’s “infrared iris” hypothesis of climate stabilization. These observations should be considered in the testing of cloud parameterizations in climate models, which remain sources of substantial uncertainty in global warming prediction.” Towards the end of the paper, they specifically state, “While the time scales addressed here are short and not necessarily indicative of climate time scales, it must be remembered that all moist convective adjustment occurs on short time scales.” That all seems pretty relevant.

Next, I didn’t make a linear fit to GCM results. The linear model is an independent calculation involving surface temperature, constant relative humidity, and GHG forcing. It faithfully mimicked GCM surface temperature projections across 80 years, however.

Next, the uncertainty widths were not a representation of what a GCM might project for mean future surface temperature. I was very careful to point this out explicitly in the article. It’s a matter of resolution, not specified mean.

Penultimately, the linear model isn’t a theory. It was constructed to test what GCMs project for surface temperature. In the event of the actual test, it did a good job.

Finally, in view of #352 and “you are an idiot,” shouldn’t you ban yourself from RealClimate? :-)

#355 David Benson, thanks for the pointer. :-) I’ll check it out. I’ve been reading David Miller’s “Critical Rationalism.” What do you think of his work?

It would probably be more useful if he were let back in unmedicated. While I appreciate the unwillingness to let vile comments slide by (the name calling etc that’s been edited out), I have to wonder if editing them does more to reduce the negative impact on his reputation and the willingness to take him seriously, than it does to enhance the effort of this blog to maintain a cordial atmosphere for disagreement.

While I haven’t looked, I’m sure that Jerry’s claiming he’s been banned for having proved all of climate science wrong, that the editing of his rude comments amounts to “censorship by the anti-science establishment”, etc etc.

In other words, he’s going to do the best to martyr himself and benefit by the edits and by being banned.

This thread has brought out the denialists in force; in fact, it’s brought out the angry in them.

Because computer models are a mystery to the general population, they’re a favorite target of denialists. But the fact that the models actually work threatens to rob them of this tactic; in fact, they fear that their favorite target is slipping through their fingers.

That’s why it’s no surprise that they’re so desperate to salvage the claim that models are useless. If even computer models of climate are understood to be realistic, how can they hope to convince people that fundamental physics and observed data aren’t harbingers of things to come?

Apparently they think it’s on the level of forcing Elliot Ness to use Grand Theft Auto to predict future crime.

Gavin,

You made a comment in reply to G. Browning: “Weather and climate models clearly are… “. I thought I understood that climate was not chaotic. Did you mean the climate models are chaotic, but climate isn’t, or, if you meant that climate is chaotic, how is it so? Thanks…

[Response: Climate models include the weather as well. Initial value tests with either type of model show sensitivity to initial conditions – therefore the individual path through phase space is chaotic. But when you look at the climate of these models averaged over a long time, or equivalently over a large number of ensembles, the statistics are stable – the global mean temperature, wind patterns and their variability etc. Thus the model climate is not chaotic. – gavin]
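The distinction between a chaotic path and stable statistics can be illustrated with a deliberately simple stand-in. The sketch below uses the logistic map, a textbook chaotic system that has nothing to do with any climate model; the parameter value, starting points and run length are arbitrary choices made for illustration.

```python
def logistic_orbit(x0, n_steps, r=3.9):
    """Iterate the logistic map x -> r * x * (1 - x), a standard chaotic toy."""
    xs = [x0]
    for _ in range(n_steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

n_steps = 200_000
run_a = logistic_orbit(0.2, n_steps)
run_b = logistic_orbit(0.2 + 1e-10, n_steps)  # tiny change in initial state

# "Weather": largest separation of the two paths over the first 200 steps.
max_gap_early = max(abs(a - b) for a, b in zip(run_a[:200], run_b[:200]))

# "Climate": long-run averages of the two paths.
mean_a = sum(run_a) / len(run_a)
mean_b = sum(run_b) / len(run_b)
```

In this toy the two paths become completely different early on, while their long-run averages stay close to each other, which is the sense in which individual trajectories are chaotic but the statistics are not.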

re 350. Tamino makes a good point about the usefulness of imperfect models to make predictions. That’s probably a wise turn for this discussion to take. Ordinarily when one builds a model, one would specify in advance which measures of merit or which predictions will be used to judge the model. Then one collects observations and checks the model’s measures of merit or predictions against observations. In the case where you are making multiple predictions, cases will obviously arise in which some predictions are more accurate than others. The less accurate predictions don’t “falsify” the model; they suggest areas for improvement. And some predictions are more important than others. In the case of GCMs, it would seem (weigh in and disagree) that the key predictions, in no particular order, would be:

1. sea level.

2. Precipitation.

3. GSMT.

I’d select these three primarily because it would appear that they have the most easily quantified impact on the human species. Other suggestions are of course welcome.

Next comes the question of how we assess the usefulness of the prediction. This means specifying a test in advance of our prediction and specifying an amount of data that we need to acquire before a solid evaluation of the model’s usefulness can be made.

experimental design.

Can we assess models after 5 years of data? 10? 20? Open question.

At what point in time can we assess the skill of AR4 models?

How long must we collect data before we judge the usefulness of the model?

The latter question, of course, begs the question of “useful for what” and the question of “useful for whom”. A model prediction of sea level rise may be useful for the Army Corps of Engineers working in NOLA, but useless for Tibetan monks. Is regional usefulness more important than global usefulness?

Lots of questions. Start simply. What are the three most critical, most useful “predictions” that GCMs make?

Gavin. Thank you for your earlier comments. And Marcus – I think I must have been posting when your comment came in.

I still have several questions arising but I will think a bit before asking them. Just one now. What is the correct estimate of the “instantaneous” effect of methane (i.e. before any decay) compared to CO2. I calculated it earlier to be about 180 times CO2. I used numbers from Wikipedia (http://en.wikipedia.org/wiki/Greenhouse_gas):

0.48(Wm-2CH4)/1.46(Wm-2CO2)*384(ppmCO2)/1,745(ppbCH4)*44(CO2)/16(CH4)

Actually this gives 199 times CO2. Is it really as high as this? Where have I gone wrong? I hope any mistake isn’t too simple.
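For reference, the arithmetic in the expression above can be checked step by step. The values are exactly those quoted in the comment; the variable names are only labels chosen here, and the snippet says nothing about whether the approach itself is sound.

```python
# Numbers as quoted in the comment (read from the Wikipedia table).
forcing_ch4 = 0.48       # W/m^2
forcing_co2 = 1.46       # W/m^2
conc_co2_ppb = 384e3     # 384 ppm expressed in ppb
conc_ch4_ppb = 1745.0    # ppb
molar_mass_co2 = 44.0    # g/mol
molar_mass_ch4 = 16.0    # g/mol

# CH4 forcing relative to CO2 forcing.
forcing_ratio = forcing_ch4 / forcing_co2
# Scale by the concentration ratio to get a per-molecule comparison.
per_molecule = forcing_ratio * conc_co2_ppb / conc_ch4_ppb
# Scale by the molar mass ratio to get a per-unit-mass comparison.
per_mass = per_molecule * molar_mass_co2 / molar_mass_ch4

print(round(per_mass))  # 199
```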

Geoff (#365): Two issues: First, your W/m2 values are relative to preindustrial, not to zero. eg, 1.46 W/m2 of CO2 is from raising CO2 concentrations from 280 to 384. Second, forcing isn’t linear with added gas, so to calculate the forcing from an additional ton of methane compared to an additional ton of CO2 you need to be a bit more sophisticated.

I’d recommend using the forcing approximations from

Hansen, PNAS, 1998.

Hope this helps.

Maybe I missed it somewhere in all of the above, but are the numbers for Sonya Miller’s AR4 global means (presumably the basis for the figures at top) linked somewhere, or could they be? Thanks.

Marcus

The ratio of concentrations 384(ppmCO2)/1,745(ppbCH4) is clearly recent enough to fit my purpose. But is the ratio of radiative forcing 0.48(Wm-2CH4)/1.46(Wm-2CO2) contemporary with it? Perhaps Tom can help on this? I don’t know the primary source – I just interpreted (or misinterpreted) the table on Wikipedia.

I’m not keen on being more sophisticated – I suspect all the sophistication anyone could muster won’t change the answer by more than a few percent.

But thanks.

#356 — Chris, thanks for a thoughtful comment. It seems to me that your premise on my logical error inheres an error of science. That is, models of climate are not independent of our understanding of the climate system, which independence your formulation of my logical error seems to require.

Climate models, like the protein folding model of your example, contain the same physical theory that informs the intermediate conclusions one may derive about processes of smaller scale than those involving the entire system. Not inconsiderable amounts of the physics of each system are known. So, of course one may know things about climate without having a complete theory, just as one may know something about protein folding without a complete theory.

However, still using the protein folding example to illustrate, one may not know why hemoglobin folded along *this* trajectory but not *that* one, when each is equally plausible, unless one has a physical model of protein folding that is falsifiable at the level of trajectory resolution. And if the folding phase space is extremely large, there may be a very large number of equally plausible trajectories, making a choice of any one of them very uncertain.

Without a model that properly and fully included solvent, for example, how could one decide that some particular folding step was driven more by the increased entropy of excluded water, than by the known formation of a couple of localized hydrogen bonds?

Further, if you constructed a computer model of hemoglobin folding using an empirically parameterized and incomplete physical model, and adjusted the multiple parameters to a non-unique set that had your in-silico hemoglobin produce something like the proper folded state, and did so from an initial unfolded state that was rather poorly constrained, how much could the success of that model tell one about the actual trajectory hemoglobin traversed in the U-to-N transition? Very little, I’d hazard. Nor would that model predict anything reliable about the folding of, say, bacterial nitrous oxide reductase (exemplifying another four heme-iron protein). These caveats would be true even if the computed intermediate states of hemoglobin seemed descriptively reasonable.

And if your U-to-N parameters were tuned for refolding in urea solution how much confidence would you grant them to describe the refolding protein in guanidinium chloride?

This is somewhat analogous to the state of uncertainty with climate models, except that the climate state-space is hugely larger than that of folding proteins. Climate models are not falsifiable at the trajectory level. An empirically tuned non-uniquely parameterized model with incomplete physics can’t choose among plausible trajectories and can’t tell us reliable details about the disbursements of relatively minor amounts of energy among coupled feedback systems and within future climates.

The rms cloudiness error described in the Skeptic article is real, and alone produces an uncertainty at least as large as all of the w.v.e. GHG forcing. How are climate models supposed to resolve effects that operate well below the limits of uncertainty?

[Response: This statement is so awesomely wrong, it’s flabbergasting that you still repeat it. You did not show any error propagation in a GCM – you showed it in a toy linear model that is completely divorced from either the GCMs or the real world. Statements you make about GCMs therefore have an information content of zero. – gavin]

Despite your reassurances, the authors of AR4 WGI Chapter 8 are not very sanguine about the ability of present GCMs to predict future climate, and for good reason.

Pat Frank, Your contention that climate models are not falsifiable just doesn’t hold water. First, the complexity of climate models means that you need to be precise about what you seek to falsify. Presumably you are not interested in falsifying every aspect of the model. Indeed, most of the model may be correct. The main question is whether we can falsify the hypothesis of anthropogenic causation–and that is straightforward. All you have to do is construct a physical, dynamical model (no, your toy doesn’t qualify) that excludes this effect and show it accounts for the data at least as well (or as well modulo the number of parameters–per information theory) as the current batch of climate models. As efforts along this track are moribund (and I’m being kind), I would say that current GCMs are safe from falsification for now–not because they are not falsifiable, but because they are the best models we have for now.

BTW, your assertion that a model with incomplete physics will not have predictive power is simply false. In many cases when you do not have an overabundance of data, you are better off assuming a simpler model, rather than one that has poorly constrained parameters.

Finally, I think that you fail to understand the role GCMs play in climate physics. We know climate is changing. We know that all attempts to explain the changes assuming only natural variability have failed. We know that a mechanism that we know to already be operant in the climate–greenhouse forcing–can explain the observed trends quite well. We know from paleoclimate data that much warmer temperatures are possible when CO2 is high. This is sufficient to establish a serious risk–indeed at the outside edge of past variation an unbounded risk that could spell the end of human civilization. The climate models are not essential to establishing the credibility of such a risk. Rather, they are invaluable at bounding the risk so that we have realistic targets for how to allocate mitigation efforts. If you are wary of draconian curtailment of economic activity, the models are your best friend.

#369 — Gavin wrote, “you showed it [uncertainty propagation] in a toy linear model that is completely divorced from either the GCMs or the real world…”

The “toy model” did an excellent job of independently reproducing GCM global average temperature projections. So it manifestly is not completely divorced from them. The real world is another question entirely, and obviously not just for my little model.

[Response: Matching a linear trend is not hard. How does it do on the 20th C, or the mid-Holocene or the LGM or in response to ENSO or the impacts of the NAO or to stratospheric ozone depletion or the 8.2 kyr event or the Eemian….. Just let me know when you’re done. – gavin]

Ah, Geoff, what I meant to say was that even for a crude approach, you’d want to use

(384-280)ppm CO2/(1745-750)ppb CH4 because the forcings you listed are the additional forcing from going from preindustrial (280 ppm and 750 ppb) to present day concentrations.
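As a rough check, the same crude calculation can be redone with the concentration changes since preindustrial in place of the absolute concentrations. The forcings and the 44/16 mass conversion are carried over from Geoff’s expression as an assumption, and, as noted earlier in the thread, forcing is not linear in concentration, so this is a ballpark figure at best.

```python
# Forcings quoted earlier in the thread (relative to preindustrial).
forcing_ch4 = 0.48    # W/m^2
forcing_co2 = 1.46    # W/m^2

# Concentration changes since preindustrial, both expressed in ppb.
delta_co2_ppb = (384.0 - 280.0) * 1e3   # 280 ppm -> 384 ppm
delta_ch4_ppb = 1745.0 - 750.0          # 750 ppb -> 1745 ppb

# Average forcing per added molecule of CH4 relative to CO2.
per_molecule = (forcing_ch4 / forcing_co2) * delta_co2_ppb / delta_ch4_ppb
# Convert to a per-unit-mass comparison using molar masses (44 and 16 g/mol).
per_mass = per_molecule * 44.0 / 16.0

print(f"per molecule: {per_molecule:.1f}, per unit mass: {per_mass:.1f}")
```

With these inputs the per-mass ratio comes out at roughly half of the 199 obtained from the absolute concentrations.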

# 369 Pat Frank

You’ve changed the meaning in your revised statement.

My post was addressing your assertion/question “Climate models include virtually everything we can presently know about climate. If our conclusions regarding climate are ‘not at all contingent on the results of climate models,’ then on what should they be contingent? On personal subjective judgment, perhaps?”

….which is a non sequitur (embellished with a bit of sarcasm!).

In addressing my post, you’ve changed your statement, and no one would disagree with the first part of your revised statement, “That is, models of climate are not independent of our understanding of the climate system…” (that’s obvious). The second part, “which independence your formulation of my logical error seems to require”, is wrong (another non sequitur).

Ray Ladbury made the point several times, but I’ll repeat it. Our conclusions regarding climate are NOT contingent on the results of climate models. However, obviously our climate models ARE contingent on our understanding of the climate system.

Your own “analysis” could be used to illustrate this point. Much of our concern with respect to man-made enhancement of the Earth’s greenhouse effect comes from the well-established understanding (independent of models) that CO2 (and methane and nitrous oxides and CFC’s) is a greenhouse gas, and the semi-empirical relationship (also essentially independent of models – from analysis of the Phanerozoic proxy temperature and CO2 records; from analysis of the temperature evolution during glacial cycles; from analysis of the IR absorption properties of CO2 and an estimation of its “contribution” to the greenhouse effect and so on…) that the Earth responds to enhancement of the greenhouse effect with a warming near 3 °C (+/- a bit) per doubling of atmospheric CO2.

Obviously your comments concerning “accuracy” (in your Skeptic’s piece) are silly and non-scientific. But your simple consideration of a so-called “passive greenhouse warming” model is unsurprisingly consistent with the estimated climate sensitivity (I’m not sure where the “passive” comes in, but if the Earth’s surface warms under the influence of an enhanced greenhouse effect, why not call it “passive”? – we could imagine a frog sitting in a saucepan on a cooker as an analogy perhaps). As the Earth’s greenhouse effect is enhanced, the Earth warms, and (at equilibrium) this warming seems to be near 3 °C (+/- a bit) per doubling of atmospheric CO2 equivalents.
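To make “per doubling” concrete, here is a minimal sketch assuming the usual logarithmic approximation, in which equilibrium warming scales with the base-2 logarithm of the concentration ratio. The function name and defaults are illustrative; the 3 °C sensitivity and the 280 ppm baseline are the figures quoted in this thread:

```python
import math


def equilibrium_warming(co2_ppm, baseline_ppm=280.0, sensitivity_per_doubling=3.0):
    """Equilibrium warming in deg C relative to the baseline concentration.

    Assumes warming is proportional to the number of CO2 doublings,
    i.e. log2(co2_ppm / baseline_ppm).
    """
    doublings = math.log2(co2_ppm / baseline_ppm)
    return sensitivity_per_doubling * doublings


warming_at_doubling = equilibrium_warming(560.0)  # one full doubling of 280 ppm
warming_at_384 = equilibrium_warming(384.0)       # roughly 1.4 deg C at equilibrium
```

This is the eventual equilibrium response under that assumption, not the warming realised to date, which lags because of ocean heat uptake and is also affected by the other forcings.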

That seems to be broadly consistent with the scientific data, and the models of 20th century and contemporary warming (both as hindcasts and forecasts) are consistent with that conclusion. There are a couple of additional conclusions that we might make from the relative success of hindcast and forecast modelling efforts thus far: (i) the oddly non-scientific compounding errors that you postulate don’t actually apply to the climate models under consideration (perhaps those climate modelers actually know what they’re doing!)…and (ii) the feedbacks (especially those associated with atmospheric moisture in all its myriad forms) are acting roughly as has so far been predicted.

#371 — Gavin, I never claimed to be simulating a complete climate model, but just to test the meaning of GCM projections of GHG-driven global average surface temperature.

Matching the linear trend of GCM outputs using a simple model that incorporates only very basic physical quantities is a more serious result than you’re allowing. It’s not a physically meaningless LLS fit. It shows that all one need do to reproduce GCM global average surface temperature projections across at least 80 years is to propagate a linear trend in GHG forcing. Where are the surface temperature effects of all the feedbacks you listed? — ENSO, NAO, PDO, etc.? The coincidence of lines shows that for 80 years at least none of the excess GHG energy goes anywhere except into surface temperature.

[Response: Why not try looking at what the models are doing, because it certainly isn’t that. How can you claim your model reproduces the GCMs when if you looked at any other variable (TOA imbalance, ocean heat content changes), your model would be way off what those same GCMs show? Your model is not physically based despite some random cut and pastes from the literature and corresponds to no conceivable planet, ocean or climate. Its information content is zero. Therefore neither your conclusions about what you think it says about the GCMs, nor what you think it means for the real world have any validity. – gavin]

DO WE NEED AN EMERGENCY STOP?

Marcus thanks. I should have tried harder to understand your post. I’ve now even looked at the equations in AR4 that give estimates for changes in radiative forcing. The CH4/N2O does seem to show more interaction than I expected. But I am working towards an estimate that I can understand for the short term effects of methane.

Because I worry about positive feedbacks, I want to see if there are “emergency stops” to global warming. The current emphases are on periods of several decades or centuries. But could anything be done in the short-term if it were discovered that there is a danger of setting off “climate avalanches” caused by underestimated or unknown feedback mechanisms? Might we need quick fix policies to pause global warming before realistic longer term solutions are put into place?

Is methane reduction a quick fix? I understand cutting anthropogenic methane emissions would have a greater immediate effect than cutting CO2 emissions, even if the effect was not as long-term.

Would methane reduction be a good emergency stop?

#373 Chris — There is no change of meaning from #353 in my statement in #369. The latter post was intended to be, in part, an explanation of #353. Pointing out that, “models of climate are not independent of our understanding of the climate system. (#369),” is merely a way of elaborating the same message as, “Climate models include virtually everything we can presently know about climate. (#353)”

There is no non-sequitur, no change in meaning, and no logical lapse.

The comment in #369 was in response to your remarkable claim that, “Of course our conclusions regarding climate are not contingent on the results of climate models. The notion that they are is to get things back to front. A more appropriate statement is that our climate models are contingent on our understanding of the climate system.”

Sentence 3 in that paragraph supposes that “climate models” (from sentence 1) render conclusions from something other than “our understanding of the climate system” (sentence 3). How can that be? What is the physics of climate except an embodiment of our understanding of climate? What relevant understandings do climate models contain other than the known physics of climate? Your statement in that paragraph makes a distinction (climate models vs. understanding) without a difference.

In that first sentence, you are supposing that it is possible to derive conclusions at the level of climate without a theory of climate. This is to fly into the face of the fundamental structure of science, in which the meaning of fact is derived only from a valid theory.

Just to be very clear, it does not take a fully complete falsifiable theory in order to derive subsidiary conclusions. Valid subsidiary theories provide that ability. This is merely an outgrowth of the program of reductionism. I made this point explicitly in my previous post. However, global conclusions require a global theory, in both the generic and the explicit sense of “global.”

You wrote that, “Our conclusions regarding climate are NOT contingent on the results of climate models.” If, by this, you mean globally predictive conclusions regarding climate are possible without a falsifiable global theory, then I find your claim, as coming from a scientist, incredible.

[Response: This statement has the logical consistency of wet newsprint. Take an analogy: “You wrote that the sky is blue. If by this you mean the moon is made of green cheese, then I find your claim incredible.” This (and your version above) is obvious nonsense. You don’t need to be a philosopher of science to spot that. – gavin]

Your suggestions with regard to, “man-made enhancement of the Earth’s greenhouse effect” are meritless with respect to deriving a global impact from CO2. The reason is that there is no global climate theory. For example, turbulent convection is not at all well-represented in climate models, and turbulent convection plays at least as large a part as radiant transmission in determining surface temperature. This is part of Gerald Browning’s point. The lack of a global theory of climate means that conclusions regarding the global-scale response of climate to minor perturbations are not possible. Further, if you were really as serious about “Phanerozoic proxy temperature” as you represent, you’d concede that where it has been resolved, atmospheric CO2 always lags temperature trends. This does not support CO2 as a climate driver.

[Response: No, CO2 is a greenhouse gas. That’s why it’s a climate driver. – gavin]

Your statement that, “Obviously your comments concerning “accuracy” (in your Skeptic’s piece) are silly and non-scientific.” is unsubstantiated opinion-mongery.

I called the linear model “passive” because it represents a thermal response of atmosphere to GHG forcing absent any climate feedbacks (except constant relative humidity). It did strikingly well reproducing GCM projections. This implies that the GCMs also omit any feedbacks, except constant relative humidity, in calculating global average surface temperature response to GHGs. That is, if any feedbacks were present that diverted GHG forcing energy into climate systems other than the atmosphere, the GCM temperature lines would have diverged from linear and from the passive model line. But across 80 years, they don’t. Given that remarkably unreasonable result, one is nonplussed by your blandly stated confidence in them.

[Response: Confidence is built from success in modelling real events – Pinatubo, mid-Holocene, response to ENSO etc. etc. – If your supposed error propagation was valid how do they do any of that? – gavin]

Further, given the widely acknowledged inability of GCMs to model clouds adequately, your statement that, “the feedbacks (especially those associated with atmospheric moisture in all its myriad forms) are acting roughly as has so far been predicted.(bolding added),” is a wonder to behold.

[Response: The amplifying effect of water vapour and ice albedo feedbacks has been amply demonstrated in the real world. Cloud feedbacks are more uncertain – but pray tell how your linear model (which will no doubt be validated against complex observed climate changes involving clouds any day now) deals with the transition between the shortwave and longwave feedbacks? – gavin]

Finally, if my compounding of an apparently systematic global cloudiness error is “oddly unscientific” why haven’t you posted evidence of a scientific mistake in it?

[Response: You haven’t been paying attention. Your error was pointed out by Tapio Schneider and many others. You confuse an error in the mean with an error in a trend and make up an error envelope that has no connection to anything in the models or the real world. – gavin]
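The general statistical distinction between an error in the mean and an error in a trend can be sketched with a made-up series. This is only an illustration of that distinction; it says nothing about how cloud errors actually behave inside a GCM, and the numbers are invented:

```python
def ols_slope(times, values):
    """Ordinary least-squares slope of values against times."""
    n = len(times)
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    numerator = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    denominator = sum((t - t_mean) ** 2 for t in times)
    return numerator / denominator


# Invented series: a steady rise of 0.02 units per year over 11 years.
years = list(range(2000, 2011))
series = [0.02 * (y - 2000) for y in years]

# The same series with a constant bias added, i.e. an error in the mean.
biased = [v + 1.5 for v in series]

trend_original = ols_slope(years, series)
trend_biased = ols_slope(years, biased)
```

The two slopes agree to rounding error: a constant offset shifts the whole curve but leaves the fitted trend alone. An error would have to grow or shrink over time to show up in the trend.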

Pat Frank, I have never understood why people take pleasure in demolishing a straw man. Climate models have demonstrated their utility and have succeeded in modeling a variety of phenomena–as pointed out repeatedly by Gavin, Chris et al., and yet you insist that this is impossible. I would think that a curious mind might want to learn enough about the models to resolve the seeming contradiction. Yet you persist in clinging to your cartoon version of the models. Strange.

Most puzzling of all is the fact that you attack the climate models. All that is needed to establish the credibility of the threats posed by climate change is the fact that CO2 is an important ghg, the fact that we’ve increased that ghg by 38% (indeed the highest it’s been in over 800000 years), the fact that the planet is warming rapidly and the fact that at least two of the most serious mass extinction events seem to have been due to warming caused by greenhouse gas increases. Climate models are key to limiting risk–not establishing it.

It is virtually impossible to construct a climate model with any predictive power with a CO2 sensitivity much below 3 degrees per doubling. It is virtually impossible to limit sensitivity to less than 6 degrees without a climate model.

Perhaps you had fun constructing your toy model, but you certainly did nothing to advance understanding of climate–your own or anyone else’s.

Pat Frank’s repetition of this old canard …

pretty much allows one to judge his credibility without any further effort.

An example of a real world test of assumptions drawn from a toy model, worth doing now if you never did it as a kid:

http://insects.ummz.lsa.umich.edu/MES/notes/entnote10.html

At least we have the IPCC models… the US Govt National Scientific assessment released today seems to be a sanitized version of the IPCC report. http://www.climatescience.gov/Library/scientific-assessment/

It quotes the IPCC report extensively and borrows the same language.

To be fair, this is just from a quick scan on my part – but I did spot a familiar graph on p 96 of the US report. Same data as the IPCC graph except all the data ranges seem to be adjusted about a half a degree downward.

See for yourself: http://www.climatescience.gov/Library/scientific-assessment/Scientific-AssessmentFINAL.pdf on p 96 vs http://ipcc-wg1.ucar.edu/wg1/wg1-figures.html

To me it looks like a batch of fudge.

[Response: The graph is from IPCC AR4 (Fig SPM 5) and shows the model results for the 20th C and the scenarios going forward. There is no paleo-data at all. – gavin]

> half a degree downward

As I recall the models do suggest North America will warm a bit less than the global average increase, and that US report is supposed to be specifically about effects on the US.

Could be about right.

Except that the chart refers to IPCC as the source.

noted in the upper right corner

#378: dhogaza, you stole my comment! ;-)

#370 — Ray, with respect to an anthropogenic cause for climate warming, all one need do is show a large uncertainty. The cloud error, which is entirely independent of the linear model, fully meets that criterion. In a larger sense, what do non-unique parameterization schemes do to prediction falsification?

I’m not saying an incomplete model has no predictive power. I’m saying that a model must have resolution at the level of the predictions. That criterion is clearly not met with respect to a 3 W m^-2 forcing, as amply demonstrated by the magnitude of the predictive errors illustrated in WGI Chapter 8 Supplemental of the AR4.

In the absence of constraining data, and when the models available are not only imperfect but are imperfect in unknown ways, use of those models may be far more disastrous than statistical extrapolations. The reason is that a poor model will make predictions that can systematically diverge in an unknown manner. Statistical extrapolations allow probabilistic assessments that are unavailable from imperfectly understood and systematically incomplete models.

Any force in your last paragraph requires that all the relevant climate forcings be known, that they be all correctly described, and that their couplings be adequately represented especially in terms of the nonlinear energy exchanges among the various climate modes. None of that is known to be true.

#376 — Gavin, modeling real-world events can only be termed physically successful in science if the model is predictively unique. You know that.

If you look at the preface to my SI, you’ll see mentioned that the effort is to audit GCM temperature projections, and not to model climate. You continually seem to miss this point.

There is no confusion between an error in the mean and an error in a trend, because the cloud error behaves like neither one. Cloud error looks like theory-bias, showing strong intermodel correlations.

#377 — Ray, have you looked at the GCM predictive errors documented in the AR4 WGI Chapter 8 Supplemental? They’re very large relative to GHG forcing. Merely saying that CO2 is a greenhouse gas does not establish cause for immediate concern because the predictive errors are so large there is no way to know how the excess forcing will manifest itself in the real climate.

Just saying the models cannot reproduce the current air temperature trend without a CO2 forcing bias may as well mean the models are inadequate as that the CO2 bias works as they represent.

And in that regard, have you looked at Anthony Watts’ results at http://www.surfacestations.org? It’s very clear from the CRN site quality ratings that the uncertainty in the USHCN North American 20th C surface temperature trend is easily (+/-)2C. Are the R.O.W. temperature records more accurate? If not, what, then, is the meaning of a +0.7(+/-)2 C 20th C temperature increase?

#378 — dhogaza, if the paleo-CO2 and temperature trend sequences were reversed we can be sure you’d be bugling it, canard or no.

Honestly, I see little point in continuing discussion here. I came here to defend my work in the face of prior attacks. It’s not about climate but about a specific GCM audit, no matter Gavin’s attempts to change the focus. Claims notwithstanding, the evidence is that none of you folks have mounted a substantively relevant critique.

[Response: It seems to me that a GCM audit would actually involve looking at GCMs and the uncertainties they have. What you have done is make up a toy model with no structural connection to the real world or the GCMs and then claimed that it has some predictive capability to say something about GCMs. But since it manifestly does not predict any GCM behaviour other than the linear trend in one specific scenario for which it was designed, it should be rejected by the principles of science you claim to support. You can be happy that your theory was falsifiable, and indeed it has been falsified, thereby advancing the sum total of human knowledge. Thanks! – gavin]

This is the acid test (with thanks to Ross McKitrick for the idea that GHG emission imposts should be linked to measured temperature change, be it positive or negative).

The test for modellers is, are you prepared to have your salary adjusted in a decade, in proportion to your present model performance versus the measured climate?

As one who was paid to find new ore deposits, I did my bit. Had I not, I would have been on the streets early. It’s so easy to romanticise performance, it’s another matter to be paid for it. Or not paid. Or to have to reimburse.

Pat Frank, you are utterly ignoring the fact that the signal and noise may have utterly different signatures! For my thesis, I extracted a signal of a few hundred particle decays from a few million events–using the expected physics of the decays. I’ve worked with both dynamical and statistical models. I’ll take dynamical any day. For the specific case of climate models, CO2 forcing is constrained by a variety of data sources. These preclude a value much below 2 degrees per doubling (or much over 5 degrees per doubling). Thus, for your cloudy argument to be correct, you’d have to see correlations between clouds and ghgs–and there’s no evidence of or mechanism for this.

In effect, what you are saying is that if we don’t know everything, we don’t know anything–and that is patently and demonstrably false. What you have succeeded in demonstrating here is that you don’t understand climate or climate models, modeling in general, error analysis and propagation or the scientific method. Pretty impressive level of ignorance.

Geoff Sherrington, Policy must be predicated on the science, not the weather. Had you been as myopic about prospecting as you are about climate science, you would never have found so much as a piece of quartz.

I want to emphasize dhogaza’s #378 comment regarding Frank’s #376 statement, “atmospheric CO2 always lags temperature trends,” because this also made my baloney detector go on alert. Even I can detect this as a factually incorrect red herring. The detector goes ding-ding-ding when the incorrect statement is defended (Frank #384)! Frank has contaminated all of his arguments with baloney.

I have been following this discussion closely because I am a subscriber to the Skeptic (Tapio Schneider’s contribution was excellent). The magazine will probably have author rebuttals in the next issue, and there is always a lively section that will accept fairly detailed readers’ letters. It will be important that rebuttals be crafted for the educated non-climate scientist, such as me, in order to be effective.

Steve

#384 — Gavin, the cloud error came from a direct assessment of independent GCM climate predictions. The linear model reproduced GCM surface temperature outputs in Figure 2, and was used to assess GCM surface temperature outputs from Figure 1. Where’s the disconnect?

[Response: The disconnect is that whenever the models don’t give a linear trend, your toy model will fail to reproduce it. Therefore your model does not match the GCMs in anything other than what you fitted it to, and therefore its conclusions about cause and effect and error propagation are irrelevant. – gavin]

#386 — Ray your example is irrelevant because you extracted a signal from random noise that follows a strict statistical law (radiodecay).

Roy Spencer’s work, referenced above, shows evidence of a mechanism coupling GHG forcing to cloud response, in support of Lindzen’s iris model. More to the point, though, in the absence of the resolution necessary to predict the distribution of GHG energy throughout the various climate modes, it is unjustifiable to suppose that this effect doesn’t happen because there is no evidence of it. The evidence of it is not resolvable using GCMs.

[Response: Actually you have no idea. Spencer’s statistics are related to the Madden-Julian oscillation, and he has certainly not done an analysis of this in the models and nor have you. The relationship to the iris hypothesis is non-existent since that involved a direct impact of SST increase on the size of the subsiding areas, not a connection of both to a third mechanism (the MJO). This is just a random ‘sounds-like-science’ factoid. Please keep it up. – gavin]

You wrote, “In effect, what you are saying is that if we don’t know everything, we don’t know anything–and that is patently and demonstrably false.”

I’ve never asserted that and have specifically disavowed that position at least twice here. How stark a disavowal does it take to penetrate your mind? My claim is entirely scientific: A model is predictive to the level of its resolution. What’s so hard to understand about that? It’s very clear from the predictive errors documented in Chapter 8 Supplemental of the AR4 that GCMs cannot resolve forcings at 3 W m^-2.

[Response: And yet they can. How do you explain that? (Extra points will be given for an answer that acknowledges the possibility you have no idea what you are talking about). – gavin]

Your last paragraph is entirely unjustifiable. To someone rational.

Pat Frank asks what kind of disavowal it takes of the position that not knowing everything means not knowing anything. Oh, I don’t know, I guess what I’m looking for is a disavowal that doesn’t reassert the same position in different words. You seem not to understand the fact that dynamical, physically motivated models do not operate the same way as statistical models. If you were interested in learning the difference rather than pontificating, this site would be a good place to start.

And as has been repeated ad nauseam, the reality of anthropogenic causation does not require GCM–you simply can’t come up with a model that comes close to working without it. The models are much more important for limiting concern than for establishing it. I just do not understand why you would attack climate science when you have so little understanding of it.

Re # 387 Ray Ladbury

What a nasty response. In reality, we found many billions of dollars of new resources. We also found enough uranium to avoid much CO2 release into the air. Hundreds of millions of tonnes of it.

Have you a similar record of success, or are you frightened by the concept of Key Performance Indicators? (I’ll explain KPIs if the concept is foreign to you).

Gavin is on record as saying that 10 years of weather is enough for climate. I was merely using his figure.

Re: #391 (Geoff Sherrington)

Where is Gavin on record as saying that 10 years of weather is enough for climate?

re 392 tamino. Ask Gavin. He wrote it – if I can read his prose correctly, which is difficult because it is seldom without hidden caveats.

[Response: Link? – gavin]

Re #391

Surely you’ve used the Global Positioning System in your prospecting work. Without the effort of people like Ray, I daresay the on-board computers of those satellites would be toast.

Can you say ‘mission critical’?

Re #390 Ray Ladbury

Sorry that I’m new to RealClimate, though I’ve read about you a lot and of course about anthropogenic global warming. I trust I don’t “add nausea” by asking what’s meant by “the reality of anthropogenic causation does not require GCM–you simply can’t come up with a model that comes close to working without it.”

How do you know that it’s not possible to come up with a model that works without including anthropogenic causation? What indeed is the definition of “coming close to working”, a phrase which suggests to me an objective scale of measure that any model builder could use to see if he or she is getting close?

Gee Geoff, perhaps you didn’t like the response because it echoed your tone. I am indeed familiar with KPIs. Do you even know enough about what climate scientists do that you could define them for the field?

And where did you get the idea that temperatures have declined over the past 10 years? Maybe you should take a look here:

http://tamino.wordpress.com/2007/08/31/garbage-is-forever/

Model_err,

Not a problem. In order to “work,” a dynamical model takes the known factors and tries to determine their relative importance and level. Usually, they will take data from several sources and come up with a best fit and perhaps a range of uncertainties. This is what is done for CO2 forcing when looking at volcanic eruptions, paleoclimate data, etc. They then apply the model to other data–e.g. the Pinatubo eruption, warming trend of the past 30 years, etc.–and see how well they do. The latter step is called the validation. If the results are good, it serves as independent validation of the model and of the forcing levels. Now, for the model to be wrong, one would have to construct a model that not only does as well on the validation, but also explains why the analysis that constrained the forcings was wrong.
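The calibrate-then-validate workflow described above can be sketched in a few lines. This is a toy with invented numbers and a single scale parameter, assuming a response proportional to forcing; it is not a climate model and the data are not observations:

```python
def fit_scale(forcings, responses):
    """Least-squares fit of response = scale * forcing (no intercept)."""
    return sum(f * r for f, r in zip(forcings, responses)) / sum(f * f for f in forcings)


def rmse(scale, forcings, responses):
    """Root-mean-square error of the fitted relation on a data set."""
    n = len(forcings)
    squared = sum((scale * f - r) ** 2 for f, r in zip(forcings, responses))
    return (squared / n) ** 0.5


# Invented calibration data: used only to constrain the parameter.
calibration_forcing = [1.0, 2.0, 3.0, 4.0]
calibration_response = [0.8, 1.6, 2.4, 3.2]

# Invented validation data: independent of the calibration set,
# including a negative forcing that the fit never saw.
validation_forcing = [-2.0, 0.5]
validation_response = [-1.6, 0.4]

scale = fit_scale(calibration_forcing, calibration_response)
validation_error = rmse(scale, validation_forcing, validation_response)
```

The point of keeping the two data sets separate is that a small validation error then counts as independent support for the fitted parameter. Had the validation data been used in the fit, the agreement would show nothing.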

The case for CO2 is a pretty easy one–it’s the only forcer that has been increasing consistently over the past 30 years. Other candidates like galactic cosmic rays, etc–first, there’s no evidence for an increase and second there’s no real physical mechanism.

The real advantage of the anthropogenic causation mechanism is that it explains what’s going on in terms of known physics–not in terms of some putative, unknown mechanism. Hope that helps.

Ray, thank you, yes, that’s very helpful. I suppose strictly speaking you haven’t dealt with the anthropogenic part of your original statement. But it seems that very few people doubt that the marked increase of CO2 in the atmosphere is substantially down to man’s increased emissions 1850-2008.

I do have some further questions:

1. Given that physical mechanism is not known for the impact of cosmic rays on our climate, does this mean that no cosmic ray data is input into any of the models that are used in the IPCC scenario process?

2. Is the CO2 sensitivity or forcing entered as an input to such models, as an assumption, or is it derived from the way the models predict that water vapour and clouds will react to more CO2 in each cell of the grid?

3. Is it true that these models don’t predict realistic (ie frequently observed) levels and formations of cloud cover? Does this matter?

4. Does it matter that turbulence is not able to be modeled well – and not just for computational reasons, as I gather, but because it is very hard to solve mathematically?

I also have some questions on “the results” that you say are validated for a model to be considered “working”.

1. Is it only global mean temperature that is checked against the real world (surely not)? At what kind of timescale? Every hour, day, month, year?

2. After something like Pinatubo, is it the distribution and time evolution of the effect on temperature and other variables that is checked against the real world? Including air/ocean surfaces and higher up in the atmosphere?

3. Are other variables like humidity and wind output from models checked against the real world?

4. At what granularity are these checks made? Continent sized or a few cubic feet? (I think I know it’s not the latter but you get the idea)

5. Do model builders know what’s coming in terms of validation? Surely the big events like Pinatubo and their results (as best the instruments at the time were able to capture them) are very well known now? Doesn’t this just become curve fitting therefore?

Thank you for your help so far.

model_err (#398) asks:

1. Given that physical mechanism is not known for the impact of cosmic rays on our climate, does this mean that no cosmic ray data is input into any of the models that are used in the IPCC scenario process?

There could be other references in the report that I am not aware of, but I do remember reading this:

From AR4 Ch2 2.7.1 (p193)

“Many empirical associations have been reported between globally averaged low-level cloud cover and cosmic ray fluxes (e.g., Marsh and Svensmark, 2000a,b). Hypothesised to result from changing ionization of the atmosphere from solar-modulated cosmic ray fluxes, an empirical association of cloud cover variations during 1984 to 1990 and the solar cycle remains controversial because of uncertainties about the reality of the decadal signal itself, the phasing or anti-phasing with solar activity, and its separate dependence for low, middle and high clouds. In particular, the cosmic ray time series does not correspond to global total cloud cover after 1991 or to global low-level cloud cover after 1994 (Kristjánsson and Kristiansen, 2000; Sun and Bradley, 2002) without unproven de-trending (Usoskin et al., 2004). Furthermore, the correlation is significant with low-level cloud cover based only on infrared (not visible) detection. Nor do multi-decadal (1952 to 1997) time series of cloud cover from ship synoptic reports exhibit a relationship to cosmic ray flux. However, there appears to be a small but statistically significant positive correlation between cloud over the UK and galactic cosmic ray flux during 1951 to 2000 (Harrison and Stephenson, 2006). Contrarily, cloud cover anomalies from 1900 to 1987 over the USA do have a signal at 11 years that is anti-phased with the galactic cosmic ray flux (Udelhofen and Cess, 2001). Because the mechanisms are uncertain, the apparent relationship between solar variability and cloud cover has been interpreted to result not only from changing cosmic ray fluxes modulated by solar activity in the heliosphere (Usoskin et al., 2004) and solar-induced changes in ozone (Udelhofen and Cess, 2001), but also from sea surface temperatures altered directly by changing total solar irradiance (Kristjánsson et al., 2002) and by internal variability due to the El Niño-Southern Oscillation (Kernthaler et al., 1999). 
In reality, different direct and indirect physical processes (such as those described in Section 9.2) may operate simultaneously.”

Model_err, I am not a climate modeller–just a physicist who has made some effort to understand the science. For detailed questions about the models you would need to ask one of the contributors to the site–or go to the references in the IPCC documents. As to the source of the carbon–we know it’s fossil, since it is depleted in C-13.

1)Cosmic rays–you cannot model what you have no mechanism for. In any case galactic cosmic rays have been roughly the same for at least 50 years.

2)Sensitivity is determined from independent data–past volcanic eruptions, paleoclimate, etc. This has been discussed on this site. Note–the source data must be and is independent of that used for validation.

3)Cloud cover is difficult to model given the typical cell size is larger than many weather systems. For details on this, you’d need to consult the literature.

4)Again, turbulence typically takes place on smaller scales. The question is how important it is.

Even with these limitations, the models do reproduce gross weather features, and this enhances confidence that they have the physics basically right.

Results

Gavin has dealt with these questions pretty thoroughly in the discussions above with Jerry Browning before the latter melted down.