Last Monday, I was asked by a journalist whether a claim in a new report from a small NGO made any sense. The report was mostly focused on the impacts of climate change on food production – clearly an important topic, and one where public awareness of the scale of the risk is low. However, the study was based on a mistaken estimate of how large global warming would be in 2020. I replied to the journalist (and indirectly to the NGO itself, as did other scientists) that no, this did not make any sense, and that they should fix the errors before the report went public on Thursday. For various reasons, the NGO made no changes to their report. The press response to their study has therefore been almost totally dominated by the error at the beginning of the report, rather than the substance of their work on the impacts. This public relations debacle has lessons for NGOs, the press, and the public.

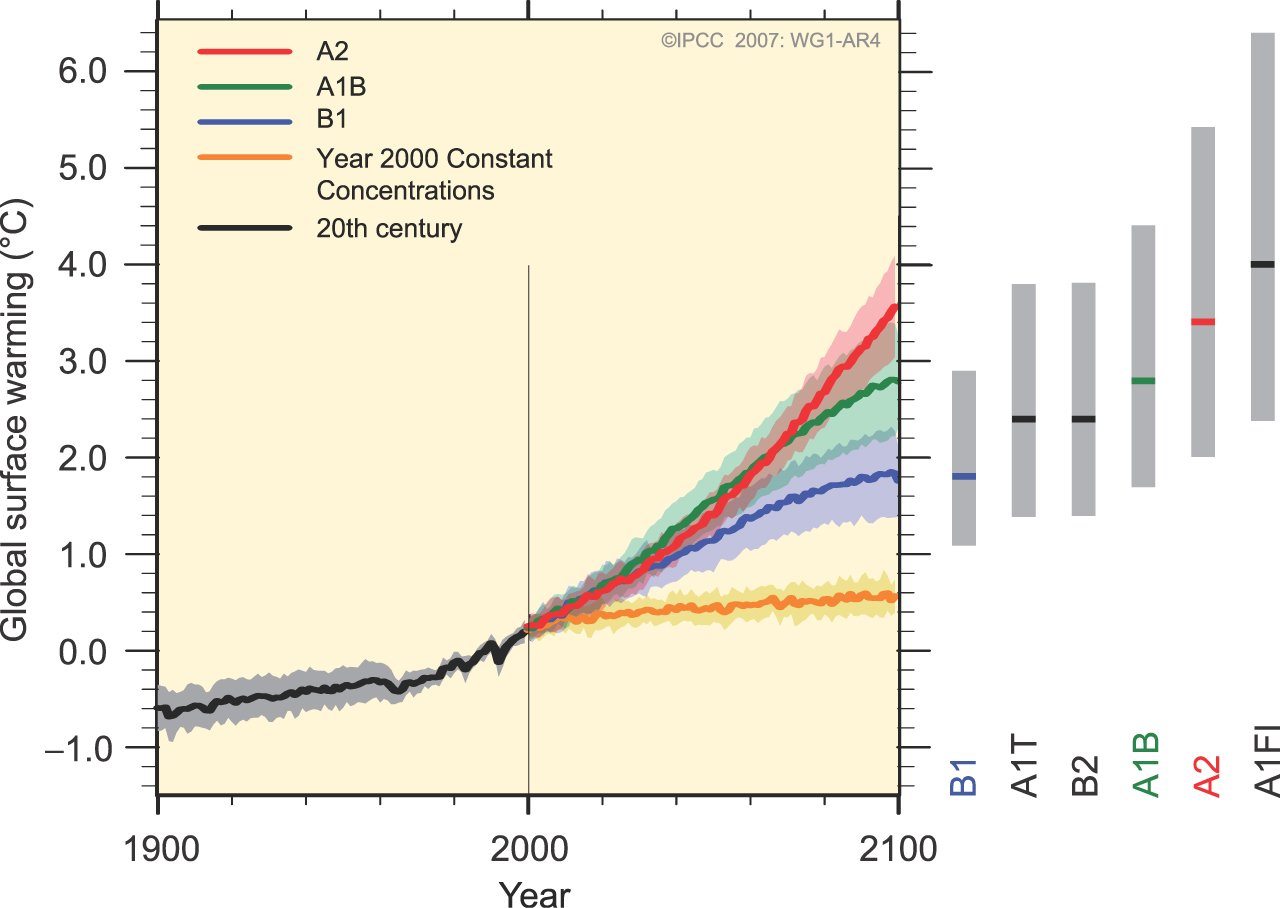

The erroneous claim in the study was that the temperature anomaly in 2020 would be 2.4ºC above pre-industrial. This is obviously very different from the IPCC projections, which show trends of about 0.2ºC/decade, and temperatures at 2020 of around 1-1.4ºC above pre-industrial. The claim is thus at least 1ºC above what it should have been, and implied trends over the next decade an order of magnitude higher than otherwise expected.

How they made this mistake is quite instructive though. The steps they followed were as follows:

- Current CO2 is 390 ppm

- Growth in CO2 is around 2 ppm/yr, and so by 2020 there will be ~410 ppm

So far so good. The different IPCC scenarios give a range of 412-420 ppm.

- They then calculated the CO2-eq to be 490 ppm.

- The forcing from 490 ppm with respect to the pre-industrial is 5.35*ln(490/280)=3 W/m2 (the logarithm here is the natural log).

- Given a climate sensitivity of 3ºC for 2xCO2 (i.e. 3.7 W/m2), a forcing of 3 W/m2 translates to 3*3/3.7=2.4ºC
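The arithmetic in the steps above can be reproduced with a short sketch. It uses only the numbers quoted in the list; the function and constant names are illustrative and do not come from the report. It shows how the 2.4ºC figure falls out of the calculation, not that the calculation is appropriate.

```python
import math

PREINDUSTRIAL_CO2_PPM = 280.0    # baseline used in the forcing formula above
FORCING_PER_DOUBLING = 3.7       # W/m2 for 2xCO2, as quoted above
SENSITIVITY_PER_DOUBLING = 3.0   # deg C for 2xCO2, as quoted above


def co2_forcing(ppm, baseline_ppm=PREINDUSTRIAL_CO2_PPM):
    """Simplified CO2 forcing in W/m2: 5.35 * ln(C / C0)."""
    return 5.35 * math.log(ppm / baseline_ppm)


def equilibrium_warming(forcing):
    """Long-term (equilibrium) warming in deg C for a sustained forcing in W/m2."""
    return SENSITIVITY_PER_DOUBLING * forcing / FORCING_PER_DOUBLING


# 390 ppm now, growing at about 2 ppm/yr for ten years
co2_2020 = 390.0 + 2.0 * 10

# The report's CO2-eq figure, fed through the same two steps
forcing_ngo = co2_forcing(490.0)
warming_ngo = equilibrium_warming(forcing_ngo)

print(f"CO2 in 2020: ~{co2_2020:.0f} ppm")
print(f"Forcing at 490 ppm CO2-eq: {forcing_ngo:.2f} W/m2")
print(f"Implied equilibrium warming: {warming_ngo:.1f} C")
```

Note that `math.log` is the natural logarithm, which is what the 5.35 coefficient expects; a base-10 log would give a much smaller forcing.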

The first error is in misunderstanding what CO2-eq means and what it is used for. Unfortunately, there are two mutually inconsistent definitions out there (and they have been confused before). The first, used by policymakers in relation to the Kyoto Protocol, relates the radiative impact of all the well-mixed greenhouse gases (i.e. CO2, CH4, N2O, CFCs) to an equivalent amount of CO2 for purposes of accounting across the basket of gases. Current GHG amounts under this definition are ~460 ppm, and conceivably could be 490 ppm by 2020.

However, the other definition is used when describing the total net forcing on the climate system. In that case, it is not just the Kyoto gases that must be included but also ozone, black carbon, sulphates, land use, nitrates etc. Coincidentally, the extra GHGs and the aerosols cancel out to a large extent, and so the CO2-eq in this sense is quite close to the actual value of CO2 all on its own. In IPCC 2007, for instance, the radiative forcing from CO2 was 1.7 W/m2 and the net radiative forcing was also 1.7 W/m2 (with larger uncertainties, of course), implying that the CO2-eq was equal to actual CO2 concentrations.
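To make the second definition concrete, the same simplified forcing formula can be inverted to turn a net forcing into a CO2-equivalent concentration. This is a minimal sketch, assuming the 5.35*ln(C/280) relation used in the report's own calculation; the function name is illustrative.

```python
import math


def co2_equivalent(net_forcing, baseline_ppm=280.0):
    """CO2 concentration (ppm) that alone would produce net_forcing (W/m2).

    Inverts F = 5.35 * ln(C / C0), i.e. C = C0 * exp(F / 5.35).
    """
    return baseline_ppm * math.exp(net_forcing / 5.35)


# Net forcing of 1.7 W/m2, the IPCC 2007 value quoted above
co2_eq_net = co2_equivalent(1.7)
print(f"CO2-eq for 1.7 W/m2 net forcing: {co2_eq_net:.0f} ppm")
```

With a net forcing of 1.7 W/m2 this comes out at roughly 385 ppm, far below the ~460 ppm obtained when only the Kyoto gases are counted.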

In deciding how the climate is going to react, one obviously needs to be using the second definition. Using the first is equivalent to assuming that between now and 2020 all anthropogenic aerosols, ozone and land use changes will go to zero. So, they used an excessive forcing value (3 W/m2 instead of ~2 W/m2).

The second mistake has a bigger consequence: they assumed that the instantaneous response to a forcing is the same as the long-term equilibrium response. This would be equivalent to a planet with no thermal inertia, or one with no oceans. Oceans have such a large heat capacity that it takes decades to hundreds of years for them to equilibrate to a new forcing. To quantify this, modellers often talk about transient climate sensitivity, a measure of the near-term temperature response to an increasing amount of CO2, which is often less than half of the standard climate sensitivity.
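The size of the two errors can be illustrated with a rough sketch. It takes the ~2 W/m2 net forcing mentioned above and scales it linearly by a sensitivity per doubling. The transient value of 1.5ºC is an assumption chosen purely for illustration (half of the 3ºC equilibrium figure), and the linear scaling is a simplification, so the output is not a projection.

```python
FORCING_PER_DOUBLING = 3.7      # W/m2 for 2xCO2, from the text
EQUILIBRIUM_SENSITIVITY = 3.0   # deg C per doubling, from the text
TRANSIENT_SENSITIVITY = 1.5     # deg C per doubling; ASSUMED for illustration only


def warming(forcing, sensitivity):
    """Warming in deg C from a forcing in W/m2, scaled linearly by sensitivity."""
    return sensitivity * forcing / FORCING_PER_DOUBLING


report_forcing = 3.0   # W/m2, the value used in the report
net_forcing = 2.0      # W/m2, approximate net forcing from the text

# What the report effectively computed: high forcing, full equilibrium response
report_style = warming(report_forcing, EQUILIBRIUM_SENSITIVITY)

# Fixing only the forcing, still assuming instant equilibrium
equilibrium = warming(net_forcing, EQUILIBRIUM_SENSITIVITY)

# Fixing the forcing and using the assumed transient sensitivity
transient = warming(net_forcing, TRANSIENT_SENSITIVITY)

print(f"Report-style estimate: {report_style:.1f} C")
print(f"Net forcing, equilibrium response: {equilibrium:.1f} C")
print(f"Net forcing, transient response (assumed): {transient:.1f} C")
```

Correcting the forcing alone removes about a third of the claimed warming; accounting for ocean thermal inertia removes considerably more, which is why the second mistake matters most.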

It has to be acknowledged that people sometimes make genuine mistakes without having any desire to mislead or confuse, and that this is most likely the case here. It does no responsible organisation any good to have such a mistake in their material. It just leads to distractions from the substance of the report. The situation is serious enough that there is no need for exaggerated claims to produce headlines; the plain, unvarnished best guesses will be fine. It is likely that temperatures will reach 2.4ºC at some point this century, and so the calculated impacts are certainly relevant – just not in 2020.

Unfortunately, in this case the people involved did not decide to fix the errors that were pointed out, going so far as to have the PR person for the launch insist that the calculation was ok. That the Guardian journalist, Suzanne Goldenberg, took the time to check on the details is a credit to the press. The reaction can be put down to institutional inertia, combined with the fact that their scientific advisor was in hospital (and is 87). It would clearly have been much better to have had this properly peer reviewed ahead of time. To that end the AGU Q&A climate service or the Climate Science Rapid Reaction Taskforce (CSRRT) are invaluable resources for getting some quick scientific peer review.

Lynn (#86),

Sorry, I somehow overlooked your reply when it appeared. Sounds like you made good use of a misconceived request for “balance”.

As for the rights-based/duty-based hypothesis, I don’t really see any of your points as evidence one way or the other. The anecdotal insight about shopping bags came from someone primed by your presentation of the theory. If we live in a rights-based culture, that may not explain so much why people e.g. demand meat on their plates, as why they fall back on rights language to make their demands. Also, when some opponents of curbing carbon emissions wax noisy about ‘rights’, it seems to be from a very particular ideological perspective — not to say paranoid delusion — that views any government regulation as a potential step toward tyranny. Such a view is not widely held, nor is it a necessary corollary of a rights-based ethic. (I’d argue quite the opposite, in fact, but that’s enough off-topicness from me.)

Another issue re CC & food that I don’t think has been studied is the effect of strongly negative Arctic oscillations — with freezes coming into the subtropical south in winter (N. Hemisphere), instead of staying up north….which is a natural anomalous event that occurs in some years, but some scientists think may become more frequent with CC. Like freezes hitting Florida & S. Texas (killing our garden crops!). We can’t grow anything here in the summer. Too hot. At best we might hope to keep our garden crops alive with lots of watering. So there are 2 growing seasons — fall & spring. And winter crops. But if even one killing freeze hits (as happened about every 10 years in the past, but now seems to be more frequent) the plants die.

Another recent study points to the increasing minimum diurnal (nighttime) temps being harmful to crops (and these are increasing faster than day temps), such as rice, much more so than the increasing maximal diurnal (daytime) temps at this point, tho in the future with ever higher day temps those are also expected to be harmful.

See Welch, et al. 2010. “Rice Yields in Tropical/Subtropical Asia Exhibit Large but Opposing Sensitivities to Minimum and Maximum Temperatures.” PNAS 107(33):14562-14567.

That sort of reminds me of how the heat deaths in Europe in summer 2003 were more due to the hot nights & people not being able to recuperate from the high day temps.

So I’ve developed a simpatico for plants — they get the same types of diseases we get (viral, bacterial, fungal, micro-organisms, etc), can “drown” or get water-logged in floods (roots need oxygen), need water & “food,” and are negatively affected by increasing night temps.

Where I am from “une blague=a joke” . Draw any conclusions you may think are funny.

RE CM #101 & “If we live in a rights-based culture, that may not explain so much why people e.g. demand meat on their plates, as why they fall back on rights language to make their demands.”

That’s the whole point re social sciences — what Joe Schmoe does within his socio-cultural-psychological-biological-environmental complex using sociocultural, etc materials at hand, not what legal scholars come up with. And that’s what I meant by “attribution theory” kicking in — people perceiving & socially constructing things according to their advantage.

Hi

As a biological scientist, having followed this CO2 debate over the past year, this is a first-time post.

I’m trying to figure out whether this thread is a political or scientific statement.

The political argument for remediation of man-made generation of CO2, for whatever reason, is over. The new world order didn’t happen. So we will see whether ‘CO2 forcing’ or ‘weather as usual’ rules the day, and how humans respond in the future. We have at least 100 years of carbon based energy, and it looks like at least another 60 years of ‘flywheel’ to consume that carbon based craving.

Where do we go from here? The same folks advocating ‘clean energy’ seem to be blocking nuclear energy. Human beings seem to thrive on, and crave energy!

Thanks for the blog and the freedom of speech!

“The same folks advocating ‘clean energy’ seem to be blocking nuclear energy.”

This isn’t true, Eric. Yes, some environmentalists are nervous about nuclear power (or vehemently against it), while many others would prefer to be able to do without it for obvious reasons. But among those serious about reducing CO2 emissions, most people recognise the advantages of nuclear power, and in some circumstances the necessity of nuclear power.

James Hansen, for one, has been quoted in favour of nuclear power.

RE: #99 Hank Roberts

Day length can be a big advantage up north:

http://www.gadling.com/2007/07/16/giant-mutant-like-vegetables-at-alaska-state-fair/