The discussion and analysis of the latest round of climate models continues – but not always sensibly.

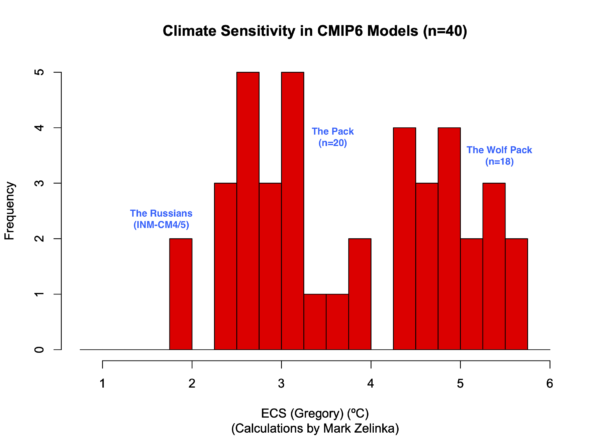

In a previous post, I discussed the preliminary results from the ongoing CMIP6 exercise – an international, multi-institutional, coordinated and massive suite of climate model simulations – and noted that they exhibited a wider range of equilibrium climate sensitivities (ECS) than in previous phases (CMIP5 and earlier) and wider than the assessed range based on observational constraints (of many kinds).

Since then, more model results have been added to the archive, and thanks to Mark Zelinka, we can see some of the analysis as it updates in real time.

By eye, it looks like there are two (or three) groups of models: one within the range of the assessed values (roughly 2 to 4.5°C), one group with significantly higher values, and one institution (two models) with a notably lower ECS. The question everyone has is whether this extended range is credible.

Mark and colleagues’ recent paper (Zelinka et al., 2020) demonstrated that a big part of the reason for the high sensitivities was in the Southern Ocean cloud feedback:

This is the key result from the Zelinka et al paper that just appeared. It shows that the big difference in sensitivities in some of the CMIP6 models is tied to the short wave low cloud feedbacks in the Southern Oceans (orange line). pic.twitter.com/RN9ply5kT8

— Gavin Schmidt (@ClimateOfGavin) January 4, 2020

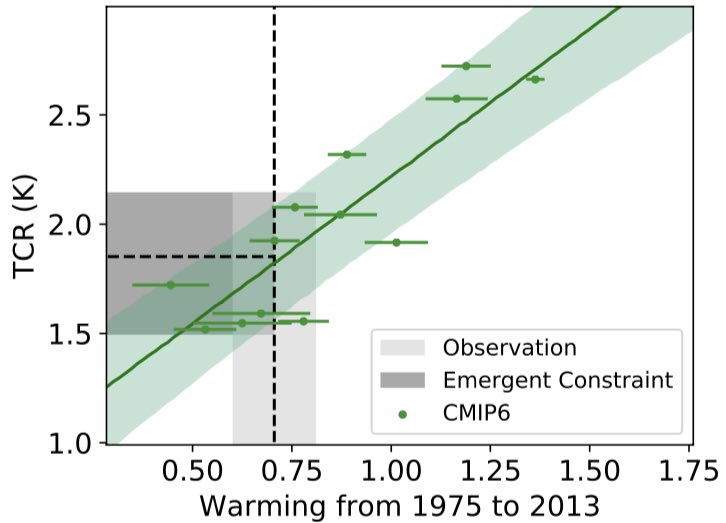

Since my first post, a number of papers have looked at the skill of these models to see whether there are some key observational data that might help in constraining the sensitivity (and by extension, the projections into the future). One set of papers has focused on the global mean trends from 1990 or so onward, which is a period of stable or declining aerosol trends and which might therefore be a closer test of the models’ transient sensitivity to CO2 than earlier periods. Notably, Tokarska et al. (2020) and Nijsse et al. (2020) suggest that many of the high ECS group warm substantially faster than observed over this period and therefore should be downweighted in the constrained projections of the future.

Recently however, writing in the Guardian, Jonathan Watts uses results from the UK’s new model (Williams et al., 2020) and a commentary from Tim Palmer to argue that we nonetheless need to take these high sensitivities more seriously, and indeed that they may indicate that the assessed ECS range has been underestimating potential changes in the future. This argument is, however, flawed.

The Williams et al. paper demonstrates that updates to the HadGEM3‐GC3.1 model (developed by the UK’s Hadley Centre) that affect the clouds and aerosols increase the skill of that model in short-term initialized weather forecasts. This is fine, and indeed, consistent with increases in skill in the newer models across the board when they are compared to a very broad range of observations.

But it is a logical leap to go from an observation of increased skill in one metric to assuming that therefore the overall ECS in this particular model is more likely. To demonstrate that, one would need to show that this particular measure of skill was specifically related to ECS, which has not been done (a point Palmer acknowledges). To put it another way, it may be that all models that do well on this task have a range of ECS values, and that the coincidence of this one model doing well and having a high ECS was just that, a coincidence.

The Williams et al paper and Palmer commentary point to one particular feature of this model which is that the newer (higher ECS) versions have greater amounts of cloud liquid water at cold temperatures. For background, clouds can consist of either ice crystals, or liquid water droplets which have quite different radiative behaviours (liquid water clouds are generally more reflective), and knowing whether clouds are ice or water has been historically difficult to determine globally. In recent years however, satellite data from CloudSAT/CALIPSO has shown that more clouds have liquid water and at colder temperatures than was assumed before, and hence newer models have reflected that updated information.

This has an impact on ECS because in a warming world, one expects more cloud water to turn from ice to liquid, and since liquid clouds are more reflective, this is a damping feedback on overall climate warming. But if there is less cloud ice around, then there will be less of that ice to turn to water, and thus the magnitude of this damping effect will be smaller, and thus the overall sensitivity will be higher.
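To make the direction of that argument concrete, here is a minimal zero-dimensional sketch. It assumes the textbook relation ECS = -F_2x / λ and treats the cloud phase feedback as simply proportional to the amount of cloud ice available to melt. The function name, the linear scaling and every number are illustrative placeholders chosen for this sketch; they are not taken from any CMIP6 model or from the papers discussed here.

```python
# Toy illustration only: all parameter values are hypothetical placeholders.

F_2X = 3.7  # W/m^2, a commonly quoted forcing for doubled CO2 (assumed here)


def ecs(lambda_other, phase_feedback_per_ice, ice_fraction):
    """Equilibrium warming (K) for doubled CO2 in a zero-dimensional feedback model.

    lambda_other: net feedback from everything except cloud phase
        (W/m^2/K, negative means stabilizing).
    phase_feedback_per_ice: damping contributed per unit of cloud ice
        fraction (W/m^2/K, negative). The linear form is an assumption.
    ice_fraction: share of cloud condensate that is ice in the base state (0..1).
    """
    lambda_total = lambda_other + phase_feedback_per_ice * ice_fraction
    if lambda_total >= 0:
        raise ValueError("net feedback must be negative for a stable climate")
    return -F_2X / lambda_total


# Same physics everywhere else; only the base-state ice fraction differs.
icy = ecs(lambda_other=-1.0, phase_feedback_per_ice=-0.4, ice_fraction=0.8)
liquid = ecs(lambda_other=-1.0, phase_feedback_per_ice=-0.4, ice_fraction=0.3)

print(f"more cloud ice in base state: ECS = {icy:.2f} K")
print(f"less cloud ice in base state: ECS = {liquid:.2f} K")
```

With these placeholder values the version with less ice to melt ends up with the higher sensitivity (roughly 3.3 K against 2.8 K), which is the qualitative point. How large the effect is in real models depends on everything this toy leaves out.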

In discussions with colleagues over the last few months, this effect has been frequently brought up as a potential reason to think that the higher ECS values are therefore justified. But closer analysis does not necessarily support this. Some models for instance, have increased their cloud liquid water but have only had modest increases in climate sensitivity. Thus the relationship between higher CLW and ECS may be less strong than assumed above. It may be that other features in the clouds (such as the transition of different cloud types) might be playing a bigger role.

This assessment is obviously an important task for the authors of the IPCC AR6 report, which is currently in its second-order draft. One (very modest) positive impact of the pandemic is that the deadline for papers to be accepted in order for them to be included in the final version of AR6 has been delayed to January 31st 2021, which will allow much of this new science to be published in time.

In the meantime, claims that climate sensitivity is much higher, or that worst-case scenarios need to be revised upwards, are premature.

References

- M.D. Zelinka, T.A. Myers, D.T. McCoy, S. Po‐Chedley, P.M. Caldwell, P. Ceppi, S.A. Klein, and K.E. Taylor, "Causes of Higher Climate Sensitivity in CMIP6 Models", Geophysical Research Letters, vol. 47, 2020. http://dx.doi.org/10.1029/2019GL085782

- K.B. Tokarska, M.B. Stolpe, S. Sippel, E.M. Fischer, C.J. Smith, F. Lehner, and R. Knutti, "Past warming trend constrains future warming in CMIP6 models", Science Advances, vol. 6, 2020. http://dx.doi.org/10.1126/sciadv.aaz9549

- F.J.M.M. Nijsse, P.M. Cox, and M.S. Williamson, "An emergent constraint on Transient Climate Response from simulated historical warming in CMIP6 models", 2020. http://dx.doi.org/10.5194/esd-2019-86

- K.D. Williams, A.J. Hewitt, and A. Bodas‐Salcedo, "Use of Short‐Range Forecasts to Evaluate Fast Physics Processes Relevant for Climate Sensitivity", Journal of Advances in Modeling Earth Systems, vol. 12, 2020. http://dx.doi.org/10.1029/2019MS001986

In a way, the possibility of continuingly unresolved uncertainty about the more extreme scenarios may be almost beside the point when even added heat of “only” 3C would be ugly enough to raise alarm levels. It may be that significant, far-reaching, and transformative changes remain as a necessity in AR6.

Thanks for the update. It’s interesting.

However, I think Lance (#1 above) is correct: the practical import in terms of mitigation is probably basically moot. We (still) need to hit the brakes, hard. That doesn’t change, whether the higher sensitivities are possible or not.

(That is of course not to say that the science isn’t worth doing, both for its own sake, and as part of understanding the larger picture in climate change.)

At some point it may not be possible to do attribution: “Climbing down Charney’s ladder: Machine Learning and the post-Dennard era of computational climate science”, V. Balaji, NOAA/GFDL.

Apparently it is difficult to associate a particular measure of skill to ECS which you say that Palmer acknowledges has not been done.

Balaji concludes with this:

Maybe worthwhile to do a future post on this topic.

That makes sense. But, I assume short wave low cloud feedbacks in the Southern Oceans are inadequately constrained by observation, on top of which the usual disclaimers about possible longer term non-linear feedback effects of temperature on cloud formation, water vapor, convection, albedo, etc. still apply. Thus, if Williams and Palmer are unconvincing in favor of higher values, neither can we rule the possibility out. Wherever this line of inquiry ends up, it’s unlikely to shake the (AR5) consensus of a 10% probability ECS>6.

Is the 1990-2013 period used for the emergent constraint really that representative? This more or less corresponds to a large cooling trend in the eastern tropical Pacific that did impact global temperatures (the infamous hiatus) and circulation patterns (Southern Ocean included). This episode is missing from CMIP6 models, as it is – probably – natural variability, so you would expect them to warm more than observations over that period.

Thanks Gavin for this post. While I agree with the content, I would have been more careful about the conclusion. As climate scientists, we need to avoid false alarms but also overconfidence and being too conservative about the plausible range of climate change.

Nijsse et al. (2020) is not about the ECS (TCR only), and Tokarska et al. (2020) shows that the past warming trend is better at explaining the inter-model spread in TCR than in ECS (40% versus only 33% of explained variance using both CMIP5 and CMIP6 models, if I understood correctly).

Concluding that it is premature to revise upward worst cases scenarios (high ECS values) may be therefore as premature as saying the opposite. Yet, both premature statements are not necessarily symmetric and there are cases where being overconfident can have dramatic consequences, as suggested recently by the COVID pandemic.

Hi Gavin,

> Some models for instance, have increased their cloud liquid water but have only had modest increases in climate sensitivity. Thus the relationship between higher CLW and ECS may be less strong than assumed above.

Just looking at the full version of Figure 2 from Zelinka et al. 2020, with the breakdown by scattering and amount there seem to be some clues. Difference in ratio of liquid water to ice clouds would only affect the scattering component but it seems that change in the amount of clouds is equally as important for feedback differences. The lack of solid lines on the scattering chart also indicates that CMIP6-CMIP5 scattering differences are not significant whereas cloud amount differences are significant in high latitude locations (though this is comparing all CMIP6 models rather than just those with high climate sensitivity).

Has there been any work on how much of this is due to “rapid adjustment” effects on low clouds due to direct CO2 influence? Are liquid water clouds more susceptible to this effect?

Thank you for this interesting analysis. It strikes me how all we ever hear from denialists is that climate scientists only ever emphasize the worst-case scenarios and projections, yet here you are explaining why some of the most sensitive models (and associated impacts, by and large) are suspect and need much much more cautious consideration. It certainly isn’t the first time this type of assessment has been expressed (here or in other venues, including the literature), yet it is almost always ignored by deniers unless they want to use it to paint such scenarios as impossible (“even the ‘supposed’ experts agree these are bunk!”). To those “skeptical” of climate change, take note: here is a major researcher in the science providing analysis of high-end possibilities with the same level of scrutiny that you always claim they never do. Consider that the next time you want to rant about how this is a “religion.”

Climate justice demands the elimination of classifications like Burn Before Reading, that lead to massive CO2 emission from plastic burn bags, classified junk mail, fossil document combustion and failure to properly sequester sensitive national climate sensitivity estimates and back issue stacks of Eos and JGR Atmospheres.

RE: “But it is a logical leap to go from an observation of increased skill in one metric . . .”

Perhaps this should read “But it is an illogical leap”

> “But if there is less cloud ice around, then there will be less of that ice to turn to water, and thus the magnitude of this damping effect will be smaller, and thus the overall sensitivity will be higher.”

My interpretation: you’re saying that much of what was thought to be cloud ice (when? the 2010s?) actually turned out to already be liquid, so that there was more dampening of cloud feedback earlier in Earth’s history when CO2 levels were lower (when?) than there will be in the future. To put it another way, ECS is not a constant but varies depending on Earth’s temperature regime, and this factor points toward a higher ECS in the higher temperature regime we’re facing. Do I understand correctly? (If so, what other factors might increase or decrease the future ECS?)

> “But closer analysis does not necessarily support this.”

Duly noted. I sure hope somebody figures this out before the deadline!

In the past I have been puzzled trying to figure out why the difference between TCR and ECS is so large (1.75°C vs 3°C). I remember looking at feedbacks on an IPCC diagram and noted that most of them are short-term and medium-term feedbacks that should affect TCR as well as ECS. Then there’s CO2 from permafrost melting, which I assume does not affect ECS because ECS assumes constant CO2 (please let me know if I assume rightly). So today you’ve told me about a cloud feedback I didn’t know about, but what other known feedbacks could explain the difference between TCR and ECS? Or is ocean warming (plus short-term feedbacks compounded) sufficient to explain almost all of the difference?

The Precautionary Principle applies here. Let’s look at some real-world info that should give one pause when minimizing or rationalizing a particular view. I say rationalizing because this commentary/essay/analysis has the “feel” of coming from a perspective, not a purely objective look at things. I sense a bit of scientific reticence.

That “feeling” of reticence is difficult to quantify. It comes from decades of experience interpreting words, behavior, and emotions as a counselor and teacher. It is a skill I have. But it’s not perfect. Still, I wonder, Gavin, if your long habit of favoring the conservative interpretations of rates of change is not at play here? I have noted recent comments much more in line with the views I have long held of high long-tail risk and was glad to see that, but your tendency *has been* towards reticence.

I offer the last two years of global temps as a major warning sign that sensitivity is, indeed, on the high end. I don’t recall hearing of any specific mechanism by which the multiple record average monthly temperatures this year would have been anticipated. Last year, too, seemed to be considered more anomalous than it should have been.

Why?

I have come to believe – I use that word because all I can do is point to the temperature records, extreme weather events, and ASI, etc., and say, should this really be happening?, while having no hard data or research to say it should or should not – that we have crossed a climate tipping point that nobody can see. I live in a world of patterns. I am what we call in permaculture pattern literate.

And the pattern is disturbing.

If for no other reason than the patterns all around us, I wonder at the wisdom of anything that seems to throw cold water on higher sensitivity that isn’t relatively absolute.

> In the meantime, claims that climate sensitivity is much higher, or that worst cases scenarios need to be revised upwards, are premature.

I disagree. Calls to minimize them are dangerously conservative. Or, can you give us a clear and definitive reason why despite no El Nino the last two years we have been looking at such high temperatures?

From the perspective of a non-scientist this discussion is about rearranging the deck chairs on the Titanic.

Killian, I would say the issue is that no other lines of evidence point to such a high climate sensitivity, so it probably isn’t that high. While I think the precautionary principle deserves to be applied to planetwide hazards, I don’t think there’s anything to be gained from Gavin wading into political territory by sounding an alarm himself. Conservative leaders actively teach their followers that the precautionary principle is bad, so any scientist who invokes the principle hurts his own credibility among conservatives.

Dan DaSilva, “a convergence is required”? You’re saying that every model must produce very similar results to every other model, or you can assume every model is wrong? But that’s just a non sequitur. Ignoring the logical fallacy, what should we conclude if that were true? Are people to simply believe any conclusion that appeals to them (e.g. “ECS is about 0.9C because the GWPF told me so”), as if reality will bend to their will?

It’s also a useless thing to say. Scientists would very much like all models to be perfect and in agreement, but that doesn’t change the fact that improving models is long, hard work that you don’t speed up by complaining. For laymen, I propose the following technique: find models that have had good historical success and assume they’re right. CMIP3 forecasted well, as well as hindcasting well.

Re #13 Dan DaSilva said Quote: “The question everyone has is whether this extended range is credible.”

The question that should be asked is whether ANY of the climate models are credible. The enormous range of ECS gives the answer. In any honest modeling endeavor, a convergence is required before any claims of credibility.

#ClimateDenial is a criminal act: A Crime Against Nature and a Crime Against Humanity.

#EcoNuremberg

DDS 13: The question that should be asked is whether ANY of the climate models are credible.

BPL: Take a look:

http://bartonlevenson.com/ModelsReliable.html

DDS: ” In any honest modeling endeavor, a convergence is required before any claims of credibility.”

Ah, and you speak based on how many publications?

[crickets…]

Really, your continued belief in your own competence despite the efforts of reality to convince you otherwise is truly astounding.

RE. DaSilva @13

So says someone who apparently has no experience or knowledge of simulation modeling. Nowhere in the literature on credibility or validation of models does it state that convergence is required. Each model stands on its own.

It was George Box who made the famous statement: “All models are wrong, but some are useful.”

Building on that, we have: “Modelling in science remains, partly at least, an art. Some principles do exist, however, to guide the modeller. The first is that all models are wrong; some, though, are better than others and we can search for the better ones. At the same time we must recognize that eternal truth is not within our grasp.” –McCullagh, P.; Nelder, J. A. (1983), Generalized Linear Models

Part of the point of Gavin’s post is actually the search for the better ones.

Having said that, one thing is certain: the response of the climate to changes in forcing is somewhat complex and there is uncertainty in the exact response; however, what is quite clear is that the models tell us that the chance of the climate response to a doubling of CO2 being insignificant is practically nonexistent. In that, the models are quite useful–we do have a problem. The only question that remains is: exactly which future generation gets totally screwed first?

It just doesn’t seem plausible that in the era of Globalization (shifts of production from U.S. and EU zones and into SE Asia) and continued air pollution regulation in developed countries (with far less in developing countries) that,

“the global mean aerosol cooling trend is very small and consistent across all available CMIP6 models (−0.01°C per decade from 1981 to 2014 for the CMIP6 ensemble mean” (Tokarska et al.)

The aerosol loading in the northern hemisphere and shifts from the west to south-east should be driving more cooling during the Northern Hemisphere Summer in the Models and this would compensate by at least 0.1C/decade of the higher sensitivity (wolf pack) trend.

14

Chill, Ripman– some of the RCP 8.5 CMIP outliers seem about as plausible as the Titanic sinking the iceberg.

https://vvattsupwiththat.blogspot.com/2019/05/once-again-u.html

Dan DaSilva:

As DDS is a relentless resurrector of undead denialist memes, I know I shouldn’t respond. His latest howler, however, is an opportunity to link to the US National Academy of Sciences Climate Modeling 101 pages. Scientifically metaliterate non-experts will agree that DDS’s credibility suffers by comparison.

In a tangentially related post, ATTP discusses sources of climate model uncertainty over simulation timespans. He highlights an article published three weeks ago in Earth System Dynamics: Partitioning climate projection uncertainty with multiple large ensembles and CMIP5/6, by Flavio Lehner et alia. Worth another treatment, Gavin?

That high sensitivity might not be premature, after all. (Common sense says it obviously is not.) Some of that inconvenient scientific data:

https://phys.org/news/2020-06-today-atmospheric-carbon-dioxide-greater.html

CO2.

> Schubert and colleagues’ new CO2 “timeline” revealed no evidence for any fluctuations in CO2 that might be comparable to the dramatic CO2 increase of the present day, which suggests today’s abrupt greenhouse disruption is unique across recent geologic history.
>
> …because major evolutionary changes over the past 23 million years were not accompanied by large changes in CO2, perhaps ecosystems and temperature might be more sensitive to smaller changes in CO2 than previously thought. As an example: The substantial global warmth of the middle Pliocene (5 to 3 million years ago) and middle Miocene (17 to 15 million years ago), which are sometimes studied as a comparison for current global warming, were associated with only modest increases in CO2.

22 Mal

Thanks for reminding us of John Nielsen-Gammon’s shrewd AGU presentation.

I must agree with many of his conclusions about the problem of scientific metaliteracy, having reached similar ones in an essay published 24 years earlier:

https://vvattsupwiththat.blogspot.com/2017/10/mama-dont-low-no-skeptics-round-here.html

Still waiting for answers to my earlier questions.