Sometimes I encounter the argument that, since we cannot predict the weather beyond a couple of weeks, it must be impossible to predict the climate in 100 years. Such statements tend to be presented as a kind of revelation, often at social gatherings and parties after I have revealed to some of the guests that I’m a climatologist (if I say I work for the Meteorological Institute, I almost always get the question “so, what’s the weather going to be like tomorrow?”). Such occasions also tend to be times when I’m not too inclined to indulge in deep scientific or technical explanations. The same goes for talking to a journalist who wants an easy answer. In those cases I try to provide a short, simple, but convincing explanation of why climate can be predicted despite the chaotic nature of the weather, one that most people can easily understand (a more theoretical discussion is provided in the earlier post Chaos and Climate). One approach is to relate the topic to something with which they are familiar, such as pointing to empirical observations that most people accept (with hindsight, I suppose it is similar to researchers in the early 20th century trying to convince others that nuclear reactions were possible – just look at the Sun, and there is the proof! Or, before that, the debate about whether atoms were real or not – just look at the blue sky, and you are looking at the proof…). I have emphasised the words ‘weather’ and ‘climate’ above, because they mean different things.

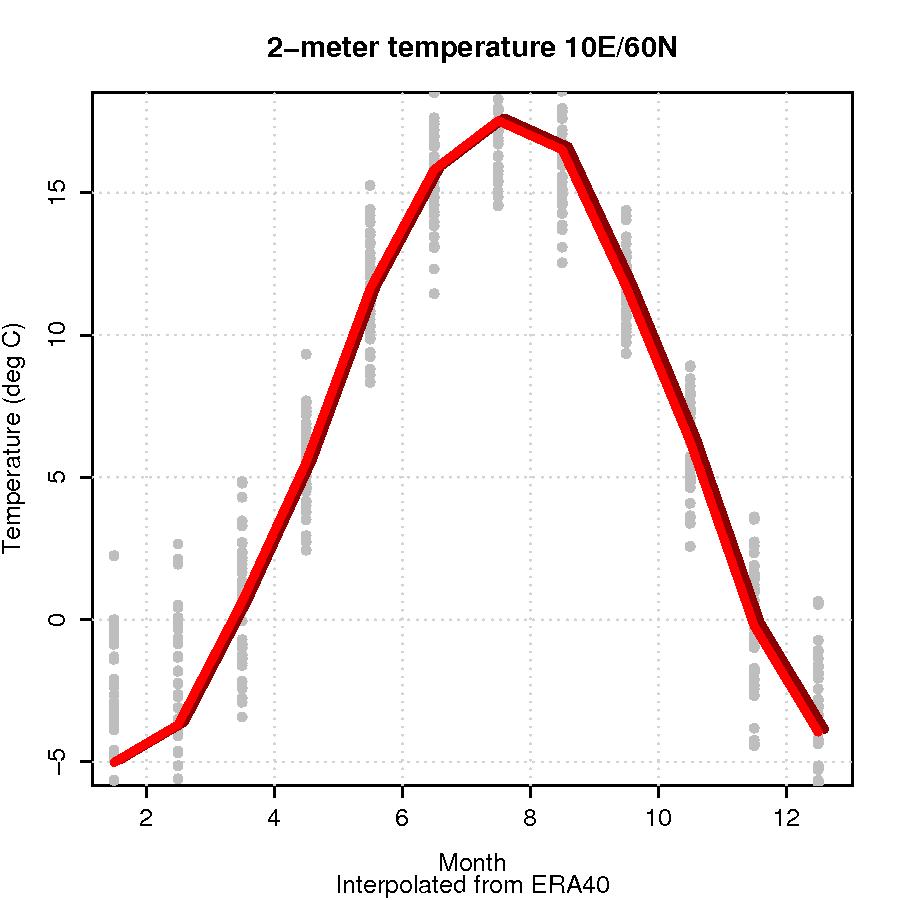

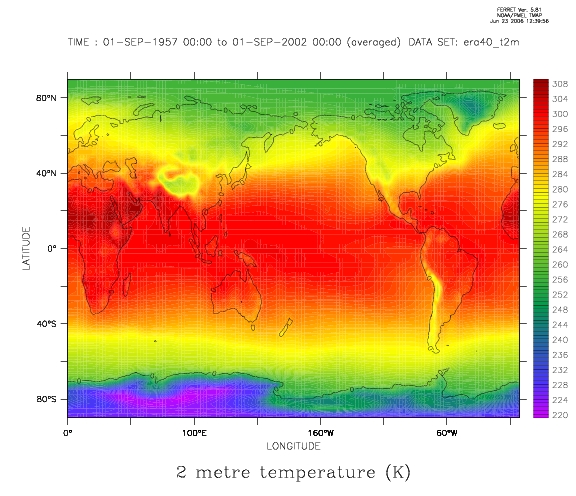

It is true that we cannot predict the weather indefinitely (or even beyond a couple of weeks), because the weather is chaotic: infinitesimally small uncertainties in the state as we know it today will affect how the weather evolves over a few weeks (the ‘chaos effect’). Still, I can say with certainty that there is a very high probability that the temperature in 6 months will be lower than now – when winter has arrived (it is currently summer in the Northern Hemisphere). In fact, the seasonal variation in temperature and rainfall (wet and dry seasons in the tropics) tends to be highly predictable: the winters at high latitudes are cold and summers mild (if anyone doubts, read on here); the southeast Asian Monsoon usually starts over India in the first days of June. I don’t usually bring maps and figures with me to social events, but it would be nice to show a picture such as the one in Fig. 1 to illustrate. If the person is not convinced, I may continue with other arguments for why the climate is predictable: take latitude, for instance – the poles are cold and the tropics warm. Furthermore, maritime climates at higher latitudes, which are wet and mild (with small day-to-day or season-to-season temperature variations), are distinct from continental climates far away from the sea (dry, with great temperature variations). It is well established that high-altitude places tend to have lower temperatures and greater temperature variations. Most hikers and mountaineers have experienced that. These are local climatic properties that we can predict if we know the geography, even if we cannot predict the weather on an exact day far in the future. To convince further, I may add another piece of empirical evidence that (local) climate is not unpredictable, but rather systematically influenced by external factors (boundary conditions): Northern Europe enjoys a mild climate, even though Oslo is roughly on the same latitude as the southern tip of Greenland.
There is a reason for that – Oslo has a considerably warmer climate because of the effects of oceanic heat transport/capacity and prevailing winds. I also remind them that people have known for centuries that there are systematic factors influencing the local climate; it’s just that this fact sometimes gets forgotten by those who claim that we cannot predict climate. Isn’t it silly? I may ask if there is any reason to think that the predictability stops at the seasonal and geographical variations.

I may continue in a hand-wavy manner: in a similar fashion to seasonal and geographical effects, changes in Earth’s orbit around the Sun alter the planetary climate by modifying the amount of energy received from our star (and because of the terrestrial response, the atmospheric composition is modified as well, enhancing the effect even further), and changes in the atmospheric composition affect the climate because greenhouse gases absorb heat that would otherwise escape into space – greenhouse gases are transparent to sunlight but opaque to infrared light, due to their molecular properties and their ability to absorb energy (if I say it’s quantum physics, people tend to understand it’s getting a bit technical). I stress that the greenhouse effect is also beyond doubt – without it, the balance between the total energy Earth intercepts from the Sun and the energy lost through black body radiation implies that Earth’s surface would on average be about 30 K cooler than it is. Volcanoes also affect our climate, and we have theories explaining why. Furthermore, looking to other planets, the observation that Venus has a higher surface temperature than Mercury, despite being further away from the Sun, can only be explained as a result of the different absorbing properties of their respective atmospheres (a strong greenhouse effect on Venus).
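The "about 30 K" figure can be checked with a back-of-the-envelope energy balance. The sketch below is only an illustration: the solar constant, planetary albedo and observed mean surface temperature are assumed round textbook values that do not appear in the post, and the function name is made up for this example.

```python
# Zero-dimensional energy balance: absorbed sunlight = emitted black-body radiation.
# All numerical inputs are assumed round values, not figures taken from the post.
SIGMA = 5.670e-8           # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1361.0    # sunlight at Earth's distance, W m^-2 (assumed)
ALBEDO = 0.30              # fraction of sunlight reflected (assumed)
OBSERVED_SURFACE_K = 288.0 # approximate global mean surface temperature (assumed)


def effective_temperature(solar_constant, albedo):
    """Temperature at which a black body radiates away the absorbed sunlight."""
    # The factor 4 is the ratio of a sphere's surface area to its cross-section.
    absorbed = solar_constant * (1.0 - albedo) / 4.0
    return (absorbed / SIGMA) ** 0.25


no_greenhouse_k = effective_temperature(SOLAR_CONSTANT, ALBEDO)
greenhouse_gap_k = OBSERVED_SURFACE_K - no_greenhouse_k

print(f"Temperature without greenhouse effect: {no_greenhouse_k:.1f} K")
print(f"Difference from observed surface mean: {greenhouse_gap_k:.1f} K")
```

With these assumed inputs the gap comes out at a little over 30 K, in line with the figure quoted above; different choices of albedo shift the number by a few kelvin.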

So, my question is: do you think people get the message that I try to convey this way? Is it too simple or too complicated? Does anybody know of everyday examples demonstrating the central principles? Any suggestions on how to explain this to laypersons not connected to the Internet?

re: 147. Oh yes, graduate degree and 23 years experience in fact. Did you? Seems unlikely. I am not sure why this has to be repeated but here goes: Natural forcings alone cannot explain global temperature trends since around 1970. When anthropogenic forcings are added, the trends can be explained. Natural forcings alone among the set boundary conditions do not reproduce the warming. The anthropogenic forcings are now stronger. This is basic modeling stuff about boundary conditions so do try to understand, take a fundamental modeling course again, and follow along.

Can anyone answer a few questions?

How will we know when we have an adequate climate model? What is the criterion for knowing when to stop and agree that we have what we need?

I ask this as it seems that modelling the climate goes back over 100 years and predictions for a doubling of CO2 have ranged from about 0C to 6C from the earliest days. The models have got better and better but the range seems to remain in that ballpark. We must surely know a good deal more about rates of change but the range does not seem to narrow consistently.

Given the progress so far, when is it predicted that we will have a consensus that the range has converged to a limit of what can be known or sensibly asked?

This will provide a time limit to a watch-and-wait (for the models to agree) strategy.

Given that we can put a date to this, what is the best-guess temperature rise by that date?

That is to say, will the models achieve accepted results before they are overtaken by events?

Unpromising answers to these questions would lead one to ask whether the modelling has not already done enough and quite possibly that the primary goal was reached many years or decades ago.

It worries me that pursuing the question of “how bad” relentlessly only gives rise to a “wait and see” reaction: that we must sit back and wait for the modellers to sort their models out. If the real answer is that it is going to be bad but “how bad” is unanswerable in a useful timescale, then a whole excuse for doing nothing evaporates.

I worry that we are behaving a bit like a liner steaming full-ahead in an endless night through fog, in a region known to be prone to icebergs; whilst waiting for the guys in the back-rooms to perfect radar in the hope that it will enable us to accurately see the hazard that is looming up in that fuzzy outline dead-ahead in time to take avoiding action.

Re # 149. The numerical solution can only be independent of the grid resolution/numerical accuracy for linear PDEs. In the case of nonlinear PDEs (as in turbulence, ocean and atmospheric flows) the solutions will always diverge, no matter how fine the grid or how accurate the numerical method is. That’s why weather forecasts will always have limited predictability. I work on simulation of turbulent flows. Two different numerical codes, or the same code with different resolutions, will always give different solutions after some time (given the same initial conditions). However, the statistics (average velocity, spectra, variance, PDFs) are the same if the resolution and the numerical accuracy are high enough (and they agree with observations). That is what I (can) demand of my code and what I am interested in, in the case of a chaotic system, not that the solutions are exactly the same after some time. I guess the same demands apply to GCMs: the probabilities of the projected temperatures should be more or less independent of resolution and numerical accuracy, not the temperature itself.
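The distinction between diverging trajectories and stable statistics can be seen even in a very small chaotic system. The sketch below swaps in the logistic map as a stand-in; it is not a turbulence or climate code, and the parameter value, starting points and run length are arbitrary choices made for this illustration.

```python
# Toy chaotic system (logistic map), used here only as a stand-in for a
# turbulent flow. The value r = 3.9 and the starting points are assumptions.

def logistic_trajectory(x0, r=3.9, steps=20000):
    """Iterate x -> r * x * (1 - x) and return the full trajectory."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def mean(values):
    return sum(values) / len(values)


# Two runs whose initial states differ by one part in ten billion.
run_a = logistic_trajectory(0.3)
run_b = logistic_trajectory(0.3 + 1e-10)

# "Weather": the largest step-by-step disagreement between the two runs.
max_gap = max(abs(a - b) for a, b in zip(run_a, run_b))

# "Climate": the long-run averages of the two runs.
mean_a = mean(run_a)
mean_b = mean(run_b)

print(f"largest pointwise difference: {max_gap:.3f}")
print(f"long-run means: {mean_a:.4f} vs {mean_b:.4f}")
```

The two runs stop resembling each other after a few dozen steps, yet their long-run averages stay close, which is the behaviour the comment describes for velocity statistics in turbulence simulations.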

RE: #153. Linear versus non-linear is not the issue; the basic, not-averaged Navier-Stokes equations are actually quasi-linear, I think.

Your example of fine-scale resolution of turbulence is the same as I mentioned in #149. I tried to contrast that with modeling and calculations of mean flow fields. I’m certain that you expect calculations of the mean flow field, with whatever closure approach employed, to be both (1) the same between different codes and (2) the same between two runs of the same code with different spatial and temporal resolutions. The latter case I have also tried to summarize as a necessary process for Verification of the calculations performed with AOLGCMs. Until the point of a resolution-free solution is attained, the discrete equations have not been solved.

Let me know if I have not correctly characterized what you would expect from code(s) designed to model and calculate the mean flow field of a turbulent flow.

I agree with your summary of the approach that is used whenever the small scales in a turbulent flow are resolved. Direct solution of the Navier-Stokes equations produces the local-instantaneous small-scale motions of the fluid. The mean flow and stats of the turbulence will agree both between codes and with experimental data.

And here is where I am having difficulty. I understand that the AOLGCM models and codes do not resolve the small-scale fluid motions. The basic mass, momentum, and energy equations are written to describe the motions of the mean flow. Turbulence is modeled by only algebraic closure equations. The spatial scales used for numerical solutions of the equations are enormous; on the order of kilometers, I think. And the calculated numbers are taken to represent the motions, and temperature, of the mean motions of the fluid; not the stats of the numbers as when turbulence is calculated directly from un-averaged Navier-Stokes equations.

That is, the temperature of the mean motions is not rolled up from applying statistics to the calculated numbers. So, it seems to me that the calculated results from AOLGCMs are sometimes implied to be equivalent to what is calculated by direct solutions of the Navier-Stokes equations, yet the calculated numbers are not handled in a manner consistent with what is required by that approach.

If what I am thinking is not correct, I hope Gavin and others will shed some light on where I have messed up. It would be very interesting to see that the spectra, variance, PDFs, etc. of the fluid temperature calculated with different codes and with different runs of the same code, especially runs with slightly perturbed ICs, do in fact demonstrate more or less independence of resolution and numerical accuracy.

Thank you for your information and I hope we can continue to explore these topics here on RC.

The topic seems to have shifted significantly from the beginning, so let me try to go back to the original issue: “Short and simple arguments for why climate can be predicted”. If I may, let me offer a simple analogy that I often use in my own field of Solar System dynamics.

Most of us have played pinball. The path the ball follows through the playing field is chaotic because of the bumpers set up to make the game exciting. The location of the ball becomes increasingly difficult to predict with time, as tiny differences in initial conditions make it nearly impossible to predict exactly how the ball will hit each bumper. In this manner, predicting the “weather” is a lot like predicting the future trajectory of the ball. Short predictions are much more accurate than long term ones.

When we consider the long term evolution of the ball, however, the slant of the table means the *secular* trend is downhill, particularly if we do not use the paddles at the bottom. If we shoot 100 balls through the game, each one will eventually end up at the bottom; the only real issue is timescale. Climate predictions fall into this category; they are much easier to make over long timescales than weather predictions.

Global warming can be considered an important factor in determining the slant of the table and how fast the balls roll downhill. The greater the slant, the faster the secular trend and the shorter the overall timescale needed to reach the bottom. There are short term perturbations to the system that can produce the opposite trend (i.e., the ball hits a bumper and goes uphill), but they do not seriously affect the secular trend.

Thus, our goal in dealing with global warming should be to try to make the table much flatter than it is now and reduce the secular trend.
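The pinball picture can be put into numbers with a random walk that has a small steady drift. This is a minimal sketch under stated assumptions: the function name, the slant, the size of the bumper kicks and the number of balls are all invented for the illustration and carry no physical meaning.

```python
import random

# Toy "pinball": each step adds a small deterministic slant (the secular trend)
# plus a random kick from a bumper. All parameter values are illustrative.

def roll_ball(rng, steps=1000, slant=0.05, bumper_kick=1.0):
    """Return how far downhill one ball has travelled after `steps` steps."""
    position = 0.0
    for _ in range(steps):
        position += slant + rng.gauss(0.0, bumper_kick)
    return position


rng = random.Random(0)  # fixed seed so the run is repeatable
finals = [roll_ball(rng) for _ in range(200)]

ensemble_mean = sum(finals) / len(finals)
spread = max(finals) - min(finals)
expected_trend = 1000 * 0.05  # slant times number of steps

print(f"expected downhill distance from the slant alone: {expected_trend:.1f}")
print(f"average over 200 balls: {ensemble_mean:.1f}")
print(f"spread between individual balls: {spread:.1f}")
```

Any single ball ends up far from where the slant alone would put it, but the average over many balls tracks the slant closely. Increasing `slant` moves the whole ensemble downhill faster without making an individual path any easier to predict.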

Re #120

Ike Solem reminds us that volcanos can have a large effect on climate.

There was an impressive swarm of earthquake activity along the southern edge of the Long Valley Caldera during mid November, 1997. The activity reached an initial peak of some 800 quakes per week, then about January 1998, another period peaked at around 900 quakes per week. A few quakes had intensities of near M5. The activity then receded over a period of a few months. Last I heard, the lava dome had also “deflated” to some degree.

i think that every country should sign the Kyoto Protocol because we don’t need environmental harm!!!! but we do need more water in the world lol

“So, my question is, do you think people get the message that I try to convey this way? ”

No. I don’t get it and I’m a scientist. The things you are talking about “predicting”, such as winter or cold air at high altitude, are simply “climo” and not a forecast, which implies a temporal component.

This doesn’t mean that I don’t understand the difference between forecasting with climate models and weather models, I just don’t understand the analogies made here.

BTW, where can I find information about actual climate forecasts made in the past twenty years? I can never seem to find this information.

[Response: Climatology is about time variations and about expectations (forecasts), due to changes in the forcings. Furthermore, predictions do not necessarily have to be ‘just a weather forecast’; you can also use models to make a prediction of the surface temperature on Venus. -rasmus]

RE #7

“I can predict right now with a high degree of confidence that the …temperature averages, and will continue to be above the 1971-2000 temperature averages for hundreds of thousands of years to come.”

Perhaps you should be a little more cautious. I’ve made my living by forecasting and I have learned to be careful of the assumptions I make. The future is a strange place and there are plenty of things in the next few hundreds of thousands of years that can hose your forecast.

RE:#21

My reply is to offer a bet: I will predict the average temperature of the earth for next year and they will predict the temperature of the city we are in for that day next year. While I have heard a number of very creative reasons for why they will not agree to the bet, I have yet to have anyone take me up on it.

Comment by John Cross – 13 Aug 2006 @ 9:01 pm

————-

The standard deviation of annual temperature fluctuations of the earth is roughly 20 times (just a guess) smaller than the standard deviation of the daily temperature fluctuations at a random city.

Your bet says nothing about the skill of climate forecasting. In fact, daily temperature forecasts have far more skill than annual earth temperature forecasts when measured by the percent of periodic variability which is explained by the forecast.
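One common way to express "percent of variability explained" is one minus the ratio of the forecast's squared error to the variance of the observations. The sketch below assumes that definition, which the comment does not spell out, and uses made-up numbers purely to show how the score behaves.

```python
# Skill as the fraction of observed variance explained by a forecast.
# The definition and the sample numbers are assumptions for illustration.

def explained_variance(observed, forecast):
    """Return 1 - (sum of squared forecast errors) / (sum of squared deviations
    of the observations from their own mean)."""
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - f) ** 2 for o, f in zip(observed, forecast))
    total = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - residual / total


observed = [1.0, 2.0, 3.0, 4.0]          # hypothetical observations
perfect = list(observed)                 # a forecast that is always right
climatology = [2.5, 2.5, 2.5, 2.5]       # always forecasting the long-term mean

print(explained_variance(observed, perfect))
print(explained_variance(observed, climatology))
```

On this measure a forecast that only ever states the long-term mean scores zero, however small its absolute errors are, which is why a small error on a quantity that barely varies does not by itself demonstrate skill.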

Here’s the place to make your predictions and offer wagers on them:

http://www.longbets.org/

Is there a simple explanation someone can give me as to why calculating climate sensitivity from the warmth due to GHG divided by the forcing is not accurate? 33C and 148 W/m2 respectively for a sensitivity of 0.22 C/W/m2.

Thanks George

[Response: For one, this assumes a linear response over the whole of climate history, and would clearly fail the ‘faint young sun’ test. Secondly, where does 148 W/m2 even come from? The forcing number you would want to use (assuming that you would erroneously want to use a linearised estimate) is the net radiative change at the tropopause when you remove all greenhouse absorbers (H2O, CO2, clouds etc.) while keeping the albedo the same. I don’t know what that number would be, and I don’t think it’s particularly relevant in any case (see first point). As far as I can tell this ‘calculation’ originates from Douglas Hoyt (and doesn’t include feedbacks – which is the whole point), and has no back up anywhere in the literature. In contrast, estimates of the climate sensitivity derived from observational constraints that have plenty of support in the literature give numbers around 0.75 C/W/m2 (see our previous posts on the topic: most recently here). Pulling numbers out of thin air is no substitute! – gavin]
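To see what is at stake between the two sensitivity numbers, the arithmetic can be laid out explicitly. The 33 C, 148 W/m2 and 0.75 C/W/m2 values are those quoted in the exchange above; the forcing for doubled CO2 (about 3.7 W/m2) is a commonly cited value that is assumed here and does not appear in the thread.

```python
# Compare the linearised estimate from the question with the observationally
# constrained value quoted in the response.
GREENHOUSE_WARMTH_C = 33.0       # from the question
QUOTED_FORCING_W_M2 = 148.0      # from the question (its origin is disputed above)
OBSERVATIONAL_SENSITIVITY = 0.75 # C per W/m2, from the response
DOUBLED_CO2_FORCING = 3.7        # W/m2, assumed commonly cited value

naive_sensitivity = GREENHOUSE_WARMTH_C / QUOTED_FORCING_W_M2

warming_naive = naive_sensitivity * DOUBLED_CO2_FORCING
warming_observational = OBSERVATIONAL_SENSITIVITY * DOUBLED_CO2_FORCING

print(f"linearised sensitivity: {naive_sensitivity:.2f} C/W/m2")
print(f"implied warming for doubled CO2: {warming_naive:.1f} C")
print(f"warming with 0.75 C/W/m2:        {warming_observational:.1f} C")
```

Under the assumed forcing, the linearised number implies under 1 C for a doubling of CO2 while the observationally constrained one implies nearly 3 C, so the choice between them is far from a technicality.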

Re 162

See Calculating the Greenhouse Effect

and Water vapour: feedback or forcing?

and Climate Feedbacks

I think the really short explanation is that it’s nonlinear.

re: 159. Matilda,

I agree it is important to get assumptions right and that users need to understand the assumptions.

For example, people who use NOAA’s NWS hydrologic prediction products for preparedness need to be aware that the NWS hydrologic predictions assume global warming is not happening. Therefore, floods and droughts are likely to be more severe than predicted by NWS and will probably come with less warning time.

Another example – Hank made an assumption (60. of RC fact-fiction-fiction thread) that I should get a good attorney to work on my employment issue. Hank assumes that a competent attorney will tell me that my public complaint is not only easy to ignore but has to be ignored legally. Hank also assumes that publicly posting my story over and over makes me appear disgruntled and makes me appear like I believe that NOAA administrators and NWS did nothing wrong legally.

Hank assumes that the reason I’ve been posting my complaints about NOAA’s NWS supervisors having fired me in 2005 for my efforts on climate and hydrologic change is me, and that my actions aren’t helping me. Hank has it all wrong though. My reason has not been for myself but to bring attention to the need for us to reduce our greenhouse gas emissions. Oh well, sorry if my bringing this to you bothers you too. It’s not always easy to figure out where people who post here are coming from. Sometimes we can’t be too cautious or we miss the life boat.

RE: #151 – Did you read #149 before posting? It does not seem like you did.

Let’s turn the question on its head:

Take a breezy day. You are sitting next to a window and occasionally a gust of wind comes through.

Now, why should you believe the weather forecast if the weatherman can’t even tell you when the next gust will blow through your window, and how strong it will be?

Regarding Dan Hughes’ comments (e.g. #10 and #43 above) about numerical convergence:

In post #149 above, Dan refers to a Sandia NL report on validation and verification (V&V) in codes. The codes Sandia is primarily interested in are radiation-hydrodynamics codes used for weapons simulations. This is actually a bit ironic, because these codes are ALSO (just like climate models) applied to systems with large Reynolds (Re) number, and they therefore also of necessity fail the very same convergence tests that Dan seeks for climate models, in the very strict sense of convergence that I believe Dan is using here.

In fact, the very same criticisms that Dan levels at GCMs could equally well be levelled at just about anybody who does computational fluid dynamics (CFD) applied to high-Re systems. Go ask the engineers simulating the airflow over an Airbus A380 what the Lyapunov exponent of their physical system is. They don’t know, either!

The notion of a Lyapunov exponent, while interesting, is not as useful in this context as it is in the case of orbital simulations which have a very very small number of degrees of freedom. The number of degrees of freedom in a high-Re CFD simulation is astonishingly huge, whether you are simulating the turbulence on the Sun, the climate, or a nuclear weapon. You ain’t getting convergence, in the strict sense of the word, in our lifetimes.

This is a big problem. But, it’s a problem for ANYBODY doing high-Re CFD.
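A rough sense of "astonishingly huge" comes from the textbook Kolmogorov estimate that resolving every eddy in three dimensions needs a number of grid points growing roughly as Re to the power 9/4. The sketch below uses that rule of thumb, which is an assumption brought in for illustration and not something stated in this thread; the Reynolds numbers are arbitrary examples.

```python
# Rule-of-thumb estimate (assumed, not from the thread): a simulation that
# resolves all scales down to the Kolmogorov scale needs about Re**(9/4)
# grid points in three dimensions.

def dns_grid_points(reynolds_number):
    """Approximate grid-point count for fully resolved 3D turbulence."""
    return reynolds_number ** (9.0 / 4.0)


for re in (1e4, 1e6, 1e8):  # illustrative Reynolds numbers
    print(f"Re = {re:.0e}: about {dns_grid_points(re):.1e} grid points")
```

Each factor of 100 in Reynolds number multiplies the estimated grid size by more than 30,000, which is why high-Re codes rely on turbulence models instead of resolving every eddy.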

This problem does not, in and of itself, however, negate the possibility of prediction, depending upon what one means by convergence. That is, the answer depends upon what you mean by asking that the results be independent of the numerical resolution. In fact, all of the texts on CFD that I know of devote a hefty amount of space to turbulence modeling, and as any CFD researcher knows well, the results of any simulation depend upon what turbulence model you use. This is why it’s always a good idea to use more than one turbulence model if you can. And why are there models? Because it is not only impossible to get strict convergence for an extremely high-Re problem, it is more importantly beside the point. So, if you want to complain about lack of convergence in climate models, please define your terms precisely.

I must concur with Geert (#143). And, Dan, in #144, you are also incorrect here. The N-S equations are indeed nonlinear and that is the problem. The advective term, the v dot grad v term, causes these problems, whether you are simulating flow over an F-18, predicting the weather, simulating a nuke, or doing climate simulations. Everybody knows this. Yes, it is also true that the N-S equations are quasilinear, but the class of quasilinear PDEs is not disjoint from the class of nonlinear PDEs.

re: 165. Absolutely. I could not agree more with “Numerical approximations for boundary conditions, by the way, can destroy the most carefully-laid plans of numerical solution methods.” However, if the boundary conditions in any model are not significant with respect to domain emissions over time (especially if we are talking orders of magnitude), they are not likely to be significant forcings in comparison. I use a model every day in my work. It is highly dependent on the boundary conditions because they “set the stage” so to speak for the model to run and provide background levels. Those boundary conditions are quite significant relative to the conditions within the model domain. In other words, the forcings are similar magnitude. Not so much the case now with climate models where man-made forcings have been shown to have greater influence on global temperatures since circa 1970. I would love for a skeptic/denialist to cite any climate model work in the peer-reviewed literature which, when run solely with natural forcings, can replicate recent global temperature trends.

I do apologize and was absolutely wrong for completely misreading something very important in post 145: The forcings listed were “sun, volcanos, emissions”. I read “emissions” solely in the context of natural emission sources such as influences of the sun and volcanos, as they were linked together parenthetically. Obviously, if man-made emissions are included in the boundary conditions, my comments that followed did not make much sense at all as I was only considering natural forcings in the boundary conditions. Again, my apologies for my misread. I do disagree that solar influences, volcanic emissions and influences, and man-made emission estimates for the future are “unknown” for “future development” of boundary values. For example, various man-made emission estimates (scenarios if you will) can be and are quite useful. They have been used in regional air quality modeling for quite some time with quite reasonable and accurate results.

But again, my apologies to all for my obvious mistake.

D.

re: #167. Kindly point me to text in the Sandia report in which it is stated that convergence of the numerical solution methods for models/codes for applications to large-Re turbulent flows cannot be attained. Include the book by Roache, too, if you need additional material for backup. If you do not supply such a pointer, then I will take that to mean that you cannot find it.

Also, kindly point me to text in any CFD textbook in which it is stated that the convergence of the discrete model equations for the mean flow field cannot be attained; no matter the time given to perform the demonstration. If you do not supply such a pointer, then I will take that to mean that you cannot find it.

Although I will take a lack of response to mean the requested text cannot be located, it would be very useful to see a straightforward summary of the information located posted here on RC.

Oh, anyone reading this is invited to supply the requested pointers.

“The Seven Deadly Sins of Verification:

Assume the code is correct.

Qualitative comparison.

Use of problem-specific settings.

Code-to-code comparisons only.

Computing on one mesh only.

Show only results that make the code “look good.”

Don’t differentiate between accuracy and robustness.”

Thank you.

[Response: I’m not going to get into generic CFD questions, but with regard to your seven deadly sins, GCMs come out looking quite good. 1) No one has ever said GCMs are absolutely correct – at GISS we’ve found at least three coding errors since the IPCC AR4 simulations were done, none of which thankfully appear to make any noticeable difference. I’m sure there are others. 2) Comparisons can be as quantitative as you like – download all of the IPCC AR4 simulations from the server at PCMDI and have away. 3) Model parameters are tuned to improve the simulation of present day climatology. All applications are done using the same settings. 4) Model to reality comparisons are done all the time. 5) Resolution is an easy thing to change and is done frequently within model groups and across them. 6) With all the IPCC AR4 data available, we are not in control of what comparisons are done and what results are shown. 7) Robustness of the climate sensitivity across models is pretty much the whole point of the AR4 comparisons and that is quite distinct from the accuracy of any particular model. You can see my (long) paper on the subject for more details. – gavin]

My posts #s 10, 31, 32, 43, 127-130, 143, 149, 150, and 154 addressed the AOLGCMs, not general CFD.

All I’m asking is for someone to point me to papers and reports in which it is shown that any AOLGCM applied to a fixed application has the property that as the discrete temporal and spatial increments are decreased the changes in the calculated results for the mean flow field approach fixed values; that is, the changes in the calculated values between runs decrease and approach machine precision (or some appropriate small number). I can provide a more detailed prescription, but it should not be needed in this setting. All I need is one specific citation.
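For readers unfamiliar with the kind of test being requested, here is what a refinement study looks like on a deliberately trivial problem. This is a toy sketch only: it applies forward Euler to the linear decay equation dy/dt = -y, which has nothing of the complexity of an AOLGCM, and the step counts are arbitrary.

```python
import math

# Toy refinement study: solve dy/dt = -y, y(0) = 1, up to t = 1 with forward
# Euler, halving the time step each run. Problem and step counts are
# illustrative assumptions, not taken from any climate model.

def euler_decay(n_steps, t_end=1.0):
    """Forward-Euler estimate of y(t_end) using n_steps equal time steps."""
    dt = t_end / n_steps
    y = 1.0
    for _ in range(n_steps):
        y += dt * (-y)
    return y


results = [euler_decay(n) for n in (100, 200, 400, 800)]

# Change in the answer between successive refinements.
changes = [abs(b - a) for a, b in zip(results, results[1:])]

# Halving the step should shrink the change by about 2**p for a method of order p.
observed_order = math.log2(changes[0] / changes[1])

for n, y in zip((100, 200, 400, 800), results):
    print(f"{n:4d} steps: y(1) = {y:.6f}")
print("changes between runs:", [f"{c:.2e}" for c in changes])
print(f"observed order of convergence: {observed_order:.2f}")
```

Here the changes between runs shrink steadily and the observed order is close to one, as expected for forward Euler. Whether an analogous demonstration exists for the mean fields of a full climate model is exactly the open question in this exchange.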

I think it is the case that code-to-code comparisons are accepted as good practice under only very restrictive conditions. I can supply citations if anyone wants to see them.

Thank you in advance for the citation(s) that I’m looking for.

re #169,

Dan,

I did not say that convergence of the model equations to the mean flow cannot be attained. Do not put words in my mouth.

It appeared (because of your comments re Lyapunov exponent) that you wished, not that the numerical methods be formally convergent to the model equations, but that there be demonstrated numerical convergence to the exact solution. I wished only to point out that it is impossible to converge in practice to the exact solution of a high-Re flow – that is to say, it is impossible to accurately simulate each and every eddy down to the Kolmogorov scale.

My apologies if I misunderstood you. Did I?

In any case, it is impossible to proceed further without a formal mathematical definition of “convergence.” Pick one you like and let me know what it is.

Re 170:

Dan,

Thanks for clarifying.

That doesn’t seem like such an onerous burden offhand, and a numerical convergence study along those lines would seem reasonable to me. I would guess for a number of reasons that convergence in GFD might be more difficult than in other areas of CFD, but I’m not a GFD person so I’ll keep my musings to myself here, other than to point out that defining a mean solution is a bit tricky given that we are talking about a time-dependent solution and there’s an ensemble with only one member.

I’d also guess that it’s already been done; in fact I’d be really surprised if it hadn’t been done.

Anyway, I’m a CFD/fluids person, not a climate person, so I’ll leave finding the references to somebody else. Sorry.

P.S.

Dan Hughes,

Speaking again as a CFD/fluids person (astrophysics), not a climate person:

In some of your other posts I’ve come across, you’ve raised some good points about V&V, SQA, etc. In my experience, the engineers really do seem to be taking the lead on establishing code standards. I’ve seen a lot of publications in the literature in my field with bad code V&V.

However, (1) the climate system is not as nice and self-contained as a laboratory experiment. This obviously complicates the process of V&V. You can’t just take engineering concepts of V&V and slap them on climate models without taking into account the unique aspects of the problem. There’s a whole host of epistemological issues here that you don’t get in the lab. (2) Again, not my field, but I feel pretty sure that the GFD people don’t have anywhere near the budget like you get at the national labs when you’re working on, say, some big ASCI project. Those guys can do all the V&V/SQA they want because they have CPU cycles to burn and the money to hire all the comp sci / engineering / science people they need.

I’d be curious to know how convergence works out for GCMs. But, on the other hand, I don’t take my lack of understanding of the codes &c as an excuse not to pay attention to the results. We can put more funds into improving the sims; in fact, I think it’s imperative that we do. Then, they might even be able to hire people like you, Dan, so that instead of critiquing their methods from the outside, you could work to solve the problems from the inside. Constructive criticism is of course always needed; inflammatory rhetoric is not.

I think you’d be a fool or in some serious self-denial, though, to think that somehow there’s some basic flaw in CFD applied to the GFD in the GCMs, and that everything’s going to be hunky-dory if we just keep on with business as usual. We do that and we’re scr#%$d. The evidence is pretty dang overwhelming.

That’s it for me…..

Gavin,

What’s the jobs situation in computational GFD/GCMs these days, anyway? As for astrophysics, the NASA science/theory programs have been decimated. Same for the DOE fusion research program.

Thanks,

Peter

I have weblogged a response to this Real Climate weblog at http://climatesci.atmos.colostate.edu/2006/08/22/real-climate-post-on-weather-and-climate/

I invite Real Climate to reply.

[Response: I prefer explaining it here so that we can keep the discussion at one site, rather than jumping back and forth (please be a gentleman, Roger, and keep the discussion that we started, here at RC…). For academic reasons, there may be many different ways of defining climate, but for all practical purposes, it’s useful to define it as the ‘typical weather patterns’. Or, we could call it ‘etamlic’, and use this new term to describe what we mean: the typical weather pattern, such as described by a distribution function of the important variables. It is then etamlic that is of greatest interest to society, although the complex and intricate processes are themselves interesting, at least for the scientists and the model developers.

Yes, it’s trivial to predict the seasonal cycle of the etamlic – here we are in complete agreement – but this trivial knowledge is more profound than it seems. It leads to the next step: is it possible to push the limit a bit further? If you look at the distribution function for the temperature in winter and summer at high latitudes, you see that the distribution function (probability distribution function, or p.d.f.) of the temperature is systematically affected by external forcings. The same observation can be made for the diurnal cycle, and the time horizon for the prediction isn’t the main issue here, because it’s true for any day in the future (in a hundred and one years, the night-time temperature will still tend to be lowest). Thus, these are clear effects of the natural cycles in etamlic. They do not arise spontaneously, but are driven by periodically varying conditions (‘external forcings’). These periodic variations can be taken as empirical evidence that the local etamlic response is *not* insensitive to (natural and periodic) external forcings.

To progress beyond the trivialities, we must ask: how much better can we do than just using the seasonal variations as our predictions? Seasonal forecasting over Europe is notoriously difficult, but if we use the 1961-90 period as baseline, almost all 3-month temperature averages are above the baseline. Thus, skill is boosted by the fact that there is a trend in the data! Precipitation is harder, and if you look at the seasonal cycle in precipitation e.g. for Norway, you see a much less marked annual cycle. In the tropics, the situation is different, with the wet and dry seasons being the main features. But, don’t forget that predictability from initial conditions and predictability from changes in boundary conditions are two different things. While it is true that predictability from a given state of the system (initial conditions) is very limited and seasonal forecasts have little skill beyond a few months, they can still predict that winter temperatures are generally lower than summer temperatures at higher latitudes even after hundreds of years. To re-iterate this point, climate models do also predict that afternoon temperatures generally are higher than temperatures at dawn, even after many years and long after the model has lost the ‘memory’ of its initial state (due to chaotic behaviour), given that the diurnal cycle is provided in the forcing.

The next crucial question is: what about the global response? Is there any reason to think that it is insensitive, in spite of the fact that the local etamlic undergoes such marked annual variations? The effects of volcanoes – such as after Pinatubo – suggest otherwise. And then, the BIG question: is there any reason to think that the sensitivity stops abruptly beyond the seasonal forcings or volcanoes? A more reasonable proposition, if we have no additional information, is that the sensitivity is a slowly varying function of the strength of the forcing. This is a good null-hypothesis, but it is not entirely implausible that etamlic hypothetically could undergo abrupt changes (‘tipping points’). Is there any reason to think that the response/sensitivity is different for other radiative drivers, such as changes in the total solar irradiance, landscape, or greenhouse effect? We can look to paleoclimatic (empirical) evidence, such as the glacial cycles, and what do we see?

Now we are ready for the next question: how skilful are the models and what fidelity do they have? In the IPCC TAR, a comparison between simulations of the past and empirical data suggests that at least some climate models are able to provide a close description of the historic evolution of the global mean temperature, given estimates for the historic GHG emissions and known natural forcings. I think that models which reproduce the past trends and past climate realistically are useful for making scenarios for the future. -rasmus]
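The distinction drawn in the response above, between predictability from initial conditions and predictability from boundary conditions, can be illustrated with a toy calculation. The sketch below is not taken from any climate model: it assumes a made-up 'temperature' built from a fixed seasonal cosine (standing in for the external forcing) plus noise from a logistic map in its chaotic regime (standing in for weather). The function names, amplitudes and day ranges are all invented for illustration. Two runs start from almost identical states; their day-to-day values soon disagree, yet their seasonal averages stay close.

```python
import math

def simulate(x0, n_days=3650, r=3.9):
    """Toy 'temperature' series: forced seasonal cycle plus chaotic noise."""
    x = x0
    temps = []
    for day in range(n_days):
        x = r * x * (1.0 - x)  # logistic map, chaotic for r = 3.9 ('weather')
        seasonal = 10.0 * math.cos(2.0 * math.pi * day / 365.0)  # external forcing
        temps.append(seasonal + 5.0 * (x - 0.5))
    return temps

def season_mean(temps, first_doy, last_doy):
    """Mean over all days whose day-of-year lies in [first_doy, last_doy)."""
    vals = [t for day, t in enumerate(temps) if first_doy <= day % 365 < last_doy]
    return sum(vals) / len(vals)

# Two runs whose initial states differ by one part in ten billion.
run_a = simulate(0.4)
run_b = simulate(0.4 + 1e-10)

# 'Weather': individual days end up far apart between the two runs.
max_daily_gap = max(abs(a - b) for a, b in zip(run_a, run_b))

# 'Climate': averages over the warm and cold parts of the forced cycle.
warm_a, warm_b = season_mean(run_a, 0, 45), season_mean(run_b, 0, 45)
cold_a = season_mean(run_a, 160, 205)

print("largest daily difference between runs:", max_daily_gap)
print("warm-season means:", warm_a, warm_b)
print("warm minus cold season mean (run a):", warm_a - cold_a)
```

The warm-season and cold-season averages are set by the forcing term, so they survive the loss of 'memory' of the initial state. Real models are of course far more complicated than this map.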

Pete Williams, Validation of any models/codes designed to be applied to analyses of inherently complex phenomena and processes is a very difficult undertaking. Almost all real-world problems of interest cannot be studied in a laboratory; take the case of a supersonic aircraft carrying external stores and firing/releasing some of them. Yet models and codes designed to analyze complex engineering and natural situations and equipment do in fact get Validated. It will very likely be very difficult and expensive for most codes applied to real-world analysis objectives. By the same token, many existing codes would never have gotten designed and built if the difficulty of the task was a factor.

BTW, Gavin, you have measured the successes of one AOLGCM by comparing the outcomes of some of the seven deadly sins. This is just backwards from generally accepted practice in scientific and engineering software. The accepted practices avoid the seven deadly sins like a plague and instead focus on the ‘best of the best’ good practices. After all, aren’t sins to be avoided?

[Response: I don’t understand your point. We do try to avoid sinning and I gave you a list of how we avoid temptation. What is so objectionable? – gavin]

In reference to abrupt climate change, the concept of geoengineering to affect the global warming trend has been widely discussed. In particular, particulate injection into the atmosphere has been suggested as a way to mitigate global warming. This might not be a good idea, based on the following scenario:

The increasing freshness of the North Atlantic has been tagged as a primary component of a process that could turn off the oceanic heat conveyor. However, it is not well understood how this could occur in an abrupt manner. My idea is that a volcanic event could, as has happened in the past, cause a multi-year rapid cooling that would freeze the fresh water on the surface of the North Atlantic. This could cause an “instant” shutdown of the conveyor current, which would create a “snowball” cooling effect that might trigger another Ice Age.

Thus, the suggestion that artificial atmospheric cooling effect should be implemented is premature until all factors are examined.

RE: #177 – My take is as follows. Here is my statement to the would-be “geoengineers:” Keep your grubby mitts off of our Earth. We can certainly debate the current and historical abuses laid upon her. But this talk of truly destructive meddling on an unprecedented mass scale makes me livid! Utterly livid!

Gavin,

Re your response to #169 I hope you don’t mind me pointing out that your response to the seven deadly sins is a beautiful example of the sixth sin – ‘Show only results that make the code “look good.”‘ In each of your defences, you only describe where the models fail to sin, and never mention the odd lapse, except in case of sin 1.

But your reply is a misinterpretation of that first sin. When it says assume the code is correct, it means that the algorithms of the code are correct, not that there may be a few trifling coding errors. By highlighting those, you have shown that you believe the algorithms are correct, yet in the abstract to your (long) paper it says “Data-model comparisons continue however to highlight persistent problems in the marine stratocumulus regions.” So even though you know there are problems you still assume the code is correct :-(

Sin 2 is to make qualitative comparisons, which you invite us to do, but that is the sin! When quantitative comparisons are made we find on as simple a matter as climate sensitivity the values produced range from 1.5C to 5.6C and greater! No wonder you prefer qualitative comparisons :-)

At least you admit you are guilty of sin 3: problem specific settings used to improve the simulation of present day climatology, but then you try to spin your way out of it :-(

Sin 4 is more difficult to pin on you. You certainly compare code, since it is now all open source. And you compare it with reality, for instance with the results of the temperatures measured in the upper troposphere using radiosondes and satellites. It is just a pity that it is the results that are changed to fit the models, not vice versa :-(

It is true that the modellers are not guilty of sin 5, using one mesh only. The paleoclimatologists have been trying to get the models to replicate abrupt climate change by using smaller and smaller grids, but still have not managed it. Why does it remind me of Einstein’s remarks “Insanity: the belief that one can get different results by doing the same thing.” :-)

In trying to defend yourself from sin 6, which I have already shown you to be guilty of, you argue that you are not in control of what results are shown. I think you meant the results of the comparisons. However, you do inevitably have control of the results you publish on which the comparisons are made. One case where results were not published was the Climate Prediction experiment. Models which crashed were left out of the published data, and there was some discussion of leaving out the highest sensitivities although this did not happen, as far as I know :-?

Finally sin 7: not differentiating between accuracy and robustness. I am not sure I understand that, or should I say I am sure I don’t understand that :-( Do you both mean precision and robustness? To me the model results are neither accurate (sensitivities of 3C +/- 50%) nor robust (sensitivities of 3C +/- 50%). :-(

All I can say is that with 7 out of 7 as a score for sins, it is just as well for you that my name is not St. Peter, otherwise I know where you would be heading ;-)

Cheers, Alastair.

[Response: Well, I’m pretty glad you are not St. Peter, because your judgment may be impaired. You have assumed a whole bunch of things I never said. For instance, find one place anywhere on this site, or in any of my papers where I even hinted that I thought that the model code was a ‘correct’ emulation of the real world. I kind of assumed that it went without saying that everyone understood that climate models are only approximations of reality – so when asked whether I think the code is ‘correct’, I assumed that this is a question about its bugginess. But in either sense, I have never assumed the code is ‘correct’.

With respect to 2, you are quoting a quantitative comparison as proof that we don’t do quantitative comparisons. I fail to see your logic. Number 3 you have completely mis-interpreted. The ‘sin’ is to keep changing the parameters to fit whatever latest application you find. That is precisely what we don’t do. Once the parameters are set for the climatology, they are set for all subsequent tests. We use the same parameters for the 20th Century runs as for 8.2 kyr experiments, and you know what, it works. Your other points are pretty trivial. – gavin]

Re #177 If a volcanic eruption could cause cooling which would be amplified by the North Atlantic freezing over, then surely the melting of the Arctic ice will have the opposite effect and cause a warming?

In that case, since the Arctic ice will probably have gone within five years, see https://152.80.49.210/products/SATELLITE/US058SCOM-IMGatp.NPOLE_IC_PS.gif

The concentration of the ice is down to 50% within 200 miles of the north pole.

In the time available, geo-engineering is the only answer, otherwise the climate of the entire northern hemisphere will be altered on a similar scale to that which happened at the end of the Younger Dryas.

Re #178 It is a bit too late to say “Keep your grubby mitts off our Earth.” Basically the whole planet is scarred from our engineering, not least the atmosphere which has been severely altered by the byproducts of the engines we have used to engineer our lifestyles – the American Dream which is heading for a nightmare!

Rasmus – regarding #175 (which I will crosspost on Climate Science), chaos can also occur due to sensitivity to boundary conditions (e.g. see Martin Claussen’s paper: Claussen, M., 1998: On multiple solutions of the atmosphere-vegetation system in present-day climate. Global Change Biology, 4, 549-559; http://www.mpimet.mpg.de/fileadmin/staff/claussenmartin/publications/claussen_multiple_gcb_98.pdf).

I also discussed the issue of climate as an initial value problem in Pielke, R.A., 1998: Climate prediction as an initial value problem. Bull. Amer. Meteor. Soc., 79, 2743-2746 [http://blue.atmos.colostate.edu/publications/pdf/R-210.pdf]. Thus the topic was not first introduced on Real Climate but has been presented to the community some time ago. Unfortunately, international assessments have chosen to ignore this perspective.

I would like your conclusion on what can the models skillfully predict on multi-decadal time scales. You state

“In the IPCC TAR, a comparison between simulations of the past and empirical data suggests that at least some climate models are able to provide a close description of the historic evolution of the global mean temperature, given estimates for the historic GHG emissions and known natural forcings.”

What else can the models skillfully predict on this time scale?

Regards Roger

[Response: Thanks for your response, Roger, and your interest in this post. You are absolutely right – RC did not first introduce the concept of predictability of the first (initial value problem) and second (boundary value problem) kind; this topic goes back to the 1960s and the work by Lorenz. One prominent scholar on this topic is Tim Palmer (at the ECMWF), who wrote about this years ago. I must admit that I have not had the time to look at the model results in detail, but work on fingerprint methods suggests that the models are successful at capturing the main structures of the atmosphere. And, of course there is the trivial observation that they capture the geographical distribution and large-scale circulation patterns. When it comes to more local details, then the models fall short, and one must apply downscaling techniques to make scenarios for the local climate. On the local scale, there are greater differences amongst the models on historical evolution, even for the same model with the same forcing. But, the main point here is the global temperature, which is an indicator of the state of our climate (like body temperature, which indicates a fever but doesn’t tell you where the infection is…). -rasmus]

Re #181 It is a bit too late to say “Keep your grubby mitts off our Earth.” Basically the whole planet is scarred from our engineering, not least the atmosphere which has been severely altered by the byproducts of the engines we have used to engineer our lifestyles – the American Dream which is heading for a nightmare!

Comment by Alastair McDonald – 22 Aug 2006 @ 2:42 pm

———————

I’m amazed at the people who hold the view that the earth and its ecosystems are incredibly sensitive and on the verge of collapse. To me, environmental changes seem small, the planet seems remarkably robust and its creatures very adaptable.

I could be wrong about all this but keep in mind, the burden of proof in the public debate will always rest with the doomsayers.

I’m still searching in vain for actual climate forecast verifications.

Specifically, can anyone here point me to actual climate model forecasts made, say, 5, 10, 15 or 20 years ago and their verification?

I’m not talking about simulations of past climates. What I want to see are actual forecasts made and published and how they have verified.

With weather forecasts, millions of forecasts can be verified and the skill scores and decay with lead time well known. This is not the case with climate model forecasts.

RE: #183 – While it may be done off line, there is no public in depth verification of the NWS 90 day (30 day lead time) precip and temp forecasts. The best they do is post achieved maxima and minima for a very small number of recording stations at metro area airports. It sure would be nice to see a more thorough scorecard. Would love, for example, to see a scorecard, month-by-month, for the NWS 90 day temperature Aug-Sep-Oct that was pushed out in July. The scorecard itself could also be a map of variance or sigma versus the forecast map. Or something like that. Put those computers to work looking at how well the prognostications came in. Do it for all climate forecasts. Publish results. Hide nothing.
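As a rough idea of what one entry in such a scorecard could look like, here is a minimal sketch of a mean-squared-error skill score measured against a climatological baseline. This is one common verification measure, not necessarily what the NWS uses, and the three-month temperature values below are invented for illustration only.

```python
def mse(predicted, observed):
    """Mean squared error between two equally long sequences."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)

def skill_score(forecast, observed, climatology):
    """1.0 is a perfect forecast, 0.0 is no better than climatology,
    and negative values are worse than climatology."""
    return 1.0 - mse(forecast, observed) / mse(climatology, observed)

# Hypothetical Aug-Sep-Oct monthly mean temperatures (deg C) at one station.
observed = [15.2, 12.1, 8.4]
forecast = [14.8, 12.5, 9.0]
climatology = [14.0, 11.0, 7.0]  # long-term monthly normals (made up)

score = skill_score(forecast, observed, climatology)
print("skill score vs climatology:", round(score, 3))
```

Computed per grid point rather than per station, the same number could be drawn as the kind of map suggested above.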

# 175 Response says: … For academic reasons, there may be many different ways of defining climate, but for all practical purposes, it’s useful to define it as the ‘typical weather patterns’. …

My comments:

‘typical weather patterns’ terminology for climate in early spring was used in narrative Upper Midwest Spring Snowmelt Flood Outlooks in the 1980s and 1990s by the NWS North Central River Forecast Center.

During that time, I was not aware that climate in the Upper Midwest was changing rapidly. Thus, initial numerical snowmelt flood outlooks which were issued then were often not high enough and untimely due to the earlier than expected snowmelt runoffs and heavier than ‘typical’ rainfall while the melt was going on.

Defining climate as ‘typical weather patterns’ is misleading now that we know the climate in the Upper Midwest is changing rapidly, especially during the winter – early spring period.

>183, Matilda

Have you looked at these?

http://scholar.google.com/scholar?sourceid=Mozilla-search&q=climate+forecast+verification

Re #183 Matilda, this is the only climate verification I know of:

The Global Warming Debate By James Hansen – January 1999 at http://www.giss.nasa.gov/edu/gwdebate/

It would be interesting to update Figure 1 with today’s temperatures. It is not clear which data sets were used for the red line, and whether other global temperature records would give different results.

HTH,

Cheers, Alastair.

RE # 183

Matilda, you said:

[I could be wrong about all this but keep in mind, the burden of proof in the public debate will always rest with the doomsayers. ]

Yes, you are wrong about all this.

Read up on glacial meltback in the Andes and Himalayan mountains and Arctic ice meltback.

Then convince the hundreds of millions of farmers and villagers dependent upon river water flowing from those mountains that [these environmental changes seem small, the planet seems remarkably robust and its creatures very adaptable.]

Re #179 et seq

Sheesh. Look, there is much to be learned from the ideas of V&V which have been developed over the last few decades. But on the other hand, I’m going to jump to conclusions here and guess that the people ranting about this stuff are used to well-controlled experiments, lots of data, and known physics. Air flow over a wing is just the Euler eqn, plus some turbulence models that were developed with a lot of hard work. Nuke codes are verified thanks to ca. 1000 nuke tests, plus constant verification with hydro tests, etc etc. The physics is pretty much all known, thanks to an absolutely immense expenditure finding cross-sections, opacities, etc; the initial conditions are all defined; the gov’t spends a gazillion bucks on a proton radiography system so you can see what’s going on, and so on.

When push comes to shove, you use these same LANL/LLNL legacy codes and apply them to a supernova, and it fizzles. Why? Good question. The real world ain’t so simple.

So don’t complain that the models still show big discrepancies/uncertainties with long-term climate forecasts, unless you know what you’re talking about. Being a CFD/fluids person, by the way, does not count as being an expert, in this regard.

re: 185 which was in response to 184 (not 183).

The statement “The best they (NWS/NOAA) do is post achieved maxima and minima for a very small number of recording stations at metro area airports” is simply wrong. A quick search on the Climate Prediction Center web site yields plenty of verification stats for CPC seasonal outlooks at

http://www.cdc.noaa.gov/seasonalfcsts/4panel.html and http://www.cpc.ncep.noaa.gov/products0/wesley/verf/90day_inflat/OFF-map/l01/index.html. Indeed, nothing is hidden!

RE: #189 – What specifically do you mean by “Arctic ice melt back?” Do you mean changes in the mass or area of continental glaciers? Or are you referring to reputed “long term trends” (based on a rather recent base line) in Arctic Sea Ice extent minima? And now for the trick question, what is the Cpk of said areal sea ice extent minima? What is the demonstrated confidence interval of such a figure? Share your wealth of knowledge!

Corrected second link in 191:

http://www.cpc.ncep.noaa.gov/products/wesley/verf/90day_inflat/index.html

The “Official Forecast” verification links are near the end of the list of parameters.

RE: #191, 192 – well the NWS sites where I was looking at the 3 month sure did not have them. Thanks for the links, they’ll get well used especially after Aug gets rolled up.

RE # 192. Steve you asked:

[What specifically do you mean by “Arctic ice melt back?”].

I mean just that; not mass or area of continental glaciers.

And what is the acronym Cpk you used?

Finally, I do not have time and you are not really interested in my wealth of knowledge, per se. However, I can suggest you link to:

http://arctic.atmos.uiuc.edu/cryosphere/

and share Dr. Bill Chapman’s wealth of satellite images and data.

Go ahead, Steve, knock yourself out. Spend an afternoon viewing nearly 30 years of evidence the warmer temp in high latitudes is real and having an impact.

RE: #195 – I am well familiar with Cryosphere Today. Now that you also know about it, Google to learn about Cpk and start to do some real work. Who knows what you might find? Of course, one major challenge you will encounter is the fact that the satellite data don’t go very far back in time. That’s all I’ll write.

Hi steve, I googled Cpk. First hit; California Pizza Kitchen. No help there.

Satellite data don’t go very far back in time? How far back would satisfy you? I’m more interested in where the melt is going to take the NA climate in the future (next 30 years).

See: Disappearing Arctic sea ice reduces available water in the American west; Sewall & Sloan. GRL, Vol 31, L06209, doi:10.1029/2003GL019133, 2004.

That’s all I’ll write.

RE: #197 – well if you are interested in understanding what would be considered innate variation versus abnormality vis a vis Arctic sea ice mean annual extent and annual minima time series, a precursor would be to have a large enough sample of past data to be able to know, at say, a 90% confidence level, what the “warning limits” and “spec limits” are. The fewer data you have, the wider the warning and spec limits need to be to prevent a false alarm trigger. Certainly, you can expect a certain amount of variation. The degree and sign of the variation may actually be modulated by some sort of overall factor. I believe that to be the case. That would be sort of a meta variation. So, we have a big problem here. We indeed have only 30 years of data. We therefore have a poor understanding of the innate level of variation and of the supercycles of extent. We also do not really know what to make of events like the 1999 minimum. Is it within innate variation, an outlier, what? If the 2006 minimum is not as pronounced as 2005, what, if anything, does that mean? What if, over the next few years, the minima continue to become less pronounced; what, if anything, does that mean? Need data … lots of it.
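For readers who, like the commenter above, did not recognise the acronym: Cpk is a process capability index from statistical quality control, usually defined as the distance from the sample mean to the nearer specification limit divided by three sample standard deviations. The sketch below assumes that standard definition, and uses the common normal-theory approximation that the relative standard error of a sample standard deviation is about 1/sqrt(2(n-1)). The sample values and limits are invented and are not sea ice data.

```python
import statistics

def cpk(samples, lower_limit, upper_limit):
    """Process capability index: distance from the mean to the nearer
    specification limit, in units of three sample standard deviations."""
    mu = statistics.mean(samples)
    sigma = statistics.stdev(samples)
    return min(upper_limit - mu, mu - lower_limit) / (3.0 * sigma)

def relative_std_error_of_sigma(n):
    """Approximate relative uncertainty of a sample standard deviation
    for n independent, normally distributed values."""
    return 1.0 / (2.0 * (n - 1)) ** 0.5

# Hypothetical annual minima (arbitrary units) with made-up spec limits.
minima = [7.0, 6.5, 7.5, 6.0, 8.0]
index = cpk(minima, lower_limit=4.0, upper_limit=10.0)
print("Cpk:", round(index, 3))

# How well is the spread itself known after 30 years versus 300?
for years in (30, 300):
    print(years, "years:", round(relative_std_error_of_sigma(years), 3))
```

With about 30 independent values the spread is known only to within roughly 13 percent, which is one way to quantify the remark that short records force wider limits. Treating successive years as independent is itself an assumption that real time series may not satisfy.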

re 182. [Response … But, the main point here is the global temperature, which is an indicator of the state of our climate (like the temperature giving an indicator of a fever, but doesn’t tell you where the infection is…). -rasmus]

It seems the infection is more serious than previously figured, do you agree?

“Frozen” Natural Gas Discovered At Unexpectedly Shallow Depths Below Seafloor by Staff Writers

Montreal, Canada (SPX) Aug 23, 2006

http://www.terradaily.com/reports/Frozen_Natural_Gas_Discovered_At_Unexpectedly_Shallow_Depths_Below_Seafloor_999.html

re 4.

Eric (skeptic),

It’s more important NOT to recognize the mechanics and then be caught off guard in dealing with more rapid and more deadly global warming forces of global destruction.

‘Ah don’t ya know we’re on the eve of destruction’.

For lyrics to tunes go to the article by Stu Ostro, Senior Meteorologist The Weather Channel, titled: PEACE, MUSIC … AND A CATEGORY 5 HURRICANE – and 23 comments – at:

http://www.weather.com/blog/weather/8_10233.html?from=blog_comment_mainindex&ref=/blog/weather/#comment

I hold nothing against meteorologists who now say they are not skeptics on Anthropogenic Greenhouse Gas Driven Rapid Global Warming (AGHGDRGW).