Over the last couple of months there has been much blog-viating about what the models used in the IPCC 4th Assessment Report (AR4) do and do not predict about natural variability in the presence of a long-term greenhouse gas related trend. Unfortunately, much of the discussion has been based on graphics, energy-balance models and descriptions of what the forced component is, rather than the full ensemble from the coupled models. That has led to some rather excitable but ill-informed buzz about very short time scale tendencies. We have already discussed how short term analysis of the data can be misleading, and we have previously commented on the use of the uncertainty in the ensemble mean being confused with the envelope of possible trajectories (here). The actual model outputs have been available for a long time, and it is somewhat surprising that no-one has looked specifically at them given the attention the subject has garnered. So in this post we will examine directly what the individual model simulations actually show.

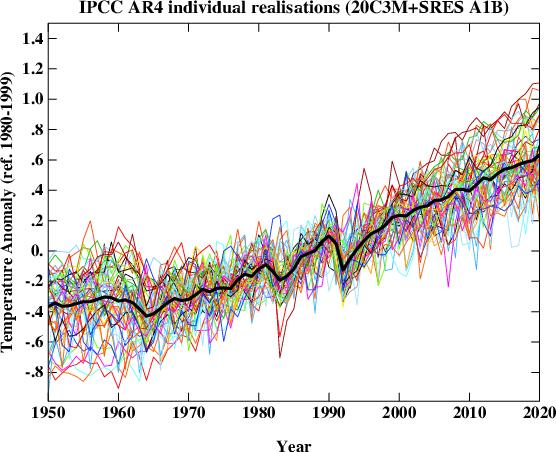

First, what does the spread of simulations look like? The following figure plots the global mean temperature anomaly for 55 individual realizations of the 20th Century and their continuation for the 21st Century following the SRES A1B scenario. For our purposes this scenario is close enough to the actual forcings over recent years for it to be a valid approximation to the simulations up to the present and probable future. The equal weighted ensemble mean is plotted on top. This isn’t quite what IPCC plots (since they average over single model ensembles before averaging across models) but in this case the difference is minor.

It should be clear from the above plot that the long term trend (the global warming signal) is robust, but it is equally obvious that the short term behaviour of any individual realisation is not. This is the impact of the uncorrelated stochastic variability (weather!) in the models that is associated with interannual and interdecadal modes in the models – these can be associated with tropical Pacific variability or fluctuations in the ocean circulation for instance. Different models have different magnitudes of this variability, which span what can be inferred from the observations, and in a more sophisticated analysis you would want to adjust for that. For this post however, it suffices to just use them ‘as is’.

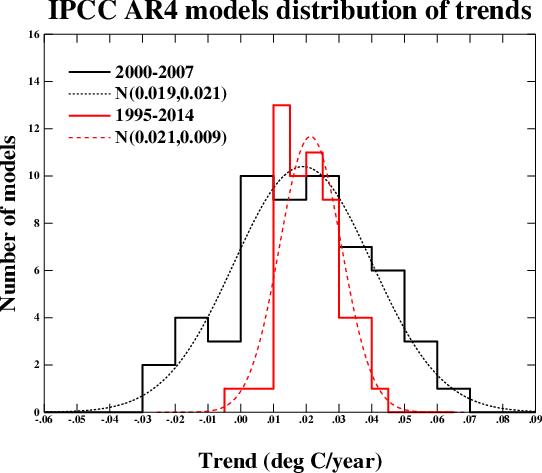

We can characterise the variability very easily by looking at the range of regressions (linear least squares) over various time segments and plotting the distribution. This figure shows the results for the period 2000 to 2007 and for 1995 to 2014 (inclusive) along with a Gaussian fit to the distributions. These two periods were chosen since they correspond with some previous analyses. The mean trend (and mode) in both cases is around 0.2ºC/decade (as has been widely discussed) and there is no significant difference between the trends over the two periods. There is of course a big difference in the standard deviation – which depends strongly on the length of the segment.

Over the short 8 year period, the regressions range from -0.23ºC/dec to 0.61ºC/dec. Note that this is over a period with no volcanoes, and so the variation is predominantly internal (some models have solar cycle variability included which will make a small difference). The model with the largest trend has a range of -0.21 to 0.61ºC/dec in 4 different realisations, confirming the role of internal variability. 9 simulations out of 55 have negative trends over the period.

Over the longer period, the distribution becomes tighter, and the range is reduced to -0.04 to 0.42ºC/dec. Note that even for a 20 year period, there is one realisation that has a negative trend. For that model, the 5 different realisations give a range of trends of -0.04 to 0.19ºC/dec.
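For readers who want to try this kind of calculation, here is a minimal sketch of the trend-distribution analysis described above. It does not use the archived model output: the ensemble is a synthetic stand-in (an assumed 0.2ºC/decade forced trend plus AR(1) noise with made-up parameters), and the function name `segment_trends` is hypothetical. Only the 55 runs and the two periods are taken from the text.

```python
import numpy as np

def segment_trends(years, anomalies, start, end):
    """Least-squares trend (degC/decade) of each run over start..end inclusive.

    anomalies has shape (n_runs, n_years); years has shape (n_years,).
    """
    mask = (years >= start) & (years <= end)
    t = years[mask] - years[mask].mean()            # centred time axis
    y = anomalies[:, mask]
    y = y - y.mean(axis=1, keepdims=True)           # centred anomalies
    slopes_per_year = (y @ t) / (t @ t)             # OLS slope for every run
    return 10.0 * slopes_per_year

# Synthetic stand-in ensemble (ASSUMPTION: not the real IPCC AR4 archive).
rng = np.random.default_rng(0)
years = np.arange(1990, 2031)
n_runs = 55
forced = 0.02 * (years - years[0])                  # assumed 0.2 degC/decade
eps = rng.normal(0.0, 0.1, size=(n_runs, years.size))
noise = np.zeros_like(eps)
noise[:, 0] = eps[:, 0]
for i in range(1, years.size):                      # AR(1) "weather" noise
    noise[:, i] = 0.5 * noise[:, i - 1] + eps[:, i]
anoms = forced + noise

short = segment_trends(years, anoms, 2000, 2007)    # 8-year segment
long_ = segment_trends(years, anoms, 1995, 2014)    # 20-year segment
for name, tr in (("2000-2007", short), ("1995-2014", long_)):
    print(f"{name}: mean {tr.mean():.2f}, sd {tr.std():.2f}, "
          f"negative trends in {(tr < 0).sum()} of {tr.size} runs")
```

The numbers this prints depend entirely on the assumed noise parameters, so they should not be compared with the figures quoted above. The point is only that the spread of trends shrinks as the segment lengthens while the mean stays near the forced trend.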

Therefore:

- Claims that GCMs project monotonic rises in temperature with increasing greenhouse gases are not valid. Natural variability does not disappear because there is a long term trend. The ensemble mean is monotonically increasing in the absence of large volcanoes, but this is the forced component of climate change, not a single realisation or anything that could happen in the real world.

- Claims that a negative observed trend over the last 8 years would be inconsistent with the models cannot be supported. Similar claims that the IPCC projection of about 0.2ºC/dec over the next few decades would be falsified with such an observation are equally bogus.

- Over a twenty year period, you would be on stronger ground in arguing that a negative trend would be outside the 95% confidence limits of the expected trend (the one model run in the above ensemble suggests that would only happen ~2% of the time).

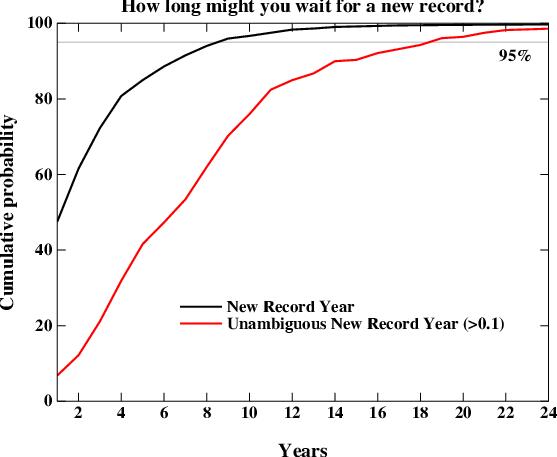

A related question that comes up is how often we should expect a global mean temperature record to be broken. This too is a function of the natural variability (the smaller it is, the sooner you expect a new record). We can examine the individual model runs to look at the distribution. There is one wrinkle here though which relates to the uncertainty in the observations. For instance, while the GISTEMP series has 2005 being slightly warmer than 1998, that is not the case in the HadCRU data. So what we are really interested in is the waiting time to the next unambiguous record i.e. a record that is at least 0.1ºC warmer than the previous one (so that it would be clear in all observational datasets). That is obviously going to take a longer time.

This figure shows the cumulative distribution of waiting times for new records in the models starting from 1990 and going to 2030. The curves should be read as the percentage of new records that you would see if you waited X years. The two curves are for a new record of any size (black) and for an unambiguous record (> 0.1ºC above the previous, red). The main result is that 95% of the time, a new record will be seen within 8 years, but that for an unambiguous record, you need to wait for 18 years to have a similar confidence. As I mentioned above, this result is dependent on the magnitude of natural variability which varies over the different models. Thus the real world expectation would not be exactly what is seen here, but this is probably reasonably indicative.
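The waiting-time calculation can be sketched along the following lines. This is one plausible reading of the procedure, not necessarily the exact method behind the figure: the first year is taken as the initial record, a new record must exceed the standing record by more than `margin`, and the waiting time is the number of years since the last record. The function names and the toy series are illustrative assumptions.

```python
def record_waiting_times(series, margin=0.0):
    """Years elapsed between successive records in an annual series.

    A new record is a value exceeding the standing record by more than
    `margin` (0.0 for any record, 0.1 for an 'unambiguous' one).
    """
    waits = []
    record, last = series[0], 0
    for i in range(1, len(series)):
        if series[i] > record + margin:
            waits.append(i - last)
            record, last = series[i], i
    return waits

def cumulative_fraction(waits, max_years):
    """Fraction of records seen within 1, 2, ..., max_years years."""
    if not waits:
        return [0.0] * max_years
    n = len(waits)
    return [sum(w <= x for w in waits) / n for x in range(1, max_years + 1)]

# Toy annual anomalies (made-up values, for illustration only).
toy = [0.0, 0.05, 0.02, 0.12, 0.10, 0.30]
any_record = record_waiting_times(toy)               # records of any size
unambiguous = record_waiting_times(toy, margin=0.1)  # > 0.1 above previous
print(any_record, unambiguous)
print(cumulative_fraction(any_record, 3))
```

To approximate the curves in the figure one would pool the waiting times from every model run before calling `cumulative_fraction`; the unambiguous case necessarily yields waits at least as long as the any-size case.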

We can also look at how the Keenlyside et al results compare to the natural variability in the standard (un-initialised) simulations. In their experiments, the decadal means of the periods 2001-2010 and 2006-2015 are cooler than that of 1995-2004 (using the closest approximation to their results with only annual data). In the IPCC runs, this only happens in one simulation, and then only for the first decadal mean, not the second. This implies that there may be more going on than just the tapping into the internal variability in their model. We can specifically look at the same model in the un-initialised runs. There, the differences between the first decadal means span the range 0.09 to 0.19ºC – significantly above zero. For the second period, the range is 0.16 to 0.32ºC. One could speculate that there is actually a cooling that is implicit to their initialisation process itself. It would be instructive to try some similar ‘perfect model’ experiments (where you try and replicate another model run rather than the real world) to investigate this further though.
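The decadal-mean comparison above is simple to reproduce from annual data. The sketch below assumes an annual global-mean series as a NumPy array; the function name `decadal_mean_diff` is hypothetical, and the test series is a pure 0.2ºC/decade line rather than any model run.

```python
import numpy as np

def decadal_mean_diff(years, series, base=(1995, 2004), later=(2001, 2010)):
    """Mean of `series` over the later period minus the mean over the base period.

    Both periods are inclusive (start_year, end_year) tuples.
    """
    def mean_over(span):
        mask = (years >= span[0]) & (years <= span[1])
        return series[mask].mean()
    return mean_over(later) - mean_over(base)

# Noise-free illustration: a steady 0.2 degC/decade trend (ASSUMED, not model data).
years = np.arange(1990, 2031)
linear = 0.02 * (years - years[0])
d1 = decadal_mean_diff(years, linear)                      # 2001-2010 vs 1995-2004
d2 = decadal_mean_diff(years, linear, later=(2006, 2015))  # 2006-2015 vs 1995-2004
print(round(d1, 2), round(d2, 2))
```

For a noise-free 0.2ºC/decade trend the differences are simply the trend times the 6 and 11 year offsets between the period midpoints, which gives a baseline against which the ranges in individual runs can be read.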

Finally, I would just like to emphasize that for many of these examples, claims have circulated about the spectrum of the IPCC model responses without anyone actually looking at what those responses are. Given that the archive of these models exists and is publicly available, there is no longer any excuse for this. Therefore, if you want to make a claim about the IPCC model results, download them first!

Much thanks to Sonya Miller for producing these means from the IPCC archive.

Thanks again Ray. The key claim seems to be

>Even with these limitations, the models do reproduce gross weather features, and this enhances confidence that they have the physics basically right.

They’ve got certain aspects of the past right and they are dynamical models based on the best possible approximations to known physical processes, and where the processes are not known they make good guesses which never at any point turn into significant errors at a gross scale. Therefore they are a reliable guide to the climate a hundred years into the future. Not as reliable as the equations of general relativity or quantum mechanics but reliable enough that policy decisions should be based on them right now, decisions whose financial implications run into the trillions of dollars. That’s the argument, correct?

All I’m prepared to say at this point is that it’s no wonder that heating is shown not just in the models but in the discussion!

I’ve taken a look back on this thread, as you suggested, and found a number of things helpful, probably at this stage the most helpful being your advice to Richard Treadwell in #104 and the three urls there, especially the history at http://www.aip.org/history/climate/index.html

I have a bit of learning to do before I say much more. But the history is truly fascinating. Thanks for that pointer and others.

model_err, Glad some of this was helpful to you. As a physicist, I find it helpful to think in terms of a phase space of sorts, with dimensions corresponding to the different forcings that affect climate. We’ve localized the “realclimate” to a region in that phase space. Some of the dimensions offer us a lot of wiggle room–we don’t know them very well. Others offer very little wiggle room without our science being completely wrong–and there’s no evidence of that. CO2 falls into the latter category–not a lot of wiggle room. Regardless of what else is happening CO2 will make things warmer than they would be otherwise unless there’s some magical homeostasis mechanism ala Lindzen. And we’ve zero evidence for that and a paleoclimate that opposes it. What’s more, there are a lot more possible states in there that lead to dangerous warming than states that don’t.

Ray,

You physicists and your phase spaces. As a lover of cartoons the depiction I like best has to be the creator pinpointing the big bang in the phase space of all possible universes, so helping the artist Roger Penrose to explain the direction of the second law of thermodynamics, at least to his satisfaction! (p444 of my copy of “The Emperor’s New Mind”)

Going from one esteemed professor with a controversial theory to sell to another, can I ask you about Lindzen’s “homeostasis mechanism”. At the most basic level, at the global level, is the issue conservation of energy and is it only through something like the putative Iris Effect that more long wave radiation would escape the earth’s atmosphere to ‘mitigate’ energy gained through more infrared radiation being absorbed by more CO2?

Oh and Arch Stanton, thank you for the pointer to the discussion on the cosmic ray theories in IPCC AR4. I meant to say that before.

Re: #403 (model_err)

You may already have read it (it’s very technical in places), but the same point is made in my favorite book, Penrose’s “The Road to Reality.”

Model_err, Well, to restore equilibrium, either you have to increase IR escaping (and really the only way to do that is by increasing temperature or taking atmospheric cooling off the adiabat) or decrease incoming radiation. Given the fact that CO2 effects persist for centuries, the effect would have to correlate with the greenhouse effect (or any other warming, for that matter, unless you can figure out how to make the mechanism sensitive only to CO2–good luck). The paleoclimate among other sources argues against this. Otherwise, the only way to restore radiative equilibrium is for Earth to heat up and emit more radiation in the portion of the blackbody curve not blocked by greenhouse gasses.

tamino (#405), which point was that exactly? (I got tRtR when it came out and I still enjoy browsing the non-technical bits, if I can find any!)

Ray (#406) I’m not expecting to be the chap that finds that CO2-sensitive mechanism, any more than when I read Penrose or Hawking I expect to be the one who beats them to a theory of quantum gravity. But I might take a view (amateur though it inevitably is) that a unified theory may only happen well after they both pass on. I might even favour one man’s approach to the other – or Ed Witten’s latest thoughts, or whatever. I clearly wouldn’t know what I was talking about when I did but what’s the point of reading about any of these things without using the generous freedom the creator has given us to make our own choices? And then seek to learn more.

So, what shape do I think a “unified theory of climate” will take? When do I think it will happen, if ever? Or something much less that still sheds light in an important way on the energy transfers and the global consequences for climate?

I’m not sure. Wishful thinking can of course come in, depending on how ruinous one thinks current proposed mitigation will be for everyone on the planet.

> Lindzen

Pick any of the early related papers and then click the link for subsequent cites, e.g.

http://scholar.google.com/scholar?hl=en&cites=15678360454309315029

> ruinous

Name me one* instance where society, forewarned of a developing problem, made an effort that was premature, let alone “ruinous” to the generation that spent the money and time protecting subsequent generations.

How do you feel about chlorofluorocarbons?

http://www.nature.com/nature/journal/v427/n6972/box/427289a_bx1.html

__________________

*(Besides the steam-powered horse-poop street-cleaner, which was hypothetical at best.)

Hank, hey, that’s only 34 papers to read, from someone I’ve never met, before going to bed here in Europe. Sure, I intend to learn more, as I implied in my previous post, but the gradient of decreasing ignorance over time ain’t I’m afraid going to be that steep. I take your point though (as I assume it is) that a lot of people disagree with the Iris Effect as proposed by Lindzen, based on real world observations that they carefully cite. Nor have I said that I agree with it. I just wanted to understand the energy transfer implications of these different ideas. I’m still not clear about the use of the term equilibrium in regards to the world’s weather and climate in any case.

You then highlight ‘ruinous’. Please put it back in the original sentence and look favourably on the mitigating phrases ‘wishful thinking’, ‘depending on how’ and ‘current proposed mitigation’.

One thing this would allow is to vary ‘current proposed mitigation’ to something that caused less alarm to some of those who most deeply care for the “bottom billion” of poorest nations in the world, as Professor Paul Collier has recently and notably identified them. I’m not saying anything about the validity of the science at this point, just about the effects of proposed approaches to mitigation or adaptation. It’s a massive subject. And it sure isn’t the only variable in that sentence.

As to naming one instance etc … I can think of loads, which I won’t mention. Because it might be thought that I was automatically lumping in humankind’s current and future reactions to AGW (which are after all unknown) with such terrible disasters. Just as some observers have taken the word “deniers” in discussions of this kind as making an explicit comparison, morally and factually, between those who question the likelihood of “end of civilization” AGW scenarios with Holocaust deniers. I’m sure those on this forum would never intend such a terrible thing. But I wouldn’t want to give even the slightest impression of anything similar in the opposite direction. For that reason I refuse to give an example.

What I feel about CFCs is that I accept the consensus view. You may want to play the relevance of that belief back to me. That’s always fun.

Would it be possible to show the temperature record of that time period on the same graph?

What do you think about Senator Inhofe’s web blog?

http://epw.senate.gov/public/index.cfm?FuseAction=Minority.Blogs&ContentRecord_id=d5c3c93f-802a-23ad-4f29-fe59494b48a6&Issue_id=

On Tuesday 8 July, 2008 “The Age”, a newspaper published in Melbourne, Vic, Australia, printed an article by William Kininmonth, former senior climate scientist in the Australian Public Service.

http://business.theage.com.au/why-so-much-climate-change-talk-is-hot-air-20080707-34iz.html

From this article: “

Frank Wentz and colleagues of Remote Sensing Systems, California, published a paper in the prestigious international journal, Science. This paper reported a finding of the international Working Group on Numerical Experimentation that the computer models used by the IPCC significantly underestimated the rate of increase of global precipitation with temperature. The computer models give a 1-3% increase of precipitation with every degree centigrade while satellite observations, in accordance with theory, suggest that atmospheric water vapour and precipitation both increase at a rate of 7 % for each degree centigrade rise.”…

I have been trying to locate an analysis of Wentz’ 2007 work. It’s probably here somewhere, but search doesn’t find it.

Could someone kindly point me to the right place?

“[Response: Hmmm…. possibly because ‘life on Earth’ hasn’t constructed an extremely complex society that is heavily dependent on the stability of the climate? I wonder who has….. – gavin]”

What an incredibly ignorant statement. Do you really believe that?

In fact I don’t see any understanding of Pat Frank’s criticism in your article. You don’t get it. The fact that the ever increasing error in your models wraps around like modular arithmetic around the mean of Pat Frank’s simple model doesn’t mean the error itself isn’t continually increasing. That just means it’s bounded. Big deal.

His criticism stands. The models aren’t any better than his simple model in predicting the effects of CO2 forcing despite having quite independent skills with regard to volcano eruptions.

[Response: Frank’s ‘model’ is nothing more than a linear fit for an experiment where linearisation works ok. It doesn’t work for the 20th Century, or the LGM, or the mid-Holocene or the Pliocene, or for the Younger Dryas or ENSO, or volcanoes or for the NAO etc. etc. It explains absolutely nothing. Thus taking its error propagation and claiming it applies to the real models is just dumb. The GCMs simply do not have the properties that Frank claims. As to my ‘ignorant’ remark, I might ask you how many people were displaced by the 120m rise in sea levels at the end of the last ice age? How many people would be with even a small fraction of that today? How much infrastructure was affected by the changing monsoon 7000 years ago? How much now? How many people moved from the grasslands of the Sahara 5000 years ago, and how many were affected by the Sahel drought in the 1980s? We do have a lot more technology and expertise now, but we also have a much bigger investment in the status quo. – gavin]

#413 — Gavin wrote, “Frank’s ‘model’ is nothing more than a linear fit for an experiment where linearisation works ok.”

That’s wrong, Gavin. You know that’s wrong, and incessantly repeating the same error won’t make it right. The Skeptic model is not a linear fit. It’s not a fit at all. It’s a test of what GCMs do with atmospheric CO2.

That model produced a sui generis line that was statistically independent of the GCM lines, and its independent reproduction of their output shows that GCMs propagate CO2 forcing linearly. Your standard of, “It doesn’t work for the 20th century…” is no standard of judgment at all. It’s just so much red herring, because the model was never meant to be a climate model. It was meant to be an audit of one behavior of GCMs.

In the event, it did an excellent job reproducing the global average surface temperatures projected by GCMs from a trend in atmospheric CO2. On that basis, it is completely justifiable to use as an estimator of error in a GCM CO2 global SAT projection.

Your scornful dismissals notwithstanding, nothing you have written here has disproved the Skeptic analysis.

[Response: There is nothing to ‘disprove’ because your analysis proved nothing whatsoever concerning GCMs. And frankly calling it the ‘Skeptic analysis’ is offensive both to true skeptics and the magazine itself. Here’s another reason why your argument is bogus – you claimed that since your model has no heat capacity and therefore no ocean warming, there cannot be any committed heating “in the pipeline”. Yet the models you say you are matching certainly do have both these things. Therefore your claim that your linear model (which ‘fits’ a linear trend) says something about the underlying GCMs is just wrong. Give me one thing that your model verifiably predicts about the GCMs other than the linear trend in global mean temperature in those particular experiments. Just one. – gavin]

Richard Hill (July 9) asked where to find comments on the Wentz 2007 article. Interesting source, those are the people who caught the sign error in the MSU work earlier.

You can find the abstract at

http://www.sciencemag.org/cgi/content/abstract/sci;317/5835/233

Try going to that link and on that page click the link for subsequent articles citing it; those will comment:

THIS ARTICLE HAS BEEN CITED BY OTHER ARTICLES:

Human-Induced Arctic Moistening.

S.-K. Min, X. Zhang, and F. Zwiers (2008)

Science 320, 518-520

Identification of human-induced changes in atmospheric moisture content.

B. D. Santer, C. Mears, F. J. Wentz, K. E. Taylor, P. J. Gleckler, T. M. L. Wigley, T. P. Barnett, J. S. Boyle, W. Bruggemann, N. P. Gillett, et al. (2007)

PNAS 104, 15248-15253

Potential Impacts of Climate Change and Human Activity on Subsurface Water Resources.

T. R. Green, M. Taniguchi, and H. Kooi (2007)

Vadose Zone J. 6, 531-532

In the #414 Response Gavin wrote, “There is nothing to ‘disprove’ because your analysis proved nothing whatsoever concerning GCMs.”

Wrong again, Gavin. It demonstrated that all one need do is linearly propagate GHG forcing to reproduce the results from 10 state-of-the-art GCMs projecting the effect of trending GHGs on global atmospheric temperature.

You wrote, “Here’s another reason why your argument is bogus – you claimed that since your model has no heat capacity and therefore no ocean warming, there cannot be any committed heating “in the pipeline”. Yet the models you say you are matching certainly do have both these things.”

I never claimed anything about ‘no heat in the pipeline.’ I only pointed out that because the simple linear forcing model reproduced the atmospheric temperature trend lines projected by all 10 GCMs, they cannot have been putting any of the excess GHG thermal energy anywhere else than in the atmosphere. That’s an observed result, Gavin.

You claim the GCMs included heat in the pipeline. But they didn’t show it, did they, because their lines are consistent with a linear model that put all the excess GHG forcing into atmospheric heat. And the model line was consistent with the GCM lines across 80 to 120 years.

And that audit line is not a “fit.” Nor is the linear model anything except an audit. The bogosity here resides in your arguments, and nowhere else.

[Response: I’ll keep this up as long as you want to continue to make a fool of yourself. The GCMs you are discussing were also all run out with constant forcing after 2100. If you and your model were correct, the temperatures would have flat-lined immediately the forcing stopped. Thus if that isn’t what happened, perhaps you would be so good as to admit that your model is bogus? After all, we have your statement in clear black and white here – “the GCMs didn’t show it … because they are consistent with a linear model..”. That’s a brave statement – you have made a prediction about what the GCMs should show – given your understanding of how they work. That’s how science progresses. Hypothesis, prediction, test. Now let’s see…. where is it… umm…. ah – let’s try this (make the end date 2200, and click ‘show plot’)….. Oh dear… the warming appears to be continuing past 2100, and oh, if you look at net radiation at TOA, you will see that it is positive the entire time (becoming smaller after 2100 of course). Neither of these two diagnostics is consistent with your linear model. So what say you? Do you consider the hypothesis that your model is a good analogue to the GCMs to be disproved? Or will you try and find a loophole?…. I’m itching to find out! – gavin]

Pat Frank, maybe try reading some of the posts on this site rather than touting your own, nonphysical toy model. It will be more productive for all of us.

[Response: I’m going to try the Socratic method for a while… let’s see. – gavin]

#416 — Gavin, you continually resurrect the same red herring. Over and yet over again, I’ve pointed out that the Skeptic analysis is an audit, and not a climate model. And yet over again plus 1, you challenge it on a climate model criterion.

The Skeptic analysis successfully audited GCM outputs with respect to GHG trend projections. It did not audit them with respect to anything else. Your “prediction” is not a prediction, and your “test” is not a test. Neither is your ‘climate model criterion’ a criterion nor are your ‘criticisms’ criticisms.

It’s fun that you chose GISS ModelE as your emancipatory GCM exemplar. Steven Mosher has an interesting plot showing how GISS ModelE performed against the HADCru temperature series: http://tinyurl.com/5l5uhc

It didn’t do that well. Between 1885-1910 GISS ModelE output trended up while temperature trended down, 1920-1940 it was flat while temperature trended up, it missed many sharp excursions throughout those periods and showed everywhere lower yearly jitter than did global temperature. It performed fairly well only during 1980-2000. Was it tuned to do so? Because after 2000, it diverged again: http://tinyurl.com/5s3q3p

It’s also fun that in Douglass, et al. (2007) “A comparison of tropical temperature trends with model predictions” Int. J. Climatol. 10.1002/joc.1651 1-9, Tables IIa,b showed that two versions of GISS ModelE produced two quite different temperature trends throughout the tropical troposphere even though they were primed with the same 20CEN forcing.

Douglass, ea. concluded, “We have tested the proposition that greenhouse model simulations and trend observations can be reconciled. Our conclusion is that the present evidence, with the application of a robust statistical test, supports rejection of this proposition.” Now there’s a rigorous test and validation of a prediction from the Skeptic audit, namely that GCMs are unreliable.

#417 — Ray, I’ve responded to criticisms throughout this thread. Would you kindly point out the criticism you consider definitive?

[Response: Ah, the distraction dodge, you disappoint me. I was expecting something with a little more intellectual integrity. Your last comment made a specific statement about the models – implying that because your toy model had no heat capacity, that the real GCMs can’t have either and that there would be no warming “in the pipeline”. I showed (rather clearly) that this was completely mistaken. Now you claim that your efforts were an ‘audit’ – a word that appears nowhere in your article, and whose definition in this context is, shall we say, obscure. Worse, you now claim that your ‘audit’ is only valid for a period over which you compared it – i.e a linear model fitting a linear trend – and you think that is improving the credibility of your claims about errors. If your toy model can’t be used to say anything about models, why did you say anything about the models? Please continue – this is fun. – gavin]

Gavin, that’s a great applet.

Would it be possible to have several quantities (say, up to five) in one plot? And encapsulated postscript output for use in documents?

IIUC the argument is that, when everything is close to an exponential growth process, there is no way to distinguish in the output an attenuation (like a negative feedback) from a time delay. Well, duh.

Pat Frank, I am merely pointing out that this site is a treasure trove of information about the climate. Should you tire of your straw men and toy models, or rather “audits,” you are certainly free to actually learn something about climate science. Encouraged, even.

Ray, sit back, have some popcorn, let Gavin teach, let’s learn, eh?

#418 Response — Gavin, I have never claimed the Skeptic analysis was anything other than an audit. It is you who, over and over, tried to make it into a climate model. Let that, your misplaced strategy, speak to the standard of intellectual integrity.

Likewise, it is a fact that the linear forcing equation produced a line that reproduced the temperature trends from 10 GCMs (12, really), given a positive trend in GHGs. This is what it was constructed to test. So then you offer as criticism that it doesn’t reproduce GCM output in the absence of a positive GHG trend. This specious non-sequitur can also be added to your freight of intellectual integrity.

It doesn’t matter to the validity of that result what GCMs do with SAT after a positive GHG trend turns off. What matters to the validity of the result is what GCMs do with excess GHG forcing during the trend itself. The coincidence of lines shows that they put the excess forcing into atmospheric temperature. So the Skeptic equation hypothesized, and so the GCM outputs verified.

If you find “audit” obscure, try the first couple of sentences here: http://en.wikipedia.org/wiki/Audit You’ll find the approach described in the opening paragraphs of the article SI. I hope that helps.

#420 — Ray, thanks for the constructive suggestion. I have engaged no straw men.

[Response: Let’s recap. You have a formula that creates a linear trend by using a very low sensitivity and assuming no heat capacity. GCMs create a linear trend by having a much greater sensitivity and a significant thermal inertia. You compare the two trends, declare that the former is an ‘audit’ of the latter, and thus the GCMs aren’t doing what they are actually doing. Brilliant! By the same logic I could ‘audit’ General Motors by losing some money this quarter, and then suggesting to their board that they could deal with their financial woes by fixing the holes in every one of their employees’ pockets – I’m sure they’ll be impressed. If your formula had anything to do with the GCMs, then the GCMs would not have a net radiation imbalance during the period of linear temperature rise and would not be putting energy into the ocean. Yet they do both of these things. Therefore your ‘formula’ is not an audit at all. It shows precisely the wrong behaviour over the very period you claim it matches. Bottom line, your formula has as much to do with the GCMs as a stick has to do with the Eiffel Tower. The only claim you’ve made is that the GCMs are putting all their heat into the atmosphere – but they do not (look up Energy into Ground). Thus what is left? – gavin]

Wow. Pat Frank, I have to say that I am astounded by the number of different misconceptions you demonstrate in your various writings. You claim that because you can match the ensemble average response of GCMs to one forcing scenario you have generated an “audit” which you can use to tell the people who run the GCMs that their models don’t have heat going into the ocean? Despite the fact that they are the ones with access to their model outputs, and that they can in fact go in and tell you exactly how much heat ends up in the oceans?

You think that because the year-to-year variability in the GCMs average out, that they can’t represent any physical reality? Let me ask you something: I have a hundred computer models which represent ten rolls of a six sided die. The “ensemble average” of these models is a line that equals 3.5 from roll 1 through roll 10. So, in your universe, I could use an “audit” equation equal to 3.5, because all these models that produce “noise” between 1 and 6 aren’t really physical because they don’t agree with each other?

You think that you can propagate baseline uncertainty (from cloudiness or whatever) and apply that uncertainty anew every single year? Let’s say I go up to the top of a mountain that is 5000m high (plus or minus 100m). Now I take 1m high bricks. Every year I add one brick to the top of the mountain. In your universe, I wouldn’t be able to predict the trend in height changes of my tower because we’d have to throw in 100m of uncertainty every single year??? And, by the way, why did you choose a year as the unit of time to use to propagate your uncertainty? You could have used a day as your unit of time, and then your uncertainty would have grown 365 times as quickly!!! (oh, wait, that would make no sense, would it? Much like the rest of your analysis)

I can’t believe I actually wasted valuable time reading your stuff. I’m off to do something useful now.

Other discussions abound, e.g.

http://bruinskeptics.org/2008/05/27/innumeracy-in-global-warming-skepticism/

(inconclusive, but deep)

Hank,

That is an interesting exchange. We have a very perceptive young man engaging a very wily ole dude.

Inconclusive yes, but the playing field was hardly level.

Thanks for finding this nugget.

Paul

Response to #422 — Gavin wrote, “Let’s recap. You have a formula that creates a linear trend by using a very low sensitivity and assuming no heat capacity.”

Not correct, Gavin. The sensitivity is contained in the fraction representing the contribution to temperature of water vapor enhanced CO2. That fraction was obtained from Manabe’s 1967 work. According to ISI SciSearch, Manabe’s paper has been cited 696 times, including 15 times in 2007 and 6 times so far in 2008. That paper shows a sensitivity of about 2.6 C for a doubling of CO2 (300 ppm to 600 ppm) at fixed relative humidity. According to Ch. 10 of the 4AR, p. 749, the SAT climate sensitivity is between 2-4.5 C for doubled CO2, with a best estimate of “about 3 C.” So, Manabe’s value is very acceptable. Increasing the sensitivity by 15% would not materially change any important result in the Skeptic article.

The real bottom line, Gavin, is that your premise is wrong, and so your analysis is misguided, and thus your conclusions are invalid. The linear representation of GCM GHG trend SAT projections remains sound. The GM analogy is inapt.

[Response: Then why does your model give only 1.8 deg C warming for an increase of ~8 W/m2? That (by rather basic arithmetic) implies a warming at 2xCO2 (roughly 4 W/m2), of 0.9 deg C. Rather smaller than 3 deg C, wouldn’t you say? Your claim that it has a mid-range climate sensitivity is completely undermined by your own figure. More curiously, I don’t see any derivation of the ‘base forcing’ value you used – maybe I missed it – but it seems to be about 52 W/m2 in order to get the results you show. Where does that come from? Because of course you realise that changes in that number completely determine the sensitivity…. – gavin]
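For readers who want to check the "rather basic arithmetic" in the response above, here is a minimal Python sketch. It assumes, as the toy formula itself does, that warming scales linearly with forcing; the function name `implied_sensitivity` is made up for illustration and appears nowhere in the original discussion.

```python
# Back-of-envelope check, assuming a strictly linear response to forcing.
# All three numbers are the approximate values quoted in the response.
warming_c = 1.8        # deg C of warming shown by the formula
forcing_wm2 = 8.0      # approximate forcing increase, W/m2
doubling_wm2 = 4.0     # rough forcing for doubled CO2, W/m2


def implied_sensitivity(warming, forcing, doubling_forcing):
    """Warming per CO2 doubling implied by a linear warming/forcing ratio."""
    return warming / forcing * doubling_forcing


sensitivity = implied_sensitivity(warming_c, forcing_wm2, doubling_wm2)
print(f"Implied warming at 2xCO2: {sensitivity:.1f} deg C")
```

With these inputs the implied value is 0.9 deg C, well below the 2.6 to 3 deg C range cited earlier in the thread.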

#413 __ Marcus, paragraph 1, successive rolls of dice are statistically independent. Successive time steps of climate models are not.

“You think that because the year-to-year variability in the GCMs average out, that they can’t represent any physical reality?” No, that’s not why.

“You think that you can propagate baseline uncertainty (from cloudiness or whatever) and apply that uncertainty anew every single year?” Uncertainty in an intermediate result is always propagated forward into successive calculations.

[Response: Only in toy models with no feedbacks. With compensating feedbacks uncertainties are heavily damped. The irrelevance of your error propagation idea is shown very clearly in the GCMs because the cloud fractions are actually very stable and do not go from 0 to 100% after 100 years of simulation. – gavin]
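The contrast between accumulating and damped errors can be illustrated with a deliberately simple sketch. This is not a GCM and not either commenter's calculation: it assumes a one-variable process x <- damping * x + noise, and the damping value of 0.8 is an arbitrary, hypothetical stand-in for a restoring feedback.

```python
def error_variance(steps, damping, step_variance=1.0):
    """Variance of x after `steps` updates of x <- damping * x + noise,
    where each noise term is independent with variance `step_variance`."""
    variance = 0.0
    for _ in range(steps):
        variance = damping**2 * variance + step_variance
    return variance


# damping = 1.0: every step's error is carried forward in full (random walk).
undamped = error_variance(100, damping=1.0)
# damping = 0.8: a hypothetical feedback removes part of the error each step.
damped = error_variance(100, damping=0.8)
# Analytic ceiling for the damped case: step_variance / (1 - damping**2).
limit = 1.0 / (1.0 - 0.8**2)

print(f"undamped variance after 100 steps: {undamped:.1f}")
print(f"damped variance after 100 steps:   {damped:.2f} (ceiling {limit:.2f})")
```

In this toy setting the undamped variance grows in proportion to the number of steps, while the damped variance saturates near its ceiling. Whether a given climate quantity behaves like the damped case is the point under dispute in the thread; the sketch only shows that the two cases differ.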

#426 Response — Thanks, Gavin. You raised a very interesting point that had escaped my notice, and I thank you for that. You’re right. The Skeptic equation, and the Figure 2 plot, show a 1.6 C temperature increase for GHG doubling, rather than the 2.6 C climate sensitivity from Manabe’s model.

So, where does the attenuation come from? I had to think about that a bit. Bear with me a little, here. The only two possible sources of a heat capacity-like effect are the scaling fraction from Manabe’s work (0.36) and the greenhouse temperature of Earth (33 C).

Of these, the 33 C is the only viable candidate, because the atmospheric 33 C greenhouse temperature approximates an equilibrium temperature response of the atmosphere to the radiant energy flux, as attenuated by the heat removed because of the long-term thermal exchange with, mostly, the oceans. So, linearly scaling 33 C for atmospheric temperature automatically includes the proper ratio of thermal energy partitioned between the atmosphere and the rest of the climate system.

The linear scaling just assumes no proportional change in the net global heat capacity, relative to that of the atmosphere, during the given time of GHG warming. In this assumption, unvarying fractions of thermal energy from the excess GHG forcing are linearly partitioned between the rest of the global climate (e.g., the ocean) and the atmosphere.

The correspondence of the Skeptic equation result with the GCM projections shown in Figure 2 indicates that this assumption is empirically verified as operating within the GCMs (not necessarily in the climate itself).

So, the Skeptic equation not only linearly propagates the percent of water-vapor enhanced GHG forcing (Manabe), but also linearly propagates the ratio of thermal partitioning between the atmosphere and the oceans (with the atmospheric thermal fraction observable as the net 33 C). The second clause represents a new realization, and I thank you for bringing this line of thought to my attention, Gavin.

We can now see that the linear Skeptic equation actually has something to say about how GCMs partition global thermal energy. Skeptic Figure 2a is now seen to show that the physical model of Earth climate in at least 10 GCMs not only says that Earth surface temperature responds in a linear way to increasing greenhouse gas forcing, but also that the ratio of thermal energy partitioned between the atmosphere and the rest of the globe is constant. I.e., in GCMs the heat capacity of the atmosphere is held in constant ratio to that of the rest of the globe, at least through a modest change in atmospheric temperature. There is no sign in the Figure 2a GCM projections of any global non-linear thermal response to increased GHGs.

Thank-you again for bringing this new realization to my attention, Gavin.

#413 Response — You’re mistaking the mean with the uncertainty in the mean. Compensating feedbacks only bound the mean. They have no effect on propagated uncertainties.

[Response: You are very welcome. Perhaps you’d like to thank me as well for pointing out that you still do not provide a derivation for your ‘base forcing’ term which ends up defining the sensitivity. I think it rather likely that the value was just taken to get a good fit to the GCM results. The apparent contradiction between your so-called Manabe-derived sensitivity and the actual sensitivity is simply a reflection of what I pointed out above, i.e. that just because two methods both provide a linear trend, there is no reason to suspect that they provide a linear trend for the same reason. The fact remains, your toy model is not a good match to any aspect of the GCMs other than the linear temperature trend to which it seems to have been fitted, and has zero information content regarding the actual models. – gavin]

#408 Response — Gavin, you wrote, “Perhaps you’d like to thank me as well for pointing out that you still do not provide a derivation for your ‘base forcing’ term which ends up defining the sensitivity.”

“Base Forcing” is defined in “A Climate of Belief” Supporting Information, page 5 right under equation S1: “Base Forcing” is the forcing from all three greenhouse gasses as calculated from their historical or extrapolated concentrations for either the year 1900 or 2000, depending on which year was chosen as the zeroth year.”

You wrote, “I think it rather likely that the value was just taken to get a good fit to the GCM results.”

I think the evidence shows you haven’t read the SI.

You wrote, “The fact remains, your toy model is not a good match to any aspect of the GCMs other than the linear temperature trend…”

Which it was constructed to test. I’ve been completely forthright about that. It is you who has ever tried to make it out to be what it never was and what I never meant it to be.

“to which it seems to have been fitted, and has zero information content regarding the actual models.”

This has been your hope all along, Gavin. It has never been realized. Your arguments have been entirely tendentious, and have not made reference to what I actually did.

The Skeptic equation faithfully reproduced GCM GHG temperature trends across 80-120 years using simple and physically reasonable inputs that assume a linear temperature response to GHG forcing and (we now know partly thanks to you) a constant equilibrium partition of thermal energy between the atmosphere and the rest of the climate.

And, in fact, we also now know that in GCMs this thermal equilibrium is attained very rapidly, because the Skeptic equation makes the equilibrium instantaneous and its correspondence with the GCM results is excellent.

Evidently, the Skeptic equation tells us a lot about the actual models.

[Response: Let me be clearer. What is the calculation that defines the ‘base-forcing’? Apart from the fact that you are mangling the concept of radiative forcing in any case, your sentence in the SI explains nothing – where is the calculation? You still appear to think that regardless of how a linear trend is derived they must be the same thing. This is just nonsense. And even more amusingly you insist that the models must come to equilibrium quickly even though I already showed you they don’t. Who should people believe, you or their lying eyes? ;) – gavin]

#429 Response — ACoB SI Page 4, bottom: “The increasing forcings due to added greenhouse gases calculated according to the equations in Myhre, et al.,2 are shown in Figure S4.”

ACoB SI page 5, Legend to Figure S4: “Additional greenhouse gas forcing from the year 1900, for: (-), CO2; (−−−), methane, and; (⋅⋅⋅⋅), nitrous oxide, as calculated from the fits to the greenhouse gas concentrations of Figure S3, using the equations in Myhre[Ref. 2]”

ACoB SI Page 5, ““Base Forcing” is the forcing from all three greenhouse gasses as calculated from their historical or extrapolated concentrations for either the year 1900 or 2000, depending on which year was chosen as the zeroth year. “Total Forcing” is “Base Forcing” + the increase in forcing.”

Where’s the difficulty?

In the Response to #428, you wrote, “your ‘base forcing’ term … ends up defining the sensitivity.”

That’s wrong. Base forcing is the GHG forcing of the zeroth year for any projection as calculated using the equations from Myhre, 1998 (SI reference 2). It is merely the denominator of the per-year fractional forcing increase. The only reasonable source for the sensitivity is the 33 C of equilibrium greenhouse warming (#428).

In your standard GISS ModelE applet, the 2000-2100 delta T is 2 C, and is 2.4 C and flat by 2140. The immediate response is 0.83 of the final. This isn’t fast?

As regards your last sentence, it’s not me anyone should believe, nor you, but their own analysis of Skeptic Figure 2 and the SI. Everything necessary is there to easily repeat the calculation, no matter that you unaccountably seem lost. The Skeptic equation is based strictly in obvious physical quantities, as described, and nothing more. Nothing was adjusted to make a fit with the GCM projections. And yet, it reproduced their results.

[Response: You still haven’t given the number you use or the calculation that got it. Why is this so difficult for you? Myhre’s equation gives the additional forcing term, not your ‘base forcing’ and doesn’t extrapolate to zero CO2 in any case. So I’ll just repeat my question – what is the number you use for ‘base forcing’, and where is the equation from which it was calculated? An inability to respond to this (rather simple) question, will be revealing.

As for GISS modelE, the long term sensitivity is 2.7 deg C, and there is a multi-decadal (and longer) response to changes in forcings, related to the uptake of heat in the ocean. Now that you appear to accept this, why do you still insist that your toy formula, which has a (mysterious) sensitivity of 1.1 deg C (1.8 deg C from 2000 to 2100 with ~6.5 W/m2 forcing), no lag to forcings and no uptake of heat in the ocean, has anything to do with it? – gavin]

Didn’t choke on your first fantasy short story, did you? Surely you remember what the forcing function looked like, don’t you?

…and by the way: extend the plot to 2300 (as far as it will go)… “flat by 2140”, in your dreams.

#108 CobblyWorlds posits:

“If we go through a massive output of carbon (as CO2 or CH4) into the atmosphere from some part of the biosphere, we’ll have a better handle on what to expect, and hence how to model such feedbacks.”

Gavin, do you agree this would represent a useful experiment? How much of a pulse CO2 input would be required to see a significant effect? And how large would that expected effect be?

The reason Pat Frank’s analysis is flawed is because in any complex adaptive system otherwise multiplicative error is constrained by the fact that individual subsystems will often converge on narrowly defined equilibrium states. The convergence process implies that error for that component subsystem diminishes to zero in the limit. That is why Gerald Browning’s arguments about unpredictable weather, though interesting, may be irrelevant if they do not scale up temporally to the level of climate. Unbounded growth in error, as postulated by Browning, is prevented by climatic processes that mathematically conspire to constrain the chaos associated with turbulent weather. In the long-run, deterministic responses to strong forcings and feedbacks prevail.

This is precisely why Pat Frank’s linear model is inappropriate: it doesn’t have the equilibrium substates necessary to prevent exponential growth in error. A nonlinear GCM, paradoxically, does.

Nonlinearity is not always the devil people make it out to be. Nature is governed by nonlinear dynamics. Yet it persists. Such persistence is not possible in Pat Frank’s death-spirallingly uncertain world.

I would be interested in your view on this, Gavin. And of course, Drs. Frank and Browning are free to respond as well.

Richard, it’s been done. See here:

http://climate.jpl.nasa.gov/images/CarbonDioxideGraphic1.jpg

http://climate.jpl.nasa.gov/images/GlobalTemperatureGraphic1.jpg

Hmph, they made up their website with several different images so linking to the image gets a cut-off chart losing the recent data. Here’s the page, see it for the full images:

http://climate.jpl.nasa.gov/keyIndicators/index.cfm#CarbonDioxide

I know you’re only trying to be helpful, Hank, and can’t resist the jab, but there IS a difference between backcasting (your graphics) and a priori prediction (my proposed experiment).

Richard, look at Arrhenius’s proposed experiment.

It’s the same as yours, and he has priority.

That’s what we have available now.

http://earthobservatory.nasa.gov/Library/Giants/Arrhenius/arrhenius_2.html

#430 Response —

[edit]

And even so, the information in the Skeptic SI is enough for any scientist to replicate my work. That’s as much as is demanded by the professional standards of science, your innuendos notwithstanding. The same standard is all too often observed in the breach by certain elements in climate science. Elements that are evidently beyond the reach of your revelatory perspicacity.

But, I’ll lay it out for you anyway; not that you deserve it, Gavin. It’s only to lance that particular boil.

The equations I used are in Myhre 1998, Table 3 and footnote, and employed Myhre’s “Best estimate” alpha constants for CO2, CH4, and N2O.

The origin of projection years for Figure 2 was 1960, chosen on the basis of statements in the CMIP Report 66, as noted below. The extrapolated calculation started from the 1960 atmospheric concentrations of CO2, CH4, and N2O, either as measured or from the extrapolations shown in SI Figure S3.

Year 1960 was chosen as the beginning projection year for the Skeptic Figure 2 comparison from the description given in C. Covey, et al., “Intercomparison of Present and Future Climates Simulated by Coupled Ocean-Atmosphere GCMs” PCMDI Report No. 66, UCRL-ID-140325, October 2000, where it is mentioned that, “For our observational data base we use the most recent and reliable sources we are aware of, including Jones et al. (1999) for surface air temperature … Jones et al. (1999) conclude that the average value for 1961-1990 was 14.0°C…”

As the CMIP GCM projections used a 1960-1990 air temperature, 1960 seemed the most reasonable base year for comparison. The calculated “Base Forcing” used was that calculated for 1900, which was 33.3019 W/m^2. Using a 1900 base year forcing produced a 1960 net greenhouse temperature of +14.37 C from the 1960 gas concentrations, which is entirely comparable to the Jones value. The GHG surface air temperatures from 1960 on were calculated from the Skeptic equation, with the yearly increased forcing ratios calculated using the measured and extrapolated gas concentrations and the equations in Myhre 1998, as projected from 1960. CO2 was increased at 1% per year, compounded from the 1960 value, to mirror the conditions in the CMIP study. The yearly increased concentrations of CH4 and N2O used the SI Figure S3 extrapolations. The projection was finally renormalized to produce anomalies for the Figure 2 comparison plot with the 10 GCM projections.

So, there it is. Sorry about the tea spoon. Not silver.

[Response: Now we are getting somewhere – So your ‘Base forcing’ of 33.3019 W/m2 is for 1900. Implying a temperature at 1900 of 0.36*33 = 11.88 C (seems a little low, don’t you think?) and a forcing change from 1900 to 1960 of almost 7 W/m2 (Wow!). Now that can’t have been derived using either 1900-1960 forcing changes (about 0.6 W/m2 for CO2+CH4+N2O), and certainly doesn’t come from the Myhre et al equations. And we still haven’t got the source of the Base Forcing number itself! Myhre’s equations are for the difference in forcings from an initial concentration C_0 to C – but for CO2 it is logarithmic and so you can’t put C_0=0 (and the formula is not valid for C_0 at small concentrations in any case). So once again, where does the 33.3019 W/m2 number come from? (PS. It really would be easier if you just told me). – gavin]

Given your polemical self-indulgence, Gavin, editing out the first part of my post was pure cowardice on your part.

I’ve now laid out in detail for you how everything was calculated. The number you calculated for 1900 is the fractional contribution from the water-vapor enhanced greenhouse, not the total greenhouse warming. The total GHG forcing change I calculated at 1960, relative to 1900, was 0.68 W/m^2, not 7 W/m^2. It’s not that complicated, that you should make such mistakes. Your carping is pure posture. It’s now up to you to follow the calculation to replicate the results, or not. That is how science works, isn’t it. The rest is grandstanding.

[Response: Not cowardice – just a desire to stick to the point. However, you are busy dissembling here: if ‘base forcing’ is ‘total forcing’ for 1900, then your formula gives T_1900 = 0.36*33*1. = 11.88. If ‘Total forcing’ is defined differently going back as going forward, then you have even more to explain. To get 14.37 deg C at 1960 using your formula and the actual change in forcing from 1900 implies that 14.37 = 0.36*33*(Base+0.68)/Base and gives Base=3.24 W/m2 ! somewhat different from the 33.3019 W/m2 number – which you still have not explained.

Lest any readers be confused here, I should make clear that in my opinion, Frank’s equation is a nonsense. It is faulty in conception (there is no such thing as a ‘base forcing’ in such a simple sense), predicts nothing and is useless in application. I am only drawing out Frank’s inability to define even the simplest issues with a one parameter model (how were the parameters defined, does it pass any sanity test) in order to make this clear. My guess is the reason why no explanation of the base forcing number is forthcoming is because it was chosen in order to get the linear change of 1.9 deg C with an additional forcing of 5.32 W/m2 (100 years of 1% increases in CO2) (i.e. it was fitted to the GCM results). That would imply that Base = 0.36*33*5.32/1.9 = 33.26 W/m2 (close enough I think). To get 14.37 deg C at 1960 with that Base value the ‘total forcing’ at 1960 must be 40.28 W/m2 – a full 7 W/m2 more than the ‘Base’ number – which of course makes no sense and was only brought up in this thread as a vague post hoc justification (it appears nowhere in the original work). Given his values for the 1960 temperature and Base forcing and the definition of Total Forcing as Base forcing + additional forcing, this must follow. Additionally with that Base value of 33.3019 he implies a sensitivity to doubling CO2 (3.7 W/m2) of 0.36*33*3.7/33.3019= 1.3 deg C (not the 1.6 deg C claimed above).

I leave it to the readers to determine who is grandstanding. – gavin]
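The numbers in the response above can be checked with a few lines of Python. This is a sketch that takes the formula exactly as it is written in the response, T = 0.36 * 33 * (total forcing) / (base forcing); the variable names are illustrative only, and all inputs are the values quoted in the thread.

```python
FRACTION = 0.36        # scaling fraction attributed to Manabe in the thread
GREENHOUSE_C = 33.0    # total greenhouse warming, deg C
SCALE = FRACTION * GREENHOUSE_C   # 11.88 deg C

# Base forcing needed if adding 0.68 W/m2 is to give 14.37 deg C at 1960:
#   14.37 = SCALE * (base + 0.68) / base
base_from_1960 = 0.68 / (14.37 / SCALE - 1.0)

# Base forcing that yields 1.9 deg C for 5.32 W/m2 of additional forcing.
base_from_fit = SCALE * 5.32 / 1.9

# Total forcing at 1960 needed to reach 14.37 deg C with the stated base.
stated_base = 33.3019
total_1960 = 14.37 * stated_base / SCALE

# Warming per CO2 doubling (3.7 W/m2) implied by the stated base.
doubling_warming = SCALE * 3.7 / stated_base

print(f"base implied by 1960 temperature: {base_from_1960:.2f} W/m2")
print(f"base implied by 1.9 C per 5.32 W/m2: {base_from_fit:.2f} W/m2")
print(f"total forcing needed at 1960: {total_1960:.2f} W/m2")
print(f"implied warming per doubling: {doubling_warming:.1f} deg C")
```

Under those assumptions the four results come out at roughly 3.24, 33.26, 40.28 W/m2 and 1.3 deg C, matching the figures in the response.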

Agonizing. I finally looked at the original paper, which says:

“All the calculations supporting the conclusions herein are presented in the Supporting Information (892KB PDF).” (in the original it’s a hotlink to the PDF)

This is what is in that PDF file:

“…”Base Forcing” is the forcing from all three greenhouse gasses as calculated from their historical or extrapolated concentrations …”

But there’s no calculation. I know Gavin knew that. But on the off chance there’s anyone less interested than I was in this who’s still wondering WTF, it’s the “as calculated” that’s missing.

I had a calculus teacher who would put proofs on the front chalkboard and from time to time say “now, for sure, we can see that …” and leap.

And those of us sitting assiduous but astonished in the front would far too often raise our hands and say, “but, I don’t see ..”

And he’d harrumph, and walk over to the much larger chalkboard on the side of the classroom, start with his next to last equation (from the front), fill both chalkboards with a long string of calculus, get to the end of the second board, write the last equation (from the front) and then go back to the front.

A few lines later, he’d say “now, for sure …..”

He was one of the senior math teachers, filling in for the usual teacher of the bonehead biology calculus course. He really didn’t have the patience for it.

Not sayin’ that’s what’s going on here. But for many of us in the peanut gallery here, who aren’t going to derive this from wherever it for sure obviously comes, it would be interesting to actually see the entire calculation asserted.

It’d have to take less time to show the work than to argue over whether it’s obvious. It’s not obvious.

__________________

reCaptcha: “justify 35@38c”

#437

A little replication never hurt.

> a little replication

Mount a scratch planet? http://edp.org/monkey.htm

> base forcing

Has Frank taken as his base the number generally stated for how much the natural greenhouse effect warms the planet? That would be 33C or 34C, per all the usual sources, warmer than the planet would be in the absence of greenhouse gases in our atmosphere.

> Has Frank taken as his base the number

He does say the number is “empirical” so he got it somewhere.

#443

No, I thought Earth might be slightly more representative. You’re missing the point. CO2 is at a different level now than during Arrhenius’s time, so you would expect a different response now than what he predicted then. Having two points on the response curve would be better than having just one.

Never mind. I’ll take my idea to someone who’s interested. I keep forgetting this is a lecture hall, not a place to discuss interesting new ideas.

Chuckle.

Richard, if you’re investigating fire, do you set fire to your house?

#446

Ever the hyperbolist. “Spark”. Not “fire”. Stomp them all out, if you can. Some will escape, and may be worth studying. But never mind.

# 426 Pat Frank,

for my education: Could you tell me how you defined climate sensitivity.

I thought the units of climate sensitivity are °C/(W/m2).

Arrhenius’s experiment: baseline condition covers 400,000 years.

Adding fossil carbon, the experimental period so far is about 100 years.

Best possible experiment to follow: halt the change, return to baseline. See how long it takes.

#440 Response — Got it, Gavin. So, when you wrote, “An inability to respond to this (rather simple) question, will be revealing.” that was just you sticking to the point. As you had admitted personal revelation, I just wondered what was revealed to you about others you know who have for years adamantly refused to show their methods.

I can see from your writing this, ff, “To get 14.37 deg C at 1960…,” that my wording of this sentence: “Using a 1900 base year forcing produced a 1960 net greenhouse temperature of +14.37 C from the 1960 gas concentrations, which is entirely comparable to the Jones value.” was confusing. Regrets that my intended meaning wasn’t clear.

First, to prevent further confusion, the 14.37 C should have been 14.67 C. Further regrets for the typo. In any case, that sentence was not written to suggest that +14.37 C (+14.67 C) is the water vapor enhanced greenhouse temperature component produced in 1960 starting from 11.88 C in 1900. Instead, it was meant to convey the net average water vapor enhanced greenhouse-produced surface temperature of Earth at 1960, as calculated by 33*[1960 forcing/1900 forcing]=33 x [33.98/33.302]=33.67 C. Note the absence of the 0.36 scaling. That is, following the GHG increase from 1900 to 1960, the induced 33.67 K above the TOA emission temperature of ~254.15 K, equals a surface average temperature of +14.67 C in 1960. Again, regrets for the typo in writing 14.37 C.

[Response: The reason why this is not understandable from your materials is that you are now using a different formula for temperature changes from 1900 to 1960 than you do for the future period. So much for consistency. – gavin]
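To make the two versions of the calculation concrete, here is a small Python sketch using only the numbers quoted in the comment above. It assumes the forcing ratio is applied once to the full 33 deg C (the 1900 to 1960 usage described here) and once to the 0.36-scaled 33 deg C (the form of the Skeptic equation as written elsewhere in the thread). Variable names are illustrative.

```python
forcing_1900 = 33.302     # W/m2, base forcing as quoted
forcing_1960 = 33.98      # W/m2, 1960 forcing as quoted
emission_temp_k = 254.15  # K, TOA emission temperature as quoted

ratio = forcing_1960 / forcing_1900

# Version 1: ratio applied to the full 33 deg C greenhouse warming.
greenhouse_1960 = 33.0 * ratio
surface_1960_c = emission_temp_k + greenhouse_1960 - 273.15

# Version 2: ratio applied to the 0.36-scaled component (11.88 deg C in 1900).
scaled_1960 = 0.36 * 33.0 * ratio

print(f"unscaled greenhouse warming in 1960: {greenhouse_1960:.2f} K")
print(f"implied surface temperature in 1960: {surface_1960_c:.2f} deg C")
print(f"0.36-scaled component in 1960:       {scaled_1960:.2f} deg C")
```

The unscaled version gives about 33.67 K and a surface temperature of about 14.67 deg C; the scaled version gives about 12.12 deg C. The two differ only in whether the 0.36 factor is applied, which is the inconsistency the response points to.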

You wrote, “However, you are busy dissembling here…” This, of course, is your quite apparent fond hope and what you have unattractively and without evidence insinuated all along.

You wrote, “– which you still have not explained….” Let’s recall that by the time you wrote that, you not only had my SI, but also my more detailed comments in post #439. That is, by that time I had already explained the method in some detail. You, however, apparently declined any effort at understanding, preferring instead to continue with your accusatory line.

Your argument in #440 Response rests entirely on misunderstanding the meaning of the sentence noted above in which you set 14.37 (14.67) C to be the net water-vapor enhanced GHG temperature component at 1960, increased from the 1900 value of 11.88 C. If you had bothered to plug the numbers into the Skeptic equation, you’d have found the net GHG component temperature increase from 1900 to 1960 was from 11.88 C to 12.12 C.

[Response: And what do these numbers even mean? And if they mean nothing, then so do the numbers you come up with later. – gavin]

I’m going to continue a little out of sequence here. In #439, you wrote, “the [Myhre] formula is not valid for C_0 at small concentrations in any case.” I saw no lower limit caution in Myhre, 1998. Did you? Nor is one mentioned in Section 6.3 or 6.3.5 Table 2 of the TAR, where the same expressions are given and discussed. So, here it is handed to you, Gavin. Unit values were used for C_0 GHG concentrations in the Myhre equations in all cases. I.e., for CO2 in 1900, the equation used was 5.35 x ln(297.71/1)=30.474 W/m^2. The total base forcing for 1900, 33.3019 W/m^2, also included the forcings for aboriginal CH4 and N2O, as taken from the extrapolations of SI Figure S3. These 1900 gas concentrations plus any others I used are all available from the fit equations given on SI page 4, under Figure S3.

[Response: Ummm… a little math: log(C/C0)=log(C)-log(C0). Now try calculating log(0.0). I think you’ll find it a little hard. Indeed log(x) as x->0 is undefined. What this means is that the smaller the base CO2 you use, the larger the apparent forcing – for instance, you use CO2_0 = 1 ppm – but there is no reason for that at all. Why not 2 ppm, or 0.1 ppm, or 0.001 ppm? With each of these you would get a range of base forcings from 26.7 to 67.4 W/m2. Indeed you could have as large a forcing as you want. I’m sorry that you were apparently confused by this, but Myhre is clear that his formula is a fit to the specific values he calculated from 290 to 1000 ppm. At much lower levels, the forcing is no longer logarithmic, but linear. Your base forcing number is therefore arbitrary. On a more fundamental point, your implied idea that climate changes are linear to the forcing from zero greenhouse gases to today is nonsense. – gavin]
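The dependence on the reference concentration is easy to reproduce. The sketch below simply evaluates the logarithmic CO2 expression quoted in the thread, 5.35 x ln(C/C0), for the reference values named in the response; it deliberately applies the expression outside the 290 to 1000 ppm range it was fitted to, purely to show how the result moves. The function name is hypothetical.

```python
import math

ALPHA = 5.35        # W/m2, CO2 coefficient quoted in the thread
CO2_1900 = 297.71   # ppm, 1900 concentration quoted in the thread


def apparent_base_forcing(c0_ppm):
    """'Base forcing' from the logarithmic expression for a chosen C0.

    Evaluated far outside the fitted range, so the values are only
    meant to show the sensitivity to C0, not to be physical."""
    return ALPHA * math.log(CO2_1900 / c0_ppm)


for c0 in (2.0, 1.0, 0.1, 0.001):
    print(f"C0 = {c0:>6} ppm -> {apparent_base_forcing(c0):5.2f} W/m2")
```

The result runs from just under 27 W/m2 at C0 = 2 ppm to about 67.4 W/m2 at C0 = 0.001 ppm, and grows without bound as C0 approaches zero.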

To validate this use, when the Myhre equations are used in this manner, the calculated net forcing for doubled CO2 (nominally 297.7 to 595.4 ppmv) is 3.7 W/m^2, which is exactly the IPCC value. That is, 5.35 x ln(297.71/1)=30.474 W/m^2; 5.35 x ln(595.42/1)=34.183 W/m^2; and (34.183-30.474) W/m^2=3.7 W/m^2.

[Response: Ummm… more basic math: log(C1/C0)-log(C2/C0)= log(C1)-log(C0)-log(C2)+log(C0) = log(C1/C2) – therefore changes in forcing are not dependent on what the C0 value is. So this can’t be a ‘validation’ of choosing C0=1 (or any other value). More nonsense I’m afraid. – gavin]
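The cancellation in the response above can also be confirmed numerically. This sketch uses the same logarithmic expression quoted in the thread and computes the forcing change for a doubling from 297.71 ppm under several arbitrary choices of C0 (the 280 ppm entry is just another illustrative choice). The function names are made up for this example.

```python
import math

ALPHA = 5.35   # W/m2, CO2 coefficient quoted in the thread


def forcing(c_ppm, c0_ppm):
    """Logarithmic CO2 forcing relative to a reference concentration C0."""
    return ALPHA * math.log(c_ppm / c0_ppm)


def doubling_change(c0_ppm, start_ppm=297.71):
    """Forcing change for doubling CO2 from start_ppm, for a given C0."""
    return forcing(2.0 * start_ppm, c0_ppm) - forcing(start_ppm, c0_ppm)


changes = [doubling_change(c0) for c0 in (0.001, 1.0, 2.0, 280.0)]
for c0, change in zip((0.001, 1.0, 2.0, 280.0), changes):
    print(f"C0 = {c0:>7} ppm -> doubling change {change:.3f} W/m2")
```

Every choice of C0 gives the same change, 5.35 x ln(2), or about 3.7 W/m2, so recovering that number says nothing about whether C0 = 1 ppm was a sensible choice.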

Not being content with that verification alone,

[Response: that’s sensible!]

however, I also independently estimated the base forcing from the total greenhouse G of 179 W/m^2, given in Raval 1989.* I’ll lay that out for you, too, so that you needn’t rely on your source of revealed pejorative. If the total greenhouse energy is 179 W/m^2 (surface flux minus OLR flux), producing a +33 K surface greenhouse temperature increase, then the total water vapor enhanced GHG forcing is found using the Manabe 1967 Table 4 ratio, which yields 0.36 x 179 W/m^2 = 64.44 W/m^2. But we want GHG forcing alone, prior to water vapor enhancement. From Manabe 1967, Table 5 (300->600), the average (0.583 cloudy and 0.417 clear) change in equilibrium temperature at fixed absolute humidity is 1.343 C and at fixed relative humidity is 2.594 C. So, the temperature increase ratio due to water vapor enhancement of GHGs is 2.594/1.343=1.932. Temperature is linear with forcing, so the *dry* forcing due to the GH gases that go into the water vapor enhanced greenhouse effect in year 1900 (i.e., approximately no anthropogenic contribution) is then 64.44/1.932 = 33.35 W/m^2. This value is essentially identical to the 33.3019 W/m^2 calculated from the equations of Myhre, 1998, used as described above, and so fully corroborated that value.

*A. Raval and V. Ramanathan (1989) “Observational determination of the greenhouse effect” Nature 342, 758-761.

[Response: Just FYI, GHE is closer to 155 W/m2 (Kiehl and Trenberth) (and that is a energy flux, not an energy). But if you just wanted the total greenhouse gas forcing for present day conditions with no feedbacks, you should have just asked – it’s about 35 W/m2 (+/- 10%) (calculated using the GISS radiative transfer model). The no-feedback response to this would be about 11 deg C consistent with the Manabe calculation. This implies that your formula is only giving the no-feedback response of course. – gavin]
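The chain of ratios in the cross-check described above can be followed step by step. The sketch below only reproduces the arithmetic with the numbers quoted in the comment (179 W/m2, 0.36, 2.594 C and 1.343 C) and assumes, as the comment does, that temperature is linear in forcing; it does not assess whether those inputs or that assumption are appropriate.

```python
total_greenhouse_wm2 = 179.0   # W/m2, greenhouse G as quoted from Raval 1989
fraction = 0.36                # ratio attributed to Manabe 1967, Table 4
dt_fixed_relative = 2.594      # deg C, fixed relative humidity (as quoted)
dt_fixed_absolute = 1.343      # deg C, fixed absolute humidity (as quoted)

# Water-vapor-enhanced forcing assigned to the greenhouse gases.
enhanced_forcing = fraction * total_greenhouse_wm2

# Enhancement ratio, then the "dry" forcing with the enhancement removed.
enhancement = dt_fixed_relative / dt_fixed_absolute
dry_forcing = enhanced_forcing / enhancement

# The comment rounds the ratio to 1.932 before dividing.
dry_forcing_rounded_ratio = enhanced_forcing / 1.932

print(f"enhanced forcing: {enhanced_forcing:.2f} W/m2")
print(f"enhancement ratio: {enhancement:.3f}")
print(f"dry forcing: {dry_forcing:.2f} W/m2 "
      f"({dry_forcing_rounded_ratio:.2f} with the ratio rounded to 1.932)")
```

The result is about 33.36 W/m2, or 33.35 W/m2 with the rounded ratio. Because the result is directly proportional to the assumed value of G, it shifts in step with any change to that input.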

These two results — reproducing the IPCC-accepted forcing for doubled CO2 and congruence with the estimation from G — independently validated using the Myhre equations as above to calculate the base forcing value of 33.3019 W/m^2 used in the Skeptic equation.

[Response: To four decimal places no less! ]

Any reasonable attempt to follow the method I had already described would have found how “base forcing” was calculated. But eschewing that option, you evidently preferred to maintain a convenient polemical fiction.

[Response: This is not so, and I challenge any reader of this to work it out without your revelation in this comment that you used a completely arbitrary C0=1ppm in the Myhre formula. – gavin]

You wrote, “Lest any readers be confused here , I should make clear that in my opinion, Frank’s equation is a nonsense. It is faulty in conception (there is no such thing as a ‘base forcing’ in such a simple sense), predicts nothing and is useless in application.”

It’s good you wrote that was merely your [personal] opinion. The predictive power and utility of the Skeptic equation are in evidence in Skeptic Figure 2. Who should your readers believe, you or their lying eyes?

[Response: We’ve been over that: your equation predicts nothing other than a linear trend, and does it by assuming a low sensitivity and no heat capacity, which has nothing to do with the models it claims to emulate. It doesn’t work for 1900 to 1960, it doesn’t work for any transient event and it doesn’t work if the forcings stabilise. It just doesn’t work and any serious consideration of the physics of the climate should have told you why. – gavin]

You wrote, “I am only drawing out Frank’s inability to define even the simplest issues…”

Indeed. Let’s revisit what you have been drawing. You first several times drew the conclusion, without cause, that the Figure 2 line was a fit. You then several times falsely drew the red herring that the Skeptic equation was a failed toy climate model. Finally, you have drawn a false indictment that I willfully picked a studied value for “base forcing” so as to dishonestly impose a correspondence with the 10 GCM projections of Skeptic Figure 2.

[Response: It has taken you dozens of comments to even say what the base forcing was, and then even more to explain where your calculation came from, and when you did, it was clear that you didn’t understand the formulas you are using, and you partially justified it using a mathematical trick that is in fact independent of the choice you made. A model of clear exposition that is sure to leave readers with a greater confidence in your abilities. – gavin]

Not one of those drawings has proved sustainable. In no case, Gavin — not one — did you base those claims on evidence. They were, one and all, your unsupported invention.

The evidence, further, shows that you have been unwilling to make the slightest effort to work out what I did. The description in the SI is enough to show the way, granting some needed work. That I have had to hand-lead you through the method merely shows that for you the method is not the issue, but rather your issue in evidence is a polemical need that there be no method at all.

[Response: In some sense you are correct – your formula is not a good description of the climate, nor its response to forcing, and is patently wrong in relation to the GCMs. Therefore the only issue is why you think it has any validity. Your description of where it came from was vague (and in the end arbitrary), fully justifying an exploration into its source. – gavin]

You wrote, “My guess is the reason why no explanation of the base forcing number is forthcoming is because it was chosen in order to get the linear change … (i.e. it was fitted to the GCM results).”

You guessed that right from the start.[edit]

In the event, not one single element of the Skeptic equation was subjectively chosen, or fitted.

[Response: I call BS. Every element was subjectively chosen. A ratio from here, a formula from there, a base CO2 concentration plucked out of thin air, a new formula picked for the 1900-1960 period etc. The fact that your end conclusion is inconsistent with the papers you supposedly drew the numbers from should have been a clue (see below). – gavin]

You wrote, “Additionally with that Base value of 33.3019 he implies a sensitivity to doubling CO2 (3.7 W/m2) of 0.36*33*3.7/33.3019= 1.3 deg C (not the 1.6 deg C claimed above).”

I didn’t claim the 1.6 C was from CO2 doubling. I wrote (post 428) that it was “a 1.6 C temperature increase for GHG doubling.” I.e., from all the GHG gases present at doubled CO2. This meaning is obvious, as a 1.6 C increase can be read right off the Skeptic Figure 2 at projection year 70, when CO2 is doubled in the additional presence of the other GH gases.

[Response: Umm… maybe I was just confused by the words you used. Nonetheless, your estimate of sensitivity to 2xCO2 is 1.3 deg C – yet the source of your ‘0.36’ factor had a sensitivity twice that and the GCMs you think you are emulating have values of 2.6 to 4.1 deg C. But, here’s another inconsistency: the GCMs in figure 2 only have 1% increasing CO2, which gives a forcing of 4.3 W/m2 at year 80. Your figure shows a warming of ~1.9 C, implying that your formula had used a forcing of ~5.4 W/m2 – significantly higher than what the GCMs used. I should have spotted that earlier! Thus, not only is your sensitivity way too low, you used a higher forcing to get a better match! – gavin]
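
The forcing comparison in the response above can be retraced with a short sketch. Two assumptions are made here that the thread does not state explicitly: the CO2 forcing is taken from the commonly cited simplified expression 5.35 ln(C/C0) W/m^2 associated with Myhre et al. (1998), and the formula under discussion is taken to have the form 0.36 x 33 x (forcing / 33.3019), as inferred from the arithmetic quoted elsewhere in this thread. The names below are illustrative only.

```python
import math

# Assumption: simplified CO2 forcing expression, 5.35 * ln(C/C0) in W/m^2.
MYHRE_COEFF = 5.35

def co2_forcing(concentration_ratio):
    """Forcing in W/m^2 for a given ratio of CO2 concentration to its starting value."""
    return MYHRE_COEFF * math.log(concentration_ratio)

# CO2 compounding at 1% per year for 80 years.
forcing_year80 = co2_forcing(1.01 ** 80)

# Invert the formula as inferred from the thread, dT = 0.36 * 33 * F / 33.3019,
# to find the forcing that a ~1.9 C warming would correspond to.
warming_read_from_figure = 1.9   # C, the approximate value cited in the response
implied_forcing = warming_read_from_figure * 33.3019 / (0.36 * 33.0)

print(f"1%/yr CO2 forcing at year 80: {forcing_year80:.2f} W/m^2")
print(f"forcing implied by ~1.9 C:    {implied_forcing:.2f} W/m^2")
```

Under these assumptions the 1%/yr forcing comes out close to the 4.3 W/m^2 cited, while the forcing implied by ~1.9 C lands above 5 W/m^2. The exact implied figure depends on how precisely the warming is read from the figure, so it should be treated as approximate.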

Why, in any case, would you calculate a sensitivity to doubled CO2 using a base forcing value, 33.3019 W/m^2, that also includes the forcings from CH4 and N2O? The year 1900 base forcing for CO2 alone is 30.47 W/m^2. The sensitivity given by the Skeptic equation for CO2 doubling from 1900 is 0.36x33x(3.7/30.47)=1.44 C. But remember that your question led to the realization, expressed in post 428, that the 33 C net greenhouse added temperature in the Skeptic equation reflected the quasi-equilibrium heat capacity partition of energy between the atmosphere and the rest of the climate, so that the calculated sensitivity is less than the instantaneous response of atmospheric temperature.
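
The two sensitivity figures being argued over (1.3 C and 1.44 C) differ only in the base forcing placed in the denominator. A minimal sketch, assuming the formula has the form 0.36 x 33 x (forcing change / base forcing) as used in the arithmetic quoted in this thread (the function name is hypothetical):

```python
def formula_warming(delta_forcing, base_forcing, fraction=0.36, greenhouse_dT=33.0):
    """Warming in C from the formula as inferred from the thread (an assumption, not a verified form)."""
    return fraction * greenhouse_dT * delta_forcing / base_forcing

doubling_forcing = 3.7  # W/m^2 for doubled CO2, as quoted in the thread

# Base forcing including CO2, CH4 and N2O (the 33.3019 W/m^2 value).
sensitivity_all_ghg_base = formula_warming(doubling_forcing, 33.3019)

# Base forcing for CO2 alone in 1900 (the 30.47 W/m^2 value).
sensitivity_co2_base = formula_warming(doubling_forcing, 30.47)

print(f"base 33.3019 W/m^2: {sensitivity_all_ghg_base:.2f} C")
print(f"base 30.47 W/m^2:   {sensitivity_co2_base:.2f} C")
```

Both results sit well below the 2.6 to 4.1 C range quoted for the GCMs in the preceding response, whichever base forcing is used.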

[Response: Ha! You are changing the formula again! I begin to see why this conversation has been so hard – you really have no idea what you are doing. I challenge anyone to make sense of your last paragraph. The sensitivity is now not the temperature change, the base forcing is now a different base forcing, 1% increasing CO2 is not 1% increasing CO2, and the greenhouse effect is now not the greenhouse effect. I made the mistake of taking your formula at face value, but in fact each term has some hidden dependency on the questions being asked that you only choose to share with the world after the calculated answer has been questioned. If you think this is what doing science is about, you are in worse shape than I initially thought. – gavin]

You wrote, “I leave it to the readers to determine who is grandstanding. – gavin]”

So, who is it?

[Response: A wise man once advised to never ascribe to malice what might be attributed to incompetence. I concur. The series of errors and misunderstandings that led you to your formula may well not have been intentional. And now having found a linear formula that fits (albeit one that needed a further boost to the forcing values), you have assumed that your numerological coincidence is telling you something about the real world. Unfortunately, you are still wrong whether you came upon this by accident or by design. And that is all that matters. – gavin]