Guest commentary by Tamino

Update: Another review of the book has been published by Alistair McIntosh in the Scottish Review of Books (scroll down about 25% through the page to find McIntosh’s review).

Update #2 (8/19/10): The Guardian has now weighed in as well.

If you don’t know much about climate science, or about the details of the controversy over the “hockey stick,” then A. W. Montford’s book The Hockey Stick Illusion: Climategate and the Corruption of Science might persuade you that not only the hockey stick, but all of modern climate science, is a fraud perpetrated by a massive conspiracy of climate scientists and politicians, in order to guarantee an unending supply of research funding and political power. That idea gets planted early, in the 6th paragraph of chapter 1.

The chief focus is the original hockey stick, a reconstruction of past temperature for the northern hemisphere covering the last 600 years by Mike Mann, Ray Bradley, and Malcolm Hughes (1998, Nature, 392, 779, doi:10.1038/33859, available here), hereafter called “MBH98” (the reconstruction was later extended back to a thousand years by Mann et al, 1999, or “MBH99”). The reconstruction was based on proxy data, most of which are not direct temperature measurements but may be indicative of temperature. To piece together past temperature, MBH98 estimated the relationships between the proxies and observed temperatures in the 20th century, checked the validity of the relationships using observed temperatures in the latter half of the 19th century, then used the relationships to estimate temperatures as far back as 1400. The reconstruction all the way back to the year 1400 used 22 proxy data series, although some of the 22 were combinations of larger numbers of proxy series by a method known as “principal components analysis” (hereafter called “PCA”–see here). For later centuries, even more proxy series were used. The result was that temperatures had risen rapidly in the 20th century compared to the preceding 5 centuries. The sharp “blade” of 20th-century rise compared to the flat “handle” of the 15th-19th centuries was reminiscent of a “hockey stick” — giving rise to the name describing temperature history.

But if you do know something about climate science and the politically motivated controversy around it, you might be able to see that reality is the opposite of the way Montford paints it. In fact Montford goes so far over the top that if you’re a knowledgeable and thoughtful reader, it eventually dawns on you that the real goal of those whose story Montford tells is not to understand past climate, it’s to destroy the hockey stick by any means necessary.

Montford’s hero is Steve McIntyre, portrayed as a tireless, selfless, unimpeachable seeker of truth whose only character flaw is that he’s just too polite. McIntyre, so the story goes, is looking for answers from only the purest motives but uncovers a web of deceit designed to affirm foregone conclusions whether they’re so or not — that humankind is creating dangerous climate change, the likes of which hasn’t been seen for at least a thousand or two years. McIntyre and his collaborator Ross McKitrick made it their mission to get rid of anything resembling a hockey stick in the MBH98 (and any other) reconstruction of past temperature.

Principal Components

For instance: one of the proxy series used as far back as the year 1400 was NOAMERPC1, the 1st “principal component” (PC1) used to represent patterns in a series of 70 tree-ring data sets from North America; this proxy series strongly resembles a hockey stick. McIntyre & McKitrick (hereafter called “MM”) claimed that the PCA used by MBH98 wasn’t valid because they had used a different “centering” convention than is customary. It’s customary to subtract the average value from each data series as the first step of computing PCA, but MBH98 had subtracted the average value during the 20th century. When MM applied PCA to the North American tree-ring series but centered the data in the usual way, then retained 2 PC series just as MBH98 had, lo and behold — the hockey-stick-shaped PC wasn’t among them! One hockey stick gone.
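To make the centering question concrete, here is a minimal Python sketch. It is not the MBH98 or MM code. It runs PCA on a synthetic network (random noise plus a late rise planted in a few series, all invented for illustration) twice: once subtracting each series' full-period mean, and once subtracting the mean of only the final rows, which stand in for the 20th century. The function name `leading_pcs`, the 80-row window, and every number are assumptions.

```python
import numpy as np

def leading_pcs(data, center_rows=None, n_pcs=2):
    """Return the first n_pcs PC time series and each one's share of the
    total sum of squares of the centered data.

    data        : array of shape (n_years, n_series)
    center_rows : slice selecting the years whose mean is subtracted;
                  None means the full-period mean (the customary choice).
    """
    ref = data if center_rows is None else data[center_rows]
    centered = data - ref.mean(axis=0)
    # SVD of the centered matrix: the columns of u * s are the PC time series.
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    share = s**2 / np.sum(s**2)
    return u[:, :n_pcs] * s[:n_pcs], share[:n_pcs]

# Synthetic stand-in for a tree-ring network (NOT the MBH98 data).
rng = np.random.default_rng(0)
n_years, n_series = 581, 70                     # 1400-1980, 70 series
data = rng.standard_normal((n_years, n_series))
data[-80:, :10] += np.linspace(0.0, 2.0, 80)[:, None]   # late rise in 10 series

# Customary centering: subtract the full-period mean of each series.
pcs_full, share_full = leading_pcs(data)
# Sub-period centering: subtract the mean of the last 80 rows only.
pcs_sub, share_sub = leading_pcs(data, center_rows=slice(-80, None))
```

Comparing `share_full` with `share_sub` shows how the choice of reference period can change the ranking of patterns without changing the data they come from.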

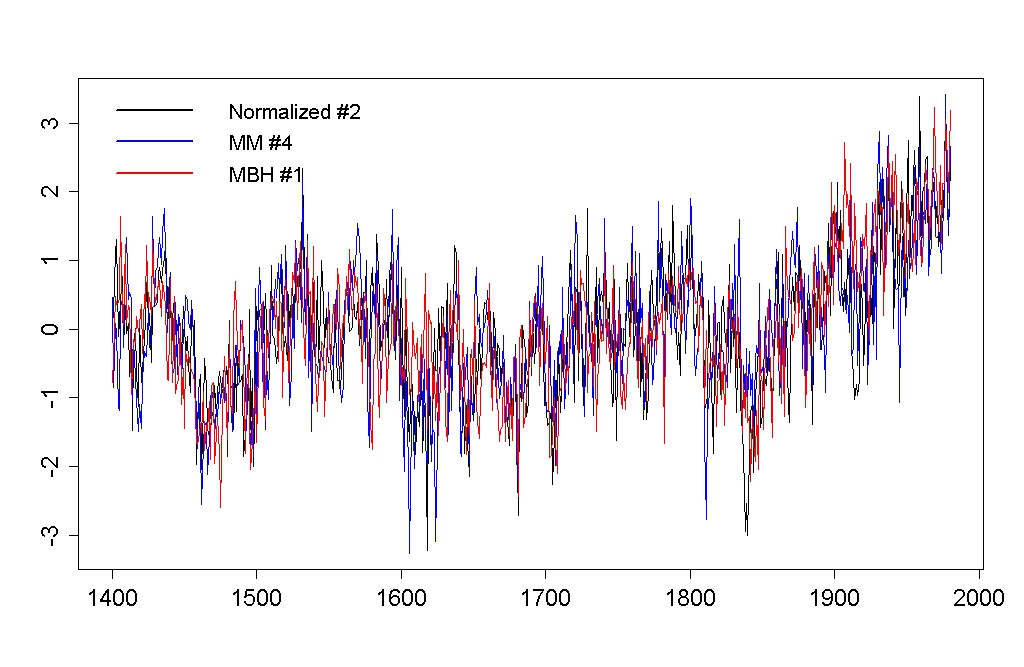

Or so they claimed. In fact the hockey-stick shaped PC was still there, but it was no longer the strongest PC (PC1), it was now only 4th-strongest (PC4). This raises the question, how many PCs should be included from such an analysis? MBH98 had originally included two PC series from this analysis because that’s the number indicated by a standard “selection rule” for PC analysis (read about it here).

MM used the standard centering convention, but applied no selection rule — they just imitated MBH98 by including 2 PC series, and since the hockey stick wasn’t one of those 2, that was good enough for them. But applying the standard selection rules to the PCA analysis of MM indicates that you should include five PC series, and the hockey-stick shaped PC is among them (at #4). Whether you use the MBH98 non-standard centering, or standard centering, the hockey-stick shaped PC must still be included in the analysis.
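For readers who want to see what a selection rule looks like in practice, here is a simplified Python sketch in the spirit of Preisendorfer’s Rule N: keep a PC only if it carries more variance than white noise of the same shape would at the same rank. This is an illustration under assumptions (white-noise null, 95th percentile, 200 trials, standardized series), not a reproduction of the MBH98 procedure, and the function names are made up.

```python
import numpy as np

def eigen_fractions(data):
    """Fraction of total variance carried by each PC, using standard
    centering and scaling each series to unit variance."""
    z = (data - data.mean(axis=0)) / data.std(axis=0)
    s = np.linalg.svd(z, compute_uv=False)
    return s**2 / np.sum(s**2)

def n_pcs_to_retain(data, n_trials=200, percentile=95.0, seed=0):
    """Count leading PCs whose variance fraction exceeds what white noise
    of the same shape produces at the same rank."""
    rng = np.random.default_rng(seed)
    observed = eigen_fractions(data)
    noise = np.array([eigen_fractions(rng.standard_normal(data.shape))
                      for _ in range(n_trials)])
    threshold = np.percentile(noise, percentile, axis=0)
    keep = observed > threshold
    # Retain PCs from the top down, stopping at the first one that fails.
    return len(keep) if keep.all() else int(np.argmin(keep))

# Synthetic example: two planted patterns in 20 noisy series (invented data).
rng = np.random.default_rng(1)
t = np.arange(300)
data = rng.standard_normal((300, 20))
data[:, :10] += 3.0 * np.sin(2 * np.pi * t / 50)[:, None]
data[:, 10:] += 3.0 * np.linspace(-1.0, 1.0, 300)[:, None]
n_keep = n_pcs_to_retain(data)
```

The point of the sketch is that the number of PCs to keep comes out of the data and the rule, not out of a number fixed in advance.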

It was also pointed out (by Peter Huybers) that MM hadn’t applied “standard” PCA either. They used a standard centering but hadn’t normalized the data series. The 2 PC series that were #1 and #2 in the analysis of MBH98 became #2 and #1 with normalized PCA, and both should unquestionably be included by standard selection rules. Again, whether you use MBH non-standard centering, MM standard centering without normalization, or fully “standard” centering and normalization, the hockey-stick shaped PC must still be included in the analysis.

In reply, MM complained that the MBH98 PC1 (the hockey-stick shaped one) wasn’t PC1 in the completely standard analysis, that normalization wasn’t required for the analysis, and that “Preisendorfer’s rule N” (the selection rule used by MBH98) wasn’t the “industry standard” MBH claimed it to be. Montford even goes so far as to rattle off a list of potential selection rules referred to in the scientific literature, to give the impression that the MBH98 choice isn’t “automatic,” but the salient point which emerges from such a list is that MM never used any selection rules — at least, none that are published in the literature.

The truth is that whichever version of PCA you use, the hockey-stick shaped PC is one of the statistically significant patterns. There’s a reason for that: the hockey-stick shaped pattern is in the data, and it’s not just noise, it’s signal. Montford’s book makes it obvious that MM actually do have a selection rule of their own devising: if it looks like a hockey stick, get rid of it.

The PCA dispute is a prime example of a recurring McIntyre/Montford theme: that the hockey stick depends critically on some element or factor, and when that’s taken away the whole structure collapses. The implication that the hockey stick depends on the centering convention used in the MBH98 PCA analysis makes a very persuasive “Aha — gotcha!” argument. Too bad it’s just not true.

Different, yes. Completely, no.

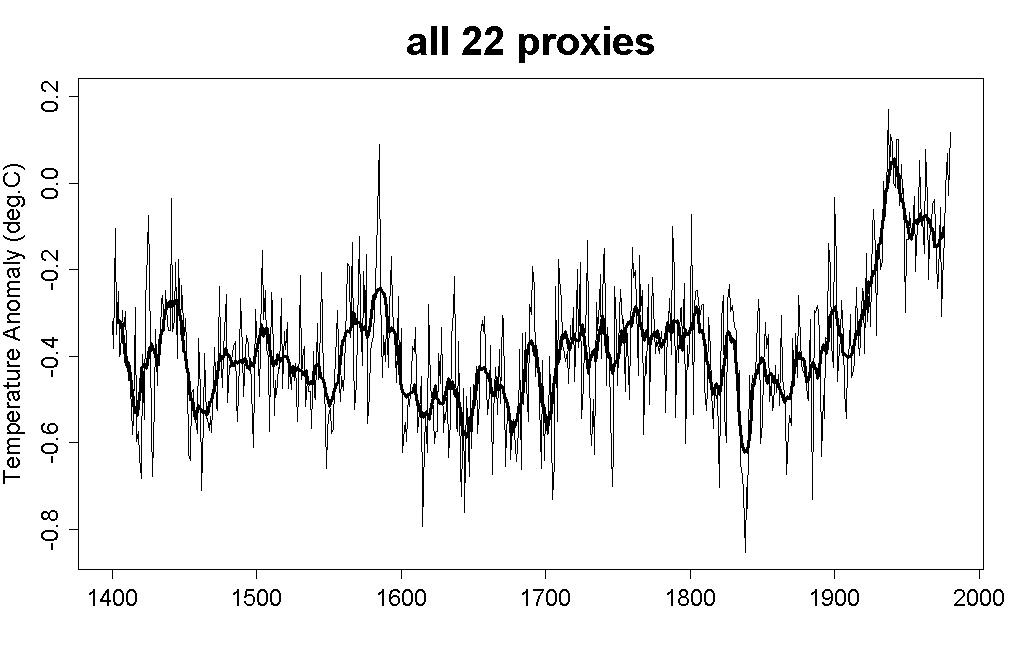

As another example, Montford makes the claim that if you eliminate just two of the proxies used for the MBH98 reconstruction since 1400, the Stahle and NOAMER PC1 series, “you got a completely different result — the Medieval Warm Period magically reappeared and suddenly the modern warming didn’t look quite so frightening.” That argument is sure to sell to those who haven’t checked it for themselves. But I have. I computed my own reconstructions by multiple regression, first using all 22 proxy series in the original MBH98 analysis, then excluding the Stahle and NOAMER PC1 series. Here’s the result with all 22 proxies (the thick line is a 10-year moving average):

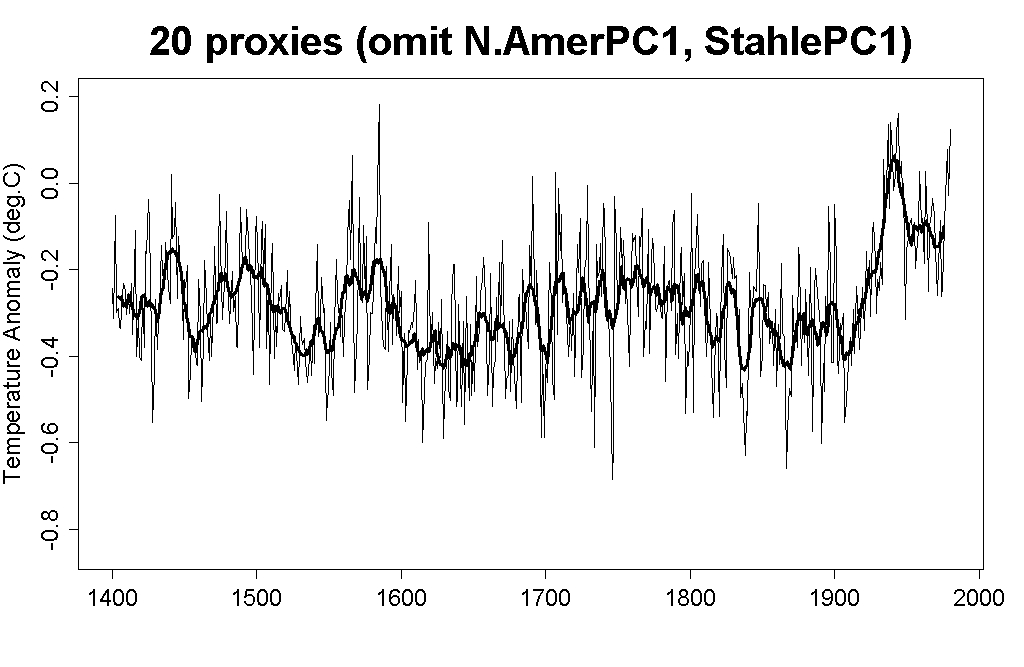

Here it is with just 20 proxies:

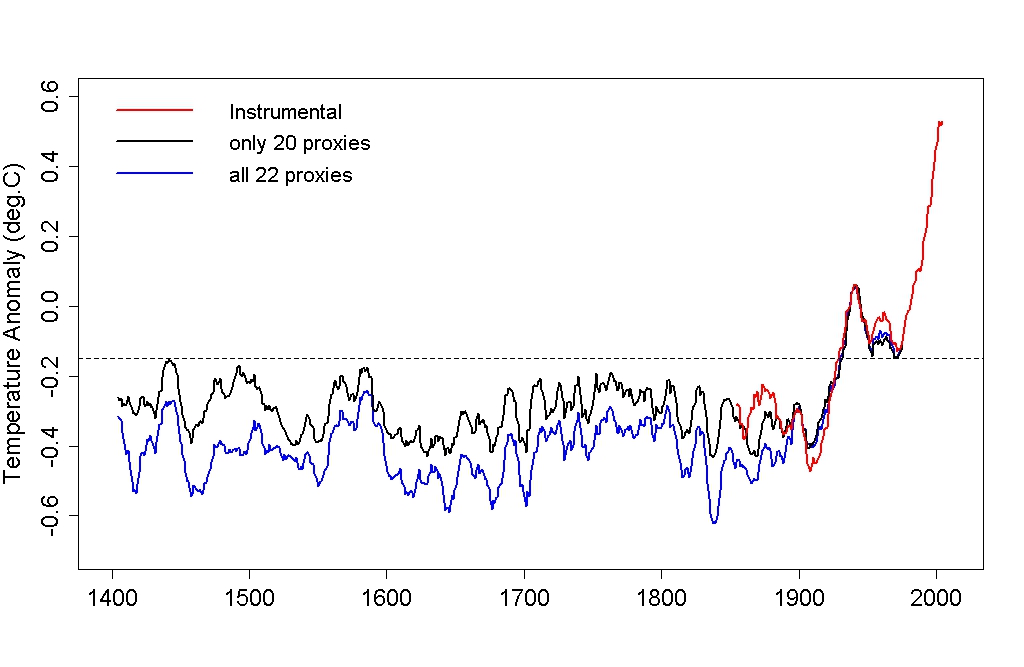

Finally, here are the 10-year moving averages for both cases, and for the instrumental record:

Certainly the result is different — how could it not be, using different data? — but calling it “completely different” is just plain wrong. Yes, the pre-20th century is warmer with the 15th century a wee bit warmer still — but again, how could it not be when eliminating two hand-picked proxy series for the sole purpose of denying the unprecedented nature of modern warming? Yet even allowing this cherry-picking of proxies is still not enough to accomplish McIntyre’s purpose; preceding centuries still don’t come close to the late-20th century warming. In spite of Montford’s claims, it’s still a hockey stick.
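Anyone can repeat this kind of with-and-without comparison. The Python sketch below shows the general shape of it on invented data: regress temperature on the proxies over a calibration window, apply the fit to all years, then repeat with two proxy columns removed and smooth both with a 10-year moving average. The synthetic proxies, the 1902-1980 calibration window, and the function names are assumptions for illustration; this is not the code or data behind the figures above.

```python
import numpy as np

def reconstruct(proxies, temperature, calib):
    """Fit temperature ~ proxies by least squares over the calibration
    rows only, then apply the fitted coefficients to every year."""
    design = np.column_stack([np.ones(len(proxies)), proxies])
    coef, *_ = np.linalg.lstsq(design[calib], temperature[calib], rcond=None)
    return design @ coef

def moving_average(series, window=10):
    """Simple moving average; 'valid' mode drops window - 1 end points."""
    return np.convolve(series, np.ones(window) / window, mode="valid")

# Invented data: a flat history with a 20th-century rise, seen through
# 22 noisy proxies. Only the calibration rows of true_temp are ever used
# for fitting, mimicking a short instrumental record.
rng = np.random.default_rng(3)
years = np.arange(1400, 1981)
true_temp = np.where(years >= 1900, 0.01 * (years - 1900), 0.0)
true_temp = true_temp + 0.1 * rng.standard_normal(years.size)
loadings = rng.uniform(0.5, 1.5, size=22)
proxies = true_temp[:, None] * loadings + 0.3 * rng.standard_normal((years.size, 22))
calib = years >= 1902

recon_all = reconstruct(proxies, true_temp, calib)                  # all 22
recon_20 = reconstruct(np.delete(proxies, [0, 1], axis=1), true_temp, calib)
smooth_all = moving_average(recon_all)
smooth_20 = moving_average(recon_20)
```

Plotting `smooth_all` against `smooth_20` is the synthetic analogue of the comparison described above: the curves differ, and the question is by how much.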

Beyond Reason

Another of McIntyre’s targets was the Gaspe series, referred to in the MBH98 data as “treeline-11.” It just might be the most hockey-stick shaped proxy of all. This particular series doesn’t extend all the way back to the year 1400, it doesn’t start until 1404, so MBH98 had extended the series back four years by persistence — taking the earliest value and repeating it for the preceding four years. This is not at all an unusual practice, and — let’s face facts folks — extending 4 years out of a nearly 600-year record on one out of 22 proxies isn’t going to change things much. But McIntyre objected that the entire Gaspe series had to be eliminated because it didn’t extend all the way back to 1400. This argument is downright ludicrous — what it really tells us is that McIntyre & McKitrick are less interested in reconstructing past temperature than in killing anything that looks like a hockey stick.

McIntyre also objected that other series had been filled in by persistence, not on the early end but on the late end, to bring them up to the year 1980 (the last year of the MBH98 reconstruction). Again, this is not a reasonable argument. Mann responded by simply computing the reconstruction you get if you start at 1404 and end at 1972 so you don’t have to do any infilling at all. The result: a hockey stick.
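Infilling by persistence is simple enough to show in a few lines. The Python sketch below fills missing values at either end of a series by repeating the nearest valid value, using a made-up series that starts in 1404 like the Gaspe record. The function name and the data are hypothetical.

```python
import numpy as np

def infill_by_persistence(values):
    """Fill leading and trailing NaNs by repeating the nearest valid value."""
    values = np.asarray(values, dtype=float)
    valid = np.flatnonzero(~np.isnan(values))
    if valid.size == 0:
        raise ValueError("series has no valid values")
    filled = values.copy()
    filled[:valid[0]] = values[valid[0]]          # extend the early end
    filled[valid[-1] + 1:] = values[valid[-1]]    # extend the late end
    return filled

years = np.arange(1400, 1981)
series = np.random.default_rng(2).standard_normal(years.size)  # made-up values
series[years < 1404] = np.nan        # record starts in 1404
extended = infill_by_persistence(series)

# The alternative described above: skip infilling and use only 1404-1972.
common = (years >= 1404) & (years <= 1972)
trimmed = series[common]
```

Either route changes four values out of 581 in this one series, which gives a sense of how little is at stake.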

Again, we have another example of Montford implying that some single element is both faulty and crucial. Without nonstandard PCA the hockey stick falls apart! Without the Stahle and NOAMER PC1 data series the hockey stick falls apart! Without the Gaspe series the hockey stick falls apart! Without bristlecone pine tree rings the hockey stick falls apart! It’s all very persuasive, especially to the conspiracy-minded, but the truth is that the hockey stick depends on none of these elements. You get a hockey stick with standard PCA, in fact you get a hockey stick using no PCA at all. Remove the NOAMER PC1 and Stahle series, you’re left with a hockey stick. Remove the Gaspe series, it’s still a hockey stick.

As a great deal of other research has shown, you can even reconstruct past temperature without bristlecone pine tree rings, or without any tree ring data at all, resulting in: a hockey stick. It also shows, consistently, that nobody is trying to “get rid of the medieval warm period” or “flatten out the little ice age” since those are features of all reconstructions of the last 1000 to 2000 years. What paleoclimate researchers are trying to do is make objective estimates of how warm and how cold those past centuries were. The consistent answer is, not as warm as the last century and not nearly as warm as right now.

The hockey stick is so thoroughly imprinted on the actual data that what’s truly impressive is how many things you have to get rid of to eliminate it. There’s a scientific term for results which are so strong and so resistant to changes in data and methods: robust.

Cynical Indeed

Montford doesn’t just criticize hockey-stick shaped proxies, he bends over backwards to level every criticism conceivable. For instance, one of the proxy series was estimated summer temperature in central England taken from an earlier study by Bradley and Jones (1993, the Holocene, 3, 367-376). It’s true that a better choice for central England would have been the central England temperature time series (CETR), which is an instrumental record covering the full year rather than just summertime. The CETR also shows a stronger hockey-stick shape than the central England series used by MBH98, in part because it includes earlier data (from the late 17th century) than the Bradley and Jones dataset. Yet Montford sees fit to criticize their choice, saying “Cynical observers might, however, have noticed that the late seventeenth century numbers for CETR were distinctly cold, so the effect of this truncation may well have been to flatten out the little ice age.”

In effect, even when MBH98 used data which weakens the difference between modern warmth and preceding centuries, they’re criticized for it. Cynical indeed.

Face-Palm

The willingness of Montford and McIntyre to level any criticism which might discredit the hockey stick just might reach its zenith in a criticism which Montford repeats, but is so nonsensical that one can hardly resist the proverbial “face-palm.” Montford more than once complains that hockey-stick shaped proxies dominate climate reconstructions — unfairly, he implies — because they correlate well to temperature.

Duh.

Guilty

Criticism of MBH98 isn’t restricted to claims of incorrect data and analysis, Montford and McIntyre also see deliberate deception everywhere they look. This is almost comically illustrated by Montford’s comments about an email from Malcolm Hughes to Mike Mann (emphasis added by Montford):

Mike — the only one of the new S.American chronologies I just sent you that already appears in the ITRDB sets you already have is [ARGE030]. You should remove this from the two ITRDB data sets, as the new version should be different (and better for our purposes).

Cheers,

Malcolm

Here’s what Montford has to say:

It was possible that there was an innocent explanation for the use of the expression “better for our purposes”, but McIntyre can hardly be blamed for wondering exactly what “purposes” the Hockey Stick authors were pursuing. A cynic might be concerned that the phrase actually had something to do with “getting rid of the Medieval Warm Period”. And if Hughes meant “more reliable”, why hadn’t he just said so?

This is nothing more than quote-mining, in order to interpret an entirely innocent turn of phrase in the most nefarious way possible. It says a great deal more about the motives and honesty of Montford and McIntyre, than about Mann, Bradley, and Hughes. The idea that MM’s so-called “correction” of MBH98 “restored the MWP” constitutes a particularly popular meme in contrarian circles, despite the fact that it is quite self-evidently nonsense: MBH98 only went back to AD 1400, while the MWP, by nearly all definitions found in the professional literature, ended at least a century earlier! Such internal contradictions in logic appear to be no impediment, however, to Montford and his ilk.

Conspiracies Everywhere

Montford also goes to great lengths to accuse a host of researchers, bloggers, and others of attempting to suppress the truth and issue personal attacks on McIntyre. The “enemies list” includes RealClimate itself, claimed to be a politically motivated mouthpiece for “Environmental Media Services,” described as a “pivotal organization in the green movement” run by David Fenton, called “one of the most influential PR people of the 20th century.” Also implicated are William Connolley for criticizing McIntyre on sci.environment and James Annan for criticizing McIntyre and McKitrick. In a telling episode of conspiracy theorizing, we are told that their “ideas had been picked up and propagated across the left-wing blogosphere.” Further conspirators, we are informed, include Brad DeLong and Tim Lambert. And of course one mustn’t omit the principal voice of RealClimate, Gavin Schmidt.

Perhaps I should feel personally honored to be included on Montford’s list of co-conspirators, because yours truly is also mentioned. According to Montford’s typically sloppy research I have styled myself as “Mann’s Bulldog.” I’ve never done so, although I find such an appellation flattering; I just hope Jim Hansen doesn’t feel slighted by the mistaken reference.

The conspiracy doesn’t end with the hockey team, climate researchers, and bloggers. It includes the editorial staff of any journal which didn’t bend over to accommodate McIntyre, including Nature and GRL which are accused of interfering with, delaying, and obstructing McIntyre’s publications.

Spy Story

The book concludes with speculation about the underhanded meaning of the emails stolen from the Climate Research Unit (CRU) in the U.K. It’s really just the same quote-mining and misinterpretation we’ve heard from many quarters of the so-called “skeptics.” Although the book came out very shortly after the CRU hack, with hardly sufficient time to investigate the truth, the temptation to use the emails for propaganda purposes was irresistible. Montford indulges in every damning speculation he can get his hands on.

Since that time, investigations have been conducted into the conduct of both the researchers at CRU (especially Phil Jones) and Mike Mann (the leader of the “hockey team”). Certainly some unkind words were said in private emails, but the result of both investigations is clear: climate researchers have been cleared of any wrongdoing in their research and scientific conduct. Thank goodness some of those who bought in to the false accusations, like Andy Revkin and George Monbiot, have seen fit actually to apologize for doing so. Perhaps they realize that one can’t get at the truth simply by reading people’s private emails.

Montford certainly spins a tale of suspense, conflict, and lively action, intertwining conspiracy and covert skullduggery, politics and big money, into a narrative worthy of the best spy thrillers. I’m not qualified to compare Montford’s writing skill to that of such a widely-read author as, say, Michael Crichton, but I do know they share this in common: they’re both skilled fiction writers.

The only corruption of science in the “hockey stick” is in the minds of McIntyre and Montford. They were looking for corruption, and they found it. Someone looking for actual science would have found it as well.

RE: 92 – Gavin’s response.

Gavin, can you provide a statistics reference for the RE score that is non-climate related? Something to explain its use and significance? There’s plenty written about r2.

[Response: There is some material in the NAS report (particularly p92 onwards). But I am not familiar enough with any other fields’ use of similar metrics to give you other references. – gavin]
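For readers following this exchange, a short Python sketch of the two statistics may help. It assumes the usual definition of the reduction of error (RE) score: one minus the ratio of the reconstruction’s squared error to the squared error of simply predicting the calibration-period mean. The function names and the toy numbers are invented for illustration.

```python
import numpy as np

def reduction_of_error(observed, predicted, calibration_mean):
    """RE = 1 - SSE / SS_ref, where the reference prediction is the
    calibration-period mean of the observations."""
    observed = np.asarray(observed, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    sse = np.sum((observed - predicted) ** 2)
    ss_ref = np.sum((observed - calibration_mean) ** 2)
    return 1.0 - sse / ss_ref

def r_squared(observed, predicted):
    """Squared Pearson correlation between observations and predictions."""
    return np.corrcoef(observed, predicted)[0, 1] ** 2

# Toy verification period: the prediction tracks every wiggle perfectly
# but sits 0.5 too high throughout.
observed = np.array([0.0, 0.1, 0.2, 0.3])
biased = observed + 0.5
re_biased = reduction_of_error(observed, biased, calibration_mean=0.15)
r2_biased = r_squared(observed, biased)
```

In this toy case r2 is essentially 1 because correlation ignores a constant offset, while RE is strongly negative because it penalizes getting the mean level wrong. RE above zero means the reconstruction beats the calibration mean as a predictor.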

22 (Peter):

Note that the ‘change in precipitation’ Climate Wizard map is much more important than the temperature change. If the rains fail, so does humanity, as Lovelock pointed out in Gaia’s Revenge. So the carrying capacity of much of southern Europe will fall sharply but northern Europe will remain productive, with different crops and methods.

One area to look at is eastern Scotland between Aberdeen and Inverness [see e.g. http://en.wikipedia.org/wiki/Aberdeenshire: arable land noted for its malt barley and potatoes], forecast to become wetter and warmer [now has very cold winters], most of it sparsely inhabited [98/sq mile], English spoken, noted for its whiskies. And it is a long way away from southern Europe . .

Tamino says

So, essentially, according to M&M, the “first principal component” PC1* of MBH98 was “corruption”? (*presumably also the “first unprincipled component” UPC1 under their “system”)

And UPC2 was what, “dishonesty”?

Does the ordering of the UPC’s depend on whether the analysts are centered, un-centered, de-centered, double-centered or self-centered?

John,

That Wegman deck is a piece of work. It seems pretty clear to me that you can’t really evaluate an area of science if you don’t know the first thing about it. Wegman clearly did not know the first thing about paleoclimate reconstruction or the problems inherent therein. Yet if you look at his current CV, he now claims expertise. Hmmm….

#67

IIRC, even within MBH98 itself, differing numbers of PCs were selected/retained at different time “steps”. The number of PCs to retain in any specific case depends on:

a) the selection rule (e.g. retaining most of variance in original data)

b) the exact PC algorithm used (yes, centering makes a difference)

and

c) the actual data.

As I said above, there was no discussion or even recognition of this point in the M&M papers or Wegman et al. (And, yes, I do recognize that centering on the calibration period has been denigrated by statisticians, but clearly using conventional centering has little effect on the final result).

RE: Gavin’s reply to #92

[Response: ‘others’? There is a well known problem with a certain class of tree ring density records that briffa et al have worked on. As you certainly do know, this is something that affects their proxies post 1960, even while they correspond to other proxies and instrumental data earlier. This is clearly an issue, and is being looked into by many groups. Doesn’t affect mbh98 or any of the subsequent Mann et al papers though. If you don’t like this, feel free to discount these proxies until it is resolved. – gavin]

If the proxies post-1960 show effectively a reverse correlation then it is not valid to simply assert that the tree ring data correspond to other proxies and instrumental data earlier and the post-1960 period is a special case.

[Response: It is not ‘the proxies’, it is one specific set of proxies that have nothing to do with what is being discussed here. I’ll repeat myself, if you are not happy with using them, feel free to ignore them. Other people have looked at these proxies and find that they do replicate other periods very well when there are confirming data (both instrumental and other proxies), and this leads them to conclude that there is some worth to them. If you are not so convinced after reading the relevant literature, then fine. Again, it really doesn’t matter. – gavin]

The reverse correlation in the modern validation period shows that, at the very least, a temperature : tree ring response curve is potentially multi-valued. This would completely invalidate the use of tree ring data as proxies for past temperature reconstruction.

[Response: only for *these* proxies and only if the confounding factor is non-anthropogenic. This does not apply to all tree rings. – gavin]

Another possibility is that the response is not multi-valued to temperature, it’s just that tree ring response to temperature is completely confounded by multi-correlation with other growth limiting variables.

Either way, if we do as you say and discount these proxies then what was Briffa doing in the IPCC assessment report?

PS: Briffa et al = Briffa and others.

[Response: In the time of writing for TAR there weren’t so many reconstructions available. IPCC has a duty to reflect the literature and assess what is consistent and what is not. Including Briffa et al and discussing the problems are exactly what is required. If it hadn’t been there, I’m sure someone would have claimed that it was omitted because it conflicted with some ‘purpose’! – gavin]

Once more people have read the book, and if Montford and McIntyre were welcomed to participate in the discussion, then I would be interested in participating in a more detailed discussion on this.

Regarding the divergence problem for tree rings, is that an isolated incident or are there other climate or weather “anomalies” like that from the early 1960’s? Are they connected, and if so, what are the most accepted theories for explaining them?

Thanks.

I know that Judith Curry thinks highly of Montford’s book. So following up on Gavin’s response to Judith (#74), why not let Judith be a guest contributor and publish her review of the book on RC?

The New York Times and other major publications will occasionally publish two reviews of the same book–one from a regular beat reviewer during the week and another in the Sunday Book review section.

Judith should certainly be able to make her own argument in such a review, which I’m sure would trigger a lively discussion thread here.

Just a suggestion.

ThinkingScientist said: “When tested with stationary (no trend) noise the algorithm still produces a hockey stick.”

ThinkingScientist seems to swallow Monckton’s fictions without thinking at all.

If you create a long timeseries of random noise, then search through it, you will be able to find a section that looks like a hockey stick. You will also be able to find a section that looks like a puppy dog or a wheel barrow. This is why it is called “cherry picking”.

If you select only the part of a random series that you like, it is no longer random. You might as well have drawn the graph yourself with completely fictitious numbers.

This is so basically obvious that it barely even qualifies as “statistics”.

Or does ThinkingScientist trust Monckton to use genuinely random noise? It’s not like he has ever been caught lying before….

But as Gavin says, the solution is simple. Try it yourself.

#38 Andy Revkin

Re. Gavin’s response

In addition: nor should they.

Heck nothing would ever get done. It’s like a bureaucracy multiplier. 100% openness would be not only oppressive, it would be untenable. Like living with the Stasi and wondering what you can and can’t say in front of your children, or your friends and co-workers. Or in this case, what you should or should not put in an email.

I have friends right here in Basel that grew up behind the wall. It is interesting to see how they seem to not share much information, even when contrasted with the normal Swiss cultural perspective of privacy.

From an agency perspective, that’s why they have PR filters, not that that is always a good thing, as seems to have been illustrated during the Bush administration. Interesting to note that in speaking with personnel hired into PR positions at science institutions during the Bush administration, I found that they still did not believe global warming was either occurring, or human caused (that was last year!), even though they worked in the PR offices of science units that not only study climate but have released statements about the veracity of the human-caused signal in global warming and that it really is humans that have caused climate change.

In an even stranger twist, I was at the WMO/IPCC in Geneva and spoke with someone there that was hired after the Bush administration left office, and right there in the WMO I find this individual telling me that he thought the whole global warming thing was overblown! He even told me what department he worked for in the Bush administration.

I told another friend of mine there in the WMO that day who was not from the US, and she said something like, ‘you’re kidding’, as if it was impossible. Well, apparently not.

Too bad there is not such a thing as a magic deception filter test that can root out such issues. But that is the beauty, difficulty and challenge of freedom. You can and should have a right to privacy, but it can also be a two edged sword. Like a double agent in an intelligence unit. It is a right that many now enjoy and would hate to lose. I guess it really all comes down to the integrity and honor of the person wielding the sword.

The Bush administration argued, and though I disagree with some of the things they did I do not disagree with the general policy, that they need to have a certain level of privacy to effectively operate (not so much as to preclude investigatory capacity however, such as in the ‘accidental’ deletion of millions of White House emails from their originating source, as well as the multiple back up servers. . . ‘accidentally’ of course). Otherwise nothing would ever get done and no one would be able to readily work out issues of understanding or misunderstanding.

Summary, privacy is important for different reasons at different levels and helps get things done. Invasion of rightful privacy on the other hand is intrusive and breaks the tenet of intention that rightful privacy is designed to protect.

The UEA/CRU hack was criminal, unethical, and performed by those who have a distorted idea of what honor is in the context of the greater good. One can only wonder if they had an agenda? ;)


RE: #103 by kkloor

Having a review from Judith is an excellent suggestion. I think she has consistently shown herself to be evenhanded.

[edit – comments on our moderation policy are extremely tedious at the best of times. It might not be perfect, but the idea is prevent descent into abusive slanging matches and it mostly works. Don’t insult the hosts is usually a good tip. This is now OT].

#97 ThinkingScientist (ha ha)

[edit – the rules against the abuse of other commenters go both ways. Please people, keep it substantive and not personal.]

RE: #109 by Didactylos says:

“ThinkingScientist seems to swallow Monckton’s fictions without thinking at all.”

I have no idea what you are referring to with regard to Monckton. If you will check my comment #83, the statement about testing with stationary random noise is taken directly from MM2005, which is published in GRL. Their procedure is an entirely reasonable and widely used technique in many disciplines for testing the validity and robustness of an algorithm.

Your further comments:

“If you create a long timeseries of random noise, then search through it, you will be able to find a section that looks like a hockey stick. You will also be able to find a section that looks like a puppy dog or a wheel barrow. This is why it is called “cherry picking”.

If you select only the part of a random series that you like, it is no longer random. You might as well have drawn the graph yourself with completely fictitious numbers.

This is so basically obvious that it barely even qualifies as “statistics”.”

are exactly the point. In science and statistics this is not called “cherry picking”; it is called spurious correlation. Any statistical method that is not robust when subjected to this standard test is invalid. The test of significance for statistics such as R is a test against the possibility of spurious correlation. For a complex and non-standard algorithm such as that used in MBH98, the test described by MM2005 GRL using random noise is entirely valid. MM2005 shows that MBH98 failed that test. Your comments really should apply to the result of MBH98 – the findings of MM2005 in GRL show that what you describe is essentially what the MBH98 algorithm did with the data – find things that are not there (or, more properly I should say, not significant in a statistical sense).

[edit – boring]

[Response: You are incorrect (again). The test that MM2005 did was invalid for the reasons outlined in Huybers comment on that paper. Spurious correlation is of course something to be concerned with (and the implication that no-one was aware of this prior to McIntyre coming along is nonsense). That’s why you have validation intervals, that’s why you hold back data, that’s why you do Monte Carlo tests with random noise, that’s why you do pseudo-proxy tests in climate models etc. But all of this is absolutely beside the point – if you think some other test should be done, then do it – that’s what science is all about. All of this data (and much more) is online. All of this code is online and you can do what you like. If you conclude something very different, then publish it – even if it’s very similar you should publish it. The whole point is to try and find out what happened in the past and Mann et al in 1998 were only the first step down that road. It wasn’t the last word on anything, and so treating it like it was the most important paper in the world is just bizarre. The fact is that later efforts with all sorts of improvements to the methods and different ways of testing show similar results. Why does MBH98 even matter to you? – gavin]

Re: 38 Andy Revkin

Working for the government does not take away one’s right to reasonable privacy. In fact, the US Government goes to great lengths to protect the privacy of its workforce.

Joe Public has no more right to read my e-mails (even though they are on government computers) than he has a right to walk into my office and start telling me what to do. I work for the US Government, not individual tax-payers (imagine having 300 million people directly bossing around government workers without our current chain-of-command). We operate under a system of laws and regulations that balance the needs of a worker for reasonable privacy and government accountability to the public. Barring some sort of criminal activity, there is absolutely no reasonable expectation that a government employee’s e-mails would ever fall into the public domain.

Keith, I suspect the reason Curry “thinks highly” of Montford’s book is because he’s created a narrative that’s easily followed. We don’t actually know her reasons because whenever one tranche of evidence is dealt with she alludes, without specifying, to a mysterious other, different set, as was evident on that thread on C-A-S back in April. And when inescapably confronted over Wegman, for example, she chose to, figuratively speaking, stuff her fingers in her ears and announce she didn’t want to hear any more about it.

What Dr. Curry seems unaware of is that creating narratives is what writers do, and for various reasons. Montford’s book (as Tamino, Deep Climate and John Mashey are showing) is nowhere near as comprehensive as she appears to believe and selectively creates a narrative. How could Montford have examined GBs of emails and interviewed any of the participants in the time available? That’s an easy question to answer – he didn’t. Some might call his expediency and haste to jump on the (co-ordinated?) bandwagon fabrication, or charlatanry, or creating a fiction but then, that’s what writers do. The one thing it isn’t is credible science.

Essentially Montford is to climate science what von Daniken was to archaeology.

RE: 99 Gavin says:

“I can create any metric I want to test the prediction in the validation period.”

The generally accepted approach to this in statistics is to determine the metric prior to the analysis. Which is why I will try and ask my question for a third time:

What is the statistical authority for it being acceptable to fail an R test for this type of data and still claim the result statistically significant?

[Response: Now you are making accusations. The RE statistic is used frequently in tree-ring work for precisely the reasons I outlined. Your insinuation that it was chosen after the fact has absolutely no basis in fact. Neither does your claim that the only measure of statistical significance is the correlation. For instance, in looking at a climate model being run in an unforced mode, the correlation of year to year values with the real world will generally be zero. But the model does have skill in many aspects of climate. You thus appear to be inventing rules for your own convenience. – gavin]
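The distinction drawn in the response above, between year-to-year correlation and other measures of skill, can be sketched with synthetic numbers. The example below is hypothetical and uses no MBH98 data: the validation period is assumed to be 0.5 units colder than the calibration mean, and the "reconstruction" gets that mean shift right while its year-to-year wiggles are independent noise. RE is computed in its usual reduction-of-error form, 1 minus the ratio of the reconstruction's squared error to the squared error of simply predicting the calibration-period mean.

```python
import random
import statistics

def reduction_of_error(obs, recon, calibration_mean):
    """RE = 1 - SSE(reconstruction) / SSE(calibration mean used as the prediction)."""
    sse_recon = sum((o - r) ** 2 for o, r in zip(obs, recon))
    sse_ref = sum((o - calibration_mean) ** 2 for o in obs)
    return 1.0 - sse_recon / sse_ref

def r_squared(a, b):
    """Squared Pearson correlation of two equal-length sequences."""
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov * cov / (va * vb)

rng = random.Random(1)       # fixed seed so the sketch is repeatable
calibration_mean = 0.0       # assumed mean of the calibration period
validation_offset = -0.5     # assumed: validation period is colder on average
n = 200                      # assumed length, long enough for stable statistics

# Observations and reconstruction share the mean shift but not the wiggles.
obs = [validation_offset + rng.gauss(0.0, 0.2) for _ in range(n)]
recon = [validation_offset + rng.gauss(0.0, 0.2) for _ in range(n)]

re_stat = reduction_of_error(obs, recon, calibration_mean)
r2_stat = r_squared(obs, recon)
print(f"RE  = {re_stat:.2f}")
print(f"r^2 = {r2_stat:.2f}")
```

Here r^2 is close to zero while RE is clearly positive, because r^2 ignores the mean entirely and RE rewards getting it right. Whether that situation describes MBH98 is the point in dispute; the sketch only shows that the two statistics can legitimately disagree.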

Paleoclimate analysis relies on proxies – and to be more specific, proxies are “…biological, physical or chemical measurements made in sediments, fossil shells, ice cores, trapped air, speleothems, corals and other archives that serve as surrogates for climate parameters studied by modern climatologists with observations, instruments, satellites, and computer models. Proxies constitute the primary tools for reconstruction of climate-related parameters.” (Paleoclimates: Understanding Climate Change Past and Present, Cronin 2009)

This kind of data is collected from geological archives – various Earth features that can be forensically sampled and examined down to the minutest details. This is different from analyzing a lot of data collected by the same instrument – a radiosonde or a climate satellite, in that the goal is to reconstruct (or create a model of) the climate and the ecosystem as it was at the time. The more kinds of data, the better the reconstruction tends to be.

One other difference is that while you only get one chance to sample the real-time data, you can return to geological archives again and again. Honest scientists would thus call for more data collection when faced with a real uncertainty, of course – which McIntyre would never dream of doing, except as a stalling tactic. The one thing that you pick up from ClimateAudit is that the authors don’t understand what the data is that they are looking at, particularly with proxies.

However, since the “hockey stick” allegations came out, more work has indeed been done. Yes, Climate Audit attacked this new work too – although they ignored previous efforts by Kaufman and others on the Holocene Thermal Maximum, some 9,000-11,000 years ago. They use the same methodology – but if it’s not about the past thousand years, ClimateAudit ignores it…. if these guys were running tobacco PR, they’d be claiming that all molecular biology studies on carcinogenic substances were fundamentally flawed – which is what they did in the past, isn’t it? Hence, ClimateAudit is really just an assault on science itself by a slick PR person working for Canada’s oil, gas and tar sands industry. The real goal is probably blocking the EPA from interfering with plans for more Alberta pipelines to deliver more dirty syncrude to Midwestern and Texan refineries?

Nevertheless, they can’t refute the basic work on the issue:

Essentially, this confirms and extends the earlier Mann et al. work. The figures require a Science subscription, but here’s the heading on their own “hockey sticks”, which if you can follow tamino should be informative:

Now, (G) in particular is worth looking closely at – that’s the comparison with the Mann et al. study, and the Moberg et al. study. There’s not much difference. There’s a slow cooling trend, as expected from the slowly decreasing insolation (the Milankovitch cycle), followed by an unexpected temperature spike at the end – the result of fossil fuel combustion on a global scale.

The conclusions of all this statistical analysis of Northern Hemisphere paleorecords are also backed up by observations in other parts of the world. For example, see Africa’s Lake Tanganyika

The same thing is going on in freshwater lakes in other regions – Lake Tahoe, California for example. Only a blatant fraudster would deny the existence of the trend at this point.

Tamino: “But applying the standard selection rules to the PCA analysis of MM indicates that you should include five PC series, and the hockey-stick shaped PC is among them (at #4).”

Let’s get facts straight. First, “Preisendorfer Rule N”, allegedly used by Mann et al, is by no means a “standard” selection rule. In fact, it’s an obscure ad-hoc method used by a few climatologists and unknown in the real statistical literature. Using some selection rules common in the true PCA literature, the 4th PC does not get selected. Moreover, the rule as described in the original literature is based on variance arguments and therefore cannot even be applied to “noncentered PCA” (whatever that is supposed to do) as used by Mann et al. Second, the use of “Preisendorfer Rule N” is not described in MBH98 (or related literature), and it is not found in any MBH9X released (or leaked) code. Moreover, it is easily demonstrated that Rule N was not consistently used in MBH98. Last, but by no means least, what on Earth should one conclude about work that is supposed to describe general temperature patterns of an entire northern hemisphere but is extremely dependent on the _fourth_ most important pattern in a proxy network covering merely the western USA? The word “robust” is definitely not on the lips of any thinking man.

Tamino: “It was also pointed out (by Peter Huybers) that MM hadn’t applied “standard” PCA either.”

Huybers was mistaken. Tree ring series are already in common units. In such cases the covariance based PCA (as done by MM) is recommended. The correlation based PCA (as advocated by Huybers) is used only in cases where no common units among variables exist.

Tamino: “I computed my own reconstructions by multiple regression, first using all 22 proxy series in the original MBH98 analysis, then excluding the Stahle and NOAMER PC1 series.”

Oh, I guess you simply forgot to exclude also the “Gaspe series” you later describe as “the most hockey-stick shaped proxy of all”. Why don’t you publish your results with the 19 remaining proxies?

Tamino: “This is not at all an unusual practice, and — let’s face facts folks — extending 4 years out of a nearly 600-year record on one out of 22 proxies isn’t going to change things much.”

Let’s face facts folks – Tamino’s claim is beyond reason and downright ludicrous – and Tamino knows it. The four year unreported ad-hoc extension makes all the difference whether “the most hockey-stick shaped proxy of all” gets included or excluded to AD1400 network. Without it (and the infamous bristlecones masked as “PCs”) the hockey stick is an endangered species.

Tamino: “You get a hockey stick with standard PCA, in fact you get a hockey stick using no PCA at all. Remove the NOAMER PC1 and Stahle series, you’re left with a hockey stick. Remove the Gaspe series, it’s still a hockey stick.”

How many times do you think you can play this leave-one-but-only-one-out -game and still people would buy the rotten argument?

[Response: Do please calm down. You are incorrect on any number of issues – tree ring width and density proxies are not in the same units and there is a lot of variation in the amount of variance in the N. American network. And really, you think that starting the reconstruction from 1404 instead of 1400 would have made any difference to anything? What magical rule says that you can only do step-wise reconstructions in 50 year steps? I’m sure that Tamino will have more to say in response, but in the meantime, perhaps you’d care to suggest what selection rule for PCs you would use? Given that the data does actually contain a HS signal, I would be surprised that anything you suggest would have any material effect whatsoever. Go on, try and be a little constructive. – gavin]

#96 Lynn Vincentnathan

Check out the responses here re. Lindzen iris hypothesis:

http://www.ossfoundation.us/projects/environment/global-warming/myths/richard-lindzen


#100 Geoff Wexler

Exactly. I used to comment on CiF but the lack of moderation there results in a tedious repetition of the usual tired old long debunked myths, with at least a semi-organised rush of deniers to anything by Monbiot, for example. Any unmoderated climate change blog suffers the same. Sensitive moderation does not stifle debate, it allows space for debate to happen.

Judith Curry – what are your views on this ??

RE: #116

Gavin, it was absolutely not my intention to make insinuations that this was what was done and I am sorry that my comment was not sufficiently clearly worded and led to that conclusion being drawn by yourself. I was trying to discuss accepted statistical practice, not imply wrongdoing.

Perhaps if I re-phrase the point it would help. I do not disagree with you that there may be a range of statistics that can be calculated in any particular case. However, a very widely used statistic in many proxy cases, including my own work, is the R statistic. I have pointed out that both MBH98 and WahlAmman2007 fail a test of significance for R. I do not claim that the R statistic is the only test of significance but it is a very important one.

You state that the R statistic is not favoured by dendro’s. My question therefore still stands: What is the statistical authority for it being acceptable to fail an R test for this type of data and still claim the result statistically significant?

[Response: The reference given in the NAS report is Fritts (1976), so see what he says (I don’t have a copy). But the discussion in the NAS report is pretty clear – no single metric tells you everything interesting about a reconstruction’s validity. – gavin]

I work for a university, and do research supported by public funding. I don’t consider any email to be secure, but I have a reasonable expectation of privacy. Nevertheless, there are limits. I can imagine circumstances in which my emails might be subpoenaed by a court of law, and I would have no great worries if that were to happen. On the other hand, I have no doubt that somebody who went on a cherry-picking expedition through my emails could find some things that could be used to make it appear that I was doing something improper. Creating conditions such that scientists felt the need to write every single email so carefully that it could not possibly be misinterpreted by a hostile reader would impose an enormous burden in terms of time, slow the progress of research, and substantially increase its cost. It is hard to imagine how this could possibly serve the public interest.

Andy Revkin, may I also commend this, on researchers’ privacy:

http://rabett.blogspot.com/2010/07/and-so-it-drags-on.html?showComment=1279886737405#c7783741013556179367

I think JC should:

a) type her own review of the book here (worst option)

b) write it somewhere else and link in comments

c) get RC’s permission to guest post – and post her review

What she has done so far, however, is to troll. And I guess she really does not want to be a troll. So, JC, pick an option. But quit trolling. Both you – and RC’s contributors and readers – deserve better than your two hopeless posts so far.

ThinkingScientist says: 23 July 2010 at 11:46 AM

[edit – discussions of comment policy are tedious from either side, please let this drop]

Back to the topic you’re concerned with, Gavin has repeatedly invited you to apply your statistical skills to assuage the doubts you’re harboring over the nearly-fossilized MBH98. You imply you’ve got the skill to test your doubt, you are fixated on this doubt, why do you not go ahead and address your doubt rather than repeatedly demand that somebody else do your work for you? Why are you asking Gavin for more help?

Re Gavin’s response #106:

“It is not ‘the proxies’, it is one specific set of proxies”.

It is not one set of proxies, it’s one type of proxy. Tree ring studies at Schunya River, Khadyta River, Nadim River and Jahak also show the divergence since mid-century.

In order to use tree rings as temp proxies you need to explain the divergence problem, not dismiss it out of hand.

[Response: ‘Dismiss out of hand’? Funny. But the fact remains that not all tree rings show this problem. But while I’d be very happy for this to be resolved, it hasn’t been. So you can either decide that there is some signal in the tree rings, or you can decide that there isn’t. If you think there is, you proceed as above with all the caveats necessary, and if you think there isn’t, try and look at exclusively non-tree ring proxies. You will still get something very similar for the last 500 years or so, and possibly even longer. Again, I just don’t see this as an important issue – no one is forcing you to agree with anything. But the fact is, there is a lot of concordance amongst boreholes, glacier-based reconstructions, non-tree ring reconstructions, etc. Perhaps we’d make more progress if you told me what key question you think all this affects? – gavin]

Thinking scientist said…

There is one small point forgotten about here concerning the MBH98 algorithm/methodology.

When tested with stationary (no trend) noise the algorithm still produces a hockey stick. Therefore the method cannot be classified as “robust” or having any merit.

Thinking scientist, did you compare the magnitudes of the leading eigenvalues produced by Mann’s data with the magnitudes of the leading eigenvalues produced by McIntyre’s “red noise” data?

There’s an old saying: “Size matters”. And “size matters” every bit as much for hockey sticks as it does for other types of appendages.

chek,

I’m currently reading his book, and if he spoke to anyone other than McIntyre (and I actually doubt that, it seems to be drawn almost entirely from CA) I’d be very surprised.

In all, this is so very, very tedious and tiresome, even for a bystander like me. I can only imagine the exasperation of the scientists involved. I did a “grep ‘trick’ * -R” on my source code directory containing computer code written by colleagues and myself, and got enough results to be very happy that I am active in a completely uncontroversial field of research (astronomy)…

Another fun exercise:

wget http://www.kernel.org/pub/linux/kernel/v2.6/linux-2.6.34.1.tar.bz2

tar jxf linux-2.6.34.1.tar.bz2

cd linux-2.6.34.1

find . -name '*.[chS]' | xargs grep ' trick[ .]'

I tell ya, those Linux kernel hackers are a nefarious bunch. They’re almost as sneaky as those crafty climate scientists!

ThinkingScientist, why do you insist on finding an authority to tell you why Pearson r^2 is usually not a good idea and RE is? Wouldn’t it be much better if you actually understood why this is so?

Gavin already linked you to the NAS report. Let me give you Wahl & Ammann 2007:

http://www.cgd.ucar.edu/ccr/ammann/millennium/refs/Wahl_ClimChange2007.pdf

Their explanation is well-written, extensive and clear. It worked for me, and I don’t think I’m so much smarter than you :-)

J. Curry writes (#107):

“Once more people have read the book, and if Montford and McIntyre were welcomed to participate in the discussion, then I would be interested in participating in a more detailed discussion on this.”

You’ve read the book (right?). You’ve read the response here (right?). Certainly, you must be able to support your assertion:

“cons: numerous factual errors and misrepresentations, failure to address many of the main points of the book”

Have at it.

RE: #121

Gavin thank you for the reference to Fritts (1976). I am not familiar with this reference but I will certainly locate a copy and review what is said about validation statistics.

Regarding the NAS report and your comment that no single verification statistic tells you everything, we are in agreement.

RE: #114 and my response to Didactylus, I have to point out that Didactylus was arguing that the use of random series as a means of establishing statistical significance or confidence intervals in general is invalid. This is not true. Didactylus’ comment is without merit and my reply to Didactylus stands.

Because your (Gavin) reply is inserted in my response to Didactylus, the first statement that I am incorrect (again) is not relevant to that response. The general principle that MM2005 set out to establish significance by using autocorrelated random sequences is an entirely valid approach and, as I said, is widely used in statistics: you acknowledge that in your own reply.

Huybers did raise criticisms to MM2005. These were addressed by MM in a follow-up reply in 2005 in GRL. They re-ran their analyses taking account of Huybers comments and got the same result:

“The apparent contradiction between verification statistics is thus fully resolved: the cross-validation R2 of approx 0.0 demonstrates that the MBH98 model is statistically insignificant; the new simulations, implementing the variance rescaling called for by Huybers and newly-revealed in the MBH98 code, confirm our earlier finding that the seemingly high MBH98 RE statistic is spurious.”

I can see nothing wrong with their analysis and response to Huybers.

[Response: You aren’t paying close enough attention then… ;) This is actually quite clearly discussed in Ammann and Wahl (2007) (section 4), where it is shown that the ‘noise’ that MM2005c used actually contains a fair bit of signal. Neither McI nor McK has ever submitted a paper or a comment subsequent to that (and they’ve had a fair while now…. ). – gavin]

Rattus @128

Quite. Its deficiencies even as a piece of investigative journalism, let alone a definitive account are glaring, but only to those with eyes to see.

Gavin:

“But while I’d be very happy for this [divergence] to be resolved, it hasn’t been. ”

If the present divergence illustrates a ‘problem’ with the highly resolved tree-ring proxies, what methods have been undertaken to show that the same ‘problem’ did not occur in the past? Can any procedure or method, in fact, lend confidence to the idea that such divergence did not occur in the past?

Thanks

[Response: Short of inventing a time machine, no-one is going to be able to prove that 100%. However, you can look at what the concordance of these tree ring density records is with other proxies – do they diverge only in the last few decades or does it happen all the time? As far as people have been able to tell, it hasn’t happened before (i.e. there is a concordance where there is overlap between proxies in the past). So, I think there is a reasonable case to be made that the divergence is unique to the modern period (and there are plenty of hypotheses as to why that might be). But of course there is always some ambiguity while the actual reason for the divergence is still unclear. So, it’s basically a judgement call. If you think there is no skill, despite the past concordance, then discount those studies, if you think there is some skill, then weigh them in with the rest. But overall, if you are overly bothered by ambiguity, might I suggest that paleo-climate studies are not for you… ;) – gavin]

RE: #125 Doug Bostrom

RE: #130 Martin Vermeer (mostly this one)

The point about statistical authority is important. Verification statistics cannot be arbitrarily selected (this argument applies equally to discussion of RE or R or any other test). The point made by MM in their reply to Huybers is that because R^2 is effectively zero it is not reasonable to conclude the RE is meaningful and therefore the MBH98 result does not have statistical significance.

Regarding the NAS report, I don’t disagree with either it or the comments by Gavin concerning the use of more than one statistic and I agree with Gavin on this point.

Regarding WahlAmman2007 I already commented on this at #92 above – the explanation in WahlAmman2007 is based on a clearly artificial example, not a general case.

Regarding the comment “why do you insist on finding an authority to tell you why Pearson r^2 is usually not a good idea and RE is? Wouldn’t it be much better if you actually understood why this is so?” the answer is I do understand, but in debates like this it is important to get the source, i.e. the correct authority. Without that neither side is properly informed in the argument.

[Response: Doesn’t follow. I can correct someone who claims that 1+1=3 without reference to Russell and Whitehead. The example given by WA05 and the example given in the NAS report (fig 9.3) are both fine examples of what you get with different metrics. This really isn’t that difficult. – gavin]

ThinkingScientist: perhaps your repetition of Monckton’s arguments is pure coincidence. When you boil down MM into the same soundbite he did, it’s an easy leap to make. That doesn’t make either of you right, though!

[edit]

[Response: Do you mean Montford? Monckton is a whole other kettle of fish. – gavin]

RE: #132

It’s always nice when the topic of discussion finally gets onto your own specific area of expertise – autocorrelated data series :-)

WahlAmman2007 state in Section 4:

“To generate “random” noise series, MM05c apply the full autoregressive structure of the real world proxy series. In this way, they in fact train their stochastic engine with significant (if not dominant) low frequency climate signal rather than purely non-climatic noise and its persistence.”

It is my understanding that MM2005 effectively uses the covariance (autocorrelation) of the proxies to establish the power spectrum of the sequence and then simulates this. This seems to me entirely reasonable. In the frequency domain this amounts to using the observed power spectrum and then creating an autocorrelated random sequence by using a uniform PDF for the phase spectrum. That does not introduce the “Climate Signal” and invalidate the result as suggested by WahlAmman2007 and I cannot see anywhere where they propose an alternative as to the correct power spectrum to use for the simulation – perhaps I overlooked it and you can point it out to me?

[Response: Their point is that part of the auto-correlation structure in the data is due to the climate signal – that seems to be self-evident. If I have a trend over a century caused by a response (say) to rising CO2 or solar variability, then the auto-correlation will be larger than if I just had non-climatic noise (of whatever colour). Now the issue is of course whether there is a signal at all, but assuming the whole structure of the input data is noise makes the assumption that there is no signal – thus it is somewhat circular. What you would ideally want to do is know what the auto-correlation structure is for just the non-climatic part – and that is almost certainly smaller. – gavin]
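For readers unfamiliar with the frequency-domain procedure described in the comment above (keep the observed power spectrum, draw the phases from a uniform distribution), here is a minimal sketch of a phase-randomized surrogate. It is a generic illustration, not the code used in MM2005; the input series is synthetic AR(1) noise with an assumed coefficient of 0.7, and a naive O(n²) DFT is used only to keep the example free of third-party libraries.

```python
import cmath
import math
import random

def dft(x):
    """Naive discrete Fourier transform (O(n^2)); adequate for a short demo series."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(X):
    """Naive inverse discrete Fourier transform."""
    n = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def phase_randomized_surrogate(x, rng):
    """Return a real series with the same amplitude spectrum as x but random phases."""
    n = len(x)
    X = dft(x)
    Y = [0j] * n
    Y[0] = X[0]  # keep the zero-frequency term, i.e. the mean
    for k in range(1, (n + 1) // 2):
        phase = rng.uniform(0.0, 2.0 * math.pi)
        Y[k] = abs(X[k]) * cmath.exp(1j * phase)
        Y[n - k] = Y[k].conjugate()  # Hermitian symmetry keeps the output real
    if n % 2 == 0:
        Y[n // 2] = X[n // 2]  # the Nyquist term must stay real, so leave it alone
    return [v.real for v in idft(Y)]

rng = random.Random(7)  # fixed seed so the sketch is repeatable

# Synthetic input: AR(1) noise with an assumed coefficient of 0.7.
series, x = [], 0.0
for _ in range(64):
    x = 0.7 * x + rng.gauss(0.0, 1.0)
    series.append(x)

surrogate = phase_randomized_surrogate(series, rng)
```

By construction the surrogate inherits the full amplitude spectrum of its input, whatever produced that spectrum. That is the crux of the response above: if part of the low-frequency power in a real proxy comes from climate signal, the surrogates inherit that too.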

@107 Judith Curry

Once more people have read the book, and if Montford and McIntyre were welcomed to participate in the discussion, then I would be interested in participating in a more detailed discussion on this.

Montford and McIntyre have their own blogs. No one is stopping them from participating.

I am sure that I’m not alone in suggesting that you consider starting your own blog, or at least try to include something of substance in your comments when you do post here.

I can understand the hesitancy to do so on your part, given the inevitable fact checking and potential embarrassment that can come with it, but complaining about the moderation policy and a rider don’t add much to the discussion, do you think?

Dear Gavin

One can live with ‘ambiguity’ or uncertainty, as you put it. The problem comes when our confidence placed in the implication of such conclusions, exceeds what they are deserving of.

Paleoreconstruction statistical skill alone, is not our primary concern. The fact that such reconstructions as MBH99 and others, have lent credence to the notion of ‘dangerous’ and ‘unprecedented’ climate change, when speaking from within the realm of paleoreconstructions alone, such notions cannot be confidently supported as you indicate above – that is also a cause for concern.

Thanks

[Response: But here it is you that is over-reaching. The concern about future climate change because of human emissions of CO2 existed long before MBH98 – even the Kyoto Protocol predated this (1997). And the attribution of recent change to anthropogenic effects has nothing to do with whether recent temperatures are ‘unprecedented’. Obviously one can go back to the last interglacial, or the Cretaceous to find global temperatures far in excess of today’s (though not far in excess of where we might be by 2100). The title of MBH99 was “Northern Hemisphere Temperatures During the Past Millennium: Inferences, Uncertainties, and Limitations” – i.e. no-one is hiding the uncertainties. If you mistakenly think that all of this fuss is because of some statistical quirk in a single paper written in 1998, then I strongly advise you to read the IPCC reports more carefully. Mann et al 1998 could be completely wrong, and yet nothing would change with respect to the climate change problem. The interest in these records is for what they can tell us about natural variability, spatial patterns of change, responses to solar or volcanic forcing, teleconnections etc. – it’s all interesting and useful, but it is nothing like as important as the outside interest shown in these studies might suggest. – gavin]

RE: #137

[Response: Their point is that part of the auto-correlation structure in the data is due to the climate signal – that seems to be self-evident. If I have a trend over a century caused by a response (say) to rising CO2 or solar variability, then the auto-correlation will be larger than if I just had non-climatic noise (of whatever colour). Now the issue is of course whether there is a signal at all, but assuming the whole structure of the input data is noise makes the assumption that there is no signal – thus it is somewhat circular. What you would ideally want to do is know what the auto-correlation structure is for just the non-climatic part – and that is almost certainly smaller. – gavin]

With all due respect Gavin, your answer makes no sense.

1. You are referring to a trend over half a century but we are talking about tests using stationary stochastic simulations. Over many simulations (say, 10,000 as I seem to recall were run by MM) the effect you describe is irrelevant: it gets averaged out and would not affect the verification statistics.

[Response: Not so. The real world proxies contain auto-correlation structure that is contributed by both non-climatic noise, stationary ‘weather’, and climate signals that are forced by various drivers (CO2, solar, volcanoes etc.). Depending on the strength of each of these – which might vary in time as well and the sensitivity of the proxy, the actual auto-correlation one would deduce will be different. If you take that calculated rho and generate stationary timeseries from it, you are not mimicking merely the noise, but also the structure caused by the signal we are trying to extract. It does not ‘average out’. Maybe someone would like to generate some examples using a basic red-noise process overlaid with a climate signal (for instance from solar or volcanic forcing histories) and then compute the auto-correlations? They are not going to be what you started with. – gavin]

2. You have asked the same question I did: WahlAmman2007 criticise MM2005 for using a particular power spectrum for the simulation but do not appear to offer an alternative. You have just stated that this is what you need to know in order to solve the problem of the validity of the verification statistics for MBH98. I don’t agree with you, but expanding on your point, if this spectrum is not known then I would suggest the best starting point is the observed power spectrum of the proxies and this is the usual starting point in my discipline. A further point to this is that even the PC’s don’t tell you which part of the signal is which. As you said earlier in this post: “PC’s are just a way of reducing data – they do not come with obvious interpretations necessarily.”

Sounds to me like you are arguing the problem is not solvable, in which case a claim of justification and statistical significance for these methods (MBH98, WahlAmman2007 etc) is not established.

[Response: A priori knowledge of the non-climatic noise in a single proxy is hard to come by, but there are reasonable bounds. I am persuaded by the analysis in WA07/AW07/Huybers05 that RE>0 is a fair test for the data structures that are being used. It was certainly a reasonable test for MBH98 to use. You can impose a higher significance criterion if you like, in which case you would ignore the last century or so in MBH98, but you would still be able to go further back with the more up-to-date proxy networks. Again, you are free to take what you want. But we will never know exactly what the right answer was – all we can do is keep working at it. So far the additional data has not disagreed violently with what MBH came up with. Science will nevertheless move on. – gavin]

Gavin,

I have enjoyed our discussions today and thank you for allowing every post through. Your final replies to my post #140 are a suitable neutral point for me to close at this point – in my time zone it is now after midnight.

With regard to your point:

“Maybe someone would like to generate some examples using a basic red-noise process overlaid with a climate signal (for instance from solar or volcanic forcing histories) and then compute the auto-correlations?”

this would be interesting and I may even take a look at it. Got any suitable data I can use? :-)

Best regards,

ThinkingScientist

[Response: Yes. Try the PMIP3 data for the next set of last millennium simulations. I am in the process of compiling a master file of estimated radiative forcings from it (but anyone can do something similar themselves). – gavin]
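The experiment suggested in the quoted passage can be sketched in a few lines. This is only a toy version under stated assumptions: the red noise is a plain AR(1) process, the "climate signal" is a hypothetical sinusoid plus a late trend rather than a real solar or volcanic forcing history, and the parameter values (`phi`, `sigma`, series length) are arbitrary choices, not values from any of the papers discussed.

```python
import math
import random

def ar1_noise(n, phi, sigma, rng):
    """Red noise: x[t] = phi * x[t-1] + white noise with std sigma."""
    x, series = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, sigma)
        series.append(x)
    return series

def autocorrelation(series, lag):
    """Sample autocorrelation at the given lag."""
    n = len(series)
    mean = sum(series) / n
    var = sum((v - mean) ** 2 for v in series)
    cov = sum((series[t] - mean) * (series[t + lag] - mean) for t in range(n - lag))
    return cov / var

rng = random.Random(0)
n = 600
noise = ar1_noise(n, phi=0.3, sigma=1.0, rng=rng)
# Hypothetical stand-in for a forced signal: a slow sinusoid plus a late trend.
signal = [math.sin(2 * math.pi * t / 200) + (0.01 * (t - 500) if t > 500 else 0.0)
          for t in range(n)]
combined = [s + e for s, e in zip(signal, noise)]

# Columns: lag, autocorrelation of noise alone, autocorrelation of noise + signal.
for lag in (1, 2, 5):
    print(lag, round(autocorrelation(noise, lag), 2), round(autocorrelation(combined, lag), 2))
```

The point of the comparison is that a slowly varying signal raises the autocorrelation of the combined series above that of the noise alone, so persistence estimated from the full series is not a pure estimate of the noise.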

#130 caerbannog

ROFLMAO. So much for those people who were arguing that English has only one meaning for the word “trick”, as in deceive.

My favourite is in net/ipv4/ip_output.c:

Just goes to prove that Linux really is a communist plot to destroy the American economy, I guess.

Judith Curry 107: Once more people have read the book, and if Montford and McIntyre were welcomed to participate in the discussion, then I would be interested in participating in a more detailed discussion on this.

BPL: Let me explain this to you as simply as I can. Mann et al. 1998, 1999 were perfectly valid science. McIntyre is a crackpot right-winger with an axe to grind. His use of his blog to get his admirers to flood CRU with FOIA requests shows that he is simply out to harass and interfere with climate scientists by any means possible. Montford is one of his scientifically illiterate admirers. So participating in a discussion with them would be pointless and stupid.

Should biologists sit down and have a nice, even-handed discussion with creationists? How about astronomers and Velikovsky freaks? Archaeologists and Erich von Daniken or Zechariah Sitchin?

They tell me you’re a scientist yourself. If so, kindly get your act together and stop palling around with the dedicated enemies of science.

Tamino: “I computed my own reconstructions by multiple regression, first using all 22 proxy series in the original MBH98 analysis, then excluding the Stahle and NOAMER PC1 series.”

Jean S: Oh, I guess you simply forgot to exclude also the “Gaspe series” you later describe as “the most hockey-stick shaped proxy of all”. Why don’t you publish your results with the 19 remaining proxies?

BPL: In other words, your criterion for what to exclude is “anything that looks like a hockey stick.” And you intend to use that to prove that there’s no hockey stick.

Read my lips: It’s warmer now than in the past 2000 years. You don’t need MBH98-99 to prove it. Everybody else who does similar studies gets the same result.

The Earth is warming. We’re doing it. And it’s the most serious problem civilization faces outside of nuclear war. Deal with it.

Shorter Gavin: keep your eye on the doughnut and not on the hole.

Gavin,

I agree with what you say above, and differ only in reminding ourselves that, in the larger debate, well outside the paleoclimatology community, the hockey stick has supported important ‘tenets’ (as Anderegg et al say) of the anthropogenic warming concept. The issue of divergence may not be as important for our causal understanding, but it is certainly important for understanding the temperature equivalence of different climatic periods (as in, even with low CO2, was the MWP anywhere near as warm as it is today).

[Response: CO2 has nothing much to do with the medieval period. The key forcings are solar and volcanic. But whether it was warmer than today or cooler is not an important issue – despite what you might read elsewhere. The fact is that the uncertainties in the forcings, the mean temperatures and the climate sensitivity mean that it is very unlikely that any one will be constrained by the other two at this period. Hence the interest in the spatial responses – bigger signals. -gavin]

Confident assertions, for example, have been made in this very forum, that climatic states in the past 1000 years have never matched the present state, based on the simple fact that the paleo temperatures of the MWP never reach the mythical heights of the instrumental proxy of the present day.

[Response: You grossly overstate what has been claimed. I doubt very much we have ever said we know for certain that temperatures are higher now than 1000 years ago. I certainly think it’s likely given the reconstructions that exist and the obvious mismatch in timings of putative MWPs in the records (something that Montford and Monckton seem a little confused about). But don’t confuse a defense of what is likely with a defense of what is important. ]

Present-day temperatures may very well exceed whatever was truly experienced in the MWP, and its drivers may be different. But even if a sizable fraction of the present warmth was experienced previously, irrespective of its cause, our need to ‘understand’ divergence in its entirety only increases.

Has anyone calibrated ‘divergent’ paleo-data against its corresponding instrumental period data, and compared the resulting reconstruction with existing reconstructions? I am (simplistically) assuming that such a reconstruction will then diverge in all presently non-divergent points, but might give an indication of the absolute temperatures reached earlier?

[Response: Don’t know. But you would not end up with a very different picture except that the error bars would be larger and the resolved variance smaller – even if it validated… -gavin]

Some evidence that it is now warmer than it was quite some time ago at certain locations:

90–7000 years ago:

http://www.npr.org/templates/story/story.php?storyId=914542

http://www.physorg.com/news112982907.html

4300 years ago:

http://www.livescience.com/environment/melting-ice-reveals-ancient-tools-100426.html

7000 years ago:

http://news.softpedia.com/news/Fast-Melting-Glaciers-Expose-7-000-Years-Old-Fossil-Forest-69719.shtml

5200–5500 years ago:

http://news.bbc.co.uk/2/hi/science/nature/7580294.stm

http://researchnews.osu.edu/archive/quelcoro.htm

http://en.wikipedia.org/wiki/%C3%96tzi_the_Iceman

[Response: You aren’t paying close enough attention then… ;) This is actually quite clearly discussed in Ammann and Wahl (2007) (section 4), where it is shown that the ‘noise’ that MM2005c used actually contains a fair bit of signal. Neither McI nor McK has ever submitted a paper or a comment subsequent to that (and they’ve had a fair while now…). – gavin]

If you generated a big ensemble of time-series of MM2005c “noise” and then computed a straight average, is it possible that a “hockey-stick” might emerge?

Re: #119 (Jean S)

Thanks for stopping by to entertain us.

And thanks for appointing yourself judge of valid selection rules as well as “true PCA literature”!

I thought you wanted to get the facts straight. It is described in MBH98, Methods Section, sub-section “Calibration,” 3rd paragraph, lines 6-11, which states:

“An objective criterion was used to determine the particular set of eigenvectors which should be used in the calibration as follows. Preisendorfer’s selection rule ‘rule N’ was applied to the multiproxy network to determine the approximate number Neofs of significant independent climate patterns that are resolved by the network, taking into account the spatial correlation within the multiproxy data set.”

Didn’t you just say you wanted to get the facts straight? The code is (and has been for five years) available at

http://www.meteo.psu.edu/~mann/shared/research/MANNETAL98/METHODS/multiproxy.f

It’s not that hard to identify the section preceded by these comment lines:

c now determine suggested number of EOFs in training
c based on rule N applied to the proxy data alone
c during the interval t > iproxmin (the minimum
c year by which each proxy is required to have started,
c note that default is iproxmin = 1820 if variable
c proxy network is allowed (latest begin date
c in network)
c
c we seek the n first eigenvectors whose eigenvalues
c exceed 1/nproxy'
c
c nproxy' is the effective climatic spatial degrees of freedom
c spanned by the proxy network (typically an appropriate
c estimate is 20-40)
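The selection criterion those comment lines describe can be illustrated with a small sketch. It is not a translation of multiproxy.f: it assumes the eigenvalues are expressed as fractions of total variance before being compared with 1/nproxy', and the function name, the `nproxy_eff` parameter and the eigenvalue spectrum are all made up for illustration.

```python
def count_retained_eofs(eigenvalues, nproxy_eff):
    """Count leading eigenvalues whose variance fraction exceeds 1/nproxy_eff."""
    total = sum(eigenvalues)
    fractions = sorted((ev / total for ev in eigenvalues), reverse=True)
    threshold = 1.0 / nproxy_eff
    retained = 0
    for frac in fractions:
        if frac <= threshold:
            break  # stop at the first eigenvalue that fails the test
        retained += 1
    return retained

# Made-up eigenvalue spectrum, for illustration only.
eigenvalues = [4.0, 2.5, 1.2, 0.9, 0.6, 0.4, 0.25, 0.15]
print(count_retained_eofs(eigenvalues, nproxy_eff=20))
```

The number retained depends on the assumed effective degrees of freedom: a larger `nproxy_eff` lowers the threshold and keeps more patterns, which is why the code comments give a typical range rather than a single value.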

It’s been repeatedly shown that if you leave out the NOAMER PC1 (or PC4 as you’d call it) altogether you still get a hockey stick. But the words “extremely depended on the _fourth_ most important pattern in a proxy network covering merely western USA” are on your lips.

It’s actually kinda funny that you repeat the sucker argument (did you get it from Ross McKitrick?) of characterizing PC4 as “fourth most important.” It’s the one with the 4th-largest variance (in the MM methodology) — but how “important” it is also depends on how well it correlates with temperature during the calibration period. Perhaps you’ll join Montford in complaining that hockey-stick shaped proxies dominate reconstructions because they correlate well with temperature.

Did you miss the part about getting a hockey stick with no PCA at all?

You don’t flatter yourself repeating this argument. The NoAmer ITRDB tree ring series are not in common units — they’re not in any units at all.

Ring-width series are scaled by the average ring width, so if the species or environmental conditions cause greater or lesser average growth, the year-to-year variance due to climate (or other factors) will be suppressed or enhanced. And since species type (they aren’t all the same kind of tree, are they?) and environmental conditions can cause greater or lesser average growth and response to climate, even if the data were in common units it would still be necessary to normalize the data.

The real howler is that the NoAmer ITRDB data series aren’t even all the same type of data! While most are tree ring width, a few of them are tree ring density — care to explain how they can all be in “common units”?

Normalization is the right thing to do. You really fumbled this one.
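To make the normalization point concrete, here is a minimal sketch of standardizing two series before they go into a PCA. The two series are hypothetical index values, chosen so that they have the same shape but very different spread; they are not real ITRDB data, and the function name is mine.

```python
import statistics

def standardize(series):
    """Rescale a series to zero mean and unit (population) standard deviation."""
    mean = statistics.fmean(series)
    sd = statistics.pstdev(series)
    return [(v - mean) / sd for v in series]

# Hypothetical index values: a ring-width-like series and a density-like
# series with the same shape but very different spread.
ring_width = [0.8, 1.1, 0.9, 1.4, 0.8]
density = [0.98, 1.01, 0.99, 1.04, 0.98]

# Columns: name, spread before standardizing, spread after standardizing.
for name, series in (("ring_width", ring_width), ("density", density)):
    z = standardize(series)
    print(name, round(statistics.pstdev(series), 3), round(statistics.pstdev(z), 3))
```

Without this step, a PCA on the raw values would weight the first series far more heavily simply because its numbers vary more, not because it carries more climate information.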

I confess — you totally got me — I completely forgot to do a reconstruction leaving out all the data series you don’t like.

I take it that you’re admitting Montford was wrong about getting a “completely different result” if you leave out just NOAMER PC1 and Stahle — that you have to leave out Gaspe too.

The hilarious part is that even if we do, the result with the 19 remaining proxies gives peak warmth in the late 15th century comparable to about 1940, but nowhere near as warm as the late 20th/early 21st centuries. It’s still a hockey stick — in spite of allowing you all the cherries you can eat.

How many times do you think you can play this “we-have-to-leave-out-everything-that-looks-like-a-hockey-stick” rotten argument?

This is pathetic. The saddest part is that you probably don’t know it.

Missing 4 years out of 580 is not a valid reason to require removal of the Gaspe series. Extension by persistence is not at all an unusual practice. Start the reconstruction in 1404 if you want — hockey stick.
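For anyone wondering what "extension by persistence" amounts to, here is a minimal sketch: the series is padded back to the required start year by repeating its earliest available value. The function name and the data values are made up; only the 1404 start and the 1400 target follow the figures in this thread.

```python
def extend_by_persistence(years, values, start_year):
    """Pad a series back to start_year by repeating its earliest value."""
    first_year = years[0]
    if start_year >= first_year:
        return list(years), list(values)  # nothing to pad
    pad_years = list(range(start_year, first_year))
    return pad_years + list(years), [values[0]] * len(pad_years) + list(values)

# Made-up values; only the 1404 start and the 1400 target come from the thread.
years = [1404, 1405, 1406]
values = [0.62, 0.58, 0.71]
full_years, full_values = extend_by_persistence(years, values, start_year=1400)
print(full_years[:5], full_values[:5])
```

The alternative mentioned above, starting the reconstruction in 1404, simply avoids the padding altogether.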

Your real motive for insisting on the removal of Gaspe is clear: because it looks like a hockey stick.

You and McIntyre can (and will) harp on MBH98 until the end of time but the fact is that, warts and all, they got the right answer. This has been confirmed repeatedly, including with data and methods over which McIntyre and McKitrick and you have had to wrack your brains to find new nits to pick. Every reputable reconstruction since MBH98 has confirmed the hockey stick, including those which use no tree rings at all and no PCA at all.

When you admit that the hockey sticks (there’s a host of them) are right — only then — you might be able to participate in an honest discussion of MBH98. We’ll be interested in your answer to the question: if their work is so horribly wrong, how did they get the right answer?