It’s worth going back every so often to see how projections made back in the day are shaping up. As we get to the end of another year, we can update all of the graphs of annual means with another single datapoint. Statistically this isn’t hugely important, but people seem interested, so why not?

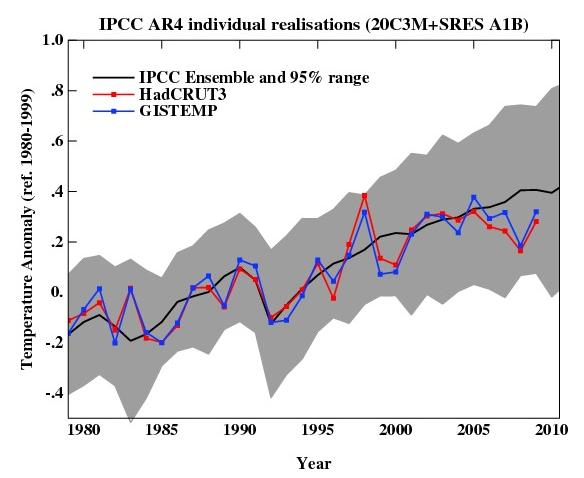

For example, here is an update of the graph showing the annual mean anomalies from the IPCC AR4 models plotted against the surface temperature records from the HadCRUT3v and GISTEMP products (it really doesn’t matter which). Everything has been baselined to 1980-1999 (as in the 2007 IPCC report) and the envelope in grey encloses 95% of the model runs. The 2009 number is the Jan-Nov average.
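As a rough illustration of the two operations described above, here is a minimal sketch. It rebaselines a series to its 1980-1999 mean and computes a per-year envelope holding the central 95% of model runs. It assumes the envelope is the 2.5th to 97.5th percentile across runs for each year, which is one plausible reading of "encloses 95% of the model runs" and not necessarily how the figure was made. The function names are hypothetical.

```python
import statistics

def rebaseline(years, values, start=1980, end=1999):
    """Return anomalies relative to the mean of `values` over [start, end]."""
    base = [v for y, v in zip(years, values) if start <= y <= end]
    if not base:
        raise ValueError("series does not cover the baseline period")
    ref = statistics.fmean(base)
    return [v - ref for v in values]

def envelope_95(runs):
    """Per-year 2.5th and 97.5th percentiles across equal-length model runs."""
    lower, upper = [], []
    for year_values in zip(*runs):  # one tuple of run values per year
        cuts = statistics.quantiles(year_values, n=40, method="inclusive")
        lower.append(cuts[0])   # 1/40 = 2.5th percentile
        upper.append(cuts[-1])  # 39/40 = 97.5th percentile
    return lower, upper

# Usage with made-up numbers (not real model output):
years = [1980, 1981, 1999, 2000]
print(rebaseline(years, [1.0, 3.0, 2.0, 5.0]))  # [-1.0, 1.0, 0.0, 3.0]
```

Rebaselining every run and every observational product to the same period is what makes them directly comparable on one plot; the choice of period shifts the curves vertically but does not change their trends.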

As you can see, now that we have come out of the recent La Niña-induced slump, temperatures are back in the middle of the model estimates. If the current El Niño event continues into the spring, we can expect 2010 to be warmer still. But note, as always, that short-term (15 years or less) trends are not usefully predictable as a function of the forcings. It’s also worth pointing out that the AR4 model simulations are an ‘ensemble of opportunity’ and vary substantially among themselves in the forcings imposed, the magnitude of the internal variability and, of course, the sensitivity. Thus, while they do span a large range of possible situations, the average of these simulations is not ‘truth’.

There is a claim doing the rounds that ‘no model’ can explain the recent variations in global mean temperature (George Will made the claim last month for instance). Of course, taken absolutely literally this must be true. No climate model simulation can match the exact timing of the internal variability in the climate years later. But something more is being implied, specifically, that no model produced any realisation of the internal variability that gave short term trends similar to what we’ve seen. And that is simply not true.

We can break it down a little more clearly. The trend in the annual mean HadCRUT3v data from 1998-2009 (assuming the year-to-date is a good estimate of the eventual value) is 0.06+/-0.14 ºC/dec (note this is positive!). If you want a negative (albeit non-significant) trend, then you could pick 2002-2009 in the GISTEMP record, which is -0.04+/-0.23 ºC/dec. The ranges of trends in the model simulations for these two time periods are [-0.08, 0.51] and [-0.14, 0.55] ºC/dec respectively, and in each case there are multiple model runs that have a lower trend than observed (5 simulations in both cases). Thus ‘a model’ did show a trend consistent with the current ‘pause’. However, that these particular models showed it is just coincidence, and one shouldn’t assume that these models are better than the others. Had the real-world ‘pause’ happened at another time, different models would have had the closest match.
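For readers who want to reproduce this kind of number, here is a minimal sketch of an ordinary least-squares trend on annual means, expressed per decade. It assumes the quoted "+/-" is roughly two standard errors of the slope with no autocorrelation correction; a Student-t quantile would give a slightly wider interval for such short records. The helper that counts model runs below the observed trend is hypothetical and is fed illustrative numbers, not the actual AR4 trends.

```python
import math

def ols_trend_per_decade(years, values):
    """OLS slope converted to units per decade, plus an approximate 95% half-width.

    The half-width is 2 standard errors of the slope and assumes independent
    residuals (no autocorrelation correction)."""
    n = len(years)
    if n < 3:
        raise ValueError("need at least 3 points")
    xbar = sum(years) / n
    ybar = sum(values) / n
    sxx = sum((x - xbar) ** 2 for x in years)
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(years, values))
    slope = sxy / sxx                      # units per year
    intercept = ybar - slope * xbar
    rss = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(years, values))
    se = math.sqrt(rss / (n - 2) / sxx)    # standard error of the slope
    return 10 * slope, 10 * 2 * se

def runs_below(observed_trend, model_trends):
    """Number of model runs whose trend is lower than the observed one."""
    return sum(1 for t in model_trends if t < observed_trend)

# Illustrative trends only (not the real ensemble):
print(runs_below(0.06, [-0.08, 0.0, 0.1, 0.51]))  # 2
```

Feeding the same function each model run over the same years gives the model trend distribution against which the observed value can be compared.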

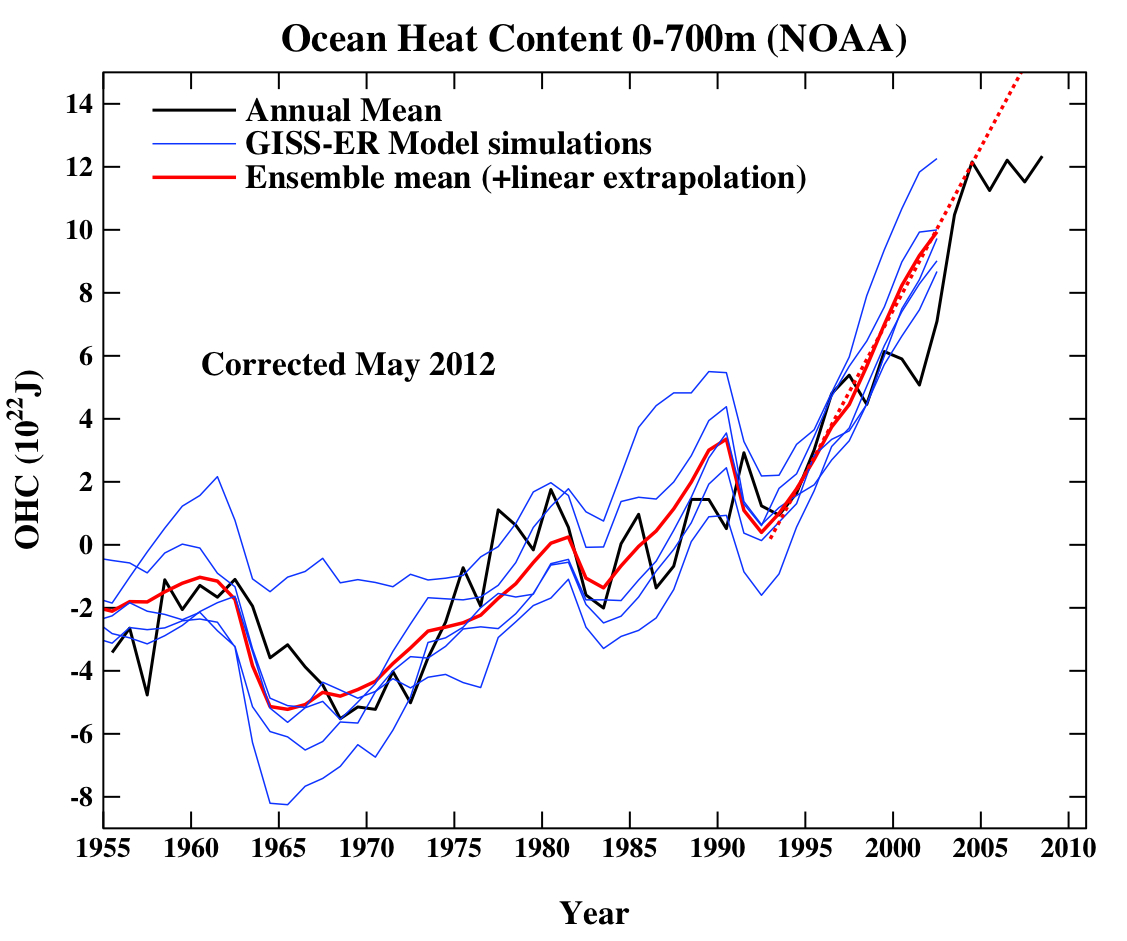

Another figure worth updating is the comparison of ocean heat content (OHC) changes in the models with the latest data from NODC. Unfortunately, I don’t have the post-2003 model output handy, but comparing the 3-monthly data (to the end of September) and the annual data with the model output is still useful.

Update (May 2012): The graph has been corrected for a scaling error in the model output. Unfortunately, I don’t have a copy of the observational data exactly as it was at the time the original figure was made, and so the corrected version uses only the annual data from a slightly earlier point. The original figure is still available here.

(Note that I’m not quite sure how this comparison should be baselined: the models are simply the difference from the control, while the observations are ‘as is’ from NOAA.) I have linearly extended the ensemble-mean model values for the post-2003 period (using a regression over 1993-2002) to get a rough sense of where those runs could have gone.
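Here is a minimal sketch of that kind of linear extension: fit a straight line to the ensemble-mean values over 1993-2002 and extrapolate it past the end of the model output. The function name, and the assumption that the model series ends in 2003 and is extended through 2009, are illustrative; the test uses a synthetic straight-line series, not real OHC output.

```python
def extend_linearly(years, values, fit_start=1993, fit_end=2002, until=2009):
    """Fit a least-squares line to values in [fit_start, fit_end] and return
    (year, value) pairs extrapolated for the years after the series ends."""
    pts = [(y, v) for y, v in zip(years, values) if fit_start <= y <= fit_end]
    if len(pts) < 2:
        raise ValueError("need at least two points in the fitting window")
    n = len(pts)
    xbar = sum(y for y, _ in pts) / n
    vbar = sum(v for _, v in pts) / n
    slope = (sum((y - xbar) * (v - vbar) for y, v in pts)
             / sum((y - xbar) ** 2 for y, _ in pts))
    intercept = vbar - slope * xbar
    # Extrapolate from the year after the last available value up to `until`.
    return [(y, intercept + slope * y) for y in range(max(years) + 1, until + 1)]
```

This is only a "rough sense" in the same way the text says: a straight-line continuation ignores any change in forcing growth or in ocean uptake after the fitting window.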

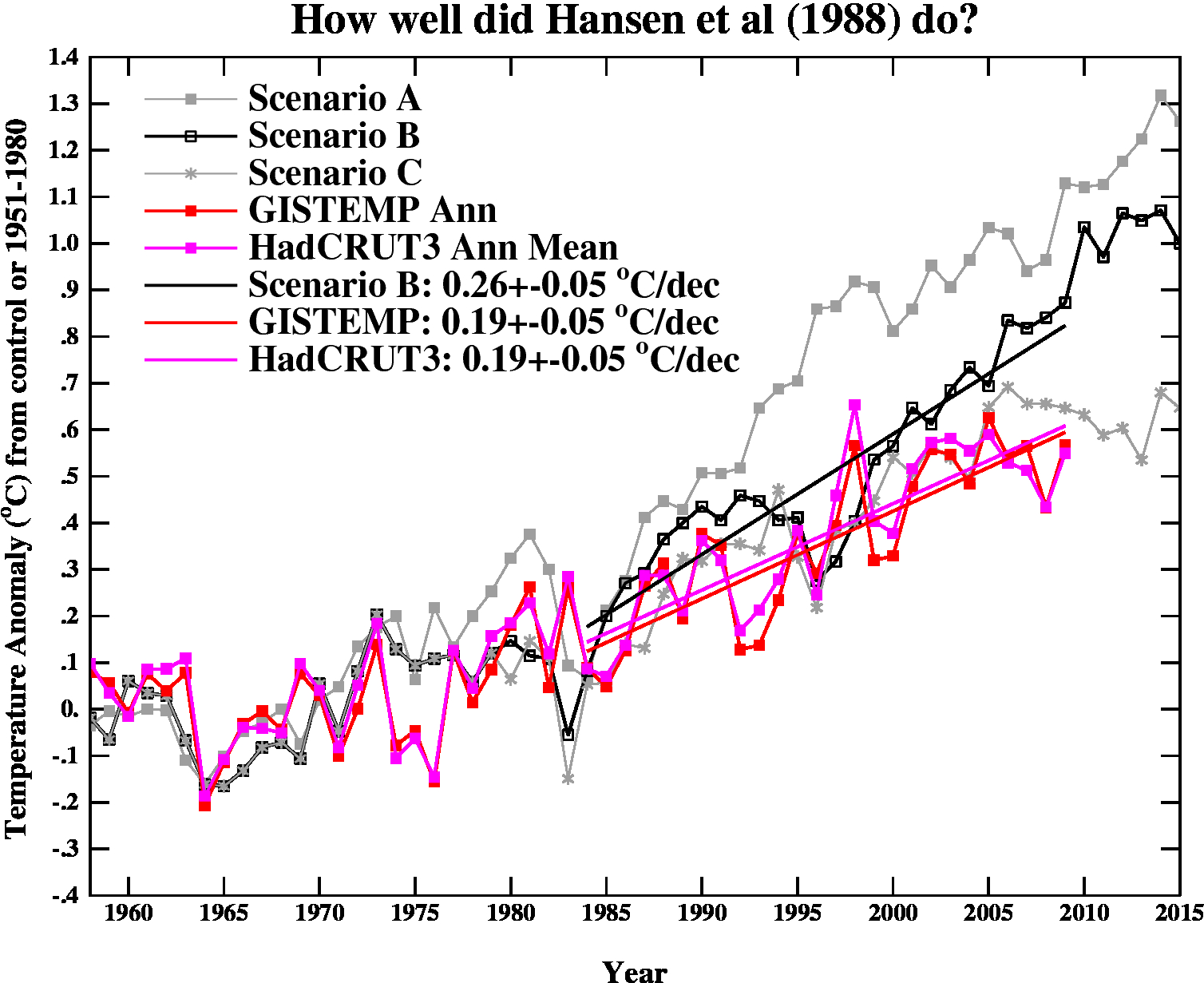

And finally, let’s revisit the oldest GCM projection of all, Hansen et al (1988). Scenario B in that paper is running a little high compared with the actual growth in forcings (by about 10%), and the old GISS model had a climate sensitivity that was a little higher (4.2ºC for a doubling of CO2) than the current best estimate (~3ºC).

The trends are probably most useful to think about, and for the period 1984 to 2009 (the 1984 date chosen because that is when these projections started), scenario B has a trend of 0.26+/-0.05 ºC/dec (95% uncertainties, no correction for auto-correlation). For the GISTEMP and HadCRUT3 data (assuming that the 2009 estimate is ok), the trends are 0.19+/-0.05 ºC/dec (note that the GISTEMP met-station index has 0.21+/-0.06 ºC/dec). Corrections for auto-correlation would make the uncertainties larger, but as it stands, the difference between the trends is just about significant.
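A quick way to see why the difference is only "just about significant" is to compare the difference of the two trends with their combined uncertainty. This sketch assumes the two 95% half-widths are independent and add in quadrature, which is a simplification (autocorrelation, as noted above, would widen both).

```python
import math

def trend_difference(t_model, hw_model, t_obs, hw_obs):
    """Difference of two trends and the combined 95% half-width, assuming the
    two uncertainties are independent and add in quadrature."""
    diff = t_model - t_obs
    combined = math.hypot(hw_model, hw_obs)
    return diff, combined

# Scenario B versus GISTEMP/HadCRUT3, 1984-2009 (ºC/dec, values from the text)
diff, combined = trend_difference(0.26, 0.05, 0.19, 0.05)
print(f"{diff:.3f} vs {combined:.3f}")  # 0.070 vs 0.071
```

The difference (0.07 ºC/dec) sits almost exactly at the combined half-width, so it lies right on the edge of significance at this level.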

Thus, it seems that the Hansen et al ‘B’ projection is likely running a little warm compared to the real world. But assuming (a little recklessly) that the 26-yr trend scales linearly with the sensitivity and the forcing, we could use this mismatch to estimate a sensitivity for the real world. That would give us 4.2/(0.26 × 0.9) × 0.19 ≈ 3.4 ºC. Of course, the error bars are quite large (I estimate about +/-1ºC due to uncertainty in the true underlying trends and the true forcings), but it’s interesting to note that the best-estimate sensitivity deduced from this projection is very close to what we think in any case. For reference, the trends in the AR4 models for the same period have a range of 0.21+/-0.16 ºC/dec (95%). Note too that the Hansen et al projection had very clear skill compared to a null hypothesis of no further warming.
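The back-of-the-envelope sensitivity estimate can be written out step by step. This sketch follows the same (admittedly reckless) assumption as the text: the trend scales linearly with both sensitivity and forcing. The factor 0.9 stands for Scenario B's forcing being roughly 10% too high. The function name is hypothetical.

```python
def implied_sensitivity(model_sensitivity, model_trend, forcing_ratio, observed_trend):
    """Rescale a model's climate sensitivity by the ratio of observed to
    forcing-adjusted model trend, assuming the trend scales linearly with
    both sensitivity and forcing."""
    adjusted_model_trend = model_trend * forcing_ratio  # trend under the real forcing
    return model_sensitivity * observed_trend / adjusted_model_trend

s = implied_sensitivity(4.2, 0.26, 0.9, 0.19)
print(round(s, 1))  # 3.4
```

Because the result is a product of ratios, a 10-20% uncertainty in either the true trend or the true forcing feeds straight through to the answer, which is why the error bar is as large as about +/-1ºC.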

The sharp-eyed among you might notice a difference between the variance in the AR4 models in the first graph and that of the Hansen et al model in the last. This is a real feature. The model used in the mid-1980s had a very simple representation of the ocean: it simply allowed the temperatures in the mixed layer to change based on the changing fluxes at the surface. It did not contain any dynamic ocean variability (no El Niño events, no Atlantic multidecadal variability, etc.), and thus the variance from year to year was less than one would expect. Models today have dynamic ocean components and more ocean variability of various sorts, and I think that is clearly closer to reality than the 1980s-vintage models, but the large spread in simulated variability among them still implies that there is some way to go.

So to conclude, despite the fact these are relatively crude metrics against which to judge the models, and there is a substantial degree of unforced variability, the matches to observations are still pretty good, and we are getting to the point where a better winnowing of models dependent on their skill may soon be possible. But more on that in the New Year.

Good summary. Quite clear.

Many thanks. Given the recent report that Earth may be more sensitive than previously estimated, I was very interested in your overview on sensitivity. And just as interested in your observations on the progress of models. My bias is that we can expect too much of models, and that the (important! worthy!) effort to improve them will one day face a point of diminishing returns, so I’m looking forward to next year’s look at skill and winnowing. Mexico City inches closer every day.

By how much does the solar forcing, which has stayed at a low value for an unusually long time, affect this picture?

Yay! Science is back! :)

Do you intuitively think the dynamic ocean components will ever be predictable over more than a cycle or so or should they be considered ocean “weather” which will always cause a variance between measured and predicted climate?

[Response: It’s possible that different parts of the ocean ‘weather’ will be predictable over different timescales. ENSO might only be for six months, but the AMO might give useful info a decade or so out. However, the amount of variance that you might be able to explain could still be small. It’s an active research area. – gavin]

Because you’ve used annual averages rather than monthly, the impact of autocorrelation on the trend analysis is small. Just a rough guess: the corrected error ranges will be about 20% larger than the stated values.

[Response: Thanks. I used annual averages in the data because that’s what I have from the models. Like with like etc… – gavin]
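For anyone wanting to check the "about 20% larger" guess, a common rough correction assumes AR(1) residuals and uses an effective sample size n_eff = n(1 - r1)/(1 + r1), where r1 is the lag-1 autocorrelation of the trend residuals. The trend uncertainty then widens by roughly sqrt(n/n_eff). This is a minimal sketch of that textbook correction, not necessarily what either commenter had in mind. The r1 value of 0.18 below is purely illustrative; it is not estimated from any of the records discussed.

```python
import math

def lag1_autocorrelation(residuals):
    """Sample lag-1 autocorrelation of a residual series."""
    n = len(residuals)
    mean = sum(residuals) / n
    d = [r - mean for r in residuals]
    return sum(d[i] * d[i + 1] for i in range(n - 1)) / sum(x * x for x in d)

def ar1_inflation(r1):
    """Factor by which a trend's uncertainty widens for AR(1) residuals, using
    the effective sample size n_eff = n * (1 - r1) / (1 + r1)."""
    if not -1.0 < r1 < 1.0:
        raise ValueError("r1 must lie strictly between -1 and 1")
    return math.sqrt((1.0 + r1) / (1.0 - r1))

print(round(ar1_inflation(0.18), 2))  # 1.2
```

So a lag-1 autocorrelation of roughly 0.18 in the annual residuals would correspond to the ~20% widening suggested; larger values (more typical of monthly data) widen the error bars much more.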

Is the occasional Pinatubo-type eruption also included in the models? Do we have an idea from volcanologists how often these (and stronger) eruptions might be expected to occur? Thanks.

[Response: Sometimes. There was a volcano in 1995 in the Hansen runs for instance. However, there weren’t any in the AR4 runs. We’ve done other experiments including volcanoes and it doesn’t make that much difference in the long term (at least for the occasional eruption). – gavin]

Have you considered inviting George Will up for a personal tour of GISS?

[Response: I’ve not invited him personally, but we have standing invites to the Wall Street Journal editorial board (no response), I tried to get Alexander Cockburn to come (no response), and of course Michael Crichton did come (to very little effect it appears). – gavin]

As someone who has taught simulation studies at university level I find the discussion somewhat amusing.

It is possible to remove the CO2 component of all models and replace it with any submartingale and arrive at the same upward sloping graph.

There are many such statistics in the real world – the number of Mars bars consumed, for example.

The link between CO2, or Mars bars, and climate change is not proven by the models.

[edit]

[Response: Perhaps you can point me to a subroutine that uses Mars bar concentrations in a calculation of the radiative transfer in the atmosphere? And then show me the lab results that calibrate its effects? The radiative code involving CO2 is available here for comparison. – gavin]

Norbert (3) — See papers by Tung and co-authors for the newest estimates of global temperature variation over the course of an average solar cycle. I don’t know how well accepted this work is yet.

Douglas (7) — Big volcanoes erupt randomly, so other than putting some into model runs following the power-law distribution of VEI (Volcanic Explosivity Index) magnitudes, there is not much to do. But as Gavin states, occasional eruptions won’t matter much, and big ones are certainly only occasional.

If atmospheric CO2 is linked to surface temperatures in a dose relationship, why aren’t we seeing that, especially when looking at graph 3, “How well did Hansen do?” On the other hand, if atmospheric CO2 is only a quasi-reflection of the generation of particulates, and atmospheric particulates are more determinant of surface temperatures, as we have seen when volcanoes erupt, then the variable distribution of particulates would be better to track than atmospheric CO2. Instead of trying to improve existing climate models tracking CO2 and temperature, maybe models should be developed to track atmospheric particulates and surface temperatures. I come at this from the point: when the model fails, discard the model.

[Response: Not sure what you are really asking. The latest generation of models include interactive particulates and atmospheric chemistry and have those changing through time as well as the greenhouse gases (and solar and volcanoes etc.). The models are not ‘fitted’ to the temperature record and so what you are seeing are temperature changes associated with plausible trends in all of the components. The models, while imperfect, have not ‘failed’. – gavin]

Is it possible to add error bars to the surface temperature records?

[Response: About 0.1 deg C for every annual value, and about 0.05 deg C/dec in the trends. – gavin]

Graham (9) — Do explain how Mars bars cause stratospheric cooling, please.

Graham @ 9,

You know, you may actually have a point.

http://www.venganza.org/about/open-letter/

Then again perhaps you are just a troll :-)

Regarding post #9, citing one’s own credentials as a university lecturer, physicist, software engineer, airline pilot, janitor, or any of a myriad of other “qualifications” is rather silly when that pose is struck in support of a jocular sophomoric prank or a confidently expressed but naive statement born of ignorance.

Influence in this discussion stems purely from serious intellectual content, not window-dressing. There are numerous excellent examples of posters here on RC able to support serious discussion, including a vanishingly tiny handful of genuine skeptics, persons worth emulating whatever one’s inclination may be.

In the case of #9, we see conversation on Gavin’s interesting topic essentially being vandalized by irrelevantly self-described lecturer “Graham”. To what end? We can only guess, but the intention of making a contribution to improvement of the human condition can be ruled out. One thing we can conclude is that it took a very short time indeed for somebody to begin emptying garbage on this thread.

Surely you can do better, “Graham”.

Fred @14 has a point. The “cooling” we have seen since 1998, or was it 2001, has been the result of increased pirate activity around the horn of Africa. Once the pirate activity goes down we can expect the global warming to return.

Graph 1
=======

The IPCC AR4 came out in 2007. Now the prediction would have been made a short time before that. Why does the comparison graph start way before 2007?

To me this comes across as betting on a horse race after it has started, already knowing who has fallen and who hasn’t.

i.e., the test has to be a test on future temperatures, not on whether or not the model fits the past. Otherwise you’re cherry-picking.

Graph 2
=======

Same applies. When was the prediction made, and how well has it performed after the prediction? I don’t know of many forecasts made in 1953.

Also, there are no confidence intervals here, so given the inclusion of known data in judging how good a prediction is, it’s not good statistics.

Graph 3
=======

Again, it looks like you are starting the graph in 1985 (from the lines) for a prediction starting in 1988 (this could be a delay in publication; perhaps you can explain).

Similarly, why don’t the scenarios all start at the same point when the prediction is made?

Conclusion
==========

i.e., from the graphs, the strong conclusion is that the prediction is both a forecast and a hindcast, and so doesn’t demonstrate predictive ability.

If we take the first graph, a simple polynomial curve fit would also show a hindcast that matches and a good forecast, but in reality be a useless predictor of the future.

Since some of these models have been fitted to the temperature record, and the temperature record has been shown to be suspect, isn’t it a case of garbage in, garbage out?

Nick

[Response: Err.. no. Since none of these models were fit to any of these measures, this is perhaps more likely a case of you not wanting to pay attention to anything that might threaten your presupposition that models can’t possibly work? For graph 1, I used all the models with no picking to see which ones did better in the hindcast. For graph 2, the ocean heat content numbers are new and were not used in any model training, and for graph 3, the true projections started in 1984 as stated. That gives 26 years for evaluation, something clearly not available for the AR4 models (projections starting from 2003 or 2000 depending on the modeling group). If you just look at the changes since 2000 say, the spread is wider but there is no obvious discrepancy either (you can draw in the 2009 values if you like). – gavin]

A troll, but a very amusing troll.

RiHo08@11, we seem to have something in common: Neither of us has a frigging clue what the hell you are talking about. Particulates? What particulates? Aerosols? Soot?

We would all love to hear about your miraculous “particulate warming mechanism”. However, whatever you come up with, the greenhouse nature of CO2 is KNOWN physics–that’s why it is in the models, and why your imaginary mechanism is not.

re Norbert 28 December 2009 at 6:12 PM

The solar max-to-min change is calculated to give a surface temperature contribution of 0.11 K according to Lean and Rind [*]. Tung’s estimate (0.2 ºC max to min; and back again of course!) is considered to be on the high side (Lean and Rind suggest that Tung’s value is a bit too high due to neglecting to account for volcanic cooling, which was in phase with 2 of the last 5 solar cycles).

So, all else being equal, the solar cycle contribution should completely negate a CO2-induced temperature rise of 0.2 ºC per decade during the descending phase of the solar cycle (2003-2009 for the current cycle). Since the current solar minimum has been somewhat protracted, the solar cycle contribution to current temperature variation might be a little bit more negative…
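The arithmetic behind "completely negate" is simple enough to spell out. This sketch takes the numbers from the comment (a 0.2 ºC/decade CO2-driven trend over the roughly six-year descending phase, 2003-2009) and subtracts the max-to-min solar estimates. It treats the solar change as a simple step over that interval, which is an assumption for illustration only.

```python
def net_change(co2_trend_per_decade, years, solar_max_to_min):
    """CO2-driven warming over `years` minus the solar max-to-min cooling."""
    co2_warming = co2_trend_per_decade * years / 10.0
    return co2_warming - solar_max_to_min

# Lean and Rind estimate (0.11 K) over the 2003-2009 descending phase
print(round(net_change(0.2, 2009 - 2003, 0.11), 2))  # 0.01
# Tung-type estimate (0.2 K max to min)
print(round(net_change(0.2, 2009 - 2003, 0.2), 2))   # -0.08
```

About 0.12 ºC of expected CO2 warming over six years is almost exactly offset by the 0.11 K Lean and Rind estimate; with the higher Tung value the net change over the descending phase would be slightly negative.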

On top of this there has seemingly been a very small overall reduction in the secular solar trend since the mid-1980s…[**]

[*] Lean JL, Rind DH (2008) How natural and anthropogenic influences alter global and regional surface temperatures: 1889 to 2006 Geophys. Res. Lett. 35, L18701

[**] Lockwood M, Frohlich C (2007) Recent oppositely directed trends in solar climate forcings and the global mean surface air temperature Proc. Roy. Soc. A 463,2447-2460

Fred, while the year isn’t over yet, both the temperature number and the number of pirate attacks increased (the pirate attacks more than doubled) since 2008. Obviously more study is going to be needed. It’s complicated by the use of high-power water cannon to repel pirates, which probably increases low-level cloud nuclei over the oceans.

It’s a bit disingenuous to use an entire degree as your error range.

[Response: Would you rather I made up certainty that doesn’t exist? – gavin]

it really doesn’t match which

did you mean matter??

[Response: yes. thanks. – gavin]

How about a map of Arctic temperature anomalies?

Graham’s point is somewhat valid (correlation alone doesn’t equal causation) but then he spoils it with the Mars Bar example (where there is no possible causation anyway)… So I think he didn’t understand what is being done here. If I regressed observed temperatures on a Mars Bar time series then I’d get a positive relationship between them (though other diagnostics could tell me that there is a problem). But that’s not what is being done here. Rather forecasts are being compared to the observations. And these show that the climate models could be correct. But they don’t rule out an alternative explanation. But you’d have to be able to show a causal mechanism for that other explanation and then show that the predictions from that other explanation could also produce decent forecasts. So Mars Bars are ruled out.

@Graham: “As someone who has taught simulation studies at university level I find the discussion somewhat amusing. It is possible to remove the CO2 component of all models and replace it with any submartingale and arrive at the same upward sloping graph.”

What a coincidence. I’ve taught simulation at the graduate level myself. I can give you a submartingale which does not reproduce the upward slope. You can too, if you think about it for a second.
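To make that point concrete, here is a minimal sketch (not from the original discussion). A symmetric ±1 random walk is a martingale, which satisfies the submartingale condition E[X(t+1) | past] ≥ X(t) with equality, so its expected value stays flat. Averaging many simulated paths gives a mean path that hovers around zero rather than sloping upward. The path count, path length and seed are arbitrary choices.

```python
import random

def mean_path(n_paths=2000, n_steps=100, seed=0):
    """Average of many symmetric +/-1 random walks started at 0."""
    rng = random.Random(seed)
    totals = [0.0] * (n_steps + 1)
    for _ in range(n_paths):
        x = 0
        for t in range(1, n_steps + 1):
            x += rng.choice((-1, 1))  # zero-drift step: a martingale increment
            totals[t] += x
    return [s / n_paths for s in totals]

path = mean_path()
print(path[0], round(path[-1], 2))
```

Only a strict submartingale with a positive drift would reproduce a rising curve, and then the drift itself is what needs a physical explanation.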

David, thanks! Forcing by the solar cycle was apparently estimated to possibly add about 0.18 deg C (from the current min), although there have been doubts and it is perhaps not established yet. However if correct, and if the solar cycle had come back as usual, then it seems that the temperature would now already be visibly higher, and noticeably closer to the IPCC ensemble prediction. (?)

Here is where McIntyre takes a stick and stirs:

http://climateaudit.org/2007/12/01/tuning-gcms/

about Kiehl’s paper on models.

Gavin,

I’ve looked at the AR4 TOA downward solar flux data and found that GISS ER/EH were the only models with realistic post-20C3M variability. The other modeling groups just used a flat solar constant, not even a solar constant + cycle. I was surprised to find that some used a flat solar constant for the entire 20C3M period.

How do you think this unrealistic solar irradiance (pre/post 20C3M) affects the quality of the simulations? I had done some tests looking for changes in variance in the pre/post-20C3M periods but didn’t find anything conclusive. I suspect that without that solar-induced variability, it would allow CO2 to have an unrealistically large influence.

[Response: We’ve always striven to include as much of what is happening in the real world as possible. Our next set of runs will have significantly more (chemistry feedbacks, other land surface changes etc), and clearly this does impact variability to some extent. However, the existence of the 11 yr cycle in this class of models is only a minor contribution – each model’s internal variability is a much bigger factor in the decadal-scale changes (and the attribution problem). Note that some of the modelling groups in AR4 were doing this for the first time, and so did not have as sophisticated a set up as some of the more established groups. Whether this matters or not depends a little on the analysis – for these graphs, it isn’t very relevant, but if you think it does, suggest some variations and I’ll look into it. – gavin]

Hank @ 21,

While you are correct in saying that the issue of low-level cloud nuclei formation over the oceans possibly caused by use of high-power water cannon to repel the pirates requires further study and better modeling techniques, the fact remains that this in and of itself doesn’t in any way undermine the trend in warming and the clear linkage with the decreasing number of pirates.

You see, we haven’t established any direct correlation or causation of any warming due to an increase in pirate attacks. The data are still showing a clear reduction in the number of actual pirates, despite the fact that attacks by this reduced number of pirates have increased.

I realize this may at first glance seem to be somewhat counter intuitive to the layman who has not delved deeper into modeling of this particular phenomenon. I will however be more than happy to post both my data and the actual source code which underlies the models.

Happy New Year to all and may “REAL” science prosper :-)

Hansen as much as said that would happen IN the 1988 paper. The sharp-eyed Auditoriums have seen that the NOAA forcings are higher than the GISS ones. These are all calculated from concentrations, but using slightly different formulas. So, what does this say about the model? In Hansen’s 1988 words

Well, 20 years down the road you can finally see the difference. Of course you could re run the 1988 model with the more likely sensitivity to CO2 forcing.

Global cooling after volcanic eruptions has been recorded in ice core data and by thermometers: 1809, 1815, 1883, 1980, and others. If coal burning produces particulates, especially from sources with unregulated emissions, then particulates, sulfur dioxide, etc. may affect climate. Since the last decade of divergence between atmospheric CO2 and surface temperatures suggests that any linkage between the two is much more complex than current modeling would imply, other ideas regarding climate change and what is influencing it should occur to all of us. I am merely suggesting another mechanism, one that has caused global cooling in the past. In this case, atmospheric CO2 is only a surrogate marker for the particulates/SO2 etc. A plausible and testable hypothesis.

Thanks,

Time to publish it!

I would like to test my understanding by responding to Graham’s comment. After looking it up in Wikipedia, it seems to me that a submartingale has the property that its expected value cannot decrease over time.

Ok, so then I think the point of the comment is that one cannot infer a causal relationship between CO2 and warming from the data.

But then the comment misses a crucial point, because (if I understand) the purpose of the simulation is NOT to prove a causal relationship. The causal relationship stems from the physics. The main point of the simulation is to estimate parameters and project more detailed effects than the global mean temperature.

Of course, if the projection had been grossly inconsistent with the data, one would have to search for overlooked physical considerations.

So, it may be that the same relationship holds between Mars bars and global temperature, but, of all the things that have been trending upwards over the interval in question, CO2 is of interest because there are strong a priori reasons to suspect that CO2 would result in increasing temperature (and no such reason for Mars bars, etc.)

re: gavin’s response to #9…

when are scientists going to stop writing code in fortran?

as crimes go, using fortran is far worse than anything revealed in “climategate”…

[Response: You might think that, but it’s just not true. Fortran is simple, it works well for these kinds of problems, it compiles efficiently on everything from a laptop to a massively parallel supercomputer, plus we have 100,000s of lines of code already. If we had to rewrite everything each time there was some new fad in computer science (you know, like ‘C’ or something ;) ), we’d never get anywhere. – gavin]

There is something about these climate models that I would like to understand. Perhaps you could point out what is wrong with my logic.

These are model runs that were published in time for AR4 which was published in 2007. Assuming that they had to be completed a bit before that these runs would have been done around 2006. Looking at the graph that you produced the average tracks the HadCRUT3 average almost perfectly up until that time. Given that the HadCRUT3 average is a kind of standard of correctness in this field isn’t that what we would expect?

I know that people talk about the models being independent in some way of the actual values, but this seems unrealistic to me as any significant deviation would probably indicate an error to the modelers, or to the people selecting models for inclusion in a consensus document.

[Response: The AR4 runs were done in 2004, using observed concentration data that went up to 2000 (or 2002/3 for a couple of groups). None of them were tuned to the HadCRUT3 temperature data and no model simulations were rejected because they didn’t fit. – gavin]

Therefore I would tend to judge your graph by looking at the period prior to 2007 as being the “known” period for the models, and the period after 2007 being the forecast period. Based on this principle all one could say is that the actual results have diverged downward from the forecast. Certainly any economic model would have been judged in this way.

So the summary of what you have shown is that in the long term the model produced by Hansen in 1988 is significantly too high with current temperatures actually being below his scenario “C”. A comparison of actual temperatures with those forecast/projected by AR4 shows that AR4 in the very short term is also running too high.

What did Hansen do right in scenario C? Are we able to figure out where and why his model succeeds?

[Response: ‘C’ had constant concentrations after 2000 – which obviously did not happen, but it’s useful to compare the two simulations for a sense of how important the forcings are over short time periods (not very). The AR4 runs are more useful for that of course because there were many more simulations done for each scenario. – gavin]

Re: 8 … I applaud the efforts, Gavin. But George Will is with the Washington Post. Never know, a personal invitation might just charm the bowtie off him.

By the way, I’m teaching a course in climate change at Utah State University this coming semester (for nonscience majors) using your book as the principal text.

[Response: Yay! (Thanks.. and I hope it goes well – let me know.). -gavin]

Thanx for getting back to the science. Looking at the graphs, I notice that ocean heat content jumps at about the time surface temperatures fall below the ensemble mean. But that’s maybe, probably, just a coincidence…?

Thank you, Gavin. The graphs look to me to prove that you have done a very good job of predicting. All of the real global temperatures are close enough to your predictions. You did better than Hansen, as expected, since Hansen’s forecast was made at an earlier date. Computing power was less then, and knowledge was less then, so Hansen did well for his time. Predicting climate change is necessarily a very difficult thing to do. Showing real data on the same graph as computer models is clear proof that this isn’t “just a model” thing.

I conclude that we are in very deep trouble. I don’t see how anybody can disagree. The 2002 to 2009 nitpick is just that, a nitpick, no doubt caused by weather. There is no way to avoid the conclusion that something is going terribly wrong. There is no excuse for delay in doing something about it. I know my congressman is committed to the cause because I have talked directly to him about climate change at every opportunity. Senators are harder to get to see directly. Most people don’t want to hear about it. I don’t know what else I can do.

I’d like to get back to the pirate issue. What steps have the world’s leaders taken to increase the number of pirates to prevent global warming and save the planet? Was this even discussed at Copenhagen? How can we combat the disinformation of the pirate deniers?

Is the Hansen 1988 graph the one with the large CFC forcing? If so how much of the difference is accounted for by this?

I think that Graham is essentially right in reminding us that a comparison between a model and data is meaningful only if the probability that the data would look the way they do if the model were wrong is very low. This is obviously not the case with the “new” data, which are probably compatible with ANY reasonable model anyway. In other words, it would IN ANY CASE be very unlikely that the average temperature would have decreased enough to “exclude” the current GCMs, whether they are right or wrong. So the information brought by the addition of a single point is actually very small.

Fred @14,

You fail to mention the significant point that pirate activity has fallen more in the Northern Hemisphere since 1800, and we’ve seen greater warming there. (and let’s just not mention the tropics vs polar thing, okay?)

On a more serious note:

I am profoundly grateful not to be doing a science where it can take 15 years of data to corroborate a trend. Oh, exciting, we get our annual data point now, just in time for Xmas! You poor bastards, what a difficult job…

…aka the ‘Ostrich Model’ ;-)

Gavin, so true, so true. This points to a very common fallacy about model/hypothesis testing (perhaps a misreading of Popper?): you never test a hypothesis against ‘nothing’; you’re always testing hypotheses against each other. And the Ostrich is the worst of the lot…

Jaynes (2003, p. 504) quotes Jeffreys: “Jeffreys (1939, p. 321) notes that there has never been a time in the history of gravitational theory when an orthodox significance test, which takes no note of alternatives, would not have rejected Newton’s law and left us with no law at all. …”

;-)

If some of you didn’t see Tamino’s post yet on “How current temperatures match former predictions”, it’s here: http://tamino.wordpress.com/2009/12/07/riddle-me-this/

Nice work. Could you do it for precipitation?

[Response: You would only see noise on these kinds of spatial/time scales. – gavin]

Apologies if the following question has been answered before.

It seems that the models have somewhat overestimated the surface temperature this decade. My question is: where is the energy that would have been contained in the surface layer had the models been more accurate? Do the models show it somewhere else within their domain, e.g. in the upper atmosphere? If so, are such places now warmer than the models predict? Or, is the excess thermal energy, predicted to be in the surface layer, in outer space or the ocean depths (which I presume to be outside the domains of the models) instead?

Thanks to anyone for taking the trouble to answer this.

Instead of comparing past projections with what was a speculative progression of GHG concentrations, why not rerun those models with the actual values?

Getting the right answer from the wrong value doesn’t validate anything.

I am actually quite amazed at just how well the models do. The machines those models are run on must be incredible to handle the calculations involved – wish I could get my sticky fingers on one :)

A possibly off topic question based on the first graph (I am a high school drop-out, and probably don’t understand anomaly graphs, so please bear with me):

In my discussions (more like arguments) with “skeptics”, would I be right in saying that even at the “coldest” point during the “current cooling phase” that global mean temperature was higher than at any point prior to 1998? Is that the correct interpretation of the 2008 data point?

Any help would be appreciated.

PS

I love this site not least because it sometimes feels impossible to talk to the actual scientists in the fields I am interested in. I always feel slightly removed from the action. Yet here, I can talk to the actual people doing the science, and well, my little heart just goes pitter patter :)

I would hate to see the denier types be cleaned out completely, but maybe a “wall of shame” type thread could be made where the denier posts could be sent, that can not be replied to, but the long list of repetitions of the same tired old talking points could be visible to everyone and show WHY they are not welcome in the actual discussion threads?

It could also act as a sort of trendline, where we could see the talking points appear, run their course, then reappear the way they do over time, showing the seemingly contrived nature of them. Just an idea.

Also, Not to be a nitpicker, para 3 last sentence:

“different models would had the closest match.”

I assume you meant “have the closest match.”

[Response: thanks, fixed. – gavin]