It’s worth going back every so often to see how projections made back in the day are shaping up. As we get to the end of another year, we can update all of the graphs of annual means with another single datapoint. Statistically this isn’t hugely important, but people seem interested, so why not?

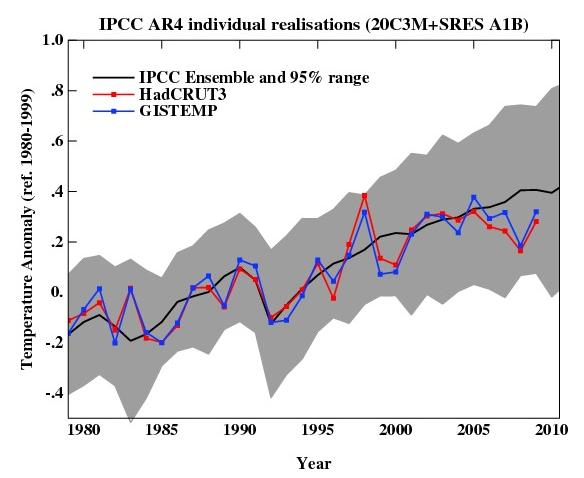

For example, here is an update of the graph showing the annual mean anomalies from the IPCC AR4 models plotted against the surface temperature records from the HadCRUT3v and GISTEMP products (it really doesn’t matter which). Everything has been baselined to 1980-1999 (as in the 2007 IPCC report) and the envelope in grey encloses 95% of the model runs. The 2009 number is the Jan-Nov average.

As you can see, now that we have come out of the recent La Niña-induced slump, temperatures are back in the middle of the model estimates. If the current El Niño event continues into the spring, we can expect 2010 to be warmer still. But note, as always, that short term (15 years or less) trends are not usefully predictable as a function of the forcings. It’s worth pointing out as well that the AR4 model simulations are an ‘ensemble of opportunity’ and vary substantially among themselves in the forcings imposed, the magnitude of the internal variability and, of course, the sensitivity. Thus while they do span a large range of possible situations, the average of these simulations is not ‘truth’.

There is a claim doing the rounds that ‘no model’ can explain the recent variations in global mean temperature (George Will made the claim last month for instance). Of course, taken absolutely literally this must be true. No climate model simulation can match the exact timing of the internal variability in the climate years later. But something more is being implied, specifically, that no model produced any realisation of the internal variability that gave short term trends similar to what we’ve seen. And that is simply not true.

We can break it down a little more clearly. The trend in the annual mean HadCRUT3v data from 1998-2009 (assuming the year-to-date is a good estimate of the eventual value) is 0.06+/-0.14 ºC/dec (note this is positive!). If you want a negative (albeit non-significant) trend, then you could pick 2002-2009 in the GISTEMP record which is -0.04+/-0.23 ºC/dec. The ranges of trends in the model simulations for these two time periods are [-0.08, 0.51] and [-0.14, 0.55], and in each case there are multiple model runs that have a lower trend than observed (5 simulations in both cases). Thus ‘a model’ did show a trend consistent with the current ‘pause’. However, that these particular models showed it is just coincidence, and one shouldn’t assume that these models are better than the others. Had the real world ‘pause’ happened at another time, different models would have had the closest match.
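
For readers who want to reproduce this kind of short-period trend, here is a minimal sketch of an ordinary least-squares trend with an approximate 95% range. It assumes independent residuals (no auto-correlation correction) and takes two standard errors as the half-width; the function name and the anomaly values are hypothetical and are not the HadCRUT3v or GISTEMP numbers.

```python
import numpy as np

def trend_per_decade(years, anomalies):
    """OLS trend in degC/decade with an approximate 95% half-width (2 standard errors)."""
    x = np.asarray(years, dtype=float)
    y = np.asarray(anomalies, dtype=float)
    n = x.size
    xc = x - x.mean()
    slope = np.sum(xc * (y - y.mean())) / np.sum(xc ** 2)
    intercept = y.mean() - slope * x.mean()
    resid = y - (intercept + slope * x)
    # Standard error of the slope, assuming independent residuals
    se = np.sqrt(np.sum(resid ** 2) / (n - 2) / np.sum(xc ** 2))
    return 10.0 * slope, 10.0 * 2.0 * se

# Hypothetical anomalies for illustration only
years = np.arange(1998, 2010)
rng = np.random.default_rng(0)
anoms = 0.4 + 0.006 * (years - 1998) + rng.normal(0.0, 0.1, years.size)
trend, half_width = trend_per_decade(years, anoms)
print(f"{trend:.2f} +/- {half_width:.2f} degC/dec")
```

With only a dozen annual points the half-width is typically larger than the trend itself, which is why trends over such short periods say so little.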

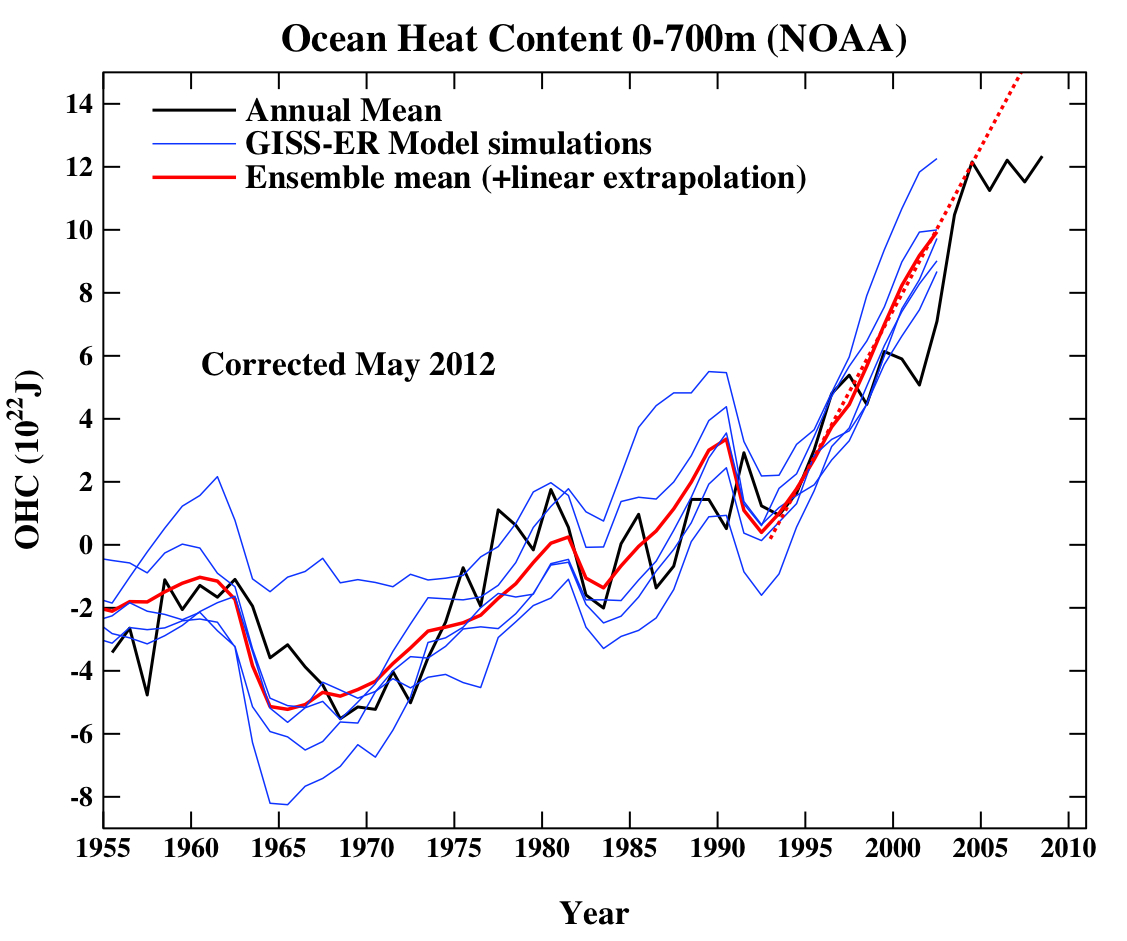

Another figure worth updating is the comparison of the ocean heat content (OHC) changes in the models with the latest data from NODC. Unfortunately, I don’t have the post-2003 model output handy, but the comparison between the 3-monthly data (to the end of Sep) and annual data versus the model output is still useful.

Update (May 2012): The graph has been corrected for a scaling error in the model output. Unfortunately, I don’t have a copy of the observational data exactly as it was at the time the original figure was made, and so the corrected version uses only the annual data from a slightly earlier point. The original figure is still available here.

(Note that I’m not quite sure how this comparison should be baselined. The models are simply the difference from the control, while the observations are ‘as is’ from NOAA.) I have linearly extended the ensemble mean model values for the post 2003 period (using a regression from 1993-2002) to get a rough sense of where those runs could have gone.
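
The linear extension described above can be sketched roughly as follows. This is only an illustration of fitting a straight line over a window and extrapolating it; the function name and the input values are hypothetical and are not the actual model output.

```python
import numpy as np

def extend_linearly(years, values, fit_start, fit_end, extend_to):
    """Fit a line over [fit_start, fit_end] and extrapolate it out to extend_to."""
    years = np.asarray(years)
    values = np.asarray(values, dtype=float)
    mask = (years >= fit_start) & (years <= fit_end)
    # Fit against years measured from fit_start to keep the fit well conditioned
    t = years - fit_start
    slope, intercept = np.polyfit(t[mask], values[mask], 1)
    new_years = np.arange(years[-1] + 1, extend_to + 1)
    return new_years, intercept + slope * (new_years - fit_start)

# Hypothetical ensemble-mean heat content values (arbitrary units), 1990-2003
model_years = np.arange(1990, 2004)
model_ohc = 0.5 * (model_years - 1990)
ext_years, ext_ohc = extend_linearly(model_years, model_ohc, 1993, 2002, 2009)
print(list(zip(ext_years.tolist(), np.round(ext_ohc, 2).tolist())))
```

An extrapolation like this carries none of the year-to-year variability a real model run would have, so it gives only a rough sense of direction.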

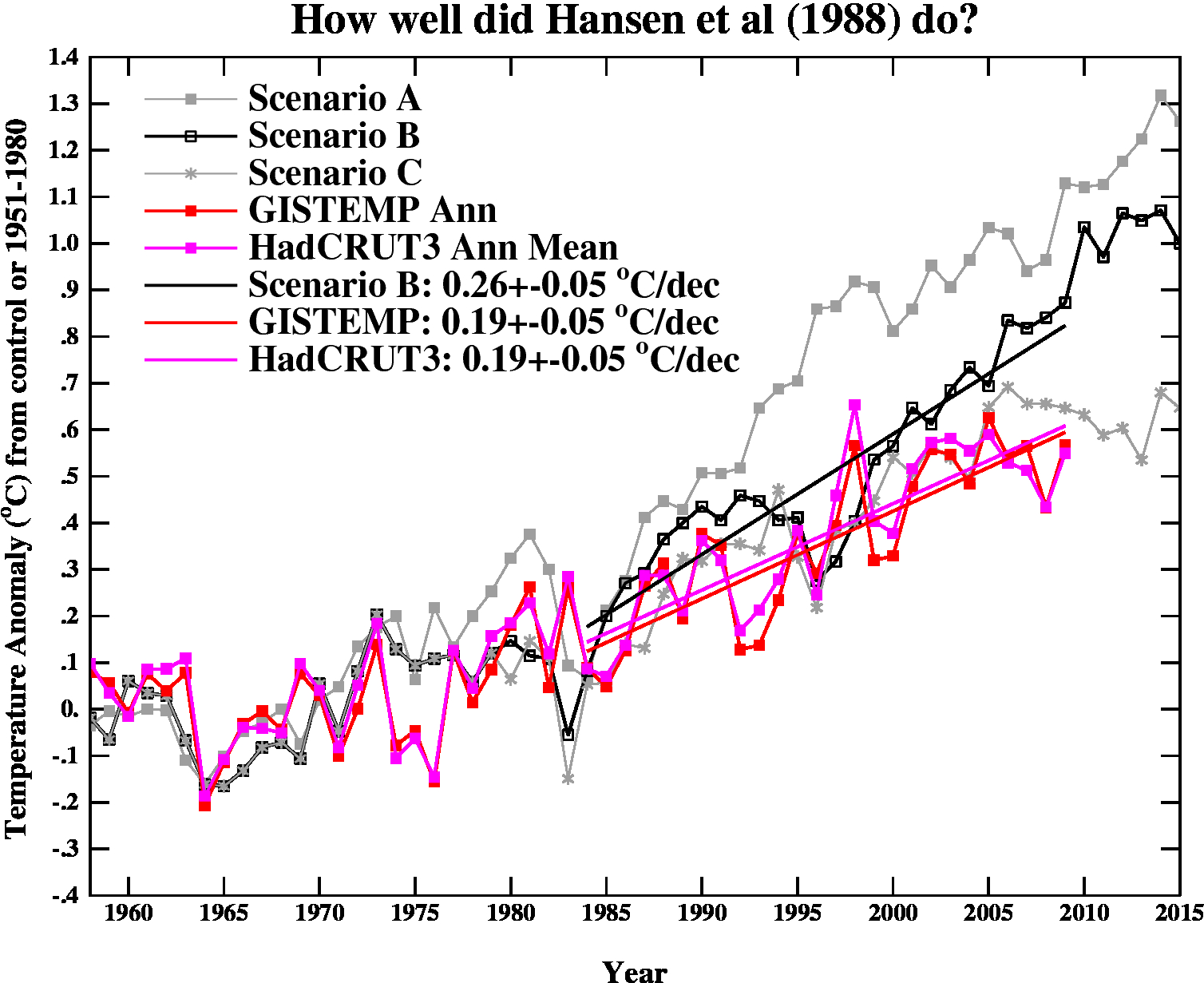

And finally, let’s revisit the oldest GCM projection of all, Hansen et al (1988). The Scenario B in that paper is running a little high compared with the actual forcings growth (by about 10%), and the old GISS model had a climate sensitivity that was a little higher (4.2ºC for a doubling of CO2) than the current best estimate (~3ºC).

The trends are probably most useful to think about, and for the period 1984 to 2009 (the 1984 date chosen because that is when these projections started), scenario B has a trend of 0.26+/-0.05 ºC/dec (95% uncertainties, no correction for auto-correlation). For the GISTEMP and HadCRUT3 data (assuming that the 2009 estimate is ok), the trends are 0.19+/-0.05 ºC/dec (note that the GISTEMP met-station index has 0.21+/-0.06 ºC/dec). Corrections for auto-correlation would make the uncertainties larger, but as it stands, the difference between the trends is just about significant.

Thus, it seems that the Hansen et al ‘B’ projection is likely running a little warm compared to the real world, but assuming (a little recklessly) that the 26 yr trend scales linearly with the sensitivity and the forcing, we could use this mismatch to estimate a sensitivity for the real world. That would give us 4.2/(0.26*0.9) * 0.19=~ 3.4 ºC. Of course, the error bars are quite large (I estimate about +/-1ºC due to uncertainty in the true underlying trends and the true forcings), but it’s interesting to note that the best estimate sensitivity deduced from this projection, is very close to what we think in any case. For reference, the trends in the AR4 models for the same period have a range 0.21+/-0.16 ºC/dec (95%). Note too, that the Hansen et al projection had very clear skill compared to a null hypothesis of no further warming.
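
The back-of-the-envelope scaling above is easy to check. The sketch below just restates the arithmetic under the same (admittedly reckless) linearity assumption; the function and argument names are illustrative.

```python
def scaled_sensitivity(model_sensitivity, model_trend, forcing_ratio, observed_trend):
    """Scale a model's sensitivity by observed trend over forcing-adjusted model trend."""
    # Adjust the model trend for the forcings running about 10% too high
    adjusted_model_trend = model_trend * forcing_ratio
    return model_sensitivity / adjusted_model_trend * observed_trend

# 4.2 degC sensitivity, 0.26 degC/dec model trend, forcings 10% high, 0.19 degC/dec observed
estimate = scaled_sensitivity(4.2, 0.26, 0.9, 0.19)
print(round(estimate, 1))  # 3.4
```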

The sharp-eyed among you might notice a couple of differences between the variance in the AR4 models in the first graph, and the Hansen et al model in the last. This is a real feature. The model used in the mid-1980s had a very simple representation of the ocean – it simply allowed the temperatures in the mixed layer to change based on the changing fluxes at the surface. It did not contain any dynamic ocean variability – no El Niño events, no Atlantic multidecadal variability etc. and thus the variance from year to year was less than one would expect. Models today have dynamic ocean components and more ocean variability of various sorts, and I think that is clearly closer to reality than the 1980s vintage models, but the large variation in simulated variability still implies that there is some way to go.

So to conclude, despite the fact these are relatively crude metrics against which to judge the models, and there is a substantial degree of unforced variability, the matches to observations are still pretty good, and we are getting to the point where a better winnowing of models dependent on their skill may soon be possible. But more on that in the New Year.

In defense of Fortran…

I was surprised several years ago to find that the GCMs were mostly written in Fortran. I then looked at the code (available online for your viewing pleasure). After a number of hours, it became clear that the code was mostly just doing calculations. Without insult, I suspect I could program Excel to run a GCM if I was fully competent in the physics. Excel wouldn’t be fast enough of course (a matter of no small importance).

Programming can be broken down into four basic parts: loops, conditionals, algebra (calculations) and housekeeping (management of memory, stack, code, i/o and other system resources).

The GCMs need loops, algebra, a few conditionals and very basic housekeeping.

Fortran (literally “Formula Translation” for the non-computer science folks) is excellent at doing algebra, okay with loops and conditionals but provides very primitive housekeeping.

C/C++/Java are specifically designed around the “housekeeping” aspect and the ability to access ALL available computer resources with a fine level of control for the serious software engineer. Functions, objects, scope, namespaces, constructors, destructors, members, etc blah, blah, blah allow software engineers like myself to build and manage applications of enormous complexity with hundreds of objects, thousands of variables, thousands of unique functions dealing with sockets, threads, error handling, interprocess communication, sophisticated security, etc, etc.

The Apache Webserver, Linux or Windows could not HOPE to be written in Fortran. But a program to calculate the overall National League batting average for each year since 1887 using box scores from every game could easily and simply be written in Fortran by anyone with minimal programming expertise. While I could write a C++ program to do the same thing (and rather quickly since that is what I do for a living), it wouldn’t be particularly faster or *better* at calculating those batting averages.

A GCM Fortran program just doesn’t need all the sophisticated “handling” and “management” that many applications need. If Excel was fast and “large” enough, I suspect the climate scientists would use THAT instead.

This is not to say the GCMs would not benefit from high quality software engineering (using appropriate tools) particularly with regard to data parsing, data handling, data presentation, data analysis, multi-threading the calculations across multiple systems (for scaling), error handling (to avoid wasted runs), etc. Indeed, if the Fortran code is bundled into a library, C++ code can CALL those same functions getting the same speed benefit and provide the ability to handle the input, output and processing in a more sophisticated manner.

In the future, I can envision models that incorporate precise but modifiable land formations, ocean currents, etc where the additional elements create enough complexity in the data handling and function handling that C++ will become mandatory. But until then…

And when they say they can’t model ocean dynamics well – it’s not a COMPUTER problem – it’s a PHYSICS/DATA problem.

PS A computer model is a misnomer of course, it really means a MODEL implemented with a computer. Technically, a computer model would model how a computer works…

> what stopped the runaway then

All of the factors combined, of course.

A few might include:

Fainter sun*

Where the continents were.

Where the ocean currents went.

How fast heat was redistributed to the deep ocean.

How fast biogeochemical cycling removed the CO2.

_______________

* “… we can find the habitable zone as the band of orbital distances from the Sun within which an Earth-like planet might enjoy moderate surface temperatures and CO2-partial pressures needed for advanced life forms. We calculate an optimum position at 1.08 astronomical units for an Earth-like planet at which the biosphere would realize the maximum life span. According to our results, an Earth-like planet at Martian distance would have been habitable up to about 500 Ma ago while the position of Venus was always outside the habitable zone.”

http://dx.doi.org/10.1016/S0032-0633(00)00084-2

Habitable zone for Earth-like planets in the solar system

S. Franck, A. Block, W. von Bloh, C. Bounama, H. -J. Schellnhuber and Y. Svirezhev

Potsdam Institute for Climate Impact Research

#91, TRY, another source of info about what climate can do is paleoclimatology. For instance, during the end-Permian 251 million years ago, there was a great warming (over many thousands of years, because it was due to natural, not man-made, forcings). Over 90% of life on earth died. One of the final blows, after the warming reached great heights, was that the oceans became super-anoxic (oxygen depleted). That’s already happening to some extent today, both from the warming and from fertilizer run-off and eutrophication. In such conditions bacteria break down the methane into H2S (hydrogen sulfide), a deadly gas, that is thought to have dealt a deadly blow during the end-Permian both to sea life and also, to some extent, to land life by outgassing.

A good book is Hansen’s STORMS OF MY GRANDCHILDREN, which explains both global warming and the politics (both Republican and Democrat) that have thwarted the needed efforts to mitigate climate change.

It’s not in the book I don’t think (I haven’t read all of it), but during the 1992 elections both Bush Sr and Clinton received about the same contributions from the oil industry. I’m not sure about Obama, but I don’t think he would have become an IL senator without southern IL coal behind him.

We have to look at the human climate forcings behind the non-human forcings. I sort of see us as in “The Matrix” (did you see the movie?) with our “umbilical cords” plugged into oil and coal. We live in various illusions, and have conflated subsistence in the ecological/environmental sphere with “the economy,” and fear any harm to the economy, thinking the environment is just a pretty, but unessential background picture. One way to understand the analytical distinction is that animals don’t have economies, but do just fine. Economics is instrumental, it facilitates; the ecological-biological-environmental sphere is fundamental to life.

A helpful book re how we can reduce our GHGs by 75% without lowering productivity is NATURAL CAPITALISM – see http://www.natcap.org . Also http://www.rmi.org

BD #76 claimed El Nino wasn’t predicted ahead of time with quotes from CPC’s archive. But Gavin said that it was “predicted months ahead”, not that everyone predicted it. S. Molnar #92 showed the Australian prediction 6 months out. There also is the GISS 2008 year end summation.

“Natural dynamical variability: The largest contribution is the Southern Oscillation, the El Niño-La Niña cycle. The Niño 3.4 temperature anomaly (the bottom line in the top panel of Fig. 2), suggests that the La Niña may be almost over, but the anomaly fell back (cooled) to -0.7°C last month (December). It is conceivable that this tropical cycle could dip back into a strong La Niña, as happened, e.g., in 1975. However, for the tropical Pacific to stay in that mode for both 2009 and 2010 would require a longer La Niña phase than has existed in the past half century, so it is unlikely. Indeed, subsurface and surface tropical ocean temperatures suggest that the system is “recharged”, i.e., poised, for the next El Niño, so there is a good chance that one may occur in 2009. Global temperature anomalies tend to lag tropical anomalies by 3-6 months.”

[Response: Yes and no. This is more of a hand-waving prediction than I was really referring to. Months ago, the dynamical models were already pretty clear that we would be in an El Nino event by now. – gavin]

Nice Item in American Scientist about Gilbert Plass (which Gavin Schmidt contributed to, I saw), the first Scientist to solidly identify the role of CO2 in anthro-CO2 forced climate change; it’s well worth reading!

Try,

Are you looking for this?

Chen, C., J. Harries, H. Brindley, and M. Ringer 2007. “Spectral signatures of climate change in the Earth’s infrared spectrum between 1970 and 2006.” EUMETSAT Conference and Workshop Proceedings 2007.

“Previously published work using satellite observations of the clear sky infrared emitted radiation by the Earth in 1970, 1997 and in 2003 showed the appearance of changes in the outgoing spectrum, which agreed with those expected from known changes in the concentrations of well-mixed greenhouse gases over this period. Thus, the greenhouse forcing of the Earth has been observed to change in response to these concentration changes. In the present work, this analysis is being extended to 2006 using the TES instrument on the AURA spacecraft. Additionally, simulated spectra have been calculated using LBLRTM with inputs from the HadGEM1 coupled model and compared to the observed satellite spectra.”

Griggs, J.A. and J.E. Harries 2004. “Comparison of spectrally resolved outgoing longwave data between 1970 and present.” EUMETSAT Conference and Workshop Proceedings 2004.

“Measurements of spectrally resolved outgoing longwave radiation allows signatures of many aspects of greenhouse warming to be distinguished without the need to amalgamate information from multiple measurements, allowing direct interpretation of the error characteristics. Here, data from three instruments measuring the spectrally resolved outgoing longwave radiation from satellites orbiting in 1970, 1997 and 2003 are compared. The data are calibrated to remove the effects of differing resolutions and fields of view so that a direct comparison can be made. Comparisons are made of the average spectrum of clear sky outgoing longwave radiation over the oceans in the months of April, May and June. Difference spectra are compared to simulations created using the known changes in greenhouse gases such as CH4, CO2 and O3 over the time period. This provides direct evidence for significant changes in the greenhouse gases over the last 34 years, consistent with concerns over the changes in radiative forcing of the climate.”

Griggs, J. A., and J. E. Harries 2007. “Comparison of spectrally resolved outgoing longwave radiation over the tropical Pacific between 1970 and 2003 using IRIS, IMG, and AIRS.” Journal of Climate 20, 3982-4001.

Hanel, R. A., and B. J. Conrath 1970. “Thermal Emission Spectra of Earth and Atmosphere from Nimbus-4 Michelson Interferometer Experiment.” Nature 228, 143-&.

Harries, J.E., H.E. Brindley, P.J. Sagoo, and R.J. Bantges 2001. “Increases in greenhouse forcing inferred from the outgoing longwave radiation spectra of the Earth in 1970 and 1997.” Letter, Nature, 410, 355-357.

“The evolution of the Earth’s climate has been extensively studied1, 2, and a strong link between increases in surface temperatures and greenhouse gases has been established3, 4. But this relationship is complicated by several feedback processes—most importantly the hydrological cycle—that are not well understood5, 6, 7. Changes in the Earth’s greenhouse effect can be detected from variations in the spectrum of outgoing longwave radiation8, 9, 10, which is a measure of how the Earth cools to space and carries the imprint of the gases that are responsible for the greenhouse effect11, 12, 13. Here we analyse the difference between the spectra of the outgoing longwave radiation of the Earth as measured by orbiting spacecraft in 1970 and 1997. We find differences in the spectra that point to long-term changes in atmospheric CH4, CO2 and O3 as well as CFC-11 and CFC-12. Our results provide direct experimental evidence for a significant increase in the Earth’s greenhouse effect that is consistent with concerns over radiative forcing of climate.”

Emphasis mine.

PS, for anyone–including those skeptical about climate science, as long as you can consider evolution and the age of the Earth– I also highly recommend the video:

http://www.agu.org/meetings/fm09/lectures/lecture_videos/A23A.shtml

Once you see the pictures of cliffs showing rocks dropped by icebergs, and on top of them rocks made out of marine animal shells — rock made out of carbon dioxide by biogeochemical cycling — you’ll have a better understanding of how this has worked over deep time.

From the slides displayed alongside the speaker, you can pick out search terms worth trying in Scholar, e.g.

http://scholar.google.com/scholar?sourceid=Mozilla-search&q=paleobarometers

It’s like playing whack-a-mole. Maybe whack-a-troll? ;-)

Original source and internal data: http://data.giss.nasa.gov/gistemp/

Recompiled and run on linux: http://rhinohide.cx/co2/gistemp/

Gavin, care to speculate if GCMs will ever migrate to schemes that are fully coupled to carbon models? Seems like it’d be nice to move towards models that include things like keeping track of the amount of inorganic C in the oceans (weakening the ocean sink) or calculating high latitude soil respiration? Pie in the sky thinking? Anybody suggest a good paper that covers this?

[Response: These models already exist and will be used extensively for the AR5 simulations. Cox et al (2000) was an early pioneer, and there was a good review by Friedlingstein, P. et al. 2006, ‘Climate–Carbon Cycle Feedback Analysis: Results from the C4MIP Model Intercomparison’, Journal of Climate, Vol. 19, 15 July, pp. 3337 – 3353. – gavin]

TRY asked in 91:

Yes and yes.

We know the absorption spectra of carbon dioxide, of water vapor, methane and other greenhouse gases. We are able to measure them in laboratories. We are able to predict them on the basis of quantum mechanics and we are able to predict the intensity of the lines based upon partial pressure and temperature.

See for example:

Pressure broadening

THURSDAY, JULY 05, 2007

http://rabett.blogspot.com/2007/07/pressure-broadening-eli-has-been-happy.html

Temperature

WEDNESDAY, JULY 04, 2007

http://rabett.blogspot.com/2007/07/temperature-anonymice-gave-eli-new.html

A radiative transfer brain teaser

THURSDAY, DECEMBER 03, 2009

http://rabett.blogspot.com/2009/12/radiative-transfer-brain-teaser-here.html

For example, the different absorption lines of carbon dioxide around 4.3 μm correspond to different vibrational modes (which are quantized states of molecular excitation), with bending modes at 15 μm.

We are able to analyze the downwelling solar radiation into the blackbody radiation of the sun and the absorption lines due to specific greenhouse gases, and we are able to analyze the upwelling thermal radiation from the earth into the same.

Please see for example:

Atmospheric Transmission

http://www.globalwarmingart.com/wiki/File:Atmospheric_Transmission_png

Through the analysis of upwelling with satellites and downwelling radiation with ground instruments we are able to show where changes in the levels of specific greenhouse gases from different years are responsible for changes in the spectra of the thermal radiation that makes it to space and is re-emitted by the atmosphere at the ground.

Please see:

How do we know CO2 is causing warming?

Thursday, 8 October, 2009

http://www.skepticalscience.com/How-do-we-know-CO2-is-causing-warming.html

While it absorbs thermal radiation from the surface, it radiates thermal radiation as a function of its own temperature — in all directions, much of which makes it back to the surface. Therefore it reduces the rate at which thermal radiation makes it to space. Moreover, there are infrared images of it doing exactly this over western and eastern seaboards of the US due to higher population density, traffic and carbon dioxide emissions.

In fact you can see it in this image:

AIRS Carbon Dioxide Data

A 7-year global carbon dioxide data set based solely on observations

http://airs.jpl.nasa.gov/AIRS_CO2_Data/

The dark orange off the east and west coasts of the United States? That is carbon dioxide at roughly 8 km altitude — infrared at 15 μm in wavelength has been absorbed and emitted at lower levels of the atmosphere, but this is where it gets emitted for the last time before escaping to space — and as such the brightness temperature at that wavelength reflects the cooler temperature at a somewhat higher altitude than the surrounding areas.

Radiation transfer is one of the best understood facets of climatology. And if you know that radiation coming in from the sun has been more or less constant (e.g., satellite measurements since the early 1960s show that but for the solar cycle solar radiation has remained nearly constant over this period) but that the amount of thermal radiation making it to space is being reduced by greenhouse gases, then by the principle of the conservation of energy you know that the amount of heat in the climate system is increasing. The only way that the rate at which energy enters the system and the rate at which energy leaves it may once again be brought into balance is by decreasing the rate at which energy enters the system or increasing the rate at which energy leaves the system.

We can’t control the sun, so we have to look at the other side of the equation. To increase the rate at which energy leaves the system either you reduce the opacity of the atmosphere — or you increase the temperature and thus infrared brightness of the surface to compensate for the increased opacity of the atmosphere. And to slow and ultimately stop the rate of temperature increase you have to stop increasing the opacity of the atmosphere — which means bringing our greenhouse gas emissions under control.

Re the self limiting positive feedback issue raised above, that would be true if it were solely dependent on CO2 or other GHG increments that are constrained by their finite concentrations (and the IR absorption cross section). But there is also a non self limiting feedback from dH2O/dT that is exponential and is not finite. I wonder if Gavin would have the time to explain in “relatively” simple terms what boundary conditions the (typical) models use to prevent the H2O positive feedback from running away. Cheers

“Carbon dioxide (CO2) levels in the atmosphere have risen 35% faster than expected since 2000”

Gavin, they are referring to concentration, not emissions. Temperature should be in the upper half of the cone, not the lower half.

[Response: The statement is wrong then (I guarantee they meant emissions). I showed you the actual data for concentrations – there is no dramatic increase over what was expected. Note that this isn’t contradictory because a) concentrations average over a long period of emissions, and b) there are quite large variations in terrestrial and ocean uptake year by year. – gavin]

RE: #76 BD

You’re looking at the wrong web page for El Nino predictions. What you’ve read is an update of current conditions and near term conditions. Try this link instead. GISS predicted the El Nino back in 2008. Read the last sentence of this December 2008 report.

http://data.giss.nasa.gov/gistemp/2008/

Re: #81 — TH…

I understand your confusion. The BBC article you cite does say “levels”. However, the actual paper they are referencing (http://www.pnas.org/content/104/24/10288) discusses only emissions. In other words, in a rare slip, the press got it wrong. :)

I do like these graphs and their predictions, but ultimately we really need to talk in terms of the overall increase in energy (heat) content. That includes ocean and atmosphere energy changes, as well as measured ice changes, in one simple graph.

1998 may be the hottest year on record, but that is only on the surface and is only with the HadCRUT3 data. We really need to wake up these politicians with an overall energy increase, rather than playing the denialist games of presenting cherry picked data. If we show the ocean heat changes with the atmosphere changes in one go, and show those changes compared to our energy usage, it would shock anyone. The ocean alone has heated up by 16*10^22J! since measurements started. I normally ask them how many nuclear bombs that is.
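
As a rough answer to the bomb question, here is a minimal sketch of the conversion. The 15 kt yield (roughly a Hiroshima-sized device) is an assumption chosen for illustration and does not come from the comment; 4.184e12 J per kiloton of TNT is the conventional definition.

```python
KILOTON_TNT_JOULES = 4.184e12  # conventional definition of 1 kt of TNT
ASSUMED_YIELD_KT = 15.0        # assumption: a roughly Hiroshima-sized device

def bomb_equivalents(energy_joules, yield_kt=ASSUMED_YIELD_KT):
    """Number of devices of the given yield that release energy_joules."""
    return energy_joules / (yield_kt * KILOTON_TNT_JOULES)

ocean_heat_gain = 16e22  # J, the figure quoted in the comment
print(f"{bomb_equivalents(ocean_heat_gain):.2e}")
```

Under that assumed yield the answer comes out at a few billion devices.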

@Ray Ladbury #76

Thanks for the confirmation Ray. Another question so that I am not flying blind: What is the reason that anomaly graphs are used rather than absolute temperature graphs? I am sure there is a good reason, but I would rather give the right one, than what I *think* is the right reason, in my arguments.

@B.D #76

“El Nino was not predicted until it was already happening.”

I believe that currently there is a lag between the actual start of an El Nino, and the time when we can say an El Nino has started. So if someone were to say “Yesterday an El Nino started” and a month later we find that it did indeed start, that would still be a prediction even if made after the actual beginning. Others will correct me I am sure, but that is my understanding.

Re: pat #84

“I am having difficulty understanding the graphs…can the Hansengraphs into two graphs, natural global warming and the anthropogenic part.”

This raises another question for the scientists. Are there any model runs where the model is exactly the same as these ones except starting from the assumption that CO2 is not a Greenhouse Gas? Can a similar graph be made where such a run is compared to the others to see which more closely resembles reality?

I suspect that a lot of the problem people have is not understanding just how wrong the models would get it if they assumed that CO2 is not a factor, all else being equal. This would highlight, I feel, the need for the ‘skeptics’ to identify what ELSE has to be added to replace CO2, assuming their view is correct.

I would understand of course if no such run has been done – it is physically wrong, and could be considered a waste of very valuable computing resources – but it can’t hurt to ask.

Another thing about models that I think a lot of people fail to understand is that the models are NOT trying to PREDICT the actual temperatures at any period of time – they are simply trying to SIMULATE an environment running under the same conditions as the real one, sort of as a check on our understanding of how the real one works, and as a way to determine where we may need to improve. The fact they so closely follow reality indicates that we know a whole hell of a lot about how the real one works. Would that be a fair assessment?

TRY at #91

50% is passed to other molecules through collisions. Then those other molecules emit various other IR wavelengths?

You have been answered before here:

https://www.realclimate.org/index.php/archives/2009/12/unforced-variations/comment-page-20/#comment-151628

and by Ray Ladbury at #979 of the same thread and again now on this page at #95.

Isn’t it time that you acknowledged these replies rather than repeating your point/question?

Lynn Vincentnathan wrote in 103:

I like that. An environmentalist interpretation of “The Matrix.”

There could also easily be a Marxist or libertarian interpretation. Undoubtedly there are. Or an interpretation by an artist rebelling against convention. I have heard of a labor union member interpreting it as applying to labor unions and of Christian interpretations. A metaphor that can be interpreted along many lines.

My own interpretation is that it is about the human condition, the ever-present possibility of existential or cognitive failure where cognitive failure results in our being trapped in a world of illusion, how we define ourselves in relation to that world, and of the necessity of undergoing a process of shedding that world of illusion, the story of our rebirth — whereby we re-establish our proper relationship with reality. Others might say its fountainhead. Regardless, the need to act in the face of the ever-present possibility of existential failure and the need to be open to recognizing and correcting our mistakes is always with us, each at every moment of our lives — we can be engaged in a process of constant rebirth, always be following the path of the hero — as it is told through human myths throughout many human cultures.

In my view, this interpretation more or less subsumes the others — they are applications of its abstract principles within specific contexts. However, in my view we should also be open to the possibility that our application of it within a specific context is itself a form of illusion that we should shed. That how we have applied it so far may itself be a kind of metaphor that we mistook for reality. A world of illusion that we have yet to escape.

> there is also a non self limiting feedback from dH2O/dT

Says who, Terry? I searched both Google and Scholar and found nobody suggesting this. Where did you get that idea?

Not from the Wikipedia people, I hope? Stoat has covered it:

http://scienceblogs.com/stoat/2009/02/runaway_climate_change.php

Eli Rabett (31): The 1988 model is what it is, with a measured climate sensitivity which now seems kinda high. But putting in more of the physics, it evolved into the current ModelE which has a measured climate sensitivity of less than 3 K.

pat: On the centennial scale, all of the warming is anthropogenic. Without (significant) human influences, the climate should have been very slowly cooling due to the change in orbital forcing.

Terry (111) — The air can only hold so much water before clouds form and precipitation occurs. Try Ray Pierrehumbert’s

http://geosci.uchicago.edu/~rtp1/ClimateBook/ClimateBook.html

Ron Broberg wrote…

Is this raw data or has this data been adjusted, homogenized and re-adjusted? We already know how GHCN adjusted the data in Australia and Antarctica. I am a big fan of fudge, but not with climate data.

112, Comment by TH — 29 December 2009 @ 2:49 PM: “Carbon dioxide (CO2) levels in the atmosphere have risen 35% faster than expected since 2000”

Gavin, they are referring to concentration, not emissions. Temperature should be in the upper half of the cone, not the lower half.

____________________________________

Read it again. It says “levels … have risen 35% faster than expected”. The rate at which CO2 rises is basically a linear function of the emission rate, so this is the same thing as saying the emissions are rising 35% faster than previously expected. Because we’re talking about a relatively short time period, it doesn’t translate into a big difference in CO2 concentrations … not yet anyway.

[Response: Sorry, but read one of the preceding comments. This is an error in the news piece – they meant to say emissions, not levels – which have not risen 35% more than expected. – gavin]

Re D Benson (@120) and Hank (@119): my question was not whether runaway would happen (and clearly it doesn’t), but how the MODELS deal with limiting the exponential increase in H2O (and thus forcing) with increments in T. If constant RH is assumed then the total amount of H2O is still unlimited in the vapour phase as it approaches 100%RH combined with increasing T. If constant SpH is assumed then that is limiting, but constant RH is not limiting. I also understand that convection to higher altitude and rain out is a limiting factor, but for a given mass of air and a relatively unlimited amount of H2O(l), an increase in T results in an increase in RH with no limits. Again, my query relates to how the models limit H2O(g).
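For readers following the constant-RH point, here is a minimal Python sketch of how vapour pressure scales with temperature when relative humidity is held fixed. It uses a Magnus-type approximation for saturation vapour pressure over liquid water; the coefficients, the function names and the default RH of 0.7 are assumptions chosen for illustration, and this is not how any climate model actually handles moisture.

```python
import math


def saturation_vapour_pressure_hpa(temp_c):
    """Magnus-type approximation over liquid water.

    temp_c is in degrees Celsius, the result is in hPa. The coefficients
    are one commonly quoted set and are an assumption of this sketch.
    """
    return 6.1094 * math.exp(17.625 * temp_c / (temp_c + 243.04))


def vapour_pressure_hpa(temp_c, relative_humidity):
    """Actual vapour pressure for a relative humidity given as a 0-1 fraction."""
    return relative_humidity * saturation_vapour_pressure_hpa(temp_c)


def fractional_increase_per_kelvin(temp_c, relative_humidity=0.7):
    """Fractional change in vapour pressure for 1 K of warming at fixed RH."""
    before = vapour_pressure_hpa(temp_c, relative_humidity)
    after = vapour_pressure_hpa(temp_c + 1.0, relative_humidity)
    return (after - before) / before


if __name__ == "__main__":
    for temp_c in (0.0, 15.0, 30.0):
        change = fractional_increase_per_kelvin(temp_c)
        print(f"{temp_c:5.1f} C: {100 * change:.1f}% more vapour per K at fixed RH")
```

At fixed RH the vapour pressure grows by a few percent per kelvin regardless of the RH value chosen, which is the exponential growth the question is about. The sketch says nothing about the convective and radiative processes that constrain humidity in a full model.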

re: several posts on a runaway greenhouse effect on Earth

Pierrehumbert said here and elsewhere (in Nature for instance) that a runaway greenhouse was essentially impossible on Earth considering the amount of absorbed solar radiation. According to his figures, CO2 from fossil fuels could not trigger a runaway on Earth, except possibly through some highly unlikely indirect cloud effect (or something even more exotic).

I made this point on an earlier RealClimate thread. For some reason, fellow commenters seem to believe that CO2 from fossil fuels could actually trigger a runaway. Now as before, my question is: does Hansen substantiate his claim anywhere? If not, I will continue to abide in the bliss of Komabayashi-Ingersoll.

Why no discussion concerning the XBT-to-Argo data set jump around 2003/2004?

Mike Cloghessy says:

Is this raw enough for you?

Have fun.

TRY, see also:

http://ams.confex.com/ams/Annual2006/techprogram/paper_100737.htm

Hey gavin i wanted to make sure you saw this. Anyway i am having this discussion with this certain person on a danish climate debate forum, and he pulled this

http://wattsupwiththat.com/2009/04/11/making-holocene-spaghetti-sauce-by-proxy/

out, an article he wrote in which he argues that proxies are cherry-picked or something like that, and that proxy studies do not show what climate scientists say they show. Anyway he has a number of impressive graphs and i don’t really know how to respond. It seems quite impressive but i think he may be pulling some numbers out of his ass.

Please help.

It seems disingenuous to show a comparison from the AR4 (which was produced in 2007) that compares how well it does from 1980-2008.

Now take the predictions made on page 70 of the IPCC Third Assessment Report (TAR – 2001). We can see that warming predictions for the period from 2001 to 2010 range from 0.3 to 0.4 for the model ensemble all SRES envelope. If we compare this to the actual warming of between 0 and 0.5 from your graph above, it doesn’t appear that the models are doing too well.

> watts, spaghetti made with cherries

> a number of impressive graphs

This is what I keep seeing — any climate question, do a Google image search, and the septic sites dominate the results, page after page. They’re overwhelmingly represented in any image search on climate.

To get the attention of the people who don’t read but look at pictures (and vote on that basis) the scientists need to go kick their PR departments, and drastically increase the number of simple, illustrative graphics put out online.

Please. Go shake up _your_ public relations officers today.

Show them what Google Image finds, on any subject related to climate or your own research field. Make them afraid for their own and their university’s budget if they don’t get better at this.

David, pick the keywords out of whatever you read, do a text search for science sites, and _read_ instead of looking at the pictures. It’ll help far more.

Here’s one place to start. Just one. Search for more.

https://www.realclimate.org/index.php/archives/2008/09/progress-in-millennial-reconstructions/

Terry, why not ask about some specific model? Here for example: http://www.ukca.ac.uk/documents/igac.pdf

I don’t understand why you expect increasing humidity without limit. This may help:

http://geog-www.sbs.ohio-state.edu/courses/G230/hobgood/ASP230Lecture14.ppt

“… Saturation does not mean that air is holding all the water vapor it can!

Air is mostly empty space. If there were no dust or other nuclei for water to condense on, then we could evaporate much more water vapor into the air before it started to condense into liquid water.”

Terry (123) — The models just put in the known physics and crank away. Not sure what you really need, but try

http://bartonpaullevenson.com/Greenhouse101.html

http://bartonpaullevenson.com/NewPlanetTemps.html

Yeah i do read a lot. I am by all means trusting in the current paradigm in climate science. But i can’t respond to his text nonetheless, because i am not very well educated as of yet. I cannot see if what he is doing is good “science” or if he has any valid complaints, and of course i read the article he posted. I also google search man! come on. I was born with the internet basically wired into my brain.

But of course you are right Hank. Thank you for the link. Now if gavin or you could help me out with the article?

@130 Hank:

Bingo.

Best,

D

Hank Roberts @ 130 – you wrote how search results were dominated by “septic sites.” ;) Nice Freudian keystroke!

Actually just this morning I was asking at tamino’s blog how to filter out Watts and McIntyre etc from blog searches. Did a little more research and figured out that you can do that easily enough with an Advanced blog search, by entering the names of the authors you’re interested in. For instance, these are the authors linked to in Open Mind’s blogroll: DeepClimate|Tamino|chriscolose|climatesight|greenfyre|SimonD|fergusbrown

Much the same could be done with Google Image search, just subtract out the domains of your denier crew “-wattsupwiththat.com,” etc. It would be a simple matter to compile the resulting URLs for these restrictive searches and post them in a FAQ here and elsewhere.
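A rough sketch of how such a restrictive search could be assembled, in Python. The helper names are hypothetical, and the `-site:` exclusion operator, the base URL and the `q` parameter are assumptions about how a Google-style search is addressed; other engines may differ.

```python
from urllib.parse import urlencode


def build_query(terms, excluded_domains):
    """Join the search terms and append one exclusion per unwanted domain."""
    exclusions = [f"-site:{domain}" for domain in excluded_domains]
    return " ".join(list(terms) + exclusions)


def search_url(terms, excluded_domains, base="https://www.google.com/search"):
    """Build a shareable URL; the base address and 'q' parameter are assumed."""
    return base + "?" + urlencode({"q": build_query(terms, excluded_domains)})


if __name__ == "__main__":
    print(search_url(["holocene", "temperature", "proxy"],
                     ["wattsupwiththat.com"]))
```

A list of such URLs, one per topic, is the sort of thing that could go in a FAQ.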

Another fun correlation (?): Graph compares rock music quality with US oil production 1949-2007. And also Bush & gas, approval ratings and gasoline prices that is.

Time for a Mars bar!

Alw (129):

“It seems disingenuous to …”

It seems disingenuous that people repeatedly make the same bogus accusations without making any effort to understand the context of the subject matter (or “bother” to read the answers already presented). Go back and read posts # 17, 36, and 78 in this thread. Then read these articles:

https://www.realclimate.org/index.php/archives/2008/11/faq-on-climate-models/

https://www.realclimate.org/index.php/archives/2009/01/faq-on-climate-models-part-ii/

Terry (111): There is no water runaway, as there is a stable mechanism of eliminating the excess from the atmosphere, as David says. Some other runaways are possible, eventually limited by other factors. Runaways often die when a key resource is exhausted.

For example, someday global warming initiates a rapid thaw of the permafrost. Then large quantities of methane and CO2 are liberated, causing more warming (with all the known feedbacks, like increasing water vapor etc.). This vicious cycle runs until all the permafrost has melted.

Possibly some other vicious circle will kick in concurrently, like thawing of the undersea clathrates. The process will then run until this resource is also exhausted. Then some other stable state may eventually be established. Risk of an uninhabitable earth (as we know it) is very high or at least non-negligible, a few thousand years from now.

Anyway, what is the time frame we would like to evaluate? Agriculture was invented some 12000 years ago, right after the latest ice age. Written history goes back some 4000 years. Many kids born today will experience the year 2100, would that be adequate? No, it is meaningless. Just an arbitrary point on a ramp-up curve (political input added to the forecast).

Maybe cloning the dinos is a good idea. They would feel at home, again. In one of the imaginative dino-world shows the “reporter” was wearing an oxygen mask…

I also like comparing models and observations, but I remain deeply skeptical about the models and their predictive capabilities, most likely for several reasons:

1. I think that models should be tested on whether or not they replicate observations made AFTER the model is published. As you are no doubt aware, the models used in AR3 do not perform well when compared to observations made after their publication date. Of course, it is too early to draw a conclusion about AR3 and too early to even look at AR4. But it’s hardly an argument in favor of the models.

2. Although you didn’t have the OHC model data handy, the models did not predict that upper ocean heat content would suddenly stop increasing in 2004. You accurately display this change, but the data is rescued by a sudden spike in ocean heat content in 2002-2003. This is most likely due to a splicing artifact, and not “real”. If you believe otherwise, I’d love a reference. [The NOAA data appears to be from Levitus et al which quite explicitly relied on splicing different data sets at that very point].

3. You don’t compare recent tropical tropospheric temperatures to the model runs. As you are probably aware, when Santer 08 is extended to use recent data (you stopped at 1999 for some reason), the models falsify.

In the long run, none of this matters. Very little time has passed since AR3 was produced. Either the models are largely correct, or they are not. In ten years, I’ll feel comfortable drawing conclusions. If the models are correct then in 2020:

1. The model ensemble will be consistent with observed surface temperatures (since AR3) while a random zero trend prediction (with equal variance) will not.

2. Trends in the upper tropical troposphere since AR3 will also be consistent with observed data (using the methodology of Santer ’08 or equivalent), while zero trend predictions are not.

3. Upper ocean heat content will resume its rise and, over the period beginning in 2004 (when we have reliable ARGO data) to 2019 will be consistent with the model predictions (and again, zero trend predictions will not be).
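The kind of consistency check proposed in the list above can be sketched in a few lines of Python. This is a minimal illustration only: the function names are hypothetical, the fit is plain ordinary least squares, and it ignores the autocorrelation in annual temperature series that a real trend analysis would normally account for, so the uncertainty it reports will tend to be too small.

```python
import math


def linear_trend(years, values):
    """Ordinary least squares trend.

    Returns (trend per decade, standard error per decade). Assumes
    independent residuals, which is a simplification for climate series.
    """
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((y - (intercept + slope * x)) ** 2
                      for x, y in zip(years, values))
    stderr = math.sqrt(residual_ss / (n - 2) / sxx)
    return 10.0 * slope, 10.0 * stderr


def consistent_with(trend, stderr, predicted_trend, n_sigma=2.0):
    """True if the predicted trend lies within n_sigma standard errors."""
    return abs(trend - predicted_trend) <= n_sigma * stderr
```

With an observed series in hand, one would call `linear_trend` on it and then ask `consistent_with` whether the model-mean trend, and separately a zero trend, fall inside the uncertainty range. The choice of two standard errors is an assumption of the sketch, not something stated in the comment.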

I am, obviously, a skeptic. I agree that additional CO2 will tend to warm the planet, but I think that climate modelers are incredibly arrogant to think that they can actually model our planet. Simon Rika gushes that “I am actually quite amazed at just how well the models do” but doesn’t appear to realize that the predictions in the first graph are from AR4 (2007). The real test is whether or not the models continue to be accurate AFTER they are published.

In data I trust. If mother earth starts following the models, I will be convinced. If not, the models are just an object lesson in scientific arrogance.

Meteorologists would be supremely confident in their models too, if they didn’t have to suffer the routine indignity of being proven wrong. By making predictions that can only be tested over a span of decades, climate modelers are just delaying the inevitable.

[Response: Again, what would you rather we do? We are stuck with the fact that there is very little predictability on short time scales because of the magnitude of the intrinsic variability. Thus predictions only narrow to something useful after a decade or two. We do hindcasts, but we are then accused of tuning to the data. We can do experiments to match paleo-climates but then we are accused of irrelevance. We can do experiments for specific short term events like Pinatubo or the impacts of ENSO, but we are still accused of somehow fixing things. None of these criticisms are valid, and the models do quite well on each of these tests. At what point might you think there is enough information to accept that their projections are pointing in the right direction? (If the answer is never, then there is no point discussing things further). – gavin]

#87 Mike Cloghessy, and if you’re not satisfied with GISS releasing all of its data, you can get the truly crazy raw station history data directly from the NCDC. The data that is behind most of the world historical temperatures is there, documented by hundreds and hundreds of climatologists or weather people. And if you’re dismayed that you have to pay for it, just hit up your local university library and ask for a guest account, which will give you completely free access to everything going back to your grandparents or earlier!

Eli,

Since we have been warming from the 1600’s (or cooling since the Holocene Optimum), when did it switch from natural to anthropogenic? And why can’t the man-made warming overcome the trivial effects of the cold phase PDO?

Pat

Gavin, having just read this new paper on mid-Pliocene Arctic Ocean temps, I would be very interested in a discussion (a new post perhaps) on efforts to model that period, or perhaps on the entire PRISM project since I don’t recall it ever being discussed here. As the paper notes, at present the models don’t seem to be able to warm the Arctic sufficiently, and this may be related to their inability to get the current Arctic sea ice reduction right. I find the confluence of very warm Arctic temperatures with CO2 levels approximately the same as today’s on a planet virtually identical to ours to be striking evidence.

That should be “septic™ Stoat”

http://mustelid.blogspot.com/2004/12/septics-and-skeptics-denialists-and.html

David, it’s not worth pursuing stuff at places like Watts, especially old topics like that, where people have already tried to make comments on it; you can see they gave up. But there’s no end to sites like that claiming wonderful discoveries that would win them Nobel prizes if they only wanted to publish them. Lots of pictures and assertions, characteristically, but nothing solid.

Read the real science. You won’t “win” a “debate” with anyone who’s relying on the PR picture sites. That’s my best advice. Perhaps someone who’s gagged all the way through that stuff will have something helpful to say.

@135 KLR:

The overarching issue is the dominance of the information by replacing it with…with…well, preferred information.

This surely is weakening whatever democracy we have left in the US of A, as curious individuals aren’t going to go to such lengths on The Google, esp if they don’t know what to filter.

Best,

D

KLR, the point is what someone new to the area, such as David Johansen sees.

Re Hank (@131) “… Saturation does not mean that air is holding all the water vapor it can!” Thanks, but I am well versed in standard water vapour physics/chemistry, and my question was related to how the model constrains it. I had hoped for a simple quick explanation. A bit of digging turned up http://physics.nmt.edu/~krm/minschwaner_dessler_jcli2004.pdf which outlines the combination of RH, SH, convective detrainment and radiative cooling etc that limit H2O(g) in the models, and it is not a simple boundary condition (perhaps why Gavin decided not to reply). I will keep looking for other examples. Cheers

@Hank Roberts: “any climate question, do a Google image search, and the septic sites dominate the results”

Septic indeed.

Isn’t the septic dominance a simple consequence of the large effort of the denialists to position their view in search engines as opposed to what an honest census might provide? Google ain’t exactly Nate Silver, and doesn’t try to be.

Re #133: David, at a quick glance even I (being no expert) noticed that weird hinging at 1950, which invalidates everything else he did. One thing we can be quite sure of is that the proxies don’t all agree in 1950 or on any other date, so forcing them to do so will throw off everything else. Scanning through the comments, I noticed a Tom P (don’t know who he is, but Watts identified him as working for NASA) who identified that and some other problems.

In general, there’s no shortage of this sort of crankery on the toobs, and one thing I can guarantee you is that the authors of such things are entirely unpersuadable by even the most expert input. As Ray Ladbury can tell you, physics journals get submissions all the time purporting to overturn the consensus on things like relativity. Being a very prominent field at the moment, climate science gets more than its fair share of such material.

pat (140) __ Please do read climatologist W.F. Ruddiman’s popular “Plows, Plagues and Petroleum”; you might care to read his guest post here on RealClimate.

@Mike Cloghessy#121: Is this raw data or has this data been adjusted, homogenized and re-adjusted?

In other words, you don’t have a clue and are parroting the talking points fed to you. Too bad you didn’t take the time to understand those talking points.

Here’s a clue:

GHCN takes daily CLIMAT reports and compiles them into monthly averages for Tmax, Tmin, and Tmean. The Tmean file is named v2.mean. For most purposes, this is considered the raw data. The GHCN value added data is in a file named v2.adj. See the difference? v2.mean is raw. v2.adj is value-added. The GISS team uses v2.mean (the raw data) and does their own value added adjustments. They do those adjustments right out in the open – the complete code is available. Many people have taken this code and recompiled it.

That is the rough outline. If you want detail (for instance on the use of the adjusted USHCN in GISTEMP) see the references below.

Of course, no station reports monthly Tmax, Tmin, and Tmean. This is compiled from daily sources and therefore (gasp) not raw, but compiled. Additional QA is provided as described here – but you won’t find the TOB or UHI adjustments here, that is in the v2.adj data set.
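To make the "compiled, not raw" point concrete, here is a minimal Python sketch of turning daily readings into monthly Tmax, Tmin and Tmean. The record layout and the function name are hypothetical, and taking Tmean as the midpoint of mean Tmax and mean Tmin is an assumption of the sketch; the actual GHCN processing has its own completeness rules and QA steps, described in the references below.

```python
from collections import defaultdict


def monthly_means(daily_records):
    """Compile daily readings into monthly means.

    daily_records is an iterable of (year, month, tmax_c, tmin_c) tuples,
    with None marking a missing value. Days with either value missing are
    skipped, which is a simplification of real completeness checks.
    """
    sums = defaultdict(lambda: [0.0, 0.0, 0])  # sum of tmax, sum of tmin, day count
    for year, month, tmax, tmin in daily_records:
        if tmax is None or tmin is None:
            continue
        acc = sums[(year, month)]
        acc[0] += tmax
        acc[1] += tmin
        acc[2] += 1

    result = {}
    for key, (sum_max, sum_min, count) in sorted(sums.items()):
        mean_max = sum_max / count
        mean_min = sum_min / count
        result[key] = {
            "tmax": mean_max,
            "tmin": mean_min,
            "tmean": (mean_max + mean_min) / 2.0,  # assumed definition of Tmean
            "days": count,
        }
    return result
```

Even this trivial step involves choices (how to treat missing days, how to define the mean), which is why the monthly file is better described as compiled than as untouched.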

I didn’t catch the Antarctica reference, but if your Australia reference was to the recent post by Willis Eschenbach on WUWT, his initial post was either deliberately obscuring the differences between GHCN v2.mean, v2.adj, GISS ds1, ds2, ds3, CRUTEM, and IPCC – or he has a poor understanding of the differences himself. His blog post was a prime example of misleading, poorly edited commentary. Knowing how long he has been around the climate blogs, I lean towards the possibility that he was deliberately being misleading. Too bad you were suckered in.

(Rereading his blog post just now, I see that he has severely edited out much of the confusing cross-references to GISS, CRU, and IPCC. So maybe he is just that poor of a writer. Too bad it took him days or weeks to get to a readable version.) -rb

References if you are actually interested in getting off the teleprompter:

http://www.ncdc.noaa.gov/oa/climate/ghcn-monthly/images/ghcn_temp_overview.pdf

http://www.ncdc.noaa.gov/oa/climate/ghcn-monthly/images/ghcn_temp_qc.pdf

ftp://ftp.ncdc.noaa.gov/pub/data/ghcn/v2

ftp://ftp.ncdc.noaa.gov/pub/data/ushcn/v2/monthly/readme.txt

http://data.giss.nasa.gov/gistemp/sources/gistemp.html