In the Northern Hemisphere, the late 20th / early 21st century has been the hottest time period in the last 400 years at very high confidence, and likely in the last 1000 – 2000 years (or more). It has been unclear whether this is also true in the Southern Hemisphere. Three studies out this week shed considerable new light on this question. This post provides just brief summaries; we’ll have more to say about these studies in the coming weeks.

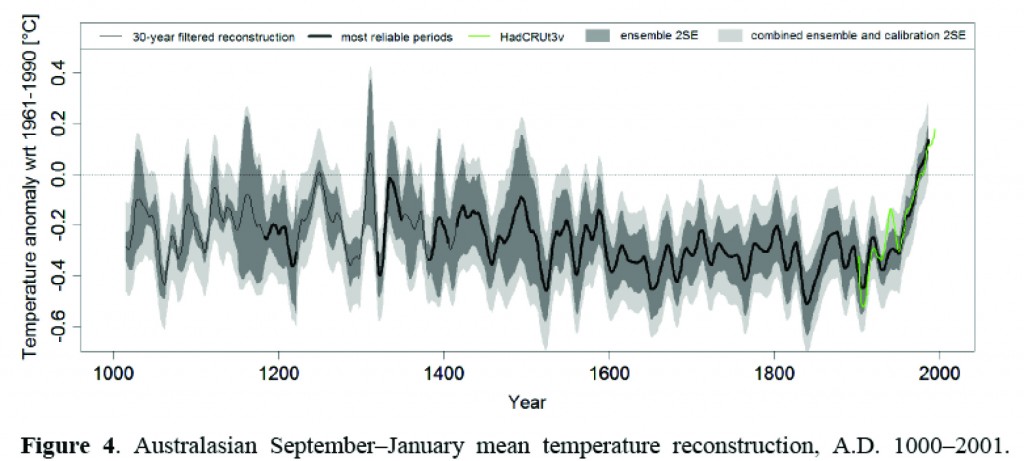

First, a study by Gergis et al., in the Journal of Climate [Update: this paper has been put on hold – see comments] uses a proxy network from the Australasian region to reconstruct temperature over the last millennium, and finds what can only be described as an Australian hockey stick. They use an ensemble of 3000 different reconstructions, using different methods and different subsets of the proxy network. Worth noting is that while some tree rings are used (which can’t be avoided, as there simply aren’t any other data for some time periods), the reconstruction relies equally on coral records, which are not subject to the same potential (though often-overstated) issues at low frequencies. The conclusion reached is that summer temperatures in the post-1950 period were warmer than anything else in the last 1000 years at high confidence, and in the last ~400 years at very high confidence.

Gergis et al. Figure 4, showing Australian mean temperatures over the last millennium, with 95% confidence levels.

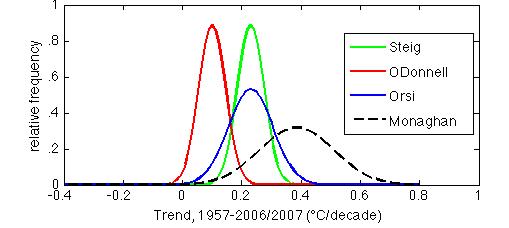

Second, Orsi et al., writing in Geophysical Research Letters, use borehole temperature measurements from the WAIS Divide site in central West Antarctica, a region where the magnitude of recent temperature trends has been the subject of considerable controversy. The results show that the mean warming of the last 50 years has been 0.23°C/decade. This result is in essentially perfect agreement with that of Steig et al. (2009) and reasonable agreement with Monaghan (whose reconstruction for nearby Byrd Station was used in Schneider et al., 2012). The result is totally incompatible (at >80% confidence [Update: originally stated as >95%; see comments]) with that of O'Donnell et al. (2010).

Probability histograms of temperature trends for central West Antarctica (Byrd Station [80°S, 120°W; Monaghan] and WAIS Divide [79.5°S, 112°W; Orsi, Steig, O’Donnell]), using published means and uncertainties. Note that the histograms are normalized to have equal areas; hence the greater height where the published uncertainties are smaller.

This result shouldn’t really surprise anyone: we have previously noted the incompatibility of O’Donnell et al. with independent data. What is surprising, however, is that Orsi et al. find that warming in central West Antarctica has actually accelerated in the last 20 years, to about 0.8°C/decade. This is considerably greater than reported in most previous work (though it does agree well with the reconstruction for Byrd, which is based entirely on weather station data). Although twenty years is a short time period, the 1987-2007 trend is statistically significant (at p<0.1), putting West Antarctica definitively among the fastest-warming areas of the Southern Hemisphere -- more rapid than the Antarctic Peninsula over the same time period. We and others have shown (e.g. Ding et al., 2011, doi:10.1038/ngeo1129) that the rapid warming of West Antarctica is intimately tied to the remarkable changes that have also occurred in the tropics in the last two decades.

Note that the Orsi et al. paper actually focuses very little on the recent temperature rise; it is mostly about the "Little-ice-age-like" signal of temperature in West Antarctica. Also, these results cannot address the question of whether the recent warming is exceptional over the long term -- borehole temperatures are highly smoothed by diffusion, and the farther back in time, the greater the diffusion. We'll discuss both these aspects of the Orsi et al. study at greater length in a future post.

Last but not least, a new paper by Zagorodnov et al. (doi:10.5194/tcd-5-3053-2011) in The Cryosphere uses temperature measurements from two new boreholes on the Antarctic Peninsula to show that the decade of the 1990s (the paper states “1995+/-5 years”) was the warmest of at least the last 70 years.
This is not at all a surprising result from the Peninsula -- it was already well known that the Peninsula has been warming rapidly -- but these new results add considerable confidence to the assumption that that warming is not just a recent event. Note that the “last 70 years” conclusion reflects the relatively shallow depth of the boreholes, and the fact that diffusive damping of the temperature signal means that one cannot say anything about high frequency variability prior to that. One cannot infer from these data that it was warmer than present more than 70 years ago. In the one and only century-long meteorological record from the region (on the Island of Orcadas, just north of the Antarctic Peninsula) warming has been pretty much monotonic since the 1950s, and the period from 1903 to 1950 was cooler than anything after about 1970 (see e.g. Zazulie et al., 2010). Whether recent warming on the Peninsula is exceptional over a longer time frame will have to await new data from ice cores.

References

- J. Gergis, R. Neukom, S.J. Phipps, A.J.E. Gallant, and D.J. Karoly, "Evidence of unusual late 20th century warming from an Australasian temperature reconstruction spanning the last millennium", Journal of Climate, pp. 120518103842003, 2012. http://dx.doi.org/10.1175/JCLI-D-11-00649.1

- A.J. Orsi, B.D. Cornuelle, and J.P. Severinghaus, "Little Ice Age cold interval in West Antarctica: Evidence from borehole temperature at the West Antarctic Ice Sheet (WAIS) Divide", Geophysical Research Letters, vol. 39, 2012. http://dx.doi.org/10.1029/2012GL051260

- E.J. Steig, D.P. Schneider, S.D. Rutherford, M.E. Mann, J.C. Comiso, and D.T. Shindell, "Warming of the Antarctic ice-sheet surface since the 1957 International Geophysical Year", Nature, vol. 457, pp. 459-462, 2009. http://dx.doi.org/10.1038/nature07669

- D.P. Schneider, C. Deser, and Y. Okumura, "An assessment and interpretation of the observed warming of West Antarctica in the austral spring", Climate Dynamics, vol. 38, pp. 323-347, 2011. http://dx.doi.org/10.1007/s00382-010-0985-x

- R. O’Donnell, N. Lewis, S. McIntyre, and J. Condon, "Improved Methods for PCA-Based Reconstructions: Case Study Using the Steig et al. (2009) Antarctic Temperature Reconstruction", Journal of Climate, vol. 24, pp. 2099-2115, 2011. http://dx.doi.org/10.1175/2010JCLI3656.1

- N. Zazulie, M. Rusticucci, and S. Solomon, "Changes in Climate at High Southern Latitudes: A Unique Daily Record at Orcadas Spanning 1903–2008", Journal of Climate, vol. 23, pp. 189-196, 2010. http://dx.doi.org/10.1175/2009JCLI3074.1

“The result is totally incompatible (at >95% confidence)…”

Is that a completely fair statement? The two pdfs are different, presumably at 95% confidence, and clearly the match between Steig and Orsi is MUCH better than O’Donnell and Orsi, but eyeballing it looks like the overlap of the two pdfs is much greater than 5%… if blue was a model, and red was observations, I would not say that the model trend was falsified at 95% confidence. But maybe this is a different kind of comparison?

[Response: What matters here is the paired probability. The chances of the real values in the Orsi distribution being at the LOW end, and simultaneously O’Donnell et al. being at the high end is the product of the two probabilities together. For example, two overlapping Gaussian PDFs that overlap at 1-sigma are compatible only at (.33/2)^2 = 0.03 probability. –eric]

Thanks, Eric, this is fascinating, and fills gaps in our knowledge of the Southern Hemisphere.

The key, of course, is the trend line, with nothing on the horizon to reverse it. We know now that if we wait until the danger is much more obvious, it will be too late. This knowledge should be driving climate scientists to be more proactive, via community meetings, pressure on political leaders and, especially, insistent approaches to editorial boards and media companies. They are humans, too, and if they are presented with the facts we might have a chance. Mandia, Mann, and Hansen have shown leadership here. The time is right to take this to the next level.

The Orsi paper is compatible with O’Donnell when you consider the location of the bore hole (WAIS divide). The drill site is located in an area depicted by the reddish color on the O’Donnell map, which corresponds to ~0.2C/decade temperature rise.

https://www.realclimate.org/index.php/archives/2010/12/a-brief-history-of-knowledge-about-antarctic-temperatures/

The Orsi paper also shows that temperatures during the LIA were only half as cold in the Southern Hemisphere as the Northern.

[Response: That is incorrect. I used the data from the specific location of WAIS Divide in the calculation I show. As O’Donnell et al. show in their supplement, they *can* get agreement with the borehole data by using slightly different — and well justified — truncation parameters in their truncated least squares regressions. As for the LIA, *that* is where the specificity of location is relevant. Nothing can be said about “the Southern Hemisphere” in general during the LIA from these results alone.–eric]

Climate change is well attested to. However, if CO2 (greenhouse effect) is the main cause, should not the main warming occur in the winter, and not in the summer?

[Response: No one said anything about the rapid warming in West Antarctic being due to direct radiative forcing from CO2! Incidentally though, the West Antarctica warming *is* dominated by cold season — not by summer. Read the referenced papers.–eric]

“For example, two overlapping Gaussian PDFs that overlap at 1-sigma are compatible only at (.33/2)^2 = 0.03 probability”

Thanks. My mental guesstimate on that pdf multiplication step was obviously a bit off, the overlap looked larger than the math states.

Though… I’m still a little bothered by this comparison method. Say, we take a gaussian pdf estimate of a trend, .2+-.1, and then later God comes down and tells us that the trend is .2+-.00001. If I multiply the pdfs together, I get a very small number, despite the fact that my original pdf had the same best estimate, just much more uncertainty.

(or is the size of the pdf in this case part of the information: eg, there is some year to year noisiness in temperature, such that I can know the trend perfectly and it should still have a pdf?)

(I really need to go back and learn more statistics some day)

Eric,

Can you specify your response further? Your graph for O’Donnell shows a peak frequency at ~0.8C/decade. The O’Donnell paper shows that Central West Antarctica varies from -0.1 to 0.3 C/decade, which very likely matches your graph (no argument there). However, the drill site from the Orsi paper appears to reside in the area that O’Donnell et al. show to be ~0.2C/decade. I am looking at the specific locations of both papers, and they appear to show similar temperature rises at similar sites. O’Donnell et al. do show a large gradient in their temperature rise plot, so precise location is critical for comparison. If I am incorrect, please explain.

[Response: I used the grid box closest to WAIS Divide (79.5 S, 112W) in both cases. If you average over a few nearby grid boxes, same result; see comment from Ned #2 below. –eric]

I see how you get the high confidence that currently we are warmer than the past 1000 years. I have a couple of questions, though.

Because the accuracy and precision decrease going back in time, does not the average/mean/mode/median (whichever best describes the centre line) become, in essence, smoothed? Does this not mean that the actual highs and lows become represented by lower numbers as we go back? I.e, the temperature range OF THE MEDIAN is inversely proportional to time? If so, then we can only say that the MWP was MORE than the depicted temperature.

During the MWP the northern hemisphere portion representing western Europe over to Greenland and Newfoundland was clearly warmer than present. Do you have to consider this portion non-representative of the world back then to say that the MWP was cooler than today? If the MWP northern portion as I described was non-representative of the world back then, why would it be today? Could today’s Arctic/Northern be unusually warm while the Antarctic/Southern is warm today, as it was unusually warm then while, presumably, the Antarctic/Southern was cool then?

I’m trying to understand how global “global” has to be to be non-regional. Also, how representative of “reality” old data numbers are relative to new data numbers.

Thanks for this! Good info. (Quips about ‘the Team’ and equipment suppressed here.)

A slight typo: “more rapid the the Antartic Peninsula.” (Presumably that should have been a ‘than.’)

#4–And we were talking about summer versus winter warming just where, exactly?

..and “Antarctic,” of course…

[Response: Fixed, thanks.–eric]

Dan H. writes: The drill site is located in an area depicted by the reddish color on the O’Donnell map, which corresponds to ~0.2C/decade temperature rise.

and also: the drill site from the Orsi paper appears to reside in the area that O’Donnell et al. show to be ~0.2C/decade.

Are you just eyeballing that? I did a rough georeferencing of the O’Donnell map, plotted the location of the drill site, measured the RGB values, and compared them to the RGB values in the map legend. My plot had the site falling near the corners of four grid cells, so I’m not sure what the actual value is, but it appears to be in the range +0.10 to +0.15C/decade. It is definitely lower than Orsi’s +0.23C/decade.

I don’t see any reason to doubt the histograms shown in the figure in this post.

[Response:Yes, precisely. –eric]

Interesting to see evidence of the MWP and LIA in the southern hemisphere.

[Response: What years are you defining these periods with? – gavin]

“Because the accuracy and precision decrease going back in time, does not the average/mean/mode/median (whichever best describes the centre line) become, in essence, smoothed? Does this not mean that the actual highs and lows become represented by lower numbers as we go back?”

No. To the extent your logic is right about the smoothing, the *highs* would be ‘represented by lower numbers’, but the *reverse* would be true for the lows. There’s no reason to think the mean (or median) would shift–absent systemic bias, of course.
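The point about smoothing can be checked with a toy calculation. The sketch below is only an illustration under stated assumptions: the series is a made-up symmetric oscillation (not proxy data), and a plain moving average stands in for whatever smoothing a real reconstruction implies. It shows the highs being pulled down and the lows being pulled up, while the mean stays close to where it was.

```python
from statistics import mean

def moving_average(values, window):
    """Average over each run of `window` consecutive values."""
    return [mean(values[i:i + window]) for i in range(len(values) - window + 1)]

# Hypothetical anomaly series: a symmetric oscillation about zero (NOT real data).
series = [0.0, 1.0, 0.0, -1.0] * 6
smoothed = moving_average(series, window=3)

print("raw:      min, max, mean =", min(series), max(series), mean(series))
print("smoothed: min, max, mean =",
      round(min(smoothed), 3), round(max(smoothed), 3), round(mean(smoothed), 3))
```

The small residual in the smoothed mean comes from the ends of the series, where the window is cut off; it is not a systematic shift.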

If you are going to calculate the probability that two things are incompatible, then you need to provide a working technical definition of what constitutes incompatibility in this situation. What exactly is your definition?

It appears that you have done the following (please correct me if I have misinterpreted any of the steps).

-Take four “independent” estimates of the temperature trend for central West Antarctica along with their standard errors.

-Construct four Gaussian distributions whose means and standard deviations are those particular values.

-Interpret these distributions as representing genuine probability density functions of the actual temperature trend. It should be noted that in reality the actual trend being estimated is a fixed (i.e., non-random) unknown value.

-Declare that the O’Donnell estimate is “totally incompatible” with various others.

When a reasonable question is raised on this in comment 1, you provide an inline response containing what is claimed to be a probability calculation (bold mine):

Ignoring my previous objections on using the probability densities to calculate realistic probabilities, there are some further issues with what you have done.

From the number, .33, given in the example, it appears that for each curve you have calculated a one-sided area of the portion of that curve “under” the other distribution and then multiplied these values together to calculate the probability of the event defined in the bolded text. From your conclusion, you imply that that event constitutes “compatibility”.

How exactly does one being larger than usual AND at the same time the other being smaller than usual constitute compatibility? One would think that the two values would be more compatible if EITHER the larger one was smaller and the smaller one remained smaller OR the smaller one was larger and the larger one remained the same. The calculation for your example would then be .325*.675 + .675*.325 = 0.439, considerably different from the result given in your comment.

In case you are having difficulty accepting this, try doing your calculation on two identical Gaussians shifted by only .01 of a standard deviation. Do you really think that the compatibility of those two distributions is a mere 0.25?

[Response: I defined “incompatible” very precisely. NB I think you are right that I made the wrong calculation though — see Tamino’s comment below. But your calculation is wrong for the example of moving two identical Gaussians by 0.01 std. devs. You need to calculate the joint probability in overlapping part of the distributions, which in the example you gave is essentially all of the distribution. You’ll find the probability of incompatibility in that case is very very low. In fact, about 99.6% of the distributions will overlap, so the probability that they are the same is .996^2 = 99.2%. That’s just a wee bit different than the probability for O’Donnell vs. reality.–eric]

Oops!

Slight calculation correction:

.325*.675 + .675*.325 = 0.439

should read

.159*.841 + .841*.159 = 0.267
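The corrected arithmetic above is easy to reproduce. This is a minimal sketch that assumes, as the comment does, two unit-variance Gaussians that cross at one standard deviation, so that the relevant one-sided tail area is the standard normal CDF at -1.

```python
from statistics import NormalDist

std_normal = NormalDist()        # mean 0, standard deviation 1

tail = std_normal.cdf(-1.0)      # one-sided area beyond 1 sigma
body = 1.0 - tail                # everything else

# "Either one value moves into the overlap while the other stays put, or vice versa."
either_or = tail * body + body * tail

print(round(tail, 3), round(body, 3), round(either_or, 3))  # 0.159 0.841 0.267
```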

The ocean component is also important. Here’s a good recent article on model-based predictions of oceanic subsurface warming in the Antarctic and Arctic regions:

http://www.nature.com/ngeo/journal/v4/n8/full/ngeo1189.html

“We find that in response to a mid-range increase in atmospheric greenhouse-gas concentrations, the subsurface oceans surrounding the two polar ice sheets at depths of 200 – 500 m warm substantially compared with the observed changes thus far. Model projections suggest that over the course of the twenty-first century, the maximum ocean warming around Greenland will be almost double the global mean, with a magnitude of 1.7–2.0 C. By contrast, ocean warming around Antarctica will be only about half as large as global mean warming, with a magnitude of 0.5–0.6 C. A more detailed evaluation indicates that ocean warming is controlled by different mechanisms around Greenland and Antarctica. We conclude that projected subsurface ocean warming could drive significant increases in ice-mass loss, and heighten the risk of future large sea-level rise.”

I’m gonna agree with RomanM about the incompatibility issue. I too get the impression that the plotted pdf’s are based on a central estimate and standard deviation estimate. If two identical Gaussians (i.e., same standard deviation) intersect at the 1-sigma point, then the difference of their means is 2-sigma. But the standard deviation of the difference is sigma times sqrt(2), so if we compute a z-statistic for the difference it’s only 2/sqrt(2) = sqrt(2). The two-sided p-value for that is 0.157. Am I missing something?

I’ll add that using the “joint probability” argument can be tricky (it has to be done just right), and the definition of “overlappy” is unclear.
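The z-statistic argument above can be written out in a few lines. This is a sketch under the same assumptions as the comment: both estimates are Gaussian, their errors are independent, and the test is on the difference of the two means. The function name `two_sided_p` is just an illustrative label.

```python
import math

def two_sided_p(mean_a, sd_a, mean_b, sd_b):
    """z-statistic and two-sided p-value for the difference of two
    independent Gaussian estimates."""
    sd_diff = math.hypot(sd_a, sd_b)          # sqrt(sd_a**2 + sd_b**2)
    z = abs(mean_a - mean_b) / sd_diff
    p = math.erfc(z / math.sqrt(2.0))         # P(|Z| > z) for a standard normal
    return z, p

# Two identical-width Gaussians that cross at 1 sigma: the means are 2 sigma apart.
z, p = two_sided_p(0.0, 1.0, 2.0, 1.0)
print(round(z, 3), round(p, 3))  # 1.414 0.157
```

With equal means the same function returns p = 1 regardless of how different the two uncertainties are, which is the behaviour one would want from a compatibility measure.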

[Response: Tamino, mea culpa, you are right (though Roman M is still wrong about his claim about two distributions that are only .01 sigma off in their mean). In fact the distributions overlap at slightly more than 1 std, so what the results actually show is that there is >80% chance O’Donnell is wrong compared with reality as measured by the boreholes. I would still call that incompatible. The irony should not be lost that the blogosphere was alive with shrill claims of fraud etc. on the grounds that O’Donnell et al. got a *different* result from Steig et al. (which is identical to Orsi at very high confidence). Now it’s going to be alive with claims that O’Donnell cannot be rejected at better than 80% confidence? –eric]

I still don’t see where there is an operational definition. Please point it out to me.

From what I infer reading your example, the “overlappy” portion is the part under the function min(f1(x), f2(x)) where f1 and f2 are the two densities. In the example, this turns out to be the area outside of one standard deviation for the Gaussian which is about .318. In the example I cite, it is for all practical purposes almost 1.

From the description in your inline comment 1, you then do the calculation for the simultaneous occurrence of two specific independent events (the choice of which really not making sense to me): (.318/2)^2 = 0.025. In my example, this would be (1/2)^2 = 0.25.

Perhaps you could please do the “correct” calculation in the latter case if I am not understanding you properly.
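The numbers being argued over here can be reproduced directly. The sketch below assumes the reading given in this comment: the "overlappy" portion is the area under min(f1, f2) for two unit-variance Gaussians, and the disputed calculation squares half of that area. It uses `NormalDist.overlap` from the Python standard library (3.8 or later), which returns that overlapping area.

```python
from statistics import NormalDist  # NormalDist.overlap needs Python 3.8+

def overlap_area(separation_in_sigma):
    """Area under min(f1, f2) for two unit-variance Gaussians whose means
    are `separation_in_sigma` standard deviations apart."""
    left = NormalDist(mu=0.0, sigma=1.0)
    right = NormalDist(mu=separation_in_sigma, sigma=1.0)
    return left.overlap(right)

# 2.0: curves cross at 1 sigma (the inline example); 0.01: the "tiny shift" case.
for separation in (2.0, 0.01):
    area = overlap_area(separation)
    halved_squared = (area / 2.0) ** 2   # the (overlap/2)^2 recipe under dispute
    print(f"separation={separation}: overlap={area:.3f}, "
          f"(overlap/2)^2={halved_squared:.3f}")
```

For the tiny shift the overlap comes out at about 0.996, matching the "99.6%" in the earlier response, while the (overlap/2)^2 recipe gives roughly 0.25, which is the figure objected to in this comment.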

[Response:See the response I already gave. I think Tamino is right; I will get out the actual data and make sure I have the precise location of overlap of the distributions. The answer won’t change much: there is “only” about an 80% probability that O’Donnell et al. got the trend wrong.–eric]

“The chances of the real values in the Orsi distribution being at the LOW end, and simultaneously O’Donnell et al. being at the high end is the product of the two probabilities together.”

I’m still a bit confused as to what this means. When I try to experiment with continuous distributions, I get nonsensical answers. For example, if I assume a uniform distribution from 0 to 1 (ie, density 1), and multiply by a 2nd uniform distribution from 0 to 1, I get back a distribution from 0 to 1 which integrates to 1. Great, they are 100 percent compatible. But if I do the same operation with a uniform distribution of 0 to 2 (ie, density one half), multiply by a 2nd uniform distribution of 0 to 2, I get back a uniform distribution from 0 to 2 with density one quarter… which integrates to 50 percent… which means that I’m doing something wrong here…

To make it simpler, I thought about a six-sided die. The pdf of a normal die is a discrete function of 1/6 probability at each of the integers from 1 to 6. If I take a 2nd normal die, then I can take the product of the two probabilities, and I get 1/36 from 1 to 6… which sums to 1/6… which is the probability that the 2nd die gives me the same number as the first die. Which suggests that the product of pdfs is actually telling me something about the probability that random draws from each pdf will be the same, rather than the probability that the first pdf is compatible with the second. Or am I doing this wrong?
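The dice reasoning and the uniform-distribution puzzle above can both be checked exactly. This is a small sketch using exact fractions; the helper name `uniform_product_integral` is hypothetical and simply evaluates the integral of (1/width)^2 over an interval of length `width`.

```python
from fractions import Fraction
from itertools import product

# Fair six-sided die: probability 1/6 on each face.
die = {face: Fraction(1, 6) for face in range(1, 7)}

# Sum over faces of p(face) * p(face).
sum_of_products = sum(die[face] * die[face] for face in die)

# Brute-force count of how often two independent dice show the same face.
matches = sum(1 for a, b in product(die, repeat=2) if a == b)
prob_same_face = Fraction(matches, 36)

def uniform_product_integral(width):
    """Integral over [0, width] of the squared density of Uniform(0, width)."""
    density = Fraction(1, width)
    return width * density * density

print(sum_of_products, prob_same_face)                              # 1/6 1/6
print(uniform_product_integral(1), uniform_product_integral(2))     # 1 1/2
```

For the die, the sum of products equals the probability that two draws coincide, as the comment suspects. For continuous distributions the integral of the product scales as 1/width, so it behaves like a density rather than a probability, which is one way to see why it does not stay at 100% for two identical distributions.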

As an alternate way of looking at it: assuming Orsi is “truth”, can I integrate the Orsi pdf to the right of the intersection of the green and red lines and state that is the probability that Steig et al. is superior to O’Donnell? This makes more intuitive sense to me… eg, Orsi indicates some possibility that reality falls in a region where O’Donnell has higher probability density than Steig, in which case O’Donnell would have been a better bet. But most (80 to 90 percent?) of the Orsi probability density is to the right of that point…

[Response: This doesn’t make any sense. No statistical fiddlings are going to wind up with the conclusion that two distributions with identical means (mine and Orsi’s) are LESS likely to be the same than two distributions with means separated by a full standard deviation (O’Donnell’s and Orsi’s)! –eric]

So you know what the true value of the parameter is. Who needs statistics? ;)

[Response:Actually, yes! The borehole data is a direct measure of the true value. The statistical reconstruction approach I originally used — and the mean trend of which is validated by Orsi et al. — is not needed anymore, since now we have direct measurements of temperature from the borehole. The O’Donnell et al. results are not needed anymore either.–eric]

I didn’t say that my answer was “right”. What I said was that .25 would be the answer if the method that you used was applied to the case of a small shift sideways.

[Response:No, it wouldn’t.]

This brings me back to my earlier criticism of the misconception that the curves drawn somehow represent a probability distribution for an unknown constant value. This is tantamount to the interpretation of a confidence interval as “the probability that the unknown parameter is in that given interval is 95%” or whatever the confidence level might be. It is simply not a true interpretation.

On a constructive note, let me suggest an off-the-cuff definition of “compatibility” for estimates, not for questionable distributions defined around them:

Two estimates for a parameter are incompatible at a given significance level α1 if for each value in the parameter space, the null hypothesis is rejected at the α2 level by at least one of the estimates.

The two levels of significance may possibly be different depending on the underlying situation. For a Gaussian mean, this reduces to the two single sample confidence intervals overlapping.
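As one possible reading of the definition above, for Gaussian estimates the check comes down to whether the two single-sample confidence intervals overlap: if they do not, no parameter value is accepted by both estimates. The sketch below assumes that reading, the same confidence level for both estimates, and purely illustrative inputs (unit-variance Gaussians, as in the examples earlier in the thread). The function names are hypothetical.

```python
from statistics import NormalDist

def confidence_interval(estimate, std_err, level=0.95):
    """Two-sided Gaussian confidence interval for a single estimate."""
    z = NormalDist().inv_cdf(0.5 + level / 2.0)   # about 1.96 for 95%
    return estimate - z * std_err, estimate + z * std_err

def intervals_overlap(a, b):
    """True if closed intervals a = (lo, hi) and b = (lo, hi) share any point."""
    return a[0] <= b[1] and b[0] <= a[1]

def incompatible(est_a, se_a, est_b, se_b, level=0.95):
    """Incompatible (under this reading) when the intervals do not overlap."""
    return not intervals_overlap(confidence_interval(est_a, se_a, level),
                                 confidence_interval(est_b, se_b, level))

# Illustrative values only: means 2 sigma apart, then 4 sigma apart.
print(incompatible(0.0, 1.0, 2.0, 1.0))  # False: the 95% intervals overlap
print(incompatible(0.0, 1.0, 4.0, 1.0))  # True: the 95% intervals are disjoint
```

Note that this criterion is more conservative than a test on the difference of the two means, so the two approaches can disagree for intermediate separations.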

[Response: Actually the confidence interval for Orsi et al. is indeed the probability (given their assumptions for their inverse modeling, of course) that the unknown parameter truly lies in a particular place under the curve. In reality, this is not really the same for Steig et al. or O’Donnell et al., where in fact the ‘true’ value is known and the uncertainties reflect the magnitude of the trend vs. the magnitude of the variance -- that is, the likelihood of this particular estimate of the trend changing with e.g. one more year of data. So I agree with you there – strictly speaking this is somewhat apples and oranges. However, it’s what we have to work with. I would certainly be interested if you have a different suggestion for how to use this information, but ultimately the question is whether O’Donnell et al. underestimate the trend significantly. Do you have some clever way of somehow showing they are NOT, while maintaining the claim that O’Donnell et al. is different from Steig et al.? –eric]

[Response: Minor update, and then I really do need to get back to work: the correct calculation is, as you pointed out, to include the possibility that while one ‘true’ value lies in the overlapping tail, the other stays where it is (and all such permutations). What’s then needed is the cumulative distribution function for the O’Donnell et al. results, up to the overlap point (~1.6), and the same for Orsi et al. Then the result following Tamino’s point is p~0.19. So my statement, on this basis, of >80% chance that O’Donnell et al. were wrong is valid. Oh, and the chance that Steig et al. (2009) was right is umm... well, you do the math. ;) –eric]

If you ignore the blade of the stick, and examine only the period from 1000 to 1900 it seems to me that there is a slight cooling trend (with decadal and century scale variations superimposed). Is this correct? Is the trend significant?

The reason I ask is that a millennial cooling trend is well established in the northern high latitudes. (For a general overview readers may be referred to Kaufman 2009, key figure reproduced as figure 20 in the Copenhagen Diagnosis, http://www.ccrc.unsw.edu.au/Copenhagen/Copenhagen_Diagnosis_LOW.pdf; for a specific example from Iceland see Axford et al., 2008, http://www.earth.northwestern.edu/~yarrow/axford_et_al_jopl_2008.pdf.)

The high northern latitude decline in temperatures is not particularly surprising, as this is what one would expect (at least for summertime temperatures) from insolation changes. But these precessional insolation changes should only cool in the northern hemisphere, not the southern. So, to see the same cooling trend at sites in the southern hemisphere is interesting.

[Response: My view is that this is a conundrum only if one assumes that the only thing that matters is seasonal insolation intensity. Peter Huybers has done nice work showing that that is by no means likely to be the case, and shows that actually S and N high latitudes OUGHT to be in phase (both cooling through the Holocene). See Huybers and Denton, 2008. Also, a few model runs I’ve looked at for the late Holocene show this too. –eric]

I thought the decline over the past some thousands of years, before human changes became important, was the expected one from the post-ice-age peak.

This trend:

http://www.globalwarmingart.com/images/b/bb/Holocene_Temperature_Variations_Rev.png

consistent with this pattern:

http://www.globalwarmingart.com/images/8/8f/Ice_Age_Temperature_Rev.png

Those are from http://www.globalwarmingart.com/wiki/Global_Warming_Art:About

Hank,

The decline from the “post ice age peak” you refer to is driven by the orbital changes, in this case the precession of the equinoxes. These changes are expected to be most clearly apparent in the summertime temperatures in the northern hemisphere, which is what is observed.

However, this particular insolation signal should enhance summertime temperatures in the southern hemisphere; currently we are heading to a time when the NH winter solstice (and SH summer solstice) is at perihelion. This will happen about 1.5K years from now. When that happens SH summertime temperatures should be at their warmest, and NH summertime temperatures should be at their coldest. If other orbital factors (obliquity and eccentricity of the orbit) were “favorable” a glaciation might ensue. Of course with anthropogenic global warming this is unlikely to happen (see Archer’s Long Thaw book for details).

But while we are in an interglacial, it is not a given that an insolation change that lowers NH summer temperatures should also be expressed as a cooling trend in the southern hemisphere proxies.

I can think of several ways in which this might happen but before embarking on explanations, I’d like to know if the cooling trend is significant.

[Response: I can’t speak to the cooling trend in the Gergis et al. reconstruction (having not carefully considered that result yet) but the cooling trend in the last 2000 years in Antarctica (West Antarctica) is unambiguous. See e.g. Fegyveresi et al., 2011 in Journal of Glaciology. –eric]

When comparing the two pdf’s, you assume they vary independently, presumably due to sampling noise, etc.

I’m not saying that is unjustified, but it is worth noting that the actual overlapping distribution could be much lower (or higher) if the two measurements suffered from correlated (or anti-correlated) biases.

[Response:Very true. However, they are completely independent. 100% Which is what makes the comparison so worth doing.–eric]

If someone has access to high quality image files of the NH hockey stick from Mann (2008), and the Australasia hockey stick from Gergis (2012), it would be interesting to see the images displayed one above the other, on the same timescale. I used the Gergis image from this site, and the Mann image from the SkS site, and there seem to be some interesting periods of agreement. For example, the sharp drops in proxy temperatures around 1350 and 1460 are present in both records, as near as I could see.

From what I understand, sometimes the NH and SH temperatures will march together, and other times be out of phase. A discussion of the two proxy records comparing and discussing the temperature swings, by someone knowledgeable of likely temperature swings over the last 1200 years, would be interesting to read.

> Antarctic cooling

chlorofluorocarbons may have added to recent cooling, if this study held up; a bit old:

http://www.nytimes.com/2002/05/03/us/ozone-hole-is-now-seen-as-a-cause-for-antarctic-cooling.html

I see “the relative importance of GHG and insolation on the warmth intensity varies from one interglacial to another.”

http://dx.doi.org/10.1007/s00382-011-1013-5

Climate Dynamics, Volume 38, Issue 3-4, pp. 709-724 2/2012

[Response: My own view is that while the research on ozone and its influence on Antarctic temperatures is solid, its importance is vastly overstated. It accounts for temperature change only in summer; see David Thompson’s excellent review: here.

Note also that the notion of Antarctic cooling was based on the short ~1970-2000 record. The full data show that even at South Pole this is not the trend — it’s neutral to slightly warming, as of course we showed back in 2009 (and as O’Donnell confirmed). It *has* been cooling over the Halley Station area, but that is about it.

As to what is happening in all the other seasons, during which the big changes are actually occurring, see our papers on this: Ding et al., 2011, Ding et al., 2012, Steig et al., 2009, Steig et al., 2012, as well as Schneider et al., 2011. –eric]

Just an FYI,

Andrew Montford and Harold Ambler take a big poke at Keith Briffa in an article titled “Climategate Continues” in the National Review.

http://www.nationalreview.com/articles/300877/climategate-continues-andrew-montford

WUWT has a reprint that includes some interesting graphs.

H.

[Response: More fiction and fantasy. I like the “clique of like-minded and very powerful people” who have apparently gone along with this allegedly “worst sin in climatology”! Hyperbole much? – gavin]

The 0.23°C/decade rise must be caused to a certain extent by CO2 radiative forcing, though perhaps not as clearly as at Arctic latitudes, since the rise of CO2 to ~400 ppm is a global phenomenon. The ice melt in the Antarctic is caused by a number of factors: warmer wind and ocean currents, black soot deposits, etc. Don’t forget that even if the ice extent graph doesn’t change significantly with a warmer Antarctic ocean, there is a heightened hydrologic cycle and thus more snowfall year round, giving the impression that the area under ice is constant. What is happening right now, and has been for the past 30+ years, is significant accelerated thinning of the ice shelves. Another factor which needs a lot more research but seems very alarming is the Antarctic ocean bottom water, or current, which is 60% less today than it was 30 years ago. This frigid water, around 0°C, is heavily saline and travels south where it eventually warms and rises, and it is essential to moderating the southern ocean currents and temperature and hence the Southern Hemisphere climatic patterns. These factors all lead to potential and imminent tipping points which will probably change the global climate faster and more suddenly than even most climate scientists can conceive of.

25 on Antarctic cooling. I have a vague memory that Gavin wrote something on that as well. Yes. Here it is. http://pubs.giss.nasa.gov/docs/2004/2004_Shindell_Schmidt.pdf

[Response: Indeed, one of our very first RC posts was about this. See here.]

The original HS uptick/blade unambiguously predated the supposed era of man-made warming (1980 onwards). I’ve never understood why this isn’t discussed more. Does anyone have any ideas?

[Response: Anthropogenic warming started much earlier than 1980 – it is rather that it wasn’t unambiguously detectable until the 1980s (i.e. the signal to noise ratio was small). However, any proxy reconstruction is attempting to match the climate changes from whatever cause. The instrumental record shows warming since the 1900s and so you expect the reconstructions to show the same thing. The issue of attribution – why the changes are what they are – is a separate issue. – gavin]

From 15 Solem

We conclude that projected subsurface ocean warming could drive significant increases in ice-mass loss, and heighten the risk of future large sea-level rise.

Subsurface ocean warming presumably affects only floating ice. So when this melts, the sea level rises?

[Response: Slightly. It also affects floating ice shelves which serve as ‘stoppers’ to ice flow from the ice sheets, so indirectly impacting sea level as well. – gavin]

I’ve been looking for a response by the authors to Post 37 above, to the questions in this paragraph: “During the MWP the northern hemisphere portion representing western Europe over to Greenland and Newfoundland was clearly warmer than present. Do you have to consider this portion non-representative of the world back then to say that the MWP was cooler than today? If the MWP northern portion as I described was non-representative of the world back then, why would it be today? Could today’s Arctic/Northern be unusually warm while the Antarctic/Southern is warm today, as it was unusually warm then while, presumably, the Antarctic/Southern was cool then?” While I understand we can do little else but rely on proxy records for much of the SH history over this recent past, I think these questions need consideration.

PFK,

The thing is that the anomalous warmth of the North Atlantic during the putative MWP is not at all a speculation. We have measurements from other portions of the globe that show the rest of the planet (with the possible exception of China) was not anomalously warm. There simply was no global MWP.

In contrast, warming today is global. Yes, the arctic has warmed more than the rest of the planet, but that is in fact what the models predict.

[Response:I am not sure I’d go so far as to say that it’s definite that the MWP wasn’t globally warm. However, the evidence certainly does strongly support that. Indeed, borehole data from Taylor Dome in Antarctica (never published unfortunately) was used by Broecker to argue that the MWP was an ocean-circulation driven “seesaw” phenomenon (cold in the South when warm in the North). Not sure I buy that — indeed, the Orsi et al. data show that at least for West Antarctica, it is wrong — but neither do I think I know the answer. We’ll do a post on this some time soon. –eric]

Back in the post on O’Donnell et al., Eric thought aloud about doing a new paper taking their valid suggestions on board (but getting it right). There isn’t one yet, is there? Or did I miss something?

[Response: Have simply not had the time. I probably will do something about it this summer. It’s more compelling with the Orsi et al. results because they offer independent validation. That way it is not just me and O’Donnell arguing with one another. In the end, physics trumps statistics. –eric]

The link to the Gergis et al paper at J. Climate is broken, and the paper no longer appears to be accessible. We have no information as to why or whether it’s temporary or not, but we’ll update if necessary when we have news.

Gavin: The link to the Gergis et al paper at J. Climate is broken…

Here’s a manuscript of the Gergis paper.

[Response: Thanks, but we’ve just been sent this from one of the authors:

…which of course implies that the reconstruction, conclusions etc. will need to be revisited. What impact it will have is a little unclear at this point. – gavin]

Gavin – you ought also to mention that the problem was discovered at the Climate Audit blog and that the authors publicly thanked Steve M. and the participants at his blog for identifying it.

Roger says: 8 Jun 2012 at 4:26 PM

Gavin – you ought also to mention…

Why? How extraordinary to expect that generous social scruples be gifted to ClimateAudit and its endless innuendo, insinuations, aspersions.

Perhaps when/if McIntyre & Friends learn the value of keeping a civil tongue they might be accorded punctilious civility?

Juvenile coup counting.

It’s not generosity if it is deserved.

dboston

The authors publicly thanked them. Why shouldn’t that information be disseminated? The fact that you don’t like them is irrelevant. There are many people I dislike and of whom I disapprove. However, if such people have done something worthy, I’m not going to pretend that it didn’t happen or fail to acknowledge it.

The fact is that the error was reported at Climate Audit. In amongst the supposed innuendo etc., there was very useful quantitative work going on in reproducing results.

[Response: My impression is actually that the particular error was not first identified at Climate Audit. Karoly’s letter says “also”. But whatever, this is a perfectly good example of science working properly. My initial impression is that Gergis et al.’s results will not wind up changing much, if at all. –eric]

Perhaps now the climate community will move towards better publication standards as has been seen in econometrics (eg archiving of code and data) and medical research (eg pre-registration of drug trials).

[Response: I have nothing against code archiving (the GISS GCM code is open source, and I prepared turnkey code for our recent paper in AOAS for instance), and this can be useful. But in this case, people are doing independent replication – at least of some elements – and I have often argued that this is more significant. I don’t see how ‘pre-registration of drug trials’ has any relevance here though. Paleo-climate is an observational science – like cosmology or archeology – and there are very few possibilities for real world experiments such as you would set up for a double-blind drug trial. You are stuck with the data that people have collected and you need to make the best of that. – gavin]

There is a difference between an error being reported at Climate Audit and being discovered by Climate Audit. It appears that the Journal was aware of the problem before McIntyre posted about it. Also, I’m not sure the authors “publicly thanked” him, that was an editor at the journal who wrote the email to McIntyre? Either way, a private email which the recipient posts on his site isn’t the same thing as a public statement. Have the authors actually released a public statement of thanks? As Roger points out, let’s not pretend. I think it is important to get the relevant details before we hand out accolades.

To Gavin’s point, even McIntyre admits the stick may still exist even in the updated results.

[edit – please try and stay focused on issues, not people’s feelings or motivations]

We lose nothing by acknowledging McI when he is right. He deserves acknowledgement. It doesn’t change what he is wrong about.

Ray @ 42, +1

Precisely. Science is about facts, not feelings.

Unsettled scientist

If you write a mail to a blogger then he will post it. This is going public. Whether it was discovered independently or not is irrelevant. The fact is that he and others at CA discovered it through detailed reproduction work and were thanked accordingly.

They put in the hours and did something good for science. It’s churlish to nitpick and not to acknowledge it regardless of what one may think of them in other areas.

Re: Unsettled Scientist at 8 Jun 2012 6:39PM

Rather than an editor at the journal, it was the senior author of the paper, Dr. Karoly, who wrote the email to Stephen McIntyre. I haven’t seen any statement about this issue from the Journal itself.

Nothing wrong with an acknowledgement of course, but Karoly’s email mentions that they found the error by themselves as well. It’s entirely unimportant in the end.

Note what McIntyre says about it though:

“As readers have noted in comments, it’s interesting that Karoly says that they had independently discovered this issue on June 5 – a claim that is distinctly shall-we-say Gavinesque (See the Feb 2009 posts on the Mystery Man.)

I urge readers not to get too wound up about this”

So, clearly dog-whistling with something “interesting” and “Gavinesque”, and then, just for the form, saying “let’s not get too wound up about this” (so that he can defend himself against any claim that he made a big deal out of it)

Jeez, I wonder which of these two mutually exclusive messages will end up being most influential with his followers.

‘In the end, physics trumps statistics.–eric’

Hmm; ‘In The End’, maybe, but not in the real world and in the present :-)

Man: Ah. I’d like to have an argument, please.

Receptionist: Certainly sir. Have you been here before?

Man: No, I haven’t, this is my first time.

Receptionist: I see. Well, do you want to have just one argument, or were you thinking of taking a course?

Eric, you say “My initial impression is that Gergis et al.s’ results will not wind up changing much, if at all.–eric]”

That seems an odd thing to say. Only a few of the 27 proxies used in the study satisfy the described selection process. A new inspection, by the intended selection process, of all the proxies originally considered may find a number of others which should have been included. However that may turn out, the group of proxies will be quite different to the original 27 considered in the paper.

I wonder how anyone could have a reasonable opinion that this will change very little or nothing.

Of course, a similar result could probably be achieved by changing the method, but that would be a very odd thing to do and would leave the authors susceptible to the criticism that they were seeking some particular outcome, whether or not this was in fact the case.

[Response: I don’t know what it will change, because we don’t know exactly what the significance of this “error” was yet. Basing calibrations on data detrended during the instrumental period is not common practice, but neither is it unheard of. If their “mistake” was to have actually used non-detrended data to establish their calibration relationships, as is standard practice, then two commenters at Climate Audit itself have already provided data, from their calculations, showing that a high percentage, though not all, of the 27 sites used by Gergis et al. are significantly related to warm season temperatures at p = .05. –Jim]
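As a rough illustration of why the detrending choice matters for proxy screening, the sketch below correlates a synthetic “proxy” with a synthetic “temperature” series, once on the raw series and once after removing a linear trend from each. Everything here is an assumption made for illustration: the helper names `pearson_r` and `detrend`, the 70-point series, and the trend and noise sizes. It is not the Gergis et al. procedure and uses none of their data. The two series share a trend but have independent noise, so the raw correlation comes mostly from the common trend.

```python
import math
import random


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def detrend(y):
    """Remove the least-squares linear trend, using the index as time."""
    n = len(y)
    mt, my = (n - 1) / 2.0, sum(y) / n
    slope = (sum((t - mt) * (v - my) for t, v in enumerate(y))
             / sum((t - mt) ** 2 for t in range(n)))
    return [v - (my + slope * (t - mt)) for t, v in enumerate(y)]


# Synthetic series: a shared linear trend plus independent Gaussian noise.
rng = random.Random(0)
n_years = 70
temperature = [0.03 * i + rng.gauss(0.0, 0.2) for i in range(n_years)]
proxy = [0.03 * i + rng.gauss(0.0, 0.2) for i in range(n_years)]

r_raw = pearson_r(proxy, temperature)
r_detrended = pearson_r(detrend(proxy), detrend(temperature))
print(f"raw r = {r_raw:.2f}, detrended r = {r_detrended:.2f}")
```

A proxy can therefore pass a correlation screen on raw data and fail it on detrended data (or the reverse, if the year-to-year agreement is good but the trends differ), which is why the set of proxies that passes depends on which version of the test was actually applied.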

The ClimateAudit claim for having first identified the “issue” could be valid but things aren’t entirely clear.

ClimateAudit was thanked (not publicly) ‘on behalf of all the authors of the Gergis et al (2012) study‘ thus – “We would like to thank you and the participants at the ClimateAudit blog for your scrutiny of our study, which also identified this data processing issue.” The timing of the ‘discovery’ of the “issue” was an identical date to the comment at ClimateAudit that first expressed concern on the matter (although when the “issue” was truly expressed within ClimateAudit is something else again).

If we assume the ClimateAudit site as the discoverer, should we be mentioning it here? (Perhaps we should at the same time be acknowledging the contribution made by Limin Xiong to Gergis et al 2012, which, who knows, could have been just as essential to the eventual findings of the paper.)

I am no expert on what goes down at ClimateAudit but I see an irony in denialist websites (whose main ingredient is wanton ignorance melded with rich portions of error) being applauded for their very occasional successes. Yet websites such as RealClimate here (whose main ingredient is the evidence-based science) are continually sniped at and derided by those self-same denialist sites. (The sniping fire of course is not one way.)

So, is ClimateAudit like a good traffic warden in a car park – a hated but essential functionary in preventing parking chaos? Or does ClimateAudit go further like a bad traffic warden – terrorising motorists by continually trying to have ‘offending’ automobiles towed away and crushed for the most minor infringement of petty regulation? I am no expert here.

As for “losing nothing by acknowledging McI,” will McI or the wider denialist community be ‘dining out’ on the strength of this ‘success’? If so, would any ‘acknowledging’ then simply become some trophy for the fellow diners to gloat over?

[Response: There is a very important asymmetry here. For the most part, scientists are actually interested in finding out stuff about the real world. Mistakes made in analyses and bugs in code, while an unfortunate fact of life, are impediments to that, and so have to be fixed when found. The idea that scientists are inerrant plays no role in real discussions of the issues. However, there is a group that sees every error, regardless of its significance, as more ‘proof’ that all the science on climate change can be discarded. So each reported error becomes part of a litany of oft-repeated mantras designed to reinforce that central idea. The point of such exercises is to underline that scientists are imperfect (which they are), though it too often morphs into statements that scientists are deliberately deceiving (which they are not). (It is the latter statements that are offensive, not the former.) On the contrary, despite personally feeling bad after making a mistake, fixing errors is a big part of making progress (though the more interesting errors are usually more subtle than the one being discussed today). The way forward is not to fret about having made mistakes or worry about giving ‘different sides’ ammunition, but to see what actually changes and what it implies for anyone’s understanding of the real world. – gavin]