Ross McKitrick was so upset about a paper, ‘Learning from mistakes in climate research’ (Benestad et al., 2015), that he has written a letter of complaint and asked for immediate retraction of the pages discussing his work.

This is an unusual step in science, as most disagreements and debate involve a comment or a response to the original article. The exchange of views, then, provides perspectives from different angles and may enhance the understanding of the problem. This is part of a learning process.

Responding to McKitrick’s letter, however, is a new opportunity to explain some basic statistics, and it’s excellent to have some real and clear-cut examples for this purpose.

The ‘offensive’ paper (Benestad et al., 2015) has supporting material that criticises some of McKitrick’s publications (e.g. McKitrick and Michaels, 2004), explaining why they are flawed. However, this is only a very small part of the analysis presented therein.

There is one crucial point that McKitrick seems to have missed, which is that nearby temperature trends are related because the trend varies smoothly over space.

An important point made in Benestad et al. (2015) was that a large portion of the data in the analysis of McKitrick and Michaels (2004) came from within the same country and involved common information for the economic statistics (GDP, etc.). In technical terms, we say that there were dependencies within the sample of data points.

I repeated their work, which formed the basis for my original comment (Benestad, 2004), and the point about dependencies and spatial correlation was made in that paper as well as in one of the first posts on RealClimate (“Are temperatures affected by economic activities?”):

> In order to reduce the possible effect of inter-station dependency, the data were sorted according to latitude. Then, half of the data (latitudes from 75.5° S to 35.2° N) was used to calibrate the statistical models and the remaining data were used for evaluation (latitudes 35.3° to 80.0° N)… The negligence to account for inter-station dependencies in the analysis resulted in spurious results and inflated confidence levels in the analysis of McKitrick & Michaels.

McKitrick responded with a strange description of my approach as “an extreme version of the withholding test”. This view is puzzling, because my choice was the only way to ensure that the split-sample test would not give spurious results due to data dependency, as the quote above explains.

McKitrick and Michaels had not carried out a proper split-sample test, since their test involved dependencies between the calibration and evaluation samples. They had used an approach which involved

> 500 split sample withholding/prediction tests in which 30% of the data were randomly withheld each time and predicted by a model fit to the remaining 70%.

This does not resolve the problem of interdependencies and is not a proper split-sample test. Statistically, their approach favours splits where stations from the same region or the same country are represented in both the calibration and the evaluation samples.
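The difference between the two ways of splitting can be illustrated with a small simulation. This is a minimal sketch under loudly stated assumptions, not the original analysis: the station network is hypothetical (20 "countries" of 11 stations, each country confined to its own latitude band), and the only dependency measured is whether an evaluation station's country also appears in the calibration sample. Real networks are messier, and a latitude boundary can cut through a country or leave neighbouring, spatially correlated stations on either side.

```python
import numpy as np


def shared_country_fraction(calib_idx, eval_idx, country):
    """Fraction of evaluation stations whose country also appears in the calibration sample."""
    calib_countries = {int(c) for c in country[calib_idx]}
    return float(np.mean([int(country[i]) in calib_countries for i in eval_idx]))


rng = np.random.default_rng(0)

# Hypothetical network: 20 countries with 11 stations each; each country occupies
# its own latitude band (an idealisation, not the MM04 station list).
n_countries, per_country = 20, 11
country = np.repeat(np.arange(n_countries), per_country)
band_edges = np.linspace(-75.5, 80.0, n_countries + 1)
lat = rng.uniform(band_edges[country], band_edges[country + 1])

# Latitude split: sort by latitude, calibrate on the southern half, evaluate on the northern half.
order = np.argsort(lat)
half = lat.size // 2
lat_calib, lat_eval = order[:half], order[half:]
lat_frac = shared_country_fraction(lat_calib, lat_eval, country)

# Repeated random withholding: 30% withheld for evaluation, 70% used for calibration.
n_eval = round(0.3 * lat.size)
random_fracs = []
for _ in range(500):
    perm = rng.permutation(lat.size)
    random_fracs.append(shared_country_fraction(perm[n_eval:], perm[:n_eval], country))
random_mean = float(np.mean(random_fracs))

print("latitude split, share of evaluation stations with a country in calibration:", lat_frac)
print("random 70/30 splits, mean share over 500 draws:", round(random_mean, 3))
```

In this idealised setup the latitude split shares no country between the two samples, while nearly every randomly withheld station still has compatriots in the calibration sample.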

In his letter, McKitrick also makes several false statements. One is that I predicted ‘the Northern Hemisphere data from the (smaller) Southern Hemisphere’.

The data set in question, however, contained more locations with temperature trends in the northern hemisphere, and an even split set the boundary for the two samples at 35.25°N. This was explained in the original comment (Benestad, 2004) and is apparent from the quote from it above.

McKitrick also wrongly states that only the residuals matter in terms of autocorrelation, implying that the number of degrees of freedom is not affected by the correlation of the values themselves. He claims to have proven this in a journal remote from the natural sciences and climatology, the Journal of Economic and Social Measurement. However, his claim is unconvincing, and this journal is not really relevant for climate research questions.

He nevertheless insists that I argued (here “SAC” = “spatial autocorrelation”)

> without providing any evidence, that SAC would reduce the effective degrees of freedom in MM04 sufficiently to undermine the significance of the conclusions.

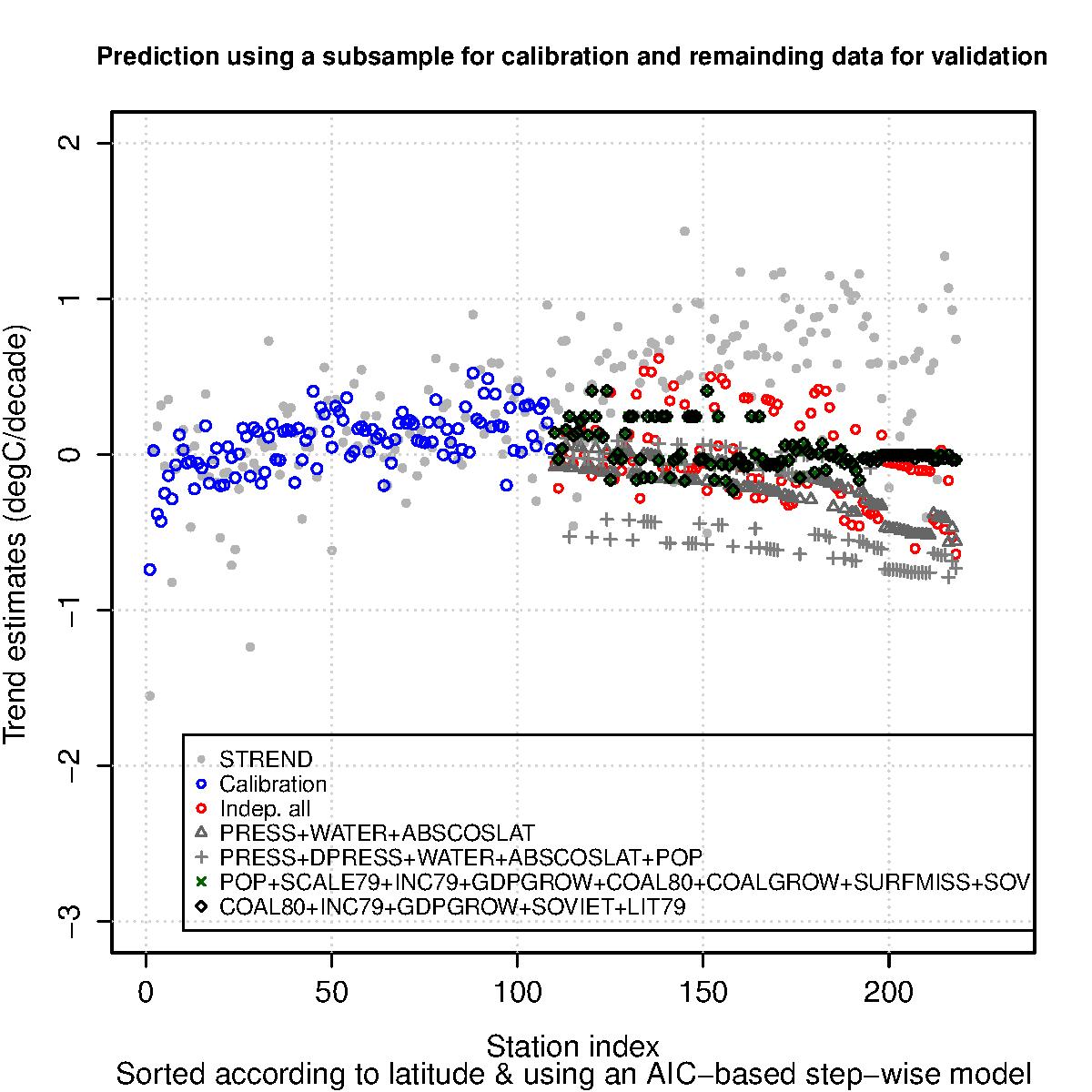

His claim is obviously false, as the analysis in my original comment did indeed provide evidence that McKitrick was wrong about the autocorrelation. The figure above provides an illustration and is from my original comment.

The grey points in the figure show the temperature trends (“STREND”) and the coloured ones are the results of the regression model. The results show that the economic variables cannot explain the trends at the stations in the evaluation sample (station index greater than 110).

The code for this demonstration is in the replicationDemos software, available from Figshare.

McKitrick’s view on autocorrelation is also wrong because, in theory, a model prediction for a large number of highly autocorrelated values could give uncorrelated residuals, with residuals much smaller in magnitude than the predicted values.
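A toy example makes the theoretical point concrete. In this sketch (an illustration, not the analysis of MM04), the values follow a smooth, strongly autocorrelated hypothetical predictor plus a little white noise. A least-squares fit then leaves residuals that are small and show almost no lag-1 autocorrelation, even though the values themselves are almost perfectly autocorrelated, so a residual check alone says nothing about how few independent values there really are.

```python
import numpy as np


def lag1_autocorr(x):
    """Lag-1 sample autocorrelation of a 1-D series."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    return float(np.dot(x[:-1], x[1:]) / np.dot(x, x))


rng = np.random.default_rng(42)
n = 1000
s = np.linspace(0.0, 4.0 * np.pi, n)            # hypothetical coordinate along a transect
predictor = np.sin(s)                           # smooth, strongly autocorrelated predictor
y = 2.0 * predictor + rng.normal(0.0, 0.05, n)  # values = smooth signal + small white noise

# Ordinary least-squares fit of y on the predictor (with intercept).
X = np.column_stack([np.ones(n), predictor])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
resid = y - X @ coef

ac_y = lag1_autocorr(y)
ac_resid = lag1_autocorr(resid)
ratio = float(resid.std() / y.std())
print("lag-1 autocorrelation of values:   ", round(ac_y, 3))
print("lag-1 autocorrelation of residuals:", round(ac_resid, 3))
print("residual std / std of values:      ", round(ratio, 3))
```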

His letter also reveals that he continues to fail to grasp other crucial points, and that the observation is still valid that he

> failed to address the important question of how many PCs were included in the calibration and how much of the variance they could describe

Although he cites his own paper in a journal with a questionable reputation, he fails to address the question in his response. Rather, he is hung up on the particular shape of the leading principal component.

Again, the crucial point is the number of principal components that were included in the regression analysis and the proportion of the variance that they account for. This is just mathematics.
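The quantity at stake is easy to compute. The sketch below (plain NumPy, with a synthetic hypothetical data matrix driven mainly by two common factors) returns the fraction of total variance captured by the leading k principal components, obtained from the squared singular values of the centred data. It shows the kind of number that should accompany any regression on principal components; it is not a reconstruction of the calculation in the papers discussed.

```python
import numpy as np


def explained_variance(data, k):
    """Fraction of total variance captured by the leading k principal components.

    data: 2-D array, rows = observations, columns = variables.
    """
    anomalies = data - data.mean(axis=0)   # centre each variable
    # Squared singular values of the centred matrix are proportional to the PC variances
    # (numpy returns them sorted in descending order).
    sing = np.linalg.svd(anomalies, compute_uv=False)
    var = sing**2
    return float(var[:k].sum() / var.sum())


rng = np.random.default_rng(1)
# Hypothetical data: 100 observations of 10 variables driven mainly by two common factors.
factors = rng.normal(size=(100, 2))
loadings = rng.normal(size=(2, 10))
data = factors @ loadings + 0.3 * rng.normal(size=(100, 10))

for k in (1, 2, 3, 10):
    print(f"leading {k:2d} PCs: {explained_variance(data, k):.3f} of the variance")
```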

Another way to interpret McKitrick’s letter is that it is an attempt to silence scientists. This is the second attempt by McKitrick to act as a gatekeeper (he himself falsely accused climate scientists of exactly this). He managed to prevent the paper from being published on an earlier occasion when it was submitted to Climatic Change.

Attempts to censor articles with which one disagrees may have become more commonplace as part of the motivated rejection of well-established scientific propositions (Lewandowsky & Bishop, 2016).

A Norwegian organisation that challenges the IPCC (“klimarealistene”) has twice tried to silence my public discourse on climate science and my comments on the Humlum paper by writing to the director of my institute.

Attempts to silence criticism run against the principles of science.

References

- R.E. Benestad, D. Nuccitelli, S. Lewandowsky, K. Hayhoe, H.O. Hygen, R. van Dorland, and J. Cook, "Learning from mistakes in climate research", Theoretical and Applied Climatology, vol. 126, pp. 699-703, 2015. http://dx.doi.org/10.1007/s00704-015-1597-5

- S. Lewandowsky, and D. Bishop, "Research integrity: Don't let transparency damage science", Nature, vol. 529, pp. 459-461, 2016. http://dx.doi.org/10.1038/529459a

If spatial correlation does not affect the statistical power of an analysis of temperature trends, then I have an idea. Let’s have every US airport install four thermometers instead of one, and we’ll cut the statistical uncertainty of US temperature trends in half.
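The arithmetic behind the joke can be sketched directly. Assuming (for illustration) that all n readings share the same standard deviation σ and the same pairwise correlation ρ, the variance of their mean is σ²/n · (1 + (n − 1)ρ). Four independent thermometers (ρ = 0) halve the standard error; four thermometers reading the same air (ρ = 1) leave it unchanged, i.e. they are worth a single independent reading.

```python
import math


def se_of_mean(sigma, n, rho):
    """Standard error of the mean of n equally correlated readings (pairwise correlation rho)."""
    variance = sigma**2 / n * (1.0 + (n - 1) * rho)
    return math.sqrt(variance)


def effective_n(n, rho):
    """Number of independent readings that would give the same standard error."""
    return n / (1.0 + (n - 1) * rho)


sigma = 1.0
for rho in (0.0, 0.5, 0.9, 1.0):
    print(f"rho={rho}: SE={se_of_mean(sigma, 4, rho):.3f}, n_eff={effective_n(4, rho):.2f}")
```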

More seriously, if the predicting variables were country-level, I believe the data need to be treated as a clustered sample. This week’s Nature (Feb 4 2016, page 28) highlights failure to account for clustering as the most common error in obesity research. (I’ll ditto that for health services research in general.) The correction is straightforward (in cross-sectional data, anyway) and typically matters materially if there are few clusters (here, countries) or if one cluster dominates the sample.
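One common correction for clustered cross-sectional data is the cluster-robust ("sandwich") standard error; the comment does not say which correction it has in mind, so treat this as one option. The sketch below implements the basic CR0 form in plain NumPy on synthetic, hypothetical data: 15 "countries" of 10 stations, a country-level predictor, and errors with a shared country component. CR0 has no small-sample adjustment, and with few clusters adjusted variants are usually preferred.

```python
import numpy as np


def ols_with_cluster_se(X, y, clusters):
    """OLS coefficients with conventional and cluster-robust (CR0 sandwich) standard errors.

    X: (n, p) design matrix including an intercept column.
    y: (n,) response.
    clusters: (n,) cluster labels, e.g. country codes.
    """
    n, p = X.shape
    xtx_inv = np.linalg.inv(X.T @ X)
    beta = xtx_inv @ X.T @ y
    resid = y - X @ beta

    # Conventional SE: assumes independent, homoscedastic errors.
    sigma2 = resid @ resid / (n - p)
    se_ols = np.sqrt(np.diag(sigma2 * xtx_inv))

    # Cluster-robust "meat": sum over clusters of (X_g' e_g)(X_g' e_g)'.
    meat = np.zeros((p, p))
    for g in np.unique(clusters):
        idx = clusters == g
        score = X[idx].T @ resid[idx]
        meat += np.outer(score, score)
    se_cluster = np.sqrt(np.diag(xtx_inv @ meat @ xtx_inv))
    return beta, se_ols, se_cluster


rng = np.random.default_rng(3)
n_countries, per_country = 15, 10
clusters = np.repeat(np.arange(n_countries), per_country)
# Hypothetical country-level predictor shared by all stations in a country.
x = rng.normal(size=n_countries)[clusters]
# Errors with a shared country component; the true slope on x is zero.
country_effect = rng.normal(scale=1.0, size=n_countries)[clusters]
y = country_effect + rng.normal(scale=0.5, size=clusters.size)

X = np.column_stack([np.ones_like(x), x])
beta, se_ols, se_cluster = ols_with_cluster_se(X, y, clusters)
print("slope:", round(beta[1], 3))
print("conventional SE:", round(se_ols[1], 3), " cluster-robust SE:", round(se_cluster[1], 3))
```

As a sanity check on the design, when every station is its own cluster the CR0 estimate reduces to the usual heteroscedasticity-robust (HC0) standard error.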

Sooo, if an anti-scientist collides with a scientist, do they annihilate each other, emitting intense gamma rays and neutrinos??!! ‘-)

> without providing any evidence, that [spatial autocorrelation] would reduce the effective degrees of freedom in MM04 sufficiently to undermine the significance of the conclusions.

This is also shown in a paper by Gavin Schmidt.

Even if the spatial autocorrelations were not that strong, it is an enormous blunder that the original MM04 paper did not take any autocorrelations into account and assumed full independence, both of the climate and of the economic parameters.

Furthermore MM04 jumps to conclusions about the reasons for their (non-significant) relationship between temperature trend and economic activity. This does not have to be more urbanization, it could also be better removal of non-climatic changes (inhomogeneities). Raw station data has a cooling bias; rich countries may invest more resources in good climate data and put more work into removing the cooling bias of the raw data. This would also lead to a statistical relationship between the temperature trend and economic activity.

P.S. McKitrick should have responded in a friendlier way; demanding a retraction over a difference of opinion is absurd. In the same spirit, I would have preferred a friendlier title.

You do yourself no favors by citing Lewandowsky. Seems to me that you are yourself active in trying to censor papers that you do not like.

[Response: You present rhetoric devoid of any factual arguments. Citing a publication by a highly respected scholar from the top journal Nature is “no favors”? That’s just nonsense. And Rasmus is factually engaging with McKitrick’s arguments here, not trying to prevent their publication (as McKitrick did, even demanding a retraction). -stefan]

Excellent post. Congratulations for standing up against the anti-science inquisition in this era.

Thanks for this rebuttal of professional denier McKitrick, but it is unlikely to move the needle. Ross will now claim to be quoted in Realclimate, and the subject of a scientific discussion.

In fact, McKitrick is no scientist and, as Dana et al point out above, he is not much of a statistician, either.

Economists such as McKitrick and Lomborg, both of whom are on the payroll of Exxon Mobil, deserve only ridicule. The facts listed here communicate that evaluation well to scientists, but the public is no better off. They are the ones that scientists and Realclimate staff need to concentrate on reaching. It’s not an easy task, especially for scientists, but is absolutely necessary.

Ross McKitrick makes some pretty gross quantitative blunders. In June 2015, he wrote a (now altered) note on the Karl et al Science paper.

This is typical of how one of his key points echoed around the usual chambers:

“Ross McKitrick points out that to get the new NOAA sea surface data they added 0.12 °C to the buoy readings, to make them more like the ship data. That magic number came from Kennedy et al. (2011) where the uncertainty was reported as (wait for it) 0.12 ± 1.7°C.”

So where did Ross get that? By looking at this table. It’s a very simple, conventional layout. Karl et al have looked at thousands of pairings where ships and buoys have readings made in reasonably close space/time proximity. They show, for the globe and for each ocean, the mean difference, the SD, the SE and the number in the sample. But Ross has picked out the SD. That is, the standard deviation of the samples (pairings) (and multiplied by 2 for good measure). The uncertainty of the mean is of course the SE, the standard error of the mean.
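The distinction can be sketched in a few lines. The mean difference of 0.12 °C is the figure quoted above and the SD of 0.85 °C is what the "±1.7 °C" implies after the doubling the comment describes; the number of pairings is a hypothetical placeholder, not the count in the actual table. The sketch also assumes the pairings are independent, which is the textbook condition for SE = SD/√n.

```python
import math


def standard_error(sd, n):
    """Standard error of a sample mean from the standard deviation of n independent pairings."""
    return sd / math.sqrt(n)


mean_diff = 0.12   # mean ship-buoy difference quoted in the text (°C)
sd = 0.85          # SD implied by the quoted ±1.7 °C (i.e. 2 * SD = 1.7)
n_pairs = 10000    # hypothetical number of pairings, NOT the value in the actual table

se = standard_error(sd, n_pairs)
print(f"mean difference: {mean_diff:.2f} °C")
print(f"spread of individual pairings (2*SD): ±{2 * sd:.2f} °C")
print(f"uncertainty of the mean (2*SE):       ±{2 * se:.4f} °C")
```

The spread of individual pairings stays near ±1.7 °C however many pairings there are, while the uncertainty of the estimated mean shrinks as the number of pairings grows.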

This was all pointed out by a student. But far worse than the bungle was the smokescreen.

“Regarding the SD vs SE question, there is no dispute that SE gives the variance of the estimated global mean, if the unweighted global mean is what you are interested in. What is really at issue is how good an approximation +0.12 is to each site-specific adjustment.”

The SD of the pairings used to estimate the bias adjustment has nothing whatever to do with “site-specific adjustments”. It has mostly to do with the degree to which the ship and buoy readings matched on those occasions, now long past. But this cover story persists in the McKitrick adjusted version:

“That quote refers to a paper by Kennedy et al. (2011 Table 5) which reports a mean bias of +0.12 °C. However, Kennedy et al. also note that the estimated bias in each location is very uncertain: it is 0.12 ± 1.7°C! In other words the estimated bias varies quite a bit by region.”

And so the original elementary blunder lives on.

The situation with MM07 is even worse, with a series of typical blunders. Moreover, McKitrick was well aware of the correlation issue even before MM04 was published:

http://rabett.blogspot.com/2016/02/nigel-persaud-dons-his-eyeshade-and.html

Benestad

I have looked at Ole Humlum’s treatise on cycles in history, and I know him a bit personally from the monthly Klimapizza meetings in Oslo.

I showed him Urnesdragene (the Urnes dragons) to give him better ideas of material cycles and oscillations, waves upon waves and cycles upon cycles, and how to study them.

Those figures are an icon for me in musical acoustics, which relates rather to the Helmholtz and Hertz-Bjerknes school of physics and acoustics. And to Bohr and de Broglie, I think.

Humlum, like Nicola Scafetta and Richard Lindzen, finds cycles and cyclings everywhere, but they do not care to examine physical and material causes, possible reality, sustainability and experimental reproducibility, as I have to when I am to deliver finely regulated, highly complex pneumatic oscillators in molecular matter.

If you are unqualified and unable to do that, and you also have to earn a living, then you have to cheat and bluff instead. Unfortunately, that is where I find them.

They hardly discriminate between physical coherence, phase-coupled waves and movement on the one hand, and correlation that may also occur at a chaotic level but cannot be made, constructed or predicted in any reliable way on the other. I think that is rather close to what you have also found.

Fourier was rather a redeemed harmonic Pythagorean, who saw natural-number harmonic oscillations everywhere.

But, as proper Pythagoreans, we must also see CHAOS! in the Universe and in scientific systems and instruments, not just COSMOS along with wishful thinking.

The sea serpent, for instance, is not a solid-state, dry-matter phenomenon in virtual industrial political reality. The sea serpent is rather viscous in nature. Thus it hardly cycles; it rather rumbles and splashes, and shows a lot of Wolf im Ton (wolf tones), just as we have it in radio transmitters, on sailing ships, in rocket engines, and in the orchestra and its musical instruments.

Fourier’s principle and method are superb and eminent as long as you are dealing with fine laminar and dynamic notes. There the sublime complex sound figures are in order and fine, just like the Urnes dragons. But with tone breaks, rumble and Wolf im Ton, it fails. Be aware of that essential physical difference.

The Fourier principle is not a universally applicable method. It is rather a very clumsy and impractical theory when it comes to physical chaos and to non-laminar, catastrophic, discontinuous and irreversible events. Fourier’s very superb theory of superposed harmonic oscillations is quite often severely misused.

My judgement of Humlum et al. is that they hardly have the discipline of looking for and checking possible physical causes of the forms they found and described, and that they are unable to discriminate between material coherence and its absence.

The subject of the next study, higher CO2 in the atmosphere following higher temperatures in the oceans, is falsely ascribed by many to “Henry’s law”, although Henry’s law has no temperature parameter and only holds at constant temperature.

What rules instead is the Clausius-Clapeyron law of the champagne cooler and the cold-cellar beer, and the “seething” of natural water when you heat it up. Stated like dP/dT = etc.

Because of that, you can be certain to find what Humlum et al. have found if you blind out every other disturbing effect and measure accurately enough. But the real Clausius-Clapeyron effect that Humlum et al. seem to have found is very much smaller than the whole reality as given by the Keeling curve.

Thus my judgement is that the article is peculiar and cannot be relied on for any practical purpose. This is all from the point of view of physical chemistry, without Benestad having mentioned it. A great deal of very proud literature on musical acoustics in the physics library has to be disqualified the same way.

You see, for discussing fish in the aquarium (limnology), and the water bucket for hardening steel, where the water must be boiled and you even have to piss in it and add some salted-herring brine for proper smithwork, the Clausius-Clapeyron principle, rather than Humlum’s principle and “Henry’s law”, should be known.

All in all, it seems both inexperienced and impractical, so I really wonder what kind of practice that can be.

Scafetta, I find, is most probably a New Age pupil of Theodor Schwenk, via the translations of “Das sensible Chaos”. Schwenk is the great GURU of hydrodynamics for the Anthroposophists. That subject is important to me and is called “Harmonikale Grundlagenforschung” (harmonic foundational research), but I rather use Johannes Kepler’s Harmonices Mundi from 1619, which is the basic work, and Leonardo’s wave-morphological concepts in the light of Kepler’s harmonics.

The GURU mentioned, T. Schwenk, is also considerably more serious than Scafetta and Lindzen, who tried to work in the same area.

Then we have Bjerknes on the syllabus, very excellent indeed on the same subject.

Somehow they lack BACCALAVREVS 1, empirical control, and contact with what is actually possible in the matter under discussion.

They quite definitely lacked Bjerknes’ water bath, Fossegrimmen and Urnesdragene in school when discussing streaming and oscillating molecular matter in wild nature.

You list a lot of criteria, errors and flaws. That listing is too complicated for me. I rather judge by what I can find or point to in real nature, if possible with the naked senses, so as to be able to manage practically in the laboratory.

My impression after some meetings is that those Klimarealistene are lacking higher education and are severely proud unpractical and misguided. They rather react and behave like very traditional inaugurated and trained frustrated party members. It may be much the same syndrome over there in the GOP (Chicago gangsters?) in our day, and of the same generation. I see a clear connection there.

The obvious European parallel is the Arbeiter und Bauernfakultät in Greifswald, now closed. That faculty delivered philosophical literature and catechisms of what they called “The Science” for efficient party work and discipline, also in Norway for several years, at the time when those …..realists were studying and training.

Rasmus

Very interesting column, and I mostly agree (or am mute due to a lack of expertise in climate modeling). One question, though: how does splitting the data set into a northern half and a southern half remove spatial autocorrelation? Most data adjacencies are preserved that way, right? I must be misreading something you said. Thanks

[Response: Hi, the split-sample test does not remove autocorrelation, but it removes interdependencies between the training sample and the evaluation sample. Nice that you asked this! -rasmus]

OP:

It demonstrates that McKitrick is playing a game of politics, not science. The modern culture and practice of science are alien to the vast majority of AGW-deniers. They are like Alfred Bester’s Scientific People. McKitrick may be out of his scientific depth, but we can be sure he’s scored rhetorical points with his base. Quant Suff! Most Scientific!

This in response to Carbomontanus (10 Feb 11:30 am):

Sometimes dancing at the edge of an unfamiliar language can add exquisite perspective to the exercise. As a neurobehavioral specialist with dim and distant fluency in higher math, I’m too quickly lost as words turn to the digital jargon of calculus and beyond (sad story).

Your post’s merging of wave theory, harmonics and uncertainty, described with unfamiliar and almost poetic phrasing, adds an unexpected dimension, and reminds me that higher math is just another language I barely recognize, let alone speak (though probably better than Norwegian). That’s an essential lesson, I think, far too often ignored by many on the other side of the climate wars table who have similar handicaps.

Having said all that, “Wolf im Ton” is my new favorite metaphor, already used once this morning in discussion of several crazy parents and how they lead their lives….

Then there’s “…Klimarealistene are lacking higher education and are severely proud unpractical and misguided. They rather react and behave like very traditional inaugurated and trained frustrated party members…”

All too apt, that.

I suspect mikeworst is confusing Stephan Lewandowsky, the psychologist, with Robert Lewandowski, the footballer; it could happen to anyone. Besides, I don’t think Stephan Lewandowsky has ever scored 5 goals in 9 minutes.

#13–Ha!

That makes 2 false equivalences in mw’s comment, then!

@ Phil Mattheis

In English, they say “a rumble” for wave-structural instability of that sort in wind instruments. We often say “gurgle” (= gargle), which is the way it sounds.

That is also very obviously the behaviour of the sea serpent. It gargles, of course.

I have analyzed it very carefully on an oscilloscope, and it is actually a severe beating of the partials.

The partials are coherent and phase-coupled to the fundamental by weak forces in space, or rather in the square of space, namely van der Waals forces. Think of viscous glue forces, which are also slightly present in air.

Those weak forces, which make coordinated, laminar oscillation possible at all in a gas (= etym. CHAOS!), may become so strained under high amplitudes and “difficult” end conditions that the laminar and coherent sound figures break and splash like breakers at sea.

That is what I hear, and find on the scope.

The same class of effects is definitely important also in meteorology for “The weathers…”.

It is about the structural stability of streaming and oscillating pneumatic forms, viscous forms, and electromagnetic forms.

It also occurs in radio transmitters and in solid state machinery like the violin.

The flute instrument, the radio transmitter or Chladni’s plate sometimes cannot decide between 2 possible sound figures at the same fundamental under the same main current and end conditions. Then it “rumbles” back and forth between both.

The same seems to be the case for the jet stream, which may suddenly flip over from one form to another, and back again. Or ENSO, for that matter, which looks to me like a similar system.

ENSO and the NAO do not “cycle”. They rumble and splash in a more chaotic way.

As for ugly disobedient parents, quite exactly!

The scope shows clearly that it cannot decide between the octave and the duodecima (twelfth), even or odd numbers, feminine or masculine, as to which is to be the strongest partial and thus in charge, on a critical note.

This is Real Climate, you see. Thus it is.

carbomontanus, thank you for your excellent comments. I should learn so much every day! In return, here’s another channel you might find interesting.

wili @ 2

Thanks for the laugh!

Actually, though, it is sad that the good work of real scientists can be annihilated in the minds of the public by the efforts of anti-scientists.

Stefan, I’d think twice before celebrating the editorial hegemony of any species of political activism over a science journal.

An interesting thing noted elsewhere by Rasmus was that one of the reviewers of the manuscript when it was submitted to Climate Research, one who rejected it in scathing, highly personal language, was actually Ross McKitrick himself.

Reviewer’s ethics? Conflicts of interest? Pffft. Whatever.

Magma, I would certainly hope that if I write a critical paper that one of the criticized authors is among the peer reviewers.

I have not heard similar complaints here, and in my opinion rightfully so, when Eric Steig reviewed a paper that was critical of one of *his* papers.

Of course, Eric did not use scathing and highly personal language, but that’s different.

The proper avenue for that is via a published comment and/or reply, not to squelch a critical evaluation of one’s past work in an effort to ensure it doesn’t see print.

This was an egregious conflict of interest.

So, Magma, you are saying that Eric Steig acted unethically. I disagree.

The current example is even more problematic, in that Rasmus et al looked at many papers. That’s a lot of replies the Editors would have had to request and publish alongside this paper.

Reviewers who hesitate to recuse themselves impeach their own integrity.

> So, Magma, you are saying …

Bzzzt! Rhetorical misfire: Those are your words, not his.

RE sentence in article, “Attempts to silence criticism runs against the principles of science.” Yes. But a journal can still legitimately decline a paper written solely to criticize another paper. Journals are not direct forums for scientific disputes; generally, when two groups disagree regarding the explanation of a phenomenon, both publish affirmative research of their own rather than attacks on each other. As I’m not versed in this particular case, I’ll leave it at that.

Hank, I am having trouble reading Magma’s comment as anything else than him saying it was unethical for McKitrick to review a paper that criticizes some of his own papers. Since Steig reviewed a paper that criticized one of *his* papers, the logical conclusion would be that Magma says Steig behaved unethically by agreeing to review that paper.

Note that I consider it likely that the Editor explicitly selected McKitrick *because* he was one of those that was being criticized, and thereby also knew the ‘CoI’ involved.

Illogical.

Criticism of the science is how science works.

McK’s been doing it wrong.