In January, we presented Lesson 1 in model-data comparison: if you are comparing noisy data to a model trend, make sure you have enough data for them to show a statistically significant trend. This was in response to a graph by Roger Pielke Jr. presented in the New York Times Tierney Lab Blog that compared observations to IPCC projections over an 8-year period. We showed that this period is too short for a meaningful trend comparison.

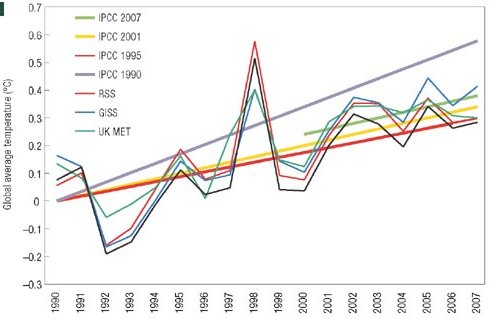

This week, the story has taken a curious new twist. In a letter published in Nature Geoscience, Pielke presents such a comparison for a longer period, 1990-2007 (see Figure). Lesson 1 learned – 17 years is sufficient. In fact, the very first figure of last year’s IPCC report presents almost the same comparison (see second Figure).

Pielke’s comparison of temperature scenarios of the four IPCC reports with data

There is a crucial difference, though, and this brings us to Lesson 2. The IPCC has always published ranges of future scenarios, rather than a single one, to cover uncertainties both in future climate forcing and in climate response. This is reflected in the IPCC graph below, and likewise in the earlier comparison by Rahmstorf et al. 2007 in Science.

IPCC Figure 1.1 – comparison of temperature scenarios of three IPCC reports with data

Any meaningful validation of a model with data must account for this stated uncertainty. If a theoretical model predicts that the acceleration of gravity in a given location should be 9.84 ± 0.05 m/s², then the observed value of g = 9.81 m/s² would support this model. However, a model predicting g = 9.84 ± 0.01 m/s² would be falsified by the observation. The difference is all in the stated uncertainty. A model predicting g = 9.84 m/s², without any stated uncertainty, could neither be supported nor falsified by the observation, and the comparison would not be meaningful.
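The gravity example can be written out as a small check. This is only a sketch: the function name `assess` and the rule that the stated uncertainty acts as a hard bound are illustrative assumptions, and a real validation would treat the range statistically and include the measurement error as well.

```python
from typing import Optional


def assess(predicted: float, observed: float, uncertainty: Optional[float]) -> str:
    """Classify an observation against a prediction with a stated uncertainty.

    Assumption for illustration: the stated uncertainty is a hard bound.
    """
    if uncertainty is None:
        # Without a stated range the prediction can be neither supported nor falsified.
        return "not testable"
    # The small tolerance guards against floating-point rounding at the boundary.
    if abs(observed - predicted) <= uncertainty + 1e-12:
        return "supported"
    return "falsified"


print(assess(9.84, 9.81, 0.05))  # prediction 9.84 ± 0.05
print(assess(9.84, 9.81, 0.01))  # prediction 9.84 ± 0.01
print(assess(9.84, 9.81, None))  # prediction 9.84, no stated range
```

The same observation produces three different verdicts, and the only thing that changes between the calls is the stated uncertainty.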

Pielke compares single scenarios of IPCC, without mentioning the uncertainty range. He describes the scenarios he selected as IPCC’s “best estimate for the realised emissions scenario”. However, even given a particular emission scenario, IPCC has always allowed for a wide uncertainty range. Likewise for sea level (not shown here), Pielke just shows a single line for each scenario, as if there wasn’t a large uncertainty in sea level projections. Over the short time scales considered, the model uncertainty is larger than the uncertainty coming from the choice of emission scenario; for sea level it completely dominates the uncertainty (see e.g. the graphs in our Science paper). A comparison just with the “best estimate” without uncertainty range is not useful for “forecast verification”, the stated goal of Pielke’s letter. This is Lesson 2.

In addition, it is unclear what Pielke means by “realised emissions scenario” for the first IPCC report, which included only greenhouse gases and not aerosols in the forcing. Is such a “greenhouse gas only” scenario one that has been “realised” in the real world, and thus can be compared to data? A scenario only illustrates the climatic effect of the specified forcing – this is why it is called a scenario, not a forecast. To be sure, the first IPCC report did talk about “prediction” – in many respects the first report was not nearly as sophisticated as the more recent ones, including in its terminology. But this is no excuse for Pielke, almost twenty years down the track, to talk about “forecast” and “prediction” when he is referring to scenarios. A scenario tells us something like: “emitting this much CO2 would cause that much warming by 2050”. If in the 2040s the Earth gets hit by a meteorite shower and dramatically cools, or if humanity has installed mirrors in space to prevent the warming, then the above scenario was not wrong (the calculations may have been perfectly accurate). It has merely become obsolete, and it cannot be verified or falsified by observed data, because the observed data have become dominated by other effects not included in the scenario. In the same way, a “greenhouse gas only” scenario cannot be verified by observed data, because the real climate system has evolved under both greenhouse gas and aerosol forcing.

Pielke concludes: “Once published, projections should not be forgotten but should be rigorously compared with evolving observations.” We fully agree with that, and IPCC last year presented a more convincing (though not perfect) comparison than Pielke.

To sum up the three main points of this post:

1. IPCC already showed a comparison very similar to Pielke’s, but including uncertainty ranges.

2. If a model-data comparison is done, it has to account for the uncertainty ranges – both in the data (that was Lesson 1 re noisy data) and in the model (that’s Lesson 2).

3. One should not mix up a scenario with a forecast – I cannot easily compare a scenario for the effects of greenhouse gases alone with observed data, because I cannot easily isolate the effect of the greenhouse gases in these data, given that other forcings are also at play in the real world.

Stefan,

Thank you for your reply in #124 and for clarifying the source of the methodology used in your paper. It is still not clear how the ‘minimum roughness criterion’ (MRC) was implemented in Matlab. I will contact Grinsted as you suggest.

Re 1: Moore (2004) states in the text “Here, the series is padded so the local trend is preserved (cf. minimum roughness criterion [Mann, 2004])” pointing to Mann’s method. The word ‘variation’ appears only in the caption of Fig. 2 in reference (I thought) to a specific construction. Still, you have clarified that you did not use Mann 2004 data padding and the confusion arises from reading of Moore 2004.

Re 2: You state:

Doesn’t this statement contradict the fact that another boundary condition would not support your conclusions, as you acknowledge in point 1?

Such a trend line passes through and terminates near the center of the IPCC range, not in the upper half of the range.

The general point here is that there is additional uncertainty in the trend at the end points not only due to the shortage of data, but also due to the choice of methodology. These do not appear to have been accounted for in your paper.

3. Thank you for any errors you might care to identify on my website.

Thanks again for the opportunity to clarify this issue.

[Response: Dear David,

since we use an 11-year embedding period, the uncertainty in the trend determination and the boundary condition only affect the last 5 years, agreed? (I double-checked this with the two boundary condition options of the ssatrend routine.) That is the years 2002, 2003, 2004, 2005 and 2006 in our paper. For 2001, I have a full 11-year embedding period, namely 1996-2006. Now the temperature values of 2002-2006 are all without exception in the upper half of the IPCC range, no matter whether you use the Hadley or the NASA data. So you have to choose a strange boundary condition to come up with a trend line that is not in the upper half here.

Your update post http://landshape.org/enm/rahmstorf-et-al-2007-update/ does show a graph with such a strange trend line, the one you label “SSA+MRC”. This line runs below all the data 2002-2006. It also deviates from your other lines well before 2002, at times which should not be affected by a boundary condition. You better double-check what you did there.

I maintain that in our paper, the choice of boundary condition does not affect any of the conclusions. We might also just have left out the last 5 years of the trend line – it would have made no difference to our paper whatsoever. -stefan]
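The window arithmetic in the response above can be sketched as follows. This is not the ssatrend routine; it only assumes a centred embedding window with an odd number of years, and the function names are made up for illustration.

```python
def full_window(year: int, window: int) -> tuple:
    """First and last year of a centred window of `window` years around `year`."""
    half = window // 2
    return (year - half, year + half)


def boundary_affected_years(last_year: int, window: int) -> list:
    """Years whose centred window would extend past the last year of data."""
    half = window // 2
    return list(range(last_year - half + 1, last_year + 1))


print(full_window(2001, 11))
print(boundary_affected_years(2006, 11))
```

With data ending in 2006 and an 11-year window, 2001 is the last year with a complete window (1996-2006), and the five years 2002-2006 are the ones that depend on the boundary condition.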

Re: SecularAnimist #150,

It is not that anthropogenic CO2 emissions are a physically impossible explanation for the recent warming, it is just that the model evidence that is dismissive of a reasonable size solar contribution strains credulity. Even if the poorly understood level of solar activity were to fall back from one of the highest levels in the last 8000 years, to the still elevated levels reached in the earliest part of the 20th century, they will still be responsible for some of the current energy imbalance. Climate commitment studies and physical reasoning about the thermal capacity of the oceans tell us that the climate system takes centuries to adjust to a new level of forcing. A short intervening period of higher forcing won’t magically accelerate the adjustment of the deep levels of the ocean, and a mid-century aerosol cooling would only delay the adjustment.

The question is still open. The current models don’t have the credibility to reject the hypothesis that a significant part and perhaps even most of the recent warming can be explained by the plateau in poorly understood solar activity. Both the AGW and solar hypotheses require a significant mid to late 20th century variation in aerosol forcing to explain the temperature signal. AGW may eventually explain most of the recent warming, but the coincidence of an unusually high plateau in solar activity, that is unlikely to continue, and a climate that is arguably within the range of natural variability, justifies some skepticism and humility.

I think we can all stop being amazed at the number of people who log onto realclimate discussion forums to post long-since-discredited notions about global warming. I’m surprised that people still bother to respond to such posts with anything more than a simple link to previous RC articles. For tom watson, you could try

Learning from a simple model 2007

The CO2 problem in 6 easy steps

Past Climate Reconstructions

A Saturated Gassy Argument

Those cover the three basics of climate science – the computer-based climate models, the real-time and recent observations, and the paleo-reconstructions of past climates, all of which point to an unprecedented warming of the Earth’s climate due to fossil fuel use and deforestation. People who are genuinely interested in the subject will be happy to read those introductory materials, while the typical global warming denialist corps will just continue to repeat their talking points ad nauseam. Arguing with them only serves their purpose.

More to the point of this thread, i.e. model-data comparison, let’s look at the latest new projections of sea level rise (BBC and Reuters):

What happens to the ice as it melts? It absorbs some warmth from the atmosphere. How much? That’s another reason why using short-term trends in surface air temperatures to gauge global warming “pausing” or “accelerating” is not plausible. The atmosphere is primarily warmed by the emission from the oceans, ice sheets and land masses, which are themselves heated by sunlight, and which exchange heat among themselves – so surface temps can vary up or down while the planet as a whole continues to warm at a steady rate.

How do you get that top down view? NPR ran a story on “The missing heat mystery” in which they referenced the ongoing discussion over the accuracy of the Argo Float system, another data-model discrepancy issue:

I somehow feel that the actual discussion between the NPR reporter and the professor was rather longer and more complex than what was reported. What NPR should have said is that due to the fact that the Triana/DSCOVR climate satellite was mothballed by the Bush Administration, that variable can’t be directly measured at the moment, which leaves global warming denialists with some wiggle room to continue their campaign against regulations on carbon emissions.

Skeptics would do well to refer to some facts and a myth from the Hadley Center of the British Met Office. This is a respected source for climate change information:

http://www.metoffice.gov.uk/corporate/pressoffice/myths/

In light of recent comments about solar activity, particular attention should be paid to Fact 4 which explains why the recent warming can’t be attributed to the Sun or natural factors alone.

http://www.metoffice.gov.uk/corporate/pressoffice/myths/4.html

This latter site shows a graph of solar activity over the last 150+ years. It states, among other things, that “Changes in solar activity DO affect global temperatures, but research shows that over the last 50 years, increased gas concentrations have a much greater effect than changes in the Sun’s energy.”

150 SecularAnimist: good point, but this may be a good time to remind everyone of what ad hominem means. “You are an idiot, therefore I reject your argument” is an example.

On the other hand, “For the following logical and supportable reasons, your argument makes no sense yet you persist with it, therefore you are an idiot” is not.

In either case, it’s probably best not to tell someone they are an idiot, but if anyone insists on wandering into a site full of experts and claiming to be cleverer than them all, someone’s patience is bound to snap sooner or later.

Tom: even though in my field, computer science, I like to think of myself as an “expert”, every now and then I have an inspiration that seems like a really good idea, but when I work through the detail, it falls apart. That doesn’t stop me trying to look for other good ideas. It is however preferable to get past the reality check phase before telling everyone else about a great advance.

re: #154 Phil

But perhaps more useful is to say: “You are wrong for these reasons, yet remain totally convinced you are right and thousands of professionals are wrong. You may wish to look up the definition of the Dunning-Kruger Effect, which is fortunately curable if one wishes.”

Thanks for catching my mistake, Alastair.

“Er, sorry, wrong number …”

re #126 Tom Watson

“FYI An animation of this sat image will show daily rising and falling temps in desserts.”

Which desserts are we talking about, baked Alaska or bananas Hawaiian? :-)

Re #132 Meltwater:

It is easy to despair, but also too easy to blame it on ourselves. Heck, half of Americans reject evolution; how did we even expect to succeed better? Much of the accusation of ‘arrogance’ is just concern trolling. We do pretty much as good a job as anybody under the circumstances.

Human reality is that non-scientists will believe what they want to believe, rather than what they need to know. The critical faculties that a science training affords won’t be available to the great majority of mankind. Why do you think the U.S. insists on having a Vietnam experience every second generation?

Debate isn’t helpful, and gives a false respectability to the denialist side. What happens here on RC is education/popularization/science review, not debate, and while valuable, doesn’t convince anyone that doesn’t want to be convinced — and anyway doesn’t reach the population at large.

What might work is to get non-scientific authorities — clergy, business, celebrities, even respected politicians if there are any left, from all parties — to sign up to AGW. Statesmanship. That seems to be Al Gore’s line.

Humanity at large will not come around by itself until the stuff hits the fan in a way that is obvious without analysis, probably a generation from now. Then the younger people here may enjoy an ‘I told you so’ experience, though it will be bittersweet.

tom watson writes:

We can measure back-radiation from the sky with instruments.

Martin Lewitt writes:

The recent warming turned up sharply in the last 30 years. The sun’s output has been flat for the past 50 years, as have cosmic rays. What’s so hard to understand here?

Yes, the sun affects the Earth, and yes, if the sun gets hotter the Earth will get hotter as well. But the sun hasn’t gotten hotter. We can measure it from satellites, you know.

Ref 161. Barton writes “Yes, the sun affects the Earth, and yes, if the sun gets hotter the Earth will get hotter as well. But the sun hasn’t gotten hotter. We can measure it from satellites, you know.” All of which is true. If Chapter 2.7 of IPCC AR4 to WG1 (Extraterrestrial Effects) is correct, then you are also correct. The question is, is Chapter 2.7 to AR4 to WG1 correct when it claims to show that the ONLY effect the sun has on the earth’s climate is when it gets “hotter”; i.e. (presumably) the solar constant increases? That is the 64 trillion dollar question.

Re Jim’s 64 trillion dollar question, I have a better one: Why would we prefer a mysterious solar influence, in the complete absence of a correlation, over a strong correlation that is explained by well-known physics?

A belated, admittedly minor clarification: I do not understand the problem with using a person’s demeanor and attitude to help assess their assertion. For stuff that is less than physically unassailable (most things, including AGW), taking into account slobbering, blubbering, and haughtiness is not unreasonable. Maybe not very accurate, to be sure, but nonetheless not unreasonable.

As I read, Tim B (no relation) in 35 expressed my thought above much better than I did.

Rod, the assessment of something like AGW should consider two basic issues: (1) Is the analysis consistent with the known laws of physics? and (2) Does the analysis yield results that are consistent with the evidence? Granted, the answers to these questions will have some uncertainty for a problem as complex as AGW, but as both the analysis and the evidence are refined, the uncertainties will continue to shrink. There is absolutely no place for the attitude of whomever in this.

Re: Barton Paul Levenson #161

The Sun has gotten hotter. The fact that it did so prior to 1940, and then pretty much just remained so, does not alter its ability to explain warming, even to this day. GHGs also have difficulty explaining the shape and timing of the 20th century temperature statistics without significant aerosol contributions.

No matter how suggestive the GHG concentration curves are to the naked eye relative to the plateau in solar activity, without positive feedbacks, anthropogenic GHGs can only account for less than a third of the recent warming. Credible attribution of the rest of the less than 1W/m^2 energy imbalance requires models that can reproduce the observed solar response, and have a much better “match” to the climate than current models.

Read the climate commitment studies of Wigley, et al, and Meehl, et al, to understand how the argument that recent solar activity has not increased is simplistic and wrong. If the level of solar forcing reached prior to 1940 continues (which is unlikely per Solanki), then there will be a solar contribution to the energy imbalance resulting in sea level rise for several more centuries. Presumably most of the temperature response occurs in the first few decades, although arguably, that response was delayed by the causes of the midcentury cooling.

Re 167:

Well yes, because climate models are incomplete without the contribution from aerosols.

The larger question for Martin: How is the climate system so exquisitely sensitive to tiny TSI fluctuations, yet remarkably insensitive to the release of hundreds of billions of tons of carbon into the atmosphere?

Re #164 Rod B:

That’s good to hear, Rod… not too long ago when I suggested you do just that re: Singer, Lindzen et al., you didn’t warm to the idea. Demonstrable mendacity falls under ‘demeanor and attitude’, don’t you think?

I understand and respect that comments can’t be fully moderated (nor should they), but something as untrue as this, “The recent warming turned up sharply in the last 30 years. The sun’s output has been flat for the past 50 years, as have cosmic rays. What’s so hard to understand here?” does at least deserve a clarification.

[Response: This is not untrue. No recent assessment of solar forcing supports a rise over the last 50 years. – gavin]

The sun’s output did significantly increase over the past century as Peter Stott notes in this analysis of climate models.

Solar output varies significantly over 11-14 year cycles, has generally increased over the past century (the increase stopping about 30 years ago) and has declined since about the mid 1990s. While the output peaked about 30 years ago, the minimum of the cycle was still higher than the previous one. It is also important to note that during those 30 years solar output was still higher than in the previous century (and the first half of this one). Shortly after there is a significant decline in CRF in the 90s, warming did, in fact, stop.

Also of interest, the high energy cosmic rays that are known to affect low cloud cover are less affected by solar activity, and when looked at independently rather than lumped together with all cosmic ray flux (see Nir Shaviv’s sciencebits.com website), the relationship is quite striking. There are lags and feedbacks that dampen the temperature signal too. The theory is entirely consistent with recent observations.

[Response: No, it is not. The focus on high energy GCR is a post-hoc justification for the lack of any evidence that geomagnetic modulation affects climate and that the ‘theory’ needs low clouds to vary but not other kinds of clouds. Any mechanistic theory however must start from the role of ionization in creating aerosols and this is not restricted to low levels nor high energy GCR. Instead, it is dominated by lower energy GCR and occurs higher in the atmosphere; it is also there that ionization is able to compete more favorably with other nucleation processes. There has not been one single attempt by the proponents of this mechanism to physically model the effects of this for the last few decades or over longer time periods when we know other processes are occurring as well (for instance, a GCR-cloud mechanism does not invalidate the radiative forcing of CO2). No quantified estimates of this theory are available, no attribution has been attempted, let alone proven, and so statements like ‘The theory is entirely consistent with recent observations’ are devoid of any substance. – gavin]

Roger Pielke Sr. recently posted some references on effects of cloud nucleation particle concentration on precipitation. These papers further support lags and dampening effects. The CRF/cloud effects should be fairly limited geographically, since cloud nucleation particles are already in high concentration over and near most land. Since the CRF/cloud effect on climate mainly happens through increased incidence in water, there are significant lags.

Ilya Usoskin notes: “S&W studied either global or longitudinally averaged data. On the other hand, the effect of CR on clouds is shown to exist not everywhere but only in regions with favorable conditions. This is mostly limited to a few climate-defining regions, e.g., North Atlantic/Europe, South Atlantic, Far East. See, e.g., Usoskin et al. (2004), Palle et al. (2004), Marsh & Svensmark (2003), Voiculescu et al. (2006).”

The signal in the atmosphere should be further masked by the slowing of the water cycle during high CRF periods due to the effect described by the Freud paper Roger linked, though I have no idea of the extent of that effect. [Increased cloud nucleation particle concentrations decrease precipitation; this would increase atmospheric temperature while the oceans would warm less than normal].

[Response: The indirect effects of aerosols on clouds are important, imperfectly understood and much research is ongoing on their effects. However, the change in aerosols due to human emissions dwarfs any trend in aerosols associated with solar changes on multi-decadal timescales – remember global dimming? that is inconsistent with any solar-modulated trend in GCR nucleation. – gavin]

Jim,

Solar and GHGs are coupled to different parts of the climate system with different vertical, geographical, diurnal and seasonal distributions. Solar variation is poorly understood; UV variation and tropical convection influences on the stratosphere are becoming better appreciated. Most model independent estimates of climate sensitivity are based on solar and aerosols, and those few that aren’t have far larger error bars. In a complex nonlinear system where the forcings are coupled differently, the sensitivities should not be assumed to be the same. The AGW may well ultimately explain more of the warming than this unusually high level of solar activity, but there is little evidence for reaching that conclusion yet, or even that the AGW explanation is more likely. Just because the complexity of the climate system requires models to resolve this issue, doesn’t mean we are entitled to assume they are capable of that yet. There is plenty of evidence that they aren’t.

Hopefully the models will be capable in another couple generations, although I suspect it might take another couple decades of high quality data to validate and tune the models to project this small energy imbalance. Unfortunately, there may not ever be good enough data from the past to attribute the recent warming, but the models may be able to use this era of better data to demonstrate they have the required skill understanding the current climate and for projection.

Ref 163. You are missing the point. IPCC AR4 to WG1 either explicitly or implicitly states that the only extraterrestrial effect which affects the earth’s climate is a change in solar constant. Does AR4 establish this, beyond reasonable doubt, in Chapter 2.7? I have noted many times that there is clear evidence that the sun, or something else that is extraterrestrial, affects climate. The trouble is that this is only a hypothesis, as no-one can show conclusively what the linkage is. But this does not mean that a linkage does not exist. The evidence presented in Chapter 2.7 of AR4 is, to me, woefully inadequate. The idea I have seen postulated is that the historical changes in the earth’s climate are due to a change in the solar constant. It is a lovely hypothesis, for which there is not one single solitary scrap of evidence.

[Response: Why is it people make so many incorrect statements? There is an *explicit* discussion of the potential for GCR/cloud effects in Chapter 2, and there is no implicit conclusion that there are no solar (direct or indirect) impacts on climate. Instead, there are quantifications of the direct TSI effect (relatively simple in concept and easy to quantify), the amplified effects of the UV on ozone (still simple and only a little harder to quantify) , but no quantification of the more speculative mechanisms because these mechanisms have *never* been properly quantified. How is IPCC supposed to assess work that has not even been attempted, let alone accepted by the wider community? – gavin ]

Oops. Another important mistake in my comment:

“Shortly after there is a significant decline in CRF in the 90s, warming did, in fact, stop”

There was an increase in CRF, not a decline. A decline in solar activity.

RE 155 Philip Machanick, I followed the link with your name and in fact we do think about, or approach, finding truth in honest ways. My engineering work in the art and science of measuring many things has me biased to regard what modern satellites see as far more honest (OK, metrologically sound) data than all the past inferred indexes of temperature based upon humans writing it down or judging proxies.

If CO2 is delaying the departure of heat energy, it must be stored somewhere and it should be measurable. If said heat cannot be found, then all science

PS In general, I never, well seldom give any notice to those who post attacks behind the courage of an anonymous handle.

re 160 Barton Paul Levenson Says:

“We can measure back-radiation from the sky with instruments.”

Where does one buy an instrument that measures back-radiation from the sky?

One that provides some meaningful data on what CO2 is doing.

By the way, the paper I linked to, which some here call wrong wrong wrong, came to pass because an EE like me said, this does not make sense.

From http://www.junkscience.com/jan08/Global_Warming_Not_From_CO2_20080124.pdf

Recently he had trouble understanding how a rise of carbon dioxide, or any substance, in the atmosphere from such low values as 0.035 percent could have such an overly large influence on the Earth’s future temperature as some predict. So he decided to read and learn about this from the perspective of an individual with a technical background, but not in the field of climate. After a few infrared measurements on summer nights showed that the amount of heat being radiated from the atmosphere was much less than some climate models predicted, he began an intensive study resulting in his own computer simulations based on available atmospheric data and well-known laws of infrared physics. While weather predictions and long-term climate and very complex and beyond the author’s expertise, he feels the single issue of heat absorption and radiation due to carbon dioxide is much simpler, well understood, and better modeled and measured as proposed here. For reasons explained in the report, he went from being unknowledgeable to skeptical to now very doubtful about a harmful future temperature rise due to increased carbon dioxide levels.

None of the work done by the author related to this report was funded by any organization, company or person, besides the author who paid personally for the infrared measuring device and the fees for the carbon dioxide and water vapor spectral transmittance computer calculations.

Richard J. Petschauer

Whew, glad junkscience.com solved this issue! I was becoming concerned about the long term impacts of adding gigatons of GHGs to the atmosphere and what that might mean to my 10 and 8 year olds. Glad this issue is solved……

tom watson quoted: “While weather predictions and long-term climate and very complex and beyond the author’s expertise, he feels the single issue of heat absorption and radiation due to carbon dioxide is much simpler, well understood, and better modeled and measured as proposed here.”

This is the paradigmatic example of what I referred to earlier: an individual with neither knowledge nor understanding of climate science, who somehow comes to believe that he has found the “simple” and obvious reason why global warming due to anthropogenic CO2 emissions is impossible — a simple and obvious reason that has somehow eluded the careful study of many hundreds of climate scientists over decades.

Jim Galasyn ponders a Jim Cripwell comment: “Why would we prefer a mysterious solar influence, in the complete absence of a correlation, over a strong correlation that is explained by well-known physics?”

For the simple reason that a strong correlation of global warming with CO2 emissions from burning fossil fuels implies that we need to phase out the use of fossil fuels, whereas a “mysterious solar influence” does not. Therefore, the anthropogenic hypothesis must be false, and the “mysterious solar influence” hypothesis must be true.

Martin (169) says, “…Demonstrable mendacity falls under ‘demeanor and attitude’, don’t you think?”

In a word, NO, not really. (O.K., three words)

Re Martin’s claims in 171, please read the excellent Weart history of global warming to understand why solar forcing is ruled out.

Re #159 “Humanity at large will not come around by itself until the stuff hits the fan in a way that is obvious without analysis, probably a generation from now.”

See:

http://news.bbc.co.uk/2/shared/bsp/hi/pdfs/25_09_07climatepoll.pdf

Extract:

“Large majorities around the world believe that human activity causes global warming and that strong action must be taken, sooner rather than later, in developing as well as developed countries, according to a BBC World Service poll of 22,000 people in 21 countries.

An average of eight in ten (79%) say that “human activity, including industry and transportation, is a significant cause of climate change.”

Nine out of ten say that action is necessary to address global warming. A substantial majority (65%) choose the strongest position, saying that “it is necessary to take major steps starting very soon.””

Let me clarify that my hope for the models referred to model “generations”, not human “generations”. I had in mind a couple of model generations, i.e., 6 to 10 years. I am more concerned about the length of the data record that might be needed to validate them, although more “interesting” solar behavior might help crystallize some of the issues sooner.

As far as solar variations go, see the following recent paper:

http://publishing.royalsociety.org/media/proceedings_a/rspa20071880.pdf

Lockwood & Frohlich (2007) Recent oppositely directed trends in solar climate forcings and the global mean surface air temperature. Proc. Roy. Soc.

Take a look at Figure 1, which plots (a) The international sunspot number, R (b) The open solar flux FS (c) The neutron count rate C due to cosmic rays (d) The Total Solar Irradiance composite (e) The GISS analysis of the global mean surface air temperature anomaly DT (with respect to the mean for 1951–1980).

Nature has a subscription-only news article on this paper: No solar hiding place for greenhouse skeptics, Jul 4 2007

Thus, solar variation explanations just don’t work.

Martin Lewitt cites the work of Meehl et al. as evidence that solar forcing explains everything, but that’s completely nonsensical. See Meehl et al. (2005), Climate change projections for the 21st century and climate change commitment in the CCSM3 (climate model), J. Climate. Those are all scientists from NCAR in Boulder and Lawrence Livermore.

Their work does raise some real issues about model predictions vs. observations when it comes to Arctic sea ice, however:

Here’s the model prediction:

Compare that to some of the current estimates: An ice-free Arctic within a decade? (2008). That’s an interview with Dr Wieslaw Maslowski of the Naval Postgraduate School in Monterey, CA, on the loss of 7 million square km of sea ice from 1979 to the present:

The Navy was actually one of the first government institutions to accept the reality of global warming, because they had access to the nuclear submarine records of thinning Arctic sea ice. In 1999, the Navy released data showing that over the period from 1960-2000, ice thickness had declined by close to 40%. That trend was already obvious in the data in 1990, but was kept secret for another decade.

> in 1999, the Navy released data

Worth a cite and long quote. Here:

nsf.gov – News – Newly Declassified Submarine Data Will Help Study …

The area is known as the “Gore Box” for Vice President Al Gore’s initiative to declassify Arctic military data for scientific use. …

http://www.nsf.gov/news/news_summ.jsp?cntn_id=102863

http://www.nsf.gov/news/mmg/media/images/pr986_f.jpg

Map of Arctic Ocean where formerly classified submarine data are now being released for study.

Larger: http://www.nsf.gov/news/mmg/media/images/pr986_h.jpg

January 28, 1998

A treasure-trove of formerly classified data on the thickness of sea ice in the Arctic Ocean, gathered by U.S. Navy submarines over several decades, is now being opened. Data from the first of approximately 20 cruise tracks — an April, 1992 trans-Arctic Ocean track — has just been released, and information from the rest of these tracks, or maps of a submarine’s route, will be analyzed and released over the next year-and-a-half.

“The data opens up a magnificent resource for global change studies,” said Mike Ledbetter, National Science Foundation (NSF) program director for Arctic system science.

Climate modellers differ over the fate of the great expanse of Arctic sea ice, which is about the size of the United States. More than half the ice melts and refreezes each year.

“The Navy has collected data for decades on ice thickness in the Arctic, which was important to know for navigation and defense,” said Ledbetter. “But this information is also extremely important to science, now giving us a history of sea ice that we could not collect any other way.”

“The data is essential to building a baseline of sea-ice thickness in the Arctic basin to examine how global change affects ice cover,” explained Walter Tucker of the U.S. Army Cold Regions Research and Engineering Laboratory. Tucker is supported by NSF to process and analyze all digital ice-draft data collected by Navy submarines in the Arctic since 1986. The National Snow and Ice Data Center at the University of Colorado-Boulder is handling the actual data release.

The Arctic Submarine Laboratory, on behalf of the Chief of Naval Operations, approved declassifying the sea-ice data within a specific swath of the Arctic Ocean, roughly between Alaska and the North Pole. The area is known as the “Gore Box” for Vice President Al Gore’s initiative to declassify Arctic military data for scientific use.

Re #180 Nick: I know, I know. But “major” is a weasel word, and no price tag :-)

I hope you’re right, but believe it when people have been shown a concrete proposal, price tag and all. Expect gnashing of teeth and “why us and not them” rhetoric, even for perfectly affordable proposals. We see it already inside the EU.

This is going to take statesmanship.

Let’s agree to disagree then… I would call habitual lying an attitude problem.

I suppose the good news is that you didn’t challenge that those gentlemen have been lying… or was that an oversight? No, don’t shatter my illusions Rod :-)

In the concluding parenthetical sentence of his initial response to David Stockwell’s criticism of Rahmstorf et al (2007) at #124, Stefan Rahmstorf chides Stockwell and Roger Pielke Jr for having misspelled his name, and says that this is ‘an indication of the care someone takes in getting things right.’

Is it? In Dr. Rahmstorf’s contribution “Anthropogenic Climate Change: Revisiting the Facts” to Ernesto Zedillo, eds., “Global Warming: Looking Beyond Kyoto” (Brookings Institution Press and Yale Centre for the Study of Globalization, 2008), Caspar Amman (sic) is cited as one of the authors of Wahl et al (’Comment on “Reconstructing Climate from Noisy Data”‘, ‘Science’, 312, no. 5733:529). However, according to the cited source (and the list of contributors to this website), the correct spelling is ‘Ammann’.

Dr. Rahmstorf includes the chapter in the Brookings/Yale book in his list of publications, and it is published on the website of the Potsdam Institute of Climate Impact Research with which he is affiliated.

Despite this error, Rahmstorf (2008) should be considered on its merits, as should Rahmstorf et al (2007) and the recent contributions of Dr Stockwell and Professor Pielke.

[Response: Thank you for pointing out this oversight. -stefan]

Re #182, Ike, great post there, but it won’t convince some regardless of what you say. The biggest fear for me was always that the models were too conservative in their findings. The sensitivity to initial conditions that the science of complexity tells us about applies to the earth’s thermostat mechanism. It’s one thing to know the forcings and hence the temperature ranges; it’s another to know the implications of these changes. The IPCC 2007 report will not be updated until 2011/2012, and hence its 2007 assessment seems to have been too conservative in its estimates of the implications of 0.2C per decade warming and of how sensitive earth’s systems are in influencing and responding to atmospheric warming via GHG.

I think that James Hansen’s telling remark was when he said that we have another 1F (0.6C) rise in the existing infrastructure along with the 1F (0.6C) in the oceans already. 1.2C/2F is enough to get us to 2C. The implications of this are staggering if at 0.8C we are already getting seriously worried. If you live long enough you are going to see that 1.2C rise, which is effectively guaranteed, turn Earth into a different planet.

Ref 172, Gavin’s reply. I refer to IPCC AR4 to WG1 and the SPM. I have read Chapter 2.7 and studied Figure SPM 2. My interpretation of these, is that it is claimed that the only extraterrestrial effect that affects the earth’s climate, is a small change in the solar constant. There is no possibility of any other extraterrestrial factor affecting the earth’s climate. All this has been established scientifically. Am I correct?

[Response: Not even close. Read section 2.7.1.3, 3rd paragraph on. – gavin]

Ike,

You should look at figure 4 of that Lockwood and Frohlich paper. It shows that even while trends in the last 20 years are in the opposite direction to that required to explain the recent warming, the magnitude of the recent trend is small, and leaves levels of solar forcing at higher levels than early in the century. If levels of solar activity are maintained at high levels, there will be a positive energy imbalance resulting in heat storage into the ocean that Meehl, et al, and Wigley, et al demonstrate takes centuries. Of course, these high levels of solar activity are unlikely to be maintained for centuries. Think of it this way: if you put a pot of water on the stove with the burner set at 9, a “recent trend” that turns it down to 8 doesn’t rule out the burner as the cause of a continuing rise in the water temperature.

The mid-century cooling in the temperature record is not consistent with either the solar or the GHG forcing “trends”. Presumably, reductions in aerosol negative forcings or whatever caused the midcentury cooling, explains the shape of the temperature curve.

Both solar and GHG warming can be making a contribution to the Arctic melting. We need models good enough to properly attribute the warming and with the skill to project the climate based on future GHG scenarios. Since the case for an AGW contribution of more than one third of the recent warming is dependent on the showing of positive feedback, that case is not helped by model failures in representing the melting, no matter how rapid and alarming that melting is. Stroeve, et al, document the model problems in this issue. Skill and credibility in projection will require better models.

Stroeve, J.C.; M. M. Holland, W. Meier, T. Scambos, and M. Serreze (2007). “Arctic sea ice decline: Faster than forecast”. Geophys. Res. Lett 34. doi:10.1029/2007GL029703. Retrieved on 2007-05-26. “All models participating in the Intergovernmental Panel on Climate Change Fourth Assessment Report (IPCC AR4) show declining Arctic ice cover over this period. However, depending on the time window for analysis, none or very few individual model simulations show trends comparable to observations.” Co-author Scambos from press release: “Because of this disparity, the shrinking of summertime ice is about thirty years ahead of the climate model projections.”

Martin Lewitt writes:

Yes it does.

So does any other cause of the warming.

If you’re talking variance accounted for, when I regress temperature anomalies on CO2 concentrations for 1880-2007, I get 78% of variance accounted for, not one third.
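For readers unfamiliar with “variance accounted for”, the following is a minimal sketch of how that fraction (R²) falls out of a simple linear regression. The two series here are synthetic placeholders, not the actual CO2 and temperature records, and the function name `variance_explained` is made up for illustration, so the number it prints says nothing about the 78% figure above.

```python
import numpy as np

def variance_explained(x, y):
    """Return R^2 of an ordinary least-squares fit of y on x."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)       # straight-line fit
    residuals = y - (slope * x + intercept)
    ss_res = np.sum(residuals ** 2)              # unexplained variation
    ss_tot = np.sum((y - y.mean()) ** 2)         # total variation
    return 1.0 - ss_res / ss_tot

# Synthetic placeholder data (assumption): 128 "years" of a rising
# concentration and an anomaly that follows it plus random noise.
rng = np.random.default_rng(0)
co2 = np.linspace(290.0, 385.0, 128)
temp = 0.008 * (co2 - 290.0) + rng.normal(0.0, 0.1, co2.size)

r2 = variance_explained(co2, temp)
print(f"variance accounted for: {r2:.0%}")
```

For a one-predictor regression this quantity equals the squared correlation coefficient, so a high value shows how closely the two series track each other; it does not by itself separate direct warming from feedbacks.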

The current models take the sun into account.

Doesn’t work that way. If the climate were responding to a big impulse with a delay, the response would be greatest right away and then gradually damp with time. It wouldn’t first be flat and then suddenly ramp up.
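A rough way to picture this claim is a one-box energy balance model, C dT/dt = F - λT, hit with a step in forcing. This is only a sketch under strong assumptions: a single heat reservoir, a linear feedback, and parameter values chosen purely for illustration; the function name `step_response` is hypothetical, and a real ocean has several time scales.

```python
import numpy as np

def step_response(forcing, feedback, heat_capacity, years):
    """Temperature change of the one-box model C dT/dt = F - lambda*T
    after the forcing F is switched on at t = 0 (analytic solution)."""
    t = np.arange(years + 1, dtype=float)
    tau = heat_capacity / feedback               # e-folding time in years
    temp = (forcing / feedback) * (1.0 - np.exp(-t / tau))
    return t, temp

# Illustrative values only (assumptions): forcing in W/m^2, feedback in
# W/m^2/K, heat capacity in W yr/m^2/K.
t, temp = step_response(forcing=1.0, feedback=1.25, heat_capacity=10.0, years=50)
warming_rate = np.diff(temp)                     # warming in each successive year

print(warming_rate[:3], warming_rate[-3:])
```

In this simple system the yearly warming is largest in the first year and shrinks steadily afterwards, which is the behaviour described above; a curve that stays flat and then ramps up would need something more than a delayed response to a single step.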

tom watson writes:

bucksci.com and hitachi-hat.com spring to mind, also Edmund scientific. The instrument you want is an “infrared photometer.”

Barton Paul Levenson,

Most of the temperature response to the impulse of solar activity probably would have been greatest “right away”, if not for the midcentury cooling event. BTW, “right away” according to the climate commitment studies would be within the first few decades. While the current plateau in solar activity might eventually look like an “impulse” when viewed on geologic time scales, on our time scale, we are still within the high part of the impulse, although it may be starting to tail off.

My less than one-third was based upon the direct warming of the CO2, not any accounting for variance, which presumably would incorporate some positive feedbacks. Until solar variation and coupling is better understood and modeled, we won’t know how much of the variation should really be attributed to GHGs.

It is not enough for the models to “take the sun into account”, they must do a good enough job to attribute and project the climate. You may have missed the discussion of the Camp and Tung results in this thread:

https://www.realclimate.org/index.php/archives/2008/04/blogs-and-peer-review#comment-84547

Re 190, Dear Barton Paul Levenson, do you have such an instrument? How does it work and what does it tell you about how CO2 is causing back radiation?

What wavelengths do they operate at and can you direct me to the calibration methodology used in manufacture and recommended for users?

I already have a Craftsman Non-contact Infrared Thermometer with Laser Pointer 82288 and it is of little use in making any meaningful measurements with regards to any specific gas, especially a trace gas like CO2. A sling psychrometer is far more useful in knowing how the real greenhouse gas will impact the atmospheric temperature over several future hours. Has any of your past experience ever had you dealing with instrumentation to make an actual measurement of any physical property of matter? I built my first weather instruments, optical instruments and electronic amps, strobes, radiation detectors, camera, qblow furnish in the sixties, and my first solar hot water heating system in the seventies. http://toms.homeip.net/2002.10.05_to_newport/320×240/DSC04651.jpg

> the real greenhouse gas

So you know the truth, and you’re here witnessing for your faith?

Re Tom’s home measurement of back radiation, isn’t the device you want called a Pyranometer?

Re 193 hank, In engineering it is common practice to simply ignore contributors to a process that may contribute less than a small fraction of 1%.

H2O has concentrations of 20 to thousands of times that of CO2. H2O absorbs several times the wavelengths of CO2 if in equal concentrations with CO2. Its specific heat is similar, but H2O has a heat of fusion plus vaporization that is 600 times that of CO2.

There is more H2O than CO2 by orders of magnitude. Its heat storage is a few orders of magnitude greater than that of CO2. And H2O absorbs an order of magnitude more wavelengths than CO2.

CO2 can be declared a trace greenhouse gas and not a real greenhouse gas. Especially when having fun. All the stuff that we use every day and works as well as it does was created by engineers who figured out what was not contributing.

[Response: CO2 contributes about 20% to the total greenhouse effect (once you deal with overlaps etc.), water vapour contributes about 50%. Seems to me that engineers would think that a worthwhile contribution to investigate. – gavin]

Re 175. Tom, get real. The paper as you describe it is amateurish nonsense of the most embarrassing kind – simplistic work by people with enough background that they should know better. I scanned the paper, but would not waste my time reading something that had appeared only on junkscience. If he really thinks he is on to something, let him submit it to a peer-reviewed scientific publication.

This site provides an opportunity to be educated through interaction with top climate scientists who generously donate their time. When amateurs wade in with bold claims that they know more about climate science than the climate scientists, it frankly comes across as silly.

The people who run this site, as well as some of the highly knowledgeable contributors, are amazingly patient in answering almost any relevant question and pointing you in the right direction. See if you can pose a proper scientific question.

I’ve never understood this thing that people have about magical effects of changes in the Sun, mystical lag times, etc.

Last night, when the Sun set, temperatures dropped fairly quickly, and this morning, they went back up, fairly quickly.

“Jet Streams Are Shifting And May Alter Paths Of Storms And Hurricanes”

http://www.sciencedaily.com/releases/2008/04/080416153558.htm

“… These changes fit the predictions of global warming models …”

Re #151 Stefan

#18 Stefan,

You state:

To test this I have shown three different methods with variations and graphs here http://landshape.org/enm/examples-of-simple-smoothers/.

The results are as follows:

1. Singular spectrum analysis (SSA used in your paper Rahmstorf et al. 2007), 11 year embedding period, comparing ‘minimum roughness criterion’ end padding with no padding. The trend lines flex about the 8th point, deviating every other point in the trend line, particularly the last 7.

2. Smooth spline, 11df, end-padding from top and bottom of trend channel (95%CL of a single value). The trend lines flex about the 11th point.

3. Moving average with final point varied to top and bottom of trend channel (95%CL of a single value). The last point in the trend changes, but it stops 5 points short of the end, of course.

In general, the causal filters do not localize variations, while the acausal moving average does. Perhaps you were thinking of a moving average when you said it only affects the last five points?
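The moving-average case (item 3) is easy to reproduce. The sketch below assumes an 11-point centered window on a synthetic 34-point series; the data and the helper name `centered_moving_average` are placeholders for illustration and are not taken from the linked post or from Rahmstorf et al. 2007.

```python
import numpy as np

def centered_moving_average(y, window=11):
    """Centered moving average, returned only where the full window fits."""
    kernel = np.ones(window) / window
    return np.convolve(y, kernel, mode="valid")

# Synthetic 34-point series (assumption), then the same series with
# only its final value shifted upward.
rng = np.random.default_rng(1)
y = np.cumsum(rng.normal(0.0, 0.1, 34))
y_high = y.copy()
y_high[-1] += 0.5

base = centered_moving_average(y)
pert = centered_moving_average(y_high)

lost = len(y) - len(base)                         # points with no smoothed value
changed = np.flatnonzero(~np.isclose(base, pert)) # smoothed points that moved
print(lost, changed)
```

With these settings the smoothed curve is 10 points shorter than the data, 5 at each end, and shifting the final data point moves only the last smoothed value. The SSA and spline cases depend on the padding rule and are not covered by this sketch.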

Now I don’t know what happens in the Matlab implementation you used. Unfortunately, estimates of uncertainty are not included in Rahmstorf et al. 2007, which is most of my concern.

The green line in first SSA figure in my post is the simple linear regression of the 34 years data. This trend line is virtually in the middle of the IPCC trends, is not in the upper half, and is not ‘strange’.

The simple regression line is almost the same as the SSA with the MRC, and is an example where choice of boundary conditions affects the conclusions of your paper. As a point of interest, what was the result of the other choice of boundary condition in Matlab that you mention? Did it shift the end of the trend line above or below the one you used?

You suggest that 5 points above the line is significant. However, you do not know unless you test it, and in series with high serial correlation, such runs are probably not unusual. That’s a kind of non-parametric runs test and I forget what it is called.
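One way to put a number on how unusual such a run is would be a small Monte Carlo experiment. The sketch below is not the classical runs test; it simply asks how often the last 5 residuals from a fitted straight line are all positive, for white noise and for AR(1) noise. The lag-1 coefficient of 0.6, the series length of 34 and the function name `prob_last_k_above` are illustrative assumptions, not values estimated from any temperature record.

```python
import numpy as np

def prob_last_k_above(phi, n=34, k=5, trials=20000, seed=0):
    """Monte Carlo estimate of the chance that the last k residuals from a
    least-squares line are all positive, for AR(1) noise with coefficient phi."""
    rng = np.random.default_rng(seed)
    eps = rng.normal(size=(trials, n))
    x = np.empty_like(eps)
    x[:, 0] = eps[:, 0]                           # simple start, not the stationary draw
    for i in range(1, n):
        x[:, i] = phi * x[:, i - 1] + eps[:, i]   # AR(1) recursion
    t = np.arange(n, dtype=float)
    design = np.column_stack([t, np.ones(n)])
    coef, *_ = np.linalg.lstsq(design, x.T, rcond=None)   # one line per trial
    resid = x - (design @ coef).T
    return float(np.mean(np.all(resid[:, -k:] > 0, axis=1)))

p_white = prob_last_k_above(0.0)   # no serial correlation
p_red = prob_last_k_above(0.6)     # assumed serial correlation
print(p_white, p_red)
```

Comparing the two estimates shows how strongly the answer depends on the assumed serial correlation, which is why the run would need to be tested against a noise model fitted to the actual data before calling it significant.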

Stepping back from this analysis, the whole point of statistical testing is to ensure your conclusions are not overturned by subsequent data. The final figure in the post above shows a smooth spline of monthly temperatures from Hadley and GISS. If an orthodox statistical test had been performed in 2006 in Rahmstorf et al. 2007 it would have given a different conclusion, borne out by the subsequent data, i.e. random fluctuations about a long term trend.

[Response: If you really think you’d come to a different conclusion with a different analysis method, I suggest you submit it to a journal, like we did. I am unconvinced, though. -stefan]