Last year I discussed the basis of the AR4 attribution statement:

Most of the observed increase in global average temperatures since the mid-20th century is very likely due to the observed increase in anthropogenic greenhouse gas concentrations.

In the new AR5 SPM (pdf), there is an analogous statement:

It is extremely likely that more than half of the observed increase in global average surface temperature from 1951 to 2010 was caused by the anthropogenic increase in greenhouse gas concentrations and other anthropogenic forcings together. The best estimate of the human-induced contribution to warming is similar to the observed warming over this period.

This includes differences in the likelihood statement, drivers and a new statement on the most likely amount of anthropogenic warming.

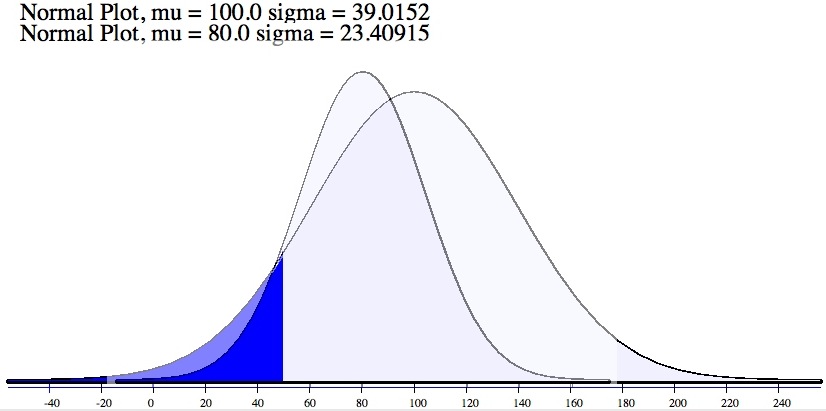

It is useful to remind ourselves that these statements are addressing our confidence in the characterisation of the anthropogenic contribution to the global surface temperature trend since the middle of the 20th Century. This contribution is unavoidably represented by a distribution of values because of the uncertainties associated with forcings, responses and internal variability. The AR4 statement confined itself to quantifying the probability that the greenhouse-gas driven trend was less than half the total trend as being less than 10% (or alternately, that at least 90% of the distribution was above 50% based on the IPCC definition of “very likely”):

Figure 1: Two schematic distributions of possible ‘anthropogenic contributions’ to the warming over the last 50 years. Note that in each case, despite a difference in the mean and variance, the probability of being below 50% is exactly 0.1 (i.e. a 10% likelihood).
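The point of the figure can be checked numerically. The following is a minimal sketch, assuming the contribution (as a percentage of the observed trend) is normally distributed; the two standard deviations (20 and 40) and the helper name are illustrative choices, not values from either report.

```python
from statistics import NormalDist

# Illustrative assumption: the anthropogenic contribution (in % of the
# observed trend) follows a normal distribution.
Z_10 = NormalDist().inv_cdf(0.10)  # z-score of the 10th percentile (about -1.28)


def distribution_with_10pct_below_50(sd):
    """Return a normal distribution with the given sd and P(x < 50) = 0.1."""
    mean = 50.0 - Z_10 * sd  # shift the mean so 50 sits at the 10th percentile
    return NormalDist(mean, sd)


narrow = distribution_with_10pct_below_50(20.0)  # hypothetical narrow case
wide = distribution_with_10pct_below_50(40.0)    # hypothetical wide case

for name, dist in (("narrow", narrow), ("wide", wide)):
    print(f"{name}: mean={dist.mean:.1f}, sd={dist.stdev:.1f}, "
          f"P(x<50)={dist.cdf(50.0):.3f}")
```

Both distributions satisfy the same "very likely" statement even though their means and spreads differ, which is why the AR4 wording alone did not pin down a best estimate.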

In AR5, there are two notable changes to this. First, the likelihood level is now at least 95%, and so the assessment is for less than 5% probability of the trend being less than half of the observed trend. Secondly, they have switched from the ‘anthropogenic greenhouse gas’ driven trend to the total anthropogenic trend. As I discussed last time, the GHG trend is almost certainly larger than the net anthropogenic trend because of the high likelihood that anthropogenic aerosols have had a net cooling effect over that time. Both changes lead to a stronger statement than in AR4. One change in language is neutral: moving from “most” to “more than half”, which was presumably done simply to clarify the definition.

The second part of the AR5 statement is interesting as well. In the AR4 SPM, IPCC did not give a ‘best estimate’ for the anthropogenic contribution (though again many people were confused on this point). This time they have characterised the best estimate as being close to the observed estimate – i.e. that the anthropogenic trend is around 100% of the observed trend, implying that the best estimates of net natural forcings and internal variability are close to zero. This is equivalent to placing the peak in the distribution in the above figure near 100%.

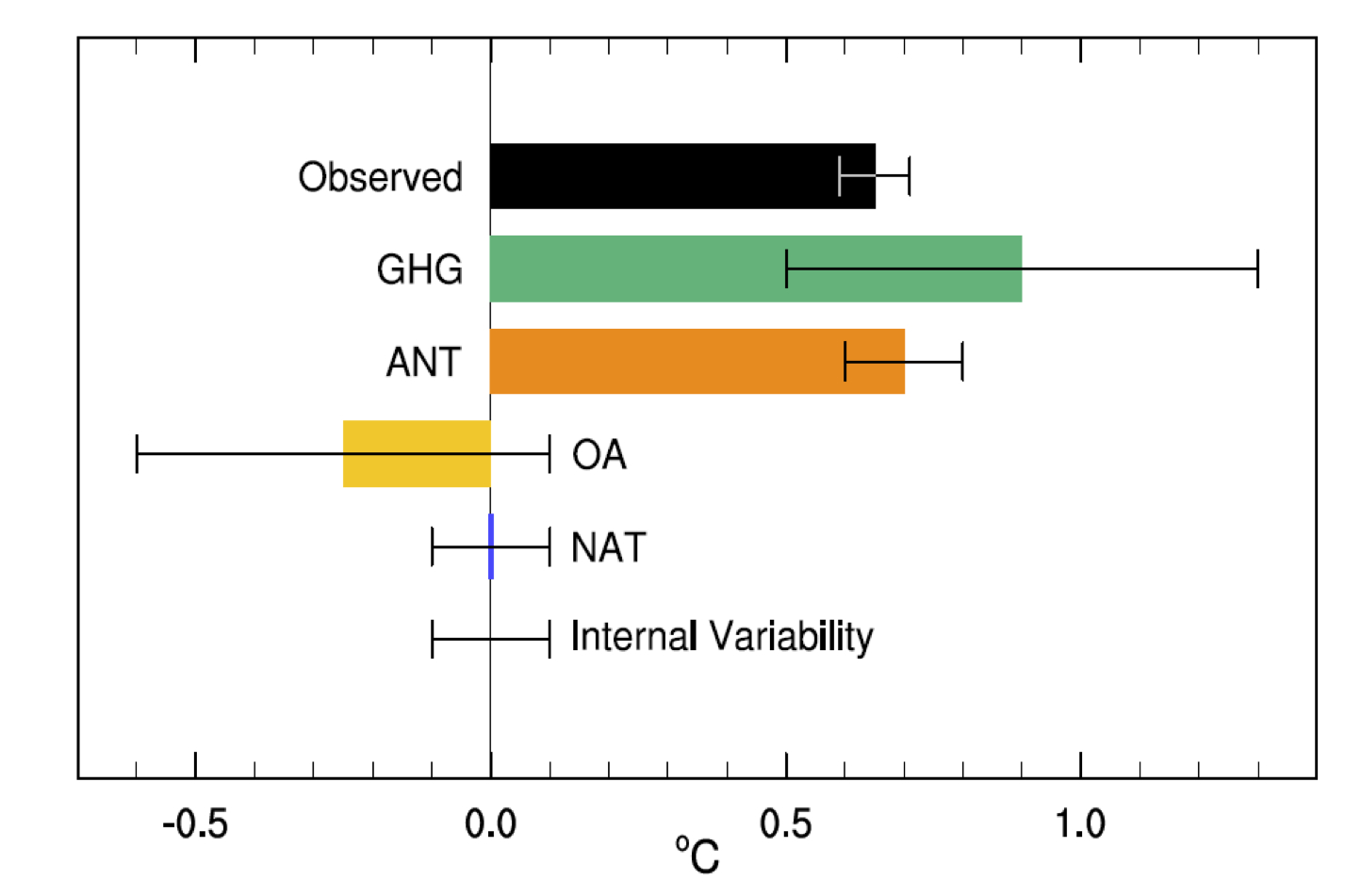

The basis for these changes is explored in Chapter 10 on detection and attribution and is summarised in the following figure (10.5):

Figure 2. Assessed likely ranges (whiskers) and their mid-points (bars) for attributable warming trends over the 1951–2010 period due to well-mixed greenhouse gases (GHG), other anthropogenic forcings (OA), natural forcings (NAT), combined anthropogenic forcings (ANT), and internal variability. The HadCRUT4 observations are shown in black with the 5–95% uncertainty range due to observational uncertainty.

These estimates are the “assessed” trends that come out of fingerprint studies of the temperature changes and account for potential mis-estimates (over or under) of internal variability, sensitivity etc. in the models (and potentially the forcings). The raw material is the set of model hindcasts of the historical period – using all forcings, just the natural ones, just the anthropogenic ones and various variations on that theme.

The error bars cover the ‘likely’ range (17-83%), so are close to being ±1 standard deviation (except for the observations (5-95%), which is closer to ±2 standard deviations). It is easy enough to see that the ‘ANT’ row (the combination from all anthropogenic forcings) is around 0.7 ± 0.1ºC, and the OBS are 0.65 ± 0.06ºC. If you work that through (assuming normal distributions for the uncertainties), it implies that the probability of the ANT trend being less than half the OBS trend is less than 0.02% – much less than the stated 5% level. The difference is that the less confident statement also takes into account structural uncertainties about the methodology, models and data. Similarly, the best estimate of the ratio of ANT to OBS has a 2 sd range between 0.8 and 1.4 (peak at 1.08). Consistent with this are the rows for natural forcing and internal variability – neither is significantly different from zero in the mean, and the uncertainties are too small for them to explain the observed trend with any confidence. Note that the ANT vs. NAT comparison is independent of the GHG or OA comparisons; the error bars for ANT do not derive from combining the GHG and OA results.
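The "work that through" step can be sketched as follows. This is a rough check rather than the calculation actually used for the post: it assumes ANT and OBS are independent normal variables, treats the ANT range as ±1 standard deviation, and converts the 5-95% OBS range to a standard deviation.

```python
from math import sqrt
from statistics import NormalDist

# Values quoted in the text (deg C over 1951-2010).
ANT_MEAN, ANT_SD = 0.7, 0.1            # 'likely' range treated as +/-1 sd (assumption)
OBS_MEAN, OBS_HALF_RANGE = 0.65, 0.06  # 5-95% range
OBS_SD = OBS_HALF_RANGE / NormalDist().inv_cdf(0.95)  # convert to 1 sd

# ANT < OBS/2 is the same event as D = ANT - 0.5*OBS < 0.
# Assuming ANT and OBS are independent normals, D is normal too.
d_mean = ANT_MEAN - 0.5 * OBS_MEAN
d_sd = sqrt(ANT_SD**2 + (0.5 * OBS_SD)**2)
p_less_than_half = NormalDist(d_mean, d_sd).cdf(0.0)

ratio_of_means = ANT_MEAN / OBS_MEAN  # close to the quoted peak of 1.08

print(f"P(ANT < OBS/2) = {p_less_than_half:.5%}")
print(f"ANT/OBS ratio of central values = {ratio_of_means:.2f}")
```

Under these assumptions the probability comes out below the 0.02% quoted above. The exact figure depends on how the quoted ranges are converted to standard deviations.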

It is worth asking what the higher confidence/lower error bars are associated with. First, the longer time period (an extra 5 years) makes the trends clearer relative to the noise. Second, multiple methodologies have been used which get the same result. Third, fingerprints have been better constrained by the greater use of spatial information. Small effects may also arise from better characterisations of the uncertainties in the observations (i.e. in moving from HadCRUT3 to HadCRUT4). Because of the similarity of patterns related to aerosols and greenhouse gases, there is more uncertainty in doing the separate attributions rather than looking at anthropogenic forcings collectively. Interestingly, the attribution of most of the trend to GHGs alone would still remain very likely (as in AR4); I estimate a roughly 7% probability that it would account for less than half the OBS trend. A factor that might be relevant (though I would need to confirm this) is that more CMIP5 simulations from a wider range of models were available for the NAT/ANT comparison than previously in CMIP3/4.
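A rough version of the GHG-only estimate can be sketched in the same way. The GHG numbers below are an assumption that does not appear in this post: the AR5 likely range for the greenhouse gas contribution (0.5 to 1.3ºC) is treated as 0.9 ± 0.4ºC, with the range taken as roughly ±1 standard deviation of a normal distribution.

```python
from math import sqrt
from statistics import NormalDist

# Assumption (not stated in this post): AR5 likely range for GHG, 0.5-1.3 deg C,
# treated as a normal distribution with mean 0.9 and sd 0.4.
GHG_MEAN, GHG_SD = 0.9, 0.4
OBS_MEAN = 0.65
OBS_SD = 0.06 / NormalDist().inv_cdf(0.95)  # 5-95% range of 0.06 -> 1 sd

# GHG < OBS/2 is the event D = GHG - 0.5*OBS < 0 (independence assumed).
d_mean = GHG_MEAN - 0.5 * OBS_MEAN
d_sd = sqrt(GHG_SD**2 + (0.5 * OBS_SD)**2)
p_ghg_less_than_half = NormalDist(d_mean, d_sd).cdf(0.0)

print(f"P(GHG < OBS/2) = {p_ghg_less_than_half:.1%}")
```

With these inputs the probability lands between 5% and 10%, in line with the roughly 7% quoted above and with a "very likely" (but not "extremely likely") statement for GHGs alone.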

It could be argued that since recent trends have fallen slightly below the multi-model ensemble mean, this should imply that our uncertainty has massively increased and hence the confidence statement should be weaker than stated. However, this doesn’t really follow. Over-estimates of model sensitivity would be accounted for in the methodology (via a scaling factor of less than one), and indeed, a small over-estimate (by about 10%) is already factored in. Mis-specification of post-2000 forcings (underestimated volcanoes, Chinese aerosols or overestimated solar), or indeed, uncertainties in all forcings in the earlier period, leads to reduced confidence in attribution in the fingerprint studies, and a lower estimate of the anthropogenic contribution. Finally, if the issue is related simply to a random realisation of El Niño/La Niña phases or other sources of internal variability, this simply feeds into the ‘Internal variability’ assessment. Thus the effects of recent years are already embedded within the calculation, and will have led to a reduced confidence compared to a situation where things lined up more. Using this as an additional factor to change the confidence rating again would be double counting.

There is more information on this process in the IPCC chapter itself, and in the referenced literature (particularly Ribes and Terray (2013), Jones et al (2013) and Gillett et al (2013)). There is also a summary of relevant recent papers at SkepticalScience.

Bottom line? These statements are both comprehensible and traceable back to the literature and the data. While they are conclusive, they are not a dramatic departure from basic conclusions of AR4 and subsequent literature – but then, that is exactly what one should expect.

References

- A. Ribes, and L. Terray, "Application of regularised optimal fingerprinting to attribution. Part II: application to global near-surface temperature", Climate Dynamics, vol. 41, pp. 2837-2853, 2013. http://dx.doi.org/10.1007/s00382-013-1736-6

- G.S. Jones, P.A. Stott, and N. Christidis, "Attribution of observed historical near-surface temperature variations to anthropogenic and natural causes using CMIP5 simulations", Journal of Geophysical Research: Atmospheres, vol. 118, pp. 4001-4024, 2013. http://dx.doi.org/10.1002/jgrd.50239

- N.P. Gillett, V.K. Arora, D. Matthews, and M.R. Allen, "Constraining the Ratio of Global Warming to Cumulative CO2 Emissions Using CMIP5 Simulations", Journal of Climate, vol. 26, pp. 6844-6858, 2013. http://dx.doi.org/10.1175/JCLI-D-12-00476.1

“It could be argued that since recent trends have fallen slightly below the multi-model ensemble mean, this should imply that our uncertainty has massively increased”

As I understand it from Michael Mann (www.livescience.com/39957-climate-change-deniers-must-stop-distorting-the-evidence.html), this is similar to how the IPCC reasoned when it lowered the bottom end of the climate sensitivity range because of the “pause”.

But what is the strange statistical methodology behind that, what is so special with the recent trends? There are a lot of trends over the years, sometimes the models are above sometimes they are below. In addition you must of course factor in what we know about the current natural variability. For example, measurement of the ocean heat storage doesn’t support any slowdown.

It would be very interesting to see an analysis that explained the IPCC likely sensitivity interval of 1.5-4.5 in terms of the attribution above. At least it is not intuitive how the 97.5% chance ((100+95)/2) of the warming being more than 50% anthropogenic is consistent with a 17% chance of the equilibrium sensitivity being less than 1.5K.

Can you explain why in figure 2(10.5) the error bar on ANT is so small? Naively I would expect this to be the sum of GHG and OA. This would then work out to be an error on ANT of sqrt(2*0.36) = 0.8C. This is also not explained in chapter 10.

[Response: I pointed out above that these are independent analyses. Since there is some overlap in the pattern of response for aerosols only and GHGs only, there is a degeneracy in the fingerprint calculation such that it has quite a wide range of possible values for the OA and GHG contributions when calculated independently. In the attribution between ANT and NAT, there is no such degeneracy since OA and GHG (and other factors) are lumped in together, allowing for a clearer attribution to the sum, as opposed to the constituents. This actually is discussed in section 10.3.1.1.3, second paragraph, p10-20. – gavin]

To correct my mistake above. If extremely likely (>95%) is 97.5% then likely (>66%) should of course be 83% which gives 8.5% chance of equilibrium sensitivity being less than 1.5K. But this misunderstanding was the result of an IMHO unclear terminology. For some reason the experts play a guessing game and don’t want to tell you how certain they are in the range 66%-100%. For an outside observer I suppose this is equivalent to 83%. Or BTW, shouldn’t it be 66%-90%, eq. to 78% giving 11% for the lucky case of sensitivity less than 1.5K, otherwise they would have said “very likely”. Or wait, is the interval really the middle part with symmetrical ends?

Ah, I give up, I’m too stupid to understand this apparently, but then what about those poor politicians :-)

[Response: Is the confusion here simply the one-tailed vs. two-tailed assessments? For the contribution of ANT to OBS, it is one-tailed (i.e. P(x>50%)), while for sensitivity it is two-tailed P(1.5

No, it is not about the one/two tail distributions. You are a policymaker going to make an important decision about something. You want a probability from the expert to put in your risk analysis. You ask an expert how sure he/she is. You get the answer that the consequence is “likely” (defined as 66%-100%). But the expert’s fuzziness in knowing how certain he/she is is meaningless to your rational decision. So you have to decide if the best guess is:

1. 83% (modelling the expert fuzziness as symmetrical)

2. 78% (taking into account that the expert would have said “very likely” (or stronger) in the case >90% was in the possible range.)

Technically perhaps you could integrate symmetrically over 66%-90% in your risk analysis but that seems a bit weird.

[Response: Not really, that’s what you’d do in a Bayesian analysis. If you have a consequence that is a function of a parameter (which is uncertain), the most sensible thing to do is integrate over all the possible values (i.e. using the full distribution), and calculate the distribution of consequences. But attribution as we are discussing here doesn’t have any specific policy implication mainly because this is about past changes. – gavin]
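As a minimal sketch of what integrating over the full distribution means in practice, the example below compares plugging in a single best guess with integrating a consequence over the whole parameter distribution. Everything in it is hypothetical: the Normal(3, 1) parameter, the quadratic `consequence` function and the integration settings are made up for illustration and come from neither the IPCC reports nor this discussion. For brevity it computes only the expected consequence, not the full distribution of consequences.

```python
from statistics import NormalDist

# Hypothetical setup: an uncertain parameter s ~ Normal(3, 1) and a made-up
# nonlinear consequence function. Neither comes from the IPCC reports.
param = NormalDist(3.0, 1.0)


def consequence(s):
    """Made-up nonlinear consequence of the uncertain parameter."""
    return s ** 2


# Midpoint-rule integration of consequence(s) * pdf(s) over +/-8 sd.
N = 20000
lower = param.mean - 8 * param.stdev
upper = param.mean + 8 * param.stdev
step = (upper - lower) / N
expected = step * sum(
    consequence(lower + (i + 0.5) * step) * param.pdf(lower + (i + 0.5) * step)
    for i in range(N)
)

at_best_guess = consequence(param.mean)  # single-number shortcut

print(f"consequence at best guess: {at_best_guess:.2f}")
print(f"expected consequence over the distribution: {expected:.2f}")
```

Because the consequence is nonlinear, the two numbers differ, which is the reason for carrying the whole distribution through the risk analysis instead of collapsing it to a single probability.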

Dear Gavin and the RC team. RC is my personal #1 first-stop resource about Climate Change, and I totally appreciate and value the effort all of you put into this, and understand it is a voluntary unpaid effort. I have been coming here since circa 2006?, at least monthly for a peek at what’s your latest info, or when something controversial appears in the Media then I come here immediately to get the “good oil”. I’m a lurker who may have only posted something here 3 times, and a couple of emails direct which never got a reply … which is fine. I’m not a Uni grad, but sharp enough to sit around the top 5% “intelligence band” testing, have run businesses with t/o above $50 million per annum, and total staff of up to 900. Not bragging, simply saying I am at least a little (?) above average with 56 years of life experience. I have kept coming here because you all, including those that make comments to Posts, really are the salt of the earth, and when I can I share your site/posts with others I know regularly.

I wish to simply make a few basic comments about your “communication” here, and humbly suggest you may benefit from a team meeting at a local tavern to review whether or not RC Posts are still “fit for purpose” .. or even if the “purpose” has changed over recent years?

On your About page RC states:

“RealClimate is a commentary site on climate science by working climate scientists for the interested public and journalists. We aim to provide a quick response to developing stories and provide the context sometimes missing in mainstream commentary.”

Gavin, I fit into the “interested public” category yet I believe I am ten times sharper than a Journalist or most of the politicians that run our lives. I got about half way through this post before you totally lost me. When I skimmed down to your responses to “comments” I was clueless in Seattle. This is happening more and more and thus I wonder is it me getting older, or are you guys increasingly discussing things on RC at a high scientific level, as if you are actually in a science Lab speaking with your peers as opposed to the “public & journalists”? I simply cannot keep up with the “jargon” anymore, and I am not qualified to learn by heart every single acronym/term/phrase used in the IPCC Report, but I did read the entire 2007 v4 report myself. I do “get” the issues about CC & GHG and have a good understanding about the complexities and the bottom line, and accept the uncertainties and why they exist, plus recognise that the new info in the last few years has been astounding work by all in this field. Sheer brilliance!

JohnL above is clearly more aware of what you’re talking about than I am, and recently I can see there are many participants having really good meaningful discussions here. I totally respect that .. and do not intend to tell you guys what to do or how to do it. I do take the time to educate myself about “terms” used but have never ever heard of one-tailed or two-tailed before, nor some other terms, and I am a regular user of your service. Honestly though your “science/jargon” speak is getting too much for me, and I simply wanted to at least let you know before I give up entirely on RC.

If you still feel that your purpose is directed at the “public and journalists” may I suggest you use your best recent POST from RC, go visit http://theconversation.com/au and submit for publication, and then see what kind of responses you get from what is mainly academic level readers from all walks of life, and then ask yourself what % of readers understood what you said/meant.

No doubt this comment is way too long .. sorry about that. I wish you all well. Sean

With all respect to the learned authors, that is breathtakingly ugly prose made worse in the update: passive construction; unnecessary modifiers (“observed”); meaningless modifiers (“very”, “extremely” *); three words where a simple one works (“most”); jargon terms (I suppose “human-caused” would be just too Hansen?); technically difficult comparisons (“best estimate … similar to”).

It’s not necessary to write ugly, unintelligible prose … just because it’s a technical report.

(* Sure, defined elsewhere, but essentially meaningless on a straight read. And unfortunately now ubiquitous in IPCC products.)

Good on you that you are devoting a whole thread to the topic I questioned in my comment: https://www.realclimate.org/index.php/archives/2013/10/the-evolution-of-radiative-forcing-bar-charts/comment-page-1/#comment-416516 This issue really stands out in the WG1 AR5 final report, although 2216 pages can easily overwhelm.

One thing that puzzles me is that while the error bar on GHGs is still huge, people like Dana Nuccitelli keep on hammering that “the science is settled”. Not to mention (Chinese?) aerosols, deep ocean heat or El Nino. This sounds unscientific to me.

[Response: Many things are well established, and yet nothing has a zero error bar. Our thoughts on Unsettled Science are worth going back to perhaps. – gavin]

Gavin and all, I’m sure the options of communicating with policymakers have been studied, but I do feel, like a couple of others, that the keyword probability statements are not communicating the proper confidence on one hand, and risk on the other. I’m pretty sure the nuances of these keywords are lost entirely on media and the public.

So, I wonder if there might not be another equivalent terminology, perhaps not wagers, but maybe projected returns on $10,000 invested against the several possible futures.

As far as “ugly prose” goes, readership needs to realize that IPCC is not only trying to be precise, they are trying to get agreement from a large number of people in a big organization, not all scientists. Doing that will “take the edge off” almost any writing!

Useful post, thanks.

One thing confuses me. You wrote:

“Interestingly, the attribution of most of the trend to GHGs alone would still remain very likely (as in AR4); I estimate a roughly 7% probability that it would account for less than half the OBS trend.”

OTOH, you also wrote that “the probability of the ANT trend being less than half the OBS trend is less than 0.02%”

Since aerosol forcing (i.e. OA) is almost certainly negative (i.e. cooling), GHG contribution should exceed the net anthropogenic (ANT) contribution. How then can you calculate a (much) higher probability for GHG<50% than for ANT<50%?

Is this purely a consequence of the much smaller error bars on ANT as compared to GHG? (cf your in-line response to Clive Best, #2). That would strike me as very odd though. These error ranges are a result of how one looks at the issue. Top-down (delineating the total response to different contributions) this may make sense, but bottom-up (looking at individual contributions) one would get a much different result I'd wager. Based on the estimates and associated uncertainties of the different forcings (GHG and aerosols), I'm much more confident that GHG by themselves exceed 50% of the warming than that net anthro exceeds 50%. Am I mistaken in that or does this indeed depend on the framework within which these error bars are quantified?

[Response: I agree it’s odd at first glance, but having discussed it with a couple of the IPCC authors, it is clearer. The issue is the degeneracy of the OA and GHG patterns looked at separately, i.e. it is hard to distinguish statistically between x*GHG+y*OA and (x+delta)*GHG+(y-delta)*OA (somewhat simplified). So the absolute error on the GHG and OA terms is larger than the error in the ANT attribution. It is the (small) chance that OA could be positive that makes the conclusion weaker, while the chance that ANT is negative is much smaller. (I think). – gavin]
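The degeneracy can be illustrated with a toy least-squares problem. This is only a sketch under stated assumptions, not the optimal fingerprinting method used in the attribution studies: the two five-point "patterns" are invented, the noise variance is set to one, and the combined ANT scaling is represented simply by the sum of the two regression coefficients.

```python
# Two hypothetical response patterns that are nearly, but not exactly, alike.
ghg = [1.0, 2.0, 3.0, 4.0, 5.0]
oa = [1.1, 1.9, 3.1, 3.9, 5.1]


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


# Entries of X^T X for the regression obs = x*ghg + y*oa + noise.
gg, aa, ga = dot(ghg, ghg), dot(oa, oa), dot(ghg, oa)
det = gg * aa - ga * ga  # small, because the patterns are nearly collinear

# Coefficient covariance is noise_variance * inverse(X^T X); with unit
# noise variance the entries of the 2x2 inverse are:
var_x = aa / det    # variance of the GHG scaling
var_y = gg / det    # variance of the OA scaling
cov_xy = -ga / det  # strongly negative: errors in x and y offset each other

# Variance of the combined scaling x + y.
var_sum = var_x + var_y + 2.0 * cov_xy

print(f"var(x)={var_x:.2f}  var(y)={var_y:.2f}  var(x+y)={var_sum:.4f}")
```

In this toy case each coefficient is very poorly constrained on its own, while their sum is tightly constrained, because the errors in x and y are almost perfectly anti-correlated. That is also why adding the GHG and OA error bars in quadrature overstates the error on the combined term.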

Quoting Research Paper: The Tragedy of the Risk-Perception Commons: Culture Conflict, Rationality Conflict, and Climate Change. [CAPS for emphasis]

The conventional explanation for controversy over climate change emphasizes impediments to public understanding: limited popular knowledge of science, the inability of ordinary citizens to assess technical information, and the resulting widespread use of unreliable cognitive heuristics to assess risk.

A large survey of U.S. adults (N = 1540) found little support for this account. On the whole, the MOST scientifically literate and numerate subjects were slightly LESS likely, not more, to see climate change as a serious threat than the LEAST scientifically literate and numerate ones.

More importantly, GREATER scientific literacy and numeracy were associated with GREATER cultural polarization: respondents predisposed by their VALUES to dismiss climate change evidence became MORE dismissive, and those predisposed by their VALUES to credit such evidence MORE concerned, as science literacy and numeracy INCREASED.

We suggest that this evidence reflects a conflict between two levels of rationality: the individual level, which is characterized by the citizens’ effective use of their knowledge and reasoning capacities to form risk perceptions that express their cultural commitments; and the collective level, which is characterized by citizens’ FAILURE to converge on THE BEST available scientific EVIDENCE on how to promote their common welfare.

——

This conflict between individual and collective rationality is not inevitable. It occurs only because of contingent, mutable, and fortunately rare conditions that make one SET OF BELIEFS about risk congenial to one cultural group and an opposing set congenial to another.

NEUTRALIZE THESE conditions, we will argue, and the conflict between the individual and collective levels of rationality is resolved.

Perfecting our knowledge of HOW to achieve this state should be A PRIMARY AIM OF THE SCIENCE OF SCIENCE COMMUNICATION.

http://www.climateaccess.org/sites/default/files/Kahan_Tragedy%20of%20the%20Risk-Perception%20Commons.pdf

Oooh, Oooh, I have to use that word in a sentence. OK…. How about… Miss: Your mis-specification of our Missouri and Mississippi mistletoe missives is causing mayhem, mischief and misery in our matrimonial ministries.

Well, despite those difficulties, the science does seem to be settling in, like a furrow in a queen-size bed.

> most scientifically literate and numerate subjects were …

My guess is:

Those predisposed to believing climate change who are less scientifically literate, fall for scares about climate changing fast in improbable ways.

Those predisposed to _disbelieve_ who are less scientifically literate, fall for scares about socialists stealing their freedoms in fast improbable ways.

I can’t help feeling that there is a certain amount of circular argument going on here.

“Over-estimates of model sensitivity would be accounted for in the methodology (via a scaling factor of less than one), and indeed, a small over-estimate (by about 10%) is already factored in.”

It seems clear that each model is tuned to match past temperature trends through individual adjustments to external forcings, feedbacks and internal variability. Then the results from these tuned models are re-presented (via Figure 2 above) as giving strong evidence that nearly all observed warming is anthropogenic as predicted. How could it be anything else?

[Response: Your premise is not true, and so your conclusions do not follow. Despite endless repetition of these claims, models are *not* tuned on the trends over the 20th Century. They just aren’t. And in this calculation it wouldn’t even be relevant in any case, because the fingerprinting is done without reference to the scale of the response – just the pattern. That the scaling is close to 1 for the models is actually an independent validation of the model sensitivity. – gavin]

Despite this, we then read in chapter 9 that:

“Almost all CMIP5 historical simulations do not reproduce the observed recent warming hiatus. There is medium confidence that the GMST trend difference between models and observations during 1998–2012 is to a substantial degree caused by internal variability, with possible contributions from forcing error and some CMIP5 models overestimating the response to increasing greenhouse-gas forcing.”

The AR5 explanation for the hiatus as given in chapter 9 is basically that about half of the pause is natural – a small reduction in TSI and more aerosols from volcanoes, while the other half is unknown – including perhaps oversensitivity in models.

[Response: When people say ‘half/half’ it is usually a sign that the analysis has not yet been fully worked out (which is the case here). – gavin]

Then on page 9.5 we read: “There is very high confidence that the primary factor contributing to the spread in equilibrium climate sensitivity continues to be the cloud feedback.”

[Response: This is a separate issue and the statement is completely true. – gavin]

How much of this inter-model variability has actually all been hidden under the ANT term ?

[Response: The cloud feedback variation (and the consequent variation in TCR) goes into the analysis by producing different scalings for the different model responses. The mean scaling is about 0.9 (though there is presumably a spread), and that feeds directly into the uncertainty in the attribution. If all the models had the same sensitivity, the errors would be less. – gavin]

Thanks Gavin. It does appear, also from what you say, to depend on how one looks at the issue. It’s like in curve fitting, the influence from ANT and OA cannot be properly disentangled, so the combined effect is more constrained than the individual effect. But this seems mainly a consequence of the fact that the combined effect ‘must’ conform to the observed temp change (since NAT and INT.VAR are so small). It is somewhat reminiscent of the anti-correlation between aerosol forcing and sensitivity (in CMIP3).

Looking at the physical contribution of the individual components, I find it physically much more plausible to assign a large error bar to the aerosol forcing, which is carried forward to a large error bar in the net forcing (larger than in GHG) and thus in the net temperature effect. As was e.g. shown in AR4 fig 2.20 (http://www.ipcc.ch/publications_and_data/ar4/wg1/en/figure-2-20.html ). That is for the forcing though. But forcings are quasi-linearly related to the temp effect via the (close to constant) sensitivity, so the picture shouldn’t be materially different from a physics point of view. The only reason that for the temperature effect this would look different (as in your fig 2 above) is in (what I would call) a curve-fitting context.

Just to clarify, I meant referring to fig 2.20 B at the bottom of the link http://www.ipcc.ch/publications_and_data/ar4/wg1/en/figure-2-20.html

#10, #12:

Yes, what is or is not “rational” is a subjective judgment.

Elsewhere somebody asked: “How could adding 1 molecule in 10,000 cause several degrees of warming?”

And the answer is simply: Yes it is hard to believe, yes it defies common sense, but that’s just how it is! You can’t be second guessing the work of professionals with common sense arguments (common sense is based on everyday experience and climate change is well beyond its reach). You have to trust the scientists who carefully analyzed this question.

Ok but what if the skeptic does not want to trust the scientists? He has two choices: He can either mistrust a) the scientists’ character or b) the scientists’ competence.

a) That means the scientists are a corrupt bunch of liars who make things up because they have some sinister hidden agenda. And because so many scientists are involved it has to be a world-wide conspiracy involving thousands. And the government …

b) Do scientists make mistakes? Sure they do. But again, that thousands of scientists would make the same mistake seems a bit unlikely. And the skeptic keeps anxiously waiting for new research to finally yield the “truth” that he is so desperately hoping and praying for.

I can see from comments on various blog sites that climate skepticism emerges from second guessing based on limited knowledge and then evolves into wishful thinking, and paranoid conspiracy theories. And yes that seems perfectly rational to the skeptics.

For Clive Best: You’ve been fed a line and swallowed it.

Remember the “trick” stories?

“Tuning” stories are often the same sort of deceit.

You fell for it.

“Tuning” means fitting the physics, not matching the past.

Read past the title of this post:

Paper review: ‘Tuning the climate of a global model’

Citation: Mauritsen, T., et al. (2012), Tuning the climate of a global model, J. Adv. Model. Earth Syst., 4, M00A01, doi:10.1029/2012MS000154.

Sean quoted: “The conventional explanation for controversy over climate change emphasizes impediments to public understanding: limited popular knowledge of science, the inability of ordinary citizens to assess technical information, and the resulting widespread use of unreliable cognitive heuristics to assess risk.”

Oh, please. What is conspicuously missing from that “conventional explanation”?

How about:

Can we stop pretending that “the public” has spent 30 years listening to scientists and are just “not getting it” because of their “limitations” and “inabilities” and “unreliable cognitive heuristics”?

The reality is that soft-spoken scientists have been drowned out by screaming paid stooges with megaphones. The problem isn’t that the public can’t understand you — they simply cannot hear you at all through the noise. And whispering more articulately is not going to help.

PS – my mistake.

Clive Best has not been fed a line and swallowed it.

He’s among those actively feeding that line to the public:

https://duckduckgo.com/?q=%22clive+best%22+tuning+models

Thanks for that clarification Hank,

You say “Tuning” means fitting the physics, not matching the past. I agree that this is an honest procedure if normalization is done just once and then fixed in time. I also accept that such models indeed reproduce well the observed warming 1950 – 2000.

Maybe I am just being thick here – but please can you explain to me then why these normalized CMIP5 models end up with such different external forcings as shown for example in Fig 10.1 d) in AR5 ?

[Response: These are the effective forcings, not the climate responses. And the variations in the effective forcings are mainly a function of the aerosol modules, the base climatology and how the indirect effects are parameterised. This diagnostic is driven ultimately by the aerosol emission inventories, but the distribution of aerosols and their effective forcing is a complicated function of the elements I listed above. They are different in different models because the aerosol models, base climatology (including winds and rainfall rates) and interactions with clouds are differently parameterised. – gavin]

Does this not reflect variations in climate sensitivity of the underlying physics between models ?

[Response: No. This is not the temperature response, though it does reflect differences in some aspects of the underlying physics (though not in any trivial way). – gavin]

If so how do we make progress to determine the optimum model ? Is it even possible to have one standard climate model ?

[Response: We don’t. And there isn’t. There is inherent uncertainty in modelling the system with finite computational capacity and imperfect theoretical understanding and we need to sample that. The CMIP ensemble is not a perfect design for doing so, but it does a reasonable job. Making predictions should be a function of those model simulations but also our ability to correct for biases, adjust for incompleteness and weight for skill where we can. Model variation will grow in the future as we sample more of that real uncertainty, but with better ‘out-of-sample’ tests and deeper understanding, predictions (and projections) may well get better. – gavin]

I noticed that the IPCC plans to finalize WG1 sometime in Jan 2014. But not after they edit the science in WG1 to match the political statements in the SPM. Do you deny this and if not, do you condemn it?

[Response: Oh please. Let’s look at the reality, rather than the paranoid fantasy. The changes that need to be made (on top of basic copy-editing) are actually already publicly available here. They consist of extremely tedious details on which time periods to use in the summary statements, additional sentences of clarification, and consistency in terminology across the report and SPM etc. The whole thing is completely transparent and has nothing whatsoever to do with some imagined ‘political statements’. It was a summary *for* policy-makers, not a summary *by* politicians. So, to answer the question, yes, I do deny this, and no, I do not condemn it. – gavin]

Re Clive Best – there is a range in climate sensitivity among models. Other lines of evidence are available – they have ranges too, but they at least line up enough to give greater confidence in the results. I don’t think we could ever know climate sensitivity to the nearest 0.0001 K – that seems fairly obvious. So it’s just a matter of adjusting and narrowing the range with advancements in the science. (The obvious standard climate model has a mass of ~ 6 trillion trillion kg – although much of that is structural support in the short term; the RAM and … etc. is probably about 5.1 E18 kg + 1.4 E21 kg = ~ 1.4 E21 kg – unfortunately it only stores some of the output data, and the memory is sometimes dumped if not degraded.)

see

http://www.skepticalscience.com/Estimating-climate-sensitivity-from-3-million-years-ago.html

https://www.skepticalscience.com/hansen-and-sato-2012-climate-sensitivity.html

https://www.realclimate.org/index.php/archives/2008/11/faq-on-climate-models/

https://www.realclimate.org/index.php/archives/2009/01/faq-on-climate-models-part-ii/

re 16 Retrograde Orbit ““How could adding 1 molecule in 10,000 cause several degrees of warming?”

And the answer is simply: Yes it is hard to believe,”

– different people have very different common sense instincts.

A sheet of Aluminum foil 0.016 mm thick (http://en.wikipedia.org/wiki/Aluminium_foil) (a very familiar object to most in the U.S.) wrapped around the Earth would have a mass of roughly 1/200,000 of the atmosphere (aluminium is roughly 3 times the density of water, thus ~ 0.048 kg/m^2, vs ~ 10,000 kg air/m^2; plus a small fudge factor for increased area assuming it’s not too high up) – molar ratio would be similar (http://en.wikipedia.org/wiki/Aluminium ) –

1 part in 200,000 would be (more than – I’m guessing much more, how thin would Al foil have to be before you could start to see through it?) sufficient to cause a mass extinction greater than the K/T or even end-Permian, as well as disrupt communications with ISS!

re my “(The obvious standard climate model has a mass of ~ 6 trillion trillion kg – although much of that is structural support in the short term; the RAM and … etc. is probably about 5.1 E18 kg + 1.4 E21 kg = ~ 1.4 E21 kg – unfortunately it only stores some of the output data, and the memory is sometimes dumped if not degraded.)” (see original for links/sources) … and the parameters are not easily adjusted to fit conditions on/for other planets, and, until we install the flux capacitor, it only runs in real time.

To bob (#21 above):

What is happening between the AR5 WG I release a short while ago (yes, it was released on a Monday, recently) and the prospective final release in January 2014 are things like moving the figures into the text (from the bottom where they have been thrown together, for now) and doing things like typesetting to allow the text to flow around diagrams, if necessary, etc. Your conclusion by fiat, that the intent is to “match … political statements” is simply false.

The IPCC WG I team is actually doing things in the best way possible, focusing first and foremost on the accuracy and content, getting that published as soon as that work is complete, and deferring the typesetting issues in the final version to a later phase. Releasing ALL of their work product as soon as it is complete, but before typesetting work, is actually MUCH BETTER and MUCH MORE GENEROUS of them than otherwise.

They could have waited until the typesetting was done and over and produced only one version. But they chose to release two — pre- and post- typesetting. I’m sure that decision will cost them something, because no good turn goes unpunished these days and I’m sure there will be people saying “well, which is it? this one or that one? Obviously, they don’t know what they are doing,” when in fact they are the SAME. Just one of them is coming out before they get all the pretty pictures typeset into their final locations.

I, for one, am darned glad that they took the chance and the risks of people like you mis-characterizing their motives and decided to put out the work product sooner than later. I am actually proud of them for that decision. It was the RIGHT DECISION to make.

@16- Retrograde Orbit

The problem is when you do explain about 1 molecule in 10,000 they simply switch off. They weren’t interested in an answer in the first place. The “that little material can’t have an effect” is an excuse to stop thinking and stop conversation. It isn’t an invitation. You need to keep in mind when ‘debating’ these people that you’re not going to convince them. Your audience is the silent observers.

Let me share one gambit I use on the dilute gas issue. I ask “do you know what ozone does in the atmosphere?” (It blocks UV radiation from the sun- most people know this).

“do you know the concentration of Ozone in the stratosphere that blocks the UV?” (generally no)

It’s 4-8 ppm (50-100 times less than CO2)

Oh!

So actually 400 ppm of CO2 is a whole lot of material in the atmosphere as far as blocking radiation goes.

#21 Bob (the denier)

Just once I’d like to see one of these drive-bys reply with the appropriate response. To wit:

“Sorry, I see from Gavin’s response that I bought some denialist crap hook line and sinker and simply didn’t have the intelligence to realize it for the crap that it was. Unfortunately, I’ll probably be back with more insanity from denialist websites because I’m a gullible sucker easily taken in.”

Obviously they lack decent tools for self analysis.

#25–Good ‘gambit’ on the dilute gas issue, Dave. I’ll try it.

@Dave123 #25:

Yes, a good one to remember. That’s joining my repertoire. I also like this stock fav from Barton Paul Levenson:

About 100 years ago there was 4kg of CO2 above every square metre of the Earth’s surface. Now there is 6kg.
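For anyone who wants to check figures of that kind, here is a minimal sketch of the arithmetic. It assumes standard sea-level pressure and gravity, a well-mixed atmosphere, and textbook molar masses; none of those values come from the comment itself, and the 300 and 400 ppm inputs are only illustrative stand-ins for "about 100 years ago" and "now".

```python
# Rough column mass of CO2 above one square metre of surface.
# Assumed values (not from the comment): standard sea-level pressure,
# standard gravity, and molar masses of dry air and CO2.
SURFACE_PRESSURE_PA = 101_325.0
GRAVITY_M_S2 = 9.80665
M_AIR_G_MOL = 28.97
M_CO2_G_MOL = 44.01


def co2_column_mass(ppmv):
    """Return kg of CO2 per square metre for a well-mixed mole fraction in ppmv."""
    air_column_kg_m2 = SURFACE_PRESSURE_PA / GRAVITY_M_S2  # about 10,300 kg/m^2
    mass_fraction = ppmv * 1e-6 * (M_CO2_G_MOL / M_AIR_G_MOL)
    return air_column_kg_m2 * mass_fraction


# Illustrative concentrations: roughly a century ago, and roughly today.
for ppmv in (300, 400):
    print(f"{ppmv} ppm -> {co2_column_mass(ppmv):.1f} kg/m^2")
```

Under those assumptions the two results land between 4 and 5 kg and a little above 6 kg per square metre, which is in line with the rounded numbers quoted above.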

#25 Dave 123

I don’t think they are not interested in an answer. If you were able to offer them a common sense answer (and your gambit is a good one) they would switch sides. You have to appeal to their common sense, and that’s exactly what you do. Unfortunately the anti-climate change blogs squirm with (bogus!) common sense answers as well.

Many successful, educated people use common sense a lot and it is the reason they have been successful. Now you are telling them: Forget common sense, you have to trust the experts. That’s a hard sell.

@Kevin 26

You can’t really convince people with words – you need maths and simulation. I convinced myself that the CO2 greenhouse effect was significant by working through the physics in reverse. I considered an imaginary situation where a flux of 15 micron band IR photons radiate down to earth from space. That way it is fairly easy to calculate using HITRAN, the altitude where more than half of them are absorbed by CO2 molecules in the atmosphere. This then gives you the effective altitude where thermal IR photons from the surface transfer radiatively to space. The temperature of that layer defines the reduction in radiative flux compared to zero CO2. This gives an upper limit on the CO2 greenhouse effect because it ignores H2O. details here

Another objective was to derive the logarithmic dependency of radiative forcing with increasing CO2 concentrations
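As a point of comparison for that logarithmic dependence, the sketch below uses the widely quoted simplified fit of 5.35 × ln(C/C0) W/m². This is not the line-by-line HITRAN calculation described in the comment, and the coefficient and the 280 ppm baseline are assumptions brought in for illustration only.

```python
import math

# Coefficient of the commonly used simplified fit, in W/m^2.
# This is an assumption for illustration, not a value from the comment.
ALPHA_W_M2 = 5.35


def co2_forcing(c_ppm, c0_ppm=280.0):
    """Approximate radiative forcing (W/m^2) for a change from c0_ppm to c_ppm."""
    return ALPHA_W_M2 * math.log(c_ppm / c0_ppm)


# A logarithmic law means every doubling adds the same forcing.
first_doubling = co2_forcing(560.0, 280.0)
second_doubling = co2_forcing(1120.0, 560.0)
print(f"280 -> 560 ppm:  {first_doubling:.2f} W/m^2")
print(f"560 -> 1120 ppm: {second_doubling:.2f} W/m^2")
```

The two doublings give the same forcing, which is the defining feature of a logarithmic dependence; a bottom-up derivation should recover roughly that behaviour over this range.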

So my “skepticism” is really just about the feedbacks.

Re 29 Clive Best – it sounds like you’ve got a good understanding of atmospheric radiation, esp. equating the depth of penetration of radiation from space with the depth from which photons emitted to space could be emitted. (I use ‘could’ because of course photon emission is also proportional (nonlinearly, esp. at shorter wavelengths) to temperature. Emission weighting function (the centroid of which could be considered the effective altitude) is more precisely proportional to absorption of incident radiation from the opposite direction (assuming LTE, no Raman, Compton, etc. types of scattering that change photon energy).)

One suggestion: while effective altitude is a very useful concept, nonlinearities in the temperature profile over height mean that taking T(effective altitude of emission to space) = T(effective temperature of radiation emitted to space) is at best an approximation. The altitude for which T = T of the radiation can generally be a bit different, although I don’t know quantitatively what the difference would be in this case.

(or maybe you addressed this in your links? Haven’t checked)

“ Emission weighting function (the centroid of which could be considered the effective altitude) is more precisely proportional to absorption of incident radiation from the opposite direction ” for any given frequency (and polarization, if it matters) and direction (and location and time) (for the source of radiant intensity (flux per unit area per unit solid angle));

I think an emission weighting function could be defined for all directions over a hemisphere (for the source of a flux per unit area), though I don’t know if the term is ever used that way.

( emission weighting function (I’ll use EWF for short) for intensity in a given direction used here: http://www.geo.mtu.edu/~scarn/teaching/GE4250/AtmoEmission_lecture_slides.pdf

PS for radiation upward to space, EWFs shown in that link over z (geometric height) due to relatively well-mixed gases (no cloud contributions, etc.) have a peak at some height and generally go gradually to zero above and below (unless they intersect the ground, in which case a second peak will generally occur at the ground due to the generally large increase in optical thickness per unit geometric length there). If a vertical coordinate that is or tends to be proportional to mass, e.g. p (pressure) is used instead, then, aside from variations in line strength and broadening with height (and with scattering playing a minor role), the peak would appear instead at TOA (top of atmosphere) and have exponential decay downward (except for whatever is left over by the time the surface is reached) (I’m not sure offhand how scattering would affect that; luckily Earth’s greenhouse effect is mostly from emission/absorption). Using optical thickness itself as a vertical coordinate would give the same, precisely exponential EWF in all cases (at least without scattering), even where penetrating the ground/ocean (assuming no reflection/scattering), but of course that coordinate system would have to shift with frequency, direction (that part’s simple, though), and conditions. Also, the actual lines of sight are bent in these alternative coordinate systems, though that has little impact for conditions that vary only slowly horizontally (e.g. layer clouds as opposed to cumulonimbus).

Aside from contributions from the surface, or where the atmospheric optical thickness is less than 1, the centroid will be at unit optical depth (which is below the level where half of photons from above, in that direction, would have been absorbed (was your use of 1/2 instead of 1/e intended to compensate for the radiation not generally being directed vertically?)). A convective temperature profile is generally curved in the mass-proportional coordinates, so, even setting aside stratospheric T, in that case it is more obvious that the weighted average T will tend to be different than it is at the centroid. The Planck function is also nonlinearly related to T (esp. at relatively shorter wavelengths – it approaches a linear proportionality at relatively long wavelengths), so the brightness temperature of the radiation will also be a bit different from the weighted average T (it would be interesting to depict T profiles and lapse rates in terms of their Planck functions). These two nonlinearities can cancel somewhat, at least in the troposphere.)
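The relation between the centroid and the half-absorption level can be checked numerically. This is a minimal sketch assuming the idealised case described above: a purely exponential EWF in optical-depth coordinates, no scattering, no surface contribution, and an optically thick atmosphere. The function names and the integration settings are hypothetical choices for illustration.

```python
import math


def ewf(tau):
    """Idealised emission weighting function in optical-depth coordinates."""
    return math.exp(-tau)


def centroid(tau_max=50.0, n=200_000):
    """Midpoint-rule estimate of the EWF-weighted mean optical depth."""
    d = tau_max / n
    num = 0.0
    den = 0.0
    for i in range(n):
        t = (i + 0.5) * d
        w = ewf(t)
        num += t * w * d
        den += w * d
    return num / den


tau_centroid = centroid()                         # weighted centre of the EWF
tau_half = math.log(2.0)                          # depth where half of incident photons are absorbed
absorbed_at_centroid = 1.0 - math.exp(-tau_centroid)

print(f"centroid optical depth: {tau_centroid:.3f}")
print(f"half-absorption depth:  {tau_half:.3f}")
print(f"fraction absorbed by the centroid: {absorbed_at_centroid:.3f}")
```

In this idealised case the half-absorption level sits at a smaller optical depth (higher in the atmosphere) than the centroid, and the fraction absorbed by the time the centroid is reached is 1 - 1/e rather than 1/2.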

In the above, I was using centroid in the sense of the weighted center of the EWF; but this is more like a center of mass (except it’s not mass in this case). In usage I’m familiar with, centroid is the center of some space – a line or curve, surface, or volume, weighted only by the space itself (length, area, volume). Since EWF is proportional to the density of the fraction of emission cross section which is visible (as in not blocked by absorption), the weighted center of an EWF is in a sense a centroid of an area (the area of visible emission cross section), but that’s a bit of a stretch.

Maybe I should just say center of an EWF, with the exact meaning implied by the W in EWF.

… OTOH, it is the centroid of the area (or volume or hypervolume) of the graph of the EWF graphed as a function over the relevant coordinate(s). Okay, maybe I’ll stick with centroid.

… I only skimmed your (Clive Best) links, but it looks pretty good.

(PS a lot of the ReCAPTCHAs lately have been pairs of numbers. I’ve been enjoying it – not too hard to read.)

“Using optical thickness itself as a vertical coordinate would give the same, precisely exponential EWF in all cases (at least without scattering)” … oops, forgot about refraction. Fortunately, the surface material is generally close to isothermal over the necessary depth in this part of the spectrum (right? H2O is pretty opaque to LW (longwave) radiation; forest canopies … well, close enough I suppose; out of time now anyway…) so it doesn’t matter so much…

@ 18 SecularAlarmist “The reality is that soft-spoken scientists have been drowned out by screaming paid stooges with megaphones. The problem isn’t that the public can’t understand you — they simply cannot hear you at all through the noise. And whispering more articulately is not going to help.”

I agree >95% with your comment above and your basic response. I understand your frustration because I share in it. As Climate science is complex so is “communication”. Both are a human endeavor and anyone who’s been married long enough understands that even one on one communication is a minefield and not simple nor straightforward.

Yes CO2 is critically important yet so are the nuances of albedo, cloud dynamics, ENSO and OHC which complicate the appearance of accuracy/certainty. Nothing exists in a vacuum iow, and multiple drivers and moderators have different effects on the whole. It’s not an either/or option.

Heartland Inst. et al is only one piece of the jigsaw puzzle here imho. In the 1980’s the most outspoken public world figures who were pro-climate change concern were Thatcher and Reagan. One can’t get more economically right-wing than those two, and yet in 2013 the situation has kind of reversed.

I’m all for more rational & knowledgeable “softly spoken” academics and scientists to replace the US president and all of Congress but know that isn’t going to happen. What you said about “screaming paid stooges” is true. I believe what the Paper was saying is also true. Blending of the two truths plus other aspects and understanding the dynamics as “whole unit” is far closer to representing the “reality” of the “problem” I was seeking to point to.

No criticism of RC was intended, nor was I trying to suggest the issue was simple nor that there was a one-sided solution. Sounds a lot like the complex interactions in understanding Climate Science. Thx for the comment though. I do get it (I think). The Public do vote. If the Public don’t get it (no matter the cause/drivers of this) then everything else being done is a waste of time. Gavin et al may as well go fishing and spend time with his family and friends. That’s how I see it at least, but I am no expert. Sean

re http://clivebest.com/blog/?p=4597

“Below the emission height, radiation in CO2 bands is in thermal equilibrium with the surrounding atmosphere. This is usually called Local Thermodynamic Equilibrium (LTE). ”

LTE actually refers to the condition where the statistics of a population of molecules/atoms/ions/… within a small volume fits an equilibrium distribution, in velocity and energy, including equipartition among translational, rotational, vibrational, etc. states – except where the ‘freezing out’ by the quantum nature of (some of) those states occurs; etc. i.e. it is the distribution approached over time as random collisions continue to redistribute the energy (and momentum), while the system (small volume’s contents) is isolated. Such a distribution allows such a system to be neatly described as having a particular temperature, and all that that entails.

Absorption and emission of photons can disturb a system from LTE, because, for example, the excited molecules/atoms/electrons will tend to emit photons and lose energy, and subpopulations (CO2 vs O2) will tend to gain or lose energy at different rates. But if collisions are frequent enough, energy gains and losses are redistributed sufficiently rapidly to minimize such deviations from LTE, so that the CO2 is at about the same temperature as the O2, etc, and the approximate temperature can approximately determine the fraction of molecules in any particular state, so that emission cross sections (approximately)= corresponding absorption cross sections.

LTE is not required to be satisfied below the effective emission altitude, nor are significant deviations from LTE required above the effective emission altitude; instead it is determined by the collision rate relative to other processes (including the totality of photon emission and absorption across the whole spectrum; the LTE of the atmospheric material is not a property of the photons going through it or interacting with it; it doesn’t vary with wavelength (or direction of radiation), whereas the effective emission altitude, or for that matter, the EWF, does); collisions are more infrequent with height, so the LTE approximation starts to have significant errors at z = … don’t know the exact number, but I think it’s somewhere at or above the stratopause.

@Patrick 027

Thanks for the useful comments. Yes emission at different wavelengths must vary non-linearly with temperature. On Venus I think it is the 4 micron band that dominates because of the large increase in surface temperature. Yes I agree I have probably over simplified things.

Now I have a question for you or anyone else : I have often wondered why the natural level of CO2 in the atmosphere on Earth should be round about 300 ppm. Why has this value been stable for millions of years (apart from rather small variations during ice ages)? Why for example is it not 1000 ppm ?

I think the answer may lie in a delicate balance between radiation physics and photosynthesis. The atmosphere itself seems to radiate strongest in the 15 micron band with a CO2 concentration of around 300ppm. Photosynthesis needs CO2 levels > 200 ppm and temperatures not too hot. Is there some natural Gaia like feedback at work, which man has disrupted ?

[Response: The dependence of photosynthesis on CO2 levels is complex and different for C3, C4 and CAM plants. At levels around 180ppm (as during the last few glacial cycles), C4 plants (e.g. grasses) had increased competitive advantage over C3 plants (trees etc), but there were still trees around. I would hesitate to posit a grand Gaian regulatory feedback on the basis of an over-simplified view of photosynthesis. – gavin]

Clive Best – The natural level of CO2 over the past million years has probably been about 220-240ppm on average. Only the relative blips of interglacial peaks have reached 300ppm.

Uncertainty increases further into the past but mid-Pliocene (~4mya) CO2 levels are understood to have been about 400ppm and probably higher a couple of million years before that. A stable level of about 230ppm is probably “only” a feature of the past 2.5 million years of the Pleistocene.

Going back further the evidence available suggests that stable levels of about 1000ppm were pretty common during the Mesozoic and there was plenty of photosynthesis going on then.

Just for completeness, and to preempt any confusion, this post from Paul Matthews, is a typical example of what happens when people are so convinced by their prior beliefs that they stop paying attention to what is actually being done. Specifically, Matthews is confusing the estimates of radiative forcing since 1750 with a completely separate calculation of the best fits to the response for 1951-2010.

Even if the time periods were commensurate, it still wouldn’t be correct because (as explained above), the attribution statements are based on fingerprint matching of the anthropogenic pattern in toto, not the somewhat overlapping patterns for GHGs and aerosols independently.

Here is a simple example of how it works. Let’s say that models predict that the response to greenhouse gases is A+b and to aerosols is -(A+c). The “A” part is a common response to both, while the ‘b’ and ‘c’ components are smaller in magnitude and reflect the specific details of the physics and response. The aerosol pattern is negative (i.e. cooling). The total response is expected to be roughly X*(A+b)-Y*(A+c) (i.e. some factor X for the GHGs and some factor Y for the aerosols). This is equivalent to (X-Y)*A + some smaller terms. Thus if the real world pattern is d*A + e (again with ‘e’ being smaller in magnitude), an attribution study will conclude that (X-Y) ~= d. Now since ‘b’ and ‘c’ and ‘e’ are relatively small, the errors in determining X and Y independently are higher. This is completely different to the situation where you try and determine X and Y from the bottom up without going via the fingerprints (A+b or A+c) or observations (d*A + e) at all.
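To see why the net factor (X-Y) can be pinned down much better than X or Y separately, here is a toy sketch. Everything in it is hypothetical: the four-point "patterns" are made-up numbers, and the method is plain least squares with equal, uncorrelated noise, which is a deliberate simplification and not what actual detection and attribution studies do.

```python
# Made-up four-point "patterns"; none of these numbers come from any study.
A = [1.0, 2.0, 3.0, 4.0]        # common response shared by both forcings
b = [0.1, -0.1, 0.1, -0.1]      # small GHG-specific part
c = [-0.1, 0.1, 0.1, -0.1]      # small aerosol-specific part

ghg = [ai + bi for ai, bi in zip(A, b)]       # fingerprint A + b
aer = [-(ai + ci) for ai, ci in zip(A, c)]    # fingerprint -(A + c)


def dot(u, v):
    """Plain dot product of two equal-length lists."""
    return sum(ui * vi for ui, vi in zip(u, v))


# Normal-equations matrix for regressing observations on the two fingerprints,
# i.e. obs ~ X * ghg + Y * aer.
m00, m01, m11 = dot(ghg, ghg), dot(ghg, aer), dot(aer, aer)
det = m00 * m11 - m01 * m01

# Covariance of the estimated factors, in units of the noise variance
# (inverse of the 2x2 normal-equations matrix).
var_x = m11 / det
var_y = m00 / det
cov_xy = -m01 / det

# Variance of the net anthropogenic factor X - Y.
var_net = var_x + var_y - 2.0 * cov_xy

print(f"variance of X alone: {var_x:.3f}")
print(f"variance of Y alone: {var_y:.3f}")
print(f"variance of X - Y:   {var_net:.3f}")
```

Because the two fingerprints are nearly mirror images, the errors in X and Y are strongly correlated and largely cancel in the difference, so in this toy setup the variance of X-Y comes out far smaller than that of either factor alone.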

Sean wrote: “In the 1980′s the most outspoken public world figures who were pro-climate change concern were Thatcher and Reagan. One can’t get as economically right-wing than those two, and yet in 2013 the situation has kind of reversed.”

Denial of anthropogenic global warming has nothing whatsoever to do with being “economically right-wing”, or with any other economic or political ideology.

It has to do with protecting the short-term profit interests of ONE PARTICULAR INDUSTRIAL SECTOR, the fossil fuel industry. It has to do with GREED, not ideology.

It is no more “ideological” than denial of the carcinogenicity of tobacco smoke.

Yes, it’s true that the fossil fuel industry’s propaganda machine, and their accomplices in Congress, have systematically equated “burning fossil fuels” with “capitalism” and “free markets” and “liberty”, and has branded denial as a “conservative belief”.

But this is all nonsense. It is a denialist pseudo-ideology that is every bit as bogus as denialist pseudo-science. It is done simply to take advantage of the pre-existing base of the Fox News / Rush Limbaugh audience, who have been brainwashed for a generation to unquestioningly embrace whatever the so-called “right wing” media brands as “conservative”.

In effect, that audience has been developed over the years into a “cult for hire”, who will believe, say and do whatever some corporate interest pays Fox News to tell them is the “conservative” thing to believe, say and do. And the fossil fuel corporations are taking full advantage of it.

Paul,

I am not sure that stable would best describe the levels. The atmospheric concentration never really stabilized at 230 ppm, but alternated between 190 and 270, for an average value of 230 ppm. Miocene values appear to be only slightly higher, but still below 400. Even these low values did not occur until the glaciation of Antarctica began some 30-35 million years ago, dropping from previous values of over 1000.

They do not appear to be an “artifact,” but a result of the glaciation which occurred, which appears to have been repeated during previous eras.

“They do not appear to be an “artifact,” but a result of the glaciation which occurred…”

Hmm. Was that really the way the causality ran? One might suspect it was the other way ’round; at least, off the top of my head I find it much easier to imagine how dropping CO2 would cause glaciation than how glaciation would cause dropping CO2.

re Clive Best – CO2 –

… and trees now exist up to some height on mountains…

In the long term (millions of years), the chemical weathering that supplies Ca (and Mg, etc.) ions from silicates to react with CO2 to form carbonate rocks tends to speed up in response to warming (depending on geography – ideally you want mountain slopes (with Ca-rich silicates, of course) with strong mechanical erosion – it can involve glaciers – but the sediments end up in warm wet environments for the chemical weathering to be fast). This is a significant negative feedback that may help balance solar brightening over geologic time. It will also tend to act ultimately to stabilize CO2 at different levels depending on geologic CO2 emission (inorganic source i.e. the reverse of the reaction just described, or from oxidation of organic C) and changes in organic C burial, and changes in geography and climate – mountain building in particular locations (Himalayas recently, Appalachians some time ago, etc.), etc.

This chemical weathering process is too slow to damp out shorter-term fluctuations, and there are some complexities – glaciation can enhance the mechanical erosion that provides surface area for chemical weathering (some of which may be realized after a time delay – ie when the subsequent warming occurs – dramatically so in a Snowball Earth scenario, where the frigid conditions essentially shut down all chemical weathering, allowing CO2 to build up to the point where it thaws the equatorial region, at which point runaway albedo feedback drives the Earth into a carbonic acid sauna, which ends via rapid carbonate rock formation), while lower sea level may increase the oxidation of organic C in sediments but also provide more land surface for erosion… etc. Dissolution of carbonate minerals in the ocean or on land can’t result in a net production of carbonate rock/mineral when it comes out of solution, but when in solution, it allows more CO2 to be taken up by the water (because CO2 (or H2CO3?) reacts with CO3-2 in water to form bicarbonate ions). Vegetation affects erosion and chemical weathering (PS interesting aside about how bogs can displace forest via their effect on soil chemistry… will have to wait) – more generally, biological evolution (including shells?) has certainly affected the C cycle, among others.

Organic C burial on land is favored by (warm?) wet conditions on flat terrain (poor drainage). Organic C burial in the oceans may be enhanced by nutrients carried from the land by wind. If organic C oxidizes in the deep ocean, the amount of CO2 stored will depend on the remaining residence time of that water mass in the deep ocean, so I’d expect changes in the alignment of climate zones, nutrient-rich surface waters, and ocean currents would affect the partitioning of C between the ocean and atmosphere.

The mechanism for positive CO2 feedback to ice-age/interglacial changes may/is/probably involves some of the above. It isn’t a given for all Earthly conditions – rearrange the continents and oceans, change the baseline climate, … it may not work then.

…

Denialism is not limited to physical sciences.

People also casually engage in politically-motivated denial of well-known social science results, as in this post by SecularAnimist:

“Denial of anthropogenic global warming has nothing whatsoever to do with being “economically right-wing”, or with any other economic or political ideology.

…”

Please desist. It’s not helping either the cause of rational inquiry or the level of discourse here.

You might also want to get informed about what’s going on in the rest of the world before pinning global phenomena on factors specific to some countries.

#43-4: With a moment to spare for such things, I found that good ol’ Wikipedia says:

“The icing of Antarctica began with ice-rafting from middle Eocene times about 45.5 million years ago and escalated inland widely during the Eocene–Oligocene extinction event about 34 million years ago. CO2 levels were then about 760 ppm and had been decreasing from earlier levels in the thousands of ppm. Carbon dioxide decrease, with a tipping point of 600 ppm, was the primary agent forcing Antarctic glaciation. The glaciation was favored by an interval when the Earth’s orbit favored cool summers but Oxygen isotope ratio cycle marker changes were too large to be explained by Antarctic ice-sheet growth alone indicating an ice age of some size. The opening of the Drake Passage may have played a role as well though models of the changes suggest declining CO2 levels to have been more important.”

http://en.wikipedia.org/wiki/Antarctic_ice_sheet

Anonymous Coward@46,

I am not sure what point you are trying to make. While the majority of climate denialists are on the political right at present, there is nothing inherent in conservatism that would predispose one to be in denial of basic science. I can cite many climate scientists who are conservative, some even Republican. I can equally cite prominent leftists who are in denial–the most prominent may have been the late Alexander Cockburn.

So, it is not that there is no preponderance of denialists on one side or the other, but rather that political philosophy by itself is not predictive or useful. If we are to cleave to truth, we cannot cling to ideology–regardless of the ideology.

Anyone know of an accessible copy of this one?

http://www.tandfonline.com/doi/abs/10.1080/10350330500487927#preview

Cashing-in on Risk Claims: On the For-profit Inversion of Signifiers for “Global Warming”

Nope, you’ve only made things worse. Is A chosen arbitrarily, or does it mean something? Can we choose A = 0? Or can we maximise A, so that either b or c is zero? Why are b and c small?

[Response: Sorry! Basically, the A+b/A+c thing and their magnitudes is a rough summary of the results. The commonality of the response is related to the commonality of the response to all near-global, near-homogeneous forcings – the feedbacks that get invoked (water vapour, ice-albedo, clouds) are similar in both cases (though with differences in the regions where there is stronger inhomogeneity). If the fingerprints were completely orthogonal, it would be the ‘A’ part that would be small – that isn’t the case though. – gavin]