Are the heat waves really getting more extreme? This question popped up after the summer of 2003 in Europe, and yet again after this hot Russian summer. The European Centre for Medium-range Weather Forecasts (ECMWF), which normally doesn’t make much noise about climate issues, has since made a statement about July global mean temperature being record warm:

Consistent with widespread media reports of extreme heat and adverse impacts in various places, the latest results from ERA-Interim indicate that the average temperature over land areas of the extratropical northern hemisphere reached a new high in July 2010. May and June 2010 were also unusually warm.

Here, the ERA-Interim, also referred to as ‘ERAINT’, is the ECMWF’s state-of-the-art reanalysis. But the ERAINT describes the atmospheric state only since 1989, and in isolation, it is not the ideal data set for making inferences about long-term climate change because it doesn’t go all that far back in time. However, the statement also draws on the longer reanalysis known as the ERA40 re-analysis, spanning the time interval 1957-2002. Thus, taken in the context of ERA40, the ECMWF has some legitimacy behind its statement.

The ERAINT reanalysis is a product of all suitable measurements fed into a model of the atmosphere, describing all the known relevant physical laws and processes. Basically, reanalyses represent the most complete and accurate picture that we can give for the day-to-day atmosphere, incorporating all useful information we have (satellites, ground observations, ships, buoys, aircraft, radiosondes, rawinsondes). They can also be used to reconstruct things at finer spatial and temporal scales than is possible using met station data, based on physical rules provided by weather models.

The reanalyses are closely tied to the measurements at most locations where observations – such as 2-meter temperature, T(2m), or surface pressure – are provided and used in the data assimilation. Data assimilation is a way of making the model follow the observations as closely as possible at the locations where they are provided, hence constraining the atmospheric model. The constraining of the atmospheric model affects the predictions where there are no observations because most of the weather elements – except for precipitation – do not change abruptly over short distances (mathematically, we say that they are described by ‘spatially smooth and slowly changing functions’).
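To make the idea concrete, here is a minimal sketch of a single assimilation step at one point, written as a scalar optimal-interpolation update. It is not the ECMWF scheme, which is far more elaborate; the function names, the error variances, and the Gaussian-shaped spatial correlation are all assumptions chosen for illustration.

```python
import math


def assimilate(background, obs, var_background, var_obs):
    """Blend a model first guess with one observation at the same point.

    Scalar optimal interpolation: the weight (gain) given to the observation
    grows as the background error variance grows relative to the
    observation error variance.
    """
    gain = var_background / (var_background + var_obs)
    analysis = background + gain * (obs - background)
    var_analysis = (1.0 - gain) * var_background
    return analysis, var_analysis


def spread_increment(increment, distance_km, length_scale_km):
    """Carry a correction to a nearby point that has no observation.

    Assumes a Gaussian-shaped spatial correlation (an illustrative choice),
    which encodes the 'spatially smooth' behaviour of the field.
    """
    return increment * math.exp(-0.5 * (distance_km / length_scale_km) ** 2)


# Hypothetical numbers: the model says 15.0 deg C, the station reports 17.0 deg C.
analysis, var_analysis = assimilate(15.0, 17.0, var_background=4.0, var_obs=1.0)
increment = analysis - 15.0

# A point 100 km away, assumed here to have the same first guess, is nudged less.
nearby = 15.0 + spread_increment(increment, distance_km=100.0, length_scale_km=300.0)
```

The observation pulls the analysis most of the way towards the measured value because the assumed model error is larger than the assumed observation error, and the correction fades with distance from the station.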

There are also locations – notably in the polar regions and over Africa – where ground-based measurements are sparse, and where much is left for the weather models to predict without observational constraints. In such regions, the description may be biased by model shortcomings, and different reanalyses may provide a different regional picture of the surface conditions. Surface variables such as T(2m) are strongly affected by their environment, which may be represented differently in different weather models (e.g. different spatial resolution implies different altitudes), and this is one reason for differences between reanalyses.

Furthermore, soil moisture may affect T(2m), linking temperature to precipitation. The energy flow (heat fluxes) between the ground/lakes/sea and the atmosphere may also affect surface temperatures. However, both precipitation and heat fluxes are computed by the reanalysis atmosphere model without direct constraints, and are therefore only loosely tied to the observations fed into the models. Furthermore, both heat fluxes and precipitation can vary substantially over short distances, and are often not smooth spatial functions.

While the evidence suggesting more extremely high temperatures is mounting over time, the number of resources offering data is also growing. Some of these involve satellite-borne remote sensing instruments, but many data sets do not incorporate such data.

In the book “A Vast Machine“, Paul N. Edwards discusses various types of data and how all data involve some type of modelling, even barometers and thermometers. It also provides an account on the observational network, models, and the knowledge we have derived from these. Myles Allen has written a review of this book in Nature, and I have reviewed it for Physics World (subscription required for the latter).

All data need to be screened through a quality control, to eliminate misreadings, instrument failure, or other types of errors. A typical screening criterion is to check whether e.g. the temperature estimated by satellite remote sensing is unrealistically high, but sometimes such screening may also throw out valid data, as was the case with the Antarctic ozone hole. Such post-processing is done differently in analyses, satellite measurements, and reanalyses.
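A simple range check of this kind can be sketched as follows. This is only an illustration: the function name, the thresholds, and the readings are made up. The sketch sets suspicious values aside for review rather than deleting them, since an automatic rejection is exactly how valid but unexpected data can get lost.

```python
def screen(values, lower, upper):
    """Split readings into accepted and flagged lists using a plausibility range.

    Each entry is kept as (index, value) so that flagged readings can be
    inspected later instead of being silently discarded.
    """
    accepted, flagged = [], []
    for index, value in enumerate(values):
        if lower <= value <= upper:
            accepted.append((index, value))
        else:
            flagged.append((index, value))
    return accepted, flagged


# Hypothetical temperature readings in deg C; the limits are assumed, not standard.
readings_c = [12.4, 13.1, 99.9, 12.8, -80.0, 13.5]
accepted, flagged = screen(readings_c, lower=-60.0, upper=60.0)
```

Here the readings 99.9 and -80.0 end up in `flagged`, where a person or a second check can decide whether they are errors or real extremes.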

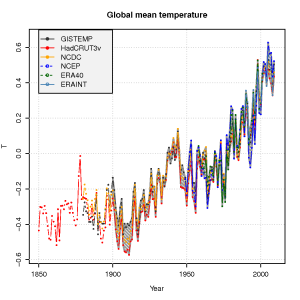

The global mean temperature estimated from the ERAINT, however, is not very different from other analyses or reanalyses (see the figure above) for the time they overlap. We also see a good agreement between the ERA40 reanalysis, the NCEP/NCAR reanalysis, and the traditional datasets – analyses – of gridded temperature (GISTEMP, HadCRUT3v, NCDC).

Do the ERAINT and ERA40 provide a sufficient basis for making meaningful inferences about extreme temperatures and unprecedented heat waves? An important point with reanalyses is that the model used doesn’t change over the time spanned by the analysis, but reanalyses are generally used with caution for climate change studies because the number and type of observations being fed into the computer model change over time. Changes in the number of observations and instruments are also an issue affecting the more traditional analyses.

Since the ERAINT only goes as far back as 1989, it involves many modern satellite-borne remote sensing measurements, and it is believed that there are fewer problems with observational network discontinuity after this date than in the earlier days. It may be more problematic to study trends in the ERA40 data, due to huge improvements in the observational platforms between 1958 and now. Hence, it is important also to look at individual long-term series of high quality. These series have to be ‘homogeneous’, meaning that they need to reflect the local climate variable consistently throughout their span, not being affected by changes in the local environment, instrumentation, and measurement practices.

An analysis I published in 2004, looking at how often record-high monthly temperatures recur, indicated that record-breaking monthly mean temperatures have been more frequent than they would have been if the climate were not getting hotter. This analysis supports the ECMWF statement, and was based on a few high-quality temperature series scattered across our planet, chosen to be sufficiently far from each other to minimize mutual dependencies that can bias the analysis.
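The reasoning behind such a test can be sketched with a standard result: in a series of n independent values drawn from an unchanging distribution, the chance that the k-th value sets a new record is 1/k, so the expected number of records is 1 + 1/2 + ... + 1/n. The code below is a minimal illustration of that benchmark and is not a reproduction of the published analysis; the function names are hypothetical.

```python
def expected_records(n):
    """Expected number of record highs in n independent, identically
    distributed values: the harmonic sum 1 + 1/2 + ... + 1/n."""
    return sum(1.0 / k for k in range(1, n + 1))


def count_records(series):
    """Count how many values exceed every value that came before them."""
    count = 0
    highest = float("-inf")
    for value in series:
        if value > highest:
            count += 1
            highest = value
    return count


# A stationary 100-year series is expected to contain only about 5 records.
benchmark = expected_records(100)
```

If the observed count from `count_records`, pooled over many independent series, is clearly above this benchmark, that points to a distribution that is shifting towards higher values.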

The ECMWF provides data for some climate indices, such as the global mean temperature, and the National Oceanic and Atmospheric Administration (NOAA) has a web site for extreme temperatures and precipitation around the world with an interactive map, showing the warmest and coldest sites on the continents. Another useful tool is the KNMI ClimateExplorer, where people can both access data and carry out different analyses on line. It is also possible to get climate data on your iPhone/iPod Touch through Apps like Climate Mobile.

Update: I just learned that NOAA recently has launched a Climate Services Portal on www.climate.gov.

Update: http://rimfrost.no/ is another site that provides station-based climate data. The site shows linear trends estimated for the last 50 years.
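For readers who want to estimate such a trend themselves, here is a minimal least-squares sketch. It is not how rimfrost.no computes its trends, which I have not checked; the function name and the synthetic series are assumptions for illustration only.

```python
def linear_trend(years, temps):
    """Ordinary least-squares slope of temperature against year (deg C per year)."""
    n = len(years)
    mean_year = sum(years) / n
    mean_temp = sum(temps) / n
    sxx = sum((x - mean_year) ** 2 for x in years)
    sxy = sum((x - mean_year) * (y - mean_temp) for x, y in zip(years, temps))
    return sxy / sxx


# Synthetic 50-year series warming by exactly 0.02 deg C per year (made-up data).
years = list(range(1961, 2011))
temps = [5.0 + 0.02 * (year - 1961) for year in years]
trend_per_decade = 10.0 * linear_trend(years, temps)
```

With real station data the slope should be read together with its uncertainty, which this sketch does not compute.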

The state of Texas is basing their claims on what robust scientific findings?

RiHo08 — 17 September 2010 @ 5:05 PM puts up a straw man and tells us:

The works of Kipling are science. Comments of some imagined opponent “smacks of a historical myopia and ignorance.” Imagined opponents “need to go back to high school as they seem to have missed something in 9th grade history.”

I say– Drive-by troll.

Or maybe your personal bias has led you to believe that those reporting that such an extended event hasn’t been seen before are ignorant, when they really aren’t. Making hand-waving assertions of this sort without any reference to sources (any source, much less any credible source) simply makes you look foolish ’round these parts.

People have been making the same claims about the Russian heat wave earlier this summer, yet the Russians insist that nothing like it has been seen for at least 1,000 years. Now, that’s a country in touch with its history.

http://dotearth.blogs.nytimes.com/2010/09/17/scientists-react-to-a-nobelists-climate-thoughts/

The UHI does create a very modest temp trend change in the measurements but it has been corrected for. The late Stephen Schneider and Spencer Weart have discussed this.

It is interesting in fact that the UHI trend is flat to slightly negative, yet the cities are growing. At the size of a city, I would like to use the term milli-climate to describe the delta between the local conditions and the broader regional climate. As a city is bigger than a typical microclimate, but a lot smaller than a climate, I think it is descriptive. I can think of some plausible reasons why the urban milliclimate at a given point might be cooling (relative to the climate it is embedded in). The first is the growth of vegetation, which has a local cooling effect. Secondly, white roofs are becoming more common, as the benefits of less demand on cooling systems, and a cooling effect on the urban environment, are becoming more noticed. So it may not be surprising to see the UHI effect waning as time (and microclimate intervention) go by.

> nobelist

(physics)

You saw XKCD yesterday?

Roddy Campbell (#120)

As a first point, I object to being verballed. I presented Curry and Pielke as examples of well qualified people whose expressed opinions have been non-factual because of either laziness or ideological blinkers. You are persistently attributing to me a view that both or either have ideological blinkers (a view I am not defending, and don’t have enough information to hold). The disjunction is important.

Further, you claim that I “…would discount anything those two say on grounds of proven ideological bias.” I do not discount anything anyone says, even geocentrists. Rather, if they are making a novel argument, I check the reasoning to see if it holds up, as, for example, in my response to RiHo08 (@34 above). If they are regurgitating something I have seen refuted several times before, then I ignore it.

You may be misrepresenting me because you have over-interpreted my actual statements, in which case I ask that you reread my posts and acknowledge the error. Alternatively, you may be trying to get me to defend an extreme and untenable position for rhetorical gain. That would reflect very poorly on you.

As to Judith Curry, my take on her was based on my originally reading her comments here at RC, which were simply incredible. Tim Lambert merely provided a convenient and accurate summing up. I have not read Zorita on Curry, so I cannot respond to his opinion. However, surely you don’t want to argue that Curry’s posts here at RC demonstrated careful consideration of the issues and reasonable discussion?

As to Pielke, you only responded to one of the two examples provided. With regard to the one you did respond to, are you suggesting that quoting a google search as indicating 1,264 notices of a Mann paper in news without checking the search data sufficiently to notice that only 2% of the search results actually mentioned Mann’s paper was not lazy? Or are you suggesting that “correcting” the overstatement of notices of Mann’s paper from 1,264 to 27 when in fact there were only 11; or “correcting” the understatement regarding notices of Landsea’s paper from 1 to 3, when in fact there were 5 such notices, represents an adequate response? Doing so suggests that you think accusations of media bias are acceptable so long as the accusers bias their reporting of the actual numbers by no more than 50%. Is that a position you wish to take?

Re comment #6. Gavin and Eric answered it completely but I will just add that the reanalyses have been used widely by forecasters. The numerical weather prediction models used in the day to day forecasting by myself and others use these same data assimilation techniques and they have vastly improved the forecasts.

RiHo08 (@145),

I am not surprised you do not wish to defend your earlier post. Citing examples of heatwaves at least one of which was 10 degrees Centigrade cooler than that which recently struck Russia (and the others show no evidence of being any warmer than that) hardly challenges the notion that the 2010 Russian Heatwave surpassed all others in at least the last thousand years (as declared by the Russian Meteorological office).

Now you wish to baffle us with Kipling. But Kipling only describes a flood which makes the road impassable; a flood which will go down in a day or two, that has made the river approx two to 4 miles wide, and ten feet deep at the ford.

http://www.online-literature.com/kipling/indian-tales/7/

http://www.kipling.org.uk/rg_floodtime_notes.htm

Frankly that compares poorly with a flood well over 6 miles wide (and much wider in the Sindh), deep enough to flow through third story windows of a hotel on the river bank (in one location) and which is still ongoing a month and a half after the initial flooding.

http://en.wikipedia.org/wiki/File:Pakistan_2010_Floods.jpg

So far, for those who look a little further, your anecdotal evidence is only showing how exceptional the events in Russia and Pakistan have been. That your cherry picking exercise is so unsuccessful should, however, give you a clue. You cannot find anecdotes of truly comparable incidents because truly comparable events have not occurred in at least a thousand years.

“The state of Texas is basing their claims on what robust scientific findings?” – 152

What does it matter when the opposition is strictly ideologically driven?

And if they lose, they will just blame Activist Liberal Judges.

Neither Science nor evidence will sway the ideology of self deceivers.

You must defeat their ideology.

“Hopefully another dumb lawsuit that will result in another bloody nose for those bringing it” – 151

Only action produces results. Hope does nothing.

Susan # 155 good link, so thanks for that! It is quite true that different scientists ‘frame’ findings differently. I like how the late Stephen Schneider explained the science and the judgment calls, especially those of the IPCC in 2007. It is also true that a background in physics alone does not make one an expert on climate.

#145 RiHo08

“It seems to me that the arguement that global warming is prompting extreme; ie, never been seen before, events, smacks of a historical myopia and ignorance. Maybe those expousing such beliefs need to go back to high school as they seem to have missed something in 9th grade history”

“never been seen before” = UNPRECEDENTED, not extreme.

Perhaps someone needs to go back to grade school, as he or she seems to have missed some vocabulary lessons.

“I usually haven’t resurfaced on blog sites as I usually say what I have to say and exit.”

Pretty lame. Psychologically incapable of engaging in dialogue but still wants a reaction. An individualist who secretly pines for social contact but can’t handle the implications of such contact.

All that science claims with high probability, RiHo08, is that there is increasingly more energy in the system. It might mean nothing, but I think even you would agree that if there is an increase in the energy of a highly complex, highly dynamic system that has a great number of energy-sensitive elements, abnormal events will be observed.

RiHo08 #145:

> Yet, as a boy, I had read to me tales by Rudyard Kipling describing the

> monsoon rampages of the Indus River carrying her cargo of silt to the

> farmlands and deltas below.

Indeed, one can learn much about the natural world from reading Kipling. For instance, the fact that wolves sometimes adopt human children and teach them their language. But instructive as this individual case report may be, it does not help answer the questions I am sure are on all our minds: How large is the population of feral, wolf-raised children? Is it growing or declining? What is the chance of finding an animal-tongue interpreter in a hundred square miles of Indian forest? For this we need systematic observations and statistics. Anecdotic evidence, even from such reliable witnesses as novelists and poets, is of limited worth. Still, it’s noteworthy that in Roman times, wolves would suckle twins at the same time, while Kipling reports a single child. Do a linear fit to those data and draw your own conclusions, say I.

:-)

[Response: ;)

]

Dan @160, isn’t reanalysis essential in order to remove non-physical modes from the input data sets? I think it was developed by the forecasting community, because it is an essential part of making weather models useful.

150,152 (on Texas),

It will be interesting (and sad) to see when fossil fuels no longer have the value they do today (because of their unintended damage to the earth’s climate), and when at the same time they turn out to be the cause of Texas’ downfall as a wealthy and powerful state, i.e. when unrelenting water resource issues (and here and here) drive half the population away, and leave the state a shell of its once arrogant self.

I wonder what sort of legislation the Texans will pass when their state is crumbling around them, having lived and died at the heart of both sides of the fossil fuel equation, economic boom and climate/water bust.

I’m not wishing anything ill on the people of Texas, mind you, or anyone for that matter, but we can all see this coming, so why can’t they? One merely wishes that they took their own self interests, as well as those of the nation and planet as a whole, more to heart, with a less short sighted approach. A selfish “but we love fossil fuels because they make us rich” approach now is not going to win much pity, let alone many friends and allies, in a future where they suffer even more than others for their greed.

I don’t care.

I ran my calculations in the ’70’s and concluded two things:

1. It’s a political problem rather than an Engineering Problem and I don’t do those.

2. The threshold/tipping point is about 1985. The people are choosing these crazies to represent them. Nothing to be done.

I’m working on carrying capacity of the planet given non-distribution.

There is actual merit to the Texas v. EPA law suit. It addresses EPA rules that are near-draconian, maybe schizophrenic idiotic, and potentially illegal per the Clean Air Act. But, from a scientific view I do not condone or support Texas’ argument regarding the validity of climate science in general, the IPCC, etc. However, from a legal view, it is common practice for both parties in any law suit to throw everything in, including the kitchen sink, and exaggerate the hell out of most of those things. That’s how the legal system works.

Bob (Sphaerica) @ 169

Reminds me of a humorous saying a Texan once told me, “Ya can always tell a Texan, but ya can’t tell ’em much!”

As to the oil industry, well arrogance is intoxicating. The problem is, there tends to be a down side to intoxicants, and inventing a culture that makes a virtue of that particular flaw is just asking for trouble. Hard to worry about problems if you can invent your own reality.

> Rod B

> that’s how the legal system works

Rod is from Texas, I recall. He would know.

Pity the Texas bar hasn’t caught up with Oklahoma, among others — yet.

“… even if the duty of “zealous” representation had not been replaced by the duty of “diligent representation” in the current Oklahoma Rules of Professional Conduct, ‘zealous” advocacy would still not authorize … misrepresenting the facts or law in pleadings, briefs and letters whether in litigation or in transactional law.

“Professionalism standards by bar associations have begun to address such professionalism abuses by aspirational standards conveying that the local bench and bar do not condone such behavior. A number of recent professionalism codes have come out specifically against lawyer conduct which interferes with the truth seeking mission of the justice system….”

http://www.okbar.org/ethics/harris.htm

Texas may catch up eventually. I’m sure Rod will keep us posted with their progress.

62, Edward Greisch: 57 Kevin McKinney: In a technological civilization, the things a citizen needs to learn are science and math. Some other courses need to be dropped: gym, English literature, etc. Those who can’t pass the Engineering and Science Core Curriculum [E&SCC] shouldn’t get degrees at all. Part of the purpose is to prevent lawyers and judges whose undergrad degree is music. Judges and lawyers NEED contact with reality and the only place to GET contact with reality is science and engineering laboratory.

I wonder if a group of people trained as you recommended could write a Constitution as good as what the US has, or if it would even occur to them to write a Bill of Rights. Could they even imagine a free market? Checks and Balances? My guess is that they’d control all communication, and stifle free thinkers like Thomas Edison, Steve Jobs, Rachel Carson and Florence Nightingale.

When will it be allowed to say that Cuccinelli’s father spent his career as a gas lobbyist and that his advertising company gave almost 100,000 dollars to AG Cuccinelli? It’s on the Internet.

It would be nice to know who the father’s clients are, especially the ones in “Europe.”

http://legendofpineridge.blogspot.com/2010/09/attorney-general-cuccinellis-daddy-and.html

Why “Scientific Consensus” Fails to Persuade

http://www.nsf.gov/news/news_summ.jsp?cntn_id=117697&org=NSF&from=news

“It is a mistake to think ‘scientific consensus,’ of its own force, will dispel cultural polarization on issues that admit scientific investigation,” said Kahan. “The same psychological dynamics that incline people to form a particular position on climate change, nuclear power and gun control also shape their perceptions of what ‘scientific consensus’ is.”

“The problem won’t be fixed by simply trying to increase trust in scientists or awareness of what scientists believe,” added Braman. “To make sure people form unbiased perceptions of what scientists are discovering, it is necessary to use communication strategies that reduce the likelihood that citizens of diverse values will find scientific findings threatening to their cultural commitments.”

“I wonder what sort of legislation the Texans will pass when their state is crumbling around them, having lived and died at the heart of both sides of the fossil fuel equation, economic boom and climate/water bust.” – 169

Impossible to say, but I would bet that Climatology and most other ideologically challenging science will long before then, have been defunded in order to reduce the state and federal deficits.

“One merely wishes that they took their own self interests,” – 169

Like U.S. Bankers do?

1. Are you making the same error that Alan Greenspan made when he presumed based upon his Randite Ideology that it would be impossible for a deregulated banking industry to work against its own self interest?

2. One component of the denialist opposition results from that aspect of human nature in which a higher value on immediate benefit is perceived compared to a greater benefit at some time in the future. A bird in the hand is worth 2 in the bush.

“… we can all see this coming, so why can’t they?” – 169

Because it is more comforting to them to believe liars who will tell them that which does not challenge their conservative faith based liedeology.

Remember, they were promised by Free Market Economists that the Earth’s resources are “essentially infinite.”

God will provide.

They are two of the prime components of their Liedeology.

Is it even possible to be more detached from physical reality?

You can not reason with the unreasonable. Rational argument doesn’t work. Only time and death will correct their ideologically driven divergence from reality.

If change is to occur faster than the death rate, the underlying political ideology of their denialism has to be defeated.

Since scientists are not doing this, science has so far lost the battle.

[Response: And just what are you doing about it (except consistently bitching) mr big talker?????? And do try to get a clue about the roles of different elements of society in effecting change–Jim]

The Supreme Court previously ruled that CO2 regulation falls under the Clean Air Act umbrella. W’s administration lost that little lawsuit. With the Supremes ruling hanging over their heads, on what basis do you believe the relevant US Court of Appeals might rule EPA rules regulating CO2 are illegal?

Of course, RodB’s a bit … hazy … on well-established science, so it’s not unlikely he’s equally … hazy … on the legal implications of a sweeping Supreme Court ruling.

BTW Texas appears to be attacking the science, which they claim has been proven “fraudulent”, rather than storm the Supreme Court ruling castle directly. They have a chance with that argument given the current make-up of the Supreme Court.

Rod W. Brick writes: “Paul Tremblay, your description of high school and its students in #107 is diametrically opposed to my observations. Though it’s possible my experience is limited to what might be a public school different from the ‘norm'”

Do you care to elaborate? I believe I stand on firm ground, having worked in the school system for 15 years in all types of classrooms. In my current job, I have seen and scored tens of thousands of tests from all over the country.

VD, please contact your village:

http://www.google.com/search?q=Vendicar+Decarian

North Pole Trek in 5 Days

This is to tell everyone about an important initiative being undertaken in just a few short days because yes, it is getting warmer and warmer and we need to take urgent action to do something about it.

Parvati, a Canadian musical artist and yoga instructor, is taking a courageous journey to the most northern Canadian soil: a small, desolate island in the Arctic Ocean known as Ward Hunt Island. The location is just kilometres from the Magnetic North Pole and 200 kilometres from 90 degrees North.

Parvati’s mission is to bring awareness to the urgent ecological effect of melting polar ice caps. Charged with purity of heart, clear intention, and the willingness to serve, Parvati will become the first artist to ever perform this far North. There she will offer her songs to help raise awareness of just how quickly the ice caps are disappearing and the devastating effect this is having on the entire planet.

Born in Montreal and now living in Toronto, Parvati is an internationally acclaimed singer, songwriter, performer and producer of electronic dance pop. Her music celebrates the gift of life and her debut album and multimedia show, Yoga in the Nightclub, has had people from Toronto to Berlin shaking to its joyful rhythms. After a summer of increased signs of environmental distress, Parvati decided to postpone her Canadian tour to trek to the North Pole. She says she simply cannot turn away from the effects climate change is having.

“I feel the global ecological crisis is a wake up call for us all, a call to awaken I AM consciousness, the magnificence of who we are,” says Parvati. ”The planet reflects how we collectively treat ourselves, each other and our environment. A collective is only as strong as its individuals. If we want to change our environment, we need to transform ourselves.”

Parvati will be joined on the trip by Satish Sikha (www.ourearth-wewill.com), another environmental activist. In Resolute, Canada’s most remote city, Satish will unveil the world’s longest piece of woven silk. Each segment is signed by a celebrity, politician or international dignitary who shares their thoughts on climate change.

Parvati leaves Toronto on September 23, 2010 to meet with city council in Iqaluit and perform for school children in Nunavut. She will sing at the top of the world on September 26th.

The timing of Parvati’s trip is significant. Recent news reports say that many ice shelves in Greenland and Canada have cracked. At the end of August, NASA reported an ice crack on Ward Hunt Island that is 40 metres deep and the size of Bermuda. Meanwhile, the sea ice levels are at an unprecedented low and as such are wreaking havoc on our fragile ecosystem.

More information about Parvati’s trip is available at http://www.parvati.ca.

CM @167 — Thanks! :-))

Here’s some partly OT. On a listserve someone mentioned how GISS has reported that this is the warmest year (I thought they did that after the year’s end, maybe she meant warmest summer).

To which another replied:

To which I replied (just let me know if what I said is correct enough…):

I can make corrections if I got it wrong….

Lynn Vincentnathan @182 — Looks fine to me.

For Lynn:

http://www.google.com/search?q=site%3Atamino.wordpress.com%2F+Goddard+error

“I had read to me tales by Rudyard Kipling describing the monsoon rampages of the Indus River carrying her cargo of silt to the farmlands and deltas below. Those events, chronicled more than 150 years ago were equally impacting the lives of that area now called the Swat Valley.”

But Eugene O’Neill didn’t write “Oetzi the Iceman Thaweth”, nor did Hemingway pen an ode to “The Vanishing Snows of Kilimanjaro”; Tennessee Williams “Cat on a Hot Tin Roof” wasn’t about 53 degrees centigrade in the shade in Mohenjo-daro. The Joads weren’t fleeing expanding Hadley cells, or falling rice crops, in Steinbeck’s “The Grapes of Wrath”.

Lynn, there are other ways to consider 2010 as warmest ever, SST’s in the Arctic are for the most part crazily warm http://www.osdpd.noaa.gov/data/sst/anomaly/2010/anomnight.9.16.2010.gif

I just measured to confirm unusually warm waters in the NW Passage as well. The sea here has a huge influence when devoid of ice: winter is 3 weeks late; it usually started at the end of August in the not so distant past. So for a large part of the Arctic, it’s all-time record high temperatures. All of this needs to be properly considered: the region is sparsely measured with surface stations, and not considering this region of the world, as Hadley does, ultimately leads to unbalanced arguments between GT institution results.

Will post on my website a blue sun at sunset, which largely means weakly distorted sun disk. This never happened not so long ago, sea ice causes more refraction and the colours were usually more red due to scattering of red by the greater length of air at more tardy sunsets.

Hank says, “Texas may catch up eventually.”

Not likely, but I’ll keep an eye out.

dhogaza, I believe the Supreme Court could have their ruling reversed (just an opinion — not very likely, though), but that is not what the Texas lawsuit was about. So that would be OT#2 here…. from the OT#1 about Texas. Getting way too complicated for me!

Paul Tremblay, it is not anywhere close to my experiences with Dripping Springs (TX) HS or the Texas testing program.

This is a response to Edward Greisch: I am a very well-trained pianist/composer/music theory teacher/performer who follows science closely.

Did you know that teaching a child to sight-read music between the ages of 3 and 10 will raise that child’s IQ by an average of 10 points by the time they are an adult? Did you know that sight-reading music is the only known human skill that will do that? So why aren’t all American schools teaching children how to read music, instead of cutting music programs?

Because too many people are ignorant of the benefits, I would assume.

It seems to me that if we want voters to have a better understanding of complex science so that they might make informed choices, at least some music training (as opposed to no music training, which you appear to advocate) would be valuable.

Craig Nazor (@190), while I don’t doubt that teaching children to sight read music will improve their test results on IQ tests (not quite the same thing as critical reasoning), teaching chess and philosophy both have similar effects, in addition to teaching skills more directly related to critical reasoning.

http://www.auschess.org.au/articles/chessmind.htm

http://www.philosophypress.co.uk/?p=1186

I have little doubt that an earlier and more cohesive introduction to science would have the same result.

> sight read … IQ

I’d like to see a cite to that. A few minutes’ searching turns up some support for the idea that some teachable skills get developed by learning sight-reading (being able to ‘hear music in one’s head’ when reading an unfamiliar score).

http://scholar.google.com/scholar?hl=en&q=child+%22sight-read%22+music+intelligence+skill&as_sdt=2001&as_ylo=2009&as_vis=1

Learning phonics — being able to ‘sound out words’ when reading unfamiliar text — might be related here. It’s a big area of research; I didn’t find a good pointer to a comprehensive discussion anywhere.

Having learned to ‘hear’ reading music and text myself as a kid, I’d like to believe ….

Re #155 , #158 and #164

It’s a pity. He is a really good scientist. Furthermore, I think that he represents the tip of an iceberg.

All too true. That needs echoing around the echo-chamber. It is also true for chemistry, biology, statistics etc. and it often applies to the distinguished, because they have given themselves less time to read widely.

[Hank: What’s XKCD? and how was it relevant?]

I must say that Rod B’s comments about the Texas lawsuit are among the most incoherent things I have ever read. Not to mention disingenuous.

The whole and entire basis of the lawsuit is the claim that “the IPCC, and therefore the EPA, relied on flawed science to conclude that greenhouse emissions endanger public health and welfare”, and Texas is seeking to block the EPA regulations explicitly and solely “Because the Administration predicated its Endangerment Finding on the IPCC’s questionable facts.” (Emphasis added.)

The Texas lawsuit is not arguing that the regulations should be overturned merely because they are inordinately burdensome or costly to certain economic interests — it is directly attacking the legitimacy of the science that the EPA relied on to reach an endangerment finding (which the Supreme Court declared the EPA was not only authorized, but obligated to do).

Without that attack on the science underlying the endangerment finding, there is no lawsuit.

for deconvoluter:

http://www.google.com/search?q=site%3Arealclimate.org+xkcd

Exactly, it’s not a constitutional argument, though “flawed science” is a bit weaker than the public claims, at least (i.e. climategate proves faked data blah blah).

A constitutional argument would be dead in the water anyway, as I pointed out.

SecularAnimist, I don’t know what filing you’re reading but what you write in 194 has virtually no resemblance to the Texas filing. You have to get almost half-way through it before IPCC is even mentioned; when it is mentioned, it is mostly administrative and procedure commentary. Assessing the science is only indirectly referred to by citing the IAC report. There is no “flawed science” phrase. Your quoted phrase, “Because the Administration predicated … ” ain’t there either.

Before you lambaste me for being disingenuous you might try checking out the stuff I’m commenting about. It probably seemed incoherent because it resembled nothing that you were dreaming up.

Deutsche Bank has issued a whitepaper on the “Climategate” emails. CRU has it on their site, and so do I.

http://legendofpineridge.blogspot.com/2010/09/deutsche-bank-issues-whitepaper-on.html

“The paper’s clear conclusion is that the primary claims of the skeptics do not undermine the assertion that humanmade climate change is already happening and is a serious long term threat. Indeed, the recent publication on the State of the Climate by the US National Oceanic and Atmospheric Administration (NOAA), analyzing over thirty indicators, or climate variables, concludes that the Earth is warming and that the past decade was the warmest on record.”—Deutsche Bank Whitepaper Addressing the Climate Change Skeptics

Maybe the Deutsche Bank whitepaper will make the denialists’ financial and political sponsors think about what they are doing.

http://www.banking-on-green.com/en/content/news_2744.html?dbiquery=null:climate

From a NYT book review

http://www.nytimes.com/2010/09/19/books/review/Morris-t.html?pagewanted=2

discussing ‘rogue waves’ and surfing,

this tidbit:

“average wave heights rose by more than 25 percent between the 1960s and the 1990s, and insurance records document a 10 percent surge in maritime disasters in recent years.”

Anyone got a cite? Estimate of how much energy could be ‘hidden’ in the form of larger waves rather than temperature of the ocean?

Um, Rod? This:

Appears on page 2 of the Petition for Reconsideration. I’d say that qualifies as attacking the science.

Perhaps you can provide a link to the document you cite?