This post is related to the substantive results of the new Marvel et al (2015) study. There is a separate post on the media/blog response.

The recent paper by Kate Marvel and others (including me) in Nature Climate Change looks at the different forcings and their climate responses over the historical period in more detail than any previous modeling study. The point of the paper was to apply those results to improve calculations of climate sensitivity from the historical record and see if they can be reconciled with other estimates. But there are some broader issues as well – how scientific anomalies are dealt with and how simulation can be used to improve inferences about the real world. It also shines a spotlight on a particular feature of the IPCC process…

One of the most intriguing differences between IPCC AR5 (section 10.8) and previous reports was the bottom line conclusion on equilibrium climate sensitivity (ECS). Compared to AR4, they moved the lower limit for the likely range from 2ºC to 1.5ºC and instead of suggesting a ‘best estimate’ of ~3ºC, they didn’t feel as if they could give any best estimate at all, leaving an impression of a wide (perhaps uniform) distribution of likelihoods from 1.5 to 4.5ºC. (NB. If you want a good background on climate sensitivity, David Biello’s article at Scientific American is useful, or read our many previous posts on the topic.)

The reason for this change was a series of new papers (particularly Otto et al, 2013 and Aldrin et al, 2012) which focused on sensitivity constraints from the historical period (roughly 1850 to the present). For a long time, this method had such large uncertainties that the resulting constraints were too broad to be of much use. Two things have changed in recent years – first, the temperature changes over the historical period are now more persistent, and so the trend in relation to the year-to-year variability has become more significant (this is still true even if you think there has been a ‘hiatus’). Secondly, recent papers and the AR5 assessment have made a case that the uncertainty in net aerosol forcing (a cooling) can be reduced from previous estimates. An increase in signal combined with a decrease in uncertainties should be expected to lead to sharper constraints – and indeed that is the case.
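A rough way to see why a longer, larger signal helps: for a linear trend fitted to n annual values with independent noise of standard deviation σ, the standard error of the trend falls roughly as n^(-3/2). The Python sketch below assumes white noise, which is optimistic because real interannual variability is autocorrelated, and the trend and noise values are hypothetical.

```python
import math


def trend_standard_error(noise_sd: float, n_years: int) -> float:
    """Standard error of an OLS trend fitted to n_years annual values with white noise."""
    # Sum of squared deviations of the years 0..n-1 from their mean.
    sxx = n_years * (n_years ** 2 - 1) / 12.0
    return noise_sd / math.sqrt(sxx)


def trend_signal_to_noise(trend_per_year: float, noise_sd: float, n_years: int) -> float:
    """Ratio of the trend to its standard error (a t-like statistic)."""
    return trend_per_year / trend_standard_error(noise_sd, n_years)


if __name__ == "__main__":
    # Hypothetical: 0.01 K/yr warming with 0.1 K interannual noise.
    for n in (30, 60, 120):
        print(n, round(trend_signal_to_noise(0.01, 0.1, n), 1))
```

Doubling the record length roughly triples the signal-to-noise ratio of the trend under these assumptions; autocorrelated noise would make every ratio smaller.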

However, these papers make a number of simplifying assumptions. Most often, they approximate the energy balance of the Earth as a simple one-dimensional linear equation connecting forcing and global mean temperature response. This implies that: a) the approach to equilibrium in forcing/temperature space is linear – that sensitivity doesn’t change in time or in response to patterns of change, and b) that all forcings are, in a basic sense, equivalent. Given those assumptions, looking at the forcing over a long-enough multi-decadal period and seeing the temperature response gives an estimate of the transient climate response (TCR) and, additionally if an estimate of the ocean heat content change is incorporated (which is a measure of the unrealised radiative imbalance), the ECS can be estimated too.
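To make the two estimates concrete, here is a minimal Python sketch of the simple energy-budget relations, assuming the usual one-dimensional form TCR = F2x·ΔT/ΔF and ECS = F2x·ΔT/(ΔF − ΔQ), where ΔQ is the change in the planetary heat uptake rate (dominated by the ocean heat content change). The value of F2x and all the inputs are illustrative assumptions, not numbers from Otto et al or any other study.

```python
F_2X = 3.7  # assumed forcing for doubled CO2 (W/m^2); studies use slightly different values


def tcr_estimate(delta_t: float, delta_f: float, f_2x: float = F_2X) -> float:
    """Transient climate response: warming per unit forcing, scaled to a CO2 doubling."""
    return f_2x * delta_t / delta_f


def ecs_estimate(delta_t: float, delta_f: float, delta_q: float, f_2x: float = F_2X) -> float:
    """Equilibrium climate sensitivity: subtract the unrealised imbalance (heat uptake)
    from the forcing before scaling to a CO2 doubling."""
    return f_2x * delta_t / (delta_f - delta_q)


if __name__ == "__main__":
    # Hypothetical changes between a base period and a recent decade.
    dT, dF, dQ = 0.75, 2.0, 0.65  # K, W/m^2, W/m^2 (illustrative only)
    print(f"TCR ~ {tcr_estimate(dT, dF):.2f} K")
    print(f"ECS ~ {ecs_estimate(dT, dF, dQ):.2f} K")
```

Both formulas treat every W/m^2 of forcing as interchangeable, which is exactly assumption (b) above.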

[A quick note on terminology: All constraints have to be based on observations of some sort (historical trends, specific processes, paleoclimate etc.) and all constraints involve models of varying degrees of complexity to connect the observation to the sensitivity metric. People who only describe constraints based on the historical changes as ‘observational’ while everything else is supposedly ‘model-based’ are just playing rhetorical games.]

Rather than simply assume that one subset of constraints is superior, it is important to investigate why there might be a systematic difference between methodologies, and coupled GCMs are excellent tools for that. It’s important to be clear how the GCMs are being used here – explicitly, they are being used as ‘analogs’ for the real system. It is a setup where we can calculate everything and know how all the diagnostics relate to each other across multiple scenarios. Thus – in this system – we can assess how well simple assumptions for the energy balance approach work out in a more complex system. The claim is not being made that the GCM is exactly a match to the real world, but rather that if a simplifying assumption made about the real world doesn’t hold up in a GCM, it probably won’t hold up in the real world either.

Results in a perfect model world

Back to the paper. Marvel et al use a series of simulations that reran the historical period, but with only one forcing at a time. For instance, simulations were run that only used the changes in volcanic forcing, or in land use or in tropospheric aerosols. These counterfactual simulations give a wide variety of possible ‘histories’ where we can apply the methodology of Otto et al (for instance) to see whether it gives the ‘right’ answer. Remember, since this is a model, we know exactly what the right answer is for the TCR and ECS.

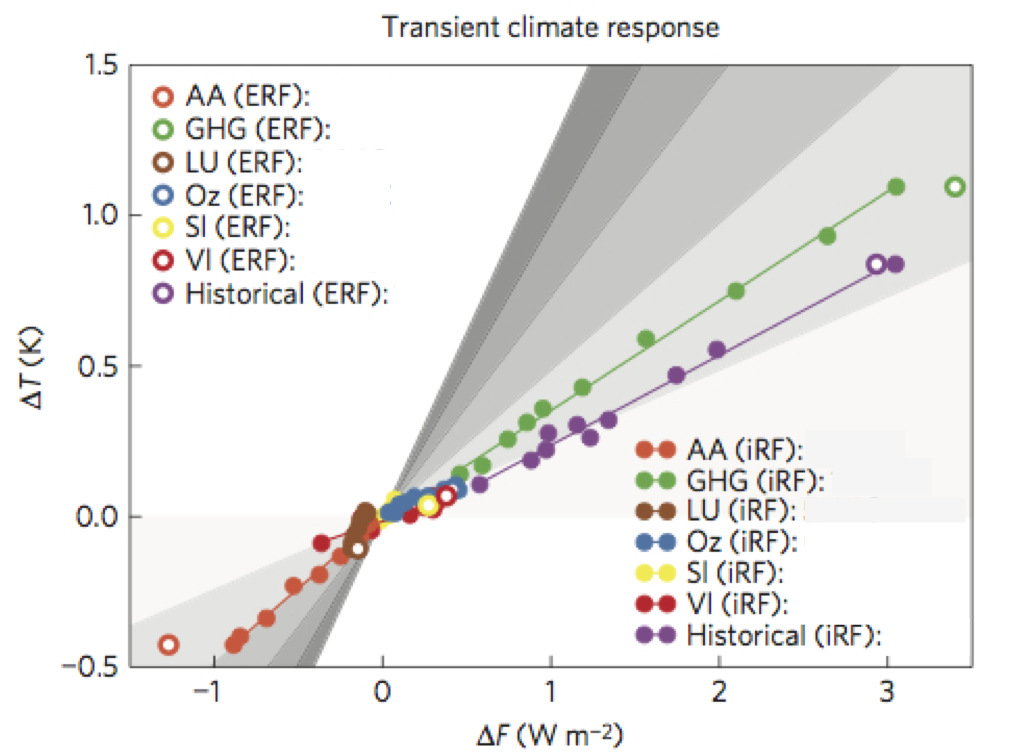

The interesting thing is that the answers for the different ‘histories’ aren’t right. Indeed, for each of the forcings, there is a systematic error in the answer it gives. You can see this in Figure 1. If all the answers were correct, each of the colored lines (which correspond to a specific forcing) would have the same slope. They obviously don’t.

Figure 1: The relationship between forcing and global mean temperature response for seven historical sets of experiments. Each color corresponds to a different experiment (AA is aerosols only, LU is land use only, GHG is greenhouse gases only, Oz is ozone forcing only, Sl is solar only, Vl is volcanic only, and Historical is all forcings together). Each dot is for an ensemble mean decadal-average and the filled vs open circles show a technical distinction that depends on how the forcings are specifically defined. The slope of each line is the estimated transient climate response (TCR).
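Figure 1 boils down to fitting a straight line through each experiment's decadal means and converting the slope to an apparent TCR via the forcing for doubled CO2. A minimal Python sketch with synthetic data (the experiment names, apparent TCR values and F2x below are all hypothetical) shows how two forcings with different responses per unit forcing yield different apparent TCRs from the same method:

```python
import random

F_2X = 3.7  # assumed forcing for doubled CO2 (W/m^2)


def ols_slope(x, y):
    """Ordinary least-squares slope of y on x (intercept included)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx


def synthetic_decades(apparent_tcr, forcings, noise_sd=0.02, seed=0):
    """Decadal-mean temperatures responding linearly to forcing, plus small noise."""
    rng = random.Random(seed)
    return [apparent_tcr / F_2X * f + rng.gauss(0.0, noise_sd) for f in forcings]


def apparent_tcr(forcings, temps):
    """Convert the fitted slope (K per W/m^2) into a TCR-like number (K per doubling)."""
    return F_2X * ols_slope(forcings, temps)


if __name__ == "__main__":
    ghg_f = [0.25 * k for k in range(10)]  # hypothetical warming forcing (W/m^2)
    aa_f = [-0.1 * k for k in range(10)]   # hypothetical cooling forcing (W/m^2)
    ghg_t = synthetic_decades(1.8, ghg_f, seed=1)
    aa_t = synthetic_decades(2.5, aa_f, seed=2)
    print(f"GHG-like experiment: apparent TCR ~ {apparent_tcr(ghg_f, ghg_t):.2f} K")
    print(f"aerosol-like experiment: apparent TCR ~ {apparent_tcr(aa_f, aa_t):.2f} K")
```

Because the regression is done separately per experiment, any difference in response per unit forcing shows up directly as a different slope.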

This result is the same whether you look at the TCR or the ECS (using the ocean heat content changes in the same simulations), or whether you use instantaneous forcings or effective radiative forcings. In each case, estimates of the (known) TCR and ECS using the Otto et al methodology significantly underestimate the actual values from the model.

The reasons seem to be related to the spatial distribution of the forcings relative to that of CO2, whose forcing is relatively homogeneous globally. Aerosols and ozone are mostly important in the Northern Hemisphere, land use forcing is confined to land, etc. That affects how quickly the land and ocean temperatures respond, and makes a difference to the projection of the forcing onto the ocean, and hence to the ocean heat content change.

Back to the real world

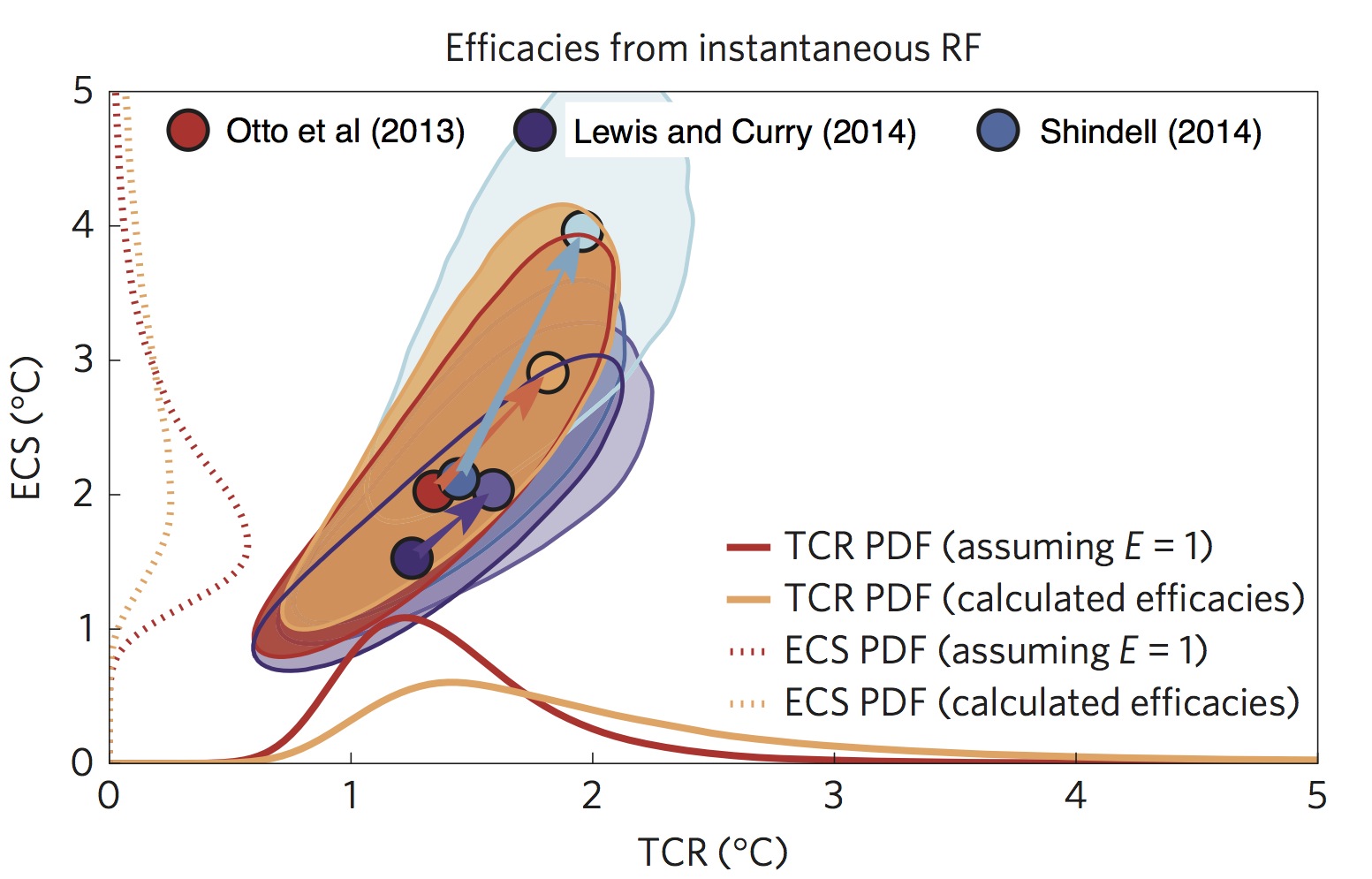

We can go further. If we characterise the error in the slopes in Fig. 1 by an ‘efficacy’ for each forcing (the ratio of the slope it actually has to the slope it would need to have for the estimate to be accurate, i.e. the CO2 slope), we can take the forcing estimates used in Otto et al (and other papers), adjust them for this bias, and redo the calculations for the real world. Of course, the adjustment we are making has its own uncertainty (this is derived from a single model after all) and that needs to be taken into account too.
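As an illustration of the adjustment, here is a Python sketch that scales each forcing by an efficacy before recomputing an energy-budget TCR. The forcing values, efficacies, warming and F2x are hypothetical placeholders chosen only to show the direction of the effect; they are not the values from Marvel et al or Otto et al, and the uncertainty in the efficacies is ignored here.

```python
F_2X = 3.7  # assumed forcing for doubled CO2 (W/m^2)

# Hypothetical forcing changes (W/m^2); not values from any study.
forcings = {"ghg": 2.6, "ozone": 0.3, "aerosols": -0.9, "land_use": -0.2}
# Hypothetical efficacies (apparent slope relative to the CO2 slope). GHGs are
# treated as CO2-like (efficacy 1) purely for simplicity.
efficacies = {"ghg": 1.0, "ozone": 0.6, "aerosols": 1.3, "land_use": 1.5}


def effective_forcing(forcings, efficacies):
    """Sum of forcings, each scaled by its efficacy (a missing efficacy counts as 1)."""
    return sum(f * efficacies.get(name, 1.0) for name, f in forcings.items())


def tcr(delta_t, delta_f, f_2x=F_2X):
    """Energy-budget TCR: warming per unit forcing, scaled to a CO2 doubling."""
    return f_2x * delta_t / delta_f


if __name__ == "__main__":
    dT = 0.75  # hypothetical observed warming (K)
    raw = sum(forcings.values())
    adj = effective_forcing(forcings, efficacies)
    print(f"unadjusted: F = {raw:.2f} W/m^2, TCR ~ {tcr(dT, raw):.2f} K")
    print(f"adjusted:   F = {adj:.2f} W/m^2, TCR ~ {tcr(dT, adj):.2f} K")
```

Because the cooling terms have efficacies above one in this toy setup while ozone's is below one, the efficacy-weighted forcing is smaller than the raw sum, and the same warming therefore implies a higher TCR.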

Since the sensitivity estimates using the Otto et al method in the model world are biased low, using the estimated efficacies in the real world means that the sensitivities from the adjusted methodologies are going to be increased, and indeed that’s exactly what happens.

Figure 2. Joint probability density function for the climate sensitivity (TCR and ECS) and how they shift when you take the efficacies into account. In each case (which vary according to the estimated forcings) the mean of the distribution shifts to significantly higher numbers (follow the arrows!).

How big an effect is this? Well, for Otto et al (2013), the estimated TCR without taking account of the efficacies is 1.3ºC (which is on the low side of other estimates), but with this effect accounted for, it is closer to 1.8ºC. The results are similar for the other studies we looked at and for the ECS values too (which go from 2ºC to 2.9ºC). The net effect is to bring these studies directly into line with constraints from other methodologies.
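A back-of-the-envelope way to read the TCR numbers, treating the whole adjustment as a single net historical efficacy $E$ applied to the total forcing (a simplification; the actual calculation adjusts each forcing separately and propagates the uncertainties):

```latex
\mathrm{TCR} = \frac{F_{2\times}\,\Delta T}{\Delta F},
\qquad
\mathrm{TCR}_{\mathrm{adj}} = \frac{F_{2\times}\,\Delta T}{E\,\Delta F} = \frac{\mathrm{TCR}}{E}
\quad\Longrightarrow\quad
E \approx \frac{1.3\,^{\circ}\mathrm{C}}{1.8\,^{\circ}\mathrm{C}} \approx 0.72
```

On this rough reading, the historical mix of forcings has behaved as if it were only about 70% as effective as the same nominal forcing from CO2 alone.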

And what about IPCC?

One of the key principles of the IPCC process is that it can only assess the published literature. This leads to a bit of an odd scramble at deadline time (which is mostly pointless IMO, but YMMV). But of course it also means IPCC can’t really adjudicate on ’emerging’ issues that haven’t really been resolved in the literature. For things that are just beginning to be talked about and where conflicting results exist, they are left with only one option, which is to simply describe the range of results with some of the caveats. This is (in my view) what occurred in AR5 with respect to the transient constraints on sensitivity and also perhaps on discussions of the ‘hiatus’. Both of these issues have been discussed in the literature since AR5, and I doubt very much that the relevant texts would be anything like as ambiguous if they were to be rewritten now.

In particular, with the publication of Marvel et al (2015) (and also Shindell (2014)), the reason for the outlier results in Otto et al and similar papers has become much clearer. And once those reasons are taken into account, those results no longer look like such outliers – reaffirming the previous consensus and reinforcing the idea that there really is a best estimate for the sensitivity around 3ºC.

One final point. This is not an example of ‘groupthink’ suppressing legitimate debate in estimating sensitivity – rather it’s the result of deeper explorations to examine why a certain type of estimate was an outlier, which had a conclusion that ended up reinforcing the existing, wider, body of knowledge. Surprising as it may seem to some people, this is actually the normal course of affairs in science. Mainstream conclusions are hard to shift because there is a lot of prior work that supports them, and single studies (or as here, a group of similar studies) have their work cut out to change things on their own. As with supposedly faster-than-light neutrinos, most anomalies eventually get resolved. But not necessarily on the IPCC timetable.

References

- K. Marvel, G.A. Schmidt, R.L. Miller, and L.S. Nazarenko, "Implications for climate sensitivity from the response to individual forcings", Nature Climate Change, vol. 6, pp. 386-389, 2015. http://dx.doi.org/10.1038/nclimate2888

- A. Otto, F.E.L. Otto, O. Boucher, J. Church, G. Hegerl, P.M. Forster, N.P. Gillett, J. Gregory, G.C. Johnson, R. Knutti, N. Lewis, U. Lohmann, J. Marotzke, G. Myhre, D. Shindell, B. Stevens, and M.R. Allen, "Energy budget constraints on climate response", Nature Geoscience, vol. 6, pp. 415-416, 2013. http://dx.doi.org/10.1038/ngeo1836

- M. Aldrin, M. Holden, P. Guttorp, R.B. Skeie, G. Myhre, and T.K. Berntsen, "Bayesian estimation of climate sensitivity based on a simple climate model fitted to observations of hemispheric temperatures and global ocean heat content", Environmetrics, vol. 23, pp. 253-271, 2012. http://dx.doi.org/10.1002/env.2140

- D.T. Shindell, "Inhomogeneous forcing and transient climate sensitivity", Nature Climate Change, vol. 4, pp. 274-277, 2014. http://dx.doi.org/10.1038/nclimate2136

The implication being that the geographical separation of most of the forcings (and of CO2 to an extent, at least low in the atmosphere near cities) would allow one to tease out a better estimate for TCR, or in other words to define a local/regional TCR.

I would very much like to see your time-series data, especially for aerosols.

[Response: Here – gavin]

Gavin,

Thanks for the article.

What about the notion that, in the real world, the forcings don’t act in isolation, and therefore the spatial (and temporal) distribution is more homogeneous (when the forcings are taken together) than in the isolated model runs? This increased homogeneity, then, may alter the “how quickly the land and ocean temperatures respond and make a different to the projection of the forcing onto the ocean, and hence the ocean heat content change” and return the real-world, combination-of-forcings efficacy closer to that of CO2?

Thanks for your thoughts,

-Chip

[Response: That’s a testable proposition – even if it’s unlikely to be true given that these are relatively small perturbations on a complex system where you would expect linearity to hold. And wouldn’t you know it, we have already tested it… – gavin]

Hi Gavin

The low net historical iRF efficacy result appears to be dominated by very strong cooling aerosol efficacy. However, capturing the full aerosol-cloud forcing requires an interactive troposphere, which presumably is not included in the iRF calculation. Isn’t this result largely a consequence of the iRF setup not reflecting the full aerosol forcing, rather than efficacy? (Though AR5’s aerosol forcing estimate was weighted strongly by satellite studies which only looked at the cloud-albedo effect, plus an ad-hoc positive longwave adjustment, so maybe it makes sense in practice).

For the ERF result the low net historical efficacy result appears to be dominated by low GHG efficacy. Would historical GHG have a substantially different spatial structure than CO2 alone, or are there other factors affecting efficacy here?

The robust low efficacy of Ozone is interesting. Would be good to see how well this is supported across models.

Unless I’m reading the documentation wrong–perhaps I am–this is data from simulations. Are there time series estimates for aerosols available that are from observations? Or at least some kind of accounting-based model?

Thanks for the link to the other Marvel et al., 2015 paper.

Let me summarize my understanding to make sure that it is in-line with your findings and their implications.

Basically, your conclusions are that the combination of historical forcings (from all causes) has resulted in less of a rise in the global average surface temperature than would have occurred had the change in total forcing been due to CO2 alone. Do I have this right?

[Response: Yes. – gavin]

As a check of this, one could compare the climate model simulations of temperature change using the historical forcing runs with the temperature change produced by the same models under CO2-only forcing runs *at times of equivalent total forcing change*. Presumably, the temperature rise would be greater in the latter set of runs. Is this also correct?

[Response: Yes. And yes – this is effectively what is done in comparing the actual TCR (which comes from a 1% increasing CO2 run). – gavin]

Thanks for bearing with me!

Should we not be unrealising a lot more radiative imbalance by creating energy moving surface heat to the ocean abyss through heat engines? In part this is a conversion of global warming to global energy and the relocation of the remaining heat makes minimal impact on the temperature of the massive ocean deep.

What about the feedbacks that are not normally well represented by ECS and normally fall into the Earth System Climate Sensitivity, such as Arctic sea ice cover, which now has trends over decades closer to what was seen over centuries in paleoclimate:

http://i713.photobucket.com/albums/ww133/Sane_Person/Arctic%20Meltdown/Sea_Ice_models_v_reality-2012.jpg

[Response: Sea ice change is specifically included in calculations of ECS. It isn’t an additional feedback. – gavin]

>interactions between forcing agents.

Any speculation on the likelihood of other unexpected interactions emerging, either among known chemical compounds or novel ones?

We know that new persistent organic chemicals continue to be invented and produced.

Chlorofluorocarbons were a surprise, and if industry had chosen to produce analogous bromine compounds instead we’d have been toast before we identified the problem (see Crutzen’s Nobel speech)

http://www.nobelprize.org/nobel_prizes/chemistry/laureates/1995/crutzen-lecture.pdf

How’s that surveillance coming? Anyone publishing?

This might be much the same question Chip Knappenberger asked, but in a different form.

“for Otto et al (2013), the estimated TCR without taking account of the efficacies is 1.3ºC (which is on the low side of other estimates), but with this effect accounted for, it is closer to 1.8ºC.”

Does this mean that in Figure 1 the ratio of the slope of the green line to the slope of the purple line is 1.8/1.3?

[Response: Almost. The correction is to the TCR slope which is CO2 only (and the GHG slope is not quite the same). Close though. – gavin]

Thanks for the post,

I must be confused, but why do the volcanic-only forcings (red dots) hover around a positive value in the first graph? Even the negative values don’t seem negative enough, even after decadal averaging. I was initially wondering how well volcanic periods would fall cleanly on a regression line if the spatial structure of the forcing was that relevant in setting the efficacy factor, since some events have been expressed differently (e.g., Pinatubo pretty symmetric, El Chichon in the North, Agung in the South).

How well known is the assumption that climate sensitivity is a constant? This would accurately explain the temperature response in a closed, controlled system, but can we say the same in an open and chaotic system, such as the Earth’s atmosphere? Obviously, over a rather large range (0-100% CO2) it would not hold. But over how wide a range can we assume a constant response?

In the light of the Comment/Question/Answer above (re-quoted below), can you please advise what the ‘adjusted’ RF atmospheric CO2 values should now read for RCP scenarios 2.5, 4.5, 6.0 & 8.5?

Requote . . . . “for Otto et al (2013), the estimated TCR without taking account of the efficacies is 1.3ºC (which is on the low side of other estimates), but with this effect accounted for, it is closer to 1.8ºC.”

Does this mean that in Figure 1 the ratio of the slope of the green line to the slope of the purple line is 1.8/1.3?

[Response: Almost. The correction is to the TCR slope which is CO2 only (and the GHG slope is not quite the same). Close though. – gavin]

Question for Gavin or Kate (or anyone),

Based on NASA’s CMIP5 forcing model, the year 2012 has a greenhouse forcing of 3.54 W/m2, ozone 0.45 W/m2, atmospheric aerosols -0.89 W/m2 (combined direct/indirect), and land use -0.19 W/m2, all based on iRF. If I apply the adjustments specified in the paper based on TCR to make them equivalent to GHGs, I get revised forcings of 3.54, 0.24, -1.19, and -0.68 W/m2 respectively. Putting it all together results in a forcing of 1.91 W/m2 out of a total of 3.78 W/m2 for GHGs and ozone. (I’ve ignored volcanoes and solar for simplicity. The ratio would be 1.79 W/m2 out of 3.66 W/m2 with the aerosol adjustments in Schmidt 2014.)

Is my understanding correct that aerosols and land use then offset half of the GHG+Oz forcing? So instead of 0.9C of warming (relative to pre-industrial), we would have closer to 1.8C without them?

The AR5 chart (measuring human contribution to warming from 1950-2010) shows a much smaller aerosol offset, ie. +0.9C of warming without other anthro instead of +0.7C of warming with. The IPCC chart appears to assume no adjustments to the iRF.

I would appreciate comment to see if I’m understanding the implications here clearly. Goals of limiting warming to 1.5C or 2.0C aren’t going to be easy without aerosol offsets (which could dissipate quickly as we reduce CO2 pollution).

Thank you very much for sharing the study publicly!
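The arithmetic in this comment can be checked with a few lines of Python. The inputs are just the iRF values and adjusted values quoted in the comment; the implied efficacies are back-calculated ratios, and the last step assumes, purely for illustration, that warming scales linearly with net effective forcing.

```python
# iRF values for 2012 as quoted in the comment (W/m^2).
irf = {"ghg": 3.54, "ozone": 0.45, "aerosols": -0.89, "land_use": -0.19}
# The commenter's efficacy-adjusted values (W/m^2).
adjusted = {"ghg": 3.54, "ozone": 0.24, "aerosols": -1.19, "land_use": -0.68}

# Efficacies implied by the two sets of numbers (adjusted / unadjusted).
implied_efficacy = {name: adjusted[name] / irf[name] for name in irf}

warming_terms = adjusted["ghg"] + adjusted["ozone"]  # GHG + ozone after adjustment
net = sum(adjusted.values())                         # all four terms together
offset_fraction = 1.0 - net / warming_terms          # share cancelled by aerosols + land use

# Illustrative only: assumes warming scales linearly with net effective forcing.
observed_warming = 0.9  # K, the figure used in the comment
warming_without_offsets = observed_warming * warming_terms / net

for name, e in implied_efficacy.items():
    print(f"{name:9s} implied efficacy ~ {e:.2f}")
print(f"net {net:.2f} of {warming_terms:.2f} W/m^2 ({offset_fraction:.0%} offset)")
print(f"warming without offsets ~ {warming_without_offsets:.1f} K")
```

With these inputs the offset comes out at just under half (about 49%), and the linearly scaled warming at about 1.8 K, consistent with the figures in the comment.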

Re Dan H. #12 asked “How well known is the assumption that climate sensitivity is a constant?”

A quote from Gavin (https://www.realclimate.org/index.php/archives/2013/01/on-sensitivity-part-i/ )

I have no estimates, but with all the inertia in the system (ocean and air), and with the changes taking place in these systems (transient constraints), a transient response will likely be at the higher end of the estimates, because, for instance, the capability of natural systems to sequester carbon will be impaired until the system settles at a new climate equilibrium state.

I suppose this is close to OT, but it’s well worth checking out Dr. Marvel’s blog. Her writing is crisp and funny, and despite the wisecracking has some rather thoughtful things to say.

For instance:

http://marvelclimate.blogspot.co.uk/?m=1

Nic Lewis has a post at WUWT claiming the conclusions of Marvel et al “have no credibility” (ironic that he posts it at WUWT). The main argument seems to be the usual WUWT “models are wrong” garbage, but there are some more technical points around efficacy values that are harder for non-experts to evaluate.

http://wattsupwiththat.com/2016/01/10/appraising-marvel-et-al-implications-of-forcing-efficacies-for-climate-sensitivity-estimates/

anyone care to comment?

[Response: It’s mostly confused, but we’ll have some sensitivity tests to address a couple of the technical points next week. – gavin]

What’s an “impulse response function” in a climate model?

http://onlinelibrary.wiley.com/doi/10.1002/2014GB005074/epdf

An impulse response function for the “long tail” of excess atmospheric CO2 in an Earth system model

Re #17:

I would also be interested to hear more about the regression model used for the iRF efficacy estimates, which potentially creates the seemingly unphysical situation where zero forcing causes a non-zero effect on temperature.

[Response: You should note that there are lags in the system that extend beyond a decade. Thus expecting each decade’s temperature and forcing to line up perfectly is too optimistic. Instead, one expects temperatures to lag forcing by some amount and this leads to a small shift in the temperatures w.r.t. the forcing. An alternate way of doing the calculation (using the last decade minus first decade) is effectively equivalent to forcing the regression through the origin and was tested in the paper – it does not significantly impact the results. – gavin]
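To illustrate the point about lags, here is a toy Python sketch in which decadal temperature relaxes towards a response proportional to the current forcing, closing only part of the gap each decade. The relaxation model and its parameters are assumptions chosen for illustration, not properties of the GISS model. With a steadily rising forcing, the free-intercept regression picks up a small non-zero intercept, while the slopes from that fit, from a fit forced through the origin, and from the last-minus-first-decade difference remain close to one another.

```python
LAMBDA = 0.5  # hypothetical response the temperature relaxes towards (K per W/m^2)
RELAX = 0.6   # hypothetical fraction of the remaining gap closed each decade


def lagged_temperatures(forcing, lam=LAMBDA, relax=RELAX):
    """Decadal temperatures that lag the forcing; the first decade is the baseline (0)."""
    temps = [0.0]
    for f in forcing[1:]:
        prev = temps[-1]
        temps.append(prev + relax * (lam * f - prev))
    return temps


def ols(x, y):
    """Least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return slope, my - slope * mx


def origin_slope(x, y):
    """Least-squares slope with the line forced through the origin."""
    return sum(a * b for a, b in zip(x, y)) / sum(a * a for a in x)


def endpoint_slope(x, y):
    """Last decade minus first decade."""
    return (y[-1] - y[0]) / (x[-1] - x[0])


if __name__ == "__main__":
    forcing = [0.2 * k for k in range(10)]  # hypothetical steadily rising forcing (W/m^2)
    temps = lagged_temperatures(forcing)
    slope, intercept = ols(forcing, temps)
    print(f"free intercept: slope {slope:.3f}, intercept {intercept:.3f}")
    print(f"through origin: slope {origin_slope(forcing, temps):.3f}")
    print(f"endpoints:      slope {endpoint_slope(forcing, temps):.3f}")
```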

Chris Colose, you asked “why do the volcanic-only forcings (red dots) hover around a positive value in the first graph?”. The explanation I give in my technical analysis of Marvel et al. at Climate Audit is that in Figure 1 the iRF for volcanoes appears to have been shifted by ~+0.29 W/m2 from its data values, available at http://data.giss.nasa.gov/modelforce/Fi_Miller_et_al14.txt. Why not check it out and see whether my analysis is confused, as has been suggested here?

[Response: You are confused because you are using a single year baseline, when the data are being processed in decadal means. Thus the 19th C baseline is 1850-1859, not 1850. We could have been clearer in the paper that this was the case, but the jumping to conclusions you are doing does not seem justified. – gavin]

Nope. Look at the purple line in Figure 1; ten decadal averages, where the forcing file contains 163 years of values. The mean of the first 63 volcanic values is ~-0.29 W/m2. Nothing’s been shifted; you’re just looking at a different timespan.

[Response: Yup. The baseline is 1850-1859 – the first decade. To be fair, that wasn’t explicitly stated in the paper. – gavin]
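The baseline question in this exchange is easy to illustrate. In the Python sketch below (a made-up annual series with a short eruption-like dip; nothing here is taken from the GISS forcing file), expressing decadal means as anomalies from the first year rather than from the first decade shifts every point by the same constant. That moves the cloud of points along the forcing axis without changing any regression slope.

```python
def decadal_means(values, width=10):
    """Non-overlapping means over consecutive blocks of `width` values."""
    return [sum(values[i:i + width]) / width for i in range(0, len(values) - width + 1, width)]


def anomalies(values, baseline):
    """Subtract a single baseline value from every element."""
    return [v - baseline for v in values]


if __name__ == "__main__":
    # Hypothetical annual forcing: near zero, with an eruption-like dip in years 3-4.
    annual = [0.0] * 30
    annual[3], annual[4] = -3.0, -1.0
    means = decadal_means(annual)
    print("vs first year:  ", anomalies(means, annual[0]))
    print("vs first decade:", anomalies(means, means[0]))
```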

Gavin, you write: “You are confused because you are using a single year baseline, when the data are being processed in decadal means. Thus the 19th C baseline is 1850-1859, not 1850. We could have been clearer in the paper that this was the case, but the jumping to conclusions you are doing does not seem justified.”

Thank you for providing this information. To clarify, does this imply that 1850-59 was used as a baseline for all the delta T and delta Q values as well as for iRF?

Is the full paper available for downloading? The Supporting Information is available for downloading from NatureClimateChange, but not the main paper. I’d like to print it out for study.

#23 Matthew,

The full paper is available for free, but it makes a difference how you access it. If you click the link in the footnotes to this blog post, it will show the full paper (after a brief pause). If you accessed it through nature.com, you will encounter a paywall.

#24 Todd Friesen, thank you, but that link will not let me download.

Todd Friesen says:

Question for Gavin or Kate (or anyone),

“Is my understanding correct that aerosols and land use then offset half of the GHG+Oz forcing? So instead of 0.9C of warming (relative to pre-industrial), we would have closer to 1.8C without them?

The AR5 chart (measuring human contribution to warming from 1950-2010) shows a much smaller aerosol offset, ie. +0.9C of warming without other anthro instead of +0.7C of warming with. The IPCC chart appears to assume no adjustments to the iRF.”

I wonder if you have got an answer to your question. It would be interesting to know if you have misunderstood.

Todd

I got an answer from Nic Lewis:

“What Todd Friesen wrote seems about right. I get a slightly lower figure for iRF GHG “effective” forcing (forcing x efficacy), so that over half the anthropogenic GHG + ozone forcing has been cancelled out by negative aerosol and land use change forcing.

As well as effective aerosol forcing of -1.2 W/m2 being much stronger than the IPCC AR5 ERF of ~-0.7 W/m2 over 1850-2000, the land use change effective forcing of -0.7 W/m2, arising from a very high efficacy of 3.89, seems absurd to me. It is partly due to the inclusion of LU run 1, but mainly due to the unphysical regression method used. If the regression is forced to go through the origin, LU efficacy is much closer to one – or below 1 if run 1 is excluded. In AR5, the IPCC scientists thought it as likely as not that land use change actually caused warming rather than cooling.”

[Response: Dropping outliers just because they don’t agree with your preconceived ideas is a classical error in statistics – it’s much better to use the spread as a measure of the uncertainty. – Gavin]

Gavin,

Thanks for this post. I agree with your comments about the IPCC process and the relatively poor IPCC AR5 discussion on ECS and 1998-2012 global mean temperature variations (I don’t use the word “h*****”).

I have published a number of studies on the value of using some simple metrics of the spatial patterns of global temperature change, rather than just global mean temperature change. Given your comment that the largest differences between the forcings are between land and ocean or between the Northern and Southern Hemispheres, have you looked at the land-ocean temperature difference or the Northern-Southern Hemisphere temperature difference? For GHG forcing, both scale linearly with ECS in the same way as global mean temperature, but they do not for aerosol forcing.

Drost, F., and D. J. Karoly (2012) Evaluating global climate responses to different forcings using simple indices, Geophys. Res. Lett., 39, L16701, 5pp, doi:10.1029/2012GL052667.

Gavin,

I would be very grateful if you could respond to the following three questions.

1) Do you have available CO2 benchmarking data for GISS-E2-R, specifically, estimates of Fi, Fa and ERF for a range of concentrations? If not, more specifically, are you going to support or modify the Fi value of 4.1 which appears in Marvel et al?

2) Can you please advise if and how AEI forcing was included in Miller’s “All forcing together” Fi values for the 20th century historic run?

3) Can you confirm that the temperature and net flux data for GISS-E2-R, available via the CMIP5 portals and KNMI Climate Explorer, are based on a model corrected to fix the ocean heat transport problem which you identified in the Russell ocean model in your 2014 paper?

Many Thanks

Gavin,

You wrote:-

“Dropping outliers just because they don’t agree with your preconceived ideas is a classical error in statistics – it’s much better to use the spread as a measure of the uncertainty. – Gavin”

Another classical error in statistics is to attribute the error associated with one property to the wrong variable.

Work by the KNMI (Sybren Drijfhout et al 2015, http://www.pnas.org/content/112/43/E5777.abstract) confirms that GISS-E2-R has the capacity for abrupt climate change in the form of (inter alia) the local collapse of convection in the North Atlantic.

[Response: Sure. We have many examples in the simulations – lots of multidecadal variability there. As in the real world. – gavin]

In this instance, if the results of the “rogue run” in the single-forcing LU cases are due to the abrupt collapse of N Atlantic convection, as seems increasingly likely from the data, then the dramatically different temperature response in the rogue run has nothing whatsoever to do with the uncertainty in transient efficacy of LU forcing. The inclusion of the run leads quite simply to an erroneously inflated calculation of the mean transient efficacy for LU, and a misleading confounding of the uncertainty associated with the GCM’s internal mechanics with the uncertainty in LU transient efficacy.

[Response: No. This is within the realm of simulated internal variability (and the change in the run you are focused on is not a ‘collapse’). Any finite ensemble can only sample the potential for internal variability and the ultimate ensemble mean response has to integrate over all forms of internal variability. Sometimes you will get things that only occur infrequently, and so they won’t be in every 5-member ensemble – but this is true for all sorts of variations, not just changes in the Labrador Sea. Your transparent desire to ignore one run that is inconvenient for you is blinding you to the partiality of your special pleading. You haven’t even looked to see whether similar variations occur in the other ~200 runs in this experiment. – gavin]

Ultimately, the Marvel et al paper seeks to argue that sensitivities estimated from actual observational data are biased low on the grounds that GISS-E2-R over the historic period is responding to an overall low weighted average forcing efficacy. It then seeks to extend the conclusions drawn from the model to real-world observational studies.

[Response: Yes. I am aware. – gavin]

Since we know from the real observational data that there was not a collapse of N Atlantic convection, then quite apart from other methodological questions, the inclusion of this run for the LU calculation is impossible to justify, and, on its own, is sufficiently large in its impact to bring the study results into question.

[Response: You misunderstand completely the nature of what is being done. All single forcing runs are counter-factual and so can’t be compared to observations (only the historical all-forcing runs are relevant to that). – gavin]

Applying the same logic, any of the 20th Century History runs which exhibited similar abrupt shifts (Southern Ocean sea-ice, Tibetan plateau snow melt and N Atlantic convection) which were not observed in the real-world, should have also been excluded from the ensemble mean for Marvel et al to have any hope of credibly extending inferences to real-world observational data – even if we suspend disbelief with respect to other problems associated with data, methods and relevance.

[Response: Nonsense. The weird thing is that all this special pleading is totally irrelevant to the basic result, which is that the historical runs don’t have the same forcing/response pattern as the response to CO2 alone. The single forcing run analysis is to explain that fact and it does a pretty good job. If you think it’s because of something else, you’d be better off investigating it yourself (all the model output is on the CMIP5 portal). – gavin]