After a record-breaking 2010 in terms of surface melt area in Greenland (Tedesco et al., 2011), numbers from 2011 have been eagerly awaited. Marco Tedesco and his group have now just reported their results. This is unrelated to the other Greenland meltdown this week that occurred at the launch of the new Times Atlas.

Analysis of the surface mass balance via regional modelling demonstrates that there has been an increasing imbalance between snowfall and runoff over recent years, leading to a lowering of ice elevation, even in the absence of dynamical ice effects (which are also occurring, mostly near the ice sheet edge).

Figure 2. Regional model-based estimates of snowfall (orange), surface melt and runoff (yellow) and the net accumulation (Gt/yr) (blue) since 1958.

The estimated 2010 or 2011 surface mass imbalance (~300 Gt/yr) is comparable to the GRACE estimates of the total mass loss (which includes ice loss via dynamic effects such as the speeding up of outlet glaciers) of 248 ± 43 Gt/yr for the years 2005-2009 (Chen et al., 2011). Data for 2010 and 2011 will thus be interesting to see.

While the accelerating mass loss from Greenland is of course a concern, the large exaggeration of that loss rate by Harper Collins in the press release for the 2011 edition of the Times Atlas was completely wrong. The publishers have issued a ‘clarification’, which doesn’t really clarify much (Update (Sep 22, 2011): The clarification has been clarified from the original statement). As discussed on the CRYOLIST listserv, the error most likely arose from confusion over the definitions of what is the permanent ice sheet and what are glaciers, with the ‘glaciers’ being either dropped from the Atlas entirely or colored brown (instead of white). (No-one that I have seen has posted the legend from the Atlas that gives the definition of the various shadings, though in the 1994 edition I have, glaciers are (unsurprisingly) white, not brown.)

The Times is still claiming that it stands by its maps (Update: The new clarification no longer makes this claim). This is quite silly, and presumably reflects the fact that it would be very expensive to reprint all the atlases that may have already been printed. In case this isn’t already clear, there is simply no measure — neither thickness nor areal extent — by which Greenland can be said to have lost 15 % of its ice. As a letter written by a group of scientists from the Scott Polar Research Institute says, “Recent satellite images of Greenland make it clear that there are in fact still numerous glaciers and permanent ice cover where the new Times Atlas shows ice-free conditions and the emergence of new lands”.

The push back from glaciologists on this issue was a good example of how the science community can organise and provide corrections of high-profile mis-statements by non-scientists – by connecting directly with journalists, providing easy access to the real data, and tracking down the source of the confusion. In so doing it also provides an opportunity to give the real story – that change in Greenland and the rest of the Arctic is fast and accelerating.

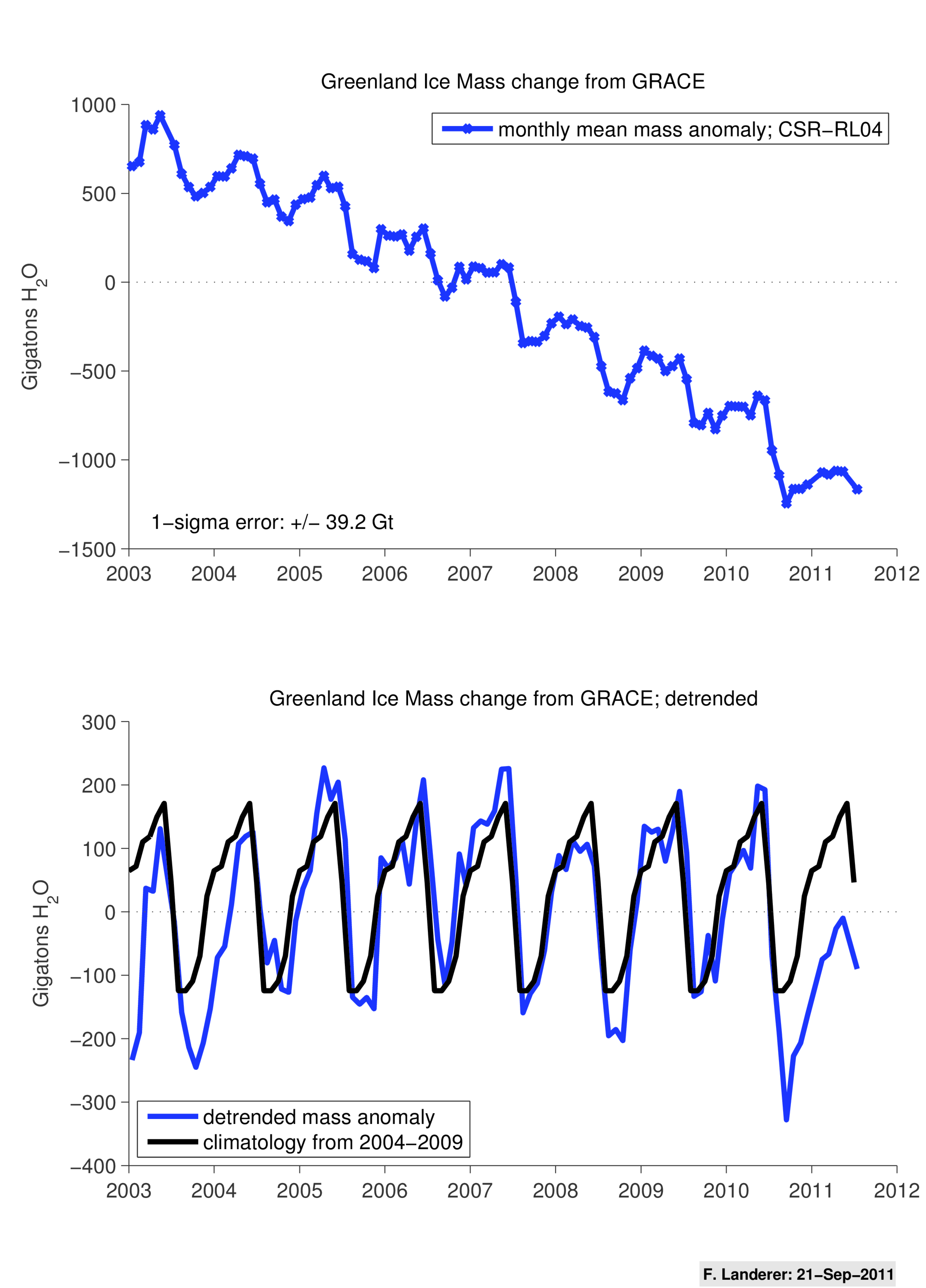

Update: Here is a figure showing the preliminary GRACE results for Greenland for 2010 and the beginning of 2011. The anomaly in 2010 was huge, indicating perhaps a total loss of about 500 Gt/yr:

Figure 3. The upper panel shows estimated monthly mass anomalies over Greenland from 01-2003 through 07-2011 as in Velicogna (2009); the lower panel shows the detrended monthly data (blue), plus a monthly climatology from 2004 through 2009, highlighting the exceptional mass loss in 2010 (~500 Gt-H2O).
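For readers curious how a detrended series and a monthly climatology of the kind shown in Figure 3 can be produced, here is a minimal Python sketch. It runs on a synthetic series (an assumed linear trend plus an assumed seasonal cycle), not on GRACE data, and the function names are hypothetical. The processing actually used for the figure may well differ.

```python
import math

def detrend(values):
    """Subtract the ordinary least-squares straight line from a monthly series."""
    n = len(values)
    t_mean = (n - 1) / 2
    v_mean = sum(values) / n
    cov = sum((t - t_mean) * (v - v_mean) for t, v in enumerate(values))
    var = sum((t - t_mean) ** 2 for t in range(n))
    slope = cov / var
    return [v - (v_mean + slope * (t - t_mean)) for t, v in enumerate(values)]

def monthly_climatology(residuals, first_year, base_start, base_end):
    """Mean residual per calendar month over the baseline years (inclusive).

    Assumes the series starts in January of first_year.
    """
    sums, counts = [0.0] * 12, [0] * 12
    for i, r in enumerate(residuals):
        year, month = first_year + i // 12, i % 12
        if base_start <= year <= base_end:
            sums[month] += r
            counts[month] += 1
    return [s / c for s, c in zip(sums, counts)]

# Synthetic stand-in (NOT real GRACE data): an assumed trend of -20 Gt/month
# plus an assumed 100 Gt seasonal cycle, January 2003 through December 2010.
series = [-20.0 * i + 100.0 * math.cos(2 * math.pi * (i % 12) / 12)
          for i in range(96)]
residuals = detrend(series)
climatology = monthly_climatology(residuals, 2003, 2004, 2009)

# Departure of each 2010 month from the 2004-2009 climatology.
anomaly_2010 = [residuals[84 + m] - climatology[m] for m in range(12)]
print([round(a, 1) for a in anomaly_2010])
```

In this synthetic case 2010 is not exceptional by construction, so the departures stay small; an unusual year would show up as a large departure from the climatology.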

Further Update: A more detailed analysis from the Scott Polar Research Institute in the UK demonstrates quite clearly that the Times map is wrong, and inconsistent with all other ice sheets in the Atlas:

Figure 4. The new Times Atlas map (left) together with a mosaic of two satellite images taken in August 2011 (right). Also on the right are contours of ice thickness shown every 500m. The blue line is the 500m ice thickness contour which is the same as the outline on the Times Map. The red line is the 0m ice thickness contour which would be a better representation of the ice sheet than the blue line. The Times Map excludes all the ice between the red and blue lines. Furthermore, it excludes all the ice caps and glaciers that are not part of the main ice sheet, visible on the satellite image but not shown on the map. (SPRI).

References

- M. Tedesco, X. Fettweis, M.R. van den Broeke, R.S.W. van de Wal, C.J.P.P. Smeets, W.J. van de Berg, M.C. Serreze, and J.E. Box, "The role of albedo and accumulation in the 2010 melting record in Greenland", Environmental Research Letters, vol. 6, pp. 014005, 2011. http://dx.doi.org/10.1088/1748-9326/6/1/014005

- J.L. Chen, C.R. Wilson, and B.D. Tapley, "Interannual variability of Greenland ice losses from satellite gravimetry", Journal of Geophysical Research, vol. 116, 2011. http://dx.doi.org/10.1029/2010JB007789

- I. Velicogna, "Increasing rates of ice mass loss from the Greenland and Antarctic ice sheets revealed by GRACE", Geophysical Research Letters, vol. 36, 2009. http://dx.doi.org/10.1029/2009GL040222

@ gavin re: 3-4 degrees & climate sensitivity:

“This kind of forecast doesn’t depend too much on the models at all – it is mainly related to the climate sensitivity which can be constrained independently of the models (i.e. via paleo-climate data),…”

A question on the subject of climate sensitivity, though this probably has come up here at some time in the past.

The consensus seems to be that climate sensitivity is in the vicinity of 3.0 K per doubling of CO2, of which ~1 K is the direct radiative forcing impact of the CO2, and the remainder is the result of feedback effects which act as a multiplier on that original forcing and are the only real area of uncertainty.
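The arithmetic behind that "multiplier" can be sketched with the usual linear feedback relation, ΔT = ΔT0 / (1 − f). This relation is a standard simplification and is an assumption here, not something stated in the comment; the function names are hypothetical. The sketch uses the 1 K and 3 K figures quoted above and shows how the total response shifts if the net feedback factor were somewhat weaker or stronger.

```python
def feedback_factor(no_feedback_k, total_k):
    """Net feedback factor f implied by total = no_feedback / (1 - f)."""
    return 1.0 - no_feedback_k / total_k

def equilibrium_warming(no_feedback_k, f):
    """Warming per CO2 doubling for a given net feedback factor f < 1."""
    if f >= 1.0:
        raise ValueError("f >= 1 has no finite equilibrium in this linear picture")
    return no_feedback_k / (1.0 - f)

# Figures quoted in the comment: ~1 K direct response, ~3 K total.
f = feedback_factor(1.0, 3.0)

# Illustrative only: scale the net feedback by -10%, 0% and +10%.
for scale in (0.9, 1.0, 1.1):
    warming = equilibrium_warming(1.0, scale * f)
    print(f"f = {scale * f:.3f} -> {warming:.2f} K per doubling")
```

Because the response goes as 1 / (1 − f), a given change in f moves the sensitivity more when f is already large, which is why the state dependence of the feedbacks matters for the question being asked.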

What I question is how this 3.0 K value can be applied as a constant across all climatic conditions, when the feedback mechanisms themselves are not constant.

For example, ice-albedo feedback will be much stronger during a glacial period when the leading edge of ice sheets approaches mid-latitudes, than during an interglacial period, such as the present, when ice-sheets have retreated to higher latitudes, where the transitional zone covers less surface area and receives less solar radiation.

Looking to the planet’s past behaviour for guidance, the historical oscillation between two equilibrium states… long glacial periods and relatively shorter interglacial periods (the timing of which appears to be driven by orbital variations) would suggest that net climate feedback is not constant, but is higher in the transitional range (around 2-6 K cooler than present) and lower outside that range (preventing runaway cooling or warming).

Deriving a value for climate sensitivity from one set of conditions (a cooler past) and applying it to a different set of conditions (a warmer future) could lead to overestimates of climate response.

[Response: It’s certainly conceivable that climate sensitivity is a function of base climate and surely is at some level. How large that dependency is remains unclear. But you need to distinguish between estimates of sensitivity derived from comparing older climates to today, and estimates of variability within an overall different base climate. Comparing the LGM or Pliocene to today is the former, looking at the variations during an ice age would be the latter. There have been a couple of papers indicating that sensitivity at the LGM is different to today (Hargreaves – not sure of the year – for instance), but in each case the differences (while clear), are small (around 10 to 20%). – gavin]

But the dominant feedback is negative… Stefan-Boltzmann cooling. That’s why the climate is stable at all on the relevant time scales. Consider that in a regime where radiative transport dominates, your turbulent flow intuitions may not be helpful.

http://www.youtube.com/watch?v=Pb7svelJP7c

I wanted to send you this music and lyrics. Global challenge impossible

Hope link works-otherwise search youtube-owatunes-“There is always hope”

The name of the piece. I hope he is right. It is depressing with the climate.

Ice sheet dynamics are mostly a non-equilibrium process, so thinking in terms of equilibrium could mislead you.

As insolation crosses a threshold value, the planet suddenly flips into an icehouse state when planetary albedo sharply increases due to the rapid growth of a thin ice/snow layer. However, the rate of ice sheet growth is limited not by temperature but by the rate of mass transport from the oceans to the ice. It takes tens of thousands of years for the ice sheet size to reach its equilibrium state with the atmosphere and radiative balance.

It seems that the glaciation cannot be terminated until the ice sheet volume finally reaches icehouse equilibrium, large enough that it becomes unstable to insolation increases, triggering massive releases of freshwater that alter ocean currents and in turn trigger the degassing of CO2 from the deep ocean to the atmosphere, latching in the next interglacial state.

At this glacial termination, the massive release of CO2 from the oceans puts the atmosphere way ahead of the ice sheets, and the ice takes 10,000 years of melting to catch up and reach the relatively brief interglacial equilibrium.

Even now, if we stopped all GHG emissions and held GHGs constant, how many thousands of years would it take for the planet to reach equilibrium as the oceans continued to warm and expand, ice sheets continued to melt, and sea level continued to rise?

Maybe 2/3 of the 80-120kyr ice age cycle is spent in non-equilibrium.

Gavin: “But extrapolating from average loss rates over the last ten years and expecting they will remain constant is guaranteed to be an underestimate.”

I think that’s what you were telling us several years ago about the atmosphere! The ice in my freezer exhibits a delayed response to changes in direction of temperatures. Thus one might expect in the next few years or decade the beginning of about 15 years plus of no significant increase in melt rates. By then we should have a better idea of what comes after that.

Gavin, I understand what the IVP model runs are. I’m assuming that there are subgrid models. The part I don’t understand is what equations are being solved by the BVP runs. Is it standard Reynolds’ averaging or something else? If you can get the average effects of vortices, then you must have a turbulence model that we need to know about. The ones that I’m familiar with totally miss this.

[Response: The IVP or BVP simulations are using basically the same model codes. But note that the main atmospheric vortices are part of the resolved flow, not part of a sub grid scale parameterisation (this isn’t true for ocean models though). The sub grid scale stuff relates to the impact of convection, boundary layer mixing etc. – gavin]

I understand that in principle the vortices are part of the resolved flow. That’s true for aerodynamic flows also. The problem is that the subgrid (turbulence) model can give an answer without any vortices at all even when they are present. The mere fact of solving a BVP erases the most important dynamics and misses even the time averaged behaviour. Most people don’t realize this. I think what I need to see is the actual equations being solved. Is it Reynolds averaging or is it not?

If you have a reference for what the subgrid model is that would be useful.

I can email you the Drela and Fairman report. It is sufficiently interesting to warrant a detour to read it. Despite the reviewers’ reaction, this feature of Reynolds averaging is very poorly understood. I don’t know, maybe in climate modeling this is well known, but I would doubt it.

Gavin,

We are not talking the same language here. When I worked at NCAR in the 1970’s they had mathematicians who made significant contributions such as finding the problem with sound waves in the numerical models.

I think I now know what you mean by BVP and IVP. If it’s what is commonly done in all branches of fluid dynamics, the news is not good. Most CFD codes have time accurate mode and steady state mode. The problem (and it’s a huge one) is that the steady state mode uses relaxation methods that are equivalent to time marching methods but with the pseudo time step determined by stability rather than temporal accuracy. If this is the case, you are numerically forcing stability. This explains why your BVP method can track vortices. The real problem with it is that in interesting cases you NEVER converge to a true steady state solution. You always have some level of fluctuations. Most people are happy if this level is O(10^-2) of the main scales. The real problem is that the BVP for the Navier-Stokes equations has many solutions in a lot of common problems, basically any problem involving separated flow.

With this pseudo time method you have no control over which one you find. And in fact, you are totally unaware of the other solutions. We have used Newton’s method (with an artificial time term) to obtain real (machine precision) solutions to the BVP. In almost all cases of interest, there are many. Because the systems are so large, it’s impossible to determine how many and their stability. You can also see the Darmofal and Krakos paper in the AIAA Journal for a very stark demonstration of how pseudo time methods can give you almost any solution, depending on the time marching method. At the very least, you should try modifying the pseudo time methods and see if there are any changes.
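The distinction being drawn here, that a pseudo-time relaxation and a Newton solve can land on different steady states of the same problem, can be illustrated with a toy scalar equation. This is only a sketch under loud assumptions: the residual x³ − x is a made-up stand-in, not the Navier-Stokes equations and not an example from the paper mentioned, and the function names are hypothetical.

```python
def residual(x):
    """Toy steady-state residual with three roots: -1, 0 and +1."""
    return x ** 3 - x

def residual_slope(x):
    """Derivative of the toy residual."""
    return 3 * x ** 2 - 1

def pseudo_time_march(x, step=0.1, iterations=2000):
    """Relax dx/dtau = -residual(x) with explicit Euler pseudo-time steps."""
    for _ in range(iterations):
        x -= step * residual(x)
    return x

def newton(x, iterations=50):
    """Plain Newton iteration on residual(x) = 0."""
    for _ in range(iterations):
        x -= residual(x) / residual_slope(x)
    return x

# Same starting guesses, different solvers.
for guess in (0.1, -0.1):
    print(guess, pseudo_time_march(guess), newton(guess))
```

In this toy case the relaxation only ever reaches the two roots that are stable under the pseudo-time dynamics, and which one depends on the starting guess, while Newton started from the same guesses converges to the third root, which the relaxation never finds.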

I wish the news were better, but I think you need to look carefully at the CFD literature (not the usual stuff where the results always agree with test data) but the results of the groups trying to work with Newton’s method.

Best Regards

[Response: GCMs do not solve for steady states directly. All calculations are explicitly time-stepped whether you are looking for the trajectory from a specific initial condition or if you are looking at the dependence of the flow statistics on a change in boundary conditions. Indeed, there are no real ‘steady states’ in any case. At best you have quasi-stable dynamic equilibria – which are variously sensitive to whatever forcing you are looking at. For the class of model we are looking at, there is no evidence of a multiplicity of potential states. – gavin]

@ Gavin:

“It’s certainly conceivable that climate sensitivity is a function of base climate and surely is at some level. How large that dependency is unclear.”

So, ultimately there’s no underlying justification for treating climate sensitivity as a constant across all climate states, except that it makes for a convenient “rule-of-thumb” that can be widely understood, even if it’s not strictly accurate.

A critical question then arises… is climate sensitivity an input to models, or an output (driven by the sum total of all the feedback mechanisms which are themselves modeled in detail)? I would imagine it’s the latter, but if so, are other input assumptions then adjusted within their plausible limits, so that the overall model’s climate sensitivity approximately conforms with the 3.0K “known” constant?

[Response: Climate sensitivity is an emergent property of the entire model code – and each component (however you break it down) is a function of many different aspects of the specific parameterisations. It is not a priori estimable from knowledge of the components taken individually (at least not in any obvious way). Thus it is almost impossible to tune a model for a specific sensitivity except perhaps by empirical ‘trial and error’, so in practice this is never done because of the huge amount of computation that would be required. – gavin]

Gavin, I accept your word that there is “no evidence for multiple states.” The problem, and I hope you think about this, is that if you are not looking for them you will not see them. And your current states could be “wrong.” Please if you expect me to take climate modeling seriously, you should look at the paper in AIAA Journal, autumn 2010 by Krakos and Darmofal. You know that CFDers said 15 years ago exactly what you are saying. They were deluding themselves and it ultimately cost the field of CFD credibility with fund givers.

In any case, I appreciate your time in explaining things to me.

[Response: Lots of people have been looking for them – and indeed, in simpler models they have found them (indeed, 15 years ago I wrote a paper doing just that), but it is an empirical observation that the climate sensitivity or the trend over the 20th century or the trend to 2100 in coupled GCMs is not especially sensitive to initial conditions. This is something that might change as more model feedbacks get added to the system (perhaps, dynamic ice sheets) and might be different in different climate regimes, but as far as anyone can tell, it is not a big issue for models of present day climate. This is not because of some dogma that states this is impossible, but simply a result of people looking for it extensively and not finding it. The main issues for climate modelling are far more the biases and systematic offsets that exist when comparing the present day climatology. – gavin]

Gavin, There is another thing that I will dangle here. If indeed there are multiple states (as we have found for all interesting CFD problems) then you can make a breakthrough by showing this fact. It could usher in a new era of understanding of the climate system. You don’t know me, but if you read the paper by Darmofal and Krakos, I think you will see that there is something here of great scientific interest. Now that I understand the models better, I realize that my initial questions have turned into serious issues.

At least in CFD, the problems we look at are designed by engineers to not have the pathologies, but yet they are there. The climate system is much more complex and blows up under time accurate simulation. CFD problems are generally at least bounded under time accurate simulation. This is a prima facie case that climate models are much more likely to have these pathologies than the CFD problems I’m used to seeing.

[Response: Where do you get the idea that climate models blow up under time accurate simulation? This is not the case at all. You can run the models for thousands of model years completely stably. – gavin]

No, no, no. If you run them in time accurate mode, the IVP, then they blow up. With industrial CFD, the systems are bounded if we run in IVP mode.

If multiple states exist in simpler models, they must surely exist in the more complex ones. People saw this in the 1970’s with boundary layer models and thought they had got rid of it by going to the Navier Stokes equations. They were wrong. You remember Lorenz’s work on a simple system that started a whole new field of nonlinear science.

In any case, I guess the best I can do is an appeal to scientific curiosity and a hope that you will look at the literature. You seem to be reluctant to do that. I understand why, but had higher hopes.

Best

[Response: I read a wide variety of literature and I will look at the papers you mention, but I have no idea why you think that models that I work with every day have behaviours that I have never seen. Climate or weather models do not blow up when run as an IVP simulation. They just don’t. So either we have different definitions of what ‘blow up’, ‘initial value simulation’ or ‘time-accurate’ means, or you are getting other information from an untrustworthy source. So to be clear, if a climate or weather model is initialised with an observed state (approximated of course depending on data availability), the specific path of the weather – storm tracks, low pressure systems, tropical variability – will be tracked for a short period (a few days to a week to a few months – depending on quality of the model, and the specific metric that is being tracked – the longer timescales being associated with specific ocean variables). Thus the RMS errors increase quickly but then saturate – they are not unbounded and the solutions do not blow up or do anything unphysical. The statistical description of the state (mean temperatures, average storm tracks, ocean gyres etc.) is stable and provides a good approximation to the same metrics in the real world. If you think otherwise, you need to provide an actual reference that relates specifically to these kinds of models. – gavin]
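The "errors increase quickly but then saturate" behaviour can be reproduced in miniature with the classic three-variable Lorenz (1963) system. To be clear about the assumptions: this is a toy chaotic system, not a GCM or weather model, and the integrator, parameter values, starting point and perturbation size below are all illustrative choices. Two runs that differ by one part in 10^8 in a single variable diverge, but the separation stops growing once it reaches the size of the attractor.

```python
import math

def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz (1963) equations."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

def rk4_step(state, dt):
    """One classical fourth-order Runge-Kutta step."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))
    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))

def separation_history(perturbation=1e-8, dt=0.01, steps=5000):
    """Distance between a reference run and a slightly perturbed run."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + perturbation, 1.0, 1.0)
    history = []
    for _ in range(steps):
        a, b = rk4_step(a, dt), rk4_step(b, dt)
        history.append(math.dist(a, b))
    return history

errors = separation_history()
late = errors[-1000:]  # last 10 time units of the run
print("separation after 1 time unit:", errors[99])
print("mean separation over the last 10 time units:", sum(late) / len(late))
print("largest separation seen:", max(errors))
```

The individual trajectories become unpredictable, yet both stay on the same bounded attractor, which is the sense in which the statistics can be stable even though the path is not.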

“The ice in my freezer exhibits a delayed response to changes in direction of temperatures.” Bill Hunter — 25 Sep 2011 @ 5:51 PM

What you think is a delayed response is actually a non-linear response. If you raise the air temperature in your freezer at equilibrium from -10 C to -1 C, heat (joules) will start flowing into the ice in your freezer, but no apparent physical change will occur – CTE for ice is ~5e-5/deg, too small to see without sensitive equipment. As the ice warms, and the difference in temperature between the ice and air falls, the amount of heat transfer per unit time will decrease, and the ice temperature will rise slower and slower. There will also be a gradient from the surface of the ice to its core, which will decrease with time. If you then raise the air temperature to 10 C, the ice temperature will rise until the surface is 0 C, then it will start melting. Since the ice surface will be held at zero by melting, the difference between the air temperature and the ice surface is constant, and the rate of heat transfer will be constant. If you raise the air temperature to 20 C, the rate of heat transfer and ice melt will double; there will be no delay for the ice to “catch up” with the rate of heat transfer. If you drop the air temperature back to -10 C, the ice will immediately stop melting, and the wet surface will start to freeze, and the energy transfer will be from the wet ice surface to the air.
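The doubling argument above can be checked with a few lines of Python. This is a rough sketch that assumes heat flow is simply proportional to the air-to-surface temperature difference; the heat transfer coefficient of 10 W per square metre per kelvin is an illustrative guess, the latent heat of fusion of ice is taken as 334 kJ/kg, and the function names are hypothetical.

```python
LATENT_HEAT_J_PER_KG = 334_000.0  # latent heat of fusion of ice

def heat_flux(air_c, surface_c, h=10.0):
    """Heat flow into the ice in W/m^2; h is an assumed transfer coefficient."""
    return h * (air_c - surface_c)

def melt_rate(air_c, h=10.0):
    """Melt rate in kg per m^2 per second, with the surface pinned at 0 C.

    Returns zero when the air is at or below freezing.
    """
    return max(heat_flux(air_c, 0.0, h), 0.0) / LATENT_HEAT_J_PER_KG

for air_c in (-10.0, 10.0, 20.0):
    print(f"air at {air_c:+.0f} C -> melt rate {melt_rate(air_c):.2e} kg/m^2/s")
```

With the surface held at 0 C, the melt rate follows the air temperature immediately: it doubles between 10 C and 20 C and drops to zero as soon as the air goes below freezing.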

The acceleration of the melt rate in Greenland and the Arctic Circle is tied firmly to a 45% increase in global CO2 since 1990. 1990 is a significant starting year because that’s when some industrialised countries began taking decisive action to lower their industrial emissions. Russia for example has achieved a very commendable 28% reduction over this period and the EU-27 achieved a 7% reduction. Where was the USA? Oh! They were on the other side of the smokestack actually increasing their emissions by 5%. Other ‘developing’ countries such as China and India have increased theirs by 10% and 9% respectively over this period. Industrialised western countries are still expected to meet a Kyoto emissions reduction quota of 5.0% by 2012 with absolutely no thanks to the USA which has demonstrated virtually no leadership in this area to date. Maybe we should all be following what the Russians are doing; they seem to have their act in place. If this opens a hornets’ nest, I’m just going strictly by the data found in “Long-term trend in global CO2 emissions,” prepared by the European Commission’s Joint Research Centre and PBL Netherlands Environmental Assessment Agency.

Russ R.,

You seem to have a fundamental misunderstanding of research on climate sensitivity. Most studies look at changes in a forcing and ask what the resulting change in temperature was.

When you do this, you get a remarkable agreement across many different lines of evidence that the changes in forcing due to CO2 will result in a change in temperature of 3 degrees per doubling. Moreover, the majority of the probability distribution is above this value rather than below it. You may find this page helpful:

http://agwobserver.wordpress.com/2009/11/05/papers-on-climate-sensitivity-estimates/

OK, I now see where I have gone wrong. There are language differences between CFD and climate.

1. The IVP case does not “blow up”, it’s just ill-posed with respect to initial conditions.

[Response: In what sense is it ‘ill-posed’? There is nothing inherent about the set up of the equations or the specification of an IC that implies any internal inconsistency. Have you mentioned this to the National Weather Service? ;-) – gavin]

2. What you mean by BVP is not the mathematical definition, viz., a steady state problem. What you mean is running an IVP to see the dependence on boundary values, I assume such as forcings.

[Response: Yes. – gavin]

3. While interesting, the references I gave you are more about steady state calculations. They will be relevant to your situation only if your “BVP” simulations are not time accurate. Can you really run an explicit time marching method for decades of real time while adhering to the CFL condition and achieving time accuracy? Bear in mind that as your spatial grid gets finer, the time step must decrease. (I’m sure you know this).

[Response: Yes. Most climate models are run for hundreds of model-years in order to get to a quasi-stable pre-industrial control, 150 years for the 1850-2000 transient and hundreds more years for specific future scenarios. Simulations for the last millennium (1000+ years) are also performed. (Though note they take upwards of 6 months to complete). – gavin]
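The CFL constraint raised in point 3 ties the largest stable explicit time step to the grid spacing and the fastest signal speed. A minimal sketch of that relationship follows; the 200 km spacing and the 300 m/s signal speed are illustrative assumptions, not values taken from any particular model, the function names are hypothetical, and the time steps actually used in models depend on the numerical scheme.

```python
SECONDS_PER_YEAR = 365 * 86400

def max_stable_timestep(grid_spacing_m, fastest_speed_m_s, courant_number=1.0):
    """Largest explicit time step (s) allowed by dt <= C * dx / u."""
    return courant_number * grid_spacing_m / fastest_speed_m_s

def steps_per_model_year(dt_s):
    """Number of time steps needed to cover one 365-day model year."""
    return SECONDS_PER_YEAR / dt_s

# Illustrative numbers only: halve the grid spacing and the step halves too.
for spacing_km in (200.0, 100.0, 50.0):
    dt = max_stable_timestep(spacing_km * 1000.0, 300.0)
    print(f"{spacing_km:5.0f} km grid: dt <= {dt:6.1f} s, "
          f"{steps_per_model_year(dt):,.0f} steps per model year")
```

Halving the horizontal spacing also quadruples the number of grid columns, so the cost of a simulation grows much faster than the resolution, consistent with the long run times mentioned in the response.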

I still am unsure about a couple of things:

1. What is the evidence for the stability of the statistics? Is it empirical or is it theoretical? I would a priori see no reason to suppose that for a strange attractor such a result should be true. For a stable orbit it seems more plausible. The range of the model sensitivities show I think that small changes in assumptions can have a big (but bounded) impact on the result.

[Response: Empirical. But be clear that this is stability to IC perturbations, not structural stability (to differences in the model specification). The latter is still unclear. – gavin]

2. I’m surprised that you don’t use implicit time marching methods. For the BVP you could increase your time step dramatically.

[Response: For some aspects perhaps (ice sheets, part of the ocean velocity calculations etc.), but there is very fast physics that has a very important role in the climate system (convection, clouds, storms, the diurnal cycle) which cannot be averaged over. Thus all climate models have physics time steps that are around 15 to 30 minutes (atmospheric dynamics require much smaller timesteps, which as you state, depends on the grid spacing and CFL criteria). No effective shortcuts appear to be possible. – gavin]

3. Bear in mind that there will be problems for which the statistics are not stable, i.e., if there are positive forcings. One instance of this is flutter in CFD, in which the oscillations become unbounded due to a positive feedback between the air loads and the structure. You would probably call these tipping points.

[Response: There are plenty of local threshold behaviours, and yet there is very little evidence of significant impacts on the global solutions. – gavin]

4. I think there may be some interactions that are not correctly modeled if the spatial grid and time step are too coarse. You mentioned that spatial resolution doesn’t make much difference. That is interesting. The nature of the system implies that this is an empirical result from running models and not anything that should be expected a priori.

[Response: Agreed. – gavin]

Summarizing, I guess we have to rely in such a complex thing on our observations from running the models many times. I do think that sensitivity studies are critical, not just to grid resolution or time step but with respect to the many parameters and assumptions in the models.

I recall when I was a graduate student, Bob Richtmyer was looking for strange attractors in the Taylor column problem and couldn’t find them. It seemed that the Taylor vortices were pretty stable. Sometimes it’s interesting to revisit these issues with better computers and numerical methods. However, you are aware I’m sure of the existence of the transition to turbulent flow which is a tipping point for this system.

And of course there are the bifurcations. At a bifurcation point, the statistics do change a lot depending on which branch you get on. I would expect to see all these things in the climate models.

Best Regards

David Young …

Please stop with the superior airs … even *I* know that the time step must decrease as the spatial grid gets finer, and I’m just a humble software engineer who’s spent a few hours studying how models of this sort work.

To hint that a co-author of one of the best-known climate models in the world might possibly be ignorant of this is simply insulting. And this is just one example of your assumed air of superiority …

And you assume this air of superiority despite asking this question:

Oh dear, you’re “schooling” Gavin on the weakness of climate models when you’re ignorant of perhaps the most basic attribute of climate models. Given that narrowing the range of climate sensitivity to CO2 concentrations is one of the primary goals of climate modeling research it’s *obvious* that climate sensitivity is an emergent property, not an input. Not only are you ignorant of how these models work, but also of one of the primary research goals of climate modelers in the first place!

You may be impressing yourself with your series of posts but other than that …

David Young and Gavin, thank you very much for this extremely interesting discussion. It has been very informative and has cleared up some misconceptions that I too had, due to differences in terminology.

dhogaza,

I was the one who asked the “ignorant” question about climate sensitivity and models, not David Young.

So there’s really no need to belittle him over it.

Just one more clarification and then I’ll leave you in peace.

When I say the IVP is ill posed, I’m speaking in the mathematical sense. Ill-posed means that a small change in the IV leads to very large changes in the output. Technically, mathematicians usually measure the change in output in the L2 or H1 norms at the final time (certainly those are the norms for which most of our theory for differential equations holds). In the H1 norm, it’s not just values but slopes and I think it’s clear that in either norm, the change in output is large for any realistic simulation of the global circulation. Gavin says that in some other norm, maybe time averaged distribution of heat, time averaged distribution of vorticity, it isn’t ill-posed. Both statements can be true, it depends on how you measure the output.

[Response: Thanks. This is only a definition of ill-posedness if the system you are modelling doesn’t actually have this behaviour. In my experience, ‘ill-posed’ implies something fundamentally inconsistent in the set up – e.g. you have an elliptical equation without sufficient boundary conditions. – gavin]

Russ R.

Oh, yes, your post was followed by a string by David Young …

Still, David’s tone of master speaking to student is rather insulting … Gavin’s taking the high road, good for him, but as a reader I find it annoying.

I would add my admiration for the way Gavin and David have handled their exchange. I think it illustrates the difficulties that are inherent when we try to apply specialized knowledge gleaned from our experience in our day jobs to an unrelated field. It also shows that if both have good will, both can reach a better understanding (even if there are some difficulties of tone).

RE:72

Hey Ray,

I agree and also wish to thank you for the series of links in regards to climate sensitivity. I am in the process of reading them. Within the first three I find an issue that I cannot resolve.

In virtually every model run, when the regional/zonal change is graphed, it seems to demonstrate a greater increase in the polar region than in the other zones. The question that occurs to me is: if the temperature keeps rising faster at the poles than at the equator, how many doublings does it take before the poles are warmer than the equator?

Cheers!

Dave Cooke

for ldavidcooke

the term you’re looking for is ‘polar amplification’ — it’s been discussed, this should get you started, though won’t answer your hypothetical.

https://www.realclimate.org/index.php/archives/2006/01/polar-amplification/

found that using more general search words:

http://www.google.com/search?q=site%3Arealclimate.org+temperature+polar

You’ll also find you’ve had discussions of it at UKWeatherworld in topics you host.

Re 73 ldavidcooke – Poles won’t generally be warmer than the equator for the range of obliquities we’re in; but it’s an interesting point to make that polar amplification has a limit.

Well, once you’ve gotten most of the ice/snow out of the way (and replaced tundra with trees, for that matter), one reason for polar amplification is gone. If the stability of the lower troposphere at high latitudes is also an issue, then as the polar lower troposphere warms, presumably convection becomes more likely and the regional lapse rate feedback might eventually become negative (?)

#73

AFAIK you have to think in terms of absolute zero and insolation. However, this does make me wonder what the climate is on Venus at the poles relative to the equator.

To the general audience (i. e. to no one in particular);

Does anyone know of any applications of GCMs that have attempted to model the last glacial to interglacial cycle (say from the LGM to pre-industrial times)?

In other words, if all the GHGs were internal to the system (e. g. treated as internal feedbacks as opposed to external forcings).

Please forgive my ignorance on such matters.

I can’t resist one more comment that I’m sure has the wrong tone and for which I’m sure I’ll be taken to task, but . . .

You know this whole thing about “tone” strikes me as a little bit strange. On my technical team, challenges are encouraged. People should not be afraid to show negative results and dispute things that appear to others (especially me) to be obvious. You know there is a joke in mathematics: “The proof is omitted since it is obvious to the most casual observer.” This I think sums it up. I have found that a culture of openness works better than a culture based on respect for authority — just my personal prejudice. The challenge is to prevent fatigue from setting in and people just giving up and to keep the technical focus. The other issue is the increasing need for interdisciplinary teams. In such an environment, respect for authority has not worked in my experience.

We all know brilliant scientists, who are sometimes wrong and need occasionally to be challenged in strong terms. I won’t list specific things in my field, they are common and have resulted in defunding of CFD to a large extent, largely because laymen have gotten the mistaken impression from the leaders in the field that the problems are all solved, the equivalent of claiming that the science is settled. Such statements are not in my opinion scientific statements.

I do believe that Gavin and the climate science community are aware of the issues and are trying to do a better job in these areas, and this is to be applauded. In fact, my experience on this blog has been positive and has increased my respect for climate science. But I still want to see the sensitivity studies of the models with respect to the parameters and the subgrid models!!

So I applaud realclimate and its contributors. If they would only listen to me (just kidding). I know I’m pontificating, but it’s fun.

Respectfully,

Dave Young

#64 Lawrence Coleman says:

“The acceleration of the melt rate in Greenland and the Arctic Circle is tied firmly to a 45% increase in global CO2 since 1990.”

Really? Would you care to demonstrate how you arrive at this conclusion? Because I’d be happy to show you that you’re wrong.

“1990 is a significant starting year because that’s when some industrialized countries began taking decisive action to lower their industrial emissions. Russia for example has achieved a very commendable 28% reduction over this period and the EU-27 achieved a 7% reduction.”

Yes, 1990 was a significant start year, but not for the reason you suggest. It was incredibly significant for both Russia and the EU because 1990 happened to precede the collapse of the Soviet Union and the end of Communist control in nearly all of Eastern Europe (e.g. East Germany, Czechoslovakia, Former Yugoslavia, Bulgaria, etc.)

Those countries weren’t “taking decisive action to lower their industrial emissions” as you suggest… they were watching their make-believe economies crumble while shuttering inefficient state-run factories that were either producing goods that nobody wanted, or that couldn’t compete in a free market against the productive industrial centers of Western Europe. Basically, the former Soviet-Bloc’s industrial output collapsed, with the side-effect of sizable decreases in emissions.

By setting the Kyoto Protocol’s base-year as 1990 instead of 1997 (the year the treaty was actually signed), both Russia and the EU were able to claim credit for these “reductions” even though they had already occurred. Seems they did a great job of cherry-picking a start date.

“Maybe we should be all be following what the Russians are doing..they seem to have their act in place.”

If you mean abandoning socialism, then we’re in complete agreement.

“Where was the USA??..oh! they were on the other side of the smokestack actually increasing their emissions by 5%… demonstrated virtually no leadership in this area to date.”

Funny you should mention emissions, the USA and leadership…. From Table A1.1 on pg 33 of the very reference you cited, the USA’s emissions increased from 5.04 GtCO2/yr in 1992 to 5.87 GtCO2/yr in 2000 (up 16% under Bill Clinton’s leadership), and then declined to 5.46 GtCO2/yr by 2008 (down 7% under George W. Bush’s leadership). So whom exactly are you blaming for a lack of leadership?

(Sorry, I couldn’t resist that last jab. I realize that US emissions didn’t rise or fall because of any specific environmental efforts by either administration… they rose because of the economic boom during the 90’s and fell because of the severe economic contraction in 2008.)
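The two percentages quoted above follow directly from the three emission figures given in the comment. A minimal sketch of the arithmetic (the function name is just an illustrative choice):

```python
def percent_change(old, new):
    """Relative change from `old` to `new`, in percent."""
    return 100.0 * (new - old) / old


# US emissions in GtCO2/yr, as quoted in the comment above.
emissions_1992 = 5.04
emissions_2000 = 5.87
emissions_2008 = 5.46

rise_1992_to_2000 = percent_change(emissions_1992, emissions_2000)
fall_2000_to_2008 = percent_change(emissions_2000, emissions_2008)
```

Rounded to whole percent, these give the +16% and -7% cited.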

@David Young,

You say you are surprised that explicit time methods are used in climate modeling. While it is true that implicit methods can be unconditionally stable, at the cost of some extra computational work and somewhat less accuracy, explicit schemes are not unconditionally unstable. They do require more care in assessing the nature of the problem: velocities, nonlinearities, order of the equations modeled, the discretizations used, etc. And indeed, for the practical applications that commercial CFD tools are used for, which require extreme spatial accuracy, this often leads to impractically small time steps. This is why they frequently just use implicit methods. However, with due care it’s perfectly possible to arrive at a conditionally, yet predictably, stable explicit scheme.

As a fluid dynamics engineer you can verify this for yourself with for example Burgers’ equation or Sod’s Shock Tube problem (Euler equations).
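The point about conditional stability can be checked in a few lines. The sketch below deliberately swaps in linear advection with a first-order upwind scheme instead of Burgers’ equation or the shock tube, because its stability limit (Courant number at most 1) is simple to state. The grid size, pulse shape and function names are illustrative assumptions.

```python
def square_pulse(n_cells):
    """Initial condition: 1 on the second quarter of the domain, 0 elsewhere."""
    return [1.0 if n_cells // 4 <= i < n_cells // 2 else 0.0
            for i in range(n_cells)]


def advect_upwind(u0, courant, n_steps):
    """Explicit first-order upwind scheme for u_t + c u_x = 0 with c > 0.

    `courant` is c * dt / dx. Indexing u[i - 1] with i = 0 wraps to the
    last cell, which gives a periodic domain.
    """
    u = list(u0)
    for _ in range(n_steps):
        u = [u[i] - courant * (u[i] - u[i - 1]) for i in range(len(u))]
    return u


def max_abs(values):
    return max(abs(v) for v in values)


initial = square_pulse(100)
stable_peak = max_abs(advect_upwind(initial, courant=0.8, n_steps=100))
unstable_peak = max_abs(advect_upwind(initial, courant=1.5, n_steps=100))
```

With a Courant number of 0.8 the solution stays bounded by its initial maximum; at 1.5 the same code blows up by many orders of magnitude, which is the "conditionally, yet predictably, stable" behaviour described above.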

RE:74

Hey Hank,

HOST!? Try keep within the white lines and a best attempt to keep the eco warriors and the Thatcher/Reaganites at arms length… Most of the real science discussion was prior to my joining. Until the UEA e-mail fiasco we tried to allow for all POVs to be aired, we now are much more civilized though not near as active.

As to polar amplification, I concur: as in the reference from Ray (Lutz et al 2010), it appeared that for a 400ppm CO2 loading roughly 3mya the Equator demonstrated a 2.75C and the Arctic a 10C amplification pattern. The last I had seen of this discussion was in AR4, with a 7C current amplification. The issue, as Patrick suggests, is what happens after the melt. Does the condition continue or amplify, or does it adopt a seasonality?

During PETM we have evidence of 50C regional SSTs so as the Atlantic rift opened up and the “Bay of Mexico” became the Gulf of Mexico the transport poleward resulted in melt and then provided a great deal of radiational cooling prior to the formation of dense cloud cover. Which returns us to the quandary. Once conditions returned to allow the reformation of Polar cloud cover, did polar amplification return, and was the character different with no ice cover? (I am still studying Ray’s references, it may be in there.)

Cheers!

Dave Cooke

> during PETM we have evidence of 50C regional sea surface temperatures

What is this evidence of which you speak?

My guess is that wherever it is you see “50C” you’re looking at a 5 followed by a little superscript circle and a capital C, and it’s describing a temperature difference above present day temps, not a temperature.

But I could be wrong.

What’s the cite for your source?

> Most of the real science discussion was prior to my joining.

Hm.

David Young:

Do you begin such challenges with the assumption that you’ve hired people ignorant of the engineering they’re being asked to do as part of the team? Do you begin by saying “I assume you know what 2+2 adds up to, but just in case, the answer’s 4 …”? Do you begin each skull session by trotting out everything you know in an attempt to impress your team members with your overarching superiority?

Or do you begin with the common courtesy of assuming that there is a shared knowledge base and shared competence?

What basis do you have for imagining that your knowledge of climate modeling exceeds that of one of the leading climate modelers in the world?

David Young:

NASA GISS Model E is open source. It’s well-written Fortran, and as an expert modeler yourself you’re obviously fluent in that language. There’s documentation and links to the scientific papers underlying the physics it implements.

Knock yourself out.

I see another troll has possessed the attentions of the posters here. Will we ever learn?

On Greenland melt–as it accelerates, what should we expect as far as isostatic rebound and seismic effects? How far could these propagate? The large methane releases now coming out of the East Siberian Arctic Shelf (which certainly deserves a thread of its own here, given the enormity of the possible consequences) seem to be the result of seismic activity. Is there any special reason, GW related or otherwise, why seismic activity should be increasing up there?

http://hainanwel.com/en/unusual-world/959-arctic-methane.html

http://english.ruvr.ru/2011/09/01/55512419.html

I know I should put this in “unforced variations” but in a way the cartoon linked below about the sh*t hitting the fan is not altogether irrelevant and being late in the month UF has gone well below the fold. Thanks to those who point out that current blogosphere ethics (or lack of same) allow people to tax the blog owner with all kinds of stuff, some not so nice, but not to require common courtesy and respect for expertise. Also, those who out of great tolerance, patience, and courtesy try to respond at length and in detail. I particularly like RealClimate because although mostly over my head it addresses common issues and the best commenters provide a wide variety of resources. It is a shame that a few feel the need to take up energy answering their all-too-answerable claims.

However, the real reason I’m here is to trumpet some new Throbglobins/Marc Roberts cartoons, in particular the final two (arrow to get less recent ones). You will also find them at his Throbglobins blog.

http://www.marcrobertscartoons.com/index.php?globalid=2184

Russ #78 [warning: off-topic rant follows],

I’ll certainly take your analysis of the Eastern European emissions trajectory over Lawrence’s. Back in 1997 there was a word for Russia’s emissions-permit windfall: “hot air”. Many of us were aghast at how the collapse of socialist heavy industry would cushion the richer parts of the EU from undertaking meaningful emissions reductions under Kyoto. It strikes me funny, though, that we never imagined Europe squeaking through its 2012 targets only thanks to the additional collapse of the capitalist finance industry.

Anyway, none of this puts a better complexion on the abject failure of U.S. leadership. The U.S. would do well just to get its per capita emissions down to the EU’s level, and in particular to the level of the most developed, non-former-communist EU-15 members, cf. p. 13 of the report you’re reading.

Re 70 David Young and inline response –

it may be worth emphasizing – if I understood Gavin’s point “This is only a definition of ill-posedness if the system you are modelling doesn’t actually have this behaviour.” – that the system itself – the real physical system you are trying to model – is actually ill-posed in that way. Hence a time horizon limitation for weather forecasts. Climate is a more general description of the weather, such that whether or not the weather takes one particular trajectory at one particular time is just not that interesting to the issue of climate, which is the statistics of weather, and those statistics (generally may be) stable given stable boundary conditions. I like to suggest that this goes beyond simple averages – that the texture of the weather is climate – in the way that two lawns of grass planted at the same time with the same variety of seeds in the same type of soil and … etc., will look the same in the big picture, but you can’t expect to map one lawn onto another with each blade of grass having the exact condition of its corresponding blade of grass. In a chaotic system with a strange attractor, the equilibrium climate is the attractor, and the trajectories are being pulled toward it, even though from different starting points they diverge from each other (up to a point) in some directions.

Also, I’m not sure if this has been addressed by others but:

Comparing short term climate change to such things as ice age-interglacial variation can be like comparing apples to apple trees. If you know how to pick the apples off the tree then you can make meaningful comparisons. (Orbital forcing isn’t much in the global annual average, but is large regionally-seasonally; regional/seasonal responses can have a global average feedback; some feedbacks are typically slow-acting so that on short time scales they can be thought of as a forcing.)

About tunable parameters (for sub-grid scale processes) – parameters may be constrained by observations of the real world and some physics (for example, a climate model that occasionally just adds momentum, energy, or mass to a grid cell without taking it from anywhere or anything else would obviously be wrong) – I think these constraints can come from the scale of short term weather processes. There may be a few(?) parameters that are tuned to produce a better global large scale pattern, but this tuning is not done to reproduce a trend in climate, rather to better reproduce an average climate. This was explained in two very useful RealClimate posts:

https://www.realclimate.org/index.php/archives/2008/11/faq-on-climate-models/

and

https://www.realclimate.org/index.php/archives/2009/01/faq-on-climate-models-part-ii/

there’s also

https://www.realclimate.org/index.php/archives/2008/04/butterflies-tornadoes-and-climate-modelling/

https://www.realclimate.org/index.php/archives/2007/05/climate-models-local-climate/

https://www.realclimate.org/index.php/archives/2005/01/is-climate-modelling-science/

RE:81

Hey Hank,

Of course you were right, even the P-Tr event did not demonstrate polar amplification temperatures in the 50C range. At best they appeared in the 14C range. The piece I had read must have had a conversion wrong and the intent had been to describe a 50 F est. Arctic surface temp. That certainly brings the problem into focus for me.

Cheers!

Dave Cooke

>> during PETM we have evidence of 50C

>> regional sea surface temperatures

> the piece I read must have had a conversion

> wrong and the intent had been to describe a

> 50 F est. Arctic surface temp

“50 F”??

Why not look this stuff up instead of posting fallible recollections?

It’s not hard.

PETM Home – University of Texas at Arlington

http://www.uta.edu/faculty/awinguth/PETM-Home.html

Sea-surface temperatures (SST) increased by 5°C in the tropics (Tripati and Elderfield, 2004; Zachos et al., 2003), by 6-8°C in the Arctic and sub-Antarctic …

Subtropical Arctic Ocean temperatures during the

Palaeocene/Eocene thermal maximum

http://www.nature.com/nature/journal/v441/n7093/full/nature04668.html

“… sea surface temperatures near the North Pole increased from ~18 °C to over 23 °C during this event.”

http://www.wbuf.noaa.gov/tempfc.htm

Fahrenheit to Celsius Converter

Enter a number in either field, then click outside the text box.
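The conversion at issue is easy to check without the web form. A minimal sketch (the function names are illustrative); the only inputs used are the “50 F” figure from the exchange above and the ~18 °C and 23 °C Arctic values quoted from the Nature abstract:

```python
def fahrenheit_to_celsius(temp_f):
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def celsius_to_fahrenheit(temp_c):
    """Convert a temperature from degrees Celsius to degrees Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


fifty_f_in_c = fahrenheit_to_celsius(50.0)
petm_arctic_sst_f = [celsius_to_fahrenheit(t) for t in (18.0, 23.0)]
```

So 50 °F is 10 °C, well below the roughly 18 to 23 °C Arctic sea surface temperatures reported for the event.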

Re 76 EFS_Junior

from http://en.wikipedia.org/wiki/Venus

The surface is approximately isothermal. The heat capacity of the massive atmosphere prevents much of a diurnal cycle in surface temperature; my guess is the same applies to latitudinal temperature variation. (Though in slightly different ways – heat capacity alone reduces the diurnal temperature range to some extent (from where it would be otherwise); winds (or ocean currents, generalizing to other planets) that carry heat (small velocities are sufficient if the heat capacity is high enough) are necessary to reduce the latitudinal variation, and also help reduce the diurnal range (when the day is long relative to (zonal) wind (or current) speeds (see below)).)

(Of course the greenhouse effect itself would tend to help reduce temporal temperature variations by making direct solar radiation a smaller part of the radiant and convective fluxes involved in helping to maintain the temperature – I think one way of looking at that effect is that each time a batch of energy is absorbed and emitted, the emission’s temporal and spatial variation would be reduced by the heat capacity and advection, relative to the temporal and spatial distribution of absorption; a given batch of energy will generally be traced through a larger number of such steps before exiting the system when the greenhouse effect is larger; and the emission that is reabsorbed in solar heated layers reduces the diurnal radiant (gross) heating cycle relative to the total (gross) radiant heating.)

From http://en.wikipedia.org/wiki/Atmosphere_of_Venus :

The whole atmosphere circles the planet in just four Earth days, much faster than the planet’s sidereal day of 243 days.

…

However, the meridional air motions are much slower than zonal winds.

On the other hand, the wind speed becomes increasingly slower as the elevation from the surface decreases, with the breeze barely reaching the speed of 10 km/h on the surface.[5]

…

They actually move at only a few kilometers per hour (generally less than 2 m/s and with an average of 0.3 to 1.0 m/s),…[1][18]

…

The density of the air at the surface is 67 kg/m3,….[1]

-? But perhaps much of the solar heating may be occurring higher up where it is redistributed by winds more quickly? (whereas if much of it were absorbed at the surface, the boundary layer (with a smaller heat capacity per unit area) might have a significant diurnal temperature cycle and perhaps latitudinal gradient, where convection out of the generally warmest parts of the boundary layer would transport heat into the rest of the troposphere where winds would carry it around to night and the poles and the heat capacity would reduce its diurnal temperature variation even if it stood still – but then, it would radiatively warm the night and polar surfaces – if the greenhouse effect is sufficiently strong over enough of the spectrum, I think the night and polar surfaces would tend to approach temperatures similar to the air above rather than remaining cooler due to partial optical exposure to the colder upper atmosphere and space (On Earth, polar night and diurnal night surface radiates partly (depending on clouds/fog, humidity) to space (and colder parts of the atmosphere) from which it gets nearly nothing back (except from those parts of the atmosphere); warmer air (either immediately above or somewhere within the troposphere) radiates to surface and also may convectively and through conduction/condensation/frost heat the surface)).

Order of magnitude considerations:

Considering a depth of 1 km (not that the Venusian boundary layer would be like Earth’s): 1 m/s * 67 kg/m3 * 1000 m * 1 K / 10^6 m * (**assumed** order of magnitude: 1000 J/(kg*K) – note a triatomic molecular ideal gas (not that the surface air is an ideal gas) would have larger molar specific heat but CO2 also has a larger molar mass than Earth’s air, so those effects would partly cancel)

=67 kg/m3 * J/(kg K) * K/m * m^2/s

= 67 W/m2

for a 1 K per 1000 km temperature gradient (order of magnitude) for 1 km layer at the surface moving 1 m/s.

Whole troposphere – replace 67 kg/m3 * 1000 m with ~ 100*1E4 kg/m2 (Venus’s atmosphere has (from the wikipedia article) 93 times the mass of Earth’s atmosphere – this is 99 % troposphere – and this is over a somewhat smaller surface area so I rounded up the per-unit area ratio – this is a rough approximation anyway), then we have ~ 15 times the heat flux per unit temperature gradient, or ~ 1000 W/m2 (about the noon-time insolation at Earth’s surface at low latitudes absent clouds (and if humidity is not too high?)) per 0.000 001 K/m (~ 10 K difference 1/4 of way around planet), not including the effects of faster winds aloft (though not as fast between pole and equator – in that case, assuming the meridional wind is at least as fast as the surface winds, whichever direction those generally have).
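The order-of-magnitude estimate above is a product of four factors: wind speed, column mass per unit area, specific heat and horizontal temperature gradient. A minimal sketch that reproduces the arithmetic with the values given in the comment follows. The specific heat of 1000 J/(kg K) is the commenter’s assumed order of magnitude, not a measured value, and the function and variable names are illustrative.

```python
def advective_heat_flux(wind_speed, mass_per_area, specific_heat, temp_gradient):
    """Heat transported per unit width of flow, per unit layer footprint.

    Units: (m/s) * (kg/m^2) * (J/(kg K)) * (K/m) = W/m^2.
    """
    return wind_speed * mass_per_area * specific_heat * temp_gradient


WIND = 1.0              # m/s, surface wind, order of magnitude
CP = 1000.0             # J/(kg K), assumed order of magnitude
GRADIENT = 1.0 / 1.0e6  # K/m, i.e. 1 K per 1000 km

# Lowest 1 km of the Venusian atmosphere: 67 kg/m^3 * 1000 m.
surface_layer_mass = 67.0 * 1000.0
# Whole troposphere: the commenter's rounded ~100 * 1E4 kg/m^2.
troposphere_mass = 100.0 * 1.0e4

flux_surface_layer = advective_heat_flux(WIND, surface_layer_mass, CP, GRADIENT)
flux_troposphere = advective_heat_flux(WIND, troposphere_mass, CP, GRADIENT)
ratio = flux_troposphere / flux_surface_layer
```

This gives 67 W/m2 for the 1 km layer and 1000 W/m2 for the whole column, a ratio of about 15, matching the figures in the text.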

Remember, the point of sock puppets is to create FUD; thus they will make statements without providing references and make statements that are provably false while saying that it is “opinion”, in the hope that the naive reading the commentary will be influenced to think there is a “problem”. These people are getting tiring. Time to trace back their IP addresses….

Gavin and Patrick,

Just a few thoughts about the models. If you already know these things, ignore this post.

1. Explicit time marching. You might gain a lot by going implicit if your time step is limited by numerical stability and not accuracy. There is a good survey in JCP about 6-8 years ago by Keyes and Knoll. They talk about Newton Krylov methods as a good way to go implicit. I’m assuming you are already using adaptive step size control. There is classical work by Hindmarsh and Skeel.

2. If you go implicit you will have to code up a Jacobian for your spatial operator. This has a lot of added bonuses. It has helped us find errors or questionable things in our models that affect accuracy. It gives you the potential to compute linear sensitivities cheaply. Omar Ghattas has some good work recently on linear sensitivities for time accurate calculations. He is interested in inverse problems, but things like that can be valuable in trying to fill in unknown data, etc. and the methods are perfectly usable in your context. Every time you do a calculation, you can compute hundreds of sensitivities very cheaply to everything from IVs, boundary values, forcings, even grid spacing, your operator coefficients and parameters.

In the nonlinear world, this kind of sensitivity analysis can be very helpful in finding multiple states, tracing out bifurcation diagrams, etc.

3. I try to resist the natural desire to go to ever more complex models with more parameters. CPU time always increases and eventually rules out doing fundamental work. The star of simpler models in CFD is Mark Drela at MIT. I don’t know if boundary layers are important in weather and climate. It’s probably secondary.
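Points 1 and 2 can be made concrete on a toy problem. The sketch below is only an illustration under stated assumptions: a scalar stiff equation du/dt = -k u - u^3 stands in for a discretized model, Newton’s method is applied directly (no Krylov solver), and the time step is fixed instead of adaptive. None of the names or values come from a climate code.

```python
def rhs(u, k=1000.0):
    """Toy stiff right-hand side: fast linear decay plus a cubic term."""
    return -k * u - u * u * u


def rhs_jacobian(u, k=1000.0):
    """Derivative of rhs with respect to u (a 1x1 'Jacobian')."""
    return -k - 3.0 * u * u


def forward_euler(u, dt, n_steps):
    """Explicit time marching: u_new = u + dt * rhs(u)."""
    for _ in range(n_steps):
        u = u + dt * rhs(u)
    return u


def backward_euler(u, dt, n_steps, max_newton=20, tol=1e-12):
    """Implicit time marching: solve v - u - dt * rhs(v) = 0 for v each step."""
    for _ in range(n_steps):
        v = u  # Newton initial guess: the previous time level
        for _ in range(max_newton):
            residual = v - u - dt * rhs(v)
            if abs(residual) < tol:
                break
            # Newton update uses the Jacobian of the residual, 1 - dt * J.
            v -= residual / (1.0 - dt * rhs_jacobian(v))
        u = v
    return u


# dt = 0.01 is five times larger than the explicit stability limit 2/k.
explicit_result = forward_euler(1.0, dt=0.01, n_steps=5)
implicit_result = backward_euler(1.0, dt=0.01, n_steps=5)
```

With this step size the explicit result grows without bound while the implicit one decays smoothly towards zero, as the true solution does. The same residual Jacobian, 1 - dt * J, is the object one would reuse for the linear sensitivities mentioned in point 2.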

You already have a lot of expertise in Government. Jim Thomas at NASA Langley, Linda Petzold who used to be at Livermore and John Bell at LBL. You may already know John. They may not be able to help you but they might be interested in your problems. In any case, bridging the gap between physical science and computational science can be a big step forward.

Best

> But perhaps much of the solar heating may be occurring higher up

Higher up in what? The mountains?

> … (whereas if much of it were absorbed at the surface

What “higher up” stuff is solar heating able to heat?

#90

Yes, I did go to wiki, and kind of stopped after the isothermal part;

“The surface of Venus is effectively isothermal; it retains a constant temperature not only between day and night but between the equator and the poles.[1][45]”

Note that neither of the two references cited [1][45] really shows it to be isothermal, but you’d think it was isothermal given the large amount of CO2 and its opacity.

On Earth, even with all the ice sheets melted, I’d still expect somewhat cooler temperatures at the poles at all times (but particularly during the winter seasons in the NP and SP, respectively) relative to lower/higher latitudes (e. g. further distance from either pole).

Re 93 Hank Roberts – that is speculation on my part as I’m really not sure, but I figured the clouds on Venus, reflective though they are, don’t bring the albedo all the way to 100 %, yet they do completely visually obscure the surface, which suggests they are absorbing some visual radiation; I just don’t know how much (because, of course, there is such a thing as forward scattering). Even on Earth, a significant fraction (~2/7 I think), though smaller than half, of the solar radiation which is absorbed is absorbed in the air (H2O, clouds, etc, and of course ozone)(and this is of course especially in UV and solar IR).

Re 94 EFS_Junior –

right, [45] only makes that statement without citation. I suppose one could repeat the calculations done for Mars and Titan for the two (or three) formulations of D and see what happens.

[45]: http://sirius.bu.edu/withers/pppp/pdf/mepgrl2001.pdf

[1]:

http://nssdc.gsfc.nasa.gov/planetary/factsheet/venusfact.html

(PS from that, Venus TSI * (1-bond albedo) = 261.39 W/m2 (not following sig.fig’s) (Earth’s value: 949.11 W/m2) (divide by 4 to get global average solar heating). Assuming not too much variation in albedo**, that would be roughly the solar heating with the sun overhead, and thus would be roughly the upper limit for the temporal-spatial range in net radiant heating.

** – (from the factsheet:) Bond albedos are 0.90 and 0.306 for Venus and Earth, whereas visual geometric albedo: 0.67 Venus, 0.367 Earth; –
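The two products quoted above are just TSI times (1 - Bond albedo). A minimal sketch of that arithmetic follows. The Bond albedos are the ones quoted in the comment; the irradiance values of 2613.9 and 1367.6 W/m2 are back-computed here from the quoted products and should be treated as assumptions, not as values read from the factsheet.

```python
def absorbed_solar(tsi, bond_albedo):
    """Absorbed solar flux for the sun directly overhead, in W/m^2."""
    return tsi * (1.0 - bond_albedo)


# Assumed irradiances (W/m^2), back-computed from the comment's products.
VENUS_TSI = 2613.9
EARTH_TSI = 1367.6

venus_overhead = absorbed_solar(VENUS_TSI, 0.90)
earth_overhead = absorbed_solar(EARTH_TSI, 0.306)

# Dividing by 4 (ratio of a sphere's area to its cross-section) gives the
# global-average absorbed flux.
venus_global_mean = venus_overhead / 4.0
earth_global_mean = earth_overhead / 4.0
```

Despite receiving nearly twice the sunlight, Venus absorbs well under a third of what Earth does per unit area, because of its much higher Bond albedo.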

from http://nssdc.gsfc.nasa.gov/planetary/factsheet/fact_notes.html:

visual geometric albedo: “The ratio of the body’s brightness at a phase angle of zero to the brightness of a perfectly diffusing disk with the same position and apparent size, dimensionless.” (Is this an area-weighted global average?)

Bond albedo: “The fraction of incident solar radiation reflected back into space without absorption, dimensionless. Also called planetary albedo.” (equal to albedo averaged over area (and time) after weighting by local insolation as a fraction of average insolation)

I’m not clear yet on whether or how much the differences between values are due to what combination of spectral variations (visual vs all solar radiation), spatial-temporal variations (albedo distribution relative to the distribution of insolation at TOA), or the nature of scattering/reflection (Rayleigh vs Mie vs specular reflection off calm water vs … vs the cool stuff that oriented ice crystals can do, whatever…)

and thus would be roughly the upper limit for the temporal-spatial range in net radiant heating.

at TOA. PS the 262 W/m2 range (~ 1000 W/m2 / 4) suggests that given the same approximated available heat capacity and only a 1 m/s wind speed (but what are the meridional wind speeds?), we can support heat fluxes that would allow the temperature range to be ~ 10 K/4 ~= 2.5 K (or more accurately, 2.62 K, but then again, a number of other rough approximations went into this; it was an order of magnitude scaling-type analysis so there could be some small constant with which to multiply or divide to get a better answer).

> speculation … clouds on Venus

http://www.sciencedirect.com/science/article/pii/S0019103511002338

… actually, since we’re already given that the heating is smoothed out zonally-diurnally by winds/heat capacity/greenhouse effect, we can divide 261 W/m2 (why did I round up to 262 earlier? oops) by pi to get 83.2 W/m2, which is the difference in solar heating between equator and pole (equinox or zero (or 180 deg) obliquity (the latter being approximately the case for Venus)). So now we have a possible temperature difference on the order of 0.8 K (given the other assumptions/approximations). (PS on the other hand, if the zonal-diurnal smoothing were not greater than the meridional smoothing, then the temperature minimum might be ~ half way around from the temperature maximum and we’d have to double it to ~ 5 K, at least for this rough back-of-the-envelope work.)
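The chain of estimates in these last few comments can be collected in one place. This is a minimal sketch under the commenter’s own scaling (roughly 1000 W/m2 of heat transport per 10 K of temperature difference a quarter of the way around the planet); the names are illustrative. The division by pi reflects the fact that, at equinox or zero obliquity, the diurnal-mean insolation at the equator is the overhead value divided by pi, while at the pole it is zero.

```python
import math

# Scaling from the earlier estimate: ~1000 W/m^2 of transport supports
# ~10 K of temperature difference over a quarter of the circumference.
KELVIN_PER_WATT = 10.0 / 1000.0


def supported_temperature_range(flux_difference):
    """Temperature difference (K) implied by a heating difference (W/m^2)."""
    return flux_difference * KELVIN_PER_WATT


overhead_absorbed = 261.39  # W/m^2, Venus TSI * (1 - Bond albedo), from above

# Upper limit: full overhead heating versus none.
upper_limit_range = supported_temperature_range(overhead_absorbed)

# Zonally and diurnally smoothed: equator-to-pole difference at equinox.
equator_pole_flux = overhead_absorbed / math.pi
equator_pole_range = supported_temperature_range(equator_pole_flux)
```

This reproduces the roughly 2.6 K upper limit, the 83.2 W/m2 equator-to-pole heating difference, and the temperature difference of order 0.8 K.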

PS more thinking about greenhouse effect’s role – absent direct solar heating at the poles (or night), the surface temperature would tend toward equilibrium with some weighted combination of space and various layers of the atmosphere. With a sufficiently strong greenhouse effect this would be mainly just the atmosphere, or just the lower atmosphere, or just the surface air (which would tend towards equilibrium with the air above that). If the sinking air (if a Hadley (or other such thermally-direct overturning) cell extends that far – setting aside the role of nonconvergent horizontal winds for the moment) can cool radiatively to maintain a stable lapse rate, the lower air and surface can be colder than at the same vertical levels at the locations of ascent (assuming that the adiabatic lapse rate doesn’t vary to compensate – dry ascent and descent as opposed to moist ascent and dry descent following a moist adiabat, for example) even without cooling during the horizontal motion. With increasing opacity, it may become harder for the air to radiatively cool (except near TOA or at the top of a cloud layer, etc.), and descent at the same rate could become more adiabatic (of course that would have some effect on the rate of descent via the physics of circulation). On the other hand, the same would be true with insufficient atmospheric opacity, but then the surface would be more optically exposed to space.

I’m not convinced that an ice-free Earth would necessarily mean cooler poles outside of winter seasons for each. With snow and ice albedo in the Arctic gone, wouldn’t the temperature between there and the equator be equalised? Could the Arctic not be warmer than the tropics because of the large body of low albedo water? The equator may get more warming with the sun’s focus travelling constantly around it, but then the poles can have seasonal constant sunlight or darkness to some degree. Please tell me what I’m missing.

Here’s a model: http://www.falw.vu/~renh/methane-pulse.html

“For further information, please consult the following paper:

Renssen, H., C.J. Beets, T. Fichefet, H. Goosse, and D. Kroon (2004) Modeling the climate response to a massive methane release from gas hydrates, Paleoceanography 19, PA2010, doi: 10.1029/2003PA000968.”