We’ve often discussed the hows and whys of correcting incorrect information that is occasionally found in the peer-reviewed literature. There are multiple recent instances of heavily-promoted papers that contained fundamental flaws that were addressed both on blogs and in submitted comments or follow-up papers (e.g. McLean et al., Douglass et al., Schwartz). Each of those wasted a huge amount of everyone’s time, though there is usually some (small) payoff in terms of a clearer statement of the problems and lessons for subsequent work. However, in each of those cases, the papers were already “in press” by the time other people were aware of the problems.

What is the situation though when problems (of whatever seriousness) are pointed out at an earlier stage? For instance, when a paper has been accepted in principle but a final version has not been sent in and well before the proofs have been sent out? At that point it would seem to be incumbent on the authors to ensure that any errors are fixed before they have a chance to confuse or mislead a wider readership. Often in earlier times corrections and adjustments would have been made using the ‘Note added in proof’, but this is less used these days since it is so easy to fix electronic versions.

My attention was drawn in August to a draft version of a paper by Phil Klotzbach and colleagues that discussed the differences between global temperature products. This paper also attracted a lot of comment at the time, and some conclusions were (to be generous) rather unclearly communicated (I’m not going to discuss this in this post, but feel free to bring it up in the comments). One bit that interested me was that the authors hypothesised that the apparent lack of an amplification of the MSU-LT satellite-derived trends over the surface record trends over land might be a signal of some undiagnosed problem in the surface temperature record. That is not an unreasonable hypothesis (though it is not an obvious one), but when I saw why they anticipated that there should be an amplification, I was a little troubled. The key passage was as follows:

The global amplification ratio of 19 climate models listed in CCSP SAP 1.1 indicates a ratio of 1.25 for the models’ composite mean trends …. This was also demonstrated for land-only model output (R. McKitrick, personal communication) in which a 24-year record (1979-2002) of GISS-E results indicated an amplification factor of 1.25 averaged over the five runs. Thus, we choose a value of 1.2 as the amplification factor based on these model results.

which leads pretty directly to their final conclusion:

We conclude that the fact that trends in thermometer-estimated surface warming over land areas have been larger than trends in the lower troposphere estimated from satellites and radiosondes is most parsimoniously explained by the first possible explanation offered by Santer et al. [2005]. Specifically, the characteristics of the divergence across the datasets are strongly suggestive that it is an artifact resulting from the data quality of the surface, satellite and/or radiosonde observations.

(my emphasis).

For reference, the amplification is related to the sensitivity of the moist adiabat to increasing surface temperatures (air parcels saturated in water vapour move up because of convection where the water vapour condenses and releases heat in a predictable way). The data analysis in this paper mainly concerned the trends over land, thus a key assumption for this study appears to rest solely on a personal communication from an economics professor purporting to be the results from the GISS coupled climate model. (For people who don’t know, the GISS model is the one I help develop). This is doubly odd – first that this assumption is not properly cited (how is anyone supposed to be able to check?), and secondly, the personal communication is from someone completely unconnected with the model in question. Indeed, even McKitrick emailed me to say that he thought that the referencing was inappropriate and that the authors had apologized and agreed to correct it.

So where did this analysis come from? The data actually came from a specific set of model output that I had placed online as part of the supplemental data to Schmidt (2009) which was, in part, a critique of some earlier work by McKitrick and Michaels (2007). This dataset included trends in the model-derived synthetic MSU-LT diagnostics and surface temperatures over one specific time period and for a small subset of model grid-boxes that coincided with grid-boxes in the CRUTEM data product. However, this is decidedly not a ‘land-only’ analysis (since many met stations are on islands or areas that are in the middle of the ocean in the model), nor is it commensurate with the diagnostic used in the Klotzbach et al. paper (which was based on the relationships over time of the land-only averages in both products, properly weighted for area etc.).

It was easy for me to do the correct calculations using the same source data that I used in putting together the Schmidt (2009) SI. First I calculated the land-only, ocean-only and global mean temperatures and MSU-LT values for 5 ensemble members, then I looked at the trends in each of these timeseries and calculated the ratios. Interestingly, there was a significant difference between the three ratios. In the global mean, the ratio was 1.25 as expected and completely in line with the results from other models. Over the oceans where most tropical moist convection occurs, the amplification in the model is greater – about a factor of 1.4. However, over land, where there is not very much moist convection, which is not dominated by the tropics and where one expects surface trends to be greater than for the oceans, there was no amplification at all!
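For readers who want to see the shape of that calculation, here is a minimal sketch in Python. It is not the code used for the Schmidt (2009) SI: the function names, the grid, the random land mask and the synthetic fields are all assumptions made for illustration, with the synthetic trends chosen only so that the land and ocean ratios come out near the values quoted above.

```python
import numpy as np

def area_weighted_mean(field, lat, mask):
    """Mean of field[time, lat, lon] over boxes where mask is True, weighted by cos(latitude)."""
    weights = np.cos(np.deg2rad(lat))[:, None] * mask      # shape (lat, lon)
    return (field * weights).sum(axis=(1, 2)) / weights.sum()

def trend(series, years):
    """Least-squares linear trend per year (years are centred for numerical stability)."""
    return np.polyfit(years - years.mean(), series, 1)[0]

def amplification(surface, lower_trop, lat, mask, years):
    """Ratio of the lower-troposphere trend to the surface trend over the masked region."""
    ts = area_weighted_mean(surface, lat, mask)
    tlt = area_weighted_mean(lower_trop, lat, mask)
    return trend(tlt, years) / trend(ts, years)

# --- Synthetic demonstration (all numbers below are made up for illustration) ---
years = np.arange(1979, 2003)                 # the 24-year period mentioned in the post
lat = np.linspace(-87.5, 87.5, 36)            # hypothetical 5-degree grid
nlon = 72
rng = np.random.default_rng(0)
land = rng.random((lat.size, nlon)) < 0.3     # hypothetical land mask, not a real one
ocean = ~land
everywhere = np.ones_like(land, dtype=bool)

t = (years - years[0])[:, None, None]
# Surface warms faster over land; the troposphere amplifies over ocean but not over land.
surface = 0.02 * t * np.where(land, 1.5, 1.0)
lower_trop = 0.02 * t * np.where(land, 1.5 * 0.95, 1.4)

amp_land = amplification(surface, lower_trop, lat, land, years)
amp_ocean = amplification(surface, lower_trop, lat, ocean, years)
amp_global = amplification(surface, lower_trop, lat, everywhere, years)
print(f"land {amp_land:.2f}, ocean {amp_ocean:.2f}, global {amp_global:.2f}")
```

The point of the sketch is only that the regional average is taken first, with area weights, and the ratio of trends is computed afterwards; the global ratio then falls between the land and ocean values.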

The land-only ‘amplification’ factor was actually close to 0.95 (+/-0.07, 95% uncertainty in an individual simulation arising from fitting a linear trend), implying that you should expect land surface temperatures to rise (slightly) faster than the satellite values. Obviously, this is very different to what Klotzbach et al. initially assumed, and leaves one of the hypotheses of the Klotzbach paper somewhat devoid of empirical support. If it had been incorporated into their Figures 1 and 2 (where they use the 1.2 number to plot the ‘expected’ result) it would (at minimum) have left a somewhat different impression.
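As a rough illustration of where an uncertainty "arising from fitting a linear trend" comes from, the sketch below computes an ordinary least-squares trend and an approximate 95% half-width from the residual scatter. It makes several simplifying assumptions: the function name is hypothetical, the series is synthetic, the normal-approximation multiplier of 1.96 is used rather than a t-value, autocorrelation in the residuals is ignored, and it gives the uncertainty on a single trend rather than on a ratio of two trends.

```python
import numpy as np

def trend_with_ci(years, series, z=1.96):
    """OLS slope and approximate 95% half-width (normal approximation, white-noise residuals)."""
    x = np.asarray(years, dtype=float)
    x = x - x.mean()                          # centring makes sum(x) == 0
    y = np.asarray(series, dtype=float)
    sxx = (x * x).sum()
    slope = (x * y).sum() / sxx               # valid because x is centred
    resid = y - y.mean() - slope * x
    dof = len(x) - 2                          # two fitted parameters: slope and intercept
    std_err = np.sqrt((resid ** 2).sum() / dof / sxx)
    return slope, z * std_err

# Synthetic example: a 0.02 per-year trend plus white noise (illustrative values only).
years = np.arange(1979, 2003)
rng = np.random.default_rng(42)
noisy = 0.02 * (years - years[0]) + 0.1 * rng.standard_normal(years.size)

slope, half_width = trend_with_ci(years, noisy)
print(f"trend = {slope:.4f} +/- {half_width:.4f} per year")
```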

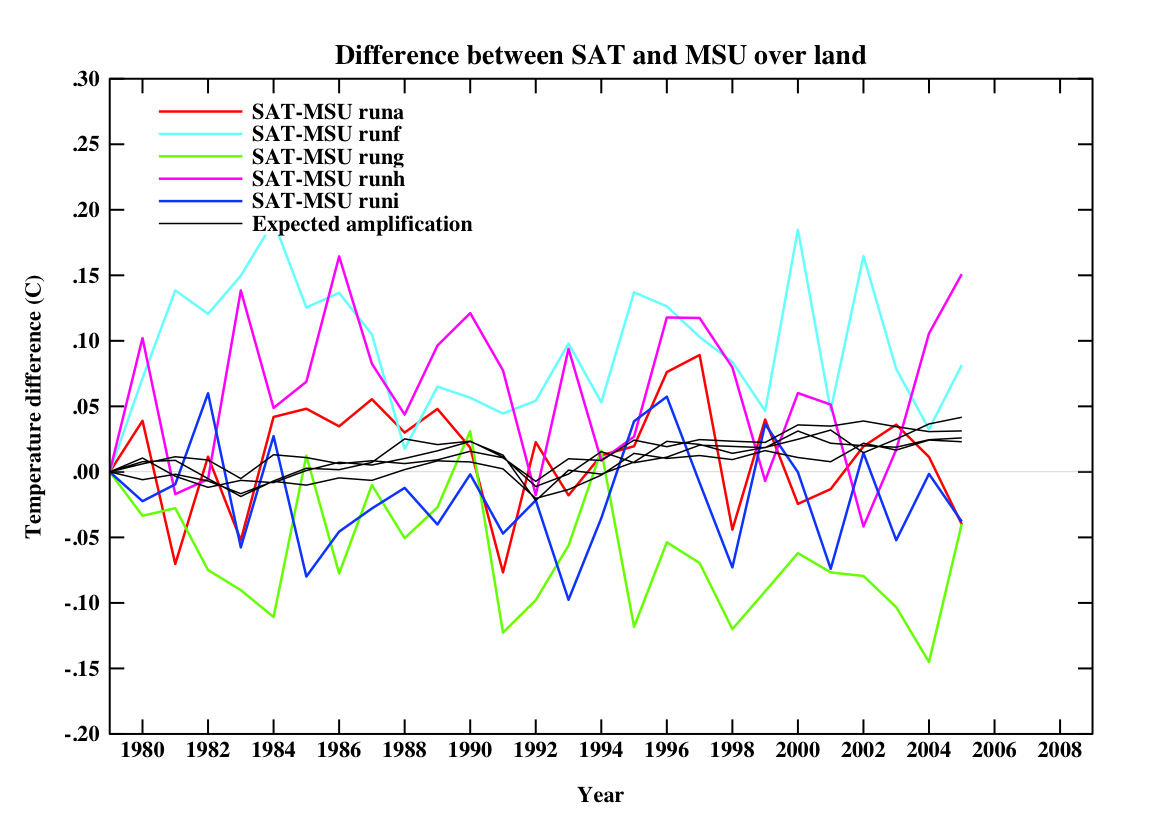

For reference, if you plot the equivalent quantities in the model that were in their figures, you’d get this:

(for 5 different simulations). Note that the ‘expected amplification’ line is not actually what you would expect in any one realisation, nor the real world. The differences on a year to year basis are quite large. Obviously, I don’t know what this would be like in different models, but absent that information, an expectation that land-only trend ratios should go like the global ratios can’t be supported.

Since I thought this was very likely an inadvertent mistake, I let Phil Klotzbach know about this immediately (in mid-August) and he and his co-authors quickly redid their analysis (within a week) and claimed that it was not a big deal (though their reply also made some statements that I thought unwarranted). Additionally, I provided them with the raw output from the model so that they could check my calculations. I therefore anticipated that the paper would be corrected at the proof stage since I didn’t expect the authors to want to put something incorrect into the literature. After a few clarifying emails, I heard nothing more.

So when the paper finally came out this week, I anticipated that some edits would have been made. At minimum I expected a replacement for the inappropriate McKitrick reference, a proper citation for the model output, acknowledgment that the amplification assumption might not be valid and an adjustment to the figures. I didn’t expect that the authors would have needed to change very much in terms of the discussion and so it shouldn’t have been too tricky. Note that the last paragraph in the paper does directly link the non-amplification over land to possible artifacts in the data products and so some rewriting would have been necessary.

To my great surprise, no changes had been made to the above-mentioned section, the figures or the conclusion at all. None. Not even the referencing correction they promised McKitrick.

This is very strange. Why put things in the literature that you know are wrong? The weird thing is that this is not a matter of interpretation or opinion about which reasonable people could disagree, but a straightforward analysis of a model that gives only one answer. If they thought McKitrick’s source data were appropriate, why wouldn’t they want the correct answer?

It’s almost always possible to make some edits in the proofs, and papers can always be delayed if there are more substantive changes required. Indeed, they were able to rewrite a section dealing with a misreading of the Lin et al paper that had been pointed out in September by Urs Neu (oddly, there is no acknowledgment of this contribution in the paper). Is it because they want to write a new paper? That’s fine, but why leave the paper with the old mistakes up without any comment about the problems (and two of the co-authors have already blogged about this paper without mentioning any of this or any of the other criticisms)? If these issues are trivial, then it would have been easy to fix and so why not do it? However, if they are substantive, the paper should have been delayed and not put in the literature un-edited.

I have to say I find this all very puzzling.

Note added in proof: I sent a draft of this blog post to Dr. Klotzbach and he assures me that the non-correction was just an oversight and that they will be submitting a corrigendum. He and his co-authors are of the opinion that the differences made by using the correct amplification factors are minor.

Gavin,

I had recently been calculating the trend field for the AR4 model data to get the amplification factor for some blog post I was working on. I found some strange results. Looking at it model-by-model, there are points in the ocean where the 2-m air temperature since Jan 1979 hasn’t increased appreciably. However, the synthetic TLT trend at that same point increased appreciably. So the amplification factor is either a large negative or positive number. Taking a global or tropical average gives a meaningful answer. But for what I was doing I needed to keep the data in gridded form. I was able to alleviate the problem of such huge numbers by just interpolating all the trend fields to a common grid and averaging. But the issue still bothers me. Do you have any idea why there would be such large amplification factors found over the ocean? Am I just looking at noise in that respect?

For those who’d like to see some pretty graphs of the satellite records minus the surface records: http://treesfortheforest.wordpress.com/2009/10/16/rss-vs-uah/

It doesn’t show the amplification factor, but it still gives you an idea of how the two metrics are changing relative to one another.

[Response: Hmm… I don’t think one expects grid-point amplifications to be useful since the horizontal advection terms (for both the atmosphere and ocean) will dominate over the vertical processes that are the basis of the expected amplification. Thus I wouldn’t worry about it too much. Averaging over latitude, or ocean basin or region is likely to be much more robust. – gavin]
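A purely numerical side of this can be shown with a toy example. The sketch below does not model advection or any other physics; it only illustrates that dividing one noisy trend field by another, point by point, produces huge positive and negative ratios wherever the surface trend is near zero, while the ratio of the averaged trends stays well behaved. All values are synthetic assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
n_points = 5000                                # hypothetical number of grid points

# Synthetic trends: mean surface trend 0.02, mean lower-troposphere trend 1.25 times that,
# each with independent point-to-point scatter (illustrative numbers only).
surface_trend = 0.02 + 0.02 * rng.standard_normal(n_points)
tlt_trend = 1.25 * 0.02 + 0.02 * rng.standard_normal(n_points)

pointwise_ratio = tlt_trend / surface_trend    # unstable where surface_trend is near zero
ratio_of_means = tlt_trend.mean() / surface_trend.mean()

print("largest |pointwise ratio|:", np.abs(pointwise_ratio).max())
print("fraction of negative ratios:", (pointwise_ratio < 0).mean())
print("ratio of averaged trends:", round(ratio_of_means, 3))
```

Averaging the trends first and dividing afterwards is the order of operations that keeps the denominator away from zero.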

[edit – moved to appropriate thread]

OT – I just noticed that the PDO is positive. Of course this could be a temporary condition so we will just have to wait and see…

http://www.esr.org/pdo_index.html

Strange, because I read on the internets that it was supposed to be negative for 30 years. Heck, there was even a video from some guy named Bastardo that said it was a 30 year cycle. Oh well… guess you just can’t trust just anyone that opines…

[Response: The Pacific Decadal Oscillation is not particularly decadal and is not oscillatory. There may be more to it than simply “noise”, but the data available are statistically indistinguishable from red noise with a physical time scale of about 1 year. This doesn’t mean it’s not real: it is clearly real in the sense that anomalies in SST show up in the Pacific, and on average they tend to last decades in one phase (but will happily move into the opposite phase for a year or three before switching back). It does mean, however, that it is not predictable. – eric]

Gavin,

I would like to report another flawed paper which became highly publicized : Lindzen and Choi 2009.

http://www.seas.harvard.edu/climate/seminars/pdfs/lindzen.choi.grl.2009.pdf

The claims of this paper are quite significant:

(1) That Earth’s climate shows a considerable negative (-1) feedback factor

(2) That models are completely wrong (since they show positive feedback)

(3) That climate sensitivity is 6x lower than previous projections.

Paper was peer-reviewed, accepted and published by Geophysical Research Letters (in August).

After the GRL publication, this paper was highly publicized in popular media, including Sen. Inhofe’s web site, numerous newspapers, and Lord Monckton presented it on Fox News as “the end of the (AGW) scam”.

Here are some of the references to these media publications :

http://masterresource.org/?p=4307

http://www.examiner.com/examiner/x-7715-Portland-Civil-Rights-Examiner~y2009m8d18-Carbon-Dioxide-irrelevant-in-climate-debate-says-MIT-Scientist

http://www.youtube.com/watch?v=8bLUEMWicyo&feature=related

Back in May, Chris Colose already pointed out several problems with the data used, as well as the negative feedback claims.

http://chriscolose.wordpress.com/2009/03/31/lindzen-on-climate-feedback/

Lindzen apparently adjusted the data set (to Edition 3), although he still used the Edition 2 data plots in the GRL paper.

But besides that, he still claimed negative feedback (which contradicts Wong et al.).

In August, James Annan and others early on already pointed at the questionable use of AMIP models in this paper for this purpose.

http://julesandjames.blogspot.com/2009/08/quick-comment-on-lindzen-and-choi.html

Then in the blogosphere more questions appeared around the claim of negative feedback in Lindzen and Choi.

It appeared that Lindzen eliminated the zero-feedback response in his formula.

http://motls.blogspot.com/2009/11/spencer-on-lindzen-choi.html

The omission of the zero-feedback response explains how Lindzen ‘found’ negative feedback.

But the most significant rebuttal came (unexpectedly) from Dr. Lindzen’s longtime ally Dr. Spencer :

http://www.drroyspencer.com/2009/11/some-comments-on-the-lindzen-and-choi-2009-feedback-study/

All these comments combined solidly debunk Lindzen and Choi 2009,

and we have not even dug into the exact way in which the negative feedback was “created” in this paper (you would be quite shocked).

Meanwhile, damage continues to be done in the media using this paper, and it continues to be talked about in the blogosphere.

Even worse, papers like this are being used as ‘proof’ that the ‘consensus’ view on climate science is wrong.

In that sense, they become the David (against Goliath) of the climate science debate.

This way, the authors of such papers become heroes in the eyes of the ones that don’t like the scientific ‘consensus’ view.

The scientific peer-review process is still a good way to filter out such mistakes.

However, once a flawed paper comes through that process, I claim that we currently have no good process in place to publicize the mistakes post-publication.

So, Gavin, I ask you this :

What do you think is the most efficient way to minimize damage done by such papers, or somehow keep a public record of suspect or rebunked papers?

I thought about making Wikipedia entries, pointing out mistakes made in papers under the authors’ wiki page.

Have you guys at RealClimate put any thought into this (flawed scientific paper containment) problem?

Thanks

Rob

P.S. I hope this post does not get lost in the massive amounts of postings here on RC.

> hope this post does not get lost …

Rob, you can search:

http://www.google.com/search?q=site%3Arealclimate.org+lindzen+choi

#53

Yes, it’s been a bad year for GRL. I know that there was a formal comment submitted for McLean et al, but I’m not sure about Lindzen and Choi. Still the critiques from James Annan and Chris Colose were quite devastating.

L&C received less attention than McLean et al, although Lord Monckton was very taken with it.

Just been reading about the denialists’ voice getting louder despite overwhelming evidence to the contrary, on this site: http://www.countercurrents.org/monbiot031109.htm

I think it’s time the people decide whether we wish to live or die. If we choose the former, then for Christ’s sake we should do something about the source of these misguided and/or malicious contagions. Despite the incredible unity of nearly all climate scientists on this problem, and their trying their damnedest to present their data to world governments, there are also voters out there who are getting swayed from the truth by these reprehensible denialist groups who seem to be immune to reason or logic. I think Gavin or Eric would agree on that one. If the majority of the populace believe that CC is not anthropogenic and therefore natural, and that eventually mother nature will guide us back to the climatic status quo, they won’t re-elect a government that’s going to impose hardline and economically painful greenhouse targets, will they? If this is the direction of the conditioning of the masses in most of the world’s most polluting countries, then there isn’t much hope, is there? I once thought Homo sapiens were smarter and wiser than ostriches. Maybe, sadly, I was mistaken?

Rob Dekker #53: You can find some of this already at the RealClimate Wiki though I agree it would be useful to have some sort of shadow publication wiki that would make it easier to find rebuttals in one place.

I like “rebunked” though I suspect it’s a typo. I take it as meaning papers that repeat bunk :)

OT, Building on the joke in 53: It might be helpful if somebody writes a comprehensive post on the PDO: How the index is calculated, what different values of the index look like in the SST patterns, whether or how it is linked to ENSO and atmospheric circulation, and so on.

> “rebunked”

Ding! a pithier term than “whack-a-mole.”

[Response: “Rebunking” it is then. We will use it whenever appropriate. – gavin]

Then WUWT definitely suffers from a rebunker mentality.

Background — good collections of papers here on various subjects including this in Lindzen’s ‘Iris hypothesis’:

http://agwobserver.wordpress.com/2009/11/13/papers-on-the-iris-hypothesis-of-lindzen/

As a layman, I take a very simple view of assessing AGW – I listen to what the experts say. I cannot hope to follow and evaluate all the science myself, that’s as daft as me trying to have opinions on brain surgery or rocket science. So I just look at the summaries, and here I have 2 sources – first, pretty much the world’s entire independent scientific academic institutions back the theory, and second virtually all the peer-reviewed literature does too. For me, that’s basically case closed and end of discussion.

However, I am keen to keep a careful watch on these two areas over time as well. I’m aware of the famous Science journal meta study in 2004 which found no peer-reviewed papers against the consensus. But it’s clear now that, subsequently, some have been published. I’d like a rough idea of how many of the papers published in the last five years that assess the question either way are for, and how many are against. I’d be extremely surprised if it wasn’t overwhelmingly FOR, but as I say I’d find the numbers (even approximate) more than a little useful. Could anyone here give an informed guesstimate?

As an outsider, I too think some form of formal correction procedure would be a very good idea, but I guess there are already published corrections by authors? I think a proper, independent site or webpage which can look at the big picture on these matters is sorely needed at this critical time.

Re #53, #59

I noticed I made a mistake. The guy’s name is Bastardi, not Bastardo.

Eric, thank you for the context.

I too like the term rebunked.

I also agree with tharanga (#59), but not just PDO. I’d like to see a post or posts that address the myriad ocean cycles, interactions, effects, etc.

Gavin, A couple of additional comments. First, I appreciate that you indeed seemed to think about what I wrote.

I will reiterate – we do indeed believe the surface data set is contaminated if you take the surface as providing a clean signal of anthropogenic greenhouse gases and that the warming is tied to the warming in the deeper troposphere. The surface temperature in nocturnal boundary layers and in more persistent stable boundary layers at high latitudes is more dependent on the state of turbulence than it is to the top boundary condition at the top of the boundary layer. The state of turbulence is then very sensitive to longwave radiation forcing and land use changes.

[Response: There’s no problem with that, and indeed, one would expect a good model to reproduce such effects. It just does not amount to any problem with the surface temperature record itself (whether there are other problems in that or not). – gavin]

In regard to Urs Neu #43 comment. He does have our definition of bias correct which few people seem to grasp.

[Response: In no small measure that can be blamed on the somewhat unclear use of the word bias in your paper and surrounding commentary. ]

I agree that the stable boundary layer is complex and changes can occur in both directions due to parameter changes. However, I strongly disagree that this effect is small. The biggest signal in the historical surface data is the reduction in the diurnal temperature change which is largely due to nocturnal warming. The reduction in the nocturnal warming since 1979 (Vose et al. 2005) may in part be due to the small sample size, since only about 1/3 the number of stations are retained after 1979 in the NOAA data set. Limited regional analyses (California, East Africa – Christy et al. 2009) appear to show continued and even accelerated nocturnal warming since 1979.

If it is so small why does it keep showing up in the historical record?

The dependence of the surface temperature on turbulence is not limited to strongly stable, light-wind, clear-sky conditions. It exists through the entire range of strongly stable to weakly stable boundary layers, although there are parameter spaces where it is more sensitive.

I was not involved in the Lin et al. study so can’t adequately discuss it. But the effect of increasing turbulent intensity (mixing more warm air down to the surface) means at some level the mixing cools the air aloft (generally near the top of the NBL). Thus it is not clear that trends between 9 meters and 1.5 meters are the right levels to capture this effect. I do note the last figure in Lin et al. shows in general a decrease in the diurnal temperature change.

Urs is absolutely correct that the SBL can be complicated and I continue to be surprised. I hope he and others can help unravel its complexities and role in interpreting temperature trends.

Guy, one other criterion you might add is the number of times a paper has been cited since publication. That gives a measure of its impact. For the most part, the denialist contributions sit there like a dog turd on a New York sidewalk. They simply don’t advance understanding of climate.

Guy: I don’t think that Naomi Oreskes’ study claimed to have found all published papers on climate change, as there certainly were a few published before her study appeared. But she had a decent and presumably representative fraction of those that had been published, thus showing that papers in contradiction to the consensus were a very small fraction of those appearing in the literature.

As Ray Ladbury notes, one also wants to look at whether the paper has been cited a lot, whether there have been comments on it or subsequent papers showing it to be incorrect, and where it was published, because of course peer review is not a perfect filter so some bad papers will get published (even in good journals). It is usually a bad sign if a paper is published in some obscure journal or in a journal that is in another field where they might have difficulty finding qualified reviewers. A good case of the former is the paper published by Ferenc Miskolczi [ https://www.realclimate.org/wiki/index.php?title=Ferenc_Miskolczi ] which appeared in some Hungarian journal. A good case of the latter is the paper by Gerlich and Tscheuschner [ https://www.realclimate.org/wiki/index.php?title=G._Gerlich_and_R._D._Tscheuschner ] published in a second-rate physics journal, although the physics in that paper was bad enough that even a competent reviewer ignorant of climate science should have been able to see through some of the major flaws and I am embarrassed for my own discipline that such nonsense did appear in a physics journal.

Guy, asking for pointers to corrections and followups, you should look at the RCWiki — there’s a link to it up at the top of each page, second button to the left of the “Start Here” link.

John P Reisman,

You pointed out the PDO was positive in October. This is correct – it was very marginally positive. However, in the two weeks since it has gone back negative due to stalled GOA lows. No one ever said it would stay negative. There do appear to be ~30 year periods where it is prone to being in one phase over another, but that doesn’t mean you can’t go a whole year with an extremely +PDO in a predominantly negative phase. Also, we are in an El Nino which tends to strongly favor a +PDO. If anything it is surprising the PDO isn’t much higher than it is now considering the El Nino has been >1.7 in region 3.4 lately and the tri-monthly will be moderate or strong.

Thank you Ray, Joel and Hank for some useful insights. Playing devil’s advocate here for a moment, I guess a contrarian (which I very much am not!) might argue that a lack of citation reveals institutional bias in ignoring inconvenient evidence. Also comments about “second rate journals” might very well be true, but sound dangerously subjective to someone who just wants the basic facts – again, are we transparently sure that we’re not just ignoring bits we don’t like? Gavin’s arguments on this thread seem perfectly reasonable to me, but can I really honestly assess myself under all the accusation and counter-accusation whether or not he or Pielke has the more reasonable argument? Can I appeal to the volume of peer-reviewed literature each has contributed to the science? Is such information easy for the casual lay-person to come by, and if found is it necessarily a reliable indicator of who is right?

I keep feeling I need a bigger scientific overview.

I think there is a need for a gigantic (perhaps wiki?) spreadsheet where each climate science paper is listed, the journal it came from, the number of citations and whether it has any conclusions which back, question or do not comment on the basic theory of AGW (preferably with a reference and a relevant quote to illustrate). A similar (and much smaller) spreadsheet could cover academic institutions’ public statements.

The public debate is so often inflammatory and filled with claim and counter-claim that no reasonable lay-person can be expected to independently judge it. As a consequence, the myth that science is somehow equally divided continues to spread, and is becoming ever more accepted as an accurate summary (partly explaining the alarming drop in public support). I’d love something that was brutal and basic – just a hard data summary devoid of any editorialising one way or the other. To be able to say with some reasonably objective grounds that x% of published peer reviewed literature raises doubts on the theory each year, and how that figure changes over time, would be priceless imho.

Guy suggests “that a lack of citation reveals institutional bias in ignoring inconvenient evidence.”

Except that to ignore something that truly advances understanding of climate makes it that much less likely that one’s future work will be worthwhile. You would have to explain what would motivate a scientist to tie a hand behind his back in his efforts to understand his field of research. And even if one scientist were so biased, what are the chances that his peers would be so biased and short-sighted to act counter to their own interests?

The database you ask for doesn’t really exist just yet. However, something equally useful does exist:

http://www.eecg.utoronto.ca/~prall/climate/climate_authors_table.html

You will find your denialists well down the list. It is not that they are stupid or evil–rather, it is that their way of looking at climate is not productive. In other words, if you reject a value of CO2 sensitivity greater than 2, you can’t understand Earth’s climate.

> I keep feeling I need a bigger scientific overview.

http://www.ipcc.ch/

It may take a few years to read; that’s why the people who read the papers listed there boiled them down to summary form, and continue to do so every five years or so.

Expanding on #72

Guy,

IPCC AR4 WG1 report need not be totally overwhelming. Start with the Summary for Policymakers and then read the Executive Summaries of chapters that interest you. The FAQ sections that are a feature of a couple of the longer chapters are also accessible.

The chapter that gives the background for issues concerning the recent temperature record is Chapter 3, “Observations: Surface and Atmospheric Climate Change”.

Ray Ladbury wrote in 71:

I believe that particular insight isn’t so much a matter of science or even the philosophy of science, but of something much more basic. In any case it is something which I hadn’t seen before — at least in that context or from that perspective. However, I assume the three alternatives you’ve mentioned aren’t in any way mutually exclusive.

Oh — and welcome back!

Ray, please note that the lack-of-citation-indicates-bias argument categorically isn’t my view (or even suggestion) at all, but it is a possible contrarian argument. Thanks for that link though, it certainly looks useful.

Similarly, I’ve found a ludicrous resistance to any argument that involves the IPCC, as it is “government-influenced” (never mind the fact that the influence works the opposite way to how the contrarians pretend!) I understand the basic science, and the most fundamental arguments from the AGW side, but in my experience within arguments things very quickly get bogged down in detail which goes beyond my ability to sensibly assess.

Have to say, all this is convincing me further that this sort of database is needed. With all the endless arguing over the science and bias, it seems somewhat perverse that a simple (if large) summary of all the peer-reviewed scientific papers does not exist. It would do us all a great service, I’m sure.

Re 65

Ralph, thanks for the comments. I can only agree with your statement that there could be some changes in the structure of the Nocturnal Boundary Layer (NBL), be it through vegetation changes or through changes in turbulence. However, there isn’t any (observational) evidence at all that there really have been any NBL trends related to such processes (the only observations in Lin et al. show a cooling influence).

I do not understand on what evidence you base your suggestion that this influence is not very small.

The fact that nighttime temperature increases more than daytime temperature is something that we just expect from the increase of greenhouse gas concentrations. But this effect does not depend on the state of the boundary layer at all. It occurs independent of the NBL state. On the contrary, this effect might influence the characteristics of the NBL. Thus it is rather the warming of the surface that leads to a destabilization of the NBL, and not changes in the NBL which alter surface temperature.

With respect to this the use of the term ‘bias’ is somewhat strange. You use this term for the difference between trends at the surface and in the lower troposphere, while ‘bias’ mainly is used to describe the difference of a measurement to a suggested ‘real-world’ value. There might be differences between surface and lower troposphere trends in the real-world (they are even expected to occur), so the use of ‘bias’ might be misleading. The warming of the surface through increased longwave radiation (clouds or GHGs) is a real-world phenomenon, which influences vegetation, snow cover, animals, etc., independent of what is happening in the lower troposphere.

Guy, One thing that I think it is important to appreciate is that publication in climate is directed toward understanding the climate–not to whether anthropogenic causation is “real”.

It is only the denialists who set out with the goal of “disproving” anthropogenic causation. It is probably better to view work as either consistent or inconsistent with the consensus theory of climate–of which anthropogenic causation of the current warming epoch is an inescapable consequence. To date there is no strong evidence that is inconsistent with this theory, and there is a mountain–or rather a whole world–of evidence that favors it.

> a simple (if large) summary of all the peer-reviewed scientific papers does not exist.

The lists at the IPCC site are what you want.

No individual can be an expert on all the areas involved; no individual has time to read all the papers written and being written, even as an amateur.

If you want something oversimplified to the point of deception, that’s easy; co2science, and Morano’s ever-growing list, and such — which pretend to summarize papers, putting their own desired conclusions on the page, knowing most readers can’t tell the difference.

Possibly you’re channeling questions from someone who’s working up to the “no human can possibly understand climate, so there’s no way we could be affecting it” notion, which is as bankrupt as, well, the banking system.

I had the impression these experts and their work didn’t exist?

450 Peer-Reviewed Papers Supporting Skepticism of “Man-Made” Global Warming

http://www.populartechnology.net/2009/10/peer-reviewed-papers-supporting.html

[Response: We’ll probably have some collective effort to look more carefully at this, but there is a lot of nonsense in that list, padded out with papers that are completely mainstream and not ‘skeptical’ at all, and containing dozens of mutually incompatible results (Khilyuk and Chilingar anyone?). Still there are clearly some papers (as we discussed above) that get into the literature despite fundamental flaws. A nice complement to this list would be the number of papers that subsequently rebut them (lots) or how often they are cited outside the ‘skeptic’ circle (rarely). Nonetheless, even if this list was legit (which it isn’t), 450 papers in ten years (or so) out of the tens of thousands on the subject is a pretty small fraction. – gavin]

Hi everyone,

Just two quick questions about peer review. Recently I submitted a paper (not on climate change) to an Elsevier journal and was surprised that I could not complete my submission until I had provided them with the names and contact details of two suggested reviewers. This seemed odd to me. I have published in AMS journals and they did not request such data. So my questions are:

1) Is this practice of asking authors to provide suggestions for reviewers becoming more common?

2) Can one opt out? That is, fill the dialogue box with “I am not comfortable doing that”

Thanks.

Hank – could you provide a URL on the IPCC site? I can’t see any lists of peer-reviewed papers.

It’s a fairly cheap shot to say I want something oversimplified to the point of deception. I’m asking for a very straightforward and reasonable proposition (at least in concept) – a list of papers, and whether or not any of their contents back or challenge AGW (or, as most will be, neutral because it doesn’t make any comment one way or the other).

Likewise I’m channeling nothing. There is a basic concept that I think isn’t being understood here. Unless we work in the field, we don’t have the expertise to make informed judgements on climate science. I don’t work in the field, so my only rational response is to rely on those who do. I’m asking for basic data here, which doesn’t seem to be available. If it is impossible, fair enough… but is it really impossible?

I get that there needs to be an assessment of each paper, which is a time consuming process. I’m kind of hoping that some starting points must be out there somewhere.

There seems to be not nearly enough concern that public acceptance of AGW has dropped, despite all the efforts of Real Climate, the IPCC, and the entire scientific community. On the contrary, I often detect a rather weary attitude for everyone to just go read more. This approach, clearly, isn’t working. Also, as debates degenerate into mudslinging on both sides, the public simply deduce – incorrectly – that the science is equally divided.

The public need to be able to see facts without bias. The contrarian campaign is very effectively fought, and science is currently losing, as far as I can tell.

RE: Gavin’s in-line response to John H:

Greenfyre’s already on the case.

Gavin,

I fully suspected and expected that some or many of the 450 were not legit.

However, that was not my point.

I can’t tell you how many times I’ve been told by AGW voices that there are NO qualified skeptics or peer reviewed/published work by them.

Including right here by RC regulars.

In truth there is serious work and questions raised by significant work by very qualified skeptics which has been peer reviewed and published.

It should be at least a bit disturbing for this type of denial to have been perpetrated with such a chorus.

It’s one thing to engage and refute. But it’s not right to misrepresent as not even existing the counter viewpoints.

I fully recognize the adversarial environment between the two opposing camps which RC and CA/WUWT represent, but the perpetual declaration that there is no legitimate rejection of AGW is out of line.

[Response: Err… actually, no it isn’t. We have never claimed to my knowledge that no papers purporting to be skeptical of AGW have ever been published. On the contrary, we spent a disproportionate amount of our time dealing precisely with the odd bit of nonsense that gets through the initial peer-review filter. But no random list of nonsensical or irrelevant papers (however long) is the same as stating that there is a ‘legitimate rejection’ of AGW – not even close. How is Chilingar’s complete ignorance of what the greenhouse effect is, along with perfectly legitimate papers that examine the role of CO2 feedbacks in the ice age cycle, and papers that don’t even think that the surface temperature record even exists, a coherent argument? If you like, pick out 10 papers from the list that you think constitute the ‘best shot’ at being a ‘legitimate rejection’ and we can discuss the actual issues. – gavin]

John H. @ 79:

Two quick points about that silly list:

1) I find it curious that about 80 of the references are from Energy & Environment. Despite the blog editors’ disingenuous assertion to the contrary, it is a journal of such low quality that it’s not listed on Thomson ISI and, more damningly, even RPJr. regretted publishing there. Of course, I am not saying that E&E is not a peer-reviewed journal, but as Jay Sherman would say, “IT STINKS!”

2) I’m not sure about this (can someone verify this?), but most of the 18 Climate Research papers in that list were published when Chris de Freitas was one of the editors…and let’s just say that he did a pretty poor job in editing – so much so that the then incoming editor-in-chief and 4 other editors resigned in protest when a poorly-written, highly-flawed paper was published.

Guy, no cheap shot intended. There are oversimplified lists of papers available, I wondered if whoever is asking you these questions was steering you toward finding them.

The IPCC documents are mostly PDFs — you have to click on the links, open the documents, then look at the references.

Ray’s advice was good above:

“The database you ask for doesn’t really exist just yet. However, something equally useful does exist:

http://www.eecg.utoronto.ca/~prall/climate/climate_authors_table.html “

Also, Guy, this is important — a paper is cited by a report as the source for some particular point; having a list of papers in an area tells you very little if you’re not an expert in that area.

A list of papers that were relied on by one of the IPCC panels — by itself — tells you nothing about which particular point in each paper was cited (footnoted) — it may have been cited as support for a particular observation or conclusion among many others.

I’m sure you know this, but you might explain it to whoever’s asking you for such a list that the reason the lists are at the back of the papers or reports citing them is because each one is the cite for some individual statement in the main paper.

It’s not easy learning to use a science library. Just going to the shelf list to see what’s in it isn’t going to help a whole lot in catching up in any individual area. Much of what’s on the shelves may be outdated, but some of what’s there is good information. Knowing how to find that is part of being able to write such a paper, citing for the individual points relied on to the paper that’s still good on that bit of information.

Well, I’m done, this is really just a poor attempt at saying what I think the library reference desk would tell your friend.

On the sceptic 450: We should be careful not to say something is shoddy just because it’s in Energy and the Environment. There’s an off-chance that something worthwhile might end up in there. I’d say that a paper in E&E should be treated with the same regard as a blog post or a letter to the editor: it hasn’t passed any real bar, but it might still contain useful information.

How do they keep persisting in saying that “CO2 lags temperature in the ice ages” is a disproof of CO2 as a greenhouse gas? How many times does this have to be addressed for it to sink in? If I recall correctly, didn’t Hansen even predict the lag, before it was clearly resolved? If so, how can a successful prediction by Hansen be turned into a sceptic talking point?

Guy, 81: The IPCC reports can probably be considered the most comprehensive review papers ever written. Just go to http://www.ipcc.ch/, click on WG1, 2 or 3, and read up on whichever topic interests you. The report text has all the references you’ll ever want. It even notes some papers which are often cited by sceptics. A simple list of references without any context at all isn’t really helpful, though that’s what the sceptics have produced with their 450.

FWIW, since the issue is raised again by Richard McNider, the use of bias in their paper is consistent with how it is used in electronics but not as a general usage. Surprisingly, this has not come up much.

[Response: I don’t think this is the problem at all. I am very happy with the use of the word ‘bias’ implying some offset from an expected signal (as opposed to the colloquial connotations of the word). The issue is that the language in the paper very often conflates a bias of the surface temperature record qua surface temperature record (which is what most people are concerned about), with a bias in the surface temperature record as an indicator of atmospheric heat content changes (which these authors are concerned with but which I can find no trace of anyone else actually assuming). – gavin]

re: consensus

Gavin, would it be fair to describe the results on the linked page below, on findings from the AIRS satellite data, as showing a lack of immediate consensus ?

AIRS Satellite – Significant Findings

Relating to water vapor data, the web-page lists 2 papers (published in Climate and GRL) that find that their confidence in the models is increased (including the Dessler et al. 2008 paper mentioned in some news articles), and 3 papers (Climate, GRL x 2) that find that the models show too much moisture between 300 mb and 800 mb, and too little below 800 mb, lowering confidence in the accuracy of the model predictions.

(There’s also a paper (GRL) on that page that finds that “the apparent good agreement of a climate model’s broadband longwave flux with observations may be due to a fortuitous cancellation of spectral errors.”)

I assume that the Climate and GRL aren’t considered to be beyond the pale in the way that E&E is.

With respect to citation counts, the ‘pro-AGW’ papers were cited 3-4 times each, compared to the ‘anti-AGW’ papers, cited 7-8 times each. The ‘pro-AGW’ papers were published later though, in 2008, compared to 2006-7.

As an amateur, how should I be approaching these papers and their findings?

[Response: I have no idea what strawman argument you are making here. Why do you think these papers are contradictory to mainstream thinking on water vapour feedback issues? Science at the cutting edge is always about what is as-yet-unknown (obviously). You appear to be conflating cutting edge explorations of the details of new data sets with some undermining of well-established textbook stuff. No-one here has ever claimed there is no more to find out or that no disagreements among scientists exist, and we have certainly never claimed that models are perfect, so what are you arguing with? This is simply the normal course of science – new data leads to improved understanding and hopefully better predictions. You as an amateur could be interested in that, or be happy that new stuff is being incorporated into the science. But you shouldn’t immediately jump to the conclusion that a single new paper immediately invalidates everything in the textbooks. – gavin]

(The citation counts above come from a Google Scholar search for the title of each paper.)

Terran, did you read the page you link to, or just copy the link from somewhere else? The first text on the page says:

“These studies used the AIRS data to show that surface warming leads to an increase in water vapor. This water vapor acts as a greenhouse gas and amplifies the surface warming. The AIRS observations are also consistent with warming predicted by numerical climate models, increasing confidence in model predictions of future warming….”

On topic, Annan, who’s worked with Pielke in the past, has a pretty good review of this issue.

http://julesandjames.blogspot.com/2009/11/klotzbach-ad-nauseam-2009.html

Oops, too hasty in hitting ‘Submit’ — followup:

Terran, you need to go beyond counting citations per year. Number of citations per year is just one piece of information.

You have to look at what it is that the citing paper is referring to — what particular statement or piece of information the footnote is next to that is cited to the source paper.

Take this one:

http://scholar.google.com/scholar?hl=en&q=DOI%3A+10.1175%2FJAS3782.1

Look at the citing papers if you have access to them; look for the citation and see what the point was for which the paper was cited.

Citing something isn’t a stamp of approval — it’s a flag saying that paper has a particular piece of information in it and the citing paper is referring to that source.

What people make of the information may be quite different than what the original paper made of the same information — a citation can be a correction, an improvement, an extension of a line of thought.

Don’t make the mistake of thinking that scientists are setting out to write “pro” or “anti” papers on the global subject you’re interested in. They’re writing about specific things and citing other authors for specific things in those papers.

The bias thing has been a source of considerable comment, however, IEHO, even here, your use of bias as a simple offset is not quite what it means in electronics. There biasing sets voltages at various points in the circuit in a way that determines the operating parameters of the system, something pretty close to the meaning of the paper, where it is claimed that quiescent wind conditions bias the system to the warm side.

Much ado about nothing, but this is a blog, eh?

MarkB #92:

“On topic, Annan, who’s worked with Pielke in the past …”

I looked for publications on which they were co-authors, and came up empty. Could you point me to their “work” together?

Gavin,

Pierce et al. 2006 compared AIRS water vapor observations to 22 models from the PCMDI database (1990-1999), and additionally the NCAR CCSM3, CSIRO Mk 3, MPI ECHAM5, UKMO HadCM3, and CCCMA CGCM3-T63 models.

John and Soden 2006 compared the NCAR CAM3 to observations, and Gettelman et al. 2006 used 16 climate model simulations from WCRP CMIP3 database for their comparison.

The 3 papers found a systematic tendency in most GCMs to overestimate the moisture levels in the upper troposphere, and to underestimate them in the lower troposphere.

Since the Dessler paper and the Gettelman and Fu paper were written about a year or so afterwards, is it possible that models were amended as a result and hence the good performance later?

I asked hoping for an explanation for what seem to be contradictory results, and an area of non-consensus. These papers are referenced by NASA, they were published by reputable journals, and they haven’t been found to be flawed, as far as I’m aware.

Hank, I included the citation counts merely out of interest, as they’d been mentioned earlier. I don’t interpret them as providing any hard information about the truth of a paper, whatever their usefulness as a heuristic guide.

[Response: Don’t know particularly. But the updated models from CMIP3/AR4 are only starting to be run now, so it’s unlikely that the models were suddenly changed between papers. – gavin]

Guy (#70) says: “A similar (and much smaller) spreadsheet could cover academic institutions’ public statements.”

Not sure that academic institutions will tend to make official public statements directly about climate change (although a lot will probably do so implicitly by explaining what actions they are taking in terms of mitigation or academic programs geared toward sustainability). However, a good source of the statements from various scientific organizations (professional societies, national academies of science, etc.) is this Wikipedia page: http://en.wikipedia.org/wiki/Scientific_consensus_on_climate_change

Terran: Here is the abstract of John and Soden 2006 paper —

“A comparison of AIRS and reanalysis temperature and humidity profiles to those simulated from climate models reveals large biases. The model simulated temperatures are systematically colder by 1–4 K throughout the troposphere. On average, current models also simulate a large moist bias in the free troposphere (more than 100%) but a dry bias in the boundary layer (up to 25%). While the overall pattern of biases is fairly common from model to model, the magnitude of these biases is not. In particular, the free tropospheric cold and moist bias varies significantly from one model to the next. In contrast, the response of water vapor and tropospheric temperature to a surface warming is shown to be remarkably consistent across models and uncorrelated to the bias in the mean state. We further show that these biases, while significant, have little direct impact on the models’ simulation of water vapor and lapse-rate feedbacks.”

In other words, despite the fact that the mean state of the moisture in the atmosphere has large biases in the models, and that the bias varies from model to model, the models consistently react in the same way to a surface warming…and, in particular, these mean-state biases seem to have very little effect on the simulations of the feedbacks.

So, the only way this seems to fall into the category of a paper going against the consensus is if you make the consensus a strawman that “the models are perfect in all respects”. It in no way shows that the water vapor feedback is being exaggerated by the models and, in fact, Soden has done some nice work previously showing that the models do a good job with the water vapor feedback, both in comparison to satellite signatures of upper tropospheric moistening and in simulating the temperature after the eruption of Mt. Pinatubo.

Terran: And, Pierce et al. 2006 basically reach the same conclusion as John and Soden with regard to the mean state and then basically “punt” on the issue of whether this matters for climate change predictions, noting that it might but would need further investigation.

I suppose this paper could be classified as potentially representing a challenge to the consensus view but basically inconclusive on that point and, when taken along with the John and Soden paper (along with other work on the water vapor feedback like Dessler’s), the evidence seems to be that the identified deficiencies in the models do not end up having a significant effect on the strength of the water vapor and lapse rate feedback.

I was following a discussion at http://wattsupwiththat.com/2009/11/09/a-statistical-significant-cooling-trend-in-rss-and-uah-satellite-data/ in which much was made of the difference between RSS and UAH data, so I downloaded the data from woodfortrees and started playing with it in my own spreadsheet (so that I could overlay graphs displayed separately at WUWT). I noticed what appeared to be an annual signal in the difference RSS-UAH in the recent record, and I have convinced myself that the annual signal appears in the UAH data, starting in 2002. Jeff Id pointed out that the UAH data source changed to the Aqua satellite in 2002.

You can see the sequence of events including my mistakes in using the analysis tools at woodfortrees by searching on my name at the WUWT URL. The bottom line is the signal at a 1 year harmonic in the UAH data – see http://www.woodfortrees.org/plot/rss/from:2002/window/fourier/magnitude/from:2/to:40/plot/uah/from:2002/window/fourier/magnitude/from:2/to:40/plot/nsidc-seaice-n/from:2002/scale:0.01/window/fourier/magnitude/from:2/to:40. One data processing error that would cause an annual signal to appear would be to shift the phase between the baseline and current data when calculating the anomaly (I very much doubt such a simple mistake would occur). I don’t think such a phase shift would cause a change in trend. Are there processing inaccuracies (in going from the raw satellite data to the temperature anomaly data) which could simultaneously produce an annual signal and differences in trend compared to surface data? Is finding and correcting such inaccuracies a big publishable project?
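The phase-shift idea in the comment above can be tried on synthetic data. What follows is only a toy sketch, not the RSS or UAH processing: the unit seasonal amplitude, the half-month shift, the 0.02 per year trend and all function names are assumptions made up for illustration. It subtracts a mis-phased climatology from a series with a known trend, then fits the trend jointly with an annual harmonic and, separately, with a plain straight line.

```python
import numpy as np

def make_anomaly(n_years, shift_months, amplitude=1.0, trend_per_year=0.02):
    """Monthly anomaly series built with a climatology that is out of phase.

    All parameter values are illustrative assumptions, not real satellite data.
    """
    t = np.arange(n_years * 12) / 12.0  # time in years, monthly steps
    observed = amplitude * np.cos(2 * np.pi * t) + trend_per_year * t
    # Baseline seasonal cycle, offset in phase by `shift_months`
    climatology = amplitude * np.cos(2 * np.pi * (t - shift_months / 12.0))
    return t, observed - climatology

def fit_trend_and_annual(t, series):
    """Least-squares fit of offset + linear trend + one annual harmonic."""
    design = np.column_stack([
        np.ones_like(t),
        t,
        np.cos(2 * np.pi * t),
        np.sin(2 * np.pi * t),
    ])
    coef, *_ = np.linalg.lstsq(design, series, rcond=None)
    return coef[1], np.hypot(coef[2], coef[3])  # trend, annual amplitude

def naive_trend(t, series):
    """Slope of a straight-line fit that ignores the annual harmonic."""
    return np.polyfit(t, series, 1)[0]

t, anomaly = make_anomaly(n_years=8, shift_months=0.5)
trend, annual_amp = fit_trend_and_annual(t, anomaly)
print("joint fit: trend", trend, "annual amplitude", annual_amp)
print("straight-line fit: trend", naive_trend(t, anomaly))
```

In this toy setup the phase error leaves a pure annual sinusoid of amplitude 2A sin(φ/2), where φ is the phase offset, and the joint fit recovers the underlying trend. A plain straight-line fit over a short record can still pick up some spurious slope from that sinusoid, depending on where the record starts and ends, and the effect shrinks as the record gets longer.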

It also has occurred to me that global warming might cause a phase shift in the annual temperature cycle (warming earlier in spring and cooling earlier in fall, or vice versa), so an annual signal popping up in an anomaly series might not be a processing artifact but a real signal. It just occurred to me that warming earlier and cooling later would create a six month harmonic, which shows up around harmonic 15 in the woodfortrees graph. wut’s up wit dat? &;>)
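The two cases in that last comment can be compared on an idealized seasonal cycle. This is a sketch under loud assumptions: the cycle is a pure cosine of unit amplitude, "warming earlier and cooling later" is modelled by one particular phase warp, cos(θ − ε sin θ), and ε = 0.1 is arbitrary. None of this comes from the satellite records.

```python
import numpy as np

def harmonic_amplitudes(series, samples_per_year=12):
    """Return (frequencies in cycles/year, amplitude spectrum) of a series."""
    n = len(series)
    spectrum = 2.0 * np.abs(np.fft.rfft(series)) / n
    freqs = np.fft.rfftfreq(n, d=1.0 / samples_per_year)
    return freqs, spectrum

def amplitude_at(freqs, spectrum, cycles_per_year):
    """Amplitude in the spectral bin closest to the requested frequency."""
    return spectrum[np.argmin(np.abs(freqs - cycles_per_year))]

n_years = 8
eps = 0.1  # hypothetical size of the seasonal change, in radians
theta = 2 * np.pi * np.arange(n_years * 12) / 12.0
baseline = np.cos(theta)  # idealized seasonal cycle, peak at theta = 0

# Case 1: the whole cycle arrives earlier (warming AND cooling earlier)
shift_anomaly = np.cos(theta + eps) - baseline

# Case 2: warming earlier in spring, cooling later in fall (longer warm season)
stretch_anomaly = np.cos(theta - eps * np.sin(theta)) - baseline

for name, anom in [("shift", shift_anomaly), ("stretch", stretch_anomaly)]:
    freqs, spec = harmonic_amplitudes(anom)
    print(name,
          "1/yr:", amplitude_at(freqs, spec, 1.0),
          "2/yr:", amplitude_at(freqs, spec, 2.0))
```

With these assumptions, a uniform shift puts the anomaly at one cycle per year, while the stretched warm season puts almost all of it at two cycles per year (to first order cos θ + ε sin²θ, i.e. an offset plus a term in cos 2θ). That is consistent with the six-month harmonic intuition above, though a real seasonal cycle is not a pure cosine.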