We’ve often discussed the how’s and why’s of correcting incorrect information that is occasionally found in the peer-reviewed literature. There are multiple recent instances of heavily-promoted papers that contained fundamental flaws that were addressed both on blogs and in submitted comments or follow-up papers (e.g. McLean et al, Douglass et al., Schwartz). Each of those wasted a huge amount of everyone’s time, though there is usually some (small) payoff in terms of a clearer statement of the problems and lessons for subsequent work. However, in each of those cases, the papers were already “in press” by the time other people were aware of the problems.

What is the situation though when problems (of whatever seriousness) are pointed out at an earlier stage? For instance, when a paper has been accepted in principle but a final version has not been sent in and well before the proofs have been sent out? At that point it would seem to be incumbent on the authors to ensure that any errors are fixed before they have a chance to confuse or mislead a wider readership. Often in earlier times corrections and adjustments would have been made using the ‘Note added in proof’, but this is less used these days since it is so easy to fix electronic versions.

My attention was drawn in August to a draft version of a paper by Phil Klotzbach and colleagues that discussed the differences between global temperature products. This paper also attracted a lot of comment at the time, and some conclusions were (to be generous) rather unclearly communicated (I’m not going to discuss this in this post, but feel free to bring it up in the comments). One bit that interested me was that the authors hypothesised that the apparent lack of an amplification of the MSU-LT satellite-derived trends over the surface record trends over land might be a signal of some undiagnosed problem in the surface temperature record. That is not an unreasonable hypothesis (though it is not an obvious one), but when I saw why they anticipated that there should be an amplification, I was a little troubled. The key passage was as follows:

The global amplification ratio of 19 climate models listed in CCSP SAP 1.1 indicates a ratio of 1.25 for the models’ composite mean trends …. This was also demonstrated for land-only model output (R. McKitrick, personal communication) in which a 24-year record (1979-2002) of GISS-E results indicated an amplification factor of 1.25 averaged over the five runs. Thus, we choose a value of 1.2 as the amplification factor based on these model results.

which leads pretty directly to their final conclusion:

We conclude that the fact that trends in thermometer-estimated surface warming over land areas have been larger than trends in the lower troposphere estimated from satellites and radiosondes is most parsimoniously explained by the first possible explanation offered by Santer et al. [2005]. Specifically, the characteristics of the divergence across the datasets are strongly suggestive that it is an artifact resulting from the data quality of the surface, satellite and/or radiosonde observations.

(my emphasis).

For reference, the amplification is related to the sensitivity of the moist adiabat to increasing surface temperatures (air parcels saturated in water vapour move up because of convection where the water vapour condenses and releases heat in a predictable way). The data analysis in this paper mainly concerned the trends over land, thus a key assumption for this study appears to rest solely on a personal communication from an economics professor purporting to be the results from the GISS coupled climate model. (For people who don’t know, the GISS model is the one I help develop). This is doubly odd – first that this assumption is not properly cited (how is anyone supposed to be able to check?), and secondly, the personal communication is from someone completely unconnected with the model in question. Indeed, even McKitrick emailed me to say that he thought that the referencing was inappropriate and that the authors had apologized and agreed to correct it.

So where did this analysis come from? The data actually came from a specific set of model output that I had placed online as part of the supplemental data to Schmidt (2009) which was, in part, a critique of some earlier work by McKitrick and Michaels (2007). This dataset included trends in the model-derived synthetic MSU-LT diagnostics and surface temperatures over one specific time period and for a small subset of model grid-boxes that coincided with grid-boxes in the CRUTEM data product. However, this is decidedly not a ‘land-only’ analysis (since many met stations are on islands or areas that are in the middle of the ocean in the model), nor is it commensurate with the diagnostic used in the Klotzbach et al paper (which was based on the relationships over time of the land-only averages in both products, properly weighted for area etc.).

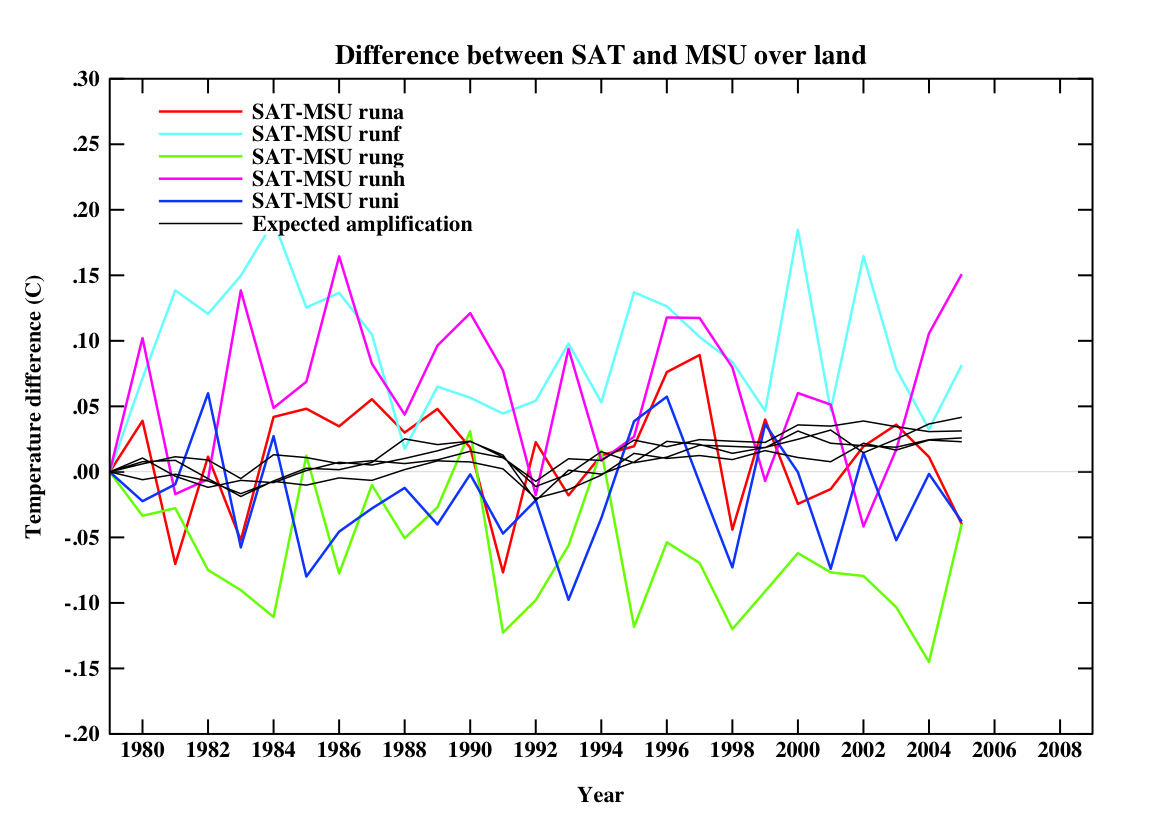

It was easy for me to do the correct calculations using the same source data that I used in putting together the Schmidt (2009) SI. First I calculated the land-only, ocean-only and global mean temperatures and MSU-LT values for 5 ensemble members, then I looked at the trends in each of these timeseries and calculated the ratios. Interestingly, there was a significant difference between the three ratios. In the global mean, the ratio was 1.25 as expected and completely in line with the results from other models. Over the oceans where most tropical moist convection occurs, the amplification in the model is greater – about a factor of 1.4. However, over land, where there is not very much moist convection, which is not dominated by the tropics and where one expects surface trends to be greater than for the oceans, there was no amplification at all!

The land-only ‘amplification’ factor was actually close to 0.95 (+/-0.07, 95% uncertainty in an individual simulation arising from fitting a linear trend), implying that you should expect land surface temperatures to rise (slightly) faster than the satellite values. Obviously, this is very different to what Klotzbach et al initially assumed, and leaves one of the hypotheses of the Klotzbach paper somewhat devoid of empirical support. If it had been incorporated into their Figures 1 and 2 (where they use the 1.2 number to plot the ‘expected’ result) it would (at minimum) have left a somewhat different impression.
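For readers who want to see the shape of the calculation described above, here is a minimal sketch in Python. It is not the code or the data used for the GISS model analysis: the function names (`area_weighted_mean`, `linear_trend`, `amplification_ratio`), the cosine-of-latitude weighting (which assumes a regular latitude-longitude grid) and the synthetic series are all assumptions made purely for illustration. The idea is simply to average each field over the region of interest with area weights, fit a linear trend to each resulting timeseries, and take the ratio of the lower-troposphere trend to the surface trend.

```python
import math
import random


def area_weighted_mean(values, lats_deg, mask):
    """Mean of grid-box values over boxes where mask is True,
    weighted by cos(latitude) (assumes a regular lat-lon grid)."""
    weights = [math.cos(math.radians(lat)) if keep else 0.0
               for lat, keep in zip(lats_deg, mask)]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


def linear_trend(years, values):
    """Ordinary least-squares slope of values against years."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    return sxy / sxx


def amplification_ratio(years, surface, lower_trop):
    """Ratio of the lower-troposphere trend to the surface trend."""
    return linear_trend(years, lower_trop) / linear_trend(years, surface)


if __name__ == "__main__":
    # Synthetic, made-up regional-mean series (NOT model output):
    # a prescribed trend plus random year-to-year noise.
    rng = random.Random(0)
    years = list(range(1979, 2003))

    def synthetic(trend_per_year, noise=0.05):
        return [trend_per_year * (y - years[0]) + rng.gauss(0.0, noise)
                for y in years]

    surface = synthetic(0.020)
    lower_trop = synthetic(0.025)
    print("ratio:", round(amplification_ratio(years, surface, lower_trop), 2))
```

With noisy series the ratio from any single realisation will scatter around the ratio of the prescribed trends, which is one reason the uncertainty on the trend fit matters when comparing a single simulation (or the real world) with an ensemble-mean expectation.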

For reference, if you plot the equivalent quantities in the model that were in their figures, you’d get this:

(for 5 different simulations). Note that the ‘expected amplification’ line is not actually what you would expect in any one realisation, nor the real world. The differences on a year to year basis are quite large. Obviously, I don’t know what this would be like in different models, but absent that information, an expectation that land-only trend ratios should go like the global ratios can’t be supported.

Since I thought this was very likely an inadvertent mistake, I let Phil Klotzbach know about this immediately (in mid-August) and he and his co-authors quickly redid their analysis (within a week) and claimed that it was not a big deal (though their reply also made some statements that I thought unwarranted). Additionally, I provided them with the raw output from the model so that they could check my calculations. I therefore anticipated that the paper would be corrected at the proof stage since I didn’t expect the authors to want to put something incorrect into the literature. After a few clarifying emails, I heard nothing more.

So when the paper finally came out this week, I anticipated that some edits would have been made. At minimum I expected a replacement for the inappropriate McKitrick reference, a proper citation for the model output, acknowledgment that the amplification assumption might not be valid and an adjustment to the figures. I didn’t expect that the authors would have needed to change very much in terms of the discussion and so it shouldn’t have been too tricky. Note that the last paragraph in the paper does directly link the non-amplification over land to possible artifacts in the data products and so some rewriting would have been necessary.

To my great surprise, no changes had been made to the above-mentioned section, the figures or the conclusion at all. None. Not even the referencing correction they promised McKitrick.

This is very strange. Why put things in the literature that you know are wrong? The weird thing is that this is not a matter of interpretation or opinion about which reasonable people could disagree, but a straightforward analysis of a model that gives only one answer. If they thought McKitrick’s source data were appropriate, why wouldn’t they want the correct answer?

It’s almost always possible to make some edits in the proofs, and papers can always be delayed if there are more substantive changes required. Indeed, they were able to rewrite a section dealing with a misreading of the Lin et al paper that had been pointed out in September by Urs Neu (oddly, there is no acknowledgment of this contribution in the paper). Is it because they want to write a new paper? That’s fine, but why leave the paper with the old mistakes up without any comment about the problems (and two of the co-authors have already blogged about this paper without mentioning any of this or any of the other criticisms)? If these issues are trivial, then it would have been easy to fix and so why not do it? However, if they are substantive, the paper should have been delayed and not put in the literature un-edited.

I have to say I find this all very puzzling.

Note added in proof: I sent a draft of this blog post to Dr. Klotzbach and he assures me that the non-correction was just an oversight and that they will be submitting a corrigendum. He and his co-authors are of the opinion that the differences made by using the correct amplification factors are minor.

@MapleLeaf:

1. It is indeed getting more common that authors may suggest reviewers. Sometimes it is OK, as some Editors take at most one reviewer from that list. Others…well…may lead to fundamentally flawed papers getting published. It is, however, most common at the lower-impact journals.

2. You can always write to the Editor that you do not feel comfortable doing so. But there’s no predicting how they will react.

Thanks to all for your various replies. The IPCC is a source of considerable frustration for me – I consider all the contrarian arguments about governmental bias (usually because THEY want us afraid so THEY can raise taxes) to be ludicrous.

The fact remains that, for contrarians, this entire colossal IPCC project cuts no ice. My view is that this conclusion commonly reflects a faith-based position on their part (in as much that they have reached an ideological conclusion and choose to ignore evidence). But because part of the process involves politicians who help shape the final document (on considerable record as watering it down of course), I don’t think scientists will ever definitively win that argument, however ludicrous. Politicians ARE part of the process, it therefore isn’t pure science. The IPCC’s main use is that it has convinced policy makers who DO understand how it works, and that’s more than good enough. The irrational contrarian public who believe what they want to believe need something else.

That list of 450 supposedly contrarian papers is, imho, spectacularly useful. Before I hear howls of protest, let me explain why – this is the best they can do. It is clearly a partisan, pointless list in and of itself, but it is exactly the starting point I was hoping for. It’s probably fair to deduce that a small subset of this 450 will represent the sum total of genuine papers that raise significant questions. Why don’t qualified people now take this list and go through it properly (of course I realise this will be an organisational challenge). There should be a basic criterion of a recognised peer-reviewed journal if some really are so shoddy that their general reputation on all subjects is no better than a blog, so that’s one simple filter that is easily applied. I think a minimum requirement to be classed as a paper which “questions” is one quote that actually does this – as has been pointed out, merely referencing that CO2 lags temperature rise historically is not a contentious point in the AGW argument (AFAIK no-one disagrees on this).

And to counter this, there must be people with a strong enough working knowledge of papers that could begin to put together a list on the other side using the same criteria (and complete with one quote each positively supporting a central plank in AGW). I’d be very surprised if the total numbers weren’t extremely powerful. Add in a citation column too, for more firepower. As a final part of this puzzle, the list needs to be open to correction and review – the wiki format would probably do. Wikipedia, flaws and all, is correctly regarded as fundamentally independent. If a science blog publishes the list as a finished piece of work, of course it will be disregarded as biased, along with the IPCC et al.

Gavin et al – your work here is clearly vital. There needs to be informed public discussion on why some flawed peer-reviewed papers make it through the system. But it can’t, and never will, convince a contrarian who will always see your conclusions as biased because they don’t understand the science enough to evaluate it themselves and simply wish to believe it isn’t true. And to be fair, I don’t fully understand the science either. But this is only one way to win the argument that, so far, science is losing.

Given the gravity of the situation and falling public support, surely it is incumbent on the science community to consider anything they can do to better present the solid science case to a sceptical public?

Brian Dodge (#100): You are not the only one to notice this annual signal in the UAH data. Tamino did a post on it about a year ago ( http://tamino.wordpress.com/2008/10/30/annual-cycle-in-uah-tlt/ ).

Guy (#102): Yeah, there are some people who refuse to accept the conclusions of the IPCC and even all of the scientific organizations who have concurred with the IPCC’s conclusions (AAAS, NAS, AGU, AMS, APS, ACS, …). They come up with the notion that the leadership of all of these organizations have somehow been co-opted. It is kind of difficult to argue against such logic; I don’t think such people are really convince-able, so I am content to try to point out how absurd this is with the hope that the more convince-able folks who read it will be swayed.

DeepClimate (#95),

I had in mind the survey of scientists they jointly conducted, one that I personally don’t think was very well-written.

http://pielkeclimatesci.wordpress.com/2008/03/13/weblog-by-james-annan-on-the-preliminary-poll-of-the-2007-wg1-ipcc-report/

Guy, the problem with your recommendations is that they are directed at making the science accessible to ideologically blinkered, wilfully ignorant dumbasses. I’m sorry, there is only so far that the science can be dumbed down. It’s time for the human race to make a decision: Do they want to base their policy on the best science available or on the sanguine platitudes of hucksters blowing sunshine up our collective skirts? It ought to be pretty clear which path has a better chance of leading to the continued existence of human civilization.

Guy, Google; here’s my first try, you can no doubt improve the search terms:

http://www.google.com/search?q=debunking+450+papers+global+warming

Looking at the results, after a few pages of copypaste stuff, you’ll start finding links to pages debunking the “450 papers” list.

Just as one example — you can find more:

http://carbonfixated.com/skeptical-about-450-peer-reviewed-papers-skeptical-of-global-warming/

Ray, #105. We’re not wilfully ignorant, but we do continually question the (possibly overweening) confidence of climate scientists because we also want the “continued existence of human civilization”, but without dismantling the extraordinary achievements of the industrial revolution and its subsequent developments. The realization that there were vast resources of cheap energy on hand fuelled that revolution. Should we not now build on that (E=mc2 again) rather than roll things back in a remorseful agony of self-reproach?

Guy, your numerical analysis of publications, I agree, is a good indication of the validity and veracity of scientific analyses. But, a word of caution: there is a point of diminishing returns so one ought to take that only so far. If it sneaks over into science by democracy or by tyranny of the masses it loses its value.

Simon Abingdon,

One cannot be skeptical of the science without understanding it, and if you don’t understand how hard it would be to overturn the conclusion of anthropogenic causation, you don’t understand the science. The problem is that the denialists have no science, no evidence. All they have is a nagging suspicion that all the experts must be wrong because their conclusions don’t allow unlimited consumption in perpetuity.

And your characterization of the industrial revolution being entirely due to cheap energy is simply flat wrong. The energy resources were always there. Indeed, coal had been used for home heating even in prehistoric times. What changed and allowed the industrial revolution to occur was our understanding of energy and material properties–in other words: science. This is precisely what is needed again. We have to learn how to develop an economy that is sustainable. So, Simon, you might want to erect your straw men in a corn field where they will perhaps at least scare birds.

Guy, your “list on the other side” proposal is misguided:

1. You can’t convince “the irrational contrarian public who believe what they want to believe” by throwing another list of rational arguments at them.

2. Your proposed list of peer-reviewed paper quotes, “each positively supporting a central plank in AGW” would have no credibility whatsoever with people who think the IPCC is biased despite the IPCC’s conscientious discussions of uncertainties, unknowns, discrepancies, divergent findings and alternative hypotheses.

3. Indeed, by mimicking the “450 papers” list you would just validate a denialist debate tactic. Science can do better.

4. Science isn’t losing the argument. Contrarians are a big deal in the blogosphere, not so much in the real world. Most decision-makers belong to the reality-based community, know something about assessing the credibility of sources, and recognize a crank when they see one. Time and effort is better spent keeping them informed enough that they won’t be easily fooled, rather than trying to persuade those who want to fool themselves. When on a battlefield, practice triage.

Terran writes

> a lack of immediate consensus ?

Wrong idea about consensus; consensus is not “immediate” — a few papers don’t make a “consensus” in a field that has more than a few people publishing. A consensus is an “as of” occasional summary of the state of the field taking everything–up to a given date–into account.

(Done annually for many medical issues for example.)

Here are some examples:

http://scholar.google.com/scholar?sourceid=Mozilla-search&q=+consensus+statement

#109 Ray. I have never doubted the basic physics of climatology. But that we attempt to apply it to a chaotic system with so many unknowns and innumerable imponderables gives me much pause. To the best of my knowledge science has not until now been tried in such a fraught environment. It’s obviously not rocket science (nor anything nearly so simple). And the evidence from its application has not so far been (for me) altogether convincing, despite your oft-quoted “mountains of evidence”.

For me the likelihood of the difference between 2deg warming (beneficent) and 6deg (catastrophic) has not been established beyond peradventure.

The opening of your second paragraph, “And your characterization of the industrial revolution being entirely due to cheap energy is simply flat wrong”, is quite surprising. Why do you say this? For me, and for all commentators I have ever read, it is this assertion of yours that is, on the contrary, actually “flat wrong”. Sorry.

Re #69 Andrew P

Sorry for the confusion, my post is a marginal joke at best. No respectable scientist would claim it would remain positive or negative for 30 years.

The context is that Joe Bastardi (from AccuWeather, http://www.accuweather.com/news-bio.asp?blog=bastardi) claimed we would have global cooling for the next 30 years. So I was merely pointing out the incorrect nature of what ‘some, so-called’ experts claim.

He’s using the fact that he has a job as a weatherman, to say the climatologists are all wrong about AGW, while using incorrect charts and facts out of context to support his argument.

simon abingdon (112) — I suggest you rethink the idea that 2 K warming would be beneficial in light of recent experiences in Bolivia, Nepal and Bhutan, not to mention East Africa; also take into account Mark Lynas’s report on his paleoclimate researches in “Six Degrees”, reviewed here:

http://www.timesonline.co.uk/tol/news/uk/science/article1480669.ece

Ray, further to my #112 and to avoid splitting hairs, I do not regard the likes of Abraham Darby, James Brindley, James Watt and Robert Stephenson as scientists. Josiah Wedgwood and Michael Faraday were probably the only significant protagonists of the era who deserved to be considered so. When James Clerk Maxwell, the first true scientist of the modern era was born, the industrial revolution was already well under way.

The insights of the early engineers/technologists (James Watt watching the kettle lid rattling as the water boiled for example) paved the way. But they were not scientists, and it was certainly the availability of cheap fuels which enabled their insights to be realized.

#114 David B Benson. Thanks for your response. As you suggested I read Mark Lynas’s report (dated 11 March 2007). Reminded me rather of Al Gore’s scaremongering style. Basically I believe that 2deg warming will reduce the number of cold-related fatalities worldwide by an order of magnitude compared to heat-related increases. (No citation, just read it somewhere and found it obviously credible).

Hank,

By “immediate consensus”, I meant as applying to the set of papers discussing water vapor observations and climate models on the AIRS page I linked to.

The word “immediate” was used with its meaning of “of the present time and place”- I should have phrased it better.

Joel,

The total column integrated profiles of water vapor may be well-described by the models, but the distribution of water vapor and its perturbations are of greater importance, as acknowledged in the Pierce et al. 2006 paper and elsewhere.

From “Understanding Climate Change Feedbacks”, NAS Panel on Climate Change Feedbacks, 2003:

“According to Harries 1989, “[U]ncertainties of only a few percent in knowledge of the humidity distribution in the atmosphere could produce changes to the outgoing spectrum of similar magnitude to that caused by doubling carbon dioxide in the atmosphere”, underscoring the importance of reliable upper tropospheric water vapor observations.”

The following from Pierce et al. doesn’t sound like a “punt”, as you described it, on this topic:

“The results show the models we investigated tend to have too much moisture in the upper tropospheric regions of the tropics and extra-tropics relative to the AIRS observations, by 25–100% depending on the location, and 25–50% in the zonal average. This discrepancy is well above the uncertainty in the AIRS data, and so seems to be a model problem. Even though the total column integrated water vapor profile may be nearly correct, this is an important finding for model simulations of future climate change, because even small absolute changes in water vapor in the upper troposphere can have a strong effect on radiative forcing if they are appreciable fractional changes [IPCC, 2001].”

“A fixed absolute change in water vapor concentration in the upper troposphere due to anthropogenic effects will have a varying fractional change that depends on the base water vapor concentration present before the change is applied. If the models simulate this base water vapor concentration incorrectly (as we find here), then the strength of the water vapor feedback mechanism might be misrepresented. Given the importance of the water vapor feedback in determining the magnitude of future climate change, numerical simulations addressing this question are urgently needed.”

The findings of Gettelman et al 2006 also appear significant :

“Variability in the model and particularly in the Tropics and subtropics is not as well simulated as the mean and seasonal cycle. This is a general feature of GCMs and is not a problem with representations of RH per se, but rather a general issue with the representation and initiation of convection. Differences in the Tropics and subtropics result in zonal mean differences in heating rates of 10% in the upper troposphere and zonal mean OLR differences of 1–3 W/m2, with a global average near 1 W/m2. In some regions, such as the extremely dry upper troposphere west of the Asian monsoon, differences are up to 15 W/m2.”

Terran, why go back to the older (2001) IPCC?

Did you compare it to the more recent one?

You seem to be pulling up old papers from somewhere, but have you got references forward in time from those to more current work?

This might help:

http://geotest.tamu.edu/userfiles/216/dessler09.pdf

Discussed here:

http://www.grist.org/article/Looking-for-validation/

Simon, ever hear of Sadi Carnot? James Joule? Fourier? Pierre-Simon Laplace? Newton? Joseph Priestley? Is it your serious contention that these men were not scientists? Do you seriously think that the explosion of science in the 17th century had nothing to do with the subsequent industrial revolution? As I said before, the “abundant energy” had been there all along, and was known. What was missing was understanding of how to exploit it. Science provided this.

Our prosperity owes far more to science than to cheap energy.

Thanks again everyone for contributions to this thread of the thread. Forgive me for just taking CM’s points, as it covers many others too:

CM – Guy, your “list on the other side” proposal is misguided:

CM – 1. You can’t convince “the irrational contrarian public who believe what they want to believe” by throwing another list of rational arguments at them.

This is true, and possibly a fundamental flaw in my idea. What frightens me, however, is not the hardcore denialists, but the number of perfectly intelligent people who do now believe that the science is very unsettled. Here in the UK, Ian Plimer was on BBC Radio 4’s high profile morning show last week, with no counter-voice. He even appeared to win sympathy from the presenter. Casual listeners heard he was a scientist, and heard him say that there is no consensus. And most will simply believe it.

There are 3 possible responses here. 1 – give up. 2 – keep plugging away. 3 – try to find a new approach. I have faith (!) that among the now-majority who seem to be unconvinced by AGW, not ALL adopt a faith-based position. To some of them, the IPCC may be problematic because of the word GOVERNMENT in the title of the organisation. I suggest that a simple, transparent, open format spreadsheet of peer-reviewed papers might be a more potent weapon.

CM – 2. Your proposed list of peer-reviewed paper quotes, “each positively supporting a central plank in AGW” would have no credibility whatsoever with people who think the IPCC is biased despite the IPCC’s conscientious discussions of uncertainties, unknowns, discrepancies, divergent findings and alternative hypotheses.

As above, I don’t agree on this, necessarily. Many people – begrudgingly – can see the value in science without politics. Mistrust of politicians is now an epidemic, so the IPCC has an additional barrier of political interference to overcome… they never get as far as the uncertainties.

CM – 3. Indeed, by mimicking the “450 papers” list you would just validate a denialist debate tactic. Science can do better.

And here’s the nub of the problem. To date, science HASN’T done better. In terms of public persuasion, it’s done worse.

Further (and crucially), a “mimic” of that 450 list is not what I am proposing at all – see my previous posts on eligibility criteria (any sort of criteria is clearly missing from the contrarian list). And further further… my database idea is not science, just as wikipedia is not science. I’m proposing open format… and that’s a very big and very important difference.

CM – 4. Science isn’t losing the argument. Contrarians are a big deal in the blogosphere, not so much in the real world. Most decision-makers belong to the reality-based community, know something about assessing the credibility of sources, and recognize a crank when they see one. Time and effort is better spent keeping them informed enough that they won’t be easily fooled, rather than trying to persuade those who want to fool themselves. When on a battlefield, practice triage.

I agree that among policy makers, science has made some big inroads (and thank goodness for the IPCC there). But among the public, we are losing support very rapidly indeed. And if the electorate don’t want to know, the politicians will surely follow. There’s big votes to be had right now in asking punters to vote Contrarian. So far, few politicians are going for it, but with falling public support it’s only a matter of time.

My idea is surely a long way from perfect – I’m very much open to better ideas. However, I don’t buy that there is no problem. There is a huge problem, and the climate science community should be alarmed that their work is being increasingly misunderstood and disbelieved by the public.

Because of the risk of repeating myself, I’d better leave it there for this thread. I hope at least it causes some to think outside the box at new, better ways of communicating the most important information of our generation.

Hank,

The Pierce et al. 2006 paper cited the IPCC 2001 report because it was the most current one at the time of writing.

They refer to the 2001 report on how “small absolute changes in water vapor in the upper troposphere can have a strong effect on radiative forcing if they are appreciable fractional changes”. This is still the scientific understanding of the matter, isn’t it?

The Dessler 2009 paper is about the strong evidence for a positive water vapor feedback. It doesn’t refute or supersede the findings of the 3 papers I mentioned, which are about discrepancies between model predictions and observations of water vapor and temperature in the troposphere.

I haven’t read the Sherwood article yet but will do, thank you.

(I should have added, perhaps unnecessarily, that none of the 3 papers mentioned in my last post dispute the existence of positive water vapor feedback.)

This deserves to be framed and hung up on a wall somewhere, as some sort of iconic statement of “how to think entirely opposite to the way scientists think – and work”.

And of course if it weren’t for science and technology that energy wouldn’t be cheap. Kinda hard to crack crude into distillates like gasoline without some understanding of chemistry.

Terran (#117): Yes, I would say that Pierce et al. did “punt” on the issue of how their results speak to how well the models simulate the water vapor feedback. They said, “the water vapor feedback mechanism might be misrepresented. Given the importance of the water vapor feedback in determining the magnitude of future climate change, numerical simulations addressing this question are urgently needed.” Note the use of the word “might” and the emphasis on the need for simulations to address the question. They don’t claim to have the answer…but are just speculating on what might occur and acknowledging the need for more study.

By contrast, John and Soden quite directly concluded “these [mean state] biases, while significant, have little direct impact on the models’ simulation of water vapor and lapse-rate feedbacks.” That is a pretty direct statement. (I haven’t read their full paper yet so I am not sure what evidence they present to back up this conclusion.)

Also, you quote from Gettelman et al. (2006), again talking about issues involving the mean state, but then don’t note the fact that Gettelman et al. (2008) directly address the water vapor feedback and also conclude that the feedback in the upper troposphere is positive, with the change in water vapor such that relative humidity is approximately constant. [Gettelman et al. are not as explicit in concluding that the resulting strength of the water vapor feedback is correct, but John and Soden seem to be more explicit on that point, as is Dessler.]

You seem to have a strong desire to conclude that these papers on the mean state biases have major implications for the consensus view but I see no evidence that this is the case from any of those papers. In fact, at least one (John and Soden) of the three (and with weaker statements, also a second of the three, Gettelman et al. 2008) seem to conclude that the models are simulating the water vapor feedback correctly. And, the other papers that I have mentioned by Soden and by Dessler also seem to provide evidence of that.

Again, the consensus view is not that the models get everything perfect (as you have probably heard the oft-repeated general statement about modeling, “All models are wrong but some models are useful”), but rather that there is a significant positive water vapor feedback that raises the climate sensitivity (and a negative lapse rate feedback that takes some fraction of that rise back).

Re: 80 (@Maple Leaf). Different journals have different policies. When I edited an AMS journal, we got suggested reviewers fairly often. Whether I used one of them depended on my knowledge of the subdiscipline the paper came from and what I could glean about the relationship between the authors and the suggested reviewers. There were a few papers that came in that I knew little about, so I might take one of the suggested people, then dig around to find two others (going through the references, googling, etc.)

Re: The 450 papers list

I just noticed I’m the lead author on one of the papers on the list. I have absolutely no idea how that paper could be construed as “skeptical of man-made global warming.” I have no idea how it could be construed as saying anything at all about man-made global warming.

Terran: I just skimmed through John and Soden ( http://193.10.130.23/members/viju/publication/airscomp/2007GL030429.pdf ) and it actually explicitly talks about Pierce, basically saying that they confirm Pierce’s results regarding the mean state bias but show that this bias does not seem to influence the resulting feedback:

simon abingdon: You seem to view the industrial revolution and our resulting prosperity as very fragile and believe that it somehow would not have occurred (at least to anything near the degree we’ve had) if it were not for the fortunate happenstance that there are lots of fossil fuels buried in the ground. I would tend to believe that if such a fortunate happenstance were not the case, we would have used our ingenuity to find other energy sources (and perhaps also to use energy sources more efficiently) … And, that now that we realize that it would be folly to fully exploit those fossil fuel resources, we will in fact find other resources, especially once the appropriate market incentives are in place to encourage this by pricing fossil fuels to reflect the damage that they do to our environment.

You seem to worry about Al Gore’s “scaremongering style” but perhaps you should also be concerned that those who warn about the economic effects of reducing our greenhouse gas emissions might also be fear-mongering. The fact that they have essentially no support in the peer-reviewed economics literature, as far as I’ve seen, would make such fear-mongering even less justifiable! Furthermore, it is not as if the only concern regarding AGW is how it affects cold- or heat-related fatalities. There are lots of other concerns revolving around sea level rise, change in precipitation patterns (and increased drying of soils with increased heat), stresses to already-stressed flora and fauna, and many other issues.

Joel,

All 3 papers find, as John and Soden (2007) put it, “systematic and consistent patterns of bias in tropospheric temperature and humidity compared to observations”, which seems an important result, as do the findings that “the models we investigated tend to have too much moisture in the upper tropospheric regions of the tropics and extra-tropics relative to the AIRS observations, by 25–100% depending on the location, and 25–50% in the zonal average” (Pierce et al. 2006) and that “[d]ifferences in the Tropics and subtropics result in zonal mean differences in heating rates of 10% in the upper troposphere and zonal mean OLR differences of 1–3 W/m2, with a global average near 1 W/m2.” (Gettelman et al. 2006).

The fact that the models show a consistent water vapor feedback across models unimpacted by the varying biases of the models does not in some way cancel out these modelling issues mentioned above.

[Response: No, it doesn’t, and modellers will be working to address that. But if it doesn’t make much of a difference to the net feedback, it isn’t much of an issue for future temperature projections (though it might be for other impacts, you’d have to look and see). -gavin]

#119 Ray, you ask if I’ve heard of Newton. (Thanks, Ray). However, I do admit that I see no direct connection between Newton and the Industrial Revolution, other than noting that the attitudes and discoveries of the Enlightenment (Newton being the chief protagonist) prepared the ground for it to happen.

Now you take me to task for saying that it was the abundant availability of fuel (coal) that drove the Industrial Revolution; that no, it came about only through the insights of the scientists of the day. I’m afraid I still beg to differ.

James Brindley’s canals of the 18th century were constructed by pick and shovel and their traffic was powered by the horse. (The self-propelled steam engine was yet to come).

Meanwhile the Abraham Darbys discovered how to produce iron cheaply. This development was crucial.

James Watt’s (probably apocryphal) boyhood observation of the boiling kettle enabled him to design the first efficient steam engines (building on Newcomen’s early examples) and this led directly to the explosive developments of the steam age in the early 19th century. I would say this epoch marked the onset of the Industrial Revolution proper.

So would you call these men scientists? If not perhaps you think they were in constant correspondence with the likes of Fourier and Laplace, not otherwise knowing how to proceed, eh? Incidentally, Carnot’s ideas were only of theoretical interest until developed by Clausius late in the day, well after the Industrial Revolution had gained unstoppable momentum.

Whatever you may say, I still feel certain that if combustible fuels had not been available in abundance, then the Industrial Revolution could not have happened.

Guy, as a general rule, BBC does a piss poor job of covering science. In fact, I would contend that anyone who relies on television or other broadcast media for their science knowledge is condemning themselves to ignorance. Unfortunately, Snow’s Two Cultures have bifurcated several times since he wrote his essay, and we now have everything from scientist through artsy-fartsy to the folks who vote in American Idol but not in elections.

The problem is that while the science is quite straightforward, there are a lot of details one must understand before it becomes straightforward. It all boils down to this: The planet absorbs energy from the sun and heats up to some temperature at which it emits enough energy (in a longer wavelength portion of the spectrum) that Energy_in=Energy_out. When you introduce an atmosphere, some of the gases (e.g. greenhouse gases) will block some of the outgoing radiation, and the planet’s temperature must rise until Energy_in=Energy_out again. This is absolutely fundamental, and you cannot possibly understand Earth’s climate without understanding this greenhouse mechanism. Now, when you change the composition of the atmosphere so that it absorbs more radiation, it is an inevitable consequence that the temperature of the planet must rise further.

That’s it. That’s how basic the situation is. Energy balance. Now there are a ton of details that determine exactly how much the planet’s temperature must rise, but there are about 10 separate lines of evidence that all agree on a level of about 3 degrees per doubling, so it’s kind of hard to dissent from the consensus without being in outright denial of the evidence.

So it comes down to this. We can’t dumb down the science any more. Could the public maybe meet us half way and frigging wise up?
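For anyone who wants to put numbers on the Energy_in=Energy_out argument above, here is a minimal sketch. It is not from any of the papers discussed in this thread; it is the standard textbook zero-dimensional balance plus a single grey absorbing layer. The solar constant, the albedo, and the layer absorptivity of 0.78 are assumed round values, and the function names are made up for illustration. With those assumptions the bare planet comes out at roughly 255 K and the one-layer case at roughly 288 K. The last function simply applies the "about 3 degrees per doubling" figure quoted above.

```python
import math

SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1361.0  # assumed sunlight at Earth's orbit, W m^-2
ALBEDO = 0.30            # assumed fraction of sunlight reflected to space


def absorbed_sunlight(solar_constant=SOLAR_CONSTANT, albedo=ALBEDO):
    """Energy_in per square metre, averaged over the whole sphere."""
    return solar_constant * (1.0 - albedo) / 4.0


def bare_planet_temperature(energy_in):
    """Temperature at which sigma * T^4 equals Energy_in (no atmosphere)."""
    return (energy_in / SIGMA) ** 0.25


def one_layer_surface_temperature(energy_in, absorptivity):
    """Surface temperature under one grey layer (illustrative toy model).

    The layer absorbs a fraction `absorptivity` of the outgoing longwave
    radiation and re-emits half of it back down, so the balance at the
    top of the atmosphere is Energy_in = (1 - absorptivity / 2) * sigma * Ts^4.
    """
    return (energy_in / (SIGMA * (1.0 - absorptivity / 2.0))) ** 0.25


def warming_from_co2(new_ppm, old_ppm, per_doubling=3.0):
    """Equilibrium warming, assuming a fixed sensitivity per CO2 doubling."""
    return per_doubling * math.log2(new_ppm / old_ppm)


energy_in = absorbed_sunlight()
t_bare = bare_planet_temperature(energy_in)
t_greenhouse = one_layer_surface_temperature(energy_in, absorptivity=0.78)
t_more_absorbing = one_layer_surface_temperature(energy_in, absorptivity=0.80)

print(f"Bare planet:            {t_bare:.1f} K")
print(f"One grey layer (0.78):  {t_greenhouse:.1f} K")
print(f"More absorbing (0.80):  {t_more_absorbing:.1f} K")
print(f"Warming for 280 -> 560 ppm: {warming_from_co2(560.0, 280.0):.1f} K")
```

The only point of the toy model is the direction of the result: make the layer absorb more and the surface has to warm before Energy_out matches Energy_in again. The real number depends on all the details mentioned above.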

Gavin, this comment (currently #130 by murteza) to which you responded is from a link-spamming robot. The content of the post is grabbed from this earlier Eli Rabett comment on this thread.

[Response: Indeed. I will clear it up. -gavin]

Gavin, the comment by murteza you replied to in 130 is a copy of Eli’s comment in 94. Something similar happened to me in the bristlecone topic. I fear a spammer is playing games here.

Simon,

How long have the coal beds been there? How long had people known about them and known that you could release a lot of heat by burning coal? And yet there was no industrial revolution until the 18th century. Why? What was different about that time that made energy-intensive industry possible?

And do you seriously contend that understanding of Newtonian dynamics, calculus, etc., had nothing to do with our suddenly acquired ability to capitalize on energy resources? Wow!

Harold Brooks, 127: You likely aren’t the only one confused why your paper is on the list. I suppose the list-maker found Pielke’s work on the economic damage of hurricanes, and then stuck in your paper for fun. I suggest you take it up with the author of the list.

Drew Shindell, GISS, is even on there. Go figure.

Guy: I don’t see any value in replicating the list on the ‘other side’. Perhaps a look into the most heavily cited papers might be interesting, but simple lists of papers aren’t helpful; literature reviews are. And plenty of those already exist, including the IPCC reports themselves.

Web page broken?

Sirs,

Your RSS feed doesn’t seem to function properly – my newsreader signals an error.

‘Older’ links up to this article work fine, but the one before last (“it’s all about me(thane)”) also redirects to an error page (“Coming soon: Another fine website hosted by WebFaction”).

Clicking on the ‘home’ button (top left of the page) also yields the error page, while the ‘archive’ button returns a page with:

”

Archives by Month:

Archives by Category:

”

And lastly, clicking on the ‘about’ button yields the right page, but in the French version (I do write from France, but usually read the English version, and the ‘about’ page also does not mention the “/langswitch” thing which appears on other translated articles).

My apologies for the interruption in the discussion.

Ray said:

In a nut shell mind you …

Because coal was, and is, pretty useless for iron production.

Hitherto the production of iron had utilised charcoal. Wood was plentiful and easy to harvest. But production of charcoal became expensive and large-scale deforestation meant it was a dwindling resource, necessitating moving the iron industry around every “few” years. Also, the iron smelting process with charcoal was rather slow, and so limited output somewhat.

Then along came Abraham Darby I, who we can thank for the insight that led to the discovery of coke (eventually making a large market for coal) and its use in iron production. Then, after a few more years of furnace design improvements, Abraham Darby III, along with John Wilkinson, built a ruddy great bridge in iron over the Severn and said, “Hey, look what we can do in iron.” The Industrial Revolutionists were impressed and then beat a path to their doors in Coalbrookdale. The rest, as they say, is history.

Whatever’s broken is not just RSS; web browser also hits the same error page, when directed to the home page. I got in ‘sideways’ via older links.

Re 139, Hank–

Curiously, I experienced this problem yesterday, but now it’s fine for me.

Pretty weird.

Guy, have a look at this website illustrating the basic point Ray Ladbury explains above:

http://climateinteractive.org/simulations/bathtub

Guy (#120),

Fair enough. I misunderstood you a bit, and I take back (3).

As for whether or not the science is losing the public (I assume we’re talking about the U.S.), and whether it’s because of a government taint, first take the long view (two decades):

So let’s say that, up to 2007, Americans were largely behind the scientific consensus view, even if they did not understand it. But a recent Pew survey did show a dip in the public’s ranking of the importance of the global warming issue amid other concerns, as well as a dip in the public ‘belief’ in global warming. Nisbet has some useful links on interpreting it.

Now, whatever’s the reason for the recent polls, scientists haven’t suddenly begun communicating worse, and the IPCC hasn’t somehow become more governmental, than in the previous 20 years when their message got through to the public. So I don’t think that’s where the problem lies.

Hmm, I seem to believe I am writing posts that contain words that I’ve carefully chosen, and yet people are clearly reading posts responding to entirely different words that I don’t recognise…

For example, I have never suggested a comparable “other side” list to the notorious 450 list, which I’ve pointed out several times now (I think the last time was #120), so really no point in me doing so again. Anyone who is very bored, feel free to look back at what I actually proposed. I still think it’s a decent idea.

Similarly I’m not sure why I’m getting (admittedly very good) lessons in the Greenhouse effect. I do get the basics no problem at all, and have done for many, many years. What I don’t get is technical detail which requires many years of study, and most climate science discussion ends up in this level of detail. Indeed, how I wish more people were as simple and honest in this regard as me. The world is filled with armchair climate science experts who genuinely believe that with no further qualification than a chat down the pub and reading the Murdoch press they are able to reasonably assess the science themselves (“well, we’ve had climate change for billions of years haven’t we, so it must be all a load of rubbish” is a scientific statement of supreme arrogance and stupidity, but boy do I hear it a lot). By contrast, at least I read up on actual science, am moderately intelligent and am interested, but in the final analysis I defer to the general body of opinion among those best qualified to assess the data, i.e., not me. Summaries of peer reviewed papers, academic institutions and the IPCC make my mind up instead.

I couldn’t agree more that the greenhouse effect basics (as Ray nicely and succinctly points out) present the fundamental case that often gets ignored, but I feel I’m insulting people’s intelligence here if I say that this point has been made endlessly for decades, and it seems to make no difference at all.

Ray, you’ve presented a perfectly reasonable challenge – could the public meet us halfway? At the moment the answer is “no”, it seems. And I’m asking what “we” intend to do about it. Folks, seriously – we need some good ideas, and we need them fast. What’s the alternative – just moan at how thick the public are and give up? There may not be a simple solution here – one figures that the word “education” should feature prominently somewhere – but I really do think there needs to be a new urgency in trying to get the simple message from scientists across in a way that is meaningful to the guy and gal on the street. I’m kinda disheartened that even here in RC discussion, there seems to be little comprehension of the trouble we’re in.

Ray Ladbury, and just what was the scientific breakthrough that permitted us to burn/use magnitudes more coal than before the Industrial Revolution?

Terran,

Keep in mind that it is possible for models to be correct for the wrong reasons. One might expect that the roughly logarithmic dependence of the radiative effect on concentration limits the impact of various biases. The AIRS comparison in Pierce et al. (2006) showed that the models tend to have a poor representation of upper tropospheric water vapor, with the models being too moist. Now keep in mind that the water vapor feedback depends on the fractional change of upper tropospheric vapor, so if the simulated change in WV due to greenhouse feedback were roughly correct while the base state was too moist, you’d expect the feedback to be incorrect. This is because the fraction of interest is delta-WV/base-WV. Now further work (e.g., by Soden et al. and summarized in the Dessler and Sherwood perspective piece) has shown that the strength of the water vapor feedback in models is reasonable, and thus one might conclude that the processes controlling delta-WV are also misrepresented in models.

Consider an example with carbon dioxide. Say we didn’t know the initial CO2 concentration in the atmosphere, and we used a climate model with a perturbed carbon cycle that changes the CO2 amount by another unknown amount. Suppose the real CO2_base concentration is 350 parts per million, and the real change in CO2 is +20 parts per million. Now say we have a model with a background concentration of 280 parts per million (a 20% error), but the CO2 response to the carbon cycle perturbation is +16 parts per million. In both cases, the resultant radiative forcing will be the same, amounting to about 0.3 W m^-2, because the fractional change is identical. This is a case of errors offsetting each other.
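The arithmetic in the CO2 example above can be checked in a few lines. This is only a sketch: it assumes the commonly used simplified fit for CO2 forcing, dF = 5.35 * ln(C/C0) W m^-2, which is not stated in the comment itself, and the function and variable names are made up for illustration.

```python
import math

# Assumption: simplified logarithmic fit for CO2 radiative forcing,
# dF = 5.35 * ln(C / C0) in W m^-2. The coefficient is not from the comment.
FORCING_COEFFICIENT = 5.35


def co2_forcing(base_ppm, delta_ppm):
    """Forcing from raising CO2 from base_ppm to base_ppm + delta_ppm."""
    return FORCING_COEFFICIENT * math.log((base_ppm + delta_ppm) / base_ppm)


# "Real world": 350 ppm background, +20 ppm response.
real_forcing = co2_forcing(350.0, 20.0)

# "Model": background 20% too low (280 ppm), response also 20% too low (+16 ppm).
model_forcing = co2_forcing(280.0, 16.0)

print(f"real:  {real_forcing:.3f} W m^-2")
print(f"model: {model_forcing:.3f} W m^-2")
print(f"fractional change, real:  {20.0 / 350.0:.4f}")
print(f"fractional change, model: {16.0 / 280.0:.4f}")
```

Both cases give the same forcing because 20/350 and 16/280 are the same fraction, and with a logarithmic dependence only the fraction matters. A model that got the +20 ppm response right on the wrong 280 ppm background would overestimate the forcing.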

That said, I’m not sure if anyone has actually tested how problems with these components of the water vapor feedback tend to cancel each other out, or if any other papers have re-examined the results you refer to and found something else. Brogniez and Pierrehumbert (2007) for example found that various models, at least in the tropics, do a good job of representing the water vapor distribution in the troposphere. In the wider context of things, this is a problem in the details though, and is not threatening to the general “consensus” regarding how water vapor amplifies the CO2 forced climate change.

Hey, Rod, a broad sweeping answer:

http://www.google.com/search?q=what+caused+the+Enlightenment%3F

Results … about 666,000 for what caused the Enlightenment?

A single specific page that sums it up pretty well:

http://davidbrin.blogspot.com/2005/01/modernism-part-3-era-of-can-do.html

Shorter answer you may not like:

It was the success of the notion that people could get together and create their own governments — including services that served people rather than rich lords, like lending libraries, post offices, and public education.

In any society where the rich could simply take from people, the penalty for any innovation was you got robbed.

Now people will complain that still happens. But they fail to notice the ring of soldiers protecting them from the wolves and pirates, provided by their fellow citizens because that benefits everyone. It ain’t robbery for people to get together to create things they can’t buy, or don’t want to buy when they can do them by organizing.

Apropos several people’s issues — a good answer to the question about why we can’t seem to find smarter right-wingers:

http://davidbrin.blogspot.com/2009/10/rant-about-stupidity-and-coming-civil.html

—excerpt—

An article on Salon asks “Why Can’t We Have Smarter Right Wingers?”

It’s been my own stark plaint for a decade — and not from any lefty reflex. Rather, as one who openly avows some libertarian and classic “conservative” views, sprinkled in a mostly-progressive goulash.

Shouldn’t there be clear-headed voices, articulating the attractiveness of balanced budgets, national readiness, genuinely competitive free enterprise, and caution in international entanglements? Isn’t it good to have someone in the room demanding: “Prove that something really is broken, before using the blunt instrument of the state to fix it”?

I’ve long felt that the best minds of the right had useful things to contribute to a national conversation — even if their overall habit of resistance to change proved wrongheaded, more often than right. At least, some of them had the beneficial knack of targeting and criticizing the worst liberal mistakes, and often forcing needful re-drafting.

That is, some did, way back in when decent republicans and democrats shared one aim — to negotiate better solutions for the republic…..

—-end excerpt——

It seems I waded in to a conversation that had already had some legs. The road to the Industrial Revolution required the birth of “engineering science” (in the 16th and 17th centuries), and this is inextricably linked with the work of some of the greats: Newton, Hooke and Boyle most certainly; and if I thought a little harder then I’d probably come up with some more of the earlier greats without whom the Industrial Revolution would have had difficulty getting going. It’s a tad more than the Enlightenment being responsible for it.

Rod B., Well, steam engines were pretty inefficient until Sadi Carnot provided his insights in the 1820s. Joule, Fourier, Clausius, and many others also contributed to transforming the steam engine from a dangerous oddity to an engine of prosperity. And I still contend that steam engines wouldn’t have gotten far without Newtonian mechanics. The fact of the matter is that science changed the way people look at the world. It provided us with a hope of understanding and controlling it.

Guy, The answer to your question is pretty simple. If the human race doesn’t wise up and meet the scientific community half way, then we will die, along with the rest of our species. This is humanity’s midterm, and we haven’t been preparing for it. Some of us are still refusing to admit there’s a test. Most of us are praying to get by on generous partial credit. It would appear that no one is fully prepared. If we fail this test, our environment (the environment that fostered the growth of human civilization, the only environment that civilization has known) changes, irreparably and for the worse. Moreover, this happens at a time when human population crests at 9-10 billion.

So, you tell me. What do we do, apart from waiting for our progeny to curse us with their dying breath?