As we did roughly a year ago (and as we will probably do every year around this time), we can add another data point to a set of reasonably standard model-data comparisons that have proven interesting over the years.

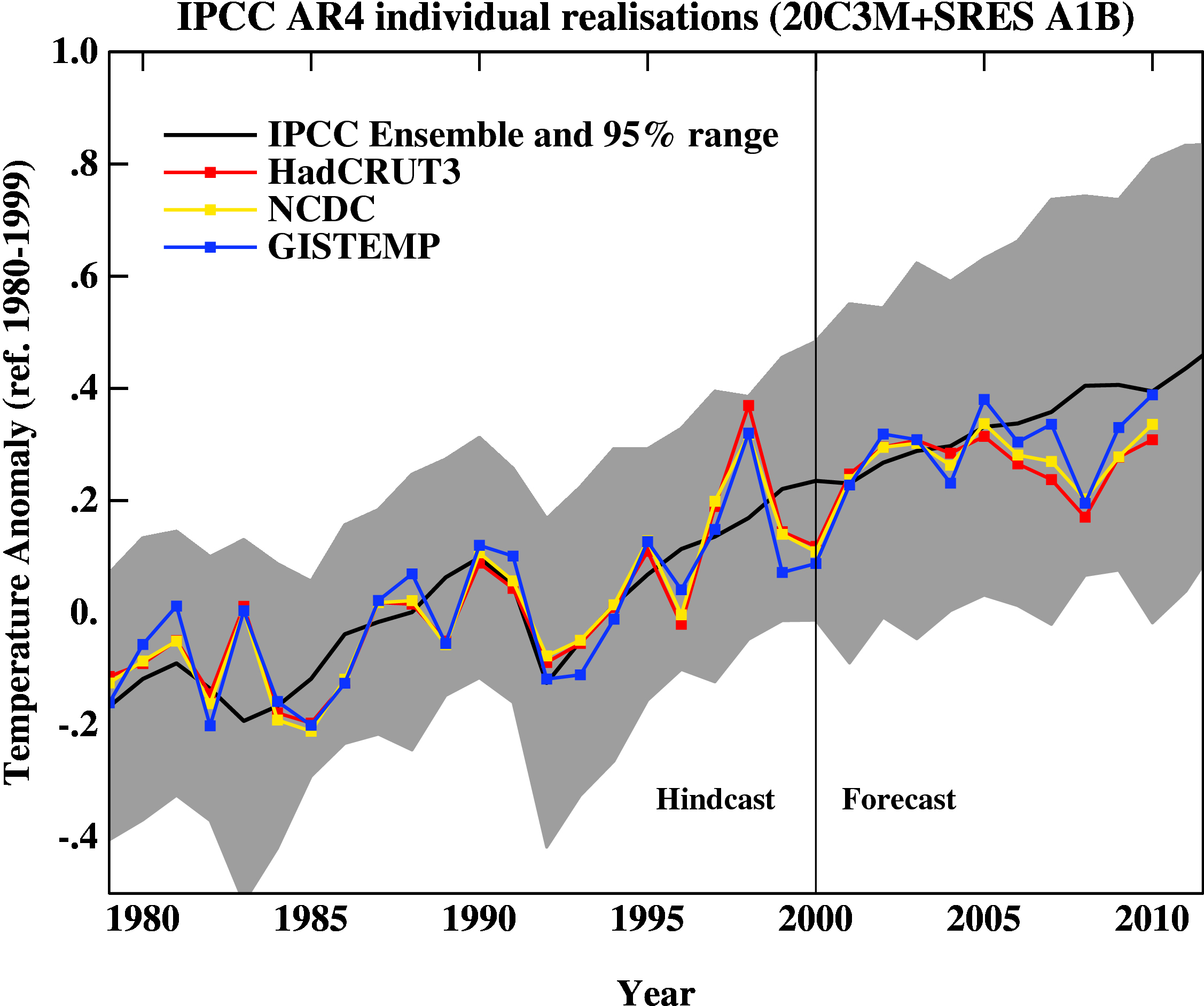

First, here is the update of the graph showing the annual mean anomalies from the IPCC AR4 models plotted against the surface temperature records from the HadCRUT3v, NCDC and GISTEMP products (it really doesn’t matter which). Everything has been baselined to 1980-1999 (as in the 2007 IPCC report) and the envelope in grey encloses 95% of the model runs.
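A minimal sketch of the baselining and envelope steps, using synthetic data rather than the actual AR4 output. The function names `baseline_anomalies` and `envelope_95` are hypothetical, and taking the 2.5th and 97.5th percentiles across runs is only one assumed way of enclosing 95% of the runs; the post does not say how the grey envelope was computed.

```python
import numpy as np

def baseline_anomalies(years, temps, start=1980, end=1999):
    """Subtract each series' own mean over the baseline period (inclusive)."""
    years = np.asarray(years)
    temps = np.asarray(temps, dtype=float)
    mask = (years >= start) & (years <= end)
    return temps - temps[..., mask].mean(axis=-1, keepdims=True)

def envelope_95(runs):
    """Return the 2.5th and 97.5th percentiles across runs for each year."""
    lower, upper = np.percentile(runs, [2.5, 97.5], axis=0)
    return lower, upper

# Synthetic stand-in for model runs: a shared trend, per-run offsets, and noise.
rng = np.random.default_rng(0)
years = np.arange(1970, 2011)
runs = (0.02 * (years - 1970)
        + rng.normal(0.0, 1.0, size=(50, 1))            # arbitrary absolute offsets
        + rng.normal(0.0, 0.1, size=(50, years.size)))  # year-to-year noise

anoms = baseline_anomalies(years, runs)
lower, upper = envelope_95(anoms)
```

Baselining removes the arbitrary offset of each run, so the envelope reflects differences in trend and variability rather than differences in absolute temperature.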

The El Niño event that started off 2010 definitely gave last year a boost, despite the emerging La Niña towards the end of the year. An almost-record summer melt in the Arctic was also important (and probably key in explaining the difference between GISTEMP and the others). Checking up on our predictions from last year, we forecast that 2010 would be warmer than 2009 (because of the ENSO phase last January). Consistent with that, I predict that 2011 will not be quite as warm as 2010, but it will still rank easily amongst the top ten warmest years of the historical record.

The comments on last year’s post (and responses) are worth reading before commenting on this post, and there are a number of points that shouldn’t need to be repeated again:

- Short term (15 years or less) trends in global temperature are not usefully predictable as a function of current forcings. This means you can’t use such short periods to ‘prove’ that global warming has or hasn’t stopped, or that we are really cooling despite this being the warmest decade in centuries.

- The AR4 model simulations are an ‘ensemble of opportunity’ and vary substantially among themselves with the forcings imposed, the magnitude of the internal variability and of course, the sensitivity. Thus while they do span a large range of possible situations, the average of these simulations is not ‘truth’.

- The model simulations use observed forcings up until 2000 (or 2003 in a couple of cases) and use a business-as-usual scenario subsequently (A1B). The models are not tuned to temperature trends pre-2000.

- Differences between the temperature anomaly products are related to: different selections of input data, different methods for assessing urban heating effects, and (most important) different methodologies for estimating temperatures in data-poor regions like the Arctic. GISTEMP assumes that the Arctic is warming as fast as the stations around the Arctic, while HadCRUT and NCDC assume the Arctic is warming as fast as the global mean. The former assumption is more in line with the sea ice results and independent measures from buoys and the reanalysis products.

There is one upcoming development that is worth flagging. Long in development, the new Hadley Centre analysis of sea surface temperatures (HadISST3) will soon become available. This will contain additional newly-digitised data, better corrections for artifacts in the record (such as highlighted by Thompson et al. 2007), and corrections to more recent parts of the record because of better calibrations of some SST measuring devices. Once it is published, the historical HadCRUT global temperature anomalies will also be updated. GISTEMP uses HadISST for the pre-satellite era, and so long-term trends may be affected there too (though not the more recent changes shown above).

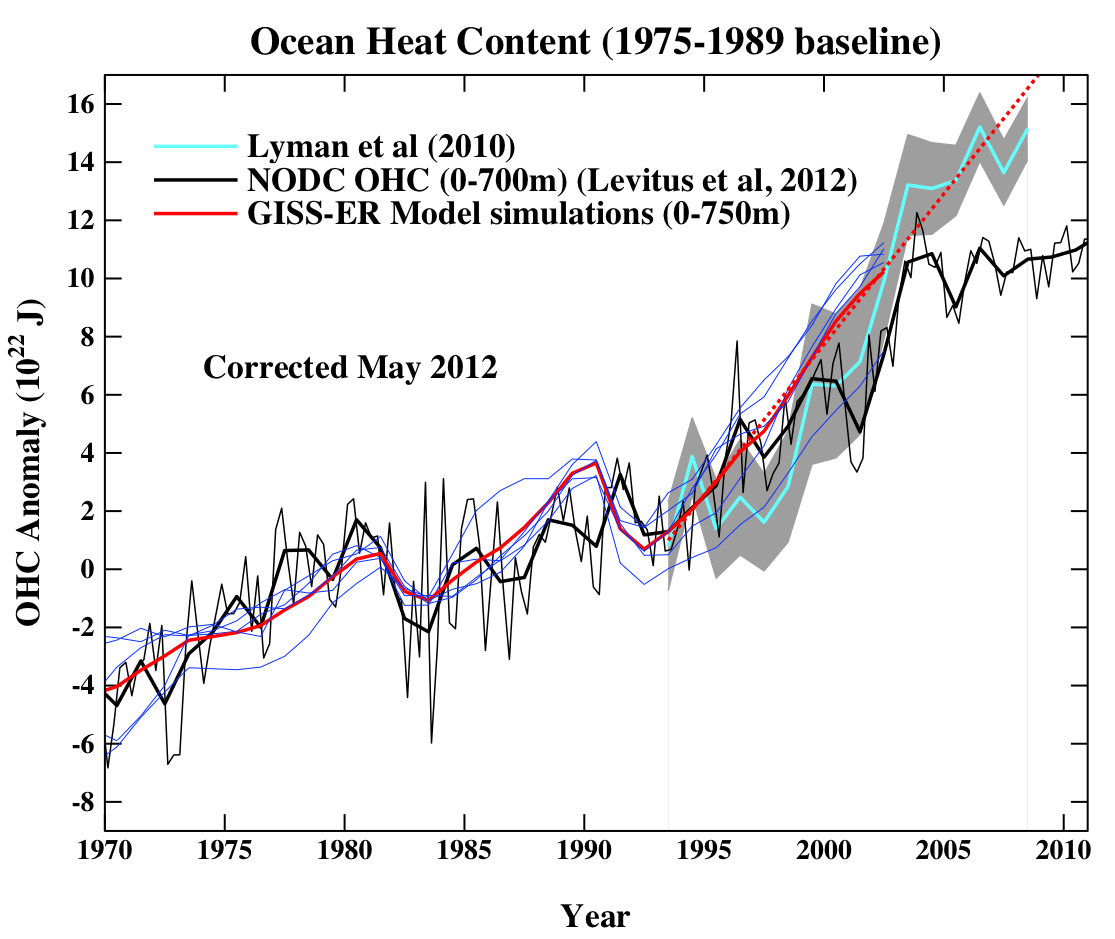

The next figure is the comparison of the ocean heat content (OHC) changes in the models compared to the latest data from NODC. As before, I don’t have the post-2003 model output, but the comparison between the 3-monthly data (to the end of Sep) and annual data versus the model output is still useful.

To include the data from the Lyman et al (2010) paper, I am baselining all curves to the period 1975-1989, and using the 1993-2003 period to match the observational data sources a little more consistently. I have linearly extended the ensemble mean model values for the post 2003 period (using a regression from 1993-2002) to get a rough sense of where those runs might have gone.
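The linear extension described above can be sketched roughly as follows. This uses a synthetic series, not the real ensemble mean, and `extend_linearly` is a hypothetical helper name; the fit window (1993-2002) is taken from the text.

```python
import numpy as np

def extend_linearly(years, values, fit_start, fit_end, extend_to):
    """Fit a straight line over [fit_start, fit_end] and project it forward."""
    years = np.asarray(years)
    values = np.asarray(values, dtype=float)
    mask = (years >= fit_start) & (years <= fit_end)
    # Fit against years measured from fit_start to keep the problem well conditioned.
    x = years[mask] - fit_start
    slope, intercept = np.polyfit(x, values[mask], 1)
    future = np.arange(years[-1] + 1, extend_to + 1)
    return future, slope * (future - fit_start) + intercept

# Synthetic stand-in for an ensemble-mean OHC series ending in 2003 (arbitrary units).
years = np.arange(1955, 2004)
ensemble_mean = 0.3 * (years - 1955)

future, extended = extend_linearly(years, ensemble_mean, 1993, 2002, 2010)
```

A projection of this kind only continues the recent trend; it says nothing about what the model runs would actually have done after 2003.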

Update (May 2010): The figure has been corrected for an error in the model data scaling. The original image can still be seen here.

As can be seen, the long-term trends in the models match those in the data, but the short-term fluctuations are both noisy and imprecise.

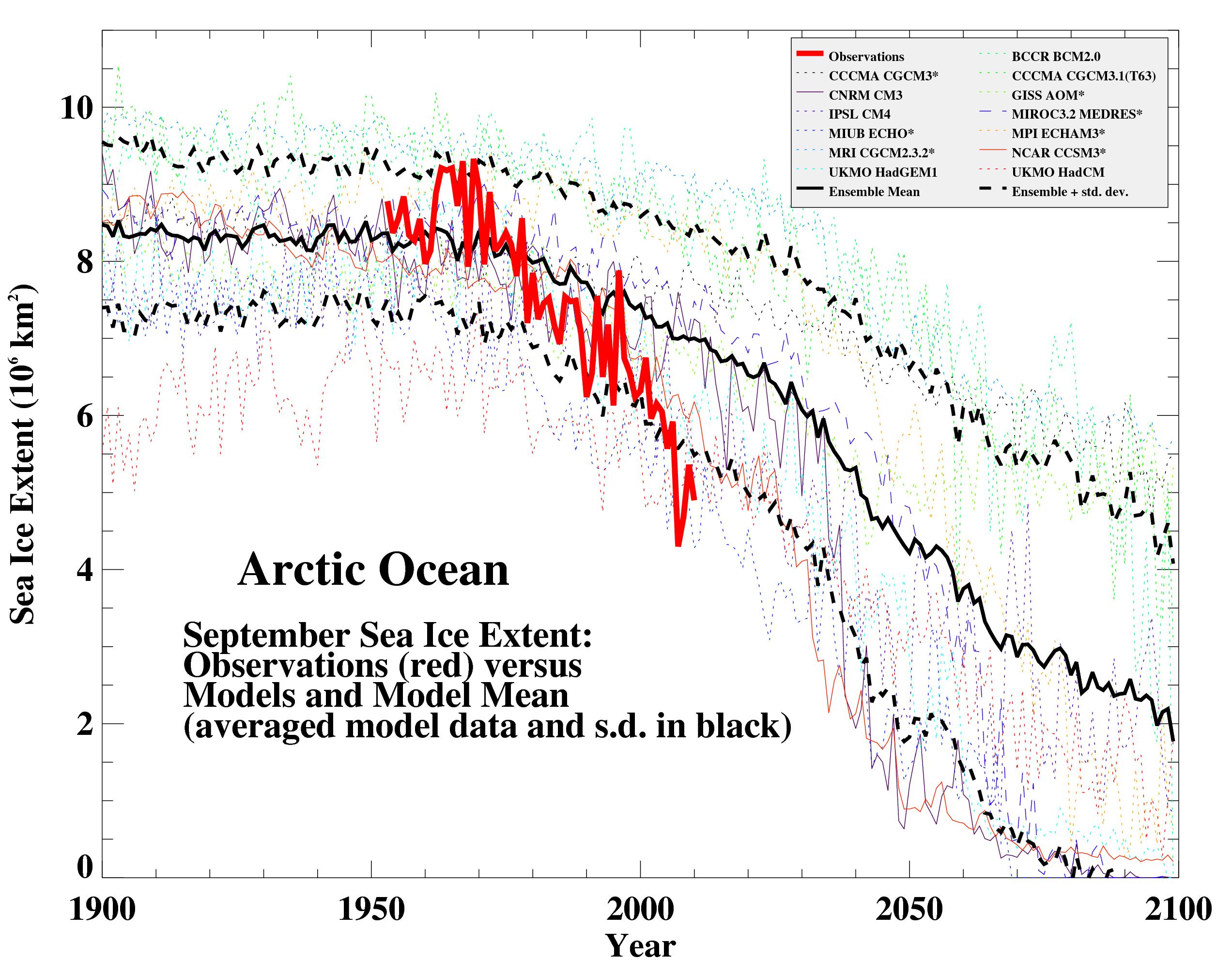

Looking now to the Arctic, here’s a 2010 update (courtesy of Marika Holland) showing the ongoing decrease in September sea ice extent compared to a selection of the AR4 models, again using the A1B scenario (following Stroeve et al, 2007):

In this case, the match is not very good, and possibly getting worse, but unfortunately it appears that the models are not sensitive enough.

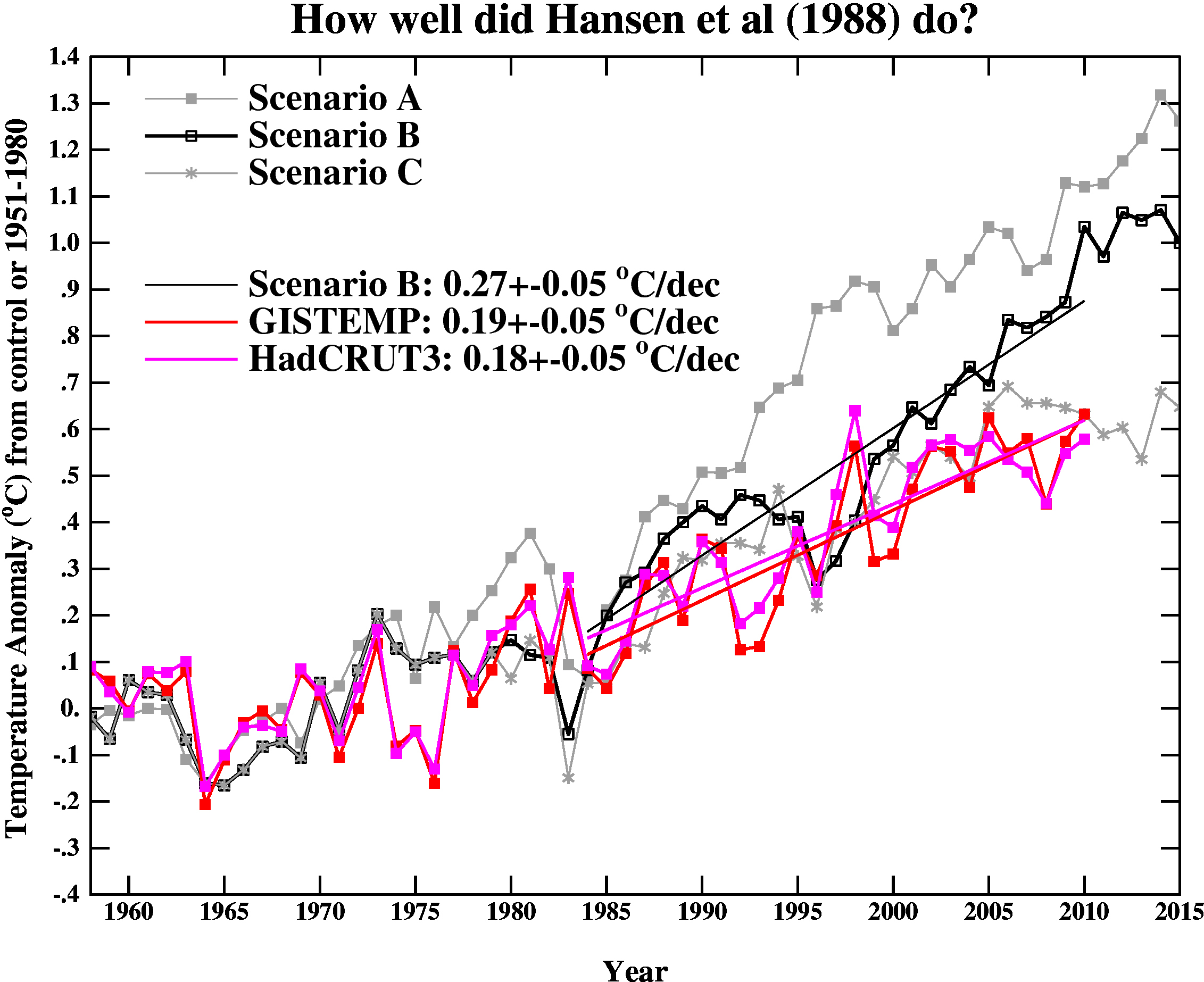

Finally, we update the Hansen et al (1988) comparisons. As stated last year, the Scenario B in that paper is running a little high compared with the actual forcings growth (by about 10%) (and high compared to A1B), and the old GISS model had a climate sensitivity that was a little higher (4.2ºC for a doubling of CO2) than the best estimate (~3ºC).

For the period 1984 to 2010 (the 1984 date chosen because that is when these projections started), scenario B has a trend of 0.27+/-0.05ºC/dec (95% uncertainties, no correction for auto-correlation). For GISTEMP and HadCRUT3, the trends are 0.19+/-0.05 and 0.18+/-0.04ºC/dec respectively (note that the GISTEMP met-station index has 0.23+/-0.06ºC/dec and has 2010 as a clear record high).
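For readers who want to reproduce this kind of number, here is a minimal sketch of an ordinary least-squares trend with an approximate 95% range and no correction for auto-correlation. The helper name `trend_per_decade` is hypothetical, the data are synthetic, and using two standard errors as the 95% half-width is an approximation (the exact multiplier for 27 points is slightly larger).

```python
import numpy as np

def trend_per_decade(years, temps):
    """OLS slope in degrees per decade with an approximate 95% half-width.

    No correction for auto-correlation is applied, so the range is
    likely too narrow for real temperature series.
    """
    years = np.asarray(years, dtype=float)
    temps = np.asarray(temps, dtype=float)
    x = years - years.mean()
    y = temps - temps.mean()
    slope = np.sum(x * y) / np.sum(x ** 2)
    resid = y - slope * x
    dof = len(years) - 2
    std_err = np.sqrt(np.sum(resid ** 2) / dof / np.sum(x ** 2))
    return 10.0 * slope, 10.0 * 2.0 * std_err  # two standard errors ~ 95%

# Synthetic annual anomalies for 1984-2010: an assumed trend plus white noise.
rng = np.random.default_rng(1)
years = np.arange(1984, 2011)
temps = 0.019 * (years - 1984) + rng.normal(0.0, 0.1, size=years.size)

trend, half_width = trend_per_decade(years, temps)
```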

As before, it seems that the Hansen et al ‘B’ projection is likely running a little warm compared to the real world. Repeating the calculation from last year, assuming (again, a little recklessly) that the 27 yr trend scales linearly with the sensitivity and the forcing, we could use this mismatch to estimate a sensitivity for the real world. That would give us 4.2/(0.27*0.9) * 0.19=~ 3.3 ºC. And again, it’s interesting to note that the best estimate sensitivity deduced from this projection is very close to what we think in any case. For reference, the trends in the AR4 models for the same period have a range 0.21+/-0.16 ºC/dec (95%).
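The arithmetic behind that estimate, written out as a small sketch. The function name `scaled_sensitivity` is hypothetical; the inputs are the values quoted above, and the linear scaling is the same admittedly reckless assumption.

```python
def scaled_sensitivity(model_sensitivity, model_trend, forcing_ratio, observed_trend):
    """Scale a model's sensitivity by the ratio of observed to forcing-adjusted model trend.

    forcing_ratio is actual forcing growth divided by the scenario's forcing
    growth (0.9 here, since Scenario B ran about 10% high).
    """
    return model_sensitivity / (model_trend * forcing_ratio) * observed_trend

# 4.2 C model sensitivity, 0.27 C/dec Scenario B trend, 0.19 C/dec GISTEMP trend.
estimate = scaled_sensitivity(4.2, 0.27, 0.9, 0.19)
scaling_factor = 0.19 / (0.27 * 0.9)

print(round(estimate, 1))        # 3.3
print(round(scaling_factor, 2))  # 0.78
```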

So to conclude, global warming continues. Did you really think it wouldn’t?

As before, it seems that the Hansen et al ‘B’ projection is likely running a little warm compared to the real world.

=================

Statistically significantly so.

Enough data to reject the hypothesis (and the conclusions) that Scenario B is correct.

[Response: But no model is ‘correct’ and no forecast is perfect. The question instead is whether a model is useful or whether a forecast was skillful (relative to a naive alternative). Both of these are answered with a yes. We can even go one step further – what climate sensitivity would have given a perfect forecast given the actual (as opposed to projected) forcings? The answer is 3.3 deg C for a doubling of CO2. That is a useful thing to know, no? – gavin]

Thank you.

A few curiosities from the article. Why do you calculate the climate sensitivity from Hansen’s scenario B projection when it is running a little warm? Actual temperature data appears to be tracking much better under scenario C.

Also, any comment as to why the ocean heat content has appeared to level off during the post 2003 period?

[Response: Scenario C assumed no further emissions after 2000, and is much further from what actually happened. I doubt very much that a linear adjustment to the forcings and response is at all valid (and I’m even a little dubious about doing it for scenario B despite the fact that everything there is close to linear). The error bars are about +/- 1C so it isn’t a great constraint in any case. As for OHC, it is likely to be a combination of internal variability, not accounting for heat increases below 700m, and issues with the observing system – compare to the Lyman et al analysis. More time is required for that to become clear. -gavin]

> The model simulations use observed forcings up until 2000 (or 2003 in a couple of cases) and use a business-as-usual scenario subsequently (A1B).

Does that mean the model simulations have not accounted for the unusually deep solar minimum or would that not make much of a difference?

Any highlights in the modelling world on new or improved physics which is being included? Thanks.

Did the Hansen ‘B’ projection properly take into account the thermal inertia of the oceans? Put another way, is it possible that the Hansen ‘B’ projection gets the rate of warming wrong (too fast), but the overall final sensitivity (4.2˚C/doubling) right (and it’s just the far distant tail of the graph that will differ, but it will ultimately end at the correct temperature)?

My untrustworthy memory tells me that the degree of thermal inertia of the oceans came as a mild surprise to science in the last decade, so the answer to these questions could be yes.

[P.S. On a separate note, as if it couldn’t get worse, reCaptcha’s new method of outlining some of and sometimes only parts of the characters is really, really, really annoying. And I was just, finally getting used to it…]

[Response: The recaptcha issue looks like a weird CSS issue, possibly related to an update of the live comment preview. Any pointers to what needs to be fixed will be welcome!- gavin]

Gavin,

with regards to Dan’s question, is there any estimate of the short-term effect of ENSO on radiative forcing? Also, do you think that sea level rise is a good proxy for OHC?

Gavin,

I don’t think so. I think the captcha itself is an image generated and delivered as a unit by Google (right click and “View Image” in Firefox)… CSS has no effect on it, so I think it’s just a coincidence in timing (captcha change vs. preview change). Google must be generating that image using a different algorithm/method than they were. They’re just outsmarting themselves (and us).

So, if I read this correctly, your method yields an estimate for climate sensitivity of 3.3ºC. It’s easy to derive from this the CO2 level compatible with the policy goal of limiting the rise in global mean surface temperature to 2ºC over the pre-industrial level. I make it about 426ppm. Of course, this assumes a few things, such as that levels of other GHGs, such as methane, are returned to their pre-industrial levels, or continue to be counter-balanced by aerosol forcings.

At the current rate of increase in atmospheric CO2 levels (around 2ppm/yr), we’ll pass 426ppm within less than another 2 decades. We’ll have to reduce CO2 levels after that if we’re to avoid an eventual temperature rise of more than 2ºC.
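The 426ppm figure can be checked with a short sketch. It assumes the usual logarithmic relation between CO2 concentration and equilibrium warming, a pre-industrial level of 280ppm, and a present-day level of roughly 390ppm; none of these values is stated in the comment, and `co2_for_warming` is a hypothetical helper name.

```python
def co2_for_warming(target_warming, sensitivity, preindustrial_ppm=280.0):
    """CO2 level giving target_warming at equilibrium, for a sensitivity per doubling."""
    return preindustrial_ppm * 2.0 ** (target_warming / sensitivity)

target_ppm = co2_for_warming(2.0, 3.3)

# Assumed values: ~390 ppm today, rising at ~2 ppm per year.
years_left = (target_ppm - 390.0) / 2.0

print(round(target_ppm))  # 426
print(round(years_left))  # 18
```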

Gavin (or anyone else qualified to answer):

when the measured temperature drops from one year to the next (as happened in 2008-2009 if I’m reading the graph correctly), where does the heat go? Upper ocean / lower ocean / atmosphere / someplace on the surface where we don’t have thermometers?

Put another way, if our grid of measuring devices was truly comprehensive in 3-d, could we see how heat moved from one place to another from year to year? Would the result be a straight rising trend line?

ps: Once again, thanks for all your hard work explaining these issues to the public. Some days I’m not sure how you keep your sanity.

[Response: The heat content associated with the surface air temperature anomalies is very small, and so it can go almost anywhere without you noticing. – gavin]

For the period 2000-2010, Tamino’s analysis might be a better measure

http://tamino.wordpress.com/2011/01/20/how-fast-is-earth-warming/#comment-47256

It’s not a huge difference–it does make everything a lot more consistent.

This is a good idea. It could be improved (IMHO) (a) by assembling the yearly predictions in a pdf file that can be easily accessed here and (b) including similar graphs for the measured variables that Barton Paul Levenson has included in his list of correct predictions.

The data seem to strongly confirm or support scenario c over the other two scenarios. Would you say that’s a fair inference to date?

1, Gavin in comment: But no model is ‘correct’ and no forecast is perfect. The question instead is whether a model is useful or whether a forecast was skillful (relative to a naive alternative).

Instead of “correct”, how about “sufficiently accurate”? Box (and others who use the word “useful”) never clarified what good qualities are necessary or sufficient for a model to be “useful” if it is “false” (Box’s word.) If model c is the most skillful to date, should model c be the model that is used for planning purposes? (This looks like an idea that might appeal to Congressional Republicans.)

You are aware (I am sure) of “sequential statistics”. Would you like to propose a criterion (or criteria) for deciding how much accuracy (or skill) over what length of time would be sufficient for deciding which of the scenarios was “most useful”?

I’m not so sure 2011 will be “easily” in the top 10, considering the strong la Nina in place and expected to last at least mid-year and perhaps beyond. Are January surface anomalies not looking that cool?

2008, for example, appears to be ranked 11th, and this year’s la Nina seems to be a little stronger. So more atmospheric greenhouse gases, sure, but it’s only 3 years removed. Solar influence won’t be much different.

Interesting to see arctic sea ice extent still tracking below 1 SD.

Any updates to Schuckmann 2009 (ocean heat storage down to 2000 m)?

re: MarkB @8

“Interesting to see arctic sea ice extent still tracking below 1 SD.”

From the graph it looks like it’s tracking ~4 SD below mean and ~1 SD below 2007.

Jeffrey Davis: “From the graph it looks like it’s tracking ~4 SD below mean and ~1 SD below 2007.”

I don’t follow that. The dotted lines above and below the solid one (ensemble mean) are 1 SD. Maybe you’re confusing this with the vertical axis, which is sea ice extent.

I wonder how the model ensemble and observations (especially GISTemp) track north of 60.

Intuitively, one would think that the model underestimate of the decline in Arctic sea ice extent would also be reflected, at least partly, in an underestimate of Arctic temperature rise.

Thanks for this update. I agree with others that some discussion of natural forcings/inputs not included in the models would be helpful:

1) Certainly the very low solar activity and prolonged solar minimum.

2) We’ve had two rather significant La Ninas in the past 5 years

3) The PDO has switched to a cool phase (accentuating the La Nina?) And what is that doing to the OHC?

4) The AMO might be heading for a cool phase, and this combined with a continued cool PDO, on top of a weak Solar Cycle 24 could prove interesting.

Related to all this is of course the issue of how AGW might be affecting the very nature of longer term frequency ocean cycle such as the PDO and AMO.

The most exciting thing is we’ll get a chance to see the relative strength of all of these over the next few years, and it will be most interesting to compare the total decade of 2010-2019 to previous decades in terms of the trends in Arctic Sea ice, Global Temps, and of course, OHC.

gavin : we could use this mismatch to estimate a sensitivity for the real world. That would give us 4.2/(0.27*0.9) * 0.19=~ 3.3 ºC

But with this calculation, don’t you estimate transient climate response (and so transient climate sensitivity)?

[Response: I am assuming that the sensitivity over the 27 years is linearly proportional to the equilibrium sensitivity. If I knew the transient sensitivity of this model (which I don’t), I could have scaled against that. In either case, one would find that a reduction by a factor 0.19/(0.27*0.9) = 0.78 would give the best fit. – gavin]

Couple questions. Since H88, what are the most important changes made to the model (a non slab ocean and improved sulfur cycle? etc,etc)

if you repeated H88 with today’s version of the model what would you get?

Academic questions, just curious.

I sent off my 2nd (revised) version of the planet temperatures paper yesterday. Wish me luck…

re: #19 Good luck BPL. I just read your easy greenhouse effect article. Now I’m going to find out how Fourier figured it out in the first place, and another learning curve begins. Thank you.

#19 Looking forward to reading it BPL ,

“Consistent with that, I predict that 2011 will not be quite as warm as 2010, but it will still rank easily amongst the top ten warmest years of the historical record.”

Sounds like a “close shave” prediction for 2011. What’s the probability that it is or isn’t?

Let’s ignore NASA gmao ensemble for ENSO and instead eyeball a prolonged La Nina episode for 2011, which decays into 2012 giving neutral conditions:

In this case, we would expect around 0.2 deg C drop similar to 1999. So, 2011 will still rank amongst the top ten warmest years of the historical record, but only by 0.05 deg C.

Given that the MEI ranked the 1999 -2000 La Nina episode quite poorly, and this year we have seen record SOI values, I wouldn’t say 2011 will “easily” be in the top ten.

[Response: Wanna bet? – gavin]

re: 13

I was looking at this graph:

http://nsidc.org/images/arcticseaicenews/20110105_Figure2.png

Short learning curve :)

Given the historical performance of Nasa gmao, no I don’t.

[Response: Not sure what they’ve got to do with anything. But once again we have someone going on about cooling in the blog comments who backs off when pushed. Good to know. -gavin]

Isotopious,

I am going to agree with you that 2011 will not be as warm as 2010 (La Nina vs El Nino and all). However, I will take the position that it will fall outside the top 10. According to the CRU data, a 0.1C drop from 2010 would knock 2011 down to 12th place. Based on the Dec. temperature drop, a 0.1-0.2C drop is quite possible. By the way, according to CRU, 2010 finished 4th by a nose to 2003.

gavin : in IPCC AR4, table 8.2 gives a 2.7 °C equilibrium climate sensitivity for GISS-EH and GISS-ER. In your text, the value is 3.3 °C. What is actually the best estimate?

[Response: Range is 2 to 4.5, best estimate ~3 C -gavin]

Jeffrey (#23),

I was referring to the 3rd graph listed in this post comparing model ensemble to data, so SD refers to the model SD. Yours compares the 1979-2000 average to recent extent.

This makes me wonder if over time, (decades) we should expect to see a growing gap emerge between the anomalies measured by GISS compared to the other methods? I wonder this because GISS attempts to include the arctic and the others do not and the arctic is warming much faster than the global average and will likely continue to do so as the ice melt continues. Or are the anomalies (large as they are) not over a big enough area of the globe to create such a difference?

The 2011 temperature result will obviously depend on ENSO. As you know, El Nino is seasonal (as the name implies), yet the anomalies have persisted unchanged into the New Year. While it is still possible for the event to decay in 2011 (as gmao ensemble is predicting), historically, it could have already begun to decay since Christmas time….mmmm

So I don’t want to bet, just pointing out that the odds of 2011 making the top 10 warmest years are not as good as they could be…that’s all.

:)

The hindcast of the models, against the temperature record from 1900 to 2000, is indeed very impressive.

It is that very fact that leads me to believe they have to be wrong!

They are not comparing like to like. An apple doesn’t equal an orange no matter which way you cut it.

The models are set up to produce the climate signal. However, there are some weather signals such as the PDO influenced ENSO conditions that introduce medium term warming and cooling signals and they can be quite large as we know. They are cyclical so, in the long term, they average out and are not an additive effect so the long term climate signal will always emerge from this masking weather noise.

Now the models average out these temporary weather forcings so they are only showing the true climate signal. Well, I can understand that, it seems a reasonable thing to do, makes them less complicated

How, therefore, can a model set to match the climate signal only match so well the climate plus weather signal, which is what the temperature record is?

We are told that the reason the models are not representing the 21st century record very well is that ‘weather’ conditions are temporarily masking the true climate signal which will emerge when the weather conditions cycle.

http://www.woodfortrees.org/plot/hadcrut3gl/from:2001%20/to:2011/trend/plot/hadcrut3gl/from:2001/to:2011

Well I could accept that as an explanation if it wasn’t that the hindcasts of the model match the climate plus weather signal so well.

We know that when James Hansen made his famous predictions to congress in 1988 that he didn’t know he was comparing a period, which was in the warm end of a sixty year PDO weather cycle with periods in the cool end. The PDO cycle was not identified until 1996.

Surely the hindcast of the models should show periods of time where the climate signal is moving away, up and down, from the climate and weather signal?

The fact that it doesn’t suggests to me that the modellers wanted to tune their models as close as possible to the temperature record so that people would have high confidence in them. However they overlooked, in their hubris, that if they were truly accurate they shouldn’t.

So it appears that the only way we could solve this conundrum is to say that during the period of the hindcast weather was never anything other than a very minor force. However, in the period of the forward cast weather has transformed itself into a major masking force.

Sorry I can’t buy it.

Alan

Mike C #29 I’ve been wondering about this: is it correct to think that the ice mass itself acts as a sort of ‘cooling forcing’? Implying that its melt has a double whammy – less ‘cooling forcing’ plus increased sea levels…or have my perceptions led me astray again?

> Alan Millar

> woodfortrees

You’ve used his tool to do exactly what he cautions about!

_______________

“I finally added trend-lines (linear least-squares regression) to the graph generator. I hope this is useful, but I would also like to point out that it can be fairly dangerous…

Depending on your preconceptions, by picking your start and end times carefully, you can now ‘prove’ that:

Temperature is falling! ….”

————— http://www.woodfortrees.org/notes

Alan Millar #31, reference needed for the match between climate hindcasts and weather 1900-2000 you say is too good.

The first figure of the OP, for instance, shows 1980-2010. The model ensemble hindcast tracks the two big dips in the real temps, as it should if the models know about El Chichon and Pinatubo. (The OP says the simulations used the observed forcings.) That apart, what’s so impressive about the match? Can you quantify your suspicions?

Alan Millar:

If the models are behaving correctly, then they absolutely should reproduce weather effects such as ENSO. They just don’t produce the same actual weather that occurs in the real world.

So if you look at an individual model run, you will see peaks and troughs that represent La Nina and El Nino in the model run. But those peaks and troughs don’t correspond to any real world index – they represent model weather.

When you average together many model runs, these weather effects average out, and you don’t see the same patterns in the ensemble mean. However, you should be aware that the majority of the variance (the big gray 95% range in Gavin’s graph) is caused by these large scale model weather events. That is why we should expect the observed global temperature to bounce around quite dynamically in that 95% (even going outside it sometimes) and not cling closely to the mean. The fact that this is exactly what we see is evidence that the models are right, and not whatever it is that you are trying to imply. In the AR4 graph, the only time that the observed temperatures closely follow the model is just after Pinatubo, because observed volcanic forcings are included in the model.

The models also illustrate nicely that more subtle, hard to delineate oscillations such as PDO do not have a significant effect on long-term global climate. Enhancing models so that they can do better in that area may improve regional modelling results, but it won’t affect the global picture much.

On a different note, you are actually trying to argue that because the models are so good at reproducing climate, then they can’t be real. That’s a ludicrous argument, and you know it. Even really simple, naive models do a good job of reproducing climate. Even statistical models can do it.
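The point above about averaging model runs can be illustrated with a toy sketch. It uses synthetic data only: each "run" is the same forced trend plus independent white noise standing in for model weather. Real internal variability such as ENSO is auto-correlated, so this is a simplification, and all names and numbers here are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
years = np.arange(1980, 2011)
forced = 0.02 * (years - 1980)  # assumed forced signal, degrees C

# Each run shares the forced signal but has its own "weather" noise.
n_runs = 20
weather = rng.normal(0.0, 0.15, size=(n_runs, years.size))
runs = forced + weather

ensemble_mean = runs.mean(axis=0)

# Scatter about the forced signal: individual runs versus the ensemble mean.
spread_single = (runs - forced).std()
spread_mean = (ensemble_mean - forced).std()
```

With independent noise the scatter of the ensemble mean shrinks by roughly the square root of the number of runs, which is why the mean looks smooth while any single run, or the real world, bounces around inside the envelope.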

Regarding model output for OHC, Gavin writes : ” As before, I don’t have the post-2003 model output”

Why not? I don’t understand. Are you saying the models never output any OHC predictions past 2003?

[Response: No. It is a diagnostic that would need to be calculated, and I haven’t done it. That’s all. – gavin]

One Anonymous Bloke – The ice mass doesn’t act so much as a cooling ‘force’ as it does as a lid covering or insulating warmer water. The smaller the lid gets – because warmer water is eating away at its edges and its underside – the more that warmer water is exposed to the local air mass, the less that air is cooled by contact with ice. And on and on.

AM 31: The models are set up to produce the climate signal… the modellers wanted to tune their models as close as possible to the temperature record so that people would have high confidence in them. However they overlooked, in their hubris, that if they were truly accurate they shouldn’t.

BPL: You appear to have no idea whatsoever how these models actually work. They are not “tuned” to any historical records at all. They are physical models, not statistical models. They start with the Earth in a known state (say, 1850) and then apply known forcings and physics to see what happens. THAT is what makes the agreement with the historical data so impressive.

You might want to get a copy of this and read it–IF you’re really interested in learning about how these models work, that is:

Henderson-Sellers and McGuffie 2005 (3rd ed). A Climate Modeling Primer. NY: Wiley.

Okay, CAPTCHA choked me twice on what I wanted to write.

Gavin et al.: I think it would help immensely if you put the “Say It!” button AFTER the reCAPTCHA section. That way at least I wouldn’t often forget to do the reCAPTCHA at all.

#30–You make it sound like ENSO is still going on. We’ve been in a cold phase since beginning of last summer. In fact, this has been a fairly strong cold event in the fall of this year.

For those that want to get current conditions and ENSO predictions, please go here:

http://www.cpc.ncep.noaa.gov/products/analysis_monitoring/enso_advisory/ensodisc.html

Re. 32 One Anonymous Bloke

Loosely related but could be of interest:

Some interesting work has been done by Jerry Mitrovica on the effects of glacial melt due to gravity, affecting sea levels:

* http://harvardmagazine.com/2010/05/gravity-of-glacial-melt

* http://www.theglobeandmail.com/news/technology/science/article9752.ece

* http://www.goodplanet.info/eng/Contenu/Points-de-vues/The-Secret-of-Sea-Level-Rise-It-Will-Vary-Greatly-by-Region

In Greenland an ‘Area of the size of France melted in 2010 which was not melting in 1979’. (H/T to Neven)

* http://www.msnbc.msn.com/id/41197838/ns/us_news-environment/

Can’t somebody just re-run Hansen’s old model with the observed forcings to the current date? Why are we forever wed to his old Scenarios A, B and C? I want to separate the physical skill of the model from his skill in creating instructive emissions scenarios.

Alan Millar: “Sorry I can’t buy it.”

No, Alan, you just don’t understand it.

38 BPL

“They are not “tuned” to any historical records at all. They are physical models, not statistical models. They start with the Earth in a known state (say, 1850) and then apply known forcings and physics to see what happens. THAT is what makes the agreement with the historical data so impressive.”

Hmmm………..

Unfortunately they also have to apply figures for forcing whose values and effects are not known and have ongoing debate about them, reflective aerosols, land use, black carbon etc etc. You get to play around with them in the model.

With black carbon we are not yet positive what the sign is even.

So before you run the model you have to estimate values and effects for these forcings and estimate their changing values over time even though we do not have good measurement.

In the GISS E model for instance they are flat lining the effects of the following factors since the late 1980s. Black Carbon, Reflective Aerosols, Land Use and Ozone all of which it had changing values for in the model prior to the late 80s.

So BPL you are saying that having done all this when they ran the model the first time they had this excellent fit? They never had to go back in and tweak any forcings or assumptions?

[edit]

[Response: Aerosol forcings in the GISS model are derived from externally produced emission inventories, combined with online calculations of transport, deposition, settling etc. The modern day calculations are compared to satellite and observed data (see Koch et al 2008 for instance). As models improve and more processes are added the results change and are compared again to the modern satellite/obs. The inventories change because of other people reassessing old economic or technological data. The results don’t change because we are trying to fit every detail in the observed temperature trend. If we could do that, we’d have much better fits. Plus, running the suite of runs for the IPCC takes about a year, they are not repeated very often. – gavin]

#31 Allen, I got similar results, showing a decline, over the last 10 years, using a Fourier filter with a 0.1 cy/yr cut-off freq.

Just a thought, but I wonder how much these models rely on physical laws AND engineering empirical equations.

38, Barton Paul Levenson: Henderson-Sellers and McGuffie 2005 (3rd ed). A Climate Modeling Primer. NY: Wiley.

I have just purchased “Dynamic Analysis of Weather and Climate” 2nd ed. by Marcel Leroux. I was wondering whether this was considered a good book among experts in the field.

A question, when a model is initialized far in the past (1850 has been mentioned as a starting year), how can the initialization be accurate enough given the relative scarcity of data from those times? I would guess that, with three virtual centuries available to develop, systematic errors in the starting conditions could impact the final result quite a bit.

Or is the hindcast made initializing things in 2000, where the relevant climate parameters are much better known, and then running the model backwards (for all I know, the relevant equations work in both directions…)

For Alan Millar: http://www.woodfortrees.org/notes

“… graph will stay up to date with the latest year’s values, so feel free to copy the image link to your own site, but please link back to these notes ….”

31, Alan Millar: The hindcast of the models, against the temperature record from 1900 to 2000, is indeed very impressive.

Sorry to pile on, but where do you see that?

A clarification. HadSST and HadISST are different products. The latter (used in GISTEMP until 1982) interpolates missing regions while the former (used in HadCRUT) does not. The next versions of these analyses, HadISST2 and HadSST3, will be available shortly.

Of course, when they are released, there will be much howling in certain quarters of “adjusting the past.” Said adjustments will be examined by certain bloggers while leaving out crucial explanation and details in favor of endless speculation and innuendo.