Readers may recall discussions of a paper by Thompson et al (2008) back in May 2008. This paper demonstrated that there was very likely an artifact in the sea surface temperature (SST) collation by the Hadley Centre (HadSST2) around the end of the second world war and for a few years subsequently, related to the different ways ocean temperatures were taken by different fleets. At the time, we reported that this would certainly be taken into account in revisions of the data set as more data was processed and better classifications of the various observational biases occurred. Well, that process has finally resulted in the publication of a new compilation, HadSST3.

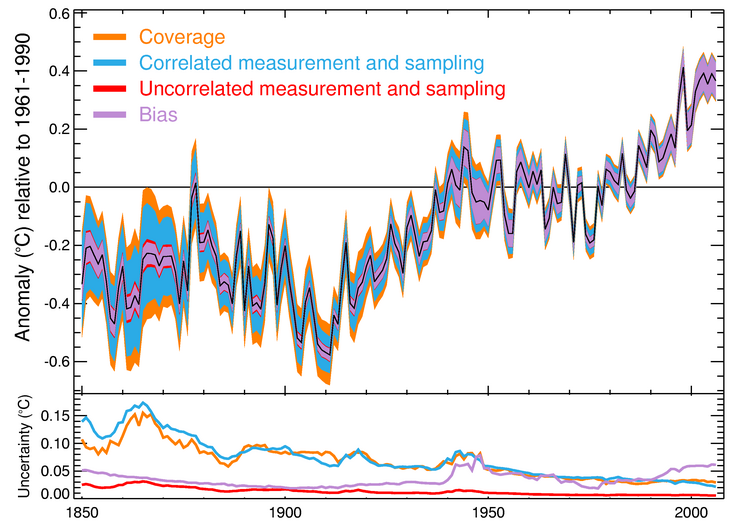

Figure: The new HadSST3 compilation of global sea surface temperature anomalies and the uncertainty.

HadSST3 not only greatly expands the amount of raw data processed, it makes some important improvements in how the uncertainties are dealt with and has a more Bayesian probabilistic treatment of the necessary bias corrections. That is to say that instead of picking the most likely factors and providing a single reconstruction, they perform a Monte Carlo experiment using a distribution of factors and provide a set of 100 reconstructions – the average and spread of which inform the uncertainties. This is a noteworthy approach and one which is likely to set a new standard for other reconstructions. (The details of the procedures are outlined in two new papers Kennedy et al, part I and part II).
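As a rough illustration of the ensemble idea described above, here is a minimal sketch in Python. It is not the Kennedy et al procedure: the input series is synthetic, `apply_bias_correction` is a hypothetical stand-in for the real corrections, and the distributions for the offset and the transition year are invented purely for the example. Only the overall shape (draw the uncertain factors, build 100 realisations, then take the mean and spread) follows the text.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for uncorrected annual SST anomalies (deg C), 1850-2006.
years = np.arange(1850, 2007)
raw = 0.004 * (years - 1850) + rng.normal(0.0, 0.05, years.size)

def apply_bias_correction(series, years, offset, transition_year):
    """Hypothetical correction: add a constant offset before a transition year."""
    corrected = series.copy()
    corrected[years < transition_year] += offset
    return corrected

# Draw the uncertain correction factors from assumed distributions and
# build one reconstruction per draw.
n_realisations = 100
ensemble = np.empty((n_realisations, years.size))
for i in range(n_realisations):
    offset = rng.normal(0.3, 0.1)          # assumed offset distribution (deg C)
    transition = rng.integers(1940, 1950)  # assumed transition-year range
    ensemble[i] = apply_bias_correction(raw, years, offset, transition)

# The ensemble mean is the central estimate; the spread across realisations
# measures the uncertainty contributed by the bias corrections.
ensemble_mean = ensemble.mean(axis=0)
ensemble_spread = ensemble.std(axis=0, ddof=1)
```

In this toy setup the spread is non-zero only where the sampled corrections differ between realisations, which is the sense in which the spread reflects correction uncertainty rather than measurement noise.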

One potential problem is going to be keeping the analysis up-to-date. Currently, HadSST2 (and HadCRUT3) use the real time updating related to NCEP-GTS. However, this service was scheduled to be phased out in March 2011 (was it?), in lieu of near-real time updating of the underlying ICOADS dataset. This is what HadSST3 uses, but unfortunately, the bias corrections in the modern period rely on being able to track individual fleets. For security reasons, data since 2007 has been anonymized (so you can’t tell what ship reported what data), and so the HadSST3 analysis currently stops at 2006. Apparently this is being worked on, so hopefully a solution can be found. Note that the ocean temperatures in the GISTEMP analysis use the Reynolds satellite SST data from 1979 and so are unaffected by the ICOADS security issue.

The ocean temperature history is obviously a big part of the global surface air temperature history and these new estimates will be used eventually in updates of the HadCRUT3 product. Currently HadCRUT uses HadSST2 and we can expect that to be updated soon.

Impacts

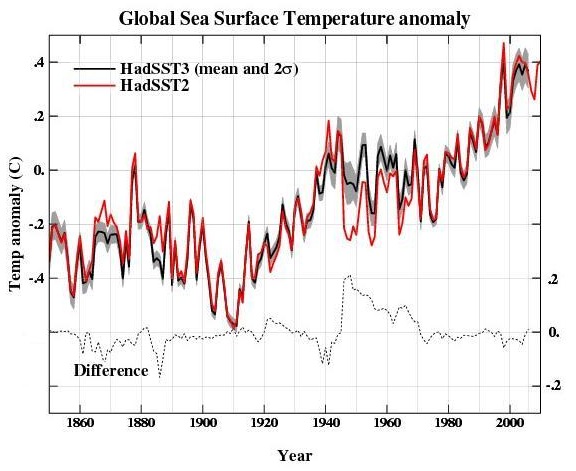

Obviously, when a new analysis is performed, it is interesting to see how it differs from previous ones. The differences between HadSST3 and HadSST2 are shown here:

and are important in a few key time-periods – the 1940s (because of issues highlighted previously), the 1860s to 1890s (more extensive data), and perhaps the last few years (related to more minor changes in technologies and corrections). The biggest difference around 1946-8 is just over 0.2ºC.

One odd feature of the HadSST2 collation was that the temperature impact of the 1883 Krakatoa eruption – which is very clear in the land measurements – didn’t really show up in the SST. Thus comparisons to model simulations (which generally estimate an impact comparable to that of Pinatubo in 1991) showed a pretty big mismatch (see Hansen et al (2007)). With the larger amount of data in this period in HadSST3, did the situation change?

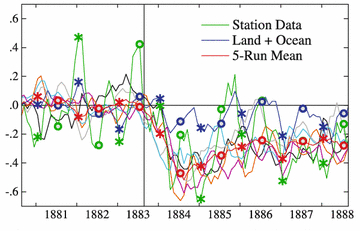

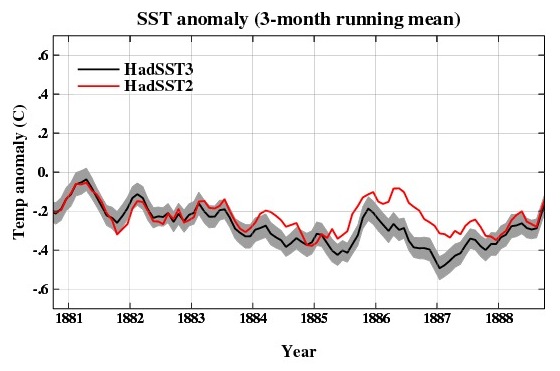

Figure: 1880’s comparison of (left) global surface air temperature anomalies using HadSST2 (as part of GISTEMP) and the GISS AR4 simulations, (right) global SST estimates from HadSST2 and HadSST3.

It seems clear that the new data (including HadSST3) will be closer to the models than previously, if not quite perfectly in line (but given the uncertainties in the magnitude of the Krakatoa forcing, a perfect match is unlikely). There will also be improvements in model/data comparisons through the 1940s, where the divergence from the multi-model mean is quite large. There will still be a divergence, but the magnitude will be less, and the observations will be more within the envelope of internal variability in the models. Neither of these cases implies that the forcings or models are therefore perfect (they are not), but deciding whether the differences are related to internal variability, forcing uncertainties (mostly in aerosols), or model structural uncertainty is going to be harder.

So how well did the blogosphere do?

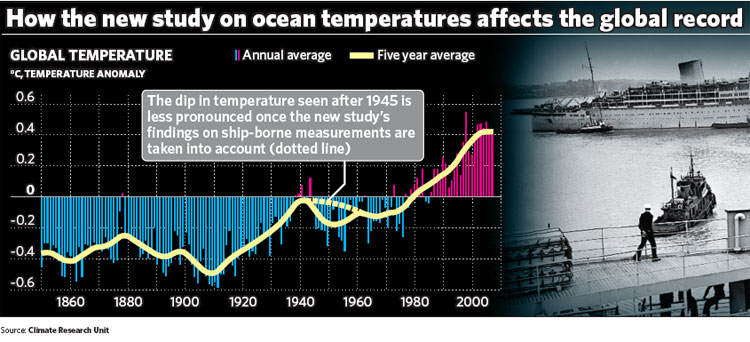

Back in 2008, a cottage industry sprang up to assess what impact the Thompson et al related changes would make on the surface air temperature anomalies and trends – with estimates ranging from complete abandonment of the main IPCC finding on attribution to, well, not very much. While wiser heads counselled patience, Steve McIntyre predicted that the 1950 to 2000 global temperature trends would be reduced by half while Roger Pielke Jr predicted a decrease by 30% (Update: a better link). The Independent, in an imperfectly hand-drawn graphic, implied the differences would be minor and we concurred, suggesting that the graphic was a ‘good first guess’ at the impact (RP Jr estimated the impact from the Independent’s version of the correction to be about a 15% drop in the 1950-2006 trend). So how did science at the speed of blog match up to reality?

Here are the various graphics that were used to illustrate the estimated changes on the global surface temperature anomaly:

Figure: graphics from Steve McIntyre, Roger Pielke Jr and the Independent. Update: RP Jr’s graph shown above is an emulation of McIntyre’s adjustments, his own attempt is here (though note that the title on the graph is misleading).

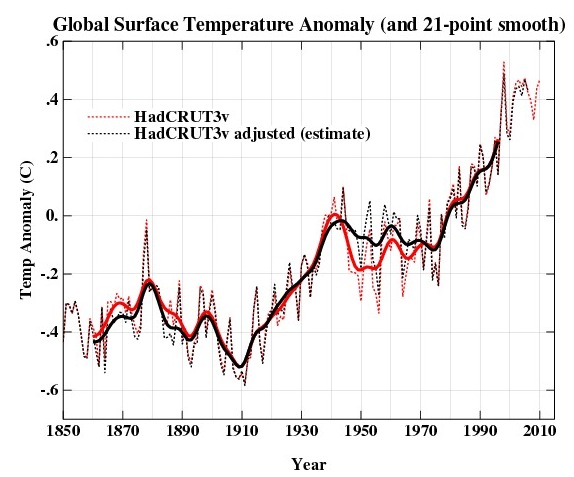

Now, we don’t yet have the real updates to the HadCRUT data, but we can calculate the difference between mean HadSST3 values and HadSST2, and, making the (rough) assumption that ocean anomalies determine 70% of the global SAT anomaly (that’s an upper limit because of the influence of spatial sampling and sea ice coverage), estimate an adjusted HadCRUT index as SAT_new=SAT_old + 0.7*(HadSST3-HadSST2).
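For anyone who wants to repeat the estimate, the adjustment above is a one-liner. The sketch below assumes the three series are already aligned on the same time axis; the function name `adjust_sat` and the numbers in the example are placeholders, not real HadCRUT or HadSST values.

```python
import numpy as np

OCEAN_WEIGHT = 0.7  # rough ocean fraction used in the text (an upper limit)

def adjust_sat(sat_old, hadsst3, hadsst2, ocean_weight=OCEAN_WEIGHT):
    """Estimate SAT_new = SAT_old + ocean_weight * (HadSST3 - HadSST2)."""
    sat_old = np.asarray(sat_old, dtype=float)
    sst_change = np.asarray(hadsst3, dtype=float) - np.asarray(hadsst2, dtype=float)
    return sat_old + ocean_weight * sst_change

# Placeholder anomalies (deg C) for three time steps, for illustration only.
sat_old = np.array([0.00, -0.10, 0.05])
hadsst2 = np.array([0.10, -0.20, 0.00])
hadsst3 = np.array([0.10, 0.00, 0.00])

sat_new = adjust_sat(sat_old, hadsst3, hadsst2)
```

With these placeholder values only the middle time step changes, by 0.7 × 0.2 = 0.14 deg C, so a 0.2 deg C shift in the SST series maps to at most about 0.14 deg C in the global index.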

Figure: Estimated differences in the global surface air temperature anomaly from updating from HadSST2 to HadSST3. The smoothed curve uses a 21 point binomial filter.
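A 21 point binomial filter is a weighted running mean whose weights are the binomial coefficients C(20, k) normalised to sum to one. The sketch below is one way to build it; the treatment of the end points is not stated in the post, so the reflection padding used here is an assumption, and `binomial_smooth` is a name chosen for this example.

```python
from math import comb

import numpy as np

def binomial_weights(n_points=21):
    """Normalised binomial coefficients C(n_points - 1, k)."""
    order = n_points - 1
    weights = np.array([comb(order, k) for k in range(n_points)], dtype=float)
    return weights / weights.sum()

def binomial_smooth(series, n_points=21):
    """Smooth a 1-D series with an odd-length binomial filter.

    End points are handled by reflecting the series about its ends,
    which is an assumption made for this sketch.
    """
    series = np.asarray(series, dtype=float)
    half = n_points // 2
    padded = np.pad(series, half, mode="reflect")
    return np.convolve(padded, binomial_weights(n_points), mode="valid")

# Example on a synthetic noisy series of annual values.
rng = np.random.default_rng(0)
noisy = np.linspace(-0.3, 0.4, 157) + rng.normal(0.0, 0.1, 157)
smoothed = binomial_smooth(noisy)
```

Because the weights fall off smoothly towards the ends of the window, the filter damps year-to-year noise without the ringing that a flat running mean produces.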

While not perfect, the Independent graphic is shown to have been pretty good – especially for a hand-drawn schematic, while the more dramatic implications from McIntyre or Pielke were large overestimates (as is often the case). The impact on trends is maximised for trends starting in 1946 (a 21% drop in the 1946-2006 case), but is smaller for the 1956-2006 trend (11% decrease from 0.127±0.02 to 0.113±0.02 ºC/decade) (the 50 year period highlighted in IPCC AR4). More recent trends (i.e. 1970 or 1975 onwards) or much longer trends (1900 for instance) are barely changed. For reference, the 1950-2006 trend changes from 0.11±0.02 to 0.09±0.02 ºC/decade – a 17% drop in line with what was inferred from the Independent graphic. Note that while the changes appear to lie within the uncertainties quoted, those uncertainties are related solely to the fitting of a regression line and have nothing to do with structural problems and so aren’t really commensurate. The final analysis will probably show slightly smaller changes because of the coverage/sea ice issues. Needless to say the 50% or 30% reductions in trends that so excited the bloggers did not materialize.
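The trend numbers above come from ordinary least squares fits, and the percentage changes are simple ratios. A minimal sketch follows, assuming annual anomalies and a plain OLS standard error with no allowance for autocorrelation; whether the quoted ±0.02 is one or two standard errors is not stated in the post, so the function just returns the standard error. The function names and the synthetic series are illustrative only.

```python
import numpy as np

def ols_trend(years, values):
    """Return the OLS slope and its standard error, both per decade."""
    years = np.asarray(years, dtype=float)
    values = np.asarray(values, dtype=float)
    x = years - years.mean()
    y = values - values.mean()
    slope = (x @ y) / (x @ x)
    residuals = y - slope * x
    dof = years.size - 2
    std_err = np.sqrt((residuals @ residuals) / dof / (x @ x))
    return 10.0 * slope, 10.0 * std_err

def percent_drop(old_trend, new_trend):
    """Percentage decrease going from old_trend to new_trend."""
    return 100.0 * (old_trend - new_trend) / old_trend

# The 1956-2006 figures quoted in the text: about an 11 percent decrease.
drop_1956 = percent_drop(0.127, 0.113)

# Fit a synthetic series with a known trend of 0.11 deg C/decade plus noise.
rng = np.random.default_rng(0)
years = np.arange(1950, 2007)
synthetic = 0.011 * (years - 1950) + rng.normal(0.0, 0.1, years.size)
trend, std_err = ols_trend(years, synthetic)
```

The standard error here measures only how well a straight line fits the one series it is given, which is why it cannot be compared directly with a structural change in the underlying data set.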

In summary, the new HadSST3 analysis is a big step forward in both data coverage and error analysis. The differences are much smaller however than the somewhat exaggerated (and erroneous) blog speculations that appeared in the immediate aftermath of the Thompson et al paper. Those speculations were wrong because of a lack of familiarity with the data (McIntyre confused his buckets), basic mistakes (such as applying ocean-only corrections to global temperature series) and an over-eagerness to overturn established positions. Among the lessons to be drawn should be that science does not travel at the speed of blog and that confirmation biases and desires to be first on the block sometimes get in the way of the rational accumulation of knowledge. Making progress in science takes work, and with big projects like this, patience.

steven mosher says: “divorce yourself from the parties involved and just look at the logical form of the argument.”

Aye, there’s the rub – the denialist mindset, which gladly embraces anything contrary to climatological science no matter what assumptions or pipe dreams are involved, and especially if those “what ifs” come from certain contrarians. Making the misleading fantasies plain can’t be a bad thing – the IPCC isn’t speculating that the emission scenarios will come to pass and their underestimated projections of temperatures, ice melt and sea level rise are being pointed out.

I think the question has been answered.

Instead of quibbling over words like “prediction” vs imply or whatever, why can’t those who were way off just admit the obvious? O wait, if they started admitting the obvious ….

steve mosher,

You’re just justifying a game that Philip Stott did in a global warming debate with people like Lindzen, Somerville, Gavin, and others starting at 7:55 here. In this video, Stott raises the big issue of “cosmic rays” and its contribution to climate change, but then backs off and says he didn’t say it was causing global warming and that it’s just a “hot topic” of research. Why did he bring it up? (you may need to watch the whole thing to see it really had no relevant context whatsoever).

As a more extreme example, Judith Curry “summarizes” extensively her thoughts on the Montford Hockey Stick book (comment 168) and then backs off and says none of it was actually her opinion, just her summary, after gavin calls her out on the nonsense (comment here). These people are lawyers and choose their words carefully, so with just knowledge of the construction of English sentences at your disposal, you can never call them out on anything; you actually need to use your head and see through what they try to do.

He’s just doing a similar game of speculation and raising hypotheticals meant to distract the people who aren’t going to keep up with the next several years of research on the matter.

The penultimate sentence of RC’s original “Buckets and Blogs” coverage of the issue sums this up pretty well.

“The excuse that these are just exploratory exercises in what-if thinking wears a little thin when the ‘what if’ always leads to the same (desired) conclusion.”

I would also point out that many other people, not just gavin (and including Roger), interpreted Steve M’s remarks to Peter Webster in a similar fashion. Many people who do know how to read, in fact. Regardless of whether we misread what he actually meant, no one is intentionally misrepresenting him, and we’re mostly calling for an end to the absurd games that he tries to play with his views of “The Team,” his distortions of what he thinks he is auditing, climate gate, etc.

If he doesn’t want to contribute to the science, and would rather play his childish name-calling games and appeal to the “it’s a hoax” crowd, then no one is going to listen to him except for the very small non-expert community that already agrees with the conclusions of his crowd.

The arguments between climate scientists on the one hand and the befogged archipelago of deniers, lukewarmers and “auditors” on the other are getting too arcane and convoluted for me.

Pielke Jr., Curry, McIntyre, et al. are long and well known to me as dissemblers and obfuscationists. I cannot bring myself to pick back through the trail of this particular example of their dismal efforts in order to follow the arguments. I know them for what they are; I don’t need to re-confirm my conclusions. It grieves me that useful people such as Gavin must continue to spend their time confronting these drones.

Mosher: ” divorce yourself from the parties involved and just look at the logical form of the argument.”

Does it help to stand on your head and close one eye, too? ‘Cause I’m just sayin, I don’t think my head would fit where it would need to go for McI’s “What if” to make sense.

Chris @53,

Well stated!

I take seriously what others take seriously. His ‘what if’ was based on poor understanding. That’s still a mistake and should be corrected, especially if others are disseminating the information.

You do understand the difference between making several scenarios on different control variables and a single scenario, right? See pjclark, perhaps McIntyre highlighting a scenario that is not based in reality means “Everyone in” Climate Audit “should be terminated and, if the institution is to continue, it should be re-structured from scratch.”

A projection needs to have proper boundary conditions to be valid. So yes, correcting a ‘what if’ statement, even post-mortem, is absolutely necessary to avoid other errors. If you were to guess I am from the planet Mars, or the IPCC guessed that CO2 might be 420ppm by 2011, only if pigs flew, then that would certainly be an issue.

Of course this all ignores that you just don’t get the big picture here, and why being smart about what you “project” or “predict” is important if you would like to avoid being “corrected”.

how did the marine air temperature data stack up?

as I recall that was suggested as a constraint on how big the corrections would be.

thanks for your reply.

[Response: The NMAT data are here, and the discussion of the SST/NMAT difference is in Rayner et al (2003). I’ll see if I can find time to do a comparison (though if someone beats me to it, that would be great). – gavin]

McIntyre has a new post where he tries to rescue the previous ‘projections’ – but he confuses the changes in HadSST (ocean temperatures, which he is plotting) with the changes in HadCRUT3 (the global surface air temperature anomaly), which is what his projection was for (as can be seen in the figures in the main post). Pielke’s projections were also for changes in HadCRUT3, not for the SST data alone.

Corrections to the global mean are obviously smaller than those for the oceans alone (since the oceans make up only 70% of the surface) and that is taken into account above.

Overall, we expect land temperatures to rise substantially faster than ocean temperatures because of the lower heat capacity on land. Even at equilibrium though the land response to increased GHGs is expected to be higher than over the ocean.

It is always worth noting that all of these reconstructions are works in progress. Newly digitised data is being continually added, better and more accessible metadata is coming on line, and different groups will have different ideas for how to deal sensibly with the inevitable ambiguities. Neither HadSST2 nor HadSST3 (nor HadSST4, for that matter) is the final word, and simply because they are used as the best available data set does not imply that people think they are error-free. Indeed, it is precisely for that reason that modellers don’t spend time tuning models to specific datasets of climate change – you never know when some feature might disappear.

Gavin, from McIntyre’s post:

“The original blog discussion at CA was entirely focused on SST (CRUTEM never being mentioned), though one graphic used HadCRU though the discussion was about HadSST.”

[Response: This is obvious – this post too is about HadSST. However, the impacts that everyone was talking about were on HadCRUT (in his figure, in Pielke’s emulation of his figure, in Pielke’s other predictions) and that is what all the % changes referred to, and so that is what I calculated. It’s not that complicated. – gavin]

Gavin, what did you get as the difference in 1950-2006 trend between HadSST2 and HadSST3, the series illustrated in the second figure of your post?

[Response: Odd question. The data are available and anyone can calculate the different trends, I don’t think I have any special method or anything, but for completeness the 1950-2006 trend went from 0.097 deg C/dec to 0.068 deg C/dec (mean of all realisations) a 31% drop (uncertainties on OLS trends +/-0.017 deg C/dec; for 100 different realisations of HadSST3 the range of trends is [0.0458,0.0928] deg C/dec). Your post insinuates that I deliberately did not give these numbers – that is false. I calculated the changes in trends for the metrics that people had talked about previously, and especially the ones they had graphed (and you will note that your graph is of the change to HadCRUT, not HadSST; as was RP’s). You are of course aware that oceans are 70% of the world, not 100%. – gavin]
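The range of trends across realisations quoted in the response above can be reproduced by fitting each ensemble member separately. The sketch below uses a synthetic 100-member ensemble rather than the real HadSST3 files, so the numbers it produces are not the ones quoted; only the method is illustrated. One detail worth knowing: because an OLS fit is linear in the data, the trend of the ensemble-mean series equals the mean of the individual trends.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic stand-in for 100 realisations of annual SST anomalies, 1950-2006.
years = np.arange(1950, 2007)
n_realisations = 100
member_slopes = rng.uniform(0.005, 0.009, n_realisations)  # deg C/yr, invented
ensemble = (member_slopes[:, None] * (years - years[0])
            + rng.normal(0.0, 0.05, (n_realisations, years.size)))

# np.polyfit fits every column of a 2-D y at once, so pass members as columns.
x = years - years.mean()
coeffs = np.polyfit(x, ensemble.T, deg=1)
trends = 10.0 * coeffs[0]  # deg C/decade, one value per realisation

# Trend of the ensemble-mean series, for comparison with the mean of trends.
trend_of_mean = 10.0 * np.polyfit(x, ensemble.mean(axis=0), deg=1)[0]

print(f"range of trends: [{trends.min():.4f}, {trends.max():.4f}] deg C/decade")
print(f"trend of ensemble mean: {trend_of_mean:.4f} deg C/decade")
```

The spread of `trends` measures the structural uncertainty from the bias corrections, which is a different quantity from the OLS fitting uncertainty on any single series.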

Loved watching RPJr fume and attempt to be nonchalant about it… “Please just correct it, OK? It is not a big deal.” HA! Will relish the squirming of the self-styled auditor too.

SteveM’s 2008 graph, which he uses as a basis for the 50% reduction claim, explicitly says “HadCRUT3”, the global temperature record. RogerP’s 2008 post, which praises SteveM and postulates a 30% reduction, also clearly refers to the global record. SteveM’s recent post attempts to say RogerP’s prediction was correct by calculating the trend difference for just the ocean record, not the global record.

Both of them should know better but who are they trying to fool? I find such games to be really silly.

Another oddity is that while RogerP staunchly claims that he has been misrepresented here with “there was no such prediction of 30% reduction from me, can you correct this post?”, SteveM says in his recent post “a value that is “remarkably similar” to the figure of 30% postulated in 2008 by Pielke Jr.” to which RogerP responds to SteveM with “nice post”. I would think RogerP would be outraged, especially given the further HadSST vs HadCRUT confusion.

Moving beyond the cheap blogo sniping and selective outrage from these fellows, I have a serious question. What does the adjustment imply for attribution and model-data comparisons during this period? I understand that the models seemed to have some problems at the decadal level with the early 40’s spike (explained by El Niño anomalies?) and with the subsequent sharp drop that aerosol forcing didn’t quite account for.

Stepping back from the details, personally I find it mind-boggling to think of the amount of extra energy that needs to be trapped by the atmosphere to heat up the ocean surface (over 70% of the earth’s surface) by ~0.1C per decade. It’s almost as mind-boggling as the amount of energy some individuals and organizations spend trying to convince the public that this heating up isn’t anything to worry about and/or that anthropogenic greenhouse gases aren’t the cause!

We don’t have revised HADCRUT yet, but I have done a direct land/sea calc using HADSST3 with GHCN v2. It does look fairly similar to what is projected here. I also did a plot (at the end) of the trend from 2006 back to an arbitrary starting year, again with HADSST3, HADSST2 and GHCNv2.

Thanks for the link Nick. One thing I hadn’t noticed from looking at Gavin’s plots was the slight downgrading of 1998 to about the same level as 2005. If this turns out to be the final result in HadCRUT4(?) it may well upset a few people.

PaulS,

Don’t worry, the conspiracy theorists will jump all over that one just as soon as they figure it out!

For those who are more comfortable with Excel rather than a language, or don’t feel comfortable downloading the data, I have made a file of HadSST2 and HadSST3 (all realizations for a particular month, as well as the mean); this has a function to calculate the linear trend so you can reproduce, for example, gavin’s result in his response to Steve M. I didn’t put the error bars in this sheet, but they can easily be added by the user, for those who want a more rigorous file. You can download it here (it is an xlsx file, so not sure if it is compatible with older Excel versions). Of course, let me know if I screwed something up.

Note if you choose 1960 rather than 1950 as a start date to estimate the trend, the percent decrease is ~18%; if you choose 1970 as the start date, it is ~2.7%.

It does appear from the spreadsheet Chris provided that the 1998 through 2006 SST trend increases from about 0.08 (HADSST2) to 0.12 (HADSST3). Like Paul said, that will upset some people.

The study indicates analysis isn’t available beyond 2006 because of the “problem of missing or non-unique call signs, exacerbated in recent years by the decision of several countries to deliberately anonymise their meteorological reports.”

What are the prospects that this problem will be resolved, and how might adjustments from 2007 through date affect the trends?

> … the decision of several countries to deliberately

> anonymise their meteorological reports.

It would be interesting to know more about that, if anyone has a pointer.

That problem is mentioned twice here:

http://www.metoffice.gov.uk/hadobs/hadsst3/part_2_figinline.pdf

Re. 70 Hank Roberts

The Met Office, as an example, is part of the Ministry of Defence. I read (somewhere) a few years back that Argentina may have been able to track British ships during the Falklands War by checking where British weather reports came from in the Atlantic. I don’t know if that really was the case, but it’s a theory out there. Don’t forget that piracy has been on the increase, too, and naval ships are the primary means of thwarting such actions.

Chris Colose @ 68, those “x” files with the extra x on the end are not compatible, but you may “save as” XP compatible. Some of us will appreciate it. ;)

#72 Pete.

Do you have the Microsoft Office Compatibility Pack for Word, Excel, and PowerPoint File Formats installed for your version of Office/Excel?

http://www.microsoft.com/download/en/details.aspx?displaylang=en&id=3

You “should” be able to read *.*x files and then save them as *.* (i. e. *.xls).

This worked back in the days when I had Excel 2003.

Of course, if you don’t have an older copy of Office to begin with, then this won’t help much, sorry.

Good luck.

[Response: Open Office (or Neo Office on the Mac) will open these files – and they are free to download. – gavin]

Some ships are implementing call sign masking, by using a unique call sign like BATEU01 or BATEU49 instead of their real call sign. Some others are just using SHIP as a call sign (today 18Z there were at least 18 ships called SHIP).

It has to do with the possibility of “a link between VOS data availability on external Websites and piracy and other ship security issues”.

See WMO pub. 508 (page II-113):

http://www.wmo.int/pages/governance/policy/documents/508_E.pdf

> track … ships … by checking where … weather reports came

That’s a reason for short term anonymizing or delays, but I’d guess some countries keep site identifications anonymized to protect the data they make money by selling.

I recall confidentiality agreements came up previously since climatologists needed the details but some countries profit by selling such detailed weather data to businesses for scheduling activities like travel or roadbuilding.

Perhaps those countries have ceased to trust the ability of the climate scientists to guarantee their data isn’t disclosed in violation of such agreements?

I’d think the next IPCC reporters need to resolve the problem, whatever it is, fairly soon.

Gavin, the paper you cited is here, right?

http://www.metoffice.gov.uk/hadobs/hadisst/HadISST_paper.pdf

thanks

Re. 76 Hank

The Ministry of Defence, being the owner of the UK Met Office, makes millions in dividends from Met Office profits, since UKMO became a trading fund (it can be tens of millions of quid per annum). The UKMO’s financial report is available online, where we see a drop in revenue but UKMO still able to pay for itself, IIRC.

But I’d have thought anonymising the data would make it less commercially valuable, academia being a main customer? Naval protection of maritime trade is an ancient raison d’être for navies, which traditionally have also sought the best knowledge of weather for tactical advantage. Add the increase in high-seas piracy (coincidentally starting not long before the data went anonymous), where it is to a navy’s advantage not to let resourceful pirates know whether its ships have been in the area even in the last few days, and I wouldn’t discount the anonymising being primarily for military reasons. I imagine the lives of merchant seamen and cruise ship passengers take priority over academic papers.

Of course, the Falklands War hypothesis I read may be bunk, which I fully acknowledge.

In the opening paragraph you state:

“This paper demonstrated that there was very likely an artifact in the sea surface temperature (SST) collation by the Hadley Centre (HadSST2) around the end of the second world war and for a few years subsequently, related to the different ways ocean temperatures were taken by different fleets”

In fact Thompson et al. (2008) demonstrated that there was likely an artifact in *all* SST data sets that use ICOADS. Not just HadSST2, all of them.

worth a look:

http://www.weatherdata.com/services/news_5myths.php

“While the sale of reformatted information does occur, many commercial weather companies integrate both governmental and nongovernmental sources ….

… operate their own computer models using proprietary algorithms in order to provide value for their clients….

…

… commercial weather companies use research and development efforts to better their competitive position, many research and development efforts are often confidential …. (Pielke 2002, manuscript submitted to Bull. Amer. Meteor. Soc.) …. the commercial weather industry has been pressing Congress for legislative action.”

Sounds like a real mess as far as getting access to information useful to climatology.

Gavin,

From what I can read of the graphs given (not at all easy given what you’ve provided), the adjustment does look like an entire degree – 50% of the value, as McIntyre claimed.

Perhaps you could provide a graph plotting the actual item of interest: the amount of the adjustment.

My best reading of the graph: there is a downward adjustment of about 0.2 degrees in 1939 or 1940, which must be for something else; and then an abrupt upward adjustment of 0.8 degrees.

[Response: The difference between HadSST3 and HadSST2 is in the second graph. The differences this makes in the Land/Ocean HadCRUT3v is estimated in the last graph using an anomaly of 0.7*(HadSST3-HadSST2), therefore the difference in the last graph is just 70% of the difference in the second one. Thus the maximum change is around 0.15 deg C around 1946-1948. – gavin]