Every so often people who are determined to prove a particular point will come up with a new way to demonstrate it. This new methodology can initially seem compelling, but if the conclusion is at odds with other more standard ways of looking at the same question, further investigation can often reveal some hidden dependencies or non-robustness. And so it is with the new graph being cited purporting to show that the models are an “abject” failure.

The figure in question was first revealed in Michaels’ recent testimony to Congress:

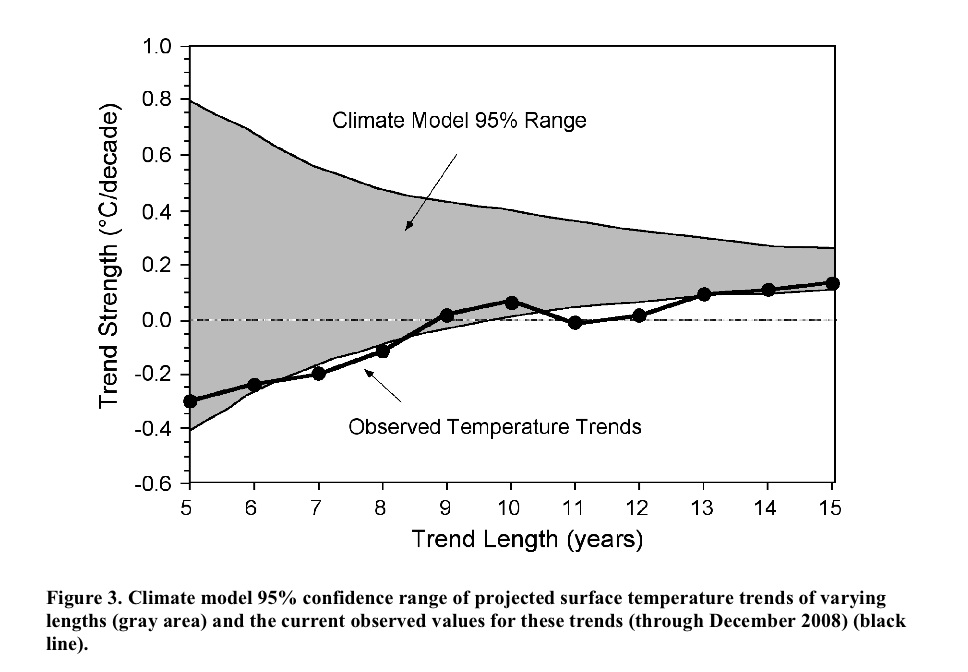

The idea is that you calculate the trends in the observations to 2008 starting in 2003, 2002, 2001, etc., and compare them to the model projections for the same periods. There is nothing wrong with this in principle. However, while it initially looks like each of the points is bolstering the case that the real world is tracking the lower edge of the model curve, these points are not all independent. For short trends, the end points have a significant impact, and since every trend ends on the same point (2008), an outlier there can skew all the points significantly. The obvious questions, then, are: how does this picture change year by year? What if you use a different data set for the temperatures? And what might it look like in a year’s time? Fortunately, this is not rocket science, and so the answers can be swiftly revealed.
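To make the end-point dependence concrete, here is a minimal sketch of the calculation described above. It is not Michaels' code or the code behind the figures in this post: the series is synthetic (a perfectly steady 0.2 deg C/decade rise), the data are annual rather than monthly, and the names `ols_slope`, `trends_to_endpoint`, `STEADY` and `COOL_END` are made up for illustration.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def trends_to_endpoint(years, temps, end_year, min_len=6):
    """Trend in deg C per decade for every start year, all ending at end_year."""
    end = years.index(end_year)
    trends = {}
    # The last admissible start leaves exactly min_len points in the fit.
    for start in range(0, end - min_len + 2):
        xs = years[start:end + 1]
        ys = temps[start:end + 1]
        trends[years[start]] = 10.0 * ols_slope(xs, ys)
    return trends


# Synthetic series (an assumption, not observations): steady rise, 1994-2008.
YEARS = list(range(1994, 2009))
STEADY = [0.02 * (y - 1994) for y in YEARS]
# The same series with only the shared final year made 0.2 deg C cooler.
COOL_END = STEADY[:-1] + [STEADY[-1] - 0.2]

if __name__ == "__main__":
    base = trends_to_endpoint(YEARS, STEADY, 2008)
    cool = trends_to_endpoint(YEARS, COOL_END, 2008)
    for start in sorted(base):
        print(start, round(base[start], 3), round(cool[start], 3))
```

In this toy case a single cool final year lowers every one of the trends at once, and the shortest ones move the most: the 2003 to 2008 trend turns slightly negative, while the 1994 to 2008 trend only drops to about 0.15 deg C/decade. A row of low points can therefore reflect one outlier rather than many independent confirmations.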

First off, this is what you would have got if you’d done this last year:

which might explain why it never came up before. I’ve plotted both the envelope of all the model runs I’m using and 2 standard deviations from the mean. Michaels appears to be using a slightly different methodology that involves grouping the runs from a single model together before calculating the 95% bounds. Depending on the details that might or might not be appropriate – for instance, averaging the runs and calculating the trends from the ensemble means would incorrectly reduce the size of the envelope, but weighting the contribution of each run to the mean and variance by the number of model runs might be ok.
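The point about averaging runs can be illustrated with a small sketch. It uses a synthetic ensemble, not the actual model archive, and the function names and all the numbers (five hypothetical models, four runs each, the noise level) are assumptions chosen for illustration. Because a least-squares trend is linear in the data, the trend of a model's ensemble mean is just the average of that model's run trends, so the envelope built from ensemble means can never be wider than the envelope built from the individual runs.

```python
import random
import statistics


def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def make_runs(n_models=5, runs_per_model=4, n_years=8, seed=0):
    """Synthetic ensemble: each model has its own forced trend plus noisy runs."""
    rng = random.Random(seed)
    years = list(range(n_years))
    ensemble = []
    for _ in range(n_models):
        forced = rng.uniform(0.015, 0.025)  # deg C per year, hypothetical
        runs = [[forced * t + rng.gauss(0.0, 0.1) for t in years]
                for _ in range(runs_per_model)]
        ensemble.append(runs)
    return years, ensemble


def spread_of_trends(years, ensemble):
    """Trends of every individual run, and trends of each model's ensemble mean."""
    run_trends = [ols_slope(years, run) for runs in ensemble for run in runs]
    mean_trends = []
    for runs in ensemble:
        mean_series = [statistics.fmean(column) for column in zip(*runs)]
        mean_trends.append(ols_slope(years, mean_series))
    return run_trends, mean_trends


if __name__ == "__main__":
    years, ensemble = make_runs()
    run_trends, mean_trends = spread_of_trends(years, ensemble)
    print("runs :", min(run_trends), max(run_trends), statistics.pstdev(run_trends))
    print("means:", min(mean_trends), max(mean_trends), statistics.pstdev(mean_trends))
```

With equal numbers of runs per model, the standard deviation across the ensemble-mean trends is also bounded by the standard deviation across all run trends, which is why comparing a single real-world realisation against an envelope of ensemble means would be too strict a test.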

Of course, even using the latest data (up to the end of 2008), the impression one gets depends very much on the dataset you are using:

More interesting perhaps is what it will likely look like next year once 2009 has run its course. I made two different assumptions – that this year will be the same as last year (2008), or that it will be the same as 2007. These two assumptions bracket the result you get if you simply assume that 2009 will equal the mean of the previous 10 years. Which of these assumptions is most reasonable remains to be seen, but the first few months of 2009 are running significantly warmer than 2008. Nonetheless, it’s easy to see how sensitive the impression being given is to the last point and the dataset used.
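A sketch of this kind of what-if test follows. The annual values are made-up numbers, not HadCRUT3v or any other real dataset, and `scenario_trends` and the scenario labels are hypothetical names. The numbers were chosen so that the 10-year mean falls between the last two years, which is what makes the bracketing hold here; with other data it need not.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


# Made-up annual anomalies (deg C) for 1999-2008; NOT a real dataset.
YEARS = list(range(1999, 2009))
TEMPS = [0.30, 0.30, 0.40, 0.45, 0.45, 0.45, 0.50, 0.45, 0.42, 0.30]


def scenario_trends(years, temps, start_year):
    """Trend (deg C/decade) from start_year through next year, under three
    different guesses for next year's value."""
    guesses = {
        "same_as_last_year": temps[-1],
        "same_as_year_before": temps[-2],
        "mean_of_last_10": sum(temps[-10:]) / 10,
    }
    i = years.index(start_year)
    xs = years[i:] + [years[-1] + 1]
    return {name: 10.0 * ols_slope(xs, temps[i:] + [value])
            for name, value in guesses.items()}


if __name__ == "__main__":
    for name, trend in scenario_trends(YEARS, TEMPS, 2001).items():
        print(name, round(trend, 3))
```

Since the fitted slope rises with the value of the final point, the three trends come out in the same order as the three guesses for the added year, which is all that "bracketing" means in this context.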

It is thus unlikely this new graph would have seen the light of day had it come up in 2007; and given that next year will likely be warmer than last year, it is not likely to come up again; and since the impression of ‘failure’ relies on you using the HadCRUT3v data, we probably won’t be seeing too many sensitivity studies either.

To summarise, initially compelling pictures whose character depends on a single year’s worth of data, and then only if you use a very specific dataset, are unlikely to be robust or provide much guidance for future projections. Instead, this methodology tells us a) that 2008 was relatively cool compared to recent years and b) that short term trends don’t tell you very much about longer term ones. Both are things we knew already.
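The second point can be quantified with a back-of-envelope formula. The sketch below assumes evenly spaced annual values with independent noise of standard deviation `sigma` around a linear trend. That is a simplification, since real year-to-year variability is autocorrelated and the true uncertainty is larger, and the function name and the 0.1 deg C noise level are illustrative assumptions. Under those assumptions the standard error of a least-squares trend over n years is sigma * sqrt(12 / (n (n^2 - 1))).

```python
import math


def slope_standard_error(n_years, sigma):
    """Standard error (deg C per year) of an OLS trend fitted to n_years
    evenly spaced annual values with independent noise of std sigma."""
    return sigma * math.sqrt(12.0 / (n_years * (n_years ** 2 - 1)))


if __name__ == "__main__":
    for n in (6, 8, 15, 30):
        # Multiply by 10 to express the uncertainty in deg C per decade.
        print(n, round(10.0 * slope_standard_error(n, 0.1), 3))
```

With 0.1 deg C of interannual noise, an 8-year trend carries an uncertainty of roughly 0.15 deg C/decade, comparable to the expected trend itself, while a 30-year trend is pinned down to about 0.02 deg C/decade.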

Next.

Consensus.

If EVERYONE who drops a ball sees it fall DOWN, there is a consensus that things fall DOWN. Not UP.

Because of this, is the probably apocryphal story of the apple hitting Newton on the head proof that gravity isn’t science? I mean, it’s a CONSENSUS, isn’t it. We all agree on that experiment, don’t we. ‘cept maybe David Copperfield who will say he can make it float and disappear.

So if consensus is NOT science and therefore your implication that if there is consensus it cannot BE science, gravity isn’t scientific.

Really weird people out there.

#147 Dan

A mere drop in the ocean compared to what the state spends.

This is a fundamental error. Different selections of facts can point to different conclusions.

Consensus can be bought. As can peer-reviewers.

[Response: This is hilarious. You obviously don’t know any scientists either. But my pointing out that this is complete nonsense without a shred of evidence to back it up can simply be dismissed by the claim that I too am doing my paymaster’s bidding. You have no idea how far away this is from the truth. – gavin]

[Response: You have absolutely no idea how the federal government works.]

YES HE DOES!!!

He’s watched ALL the X-Files and read all the way up to level 10 on Xenu biography. And David Ike told him about the TRUTH.

They’re all non-human lizard overlords who hide EVERYTHING and want to keep the real humans from realising that the lizards are in charge.

And he knows that there are no lobbying efforts from any corporation to coerce corruption in government because The Corporation Is Your Friend (Please Report For Termination. Have A Nice Day) and they wouldn’t do anything *bad*. Not like governments do. ‘cos governments are “peopled” by our inhuman lizard overlords whereas corporations are built from the VERY BEST humans.

So there!

#148 dhogaza

That wrongly assumes no change in thinking in the government.

Hank Roberts in 117 wrote:

He thinks Al Gore wants to blow up the King of England. A few too many role playing games if you ask me.

BFJ Cricklewood, OK. Let me get this straight. We have temperature measurements that show each subsequent decade of the last 3 is warmer than the last, but you don’t trust them because the data are processed by the government. We have satellite measurements showing we’ve lost 2 trillion tons of ice in the past 5 years, but you don’t trust them because they’re government satellites. We’ve got glaciers retreating the world over, but you don’t trust those measurements because government scientists made them. We have a hundred and fifty years of climate science all of which supports anthropogenic causation of the current warming, but you don’t trust all that work because some of the scientists worked for the government.

You contend that the whole motivation is for governments to extend their power, even though the measures needed to address climate change will alter the very fabric of the economies that have supported governments up to now.

Gee, BFJ, given that this blog is about climate science, and you have no interest in science of any kind, since it’s all a government plot, as I have said above, it would appear we have nothing to discuss. Have a nice life, and enjoy your irrelevance.

#149 Mark

No, compare the oil/coal/gas (why tobacco?) spend on climatology, to state spending on climatology. Peanuts.

(Your comments on fuel tax I again cannot make head or tail of)

BFJ Cricklewood (146): In many, if not all, branches of science there are some researchers who don’t manage to put it together properly. So there are always some few producing way-out, easily seen to be wrong, kooky papers.

Usually competent researchers just ignore the work as such individuals quickly gain an unfavorable reputation. That is certainly my view of some noisy few, from my amateur back-bench in climatology.

A plea from a lurker:

BFJ Cricklewood is proposing an argument whose natural conclusion is that *no* statement of fact can be trusted, as the person or institution making the statement has motivations that might cause them to lie. This view is completely consistent, totally uninteresting, and self-evidently being applied selectively to reject those views for which s/he has other reasons to reject. I see no value in Gavin or other non-troll commenters on this site paying him any further attention.

HOWEVER,

the argument BFJ Cricklewood is proposing is conceivably somewhat persuasive to someone who knows nothing about the (for example) NSF funding process. And, in fact, even though I’m an academic-in-training, I don’t know very much about this process, either. It would be far more instructive if those people who *do* know about it could describe it in a little detail, rather than spending their time pointing out the (self-evident) fact that BFJ Cricklewood is engaging in nutcase conspiracy theorizing.

In particular, I gather that NSF receives a pot of money from Congress each year. Then there is some process by which this money gets distributed to individual researchers, institutions, or students. How exactly are these decisions made? Does Congress direct the funds in any way? I know that people outside of the NSF are invited to take part in the grant-evaluation process: who takes part, and what is their role? What is the basic decision-making process? Has anyone on this thread ever taken part in an NSF grant review and so could describe it briefly?

#159 JBL

No, you repeat the earlier mistake of inserting conspiracy into my argument. It’s more about systemic bias, the ultimate source of funding unavoidably having some influence, e.g. by selecting which institutions get money. This influence is up at the political level, which is why it goes way over the head of your typical working scientist who has technical issues in the forefront of his consciousness. And it applies mainly to disciplines like climatology, where the conclusions can have large political implications.

[Response: Ahh…. so now we are unwitting dupes, unconsciously doing the bidding of our reptilian overlords! As the earlier commenter said, this is a completely invulnerable shield of nonsense, and so, we must reluctantly bring down the curtain on this amusing interlude. No more responses on this please. – gavin]

Ray Ladbury wrote in 156

Sounds about right — right down to your quantum mechanics, the absorption and emission of photons (Einstein was a patent clerk) by matter, the spectra, any inconvenient fact. All the studies, papers, scientists and sciences one vast conspiracy to hide the truth of his world view. Reminds me of some of the more extreme views I found among young earth creationists.

Of course the larger the conspiracy, the more difficult it would be to keep it quiet:

But even today the UK is home to a few who believe that the sun revolves around the earth.

Date Index for geocentrism, 02-2005

http://www.freelists.org/archive/geocentrism/02-2005

JBL (159) — NSF used to mainly use individual reviewers, much as peer review of paper publication. It seems that NSF is going for more panels these days; I’ve served on two and will never do it again, even if asked, which I won’t, being retired.

The panelists are supposed to read the funding requests before meeting to discuss the requests and then write a bit about the requests all while NSF program managers are observing.

In either form of review, the funding requests fall into three easily divisible groups: definitely fund, don’t no matter what, and the hard cases. The hard cases are those whose funding depends upon the amount of funds that NSF has to grant, so some words about importance are necessary to provide some guidance to the NSF program directors.

In any case the NSF program directors have the final say about who is funded and at what level, subject only to review and potential changes by the NSF oversight committee. In this aspect it is slightly different, but not much, from the editorial process used by most quality journals.

[reCAPTCHA agrees: “confer- hearing”.]

RE JBL 30 March 2009 at 6:10 PM:

I cannot speak for NSF, but I do work at NIH on extramural programs, so I have some knowledge about grant processes, and I have published some medical research papers so I have some knowledge of the peer-review publishing process.

Congress makes an appropriation to the funding agencies to use for disbursement through grants. A researcher (Principal Investigator or PI for short) submits a grant application to the agency. The application describes the aims of the research, research plan, personnel and a proposed budget that covers the cost of the research project. The budget would normally include salaries, fringe benefits, supplies, equipment, and overhead for the institution (university or research center).

The grant gets grouped together with other grants and the set of grants is reviewed by other researchers in the field (peers – at NIH they are mainly outside the government), who score the grant based on the importance (and novelty) of the specific aims, feasibility based on preliminary data and experimental plan and past performance of the PI. This is the basis of peer-review of funding.

There is another peer review process for the publication of research findings. A set of authors (driven by the lead or senior author – first and last names on the author list) writes a manuscript and submits it to a scientific journal. There are various tiers of journals based on impact – Science and Nature rank near the top, followed by various specialty journals. Gavin, et al. can fill in the order for climatology, but I would assume that Energy and Environment doesn’t rank very high.

The journal editor assigns the manuscript review to two or three reviewers (other scientists who should have the technical expertise to judge the work) and the reviewers try to pick apart the arguments that the authors are trying to put forward and give a judgment as to whether the article is publishable or not (or could be improved to make it publishable). If the manuscript is rejected, the authors will usually redo the manuscript and submit to another journal – the authors and the publishers look for a good fit, and authors often aim high.

Congress will sometimes exert influence by directing some of the appropriation to a category ($X billion for AIDS, for example) or provide earmarks that are not part of the NIH or NSF budget and are not peer-reviewed.

Peer review is an important part of the process, but the charge of conspiracy, whether applied to climate or viral origin of AIDS, falls flat. There are examples of truly novel research that goes against conventional wisdom; Marshall and Warren managed to publish their “heretical” H. pylori research and the Alvarezes got their K/T boundary paper published in Science. I know medical researchers who established strong reputations by thinking outside the box.

RE David B. Benson 30 March 2009 at 7:10 PM: But wouldn’t the discretion exercised by NSF Program Staff be somewhat limited? My experience is that the more highly scored applications generally get funded and applications with lower scores do not. I do know that there is a gray area that can be more up in the air (and there are adjustments for “program balance”).

I also wonder how much many programs get competed through contracts – contracts also get funded through a peer-review system, unless the agency can justify “other than full and open competition” and not have the process get challenged by a competitor.

David Benson has it about right. Most individual investigator proposals are evaluated by letter review. Special programs (center grants, etc.) are evaluated in panels. Panels, which tend to look at proposals over a large range of fields, will often receive written reviews from experts. NSF panels tend to be fairly small, 10-15 people. Each person is asked to be the primary reviewer on ~5 and the secondary reviewer on ~5 proposals in the panel. The job of the secondary is to summarize the letter reviews. A third person keeps track of the discussion and writes the review. Everyone has to sign off on every review.

In NIH almost everything runs over very large panels called study sections. Very intense stuff.

NASA runs mostly by panels, at least in the areas Eli is familiar with

If Eli was a grad student getting ready to graduate, rather than an old and tired bunny, he would call up a program officer in his area and discuss how the reviews are done in that directorate, what reviewers tend to stress. Anyone who actually has a new faculty position should volunteer to serve on a panel to get an idea of what wins.

Have to go now, I owe a review

JBL wrote in 159:

I would change that to “either has motivations or may have motivations that might cause them to lie.” This way he can assume that they are merely being hapless pawns fooled by the grand conspiracy until they start detailing why they are certain.

JBL wrote in 159:

Not too far removed from the theory that one’s brain is in a vat being zapped by aliens. Or that some omnipotent being created the world last Wednesday. But I’ve spent eighty pages before analyzing Descartes Six Meditations almost line by line. I probably wouldn’t want to go through that again — and yet it would be a great deal more interesting than playing Cricklewood’s game.

What is it with all these tin-foil hats anyway? Are we being flash-mobbed by a convention of schizophrenics?

JBL wrote in 159:

Can’t help you there, but I would be interested.

“Never argue with an idiot. They drag you down to their level then beat you with experience.”–Jawaad Abdullah

If a man alleges that all the evidence is tainted by politics, that all the scientists are tainted by politics and refuses to even discuss the theory, the chances of having a discussion based on science are pretty slim. Mr. Cricklewood would reduce climate change to politics because he knows he cannot win on the basis of the evidence.

Don’t play that game. Don’t feed the troll.

It is truly a sad reflection on the state of science education when a software engineer (i.e., Cricklewood) actually believes he knows something that all the major climate science professional societies across the world do not. It reminds me of the “chemtrail” people. Now that’s one to Google for a laugh.

Thanks to everyone who responded!

Timothy Chase wrote in 166: “but I would be interested.”

Yes, me too — it was certainly more interesting to hear a description of why BFJ Cricklewood was wrong directed at those of us who mostly just listen in than it would have been to see a few more posts noting that BFJ Cricklewood is completely nuts.

I think I’m straying into Hank Roberts’ territory in noting this, but writing *to trolls* is almost always worthless: they don’t learn, and it sidetracks threads into uninteresting tangents. But writing *about why trolls are wrong* (and directing the comments elsewhere) can be quite interesting, and also feeds them less.

Deech56 (164) — NSF Program Directors usually, almost always, take the advice of the reviewers. But in the gray area where the proposals are not of the very highest quality and the program dollars just about all committed, some judgement calls must be made.

Re: JBL (159), David B. Benson (162), Deech56 (163, 164), Eli Rabett (165)

So basically one of the key problems with Cricklewood’s “theory” is that the decision-making that determines which studies get funded and which do not is itself highly decentralized.

However, I would argue that another (albeit related) key problem is the decentralized nature of the process of scientific discovery. Those who determine what funding gets done won’t know beforehand what will be discovered — and they wouldn’t be able to keep track of all of the interconnections which will be discovered by the vast number of independent and highly intelligent minds that are involved in this process. To do so would greatly exceed the intelligence of any central authority, whether it be an individual or committee.

Something about all of this sounds oddly familiar.

However, I would argue it is also closely related to why science is so powerful:

In science there are a great many largely independent lines of evidence and investigation, each of which are supported by a great many more.

The more speculative a proposal is, the more the reviewers want to see preliminary evidence that there is some there somewhere or other.

#26-29 Gavin, as would be expected, anti-science media picked up on Dyson word for word, as we discussed. Tragically predictable, quite human I am afraid, only Dyson can completely untangle the mess. I doubt he will, given that retractions are rare amongst high reputation scientists.

http://mediamatters.org/countyfair/200903300047?show=1

“No, compare the oil/coal/gas (why tobacco?) ”

For those who aren’t whacko nutjobs but are likewise unsure why tobacco was included, Philip Morris (big Tobacco) is a big supporter of all the better funded skeptic circuits. The reason for this is that if it can be “proven” that climate science is wrong about GW, then maybe the biologists are wrong about the dangers of smoking.

And if Body Thetan Cricklewood wants only what’s spent on climatology for these people, then it should be the same for government sponsored work. Exclude meteorology even, not just biology, engineering, astronomy, particle physics,… But should include all lobbying efforts by the companies, since they wouldn’t waste money on lobbying if they didn’t think it would work and wouldn’t miss out countering works that would see their revenue sink like a stone.

You still have more money on the anti side.

PS I now have Batfink on my mind ‘cos of that guy: “your truths cannot harm me! My brainpan is like a shield of steel!”

PS any way to skip the “please reword ‘cos it’s spam” when there’s naff all spammy about the message? Or at least show up the text with the bad words in there.

Apparently either “muc ho” or “dine ro” were spam.

How?

The day that spammers start selling e is a day wordpress sites will die. Or maybe I should say “Th day that spammrs start slling is a day wordprss sits will di”. Heck even wordprss will have to change its name…

I can understand WHY there’s a spam filter. It seems as if it’s not only throwing the baby out, but the bath, sink, plumbing and the family dog out with the bathwater.

Re #171 Timothy Chase

“However, I would argue that another (albeit related) key problem is the decentralized nature of the process of scientific discovery. Those who determine what funding gets done won’t know beforehand what will be discovered — and they wouldn’t be able to keep track of all of the interconnections which will be discovered by the vast number of independent and highly intelligent minds that are involved in this process.”

One is reminded of the recent UK GM Field-scale trials. For example see the range of commentaries here:

http://www.agbioworld.org/biotech-info/articles/biotech-art/farmscaleevaluations2.html

Note e.g. the contrast between Nigel Williams & Conrad Lichtenstein.

RE Timothy Chase 30 March 2009 at 21:33

“So basically one of the key problems with Cricklewood’s ‘theory’ is that the decision-making that determines which studies get funded and which do not is itself highly decentralized.”

Good point – for the most part, it’s out of the hands of the government (or more correctly, the government relies on the reviewers for advice and almost always follows their advice). Program people are also glad that grant/contract review is separate from the influence of Congress and lobbyists. We really want the science to be the highest quality – a successful grant or contract portfolio (advancement of the science, publications) is good for everyone.

Timothy Chase (171): Of course, the usual response to your reasonable conclusion (as expressed to me by one of the leading practitioners of the Chewbacca defense) is that scientists are engaged in “groupthink.” This impression is fed by the utterings of people like Spencer, W. Gray and Lindzen.

“that scientists are engaged in “groupthink.” This impression is fed by the utterings of people like Spencer, W. Gray and Lindzen.”

Which is another group think.

Odd, eh?

Re: Dyson (please correct if I have made an error)

The most substantive point in the Wikipedia article is

“The effect of carbon dioxide is more important where the air is dry, and air is usually dry only where it is cold. The warming mainly occurs where air is cold and dry, mainly in the arctic rather than in the tropics, mainly in winter rather than in summer, and mainly at night rather than in daytime. The warming is real, but it is mostly making cold places warmer rather than making hot places hotter. To represent this local warming by a global average is misleading,”

While the last sentence may have some merit (for different reasons from those above) , the rest seems dubious.

1. Positive feedback caused by rise in water vapour (caused by warming) accounts for perhaps half of the estimated warming and this will be located most where the air is humid in contradiction to Dyson’s “cold and dry”.

2. The enhanced CO2 will also have a direct effect where the air is humid because its absorption spectrum does not completely overlap the water vapour.

2a). Some of the extra CO2 may end up lying above (i.e., at a greater height than) a humid region. Why can’t this act to make hot places hotter?

[Point 2 is also in Michael Tobis’s web page who makes other points]

—————————-

By the way I think that Dyson is a great enough physicist without the hype being given to his contributions in some places. But the conjecture that he is right about everything needs to be tested. In the past he appears to have assumed Moore’s law for everything for a century or more. I’m surprised that his economic models only contain growing exponential functions. How about Malthus?

Don’t forget that the tobacco industry has also invested in the “DDT is harmless and environmentalists banned it because they want to kill poor black people in Africa” scam.

Re #181

They don’t have to prove that climate science is wrong. Just throw some doubt on the science. Then that doubt rolls over onto “cigarettes cause cancer” and people take a chance.

But the spreading of doubt has worked! See http://tigger.uic.edu/~pdoran/012009_Doran_final.pdf It is no use calling for more education of the public. What we need is more public relations training in “some scary scenarios” for scientists.

Cheers, Alastair.

RE Mark 31 March 2009 at 8:1 AM

But the denial crowd really can’t agree on anything (CO2 up? No warming? Solar? Surface temps?) except that the published literature is wrong. That fact doesn’t seem to bother them. Excuse me – I think my head is going to explode.

Weaving of Threads, part I of II

Deech56 wrote in 178:

And your acquaintance would be right after a fashion. Dialogue is a form of group-think in which the group is capable of far more than any individual in isolation.

Please see:

I have seen this principle at work — particularly at St. Johns College:

That is the power of human thought and civilization:

… and it is key to understanding science:

Weaving of Threads, Part II of II

Science and civilized thought aren’t merely some sort of echo chamber or mass delusion — and if someone were to argue otherwise they would be guilty of self-referential incoherence insofar as the very fabric of their thought is dependent upon that “mass delusion.” How could they possibly know what they claim to know? This is the problem with radical skepticism.

So as not to post something especially long, I will refer you to something I wrote some time ago. I posted the piece in DebunkCreation, although it was actually part of a much longer paper.

The part that I am referring you to begins here, a few paragraphs down:

But in short, if a radical skeptic were to claim that all of this is simply a mass delusion, then in logic he couldn’t claim to know this or to even know that the proposition were meaningful.

However, the above is concerned with the global problem of radical skepticism, not some more localized form of denialism. So how do we respond to that? Is it possible for the scientific consensus to be wrong?

First we must admit that there exist widespread interdependencies between the sciences.

Please see the following comment, and in particular the section that begins:

Likewise, we must admit that errors are possible, but then we can point to the fact that science is self-correcting:

… and that even in the case of major paradigms for which there existed a great deal of evidence but which were later replaced, much of what was at one time thought to be true has in fact been preserved in the form of a correspondence principle between the older theory and its newer replacement — that the greatest difference between the two lies simply in the languages in which the theories are expressed (ibid.).

The particular conclusions of empirical science draw their strength, for the most part, from the multiple, largely independent lines of empirical investigation. The more these accumulate, the stronger the justification for those conclusions becomes, such that even when some of the stronger conclusions are eventually replaced, we may know with some confidence that much of their content will be preserved, and that the difference will largely consist of the form (language) in which that content is expressed.

A great deal of empirical evidence has accumulated for the major conclusions of climatology, evidence from many largely independent lines of investigation. In logic, if one is to avoid radical skepticism in favor of skepticism of a more delineated form with respect to empirical conclusions justified by reference to evidence, one cannot offer a broad, philosophic argument that by its very nature would undermine all human thought; one must provide arguments of a more delineated form. In logic, one cannot arbitrarily proclaim the major conclusions of climatology unwarranted without addressing the evidence that supports them, and without presenting an alternative which is equally coherent and capable of explaining that evidence with the same degree of specificity.

This is something which denialists will never attempt because even they see the utter futility of such an endeavor.

Geoff Wexler, That Dyson quote is a wonderful illustration of how a very smart guy can be very wrong when he ventures outside his realm of expertise. How wet does he think the atmosphere is above cloudtops? As I’ve said before, I don’t think Dyson likes to get bogged down in details, and therein lies the devil.

I had written towards the end:

Case in point:

*

Captcha fortune cookie:

to listen

Seeing how the envelope in any of the graphs spans a 1.6 deg C range of possible trends, while the observed temp has only varied 0.4 over the graph time span, not only is the model envelope an abject failure, the whole thing fails by design, especially with 75% play from the envelope. What is being proven or disproven here, and why so many “told you so’s” from the commenters? It’s getting old.

[Response: You miss the point entirely. Short term trends are too variable due to the internal variability to constrain long term sensitivity. – gavin]

I do now see that you are correct in your response, Gavin. Maybe this argument should not be made from either side then, at this time. Thanks

“But the denial crowd really can’t agree on anything”

Oh, they all agree that AGW isn’t a problem.

There’s a large group (think about where the term “groupthink” has its etymological roots in) that believe that anything the government does is WRONG.

There’s plenty of agreement.

They don’t agree on WHY or HOW.

Mostly because they don’t CARE about having a theory, they just want to tear down one. No need for a consistent theory if all you want is another one torn down, is there. That’s likely to result in you being proven wrong. So don’t give a counter theory, just make out how bad the AGW theory is. DO NOT REPLACE IT. It’s not wanted and it hurts the denialist aim: remove AGW as a theory.

Deech56 wrote in 183:

We took different paths, but we appear to have arrived at the same place.

Deech56 wrote in 183:

Quite possibly.

“abject failure”! sounds familiar…

Read what Hansen wrote about the NYT before you fall for the spin in their article about Dyson and Hansen.

http://solveclimate.com/blog/20090329/ny-times-invents-climate-science-war

Go to Hansen’s page for the full letter he wrote.

He’s quite open to Dyson’s point of view, when understood. It’s a mature response.

Recommended.

Of course the graph is dependent on the end point. Of course the various points are highly correlated. Why would anyone expect differently?

DID MICHAELS CLAIM OTHERWISE?

No.

He is showing that, independent of starting point, the models aren’t doing particularly well. He is making this argument SPECIFICALLY because previous attacks have claimed that the starting point was cherry picked. As far as I know, he hasn’t been attacked for choosing the most recent data as an end point, because until now that was considered the right thing to do.

Using the end of 2008 is ONLY cherry picking if we know that temperatures are going to go up significantly in 2009 and beyond. Are we sure of this? Is Real Climate ready to make a prediction for 2009 and 2010 temperatures?

If you are going to criticize his graph because he doesn’t use 2009 and 2010 data, then you should tell us what you expect temperatures in those years to be, so we can revisit the issue should your expectations be wrong. [I’ll be using HadCRUT to see how accurate you were]

OTOH, if we don’t know that 2009 and 2010 temperatures are going to be up significantly, then there really isn’t any basis for criticizing the graph.

Everybody agrees that a very warm 2009 will render this graph useless, while a very cold 2009 will mean that the model mismatch has been greatly understated. What is news, in your post, is that “next year will likely be warmer than last year”. That’s good to know. Would you care to quantify this so we can understand just how misleading an endpoint 2008 really is?

[Response: Actually Michaels did show his graph with 2009 data filled in equal to 2008. That wasn’t my invention. But he didn’t show what you get using GISTEMP and he didn’t show what would happen if (as is likely) 2009 is warmer than 2008. And yes, that is a prediction. The point is that Michaels claimed that these results imply that the models are ‘abject failures’. Such a strong conclusion should rely on more than a single year of data, no? – gavin]
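To put a rough number on how much a single end year matters, here is a small sketch. Because a least-squares trend is linear in the data, the change in slope from moving only the final value does not depend on the other years, so no temperature data is needed. The 0.1 deg C shift in the final year is a hypothetical value chosen for illustration, and the function names are made up for this example.

```python
def ols_slope(y):
    """Least-squares slope of y against the time index 0, 1, ..., len(y)-1."""
    n = len(y)
    x_mean = (n - 1) / 2
    y_mean = sum(y) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(y))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den

def slope_shift_from_endpoint(n_years, delta):
    """Change in the fitted slope when only the final value moves by delta.

    OLS is linear in y, so the earlier values cancel out of the difference;
    a baseline of zeros is enough to measure the shift.
    """
    base = [0.0] * n_years
    bumped = base[:-1] + [delta]
    return ols_slope(bumped) - ols_slope(base)

DELTA = 0.1  # hypothetical change in the final year's anomaly, deg C

# Trends that all end in 2008 and start in 2003, 2002, ..., 1994.
for start in range(2003, 1993, -1):
    n = 2008 - start + 1
    shift = slope_shift_from_endpoint(n, DELTA)
    print(f"{start}-2008 ({n:2d} yrs): trend shifts by {shift * 10:+.3f} deg C/decade")
```

Every trend moves in the same direction, and the shortest ones move most: for n annual values the shift is 6·delta / (n·(n+1)) per year. This is the sense in which the points on such a graph are not independent.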

Jason (194):

“He is showing that, independent of starting point, the models aren’t doing particularly well”

No, he THINKS he is showing that. What he’s actually showing is that short term plateaus, or even declines, can occur in a long-term upward trend, which is absolutely not news. Also, what does he have to say when the observed trends are higher than predicted by the models? Let me guess: “the temporal variance is too high–the models never correctly predict the time course of T evolution, and therefore are not to be trusted.”

“Using the end of 2008 is ONLY cherry picking if we know that temperatures are going to go up significantly in 2009 and beyond.”

Wrong. It’s also cherry picking if you wait for a relatively low year and then do your analysis. As mentioned in the article, why didn’t he do it last year?

“Everybody agrees that…a very cold 2009 will mean that the model mismatch has been greatly understated.”

Yeah everybody…except the ones who know something about it. Model mismatch for what exactly? For how long? And since you’re fond of odds-making, what are your odds for a “very cold 2009” given that like 9 of the warmest years in the last century have occurred in the last 11 years?

Michaels is making this presentation to a political audience. While I would not agree that the models are an “abject failure”, he is presenting to people who have been told that “the science is settled”. Properly nuanced testimony on Capitol Hill falls on deaf ears.

A quick review of your boss’s public statements shows a similar lack of nuance. Climate sensitivity has been “nailed” at three degrees centigrade. Hardly any acknowledgment is made of how very limited our understanding of the climate system is.

And I have a hard time faulting him for it. Hansen and Michaels both understand the environment in which their statements are being interpreted. They are using stronger language and fewer caveats than I would like. But they aren’t talking to me. They are talking to Congress and to the public at large, and they are adjusting their statements accordingly.

I honestly find “the science is settled” to be a far more unreasonable statement than “the models are an abject failure”. Science (which the models are part of) is evolving based on real world observations. Our understanding of climate WILL improve. The current models WILL be replaced. Climate sensitivity in the new models WILL be higher or lower than in the old ones. Congressional testimony about global warming WILL remain largely devoid of nuance. [Those are my four predictions.]

[Response: The only scientist I can find that has ever said the ‘science is settled’ is…… Patrick Michaels (last paragraph). You will not find such a statement made in any post on RC. – gavin]

I’ll hazard a prediction for the global surface temperature for 2009 CE: one of the cooler years this century (2000 CE onwards) and in the top 12 overall. I base this solely on the prolonged solar minimum just now. If a goodly el Nino happens to come along, I probably lose.

With Lucia showing here:

http://rankexploits.com/musings/2009/multi-model-mean-trend-for-models-forced-with-volcanic-eruptions-mega-reject-at-95/

And a study by Evan et al. (2009), “The Role of Aerosols in the Evolution of Tropical North Atlantic Ocean Temperature Anomalies”:

Does this not show that the models that incorporate volcanic forcing cannot model aerosol forcing, since there are no measurements to use to parameterize and, per Hansen, we do not know enough to use first principles?

[Response: You are confusing many separate issues. a) volcanic stratospheric aerosol loads are reasonably well known back to Agung (1963). Earlier eruptions (such as Krakatoa etc.) are estimated based on ice core sulphate loads in both hemispheres. They are probably ok (though it gets worse going into the pre-industrial). The size distribution and particle type for these eruptions are reasonably well known and the radiation perturbations match well what was observed by ERBE etc. This is not what Hansen is talking about. b) there are many natural aerosols in the atmosphere. In the tropical Atlantic there are significant amounts of dust that come in from the Sahara. Depending on the rainy season in any one year, there might be more or less dust. Dust has a direct radiative effect and hypothesised impacts on ice nucleation; the variance in the dust, then, might affect sea surface temperatures. This is mostly what Evan et al are talking about. c) anthropogenic aerosols – mainly sulfate and nitrate (from emissions of SO2 and NOx/NH3) have a strong direct effect and undoubted liquid cloud nucleation impacts (the indirect effects). These are not well known and are what Hansen is talking about. – gavin]

Jim Bouldin (195):

“Wrong. It’s also cherry picking if you wait for a relatively low year and then do your analysis. As mentioned in the article, why didn’t he do it last year?”

Off the top of my head I can think of two different blogs that performed substantially similar analyses a year ago.

There are two reasons (besides declining global temperatures) why this issue is receiving increased attention now:

1. There is a readily available public archive of model runs.

2. Many of those models have remained largely static since AR3.

This means that for the first time a large number of models can be readily tested against temperature data recorded AFTER those models were finalized.

Each year that passes will give us another year of real data to compare to the models. More years mean more statistical certainty when performing these analyses.
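How quickly the certainty improves can be sketched with the textbook standard error of a least-squares trend. This assumes uncorrelated annual noise with a standard deviation of 0.1 deg C, which is an illustrative value rather than an estimate from HadCRUT or any other record. Autocorrelated noise, which is closer to the real climate, would give larger uncertainties at every length.

```python
import math

def slope_standard_error(n_years, noise_sd):
    """Standard error of an OLS trend fitted to n_years annual values,
    assuming independent noise with standard deviation noise_sd."""
    # Sum of squared deviations of the time index 0..n-1 from its mean.
    sxx = n_years * (n_years ** 2 - 1) / 12
    return noise_sd / math.sqrt(sxx)

NOISE_SD = 0.1  # assumed year-to-year scatter, deg C (illustrative only)

for n in (5, 10, 15, 20, 30):
    se = slope_standard_error(n, NOISE_SD)
    print(f"{n:2d} years: +/- {2 * se * 10:.2f} deg C/decade (2 standard errors)")
```

The uncertainty falls roughly as the record length to the power 3/2, so doubling the number of years cuts it by a factor of nearly three. Adding a single year to an already short record changes it much less.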

“Yeah everybody…except the ones who know something about it. Model mismatch for what exactly? For how long? And since you’re fond of odds-making, what are your odds for a “very cold 2009” given that like 9 of the warmest years in the last century have occurred in the last 11 years?”

I AM very fond of odds making. I would bet that the IPCC prediction for the first three decades of this century as I understand it (0.2 degrees per decade) overstates the amount of global warming we will actually experience. I would also bet that Hansen’s estimate of climate sensitivity at 3 degrees Centigrade is too high.

I would be flexible in terms of creating a structure for such a bet. Both parties should believe that winning proves something about the other’s a priori assumptions. A one year bet probably wouldn’t do since you could blame a cold 2009 on random noise, and I would feel compelled to agree. (And anyway, 2009 needs to be a fair bit warmer than 2008 to make Michaels’ graph look bad in hindsight.)

Is this something you are interested in?

Gavin wrote:

“But he didn’t show what you get using GISTEMP”

I think that providing GISTEMP is a very good thing that GISS does. But I, and many other skeptical folks, view the GISTEMP product with some suspicion. In particular, the tendency of retroactive temperature adjustments to increase the trend, and your statement about 0.25 FTEs being used to produce it.

These issues are not so troubling that I would refuse to use it if it were the only option. But HadCRUT is available. It is just as well accepted by the climate science community. And there is no obvious trend to their retroactive adjustments.

So I don’t think it is unreasonable to use HadCRUT for analyzing global temperatures and not bother comparing the results to GISTEMP. If I convince anybody to take a bet, I would want to use HadCRUT to determine the results (even if this meant that I lost).

[Response: I was not suggesting that GISTEMP was better (though that is arguable), but I was alluding to the fact that where there are structural uncertainties in an observational quantity (like GMST or MSU-LT) then ignoring them in assessing significance is wrong. It is much better to use both UAH and RSS, or HadCRUT and GISTEMP (and NCDC) than it is to simply pick the one that gives you the trend or character you prefer. As for GISTEMP, it is what it is – an analysis of the raw data. As part of the calculation it needs to estimate the difference between rural and urban trends – it does this for the whole time-series and so will change as more data comes in. There is nothing ‘suspicious’ about this, and anyone who claims otherwise has no clue what they are talking about. Read the papers to see what is done and why. – gavin]