I just came across an interesting way to eliminate the impression of global warming: a trick used to argue that global warming had stopped. The simple recipe is as follows:

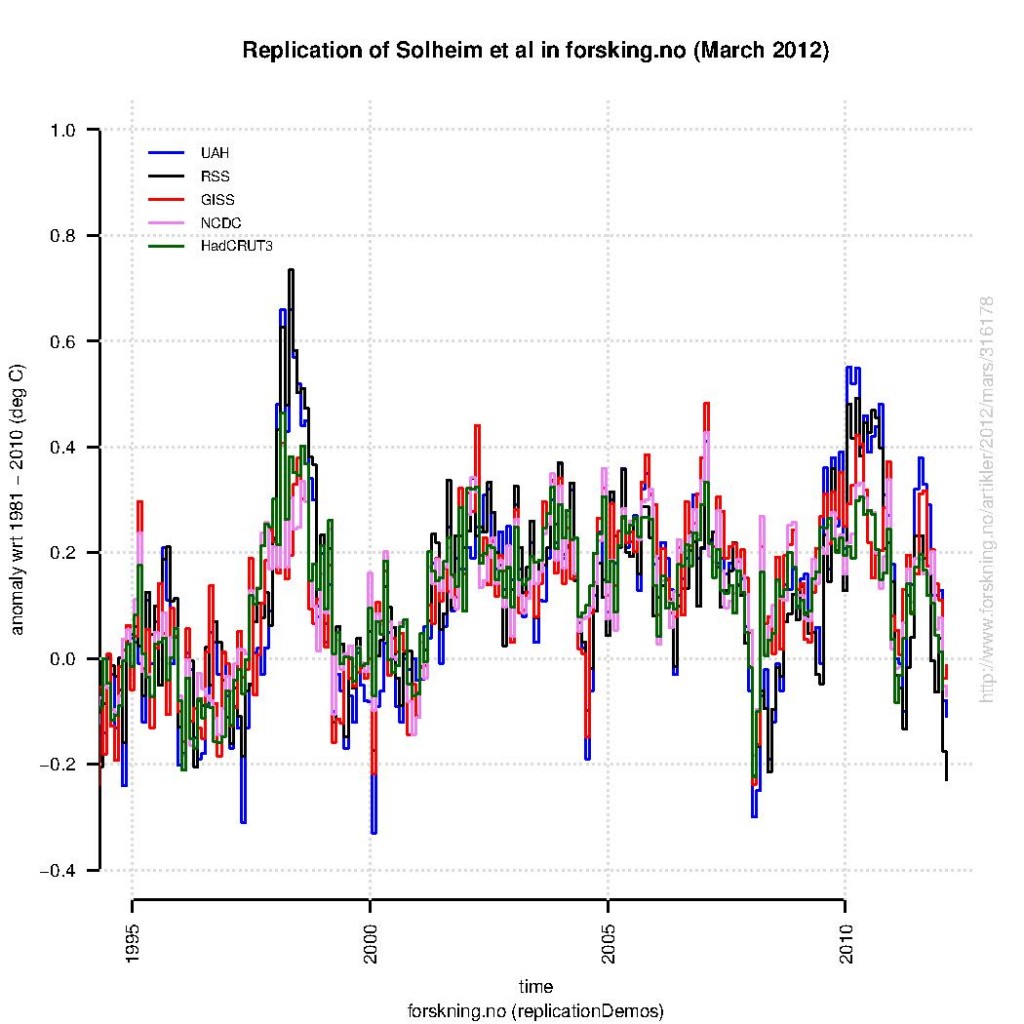

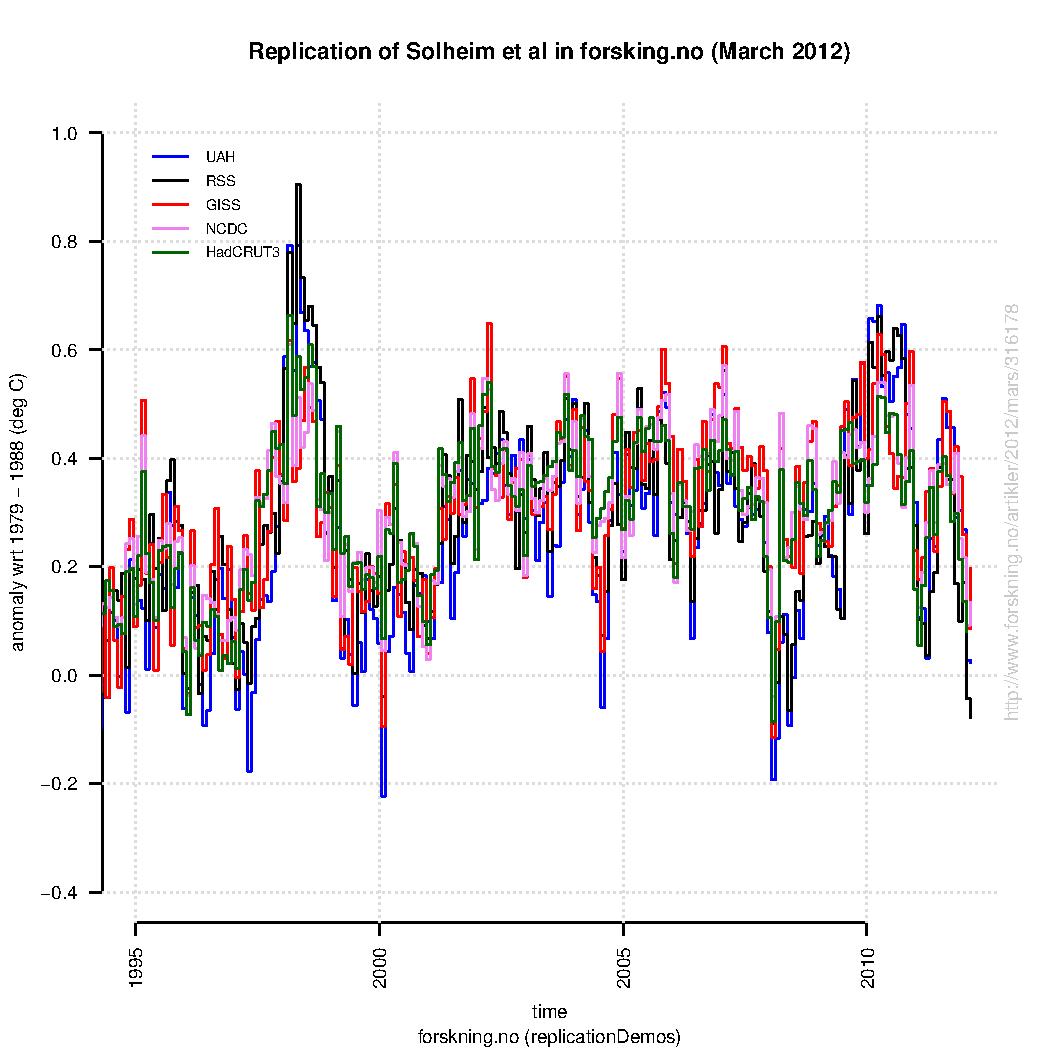

I’ve tried to reproduce the plot below (here is the R-script):

At least, many different global analyses were shown – not just the one which indicates weakest trends since year 2000. The data presented in this case included both surface analyses (GISTEMP, NCDC, and HadCRUT3) in addition to satellite products for the lower troposphere (Microwave Sounding Unit – MSU). The MSU data tend to describe more pronounced peaks associated with the El Nino Southern Oscillation.

A comparison between the original version of this plot and my reproduction (based on the same data sources) is presented below (here is a link to a PDF-version). Note, my attempt is very close to the original version, but not identical.

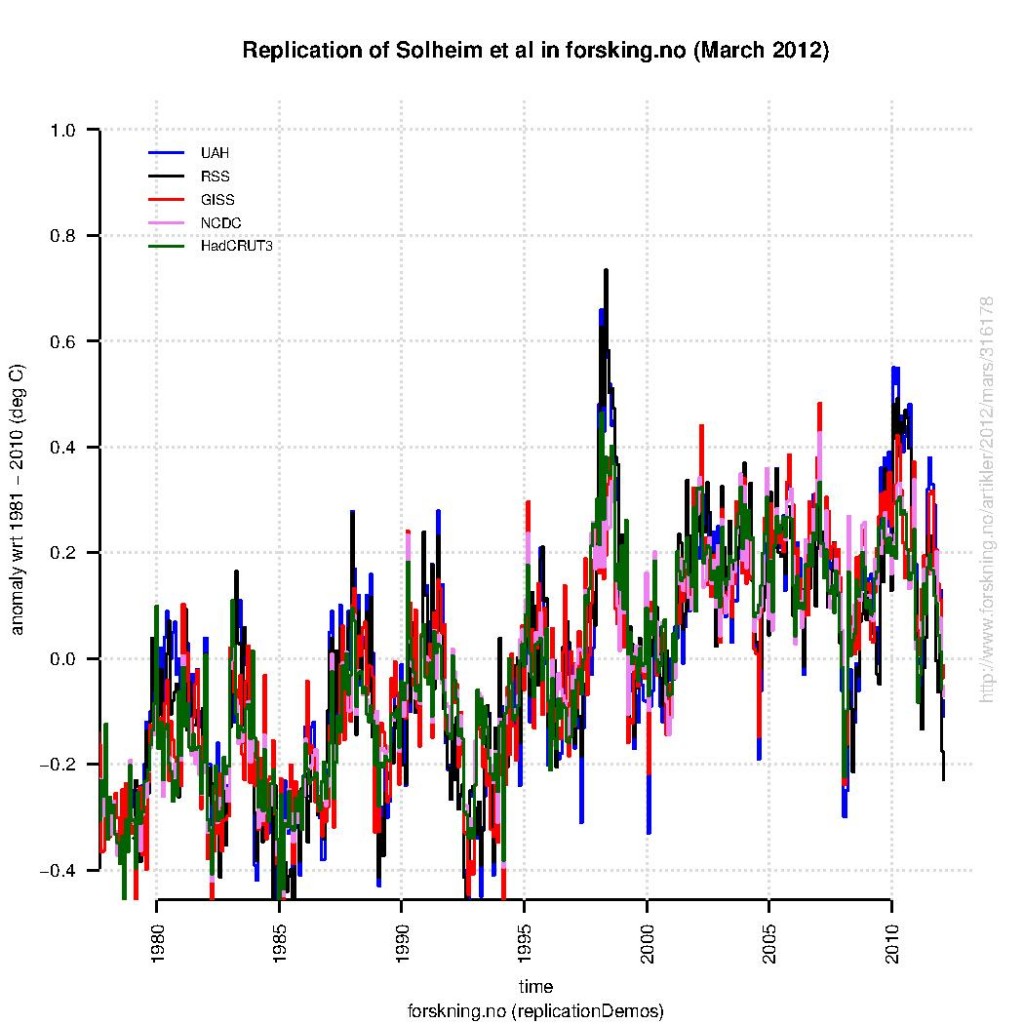

One should note that plotting the same data over their entire length (e.g. from the starting date of the satellites in 1979) will make global warming trends more visible (see figure below). Hence, the curves must be cropped to give the impression that the global warming has disappeared.

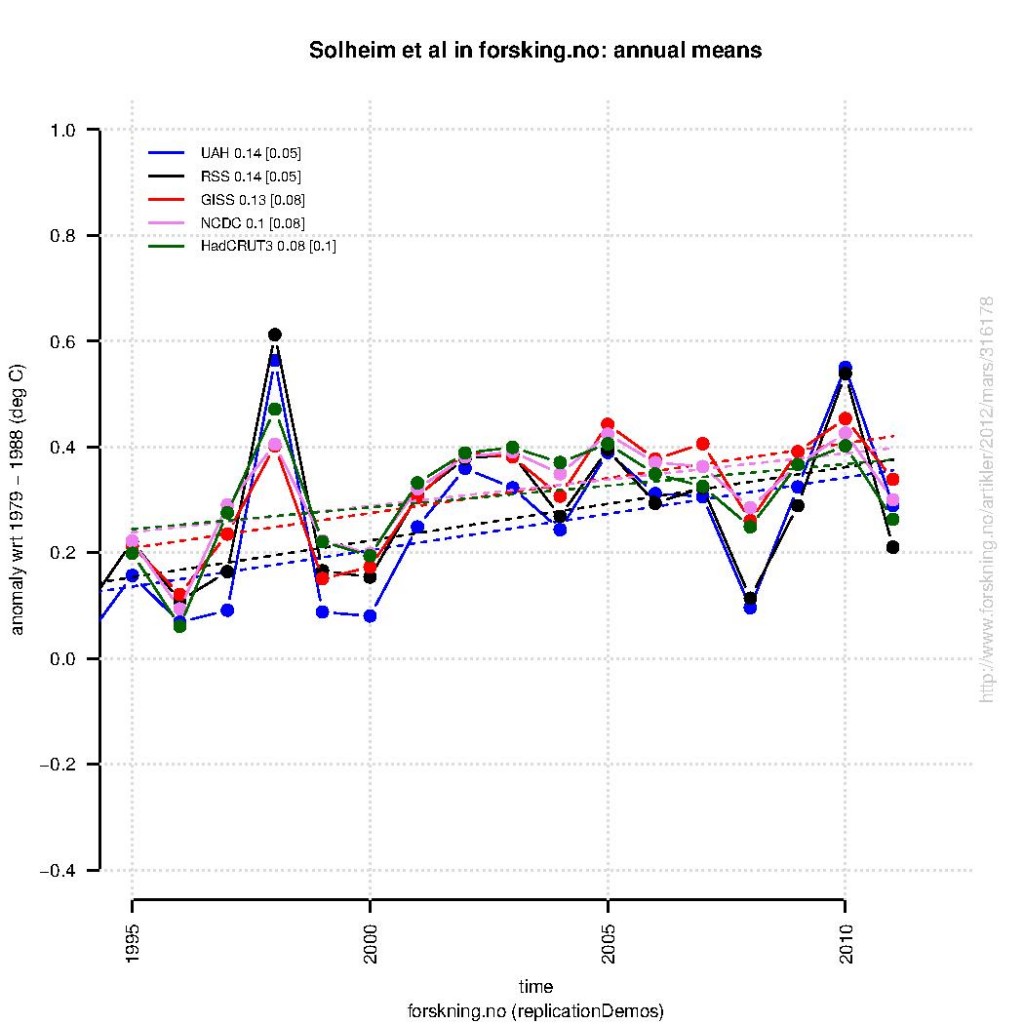

The real trick, however, is to show all the short-term variations. Hourly and daily values would be an over-kill, but showing monthly values works. Climate change involves time scales of many years, and hence if emphasis is given to much shorter time scales, the trends will drown in noisy variations. This can be seen if we show annual mean anomalies (as shown below for exactly the same data), rather than the monthly anomalies (again, done with the same R-script)
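The effect of averaging can be illustrated with a small synthetic example. This is a minimal sketch in Python, not the R-script mentioned above; the trend of 0.017 °C per year and the white noise with a standard deviation of 0.15 °C are assumptions chosen only for illustration, not values taken from the data sets.

```python
import random
import statistics

# ASSUMED values for illustration only (not taken from the real data sets).
YEARS = 17
TREND_PER_YEAR = 0.017   # degrees C per year
NOISE_SD = 0.15          # degrees C, white noise on monthly values

rng = random.Random(0)   # fixed seed so the sketch is repeatable

# Synthetic monthly anomalies: linear trend plus noise; time is in years.
months = [k / 12 for k in range(YEARS * 12)]
monthly = [TREND_PER_YEAR * t + rng.gauss(0, NOISE_SD) for t in months]

# Annual means: average each consecutive block of 12 monthly values.
annual_time = [statistics.mean(months[i:i + 12]) for i in range(0, len(months), 12)]
annual = [statistics.mean(monthly[i:i + 12]) for i in range(0, len(monthly), 12)]

# Scatter about the known underlying trend line, before and after averaging.
monthly_scatter = statistics.pstdev(
    [y - TREND_PER_YEAR * t for t, y in zip(months, monthly)]
)
annual_scatter = statistics.pstdev(
    [y - TREND_PER_YEAR * t for t, y in zip(annual_time, annual)]
)

print("warming over the whole period:", TREND_PER_YEAR * YEARS)
print("scatter of monthly values:", monthly_scatter)
print("scatter of annual means:", annual_scatter)
```

The total warming over the period is the same in both cases; only the scatter around it shrinks when the monthly values are averaged into annual means, which is why the trend is easier to see by eye.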

A linear trend fit to the annual mean anomalies over the last 17 years suggests similar warming rates as reported by Grant Foster and Stefan Rahmstorf. These trends are derived from exactly the same data as those used in the original figure, which was used to argue that the global warming had stopped – by two professors and a statistician, the very same who performed curve-fitting and removed data not fitting their conclusion.

References

- G. Foster, and S. Rahmstorf, "Global temperature evolution 1979–2010", Environmental Research Letters, vol. 6, pp. 044022, 2011. http://dx.doi.org/10.1088/1748-9326/6/4/044022

Pointers:

Categories (in the right sidebar) is a list of links, including The Bore Hole

To look “Bore Hole” up: Search box, top right corner

In the top navigation bar, left side: Start Here button.

I think that the original plot on the “forskning” website is a product of Photoshop and the “AllinONE” graph you can find on the Climate4You website of Ole Humlum:

http://www.climate4you.com/images/AllCompared%20GlobalMonthlyTempSince1979.gif

The colours are exactly the same, the lay-out, the text in the graph, the axis text et cetera. The creator only removed the data before 1995, the y-axis values -0.5/-0.6 and the text “Climate4you graph” in the upper left corner.

> called an idiot by Hank Roberts

John, I’m glad to apologize for being fooled by a Poe; wouldn’t be the first time. I don’t always succeed at pretending to be patient. Someone using “Hank” has a few times posted nasty stuff that’s been mistaken for mine, so I’d like to know for sure.

41: I wrote: “You cannot defeat it with reason. ” but meant

“You cannot defeat it with reason ALONE.”

Erica,

The fact that denialists equate money spent on science with money spent on propaganda merely illustrates that the denialists haven’t the first idea what science is.

I would welcome any amount of scientific investment by fossil fuel interests to research alternative models to the consensus model of Earth’s climate. I rather doubt they would get anywhere, but if they did, it would revolutionize the field.

Here’s the problem: science requires a model. The model makes predictions and suggests avenues of inquiry. If those predictions are successful, they in turn suggest further predictions and avenues of research. If not, they suggest ways of modifying the model or if severe enough, development of a new model. That’s science, and the impressive successes of climate models show they are good models.

http://bartonpaullevenson.com/ModelsReliable.html

That is not what the denialists are doing. They are executing a classic gish gallop to blind the public with bullshit–usually not even bothering to publish for a technical audience. That is classic anti-science.

So, go with the scientists or go with the anti-scientists. That is the choice you have.

The de

Erica, you don’t need a supercomputer to produce PR.

Erica says:

Why 17? Why not 16 or 18 years? I suspect 17 is chosen because it starts with a particularly warm year. As the start year was chosen because it is an outlier, any statistical tests of significance you do are invalid as they all assume a random starting and ending points (random in the sense that they were not selected on the basis of their temperatures).

There is no magic number of years that is needed to establish that a linear trend exists. It depends on the signal-to-noise ratio, so for global temperature in recent decades 20 years has been about enough, for CO2 concentration 4 years is more than enough while for hurricane frequency 50 years is probably too short. BTW: it can never be shown that no trend exists. The problem is that the question is poorly phrased.

#52 Hank Roberts

No worries. Michael expressed:

https://www.realclimate.org/?comments_popup=6013#comment-231573

that he wishes global warming were real or that some other event such as a comet or a Coronal Mass Ejection might wipe out the human race to prevent us from doing more damage, apparently. Odd though, since a comet or (truly large) CME would do just as much damage to the rest of nature on the planet. Hard to reconcile that logic, though I can understand the root frustration.

BTW I gave up patience for lent ;)

Interesting illustration that finding the signal in the noise is made easier by working with intermediate averages.

The one thing which is arbitrary is the start of the year. I expect it will, but would you mind showing us that January-December annual mean anomaly shows the same trend as the April-March annual mean anomaly?

Thanks in advance!

The reason for my previous question is, suppose for simplicity, we have only two seasons winter and summer, and winters are getting warmer and summers are getting cooler (or vice versa) with anomalies in a series like this ( units of micro-Kelvin if you like). The annual anomaly is the average of two successive numbers in the sequence.

1, -2, 4, -8, 16, -32, ….

Depending on how I group to make the year, I get for the annual anomaly

-0.5, -2, -8, …

Or

0.5, 1, 4, …..

Am not saying that physically something like this is plausible, but it is a logical possibility that should be ruled out.
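The two groupings can be checked with a few lines of Python. This is only a sketch of the arithmetic in the example; the helper name `pair_means` is made up for illustration, and the leading 0 used for the second grouping is an assumption (a zero anomaly before the first listed value), which is what reproduces the 0.5 at the start of the second sequence.

```python
def pair_means(values):
    """Average consecutive non-overlapping pairs; a trailing unpaired value is dropped."""
    return [(values[i] + values[i + 1]) / 2 for i in range(0, len(values) - 1, 2)]


# Seasonal anomalies from the example: winter, summer, winter, summer, ...
seasons = [1, -2, 4, -8, 16, -32]

# Year = (1, -2), (4, -8), (16, -32)
grouping_a = pair_means(seasons)

# Year shifted by one season: (0, 1), (-2, 4), (-8, 16).
# The leading 0 is an ASSUMED anomaly before the series starts.
grouping_b = pair_means([0] + seasons)

print(grouping_a)
print(grouping_b)
```

The same six numbers give a falling or a rising "annual" series depending only on where the year is taken to start, which is the logical possibility the example is meant to raise.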

@Richard, Erica,

It really depends what the original paper was trying to show. I think Ben Santer had a paper out last year to counter the “It hasn’t warmed for 10yrs, therefore the models are wrong” argument.

My recollection of it is- Even in individual Climate model runs there are periods ~10yrs with no warming, so it is unexceptional. Santer suggests a minimum of 17yrs would be required before it could be said there was a problem with models.

My only reason for suggesting this is the coincidental recurrence of the 17yrs figure.

#48 Erica

Some focused model work has it down to 17 years to separate signal from noise. 30 years is good for most models. Think about it this way: if you can model out the noise of natural variation, you can see the human climate signal.

There is now a clear and identifiable human climate signal above the noise with strong scientific confidence.

Going back in the thread a bit…

Re: #9 “There’s something I don’t understand. When I run linear regression over UAH data between 1994 and 2012 as per both the original graph and your reconstruction, I get the same trend value regardless whether I run it on monthly or annual averages.”

Why is that a surprise? It’s a characteristic of linear systems. Perhaps it’s my age (I remember when I had to do linear regressions with a pencil and paper for the sums, and a slide rule to help with the squares and square roots), but a fundamental principle of a linear least squares regression is that the best fit line passes through the point represented by the mean X and mean Y values. A quick-and-dirty regression result can be had by calculating mean X and mean Y, and then using a ruler to draw the “best fit by eye” line through that point.

[Response: Oddly enough linear regression is not actually linear, per se! It is based on minimizing the sum of squares of the departures of the data points from the linear model. Because of this, it is potentially sensitive to outliers (i.e. it is not “robust”). And in general, one could actually get a somewhat different trend using monthly data rather than the annual means of those data. This would be true, for instance, if the earliest or latest individual monthly values in the series are outliers–that will have more leverage on the trend in the monthly series than in the corresponding annual means. Try it out on some synthetic data and see for yourself. This is one of the weaknesses of linear regression–it’s not resistant to outliers. This is also the motivation for other alternatives such as robust trend estimation (which minimizes the distance rather than squared distance from the linear model; but it doesn’t have the nice closed-form analytical solution that least squares has). -mike]

A linear regression says “let’s allow our mean Y to depend on X in a linear fashion”, instead of using a single mean Y to represent the data (independent of X). If you have equally spaced points along the X axis, and decide to average them in groups of 12, you’re just pre-averaging the data before feeding it into the regression. Since it is a linear combination, where you do the averaging doesn’t matter when it comes to the resulting slope.

Of course, the other things related to the regression (significance, standard error, etc.) will not be the same, so averaging the data before doing a regression is frowned upon (to say the least), but it won’t affect the trend.

Chris Reynolds #7

I’ve had a look at the paper you reference. Although it looks mainly at Arctic sea ice, the points it raises go to the heart of the wider climate debate. Climate models represent the late 20th century warming more accurately than any other period. If the warming in that period is caused wholly, or largely, by the forcing represented in those models, then their projections are likely to be valid. (The paper you reference believes they are.) On the other hand, if much of that warming was due to pseudo-oscillations not included in the models (AO, NAO, AMO, ENSO, PDO, etc.), then their projections may be less valid.

@John

“if you can model out the noise of natural variation”

Sorry John, I don’t know if you’ve just chosen your words badly, but you definitely cannot “model out” noise. It’s well, noise.

[Response: One person’s noise is another person’s signal. – gavin]

[Response:He’s talking about its reduction relative to the signal, not an actual removal–Jim]

52: Hank was several years ago. All was long since forgiven. Point is that people here are too touchy. I understand the touchiness but still…

My first attempt at humor here was way too subtle. I was certain that yesterday’s attempt couldn’t possibly have been mistaken but that’s the way it is. Go figure.

[Response:You need to get back to looking at all temperature data on the planet John. There’s been a lot of it in the last 24 hours ;)–Jim]

> people here are too touchy

I resemble that remark, at times:

http://www.cartoonstock.com/newscartoons/cartoonists/amc/lowres/amcn39l.jpg

I swear the ReCaptcha AI is watching me; it just asked me to type:

focus jookes

Re: response to #62

Actually linear regression is indeed linear, because the result of a linear regression is a strictly linear function of the input data. Hence if the data x is the sum of two different sets of data x1 and x2, then linear regression on x is the sum of the linear regressions on x1 and x2. This is a remarkably useful property in many contexts, and is one of the advantages of linear regression which is rarely appreciated.

The “basis” for linear regression is that if the noise (deviation from the model) follows the normal distribution, then linear regression is the maximum-likelihood solution for a straight-line fit. Extreme outliers can spoil the fit, not because of any inherent weakness of the method but because their presence indicates that the underlying assumption does not hold, i.e. the noise does not follow a normal distribution — not even approximately.

There are lots of “robust” (i.e. resistant to outliers) regression methods (besides least-absolute-difference regression). They all have their own weaknesses (not just the lack of closed-form solution), including the fact that in some cases the solution may be ambiguous.

Even when the noise isn’t normally distributed (e.g. in the presence of outliers), as long as the noise is unbiased, uncorrelated, and homoscedastic, when the number of available data gets large one can rely on linear regression because of the Gauss-Markov theorem, that linear regression gives the BLUE, i.e., “Best Linear Unbiased Estimator” of the regression line.

If you work out the gory mathematical details you can see that the regression on annual means is *necessarily* very close to the regression on monthly means (assuming that the annual means are the averages of the monthly means). There can be tiny differences, but even with outliers at the extremes of the time range the results will still be close.
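For anyone who would rather try it than work through the algebra, here is a minimal sketch on synthetic data. The trend (0.017 per year), the noise level (0.1) and the one-degree outlier added to the final month are all assumptions picked for illustration; `ols_slope` and `annual_means` are hypothetical helper names, and the fit is ordinary least squares written out by hand.

```python
import random
import statistics


def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    xbar, ybar = statistics.mean(x), statistics.mean(y)
    sxy = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y))
    sxx = sum((xi - xbar) ** 2 for xi in x)
    return sxy / sxx


def annual_means(values):
    """Average consecutive blocks of 12 monthly values."""
    return [statistics.mean(values[i:i + 12]) for i in range(0, len(values), 12)]


rng = random.Random(1)  # fixed seed so the sketch is repeatable

# 17 years of synthetic monthly data: ASSUMED trend plus ASSUMED white noise.
t_monthly = [k / 12 for k in range(17 * 12)]
y_monthly = [0.017 * t + rng.gauss(0, 0.1) for t in t_monthly]

# Put a large outlier at the very end of the series, the worst case for leverage.
y_monthly[-1] += 1.0

slope_monthly = ols_slope(t_monthly, y_monthly)
slope_annual = ols_slope(annual_means(t_monthly), annual_means(y_monthly))

print("slope from monthly values:", slope_monthly)
print("slope from annual means:", slope_annual)
print("difference:", slope_monthly - slope_annual)
```

In this setup the outlier shifts both slopes, but it shifts them by almost the same amount, so the two estimates stay close to each other. That says nothing about the uncertainty estimates, which do differ between the monthly and annual fits.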

Linear regression isn’t perfect — nothing is — but it’s probably the best single trend-line estimator for general use in more situations than any other. There’s a reason it’s the workhorse of trend-line estimation.

Incidentally, I wouldn’t say that averaging the data before linear regression is frowned upon (although it’s less than optimal). In fact if your data are strongly autocorrelated, then averaging before regression can be a *good* idea because it reduces the autocorrelation of the data used for the regression. Linear regression on monthly temperature data, for instance, will give you a reliable trend, but the estimated *uncertainty* that most computer programs compute for the regression fit will be way off. If you do the same on annual means instead, the uncertainty most programs report will be much closer to reality.

BUT: linear regression on *moving* averages is a very bad idea. Moving averages make the data strongly autocorrelated even if it wasn’t already, violating the assumptions of just about every regression method (even invalidating the Gauss-Markov theorem). Don’t do it!
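Both points can be put in numbers with a short calculation. The sketch below assumes the monthly noise behaves like an AR(1) process with lag-1 autocorrelation 0.8, which is an illustrative choice and not a fitted value; the function names are made up for this example. It compares the lag-1 autocorrelation of non-overlapping annual means with that of a 12-month moving average of pure white noise.

```python
def block_mean_lag1(phi, block=12):
    """Lag-1 autocorrelation of non-overlapping block means of a series
    whose lag-k autocorrelation is phi**k (an AR(1) process)."""
    # Variance of one block sum: all pairwise correlations inside the block.
    var = sum(phi ** abs(i - j) for i in range(block) for j in range(block))
    # Covariance between one block sum and the next block sum.
    cov = sum(phi ** (j - i) for i in range(block) for j in range(block, 2 * block))
    return cov / var


def moving_average_lag1(window=12):
    """Lag-1 autocorrelation of a moving average of white noise:
    adjacent windows share window - 1 of their window terms."""
    return (window - 1) / window


PHI = 0.8  # ASSUMED monthly lag-1 autocorrelation, for illustration only

print("monthly values:", PHI)
print("annual (block) means:", block_mean_lag1(PHI))
print("12-month moving average of white noise:", moving_average_lag1(12))
```

Under these assumptions, averaging into separate years lowers the autocorrelation well below the monthly value, while a 12-month moving average pushes it close to 1 even when the underlying noise has none at all.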

#64 GSW

Far be it from me to always choose my words well :)

I sometimes assume others see my context, which is sometimes foolish on my part.

But I’ve never been afraid to play the part of the fool either. In my lifetime, I’ve probably been more wrong than right. If I have paid attention properly, it is possible I may have gained some relevant understanding and knowledge in the process.

Thanks Gavin/Jim.

#65 John E. Pearson

And my apologies to you as it becomes more clear I misunderstood the joke. When I first read your post, I thought ‘that’s got to be a joke’… but then I thought well, maybe others might not think it’s a joke. And then I thought placing an analogy referring to it would help those that are following the thread.

As I’m sure you do understand the sensitivity reasoning though… so many times, and so many things, said out of context by so many denialists… I certainly can be ‘touchy’ about such things at times.

John E. Pearson (~#65), I greatly appreciate your attempts to combat the humor denialists. Steve

#64 GSW

“if you can model out the noise of natural variation”

Attempts at less bad (wow, that is truly bad grammar) wording:

– if you can separate the human signal from the noise of natural variation

– if the model can distinguish the human signal from natural variation

– if natural variation signal can be identified in order to parse it from the human signal

– if the natural variation noise can be reduced in order to more clearly see the human signal

There’s an old saying in production: There are a million ways to do it (say it); a hundred ways to do it (say it) right; and three really bitchin ways. If you can at least get one of the hundred, then that’s not too bad.

Lynn, you’ll save time and typing by using either the search box (upper right corner) or most any web browser’s Site Search function — for example:

https://duckduckgo.com/?q=site%3Arealclimate.org+schmittner

It’s been covered well previously.

As to Chip K — “New Hope” sells “advocacy science”

(one sided presentation, spin for paying clients to make their arguments)

#57–OT, OT, OT–

“BTW I gave up patience for lent ;)”

And now you can’t wait for Easter?

#73 Kevin McKinney

Easter?

Alas, I fear all I have left to look forward to now is the next festival of Saturnalia and hope that Ba’al Hammon and Cronus would acquiesce and allow the crowning of a new lord of misrule that somehow the king could return disorder to the feast of fools that there may be some hope that our current insane reality can be restored to some semblance of the antithetical order of the now by achieving the antithesis of the faux facade we currently face in the circus of our social medium and its related maelstrom.

Unfortunately, as far as I know, the last Saturnalia festival was five to six centuries ago; it seems to have died with the end of the Roman Empire… I might have to wait some time, I suppose.

See the book, “How to Lie with Statistics”

http://www.amazon.com/How-Lie-Statistics-Darrell-Huff/dp/0393310728

Darrell Huff’s classic 1954 book, “How to Lie With Statistics” might be of some value here.

@John #71,#68,

“Signal as noise, noise as signal?”

Apologies John, I did know what you were getting at. Just being overly pedantic. ;)

It’s certainly possible to filter out “weather” from the temperature record, but I’m uncomfortable ascribing the label “Human Signal” to what’s left – You could use “Climatic/Temperature trend” instead, and attribute an influence on that trend as being Anthropogenic in origin – which I think is what you mean.

Possibly just being pedantic again, but view the words “Human Signal” as describing a “specific”/known quantity (poor choice of words) superimposed on top of the temperature record. I prefer to think of it as a mid to long term “bias” towards higher temperatures rather than a “signal”.

But perhaps it’s just playing with words.

;)

#74–You might be surprised!

http://www.facebook.com/saturnalia2012

Though apparently you’ll have to wait for the 2013 edition now.

In another sense, we *always* seem to have a few ‘lords of misrule’ lounging about these days, if you know what I mean… some might even claim to be Lords…

@65 John E. Pearson:

Have you considered the use of emoticons? ;)

On the internet, in the absence of body language and intonation, it’s often hard to interpret the more subtle forms of humour. It’s a remarkably thin line between those who are serious but appear humorous, and those who are satirising the former. Hence, a strategy can be to respond only to what people write and not what they might mean, given no explicit cues of intent. This in turn may appear touchy or overly serious, especially if responding in a similar tone as the original post. However, given your imitation, you know how obtuse some serious posts can be…

I must add that your sprinkling of caps and exclamation points did not convince me in this case ;).

Speaking of data presentations, here’s a good explanation of why these merit careful attention. It’s also an explanation of advocacy science at work:

http://mikethemadbiologist.com/2012/03/23/a-nation-run-by-ignoramuses-the-congressional-staffer-edition/

A brief excerpt with his quote of a

“… former Congressional staffer ….:

> I often (some would say usually) had absolutely no

> idea what I was doing, substantatively, or procedurally

> or politically. I was constantly in need of reliable

> information, provided quickly, tailored to my specific

> situation, dumbed down to my level.

>

> And so, as you can imagine, my best experiences with

> lobbyists were the ones who gave me useful information

> when I needed it

This is what happens when you underpay staffers–and for the scientists, they make the equivalent of a postdoc salary. If you want professional, competent, experienced help, then it’s going to cost us.

And it does cost us, often greatly. This another reason why we can’t have nice things.”

— end excerpt—-

#79 GSW

I understand your point, and in a science forum it is easy to show interest in hyper-accuracy from one’s own perspective. But what I’m trying to do in my work is be hyper-meaningful to an audience that does not readily understand science. I always try to avoid words like anthropogenic as it does not quickly convey a point and increases dissonance.

The human signal in temperature is that temperature that is there by human causation factors, such as induced by increased radiative forcing. To the natural signal I suppose the human signal is the noise though and this becomes a semantic point. I use the Meehl UCAR/NCAR chart in presentations to explain the difference:

http://ossfoundation.us/projects/environment/global-warming/attribution/

I must say this is a very good article for explaining the simple ways data cherrypicking and graphical presentation can misrepresent observations; it would be very good reading for some journalists.

However, I think it works both ways and, good as it is, there is a little self-righteous hubris within.

How would you react if a sceptical blog were to say the following?

“I just came across an interesting way to eliminate the impression of slowing of the warming trend . A trick used to argue that the global warming is continuing at the same rate as the latter part of the 20th century, and the simple recipe is as follows:

•Extend all of the measurements as far back into the 20th century as possible, at least 1979.

•Plot annual averages of these 17 years to get fewer data points and disguise true monthly averages.

•A good idea is to show a stretched plot with a longer vertical anomaly axis to enhance temperatures.”

The third point is used to excellent effect in the third graph (from 1979), by the way.

:-)

> PKthinks says: 26 Mar 2012 at 12:42 PM …

> … How would you react to the following a sceptical blog say …

I’d ask for a cite. Got one? Google can’t find the stuff you appear to be quoting; where did you get it?

@John,

Not a problem John, honestly. It’s worth bearing in mind that climate response is not linear, so be cautious about ascribing distinct Human and Natural components to what is observed.

;)

Nothing as tricky – fraudulent actually – as:

http://www.sustainableoregon.com/_wp_generated/wpd7eded6e.png

with the “argument”:

“Except for the well known fact that temperature changes precede CO2 changes (!!),

the supposed CO2-driven raise of temperatures works ok before temperature reaches

max peak. No, the real problems for the CO2-rescue hypothesis appears when temperature

drops again. During almost the entire temperature fall, CO2 only drops slightly.

In fact, CO2 stays in the area of maximum CO2 warming effect. So we have temperatures

falling all the way down even though CO2 concentrations in these concentrations where

supposed to be a very strong upwards driver of temperature”.

I have been taking some notice of the little tricks that are used to obscure temperature and other trends. One such that is currently in fashion is comparing gradients rather than actual values. This is effective since gradients visually exaggerate noise and therefore provide more cherry picking opportunities via range selection.

On the other hand I don’t think they even need to do this. C.S.s are quite capable of looking directly at an upward trend graph and ignoring the first 99% of the range and focussing on the last 1% that makes them happy.

Not sure how they do it though. Place an index card over the offending vision on their computer screen? Or do they just gouge out their left eyeball with their thumbs?

@PKthinks

Your question is certainly a legitimate one, albeit not completely symmetrical (a longer time series is simply beneficial for trend seeking). Symmetry notwithstanding, you should note from the discussion that objective analysis yields the same result regardless of steps 2 and 3. So it’s not so much about ‘How to lie with statistics’, it’s more about ‘How to tweak the chart to fool the human faculty for extracting trends visually’.

But there are more capable guys here to provide more authoritative answer.

Often what is loosely called “noise” is not only not normally distributed (thank you tamino), but is in fact an interfering signal from a known source. For instance, the annual variations in CO2 rise aren’t strictly speaking noise, but a signal from vegetation in the northern hemisphere. Since they repeat fairly regularly, averaging for integral multiples of the annual cycle is very effective at quashing them, but an 18 month average fluctuates more than a 12 month average – http://www.woodfortrees.org/plot/esrl-co2/from:2000/plot/esrl-co2/from:2000/mean:12/plot/esrl-co2/from:2000/mean:18/plot/esrl-co2/from:2000/mean:24

True random noise would only be decreased by root n, where n is the number of samples averaged, but it would be better reduced by 18 averages than 12.
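The difference between 12, 18 and 24 month averages is easy to see on an idealised seasonal cycle. This is a sketch only: the cycle is a pure sine wave with a 12-month period, which is a simplification I am assuming for illustration and not the real CO2 record, and `trailing_mean` and `peak_to_peak` are hypothetical helper names.

```python
import math


def trailing_mean(values, window):
    """Mean of each run of `window` consecutive values."""
    return [
        sum(values[i - window + 1:i + 1]) / window
        for i in range(window - 1, len(values))
    ]


def peak_to_peak(values):
    """Size of the remaining ripple after averaging."""
    return max(values) - min(values)


# ASSUMED idealised seasonal cycle: pure sine wave, 12-month period, 10 years.
cycle = [math.sin(2 * math.pi * m / 12) for m in range(120)]

ripple_12 = peak_to_peak(trailing_mean(cycle, 12))
ripple_18 = peak_to_peak(trailing_mean(cycle, 18))
ripple_24 = peak_to_peak(trailing_mean(cycle, 24))

print("ripple left by a 12-month mean:", ripple_12)
print("ripple left by an 18-month mean:", ripple_18)
print("ripple left by a 24-month mean:", ripple_24)
```

Windows that cover a whole number of cycles cancel the sine wave down to floating-point rounding, while the 18-month window always contains an unbalanced half cycle and leaves a visible ripple.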

Knowing the underlying physics is necessary for determining the appropriate model to use – Gaussian statistics, Fourier transform, linear or polynomial fits, and so on. When the underlying physics isn’t yet known, testing which model fits best may give insight as to what the underlying processes may be, but randomly applying mathturbation until it gives an answer you like, then proudly proclaiming “It’s cycles” or “it’s a fifth order polynomial, and cooling is imminent” isn’t even wrong.

Here’s another one. Calculate the previous 17 year trend line slope for each month and plot. The current slope is as low as it has been in the last 30 years. What happens over the next few years is going to be very interesting as to whether the growth snaps back or trends indicate a peak in global temps.
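For readers who want to see what such a calculation involves, here is a minimal sketch of a trailing-window trend. It runs on a made-up, noise-free straight line (0.017 per year over 30 years), so it demonstrates only the mechanics and says nothing about what the real temperature series show; the function names are hypothetical.

```python
import statistics


def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    xbar, ybar = statistics.mean(x), statistics.mean(y)
    sxy = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y))
    sxx = sum((xi - xbar) ** 2 for xi in x)
    return sxy / sxx


def trailing_slopes(times, values, window):
    """Slope fitted to the `window` points ending at each month in turn."""
    return [
        ols_slope(times[end - window:end], values[end - window:end])
        for end in range(window, len(values) + 1)
    ]


# ASSUMED synthetic input: 30 years of monthly values on an exact straight line.
times = [k / 12 for k in range(30 * 12)]
values = [0.017 * t for t in times]

WINDOW = 17 * 12  # 17 years of monthly values
slopes = trailing_slopes(times, values, WINDOW)

print("number of 17-year windows:", len(slopes))
print("first and last slope:", slopes[0], slopes[-1])
```

Successive windows share all but one of their 204 months, so neighbouring slopes are very strongly correlated with each other, and a wiggle in a plot of them should not be read as many independent pieces of evidence.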

Tom,

Agreed. I have done the same calculations, and arrived at the same conclusion.

This is an interesting paper that corrects temperature records for short term effects like ENSO, volcanoes and solar variability, which gives a marked upward underlying trend:

http://iopscience.iop.org/1748-9326/6/4/044022

Has this been covered here anywhere? It seems to provide a very good rebuttal for those who parrot the “global warming has stopped” line.

For Tony Weddle: Yes.

Here’s how to check:

In the upper right corner of the web page, there’s a white rectangle; type the words there — for the paper you ask about, the authors’ names, or the journal cite can be pasted in there. Click the Search button next to it.

That will find any previous mention of whatever you’re searching for.

> the previous 17 year trend … is as low

> as it has been in the last 30 years

Please show your work. Got a web page somewhere?

Bojan,

Yes I was aware of 2 and 3 being merely what I would call ‘spin’

Hank, the issues are generic but well demonstrated in most 21st-century publications (being the converse of the sceptical trick, you would expect this), and if you pushed me for a ‘classic’ citation I would look no further than F+R (and figure 8 especially)

but it was a little tongue in cheek

#91, 92–

And what will you gentlemen conclude, should March be warmer than February was?

#80 Kevin McKinney

Amazing! Not only is Saturnalia back, it has a facebook page!!!

“The current slope is as low as it has been in the last 30 years” Tom & DanH

It’s pretty damn close to the trend since 1950 (0.013 vs 0.011 per year). I don’t see that these “trends indicate a peak in global temps.”

“The current slope is as low as it has been in the last 30 years” Tom & DanH

Seriously, guys, you both claim independently to have determined this is a fact. Please show your work. You’re not just porting someone else’s claims over here and saying you did the analysis yourself, so — show how you decided this was the case.