Not small enough to ignore, nor big enough to despair.

There is a new review paper on climate sensitivity published today (Sherwood et al., 2020 (preprint)) that gives the most thorough and coherent picture of what we can infer about the sensitivity of climate to increasing CO2. The paper is exhaustive (and exhausting – coming in at 166 preprint pages!) and concludes that equilibrium climate sensitivity is likely between 2.3 and 4.5 K, and very likely to be between 2.0 and 5.7 K.

For those looking for some context on climate sensitivity – what it is, how we can constrain it from observations, and what observations are available – browse our previous discussions on the topic: On Sensitivity, Sensitive but Unclassified Part 1 + Part 2, A bit more sensitive, and Reflections on Ringberg among others. In this post, I’ll focus on what is new about this review.

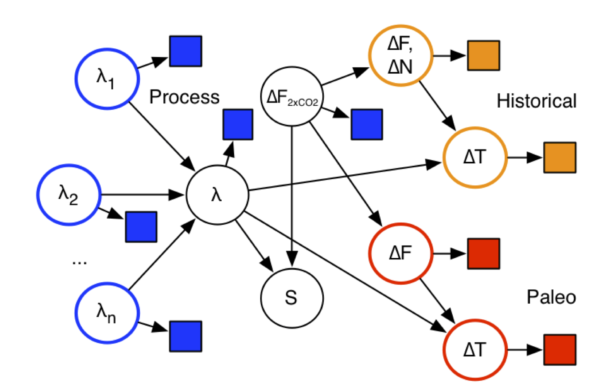

Climate Sensitivity is constrained by multiple sets of observations

The first thing to note about this study is that it attempts to include relevant information from three classes of constraints: processes observed in the real climate, the historical changes in climate since the 19th Century, and paleo-climate changes from the last ice age (20,000 years ago) and the Pliocene (3 million years ago) (see figure 1). Each constraint has (mostly independent) uncertainties, whether in the spatial pattern of sea surface temperatures, or quality of proxy temperature records, or aerosol impacts on clouds, but the impacts of these are assessed as part of the process.

Importantly, all the constraints are applied to a coherent, yet simple, energy balance model for the climate. This is based on the standard ‘temperature change = energy in – energy out + feedbacks’ formula that people have used before, but it explicitly tries to take into account issues like the spatial variations of temperature, non-temperature-related adjustments to the forcings, and the time/space variation in feedbacks. This leads to more parameters that need to be constrained, but the paper tries to do this with independent information. The alternative is to assume these factors don’t matter (i.e. set the parameters to a fixed number with no uncertainty), and then end up with mismatches across the different classes of constraints that are due to the structural inadequacy of the underlying model.
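To make the basic bookkeeping concrete, here is a minimal sketch of a zero-dimensional energy balance, N = F + λT, where N is the top-of-atmosphere imbalance, F the forcing, λ the net feedback parameter (negative for a stable climate) and T the global-mean warming. It is far simpler than the paper's model (no spatial structure, no forcing adjustments, no time-varying feedbacks), and the values of F_2X and LAMBDA are illustrative assumptions, not results from the paper.

```python
# Minimal zero-dimensional energy balance: N = F + lambda * T
#   N: top-of-atmosphere imbalance (W/m^2)
#   F: radiative forcing (W/m^2)
#   lambda: net feedback parameter (W/m^2/K), negative for a stable climate
#   T: global-mean temperature change (K)
# The constants are illustrative assumptions, not the paper's estimates.

F_2X = 4.0      # assumed forcing for doubled CO2, W/m^2
LAMBDA = -1.3   # assumed net feedback parameter, W/m^2/K


def toa_imbalance(forcing: float, feedback: float, warming: float) -> float:
    """Energy in minus energy out: the forcing plus the feedback response to warming."""
    return forcing + feedback * warming


def equilibrium_warming(forcing: float, feedback: float) -> float:
    """Warming at which the imbalance returns to zero (N = 0)."""
    return -forcing / feedback


S = equilibrium_warming(F_2X, LAMBDA)
print(f"Sensitivity per CO2 doubling: {S:.2f} K")
print(f"Imbalance remaining at that warming: {toa_imbalance(F_2X, LAMBDA, S):.1e} W/m^2")
```

Everything the paper adds (adjustments, spatial patterns, time-varying feedbacks) can be thought of as extra parameters layered on top of this relation, each of which then needs its own constraint.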

This is fundamentally a Bayesian approach, and there is inevitably some subjectivity when it comes to assessing the initial uncertainty in the parameters that are being sampled. However, because the subjective priors are explicit, they can be adjusted based on anyone else’s judgement and the consequences worked out. Attempts to avoid subjectivity (so-called ‘objective’ Bayesian approaches) end up with unjustifiable priors (things that no-one would have suggested before the calculation) whose mathematical properties are more important than their realism.
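As a toy illustration of why explicit priors matter, the sketch below assumes a single hypothetical Gaussian constraint on the feedback parameter λ and computes the posterior on sensitivity S = -F_2x/λ under two different priors: uniform in λ and uniform in S. The numbers (LAM_MEAN, LAM_SD, F_2X and the grid limits) are illustrative assumptions; the point is only that the same evidence gives different answers when the prior changes, and that the consequences can be worked out directly.

```python
import numpy as np

F_2X = 4.0                      # assumed forcing for doubled CO2, W/m^2
LAM_MEAN, LAM_SD = -1.3, 0.44   # hypothetical evidence on lambda, W/m^2/K

s = np.linspace(0.5, 20.0, 20_000)   # grid of sensitivities, K
lam = -F_2X / s                       # feedback parameter implied by each S

# Unnormalized likelihood of each S, given Gaussian evidence on lambda
likelihood = np.exp(-0.5 * ((lam - LAM_MEAN) / LAM_SD) ** 2)

# Two explicit priors, both expressed as densities over S:
prior_uniform_lambda = F_2X / s**2    # uniform in lambda, mapped through |d lambda / dS|
prior_uniform_s = np.ones_like(s)     # uniform in S


def posterior_quantile(prior: np.ndarray, q: float) -> float:
    """Return the q-quantile of the normalized posterior on the S grid."""
    post = likelihood * prior
    cdf = np.cumsum(post)
    cdf /= cdf[-1]
    return float(np.interp(q, cdf, s))


for name, prior in [("uniform in lambda", prior_uniform_lambda),
                    ("uniform in S", prior_uniform_s)]:
    lo, med, hi = (posterior_quantile(prior, q) for q in (0.17, 0.5, 0.83))
    print(f"{name:18s}: median {med:.2f} K, likely (17-83%) {lo:.2f}-{hi:.2f} K")
```

The uniform-in-S prior shifts the posterior upwards because, relative to a uniform-in-λ prior, it gives extra weight (proportional to S²) to high sensitivities.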

What sensitivity is being constrained?

There are a number of definitions of climate sensitivity in the literature, varying in what is included and in how easy they are to calculate and to apply. No one definition is perfect for every application, so there is always a need to translate between them. For the sake of practicality, and so as not to preclude increases in scope in climate models, this paper focuses on the Effective Climate Sensitivity (Gregory et al., 2004), based on the 150-year response to an abrupt quadrupling of CO2, which is a little smaller than the Equilibrium Climate Sensitivity in most climate models (Dunne et al., 2020). It can allow for a wider range of feedbacks than the standard Charney sensitivity, but limits the very long-term feedbacks because of its focus on the first 150 years of the response.
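The Gregory et al. (2004) method behind the effective sensitivity can be sketched as a linear regression of top-of-atmosphere imbalance against warming in an abrupt-4xCO2 run, extrapolated to zero imbalance and halved to give a per-doubling value (which assumes forcing is logarithmic in CO2). The "run" here is synthetic, generated from a single-timescale model with hypothetical parameters (F_4X, LAMBDA, TAU) plus random noise; real models show curvature in this relationship, which is one reason effective and equilibrium sensitivity differ.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical "true" parameters for a synthetic abrupt-4xCO2 experiment
F_4X = 8.0      # assumed forcing for quadrupled CO2, W/m^2
LAMBDA = -1.0   # assumed (constant) feedback parameter, W/m^2/K
TAU = 30.0      # assumed e-folding time of surface warming, years

years = np.arange(1, 151)        # 150 years of annual means
t_eq = -F_4X / LAMBDA            # equilibrium warming for 4xCO2 in this toy model
temp = t_eq * (1 - np.exp(-years / TAU)) + rng.normal(0, 0.1, years.size)
imbalance = F_4X + LAMBDA * temp + rng.normal(0, 0.3, years.size)

# Gregory regression: fit N = intercept + slope * T over the 150 years
slope, intercept = np.polyfit(temp, imbalance, 1)
warming_at_zero_imbalance = -intercept / slope    # extrapolate the fit to N = 0
effective_sensitivity = warming_at_zero_imbalance / 2   # 4xCO2 -> 2xCO2, log forcing

print(f"slope {slope:.2f} W/m^2/K, intercept {intercept:.2f} W/m^2")
print(f"effective sensitivity ~ {effective_sensitivity:.2f} K")
```

Because the toy model has a constant λ, the regression recovers roughly the built-in 4 K; in a climate model whose feedbacks weaken over time, the same 150-year fit would understate the eventual equilibrium warming.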

Issues

Each class of constraint has its own issues. For the paleo-climate constraints, the uncertainties relate to the fidelity of the temperature reconstructions and knowledge of the forcings (greenhouse gas levels, ice sheet extent and height, etc). Subtler issues are whether ice sheet forcing has the same impact as greenhouse gas forcing per W/m2 (e.g. Stap et al., 2019), and whether there is an asymmetry between climates colder and warmer than today. For the transient constraints, there are questions about the difference between the pattern of sea surface temperature change over the last century and what we’ll see in the long term, and about the implications of aerosol forcing over the twentieth century, which is still quite uncertain.
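To see why the ice-sheet question matters, consider the equilibrium paleo estimate S = F_2x ΔT / ΔF_eff, where ΔF_eff adds the greenhouse forcing to the ice-sheet forcing scaled by an "efficacy" factor (1 means a W/m2 of ice-sheet forcing acts like a W/m2 of CO2 forcing). The glacial changes below are round illustrative numbers, not the paper's estimates, and the efficacy values are arbitrary choices for illustration.

```python
F_2X = 4.0  # assumed forcing for doubled CO2, W/m^2

# Hypothetical, round-number glacial-minus-preindustrial changes (illustrative only)
DELTA_T = -5.0   # global-mean temperature change, K
DF_GHG = -2.5    # greenhouse-gas forcing, W/m^2
DF_ICE = -3.0    # ice-sheet (albedo plus topography) forcing, W/m^2


def paleo_sensitivity(efficacy: float) -> float:
    """Equilibrium S = F_2x * dT / dF_eff, with the ice-sheet forcing scaled by its efficacy."""
    df_eff = DF_GHG + efficacy * DF_ICE
    return F_2X * DELTA_T / df_eff


for e in (0.8, 1.0, 1.2):
    print(f"ice-sheet efficacy {e:.1f}: S = {paleo_sensitivity(e):.2f} K")
```

A modest change in the assumed efficacy moves the inferred sensitivity by several tenths of a kelvin in this toy setup, which is why the paper treats it as an uncertain parameter rather than fixing it at 1.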

The process-based constraints, sometimes called emergent constraints, face challenges in enumerating all the relevant processes and finding enough variability in the observational records to assess their sensitivity. This is particularly hard for cloud-related feedbacks – the most uncertain part of the sensitivity.

The paper goes through each of these issues in somewhat painful detail, highlighting as it does so areas that could do with further research.

Putting it together

There have been a few earlier papers that tried to blend these three classes of constraints, notably Annan and Hargreaves (2006), but doing so credibly while accounting for possible shared assumptions has been difficult. This paper explicitly looks at the sensitivity of the results to the (subjective) priors, the quality of the evidence, and reasonable estimates of missing information. It also addresses how wrong the assumptions would need to be to have a notable impact on the final results.
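In the simplest case, where the lines of evidence really are independent, combining them amounts to multiplying their likelihoods. The sketch below does that on a grid of feedback values with a uniform prior in λ, using three hypothetical Gaussian constraints (the LINES values are made up for illustration), and reports the 17-83% ("likely") range of S. Shared assumptions would break this independence and call for a joint treatment, which is the harder problem the paper tries to handle.

```python
import numpy as np

F_2X = 4.0  # assumed forcing for doubled CO2, W/m^2

# Hypothetical, independent Gaussian constraints on lambda: (mean, sd) in W/m^2/K
LINES = {
    "process": (-1.3, 0.45),
    "historical": (-1.1, 0.6),
    "paleo": (-1.2, 0.5),
}

# Grid of feedback values; equal weight on every grid point is a uniform prior in lambda
lam = np.linspace(-4.0, -0.2, 20_000)


def likelihood(mean: float, sd: float) -> np.ndarray:
    """Unnormalized Gaussian likelihood of each lambda on the grid."""
    return np.exp(-0.5 * ((lam - mean) / sd) ** 2)


def likely_s_range(weights: np.ndarray) -> tuple:
    """17-83% range of S = -F_2x / lambda implied by posterior weights on lambda.

    On this all-negative grid, S increases monotonically with lambda,
    so lambda quantiles map directly onto S quantiles.
    """
    cdf = np.cumsum(weights)
    cdf = cdf / cdf[-1]
    lam_lo, lam_hi = np.interp([0.17, 0.83], cdf, lam)
    return -F_2X / lam_lo, -F_2X / lam_hi


ranges = {name: likely_s_range(likelihood(m, sd)) for name, (m, sd) in LINES.items()}
combined = np.prod([likelihood(m, sd) for m, sd in LINES.values()], axis=0)
ranges["combined"] = likely_s_range(combined)

for name, (lo, hi) in ranges.items():
    print(f"{name:10s}: likely S {lo:.2f}-{hi:.2f} K")
```

The combined range comes out narrower than any single line, but only because independence was assumed; any shared error between lines would widen it again.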

Bottom line

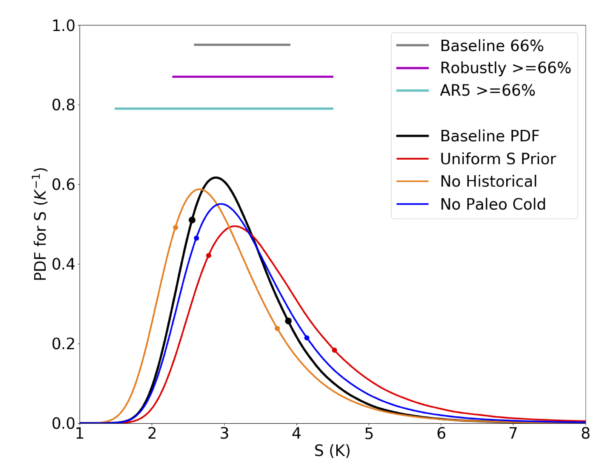

The likely range of sensitivities is 2.3 to 4.5 K, which covers the basic uncertainty (“the Baseline” calculation) plus a number of tests of the robustness (illustrated in Figure 2). This is slightly narrower than the likely range given in IPCC AR4 (2.0-4.5 K), and quite a lot narrower than the range in AR5 (1.5-4.5 K). The wider range in AR5 was related to the lack (at that time) of quantitative explanations for why the constraints built on the historical observations were seemingly lower than those based on the other constraints. In the subsequent years, that mismatch has been resolved through taking account of the different spatial patterns of SST change and the (small) difference related to how aerosols impact the climate differently from greenhouse gases.
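The historical energy-budget argument and the pattern-effect correction can be sketched in a few lines: infer λ_hist = (ΔN - ΔF)/ΔT from the historical period, add an adjustment Δλ for the difference between historical and long-term SST patterns, and compute S = -F_2x/λ. The inputs (DT, DF, DN and the 0.5 W/m2/K adjustment) are illustrative round numbers, not the values used in the paper.

```python
F_2X = 4.0  # assumed forcing for doubled CO2, W/m^2

# Illustrative round numbers for the historical period (not the paper's values)
DT = 1.0    # observed warming, K
DF = 2.2    # change in effective radiative forcing, W/m^2
DN = 0.6    # change in top-of-atmosphere imbalance (mostly ocean heat uptake), W/m^2


def historical_sensitivity(pattern_effect: float) -> float:
    """S from the energy budget N = F + lambda*T, with lambda shifted by a pattern effect."""
    lam_hist = (DN - DF) / DT            # feedback implied by the historical changes
    lam = lam_hist + pattern_effect      # positive adjustment: long-term feedback less stabilizing
    return -F_2X / lam


print(f"no pattern effect:   S = {historical_sensitivity(0.0):.2f} K")
print(f"pattern effect +0.5: S = {historical_sensitivity(0.5):.2f} K")
```

With these toy inputs, the unadjusted historical estimate sits well below the other lines of evidence, and a plausible-sized pattern adjustment closes much of the gap, which is the qualitative story behind the narrower range.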

The range the paper comes up with is not a million miles from what most climate scientists have been saying for years. That a group of experts, trying their hardest to quantify expert judgement, comes up with something close to the expert consensus perhaps isn’t surprising. But, in making that quantification clear and replicable, people with other views (supported by evidence or not) now have the challenge of proving what difference their ideas would make when everything else is taken into account.

One further point. When this assessment started, it was before anyone had seen any of the CMIP6 model results:

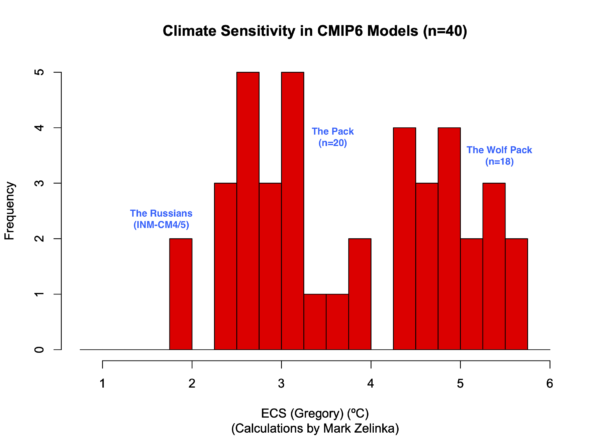

As many have remarked, some (but not all) reputable models have come out with surprisingly high climate sensitivities, and in comparison with the likely range proposed here, about a dozen go beyond 4.5 K. Should they therefore be ruled out completely? No, or at least not yet. There may be special combinations of features that allow these models to match the diverse observations while having such a high sensitivity. That may be unlikely, but they should be tested to see whether or not they do. That means it is very important that these models are used for paleo-climate runs (or at least idealised versions of them) as well as the standard historical simulations.

In the meantime, it’s certainly worth stressing that the spread of sensitivities across the models is not itself a probability function. That the CMIP5 (and CMIP3) models all fell within the assessed range of climate sensitivity is probably best seen as a fortunate coincidence. That the CMIP6 range goes beyond the assessed range merely underscores that. Given too that CMIP6 is ongoing, metrics like the mean and spread of the climate sensitivities across the ensemble are not stable, and should not be used to bracket projections.

The last word?

I should be clear that although (I think) this is the best and most thorough assessment of climate sensitivity to date, I don’t think it is the last word on the subject. During the research on this paper, and the attempts to nail down each element of the uncertainty, there were many points where it was clear that more effort (with models or with data analysis) could be applied (see the paper for details). In particular, we could still do a better job of tying paleo-climate constraints to the other two classes. Additionally, new data will continue to impact the estimates – whether it’s improvements in proxy temperature databases, cloud property measurements, or each new year of historical change. New, more skillful, models will also help, perhaps reducing the structural uncertainty in some of the parameters (though there is no guarantee they will do so).

But this paper should serve as a benchmark for future improvements. As new data comes in, or better understandings of individual processes, the framework set up here can be updated and the consequences seen directly. Instead of claims at the end of papers such as “our results may have implications for constraints on climate sensitivity”, authors will be able to work them out directly; instead of cherry-picking one set of data to produce a conveniently low number, authors will be able to test their assumptions within the framework of all the other constraints – the code for doing so is here. Have at it!

References

- S.C. Sherwood, M.J. Webb, J.D. Annan, K.C. Armour, P.M. Forster, J.C. Hargreaves, G. Hegerl, S.A. Klein, K.D. Marvel, E.J. Rohling, M. Watanabe, T. Andrews, P. Braconnot, C.S. Bretherton, G.L. Foster, Z. Hausfather, A.S. von der Heydt, R. Knutti, T. Mauritsen, J.R. Norris, C. Proistosescu, M. Rugenstein, G.A. Schmidt, K.B. Tokarska, and M.D. Zelinka, "An Assessment of Earth's Climate Sensitivity Using Multiple Lines of Evidence", Reviews of Geophysics, vol. 58, 2020. http://dx.doi.org/10.1029/2019RG000678

- J.M. Gregory, W.J. Ingram, M.A. Palmer, G.S. Jones, P.A. Stott, R.B. Thorpe, J.A. Lowe, T.C. Johns, and K.D. Williams, "A new method for diagnosing radiative forcing and climate sensitivity", Geophysical Research Letters, vol. 31, 2004. http://dx.doi.org/10.1029/2003GL018747

- J.D. Annan, and J.C. Hargreaves, "Using multiple observationally‐based constraints to estimate climate sensitivity", Geophysical Research Letters, vol. 33, 2006. http://dx.doi.org/10.1029/2005GL025259

BPL (50): ‘That the globe is warming, that we’re doing it, and that it’s extremely dangerous, is all quite settled. The rest is details.’

Yes, your still very useful page at http://bartonlevenson.com/ModelsReliable.html explains a surprising amount that is settled and a few things that are or were less certain; I hope it’s OK to share that link a lot online with all comers!

I still don’t see a consensus on blocking events. That detail doesn’t change the points you make about releases of greenhouse gases being extremely dangerous and a case for urgent action. However, warming’s effect on blocking events may be interesting in its own right, and may help distinguish whether changes in heatwaves, droughts, dieback and wildfires are extremely dangerous or merely very, very dangerous.

Thanks to ‘Billy Pilgrim’ for those links, which in a sense support my speculation that extremes and impacts may be higher than expected from ECS alone or from some models (the LA Times interpretation is that the less-extreme mid-latitude winters have stronger polar jets and fewer persistent Rossby waves than the extreme winters, which seems sensible). To summarise from Carbon Brief: little trend has been detected in the observed frequency of blocking patterns, and ‘climate models generally struggle with simulating blocking, and have the tendency to underestimate both the frequency and duration of events’ (such as near Greenland), while the spatial extent of blocks has been modelled to increase with climate change. ‘There’s still a lot of debate in the scientific literature about whether these theories are supported by observations and climate-model experiments.’ Yet as I understand it, Prof Jennifer Francis projects an increase in blocking from basic physics.

So if the ‘wolf pack’ (ECS ≥ 4.5 °C) models aren’t showing any increase in blocking, I’m suggesting they would be neglecting relatively higher Planck negative feedback (perhaps in unscientific terms, an attempt to shed some of the excess heat by concentrating it in hot, dry regions). So (still speculating), those models show unrealistically high ECS, while potentially underestimating impacts of extremes and carbon-cycle feedbacks. I’m not an expert and just wondering what the state of the art is.

I notice these papers and articles related to blocking:

Davini & D’Andrea (2016) https://doi.org/10.1175/JCLI-D-16-0242.1 models still don’t capture blocking

2019 CB article: https://www.carbonbrief.org/qa-how-is-arctic-warming-linked-to-polar-vortext-other-extreme-weather

Mann et al (2017) https://doi.org/10.1038/srep45242 find models do show signs of increased Rossby waves (positive)

Francis & Vavrus (2015) https://doi.org/10.1088/1748-9326/10/1/014005 positive observations

Kretschmer et al (2019) https://doi.org/10.1175/BAMS-D-16-0259.1 positive observations

He and Sheffield (2020) https://doi.org/10.1029/2020GL087924 https://phys.org/news/2020-05-frequency-patterns-drought-heavy-regional.html positive observations and increased impacts

I’m not set on the idea that climate models might be missing something subtle about an increase in blocking patterns, but it could be additional bad news. It might perfectly well be the expected case that blocking isn’t increasing significantly but we still need to be careful of it in summer because of overall warming. Maybe the question needs a similar large interdisciplinary project to resolve. Thanks for your time replying.

15, 16, 20, 35, 37, 38, 49… the original comment was meant for a different thread, and maybe these responses about ‘Does CO2 kill people?’ will be moved elsewhere. I’ve been watching some nice Climate Adam videos comparing Covid and climate change: it would be unreasonable to expect so direct a link between emissions and deaths. All the same, deaths from cold and heat extremes are important even if they’re only one effect of disruption to climate norms. I read somewhere that deaths from cold aren’t declining because people are decade by decade taking fewer precautions against cold; likewise deaths from heat will be reduced in many places by increased adoption of air-conditioning, but that doesn’t help if you’re working outside.

Anyway I’d like to share this article from a couple of weeks ago, a heatwave attribution study, ‘The First Undeniable Climate Change Deaths’:

https://slate.com/technology/2020/07/climate-change-deaths-japan-2018-heat-wave.html

Re 43 B Fagan

“Actual deaths – Lake Nyos in Cameroon – August 26, 1986 – over 1,700 people in one night, and their cattle. Suffocation as heavier-than-air CO2 filled the area one night. https://en.wikipedia.org/wiki/Lake_Nyos_disaster”

The cause of death was actually the volcano, NOT atmospheric CO2.

My question was, “Does CO2 kill people?” You, like most, have wandered away from the question. You have not shown that atmospheric CO2 kills people. My reference to the submarine CO2 levels showed that atmospheric CO2 would have to increase to highly unlikely levels to kill anyone. In addition, levels of 1,000 ppm are beneficial for plant growth.

RE 52 Cedric Knight

“Anyway I’d like to share this article from a couple of weeks ago, a heatwave attribution study, ‘The First Undeniable Climate Change Deaths’:

https://slate.com/technology/2020/07/climate-change-deaths-japan-2018-heat-wave.html”

Reading the article leaves me with a different name for the “attribution game”. It should really be called the “blame game”. I have already seen this used in forest fires and Arctic ice. You already know what to blame, just write it up.

GM 54: levels of 1,000 ppm are beneficial for plant growth.

BPL: Only in a greenhouse, where additional water, fertilizer, etc. can be added ad libitum. Not out in the real world, where extra CO2 raises the temperature, which makes the stomata of leaves leak more water vapor, and ultimately gets to a point where the plant is worse off. Then there are the droughts caused by global warming, the higher precipitation which washes away more soil nutrients, etc. The “CO2 is plant food” meme is just wrong, especially in the light of this:

https://www.scientificamerican.com/article/earth-stopped-getting-greener-20-years-ago/

#55, GM–

Snark is not an actual rebuttal, and your snark isn’t even true; there are certainly examples of attribution studies that have had negative results. (For just one example, BAMS published a study a few years ago showing that climate change had actually *reduced* the probability of a particular flood event in Colorado–which, however, had occurred anyway.)

So, no, attribution studies in general are legitimate inquiry.

But it’s worth highlighting this from Cedric’s link:

That’s anecdotally corroborated by the on-the-ground reporting in the story, such as this:

Or this:

People who lived through the event knew by direct experience that it was as unprecedented as the modeling showed.

Your expressed belief is that CO2 doesn’t–can’t!–kill anybody. Cedric’s reply is a strong and convincing retort giving good reason to think that indirectly it can and does–snark or no snark.

Dominik Lenné writes: “true multistability with badly defined ECS – or enough complexity for a well defined ECS”

I think that, among other things, the transitions during glacial cycles argue for ECS being a “local” number, specific to a particular region of climate space and path-dependent (it matters how you got to that particular region).

So at this point we might do well to look at paleo history for ECS in the Eemian, the Wisconsinan, or even earlier in the Pliocene. But admittedly, the present atmospheric CO2 spike takes us far beyond anything comparable in the paleo record of the last few million years.

sidd

RE 57

With respect to CO2 killing anyone I can use the same example with falling leaves in the autumn. I have not been hurt while sitting under a tree, but if someone dumped several tons of leaves on me I could be killed.

Re 57

“Snark is not an actual rebuttal, and your snark isn’t even true”

You are wrong here. You have not proved your point.

I have already researched my point. There was an attribution study done on the Calgary flood of 2013. They used it as an example in an attribution paper trying to establish that the flooding was due to climate change. However, they did not go back far enough, to the late 1800s and early 1900s, when the stream flow exceeded the 2013 event three different times. So they proved nothing but got a paper printed.

Gerald Machnee,

Look, Dude. Cause of death from the Lake Nyos disaster was suffocation by CO2. I know this. I have written stories on Lake Nyos. I have traveled within 30 miles of Lake Nyos. I have talked to people who lost loved ones at Lake Nyos.

There was no volcanic eruption. There was, rather, a limnic eruption driven by outgassing of CO2 from the deep, CO2 rich bottom layers of the lake. Read the references and don’t be an ass.

RE 37 BA Roger

“Your quote looks more a cut-&-paste from a piece of nonsense posted up on the boghouse door on the rogue planetoid Wattsupia than from the reference cited”

Why do you create fictional responses? What was cut and pasted????

Re 61 Ray Ladbury

You can imply whatever you want, it was not atmospheric CO2 that killed the people, it was an ERUPTION.

From your favorite source: Wikipedia

Eruption and gas cloud

It is not known what triggered the catastrophic outgassing.[5][6][7] Most geologists suspect a landslide, but some believe that a small volcanic eruption may have occurred on the bed of the lake.[8][9] A third possibility is that cool rainwater falling on one side of the lake triggered the overturn. Others still believe there was a small earthquake, but because witnesses did not report feeling any tremors on the morning of the disaster, this hypothesis is unlikely. The event resulted in the supersaturated deep water rapidly mixing with the upper layers of the lake, where the reduced pressure allowed the stored CO2 to effervesce out of solution.[10]

Gerald Machnee @62,

You ask for explanation of my post @37 asking “What was cut and pasted????”. @37 I evidently referred to your post @35 which contains the following:-

You made no explanation as to the origin or the relevance of this “passage”, but the words you present are surely cut-&-pasted, as they are identical to a quote appearing in this pack of nonsense posted on the fake-filled planetoid ruled over by the witless Anthony Willard Watts, who puts his name to that particular pack of nonsense.

The “passage” as it appears under Willard’s authorship is presented as a quote from National Research Council (2007) ‘Emergency and Continuous Exposure Guidance Levels for Selected Submarine Contaminants: Volume 1’ where it appears in Chapter 3 on page 47. That your version of this “passage” is word-for-word that presented by witless Willard (complete with Willard’s wrong page number) is pretty strong evidence as to where you delved to obtain this “passage” which describes the effects of CO2 on fit and healthy grown men with, as witless Willard tells us, “their finger on the nuclear weapons keys.”

Witless Willard presents the “passage” while considering a research paper Satish et al (2012) ‘Is CO2 an Indoor Pollutant? Direct Effects of Low-to-Moderate CO2 Concentrations on Human Decision-Making Performance’ described in a 2012 Op-Ed as:-

This Op-Ed prompts witless Willard to address the question ‘CO2 makes you stupid?’

Witless Willard was reassured by the delusions of the ageing AGW-denier/sub-submariner William Happer and also that “passage” but I would suggest the extensive literature now citing Satish et al (2012) would be today a more authoritative source to answer Willard’s question. (Of course, I think it unlikely that the remarkable level of stupidity exhibited by witless Willard results from exposure to elevated levels of CO2.)

#60, GM–

To quote you, GM, “You are wrong here. You have not proved your point.”

The putative existence of a single flawed paper (or for that matter, more than one) does not invalidate a field of research in general. Particularly so when it’s utterly irrelevant to the validity of my counterexample–a mere changing of subject on your part.

#59, GM–

Really? That’s the best you can do? Let me remind you that there was nothing hypothetical about the deaths and hospitalizations reported–unlike your “several tons of leaves.” (Or are they really horsefeathers?)

Gerald Machnee,

Thank you for providing an absolutely wonderful example of confirmation bias. From the same Wikipedia entry:

“The gas cloud initially rose at nearly 100 kilometres per hour (62 mph) and then, being heavier than air, descended onto nearby villages, displacing all the air and suffocating people and livestock within 25 kilometres (16 mi) of the lake.[3][4]”

They were suffocated by the CO2. That means the CO2 killed them. Have your mommy help you parse the sentences in case there are big words you don’t understand.

Also, 2 sentences down from where you stopped quoting:

“Since carbon dioxide is 1.5 times the density of air, the cloud hugged the ground and moved down the valleys, where there were various villages. The mass was about 50 metres (160 ft) thick, and travelled downward at 20–50 kilometres per hour (12–31 mph). For roughly 23 kilometres (14 mi), the gas cloud was concentrated enough to suffocate many people in their sleep in the villages of Nyos, Kam, Cha, and Subum.[3]”

Dude, did you miss the point where I said that I’d written a published article on this disaster? In actuality, the CO2 in the deep lake water could not have come from an eruption. An eruption is a violent event–it would cause overturn of the deep water and a violent degassing. Instead, the CO2 must accumulate in the deep water slowly over time, bubbling out of vents on the lake floor, dissolving under high pressure in the deep lake water, further increasing the density of the deep lake water. So the sequence of events was as follows:

1) Volcano becomes dormant.

2) Water accumulates in a deep vent, forming a deep volcanic lake.

3) Because the volcano is near the equator, the temperature profile of the lake is very stable, so there is almost no overturning or circulation of water from deep to shallow depths.

4) CO2 outgasses from the vent and dissolves under the high pressure deep in the vent. The carbonic acid that results is denser than fresh water and further stabilizes the density profile of the lake. This goes on for hundreds or even thousands of years.

5) A violent event (a small eruption, a landslide, a small earthquake…) triggers or forces an overturn, driving deep water to shallower depths where the pressure is less.

6) The lower pressure allows the water to degas, forming a foam that is even less dense. The pressure drives the column of foam and water to the surface, resulting in the outgassing of nearly 1 km^3 of CO2.

7) The CO2, being denser than air, displaces the air and flows rapidly (100 km/hr) down the hill.

8) Nearly 2000 people and countless animals suffocate because there is no oxygen; that is, they are killed by the CO2.

This is NOT a volcanic eruption. It is a limnic eruption.

https://en.wikipedia.org/wiki/Limnic_eruption

For comparison, this is what an insect-infested forest looks like from above.

https://tinyurl.com/y2khw4q3

Martin Smith says:

Who cares? If you double from a different point, the increase is the same relative to the starting point.
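The point can be checked with the widely used simplified expression for CO2 forcing, ΔF = 5.35 ln(C/C0) W/m2 (Myhre et al., 1998). Because it is logarithmic, the forcing depends only on the ratio C/C0, so a doubling gives the same forcing whatever the starting concentration. The expression is an approximation, and the baseline concentrations below are arbitrary examples.

```python
import math

ALPHA = 5.35  # W/m^2, coefficient of the simplified logarithmic fit (Myhre et al., 1998)


def co2_forcing(c: float, c0: float) -> float:
    """Approximate CO2 radiative forcing (W/m^2) for a change from c0 to c (same units)."""
    return ALPHA * math.log(c / c0)


for c0 in (180.0, 280.0, 400.0):
    print(f"doubling from {c0:.0f} ppm: {co2_forcing(2 * c0, c0):.2f} W/m^2")
```

Since each doubling adds the same forcing, it makes sense to quote sensitivity "per doubling" rather than per ppm.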