A new paper from Scafetta, and it’s almost as bad as the last one.

Back in March, we outlined how a model-observations comparison paper in GRL by Nicola Scafetta (Scafetta, 2022a) got wrong basically everything that one could get wrong (the uncertainty in the observations, the internal variability in the models, the statistical basis for comparisons – the lot!). Now he’s back with a new paper in a different journal (Scafetta, 2022b) that could be seen as trying to patch the holes in the first one, but although he makes some progress, he also adds some new errors while attempting CPR on his original conclusions.

Observational uncertainty

We pointed out in March that Scafetta had totally neglected observational uncertainty in calculating the observed change in temperature (2011-2021 compared to 1980-1990). Now he has included it, but has made a massive error so that it is roughly a factor of ten too small (though this is progress of a sort!). His error is clear in his Appendix 1, and consists of him using the accuracy of the annual global mean anomaly (around 0.05ºC, cheekily referencing the GISTEMP calculation!) to estimate the uncertainty in the 11-year mean (as $0.05/\sqrt{11} \approx 0.015$ºC). Why is this wrong? Well, the observational time-series has a number of different things going on: in any 11-year period, there will be a particular realisation of the weather (from daily to seasonal variability to ENSO effects etc.). This realisation of the ‘noise’ is not, however, what we are trying to compare the models to (since there is no expectation that the models would be able to match the specific weather trajectory seen in the real world); we are targeting the long-term change. So in the estimate of that long-term change, we need to include the uncertainty arising from the interannual variability (the ‘noise’ in this case).

In formal terms, we are modeling the estimated decadal change in temperature as the sum of a long-term climate change, a change associated with stochastic internal variability, and measurement error:

$$\Delta T_{obs} = \Delta T_{c} + \epsilon + m$$

with $\Delta T_{c}$ assumed to be constant by definition over each decade, and so

$$\sigma^2_{\Delta T_{obs}} = \sigma^2_{\epsilon} + \sigma^2_{m}$$

The $\sigma_{\epsilon}$ can be estimated from the decadal sample and for GISTEMP or ERA5 it’s around 0.05ºC, while $\sigma_{m}$ is much smaller (0.016ºC or so). So the 95% confidence interval on the decadal change due to internal variability is around $\pm 1.96\,\sigma_{\Delta T_{obs}} \approx \pm 0.1$ºC. This can’t just be ignored! There is yet another source of uncertainty: that of the forcings themselves. In particular, we don’t have perfect information about how aerosols have varied over the last few decades, and uncertainty there translates into differences in temperature trends even if climate sensitivity were perfectly known.
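To make the size of the difference concrete, here is a minimal Python sketch (not the code behind this post). It assumes the internal-variability and measurement terms are independent, so their variances simply add, and uses the approximate GISTEMP/ERA5 values quoted above (0.05ºC and 0.016ºC). It contrasts that with the measurement-only number obtained by dividing the 0.05ºC annual accuracy by √11. The function name `decadal_change_ci95` is purely illustrative.

```python
import math

def decadal_change_ci95(sigma_internal: float, sigma_measurement: float) -> float:
    """Half-width of a ~95% interval on a decadal temperature change,
    assuming independent internal-variability and measurement terms
    whose variances add (illustrative sketch)."""
    return 1.96 * math.sqrt(sigma_internal**2 + sigma_measurement**2)

# Measurement-only approach criticised above: the 0.05 C annual
# accuracy scaled to an 11-year mean.
measurement_only = 0.05 / math.sqrt(11)

# Approximate values quoted in the text for GISTEMP/ERA5.
sigma_internal = 0.05
sigma_measurement = 0.016

full = decadal_change_ci95(sigma_internal, sigma_measurement)
print(f"measurement-only 1-sigma: {measurement_only:.3f} C")
print(f"95% half-width incl. internal variability: +/-{full:.2f} C")
```

The point of the sketch is only the relative magnitudes: once internal variability is included, the interval is several times wider than the measurement-only figure.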

Scafetta also has a bit of a discussion of the uncertainty derived from monthly values instead of the annual numbers, but misses the real issue: the large amount of auto-correlation in the residuals of the monthly SAT time-series, which needs to be accounted for when estimating the uncertainty of the mean or trend (since it reduces the effective degrees of freedom). Auto-correlation in the residuals of the annual SAT time-series is small and can mostly be neglected, so the standard formulas can be used for the uncertainty.
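The effect of auto-correlation can be sketched with the common AR(1) approximation for the effective sample size, n_eff = n(1 - r1)/(1 + r1), where r1 is the lag-1 auto-correlation of the residuals. This approximation is our illustrative choice, not necessarily what either paper used, and the r1 values below (0.6 for monthly, 0.1 for annual residuals) are hypothetical numbers chosen only to show the mechanics.

```python
import math

def effective_sample_size(n: int, r1: float) -> float:
    """AR(1) approximation: number of effectively independent samples
    in a series of length n with lag-1 autocorrelation r1."""
    return n * (1.0 - r1) / (1.0 + r1)

def se_of_mean(sigma: float, n: int, r1: float) -> float:
    """Standard error of the mean of n values with residual std sigma,
    inflated for autocorrelation via the effective sample size."""
    return sigma / math.sqrt(effective_sample_size(n, r1))

# Hypothetical illustration for an 11-year window.
monthly_n, monthly_r1 = 11 * 12, 0.6   # assumed strong monthly autocorrelation
annual_n, annual_r1 = 11, 0.1          # assumed weak annual autocorrelation

print(effective_sample_size(monthly_n, monthly_r1))  # 132 months act like ~33
print(effective_sample_size(annual_n, annual_r1))    # 11 years act like ~9
# Ratio of the corrected to the naive standard error (about 2 here).
print(se_of_mean(1.0, monthly_n, monthly_r1) / se_of_mean(1.0, monthly_n, 0.0))
```

With these assumed values, treating 132 monthly residuals as independent would understate the standard error of the mean by about a factor of two, while the annual correction is minor.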

Pointless distractions

For whatever reason, this new paper is padded with a lot of analyses that add nothing to the conclusions (IMO). This isn’t really very important – many papers suffer from this (maybe even some of mine!) – but it gets in the way of seeing what’s really going on. For instance, note the lack of substantive difference between SSPs in Figure 4 for the period 1980 to 2021 – what was the point in doing the same thing for each scenario when nothing depends on it? (Note that the short period 2015-2021 is not long enough to have the differences in emissions make a big dent in the concentrations or, subsequently, the climate).

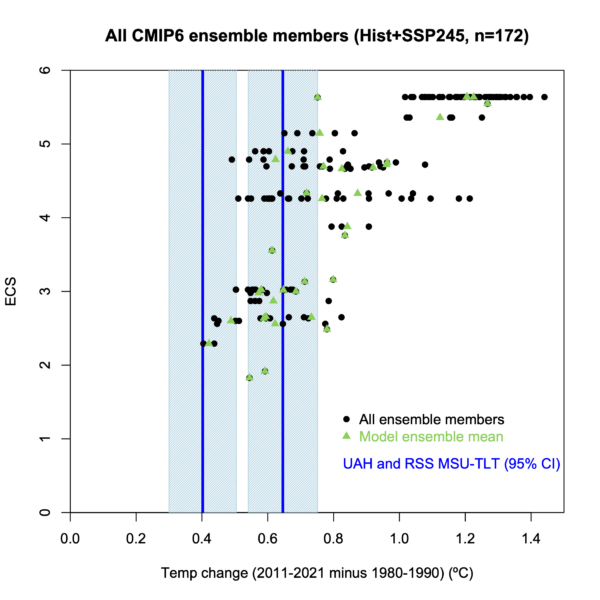

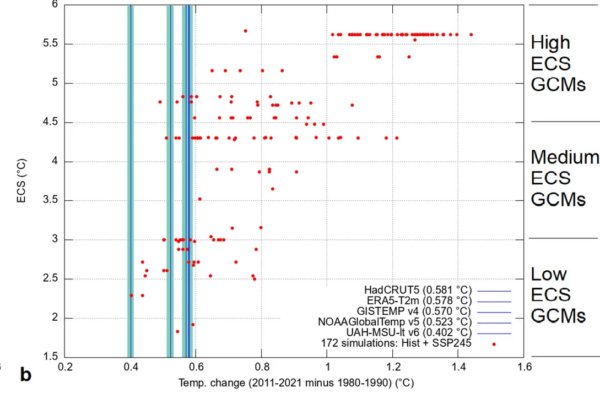

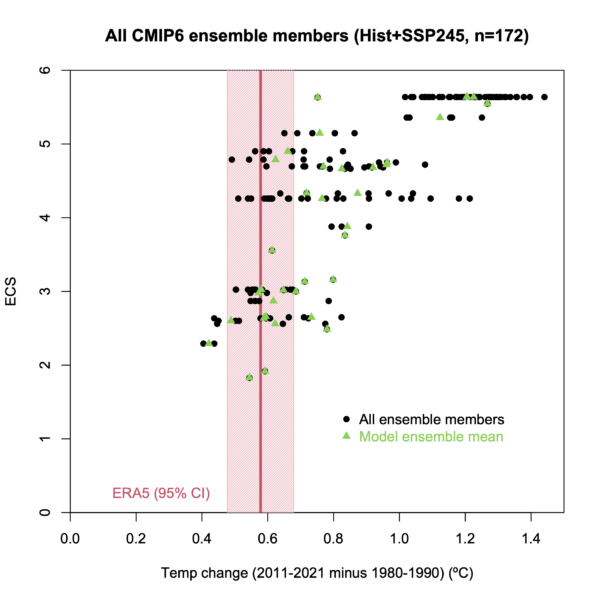

Second, Scafetta takes up a lot of time playing games inspired by the MSU-TLT data, using not both of the versions that exist (from UAH and RSS) but just the one from UAH, which he suggests (on the basis of nothing) is more accurate than any in-situ surface data. My opinion on that probably differs somewhat from his, but the issue is again one of neglected uncertainty. The RSS TLT record warms by 0.65ºC (more than any product in the paper!), compared to just 0.40ºC in the UAH record, and this is not even mentioned, even though on its own it would make just as clear an argument for an underestimate of climate model sensitivity. The neglect of the very real structural uncertainty in the MSU-TLT records is a(nother) blatant thumb on the scale in support of his preferred conclusion. As you can see here, the inclusion of the RSS data would further undermine Scafetta’s argument:

Third, and this is a minor point (though telling), the spatial map of the GISTEMP results (fig. 7c) and associated text is just wrong. Indeed, all the statements related to the sparseness of the GISTEMP product are wrong. The GISTEMP product is also infilled at the poles (using stations within 1200km), and does not have the gaps shown. I think he has used data from a plotting option with the radius of influence reduced to 250km, but this is not the actual GISTEMP product. This affects (at least) what is reported as the GISTEMP global mean data in Table 4 and probably the rest, but I didn’t check every number.

Triage Mirage

Scafetta’s approach, in both the last paper and this one, is to split the models into low, middle, and high climate sensitivity groups. The IPCC ‘best guess’ for ECS is around 3ºC (with a likely range of 2.5 to 4ºC) from various observational constraints, so someone looking to test the IPCC assessment would have made the three groups correspond to ECS < 2.5ºC, ECS between 2.5ºC and 4ºC, and ECS > 4ºC. We suggested this screening in our recent Nature commentary (Hausfather et al, 2022). Scafetta, however, chooses to split the groups at 3ºC and 4.5ºC, with the groups having a mean ECS of 2.5, 3.7 and 5.1ºC respectively, compared to 2.2, 3.2, and 4.9ºC for an ‘IPCC-like’ grouping, in particular pushing the middle group up near the edge of the IPCC ‘likely’ values. (Note that group means depend on model selection, and I’m using the 58 models we used in Hausfather et al (2022), compared to Scafetta’s 38. Note also that the KACE-1-0-G ECS value is wrong: it should be 4.75ºC instead of 4.48ºC, and the model should be in the ‘high ECS’ group. This was something we found in writing our commentary and is in the supplemental data there, but obviously hasn’t propagated fully through the data ecosystem.) The point is to bolster unsupported claims such as “only the low ECS GCM group can be considered sufficiently validated”, which can then be recycled in the contrarian media-sphere.
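How much the choice of cut-offs matters can be illustrated with a small Python helper. This is a hypothetical sketch: the function `ecs_group` and the rule that a value lying exactly on a cut-off goes to the lower group (matching ranges written as 1.5ºC<ECS≤3.0ºC) are our assumptions. It shows how KACE-1-0-G moves between groups depending on which ECS value is used.

```python
from bisect import bisect_left
from typing import Sequence

LABELS = ("low", "medium", "high")
SCAFETTA_CUTS = (3.0, 4.5)   # ºC, cut-offs used by Scafetta
IPCC_LIKE_CUTS = (2.5, 4.0)  # ºC, edges of the IPCC 'likely' range

def ecs_group(ecs: float, cuts: Sequence[float]) -> str:
    """Return 'low', 'medium' or 'high' for a model's ECS (ºC).
    bisect_left keeps a value lying exactly on a cut-off in the lower group."""
    return LABELS[bisect_left(cuts, ecs)]

# KACE-1-0-G: the value used by Scafetta vs the corrected value.
for ecs in (4.48, 4.75):
    print(ecs, ecs_group(ecs, SCAFETTA_CUTS), ecs_group(ecs, IPCC_LIKE_CUTS))
```

Under Scafetta’s cut-offs the outdated 4.48ºC value lands in the middle group, while the corrected 4.75ºC value is ‘high’ under either grouping.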

But even so, it’s clear from Fig. 4 (as it was in our figure in the initial complaint) that the in situ and reanalysis observations are consistent with having been drawn from many of the model ensembles in Scafetta’s medium and even high ECS groups.

Specifically, while on average there is a tendency to higher temperature changes in higher ECS models (as would be expected), the observations (with the exception of UAH-TLT) are clearly compatible with many of the models with ECS>3ºC (though not for ECS>5ºC – the conclusion reached by the IPCC!). Given that the real world is just one realization of a chaotic system, you should not reject a model if the real world falls within the expected ensemble spread. In our note from March, we gave a statistical test that specifically addresses this situation (taken from a similar case discussed in Santer et al (2008)). Unsurprisingly, Scafetta ignores this, since the ERA5 observations are compatible with over 65% of the models for which there are multiple ensemble members.
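A simplified version of this kind of check can be sketched in Python. This is not the exact test from our March note or from Santer et al (2008); it simply asks whether the observed change lies within about two standard deviations of a model’s ensemble mean, where the spread across members stands in for internal variability and an observational error term is added in quadrature. The function name and the member values below are made up for illustration.

```python
import math
from statistics import mean, stdev

def obs_consistent_with_ensemble(members, obs, obs_sigma, z=1.96):
    """True if the observed change lies within ~95% of the spread expected
    for a single realisation of this model (simplified sketch)."""
    if len(members) < 2:
        raise ValueError("need at least two ensemble members to estimate spread")
    spread = stdev(members)  # sample std across members ~ internal variability
    tolerance = z * math.sqrt(spread**2 + obs_sigma**2)
    return abs(obs - mean(members)) <= tolerance

# Hypothetical decadal changes (ºC) from five members of one model.
example_members = [0.70, 0.78, 0.85, 0.74, 0.81]
print(obs_consistent_with_ensemble(example_members, 0.70, 0.016))
print(obs_consistent_with_ensemble(example_members, 0.60, 0.016))
```

The key design point is that the tolerance uses the member spread (what one realisation can do), not the much smaller standard error of the ensemble mean.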

Even real issues are botched

To be clear, there are real issues with the CMIP6 ensemble. Some of the high ECS models (ECS > 5ºC) are incompatible with recent trends, and it does make sense to screen them out (or down-weight them) in near-term transient predictions (Hausfather et al, 2022). There are systematic differences in the Southern Ocean in recent decades between the observed and modeled SST trends. However, the real issues that these mismatches engender are not even mentioned, let alone discussed: Are there issues with the forcings that are artificially boosting warming in some models? Yes (Fasullo et al, 2022). Are there processes missing in all these CMIP6 models that can explain the Southern Ocean discrepancy? Probably (Rye et al, 2020). Is cloud phase in some models being inappropriately tuned? Maybe (Cesana et al, subm). Instead we get a litany of vague insinuations about supposedly ignored urban heat island effects (citing the same three papers multiple times) with a dash of astrology. I mean, really, who reviewed this? (And don’t get me started on the information-free extrapolation of his results to climate policy!)

Bottom line

As was clear in the comment we made on his first paper, the internal variability of the multi-model ensemble and the observational uncertainty in the trend make Scafetta’s preferred conclusion (that we should reject all models with ECS above 3ºC) untenable. Rather than some new ‘advanced’ methodology that better constrains ECS than any previous work, the efforts here are shoddy, derivative and, when examined, actually support the previous conclusions from the IPCC and the papers they relied on. These two papers should become object lessons in how not to compare models with observations, which, ironically, might make them relatively well cited.

References

- N. Scafetta, "Advanced Testing of Low, Medium, and High ECS CMIP6 GCM Simulations Versus ERA5‐T2m", Geophysical Research Letters, vol. 49, 2022. http://dx.doi.org/10.1029/2022GL097716

- N. Scafetta, "CMIP6 GCM ensemble members versus global surface temperatures", Climate Dynamics, vol. 60, pp. 3091-3120, 2022. http://dx.doi.org/10.1007/s00382-022-06493-w

- Z. Hausfather, K. Marvel, G.A. Schmidt, J.W. Nielsen-Gammon, and M. Zelinka, "Climate simulations: recognize the ‘hot model’ problem", Nature, vol. 605, pp. 26-29, 2022. http://dx.doi.org/10.1038/d41586-022-01192-2

- J.T. Fasullo, J. Lamarque, C. Hannay, N. Rosenbloom, S. Tilmes, P. DeRepentigny, A. Jahn, and C. Deser, "Spurious Late Historical‐Era Warming in CESM2 Driven by Prescribed Biomass Burning Emissions", Geophysical Research Letters, vol. 49, 2022. http://dx.doi.org/10.1029/2021GL097420

- C.D. Rye, J. Marshall, M. Kelley, G. Russell, L.S. Nazarenko, Y. Kostov, G.A. Schmidt, and J. Hansen, "Antarctic Glacial Melt as a Driver of Recent Southern Ocean Climate Trends", Geophysical Research Letters, vol. 47, 2020. http://dx.doi.org/10.1029/2019GL086892

The “Astrology” link doesn’t work. Looks like it’s to your intranet: http://10.0.3.248/j.jastp.2010.04.015

[Response: Sorry. Fixed. – gavin]

Cue the North Carolina Denial Chorus and Marching Band.

I just realized that I know Scafetta. He taught my graduate classical mechanics class when I was in grad school. He was ok as a teacher, I got an A in his class, and I was the only one who got an A on the final (he had a tricky question about a gyroscope that I was the only one to figure out). We were aware of his research (or “research”) but it never came up in his class. Some of the other grad students really didn’t like him as a teacher but I and a few other students didn’t think he was that bad.

“Are there processes missing in all these CMIP6 models that can explain the southern ocean discrepancy?”

A simple “hot fix” would be the consideration that there are basically no aviation-induced cirrus over the Southern Ocean. But I’m sure few would like the implications.

“In particular, we don’t have perfect information about how aerosols…”

Well, that is a very interesting point. Looking up AR6, I see aerosols cancel about 1/3 of anthropogenic GHG forcing. As noted above, this question is specifically important for the assessment of climate sensitivity: the more aerosols counter(ed) the warming by GHGs, the higher climate sensitivity might be, and vice versa.

I looked up GISTEMP v4 and simply compared the last 20-year average with the first 20 years, i.e. 2002-2021 vs 1880-1899. The data are split by latitude into 8 bands (90N-64N, 64N-44N, 44N-24N, 24N-EQU, and the same for the SH). From north to south the warming is (in K):

- 90N-64N: 3.028
- 64N-44N: 1.5815
- 44N-24N: 1.0975
- 24N-EQU: 0.798
- EQU-24S: 0.768
- 24S-44S: 0.7615
- 44S-64S: 0.402
- 64S-90S: 0.3135

In other words, we have a very distinct north-south divide: strong polar amplification in the Arctic, none at all at the South Pole. But once we consider aerosols, it gets a little spicy. Aerosols tend not to spread globally; they rather stay close to their origin (unless they get ejected into the stratosphere by a volcano). The negative forcing by aerosols should thus be concentrated in the NH mid-latitudes. And given it amounts to 1/3 of total GHG forcing, it should be pretty strong there.

I think the problem is pretty obvious. Lots of warming where aerosols should be cooling, little warming where they are not. In science we call this a falsification.
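One quick way to put the zonal GISTEMP numbers quoted above in perspective is to weight each band by its share of the Earth’s surface, (sin φ2 - sin φ1)/2 for a band between latitudes φ1 and φ2. The Python sketch below does this with the commenter’s values; it ignores data coverage and is only meant to show how little area the 64N-90N band represents.

```python
import math

# Commenter's GISTEMP v4 zonal warming (K), 2002-2021 minus 1880-1899,
# as (southern edge, northern edge, warming), listed north to south.
BANDS = [
    (64, 90, 3.028),
    (44, 64, 1.5815),
    (24, 44, 1.0975),
    (0, 24, 0.798),
    (-24, 0, 0.768),
    (-44, -24, 0.7615),
    (-64, -44, 0.402),
    (-90, -64, 0.3135),
]

def area_fraction(lat_lo: float, lat_hi: float) -> float:
    """Fraction of a sphere's surface between two latitudes (degrees)."""
    return (math.sin(math.radians(lat_hi)) - math.sin(math.radians(lat_lo))) / 2.0

weights = [area_fraction(lo, hi) for lo, hi, _ in BANDS]
global_mean = sum(w * dt for w, (_, _, dt) in zip(weights, BANDS))

print(f"64N-90N area share: {weights[0]:.3f}")
print(f"area-weighted mean warming: {global_mean:.2f} K")
```

The Arctic band covers only about 5% of the globe, so its large warming contributes roughly as much to the global mean as each of the much larger lower-latitude bands.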

E. Schaffer, No. In science, we call a missive like yours cerebral flatulence. I mean, if only there might be some other difference between North and South…

Hi E. Schaffer,

Have you factored in the following things that might also affect warming at various latitudes? This matters especially since the numbers you show demonstrate that the highest northern latitudes are warming faster than where all that pollution-based aerosol generation is going on in Asia (and where the US and Europe had been emitting prior to clean air laws).

– North pole is a surface-level ocean surrounded by land with several outlets to Atlantic and Pacific, with daylong solar irradiation over dark, open waters increasing every decade

– Most land surface is in the northern hemisphere, and land is warming faster than sea surface and amplifies warming around the Arctic

– There is a circumpolar surface current and wind patterns in the Southern Ocean that affects surface mixing from lower latitudes (and contrasts with landmasses at same latitudes in the NH)

– Antarctica’s average elevation is 2500 meters up atop the ice, with elevation and perpetual ice combining to keep it dry and very cold (and avoids mixing much lower-altitude air and pollutants – again, esp. compared to its polar opposite). Very different albedo from the north, too, under long summer daylight.

What have you falsified?

Putting aside questions about data quality in the polar regions – especially Southern Hemisphere – in the late 1800s, it looks like a decent match for ice/snow albedo feedback. This is quite strong. Ice and snow are extremely good reflectors at visible wavelengths, but also strong radiators in the longwave.

As B Fagan pointed out, replacing floating arctic ice with dark ocean water is quite effective for warming. Meanwhile, cold season snow cover on the northern continents has also decreased. This would be strong warming feedback at 64-44N, less in the mid latitudes. However, there is still winter snow cover in the continental interiors and higher elevations below 44N. The tropics already warmed nearly 0.8C, which is quite a large number when you consider how important tropical temperatures are to global dynamics. The Southern Ocean is hard to warm compared to the large landmasses in the Northern Hemisphere, with virtually no opportunity for ice/albedo feedback.

You haven’t falsified the other processes. You only show that aerosols aren’t the whole story.

Albedo feedback is not that significant. On average, snow has an albedo of only about 50%. This is because a) it is a spectral thing, as snow has low reflectivity in the near IR, and b) it drops with age. Water, on the other hand, is highly reflective at low angles of incidence: between 23.44° and 0°, water reflects 10% to 100% of sunlight.

In the current literature positive lapse rate feedback plays a more important role. As the surface warms, the lapse rate increases (on average), and the higher the lapse rate, the larger the GHE. So goes the logic.

I think both are wrong. Rather, it is convection (if that is the right term) from water to air. It is the same thing we know as a maritime climate, with mild temperatures especially in winter. As Arctic sea ice declines, the winters become much warmer. Not the summers, though.

With regard to the falsification: it is not I who falsify; the data do.

E. Schaffer,

The GISTEMP zonal mean page provides analysis of period trends by latitude and this shows the warming is a northern high latitude thing but only in the winter, centred on Christmas. So if we can get Santa Claus to reduce his carbon footprint, it will be ‘problem sorted.’

He is an old, white man... not a chance.

I haven’t read this paper yet, but speaking of aerosols and climate impacts – a paper that looks at localized differences in climate and social cost of that kind of pollution.

“Geographically resolved social cost of anthropogenic emissions accounting for both direct and climate-mediated effects”

SCIENCE ADVANCES 23 Sep 2022

DOI: 10.1126/sciadv.abn7307

Here’s from the start of their Results section: “We find a large divergence in impacts resulting from identical amounts of aerosols emitted from each source region that begins with strongly differing physical system responses under both air quality and climate conditions.”

And a brief description of the work courtesy of National Science Foundation

https://beta.nsf.gov/news/air-pollution-can-amplify-effects-climate-change

Glad to see that you finally managed to stop laughing, Gavin…

Mr. Schmidt,

Scafetta again

I have commented on him before

I worked for a while for an anthroposophist (peace be with them) at the university, but that one was one-eyed, unreliable and very large.

I got to know Theodor Schwenk’s “Das sensible Chaos” and was able to design fabulous capillary-wave and hydrodynamic experiments, with colours, for the Anthroposophic Society in the Newtonian and the van der Waals fields.

Scafetta relates to this, but he cannot quite convince and perform.

Theodor Schwenk, the chief anthroposophic hydrologist, was better.

After all his very convincing experiments, he published that what they showed, and hardly anything else, was water!

Scafetta hardly found water, so he had to sell the alternatives, such as the barycenter and Saturn: the alternatives with error bars and confidence. Smile, smile.

And there you have him.

Scafetta’s methods and sales pitches are hardly applicable except for professional cheaters.

Golly!! The eagerness of poor deluded Nicola Scafetta to conclude that:-

This suggests he doesn’t grasp the difference between climatological analysis and spouting bullshit. And just in case the prospect of +2ºC AGW by 2050 is seen as alarming (and it should be), he gathers together other purveyors of bullshit to suggest it won’t be so bad: folk who swear blind they have found that mythical archipelago called the Urban Heat Islands (Connolly et al; D’Aleo, Scafetta himself & that old salty seadog Watts, whose grand works have gained publication within Heartland Institute propaganda), or those who have also shown ECS to be very very low (Lewis and Curry, Dickie Lindzen and Choi, Scafetta himself again, Stefani & Wijngaarden and Happer), or who have grand theories of long-term wobbles in global climate (Scafetta himself yet again & Wyatt and Curry with Wyatt’s Unified Wobble Theory).

Mind, until the Gentlemen Who Prefer Fantasy give Scafetta (2022) their blessing, it cannot be properly graded as AAA*-rated bullshit.

You’ve really hit the nail on the head here MA Rodger….

You claim 2º by 2050 should be alarming. We are currently about 1.2º up on late 19th century temperatures. What can we see that has happened so far? Global cyclones down. No trend in floods or droughts. Weather-related deaths plummet (mostly due to forecasting and adaptation). Sea level up a scary 25cm. Land area increased. Human life expectancy more than doubled. Crop yields soar.

Why should the remaining 0.8º be a problem when the 1.2º hasn’t been? Or is it that the 1880 temp wasn’t as perfect as we thought and it is actually the turn of the century that was ideal? Or the 1950s? Maybe Pleasantville was a documentary

Run away, the sky is falling

Spoken like a man who has never encountered a nonlinear system in his life…and also doesn’t know how to read or interpret statistics.

Perhaps, Ray, you can point to something written about how global cyclones (for example) reduce for the first 1.2º rise and then increase with the next 0.8º rise.

If there is nothing written already, maybe you could jot down your own explanation.

Number of storms is only one metric–and climate models do not in fact indicate that there will be more storms. They indicate increased rainfall per storm and perhaps some intensification, the former of which is verified and the latter still under investigation.

Why is it you denialists can’t handle anything more complicated than two variables at a time?

I wasn’t referring to only one variable. All variables typically used as a measure of cyclone impact show no trend or a reduction: number of storms, number of 3+ storms, ACE, number of storm days, etc.

As for “increased rainfall per storm”… It’s odd that you managed to even find that as a measure, let alone find that the increase is “verified”

Seems denialists are on both sides of the debate. The difference is that I referenced a number of variables and you found one obscure one

Ray Ladbury,

You presume the denialist Keith Woolland means “number of storms” when he talks of “how global cyclones (for example) reduce” and “global cyclones down.” While the number of storms has reduced (as predicted by the climatology), it may be that the denialist is talking of something else. Perhaps he talks of the number of tropical cyclones that never make landfall (which is “down”) or the distance to shore of cyclones at maximum intensity (which is “down”) or the distance to the poles of global cyclone activity (which is also “down”). And I’m sure there are other measures of global cyclones that are “down.”

Keith, that is not hard to find. The number of storms dropping double-digit precipitation totals has definitely increased. The people of Puerto Rico support me on this one.

The main problem with propagandist/denier “stats” with extreme events is that they know nothing of–or perhaps they know much and are abusing their knowledge of–extreme event stats.

To look for “trends” in individual extreme events using extreme events only, one must wait for very long periods of time. There are about 3 major hurricanes per year anywhere in the Atlantic, which works out to ~30 data points per decade. And of these three, very few make landfall in the US in any one year. For any stat to pick up a “trend” with any sort of actual statistical power is going to take a very long time. “Trends” in US landfalls will take far longer to measure directly, since the expected number in any one year is ~0.6 (~6 per decade).

Propagandists/deniers absolutely LOVE this sort of setup–i.e., lack of any statistical power–to “prove” lack of change. As well, we see it in their invocation of the “lack” of all sorts of extreme event trends.

Science and actuaries have worked out numerous ways of dealing with extreme events. Propagandists/deniers pretty much always completely skip over them for obvious reasons.
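The statistical-power point can be illustrated with a back-of-the-envelope Poisson model. This is an assumption for illustration: events are treated as independent with a constant annual rate, and the helper name is hypothetical. The relative 1-sigma sampling noise on a count with expected value N is 1/√N, so with ~0.6 US landfalls per year even several decades of data leave a large uncertainty.

```python
import math

def relative_count_uncertainty(rate_per_year: float, years: float) -> float:
    """Relative 1-sigma sampling noise of a Poisson event count:
    sqrt(N)/N = 1/sqrt(N), with expected count N = rate * years."""
    expected = rate_per_year * years
    return 1.0 / math.sqrt(expected)

# ~0.6 US major-hurricane landfalls per year (figure from the comment above).
for years in (10, 30, 50):
    print(years, round(relative_count_uncertainty(0.6, years), 2))
```

Under this simple model, a decade of landfalls carries roughly ±40% sampling noise, so a modest real change in the underlying rate could easily go undetected for decades.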

Keith Woolard said “All variable typically used as a measure for cyclone impact show no trend or a reduction. Number of storms, number of 3+ storms, ACE, number of storm days etc etc…… ”

He says this without citing any studies. Several variables associated with hurricanes have in fact changed over about the last 50 years, based on empirical evidence. There are plenty of links to published studies embedded in the following commentary. He will probably wave his arms about them, but until he publishes his own studies it’s just arm waving.

“Climate change is worsening hurricane impacts in the United States by increasing the intensity and decreasing the speed at which they travel…….Over the 39-year period from 1979-2017, the number of major hurricanes has increased while the number of smaller hurricanes has decreased….Warmer sea temperatures also cause wetter hurricanes, with 10-15 percent more precipitation from storms projected. Recent storms such as Hurricane Harvey in 2017 (which dropped more than 60 inches in some locations), Florence in 2018 (with over 35 inches) and Imelda in 2019 (44 inches) demonstrate the devastating floods that can be triggered by these high-rain hurricanes…”

“Sea level rise increases the risk of coastal flooding and has intensified the impact of several recent storms. A study of Hurricane Katrina estimated that higher sea levels led to flood elevations 15-60 percent higher than climate conditions in 1900. A study of Hurricane Sandy estimated that sea levels at the time increased the likely of flooding by three times and that additional rising will make severe flooding four times more likely in the future….”

“Changes in the atmosphere, like the warming of the Arctic, may be contributing to other trends seen in the hurricane record. Hurricanes today travel more slowly than they previously did. Though the mechanism that is causing this slowdown is still debated, it is clear that storms are “stalling” and subjecting coastal regions to higher total rainfall and longer periods of high winds and storm surge. This has increased the destruction caused by recent storms in the United States…..The warming of mid-latitudes may be changing the pattern of tropical storms, leading to more storms occurring at higher latitudes. A northward shift in the location at which storms reach their peak intensity has been observed in the Pacific, but not in the North Atlantic, where hurricanes that make landfall in the Gulf and East Coast are created. This shift could put much more lives and property at risk, however more research is required to better understand how hurricane tracks might change….”

https://www.c2es.org/content/hurricanes-and-climate-change/#:~:text=Based%20on%20modeling%2C%20the%20National,more%20precipitation%20from%20storms%20projected.

Stubborn cranks like Woolard. This website sure has a few.

Turns out that it’s not true that all indicators for tropical cyclones are down globally. Per Klotzbach et al (2022), there are at least three that are up:

They do allow, however, that the first and last of those three are likely not due to climatic factors:

Not so for the second, however:

And while we’re considering attribution, they also say that:

So, two possibilities there: either this is natural variability, or (as is still AFAICT a possibility under investigation) it’s a systemic change in ENSO characteristics due to warming. In the former case, we’ve been lucky in recent years; in the latter, AGW is helping out Mr. Woolard’s Australia (and other western Pacific nations) at the expense of those of us living on or near the east coast of North America.

Keith Woolland,

The idea of restricting AGW to significantly less than +2ºC is because climatology shows the prospect of greater warming is something that will be very damaging for human civilization. Scafetta’s “projected warming is expected to be around 2ºC or less even for the worst greenhouse gas emission scenarios” is probably based on his Fig 9, which assumes low ECS (1.5ºC<ECS≤3.0ºC). The IPCC scenarios include warming by 2050 averaging +2.4ºC (within the likely range +1.9ºC to +3.0ºC) under SSP5–8.5, but this is a scenario others see as being unlikely (although in expressing this situation Scafetta (2022) makes worrying mistakes).

But should we be alarmed by +2ºC AGW by 2050?

The IPCC AR6 shows warming for the period 2041-60 averaging +2.1ºC for SSP3–7.0, a scenario with little mitigation, so something akin to Scafetta's proposals. That's only an additional +0.9ºC by 2050. But temperatures continue to rise, with AGW reaching +3.9ºC by 2100. Of course, that is the central 2100 value for that scenario. The likely range runs +3ºC to +5ºC.

Keith,

I’ve poisoned this body for decades. The results aren’t dire: I cut easily. I heal slower. My nails are cupping and ridging. I get pains, but generally not too bad. If I extrapolate the pain and whatnot linearly my life won’t be impacted enough to negate the fun of inebriation before I die. So….

Dr. Woodward, my personal physician, do you recommend that I resume drinking as much as I used to? Or maybe that I stop drinking at all?

Ignoring the symptoms of impending systemic collapse even as a nice chunk of Florida is erased…

Did ya hear about the reinsurance industry’s ongoing discussion about whether to exit the US gulf coast?

No trend in floods or drought? (Tell that to the people of Bangladesh.) Life expectancy up? (Like that was a climate-dependent/related change? LOL!) Crop yields soar? (Sure, as long as we keep destroying Mississippi basin life with exponentially increasing chemicals!) Note: the “Green Revolution” failed. Sea level rise not a worry? (Tell that to city planners in Miami.) What has this author been sedating himself with?

Peter,

Your answer typifies the anti-science attitude of the vast majority of people on this site.

And the “Bangladesh floods” is the poster child for this attitude.

BANGLADESH FLOODS ARE NOT GETTING WORSE!

Any search of the literature will show this. As usual you are confusing the current trend with what the models say will happen. My original point exactly….. things are getting better but they are about to get worse.

Life expectancy is increasing. – you are right, it probably doesn’t have much to do with climate, but it certainly has a lot to do with access to cheap, reliable transport and energy

And crop yields have improved due to many factors (including the CO2 increase), but my point is that they are improving. Under your “sky is falling” attitude, they’re rising, but that is all just about to change and the trend is going to reverse any minute now.

Perhaps you should read the actual science rather than newspapers and evangelists.

Let me just preempt Ray’s response here. I have said floods in Bangladesh are not getting worse, but I feel confident that Ray will show that despite all the papers confirming this, if you look at floods that occur on prime numbered years, you will find the water is wetter nowadays and that is why we should all be panicking

Actually, the situation wrt Bangladesh is complicated. Flooding has always occurred there. In fact, some level of flooding is essential to the health of the country’s agriculture. And there are different kinds of floods that occur–flooding of the three major rivers, impulse flooding that causes landslides…

There are also competing trends:

1) Decreasing snowpack in the Himalayan glaciers means lower river levels and groundwater levels. This is not entirely good.

2) Increased impulsive rain events

You have to do careful analysis to disentangle all of these effects. I don’t have to ask Keith whether he’s done such analysis. I already know the answer is in the negative. Here is one pretty good analysis:

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4344662/

It shows pretty clearly that what used to be a 100-year event now occurs more often than once in 10 years, and that the events are lasting longer as well.

Couple this with demographic trends and the potential for human misery should concern anyone with a heart.
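A quick way to see what that shift in return period means, assuming (as the usual textbook reading of return periods does, though the linked paper may treat it differently) that years are independent and the annual exceedance probability is fixed: a "100-year" event has an annual probability of 0.01, while one occurring about "once in 10 years" has roughly 0.1. The helper name below is hypothetical; it computes the chance of at least one such event over a 30-year span.

```python
def prob_at_least_one(annual_prob: float, years: int) -> float:
    """Probability of at least one exceedance in `years` years.

    Assumes independent years and a constant annual probability,
    a simplification that real flood statistics need not satisfy.
    """
    if not 0.0 <= annual_prob <= 1.0:
        raise ValueError("annual_prob must be a probability")
    return 1.0 - (1.0 - annual_prob) ** years


if __name__ == "__main__":
    # Compare the old "100-year" event with one now recurring about once a decade.
    for label, p in [("1-in-100-year", 0.01), ("1-in-10-year", 0.10)]:
        print(f"{label}: {prob_at_least_one(p, 30):.2f} chance over 30 years")
```

Under those assumptions, the chance of seeing the event at least once in 30 years rises from about 0.26 to about 0.96.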

@ Ladbury

The glaciers are an important issue, and very few people are acquainted with them.

The monsoon rains, not the melting of Himalayan glaciers, are causing the Bangladesh floods.

We have seen unqualified, rabid political arguments in the climate dispute that the disappearance of the Himalayan glaciers will mean they will have no water.

Without high mountain glaciers that store water and may dampen the rushing flow, you will have to build large artificial dams, which are expensive and quite dangerous if they break.

But glacier storage may also be quite dangerous.

Have you heard of http://www.jøkullaup ?

Carbomontanus,

If the monsoons come early, when glacial melt is still swelling the three big rivers in Bangladesh, then the flooding will be a lot worse. This will be especially true if there is anomalously high melting in that year.

Ladbury:

What you are arguing about is the springtime flood, which is much more water than glacial melt.

The large quantities of winter snow over wide areas of the upper Himalaya and Assam province that melt and rush down the Brahmaputra each spring are not glacial melt.

Serious glacial melt happens when you have maximum blue ice melting and running off in August, later in the summer, under heavy, warm monsoon rains.

That situation is well known nowadays in the Alps, in western Norway, in Iceland and even in Greenland, where some years ago there was warm late-summer rain with blue ice appearing up to the very top of the glacier.

But the main area of very high precipitation for the Brahmaputra, Ganges and Indus rivers is not glaciated. It is the upland Himalaya, still with bushes and tigers, which may only have winter snow delivering springtime meltwater floods.

We have the western European monsoon with glaciers here, and hydroelectric industries and road and river systems that have to keep it under control. The main volumes of creek and river flow come from rain and snow on bare rock, bush and moss-covered land, and hardly from those tiny melting glaciers.

Retreating glaciers give off only a small part of the river water.

Rain and snow in the Himalaya and Tibet, the Alps, Iceland and Norway will come down in any case, but not via glaciers, because there are not enough glaciers for all that. Greenland may represent a different situation: its ice catches almost all of Greenland’s total annual precipitation and delivers it partly as icebergs to the sea.

Or to give it this way Dr. Ladbury,

that it is glacial melt is vulgar superstition and easy political propaganda.

Glacial melt it is not. Proof: otherwise all those tiny glaciers would have disappeared years ago already.

It is rather annual winter snowmelt, as always, in addition to monsoon rain, more or less, as always.

That annual winter snow comes and goes very regularly and in huge quantities. The tiny glaciers in the same landscape are obviously more permanent, although they are vanishing more and more.

Moral: you should rather learn to consider the mass budget of it.

@ all and everyone

http://www.Frühlingsrauschen ... Vårflom (spring flood) ...

Chr. Sinding, Norway

Your problem is that of the Faraday temperatures, that are silly and confusing.

Zero Celsius, or rather Linnaeus, is what it is about in the climate.

Yes Ray, I had read that paper. Is this an appropriate paraphrase?

We took all the data and plotted out the trends for the min, max and three quartiles, and clearly the major flood events were decreasing, and that wouldn’t be acceptable. On top of that, minimum levels were also increasing. So we then threw out a randomly chosen 40-year chunk from the middle of the dataset, with no explanation as to why we chose that amount, and compared the first 30 years with the last 30 years to get the result that would fit the perception of “current observations of extreme events having become more extreme”.

They even highlight their data-selection lie by comparing with the other key paper I read (Mirza): they say both teams found a decrease in major flood events, but then talk about the first half and the second half of the century when in fact they analysed no such thing.

I’d have a question for Scafetta, since he suggests the following: “However, adaptation policies are much more affordable than mitigation ones”. The question is how many of those cheaper adaptation policies we can buy in bulk, so the patches can be stacked up sequentially in place. I’d love to see his numbers comparing the two approaches through 2100.

Elevating a stretch of coastal highway, for example, really pushes the concept of “affordable”, and repeating that “adaptation” every few decades won’t be cheaper each time. Sewer lines are gravity fed, so sewage treatment plants for cities are typically at the lowest local elevation. Raising all that is major civil engineering as water tables move up, and people tend to expect even a raised sewer line to stay underground. Of course, in Scafetta’s world, major civil engineering projects are quick, cheap and fun.

Mitigation means all future fuel purchases are avoided once the replacement is bought. Going back to that highway he’d keep re-raising: how much avoided cost would accrue over three decades if you assume vehicles are electrified and people don’t buy gasoline, diesel and motor oil anymore, especially when their electricity comes from non-fossil sources too?

But I love that he feels “the risks associated with possible future climate change should not be overestimated” – because as we all know, if science is “uncertain”, then Nature automatically favors the outcome most beneficial to modern industrialized society. Because the uncertainty monster loves people, I guess.

I suspect he would suggest that the Bangladeshis and the Pakistanis grow gills.

And if that happy outcome were the result of genetic engineering, someone might turn a handsome profit. The miracle of economic growth!

There you go, considering Others again. This is kind of like an IRA. As long as the First World is comfy enough until 2050, the old white men’s climate IRA is fully funded.

Can you think of any reason other than pure disdain for those who follow to use 2050 as an absolute end point? (Which he does by going into policy recommendations.)

I can: Fundamentalists get off on End Times porn. Kinda interesting how religious prophecy actually does work. No deity needed.

(And yes, it’s me. My new friends in Cali have started calling me BDR.)

Gavin or anyone else,

Do you have any comments on Nic Lewis’ most recent paper, which he claims corrects the IPCC AR6 estimates from 3.26 K ECS to 2.16 K? https://link.springer.com/article/10.1007/s00382-022-06468-x#Sec12

Thanks

[Response: Yes… but with so many authors, organizing a response is a process. Clearing up what are real mistakes, straightforward updates, matters of opinion, and/or unjustified or biased choices, and what the impacts of these are, takes a bit of time. So, yes, there will be something, but not at the speed of blog. – gavin ]

Philip Meyer,

I don’t think Lewis corrects the IPCC; rather, Lewis evaluates Sherwood et al. (2020), An Assessment of Earth’s Climate Sensitivity Using Multiple Lines of Evidence. Lewis concludes that “This sensitivity (of the derivation of ECS) to the assumptions employed implies that climate sensitivity remains difficult to ascertain, and that values between 1.5 °C and 2 °C are quite plausible.”

IPCC AR6 Sec 7.5 concludes saying “Based on multiple lines of evidence the best estimate of ECS is 3°C, the likely range is 2.5°C to 4°C, and the very likely range is 2°C to 5°C. It is virtually certain that ECS is larger than 1.5°C.”

So the IPCC is saying ECS in the range 1.5°C to 2°C is “very unlikely”, while Lewis is saying it is “quite plausible”, which in my book means “a little bit likely.” So Lewis and the IPCC are not a million miles apart (which is a big improvement on past positions, when Lewis, addressing a Parliamentary committee, was calling the IPCC a bunch of liars).

A tornado hitting Wichita Falls (TX) on Aug 4, 2023 at 5:00pm and not at any other time that month is “plausible”. However, only a complete fool would bet on it.

To all and everyone

Gavin Schmidt’s corrections to Scafetta’s astrology address were important.

Through them, I could recall and recognize details about Scafetta that I knew from before, and further judge whether he is worth following for professional reasons related to geophysics, which he is not.

But on the other hand, it is quite important for us also to have some knowledge of and experience with cheating quackery and popular pseudosciences: their ideas, arguments and style, and their possible connections, sources and history. And in that respect, Scafetta is quite interesting.

I can also see it from the side of popular anthroposophy, New Age, hydro- and aerodynamic Pythagoreanism, and musical acoustics.

My judgement and evaluation of his contributions in relation to that is rather negative. As I wrote above, the contributions of a Theodor Schwenk may at least be useful for fun and interesting experiments and effects, and for good art. That aspect and those horizons are, as far as I can see, rather lacking in Scafetta. However luna-tic, Jovial, Saturnine and periodic it may be, it is not astronomy, and it is no Harmonices Mundi, no practical, physical, musical Pythagoreanism.

It will rather inhibit and contaminate such practical and fruitful interests.

If you wish to design a fun, impressive fountain, garden creek or aquarium, then Schwenk’s works are practical and useful. But Scafetta is not. One can have fun at the beach and in the snow with Schwenk, but not with Scafetta.

Scafetta hardly discusses Nature.

I have a suggestion. Why don’t you both put your money where your mouths are? Each of you purchase the same computational machine. Then each build a climate model. Collect data from the same collection points. And start making predictions. Let outsiders request predictions, and see who’s right. I’ll put $1000 on Scafetta making Schmidt eat his yap yippity yap.

As they say about fools and their money….

THEY say a lot of things. They say oil is a fossil fuel. Only fossil fuel I know of that can replenish itself in decades,

TP: They say oil is a fossil fuel. Only fossil fuel I know of that can replenish itself in decades,

BPL: Except it can’t, which is why oil companies have to consistently dig deeper and deeper in poorer and poorer sites over time, and turn to sources like shale and tar sands. Don’t spread lies.

Uh, Tyler, do you have the faintest idea of the kind of “machine” required to run a fully-coupled GCM these days?

Your proposed wager suggests you’re the guy with the “yap yippity yap.”

I suspect any machine capable of FEA or Monte Carlo would suffice. I’m one private citizen. A few wagers and they’ll be fine. I’m not benefiting, except for the possibility of crisis-dependent academics shutting their traps.

Your “suspicions” are not based in fact. It takes rather significant supercomputers to run real climate models.

Bwaaaaahaaaaahaaaaa! Tyler, when are you taking your act to a comedy club?

-v/\

o|_O

—

You’re too late for that train, Tyler. There is already a well-established history of climate bets, with its attendant record of wins and losses.

https://www.realclimate.org/index.php/archives/2021/02/dont-climate-bet-against-the-house/

https://skepticalscience.com/impossible-to-lose.html

Thanks, I appreciate the sincerity and links. But I differ in conclusion. There have been some bets, and some people don’t want to pay. Hilariously, for people claiming to predict the dire fate of humanity from a blip of recording time on a changing measuring stick, they sure couldn’t predict that the money goes up first or you may not see it.

Further, the vagueness of “it’s gonna be warmer” isn’t gonna cut it. There is a constant claim, as a foregone conclusion, that the sky is falling and will fall. That’s come and gone at least half a dozen times. No one’s paid up for those.

What amazes me is how cocky and snide “Real Climate Scientists” (whatever those are) remain even though they’re wrong daily. I mean, its called climate change now because they can’t call it global warming.

It’s warmer. Lol. I want pinpoint. Flip a coin 6 times, you might get heads six times. Flip it a thousand times, and it’s gonna come out about 50/50.

All the while real dangers and concerns are metastisizing for lives of people, and are be accelerated by academic snake oil salesmen.

Tyler Peterson: “It’s warmer. Lol. I want pinpoint.”

James Hansen, Makiko Sato and Reto Ruedy wrote in their August Temperature Update, dated Sep 22:

See also Fig. 3: Global surface temperature relative to 1880-1920 mean.

http://www.columbia.edu/~jeh1/mailings/2022/AugustTemperatureUpdate.22September2022.pdf

Data will determine by early:

• 2023 if the first ‘prediction’ – “approximately a dead heat with 2017” – is close to the mark (or not);

• 2024 if the second ‘prediction’ – “2023 temperature should be higher than in 2022, rivaling the warmest years” – is close to the mark (or not);

• 2025 if the third ‘prediction’ – “2024 is likely to be off the chart as the warmest year on record” – is close to the mark (or not).

Not long to wait for confirmation!

So does that mean that if 2024 is not “off the chart as the warmest year on record”, then we can dispense with this people-killing mega-experiment in social engineering and go back to providing the world’s poor with what the rest of us have taken for granted over the last 100 years?

Will talk to you all again in 26 months

They made the mistake of presuming denialists were gentlemen. It is not a mistake any of us will make twice.

TP: its called climate change now because they can’t call it global warming.

BPL: Global warming is a type of climate change, TP. The IPCC, founded in 1988, is the Intergovernmental Panel on Climate Change. Nobody changed anything.

TP: All the while real dangers and concerns are metastisizing [sic] for lives of people, and are be accelerated by academic snake oil salesmen.

BPL: Don’t spread lies.

I’ll see that action, rube!

Mr. Schmidt

Somehow I feel that discussing and talking about Scafetta behind his back is immoral and vulgar, rather mob-like and proletarian, maybe “Norwegian” so to speak.

We must also let the patient have his say; that is the academic and psychoanalytical way.

If Scafetta is a plague and a disease, a pollution of the climate, then we can discuss him (or it) on the level of coal smoke and fluorocarbons, which are our own guilt and blame, and we will have to correct our lifestyle.

But if he happens to be alive, then call him here for examination. Take him to court, in person and alive, for scrutiny, the way we want Donald Trump, Vladimir Putin et cetera served, with the full respect and rights secured by the UN Universal Declaration of Human Rights, articles 2 through 15 as far as I can see, and signed by The King,

…along with the FAWC (Farm Animal Welfare Council), all paragraphs but especially § 4: give them their rights in everything as far as possible. That applies also to a Nicola Scafetta.

Do you anticipate commenting on Scafetta’s recent (18 Sep 22) paper

“CMIP6 GCM ensemble members versus global surface temperatures” ?

https://link.springer.com/article/10.1007/s00382-022-06493-w

tx

“Are there processes missing in all these CMIP6 models that can explain the southern ocean discrepancy? Probably. (Rye et al, 2020); Is cloud phase in some models being inappropriately tuned? Maybe (Cesana et al, subm). Instead we get a litany of vague insinuations about supposedly ignored urban heat island effects (citing the same three papers multiple times) with a dash of astrology. I mean, really, who reviewed this? (And don’t get me started on the information-free extrapolation of his results to climate policy!).”

Gavin Schmidt carries on about his ‘models’ while in the real world this is what is happening.

“Amid a record hot summer in large parts of the Northern Hemisphere, beset by devastating fires, floods and hurricanes, Antarctica was mired in a deep, deep freeze. That’s typically the case during the southernmost continent’s winter months, but 2021 was different.

The chill was exceptional, even for the coldest location on the planet.

The average temperature at the Amundsen–Scott South Pole Station between April and September, a frigid minus-78 degrees (minus-61 Celsius), was the coldest on record, dating back to 1957. This was 4.5 degrees lower than the most recent 30-year average at this remote station, which is operated by United States Antarctic Program and administered by the National Science Foundation.

https://www.washingtonpost.com/weather/2021/10/01/south-pole-coldest-winter-record/

A record cold temperature in Antarctica has nothing to do with what you quoted, but coherence doesn’t matter as long as you can say it’s cold somewhere. It’s also best to imply the point (what global warming?) rather than openly completing the thought, because saying it directly would be more obviously asinine. So, well done there.

Of course, weather is not climate, and there are record-breaking cold events around the world every year. But the record-breaking hot events tend to outweigh the cold ones by about 5 to 1 on average.

Here’s a pile of statistics on that over the last 20 years. You could go for gold and quote-mine all the cold record-breakers and ignore the far greater number of hot ones. Good luck!

https://www.mherrera.org/records.htm

https://www.mherrera.org/records1.htm

https://www.mherrera.org/records2.htm

https://www.mherrera.org/temp.htm

“”” that exist from both UAH and RSS, but just the one from UAH which he suggests is (on the basis of nothing) more accurate than any in-situ surface data. My opinion on that probably differs somewhat from his, “””

Everybody is entitled to their opinion.

As long as the UAH dataset remains valid and R. Spencer has good scientific reasons for his analysis (https://www.drroyspencer.com/2019/04/uah-rss-noaa-uw-which-satellite-dataset-should-we-believe/

“Despite the most obvious explanation that the NOAA-14 MSU was no longer usable, RSS, NOAA, and UW continue to use all of the NOAA-14 data through its entire lifetime and treat it as just as accurate as NOAA-15 AMSU data.”, Scafetta seems to just follow that reasoning)

adding an additional data set like RSS does not disqualify UAH data, but MUST only result in an increased systematic uncertainty about measured temperature trends, and G. Schmidt’s analysis needs to reflect that in a correct way.

In particular, the confidence intervals in his graphs then need to be cast wide enough to envelop both datasets, UAH and RSS! He cannot simply ignore data he does not like, as he is apparently doing in his graphs!

So, if NOAA-14 data is ‘the culprit,’ then why do the analyses using it end up being more consilient with the instrumental record than UAH?

You complain about Gavin “ignoring data he does not like,” but wasn’t it rather Scafetta who ignored RSS?

>> being more consilient with the instrumental record than UAH?

R. Spencer writes “Clearly, the RSS, NOAA, and UW satellite datasets are the outliers when it comes to comparisons to radiosondes and reanalyses, having too much warming compared to independent data.”

So, I think you are mistaken.

As to a model, analysis or anything being “right” despite using a questionable method, why don’t we just let 4-year-olds draw random scribbles and pick what we like? To me that seems very similar to making, and still using, predictions based on CMIP5 and older generations, which we know do not represent clouds correctly!

>> You complain about Gavin “ignoring data he does not like,”

I do. More precisely, in my opinion his analysis should incorporate the systematic uncertainty coming from the two methods if it is to have any scientific meaning; pointing to someone else’s potential mistakes does NOT absolve Gavin!

>> So, if NOAA-14 data is ‘the culprit,’

Why do you say “if”? R. Spencer clearly shows that there is a problem. Do you doubt the data, or do you find any flaw in his analysis in this regard:

“the NOAA-14 satellite carrying that MSU had drifted much farther in local observation time than any of the previous satellites, we chose to cut off the NOAA-14 processing when it started disagreeing substantially with AMSU.”

Sounds to me like a solid scientific decision and perfectly defensible by either Spencer or Scafetta.

Laws of Nature,

Should you be quoting Spencer’s own “comparisons” in this matter? Surely it requires far too much credulity to insist somebody is correct and everybody else wrong (aka “outliers”) because that same individual insists that they are correct when all other disagree. Do remember that this is the same RW Spencer (along with his chum JR Christy) who for years used his own analysis to insist the troposphere was cooling until others found the mistakes in his analysis. I wouldn’t trust Spencer any more than the fantasist Scafetta. Indeed, Spencer’s theorising is so crazy, it is scooped up and rebroadcast by the Gentlemen Who Prefer Fantasy. Any sane researcher would not allow that.

My opinion does not count,

your opinion does not count

and Gavin’s opinions also do not count in science.

It comes down to the scientific method, and here specifically, there is a systematic difference between two methods of extracting temperatures from satellite data. This is not a matter of opinion.

Gavin cannot choose to ignore valid data in a scientific analysis.

>> troposphere was cooling until others found the mistakes in his analysis

It seems you agree that Spencer’s current method has corrected that mistake.

Then Gavin’s graphs are incomplete (if they are meant to portray the current scientific data).

Laws of Nature,

The differences between RSS TLTv4 & UAH TLTv6 are big enough for us to say that one of them is evidently wrong. If there is difficulty identifying which is wrong, rather than wring our hands in despair, perhaps we should ignore them both.

But then there is the evidential argument set out by Spencer which doesn’t seem to find much favour with his fellow climatologists. Looking at the first point Spencer attempts to make in that link, he tries to defend UAH TLT because a comparison with HadCRUT4 gives a not-dissimilar trend 2003-17. Yet HadCRUT5 does not, a new version of HadCRUT that was not unexpected in early 2019.

OLS TRENDS 2003-17

HadCRUT5 +0.24ºC/decade

GISTEMP +0.24ºC/decade

ERA5 +0.25ºC/decade

RSS TLT +0.23ºC/decade

UAH TLT +0.18ºC/decade
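For anyone wanting to check figures like these: an OLS trend is the least-squares slope of the anomaly series against time, rescaled from per year to per decade. The sketch below assumes annual-mean anomalies (the comment does not say whether monthly or annual data were used, and the two give slightly different slopes) and uses a made-up series rather than any of the datasets listed, so it does not reproduce the numbers above. The function name is illustrative only.

```python
def ols_trend_per_decade(years, anomalies):
    """Ordinary least-squares slope of anomalies against year, in ºC/decade."""
    n = len(years)
    if n != len(anomalies) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x = sum(years) / n
    mean_y = sum(anomalies) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, anomalies))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return 10.0 * sxy / sxx  # slope is in ºC/year; multiply by 10 for ºC/decade


if __name__ == "__main__":
    # Synthetic, noise-free series warming at exactly 0.024 ºC/year, 2003-2017.
    yrs = list(range(2003, 2018))
    anoms = [0.40 + 0.024 * (y - 2003) for y in yrs]
    print(f"{ols_trend_per_decade(yrs, anoms):+.2f} ºC/decade")
```

A real comparison would also need each trend's uncertainty, which can be large for a 15-year window once year-to-year variability and autocorrelation are accounted for; that is one reason such short OLS trends are not usually recommended.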

Simply put, if numpties like Spencer or Scafetta had even a half-decent reason for behaving as they do, their work would be treated seriously. But they don’t, so they aren’t.

>> everybody else wrong (aka “outliers”) because that same individual insists that they are correct when all other disagree.

Actually, I would like to add that you misuse the word “outlier”, as in statistics it has nothing to do with the systematic errors we are discussing here.

Also, I am not sure who exactly disagrees with R. Spencer’s observation that

“””the NOAA-14 satellite carrying that MSU had drifted much farther in local observation time than any of the previous satellites””

Or that

“”the older MSU was known to have a substantial measurement dependence on the physical temperature of the instrument (a problem fixed on the AMSU)””

I do not think that those facts are under debate in the community.

And this clearly points to negligence in those other datasets, which do not account for it.

Laws of Nature,

The term “outlier” is Spencer’s not mine.

And the period 2003-17 was chosen by Spencer specifically because it dodged the NOAA-14 controversy. The position of UAH TLT as an “outlier” remains if the analysis is extended to 2003-21. Mind, things look a little bit different if the period length is maintained and 2007-21 is used to bring the analysis up to date. (But a 15-year-long OLS on global temps is not usually recommended.)

OLS TRENDS 2007-21

HadCRUT5 +0.27ºC/decade

GISTEMP +0.29ºC/decade

ERA5 +0.34ºC/decade

RSS TLT +0.32ºC/decade

UAH TLT +0.28ºC/decade

>> The term “outlier” is Spencer’s not mine.

My apologies, I did not know that; it is still not the right term.

>> the NOAA-14 controversy.

Could you elaborate on that? There does not seem to be much controversy.

I already wrote:

“””

Also, I am not sure who exactly disagrees with R. Spencer’s observation that

“””the NOAA-14 satellite carrying that MSU had drifted much farther in local observation time than any of the previous satellites””

Or that

“”the older MSU was known to have a substantial measurement dependence on the physical temperature of the instrument (a problem fixed on the AMSU)””

“””

Are there any scientists trying to ignore or deny these facts, and making up meaningless temperature products as a result?

Do we at least agree that this creates a systematic difference and that, as long as the two methods exist, “Gavin” should use proper uncertainty intervals in his graphs reflecting this?

Laws of Nature,

I am not enthusiastic about the argument that runs: the UAH TLT record globally shows vastly different results from RSS TLT and all SAT records, and thus the UAH TLT record is obviously wrong. However, my lack of enthusiasm for such a simplistic argument is somewhat overcome by the silly comments from Spencer, who attempts to use the exact same argument to insist that his UAH TLT record is an accurate measure of AGW and all others are wrong.

So let’s consider the SAT records.

Relative to HadCRUT5, there is very little difference between them. The ERA5 reanalysis shows an acceleration through the period 1979-to-date that is not so evident in GISTEMP and not evident in HadCRUT5 & BEST. (Mind, HadCRUT5 & BEST do use the same SST.)

But I have seen no reasonable argument that these SST records are systematically inaccurate to the extent that UAH TLT would be a more accurate measure.

This then leaves us with the comparison of TLT records with the SAT records. TLT records measure different parts of the global system, so there may be good reason for them showing different rates of warming.

But here is the thing.

If you plot out a comparison of these SAT & TLT records (see here a graph of SAT & TLT 5-yr rolling aves relative to HadCRUT5), the UAH TLT record runs along with the other records (specifically here HadCRUT5, GISTEMP, BEST, ERA5 & RSS TLT) from 1979-2000. It then plunges away from the other records 2000-07 before resuming an SAT-like rate of warming post-2007.

So if you want to defend Spencer, you need to explain what was going on 2000-07 to cause this divergence, because Spencer’s satellite drift doesn’t explain it.
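The comparison described above can be sketched as: take each record's annual anomaly minus HadCRUT5's for the same year, then smooth the difference with a 5-year running mean. This is an illustrative reconstruction, not the commenter's actual procedure; it assumes annual values already expressed on a common baseline period and a trailing (not centred) window, and the function name is hypothetical. Because averaging is linear, the running mean of the differences equals the difference of the running means.

```python
def rolling_difference(series, reference, window=5):
    """Running mean of (series - reference) over `window` consecutive years.

    Both inputs are assumed to be annual anomalies for the same years,
    already on a common baseline. Returns len(series) - window + 1 values;
    value i averages the differences for years i .. i + window - 1.
    """
    if len(series) != len(reference):
        raise ValueError("series and reference must cover the same years")
    if not 1 <= window <= len(series):
        raise ValueError("window must be between 1 and the series length")
    diffs = [s - r for s, r in zip(series, reference)]
    return [sum(diffs[i:i + window]) / window for i in range(len(diffs) - window + 1)]


if __name__ == "__main__":
    # Toy data: a record that tracks the reference, then drops 0.1 ºC below it.
    reference = [0.0] * 10
    record = [0.0] * 5 + [-0.1] * 5
    print([round(d, 3) for d in rolling_difference(record, reference)])
```

A divergence like the 2000-07 one would show up as a sustained step in this smoothed difference rather than as year-to-year noise.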

So, do you not know what the term “instrumental record” means, then?

Hint: not “RSS, NOAA, and UW satellite datasets.”

Laws of Nature,

You may be unaware that UAH also uses NOAA14 data, just less of it than RSS.

Spencer’s comment, “RSS, NOAA, and UW continue to use all of the NOAA-14 data through its entire lifetime and treat it as just as accurate as NOAA-15 AMSU data,” is just plain rhetoric. RSS do not consider NOAA14 (or NOAA15) accurate. From their methods paper:

“If we exclude MSU data after 1999 (implicitly assuming the error is due to NOAA-14), the long-term trend decreases by 0.008 K decade−1, and if we exclude AMSU data before 2003 (implicitly assuming the error is due to NOAA-15), the long-term trend increases by 0.007 K decade−1. Our baseline dataset will use both MSU and AMSU measurements during the overlap period, implicitly assuming that the error is caused by both satellites, but the other possibilities are equally likely, so these trend differences contribute to the structural uncertainty in the final product. This analysis implicitly assumes that the calibration drifts only occur during the period of overlap. If the drift extends to periods before or after the overlap, its impact on the final results would be larger. However, during most of the period when NOAA-14 and -15 do not overlap with each other, they overlap with other satellites and do not show evidence of continuing calibration drifts immediately before or immediately after the overlap period (NOAA-15 does appear to undergo a calibration drift later in its lifetime).”

https://journals.ametsoc.org/view/journals/clim/30/19/jcli-d-16-0768.1.xml

It seems adequate to repeat my earlier statement, because none of those details address “Gavin”’s mistake:

I wrote:

“””

Everybody is entitled to their opinion.

As long as the UAH dataset remains valid and R. Spencer has good scientific reasons for his analysis (https://www.drroyspencer.com/2019/04/uah-rss-noaa-uw-which-satellite-dataset-should-we-believe/

“Despite the most obvious explanation that the NOAA-14 MSU was no longer usable, RSS, NOAA, and UW continue to use all of the NOAA-14 data through its entire lifetime and treat it as just as accurate as NOAA-15 AMSU data.”, Scafetta seems to just follow that reasoning)

adding an additional data set like RSS does not disqualify UAH data, but MUST only result in an increased systematic uncertainty about measured temperature trends, and G. Schmidt’s analysis needs to reflect that in a correct way.

“””

This remains just as true as before, and thus the graphs in this post seem unscientific.

Laws of Nature,

It seems to be an innate part of your nature to ignore what you don’t want to see, but perhaps I should be so bold as to point out that your ridiculous belief in the usefulness of UAH TLT was the subject of an exchange between you and me. And you will see up-thread that I am asking you to explain the crazy part of the UAH TLT record 2000-07, and you have yet to respond.

And in science it is not true to say “Everybody is entitled to their opinion.”

No, it’s not nearly “adequate.” You don’t even pretend to answer most of the points made.

Most fundamentally, you are inverting the situation. It’s not that Gavin–and, by the way, that’s his real name so you can lose the scare quotes–ignores UAH; it’s that Scafetta ignores RSS. And it’s precisely Gavin’s point that this results in an incorrect error assessment.