You need to be careful in inferring climate sensitivity from observations.

Two climate sensitivity stories this week – both related to how careful you need to be before you can infer constraints from observational data. (You can brush up on the background and definitions here). Both cases – a “Brief Comment Arising” in Nature (that I led) and a new paper from Proistosescu and Huybers (2017) – examine basic assumptions underlying previously published estimates of climate sensitivity and find them wanting.

Last year, there was a paper that described a new composite record of temperatures going back 2 million years (Snyder, 2016). As we discussed at the time, those results were used to conclude that the Earth System Sensitivity (the total response to a doubling of CO2 after the short and long-term feedbacks have kicked in) was around 9ºC — much larger than any previous estimate (which is ~4.5ºC) — and inferred that the committed climate change with constant concentrations was 3-7ºC (again much larger than any other estimate – most are around 0.5-1ºC). We posted a discussion about why (even in principle) this was not a good methodology for estimating ESS. Well, now there is a Brief Comment Arising (and a rather combative response) published in Nature.

As simple as possible (but no simpler)

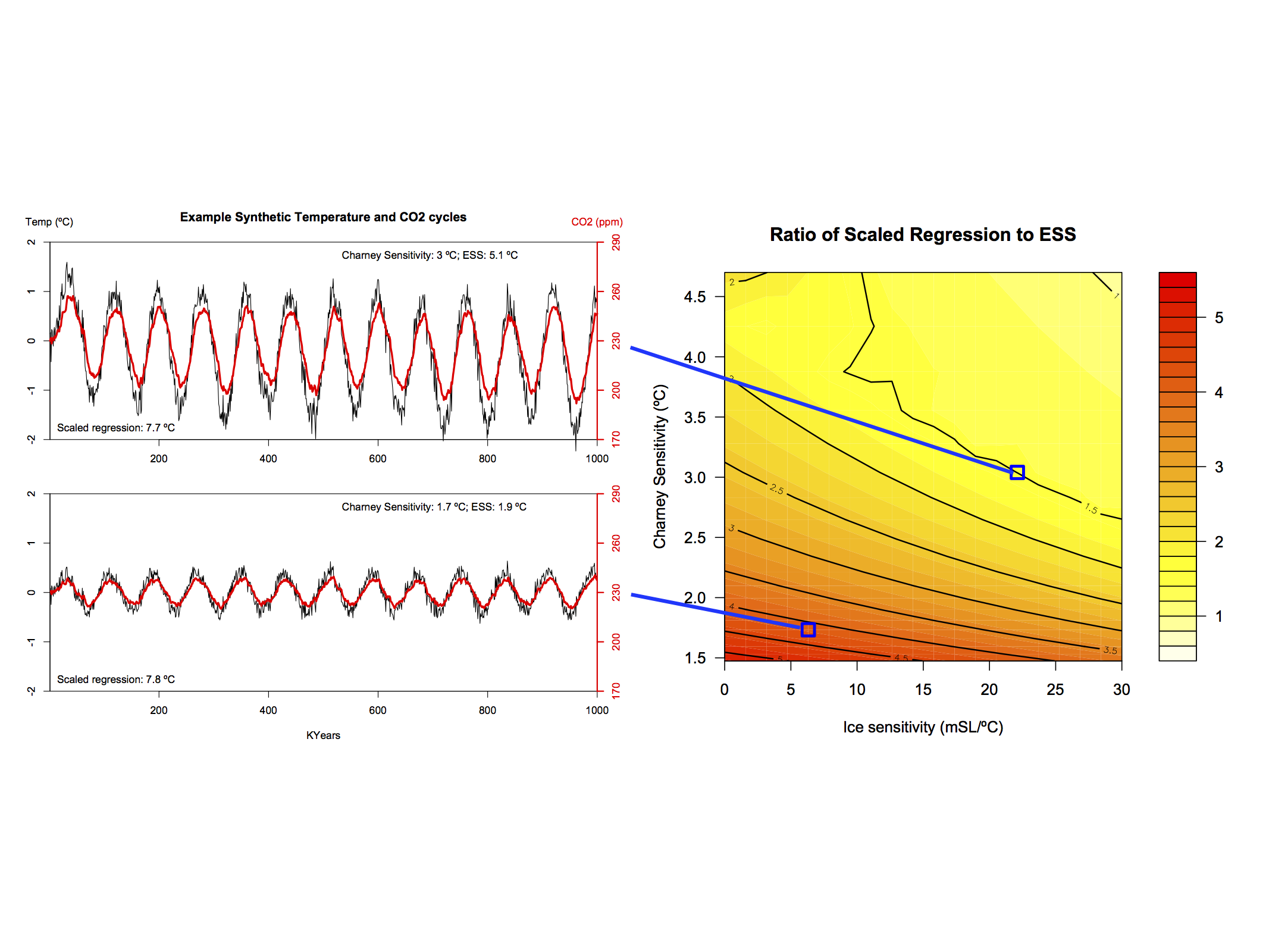

The essence of the comment is a model that we put together that (I think) is the simplest that you can derive that includes a carbon cycle and ice sheets, and allows the standard ‘Charney’ sensitivity (ECS) and the ESS to vary independently. The documentation and R code for the model are part of the supplementary material, as is a Jupyter notebook for a python version (so knock yourself out if you want to explore it further). What this model shows is that if orbital variations in insolation impact ice sheets directly in any significant way (which evidence suggests they do; see Roe (2006)), then the regression between CO2 and temperature over the glacial-interglacial cycles (which was used in Snyder (2016)) is a very biased (over)estimate of ESS. The results from this model demonstrate clearly (and in line with our initial criticisms) that the Snyder (2016) suggestion of a very high ESS and committed warming is unfounded.

Relationship between the CO2/T regression and the actual ESS for two specific cases of our simple model when you run it with an 80,000 year orbital cycle. The ratio in the right-hand figure is almost everywhere greater than one, implying an overestimate when using the regression.
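The core of that argument can be illustrated with a deliberately stripped-down linear toy in Python. This is not the model from the supplementary material: the function name, the parameter `co2_per_degree` (doublings of CO2 released per degree of warming) and all numerical values are assumptions chosen purely for illustration. Temperature responds to CO2 with a prescribed ESS and also to a direct orbital term, while CO2 responds only to temperature. Under those assumptions, the regression of temperature on CO2 recovers the inverse of the carbon-cycle response rather than the ESS.

```python
import numpy as np


def toy_regression_slope(ess, co2_per_degree, period=80_000.0, n_steps=4000):
    """Regress temperature on CO2 doublings in a linear toy model.

    Assumed (hypothetical) equilibrium relations:
        temp      = ess * doublings + orbital   (degC)
        doublings = co2_per_degree * temp       (log2 of the CO2 ratio)
    """
    gain = ess * co2_per_degree
    if gain >= 1.0:
        raise ValueError("feedback gain must be < 1 for a stable solution")

    time = np.linspace(0.0, 10 * period, n_steps)
    # Direct orbital/ice-sheet contribution to temperature, amplitude 1 degC.
    orbital = np.sin(2.0 * np.pi * time / period)

    # Solve the two linear relations simultaneously.
    temp = orbital / (1.0 - gain)
    doublings = co2_per_degree * temp

    # Slope of the T-vs-CO2 regression, in degC per doubling.
    slope, _intercept = np.polyfit(doublings, temp, 1)
    return slope


if __name__ == "__main__":
    true_ess = 4.5  # assumed value, degC per doubling
    slope = toy_regression_slope(true_ess, co2_per_degree=0.1)
    print(f"true ESS: {true_ess:.1f}, regression slope: {slope:.1f}")
```

Because a stable solution requires `ess * co2_per_degree < 1`, the regression slope `1 / co2_per_degree` exceeds the prescribed ESS in this toy whatever value of ESS is chosen, which is the sense in which the regression is biased high.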

The second example follows a few other papers in challenging the assumptions behind constraints on the Charney sensitivity derived from historical changes in temperature and forcings. These transient constraints have tended to come in lower than the other estimates based on paleo-climate or emergent constraints, and thus have been embraced by (let’s say) more ‘optimistic’ commentators (though until the mismatches are resolved it would be premature to favor only one class of results).

The basic issue looked at in Proistosescu and Huybers (2017) is the model result that suggests that there is a time dependence in the evolution to equilibrium. Others have identified the lags in the southern ocean (which warms more slowly than the northern hemisphere, and northern land in particular) as the source of this time dependence of feedbacks, and we’ve demonstrated that different forcings have subtly different impacts – to some extent based on their spatial signatures. This implies that analyses in the early part of a transition to a new equilibrium will give lower sensitivities than they should.

One persistent (yet sometimes interesting) critic of these results is Nic Lewis (a few previous cases), and he has attempted to dismiss these results as well. The common thread in his criticisms is that these results are based on behaviour seen in models. However, these models are much more complex and better validated than the 1-D energy balance model used in these constraints, so the more correct view is that the simplistic assumptions needed in his approach don’t seem to work in more sophisticated set-ups, and thus are unlikely to be valid in the real world. These effects may not be perfectly captured in the CMIP5 ensemble or in any specific model, but that doesn’t justify assuming that they are zero with zero uncertainty.

The overall conclusion to be drawn is that both very low (<2ºC) and very high (>5ºC) estimates of sensitivity can likely be ruled out by recourse to a wider set of data. Hopefully those constraints will get narrower as we make more progress, but the current range has stood the test of time for good reasons.

References

- C. Proistosescu, and P.J. Huybers, "Slow climate mode reconciles historical and model-based estimates of climate sensitivity", Science Advances, vol. 3, 2017. http://dx.doi.org/10.1126/sciadv.1602821

- C.W. Snyder, "Evolution of global temperature over the past two million years", Nature, vol. 538, pp. 226-228, 2016. http://dx.doi.org/10.1038/nature19798

- G.A. Schmidt, J. Severinghaus, A. Abe-Ouchi, R.B. Alley, W. Broecker, E. Brook, D. Etheridge, K. Kawamura, R.F. Keeling, M. Leinen, K. Marvel, and T.F. Stocker, "Overestimate of committed warming", Nature, vol. 547, pp. E16-E17, 2017. http://dx.doi.org/10.1038/nature22803

- G. Roe, "In defense of Milankovitch", Geophysical Research Letters, vol. 33, 2006. http://dx.doi.org/10.1029/2006GL027817

- K.C. Armour, "Energy budget constraints on climate sensitivity in light of inconstant climate feedbacks", Nature Climate Change, vol. 7, pp. 331-335, 2017. http://dx.doi.org/10.1038/nclimate3278

Gavin

First, congratulations on your comment in Nature on the Snyder et al 2016 paper. I had come to much the same conclusions as you, but had not pursued the matter.

Secondly, you write about me “The common thread in his criticisms is that these results are based on behaviour seen in models”.

If you read my critique at ClimateAudit of the Proistosescu and Huybers study, you would see that your remark is untrue. My main criticism of their study is that they have calculated effective climate sensitivity (their ICS) on a basis which is wrong for ICS in GCMs; their basis is also inconsistent with observationally-based estimates of ICS. They also use a method of estimating CO2 forcing that is incompatible with producing an ERF-basis estimate.

Gavin,

Just a small typo in your 2nd paragraph: You typed “5 million years” instead of “two…” as in the Snyder reference.

[Response: Thanks. Fixed. – gavin]

A question is how does Lewis’ 1-D model have to be enhanced to match the more complex ones, paleo data and modern ones. Is it enough to go 2D with latitude and altitude?

Hi: I am having trouble accessing the supplementary materials http://www.nature.com/nature/journal/v547/n7662/extref/nature22803-s1.pdf and

http://www.nature.com/nature/journal/v547/n7662/extref/nature22803-s2.zip

Could you please provide an alternative link? I appreciate it, thanks.

“The implications of strongly state-dependent fast sensitivity reach far beyond the early Paleogene. The study of past warm climates may not narrow uncertainty in future climate projections in coming centuries because fast climate sensitivity may itself be state-dependent” http://www.pnas.org/content/110/35/14162.full

Is it likely that how far we go on a doubling of CO2 depends on the path the climate takes getting there? Is there a “butterfly effect” on paths, on which transient climate response gets triggered, which will then determine an equilibrium climate sensitivity out of a range of possibilities?

For instance, early winter snowfall is increasing, which is affecting the growth rate and carbon sequestration in the Canadian boreal forest http://www.pnas.org/content/113/52/E8406.full . At the same time, increasing depth and duration of drought, along with warmer temperatures enabling the spread of pine beetles has increased the flammability of this forest region –

http://www.nature.com/nclimate/journal/v1/n9/full/nclimate1293.html

http://www.vancouversun.com/fires+through+tinder+pine+beetle+killed+forests/10047293/story.html

Can climate models give different TCR and ECS with different timing/extent of when or how much boreal forest burns, and how the soot generated alters the date of an ice free Arctic Ocean or the rate of Greenland ice melt and its influence on long term dynamics of the AMOC transport of heat?

Even if one were to stipulate all of the ostensible “errors” Lewis claims, the only way he is actually able to justify his claim of disagreement with observations’ ICS is by throwing out the observational ICS estimate used in the paper in favor of ones he likes, and obviously likes simply because of their low values.

There’s no mystery there, nor “there” there.

These estimates are made from times when CO2 levels were much lower. Can we be reasonably sure that ESS does not change with higher CO2 levels? Applying the historic ESS to the current and future CO2 growth assumes an exponential relationship. If it is only linear, the future ESS would be lower.

Dumb question from a non-scientist….

Are there simple, controlled laboratory experiments that could either shed light on climate sensitivity and/or else help demonstrate, including mostly to skeptics, how changes in trace concentrations of an IR absorber/re-radiator are so effective at changing the temp of a system?

I’m imagining two different, parallel setups that might both behave similarly for an effective double demonstration — one a gaseous artificial atmosphere and the other liquid based. Each consisting of transparent containers within a controlled, ambient temp environment, and each containing appropriate and variable trace concentrations of GHG’s or, for the liquid system, other IR absorbing molecules, and both exposed to appropriate IR radiation.

The point not to measure changes of IR absorption/transmission with changing concentration but to measure the changes in the temp itself.

Would changes of the system temps within the containers be a useful and contributory demonstration of anything?

Even for just a high school science project?

A couple more likely typos:

“which was used in Snyder (20106)”, perhaps a future paper from Snyder?

“Others have identified the lags in the southern ocean (which warms more slowly in the northern hemisphere, and northern land in particular)” seems to be missing a “than”, perhaps instead of “in”.

[Response: Thanks. Fixed. – gavin]

Dr. Gavin Schmidt, a question, please:

“… most [committed global warming estimates with present (around 410ppm CO2, etc) constant concentrations] are around 0.5-1ºC…”

But aren’t these way too low, since LOTI shows we are – as of 2017 – already around 0.95C warmer than the 1951-1980 average, and there is more warming “in the pipeline” because of the time lag, and another (estimated) 0.5C warming when the anthropogenic aerosols dimming effect is removed?

https://data.giss.nasa.gov/gistemp/tabledata_v3/GLB.Ts+dSST.txt

Prufrocks #8,

Short answer is “no”.

First, because those who call themselves “skeptics” will never be convinced.

But that aside, I have some experience with designing the kind of thing you are talking about for Physics 101/HS level students, and you couldn’t “model” the phenomenon physically without a lot of fudging (and a really expensive bill of materials).

My suggestion would be for some group with the skills to create an “app” that has really good illustrative graphics– maybe that already exists(??), but I haven’t seen a reference to it.

After all, we live in a virtual, post-physical world at this point, right? The app might be more convincing to young people than a physical demonstration.

Prufrocks,

The problem is that the majority of the climate response is driven by feedbacks that cannot be replicated holistically in a laboratory setting. Ice sheets, ocean currents and the like require an entire planet to operate. This is why we must simulate these things using computers.

Adding to t marvell’s questions, there are many occurrences in the historical record of divergent trends between temperature and atmospheric CO2 levels – with multi-millennial timescales. Recently a paper by Hasenclever et al proposed a new mechanism related to volcanism that would explain the levels of CO2 in one of those episodes.

Has any study of sensitivity focused on those events of divergent trends?

Prufrocks, 8–

Not a dumb question, IMO! However, as far as true climate sensitivity, the answer is ‘no’, because that is a complex property of the entire Earth system. Even just the required definitions are not all that simple, and there are different formulations of sensitivity, depending mostly on characteristic time-scale. (“Charney sensitivity”, or “Earth System Sensitivity”, for instance.) Discussion here, if needed:

https://en.wikipedia.org/wiki/Climate_sensitivity

However, for demonstration projects, emphatically yes! The original Tyndall setup of 1859 comes to mind.

There’s a whole series of classic climate papers available online, including Tyndall’s. The collection as a whole is here:

http://nsdl.library.cornell.edu/websites/wiki/index.php/PALE_ClassicArticles/GlobalWarming.html

The Tyndall paper is here:

http://nsdl.library.cornell.edu/websites/wiki/index.php/PALE_ClassicArticles/archives/classic_articles/issue1_global_warming/n3.Tyndall_1861corrected.pdf

The most climatically relevant stuff is on p. 279 et seq.–and note that there is intervening irrelevant material (marked with strike-throughs) separating Parts I & II of Tyndall’s discussion.

Regression on recent temperature vs multi-model average (MMA) is not a robust way to estimate Charney sensitivity.

https://www.theguardian.com/environment/climate-consensus-97-per-cent/2017/jun/28/climate-scientists-just-debunked-deniers-favorite-argument#img-2

1) Real temperature changes will incorporate auto-correlation, which can be on the scale of years, decades, centuries and so on. (Decades are of interest in Charney sensitivity in this context.) MMAs will average out auto-correlation – making the response appear linear – and so will underestimate Charney sensitivity.

2) There is no sharp cut-off between Charney sensitivity and ESS. As warming continues, slower feedbacks will increasingly be incorporated. One would expect observed temperature to be non-linear with time, assuming a log-increase of CO2 with time (Charney sensitivity is a log function – linear increase with doubling of CO2), the response will show upward curvature. A linear fit is probably not appropriate.

The observed temperature response to my eye (I’m a statistician) is not detailed enough to rule out both auto-correlation and a non-linear temperature response function – with time anyway. The argument is far from over, and temperature rises may well accelerate on the Charney response timescale.

Sensitivity calculation is a product of heat density in watts per square meter (for the doubling) and thermal sensitivity in K*m^2/W, right? It seems to me that all feedback should be expressed as power densities and the thermal sensitivity taken from change in temperature versus solar power density from poles to equator at sea level as at most 0.3 K per W/m^2.

Another way to estimate climate sensitivity from both models AND observations is to calculate the ratio of observed warming to forecast warming…then multiply that by the ECS value used in the model.

(Obs/fcst)*ECS modelled = est ECS

When I run these numbers, I generally get a number in the ball park of 2. Caveat… I’ve only done back of the envelope calcs. That is less than most models start with but higher than some of the observational studies.
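The back-of-the-envelope scaling described above can be written out as a short Python sketch. The function name and the example inputs are hypothetical and are not taken from any dataset or model; the calculation also assumes that transient warming scales in direct proportion to a model's ECS, which is a strong simplification.

```python
def scaled_ecs(observed_warming, forecast_warming, model_ecs):
    """Scale a model's ECS by the ratio of observed to forecast warming.

    Implements (Obs / fcst) * ECS_modelled, with warming in degC and
    ECS in degC per CO2 doubling.
    """
    if forecast_warming <= 0:
        raise ValueError("forecast_warming must be positive")
    return (observed_warming / forecast_warming) * model_ecs


if __name__ == "__main__":
    # Hypothetical inputs chosen only to show the arithmetic.
    estimate = scaled_ecs(observed_warming=0.6, forecast_warming=0.9, model_ecs=3.0)
    print(f"scaled ECS estimate: {estimate:.2f} degC per doubling")
```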

Kevin McKinney, #14

Thanks so much.

Now, forgetting entirely the more complex issue of “climate sensitivity” and focusing only on how tiny, minute concentrations of CO2 can make a difference to global temps — one of the oft-repeated and simplistic denialist memes — is there a simple desktop experiment to demonstrate how that can work?

For some reason I’m stuck with the idea of a water-based system in a jar as perhaps the easiest and most simple demo, in which appropriately minute trace concentrations of an IR absorber/re-radiator can be varied with a predictable effect on the temp. The ‘feedback’ element would be missing, of course.

(Still looking at the Tyndall stuff, thanks.)

Re10

Present day warming must include the effects of the other greenhouse gases like methane, HFCs etc. These are at the moment causing warming about the same as the dimming produced by sulphate aerosols. So for CO2 alone the estimate is probably correct.

Prufrocks, this youtube video shows how to utterly destroy the “minute concentrations of CO2” argument.

https://www.youtube.com/watch?v=81FHVrXgzuA

Just point out that CO2 is as opaque to IR in the band centered on 15 microns as the black ink is to visible light in the video. Also point out that in excess of 99.5% of the atmosphere is completely transparent to IR and might as well not be there at all, except that it acts as a heat storage medium after IR absorption by CO2 (H2O, CH4, etc) and subsequent relaxation through collision with the O2 and N2 molecules of that medium.

It really is one of the dumbest arguments employed by the ignorati.

Supplemental: in this one Dr Iain Stewart shows very clearly how effective an IR absorber CO2 is:

https://www.youtube.com/watch?v=SeYfl45X1wo

> is there a simple desktop experiment

You should read the history — first link under the Science heading, in the sidebar.

For your convenience, http://history.aip.org/climate

https://en.wikipedia.org/wiki/Carbon_dioxide_laser

Nic Lewis:

While I’ve come to consider RealClimate authoritative and have learned a great deal from it, I’ve understood that rigorous scientific debate does not take place on blogs as a rule. Should I update my understanding?

That’s not to say expert commentary by one Nic Lewis isn’t welcome here, to be sure. OTOH, it will be a cold day on a hot planet when I surf over to CA. Just sayin’.

Prufrocks @18

“For some reason I’m stuck with the idea of a water-based system in a jar as perhaps the easiest and most simple demo, in which appropriately minute trace concentrations of an IR absorber/re-radiator can be varied with a predictable effect on the temp. The ‘feedback’ element would be missing, of course.”

My two cents worth. A water based system doesn’t achieve much, as the oceans participate in weather and climate, but aren’t the primary driving forces, which are global atmospheric circulation patterns and greenhouse gases etc.

Mythbusters have done a simple experiment with different containers of air subjected to a light source, with one container ordinary air, the other having air plus a higher concentration of CO2, quite high I think, possibly 5%. The second canister showed a higher temperature increase. The aim was simply to show that temperatures were higher with higher CO2 concentrations.

It’s not really possible to go much beyond this level of simplicity. The real world atmosphere is a large body of air, has circulation patterns, and is not held in a container, so CO2 behaves differently there than in a simple container, and very low concentrations can have significant effects.

It’s just not really possible to either put the planet in a laboratory, or adequately model it in an experiment. I have wondered this myself but various people have pointed out the difficulties.

You would also have to duplicate feedbacks, and this becomes impossible (as you noted).

Unless you built a giant greenhouse and tried to set up a miniature climate, but I think it would be impossible to model crucial features of the planet, so I think any results would be of very dubious value. However I’m just an interested observer, and I would be interested to hear what a climate scientist would have to say.

This means we have to be reliant on past climate history, calculations and computer models.

More recent experience suggests that a high ESS is credible. CO2 grew 17.8% between the decades of 1907-1916 to 1987-1996, and temperature grew by .845 degrees from 1927-1936 to 2007-2016, for an ESS of 4.75. This assumes a 20-year lag between CO2 changes and temperature changes.

Much depends on the lag selected. With no lag, comparing 1907-16 to 2007-16 for CO2 and temperature produces an ESS of only 3.16.

This difference between estimates with and without lags pertains to pretty much any calculation ending with current temperature figures. This is because CO2 production was relatively low until the mid-1950s, and recent temperature growth does not reflect the large CO2 growth in the last few decades.

It is crucial to determine the lag. The best I can gather from the literature it is 20+ years, but I have not been able to find any definitive estimate.
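The comment does not spell out its formula, but the quoted figure of 4.75 is reproduced by dividing the temperature change by the fractional CO2 growth, i.e. a linear extrapolation to a 100% increase. The Python sketch below (function names are illustrative) shows that arithmetic next to the logarithmic scaling usually assumed for CO2, which gives a smaller number from the same inputs. Neither version accounts for forcings other than CO2.

```python
import math


def per_doubling_linear(delta_t, co2_fractional_growth):
    """Warming per 100% CO2 increase, assuming warming is linear in CO2."""
    return delta_t / co2_fractional_growth


def per_doubling_log(delta_t, co2_fractional_growth):
    """Warming per CO2 doubling, assuming warming is linear in log(CO2)."""
    return delta_t / math.log2(1.0 + co2_fractional_growth)


if __name__ == "__main__":
    delta_t = 0.845     # degC, 1927-1936 to 2007-2016 (from the comment)
    co2_growth = 0.178  # 17.8%, 1907-1916 to 1987-1996 (from the comment)
    print(f"linear scaling: {per_doubling_linear(delta_t, co2_growth):.2f}")
    print(f"log scaling:    {per_doubling_log(delta_t, co2_growth):.2f}")
```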

Re #18 Where Prufrocks wrote “on how tiny, minute concentrations of CO2 can make a difference”, that is not really a problem because we know that they do. The problem is we have increased CO2 from 280 ppm to 410 ppm, by nearly 50%, which will be reached within the next few years.

for ‘prufrocks’:

https://www.google.com/search?q=co2+infrared+mean+free+path+photon

http://www.theonion.com/article/new-study-finds-most-of-earths-oxygen-used-for-com-36489

The Onion

July 17, 2014

New Study Finds Most Of Earth’s Oxygen Used For Complaining

SEATTLE—Following a multiyear study of atmospheric gases and their role in organic processes on earth, a team of researchers at the University of Washington reported this week that the majority of the oxygen on the planet is used for complaining. “By carefully measuring the processes of gas exchange, the respiratory capacities of living organisms, and resulting metabolic activities, we discovered that most oxygen molecules in Earth’s troposphere are used for the purposes of sighing, whining, and most commonly, complaining,” said the study’s lead author, James Lauderio, who noted that an adult human converts an average of 19 cubic feet of oxygen per day into petty grievances about acquaintances, nitpicking objections about popular media or the weather, criticisms about tasks they are performing, and general fussing with family members. “And while humans are the species most responsible for transforming oxygen into complaints, it’s important to note that other animal life, including mammals, birds, and reptiles, also convert massive amounts of O2 into displeased growls and screeches about their habitats and food sources.” Lauderio added that the research team has not been able to determine a verifiable upper limit to the number of complaints that can be produced from a single inhalation, with many human subjects reportedly producing upwards of 40 or more complaints with each breath.

prufrocks, Hank can seem a bit brusque on occasion, but he means well ;^D. And his ‘oogle fu is superior, no sarcasm intended.

Re: #24 t marvell

“It is crucial to determine the lag. The best I can gather from the literature it is 20+ years, but I have not been able to find any definitive estimate.”

I refer you to this paper: https://www.gfdl.noaa.gov/bibliography/related_files/rw0101.pdf

From Discussion, page 1537

“Results of this study suggest that the climate system’s transient SAT [temperature] response lags the present day radiative forcing by approximately 20 years, leading to a present day warming commitment of approximately 1.0K.”

The paper is from 2001. Since then radiative forcing has increased from approximately 2.5W/m2 to 3W/m2 (https://www.esrl.noaa.gov/gmd/aggi/aggi.html).

We are already approximately 0.95C above the 1951-1980 average (LOTI, https://data.giss.nasa.gov/gistemp/tabledata_v3/GLB.Ts+dSST.txt)

If we are committed to an extra approximately 1.2C warming from present day forcing with a time lag of approximately 20 years, that would put us around 2.15C above the 1951-1980 average in 2037.

Which is much more than the 2C above pre-industrial “safe” threshold in the Paris Agreement.

So, even conservative estimates of committed warming indicate that we have to urgently reduce radiative forcing, in other words peak global GHG emissions as soon as possible and then reduce them as quickly as possible by reducing our use of fossil fuels drastically, if we want to have a chance at keeping warming under 2C.
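The arithmetic behind the 2.15C figure can be written out as a short Python sketch. The comment does not say how the 1.2C commitment was obtained; one reading, assumed here, is that the 1.0 K commitment quoted from the 2001 paper is scaled linearly with the increase in forcing from 2.5 to 3 W/m2. The function names are illustrative.

```python
def scaled_commitment(base_commitment, base_forcing, current_forcing):
    """Scale a warming commitment linearly with radiative forcing (assumption)."""
    return base_commitment * current_forcing / base_forcing


def projected_anomaly(current_anomaly, commitment):
    """Anomaly once the committed warming has been realised (same baseline)."""
    return current_anomaly + commitment


if __name__ == "__main__":
    # Values quoted in the comment above.
    commitment = scaled_commitment(base_commitment=1.0,  # K, from the 2001 paper
                                   base_forcing=2.5,     # W/m2, circa 2001
                                   current_forcing=3.0)  # W/m2, present day
    anomaly = projected_anomaly(current_anomaly=0.95,    # degC above 1951-1980
                                commitment=commitment)
    print(f"commitment: {commitment:.2f} C, projected anomaly: {anomaly:.2f} C")
```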

Any lagged feedbacks have to be considered unvalidated forecasts. Such forecasts should be balanced bipolar around static responses with no change to forcing, i.e. changes in precipitation, vegetation, deep ocean currents, etc. are at any time as likely to cool as to heat.

tm 24: This assumes a 20-year lag between CO2 changes and temperature changes.

BPL: I get a higher correlation (r ~ 0.9) between CO2 and temperature anomalies in the same year, from 1850-2016.

31 – As I said before, a simple correlation here is useless if conducted in levels, as I assume you did. The correlations will be large, highly significant, but spurious, because CO2 and temperature are trending variables (that is, they have unit roots). You must difference the variables (such as using percent changes).

tm 32: As I said before, a simple correlation here is useless if conducted in levels, as I assume you did. The correlations will be large, highly significant, but spurious, because CO2 and temperature are trending variables (that is, they have unit roots). You must difference the variables (such as using percent changes).

BPL: Thank you, I am competent to distinguish spurious correlations. CO2 and temperature anomalies are cointegrated by the Engle-Granger test. The relation stands. Do the math.
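The exchange above turns on the difference between correlating trending series in levels and in differences. As a minimal illustration using synthetic data only (all parameters are hypothetical), the Python sketch below generates two independent random walks with drift. They are strongly correlated in levels purely because both trend upward, while their step-to-step changes are essentially uncorrelated. It says nothing about whether real CO2 and temperature series are cointegrated; that requires a dedicated test such as Engle-Granger.

```python
import numpy as np


def levels_and_difference_correlation(n_steps=500, drift=1.0, seed=0):
    """Correlate two independent drifting random walks in levels and in differences."""
    rng = np.random.default_rng(seed)

    # Two series that share nothing except an upward drift.
    x = np.cumsum(drift + rng.standard_normal(n_steps))
    y = np.cumsum(drift + rng.standard_normal(n_steps))

    r_levels = np.corrcoef(x, y)[0, 1]
    # First differences remove the trend and leave the independent noise.
    r_diff = np.corrcoef(np.diff(x), np.diff(y))[0, 1]
    return r_levels, r_diff


if __name__ == "__main__":
    r_levels, r_diff = levels_and_difference_correlation()
    print(f"correlation in levels:      {r_levels:.3f}")
    print(f"correlation in differences: {r_diff:.3f}")
```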

t marvel – There is no lag in the 5.35 * ln(c1/c0) W/m2 radiant heating to earth’s surface. The RealClimate reference link early in the article has a graphic showing timescales, i.e. lags, of different feedbacks.
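The expression quoted above is the widely used simplified formula for CO2 radiative forcing. A minimal Python sketch follows; the function name is illustrative, and 280 and 410 ppm are the concentrations mentioned earlier in the thread.

```python
import math


def co2_forcing(c1_ppm, c0_ppm, coefficient=5.35):
    """Simplified CO2 radiative forcing in W/m2: coefficient * ln(c1 / c0)."""
    if c1_ppm <= 0 or c0_ppm <= 0:
        raise ValueError("concentrations must be positive")
    return coefficient * math.log(c1_ppm / c0_ppm)


if __name__ == "__main__":
    print(f"doubling (280 -> 560 ppm): {co2_forcing(560, 280):.2f} W/m2")
    print(f"280 -> 410 ppm:            {co2_forcing(410, 280):.2f} W/m2")
```

Because the formula depends only on the ratio of concentrations, every doubling gives the same forcing, about 3.7 W/m2.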

Andrew at 29 has stated something important in calculating the current level of CO2e in atmosphere and projecting the corresponding temp increase that we (some of us maybe) will experience in 2037. Another way to express this situation is to point out that the record heat we are experiencing in the past three years correlates to the CO2e in atmosphere in 1987. That number in 1987 was approximately 420. So the record temps we are experiencing now reflect the environment that we purchased for ourselves when we hit CO2e of 420.

We have not seen the environment we have purchased for ourselves and our progeny with a CO2e of 490 (our current state), but it’s coming, we ordered, we paid for it, we are not going to like it when it gets here, but it’s not clear that we have any way to return it to the sender.

https://www.esrl.noaa.gov/gmd/aggi/aggi.html

Daily CO2

July 15, 2017: 407.14 ppm

July 15, 2016: 404.00 ppm Noisy number

Weekly CO2

July 9 – 15, 2017 407.01 ppm

July 9 – 15, 2016 404.51 ppm

Monthly CO2

June CO2

June 2017: 408.84 ppm

June 2016: 406.81 ppm

All the talk about emissions is interesting, but a distraction from the important number, which is the accumulation number.

We desperately need to stop the increase in the accumulation number, then we need to start moving that number back down, probably need to head back to 385 and below

Warm regards

Mike

By the way, Kyle Armour has a thread on Twitter taking some issue with any alleged disagreement between sensitivity estimates derived ‘quasi-observationally’ and what CMIP5 is doing. Some of Nic’s minor points may be valid (I think the issue with volcanic forcing is)– to me, the real issue is the lack of robust interpretation regarding model performance that can be derived from these types of approaches.

To touch upon Eli’s so far ignored question (#3) on bridging the hierarchy of models: The issue at stake is the curvature in a plot of N vs. Ts, where N is basically the TOA imbalance (which decays to zero as equilibrium is approached) and Ts is the surface temperature. The curvature seems to arise not so much from non-linearity in feedbacks, but from evolving spatial patterns of ocean heat uptake and ‘realized warming.’

If I instantly quadruple CO2 in an experiment, I’d expect ocean heat uptake (OHU) to occur pretty uniformly in latitude for the initial few years, but then become pretty localized to the subpolar oceans after, say, year 100. Where the heat is actually stored is another matter…the Southern Ocean, for instance, appears to be taking up far more heat than is being stored there due to equatorward transport. The structure of the ocean circulation basically anchors this region to something like pre-industrial temperatures, at least until deep bottom water originating in the North Atlantic also warms.

There is a growing literature thinking about these OHU issues in detail, but if you think about OHU as a “forcing” on the surface temperature for a second (just like CO2 or solar, or anything else), one of the robust results seems to be that if you add CO2 and remove 1 W/m2 from the subpolar oceans, you offset far more warming than if you removed 1 W/m2 from an equal area of the tropics (see e.g., here). You can make contact with some of the concepts of ‘efficacy’ and also retain a global mean perspective (see e.g., Hansen’s work on the efficacy of climate forcing, or Kate Marvel’s work looking at how 1 W/m2 expresses itself in different ways for different forcings) by noting that efficacy > 1 for OHU that is maximized in subpolar areas.

Re: #35 mike

“… the record heat we are experiencing in the past three years correlates to the CO2e in atmosphere in 1987…” err, no.

2017-20 = 1997

“All the talk about emissions is interesting, but a distraction from the important number, which is the accumulation number.”

Actually, it’s the other way around, because the only actionable variable here is emissions. Also, nobody uses the expression “the accumulation number”; the accepted expression is “atmospheric concentration” (of GHG’s or CO2).

“We desperately need to stop the increase in the accumulation number, then we need to start moving that number back down, probably need to head back to 385 and below”

To “stop the increase in the accumulation number” means we are back to:

1. Peak emissions as soon as possible.

2. Decrease emissions as quickly as possible.

3. Reach zero net emissions as soon as possible.

As you can see, it’s all about emissions.

“to start moving that number back down” means negative net emissions. This is very, very, very far removed from the reality we are faced with today, but I am certain you have some ideas on how to achieve that. Let’s hear them.

34 – Cointegration is beside the point and does not help. Cointegration is not supposed to tell one about the causal direction or the lag relationship. Temperature increases cause CO2 increases (e.g., ocean outgassing), as well as vice versa, so in itself cointegration, and therefore correlation in levels, is not evidence of AGW. If there is cointegration, the tests will show cointegration when alternatively using many lags and leads of temperature and CO2. Also, I find that the cointegration tests here are ambivalent (you get cointegration with some tests, and specifications of each test, and not others).

In the end, correlations in levels are meaningless when determining lags, and you have to difference.

Try doing a levels correlation of temperature and CO2 the next year (or several years later). You get a huge positive correlation. I have talked to deniers who say this disproves AGW.

34 – Of course, the lag is in the feedbacks, negative and positive. Most AGW results from positive feedbacks, and it is my impression that positive feedbacks have longer lags than negative feedbacks.

I like the simple calculations that I did in post 24 because it dodges all the uncertainties about the feedbacks and about getting to an equilibrium. But there needs to be many more such calculations to determine if chance occurrences in the decades selected do not skew the estimates.

37 – The 20 year estimate is uncertain. The lag could be 30 years or more. The best eyeball comparison is between the rapid growth of CO2 production after WWII and the later rapid temperature gains in the 1970s.

– The accumulated CO2 level is more useful because the emissions data are less reliable (they are self-serving data given by governments) and because CO2 levels are affected by more than emissions. It’s the accumulated CO2 that causes the damage, not each year’s emissions.

39 – The 20 year lag is not an estimate in the paper I referred you to. It is a characteristic of the climate system that is evidenced by the models used in the paper. You run the simulations, and the lag is seen in the results. And yes, the models are probabilistic, but the uncertainty around the lag is in years, not in decades.

“The lag could be 30 years or more.” Any paper to support your “estimate”? Otherwise, it’s just bs.

“The accumulated CO2 level is more useful…” for what? For armchair climate amateurs posting vague dismissive comments on a climate website, perhaps. For policy makers and in international agreements where people have to deal with what is actionable, setting objectives in terms of GHG emissions is the only option available.

As the word “accumulated” implies, atmospheric CO2 concentration at any point in time is the result of *past emissions*, and since we don’t have a time machine or a magic wand to change the past, people in the real world have to deal with *future emissions*.

tm 38: Cointegration is beside the point and does not help.

BPL: It helps if you suggested it was a spurious correlation. You’re wrong. Deal with it.

41 – right, your correlation is not spurious in the Granger sense, provided that temperature and CO2 are cointegrated, which is uncertain.

As I said, cointegration is beside the point because a regression in levels is wrong because it does not deal with simultaneity and lag structure.

The lag in temperature change will depend on the feedback. Usually the lag is figured from Charney feedbacks, if I remember correctly. However, if others come into play, like net CO2 emissions from soils/permafrost and albedo change from melting ice sheets, the temperature rise can continue for much longer.

Re #43 – Mitch

“Usually the lag is figured from Charney feedbacks, if I remember correctly.”

The approximately 20-year lag (between atmospheric CO2 concentration change and reaching equilibrium temperature) is an emerging property (just like sensitivity) of the global climate system in the GCM models used in the paper I linked to above, if I understood it correctly.

Older models only included fast (Charney) feedbacks; more recent and complex ones do include some slow feedbacks (as far as I know; also note that new possibly significant feedbacks are being discovered all the time, making things… complicated).

As usual, we only have a single Earth and we are running the experiment on it right now, so models are the only way we can run multiple “experiments” and see what properties of the climate system we can figure out.

All models are wrong but some are useful – George Box

The ESS has a problem in that it assumes temperature changes are linear, whereas they have been exponential in recent times. The ESS for CO2 is the number of degrees rise in temperature for a doubling of CO2. The better measure is the percent rise in temperature for a doubling of CO2 – in other words, the elasticity.

This is especially important when comparing CO2 growth to temperature growth in the past century, and when looking at the lagged effect of CO2 on temperature (and there is a lag of decades). By a statistical artifact, the ESS becomes much larger the longer the lag (because the longer lag gives time for temperature to increase at an accelerated pace). In other words, the ESS is not useful for calculating the impact of CO2 growth on temperature.

Thus one should use the elasticity. I calculate an elasticity of 0.014 for the impact of CO2 growth on temperature (degrees in Kelvin). A recent 25% increase in CO2 translates into a 0.35% increase in temperature, or one degree. It is risky to extrapolate this beyond recent experience, but it is interesting to note that a doubling of CO2 would thus lead to a 1.4% increase in temperature, or about 4 degrees.
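The arithmetic in this comment can be checked with a short sketch. The elasticity of 0.014 is the commenter's figure. The baseline temperature of 288 K is an assumption added here (the comment does not state one), chosen as a round value for global mean surface temperature. The last lines are a side comparison, not part of the comment: they show what a strictly constant-elasticity (power-law) reading would give for a doubling.

```python
ELASTICITY = 0.014   # commenter's figure: % temperature change per % CO2 change
BASELINE_K = 288.0   # ASSUMED global mean surface temperature in Kelvin

def warming_linear(co2_pct_increase):
    """Linear reading used in the comment: % temperature rise = elasticity * % CO2 rise."""
    temp_pct = ELASTICITY * co2_pct_increase
    return temp_pct, BASELINE_K * temp_pct / 100.0

recent_pct, recent_k = warming_linear(25.0)      # the "recent 25% increase"
doubling_pct, doubling_k = warming_linear(100.0)  # a doubling is a 100% increase

print(f"25% CO2 rise: {recent_pct:.2f}% warmer, about {recent_k:.1f} K")
print(f"Doubling:     {doubling_pct:.2f}% warmer, about {doubling_k:.1f} K")

# Side comparison (not in the comment): if temperature scaled as CO2**elasticity,
# a doubling would multiply temperature by 2**0.014 rather than add 1.4%.
power_law_pct = (2.0 ** ELASTICITY - 1.0) * 100.0
power_law_k = BASELINE_K * power_law_pct / 100.0
print(f"Power-law doubling: {power_law_pct:.2f}% warmer, about {power_law_k:.1f} K")
```

Under the assumed baseline, the linear reading reproduces the comment's figures of roughly one degree and roughly four degrees. The power-law reading gives a smaller number for a doubling, which shows how sensitive the extrapolation is to the functional form.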

Re: #45 t marvell

What is the subtitle of this post by Dr. Gavin Schmidt, in bold above?

“You need to be careful in inferring climate sensitivity from observations.”

That is the case whether you are extrapolating from paleoclimate data or from any recent temperature dataset vs atmospheric CO2 concentration measurements (e.g., the Keeling curve).

46 – of course one must always be careful, whether modeling or doing regression analysis. There are lots of uncertainties and chances to make mistakes. Here, they do different things. Modeling studies lots of types of forcing, enters feedbacks and looks for an equilibrium centuries away. The regression simply looks at the outcome of adding more CO2, net of feedbacks and not including the direct effect of other forcings. I view the ESS models as academic exercises and the regression models as relevant to policy, since in practice the only policy being discussed is limiting CO2.

47 – “I view the ESS models as academic exercises…”

There is no such thing as an “ESS model”. Again, ESS and lag are “emerging properties” in GCM models. I refer you to past discussions of ESS here on realclimate.

“… and the regression models as relevant to policy…”

Regression models? Regression analysis is a fine tool for many things, but it’s of limited value to determine a relevant value of ESS, compared to GCM models.

“… since in practice the only policy being discussed is limiting CO2.”

Nope. While CO2 atmospheric concentration undeniably remains the main driver of climate change, CO2 is not the only GHG, and peaking and reducing CO2 emissions is not the ONLY policy being discussed. Climate change mitigation and adaptation policy discussions go way beyond discussing just CO2 atmospheric concentration numbers.

Sorry, but it seems you have got it all wrong.

“Others have identified the lags in the southern ocean (which warms more slowly than the northern hemisphere, and northern land in particular) as the source of this time dependence of feedbacks, and we’ve demonstrated that different forcings have subtly different impacts –”

It might help Peter Huybers and his colleagues if we understood more about the temperature response of the albedo of the calcite belt, and other biologically variable components of radiative equilibrium that impact SST in both the southern ocean and the arctic seas.

48 – my point is that the notion of ESS is wrong given the exponential growth of CO2 and temperature in recent decades. ESS assumes non-exponential growth of temperature. This causes estimates of the impact of CO2 on temperature to be understated if one assumes no lag or a short lag, and to be overstated if one assumes longer lags.

The consensus median of 2.5 ESS seems to be an understatement. Any embarrassment caused by underestimating is less than that caused by overestimating.