Confirmation bias and a profound lack of curiosity mark the latest ABC (Anything But Carbon) contrapalooza in DC this week, and a decade-old albedo error trips them up.

I occasionally dip into the contrarian-sphere to see if there is anything new that might be of actual interest. I am usually disappointed, and last week’s escapade was no different. The quality of the talks was pretty abysmal: bad slides, monotone reading of notes, and abundant errors, misunderstandings, fallacies and cherry-picks. If there was a theme, it was that everything was so complicated and uncertain that no-one can know anything. This is a notable contrast to previous outings, where everything was definitely due to the sun or ‘natural’ variability (anything but carbon remains the organizing principle).

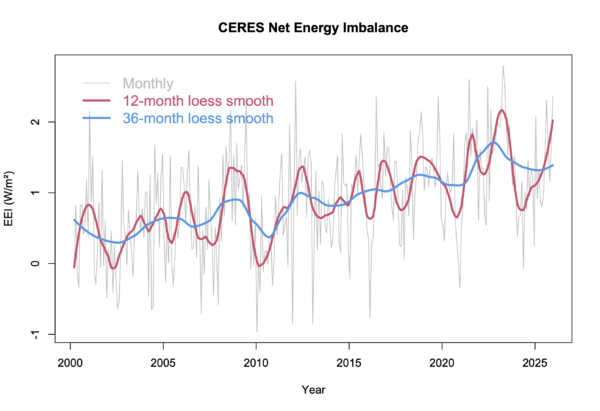

Multiple speakers (including Willie Soon and John Clauser) purported to be very irate that the CERES Earth’s energy imbalance (EEI) record is calibrated to the changes in the in situ heat content data (dominated by the ocean heat content changes). Quite why they were so exercised was a little mysterious, because their sources of information on this topic were the very papers that clearly explained why and how this is done (Loeb et al. (2009) and Loeb et al. (2018)). [Basically, the satellite data for the EEI do not have a good enough absolute calibration to be an independent estimate, and so the CERES EBAF product is adjusted to match the (much better characterized) in situ heat gain over July 2005 to June 2015, in a way that does not affect the trends.] Also, the EEI based on in situ data is apparently wrong because the AI told them so. Ok then.
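To see why anchoring the mean to the in situ estimate leaves trends untouched, here is a minimal sketch that treats the adjustment as a single constant offset. This is a deliberate simplification (the actual EBAF procedure is described in Loeb et al. (2009, 2018)), and the synthetic series, the 0.7 W/m² target and the function names are all hypothetical.

```python
"""Sketch: anchoring a biased EEI series to a reference mean with a constant offset.

Assumptions (hypothetical, for illustration only): the adjustment is a single
additive constant, and the data are synthetic, not actual CERES values.
"""


def linear_trend(t, y):
    """Ordinary least-squares slope of y against t."""
    n = len(t)
    t_mean = sum(t) / n
    y_mean = sum(y) / n
    num = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
    den = sum((ti - t_mean) ** 2 for ti in t)
    return num / den


def anchor_to_reference(series, start, stop, target_mean):
    """Shift the whole series so its mean over series[start:stop] equals target_mean.

    Returns the adjusted series and the constant offset that was applied.
    """
    window = series[start:stop]
    offset = target_mean - sum(window) / len(window)
    return [x + offset for x in series], offset


# Synthetic monthly EEI in W/m^2: index 0 = March 2000, with a large absolute
# bias standing in for the uncertain satellite calibration (made-up numbers).
months = list(range(240))
raw_eei = [4.5 + 0.004 * m for m in months]

# July 2005 is index 64 and June 2015 is index 183, so the window is [64, 184).
adjusted, offset = anchor_to_reference(raw_eei, 64, 184, target_mean=0.7)

print(f"offset applied: {offset:.3f} W/m^2")
print(f"trend before: {linear_trend(months, raw_eei) * 120:.3f} W/m^2 per decade")
print(f"trend after:  {linear_trend(months, adjusted) * 120:.3f} W/m^2 per decade")
```

Because the least-squares slope depends only on deviations from the mean, adding any constant changes the absolute level of the series but leaves its trend exactly as it was.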

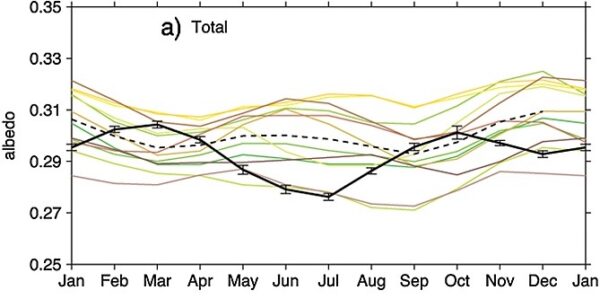

In both Soon’s and Clauser’s talks, a particular figure made an appearance: Fig. 11a from Stephens et al. (2015).

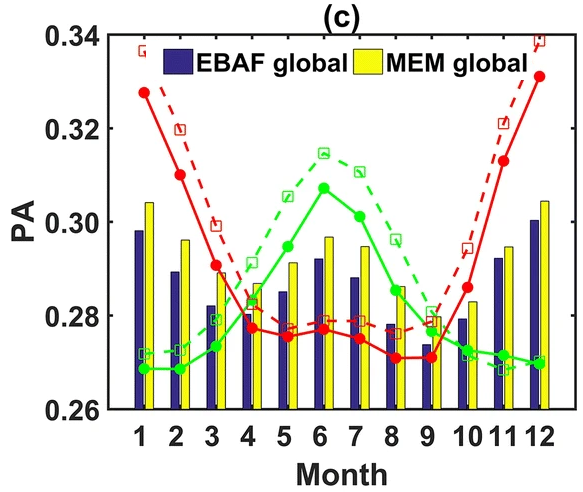

Unsurprisingly, this was used to claim that the CMIP5 models (and, by implication, all models) were terribly wrong, can’t be trusted etc. etc. Oddly, neither of them chose to show the comparison with the later CMIP6 models (Jian et al., 2020):

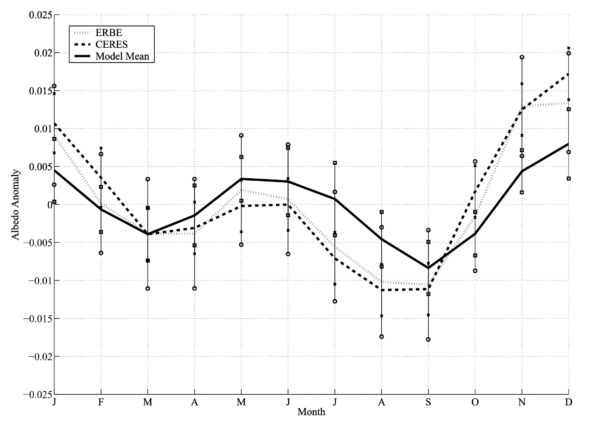

Or even the earlier CMIP3 models from Bender et al. (2006):

Well, it’s not so odd, since these comparisons are much more favorable to the models. But let’s look closer…

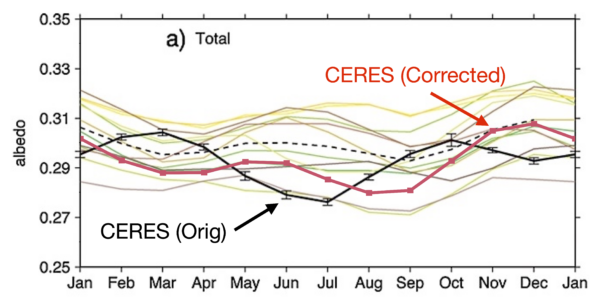

The CERES observations in the three plots do not agree at all! The 2015 figure has maximum albedo in March and October, while the other two have maxima in June and December: a two- or three-month phase shift. Something is wrong here. Fortunately, the CERES project has a very accessible website for downloading data, and it is trivial to get the incoming and reflected solar flux for every month. The albedo is just the ratio of the two, and averaging each calendar month over the record gives a climatology. The differences in the averaging periods make no visible difference, and the differences between EBAF versions are likely to be minor (though that is harder to check). Either way, the bottom line is that the CERES data in the 2015 figure are wrong, while those in the 2006 and 2020 papers are correct.
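The check described above is simple enough to sketch. The following assumes the monthly global-mean incoming and reflected solar fluxes have already been read from the download into (year, month, incoming, reflected) tuples; the data here are synthetic, with an invented albedo cycle peaking in June and December, and the function names are hypothetical.

```python
"""Sketch: a monthly albedo climatology from incoming and reflected solar flux.

Assumption: records are (year, month, incoming, reflected) tuples of monthly
global means; the values generated below are made up for illustration.
"""
import math
from collections import defaultdict


def albedo_climatology(records):
    """Average the monthly albedo (reflected / incoming) for each calendar month."""
    by_month = defaultdict(list)
    for _year, month, incoming, reflected in records:
        by_month[month].append(reflected / incoming)
    return {m: sum(v) / len(v) for m, v in sorted(by_month.items())}


def peak_months(clim):
    """Calendar months whose value exceeds both neighbours (wrapping Dec -> Jan).

    Assumes all twelve calendar months are present in clim.
    """
    months = sorted(clim)
    peaks = []
    for i, m in enumerate(months):
        prev_m = months[i - 1]
        next_m = months[(i + 1) % len(months)]
        if clim[m] > clim[prev_m] and clim[m] > clim[next_m]:
            peaks.append(m)
    return peaks


# Synthetic input: incoming flux peaking in January (perihelion) and a
# made-up semiannual albedo cycle with maxima in June and December.
records = []
for year in (2001, 2002):
    for month in range(1, 13):
        incoming = 340.0 + 11.0 * math.cos(2 * math.pi * (month - 1) / 12)
        albedo = 0.29 + 0.005 * math.cos(2 * math.pi * (month - 6) / 6)
        records.append((year, month, incoming, albedo * incoming))

clim = albedo_climatology(records)
print(peak_months(clim))  # [6, 12]
```

Taking the ratio month by month and then averaging follows the description in the text; taking the ratio of the calendar-month mean fluxes would be an alternative choice.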

We can speculate about what led to this (possibly the first month of data, March 2000, being treated as January?), but there are two immediate consequences. First, the CMIP5 models (like the CMIP6 and CMIP3 models) turn out not to be so bad: the phasing is fine, though the annual mean albedo varies a little from model to model. Second, the other panels in Fig. 11, Figs. 5a-c, and the related discussion in section 6 of Stephens et al. (2015) are likely affected as well. Despite the paper citing Bender et al. (2006) and Kato (2009) (see his Figure 1a), both of which have the phasing correct, the offset was not addressed. The Stephens et al. paper has since been cited over 240 times, and it seems odd that no-one else has noticed this issue [Aside: if you know of a reference that does make this point, please let me know in the comments].
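The speculated mechanism is easy to illustrate. The sketch below takes a climatology with maxima in June and December (synthetic values), lays it out as a continuous monthly series starting in March 2000, and then averages that series as though it had started in January. The function names and values are hypothetical, and this only demonstrates the mechanism, not what actually happened in Stephens et al. (2015).

```python
"""Sketch: how mislabelling the start month shifts a seasonal climatology.

Hypothetical, synthetic example; it says nothing definitive about the
cause of the offset in the 2015 figure.
"""
import math


def monthly_series(clim, start_month, n_months):
    """Build a series from a 12-month climatology, beginning at start_month (1-12)."""
    return [clim[(start_month - 1 + i) % 12 + 1] for i in range(n_months)]


def climatology_from_series(series, assumed_start_month):
    """Average a series by calendar month, labelling the first value assumed_start_month."""
    sums = {m: 0.0 for m in range(1, 13)}
    counts = {m: 0 for m in range(1, 13)}
    for i, value in enumerate(series):
        m = (assumed_start_month - 1 + i) % 12 + 1
        sums[m] += value
        counts[m] += 1
    return {m: sums[m] / counts[m] for m in sums}


def peak_months(clim):
    """Calendar months whose value exceeds both neighbours (wrapping Dec -> Jan)."""
    return [m for m in range(1, 13)
            if clim[m] > clim[(m - 2) % 12 + 1] and clim[m] > clim[m % 12 + 1]]


# Made-up "true" albedo climatology with maxima in June and December.
true_clim = {m: 0.29 + 0.005 * math.cos(2 * math.pi * (m - 6) / 6) for m in range(1, 13)}

# Fifteen years of monthly data beginning in March 2000.
series = monthly_series(true_clim, start_month=3, n_months=180)

correct = climatology_from_series(series, assumed_start_month=3)
mislabeled = climatology_from_series(series, assumed_start_month=1)

print(peak_months(correct))     # [6, 12]
print(peak_months(mislabeled))  # [4, 10]
```

In this toy case the shift is exactly two months, putting the maxima in April and October; the 2015 figure shows March and October, so this remains, as noted above, speculation.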

Why now?

Interest in the EEI is obviously growing, both as a function of the increasing length of the CERES timeseries and the fact that the EEI is growing. Even the WMO is elevating this metric in importance. So one might expect the contrarian-sphere to try and undermine it – that’s just what they do.

But here is the difference between doing real science and what was on show at the DC contrapalooza. Scientists are curious about what is actually going on. Given a discrepancy, they want to understand what is happening. The changes in albedo over the CERES record are indeed interesting and a little challenging to explain (the CERESMIP project is looking into this in more detail), but the scientists’ goal is to dig deeper until the picture becomes clear. For Soon and Clauser, discrepancies are just weapons. They don’t care that something doesn’t look right; in fact, they want it to look wrong, regardless of whether it is an error in an old paper, an ambiguous statement that they can read uncharitably, or a genuine issue. Thus the chances of them checking into this themselves are zero, despite their frequent claims that they want to ‘follow the data’.

Do I expect everyone to check every figure in every paper they cite before using it in a presentation? No. But this example illustrates how important open science is. When something like this comes up, people should be able to check quickly that the label and the contents match. It also highlights the danger of leaving issues uncorrected in the literature. I don’t know if this issue has already been brought to the attention of the journal or the authors, but even papers from a decade ago get cited and used (see here for another example). We owe it to everyone (yes, even the contrarians!) to make sure that the literature is as free of error as we can make it.

References

- N.G. Loeb, B.A. Wielicki, D.R. Doelling, G.L. Smith, D.F. Keyes, S. Kato, N. Manalo-Smith, and T. Wong, "Toward Optimal Closure of the Earth's Top-of-Atmosphere Radiation Budget", Journal of Climate, vol. 22, pp. 748-766, 2009. http://dx.doi.org/10.1175/2008JCLI2637.1

- N.G. Loeb, D.R. Doelling, H. Wang, W. Su, C. Nguyen, J.G. Corbett, L. Liang, C. Mitrescu, F.G. Rose, and S. Kato, "Clouds and the Earth’s Radiant Energy System (CERES) Energy Balanced and Filled (EBAF) Top-of-Atmosphere (TOA) Edition-4.0 Data Product", Journal of Climate, vol. 31, pp. 895-918, 2018. http://dx.doi.org/10.1175/JCLI-D-17-0208.1

- G.L. Stephens, D. O'Brien, P.J. Webster, P. Pilewski, S. Kato, and J. Li, "The albedo of Earth", Reviews of Geophysics, vol. 53, pp. 141-163, 2015. http://dx.doi.org/10.1002/2014RG000449

- B. Jian, J. Li, Y. Zhao, Y. He, J. Wang, and J. Huang, "Evaluation of the CMIP6 planetary albedo climatology using satellite observations", Climate Dynamics, vol. 54, pp. 5145-5161, 2020. http://dx.doi.org/10.1007/s00382-020-05277-4

- F.A. Bender, H. Rodhe, R.J. Charlson, A.M.L. Ekman, and N. Loeb, "22 views of the global albedo—comparison between 20 GCMs and two satellites", Tellus A: Dynamic Meteorology and Oceanography, vol. 58, pp. 320, 2006. http://dx.doi.org/10.1111/j.1600-0870.2006.00181.x

- S. Kato, "Interannual Variability of the Global Radiation Budget", Journal of Climate, vol. 22, pp. 4893-4907, 2009. http://dx.doi.org/10.1175/2009JCLI2795.1
