In the Paris Agreement, just about all of the world’s nations pledged to “pursue efforts to limit the temperature increase to 1.5 degrees Celsius above pre-industrial levels”. On Saturday, the top climate diplomats from the U.S. and China, John Kerry and Xie Zhenhua, reiterated in a joint statement that they want to step up their climate mitigation efforts to keep that goal “within reach”.

But is that still possible? Here are two graphs.

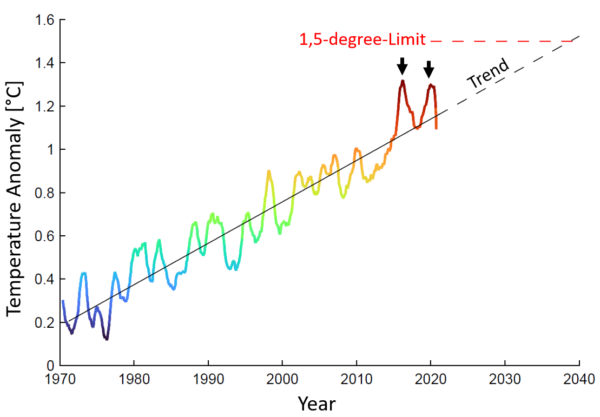

The first graph shows the global temperature trend. Warming has progressed essentially linearly for fifty years in response to increasing CO2 emissions. Rising emissions do accelerate the increase of CO2 in the atmosphere, but radiative forcing (which causes the warming) increases only with the logarithm of the CO2 concentration, and has therefore risen roughly linearly since the 1970s. Any apparent acceleration of warming over the last decade does not amount to a significant trend change. It is linked to two El Niño events in recent years, which are part of natural variability. Does anyone remember the discussion about the supposed “warming pause” in the early 2000s? That was never statistically significant either, nor did it signify a trend change.
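The logarithmic relationship can be illustrated with a small numerical sketch. It uses a commonly cited simplified expression for CO2 forcing, roughly 5.35 · ln(C/C0) W/m². The coefficient, the 280 ppm baseline, and the concentration path (0.5 % growth per year from 330 ppm) are assumptions chosen for illustration and do not come from the graph.

```python
import math

def co2_forcing(c_ppm, c0_ppm=280.0, alpha=5.35):
    """Approximate CO2 radiative forcing in W/m^2 relative to c0_ppm.

    Simplified logarithmic expression; alpha and c0_ppm are assumed values.
    """
    return alpha * math.log(c_ppm / c0_ppm)

# Hypothetical concentration path: steady 0.5 % growth per year from 330 ppm,
# sampled every ten years over five decades.
years = list(range(0, 51, 10))
conc = [330.0 * 1.005 ** t for t in years]
forcing = [co2_forcing(c) for c in conc]

# Decade-to-decade increments of concentration and of forcing.
conc_steps = [b - a for a, b in zip(conc, conc[1:])]
forcing_steps = [b - a for a, b in zip(forcing, forcing[1:])]

for t, c, f in zip(years, conc, forcing):
    print(f"year +{t:2d}: {c:6.1f} ppm, forcing {f:4.2f} W/m^2")
```

In this idealized case the concentration steps grow from decade to decade, while the forcing steps stay the same size: an accelerating concentration translates into a linear increase in forcing.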

So if emissions continue to grow, we expect a further roughly linear increase in temperature, which would then exceed 1.5 degrees around 2040. If we lower emissions, the trend will flatten and become roughly horizontal as we reach zero emissions. These observational data therefore do not argue against the possibility of still keeping warming below 1.5 °C.
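The linear extrapolation behind the "around 2040" estimate amounts to simple arithmetic, sketched below. The input values (a trend-line warming of about 1.1 °C in 2020 and a rate of about 0.2 °C per decade) are rough assumptions for illustration, not numbers read off the graph, and the function name is hypothetical.

```python
def year_threshold_crossed(temp_now, year_now, rate_per_decade, threshold=1.5):
    """Year in which a linear warming trend reaches the threshold.

    temp_now is the trend-line warming (deg C above pre-industrial) in year_now;
    rate_per_decade is the assumed constant warming rate in deg C per decade.
    """
    if rate_per_decade <= 0:
        raise ValueError("a flat or falling trend never reaches the threshold")
    decades_left = (threshold - temp_now) / rate_per_decade
    return year_now + 10.0 * decades_left

# Assumed, illustrative inputs (not taken from the article's data).
crossing = year_threshold_crossed(temp_now=1.1, year_now=2020, rate_per_decade=0.2)
print(f"1.5 degrees reached around {crossing:.0f}")
```

A slightly higher starting value or rate moves the crossing a few years earlier, which is why the text only says "around" 2040.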

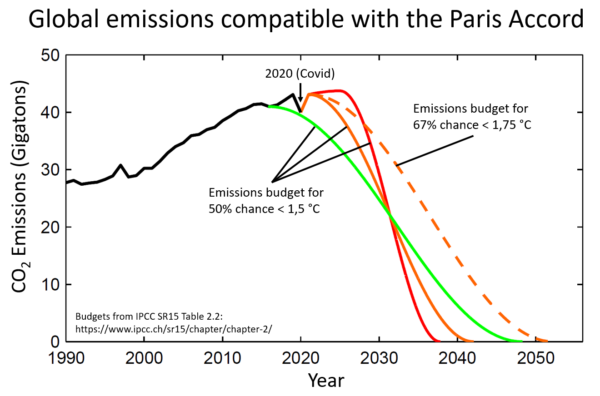

The second graph shows global CO2 emission trajectories with which we can still limit warming to 1.5 °C, at least with a 50:50 probability. That is, given the uncertainties, these trajectories could also land us at 1.6 degrees, or, with a bit of luck, slightly below 1.5 degrees. The core conclusions:

- It is not yet impossible to keep warming below 1.5 °C.

- This requires roughly a halving of global CO2 emissions by 2030 (as already stated in the IPCC 1.5 degree report).

- If the world dithers for another ten years before emissions fall, it will no longer be possible (red curve).

It should be noted that I have not assumed net-negative emissions here. Many scenarios assume that we first emit too much and that our children then have to pull CO2 out of the atmosphere after mid-century – I think this is not very realistic and also ethically questionable. I think we will probably not be able to achieve more than reducing global emissions to net zero. Even that would require CO2 sinks to compensate for unavoidable residual emissions, e.g. from agriculture.

Conclusion: Limiting warming to 1.5 degrees is still possible and, from my point of view, urgently needed to avert catastrophic risks, but it requires immediate and decisive measures. I am curious to see what the climate summit scheduled by US President Joe Biden will bring in the coming days!

Link

Fact check by Climate Analytics of the claim that we can no longer limit warming to 1.5 °C.

This article originally appeared in German at KlimaLounge.

Guest post by Veronika Huber
