As previewed last weekend, I spent most of last week at a workshop on Climate Sensitivity hosted by the Max Planck Institute at Schloss Ringberg. It was undoubtedly one of the better workshops I’ve attended – it was focussed, deep and with much new information to digest (some feel for the discussion can be seen from the #ringberg15 tweets). I’ll give a brief overview of my impressions below.

As we’ve discussed previously, there are multiple classes of observational data that could provide some constraints on how warm the planet will get as CO2 increases (either on a multi-decadal timescale (TCR) or for the long term equilibrium (ECS)). Principally, there is paleo-climate data from previous quasi-equilibria like the Last Glacial Maximum or Eocene; evidence from the instrumental trends since the 19th Century; and climatological observations that correlate to longer term responses (either in the mean, seasonally or over interannual variations). Each class of data has its own problems, either in terms of observational quality, its relationship to sensitivity, or the level of simplicity of the underlying model.

The workshop spent a lot of time examining the explicit (and implicit) assumptions that underlie the methods and constructively criticising all of them to see how they can be best reconciled (because we are just looking for one number after all). Andy Dessler recorded his own talk on short term constraints and posted it already. Slides from the other talks are posted on the MPI website.

There were two major themes that emerged across a lot of the discussions: the stability of the basic ‘energy balance’ equation ($\Delta F = \lambda \Delta T + \Delta Q$) that defines the sensitivity, $\lambda$, to zeroth order; and the challenge of estimating cloud feedbacks from process-based understanding. The connection occurs because the clouds are the cause of the biggest variation in sensitivity across GCMs.

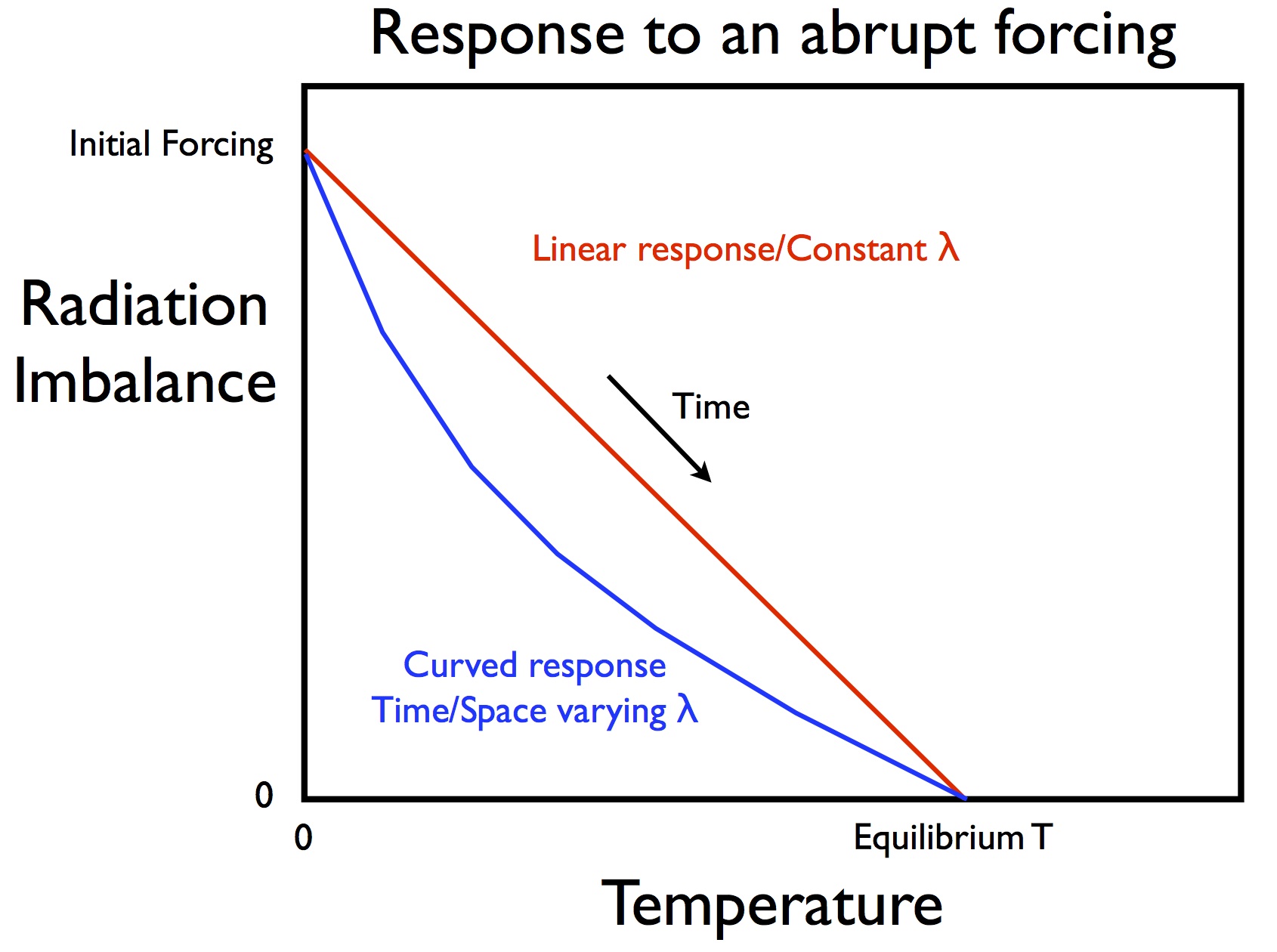

The first topic was triggered by extensive evidence in models that there are structural variations associated with changes of base state, time variations, spatial variations and the different physics of each forcing that imply $\lambda$ needs to be thought of as more than a constant. For instance, plotting $\Delta Q$ against $\Delta T$ in an experiment with an abrupt forcing (such as 4xCO2) should give a straight line (red) if $\lambda$ were constant, but instead there is almost always some curvature implying that temperature changes more for the same forcing change after a century or so than at the start (blue line).

That curvature implies that applying a strictly ‘constant $\lambda$’ model to a limited set of observations (such as the trends over the last hundred years) is likely to bias any estimate of the sensitivity. Quantification of these issues is ongoing. Without a resolution though (or a set of reasonable corrections), efforts to combine multiple constraints without taking this into account are going to be flawed.
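To make the bias concrete, here is a minimal Python sketch with purely synthetic numbers. It assumes the zeroth-order energy balance can be written as ΔF = λΔT + ΔQ, so that under an abrupt, fixed forcing ΔQ = ΔF − λΔT. The forcing value, the way λ weakens with warming, and the 2 K cutoff for the "short record" are all hypothetical choices for illustration, not output from any model shown at the workshop.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = a + b * xs; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    return mean_y - b * mean_x, b


def equilibrium_from_fit(xs, ys):
    """Extrapolate the fitted line to delta_q = 0 and return that warming."""
    a, b = fit_line(xs, ys)
    return -a / b


FORCING = 5.0  # W/m^2, hypothetical abrupt forcing

# Hypothetical run: warming samples from 0 to 4.5 K in 0.05 K steps.
delta_t = [0.05 * i for i in range(91)]
# Hypothetical feedback parameter that weakens as the run warms (W/m^2/K).
lam = [1.5 - 0.1 * t for t in delta_t]
# Energy balance rearranged: delta_q = forcing - lambda * delta_t.
delta_q = [FORCING - l * t for l, t in zip(lam, delta_t)]

# "Short record": only the samples with less than 2 K of warming.
early = [(t, q) for t, q in zip(delta_t, delta_q) if t < 2.0]
early_estimate = equilibrium_from_fit([t for t, _ in early], [q for _, q in early])
full_estimate = equilibrium_from_fit(delta_t, delta_q)
true_equilibrium = 5.0  # root of FORCING - (1.5 - 0.1 * T) * T = 0 for these numbers

print(f"early fit: {early_estimate:.2f} K, full fit: {full_estimate:.2f} K, "
      f"true: {true_equilibrium:.2f} K")
```

With these made-up numbers, a straight line fitted to the early part of the run extrapolates to less warming than a fit to the whole run, and both fall short of the true equilibrium. That is the sense in which a strictly constant-λ fit to a limited record can bias the estimate.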

The #ringberg15 blackboard. (Spoiler: cloud feedbacks are complicated) pic.twitter.com/DZ4WPOJLGl

— Gavin Schmidt (@ClimateOfGavin) March 26, 2015

The cloud feedback discussion was extremely interesting since there are a multitude of different theorised effects depending on which clouds are being discussed (Mark Zelinka and Graeme Stephens did a great job in particular in explaining these effects). The variation in climate sensitivity in models seems to be dominated by the simulations of low clouds (which have a net cooling effect on the climate) and their tendency to disappear as the climate warms. Whether this can be independently constrained in the observations is unclear.

The conversations around these issues got into multiple connected areas, including aerosol forcings, observational uncertainty, climate model tuning and independence, the nature of probability, Bayesian updating, detection and attribution, and internal variability. It looks like there will be some interesting upcoming papers on many of these aspects that will help clarify matters.

While the workshop wasn’t designed to produce a new assessment of the evidence, we did spend time specifying the problems there would be if equilibrium sensitivity was less than 2ºC or greater than 5ºC. Specifically, what would have to be true for all the evidence to fit? This was useful in underlining the challenge in shifting or constraining the ‘classic’ range by very much.

More generally, the workshop was a great example of how a diverse group (in attitude, background, temperament as well as the more usual classes) can tackle complex and difficult problems in a situation with only minimal distractions. Some of these discussions were (to say the least) quite intense, but were all done in a spirit of constructive collegiality so nobody came to blows ;-).

As an aside, it also underlines the problems with a move towards more virtual conferences – I know of no online methodology to replicate the intensity or depth of the conversations, sustained over a week, that we had here. Much in science can certainly be done fine by video conferencing (including a PhD defence I ‘attended’ in Paris, or recent testimony to the Texas Legislature that I gave by Skype), but the experience at this kind of focused workshop is really the hardest challenge to emulate.

Sure. Energy is required to perform that work. It must come from somewhere. However…

If the level is raised via thermal expansion (as opposed to, e.g., via melting land ice), then more or less yes, depending upon which layers expanded how much. But using your figures, it takes 58,800J/m^2 to do that, which means that a flux of 4W/m^2 could accomplish it in 14,700s, or 0.17d. So it is a very small constraint.
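The arithmetic in that reply is easy to check. A minimal Python sketch, taking the 58,800 J/m^2 and 4 W/m^2 figures from the comment as given:

```python
ENERGY_PER_AREA = 58_800.0  # J/m^2, figure quoted in the comment
FLUX = 4.0                  # W/m^2, i.e. J/s per m^2
SECONDS_PER_DAY = 86_400.0

# Time needed for the flux to deliver the quoted energy.
seconds_needed = ENERGY_PER_AREA / FLUX
days_needed = seconds_needed / SECONDS_PER_DAY

print(f"{seconds_needed:.0f} s, or {days_needed:.2f} days")
```

This only confirms the division; whether 58,800 J/m^2 is the right energy for the expansion in question is a separate matter that the sketch does not address.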

48, Steve Fish: This is pretty basic stuff.

It is pretty basic stuff, as were my question and calculation. Given the information that is available now on flows of energy from surface to troposphere, how much of an increase in surface temperature can be driven by a 4 W/m^2 increase in downwelling LWIR at the surface? You get somewhat different answers depending on whether you use the Stephens et al flows or the Trenberth et al flows, but they are not a lot different.

47, Kevin McKinney: The surface radiates ~400 Wm2, per the updated K & T diagram. The ‘non-radiative processes’ amount to about 1/5 of that.

Using the figure of about 400 W/m^2 I calculated that an increase in mean temperature from 288K to 289K would increase the upward radiation by about 4 W/m^2. That would be absorbed throughout the atmosphere, resulting in some increase in downwelling LWIR, but not very much. The increases in the SH and LH go directly to the mid-upper troposphere, and very little of that would be radiated back as well. So I think my calculation of the current climate sensitivity is not too bad.

At the surface, the net of upwelling LWIR-downwelling LWIR is smaller than the net of upward transfer of LH + SH.

Matthew. You are rediscovering climate sensitivity in the absence of feedbacks. But in the real world, there are feedbacks. Water vapor feedback, whereby increased temperatures lead to more water vapor in the atmosphere, increases downwelling radiation as well. I believe that effect alone roughly doubles the classic sensitivity one would expect from naively using Stefan-Boltzmann. The water vapor feedback is stronger near the equator than near the poles too, and increases with temperature.

Matthew, you are asking interesting questions, and really digging in. However, you seem to be working from the old ‘Callendarian’ viewpoint of warming being driven by DWIR at the surface. He thought of it that way in part because in the 30s and 40s of the last century, there was obviously no satellite data!

My understanding of current thinking is that GE is primarily driven by radiation at the effective radiating level (ERL).

http://www.aos.wisc.edu/~aos121br/radn/radn/sld012.htm

That’s where, as Steve said, energy can be radiated out of the system. What happens is that ERL rises as GHG concentrations increase. Because ERL is below the tropopause, that means radiative efficacy to space drops, hence less energy leaves the system, which warms in response. This in turn increases radiative efficacy again–Planck feedback–and voila, a new, slightly warmer equilibrium temperature. That warming is propagated through the entire system–eventually. But the lapse rate tends to constrain things in the troposphere, if I’ve got this more or less right, so the atmospheric response should be fairly rapid.

Of course, the thermodynamic sums still have to add up, so your project may not be dead in the water. But I don’t think it’s driven as you seem to be thinking of it.

I’d just add that Chris Colose has written the most about this aspect of things here on RC. Might be worth looking up some of those old comments.

@50 Huh? Sea level rise occurs due to both temperature increase and glacial melting. In the latter case, the water has gravitational potential energy. It is also relatively fresh, and so less dense than the deep ocean. In the case of thermal expansion, this depends on the detailed temperature profiles before and after. I don’t see a meaningful constraint coming via this approach.

54, Thomas: You are rediscovering climate sensitivity in the absence of feedbacks. But in the real world, there are feedbacks.

No. I am computing (some of) the energetic costs of warming the surface.

55, Kevin McKinney: However, you seem to be working from the old ‘Callendarian’ viewpoint of warming being driven by DWIR at the surface.

That is possible. But the 4 W/m^2 is quoted in recent accounts (such as Atmosphere, Clouds, and Climate by David Randall). If the global surface is warmed by CO2 accumulating in the troposphere, there has to be a process transferring that energy from the troposphere to the surface. By itself, accumulation of CO2 in the troposphere does not reduce any of the rates of surface cooling.

56, Ray Ladbury: In the case of thermal expansion, this depends on the detailed temperature profiles before and after.

Yes it does. Without a convincing calculation, I do not see it one way or another.

In the Stephens et al diagram ~510.6 Wm-2 of radiation is absorbed by the surface of the earth. ~510 leaves the surface, and .6 stays behind.

Ice is melting. Water is expanding. Surface temperature is going up, quite rapidly over the last 4-plus years, and water below the surface is warming.

Matthew needs to see the calculation for how .6Wm-2 is accomplishing all of this.

~510.6 absorbed:

165 SW plus 345.6 LW = 510.6

~510 leaving the surface:

398 plus 88 plus 24 = 510
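For anyone who wants to check those sums, here is a minimal Python sketch using only the numbers quoted in this comment. The labels on the individual terms are my reading of the diagram (an assumption), and the conversion to joules per year is just the imbalance multiplied by the number of seconds in a year.

```python
# Fluxes in W/m^2 as quoted in the comment above; labels are assumed.
absorbed_by_surface = {"shortwave": 165.0, "longwave_down": 345.6}
leaving_surface = {"longwave_up": 398.0, "latent_heat": 88.0, "sensible_heat": 24.0}

total_in = sum(absorbed_by_surface.values())
total_out = sum(leaving_surface.values())
imbalance = total_in - total_out  # W/m^2 retained at the surface

SECONDS_PER_YEAR = 365.25 * 86_400.0
joules_per_m2_per_year = imbalance * SECONDS_PER_YEAR

print(f"in: {total_in:.1f}, out: {total_out:.1f}, imbalance: {imbalance:.1f} W/m^2")
print(f"retained energy: {joules_per_m2_per_year:.3e} J/m^2 per year")
```

The sketch only restates the bookkeeping; it says nothing about how that retained energy is split between melting ice, warming water and warming the surface.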

Re- Comments by Matthew R Marler

I finally found full text access to the Stephens et al (2012) article and now I think I understand what you are saying. I apologize for hassling you about this. It would have been helpful to have had the paper earlier- http://users.clas.ufl.edu/prwaylen/GEO2200 Readings/Readings/Radiation balance/An update on Earth%27s energy balance in light of latest global observations.pdf -. Your problem, as I see it, involves how sensible and latent heat from the earth surface integrates with the overall radiation balance.

Here is my version. If one subtracts the all sky long wave (LW) emissions back to the earth surface from the upwelling surface emission of LW, there is only a 53 W (watts per square meter) difference, while sensible heat (SH) and latent heat (LH) accounts for 112 W suggesting that this is more than two times as large. However, the total outgoing LW to space is 239 W and if SH & LH radiated directly to space it would be less than half. Further, because surface emission of SH & LH are low troposphere LW emitting phenomena, it is a large contributing source for the all-sky emission back to the surface (e.g. LW back to the surface is about 87% of LW emission from the surface) which further reduces SH & LH to about one eighth of the LW emissions to space.

Now, does this sound like gobbledygook to you? It does to me and I wrote it. I trust the practicing climate scientist to figure this stuff out for me.

Steve

Re- me on- 8 Apr 2015 @ 11:02 AM, ~#59

Sorry about the link to the Stephens et al paper. Just copy and paste the URL including everything from http through .pdf into your browser for the full text article. Steve

Steve Fish: Further, because surface emission of SH & LH are low troposphere LW emitting phenomena,

I think that statement is wrong. The SH and LH are conveyed to the upper troposphere, hence contribute little to the total downwelling IR at the surface. If the increases in SH, LH, and IR transfer from the surface are approximately what I calculated, how much will the increase in downwelling LWIR at the surface be?

Hassling is valuable. You might “hassle” me out of my tentative belief that this is an important calculation of a limit to surface warming.

With a 1C increase in surface temperature, what will be the net change in the rate of cooling of the Earth surface, given what we know about the flows and other empirical knowledge and model output? Romps et al calculated a 10% increase in CAPE per 1C of warming; estimates of increased rainfall range from 2% to 7% per 1C; radiation from the surface follows closely the Stefan-Boltzmann law. What is their net effect?
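As a rough illustration of the sum being asked about, here is a minimal Python sketch. It takes the surface fluxes listed earlier in this thread (398, 88 and 24 W/m^2), scales the longwave term as T^4, and assumes the latent heat flux scales with the quoted 2% to 7% rainfall increase per 1C. That last scaling, and holding sensible heat fixed, are assumptions made for the sketch; the thread gives no figure for how sensible heat changes.

```python
# Upward surface fluxes in W/m^2, as listed earlier in the thread.
LW_UP = 398.0
LATENT = 88.0
SENSIBLE = 24.0  # held fixed here (assumption: no scaling is given in the thread)

T0, T1 = 288.0, 289.0  # K, the 1C warming discussed above


def t4_change(flux, t0, t1):
    """Change in a flux that scales as T**4 (Stefan-Boltzmann) for warming t0 -> t1."""
    return flux * ((t1 / t0) ** 4 - 1.0)


d_lw = t4_change(LW_UP, T0, T1)
# Assumption: latent heat flux rises in proportion to rainfall (2% to 7% per 1C).
d_lh_low = 0.02 * LATENT
d_lh_high = 0.07 * LATENT

total_low = d_lw + d_lh_low
total_high = d_lw + d_lh_high

print(f"longwave: +{d_lw:.2f} W/m^2")
print(f"latent:   +{d_lh_low:.2f} to +{d_lh_high:.2f} W/m^2")
print(f"total:    +{total_low:.2f} to +{total_high:.2f} W/m^2 (sensible heat unchanged)")
```

This covers only the upward side of the surface budget. It does not include any change in downwelling radiation, so on its own it cannot give the net change in the rate of surface cooling.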

All of this quibbling about how the surface prefers to lose energy to the atmosphere seems well beside the point.

To take one extreme limit, in a very hot atmosphere the net infrared radiation is close to zero at the surface, because the surface is radiating like Ts**4 and the atmosphere is back-radiating like Ta**4 (since low-level emissivity would be near one in a very moist case, and Ta wouldn’t be very different than Ts). One would then infer that the net infrared radiation is not the predominant energy loss term at the surface, which might be true, but it’s not clear to me why this is supposed to be an important realization in this context. For many purposes, knowing precisely which term in the surface budget is doing the transfer to the atmosphere is not necessary.

Of course, the surface energetics come into play more explicitly for a lot of problems (especially for precipitation and temperature over many land surfaces), and must be/are being modeled in more comprehensive experiments.

In such a hot regime described above, adding CO2 also wouldn’t really affect the downwelling IR to the surface much, since the low levels are already radiating like a blackbody. Matthew’s formulation of the climate problem is to think of the surface budget in this sense, by adding CO2 while keeping the tropospheric temperature unchanged and playing mental games about how the surface fluxes are supposed to end up closing, and then inferring climate sensitivity.

This thinking gets convoluted quickly, and doesn’t respect the whole column (TOA) energy budget, since adding CO2 still warms the whole troposphere by virtue of the fact that the whole planet is now radiating less efficiently to space than before and so Ta**4 still acts to increase the downwelling radiation to the surface, which is not a *direct* CO2 effect, nor is it how we define “radiative forcing.” The strength of the greenhouse effect is basically G=σTs**4 – OLR, it’s not a ratio of radiative and non-radiative surface fluxes.
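For a sense of scale, the G=σTs**4 – OLR expression can be evaluated directly. A minimal Python sketch, assuming a surface temperature of 288 K and an outgoing longwave flux of 239 W/m^2 (both figures appear elsewhere in this thread) and a surface emissivity of one:

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def greenhouse_effect(surface_temp_k, olr):
    """G = sigma * Ts**4 - OLR, in W/m^2, for a surface emitting as a blackbody."""
    return SIGMA * surface_temp_k ** 4 - olr


surface_emission = SIGMA * 288.0 ** 4
g = greenhouse_effect(288.0, 239.0)

print(f"surface emission: {surface_emission:.1f} W/m^2, G: {g:.1f} W/m^2")
```

Note that G here is built from the surface emission and the top-of-atmosphere flux only; none of the non-radiative surface terms enter it.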

So, I admittedly don’t get Matthew’s point. I think he’s framing everything incorrectly to begin with, but like I said before, we’re fundamentally interested in the column temperature adjustment that is demanded in order for the system to radiate an equal (but opposite) amount of energy as that of the original forcing so we can balance our system again. That is, the radiative forcing (RF) looks something like RF=-λΔT, where λ is a measure of the radiative restoring efficiency in W/(m2 K). You can’t just think about surface fluxes to close the problem.

“…accumulation of CO2 in the troposphere does not reduce any of the rates of surface cooling.”

Sure it does. Cooling rate is the net of all processes, and the whole troposphere is warmer, not to mention optically thinner in IR.

Kevin-san,

More CO2 would make the atmosphere optically thicker, not thinner.

62, Chris Colose: To take one extreme limit, in a very hot atmosphere the net infrared radiation is close to zero at the surface, because the surface is radiating like Ts**4 and the atmosphere is back-radiating like Ta**4 (since low-level emissivity would be near one in a very moist case, and Ta wouldn’t be very different than Ts).

I am working with the atmosphere and surface as they are, and considering the effects of a small increase in surface temperature.

This thinking gets convoluted quickly, and doesn’t respect the whole column (TOA) energy budget, since adding CO2 still warms the whole troposphere by virtue of the fact that the whole planet is now radiating less efficiently to space than before and so Ta**4 still acts to increase the downwelling radiation to the surface, which is not a *direct* CO2 effect, nor is it how we define “radiative forcing.”

While my focus is on the flux changes at the surface, I am not neglecting the whole column. I am building on a calculation by Romps et al of a 10% increase in CAPE per 1C of warming, and including the transfer of LH from the surface to the upper troposphere. I do not treat TOA as the “whole” column.

That is, the radiative forcing (RF) looks something like RF=-λΔT, where λ is a measure of the radiative restoring efficiency in W/(m2 K). You can’t just think about surface fluxes to close the problem.

I am not trying to “close” a problem other than to calculate the change in the upward surface fluxes as the Earth surface warms. If my calculation is reasonably accurate, then a new problem arises: What is the constraint on surface warming imposed by those changes? O’Gorman et al titled their paper “Energetic Constraints on Precipitation Under Climate Change”. My calculation could be called “Energetic Constraints on Surface Warming Given Precipitation Change, Radiative Change, and Advection/Convection Change”.

What would your calculation be? Given a 1C increase in mean Earth surface temperature, what would the change in upward energy fluxes be? The question assumes that working with means is accurate to perhaps the first significant figure; greater accuracy requires looking at the distribution of changes across varieties of surface features in the latitude bands. Almost everyone does work with means, but O’Gorman et al do warn that the increase in precipitation with temperature may be different on different surfaces. You do not have to get my point in order to make the calculation.

JCH: Ice is melting. Water is expanding. Surface temperature is going up, quite rapidly over the last 4-plus years, and water below the surface is warming.

Matthew needs to see the calculation for how .6Wm-2 is accomplishing all of this.


That is basically what I need, but add in the increase in the energy flow in the precipitation rate increase (Romps et al calculate that at 2% per 1C; O’Gorman et al give a range up to 7% per 1C), and the increase in the advection/convection energy flow. I am looking at the changes in the surface fluxes, which do not necessarily exactly equal the changes in the TOA fluxes over all intervals of time (hence surface and troposphere can warm at different rates until something like an approximate steady-state results.)

> If the global surface is warmed by CO2 accumulating in the troposphere

It doesn’t; it accumulates in the atmosphere.

CO2 is a well mixed gas, all the way up.

You can’t know that without knowing also the changes in the downward fluxes, because those change the surface’s temperature and therefore the upward fluxes (yes, it’s recursive). And you can’t know the downward flux changes without (at the very least) closing the energy budgets for the surface, the atmosphere, and the TOA. Try starting with Kiehl & Trenberth 1997.

67, Hank Roberts: It doesn’t; it accumulates in the atmosphere. CO2 is a well mixed gas, all the way up.

Well sure, a modest writing error on my part. From all the places that CO2 is accumulating, if it is the cause of the surface warming, there has to be a mechanism. Increase in downwelling LWIR is most often cited as the mechanism.

So Gavin, since Nic Lewis does appear to be someone we ought to take seriously also in the reality-based community, I’d be curious to hear: I know it can be hard to meaningfully talk about a “general opinion” in a diverse community of so many individually brilliant scientists – but anyway: after the discussions among those of you gathered in Ringberg, was there a general sense of agreement about the expected size of the equilibrium climate sensitivity (ECS)? Did you intensely discuss Björn Stevens’ recent paper (and Nic Lewis’ update of his recent work) in the light of the revised aerosol forcing? Does it appear that the best estimate of ECS should indeed be somewhat lowered from the ~3C, and that the long right skewed tail of the curve might be shortened a bit?

As lots of septics are (as always) crowing about this and that, I’d really appreciate a comment from the true science-based community.

#64–Barton, gently stated. Thank you.

However, this terminology is particularly confusing. Knowing that, I did check my usage, and was guided by this statement:

“Optical depth or optical thickness… is dimensionless, and in particular is not a length, though it is a monotonically increasing function of path length, and approaches zero as the path length approaches zero.”

Hence ‘thinner’ for an atmosphere with shorter mean path lengths–or so I reasoned.

68, meow: You can’t know that without knowing also the changes in the downward fluxes, because those change the surface’s temperature and therefore the upward fluxes (yes, it’s recursive).

I think the weakest part of the calculation is that I can’t compute the increase in downward flux that results from the increased upward flux that I have calculated. I think that the increase in downward flux is only about 1/10th the increase in upward flux (because so much of the upward flux distributes energy to the upper troposphere, cf the Romps et al calculation of the increase in CAPE). If that is what Chris Colose meant by “closing the energy budget”, then clearly I missed it. Also, working with mean temperature and aggregate fluxes is necessarily imprecise: what are needed are the changes in small regions at various times of the year, and to integrate all those small changes with respect to their distribution. That is an inaccuracy that afflicts all calculations based on means and aggregates.

51, meow: If the level is raised via thermal expansion (as opposed to, e.g., via melting land ice), then more or less yes, depending upon which layers expanded how much. But using your figures, it takes 58,800J/m^2 to do that, which means that a flux of 4W/m^2 could accomplish it in 14,700s, or 0.17d. So it is a very small constraint.

Thank you. I came to the same conclusion after some mental arithmetic, but I hadn’t gotten around to writing it out yet.

62, Chris Colose: In such a hot regime described above, adding CO2 also wouldn’t really affect the downwelling IR to the surface much, since the low levels are already radiating like a blackbody. Matthew’s formulation of the climate problem is to think of the surface budget in this sense, by adding CO2 while keeping the tropospheric temperature unchanged and playing mental games about how the surface fluxes are supposed to end up closing, and then inferring climate sensitivity.

Nowhere have I asserted that the troposphere temperature remains constant. If you look at “layers”: surface, lower troposphere, middle troposphere, upper troposphere and higher layers, all I have written is that the rate of cooling of the surface via 3 processes increases, and that increase consumes energy. Two of the processes carry energy to at least the middle troposphere; where the increase of the radiative heat flux is distributed is mostly above the lower troposphere, because absorption in the lower troposphere is (allegedly) nearly “saturated”. I omitted any attempt to calculate the increased temperature of any part of the troposphere, but I do think that the temperature of the lower third of the troposphere increases the least. The temperature increase in the troposphere resulting from these three upward flux increases is not going to be very great, or uniformly distributed.

Regarding clouds, path length, and optical depth. When I point my infrared thermometer up into the sky, clouds always measure warmer than clear sky. I think the difference in temperature is greater than the difference between the moist and dry lapse rate. This indicates to me (a college dropout; YMMV) that the IR is coming from a lower warmer level, near the cloud base. Some radiation will be coming from inside the cloud where the optical depth allows the IR to escape, most will be coming from the shallowest layers near the bottom, and some will be coming from GHGs between the cloud and sensor. Some of the IR will be thermal radiation from the water/ice droplets, to the extent that they are grey bodies, capable of absorbing & emitting radiation, and some of the IR will come from radiation emitted up(ish) by GHGs (or the earth’s surface) and scattered down to my thermometer by the cloud. It seems to me that at the IR wavelengths which are absorbed by the cloud, the physical processes of absorption/emission are indistinguishable from those of GHGs, each cloud water droplet or ice particle behaving like a GHG molecule, albeit with a broader absorption/emission spectrum. It also seems to me that IR which was emitted upwards and scattered back down by the cloud is equivalent to outbound IR which is absorbed by GHGs and emitted back to the ground, except for the “different spectrum” caveats.

There is another process that I think may be important to climatology; photons that are scattered in the cloud follow a longer path. Satellite observations of lightning flashes show pulse broadening on the order of 100-400 microseconds, depending on cloud shape, optical density, and extent. This longer path length increases the probability of absorption by greenhouse gases; a doubling of the effective path length is equivalent to a doubling of the concentration.

“…when we compare our median effective pulse duration with those obtained below clouds [e.g., Guo and Krider, 1982; MacKerras, 1973], one may infer an estimate of the amount of additional path length incurred by cloud scattering. The additional path length is inferred by assuming that the photons propagate at the speed of light from the source to the sensor but that the propagation path is lengthened by Mie scattering so that some photons arrive much later than would be expected from transport through a cloud-free atmosphere. For comparison, MacKerras [1973] observed a median pulse width of 200 us below clouds in Australia. Guo and Krider [1982] inferred a median effective pulse width of 157us below thunderclouds in Florida. The median value of the down-selected PDD event distribution shown in Figure 9 (solid curve) is 592 us.”

Optical observations of terrestrial lightning by the FORTE satellite photodiode detector, M. W. Kirkland et al., Journal of Geophysical Research, Vol. 106, No. D24, pages 33,499–33,509, December 27, 2001

http://www.researchgate.net/profile/David_Suszcynsky/publication/228746599_Optical_observations_of_terrestrial_lightning_by_the_FORTE_satellite_photodiode_detector/links/0deec521d21aa3971c000000.pdf

“Long geometric path lengths traveled inside thick clouds account for anomalously large reported absorption by atmospheric trace gasses.” and “an event occurring within a planar cloud will appear brighter when viewed from directly above than if it were observed elsewhere; also, those photons reaching the zenith will have been on average delayed quite a bit, in that they traverse the plane for some time before being redirected.”

Monte Carlo Simulations of Light Scattering by Clouds, T.E. Light, D.M. Suszcynsky, M.W. Kirkland, A.R. Jacobson. A poster presented at the Dec 1999 AGU Meeting (LA-UR-99-4682).

http://www.forte.lanl.gov/science/publications/1999/Light_1999_1_Monte_Carlo.pdf

“Inspection of the spectra shows three characteristic features: (1) For comparable SZAs (solar zenith angles) the cloudy sky oxygen A band absorptions are larger than for the clear sky observations. (2) The absorption increase of the weak absorption lines is relatively larger than for the already under clear sky strong absorption lines (note the logarithmic intensity in the scale of Figure 4). (3) The solar Fraunhofer lines (Sil, Mgl, K1, Fel, Fe2, Nil) show the same optical densities for both observations as expected. Feature (1) directly demonstrates that for the skylight transmitted to the ground, larger geometrical paths occur under cloudy than under clear sky conditions. Mainly this is a consequence of the light path enhancement due to multiple Mie scattering in tropospheric clouds…”

First geometrical pathlengths probability density function derivation of the skylight from spectroscopically highly resolving oxygen A band observations 1. Measurement technique, atmospheric observations and model calculations, K. Pfeilsticker et al., Journal of Geophysical Research, Vol. 103, No. D10, pages 11,483-11,504, May 27, 1998

http://onlinelibrary.wiley.com/doi/10.1029/98JD00725/pdf

A 100 µs additional delay in a cloud that’s 3 kilometers thick is equivalent to 30 km of additional path length in clear air, or a 10-fold increase in concentration (if I did my math right). These effects (upwelling IR backscatter, cloud particle absorption/emission mimicking GHGs, and enhanced absorption by GHGs, especially in the weak “wings” of the spectral lines) might go a long way towards negating the increase in albedo from low continuous cloud cover, and perhaps even make some low cloud types a net positive forcing. Maybe the clouds in the Kiehl & Trenberth energy diagram ought to be replaced by http://www.atmo.arizona.edu/students/courselinks/spring08/atmo336s1/courses/spring15/atmo589/ATMO489_online/lecture_25/multiple_scattering.jpg . If clouds increase albedo and cause cooling, why is Venus so bloody hot?
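For anyone who wants to check the path-length arithmetic, here is a minimal sketch. It assumes the photons travel at the vacuum speed of light and simply compares the extra distance to the 3 km cloud thickness; the function and variable names are made up for illustration.

```python
# Rough check of the path-length estimate: extra distance = c * delay.
# Assumption: photons travel at the vacuum speed of light throughout.
SPEED_OF_LIGHT_M_S = 299_792_458.0

def extra_path_km(delay_s: float) -> float:
    """Extra geometric path (km) corresponding to a photon delay (s)."""
    return SPEED_OF_LIGHT_M_S * delay_s / 1000.0

delay_s = 100e-6             # 100 microseconds, as in the comment
cloud_thickness_km = 3.0     # cloud thickness used in the comment

extra_km = extra_path_km(delay_s)
ratio = extra_km / cloud_thickness_km

print(f"extra path: {extra_km:.1f} km")
print(f"extra path / cloud thickness: {ratio:.1f}")
```

This gives roughly 30 km and a ratio of about 10, matching the figures above.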

Thermodynamic geoengineering: a third way. This proposition has been submitted as a proposal to the MIT Climate CoLab. Anyone wishing to take a run at it, support it or collaborate in it is welcome.

On optical thickness:

http://bartonlevenson.com/Optical.html

Came across this discussion and thought it might interest some here, in light of some recent comments:

Kevin McKinney, 11 Apr 2015 at 8:14 PM:

That’s a great post you link [ditto, the previous one, on the small-percentage fallacy]. The comment by A.A. Lacis, which is the subject of the post, is a great lesson. Plus, it’s followed by some good comments where it’s been posted whole.

Really, it’s one for the books. Many thanks, A.A. Lacis. It’s the understanding that counts.

The body temperature comparison is particularly meaningful. Regarding outdoor labor in a warming world, it’s more than a comparison. The control knob image is a very good fit (sorry) for the actual physics of the system. It’s a mini thing with a big effect, particularly over time, when you see how the system works.

#77–Thanks, Barton. Think I’ve got it–“path length” does not equal “mean path length.” ;-)

@#50 Dr. Marler, the artist formerly known as septic Matthew:

Power is energy per unit time, not energy. The energy to lift a 1 m² column of ocean 3000 m deep by 4 mm is about

3000 [m] * 1 [m²] * 1000 [kg/m³] * 10 [m/s²] * 0.004 [m] = 120,000 [m²*kg/s²] or 120,000 [J] or 120,000 [Ws]

So this energy would be gathered by 4 watts (per square metre) in 30,000 s, or something over 8 hours.
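The same estimate as a small sketch, for anyone who wants to vary the numbers. It uses the rounded values from this comment (density 1000 kg/m³, g = 10 m/s²) and treats the column as a rigid block lifted by 4 mm; the function name is just for illustration.

```python
# Energy to lift a 1 m^2 column of water by a small height: E = m * g * h.
# Assumptions: constant density 1000 kg/m^3 and g = 10 m/s^2 (rounded),
# and the whole column is lifted rigidly by rise_m.
def lift_energy_j(depth_m, rise_m, density_kg_m3=1000.0, g_m_s2=10.0, area_m2=1.0):
    mass_kg = density_kg_m3 * depth_m * area_m2
    return mass_kg * g_m_s2 * rise_m

energy_j = lift_energy_j(depth_m=3000.0, rise_m=0.004)

power_w = 4.0                      # 4 W falling on the same 1 m^2
seconds = energy_j / power_w
hours = seconds / 3600.0

print(f"energy: {energy_j:.0f} J")
print(f"time at 4 W/m^2: {seconds:.0f} s ({hours:.1f} h)")
```

That reproduces about 120,000 J and about 30,000 s.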

On the other hand, the assumption that adding 4 mm of water to the sea surface means lifting up the whole ocean from its bed seems completely nonsensical to me.

All the best

81, Marcus: On the other hand, the assumption that adding 4 mm of water to the sea surface means lifting up the whole ocean from its bed, seems completely nonsensical to me

Luckily, no one mentioned such an operation. A uniform expansion of the water that raises the top of a column 4mm raises the center of mass of the column 2mm. The density and salinity are not constant throughout the column, so it’s only an approximation. The energy required does not in fact put much of a constraint on how much temperature increase a 4W/m^2 power increase at the surface can produce.
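A rough sketch of the uniform-expansion case, to put a number on it. It assumes constant density (which, as noted, is only an approximation), the rounded values g = 10 m/s² and 1000 kg/m³ used upthread, and a 3000 m column of 1 m² cross-section; the names are illustrative only.

```python
# Uniform expansion: if the top of the column rises by rise_m, the centre of
# mass rises by rise_m / 2, so the work against gravity is m * g * rise_m / 2.
# Assumptions: constant density 1000 kg/m^3, g = 10 m/s^2, 1 m^2 column.
def expansion_energy_j(depth_m, top_rise_m, density_kg_m3=1000.0, g_m_s2=10.0):
    mass_kg = density_kg_m3 * depth_m      # per 1 m^2 of surface
    com_rise_m = top_rise_m / 2.0          # centre-of-mass displacement
    return mass_kg * g_m_s2 * com_rise_m

energy_j = expansion_energy_j(depth_m=3000.0, top_rise_m=0.004)
seconds_at_4w = energy_j / 4.0

print(f"energy: {energy_j:.0f} J per m^2")
print(f"time at 4 W/m^2: {seconds_at_4w / 3600.0:.1f} h")
```

Under these assumptions the work is about 60,000 J per square metre, which 4 W/m² supplies in a few hours.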

@Thomas/#3: “Please define terms. Everyone in your community may know what the definition of delta N and delta F are, but I doubt too many RC readers do.”

I’m not sure how/why this got skipped by the real scientists on this list, but it did, so I (a mere modeler) will take a whack at it. (Whether or not many RC readers know this equation, it would still be (IMHO) good practice for a general-public-facing site to routinely define terms in equations.) The “basic ‘energy balance’ equation [that] defines the [sensitivity λ] to zeroth order” is given in this piece as

ΔN = ΔF – λΔT_s

Personally I prefer (though the latter is not the same equation as the former)

λΔT_s = ΔN – ΔF [1]

In the latter case,

* N == incoming shortwave solar radiation absorbed by the Earth (net of reflection and scattering)

* F == longwave/IR emitted from the Earth into space (net of atmospheric reabsorption–you should be thinking “greenhouse effect” now)

* T_s == Earth surface temperature (a major determinant of F, which is blackbody radiation[2])

The main idea here (IIUC) is to relate “energy balance” and temperature: to a first approximation (aka 0th order), if an object absorbs more radiation than it emits, it gains energy, which necessarily increases its temperature. (And vv.) Obviously we’re assuming the object has no significant internal heat source, that energy transfer is purely radiative (e.g., no convection or conduction), etc. This should seem reasonable, even tautological.

The question is then, *how much* does T_s change for a given net energy change (ΔE = ΔN – ΔF[1])? There’s no reason to assume that the function {f(E) = T} is linear, but, again, we’re doing 0th-order/first approximations, so let’s assume it is. We can then define the “sensitivity” λ as the proportionality constant.

[1]: You might also see this given as an addition. A vector-like sign convention is often observed such that what comes in/down to the Earth’s surface is defined as positive, and vv. In this case, N is by definition positive, and F is by definition negative, thus addition gives the same net result, with the same sign.

[2]: https://en.wikipedia.org/wiki/Black-body_radiation
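To make the linear assumption concrete, here is a minimal sketch using the equation as quoted from the post, ΔN = ΔF – λΔT_s, rearranged for ΔT_s. In that form λ has units of W/m² per K. The numbers below are illustrative assumptions only (a forcing of 3.7 W/m² and λ = 1.2 W/m²/K are placeholders, not values from the post), and the function name is made up.

```python
# Zeroth-order energy balance as quoted from the post:
#     dN = dF - lam * dT_s   =>   dT_s = (dF - dN) / lam
# All numerical values below are illustrative assumptions.
def surface_warming_k(delta_f_w_m2: float, delta_n_w_m2: float, lam_w_m2_k: float) -> float:
    """Temperature change (K) implied by the linear energy-balance relation."""
    if lam_w_m2_k == 0:
        raise ValueError("lambda must be non-zero")
    return (delta_f_w_m2 - delta_n_w_m2) / lam_w_m2_k

# New equilibrium: the imbalance dN has returned to zero.
equilibrium_dt = surface_warming_k(delta_f_w_m2=3.7, delta_n_w_m2=0.0, lam_w_m2_k=1.2)

# Transient state: part of the forcing still shows up as an imbalance dN.
transient_dt = surface_warming_k(delta_f_w_m2=3.7, delta_n_w_m2=1.0, lam_w_m2_k=1.2)

print(f"equilibrium warming: {equilibrium_dt:.2f} K")
print(f"transient warming:   {transient_dt:.2f} K")
```

The point is only that, once linearity is assumed, a single constant λ converts a change in the energy budget into a temperature change; whether that constant is actually stable is what the post says the workshop spent much of its time on.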